CORRELATION: THE DEGREE OF RELATIONSHIP BETWEEN VARIABLES


CORRELATION
• Correlation examines the strength of a connection between two characteristics belonging to the same individual, event or piece of equipment.
• The concept of correlation does not include the proposition that one thing is the cause and the other the effect.
• We merely say that two things are systematically connected.

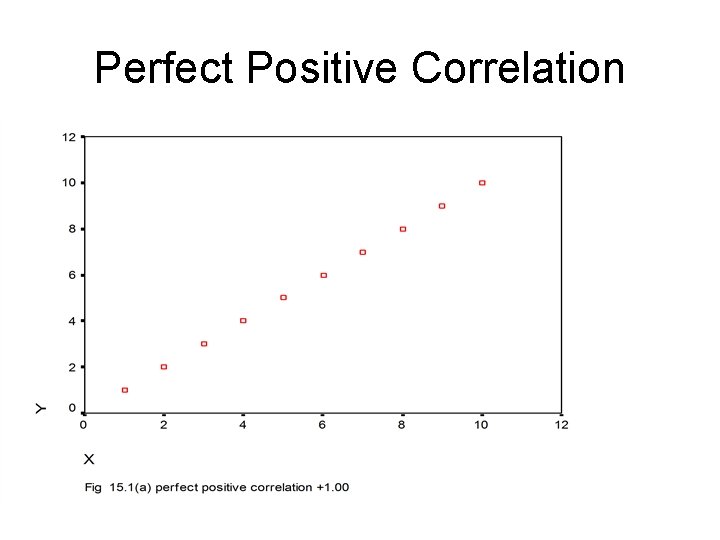

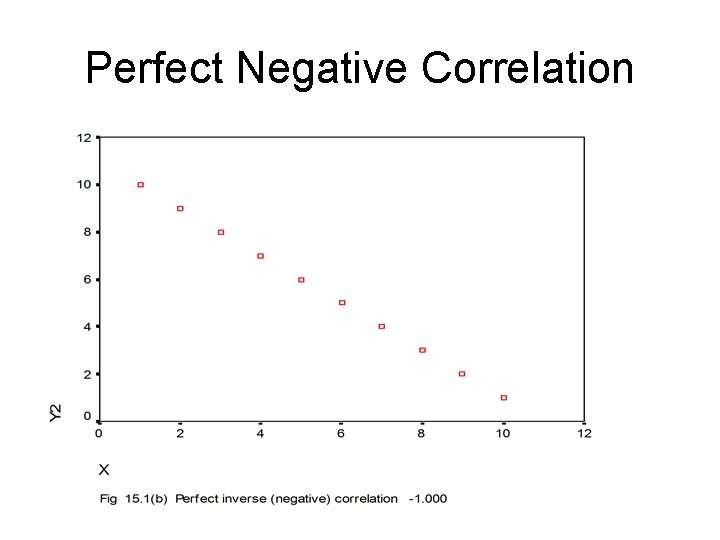

RELATIONSHIPS
• Two variables can be positively correlated: an increase in one variable coincides with an increase in the other, e.g. the more electricity used, the higher the power bill.
• A negative correlation: one variable increases as the other decreases, e.g. as price increases, demand decreases.
• A zero or random correlation: variations in the two variables occur randomly, e.g. the number of accountants graduating per year and total annual attendance at national football league matches.

THE CORRELATION COEFFICIENT (r)
• Correlation is measured by the correlation coefficient, which is usually designated as ‘r’.
• Correlations (r) range from +1.00 (perfect positive) to -1.00 (perfect inverse), with the midpoint 0.00 indicating absolute randomness.
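To make the range of r concrete, here is a minimal sketch that computes r from its textbook definition (the sum of cross-products of deviations divided by the square root of the product of the sums of squares). The function name `pearson_r` and the electricity figures are illustrative assumptions, not values from the data set used later in these slides.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson product-moment correlation for two paired sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two paired sequences of length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squared deviations from the means
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("r is undefined when a variable has no variation")
    return sxy / sqrt(sxx * syy)

# Hypothetical data: electricity used and two made-up bill patterns
usage = [100, 200, 300, 400, 500]
bill_up = [30, 50, 70, 90, 110]      # rises in step with usage
bill_down = [110, 90, 70, 50, 30]    # falls in step with usage

print(round(pearson_r(usage, bill_up), 2))    # perfect positive
print(round(pearson_r(usage, bill_down), 2))  # perfect inverse
```

The sign of the result gives the direction of the relationship and its absolute size gives the strength.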

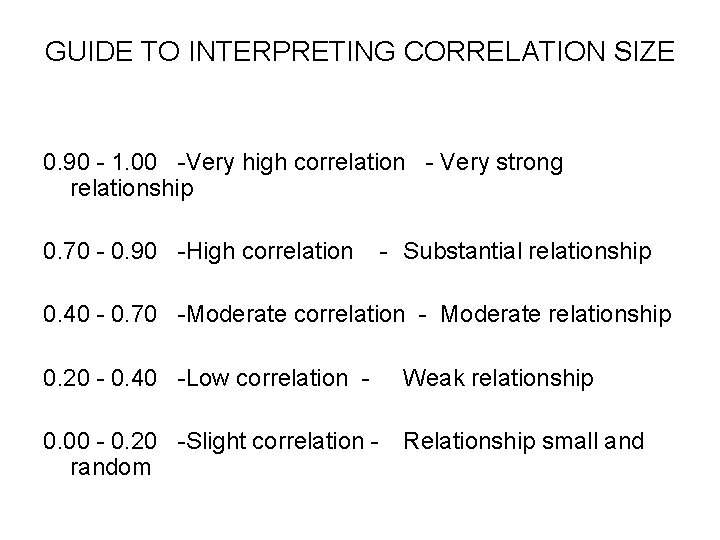

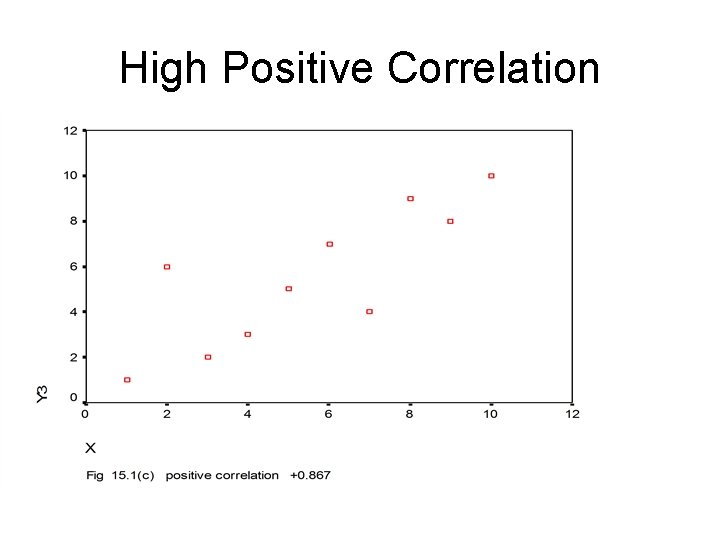

GUIDE TO INTERPRETING CORRELATION SIZE

| Size of r | Correlation | Relationship |
|---|---|---|
| 0.90 - 1.00 | Very high correlation | Very strong relationship |
| 0.70 - 0.90 | High correlation | Substantial relationship |
| 0.40 - 0.70 | Moderate correlation | Moderate relationship |
| 0.20 - 0.40 | Low correlation | Weak relationship |
| 0.00 - 0.20 | Slight correlation | Relationship small and random |
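The guide can be expressed as a small lookup, sketched below. The function name `describe_correlation` is hypothetical. Because the published bands share their end points (0.90 appears in two bands, for example), the sketch assumes a boundary value belongs to the higher band.

```python
def describe_correlation(r):
    """Return the guide's verbal label for a correlation coefficient."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("r must lie between -1.00 and +1.00")
    size = abs(r)  # strength ignores the sign; the sign gives direction only
    # Assumption: a value on a shared boundary falls in the higher band
    if size >= 0.90:
        return "Very high correlation - very strong relationship"
    if size >= 0.70:
        return "High correlation - substantial relationship"
    if size >= 0.40:
        return "Moderate correlation - moderate relationship"
    if size >= 0.20:
        return "Low correlation - weak relationship"
    return "Slight correlation - relationship small and random"

for r in (0.95, -0.75, 0.35, 0.01):
    print(f"{r:+.2f}: {describe_correlation(r)}")
```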

Perfect Positive Correlation

Perfect Negative Correlation

High Positive Correlation

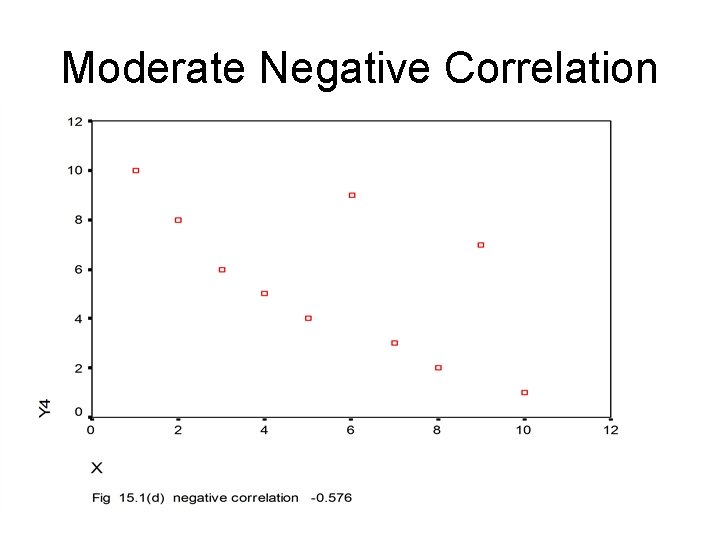

Moderate Negative Correlation

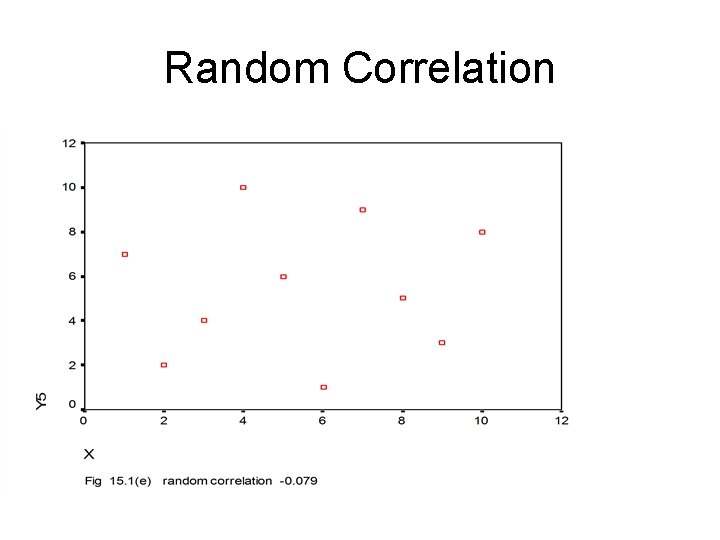

Random Correlation

DIRECTION AND STRENGTH OF CORRELATION COEFFICIENTS
• The direction of the relationship is indicated by the mathematical sign, + or –.
• The strength of the relationship is represented by the absolute size of the coefficient, i.e. how close it is to +1.00 or -1.00.

CORRELATION TESTS
There are many correlation tests designed for particular conditions, such as whether the data are ordinal, interval, or a combination of the two. The major ones are:
• Parametric Tests of Correlation
– Pearson Product Moment Correlation or ‘r’
– Used with scale data
• Non-Parametric Tests of Correlation
– Rank Order Correlation (Spearman's Rho)
– Cramer’s V, Kendall’s tau and phi, biserial correlation, tetrachoric correlation

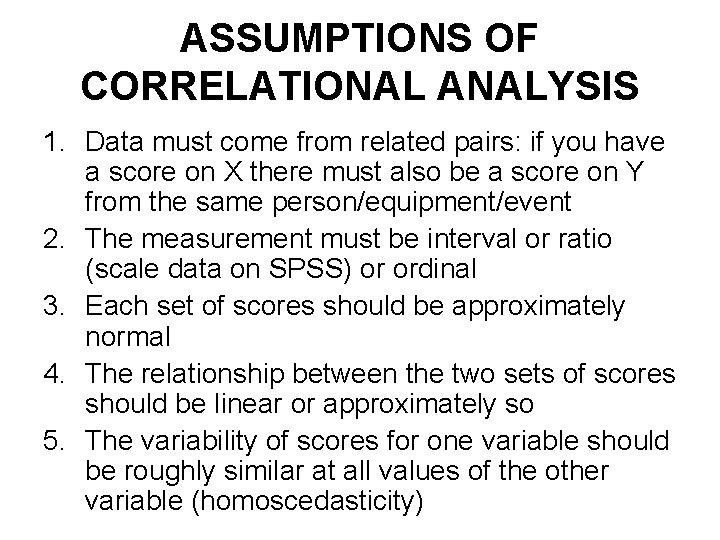

ASSUMPTIONS OF CORRELATIONAL ANALYSIS
1. Data must come from related pairs: if you have a score on X there must also be a score on Y from the same person/equipment/event.
2. The measurement must be interval or ratio (scale data on SPSS) or ordinal.
3. Each set of scores should be approximately normal.
4. The relationship between the two sets of scores should be linear or approximately so.
5. The variability of scores for one variable should be roughly similar at all values of the other variable (homoscedasticity).

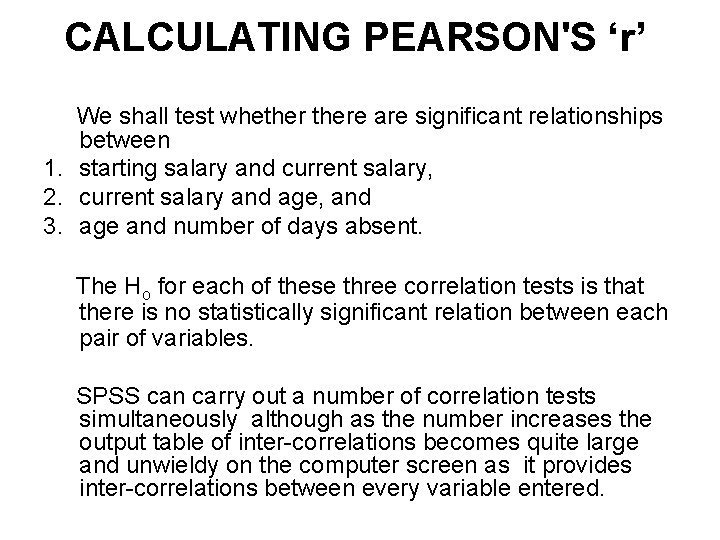

CALCULATING PEARSON'S ‘r’
We shall test whether there are significant relationships between:
1. starting salary and current salary,
2. current salary and age, and
3. age and number of days absent.
The null hypothesis (H0) for each of these three correlation tests is that there is no statistically significant relationship between the pair of variables. SPSS can carry out a number of correlation tests simultaneously, although as the number increases the output table of inter-correlations becomes large and unwieldy on screen, because it provides inter-correlations between every pair of variables entered.

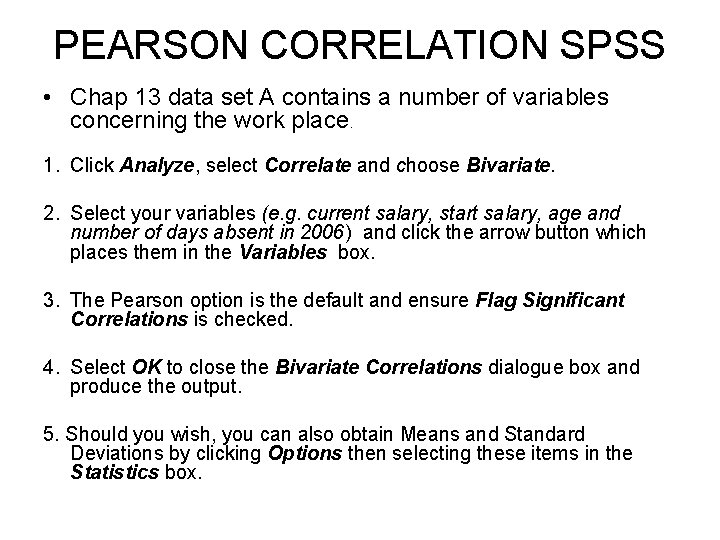

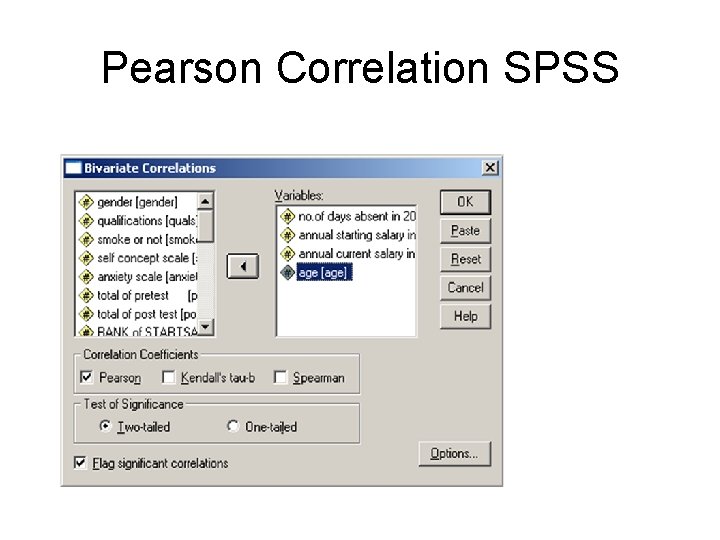

PEARSON CORRELATION SPSS
• Chap 13 data set A contains a number of variables concerning the work place.
1. Click Analyze, select Correlate and choose Bivariate.
2. Select your variables (e.g. current salary, start salary, age and number of days absent in 2006) and click the arrow button, which places them in the Variables box.
3. The Pearson option is the default; ensure Flag Significant Correlations is checked.
4. Select OK to close the Bivariate Correlations dialogue box and produce the output.
5. Should you wish, you can also obtain Means and Standard Deviations by clicking Options and then selecting these items in the Statistics box.

Pearson Correlation SPSS

PEARSON CORRELATION SPSS
• Additionally, you should create a scattergraph to display the relationship visually.
• It lets you see whether you have a linear or nonlinear relationship. Correlation assumes a linear or closely linear relationship.
• If the scattergraph shows a curvilinear shape then you cannot use Pearson.

PRODUCING A SCATTERGRAPH
1. Click Graph and then Scatter.
2. Simple is the default mode and will provide a single scattergraph for one pair of variables. Click Define. (If you wish to obtain a number of scattergraphs simultaneously, click on Matrix then Define.)
3. Move two variables (e.g. current and starting salary) into the box with the arrow button, then select OK to produce the scattergraph.
4. With a correlation it does not really matter which variable you place on which axis.

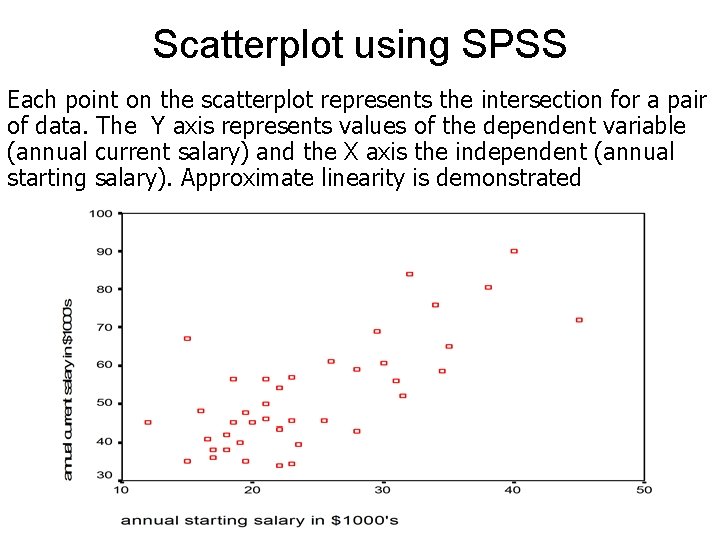

Scatterplot using SPSS
Each point on the scatterplot represents the intersection for a pair of data. The Y axis represents values of the dependent variable (annual current salary) and the X axis the independent variable (annual starting salary). Approximate linearity is demonstrated.

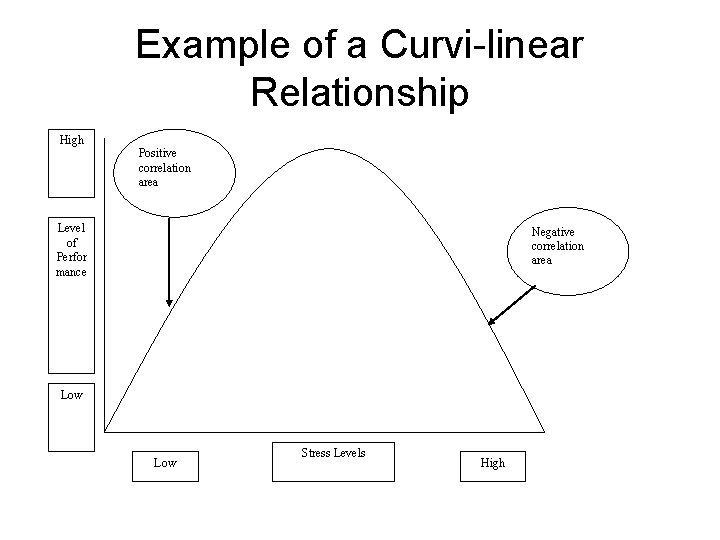

Example of a Curvilinear Relationship
(Figure: level of performance plotted against stress level, from low to high, with a positive correlation area followed by a negative correlation area.)

Output Inter-correlation Table Pearson Correlations

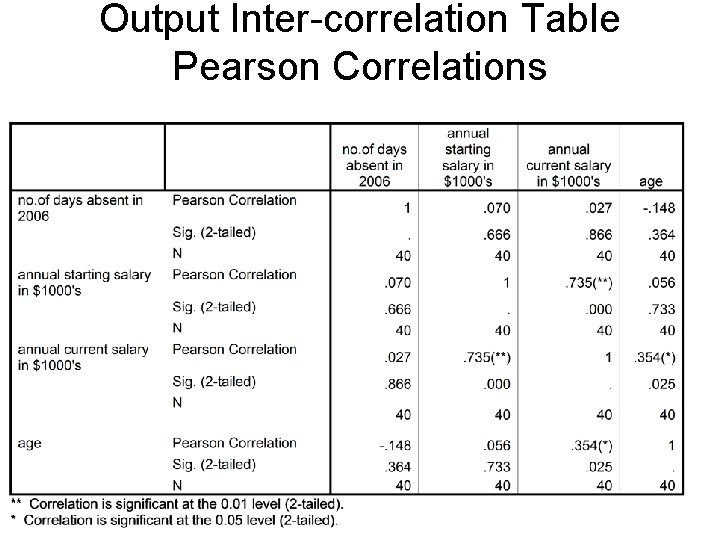

INTERPRETING PEARSON OUTPUT SPSS
• Only a correlation matrix is produced. The diagonal of this matrix (from top left to bottom right) consists of each variable correlated with itself, obviously giving a perfect correlation of 1. No significance level is quoted for this value.
• Each result is tabulated twice, at the intersections of both variables. The exact significance level is given to three decimal places.
• Forty pairs of scores were used to obtain the correlation coefficient.
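The layout of such a matrix can be reproduced outside SPSS. The sketch below is not the SPSS procedure; it is a plain-Python illustration, with made-up numbers and hypothetical variable names, of why the diagonal is 1 and why each result appears twice.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson product-moment correlation for two paired sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

def correlation_matrix(columns):
    """Correlate every variable with every variable, itself included."""
    names = list(columns)
    return {a: {b: pearson_r(columns[a], columns[b]) for b in names}
            for a in names}

# Hypothetical values for six employees (not the Chap 13 data set)
data = {
    "start_salary": [20, 22, 25, 30, 28, 35],
    "current_salary": [31, 30, 38, 45, 40, 52],
    "age": [45, 23, 36, 52, 29, 41],
}

matrix = correlation_matrix(data)
for name, row in matrix.items():
    cells = "  ".join(f"{row[other]:6.3f}" for other in data)
    print(name.ljust(15), cells)
```

Because r(X, Y) equals r(Y, X), the cells above the diagonal mirror those below it, so only one triangle needs to be read.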

INTERPRETING PEARSON OUTPUT SPSS
• The correlation between starting and current salary is +0.735; this is significant at the .01 level, i.e. high starting salaries are related to high current salaries and low with low.
• The correlation between current salary and age is +0.354; this is significant at the .05 level, i.e. a weak relationship between increasing salary and increasing age.
• The correlations between age and absence, and between age and annual starting salary, are not significant and both are close to a random relationship, i.e. there is no evidence that these pairs of variables are linearly related.
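SPSS reports the significance level directly. As a rough cross-check, the standard test converts r to a t statistic with n - 2 degrees of freedom, t = r√(n - 2) / √(1 - r²). The sketch below applies it to the two significant coefficients above with n = 40. The critical values are approximate two-tailed figures for 38 degrees of freedom from standard t tables, not SPSS output, and the function name is hypothetical.

```python
from math import sqrt

def t_statistic(r, n):
    """t statistic for testing H0: no linear relationship (df = n - 2)."""
    if n < 3 or not -1.0 < r < 1.0:
        raise ValueError("need n >= 3 and -1 < r < 1")
    return r * sqrt(n - 2) / sqrt(1 - r * r)

# Approximate two-tailed critical values of t for df = 38 (from t tables)
T_CRIT_05 = 2.024
T_CRIT_01 = 2.712

results = [("start vs current salary", 0.735), ("current salary vs age", 0.354)]
for label, r in results:
    t = t_statistic(r, 40)
    if abs(t) > T_CRIT_01:
        verdict = "significant at the .01 level"
    elif abs(t) > T_CRIT_05:
        verdict = "significant at the .05 level"
    else:
        verdict = "not significant"
    print(f"{label}: r = {r:+.3f}, t = {t:.2f}, {verdict}")
```

With 40 pairs, the first coefficient clears the .01 threshold and the second clears only the .05 threshold, matching the flags in the SPSS output described above.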

SPEARMAN’S RANK ORDER CORRELATION
• This correlation is typically used when there are only a few cases (or subjects) involved and the data are already ordinal or can be changed into ranks.
• This correlation is usually designated as 'rho' to distinguish it from Pearson's ‘r’. It is based on the differences in ranks between each of the pairs of ranks, e.g. if a subject is ranked first on one measure but only fifth on another, the difference (d) = 1 - 5 = -4. The differences are squared in the calculation, so the sign does not matter.
• Rank order correlation follows the same principles as outlined earlier and ranges from +1 to -1.
• While rho is suitable only for ranked (or ordinal) data, interval data can also be used provided it is first ranked.
• However, rho will usually differ from r, and is often somewhat lower, because ranking discards information about the size of the differences between scores.
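When there are no tied ranks, rho can be computed from the rank differences with the usual shortcut formula, rho = 1 - 6Σd² / (n(n² - 1)). The sketch below assumes untied ranks and uses made-up rankings for five subjects; the function name is hypothetical.

```python
def spearman_rho_from_ranks(ranks_a, ranks_b):
    """Spearman's rho from two sets of untied ranks (shortcut formula)."""
    if len(ranks_a) != len(ranks_b) or len(ranks_a) < 2:
        raise ValueError("need two paired rankings of length >= 2")
    n = len(ranks_a)
    # d is the difference between a subject's two ranks; it is squared,
    # so its sign has no effect on the result
    sum_d_squared = sum((a - b) ** 2 for a, b in zip(ranks_a, ranks_b))
    return 1 - (6 * sum_d_squared) / (n * (n ** 2 - 1))

# Hypothetical rankings of five subjects on two measures
measure_1 = [1, 2, 3, 4, 5]
same_order = [1, 2, 3, 4, 5]
reverse_order = [5, 4, 3, 2, 1]
first_is_fifth = [5, 1, 2, 3, 4]   # subject ranked 1st is 5th here (d = -4)

print(spearman_rho_from_ranks(measure_1, same_order))
print(spearman_rho_from_ranks(measure_1, reverse_order))
print(spearman_rho_from_ranks(measure_1, first_is_fifth))
```

The shortcut formula is exact only without ties. With tied ranks, the general approach is to compute Pearson's r on the ranks themselves.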

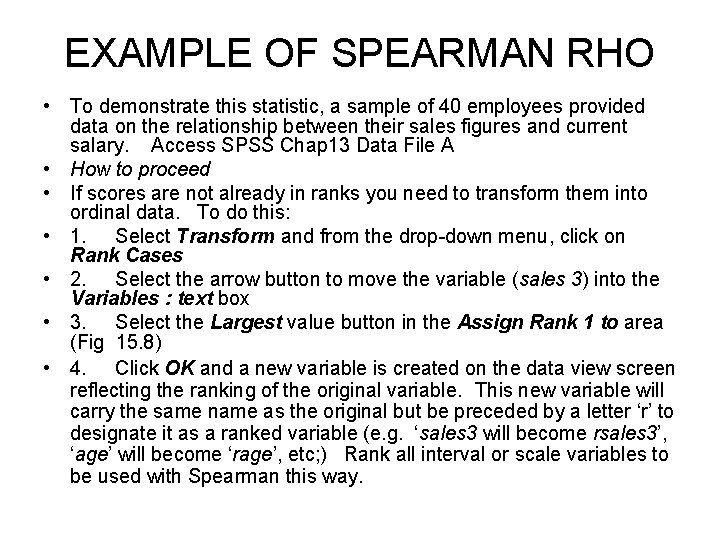

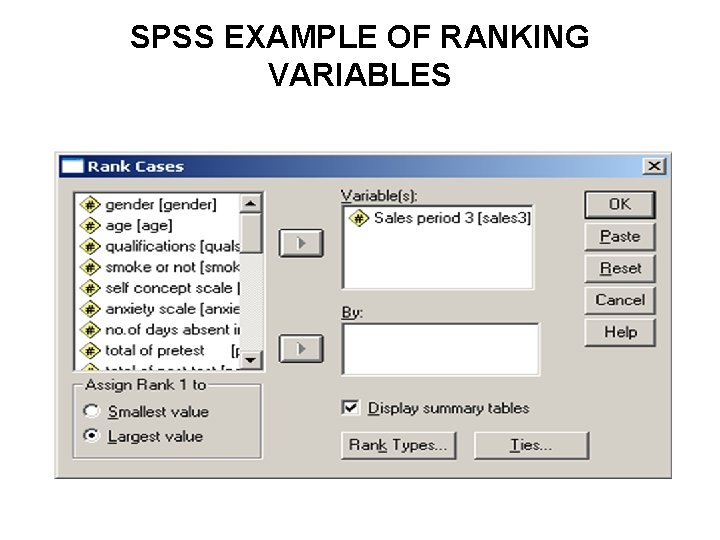

EXAMPLE OF SPEARMAN RHO
• To demonstrate this statistic, a sample of 40 employees provided data on the relationship between their sales figures and current salary. Access SPSS Chap 13 Data File A.
• How to proceed: if scores are not already in ranks you need to transform them into ordinal data. To do this:
1. Select Transform and, from the drop-down menu, click on Rank Cases.
2. Select the arrow button to move the variable (sales 3) into the Variables: text box.
3. Select the Largest value button in the Assign Rank 1 to area (Fig 15.8).
4. Click OK and a new variable is created on the data view screen reflecting the ranking of the original variable. This new variable will carry the same name as the original but be preceded by a letter ‘r’ to designate it as a ranked variable (e.g. ‘sales 3’ will become ‘rsales 3’, ‘age’ will become ‘rage’, etc.).
Rank all interval or scale variables to be used with Spearman this way.
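The ranking step can be sketched outside SPSS as follows. Rank 1 goes to the largest value, as in step 3. Giving tied scores the mean of the ranks they occupy is an assumption of this sketch, and the function name and sales figures are made up for illustration.

```python
def rank_largest_first(values):
    """Rank scores so the largest value gets rank 1; ties share a mean rank."""
    # Indices of the scores, ordered from largest score to smallest
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        # Extend 'end' across any run of scores tied with the one at 'pos'
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        # Positions are 0-based, ranks are 1-based; tied scores get the mean
        mean_rank = ((pos + 1) + (end + 1)) / 2
        for k in range(pos, end + 1):
            ranks[order[k]] = mean_rank
        pos = end + 1
    return ranks

# Hypothetical sales figures for four employees
sales = [50, 80, 65, 80]
print(rank_largest_first(sales))
```

The returned list is in the original case order, mirroring the way the new ranked variable sits alongside the original one in the data view.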

SPSS EXAMPLE OF RANKING VARIABLES

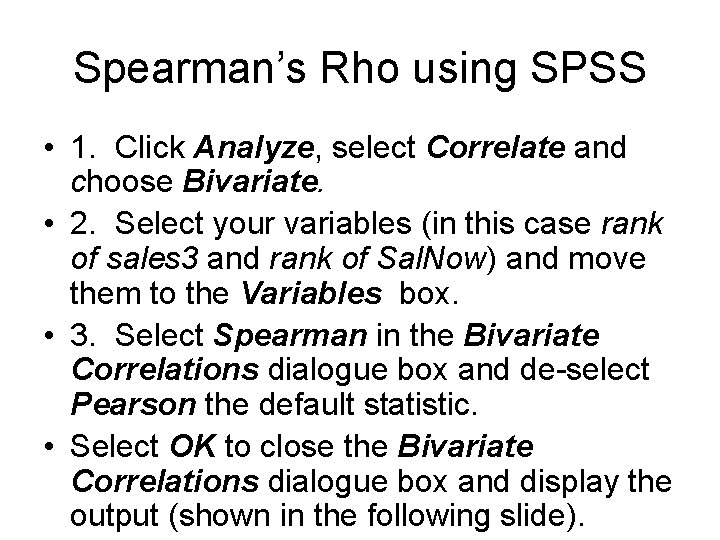

Spearman’s Rho using SPSS
1. Click Analyze, select Correlate and choose Bivariate.
2. Select your variables (in this case rank of sales 3 and rank of Sal. Now) and move them to the Variables box.
3. Select Spearman in the Bivariate Correlations dialogue box and de-select Pearson, the default statistic.
4. Select OK to close the Bivariate Correlations dialogue box and display the output (shown in the following slide).

Spearman’s Rho SPSS

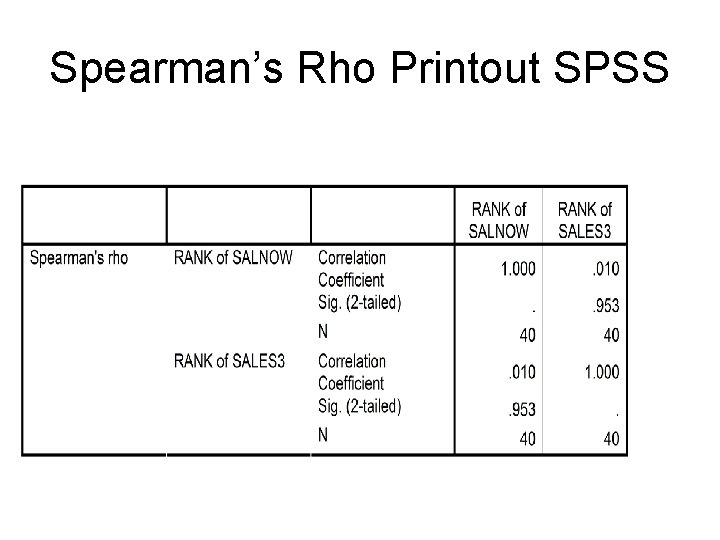

Spearman’s Rho Printout SPSS

INTERPRETATION OF SPEARMAN’S RHO PRINTOUT
• Spearman's rho is printed in a matrix like that for Pearson, with 1 on the top-left to bottom-right diagonal and the result replicated in the top-right and bottom-left cells.
• Spearman's correlation of +0.010 between the ranks for current salary and sales performance at period three is close to random and not statistically significant (p = .953).
• The number of cases on which that correlation was based is 40.

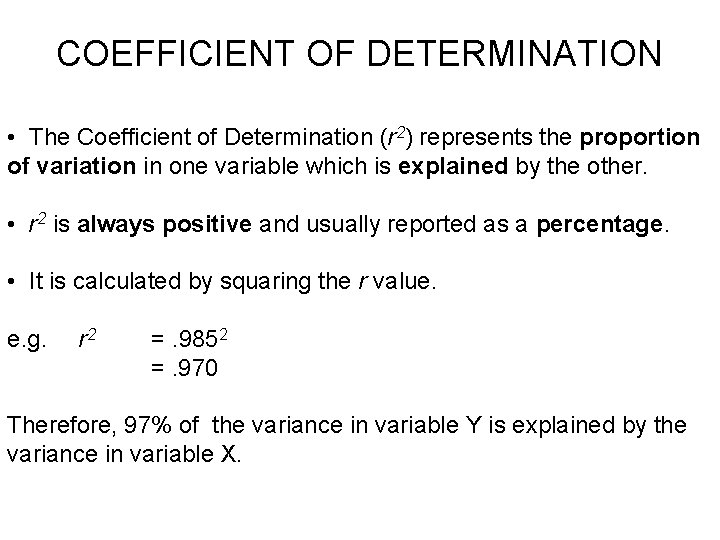

COEFFICIENT OF DETERMINATION
• The Coefficient of Determination ($r^2$) represents the proportion of variation in one variable which is explained by the other.
• $r^2$ is never negative and is usually reported as a percentage.
• It is calculated by squaring the r value, e.g. $r^2 = 0.985^2 = 0.970$. Therefore, 97% of the variance in variable Y is explained by the variance in variable X.

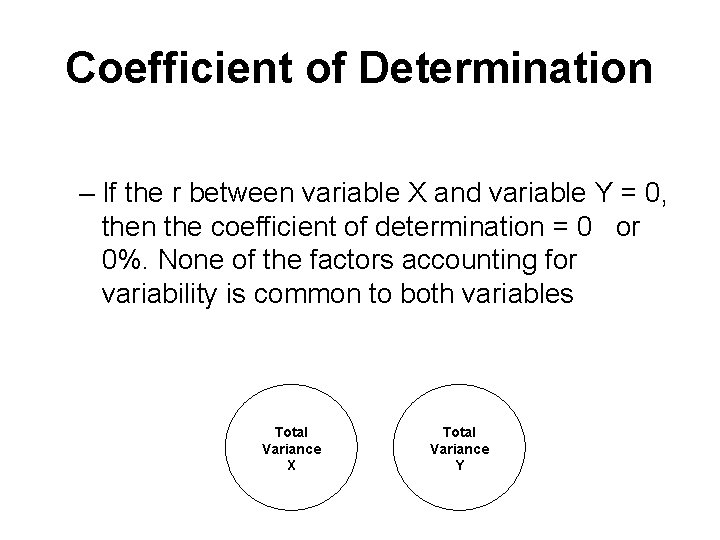

COEFFICIENT OF DETERMINATION
• If the r between variable X and variable Y = 0, then the coefficient of determination = 0 or 0%. None of the factors accounting for variability is common to both variables.
(Figure labels: Total Variance X, Total Variance Y)

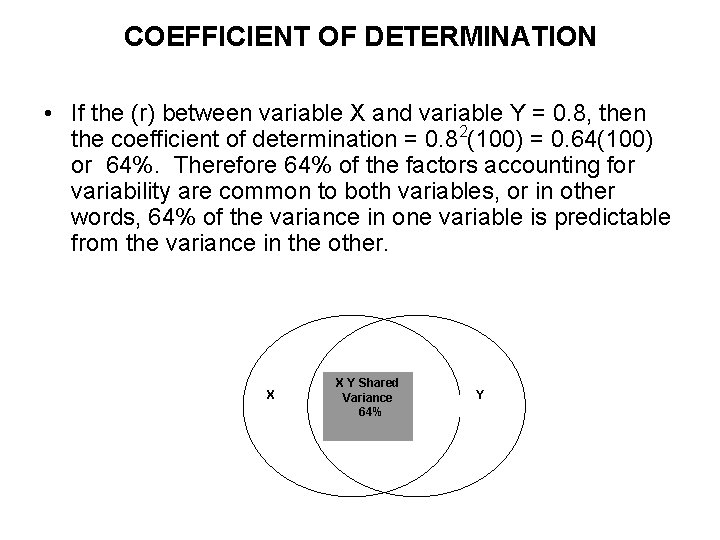

COEFFICIENT OF DETERMINATION
• If the correlation (r) between variable X and variable Y = 0.8, then the coefficient of determination = $0.8^2 \times 100 = 0.64 \times 100 = 64\%$. Therefore 64% of the factors accounting for variability are common to both variables, or in other words, 64% of the variance in one variable is predictable from the variance in the other.
(Figure labels: X, Y, Shared Variance 64%)

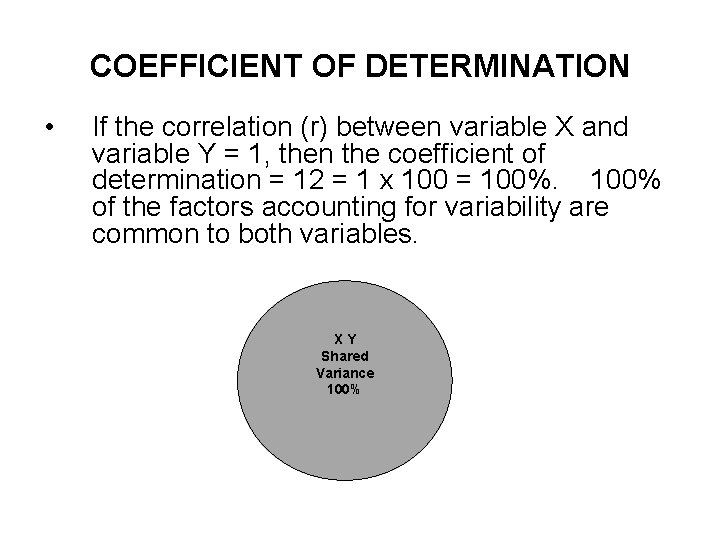

COEFFICIENT OF DETERMINATION
• If the correlation (r) between variable X and variable Y = 1, then the coefficient of determination = $1^2 \times 100 = 100\%$, i.e. 100% of the factors accounting for variability are common to both variables.
(Figure labels: XY, Shared Variance 100%)
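The worked values on these slides can be checked with a few lines of code. The sketch below squares each r and reports the shared variance as a percentage; the function name is hypothetical, and the negative coefficient is added only to show that the sign of r drops out.

```python
def coefficient_of_determination(r):
    """Proportion of variance shared by two variables: r squared."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("r must lie between -1.00 and +1.00")
    return r * r

# r values used on the slides, plus -0.8 to show the sign has no effect
for r in (0.0, 0.8, -0.8, 0.985, 1.0):
    r2 = coefficient_of_determination(r)
    print(f"r = {r:+.3f}   r^2 = {r2:.3f}   shared variance = {r2 * 100:.0f}%")
```

Note how quickly shared variance falls away: a correlation of 0.8 leaves 36% of the variance unexplained.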

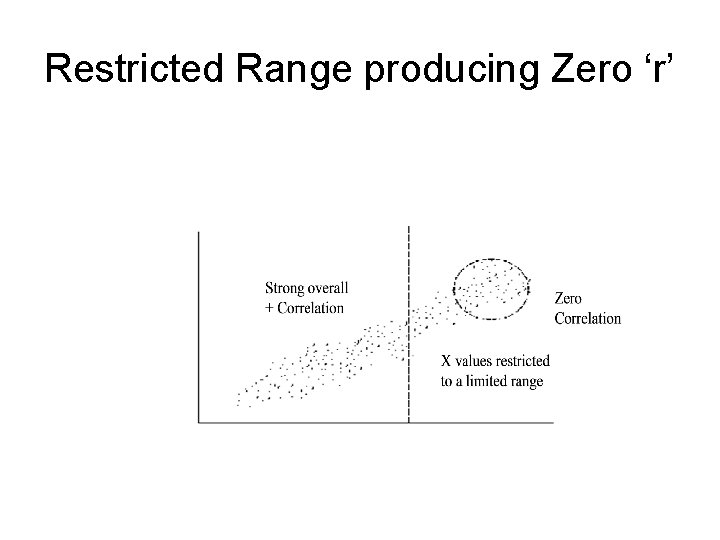

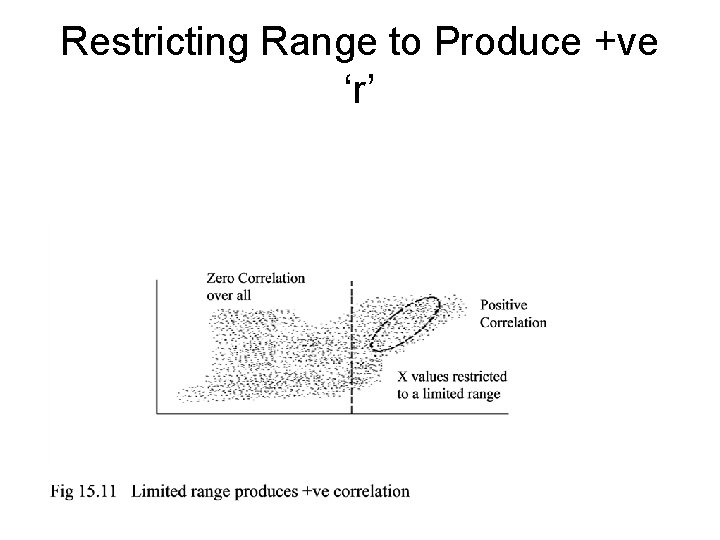

PROBLEMS IN INTERPRETING A CORRELATION
• Whenever a correlation is computed from values that do not represent the full range of the distribution, caution must be used in interpreting it.
• A high positive correlation can be obscured if only a limited range of data is obtained (following slide).
• An overall zero ‘r’ can be inflated to a positive one by restricting the range too (following slide).
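The second effect is easy to reproduce with invented numbers. The sketch below uses a hypothetical inverted-U data set (performance peaking at a middle stress level), for which r over the full range is zero, and then recomputes r using only the low-stress half. The data and names are illustrative assumptions, not figures from the slides.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson product-moment correlation for two paired sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

# Hypothetical inverted-U data: performance peaks at a middle stress level
stress = list(range(11))                          # 0 (low) to 10 (high)
performance = [25 - (s - 5) ** 2 for s in stress]

# Correlation over the full range of stress levels
r_full = pearson_r(stress, performance)

# Correlation when only the low-stress half of the range is sampled
low_half = [(s, p) for s, p in zip(stress, performance) if s <= 5]
r_low = pearson_r([s for s, _ in low_half], [p for _, p in low_half])

print(f"full range:       r = {r_full:.2f}")
print(f"restricted range: r = {r_low:.2f}")
```

The same data therefore support either a "no relationship" or a "strong positive relationship" conclusion depending on which part of the range was sampled, which is why the sampled range should always be reported alongside r.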

Restricted Range producing Zero ‘r’

Restricting Range to Produce +ve ‘r’

PARTIAL CORRELATION
• Partialling out a variable is used when you wish to eliminate the influence of a third variable on the correlation between two other variables. It simply means controlling the influence of that variable.
• Other terms for partialling out are ‘holding constant’ and ‘controlling for’.
• The partial correlation coefficient, which like other correlations takes values between -1 and +1, is essentially an ordinary bivariate correlation, except that some third variable is being controlled for.
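For one control variable, the partial correlation can be obtained from the three ordinary correlations with the standard first-order formula, r_xy.z = (r_xy - r_xz·r_yz) / √((1 - r_xz²)(1 - r_yz²)). The sketch below is a minimal illustration: the function name is hypothetical and all input correlations are made-up values, not results from the salary data.

```python
from math import sqrt

def partial_r(r_xy, r_xz, r_yz):
    """First-order partial correlation of X and Y, controlling for Z."""
    denominator = sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    if denominator == 0:
        raise ValueError("undefined when Z correlates perfectly with X or Y")
    return (r_xy - r_xz * r_yz) / denominator

# Hypothetical correlations, chosen only to show three typical outcomes
print(round(partial_r(0.60, 0.50, 0.50), 3))   # Z related to both: r drops
print(round(partial_r(0.60, 0.00, 0.00), 3))   # Z unrelated: r unchanged
print(round(partial_r(0.60, -0.30, 0.30), 3))  # opposite signs: r rises
```

The last case shows that controlling for a third variable can increase a correlation as well as reduce it.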

EXAMPLE OF PARTIAL CORRELATION
• We will illustrate this using the Chap 13 data set, with data from 40 employees on their age, their current salary, and their starting salary.
• A previous analysis had shown a significant Pearson’s ‘r’ of +0.735 between starting salary and current salary, but this could be an artifact of age, since age is likely to affect both.
• A partial correlation was computed to ‘hold constant’ or ‘partial out’ the variable of age in the relationship between starting and current salary, to check whether this assumption is correct.

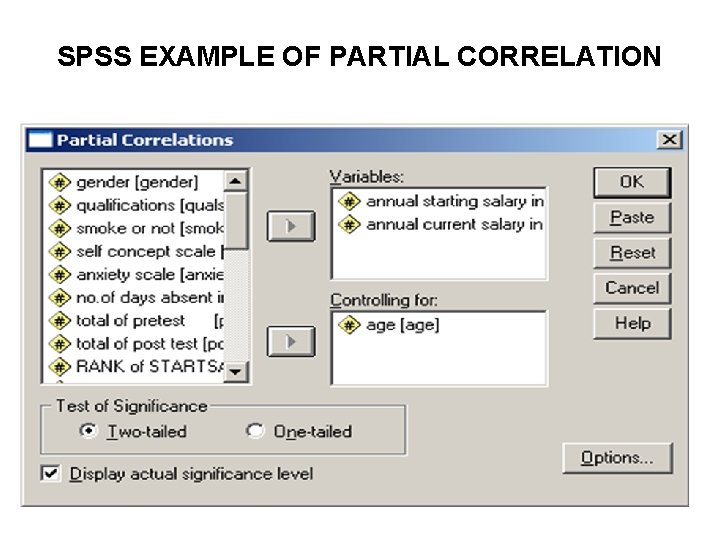

PARTIAL CORRELATION SPSS
1. Select Analyze and click on Correlate.
2. Choose Partial, which brings the Partial Correlations box into view.
3. Select the variables that represent starting salary and current salary and move them into the Variables box.
4. Select the age variable and move it into the Controlling for box.
5. Click the two-tail option in the Test of Significance box.
6. To obtain the correlation between starting salary and current salary without holding age constant, click Options and select Zero order correlations.
7. Click Continue then OK. Two tables are produced.

SPSS EXAMPLE OF PARTIAL CORRELATION

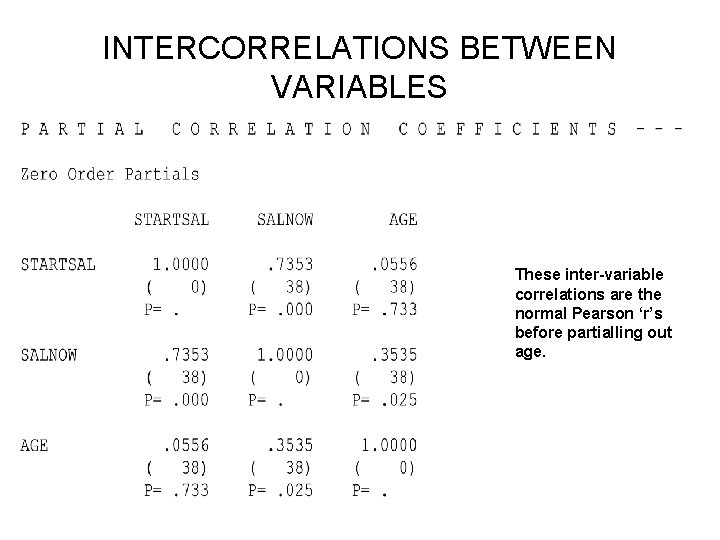

INTERCORRELATIONS BETWEEN VARIABLES These inter-variable correlations are the normal Pearson ‘r’s before partialling out age.

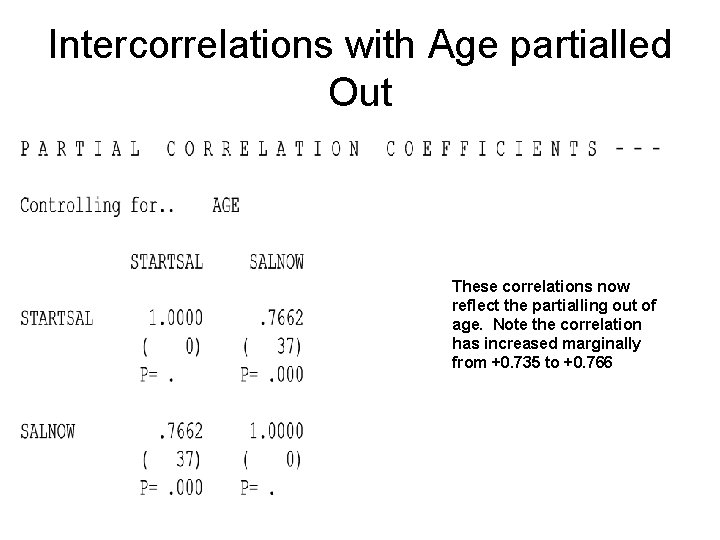

Intercorrelations with Age Partialled Out
These correlations now reflect the partialling out of age. Note that the correlation between starting salary and current salary has increased marginally, from +0.735 to +0.766.

INTERPRETATION OF PARTIAL CORRELATION PRINTOUT
• The first table provides the usual bivariate (zero-order) correlations, without age held constant. It displays a correlation of +0.7353 between starting salary and current salary, p < 0.001.
• The second table provides the partial correlation coefficient, with age held constant, and the p value in the usual matrix format. The partial correlation between starting salary and current salary with age held constant is +0.7662, p < 0.001, which is slightly stronger than the zero-order correlation of +0.7353.
• Age therefore has only a small effect on the correlation between starting salary and current salary; controlling for it does not remove the relationship, so the correlation is not an artifact of age.
• The null hypothesis that there is no significant relationship between starting salary and current salary with age partialled out must be rejected.
