Conversational Sensemaking
Alun Preece, Will Webberley (Cardiff), Dave Braines (IBM UK)

Introduction

The story so far
• Human-centric sensing (2012)
  Srivastava, M., Abdelzaher, T., & Szymanski, B. (2012). Human-centric sensing. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 370(1958), 176-197.
• CE-SAM: a conversational interface for ISR mission support (2013)
  Pizzocaro, D., Parizas, C., Preece, A., Braines, D., Mott, D., & Bakdash, J. Z. (2013, May). CE-SAM: a conversational interface for ISR mission support. In SPIE Defense, Security, and Sensing (pp. 87580I-87580I). International Society for Optics and Photonics.
• Human-machine conversations to support multi-agency missions (2014)
  Preece, A., Braines, D., Pizzocaro, D., & Parizas, C. (2014). Human-machine conversations to support multi-agency missions. ACM SIGMOBILE Mobile Computing and Communications Review, 18(1), 75-84.
• Conversational sensing (2014)
  Preece, A., Gwilliams, C., Parizas, C., Pizzocaro, D., Bakdash, J. Z., & Braines, D. (2014, May). Conversational sensing. In SPIE Sensing Technology + Applications (pp. 91220I-91220I). International Society for Optics and Photonics.
• Conversational sensemaking (2015)

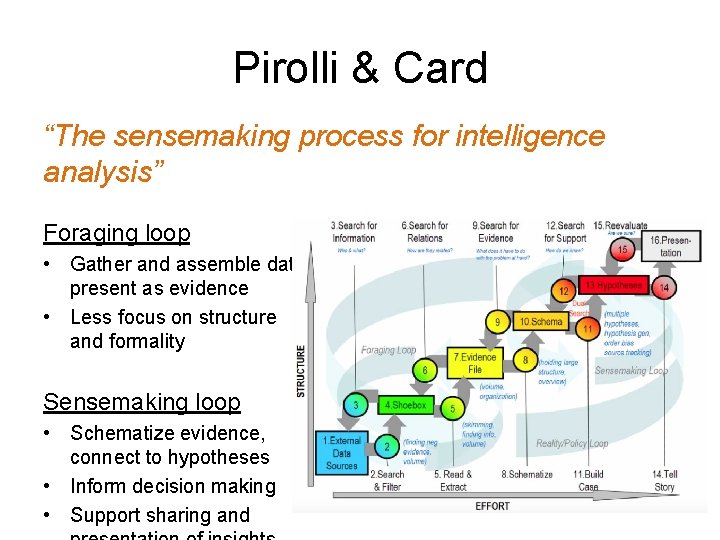

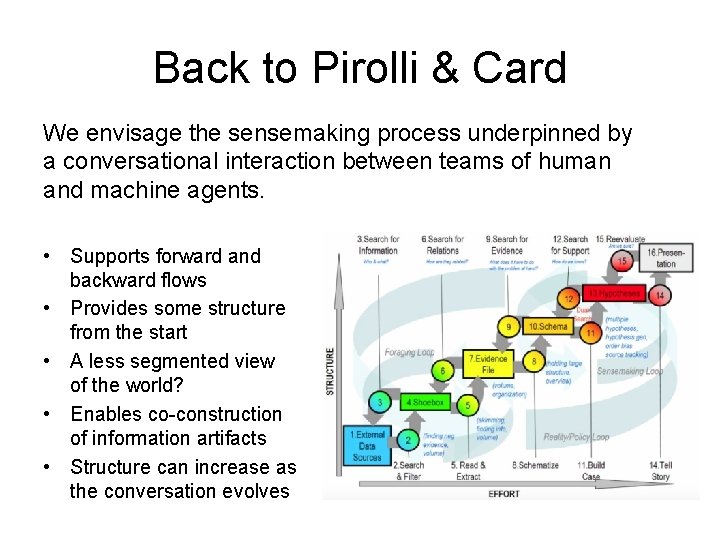

Pirolli & Card: “The sensemaking process for intelligence analysis”
Foraging loop
• Gather and assemble data, present as evidence
• Less focus on structure and formality
Sensemaking loop
• Schematize evidence, connect to hypotheses
• Inform decision making
• Support sharing and

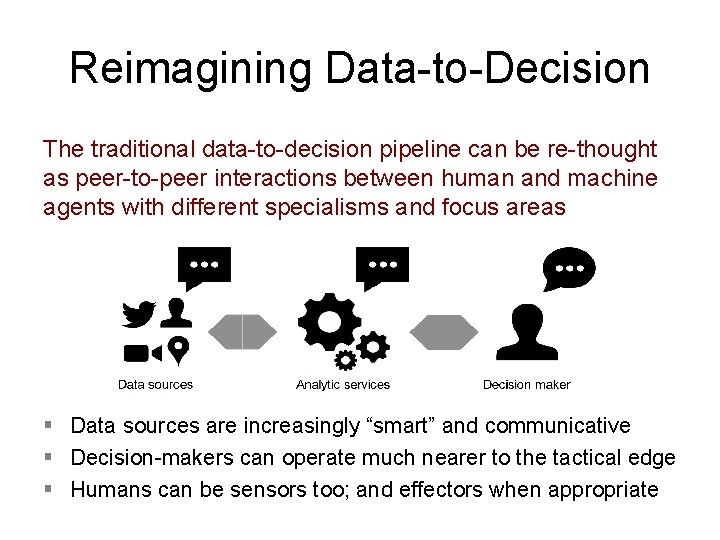

Reimagining Data-to-Decision
The traditional data-to-decision pipeline can be re-thought as peer-to-peer interactions between human and machine agents with different specialisms and focus areas:
§ Data sources are increasingly “smart” and communicative
§ Decision-makers can operate much nearer to the tactical edge
§ Humans can be sensors too, and effectors when appropriate

Back to Pirolli & Card
We envisage the sensemaking process underpinned by a conversational interaction between teams of human and machine agents.
• Supports forward and backward flows
• Provides some structure from the start
• A less segmented view of the world?
• Enables co-construction of information artifacts
• Structure can increase as the conversation evolves

Human-Machine Conversational Model

Background: Format for conversation
An appropriate form for human-machine interaction is a challenge:
§ humans prefer natural language (NL) or images
§ these forms are difficult for machines to process, leading to ambiguity and miscommunication
Compromise: controlled natural language (CNL)
Example in ITA Controlled English (CE):
there is a person named p1 that is known as ‘John Smith’ and is a person of interest.
low complexity | no ambiguity
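To illustrate why a controlled form is easy for machines to process, the following is a minimal sketch of reading one CE-style sentence into a structured record. It is not the ITA CE parser: the regular expression, the function name `parse_ce_fact`, and the returned dictionary layout are assumptions made for illustration, and the pattern covers only sentences of the shape "there is a <concept> named <name> that <clause> and <clause>." with straight quotes.

```python
import re

# Hypothetical pattern for one sentence shape only:
# "there is a <concept> named <name> that <clause> and <clause> ... ."
CE_SENTENCE = re.compile(
    r"^there is an? (?P<concept>[\w ]+?) named "
    r"(?P<name>'[^']+'|\S+) that (?P<body>.+?)\.?$"
)


def parse_ce_fact(sentence):
    """Return concept, instance name and clauses, or None if the shape differs."""
    match = CE_SENTENCE.match(sentence.strip())
    if match is None:
        return None
    # Naive split: a quoted value containing " and " would be cut in two.
    clauses = [clause.strip() for clause in match.group("body").split(" and ")]
    return {
        "concept": match.group("concept"),
        "name": match.group("name").strip("'"),
        "clauses": clauses,
    }


fact = parse_ce_fact(
    "there is a person named p1 that is known as 'John Smith' "
    "and is a person of interest."
)
```

Because the sentence follows a fixed pattern, a few lines of matching recover the concept, the instance name, and each property clause. The same request in free natural language would need far more machinery to interpret reliably.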

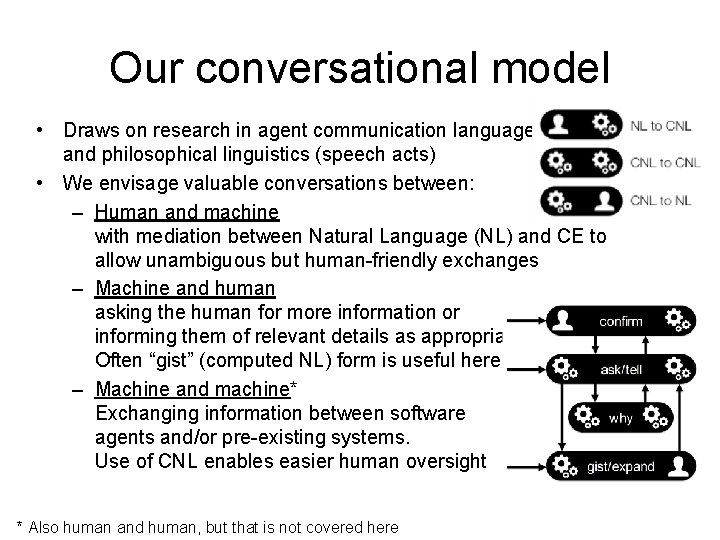

Our conversational model
• Draws on research in agent communication languages and philosophical linguistics (speech acts)
• We envisage valuable conversations between:
  – Human and machine: with mediation between Natural Language (NL) and CE to allow unambiguous but human-friendly exchanges
  – Machine and human: asking the human for more information or informing them of relevant details as appropriate. Often the “gist” (computed NL) form is useful here
  – Machine and machine*: exchanging information between software agents and/or pre-existing systems. Use of CNL enables easier human oversight
* Also human and human, but that is not covered here

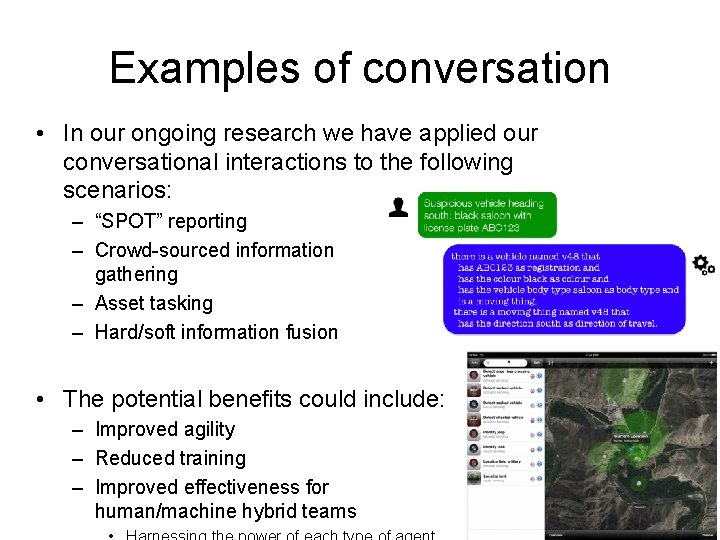

Examples of conversation
• In our ongoing research we have applied our conversational interactions to the following scenarios:
  – “SPOT” reporting
  – Crowd-sourced information gathering
  – Asset tasking
  – Hard/soft information fusion
• The potential benefits could include:
  – Improved agility
  – Reduced training
  – Improved effectiveness for human/machine hybrid teams

“Bag of words” NLP
• The purpose of the conversational interaction is to allow humans to use natural English language (NL)
• NL is converted to CE through simple “bag of words” NL processing
  – Consult the knowledge base for matches and synonyms
  – Covering the model (concepts, relations, rules) and the “facts”
• Confirmation of interpretation can (optionally) be sent to the user
  – Confirmation is in CE: the machine format, but human readable
  – Not always appropriate to share
• Model can be expanded through the conversation too
  – Learning new terms
  – Developing specialisations
  – Extending relations
• Could be integrated with a deeper linguistic parser
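The matching step above can be sketched roughly as follows. This is an illustrative approximation, not the processing used in the authors' system: the `KNOWLEDGE_BASE` contents, the synonym lists, and the `interpret` function are all hypothetical. Word order is ignored, single-word synonyms are looked up in the bag of words, and multi-word synonyms fall back to a phrase check.

```python
import re

# Hypothetical miniature knowledge base: canonical term -> (kind, synonyms).
KNOWLEDGE_BASE = {
    "protest": ("concept", {"protest", "demonstration", "rally"}),
    "is located at": ("relation", {"at", "in", "near"}),
    "Central Square": ("instance", {"central square", "the square"}),
    "Blue Group": ("instance", {"blue group"}),
}


def interpret(text):
    """Return the knowledge-base terms mentioned in text, ignoring word order."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    bag = set(words)
    normalised = " " + " ".join(words) + " "
    matches = {}
    for term, (kind, synonyms) in KNOWLEDGE_BASE.items():
        for synonym in synonyms:
            if " " in synonym:
                # Multi-word synonyms need a phrase check on word boundaries.
                hit = f" {synonym} " in normalised
            else:
                # Single words are looked up directly in the bag.
                hit = synonym in bag
            if hit:
                matches[term] = kind
                break
    return matches


found = interpret("Big demonstration near Central Square by the Blue Group")
```

The matched terms would then be assembled into a CE sentence and, optionally, echoed back to the user as confirmation. A new synonym learned during the conversation would simply be added to the relevant entry.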

Conversational Foraging

Introducing Moira
• “Moira”: Mobile Intelligence Reporting Application
• A machine agent able to engage in conversation
• Access to CE knowledge base
  – Can read all available knowledge, explore and answer questions
  – Can help the human user contribute new knowledge (model, fact, rule)
• Contextual operation
  – Aware of the user's role, location, status
  – Able to alert the user to “interesting” information

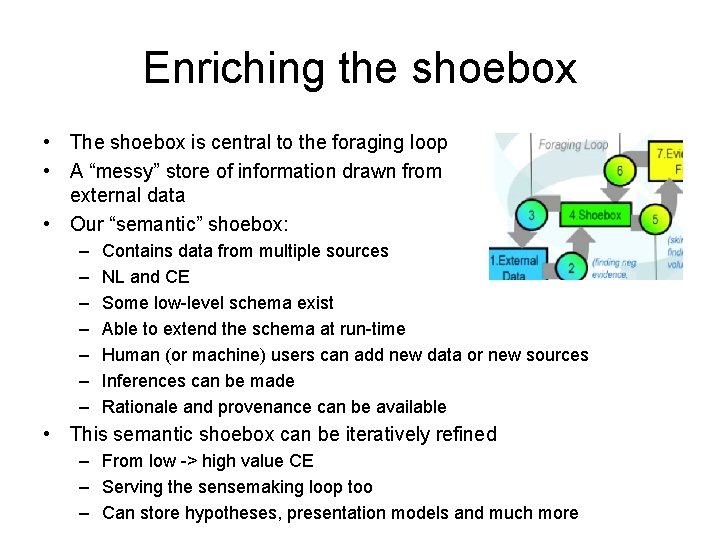

Enriching the shoebox
• The shoebox is central to the foraging loop
• A “messy” store of information drawn from external data
• Our “semantic” shoebox:
  – Contains data from multiple sources
  – NL and CE
  – Some low-level schema exist
  – Able to extend the schema at run-time
  – Human (or machine) users can add new data or new sources
  – Inferences can be made
  – Rationale and provenance can be available
• This semantic shoebox can be iteratively refined
  – From low -> high value CE
  – Serving the sensemaking loop too
  – Can store hypotheses, presentation models and much more

A sensemaking blackboard
• This “semantic shoebox” is actually a sensemaking blackboard
  – An open “sandpit” blackboard, not task/solution specific
• The agents:
  – Human users
    • Define/extend the model
    • Capture local knowledge & insight
    • Direct agent activities
  – Machine agents
    • Execute logical inference rules (general)
    • Existing software algorithms (specialised)
    • Control through triggers, alerts, commands etc.
• The single language is ITA CE, with “rationale” for explanation
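As a rough sketch of the blackboard idea, the following shows a shared store to which human or machine agents post sentences, with triggers that let machine agents react and with rationale kept for each derived item. The class names, the `alert_agent` trigger, and the plain-text treatment of CE sentences are assumptions for illustration and do not reflect the actual CE store.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Fact:
    sentence: str          # CE-style sentence, held here as plain text
    source: str            # agent that contributed it (human or machine)
    rationale: tuple = ()  # sentences this fact was derived from


@dataclass
class Blackboard:
    facts: list = field(default_factory=list)
    triggers: list = field(default_factory=list)

    def subscribe(self, trigger):
        """Register a machine agent as a callable (fact, board) -> iterable of Fact."""
        self.triggers.append(trigger)

    def post(self, fact):
        """Add a fact once, then let every trigger react to it."""
        if any(known.sentence == fact.sentence for known in self.facts):
            return
        self.facts.append(fact)
        for trigger in list(self.triggers):
            for derived in trigger(fact, self):
                self.post(derived)

    def why(self, sentence):
        """Answer "why?" by returning the rationale recorded for a sentence."""
        for known in self.facts:
            if known.sentence == sentence:
                return known.rationale
        return ()


def alert_agent(fact, board):
    """Hypothetical machine agent: raises an alert for every protest posted."""
    if fact.sentence.startswith("there is a protest named"):
        yield Fact(
            sentence="there is an alert about: " + fact.sentence,
            source="alert_agent",
            rationale=(fact.sentence,),
        )


board = Blackboard()
board.subscribe(alert_agent)
board.post(Fact("there is a protest named 'Central Square protest'.", "human"))
```

Here the human contribution and the machine-derived alert sit in the same store in the same language, and `board.why(...)` on the alert returns the sentence that caused it.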

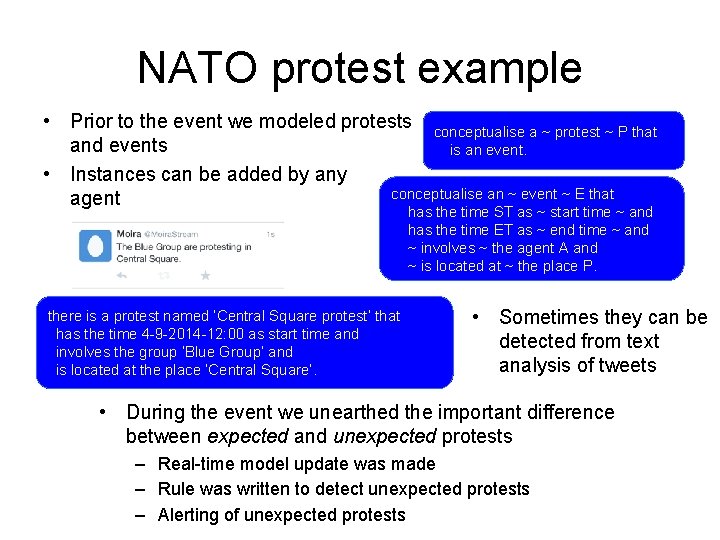

NATO protest example
• Prior to the event we modeled protests and events

  conceptualise a ~ protest ~ P that is an event.

  conceptualise an ~ event ~ E that has the time ST as ~ start time ~ and has the time ET as ~ end time ~ and ~ involves ~ the agent A and ~ is located at ~ the place P.

• Instances can be added by any agent

  there is a protest named ‘Central Square protest’ that has the time 4-9-2014-12:00 as start time and involves the group ‘Blue Group’ and is located at the place ‘Central Square’.

• Sometimes they can be detected from text analysis of tweets
• During the event we unearthed the important difference between expected and unexpected protests
  – Real-time model update was made
  – Rule was written to detect unexpected protests
  – Alerting of unexpected protests
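The slides do not give the rule that separated expected from unexpected protests. As a hedged sketch only, one plausible reading is that a detected protest counts as expected when a pre-registered protest exists for the same place and group. The `Protest` record, the matching criterion, and the sample values 'City Hall' and 'Red Group' are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protest:
    name: str
    place: str
    group: str


def unexpected_protests(detected, expected):
    """Return detected protests with no expected protest at the same place and group."""
    expected_keys = {(p.place, p.group) for p in expected}
    return [p for p in detected if (p.place, p.group) not in expected_keys]


# Modeled before the event.
expected = [Protest("Central Square protest", "Central Square", "Blue Group")]

# Detected during the event, e.g. from text analysis of tweets.
detected = [
    Protest("tweet-derived protest 1", "Central Square", "Blue Group"),
    Protest("tweet-derived protest 2", "City Hall", "Red Group"),  # hypothetical
]

alerts = unexpected_protests(detected, expected)
```

In the system described, the equivalent logic would be written as a CE rule over the protest model, so that the alert carries its rationale.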

Conversational Sensemaking

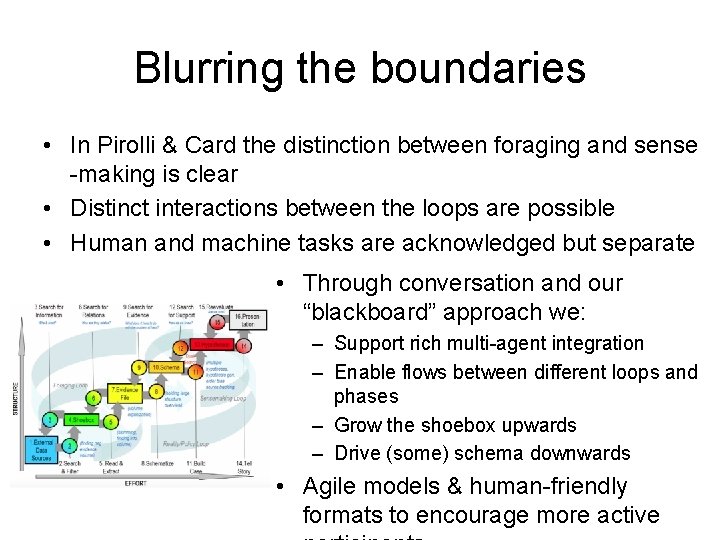

Blurring the boundaries
• In Pirolli & Card the distinction between foraging and sensemaking is clear
• Distinct interactions between the loops are possible
• Human and machine tasks are acknowledged but separate
• Through conversation and our “blackboard” approach we:
  – Support rich multi-agent integration
  – Enable flows between different loops and phases
  – Grow the shoebox upwards
  – Drive (some) schema downwards
• Agile models & human-friendly formats to encourage more active

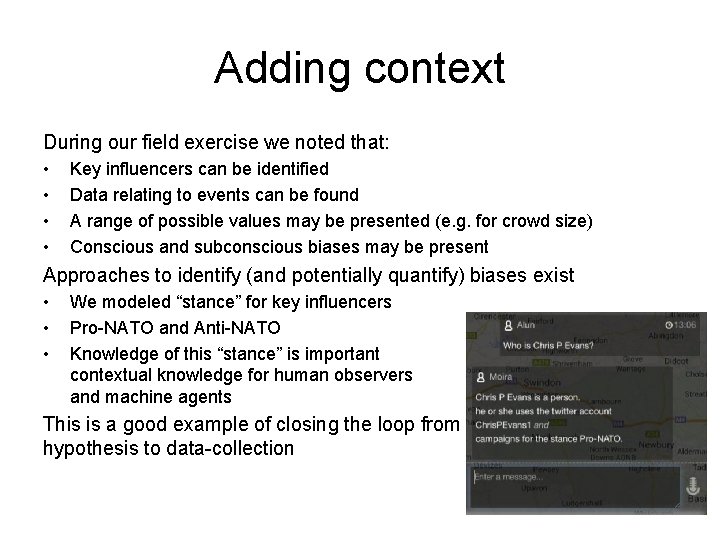

Adding context
During our field exercise we noted that:
• Key influencers can be identified
• Data relating to events can be found
• A range of possible values may be presented (e.g. for crowd size)
• Conscious and subconscious biases may be present
• Approaches to identify (and potentially quantify) biases exist
We modeled “stance” for key influencers: Pro-NATO and Anti-NATO
• Knowledge of this “stance” is important contextual knowledge for human observers and machine agents
• This is a good example of closing the loop from hypothesis to data collection

Moving to richer models
• We can “grow the shoebox” as we progress to higher levels
• Rather than “increased schematization” we introduce richer models, or refine models through conversation
• Hypotheses can be modeled, subjectivity can be captured (or computed)
  – Including propagation through inference or other computation
• Rationale (asking “why?”) can link higher level models to lower level information
• Related work:
  – “Collaborative human-machine analysis using a controlled natural language”, Mott et al. [The next presentation!]
  – Argumentation, trust and subjective logic

Presenting through storytelling
• Using connected hypotheses, evidence and data to tell a story
• Asking “why?” to uncover rationale for information
• Apply narrative framings to the body of knowledge
• Also expressible in CE
  – A generalised abstraction of storytelling that can be applied to any domain
  – Organising the domain into an episodic sequence
  – Applying additional multi-modal information

Wrapping up

Summary
• Envisage Pirolli & Card feedback loops as a series of human-machine conversations
• Helping to harness each agent's strengths?
  – Humans: interpreting & hypothesising
  – Machines: large scale data, pattern collection
• Rationale to promote transparency and trust
• Enabling debate and argument
  – Reveal conflicts (and agreements)
  – Explore (and maybe reconcile) differences
• Current focus is on text communications
• Future experiments:
  – Mix of human and machine-based sensing
  – Grow links with argumentation research

Conversational Sensemaking
SPIE DSS 2015: Next Generation Analyst III (Human Machine Interaction)

Research was sponsored by US Army Research Laboratory and the UK Ministry of Defence and was accomplished under Agreement Number W911NF-06-3-0001. The views and conclusions contained in this document are those of the authors and should not be interpreted as representing the official policies, either expressed or implied, of the US Army Research Laboratory, the U.S. Government, the UK Ministry of Defense, or the UK Government. The US and UK Governments are authorized to reproduce and distribute reprints for Government purposes notwithstanding any copyright notation hereon.

Many of the examples in this paper were informed by collaborative work between the authors and members of Cardiff Universities Police Science Institute, http://www.upsi.org.uk. We especially thank Martin Innes, Colin Roberts, and Sarah Tucker for their valuable insights on policing and community reaction.

Any questions? dave_braines@uk.ibm.com
