Contrastive Training for Improved Out-of-Distribution Detection

Student: Kulakov Mykhailo

Tutor: Paschali Magdalini

Introduction

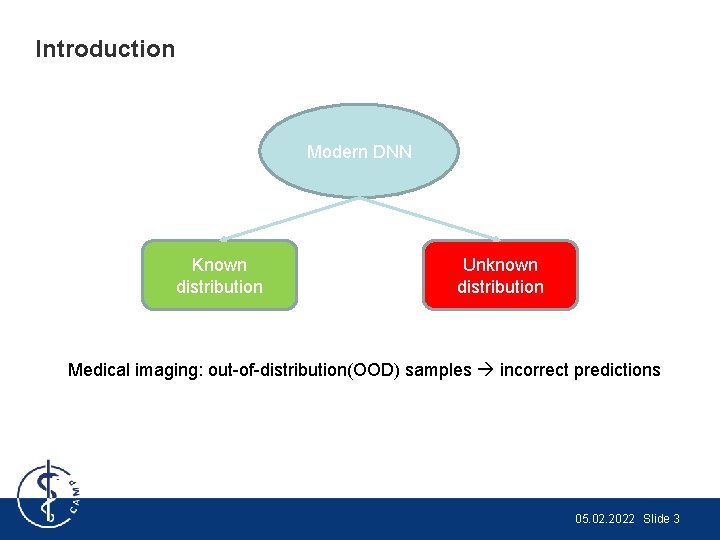

- Modern DNN: known distribution vs. unknown distribution.
- Medical imaging: out-of-distribution (OOD) samples lead to incorrect predictions.

05.02.2022, Slide 3

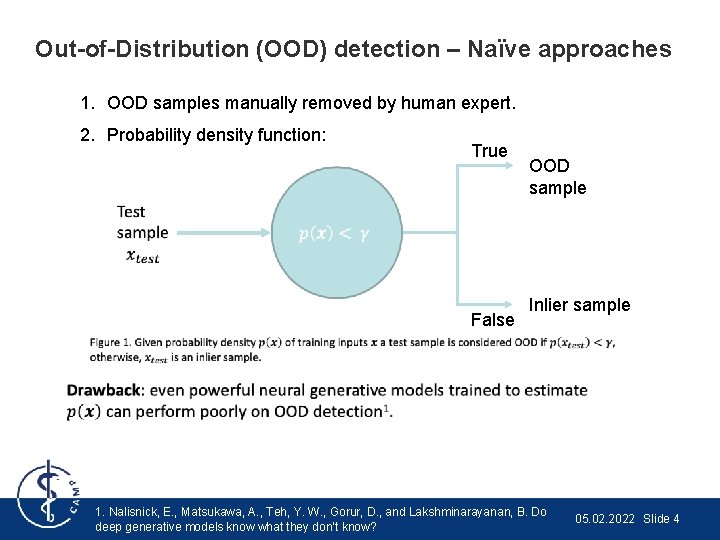

Out-of-Distribution (OOD) detection – Naïve approaches

1. OOD samples are manually removed by a human expert.
2. Probability density function (figure labels: True / False; OOD sample / inlier sample).

[1] Nalisnick, E., Matsukawa, A., Teh, Y. W., Gorur, D., and Lakshminarayanan, B. Do deep generative models know what they don't know?

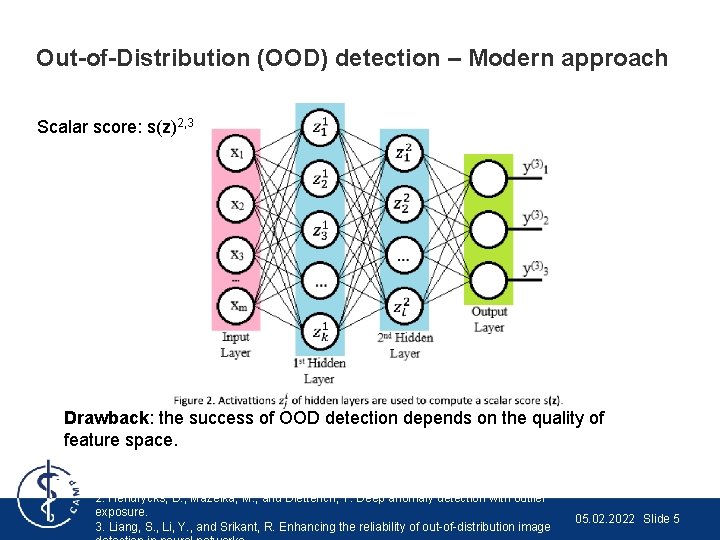

Out-of-Distribution (OOD) detection – Modern approach

- Scalar score: $s(z)$ [2, 3]
- Drawback: the success of OOD detection depends on the quality of the feature space.

[2] Hendrycks, D., Mazeika, M., and Dietterich, T. Deep anomaly detection with outlier exposure.

[3] Liang, S., Li, Y., and Srikant, R. Enhancing the reliability of out-of-distribution image

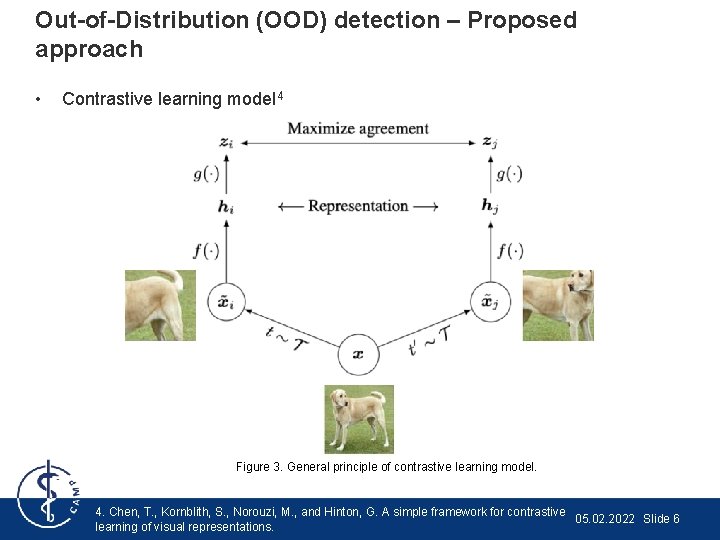

Out-of-Distribution (OOD) detection – Proposed approach

- Contrastive learning model [4]

Figure 3. General principle of the contrastive learning model.

[4] Chen, T., Kornblith, S., Norouzi, M., and Hinton, G. A simple framework for contrastive learning of visual representations.

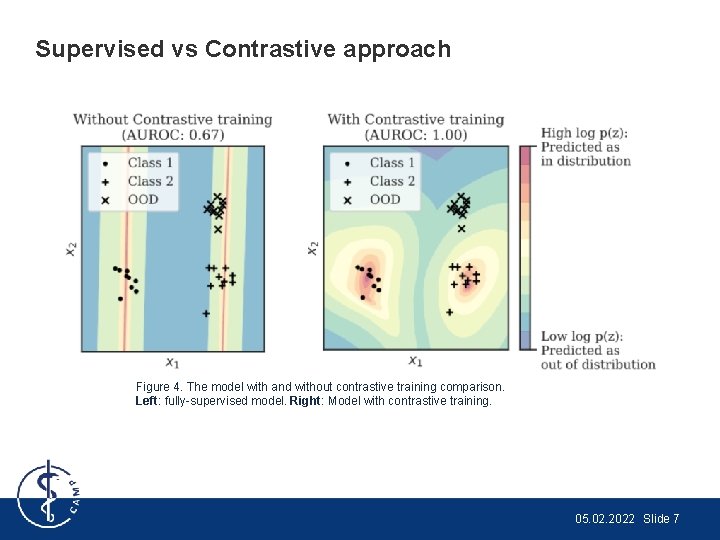

Supervised vs. contrastive approach

Figure 4. Comparison of the model with and without contrastive training. Left: fully supervised model. Right: model with contrastive training.

Proposed Method

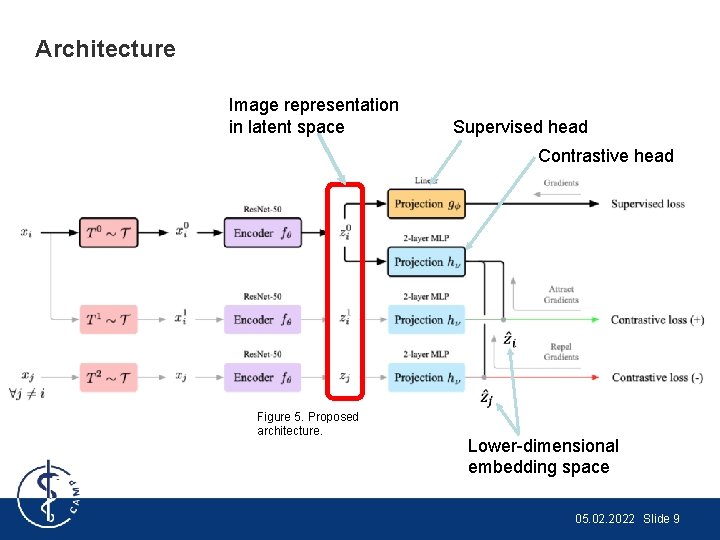

Architecture

Figure 5. Proposed architecture. Figure labels: image representation in latent space; supervised head; contrastive head; lower-dimensional embedding space.

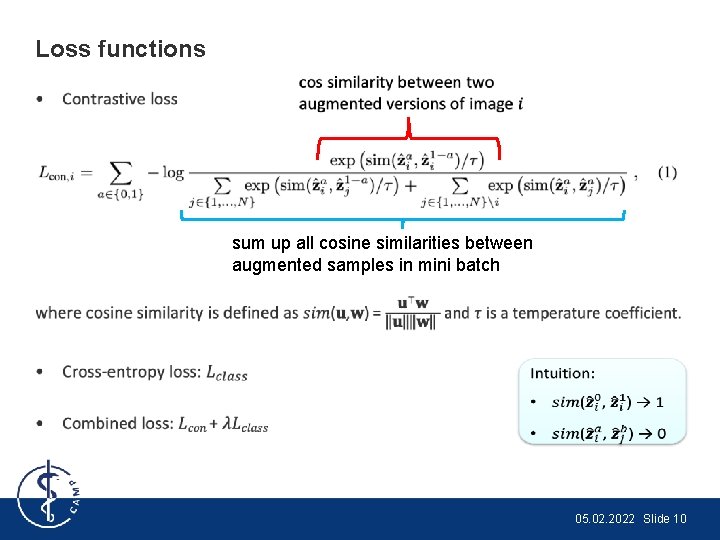

Loss functions

- Sum up all cosine similarities between augmented samples in the mini-batch.
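The bullet on cosine similarities can be made concrete with a small sketch. It assumes the contrastive head is trained with the normalized temperature-scaled cross-entropy (NT-Xent) loss from the SimCLR framework by Chen et al.; the slide itself does not state the formula. The function names, the pairing convention (`z[2k]` and `z[2k+1]` are two augmentations of the same image), and the temperature value are illustrative assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nt_xent_loss(z, temperature=0.5):
    """NT-Xent loss over a mini-batch of 2N embeddings.

    Assumption: z[2k] and z[2k + 1] are two augmented views of image k.
    """
    n = len(z)
    total = 0.0
    for i in range(n):
        j = i ^ 1  # index of the positive partner (0<->1, 2<->3, ...)
        # Similarities of sample i to every other sample in the batch.
        logits = [cosine(z[i], z[k]) / temperature for k in range(n) if k != i]
        positive = cosine(z[i], z[j]) / temperature
        log_denominator = math.log(sum(math.exp(v) for v in logits))
        # -log( exp(positive) / sum_k exp(sim_ik) )
        total += log_denominator - positive
    return total / n


# Two views of the same image agree in `aligned` and disagree in `shuffled`.
aligned = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
shuffled = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
print(nt_xent_loss(aligned) < nt_xent_loss(shuffled))
```

The loss is small when the two views of an image are close to each other and far from every other sample in the batch, which is the behavior the bullet describes.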

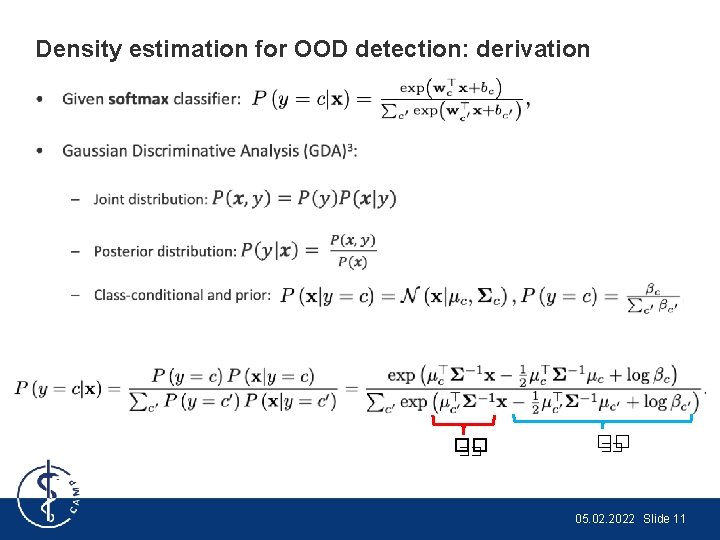

Density estimation for OOD detection: derivation

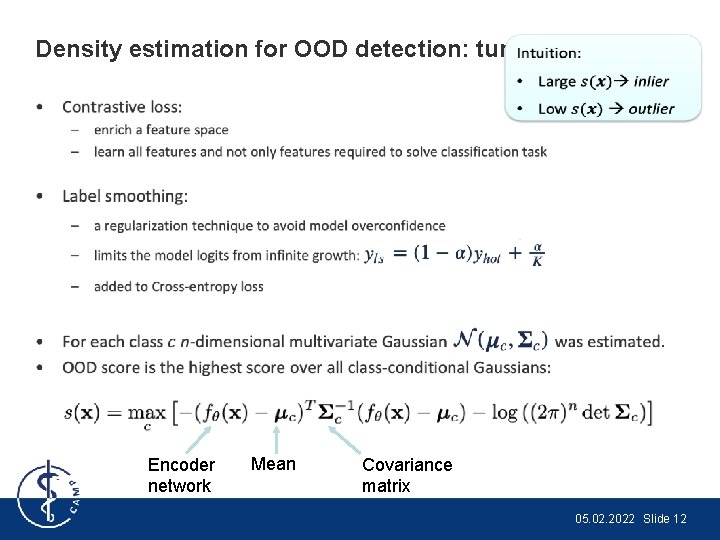

Density estimation for OOD detection: tuning

- Encoder network
- Mean
- Covariance matrix
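The slide names an encoder network, a mean, and a covariance matrix. A minimal sketch of how these pieces could fit together is given here, assuming that one multivariate Gaussian is fitted per class on the encoder embeddings of the training set and that the OOD score of a test embedding is its highest class log-density. All function names are hypothetical, and the small `ridge` term added to the covariance diagonal is an assumption made only to keep the matrix invertible.

```python
import math


def mean_vector(rows):
    return [sum(col) / len(rows) for col in zip(*rows)]


def covariance_matrix(rows, mean, ridge=1e-6):
    d = len(mean)
    cov = [[0.0] * d for _ in range(d)]
    for r in rows:
        diff = [x - m for x, m in zip(r, mean)]
        for a in range(d):
            for b in range(d):
                cov[a][b] += diff[a] * diff[b] / len(rows)
    for a in range(d):
        cov[a][a] += ridge  # assumption: small ridge for numerical stability
    return cov


def cholesky(m):
    """Lower-triangular L with L * L^T = m (m must be positive definite)."""
    d = len(m)
    low = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low


def gaussian_log_density(z, mean, cov):
    d = len(z)
    low = cholesky(cov)
    diff = [x - m for x, m in zip(z, mean)]
    y = []  # forward substitution: solve low * y = diff
    for i in range(d):
        y.append((diff[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    mahalanobis = sum(v * v for v in y)
    log_det = 2.0 * sum(math.log(low[i][i]) for i in range(d))
    return -0.5 * (mahalanobis + log_det + d * math.log(2.0 * math.pi))


def fit_class_gaussians(embeddings, labels):
    by_class = {}
    for z, c in zip(embeddings, labels):
        by_class.setdefault(c, []).append(z)
    params = {}
    for c, rows in by_class.items():
        mu = mean_vector(rows)
        params[c] = (mu, covariance_matrix(rows, mu))
    return params


def inlier_score(z, params):
    """Highest class log-density; a low value suggests an OOD sample."""
    return max(gaussian_log_density(z, mu, cov) for mu, cov in params.values())


train = [[0, 0], [1, 0], [0, 1], [1, 1], [10, 10], [11, 10], [10, 11], [11, 11]]
params = fit_class_gaussians(train, [0, 0, 0, 0, 1, 1, 1, 1])
print(inlier_score([0.5, 0.5], params) > inlier_score([5.0, 5.0], params))
```

In this toy example the point between the two class clusters receives a much lower score than a point at a class center, so a threshold on the score would flag it as OOD.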

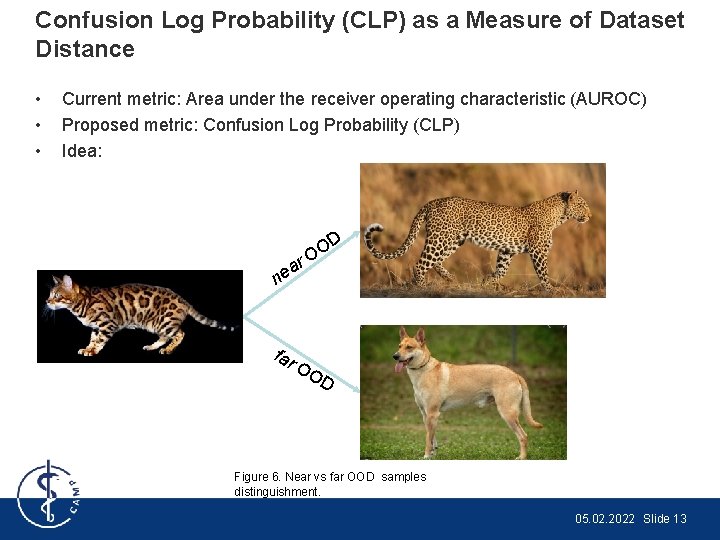

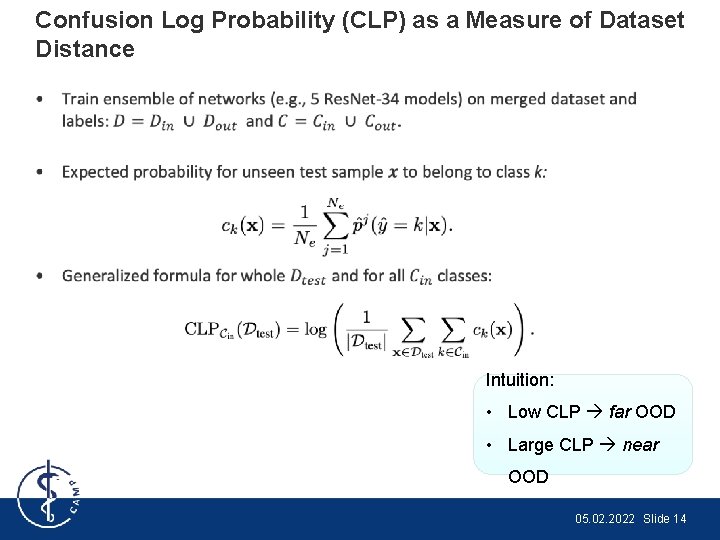

Confusion Log Probability (CLP) as a Measure of Dataset Distance

- Current metric: area under the receiver operating characteristic curve (AUROC).
- Proposed metric: Confusion Log Probability (CLP).
- Idea: distinguish near OOD from far OOD samples.

Figure 6. Distinguishing near vs. far OOD samples.
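For reference, AUROC can be computed directly from detector scores. The sketch below uses the pairwise (rank) formulation and assumes a score where a higher value means "more likely OOD" (for example, a negated log-density); the function name and the toy scores are illustrative only.

```python
def auroc(inlier_scores, ood_scores):
    """Probability that a random OOD sample scores higher than a random inlier.

    Assumption: higher score = more likely OOD. Ties count as half a win.
    """
    wins = 0.0
    for o in ood_scores:
        for i in inlier_scores:
            if o > i:
                wins += 1.0
            elif o == i:
                wins += 0.5
    return wins / (len(ood_scores) * len(inlier_scores))


print(auroc([0.1, 0.2, 0.4], [0.3, 0.8, 0.9]))
```

An AUROC of 1.0 means every OOD sample scores above every inlier, and 0.5 corresponds to chance. The value depends on which OOD dataset is used, which is the motivation for a separate measure of how near or far that dataset is.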

Confusion Log Probability (CLP) as a Measure of Dataset Distance

- Intuition:
  - Low CLP indicates far OOD.
  - High CLP indicates near OOD.
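The intuition can be illustrated with a small sketch. It assumes that CLP is the logarithm of the average probability mass that an ensemble of classifiers, trained jointly on inlier and outlier classes, assigns to inlier classes for the OOD test samples. The slides do not give the formula, so this definition, the function name, and the toy probabilities are assumptions.

```python
import math


def confusion_log_probability(ensemble_probs, inlier_classes):
    """ensemble_probs[m][n][k]: probability model m assigns class k to sample n.

    Assumption: the samples are OOD test samples and the classifiers were
    trained on the union of inlier and outlier classes.
    """
    n_models = len(ensemble_probs)
    n_samples = len(ensemble_probs[0])
    total = 0.0
    for n in range(n_samples):
        for k in inlier_classes:
            # Ensemble-averaged probability of confusing sample n with class k.
            total += sum(ensemble_probs[m][n][k] for m in range(n_models)) / n_models
    return math.log(total / n_samples)


# Classes 0 and 1 are inlier classes; class 2 is the outlier class.
far_ood = [[[0.01, 0.01, 0.98]]]   # one model, one sample, rarely confused
near_ood = [[[0.30, 0.20, 0.50]]]  # one model, one sample, often confused
print(confusion_log_probability(far_ood, [0, 1])
      < confusion_log_probability(near_ood, [0, 1]))
```

Under this assumed definition, OOD samples that are rarely confused with inlier classes give a low CLP (far OOD), while samples that are frequently confused give a high CLP (near OOD).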

Experiments & Results

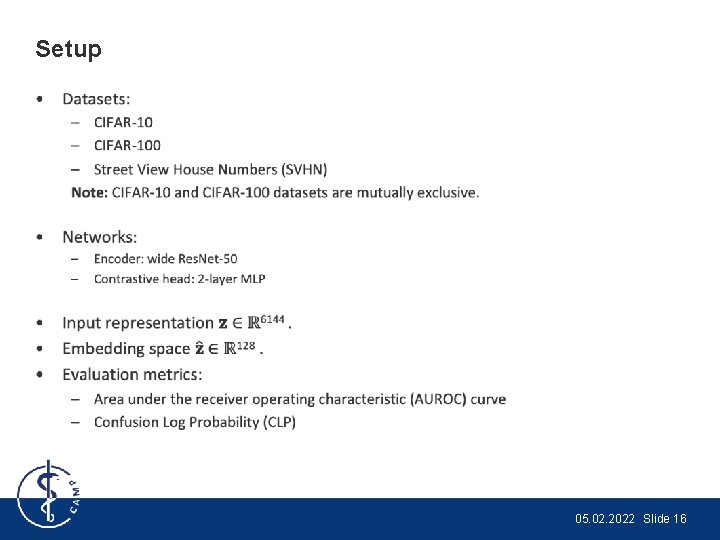

Setup

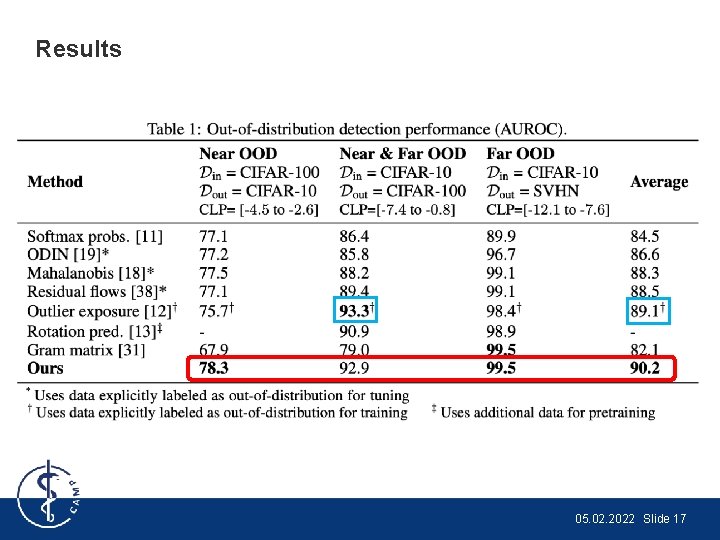

Results

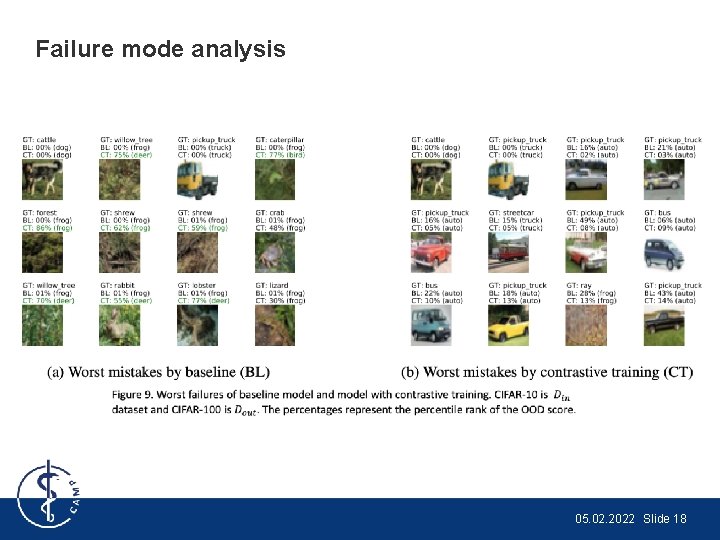

Failure mode analysis

Conclusion & Discussion

- A novel approach to detecting OOD samples using contrastive training.
- A new metric, Confusion Log Probability (CLP), was introduced to capture the differences between test samples from inlier and outlier distributions.
- The contrastive loss pushes image representations apart, while supervised training helps to cluster the samples by class.
- Density estimation with Gaussian distributions is used for OOD detection.
- Detecting near OOD samples is crucial for medical imaging.

Student Review

- Pros:
  - Very well structured, written in a clear way, and free of errors or inconsistencies.
  - The detailed introduction provides domain-specific background.
  - A novel approach in the domain of OOD detection was proposed, i.e., contrastive training.
  - A novel metric to measure the similarity between inlier and outlier samples was proposed (CLP).
  - Numerous experiments on three different datasets were conducted.
- Cons:
  - The density estimation section lacks clarity; no mathematical derivations were provided.
  - The authors claimed their solution is scalable and applicable to medical imaging, but no experiments were performed in that direction.
  - The approach was tested only on a classification task, while in medical imaging the segmentation task is more important (e.g., detecting cancer regions or pathologies).

- Slides: 21