Context-Based Adaptive Entropy Coding
Xiaolin Wu, McMaster University, Hamilton, Ontario, Canada

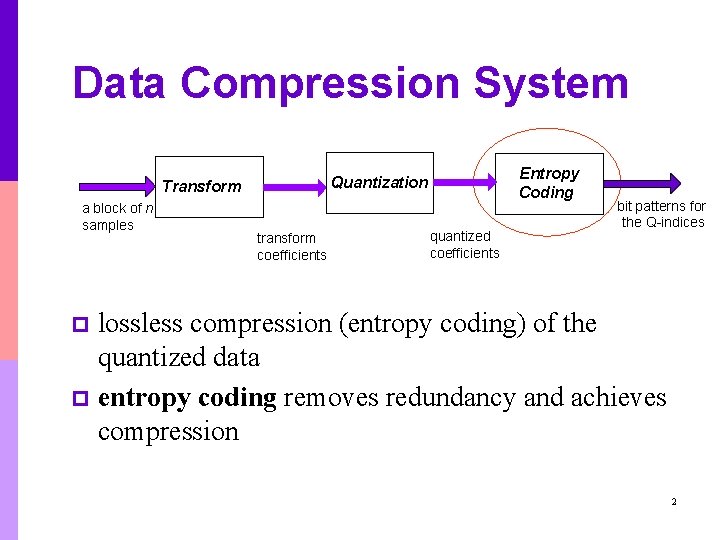

Data Compression System
- Pipeline: a block of n samples → Transform → transform coefficients → Quantization → quantized coefficients → Entropy Coding → bit patterns for the Q-indices.
- Entropy coding is the lossless compression of the quantized data: it removes redundancy and achieves compression.

Entropy Coding Techniques
- Huffman code
- Golomb-Rice code
- Arithmetic code
  - Optimal in the sense that it can approach the source entropy.
  - Easily adapts to non-stationary source statistics via context modeling (context selection and conditional probability estimation).
- Context modeling governs the performance of arithmetic coding.

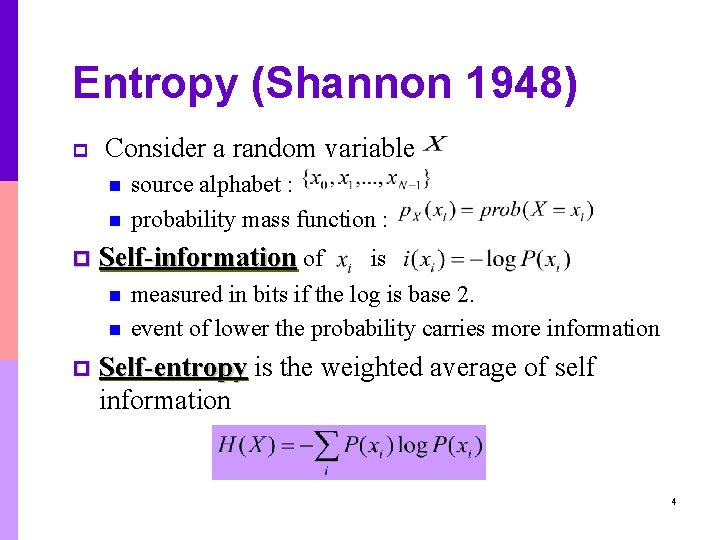

Entropy (Shannon 1948)
- Consider a random variable $X$ with source alphabet $\mathcal{X}$ and probability mass function $p(x) = \Pr\{X = x\}$.
- Self-information of $x$: $i(x) = -\log p(x)$, measured in bits if the log is base 2. An event of lower probability carries more information.
- Entropy is the weighted average of self-information: $H(X) = -\sum_{x \in \mathcal{X}} p(x) \log_2 p(x)$.
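A minimal Python sketch of the two definitions above: self-information in bits, and entropy as its probability-weighted average. The function names are our own, chosen for illustration.

```python
import math

def self_information(p: float) -> float:
    """Self-information -log2 p in bits of an event with probability p > 0."""
    return -math.log2(p)

def entropy(pmf: list[float]) -> float:
    """H(X) = sum_x p(x) * i(x); zero-probability symbols contribute nothing."""
    return sum(p * self_information(p) for p in pmf if p > 0)

# Rarer events carry more information.
print(self_information(0.5))                # 1.0
print(self_information(0.125))              # 3.0
print(entropy([0.5, 0.25, 0.125, 0.125]))   # 1.75
```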

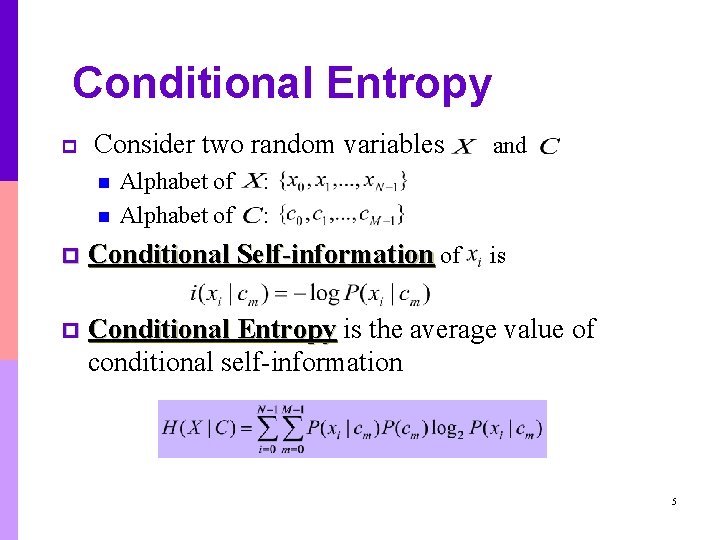

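Conditional Entropy
- Consider two random variables $X$ and $Y$ with alphabets $\mathcal{X}$ and $\mathcal{Y}$.
- Conditional self-information of $x$ given $y$: $i(x \mid y) = -\log_2 p(x \mid y)$.
- Conditional entropy is the average value of the conditional self-information: $H(X \mid Y) = -\sum_{y \in \mathcal{Y}} \sum_{x \in \mathcal{X}} p(x, y) \log_2 p(x \mid y)$.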

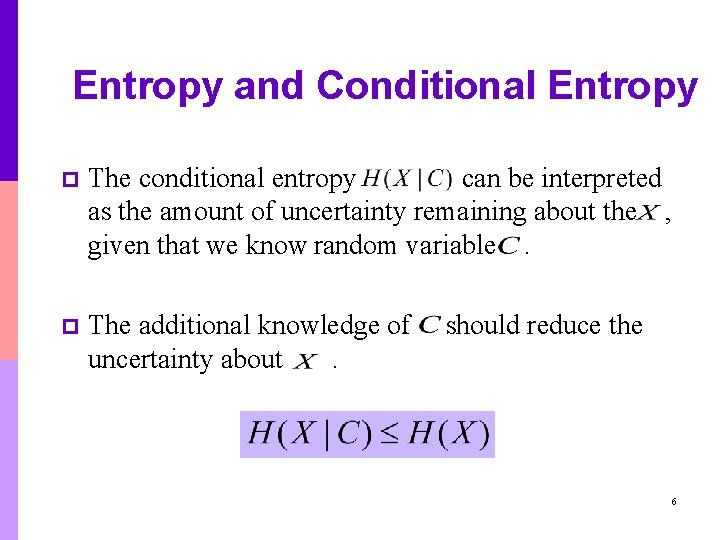

Entropy and Conditional Entropy
- The conditional entropy $H(X \mid Y)$ can be interpreted as the amount of uncertainty remaining about $X$, given that we know the random variable $Y$.
- The additional knowledge of $Y$ should reduce the uncertainty about $X$: $H(X \mid Y) \le H(X)$, with equality when $X$ and $Y$ are independent.
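To see the inequality $H(X \mid Y) \le H(X)$ on numbers, the sketch below computes both sides from a joint pmf. The joint distribution (Y is a noisy copy of a fair bit X, agreeing with probability 0.9) is a made-up example, and the helper names are hypothetical.

```python
import math

def conditional_entropy(joint: list[list[float]]) -> float:
    """H(X|Y) = -sum_{x,y} p(x,y) log2 p(x|y), with joint[y][x] = p(x, y)."""
    h = 0.0
    for row in joint:                 # one row per value of y
        p_y = sum(row)
        for p_xy in row:
            if p_xy > 0:
                h -= p_xy * math.log2(p_xy / p_y)   # p(x|y) = p(x,y) / p(y)
    return h

def marginal_entropy_x(joint: list[list[float]]) -> float:
    """H(X) computed from the marginal p(x) = sum_y p(x, y)."""
    p_x = [sum(col) for col in zip(*joint)]
    return -sum(p * math.log2(p) for p in p_x if p > 0)

joint = [[0.45, 0.05],
         [0.05, 0.45]]
print(marginal_entropy_x(joint))    # 1.0
print(conditional_entropy(joint))   # about 0.469 bits, less than H(X)
```

Knowing Y leaves about 0.469 bits of uncertainty per symbol instead of 1 bit; this is exactly the saving a context-based coder tries to harvest.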

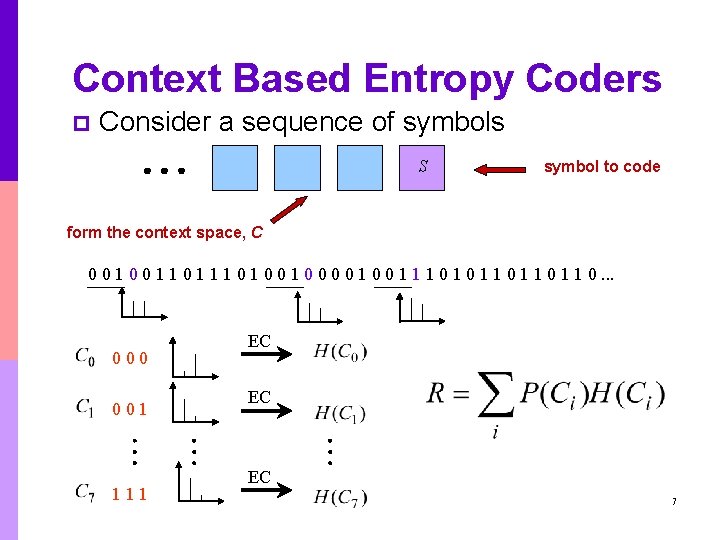

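Context-Based Entropy Coders
- Consider a sequence of symbols: the symbols preceding the one to code (S) form its context, and the set of possible contexts forms the context space C.
- Example: in the binary sequence 0 0 1 1 1 0 0 1 0 0 1 1 1 0 1 1 0 …, the three preceding symbols give the contexts 000, 001, …, 111, and each context drives its own entropy coder (EC).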
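The routing of symbols to per-context coders can be sketched in a few lines of Python, assuming (as the 000 … 111 labels suggest) that the context is the three previous symbols. Each sub-sequence is what one EC would see; `split_by_context` is a hypothetical helper, and the first three symbols, which lack a full context, are simply skipped.

```python
from collections import defaultdict

def split_by_context(bits: str, order: int = 3) -> dict[str, str]:
    """Route each symbol to the sub-sequence of its context (the `order` previous symbols).

    The first `order` symbols have no full context and are skipped here; a real
    coder would pad the history or code them with a separate model.
    """
    streams = defaultdict(list)
    for i in range(order, len(bits)):
        streams[bits[i - order:i]].append(bits[i])
    return {ctx: "".join(s) for ctx, s in sorted(streams.items())}

seq = "00111001001110110"   # the example sequence from the slide
for ctx, sub in split_by_context(seq).items():
    print(ctx, "->", sub)
```

For this sequence, context 001 receives `101` and context 111 receives `00`, while context 000 never occurs, so its coder receives nothing.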

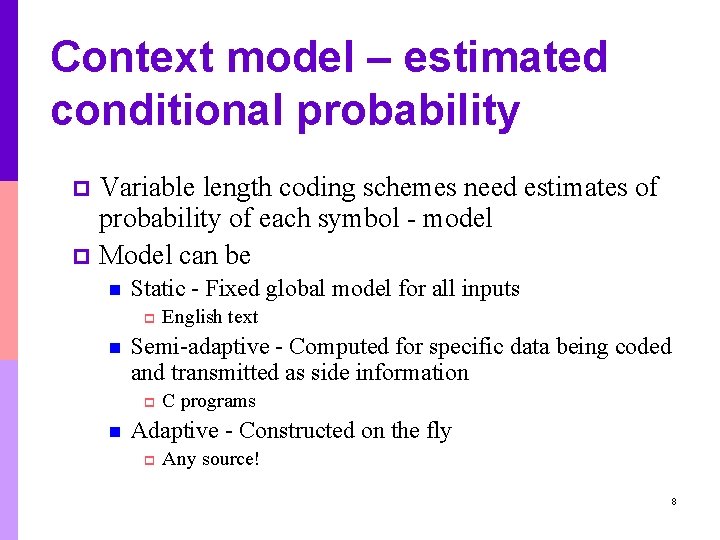

Context Model: Estimated Conditional Probability
- Variable-length coding schemes need an estimate of the probability of each symbol: a model.
- The model can be:
  - Static: a fixed global model for all inputs (e.g., English text, C programs).
  - Semi-adaptive: computed for the specific data being coded and transmitted as side information.
  - Adaptive: constructed on the fly; works for any source!

Adaptive vs. Semi-Adaptive
- Advantages of semi-adaptive: simple decoder.
- Disadvantages of semi-adaptive: the overhead of specifying the model can be high; two passes over the data are required.
- Advantages of adaptive: one pass; universal; as good, if not better.
- Disadvantages of adaptive: the decoder is as complex as the encoder; errors propagate.

Adaptation with Arithmetic and Huffman Coding
- Huffman coding: manipulate the Huffman tree on the fly. Efficient algorithms are known, but they remain complex.
- Arithmetic coding: update the cumulative probability distribution table. Efficient data structures and algorithms are known; the rest is essentially the same.
- The main advantage of arithmetic over Huffman coding is the ease with which the former can be combined with adaptive modeling techniques.
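One known structure for the cumulative-frequency table is a Fenwick (binary indexed) tree, which supports both the count update and the cumulative lookup in O(log n) time. The sketch below assumes that choice (the slide does not name a structure); the class and method names are hypothetical.

```python
class AdaptiveFrequencyModel:
    """Adaptive symbol counts with O(log n) cumulative-frequency queries (Fenwick tree).

    An arithmetic coder would use cum_freq(s) as the low end of symbol s's interval,
    freq(s) as its width and total() as the scale, then call update(s) after coding s.
    """

    def __init__(self, alphabet_size: int):
        self.n = alphabet_size
        self.tree = [0] * (alphabet_size + 1)   # 1-based Fenwick array
        for s in range(alphabet_size):
            self.update(s)                      # start every count at 1: no zero probabilities

    def update(self, s: int, delta: int = 1) -> None:
        i = s + 1
        while i <= self.n:
            self.tree[i] += delta
            i += i & -i

    def cum_freq(self, s: int) -> int:
        """Sum of the counts of symbols 0 .. s-1."""
        i, total = s, 0
        while i > 0:
            total += self.tree[i]
            i -= i & -i
        return total

    def freq(self, s: int) -> int:
        return self.cum_freq(s + 1) - self.cum_freq(s)

    def total(self) -> int:
        return self.cum_freq(self.n)

model = AdaptiveFrequencyModel(4)
for s in [2, 2, 3, 2]:
    model.update(s)
print(model.freq(2), model.total())   # 4 8
print(model.cum_freq(3))              # 6 (counts of symbols 0, 1, 2)
```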

Context Models
- If the source is not i.i.d., there is complex dependence between the symbols in the sequence.
- In most practical situations, the pdf of a symbol depends on neighboring symbol values, i.e., its context. Hence we condition the encoding of the current symbol on its context.
- How should contexts be selected? A rigorous answer is beyond our scope; practical schemes use a fixed neighborhood.

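Context Dilution Problem
- If the conditional probabilities $P(x_i \mid c_i)$ of each symbol $x_i$ given its context $c_i$ are known, the minimum code length of the sequence $x_1 x_2 \cdots x_n$ achievable by arithmetic coding is $L = -\sum_{i=1}^{n} \log_2 P(x_i \mid c_i)$.
- The difficulty lies in estimating $P(x_i \mid c_i)$ from insufficient sample statistics, which prevents the use of high-order Markov models.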
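Context dilution can be observed numerically. The sketch below assumes a Krichevsky-Trofimov (KT) estimator in each context (our choice; the slide does not specify one) and measures the adaptive code length of 2,000 bits drawn from a first-order Markov source as the context order k grows. Order 1 matches the source and beats order 0, while high orders spread the same data over many rarely visited contexts and pay a learning cost in each.

```python
import math
import random

def adaptive_code_length(bits: list[int], order: int) -> float:
    """Ideal adaptive code length (bits) of a binary sequence with order-k contexts.

    Each context keeps its own counts and predicts with the KT estimator
    P(x) = (n_x + 1/2) / (n_0 + n_1 + 1). The sum of -log2 P(x_i | c_i) is what an
    adaptive arithmetic coder spends, up to a few bits. Early symbols see a zero-padded history.
    """
    counts = {}
    history = [0] * order + bits
    length = 0.0
    for i, x in enumerate(bits):
        ctx = tuple(history[i:i + order])   # the `order` symbols preceding x
        n = counts.setdefault(ctx, [0, 0])
        length -= math.log2((n[x] + 0.5) / (n[0] + n[1] + 1.0))
        n[x] += 1
    return length

# First-order binary Markov source: each bit repeats the previous one with probability 0.9.
rng = random.Random(1)
bits = [0]
for _ in range(1999):
    bits.append(bits[-1] if rng.random() < 0.9 else 1 - bits[-1])

for k in (0, 1, 2, 4, 8, 12):
    print(k, round(adaptive_code_length(bits, k)))
```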

Estimating Probabilities in Different Contexts
- Two approaches:
  - Maintain symbol occurrence counts within each context. The number of contexts must stay modest to avoid context dilution.
  - Assume the pdf shape within each context is the same (e.g., Laplacian) and only its parameters (e.g., mean and variance) differ. The estimation may be less accurate, but a much larger number of contexts can be used.
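The second approach can be sketched with a zero-mean, one-parameter discrete Laplacian: each context stores only the mean absolute value of its samples, from which the whole pmf is rebuilt. The model form, the moment fit, and the function names are assumptions for illustration; the slide's own model also allows a nonzero mean.

```python
import math

def discrete_laplacian_pmf(theta: float, max_abs: int) -> dict[int, float]:
    """Zero-mean discrete Laplacian: p(x) proportional to theta**|x| on -max_abs..max_abs."""
    weights = {x: theta ** abs(x) for x in range(-max_abs, max_abs + 1)}
    z = sum(weights.values())
    return {x: w / z for x, w in weights.items()}

def fit_theta(mean_abs: float) -> float:
    """Moment fit of theta from a context's mean |x|.

    For the untruncated law E|X| = 2*theta / (1 - theta**2); solving for theta gives
    theta = (sqrt(1 + m**2) - 1) / m. Truncation to max_abs is ignored here.
    """
    if mean_abs <= 0:
        raise ValueError("mean_abs must be positive")
    return (math.sqrt(1.0 + mean_abs ** 2) - 1.0) / mean_abs

# Per context, only the running mean of |x| needs to be stored.
samples = [0, -1, 2, 0, 1, -3, 0, 1]
m = sum(abs(s) for s in samples) / len(samples)
pmf = discrete_laplacian_pmf(fit_theta(m), max_abs=15)
print(round(pmf[0], 3), round(pmf[1], 3))
```

Because a single number per context suffices, far more contexts can be supported than with full histograms, at the price of a model that may not fit every context well.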

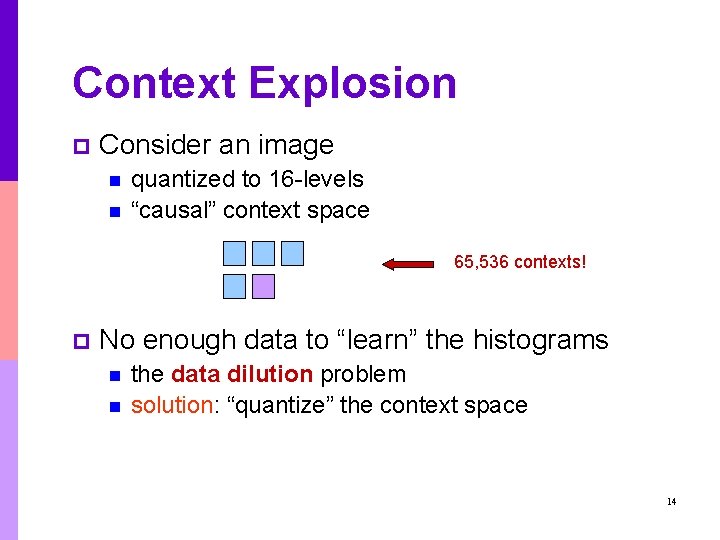

Context Explosion
- Consider an image quantized to 16 levels with a "causal" context of four neighboring pixels: $16^4 = 65{,}536$ contexts!
- There is not enough data to "learn" the histograms: the data dilution problem.
- Solution: "quantize" the context space.
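A sketch of how such a causal context becomes an index, assuming the four neighbors are W, NW, N and NE (the slide does not say which four; `causal_context_index` is a hypothetical helper). Reading the neighbor values as digits of a base-16 number makes the size of the context space, $16^4 = 65{,}536$, explicit.

```python
def causal_context_index(img: list[list[int]], r: int, c: int, levels: int = 16) -> int:
    """Index of the context formed by the causal neighbors W, NW, N, NE of pixel (r, c).

    Pixels outside the image are treated as 0. With `levels` quantization levels the
    index ranges over levels**4 values (65,536 for 16 levels).
    """
    def px(i: int, j: int) -> int:
        inside = 0 <= i < len(img) and 0 <= j < len(img[0])
        return img[i][j] if inside else 0

    neighbors = (px(r, c - 1), px(r - 1, c - 1), px(r - 1, c), px(r - 1, c + 1))
    index = 0
    for v in neighbors:          # mixed-radix number, one base-`levels` digit per neighbor
        index = index * levels + v
    return index

img = [[3, 15, 7],
       [2, 9, 11]]
print(causal_context_index(img, 1, 1))   # 9207 (W=2, NW=3, N=15, NE=7)
print(16 ** 4)                            # 65536 possible contexts
```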

Current Solutions
- Non-binary source:
  - JPEG: simple entropy coding without context.
  - JPEG 2000 (J2K): ad-hoc context quantization strategy.
- Binary source:
  - JBIG: uses suboptimal context templates of modest size.

Overview
- Introduction
- Context Quantization for Entropy Coding of Non-Binary Source
  - Context Quantization
  - Context Quantizer Description
- Context Quantization for Entropy Coding of Binary Source
  - Minimum Description Length Context Quantizer Design
  - Image Dependent Context Quantizer Design with Efficient Side Info.
  - Context Quantization for Minimum Adaptive Code Length
- Context-Based Classification and Quantization
- Conclusions and Future Work

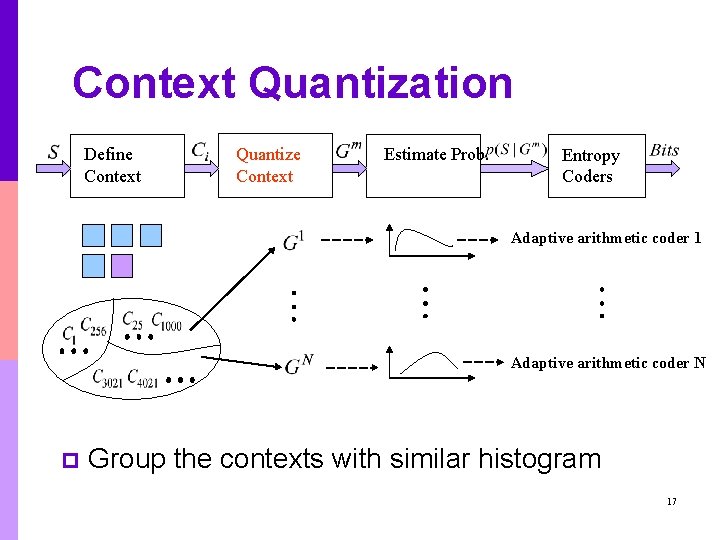

Context Quantization
- Pipeline: Define Context → Quantize Context → Estimate Prob. → Entropy Coders (adaptive arithmetic coder 1, …, adaptive arithmetic coder N).
- Group the contexts with similar histograms.

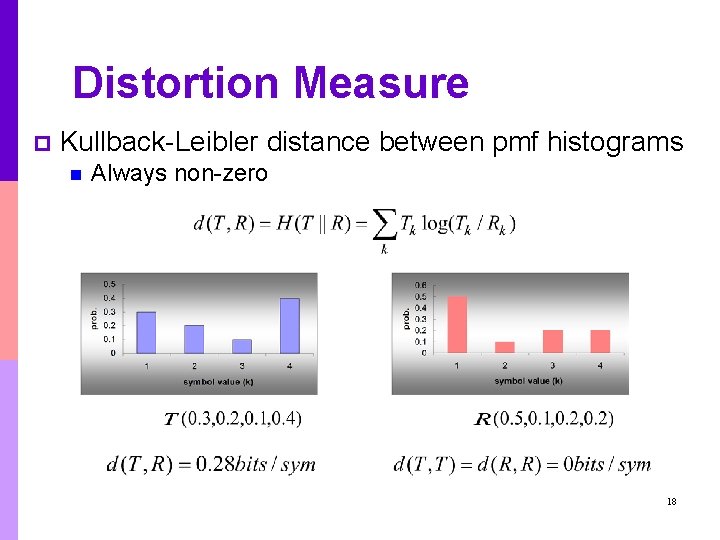

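Distortion Measure
- Kullback-Leibler distance between pmf histograms: $D(p \,\|\, q) = \sum_{x} p(x) \log_2 \frac{p(x)}{q(x)}$.
- Always non-negative, and zero only when the two histograms are identical.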
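Why the KL distance suits this task: $D(p \,\|\, q)$ is the expected number of extra bits per symbol paid when data with histogram $p$ is coded with a model $q$, so merging a context into a group with histogram $q$ costs exactly this much per occurrence. A small Python helper (hypothetical name) shows that it is zero for identical histograms and, although called a distance, not symmetric.

```python
import math

def kl_divergence(p: list[float], q: list[float]) -> float:
    """D(p || q) in bits; infinite if q(x) = 0 somewhere p(x) > 0."""
    d = 0.0
    for px, qx in zip(p, q):
        if px > 0:
            if qx == 0:
                return math.inf
            d += px * math.log2(px / qx)
    return d

p = [0.5, 0.5]
q = [0.9, 0.1]
print(kl_divergence(p, p))                         # 0.0
print(kl_divergence(p, q), kl_divergence(q, p))    # both positive, and unequal
```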

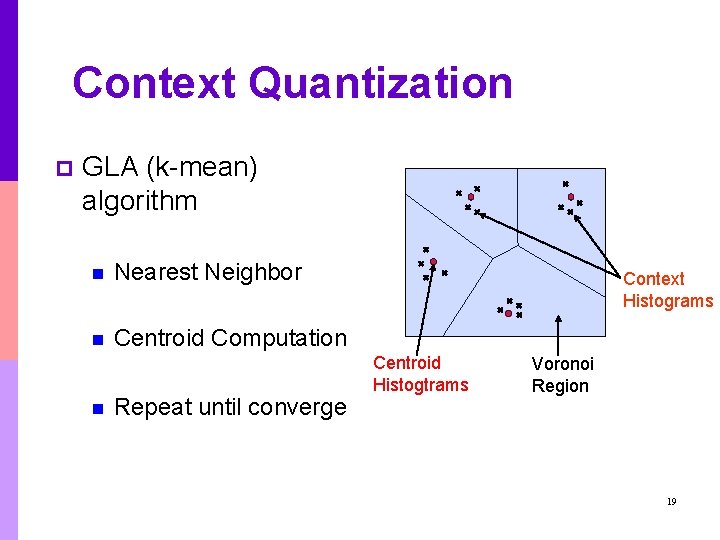

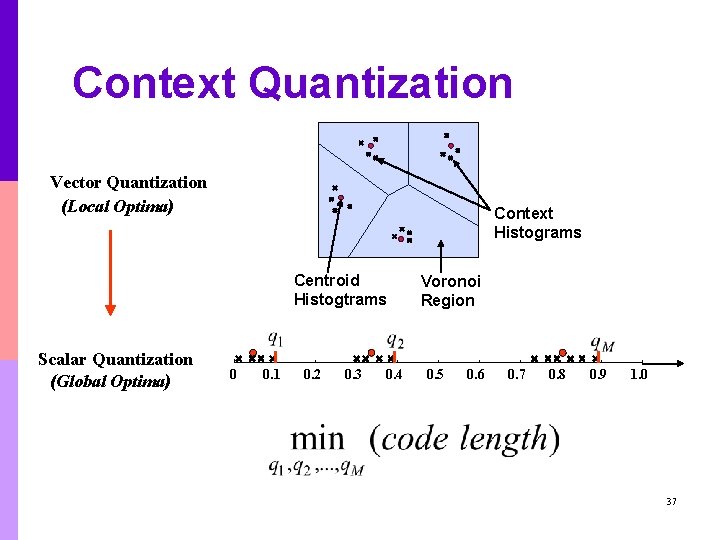

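Context Quantization
- GLA (k-means) algorithm:
  - Nearest neighbor: assign each context histogram to the closest centroid histogram (its Voronoi region).
  - Centroid computation: recompute each centroid histogram from the context histograms in its region.
  - Repeat until convergence.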
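A compact sketch of the GLA loop for context histograms, assuming the KL distance as the nearest-neighbor rule and the pooled counts of a cell as its centroid (the pooled pmf minimizes the count-weighted sum of $D(p_c \,\|\, q)$ over the cell). The seeding rule and the function names are hypothetical, and every context is assumed to be non-empty.

```python
import math

def kl(p: list[float], q: list[float]) -> float:
    """D(p || q) in bits; infinite if q(x) = 0 where p(x) > 0."""
    d = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf
            d += pi * math.log2(pi / qi)
    return d

def gla_context_quantizer(hists: list[list[int]], m: int, iters: int = 50) -> list[int]:
    """Group context histograms (symbol counts) into at most m cells; return each context's cell."""
    pmfs = [[x / sum(h) for x in h] for h in hists]
    # Hypothetical seeding: the m most frequent contexts become the initial centroids.
    order = sorted(range(len(hists)), key=lambda c: -sum(hists[c]))
    centroids = [pmfs[c] for c in order[:m]]
    assign = [-1] * len(hists)
    for _ in range(iters):
        # Nearest-neighbor step: each context joins the centroid with the smallest D(p || q).
        new_assign = [min(range(len(centroids)), key=lambda j: kl(p, centroids[j])) for p in pmfs]
        if new_assign == assign:
            break                                   # converged: no context changed cell
        assign = new_assign
        # Centroid step: pool the counts of each cell's member contexts.
        for j in range(len(centroids)):
            members = [hists[c] for c in range(len(hists)) if assign[c] == j]
            if members:
                pooled = [sum(col) for col in zip(*members)]
                centroids[j] = [x / sum(pooled) for x in pooled]
    return assign

hists = [[90, 10], [85, 15], [88, 12], [10, 90], [12, 88], [15, 85]]
print(gla_context_quantizer(hists, 2))   # [0, 0, 0, 1, 1, 1]
```

Like any GLA, this converges only to a local optimum that depends on the seeding.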

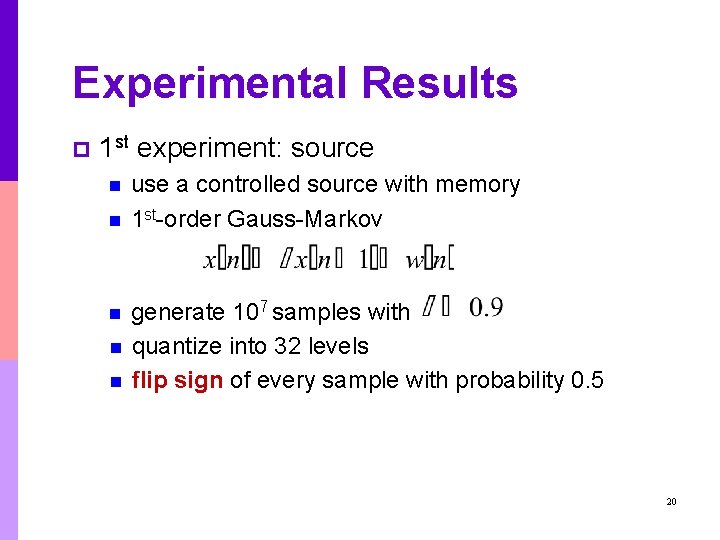

Experimental Results
- 1st experiment, source: a controlled source with memory.
  - 1st-order Gauss-Markov source; generate $10^7$ samples.
  - Quantize into 32 levels.
  - Flip the sign of every sample with probability 0.5.

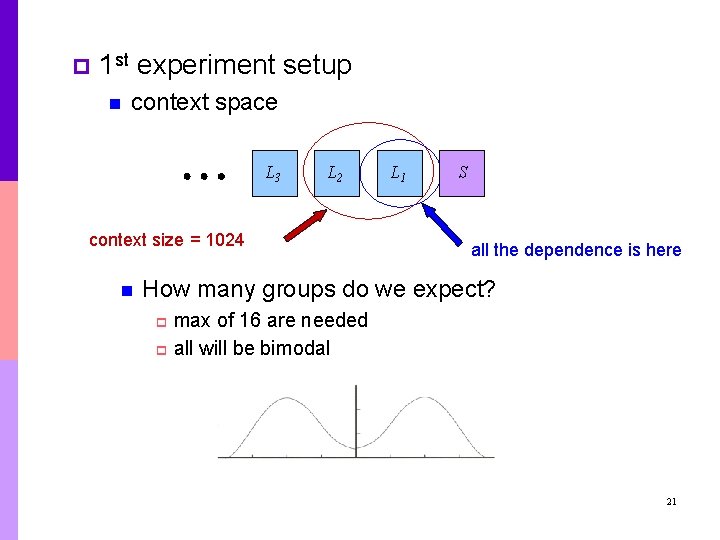

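1st experiment, setup:
- Context space: neighbors L3, L2, L1 of the current sample S; context size = 1024.
- All the dependence is in L1.
- How many groups do we expect? At most 16 are needed, and all will be bimodal (the random sign flips leave only the magnitude of L1 informative, and it takes 16 values).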

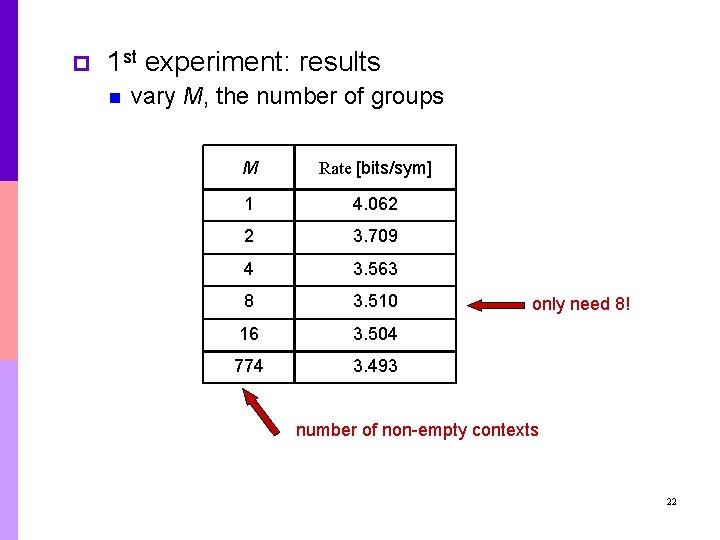

1st experiment, results: vary M, the number of groups.

| M | Rate [bits/sym] |
|---|---|
| 1 | 4.062 |
| 2 | 3.709 |
| 4 | 3.563 |
| 8 | 3.510 |
| 16 | 3.504 |
| 774 (number of non-empty contexts) | 3.493 |

Only 8 groups are needed!

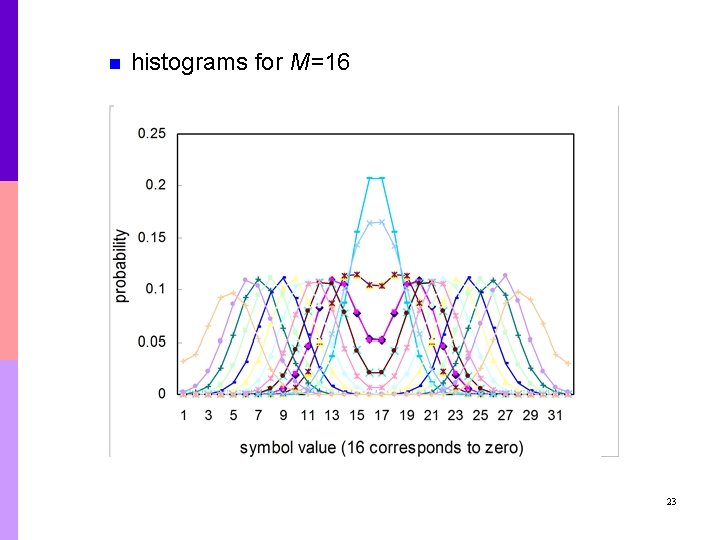

Histograms for M = 16.

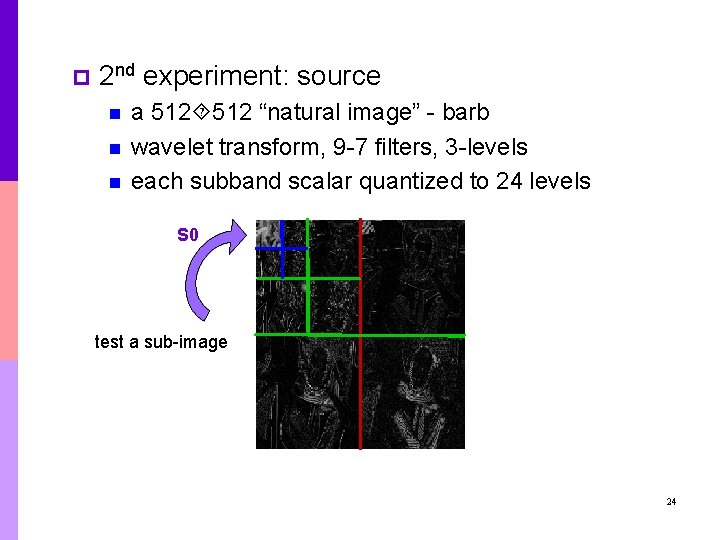

2nd experiment, source:
- A 512 × 512 "natural image" (barb).
- Wavelet transform, 9-7 filters, 3 levels.
- Each subband scalar quantized to 24 levels.
- Test sub-image: S0.

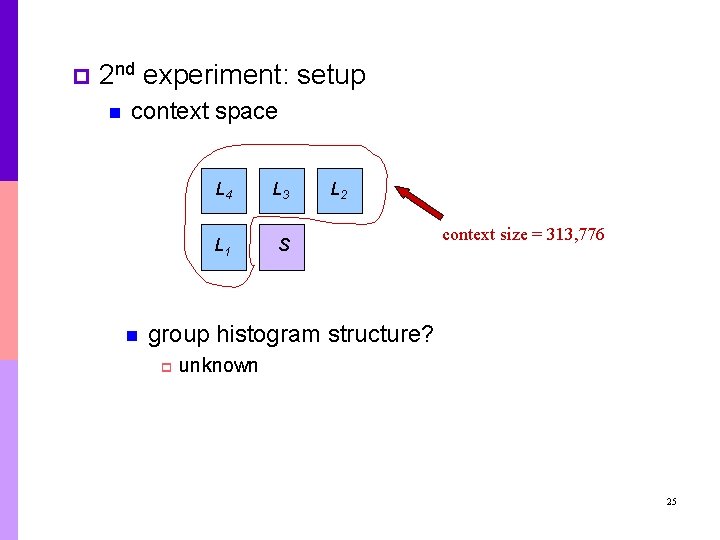

2nd experiment, setup:
- Context space: neighbors L1, L2, L3, L4 of the current coefficient S; context size = 313,776.
- Group histogram structure? Unknown.

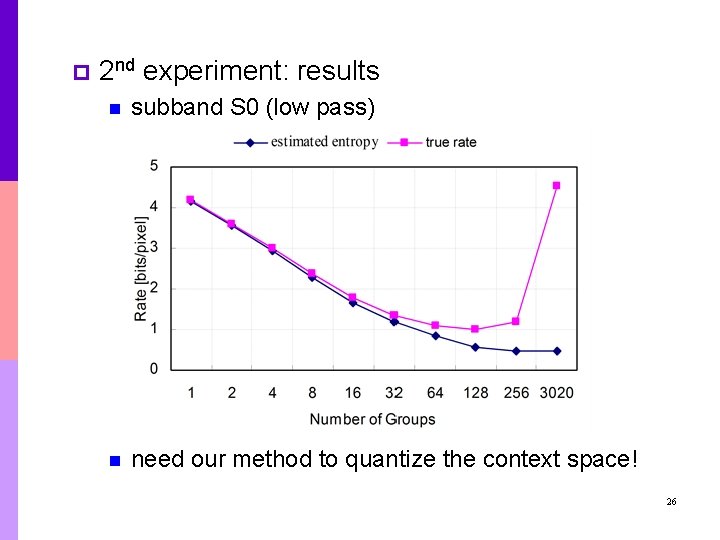

2nd experiment, results:
- Subband S0 (low pass).
- Our method is needed to quantize the context space!

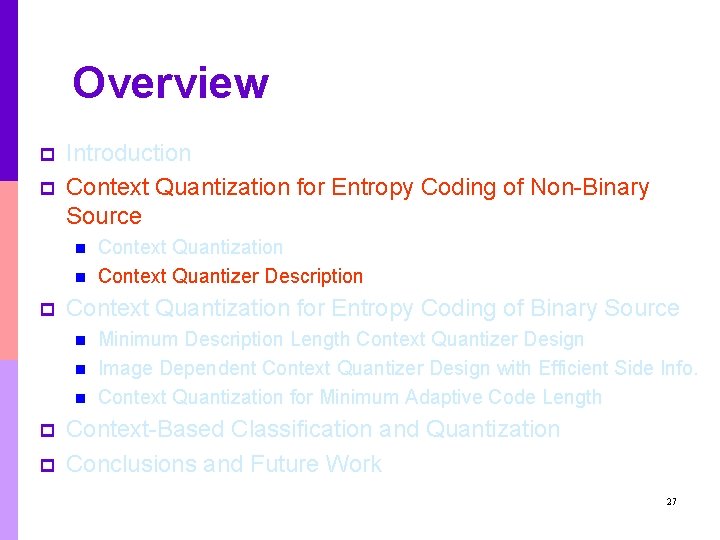

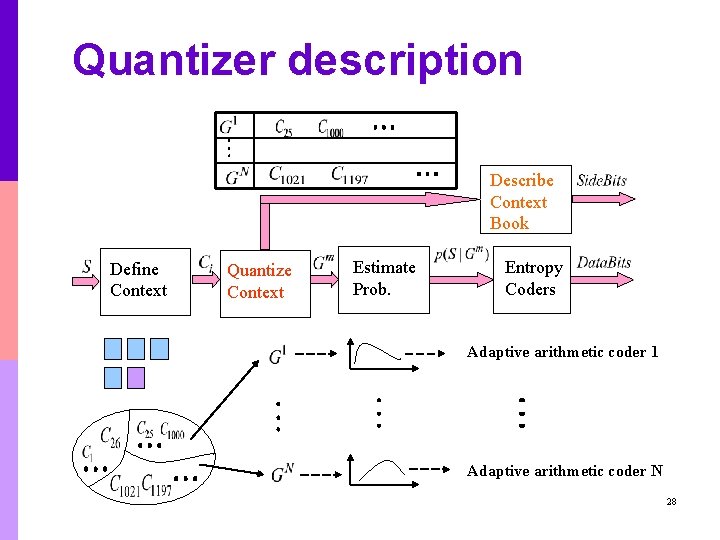

Quantizer Description
- A "Describe Context Book" stage is added to the pipeline Define Context → Quantize Context → Estimate Prob. → Entropy Coders (adaptive arithmetic coder 1, …, adaptive arithmetic coder N).

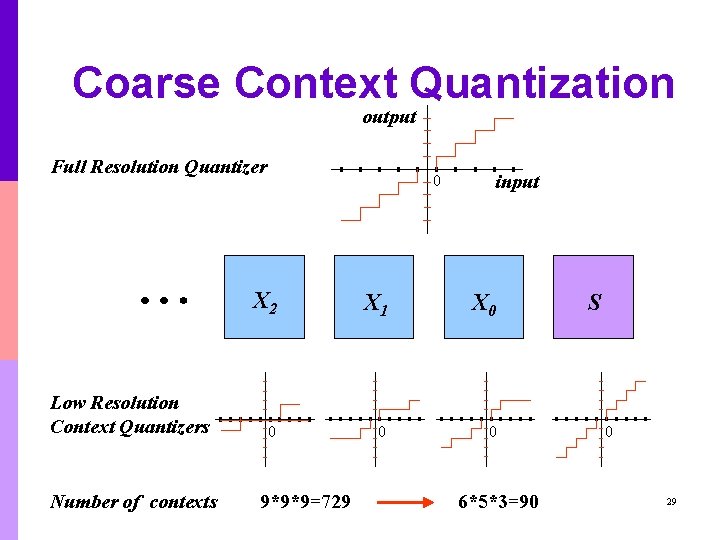

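Coarse Context Quantization
- The context components X0, X1, X2 (subband S0) pass through low-resolution context quantizers instead of the full-resolution quantizer.
- Number of contexts: 9 × 9 × 9 = 729 at full resolution vs. 6 × 5 × 3 = 90 after coarse quantization.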

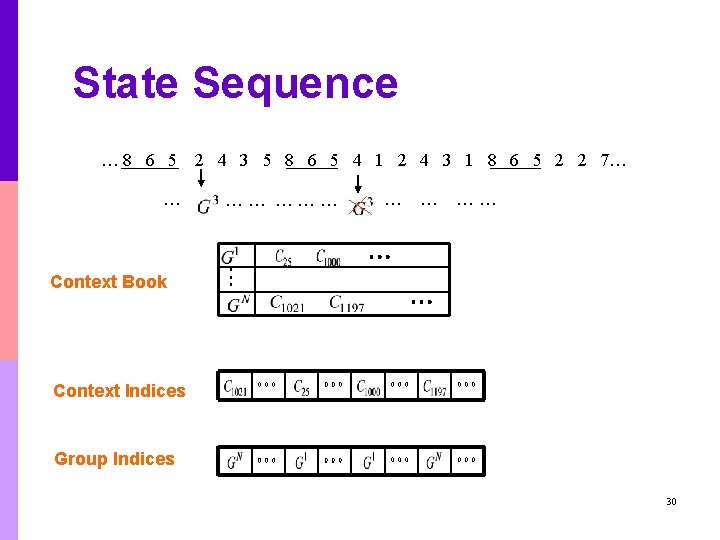

State Sequence
- Figure: the context indices of the state sequence are mapped through the context book to group indices.

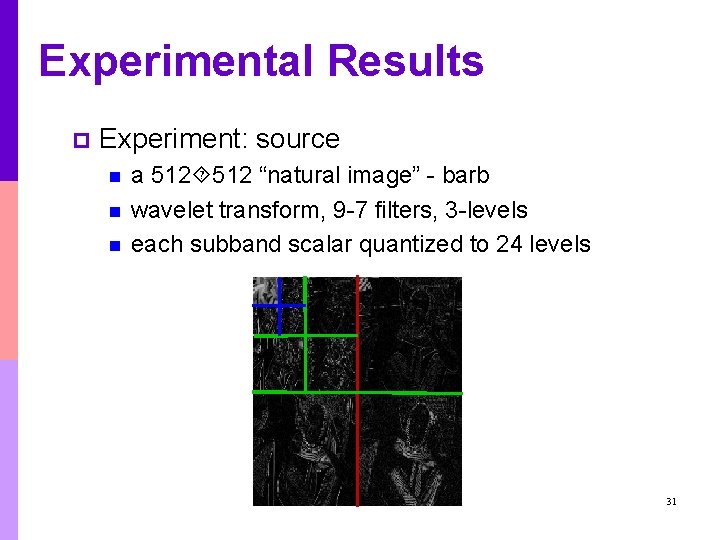

Experimental Results
- Source: a 512 × 512 "natural image" (barb).
- Wavelet transform, 9-7 filters, 3 levels.
- Each subband scalar quantized to 24 levels.

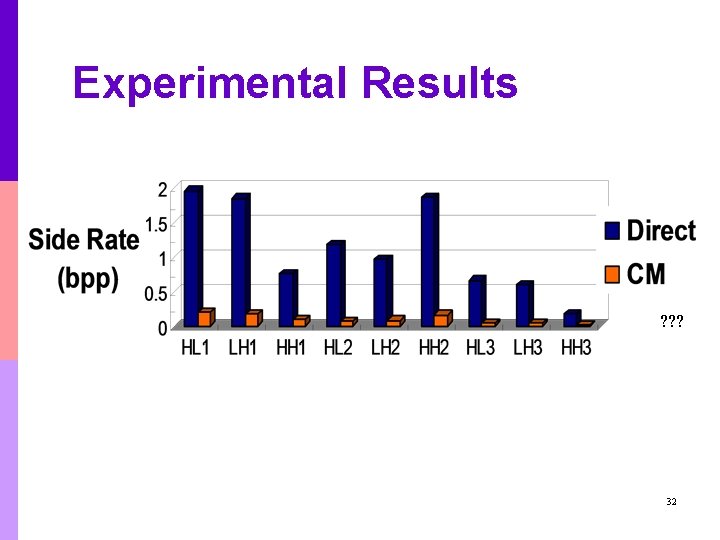

Experimental Results

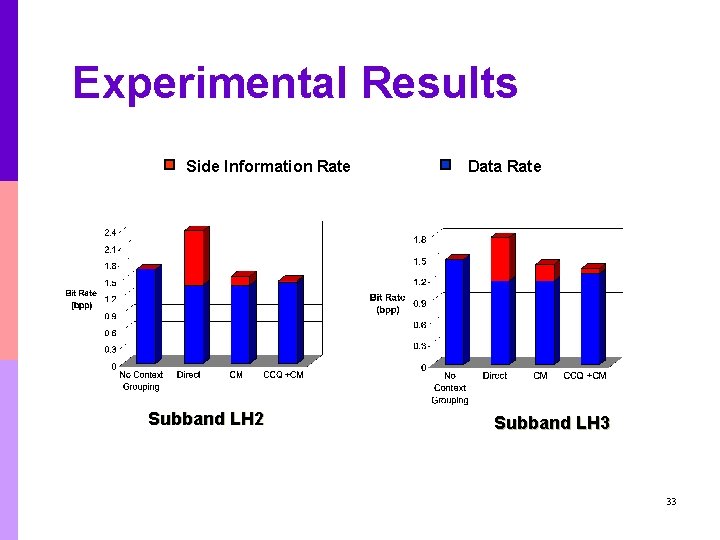

Experimental Results
- Side-information rate, subband LH2.
- Data rate, subband LH3.

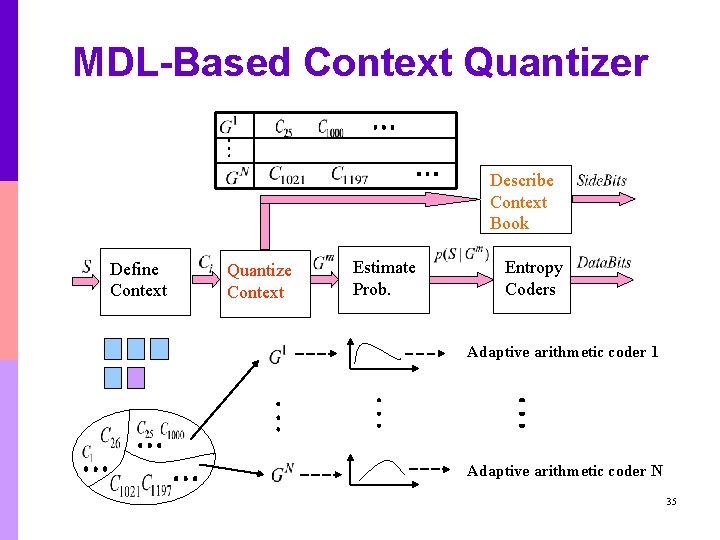

MDL-Based Context Quantizer
- Pipeline: Describe Context Book; Define Context → Quantize Context → Estimate Prob. → Entropy Coders (adaptive arithmetic coder 1, …, adaptive arithmetic coder N).

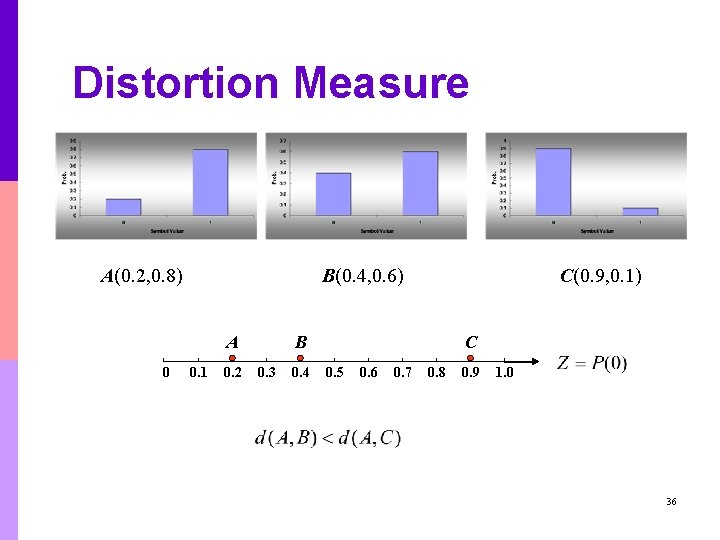

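Distortion Measure
- For a binary source each context pmf is a pair (P(0), P(1)), e.g. A(0.2, 0.8), B(0.4, 0.6), C(0.9, 0.1). It can therefore be placed on the interval [0, 1] by its first component: A at 0.2, B at 0.4, C at 0.9.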

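Context Quantization
- Vector quantization of the context histograms (GLA) reaches only a local optimum.
- For a binary source the histograms lie on the interval [0, 1], so scalar quantization applies and reaches the global optimum; each centroid histogram has an interval of [0, 1] as its Voronoi region.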

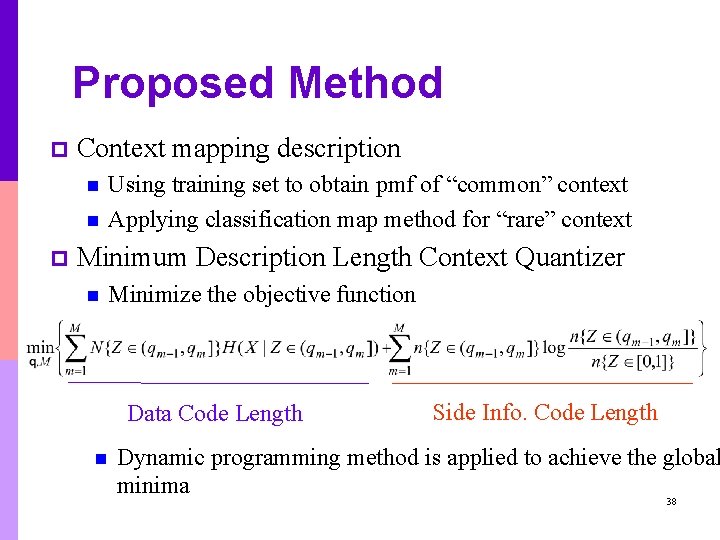

Proposed Method
- Context mapping description:
  - Use a training set to obtain the pmfs of "common" contexts.
  - Apply a classification-map method to "rare" contexts.
- Minimum Description Length context quantizer:
  - Minimize the objective function: data code length + side-info code length.
  - Dynamic programming is applied to reach the global minimum.
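For a binary source, the globally optimal search can be sketched as follows under stated assumptions: contexts are sorted by their estimated $P(1 \mid c)$, cells are contiguous runs in that order (this is what makes dynamic programming applicable), the data code length is the static code length of each cell's pooled counts, and side information is charged as a flat, hypothetical `side_bits_per_cell`. The slides' actual side-information code length is not reproduced here.

```python
import math

def cell_code_length(n0: int, n1: int) -> float:
    """Static code length (bits) of a cell coded with its pooled empirical pmf."""
    n = n0 + n1
    return -sum(k * math.log2(k / n) for k in (n0, n1) if k > 0)

def mdl_binary_context_quantizer(counts: list[tuple[int, int]], side_bits_per_cell: float):
    """Partition binary contexts into cells minimizing data bits + side-info bits.

    counts[c] = (n0, n1) for context c (every context assumed non-empty).
    Returns (total_bits, cells), each cell a list of context indices.
    """
    order = sorted(range(len(counts)), key=lambda c: counts[c][1] / sum(counts[c]))
    k = len(order)
    p0, p1 = [0] * (k + 1), [0] * (k + 1)      # prefix sums of n0, n1 in sorted order
    for t, c in enumerate(order):
        p0[t + 1] = p0[t] + counts[c][0]
        p1[t + 1] = p1[t] + counts[c][1]
    best = [0.0] + [math.inf] * k              # best[j]: optimal cost of the first j contexts
    back = [0] * (k + 1)
    for j in range(1, k + 1):
        for i in range(j):                     # last cell = sorted contexts i .. j-1
            cost = best[i] + cell_code_length(p0[j] - p0[i], p1[j] - p1[i]) + side_bits_per_cell
            if cost < best[j]:
                best[j], back[j] = cost, i
    cells, j = [], k
    while j > 0:                               # walk back through the optimal cut points
        cells.append(order[back[j]:j])
        j = back[j]
    return best[k], cells[::-1]

counts = [(90, 10), (5, 95), (85, 15), (10, 90), (50, 50)]
total, cells = mdl_binary_context_quantizer(counts, side_bits_per_cell=20.0)
print(cells)   # [[0, 2], [4], [3, 1]]
```

Raising the side-information cost pushes the optimum toward fewer cells; with zero side cost every context keeps its own cell, since pooling distinct pmfs never shortens the data code.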

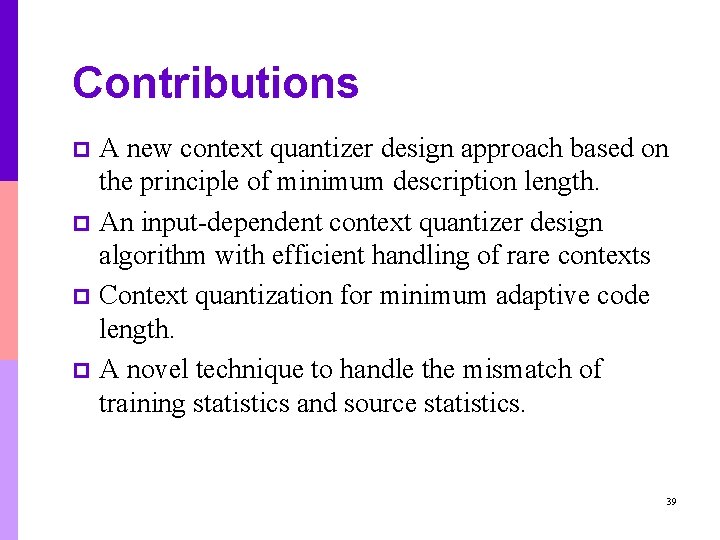

Contributions
- A new context quantizer design approach based on the principle of minimum description length.
- An input-dependent context quantizer design algorithm with efficient handling of rare contexts.
- Context quantization for minimum adaptive code length.
- A novel technique to handle the mismatch between training statistics and source statistics.

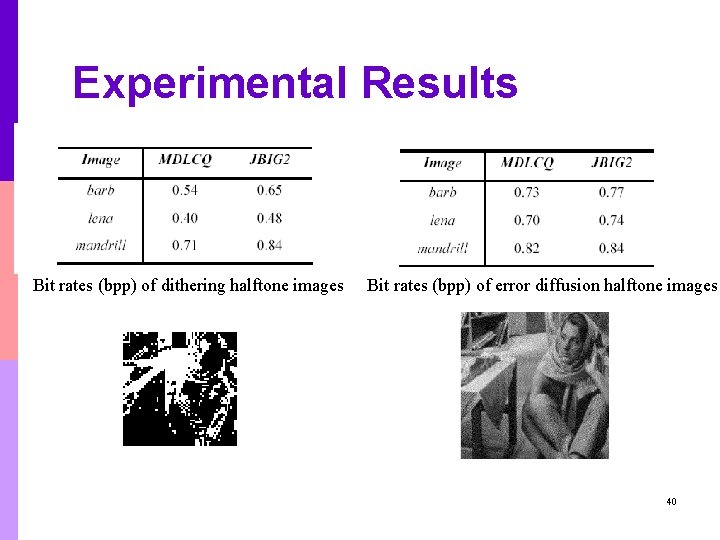

Experimental Results
- Bit rates (bpp) of dithering halftone images.
- Bit rates (bpp) of error diffusion halftone images.

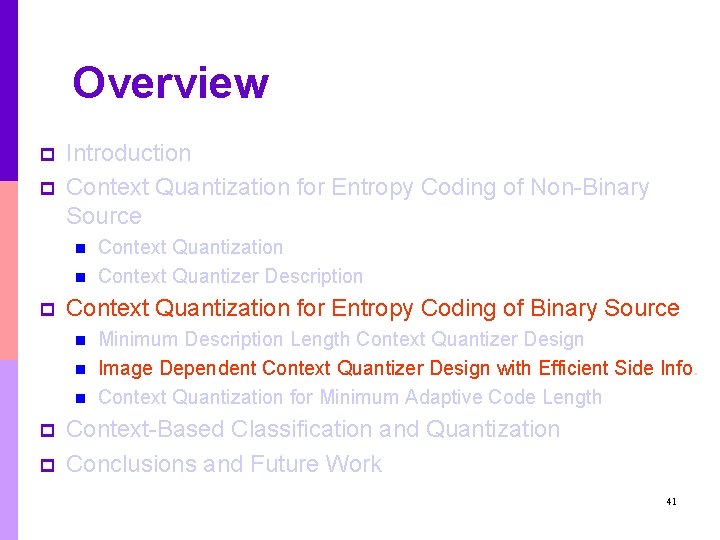

Motivation
- The MDL-based context quantizer is designed mainly from training set statistics.
- If the statistics of the input and the training set are mismatched, the optimality of the predesigned context quantizer can be compromised.

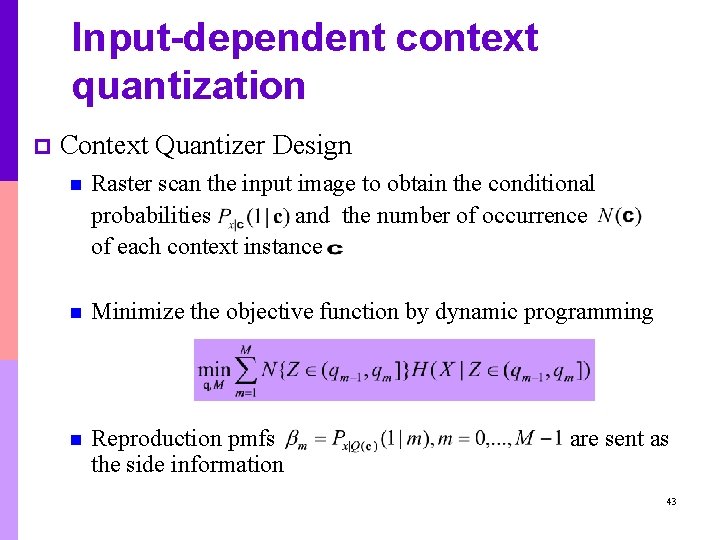

Input-Dependent Context Quantization
- Context quantizer design:
  - Raster scan the input image to obtain the conditional probabilities and the number of occurrences of each context instance.
  - Minimize the objective function by dynamic programming.
  - The reproduction pmfs are sent as side information.

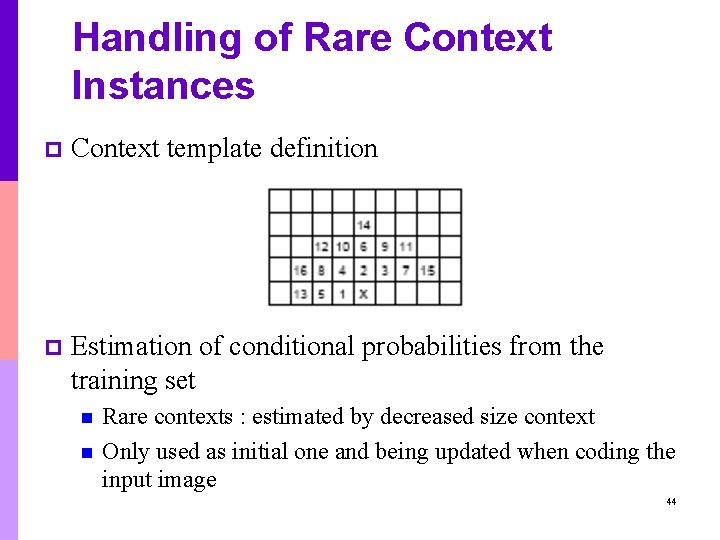

Handling of Rare Context Instances
- Context template definition.
- Estimation of conditional probabilities from the training set:
  - Rare contexts: estimated from a context of decreased size.
  - Used only as the initial estimate, which is updated while the input image is coded.
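A sketch of the decreased-size-context rule, assuming contexts are tuples ordered from nearest to farthest pixel, that shrinking drops the farthest pixel first, and a hypothetical threshold `min_count`; `train_counts` and `backoff_probability` are illustrative names, and the KT-style estimate is our choice. The counts here would only seed the coder, which keeps updating them on the input image.

```python
from collections import defaultdict

def train_counts(samples):
    """Count (n0, n1) for every prefix of each training context (full context down to empty)."""
    counts = defaultdict(lambda: [0, 0])
    for ctx, bit in samples:
        for k in range(len(ctx) + 1):
            counts[tuple(ctx[:k])][bit] += 1
    return counts

def backoff_probability(counts, ctx: tuple, min_count: int = 8) -> float:
    """Estimate P(1 | ctx), dropping the farthest pixel while the context is rare."""
    while ctx:
        n0, n1 = counts.get(ctx, [0, 0])
        if n0 + n1 >= min_count:
            return (n1 + 0.5) / (n0 + n1 + 1.0)
        ctx = ctx[:-1]                       # hypothetical rule: shrink from the far end
    n0, n1 = counts.get((), [0, 0])
    return (n1 + 0.5) / (n0 + n1 + 1.0)

samples = ([((0, 0, 0), 0)] * 20 + [((0, 0, 1), 0)] * 9
           + [((0, 0, 1), 1)] + [((1, 1, 1), 1)] * 2)
counts = train_counts(samples)
print(backoff_probability(counts, (0, 0, 1)))   # common enough: full context used
print(backoff_probability(counts, (1, 1, 1)))   # rare: backs off all the way to the empty context
```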

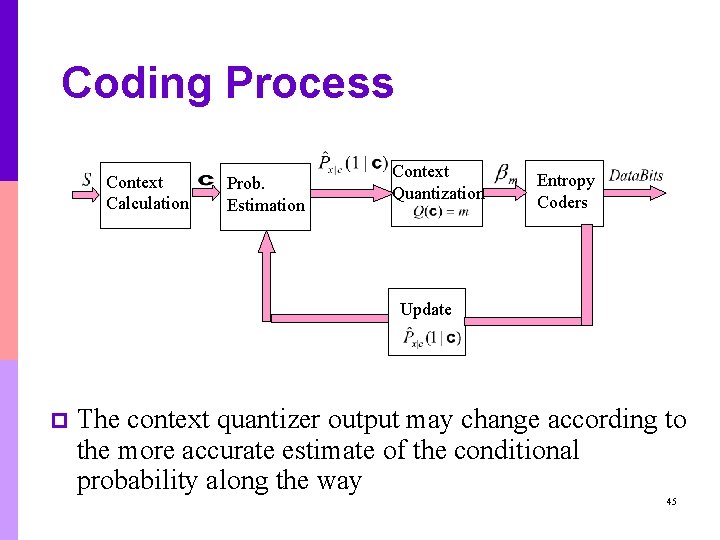

Coding Process
- Context Calculation → Prob. Estimation → Context Quantization → Entropy Coders, with an Update step.
- The context quantizer output may change along the way as the estimates of the conditional probabilities become more accurate.

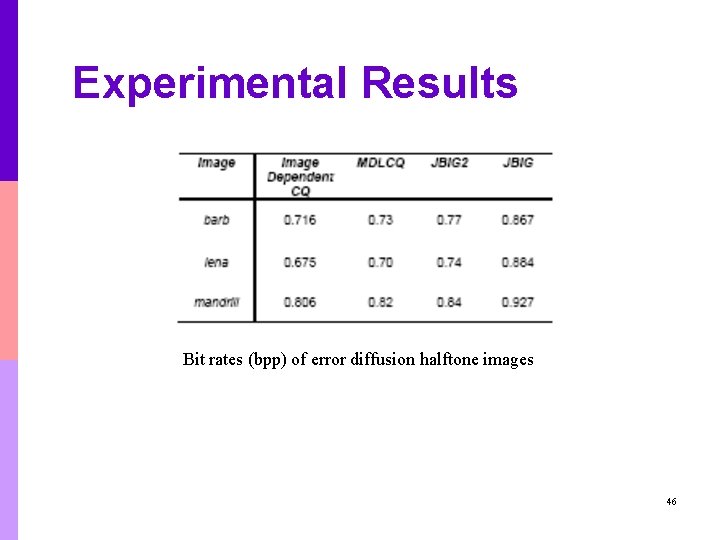

Experimental Results
- Bit rates (bpp) of error diffusion halftone images.

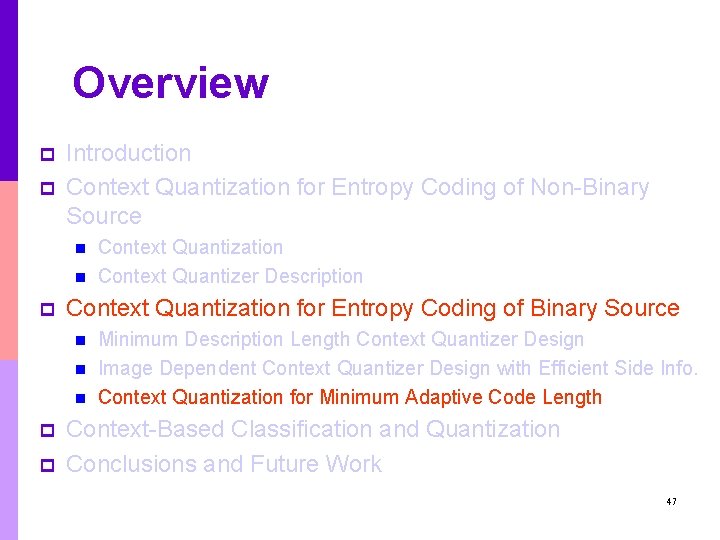

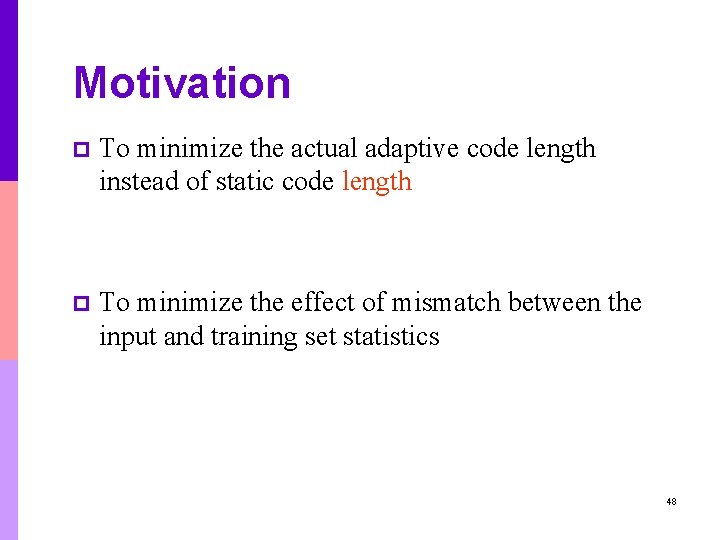

Motivation
- Minimize the actual adaptive code length instead of the static code length.
- Minimize the effect of mismatch between the input and training set statistics.

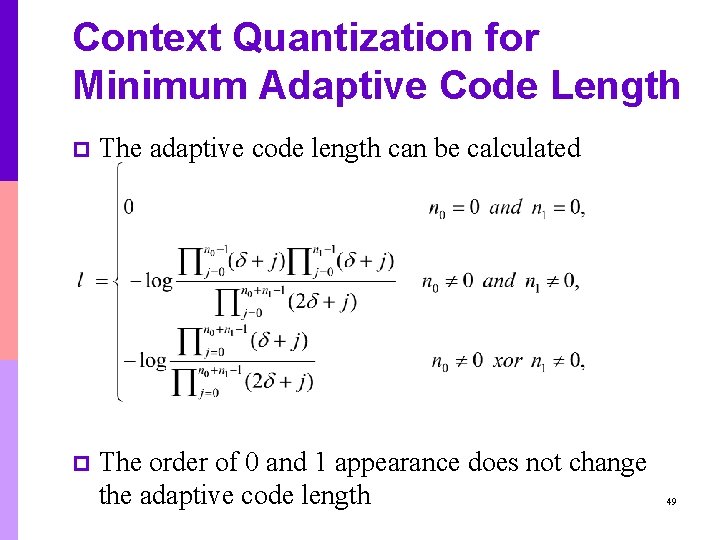

Context Quantization for Minimum Adaptive Code Length
- The adaptive code length can be calculated from the numbers of 0s and 1s coded in each context.
- The order in which 0s and 1s appear does not change the adaptive code length.
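The order invariance can be checked directly. Assuming a Krichevsky-Trofimov estimator (the slide does not state which estimator it uses; the Laplace estimator behaves the same way), the product of the sequential probabilities depends only on the counts $n_0$ and $n_1$: $P = \frac{\Gamma(n_0 + 1/2)\,\Gamma(n_1 + 1/2)}{\pi\,\Gamma(n_0 + n_1 + 1)}$. The sketch computes the code length both ways; the function names are hypothetical.

```python
import math

def sequential_kt_length(bits: list[int]) -> float:
    """Adaptive code length in bits: sum of -log2 P(x_t | past) with the KT estimator."""
    n = [0, 0]
    length = 0.0
    for x in bits:
        length -= math.log2((n[x] + 0.5) / (n[0] + n[1] + 1.0))
        n[x] += 1
    return length

def closed_form_kt_length(n0: int, n1: int) -> float:
    """Same quantity from the counts alone: -log2[G(n0+1/2) G(n1+1/2) / (pi G(n0+n1+1))]."""
    ln_p = (math.lgamma(n0 + 0.5) + math.lgamma(n1 + 0.5)
            - math.log(math.pi) - math.lgamma(n0 + n1 + 1))
    return -ln_p / math.log(2)

a = [0, 0, 1, 1, 1, 0, 0, 1]
b = sorted(a)                     # same counts, different order
print(sequential_kt_length(a), sequential_kt_length(b), closed_form_kt_length(4, 4))
```

Because only the counts matter, the adaptive cost of a merged cell can be evaluated from pooled counts, just like the static cost.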

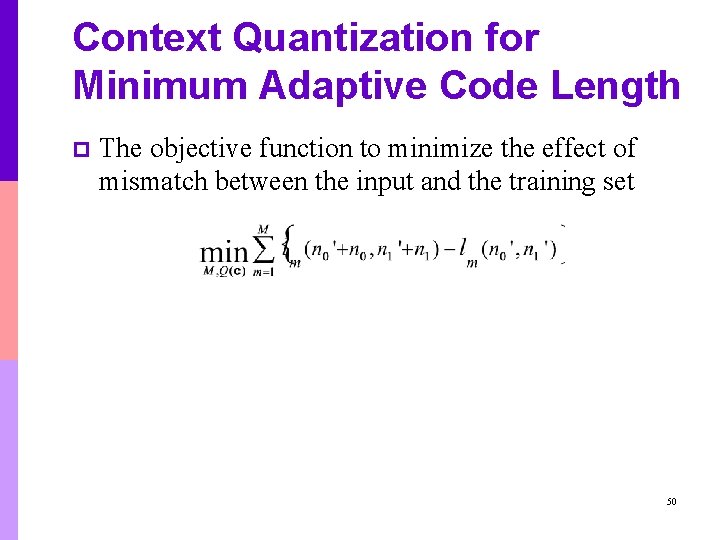

Context Quantization for Minimum Adaptive Code Length
- The objective function is designed to minimize the effect of mismatch between the input and training set statistics.

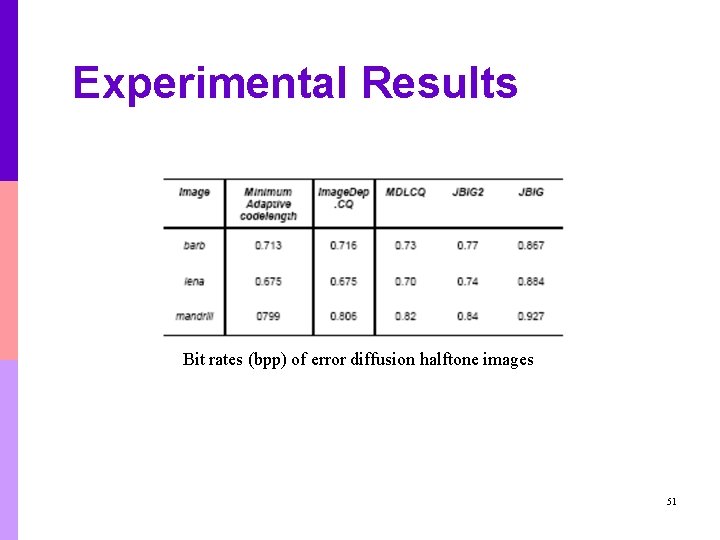

Experimental Results
- Bit rates (bpp) of error diffusion halftone images.

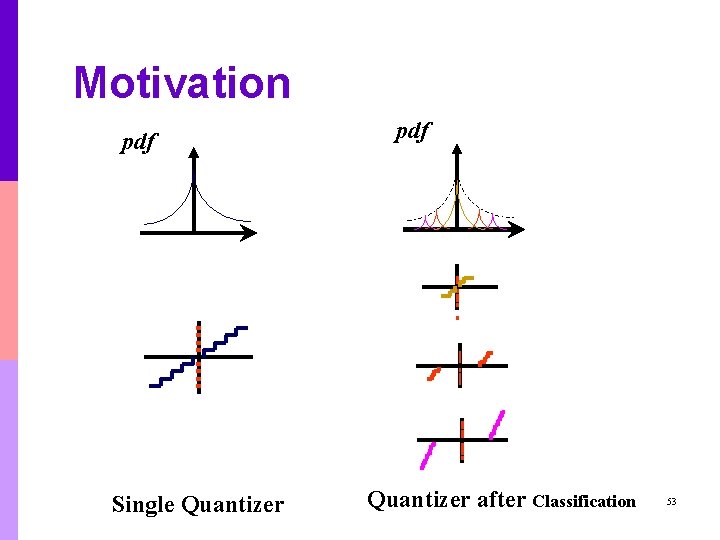

Motivation (context-based classification and quantization)
- Figure panels: the pdf seen by a single quantizer vs. the pdf seen by the quantizer after classification.

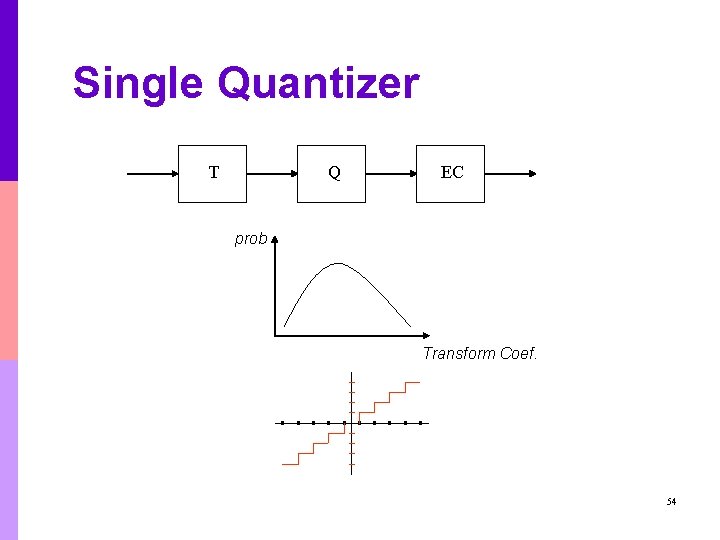

Single Quantizer
- Transform coefficients → T → Q → EC, with the entropy coder driven by a probability estimate (prob).

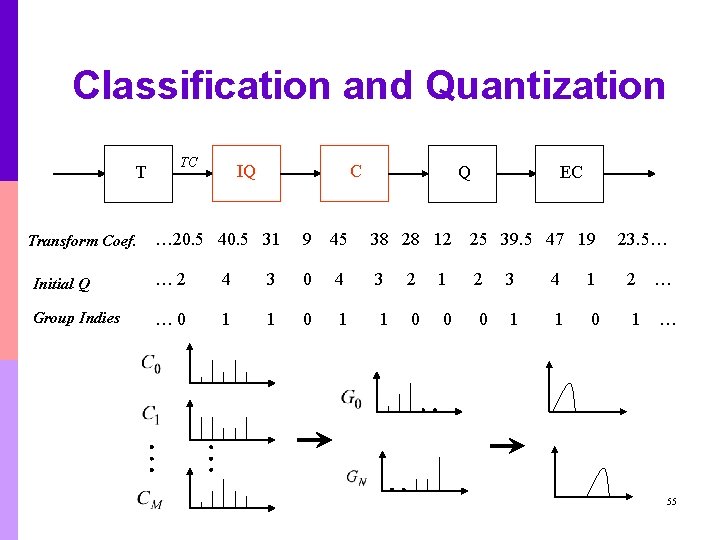

Classification and Quantization
- Pipeline: T → TC → IQ → C → Q → EC.
- Figure: example transform coefficients, their initial quantization (Initial Q) indices, and the resulting group indices.

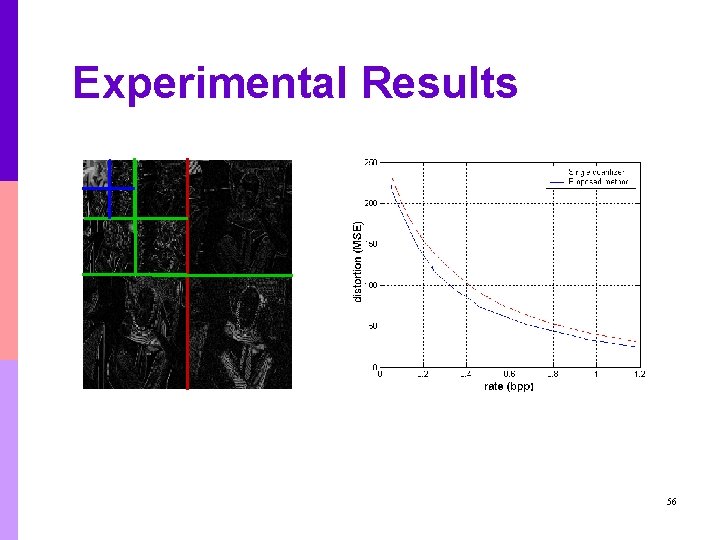

Experimental Results

Conclusions
- Non-binary source:
  - A new context quantization method is proposed.
  - Efficient context description strategies.
- Binary source:
  - Globally optimal partition of the context space.
  - MDL-based context quantizer.
- Context-based classification and quantization.

Future Work
- Context-based entropy coder:
  - Context shape optimization.
  - Mismatch between the training set and test data.
- Classification and quantization:
  - Classification tree among the wavelet subbands.
- Apply these techniques to video codecs.

?