Conjugate Gradient. Problem: SD too slow to converge

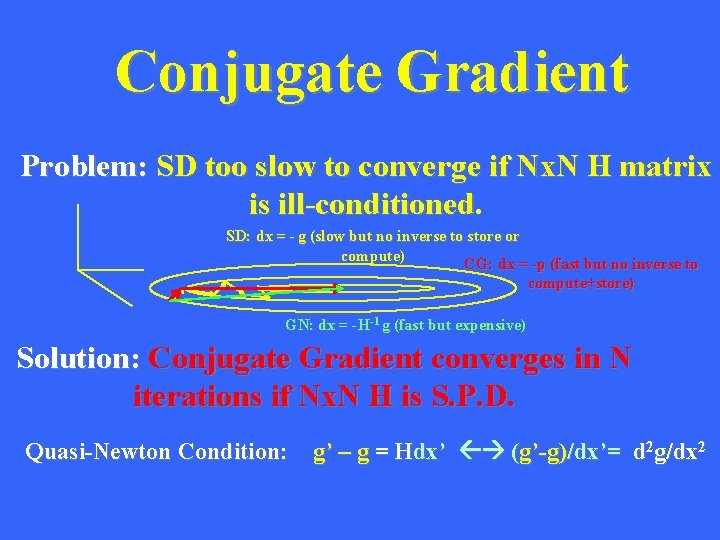

Conjugate Gradient

Problem: Steepest descent (SD) is too slow to converge if the $N \times N$ matrix $H$ is ill-conditioned.

- SD: $dx = -g$ (slow, but no inverse to store or compute)
- CG: $dx = -p$ (fast, and no inverse to compute or store)
- GN: $dx = -H^{-1} g$ (fast but expensive)

Solution: Conjugate gradient converges in $N$ iterations if the $N \times N$ matrix $H$ is symmetric positive definite (S.P.D.).

Quasi-Newton condition: $g' - g = H\,dx'$, i.e. in one dimension $(g' - g)/dx' \approx d^2 f/dx^2$ with $g = df/dx$.

Outline

- CG Algorithm
- Step Length: Polak-Ribiere vs Fletcher-Reeves
- CG Soln to Even & Overdetermined Equations
- Regularized CG
- Preconditioned CG
- Non-Linear CG

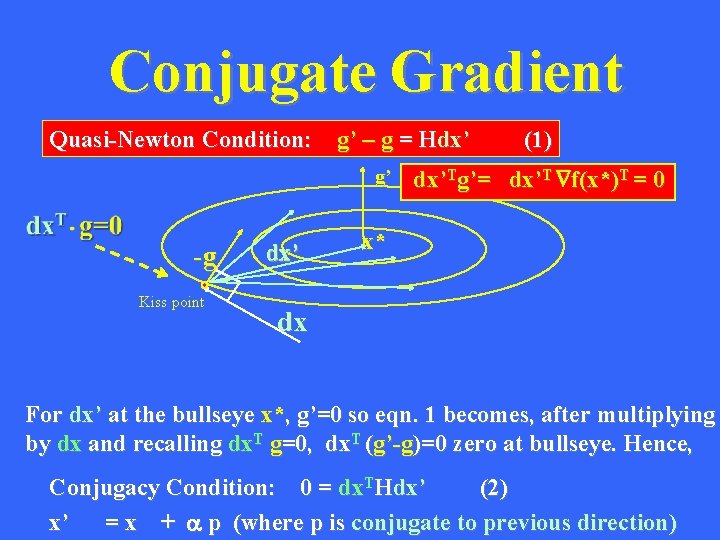

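Conjugate Gradient

Figure labels: $g' - g$, $dx$, $dx'$, kiss point, $x^*$.

Quasi-Newton condition:

$$g' - g = H\,dx' \qquad (1)$$

Here $dx$ is the previous step, which ends at the kiss point where $dx^T g = 0$, and $dx'$ is the next step. For $dx'$ ending at the bullseye $x^*$, $g' = \nabla f(x^*) = 0$, so $dx'^T g' = 0$ as well. Multiplying eqn. 1 by $dx^T$ and recalling $dx^T g = 0$ gives $dx^T (g' - g) = 0$ at the bullseye. Hence,

Conjugacy condition:

$$0 = dx^T H\,dx' \qquad (2)$$

The update is $x' = x + a\,p$, where $p$ is conjugate to the previous direction.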

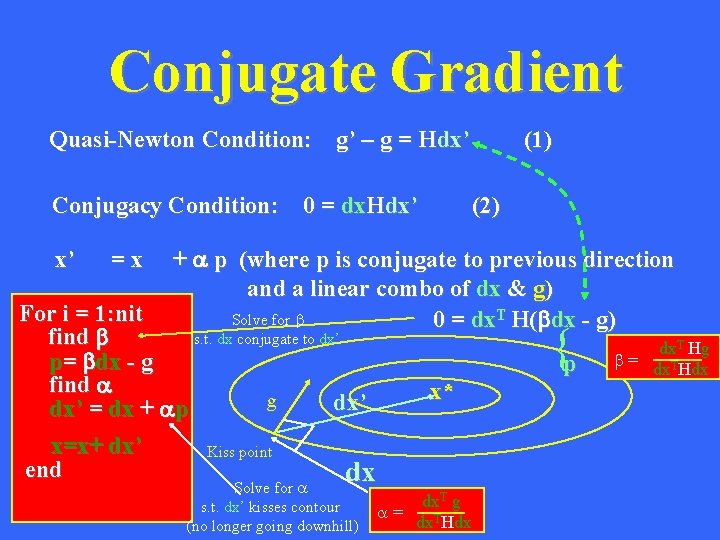

Conjugate Gradient

Figure labels: $g$, $dx$, $dx'$, $p$, kiss point, $x^*$.

Quasi-Newton condition: $g' - g = H\,dx'$ (1)

Conjugacy condition: $0 = dx^T H\,dx'$ (2)

The update is $x' = x + a\,p$, where $p$ is conjugate to the previous direction and is a linear combination of $dx$ and $g$.

Solve for $b$ such that $dx$ is conjugate to $dx'$: $0 = dx^T H (b\,dx - g)$, so

$$b = \frac{dx^T H g}{dx^T H\,dx}$$

Solve for $a$ such that $dx'$ kisses a contour (no longer going downhill): $0 = p^T g' = p^T (g + a H p)$, so

$$a = -\frac{p^T g}{p^T H p}$$

For $i = 1, \dots, nit$:

1. find $b$
2. $p = b\,dx - g$
3. find $a$
4. $dx' = a\,p$
5. $x = x + dx'$

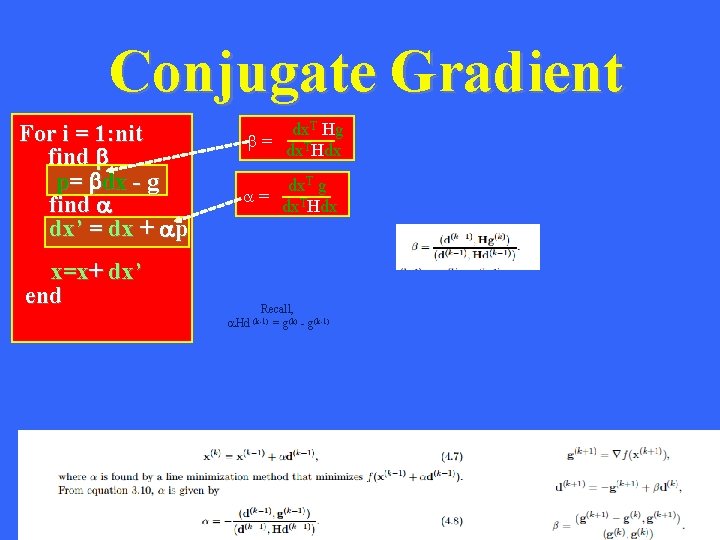

Conjugate Gradient

For $i = 1, \dots, nit$:

1. find $b$
2. $p = b\,dx - g$
3. find $a$
4. $dx' = a\,p$
5. $x = x + dx'$

$$b = \frac{dx^T H g}{dx^T H\,dx}, \qquad a = -\frac{p^T g}{p^T H p}$$

Recall: $a\,H d^{(k-1)} = g^{(k)} - g^{(k-1)}$
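The loop above can be sketched in a few lines of dependency-free Python. This is a minimal illustration, not the lecturer's code: it assumes a quadratic objective with gradient $g = Hx - \text{rhs}$, uses plain lists instead of an array library, and the names `conjugate_gradient`, `dot`, `matvec`, and `rhs` are hypothetical. The step length is computed along the search direction $p$, and the first iteration falls back to steepest descent because no previous step exists yet.

```python
def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def matvec(H, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [dot(row, v) for row in H]


def conjugate_gradient(H, rhs, x0, nit):
    """Minimize 0.5 x^T H x - x^T rhs for SPD H (assumed form of the problem)."""
    x = list(x0)
    dx = None  # previous step; None means "no previous step yet"
    for _ in range(nit):
        g = [hx - r for hx, r in zip(matvec(H, x), rhs)]  # gradient H x - rhs
        if dot(g, g) < 1e-30:  # already at the bullseye
            break
        if dx is None:
            p = [-gi for gi in g]  # first step: steepest descent
        else:
            Hdx = matvec(H, dx)
            b = dot(Hdx, g) / dot(dx, Hdx)  # b = dx^T H g / dx^T H dx
            p = [b * di - gi for di, gi in zip(dx, g)]  # p = b dx - g
        Hp = matvec(H, p)
        a = -dot(p, g) / dot(p, Hp)  # step to the kiss point along p
        dx = [a * pi for pi in p]
        x = [xi + di for xi, di in zip(x, dx)]
    return x


# Small SPD example: N = 3, so N iterations should suffice.
H_demo = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
rhs_demo = [1.0, 2.0, 3.0]
x_demo = conjugate_gradient(H_demo, rhs_demo, [0.0, 0.0, 0.0], nit=3)
print(x_demo)
```

Because $b$ is chosen so that $dx^T H p = 0$, each new direction is conjugate to the previous step, which is what gives termination in at most $N$ iterations for an $N \times N$ SPD matrix in exact arithmetic.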

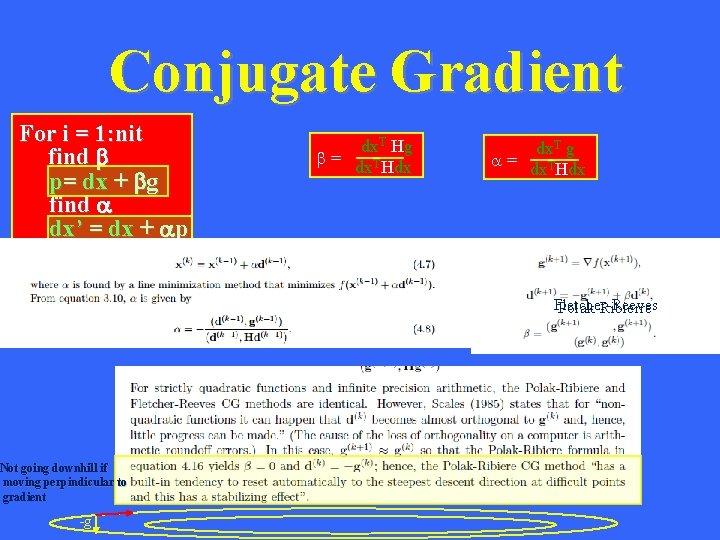

Conjugate Gradient

For $i = 1, \dots, nit$:

1. find $b$
2. $p = b\,dx - g$
3. find $a$
4. $dx' = a\,p$
5. $x = x + dx'$

Not going downhill if moving perpendicular to the gradient.

$$b = \frac{dx^T H g}{dx^T H\,dx}, \qquad a = -\frac{p^T g}{p^T H p}$$

Formulas for $b$ that avoid $H$: Fletcher-Reeves and Polak-Ribiere.
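The two named formulas can be recovered from the relation $a\,H d^{(k-1)} = g^{(k)} - g^{(k-1)}$. The derivation below is a sketch in standard textbook notation, which is an assumption here: $d^{(k)} = -g^{(k)} + \beta^{(k)} d^{(k-1)}$ is the search direction, so with $dx = a\,d^{(k-1)}$ the coefficient $\beta^{(k)}$ corresponds to $a\,b$ in the loop above.

```latex
% Conjugacy of d^{(k)} with d^{(k-1)} gives beta in terms of H:
\beta^{(k)} = \frac{g^{(k)T} H d^{(k-1)}}{d^{(k-1)T} H d^{(k-1)}}

% Substitute a H d^{(k-1)} = g^{(k)} - g^{(k-1)} to eliminate H:
\beta^{(k)} = \frac{g^{(k)T}\,(g^{(k)} - g^{(k-1)})}{d^{(k-1)T}\,(g^{(k)} - g^{(k-1)})}

% At the kiss point d^{(k-1)T} g^{(k)} = 0, and
% d^{(k-1)T} g^{(k-1)} = -g^{(k-1)T} g^{(k-1)}, which gives Polak-Ribiere:
\beta^{(k)}_{PR} = \frac{g^{(k)T}\,(g^{(k)} - g^{(k-1)})}{g^{(k-1)T} g^{(k-1)}}

% For a quadratic objective successive gradients are orthogonal,
% g^{(k)T} g^{(k-1)} = 0, which gives Fletcher-Reeves:
\beta^{(k)}_{FR} = \frac{g^{(k)T} g^{(k)}}{g^{(k-1)T} g^{(k-1)}}
```

The two expressions agree for an exactly quadratic objective with exact line searches; they differ only when successive gradients are not orthogonal, as in non-linear problems.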

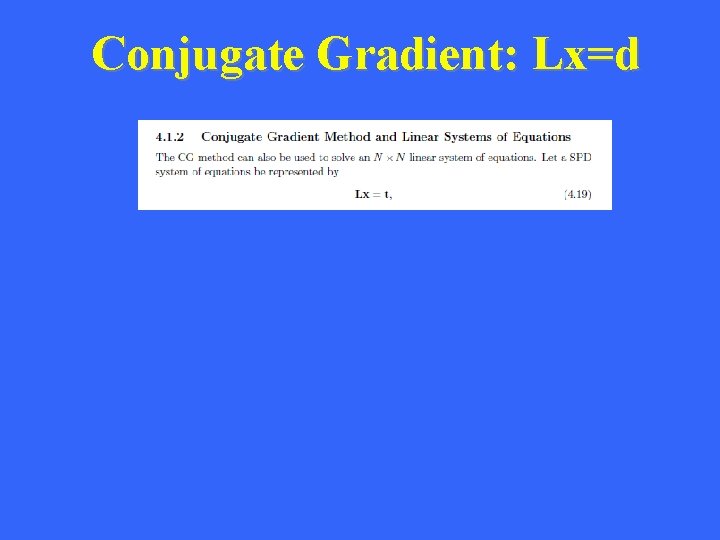

Conjugate Gradient: Lx=d

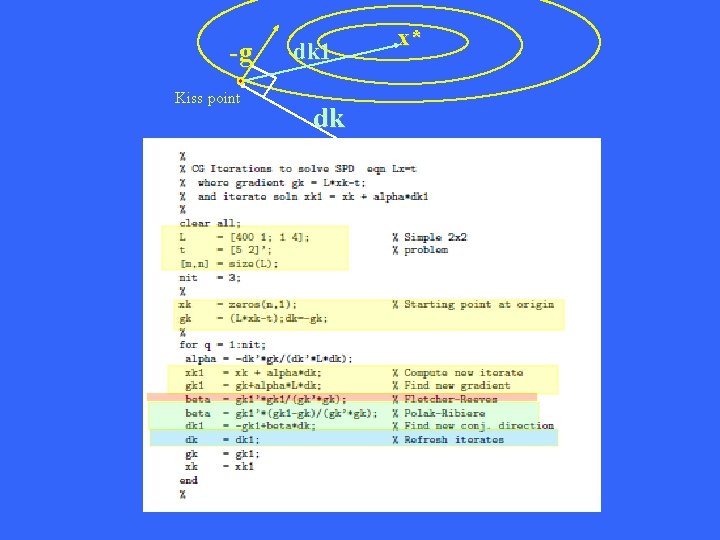

Figure labels: $-g$, kiss point, $d_{k-1}$, $d_k$, $x^*$.

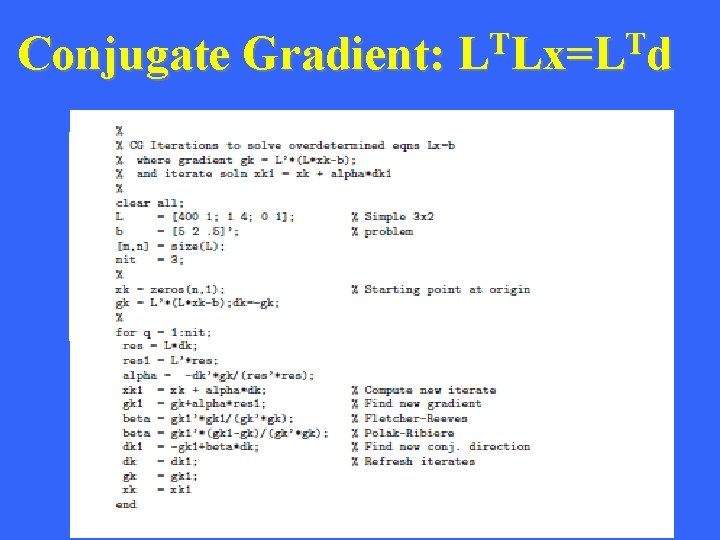

Conjugate Gradient: $L^T L x = L^T d$

Compared to a square system of equations, the gradient for an overdetermined system of equations has an extra $L^T$. However, $L^T L$ has the squared condition number of $L$.
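A minimal sketch of CG applied to the normal equations is given below. It assumes the least-squares gradient $g = L^T(Lx - d)$ and never forms $L^T L$ explicitly, applying $L$ and $L^T$ in turn instead. The function names (`cg_normal_equations`, `rmatvec`) and the small line-fitting example are hypothetical, and plain lists are used to keep the sketch dependency-free.

```python
def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def matvec(A, v):
    """A v for a matrix stored as a list of rows."""
    return [dot(row, v) for row in A]


def rmatvec(A, v):
    """A^T v without building the transpose."""
    return [sum(A[i][j] * v[i] for i in range(len(A))) for j in range(len(A[0]))]


def cg_normal_equations(L, d, nit):
    """Approximately solve L^T L x = L^T d, starting from x = 0."""
    x = [0.0] * len(L[0])
    r = list(d)                          # data residual d - L x
    g = [-s for s in rmatvec(L, r)]      # gradient L^T (L x - d)
    p = [-gi for gi in g]                # first direction: steepest descent
    gg = dot(g, g)
    for _ in range(nit):
        if gg < 1e-30:                   # gradient vanished: converged
            break
        Lp = matvec(L, p)
        a = gg / dot(Lp, Lp)             # p^T L^T L p computed as |L p|^2
        x = [xi + a * pi for xi, pi in zip(x, p)]
        r = [ri - a * lpi for ri, lpi in zip(r, Lp)]
        g = [-s for s in rmatvec(L, r)]
        gg_new = dot(g, g)
        b = gg_new / gg                  # Fletcher-Reeves form
        p = [-gi + b * pi for gi, pi in zip(g, p)]
        gg = gg_new
    return x


# Fit a line y = c0 + c1 * t through (0, 1), (1, 2), (2, 2): 3 equations, 2 unknowns.
L_demo = [[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]]
d_demo = [1.0, 2.0, 2.0]
print(cg_normal_equations(L_demo, d_demo, nit=2))
```

With two unknowns, two iterations reach the least-squares solution up to rounding. Working with $Lp$ and $L^T r$ avoids storing $L^T L$, although the convergence rate is still governed by its squared condition number.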

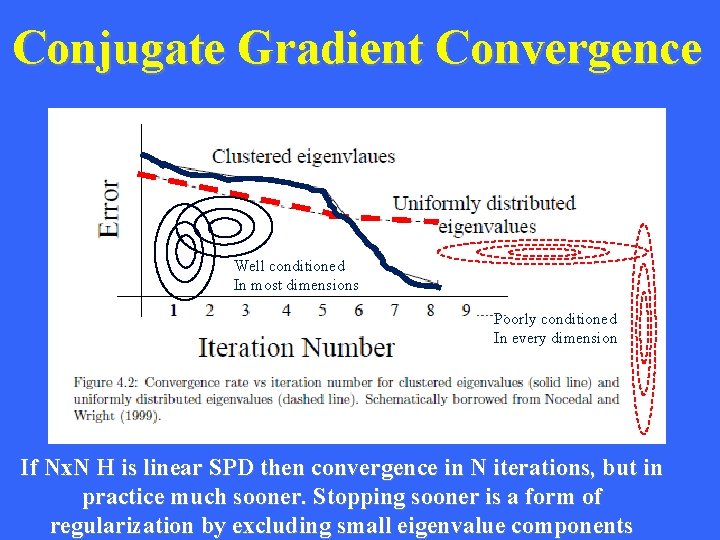

Conjugate Gradient Convergence

Figure panels: well conditioned in most dimensions; poorly conditioned in every dimension.

If the $N \times N$ matrix $H$ is SPD and the problem is linear, then CG converges in $N$ iterations, but in practice much sooner. Stopping sooner is a form of regularization because it excludes the small-eigenvalue components.

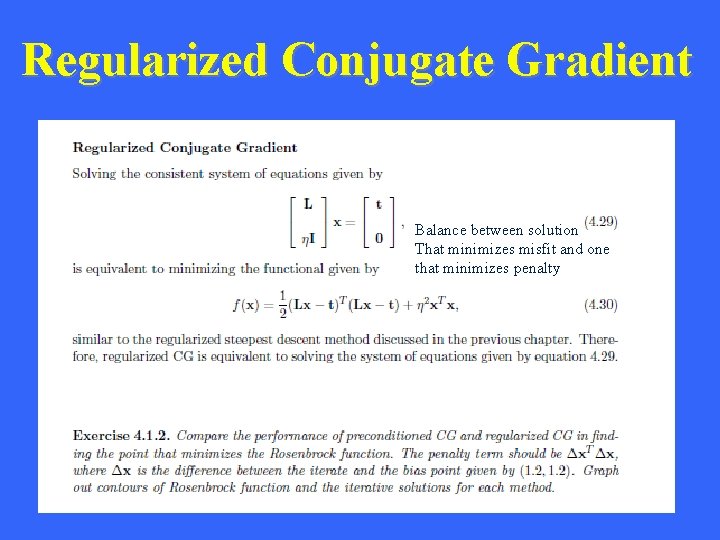

Regularized Conjugate Gradient

Balance between the solution that minimizes the misfit and the one that minimizes the penalty.
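One common way to write this balance is sketched below. It assumes a damping (Tikhonov-style) penalty on the model norm with a trade-off weight $\lambda$; the slide's own penalty term is not recoverable from the text, so both the penalty and the symbol $\lambda$ are assumptions.

```latex
% Objective: data misfit plus a weighted penalty on the model norm
\epsilon = \tfrac{1}{2}\,\lVert Lx - d \rVert^2 + \tfrac{\lambda}{2}\,\lVert x \rVert^2

% Gradient used by CG
g = L^T (Lx - d) + \lambda x

% Setting g = 0 gives the regularized normal equations
(L^T L + \lambda I)\,x = L^T d
```

A larger $\lambda$ favors the small-penalty solution, while $\lambda \to 0$ recovers the unregularized least-squares problem. Adding $\lambda I$ also raises the smallest eigenvalue of the system matrix, which improves its condition number.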

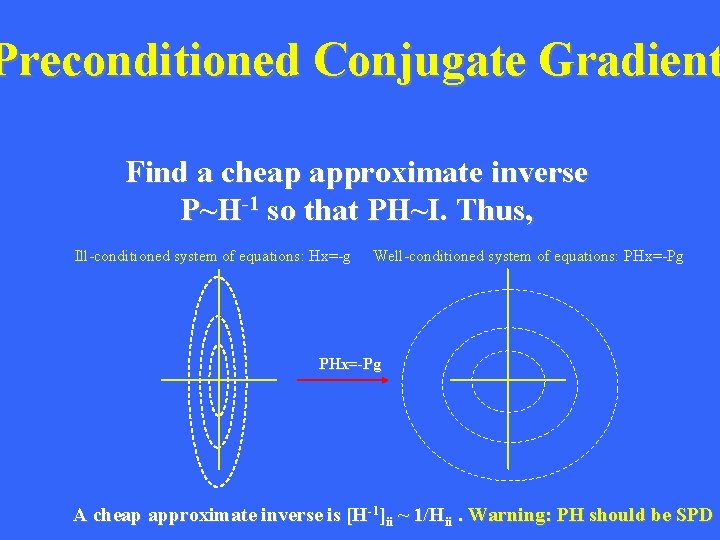

Preconditioned Conjugate Gradient

Find a cheap approximate inverse $P \approx H^{-1}$ so that $PH \approx I$. Thus,

- Ill-conditioned system of equations: $Hx = -g$
- Well-conditioned system of equations: $PHx = -Pg$

A cheap approximate inverse is $[H^{-1}]_{ii} \approx 1/H_{ii}$.

Warning: $PH$ should be SPD.
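The diagonal approximate inverse can be sketched as follows. This uses the standard preconditioned CG recurrence, which applies $P$ to the residual at each iteration rather than forming $PH$; that choice, the function name `preconditioned_cg`, and the diagonal test matrix are assumptions made for illustration.

```python
import math


def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def matvec(H, v):
    """H v for a matrix stored as a list of rows."""
    return [dot(row, v) for row in H]


def preconditioned_cg(H, rhs, precond_diag, maxit=100, tol=1e-10):
    """Solve H x = rhs; precond_diag holds the diagonal of P (P ~ H^-1)."""
    x = [0.0] * len(rhs)
    r = list(rhs)                                    # residual rhs - H x
    if math.sqrt(dot(r, r)) <= tol:
        return x, 0
    z = [pi * ri for pi, ri in zip(precond_diag, r)]  # z = P r
    p = list(z)
    rz = dot(r, z)
    for it in range(1, maxit + 1):
        Hp = matvec(H, p)
        a = rz / dot(p, Hp)
        x = [xi + a * pi for xi, pi in zip(x, p)]
        r = [ri - a * hpi for ri, hpi in zip(r, Hp)]
        if math.sqrt(dot(r, r)) <= tol:
            return x, it
        z = [pi * ri for pi, ri in zip(precond_diag, r)]
        rz_new = dot(r, z)
        b = rz_new / rz
        p = [zi + b * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, maxit


# Badly scaled diagonal system: the Jacobi choice P_ii = 1 / H_ii is exact here.
H_demo = [[1.0, 0.0, 0.0], [0.0, 64.0, 0.0], [0.0, 0.0, 4096.0]]
rhs_demo = [1.0, 1.0, 1.0]
jacobi = [1.0 / H_demo[i][i] for i in range(3)]
x_pc, it_pc = preconditioned_cg(H_demo, rhs_demo, jacobi)
x_plain, it_plain = preconditioned_cg(H_demo, rhs_demo, [1.0, 1.0, 1.0])
print(it_pc, it_plain)
```

Passing a vector of ones as `precond_diag` reduces the routine to plain CG, which makes the two iteration counts directly comparable. For a diagonal $H$ the Jacobi preconditioner is the exact inverse, so this example is a best case; for a general matrix it only removes the scaling part of the ill-conditioning.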

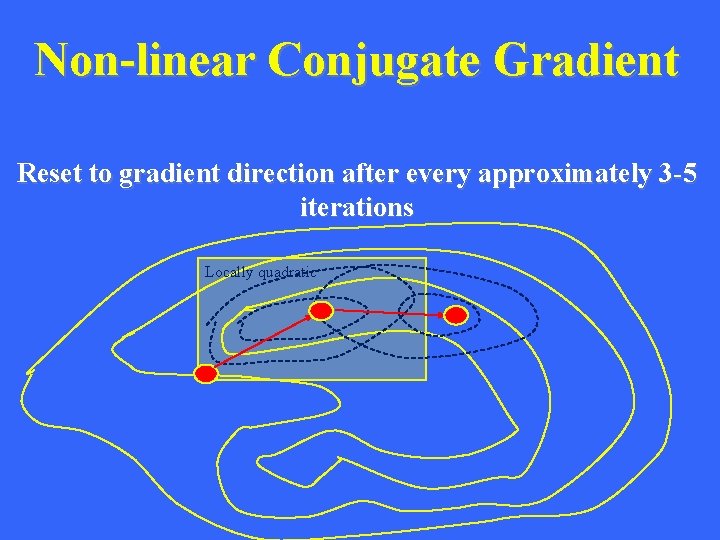

Non-linear Conjugate Gradient

Reset to the gradient direction approximately every 3-5 iterations, because the objective function is only locally quadratic.
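A minimal sketch of non-linear CG with periodic resets is shown below. Several choices here are assumptions rather than anything stated on the slide: the Polak-Ribiere coefficient clipped at zero, a simple backtracking (Armijo) line search in place of an exact step length, a reset every 4 iterations, and a hypothetical convex test function `f_demo` with its minimum at $(1, -2)$.

```python
import math


def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def nonlinear_cg(f, grad, x0, restart=4, maxit=500, tol=1e-6):
    """Minimize f with Polak-Ribiere CG, resetting to -g every `restart` steps."""
    x = list(x0)
    g = grad(x)
    p = [-gi for gi in g]
    for k in range(maxit):
        if math.sqrt(dot(g, g)) < tol:
            return x, k
        slope = dot(g, p)
        if slope >= 0.0:                 # not a descent direction: reset
            p = [-gi for gi in g]
            slope = -dot(g, g)
        # Backtracking line search: halve t until sufficient decrease.
        t, fx = 1.0, f(x)
        for _ in range(60):
            x_new = [xi + t * pi for xi, pi in zip(x, p)]
            if f(x_new) <= fx + 1e-4 * t * slope:
                break
            t *= 0.5
        g_new = grad(x_new)
        if (k + 1) % restart == 0:
            beta = 0.0                   # periodic reset to the gradient direction
        else:
            y = [gn - go for gn, go in zip(g_new, g)]
            beta = max(0.0, dot(g_new, y) / dot(g, g))  # clipped Polak-Ribiere
        p = [-gn + beta * pi for gn, pi in zip(g_new, p)]
        x, g = x_new, g_new
    return x, maxit


def f_demo(v):
    """Convex, non-quadratic test function with minimum at (1, -2)."""
    return (v[0] - 1.0) ** 4 + (v[0] - 1.0) ** 2 + 2.0 * (v[1] + 2.0) ** 2


def grad_demo(v):
    """Analytic gradient of f_demo."""
    return [4.0 * (v[0] - 1.0) ** 3 + 2.0 * (v[0] - 1.0), 4.0 * (v[1] + 2.0)]


x_min, n_iter = nonlinear_cg(f_demo, grad_demo, [3.0, 1.0])
print(x_min, n_iter)
```

Setting `beta = 0.0` discards the accumulated direction, so that iteration is a plain steepest-descent step. This limits the damage when conjugacy, which was derived for a quadratic, no longer holds far from the minimum.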

- Slides: 18