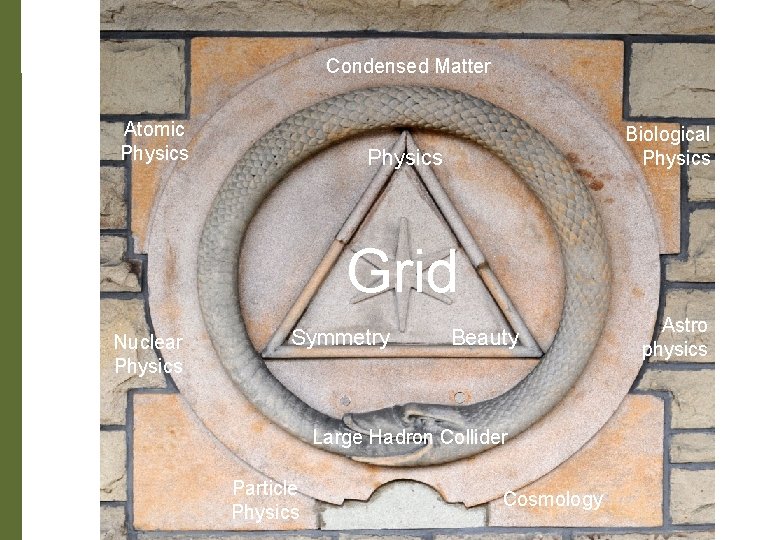


Condensed Matter, Atomic Physics, Biological Physics, Grid, Nuclear Physics, Symmetry, Beauty, Large Hadron Collider, Particle Physics, Cosmology, Astrophysics

Avoiding Gridlock Tony Doyle Particle Physics Masterclass Glasgow, 11 June 2009

Outline Introduction – Origin - Why? What is the Grid? The Icemen Cometh How does the Grid work? When will it be ready?

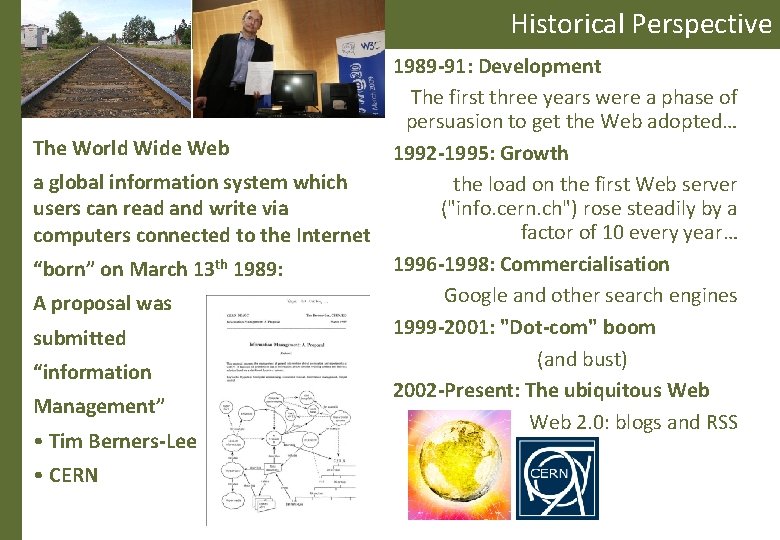

Historical Perspective
The World Wide Web: a global information system which users can read and write via computers connected to the Internet
• “Born” on March 13th 1989: a proposal, “Information Management”, was submitted (Tim Berners-Lee, CERN)
• 1989-91: Development. The first three years were a phase of persuasion to get the Web adopted…
• 1992-1995: Growth. The load on the first Web server ("info.cern.ch") rose steadily by a factor of 10 every year…
• 1996-1998: Commercialisation. Google and other search engines
• 1999-2001: "Dot-com" boom (and bust)
• 2002-Present: The ubiquitous Web 2.0: blogs and RSS

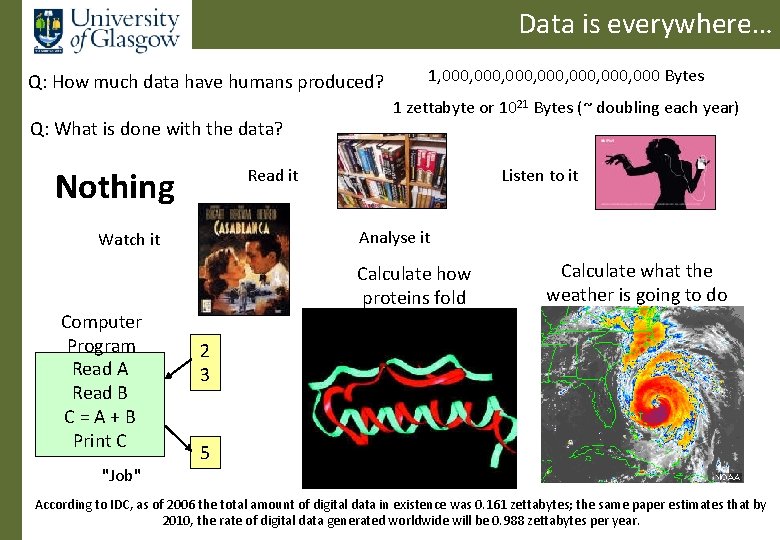

Data is everywhere…
Q: How much data have humans produced? 1 zettabyte or 10^21 bytes (~ doubling each year)
Q: What is done with the data?
• Nothing
• Read it
• Listen to it
• Watch it
• Analyse it
"Job": a computer program, e.g. Read A, Read B, C = A + B, Print C (2 + 3 = 5)
• 1,000,000,000 bytes
• Calculate how proteins fold
• Calculate what the weather is going to do
According to IDC, as of 2006 the total amount of digital data in existence was 0.161 zettabytes; the same paper estimates that by 2010, the rate of digital data generated worldwide will be 0.988 zettabytes per year.
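The slide's toy "job" (Read A, Read B, C = A + B, Print C) can be written out as a minimal Python sketch. The function name `run_job` is an illustrative assumption, not something from the slides; the input values 2 and 3 are the ones shown on the slide.

```python
def run_job(a, b):
    """The slide's toy job: take inputs A and B, compute C = A + B, return C."""
    c = a + b
    return c


# The slide's example inputs: A = 2, B = 3.
result = run_job(2, 3)
print(result)
```

A Grid job is the same idea at scale: a program plus its input data, sent to wherever there is a free CPU.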

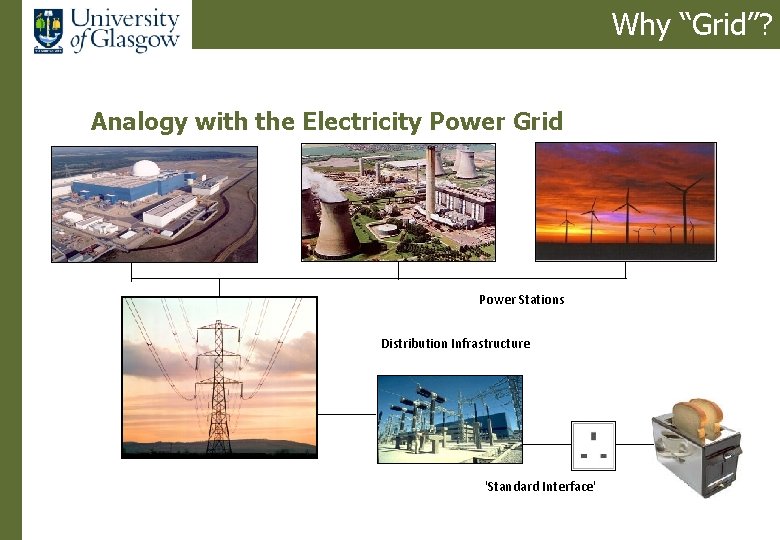

Why “Grid”? Analogy with the Electricity Power Grid: Power Stations, Distribution Infrastructure, 'Standard Interface'

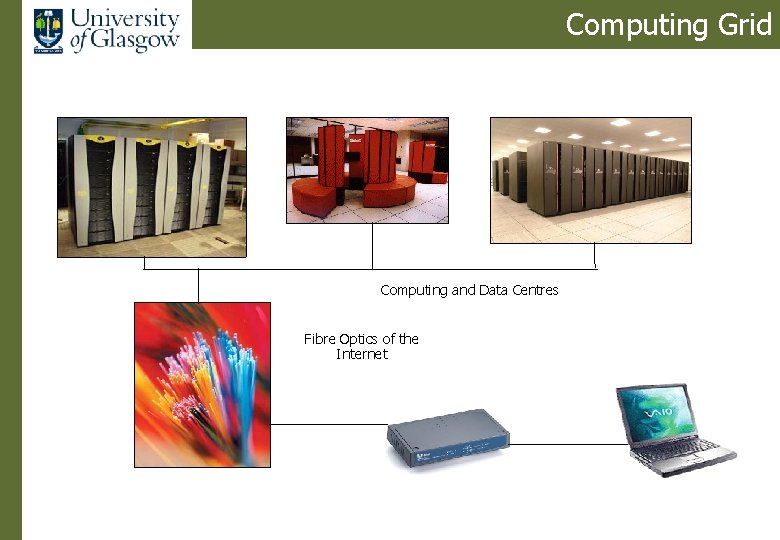

Computing Grid: Computing and Data Centres, Fibre Optics of the Internet

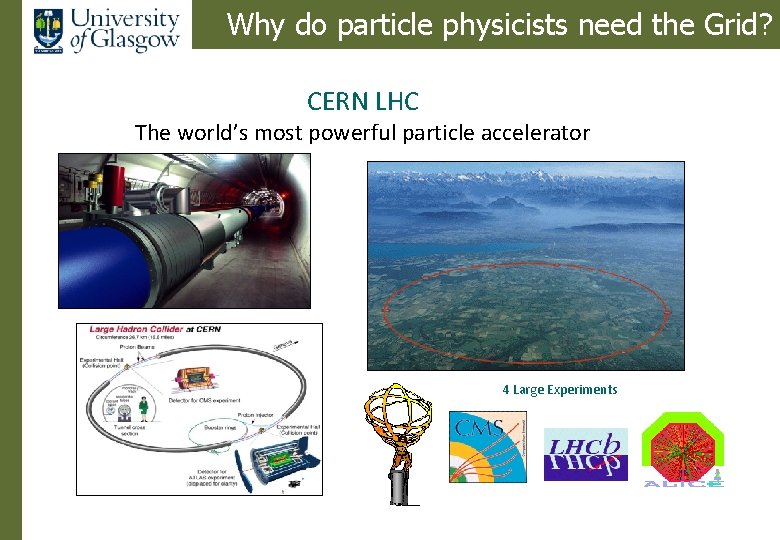

Why do particle physicists need the Grid? CERN LHC: the world’s most powerful particle accelerator, 4 large experiments

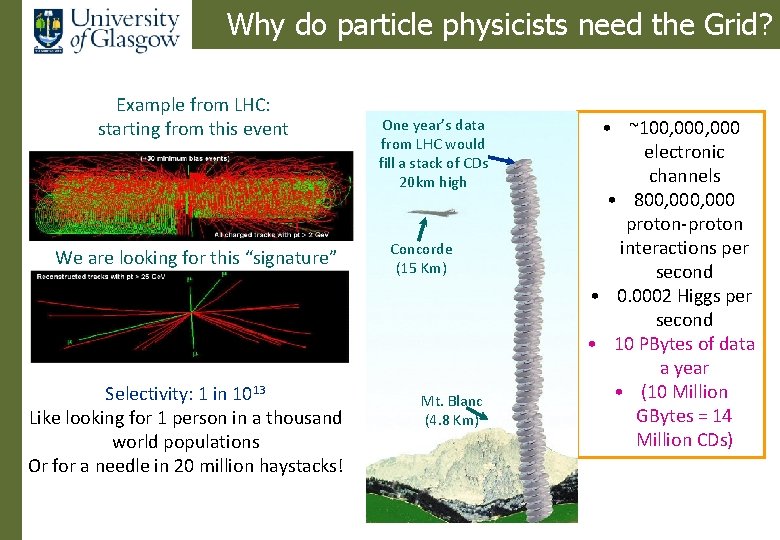

Why do particle physicists need the Grid?
Example from LHC: starting from this event, we are looking for this “signature”
• Selectivity: 1 in 10^13
• Like looking for 1 person in a thousand world populations
• Or for a needle in 20 million haystacks!
• One year’s data from LHC would fill a stack of CDs 20 km high (compare: Concorde 15 km, Mt. Blanc 4.8 km)
• ~100,000 electronic channels
• 800,000 proton-proton interactions per second
• 0.0002 Higgs per second
• 10 PBytes of data a year (10 Million GBytes = 14 Million CDs)
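A rough check of the slide's comparisons, as a sketch. The CD capacity (700 MB), the bare-disc thickness (1.2 mm) and the world population (about 6.8 billion) are assumptions added here; only the 10 PB per year comes from the slide.

```python
# Assumptions (not from the slide): CD capacity, disc thickness, world population.
CD_CAPACITY_BYTES = 700e6   # assumed 700 MB per CD
CD_THICKNESS_M = 1.2e-3     # assumed 1.2 mm per bare disc
WORLD_POPULATION = 6.8e9    # assumed, roughly the 2009 figure

# From the slide: 10 PBytes of LHC data per year.
LHC_BYTES_PER_YEAR = 10e15


def cds_needed(total_bytes, capacity=CD_CAPACITY_BYTES):
    """Number of CDs required to hold total_bytes."""
    return total_bytes / capacity


def stack_height_km(n_cds, thickness_m=CD_THICKNESS_M):
    """Height in km of a stack of n_cds bare discs."""
    return n_cds * thickness_m / 1000.0


n_cds = cds_needed(LHC_BYTES_PER_YEAR)
height_km = stack_height_km(n_cds)
people_in_thousand_worlds = 1000 * WORLD_POPULATION

print(f"CDs per year: {n_cds / 1e6:.1f} million")
print(f"Stack height: {height_km:.1f} km")
print(f"People in a thousand world populations: {people_in_thousand_worlds:.1e}")
```

Under these assumptions the count comes out at about 14 million CDs, matching the slide, and the bare-disc stack is of the same order as the quoted 20 km. A thousand world populations is several times 10^12 people, close to the quoted selectivity of 1 in 10^13.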

The Grid Data
• The Grid enables us to analyse all the data that comes from the LHC
• Petabytes
• 100,000 CPUs
• Distributed around the world
• Now used in many other areas

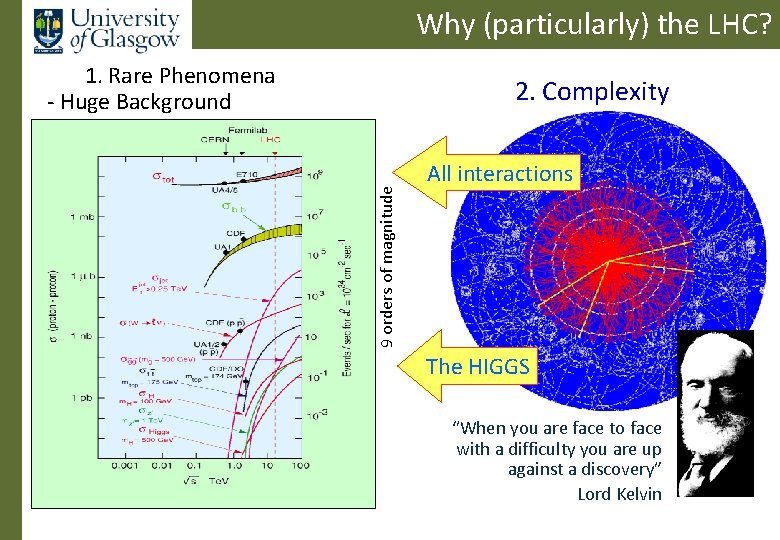

Why (particularly) the LHC?
1. Rare Phenomena - Huge Background: 9 orders of magnitude
2. Complexity
[Figure labels: All interactions, The HIGGS]
“When you are face to face with a difficulty you are up against a discovery” (Lord Kelvin)

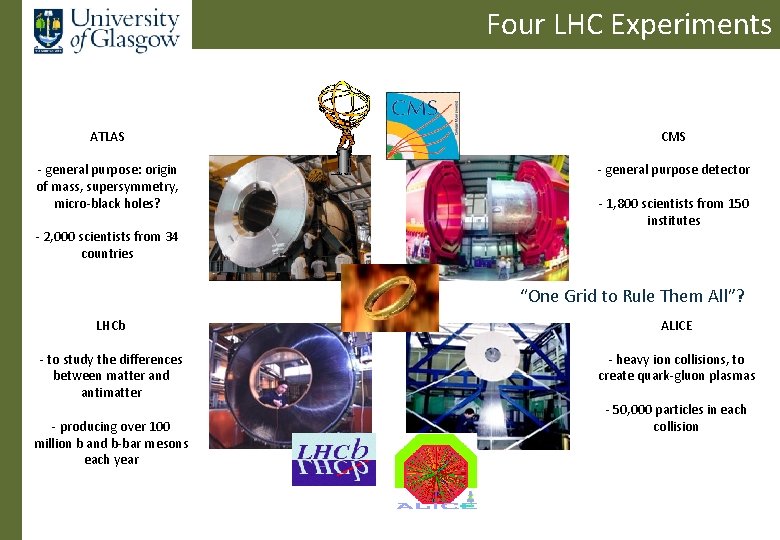

Four LHC Experiments: “One Grid to Rule Them All”?
• ATLAS - general purpose: origin of mass, supersymmetry, micro-black holes? - 2,000 scientists from 34 countries
• CMS - general purpose detector - 1,800 scientists from 150 institutes
• LHCb - to study the differences between matter and antimatter - producing over 100 million b and b-bar mesons each year
• ALICE - heavy ion collisions, to create quark-gluon plasmas - 50,000 particles in each collision

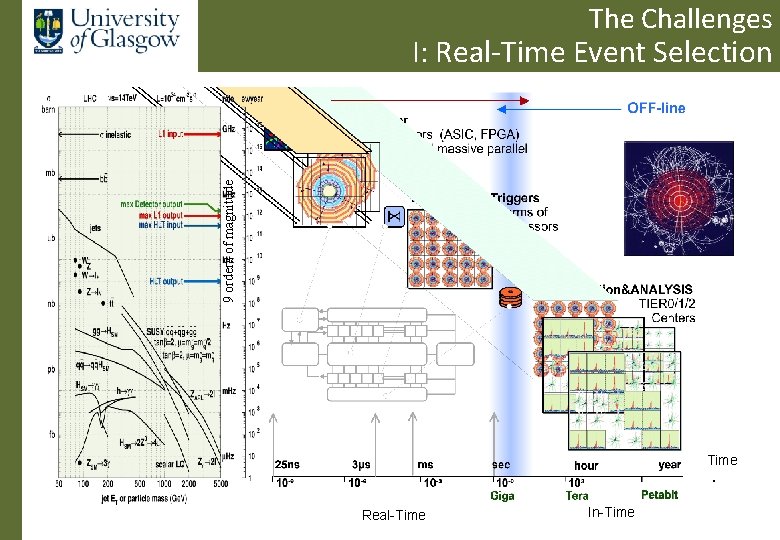

The Challenges I: Real-Time Event Selection
9 orders of magnitude
[Figure labels: Time, Real-Time, In-Time]

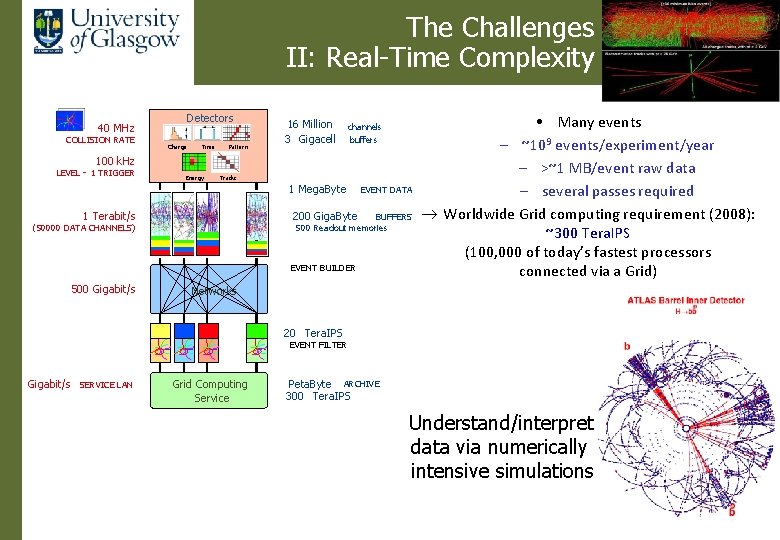

The Challenges II: Real-Time Complexity
[Figure: trigger and data-acquisition chain. Labels: Detectors (Charge, Time, Pattern); 40 MHz COLLISION RATE; 16 Million channels; 3 Gigacell buffers; 100 kHz LEVEL-1 TRIGGER (Energy, Tracks); 1 MegaByte EVENT DATA; 1 Terabit/s (50000 DATA CHANNELS); 200 GigaByte BUFFERS; 500 Readout memories; EVENT BUILDER; Networks, 500 Gigabit/s; 20 TeraIPS EVENT FILTER; Gigabit/s SERVICE LAN; Grid Computing Service, 300 TeraIPS; PetaByte ARCHIVE]
• Many events
– ~10^9 events/experiment/year
– >~1 MB/event raw data
– several passes required
→ Worldwide Grid computing requirement (2008): ~300 TeraIPS (100,000 of today’s fastest processors connected via a Grid)
Understand/interpret data via numerically intensive simulations
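The numbers on this slide can be tied together with a little arithmetic. This is a sketch only: it treats 1 MB as 10^6 bytes (an assumption) and uses the slide's "~" and ">~" figures as if they were exact.

```python
# Figures from the slide.
COLLISION_RATE_HZ = 40e6   # 40 MHz collision rate
LEVEL1_RATE_HZ = 100e3     # 100 kHz after the Level-1 trigger
EVENTS_PER_YEAR = 1e9      # ~10^9 events per experiment per year
EVENT_SIZE_BYTES = 1e6     # ~1 MB per event, taken as 10^6 bytes (assumption)

# Factor by which the Level-1 trigger reduces the event rate.
level1_rejection = COLLISION_RATE_HZ / LEVEL1_RATE_HZ

# Data rate of 1 MB events leaving Level-1, in terabits per second.
level1_terabits_per_s = LEVEL1_RATE_HZ * EVENT_SIZE_BYTES * 8 / 1e12

# Raw data stored per experiment per year, in petabytes.
raw_petabytes_per_year = EVENTS_PER_YEAR * EVENT_SIZE_BYTES / 1e15

print(f"Level-1 rejection factor: {level1_rejection:.0f}")
print(f"Level-1 output: {level1_terabits_per_s:.1f} Tbit/s")
print(f"Raw data: {raw_petabytes_per_year:.1f} PB per experiment per year")
```

With these inputs the Level-1 output is of the order of the 1 Terabit/s shown in the figure, and the stored raw data is about a petabyte per experiment per year before any reprocessing passes, which is why a PetaByte archive appears at the end of the chain.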

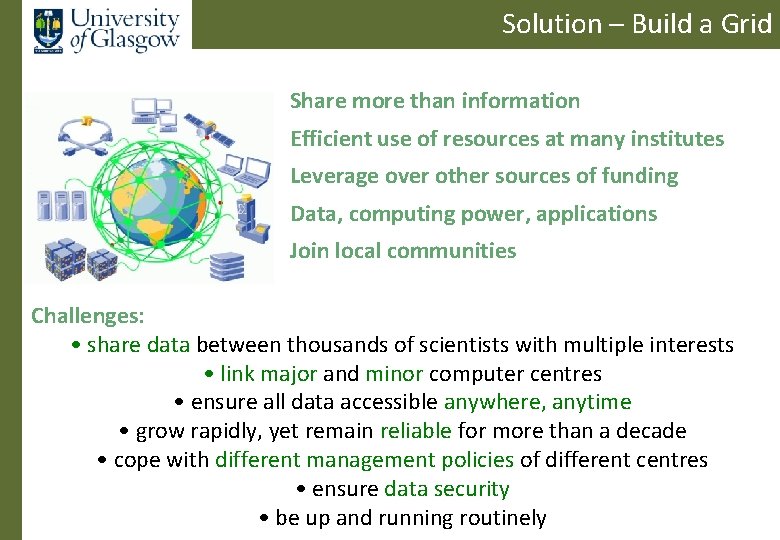

Solution – Build a Grid
• Share more than information
• Efficient use of resources at many institutes
• Leverage over other sources of funding
• Data, computing power, applications
• Join local communities
Challenges:
• share data between thousands of scientists with multiple interests
• link major and minor computer centres
• ensure all data accessible anywhere, anytime
• grow rapidly, yet remain reliable for more than a decade
• cope with different management policies of different centres
• ensure data security
• be up and running routinely

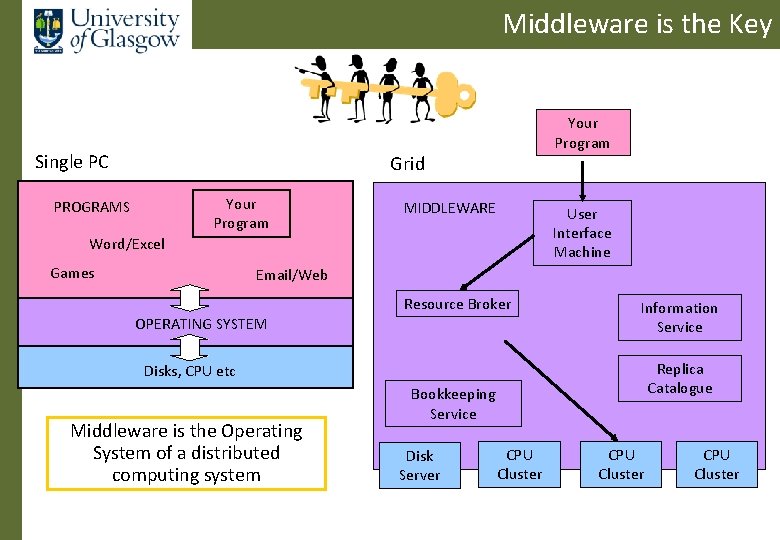

Middleware is the Key
Middleware is the Operating System of a distributed computing system
• Single PC: Your Program; PROGRAMS (Word/Excel, Games, Email/Web); OPERATING SYSTEM; Disks, CPU etc
• Grid: Your Program; MIDDLEWARE (User Interface Machine, Resource Broker, Information Service, Replica Catalogue, Bookkeeping Service); Disk Server, CPU Cluster
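To make the role of the middleware concrete, here is a toy sketch of what a resource broker does: it asks a replica catalogue where the data lives, asks an information service how many CPUs are free, and picks a site. The function `match_site`, the site names and the dataset name are all hypothetical; real Grid middleware is far more elaborate.

```python
def match_site(dataset, cpus_needed, information_service, replica_catalogue):
    """Toy resource broker: choose a site that holds the dataset and has
    enough free CPUs, preferring the site with the most free CPUs.
    Returns None if no site qualifies."""
    sites_with_data = replica_catalogue.get(dataset, set())
    candidates = [
        site for site in sites_with_data
        if information_service.get(site, 0) >= cpus_needed
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda site: information_service[site])


# Hypothetical information service: site name -> free CPUs.
information_service = {"site-a": 120, "site-b": 40, "site-c": 300}

# Hypothetical replica catalogue: dataset name -> sites holding a copy.
replica_catalogue = {"run-001": {"site-a", "site-b"}}

chosen = match_site("run-001", 50, information_service, replica_catalogue)
print(chosen)
```

In this example site-c has the most free CPUs but no copy of the data, so the job goes to site-a. Sending jobs to the data, rather than moving the data to the job, is the design choice the sketch is meant to show.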

Something like this…

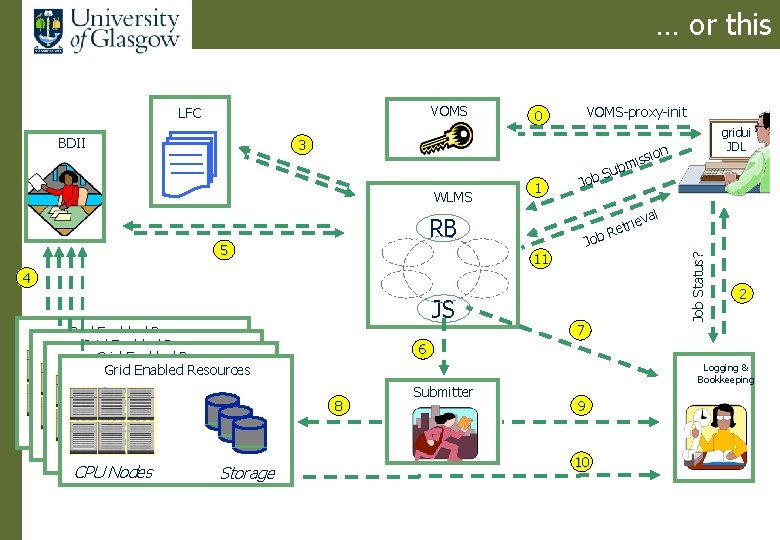

… or this
[Figure: numbered job-submission workflow (steps 0-11). Labels: gridui, VOMS-proxy-init, VOMS, JDL, Job Submission, Submitter, WLMS, RB, BDII, LFC, Logging & Bookkeeping, Grid Enabled Resources (CPU Nodes, Storage), Sub Job, Job Status?, Retrieval]

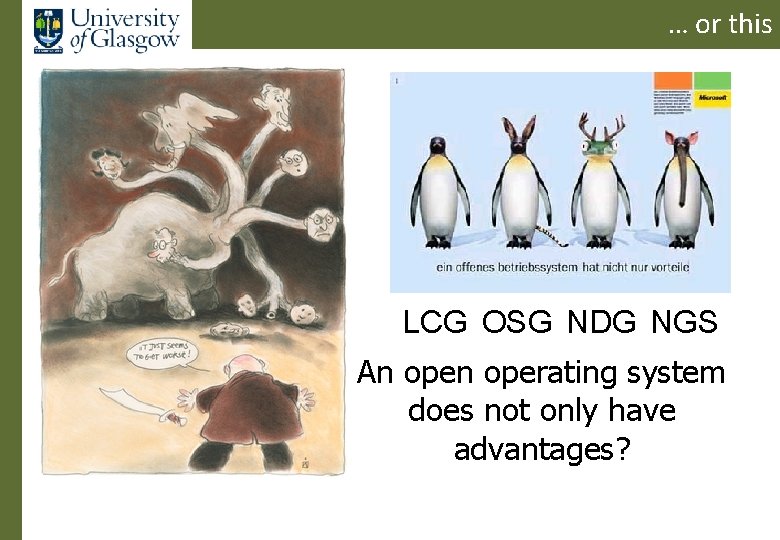

… or this: LCG, OSG, NDG, NGS. An operating system does not only have advantages?

Who do you trust? No-one? It depends on what you want… (assume it's scientific collaboration)

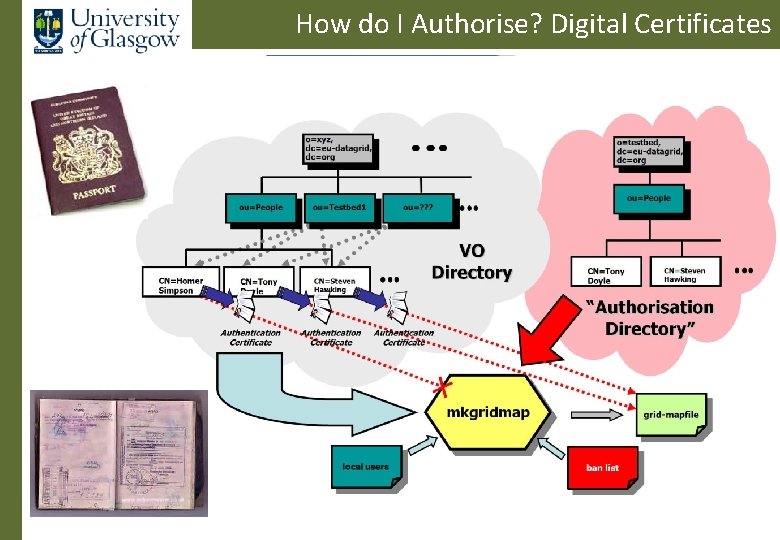

How do I Authorise? Digital Certificates

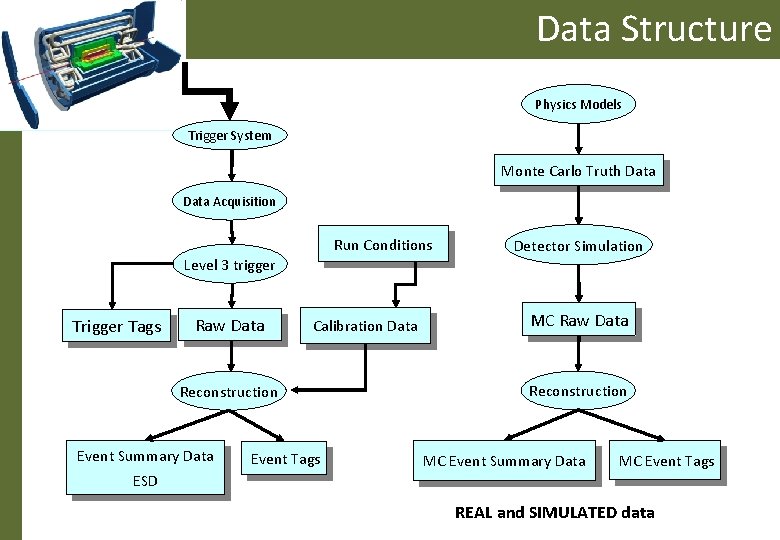

Data Structure: REAL and SIMULATED data
[Figure: data-flow diagram. Labels: Physics Models; Trigger System; Monte Carlo Truth Data; Data Acquisition; Run Conditions; Level 3 trigger; Trigger Tags; Raw Data; Calibration Data; Reconstruction; Event Summary Data (ESD); Event Tags; Detector Simulation; MC Raw Data; Reconstruction; MC Event Summary Data; MC Event Tags]

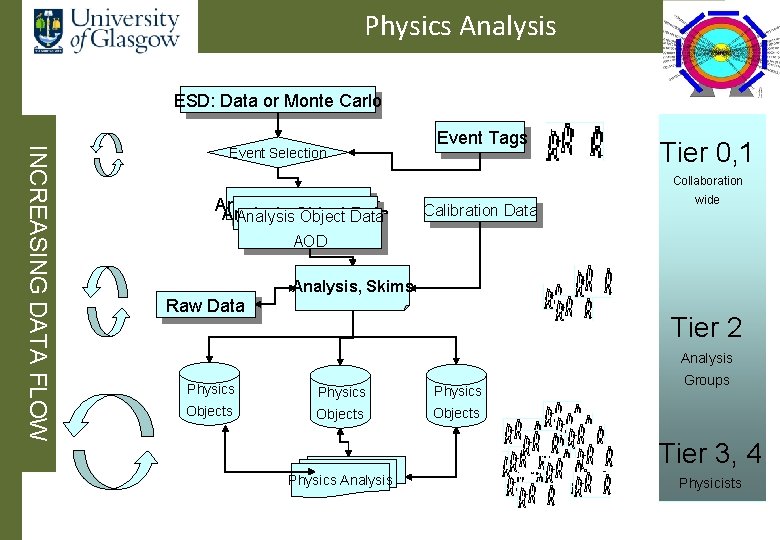

Physics Analysis
[Figure: analysis data flow, INCREASING DATA FLOW. Labels: ESD: Data or Monte Carlo; Event Selection; Event Tags; Calibration Data; Raw Data; Analysis Object Data (AOD); Analysis, Skims; Physics Objects; Physics Analysis]
• Tier 0, 1: Collaboration wide
• Tier 2: Analysis Groups
• Tier 3, 4: Physicists

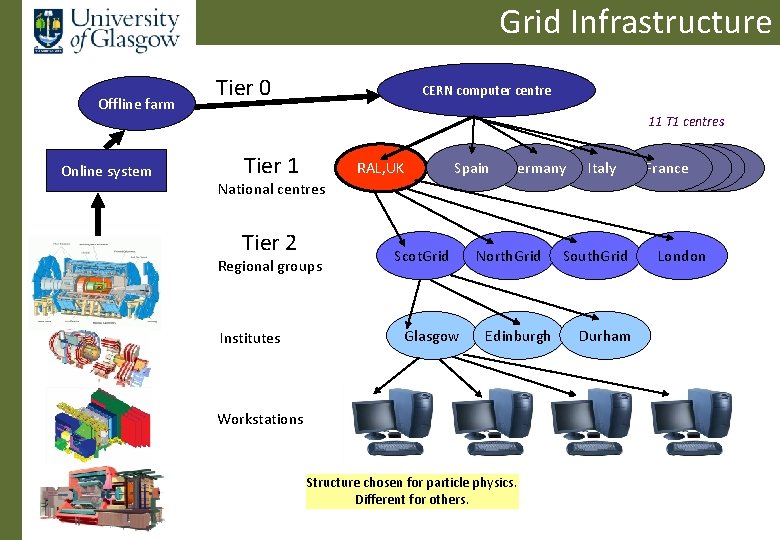

Grid Infrastructure
• Online system, Offline farm
• Tier 0: CERN computer centre
• Tier 1: National centres (11 T1 centres): RAL, UK; Spain; Germany; Italy; France
• Tier 2: Regional groups: ScotGrid, NorthGrid, SouthGrid, London
• Institutes: Glasgow, Edinburgh, Durham
• Workstations
Structure chosen for particle physics. Different for others.

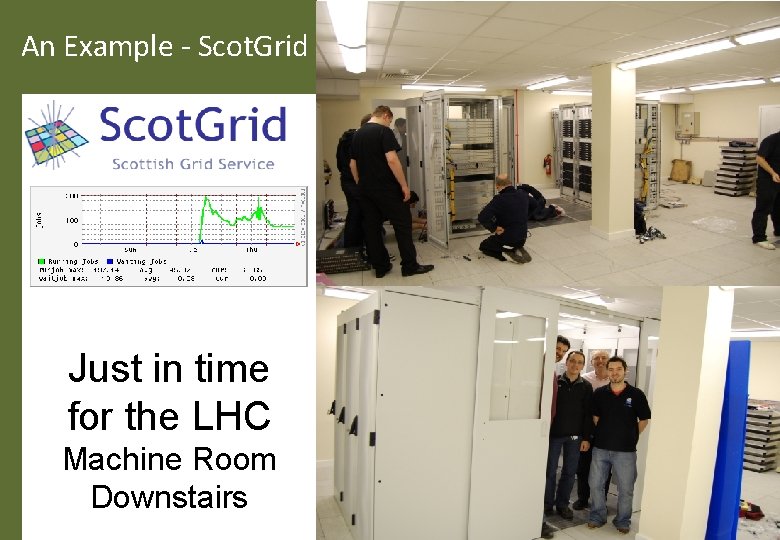

An Example - ScotGrid: Just in time for the LHC. Machine Room Downstairs

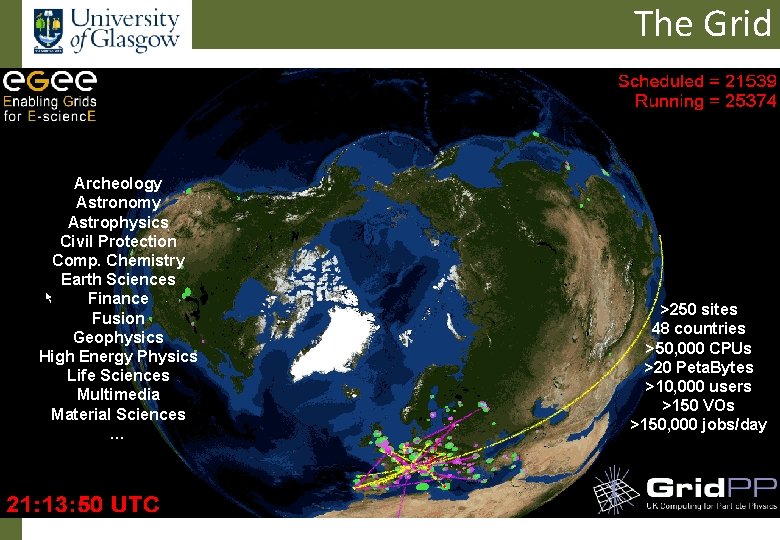

The Grid
Archeology, Astronomy, Astrophysics, Civil Protection, Comp. Chemistry, Earth Sciences, Finance, Fusion, Geophysics, High Energy Physics, Life Sciences, Multimedia, Material Sciences, …
• >250 sites
• 48 countries
• >50,000 CPUs
• >20 PetaBytes
• >10,000 users
• >150 VOs
• >150,000 jobs/day

1. Why? 2. What? 3. How? 4. When?
From the Particle Physics perspective the Grid is:
1. needed to utilise large-scale computing resources efficiently and securely
2. a) a working system running today on large resources
   b) about seamless discovery of computing resources
   c) using evolving standards for interoperation
   d) the basis for computing in the 21st Century
3. using middleware
4. now available – ready for LHC data

Avoiding Gridlock? Avoid computer lockup using a Grid, provided you have a star network (basis of the internet). Computing is then almost limitless.

Thank YOU
