Concurrent Hierarchical Reinforcement Learning
Bhaskara Marthi, Stuart Russell, David Latham (UC Berkeley), Carlos Guestrin (CMU)

Example problem: Stratagus
§ Challenges
  § Large state space
  § Large action space
  § Complex policies

Writing agents for Stratagus
§ Game company: N person-years to write “AI” for game
§ Hierarchical RL: concurrent partial program + learning optimal completion
§ Standard RL: learn from tabula rasa

Outline
§ Running example
§ Background
§ Our contributions
  § Concurrent ALisp language for partial programs
  § Basic learning method
  § … with threadwise decomposition
  § … and temporal decomposition
§ Results

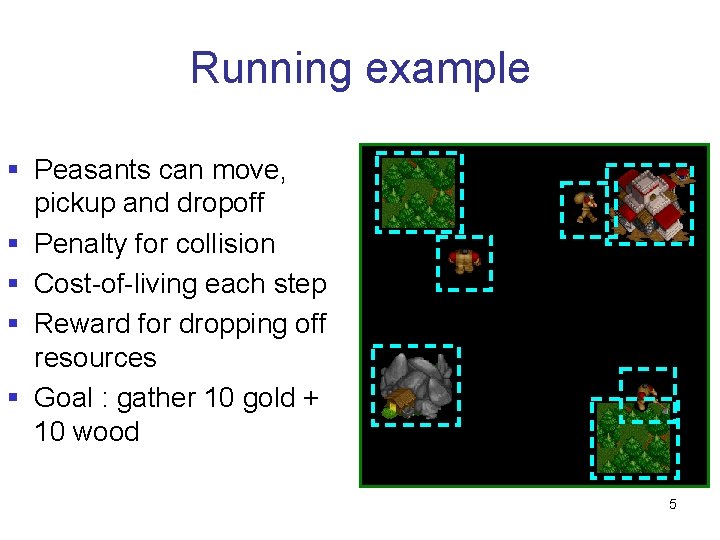

Running example
§ Peasants can move, pickup and dropoff
§ Penalty for collision
§ Cost-of-living each step
§ Reward for dropping off resources
§ Goal: gather 10 gold + 10 wood


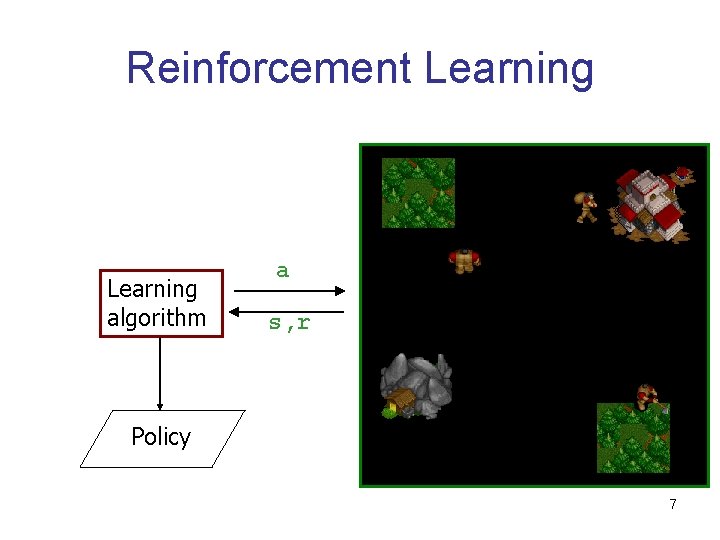

Reinforcement learning
[Diagram labels: learning algorithm; action a sent to the environment; state s and reward r returned; output: policy]

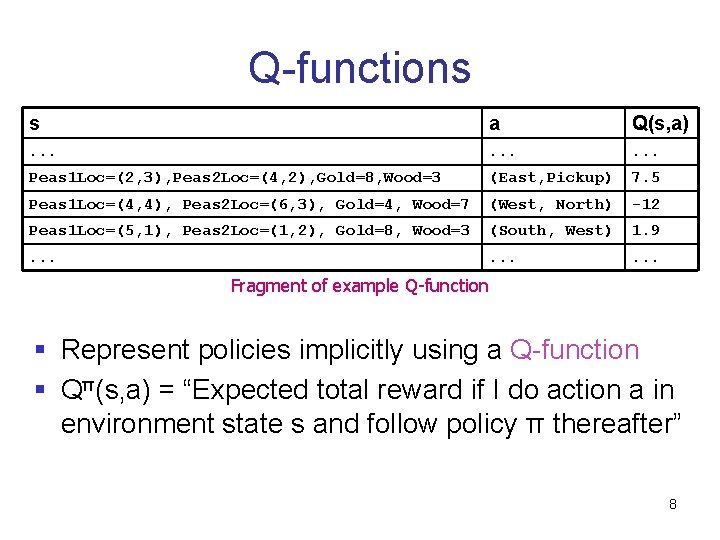

Q-functions
§ Represent policies implicitly using a Q-function
§ Q^π(s, a) = “Expected total reward if I do action a in environment state s and follow policy π thereafter”

Fragment of example Q-function:

| s | a | Q(s, a) |
|---|---|---|
| … | … | … |
| Peas1Loc=(2, 3), Peas2Loc=(4, 2), Gold=8, Wood=3 | (East, Pickup) | 7.5 |
| Peas1Loc=(4, 4), Peas2Loc=(6, 3), Gold=4, Wood=7 | (West, North) | -12 |
| Peas1Loc=(5, 1), Peas2Loc=(1, 2), Gold=8, Wood=3 | (South, West) | 1.9 |
| … | … | … |
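As a hedged illustration of how a Q-function represents a policy implicitly, the sketch below stores the three example rows in a Python dictionary and reads off the greedy joint action for one state. The tuple layout of the state, the helper name `greedy_action`, and the extra entry with value 0.0 are assumptions made for illustration and do not come from the slides.

```python
# Minimal sketch: a tabular Q-function keyed by (state, joint action).
# A state is (peas1_loc, peas2_loc, gold, wood); this layout is an assumption.
Q = {
    (((2, 3), (4, 2), 8, 3), ("East", "Pickup")): 7.5,
    (((4, 4), (6, 3), 4, 7), ("West", "North")): -12.0,
    (((5, 1), (1, 2), 8, 3), ("South", "West")): 1.9,
    # Hypothetical extra entry so the first state has two candidate actions.
    (((2, 3), (4, 2), 8, 3), ("North", "North")): 0.0,
}


def greedy_action(q, state):
    """Return the joint action with the highest Q-value among entries for `state`."""
    candidates = {a: v for (s, a), v in q.items() if s == state}
    return max(candidates, key=candidates.get)


state = ((2, 3), (4, 2), 8, 3)
best = greedy_action(Q, state)
```

The policy is never stored explicitly: it is recovered by taking the argmax over actions whenever a decision is needed.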

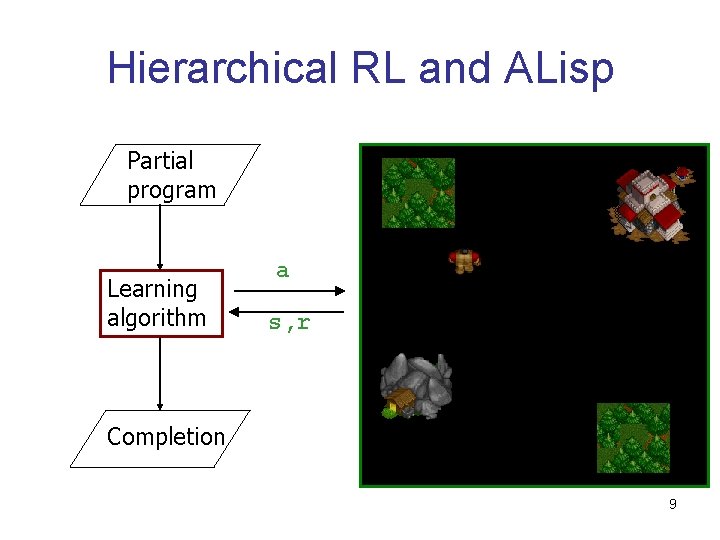

Hierarchical RL and ALisp
[Diagram labels: partial program fed to the learning algorithm; action a sent to the environment; state s and reward r returned; output: completion]

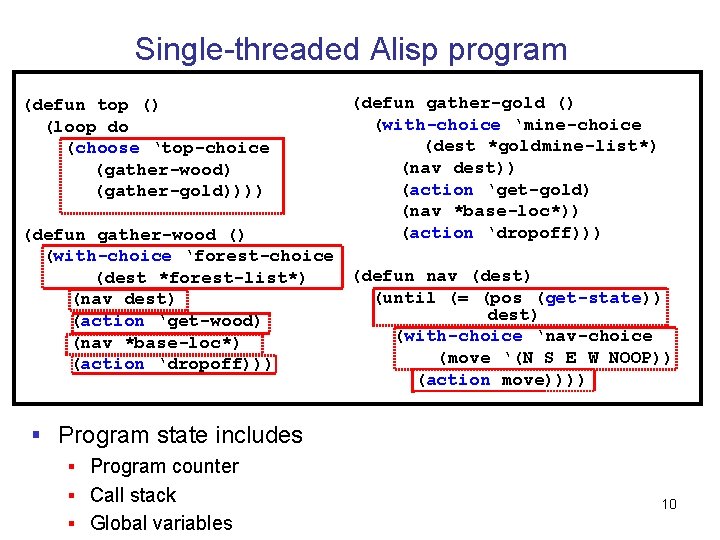

Single-threaded ALisp program

    (defun top ()
      (loop do
        (choose 'top-choice
          (gather-wood)
          (gather-gold))))

    (defun gather-wood ()
      (with-choice 'forest-choice (dest *forest-list*)
        (nav dest)
        (action 'get-wood)
        (nav *base-loc*)
        (action 'dropoff)))

    (defun gather-gold ()
      (with-choice 'mine-choice (dest *goldmine-list*)
        (nav dest)
        (action 'get-gold)
        (nav *base-loc*)
        (action 'dropoff)))

    (defun nav (dest)
      (until (= (pos (get-state)) dest)
        (with-choice 'nav-choice (move '(N S E W NOOP))
          (action move))))

§ Program state includes
  § Program counter
  § Call stack
  § Global variables

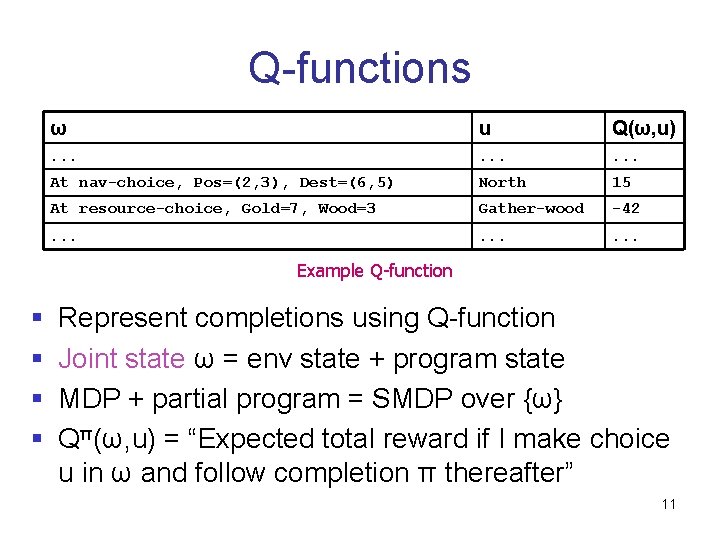

Q-functions
§ Represent completions using Q-function
§ Joint state ω = env state + program state
§ MDP + partial program = SMDP over {ω}
§ Q^π(ω, u) = “Expected total reward if I make choice u in ω and follow completion π thereafter”

Example Q-function:

| ω | u | Q(ω, u) |
|---|---|---|
| … | … | … |
| At nav-choice, Pos=(2, 3), Dest=(6, 5) | North | 15 |
| At resource-choice, Gold=7, Wood=3 | Gather-wood | -42 |
| … | … | … |

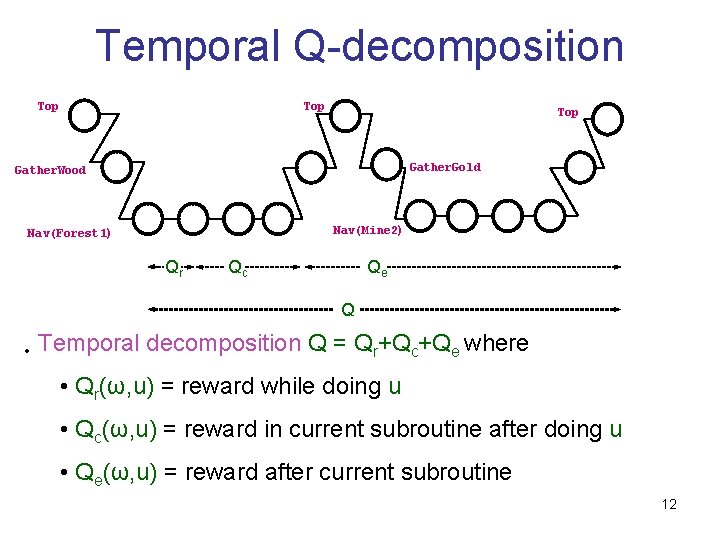

Temporal Q-decomposition
[Diagram labels: call hierarchy Top → GatherGold → Nav(Mine2) and Top → GatherWood → Nav(Forest1); trajectory segments marked Q_r, Q_c, Q_e, summing to Q]
§ Temporal decomposition Q = Q_r + Q_c + Q_e where
  § Q_r(ω, u) = reward while doing u
  § Q_c(ω, u) = reward in current subroutine after doing u
  § Q_e(ω, u) = reward after current subroutine

Q-learning for ALisp
§ Temporal decomposition => state abstraction
  § E.g., while navigating, Q_r doesn’t depend on gold reserves
§ State abstraction => fewer parameters, faster learning, better generalization
§ Algorithm for learning temporally decomposed Q-function (Andre+Russell 02)
§ Yields optimal completion of partial program
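The link between temporal decomposition and state abstraction can be sketched as follows: each component is looked up on its own reduced view of the joint state, so the navigation components never see the gold reserves. The feature choices, the table contents, and the function names are illustrative assumptions; the numbers were picked only so that the total matches the value 15 in the nav-choice row of the example Q-function.

```python
# Sketch: Q = Q_r + Q_c + Q_e, each component with its own state abstraction.
# Feature choices and numbers are assumptions made for illustration.

def abstract_r(omega):
    # While navigating, the immediate reward depends only on position and destination.
    return (omega["pos"], omega["dest"])


def abstract_c(omega):
    # Reward for the rest of the nav subroutine: again position and destination.
    return (omega["pos"], omega["dest"])


def abstract_e(omega):
    # Reward after leaving the subroutine may depend on resource totals.
    return (omega["dest"], omega["gold"], omega["wood"])


Q_r = {((2, 3), (6, 5), "North"): -1.0}
Q_c = {((2, 3), (6, 5), "North"): -6.0}
Q_e = {((6, 5), 7, 3, "North"): 22.0}


def q_total(omega, u):
    """Sum the three components, each indexed by its abstracted state plus the choice."""
    return (Q_r[abstract_r(omega) + (u,)]
            + Q_c[abstract_c(omega) + (u,)]
            + Q_e[abstract_e(omega) + (u,)])


omega_a = {"pos": (2, 3), "dest": (6, 5), "gold": 7, "wood": 3}
omega_b = {**omega_a, "gold": 0}  # same navigation situation, different gold reserves
total = q_total(omega_a, "North")
```

Because `abstract_r` ignores `gold` and `wood`, `omega_a` and `omega_b` share a single Q_r entry, which is the source of the parameter savings the slide mentions.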


Multieffector domains
§ Domains where the action is a vector
§ Each component of action vector corresponds to an effector
§ Stratagus: each unit/building is an effector
§ #actions exponential in #effectors
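A quick way to see the exponential growth is to enumerate joint action vectors directly. The sketch below assumes every effector has the same five primitive actions used in the navigation example (N, S, E, W, NOOP); real Stratagus units have different and larger action sets.

```python
from itertools import product

# Assumed per-effector action set, borrowed from the nav example.
PER_EFFECTOR_ACTIONS = ["N", "S", "E", "W", "NOOP"]


def joint_actions(num_effectors):
    """Enumerate every joint action vector for the given number of effectors."""
    return list(product(PER_EFFECTOR_ACTIONS, repeat=num_effectors))


# Number of joint actions for 1 to 4 effectors: 5, 25, 125, 625.
counts = {n: len(joint_actions(n)) for n in (1, 2, 3, 4)}
```

With 15 peasants, the same calculation gives 5^15 joint actions (about 3 × 10^10), which is why explicit enumeration is not an option.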

HRL in multieffector domains
§ Single ALisp program
  § Partial program must essentially implement multiple control stacks
  § Independencies between tasks not used
  § Temporal decomposition is lost
§ Separate ALisp program for each effector
  § Hard to achieve coordination among effectors
§ Our approach: single multithreaded partial program to control all effectors

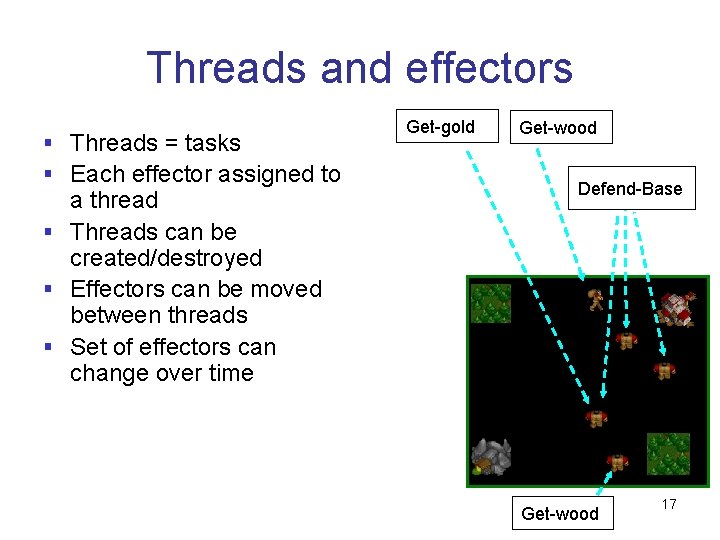

Threads and effectors
§ Threads = tasks
§ Each effector assigned to a thread
§ Threads can be created/destroyed
§ Effectors can be moved between threads
§ Set of effectors can change over time
[Diagram labels: threads Get-gold, Get-wood, Defend-Base, Get-wood]

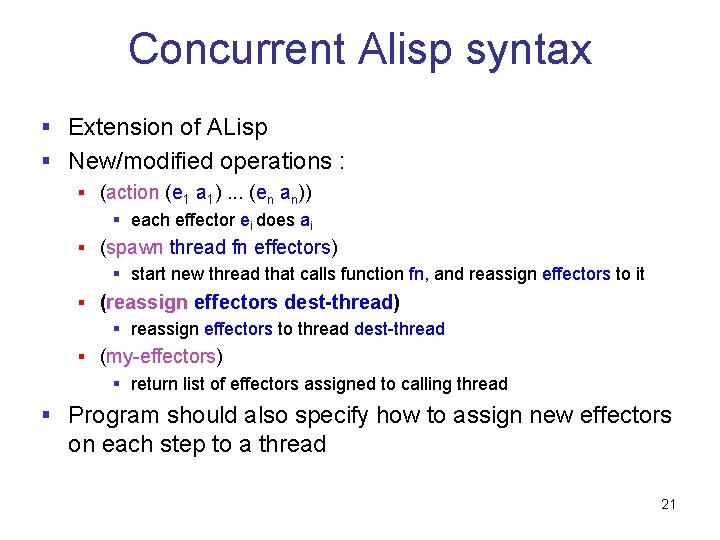

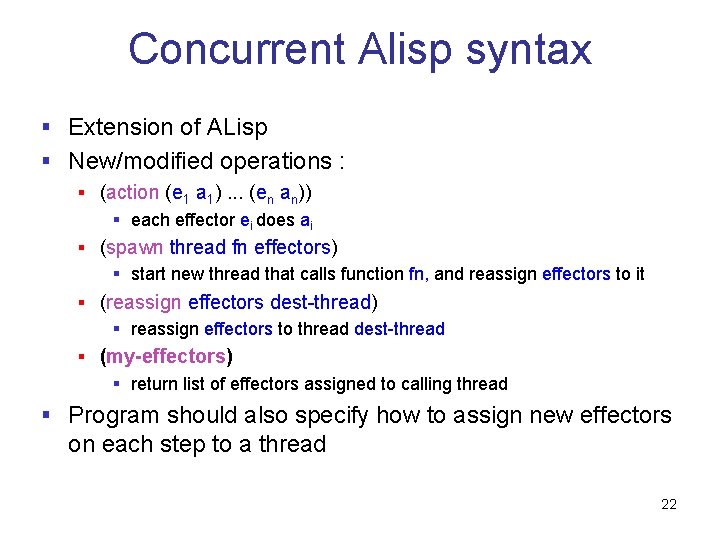

Concurrent ALisp syntax
§ Extension of ALisp
§ New/modified operations:
  § (action (e1 a1) ... (en an)): each effector ei does ai
  § (spawn thread fn effectors): start new thread that calls function fn, and reassign effectors to it
  § (reassign effectors dest-thread): reassign effectors to thread dest-thread
  § (my-effectors): return list of effectors assigned to calling thread
§ Program should also specify how to assign new effectors on each step to a thread
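The effector bookkeeping implied by `spawn`, `reassign`, and `my-effectors` can be sketched in Python as a simple ownership table. This is not the Concurrent ALisp runtime: the class name `EffectorTable`, its methods, and the thread and effector identifiers are hypothetical, and the function `fn` that a spawned thread would run is omitted.

```python
# Sketch of effector ownership behind spawn / reassign / my-effectors.
# Class and identifier names are hypothetical; thread execution itself is not modeled.
class EffectorTable:
    def __init__(self, root_thread, effectors):
        # Initially every effector belongs to the root thread.
        self.owner = {e: root_thread for e in effectors}
        self.threads = {root_thread}

    def my_effectors(self, thread):
        """Return the effectors currently assigned to `thread`."""
        return sorted(e for e, t in self.owner.items() if t == thread)

    def reassign(self, effectors, dest_thread):
        """Move `effectors` to an existing thread."""
        if dest_thread not in self.threads:
            raise KeyError(dest_thread)
        for e in effectors:
            self.owner[e] = dest_thread

    def spawn(self, thread, effectors):
        """Register a new thread and hand it the given effectors."""
        self.threads.add(thread)
        self.reassign(effectors, thread)


table = EffectorTable("top", ["peas1", "peas2"])
table.spawn("gold-1", ["peas1"])
```

After the `spawn` call, `peas1` belongs to the new thread and only `peas2` remains with `top`, so each effector is owned by exactly one thread at any time.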


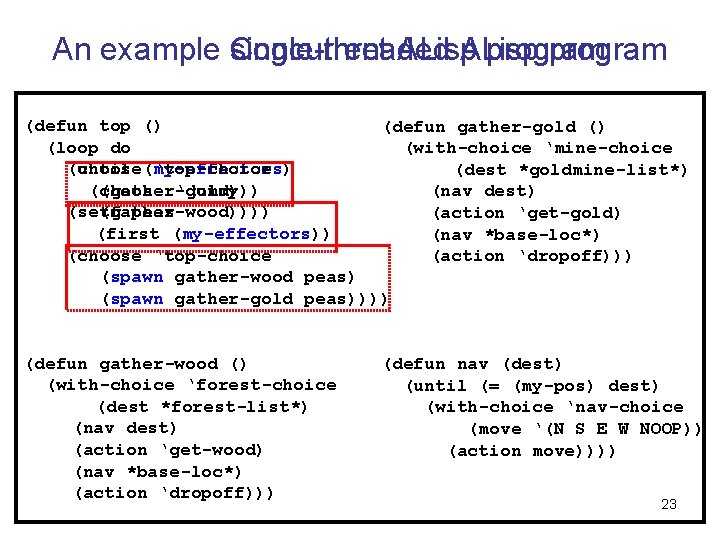

An example Concurrent ALisp program

    (defun top ()
      (loop do
        (until (my-effectors)
          (choose 'dummy))
        (setf peas (first (my-effectors)))
        (choose 'top-choice
          (spawn gather-wood peas)
          (spawn gather-gold peas))))

    (defun gather-wood ()
      (with-choice 'forest-choice (dest *forest-list*)
        (nav dest)
        (action 'get-wood)
        (nav *base-loc*)
        (action 'dropoff)))

    (defun gather-gold ()
      (with-choice 'mine-choice (dest *goldmine-list*)
        (nav dest)
        (action 'get-gold)
        (nav *base-loc*)
        (action 'dropoff)))

    (defun nav (dest)
      (until (= (my-pos) dest)
        (with-choice 'nav-choice (move '(N S E W NOOP))
          (action move))))

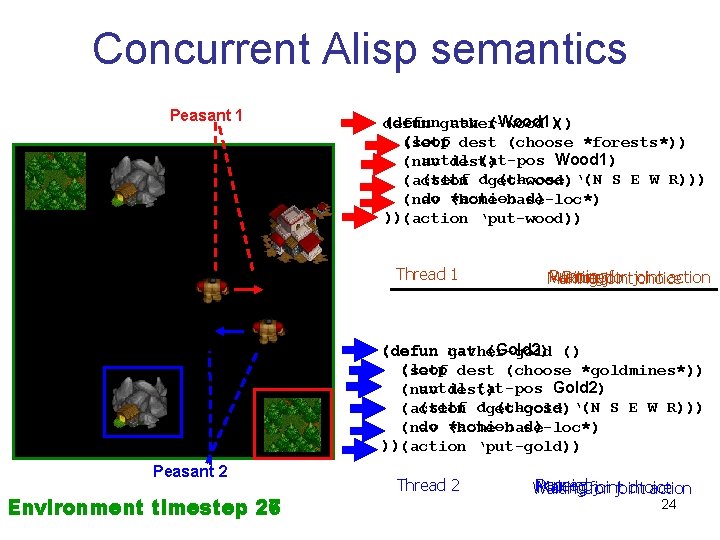

Concurrent ALisp semantics
[Animation: two threads stepping through their code. Thread states shown: Running, Paused, Waiting for joint action, Making joint choice. Environment timestep advances from 26 to 27.]

Thread 1 (Peasant 1):

    (defun gather-wood ()
      (setf dest (choose *forests*))
      (nav dest)
      (action 'get-wood)
      (nav *home-base-loc*)
      (action 'put-wood))

    (defun nav (Wood1)
      (loop until (at-pos Wood1) do
        (setf d (choose '(N S E W R)))
        (action d)))

Thread 2 (Peasant 2):

    (defun gather-gold ()
      (setf dest (choose *goldmines*))
      (nav dest)
      (action 'get-gold)
      (nav *home-base-loc*)
      (action 'put-gold))

    (defun nav (Gold2)
      (loop until (at-pos Gold2) do
        (setf d (choose '(N S E W R)))
        (action d)))

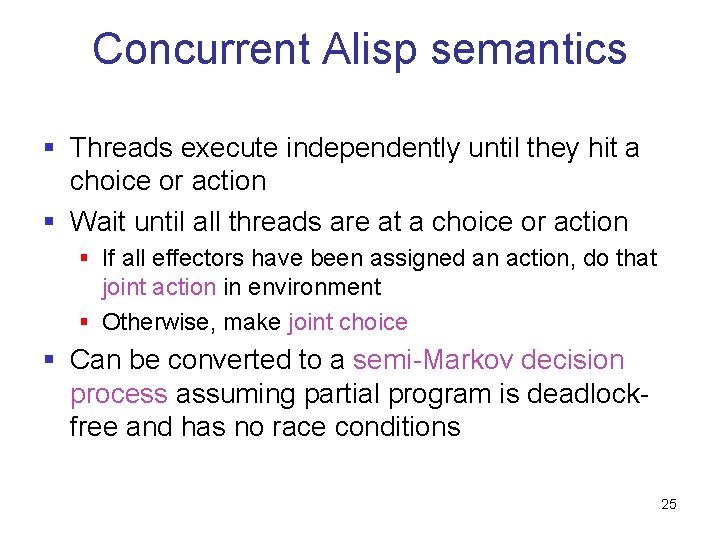

Concurrent ALisp semantics
§ Threads execute independently until they hit a choice or action
§ Wait until all threads are at a choice or action
  § If all effectors have been assigned an action, do that joint action in environment
  § Otherwise, make joint choice
§ Can be converted to a semi-Markov decision process assuming partial program is deadlock-free and has no race conditions
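The rule for deciding between a joint action and a joint choice can be sketched as a single function over the threads' current stopping points. The representation of a stopped thread as a `(kind, payload)` pair and the name `next_joint_step` are assumptions made for illustration; the actual runtime is not described at this level in the slides.

```python
# Sketch of the joint-step rule once every thread has stopped at a choice or an action.
# The (kind, payload) representation of a stopped thread is an assumption.

def next_joint_step(threads, effectors):
    """threads maps thread id -> ("action", {effector: act}) or ("choice", options).

    Returns ("joint-action", assignment) if every effector has an action,
    otherwise ("joint-choice", ids of the threads that must choose).
    """
    assigned = {}
    choosing = []
    for tid, (kind, payload) in threads.items():
        if kind == "action":
            assigned.update(payload)
        elif kind == "choice":
            choosing.append(tid)
        else:
            raise ValueError(f"thread {tid} is not at a choice or action")
    if set(assigned) == set(effectors):
        return ("joint-action", assigned)
    return ("joint-choice", sorted(choosing))


effectors = ["peas1", "peas2"]
# Thread 1 is waiting for the joint action; thread 2 still has to choose a direction.
step_a = next_joint_step(
    {1: ("action", {"peas1": "N"}), 2: ("choice", ["N", "S", "E", "W", "R"])},
    effectors,
)
# Both threads have committed to an action, so the environment can step.
step_b = next_joint_step(
    {1: ("action", {"peas1": "N"}), 2: ("action", {"peas2": "E"})},
    effectors,
)
```

Threads already waiting at an action simply stay paused while the choosing threads resolve their choices, which is how the two-thread animation alternates between "Waiting for joint action" and "Making joint choice".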

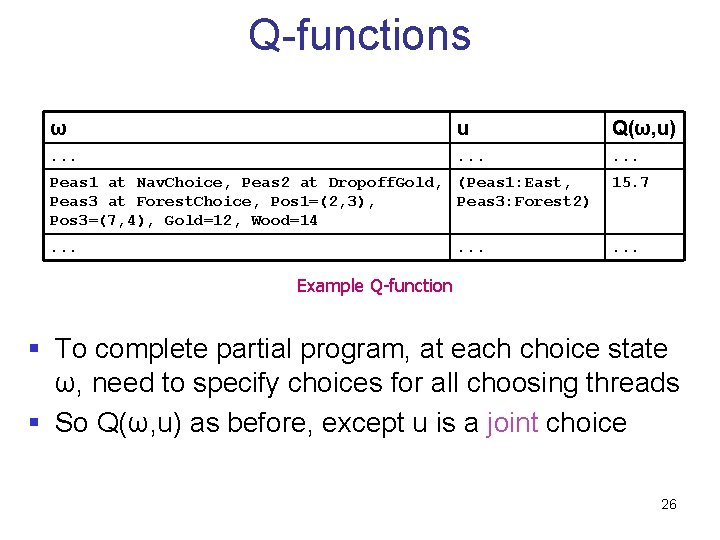

Q-functions
§ To complete partial program, at each choice state ω, need to specify choices for all choosing threads
§ So Q(ω, u) as before, except u is a joint choice

Example Q-function:

| ω | u | Q(ω, u) |
|---|---|---|
| … | … | … |
| Peas1 at NavChoice, Peas2 at DropoffGold, Peas3 at ForestChoice, Pos1=(2, 3), Pos3=(7, 4), Gold=12, Wood=14 | (Peas1: East, Peas3: Forest2) | 15.7 |
| … | … | … |


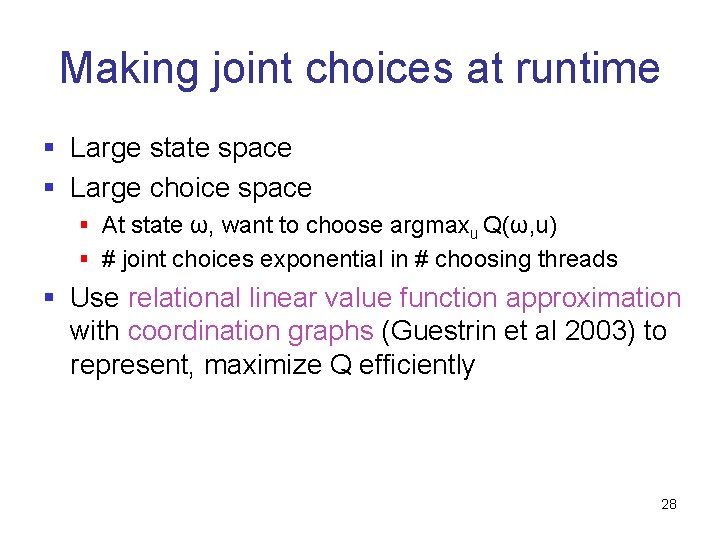

Making joint choices at runtime
§ Large state space
§ Large choice space
§ At state ω, want to choose argmax_u Q(ω, u)
§ # joint choices exponential in # choosing threads
§ Use relational linear value function approximation with coordination graphs (Guestrin et al 2003) to represent, maximize Q efficiently
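The maximization step can be illustrated with variable elimination on a very small coordination graph. The sketch assumes a chain of three choosing threads whose Q-function is a sum of two pairwise terms with random values; it does not implement the relational linear approximation from the slide, and all names are hypothetical. A brute-force search over all joint choices is included only to check the result.

```python
from itertools import product
import random

CHOICES = ["N", "S", "E", "W"]


def make_terms(seed=0):
    """Build random pairwise terms f12(u1, u2) and f23(u2, u3) (illustrative only)."""
    rng = random.Random(seed)
    f12 = {(a, b): rng.uniform(-1, 1) for a in CHOICES for b in CHOICES}
    f23 = {(b, c): rng.uniform(-1, 1) for b in CHOICES for c in CHOICES}
    return f12, f23


def argmax_chain(f12, f23):
    """Maximize f12(u1, u2) + f23(u2, u3) by eliminating u3 and u1, then picking u2."""
    best3 = {b: max(CHOICES, key=lambda c: f23[(b, c)]) for b in CHOICES}
    best1 = {b: max(CHOICES, key=lambda a: f12[(a, b)]) for b in CHOICES}

    def value(b):
        return f12[(best1[b], b)] + f23[(b, best3[b])]

    u2 = max(CHOICES, key=value)
    return (best1[u2], u2, best3[u2]), value(u2)


def argmax_brute(f12, f23):
    """Reference answer: enumerate all joint choices."""
    def score(u):
        return f12[(u[0], u[1])] + f23[(u[1], u[2])]

    best = max(product(CHOICES, repeat=3), key=score)
    return best, score(best)


f12, f23 = make_terms(seed=1)
fast_u, fast_v = argmax_chain(f12, f23)
slow_u, slow_v = argmax_brute(f12, f23)
```

On a chain, elimination touches each pairwise table once, so the cost grows linearly with the number of threads rather than exponentially as in the brute-force enumeration.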

Basic learning procedure
§ Apply Q-learning on equivalent SMDP
§ Boltzmann exploration, maximization on each step done efficiently using coordination graph
§ Theorem: in tabular case, converges to the optimal completion of the partial program
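A tabular version of this procedure might look like the sketch below, which pairs a Boltzmann (softmax) choice rule with a standard SMDP Q-learning backup. The learning rate `alpha`, discount `gamma`, temperature, and all function names are assumptions; the slides do not give these details, and the coordination-graph maximization is replaced here by a plain `max` over an explicit list of choices.

```python
import math
import random
from collections import defaultdict


def boltzmann_probs(q_values, temperature):
    """Softmax over Q-values; the max is subtracted for numerical stability."""
    m = max(q_values)
    exps = [math.exp((q - m) / temperature) for q in q_values]
    z = sum(exps)
    return [e / z for e in exps]


def boltzmann_choice(q, omega, choices, temperature, rng):
    """Sample a choice with probability proportional to exp(Q / temperature)."""
    probs = boltzmann_probs([q[(omega, u)] for u in choices], temperature)
    return rng.choices(choices, weights=probs, k=1)[0]


def smdp_q_update(q, omega, u, reward, n_steps, omega_next, next_choices,
                  alpha=0.1, gamma=0.9):
    """One SMDP Q-learning backup.

    `reward` is the (discounted) reward accumulated over the `n_steps`
    environment steps between the two choice states.
    """
    best_next = max(q[(omega_next, v)] for v in next_choices)
    target = reward + (gamma ** n_steps) * best_next
    q[(omega, u)] += alpha * (target - q[(omega, u)])
    return q[(omega, u)]


q = defaultdict(float)
q[("w1", "East")] = 2.0
new_value = smdp_q_update(q, "w0", "North", reward=1.0, n_steps=2,
                          omega_next="w1", next_choices=["East", "West"],
                          alpha=0.5, gamma=0.5)
uniform = boltzmann_probs([1.0, 1.0], temperature=1.0)
sampled = boltzmann_choice(q, "w1", ["East", "West"], 1.0, random.Random(0))
```

The only difference from ordinary Q-learning is the `gamma ** n_steps` factor, which accounts for the variable number of environment steps between consecutive choice states.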


Problems with basic learning method
§ Temporal decomposition of Q-function lost
§ No credit assignment among threads
  § Suppose peasant 1 drops off some gold at base, while peasant 2 wanders aimlessly
  § Peasant 2 thinks he’s done very well!!
  § Significantly slows learning as number of peasants increases

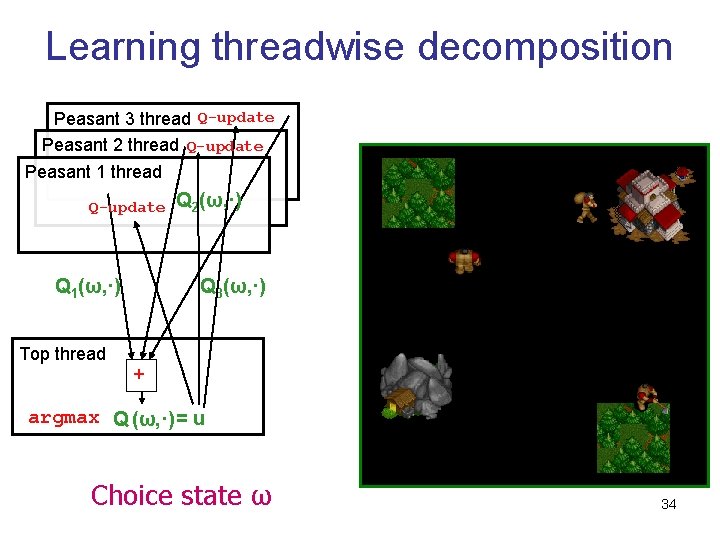

Threadwise decomposition
§ Idea: decompose reward among threads (Russell+Zimdars, 2003)
§ E.g., rewards for thread j only when peasant j drops off resources or collides with other peasants
§ Q_j(ω, u) = “Expected total reward received by thread j if we make joint choice u and make globally optimal choices thereafter”
§ Threadwise Q-decomposition Q = Q_1 + … + Q_n

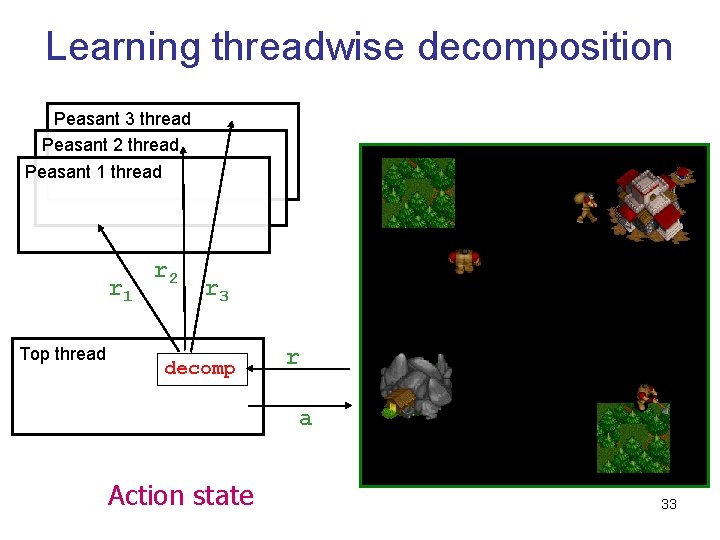

Learning threadwise decomposition
[Diagram, action state: the joint action a is executed; the reward r is decomposed into r_1, r_2, r_3, which go to the Peasant 1, Peasant 2 and Peasant 3 threads; the top thread is also shown]

Learning threadwise decomposition
[Diagram, choice state ω: each peasant thread performs its own Q-update and supplies Q_1(ω, ·), Q_2(ω, ·), Q_3(ω, ·); the top thread sums them and takes the argmax of Q(ω, ·) to obtain the joint choice u]
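The two steps in the diagrams, per-thread updates from per-thread rewards and a joint argmax over the summed Q-values, can be sketched as follows. This is a tabular illustration in the spirit of the cited Q-decomposition idea, not the authors' implementation; the function names, `alpha`, `gamma`, and the toy numbers are assumptions.

```python
from collections import defaultdict


def joint_argmax(q_threads, omega, joint_choices):
    """Pick the joint choice that maximizes the sum of the per-thread Q-values."""
    return max(joint_choices, key=lambda u: sum(q[(omega, u)] for q in q_threads))


def threadwise_update(q_threads, omega, u, rewards, omega_next, next_choices,
                      alpha=0.5, gamma=1.0):
    """Update each Q_j from its own reward r_j.

    Every thread backs up from the same next joint choice u*, the one that is
    best for the sum, rather than from its own individually best choice.
    """
    u_star = joint_argmax(q_threads, omega_next, next_choices)
    for q_j, r_j in zip(q_threads, rewards):
        target = r_j + gamma * q_j[(omega_next, u_star)]
        q_j[(omega, u)] += alpha * (target - q_j[(omega, u)])
    return u_star


q1, q2 = defaultdict(float), defaultdict(float)
q1[("w1", ("E", "N"))], q2[("w1", ("E", "N"))] = 1.0, 1.0   # sum = 2.0
q1[("w1", ("W", "S"))], q2[("w1", ("W", "S"))] = 3.0, -2.0  # sum = 1.0

# Peasant 1 drops off gold (+10); peasant 2 only pays the cost of living (-1).
u_star = threadwise_update([q1, q2], "w0", ("N", "N"), rewards=(10.0, -1.0),
                           omega_next="w1", next_choices=[("E", "N"), ("W", "S")])
```

Thread 2's estimate stays at zero here because its own reward was negative, so it no longer receives credit for peasant 1's drop-off, which is the credit-assignment problem the undecomposed method suffers from.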


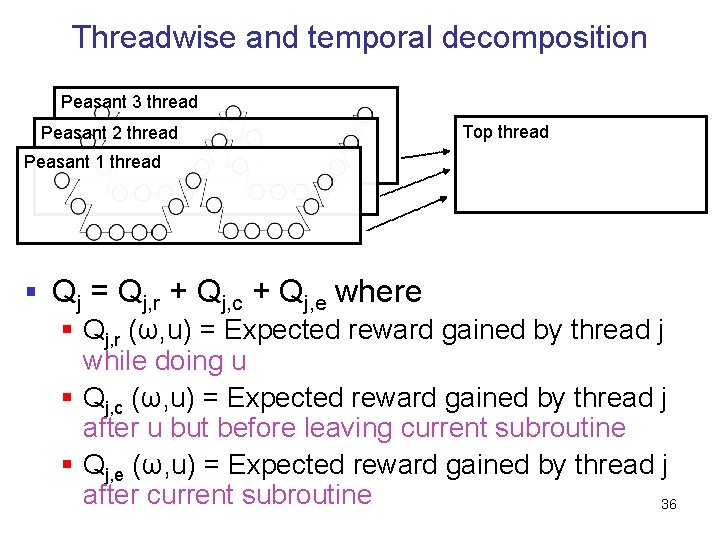

Threadwise and temporal decomposition
[Diagram labels: Top thread, Peasant 1 thread, Peasant 2 thread, Peasant 3 thread]
§ Q_j = Q_{j,r} + Q_{j,c} + Q_{j,e} where
  § Q_{j,r}(ω, u) = Expected reward gained by thread j while doing u
  § Q_{j,c}(ω, u) = Expected reward gained by thread j after u but before leaving current subroutine
  § Q_{j,e}(ω, u) = Expected reward gained by thread j after current subroutine


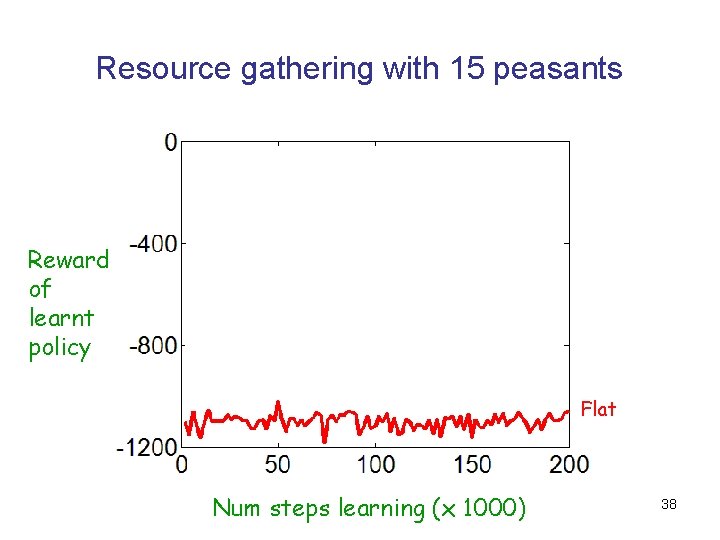

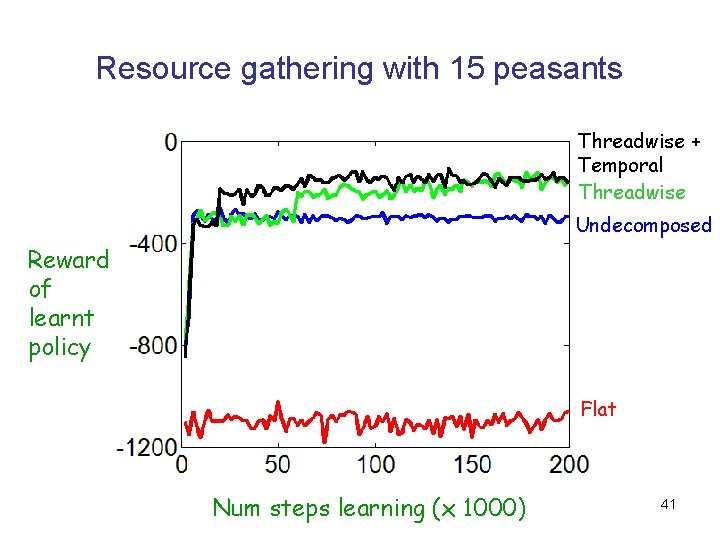


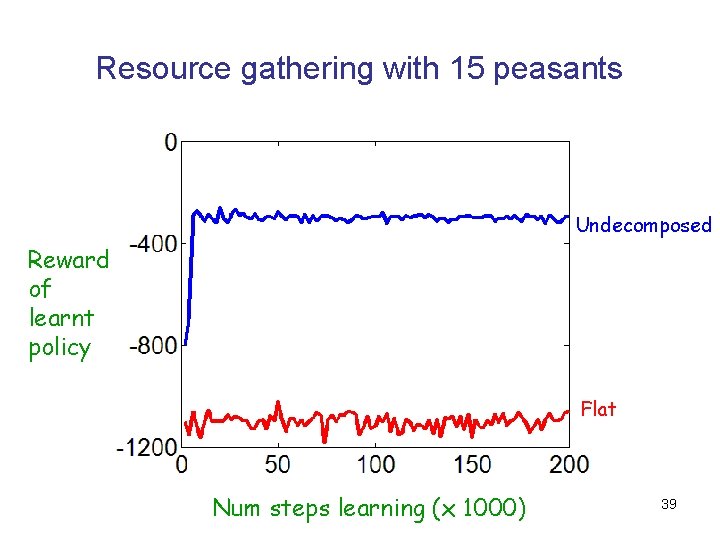


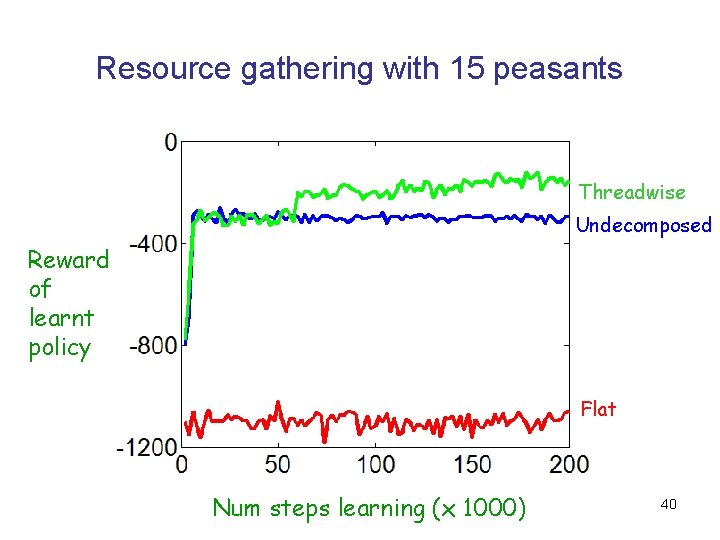


Resource gathering with 15 peasants
[Plot: reward of learnt policy vs. number of learning steps (x 1000). Curves: Flat, Undecomposed, Threadwise, Threadwise + Temporal]

Conclusion
§ Contributions
  § Concurrent ALisp language for partial programs
  § Algorithms for choice selection, learning
  § Threadwise and temporal decomposition of Q-function
§ Encode prior knowledge and use structure in Q-function for fast learning in very complex multieffector domains

Questions?
