
Conceptualizing and Assessing the Broader Impacts of Your Research
Miles McNall & Robert Griffore, University Outreach & Engagement
mcnall@msu.edu
May 18, 2020

Zoom Protocols • Participants are muted • Please use the chat box to ask questions • Please feel free to take a break when needed • Two scheduled 10-minute breaks

Structure of Workshop • BI Basics • 6 Elements of a BI Plan (Worksheets 1-6) – Goals/Impacts – Audience/Population – Setting/Partners – Activity – Budget – Evaluation • BI Wizard • Resources Sheet

BI Basics What are broader impacts? Why are they important?

NSF Proposal Review Criteria • Broader impacts are one of two major NSF proposal review criteria • The potential for the proposed activity to: – Advance knowledge and understanding within its own field or across different fields (Intellectual Merit) – Benefit society or advance desired societal outcomes (Broader Impacts)

Why are Broader Impacts important? • NSF Institutional Perspective – “…we need to engage the public in order to help improve understanding of the value of basic research and why our projects are worthy of investment.” France A. Córdova, past NSF Director • Researcher Perspective – I want my work to make a difference in the world • Community Member Perspective – How are my government and the researchers it funds working to improve the lives of people like me?

“While a great Broader Impacts statement won’t float a proposal with poor science, a poor Broader Impacts statement can sink a proposal with good science.” Jennifer deBruyn, 2018

Impacts of Research on Society • Not just a concern for NSF proposals • Nearly all funding agencies and foundations expect researchers to articulate the potential benefits of their research on society

Achieving Broader Impacts • Broader impacts are accomplished through: – the research itself – activities directly related to specific research projects – activities supported by, but complementary to, the project

If accomplished through the research itself… • You still need a BI statement that makes the case for – What the impacts are – How they will be achieved – How they will be assessed • Will your research itself have broader impacts without additional efforts at dissemination or commercialization? • If not, you still need to consider audiences and partners.

Core Elements of a BI Plan [Diagram: the BI Plan at the center, connected to its six elements: Goal, Audience/Population, Setting & Partners, Activity, Budget, and Evaluation]

BI Goals What impacts do you want your research to have?

NSF BI Goals 1) full participation of women, persons with disabilities, and underrepresented minorities in science, technology, engineering, and mathematics (STEM); 2) improved STEM education and educator development at any level; 3) increased public scientific literacy and public engagement with science and technology; 4) improved well-being of individuals in society; 5) development of a diverse, globally competitive STEM workforce;

BI Goals, continued 6) increased partnerships between academia, industry, and others; 7) improved national security; 8) increased economic competitiveness of the United States; and 9) enhanced infrastructure for research and education.

1) Broadening Participation • Problem: “…non-dominant populations in the US have been significantly underrepresented in STEM academics, professions, and civic decision-making…” (Bevan, Calabrese Barton & Garibay, 2018). • Goal: full participation of women, persons with disabilities, and underrepresented minorities in science, technology, engineering, and mathematics (STEM) • You do not have to achieve every BI goal, but you might be taking a risk if you skip this one!

1) Broadening Participation • For underrepresented groups, it is not enough to simply increase access to existing STEM opportunities • STEM opportunities designed for dominant-culture populations may not be appropriate for underrepresented groups • Work with community partners and intended participants to design culturally responsive STEM opportunities (More about this in the Partnerships section) Bevan, Calabrese Barton & Garibay, 2018

2) STEM education/educator development • Audiences/Populations – Students: Pre-K through graduate students – Educators: Pre-K through four-year colleges • Settings – Formal and informal education settings • NSF Funding Opportunities $$$ – IUSE – Improving Undergraduate STEM Education – ITEST – Innovative Technology Experiences for Students and Teachers – RET – Research Experiences for Teachers

Research Experiences for Teachers (RET) • College of Engineering – Wen Li and Drew Kim (kima@egr.msu.edu) • Middle and high school teachers • Six-week summer institute • Participate in cutting-edge research • One-on-one mentoring by MSU graduate students and faculty • Development of innovative curriculum modules for classrooms

3) Increased Public Scientific Literacy and Public Engagement with STEM • Audiences and settings vary widely! • Common BI Activities – Informal Science Education – Science Communication – Citizen (Community) Science

4) Improved Well-Being of Individuals • Physical well-being • Economic well-being • Social well-being • Emotional and psychological well-being • Life satisfaction • Domain-specific satisfaction • Engaging activities and work Source: Centers for Disease Control and Prevention

9) Enhanced Infrastructure for Research & Education: Building Shared Research Capacity • Museum research collections – Natural science collections • MSU Mammal Research Collection has more than 41,000 specimens – Cultural and historical collections • Anthropology • Folklife and cultural heritage • History [Photo: Science on a Sphere]

Specialized Facilities, Labs, Instruments Facility for Rare Isotope Beams (FRIB)

High-Speed Computing Institute for Cyber-Enabled Research (ICER)

Education Facilities and Technology • MSU’s STEM facility: modern teaching laboratories that incorporate active learning principles and foster cross-disciplinary teaching and learning

Your Impact Identity Leveraging multiple identities to maximize impacts

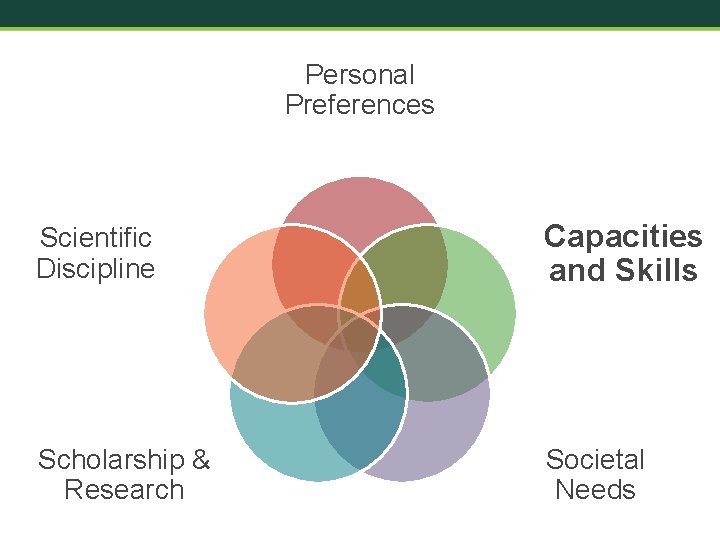

[Diagram: Impact Identity at the intersection of Personal Preferences, Scientific Discipline, Capacities and Skills, Scholarship & Research, Institutional Context, and Societal Needs] Adapted from Risien & Storksdieck, 2018

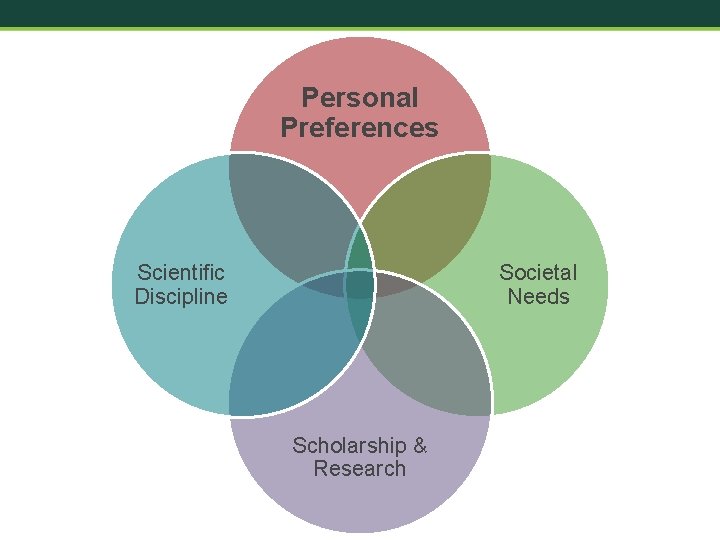

[Diagram: Your Broader Impacts at the intersection of Societal Needs, Scientific Discipline, and Scholarship & Research]

Worksheet 1: Impact Identity (10 min) 1. What are the leading questions in my discipline? 2. What questions drive my own research? 3. Where do the leading questions of my discipline and my own scholarship intersect with urgent societal needs? (Consider the nine NSF BI Goals). 4. What societal need would you like to address through your research or related BI activity? 5. What specific impact(s) would you like to achieve? POST YOUR QUESTIONS IN THE CHAT!

BI Goals • Full participation of women, persons with disabilities, and underrepresented minorities in science, technology, engineering, and mathematics (STEM) • Improved STEM education and educator development at any level • Increased public scientific literacy and public engagement with science and technology • Improved well-being of individuals in society • Development of a diverse, globally competitive STEM workforce • Enhanced infrastructure for research and education • Increased partnerships between academia, industry, and others • Increased economic competitiveness of the United States • Improved national security

Worksheet 1: Impacts Example NSF Goal: Improved STEM education and educator development at any level My Impact: Increased interest in and awareness of careers in science among K-12 students who are members of groups under-represented in STEM careers

Questions?


BI Audiences* What audiences will you engage? *The term “audience” implies passive, one-way reception of scientific information.

BI Audiences or Populations • Underrepresented or underserved populations • K-12 students • K-12 teachers • General public • Hobbyists • Citizen scientists • Industry • Government

Outreach & Engagement Support • How comfortable or competent are you in working with your target audiences? – K-12 students or teachers? – Minoritized and historically marginalized groups? – Members of the general public with low scientific literacy? – Policymakers?

Outreach & Engagement Support • If you lack experience working successfully with your target audiences, ask for help! – University Outreach & Engagement (engage.msu.edu, 353-8977) – Extension (canr.msu.edu/outreach/, 1-888-678-3464) – IPPSR (ippsr.msu.edu) – working with policymakers – Faculty or staff with prior experience

Citizen (Public) Science • citizen science n. scientific work undertaken by members of the general public, often in collaboration with or under the direction of professional scientists and scientific institutions. Oxford English Dictionary (oed.com)

Citizen (Public) Science Examples • SETI@home (UC Berkeley) – Search for extraterrestrial life using volunteers’ computers • Genographic Project (National Geographic Society) – Mapping historical human migration patterns using DNA samples • Audubon Christmas Bird Count – Taking an annual census of bird species in the U.S. through volunteer observations and reporting

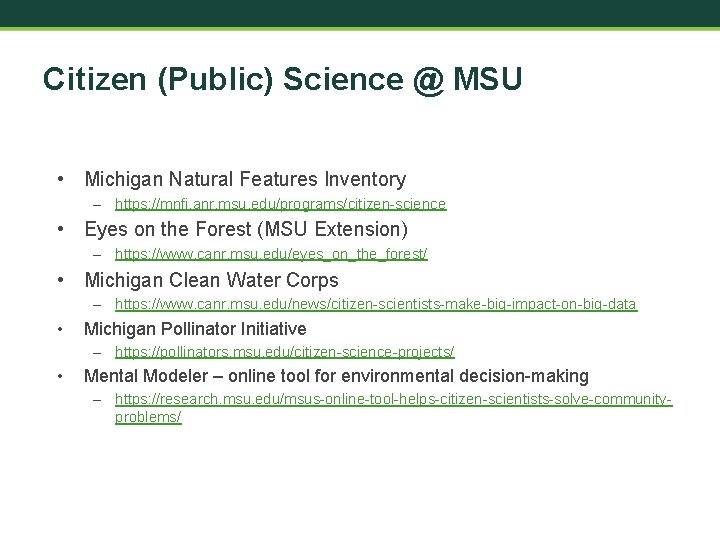

Citizen (Public) Science @ MSU • Michigan Natural Features Inventory – https://mnfi.anr.msu.edu/programs/citizen-science • Eyes on the Forest (MSU Extension) – https://www.canr.msu.edu/eyes_on_the_forest/ • Michigan Clean Water Corps – https://www.canr.msu.edu/news/citizen-scientists-make-big-impact-on-big-data • Michigan Pollinator Initiative – https://pollinators.msu.edu/citizen-science-projects/ • Mental Modeler – online tool for environmental decision-making – https://research.msu.edu/msus-online-tool-helps-citizen-scientists-solve-community-problems/

Citizen Science Organizations

Be Specific About Your Audience • Demographics – Location – Ages – Gender – Race/Ethnicity – Income – UNDERREPRESENTED GROUPS? • Numbers: How many? • Rationale: Why is it important to work with this audience? • Recruitment: How will you reach them? • Benefits: How will they benefit?

Worksheet 2: Your BI Audience (5 min) • What is your audience/population? – Characteristics (age, gender, race/ethnicity, etc.) – Number (estimate) • Why is it important to work with this audience? • How will you reach them? • How will they benefit from participating? POST YOUR QUESTIONS IN THE CHAT!

Questions?

Time to Stretch – 10-minute break

BI Settings Where will you engage with your audiences/populations?

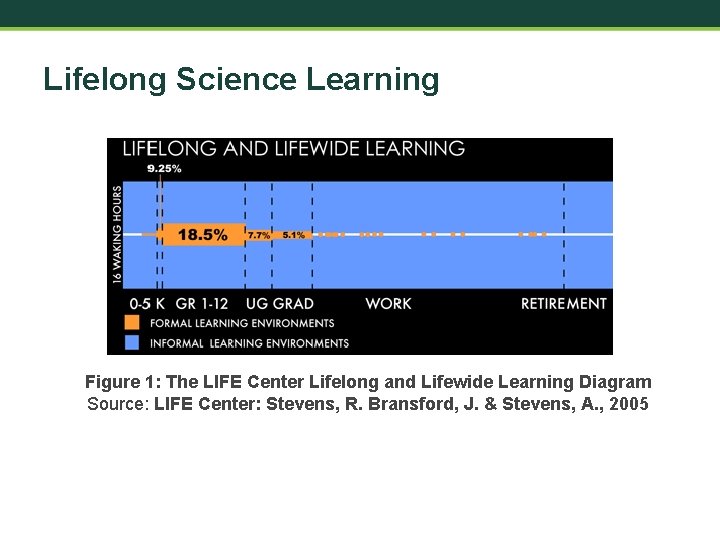

Lifelong Science Learning Figure 1: The LIFE Center Lifelong and Lifewide Learning Diagram Source: LIFE Center: Stevens, R., Bransford, J., & Stevens, A., 2005

Informal Science Education (ISE) “…lifelong learning in science, technology, engineering, and math (STEM) that takes place across a multitude of designed settings and experiences outside of the formal classroom” informalscience.org

Formal vs. Informal Learning Environments
• Type and use of assessment – Formal: evaluative, high consequence; Informal: situated feedback, low consequence
• Degree of choice – Formal: mandated; Informal: voluntary
• Design – Formal: structured by others; Informal: structured by learner
Bell et al., 2009

SCIENCE EDUCATION SETTINGS
• FORMAL: Classrooms • Laboratories • Online courses
• INFORMAL: Designed spaces – museums, science centers, zoos, aquariums, environmental centers; Programs for science learning – hobbyist groups, citizen science, library programs, making and tinkering groups, outdoor and garden programs, summer programs; Science media – radio, TV, internet (interactive and online games, simulations, websites, mobile apps, etc.)
Source: Bell et al., 2009

K-12 Settings • Be mindful of the burden on area K-12 schools • Build on existing K-12 partnerships • Demonstrate understanding of: – K-12 STEM learning standards (e.g., NGSS) – Best practices for teacher professional development – Student demographics MacFadden, 2019

K-12 Settings: Challenges • Scientists lack training and experience in K-12 pedagogy, classroom management, and communicating with younger audiences • Teachers’ curricula and lesson plans are scheduled far in advance • Teachers’ performance appraisals are linked to student achievement as measured by statewide standardized tests (i.e., “teaching to the test”) MacFadden, 2019 SOLUTION: Find STEM education faculty or researchers with experience in K-12 settings to advise you or partner with you

Summer Pre-College Programs Spartan Youth Programs: • Spartan LEGO Robotics Camp • Math, Science, Technology Leadership Camp • Physics of Atomic Nuclei (PAN) • OsteoCHAMPS • ANR Institute for Multicultural Students https://spartanyouth.msu.edu/Default.aspx

Science Communication (SciCom) “…the use of appropriate skills, media, activities, and dialogue to produce one or more of the following personal responses to science (the AEIOU vowel analogy): Awareness, Enjoyment, Interest, Opinion-forming, and Understanding.” Burns, O'Connor, & Stocklmayer, 2003

SciCom Media • Print: Scientific American, NY Times science supplement • Radio – NPR: Science Friday, Hidden Brain • TV – PBS: NOVA, Nature • Internet – Interactive and online games and simulations – Websites – Mobile apps (e.g., iNaturalist) • Podcasts – Radiolab – StarTalk – Science Vs

Worksheet 3: BI Settings (5 min) Given your goals and audiences, what are the likely settings for your BI activities?

Questions?

BI Partners Who will you partner with to design and implement your BI activities?

BI Partners • Given your BI audience, who are your likely BI partners? – MSU Partners? – External Partners? • How will you find your partners?

Possible MSU Connectors and Partners
• Offices Supporting Engagement: University Outreach & Engagement; Center for Community-Engaged Learning; Director of Youth Programs; Gifted and Talented Education; MSU Detroit Center; MSU Extension; Office of K-12 Outreach; Undergraduate Research; Upward Bound
• Designed Spaces: Abrams Planetarium; MSU Museum; MSU Science Festival; Science Gallery Lab Detroit; Wharton Center Institute for Arts and Creativity
• Research Centers: Create for STEM Institute; Julian Samora Research Institute; Native American Institute
• Science Media: WKAR Public Media

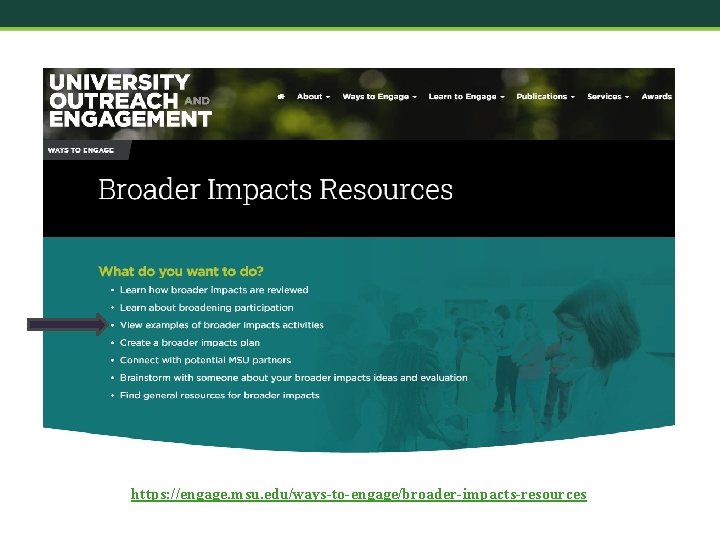

Finding MSU Partners https://engage.msu.edu/ways-to-engage/broader-impacts-resources

Culturally Responsive BI Activities • Identify internal or external partners (or both) with a history of successfully working with your target audience • Establish an agreement to work with partners to develop culturally responsive BI activities • Consider partners as Co-PIs, if appropriate • Put your partners in your BI budget, if appropriate

Worksheet 4: Your BI Partners (5 min) • Who will your MSU partners be? • Who will your external partners be? • How will you reach your external partners?

Questions?

BI Activity Given your goals, audiences/populations, settings, and partners, what will you do together?* *This should be decided in collaboration with your partners.

Your BI activities should be… • Meaningfully connected to your research • Innovative • Impactful • Fun

https://engage.msu.edu/ways-to-engage/broader-impacts-resources

Worksheet 5: Your BI Activity (5 min) Given your goals, audiences, settings and partners, what are some possible BI activities?

BI Plan [Diagram: the BI Plan at the center, connected to Goal, Audience, Setting & Partners, Activity, Budget, and Evaluation]

Tips for a Great BI Plan • Developed concurrently with and integrated into the research plan, instead of hastily tacked on at the end • BI language unique to the proposed research plan, instead of recycled “boilerplate” BI language • Partners identified and partnerships established • An appropriate budget – Don’t starve your BI plan for funds! – Reviewers will look to see if your BI plan is funded at an appropriate level – Usually 8-10% of direct costs MacFadden, 2019
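To make the 8-10% rule of thumb concrete, the short Python sketch below turns a proposal's direct costs into a suggested BI budget range. The function name `bi_budget_range` and the $500,000 figure are hypothetical illustrations, not workshop or NSF figures, and the percentages are treated as a guideline rather than a fixed requirement.

```python
def bi_budget_range(direct_costs, low_pct=8.0, high_pct=10.0):
    """Return the (low, high) BI budget implied by a percentage range of direct costs.

    Hypothetical helper: the 8-10% defaults mirror the rule of thumb on the slide
    and are a guideline, not a fixed requirement.
    """
    if direct_costs < 0:
        raise ValueError("direct_costs must be non-negative")
    return direct_costs * low_pct / 100, direct_costs * high_pct / 100


# Hypothetical proposal with $500,000 in direct costs.
low, high = bi_budget_range(500_000)
print(f"Suggested BI budget: ${low:,.0f} to ${high:,.0f}")  # $40,000 to $50,000
```

Adjusting `low_pct` and `high_pct` lets you check how a planned BI budget compares with whatever range your program officer or colleagues recommend.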

Questions?

Time to Stretch! 10-Minute Break

Evaluating your Broader Impacts What impacts will your research have on society?

Why Evaluate Broader Impacts? Each proposal submitted to the NSF must include a section about its intended broader impacts and a plan for assessing them.

Basic Terminology Evaluation – assesses the effectiveness of a program, activity, or project – identifies how it might be improved – is a systematic investigation of the merit, worth, or value of something – its goal is not to produce or disseminate generalizable knowledge

Types of Evaluation • Needs assessment (sometimes called “front-end evaluation”): Need for the project, merit of the project, feasibility • Formative evaluation: Conducted throughout project design and development to guide improvements during piloting/prototyping of something new, or to improve an existing program or project. • Summative evaluation: Conducted at the end to document successes, failures, and lessons learned. • Process evaluation: Examines project implementation processes • Monitoring: Routine, automatic measurement of indicators

Formative v. Summative Evaluation When the cook tastes the soup, that's formative. When the guests taste it, that's summative. – Robert Stake

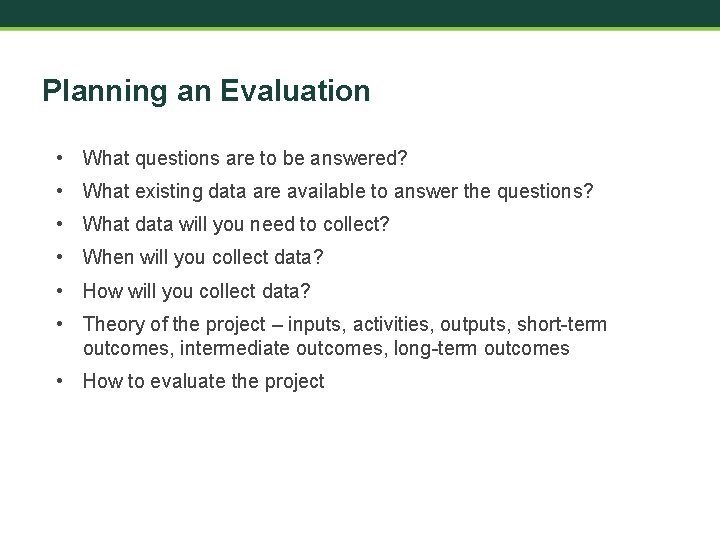

Planning an Evaluation • What questions are to be answered? • What existing data are available to answer the questions? • What data will you need to collect? • When will you collect data? • How will you collect data? • Theory of the project – inputs, activities, outputs, short-term outcomes, intermediate outcomes, long-term outcomes • How to evaluate the project

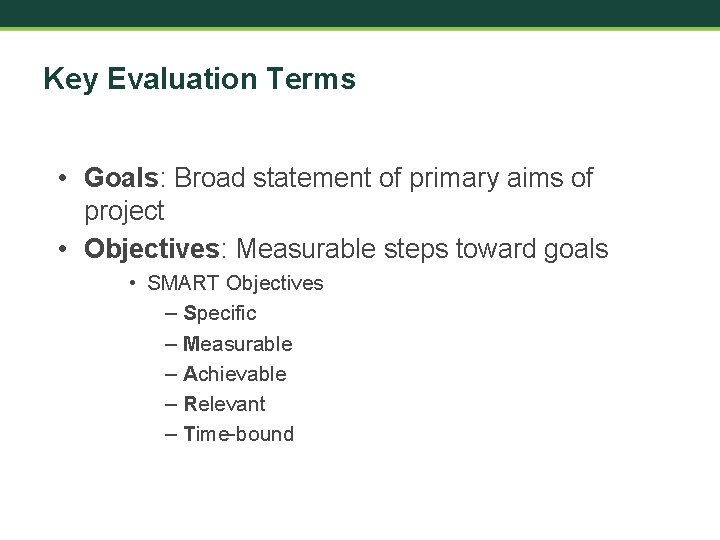

Key Evaluation Terms • Goals: Broad statement of primary aims of project • Objectives: Measurable steps toward goals • SMART Objectives – Specific – Measurable – Achievable – Relevant – Time-bound

Key Evaluation Terms • Metrics or Indicators: Something that can be measured; performance indicators • Outputs: Deliverables and activities that result from project • Outcomes: Description of what happened, or project achievements • Impacts: Did the project make a difference, or effect change? What are the benefits to target audience(s)/society due to the activity?

Examples of Impacts • Improved STEM education and educator development • Increased partnerships among academia, industry, and others • Increased public scientific literacy and public engagement with STEM • Increased economic competitiveness of the United States • Enhanced infrastructure for research and education • Full participation of women, persons with disabilities, and underrepresented minorities in STEM

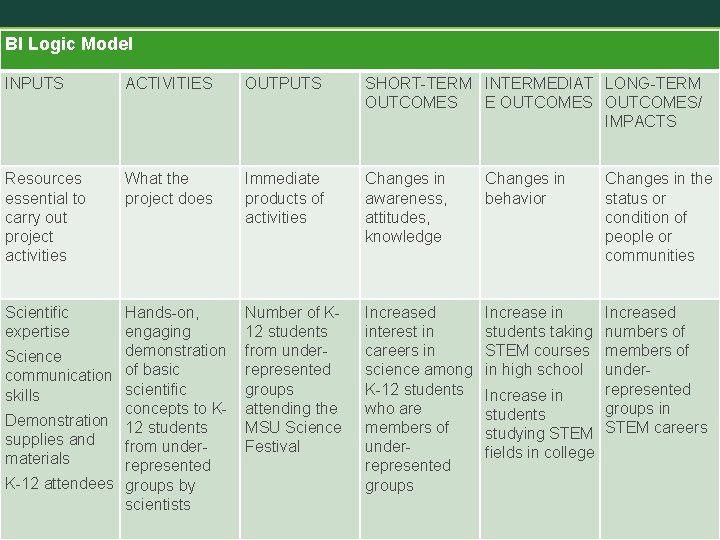

Example – The MSU Science Festival • GOAL: Increased awareness of and interest in careers in science among K-12 students who are members of groups underrepresented in STEM careers.

BI Logic Model
• Inputs (resources essential to carry out project activities): scientific expertise; science communication skills; demonstration supplies and materials
• Activities (what the project does): hands-on, engaging demonstrations of basic scientific concepts by scientists to K-12 students from underrepresented groups
• Outputs (immediate products of activities): number of K-12 students from underrepresented groups attending the MSU Science Festival
• Short-term outcomes (changes in awareness, attitudes, knowledge): increased interest in careers in science among K-12 students who are members of underrepresented groups
• Intermediate outcomes (changes in behavior): increase in students taking STEM courses in high school; increase in students studying STEM fields in college
• Long-term outcomes/impacts (changes in the status or condition of people or communities): increased numbers of members of underrepresented groups in STEM careers

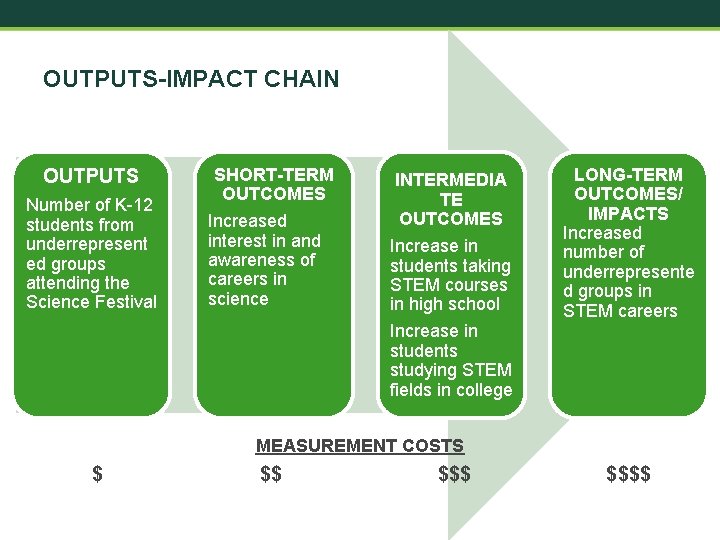

OUTPUTS-IMPACT CHAIN • Outputs: Number of K-12 students from underrepresented groups attending the Science Festival • Short-term outcomes: Increased interest in and awareness of careers in science • Intermediate outcomes: Increase in students taking STEM courses in high school; increase in students studying STEM fields in college • Long-term outcomes/impacts: Increased number of members of underrepresented groups in STEM careers • Measurement costs: $ → $$ → $$$$ (rising from outputs toward long-term impacts)

OUTPUTS-IMPACT CHAIN • What you might actually measure: outputs (number of K-12 students from underrepresented groups attending the Science Festival) and short-term outcomes (increased interest in and awareness of careers in science) • Further along the chain: intermediate outcomes (increase in students taking STEM courses in high school; increase in students studying STEM fields in college) and long-term outcomes/impacts (increased number of members of underrepresented groups in STEM careers)

EVALUATION PLAN
• Outcome or impact: Increased interest in and awareness of careers in science among K-12 students who are members of groups underrepresented in STEM careers
• Information needed: Self-reported interest in and awareness of careers in science among K-12 Science Festival attendees; demographic characteristics of Science Festival attendees (gender, race, ethnicity, etc.)
• Sample: Systematic sample (every 5th attendee who crosses an intercept point)
• Design: Single-group, posttest only OR retrospective pretest-posttest
• Data collection method: iPad survey
• Data analysis: Descriptive statistics
• Reporting (timing, format, audience): NSF
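The systematic sample in this plan (every 5th attendee who crosses an intercept point) can be sketched in Python as follows. This is a minimal illustration, assuming attendees can be listed in the order they pass the intercept point; the function `systematic_sample` and the attendee IDs are hypothetical. Choosing the starting position at random is a common refinement, not something the plan above specifies.

```python
import random


def systematic_sample(attendees, k=5, start=None):
    """Select every k-th attendee from a stream ordered by arrival at the intercept point.

    attendees: attendee IDs in the order they cross the intercept point (hypothetical data).
    start: 0-based position of the first selected attendee; if None, it is drawn at
    random from 0..k-1 so that each position has the same chance of being selected.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if start is None:
        start = random.randrange(k)
    if not 0 <= start < k:
        raise ValueError("start must be between 0 and k - 1")
    return attendees[start::k]


# Hypothetical stream of 23 attendees with IDs 1..23, starting at the 5th person.
stream = list(range(1, 24))
print(systematic_sample(stream, k=5, start=4))  # [5, 10, 15, 20]
```

In the field the "list" is simply a tally kept by the survey staff at the intercept point; the code only shows the selection rule.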

Sampling – An Essential Step in Planning Sample vs. Census • Identify the population • Can the entire population be included in the evaluation? – Yes: census – No: sample

Sampling Options • Is random (probability) sampling possible? – Yes: simple random sample; stratified random sample – No: convenience sampling; snowball sampling; systematic sampling; purposive sampling; quota sampling
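The two probability options named above differ in how units are drawn. In the Python sketch below, a simple random sample gives every unit the same chance of selection, while a stratified random sample first groups units (here, by a hypothetical grade band) and then samples within each group so that every stratum is represented. The roster, the function names, and the equal-per-stratum allocation are assumptions for illustration only.

```python
import random
from collections import defaultdict


def simple_random_sample(population, n, seed=None):
    """Draw n units without replacement; every unit has the same chance of selection."""
    rng = random.Random(seed)
    return rng.sample(population, n)


def stratified_random_sample(population, stratum_of, n_per_stratum, seed=None):
    """Group units into strata, then draw a simple random sample within each stratum.

    Equal allocation (the same n from every stratum) is assumed here; proportional
    allocation is another common choice.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in population:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for key in sorted(strata):  # fixed stratum order keeps results reproducible for a seed
        members = strata[key]
        sample.extend(rng.sample(members, min(n_per_stratum, len(members))))
    return sample


# Hypothetical roster: 30 elementary, 20 middle, and 10 high school participants.
roster = ([(f"E{i}", "elementary") for i in range(30)]
          + [(f"M{i}", "middle") for i in range(20)]
          + [(f"H{i}", "high") for i in range(10)])

srs = simple_random_sample(roster, 15, seed=1)
strat = stratified_random_sample(roster, lambda unit: unit[1], 5, seed=1)
print(len(srs), len(strat))  # 15 15
```

The stratified draw guarantees five high school participants even though they are only a sixth of the roster, which a simple random sample of 15 does not.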

Standard Data Collection Options • Surveys – Paper – Phone – Online (computer, tablet, phone) • Interviews • Focus groups • Observations • Secondary data • Document review http://www.socialbrite.org/author/jd-lasica/

Standard Types of Question Items • Likert-type – measure degree of agreement with a statement • Multiple choice – select the correct or preferred answer • True-false – assumes an assertion is either true or false • Rank-order – items ranked based on characteristic(s) • Rating scale – attitude direction and intensity • Open-ended – no specific response options
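Likert-type items are typically summarized with descriptive statistics, the analysis named in the example evaluation plan. The Python sketch below assumes a hypothetical 5-point agreement scale coded 1-5 and reports frequencies, the median, the mean, and the percentage who agree (4 or 5). Because Likert responses are ordinal, many evaluators lead with frequencies and the median and treat the mean as a rough summary; the item wording and answers are invented for illustration.

```python
from collections import Counter
from statistics import mean, median

# Hypothetical 5-point agreement scale.
SCALE = {1: "Strongly disagree", 2: "Disagree", 3: "Neutral", 4: "Agree", 5: "Strongly agree"}


def summarize_likert(responses):
    """Frequency table, median, mean, and percent agreeing (codes 4 or 5) for one item."""
    if not responses:
        raise ValueError("responses must not be empty")
    counts = Counter(responses)
    return {
        "n": len(responses),
        "counts": {label: counts.get(code, 0) for code, label in SCALE.items()},
        "median": median(responses),
        "mean": round(mean(responses), 2),
        "pct_agree": round(100 * sum(1 for r in responses if r >= 4) / len(responses), 1),
    }


# Hypothetical answers to "I am interested in a career in science."
answers = [5, 4, 4, 3, 5, 2, 4, 5, 3, 4]
print(summarize_likert(answers))
```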

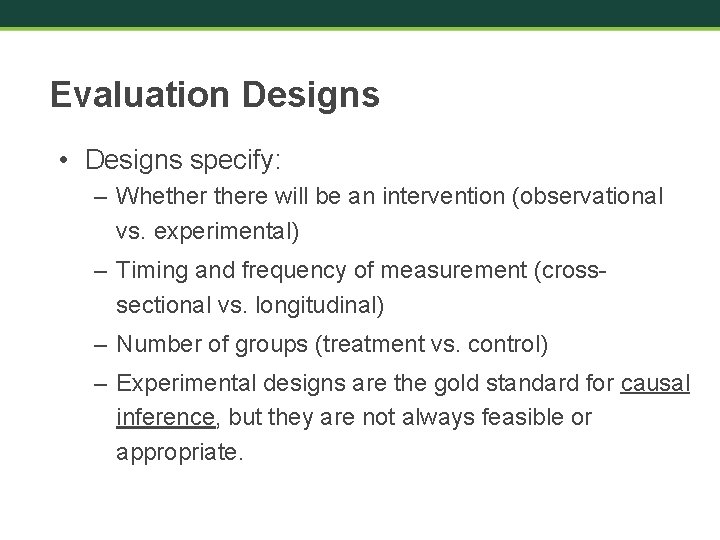

Evaluation Designs • Designs specify: – Whether there will be an intervention (observational vs. experimental) – Timing and frequency of measurement (cross-sectional vs. longitudinal) – Number of groups (treatment vs. control) – Experimental designs are the gold standard for causal inference, but they are not always feasible or appropriate.

True Experimental Design: Pretest-Posttest Control Group Design
R   Treatment   O   X   O
R   Control     O       O
R = Random assignment (NR = Non-random assignment); O = Observation; X = Intervention
Strengths: Controls for most threats to internal validity, including selection bias
Weakness: Does not control for pretest effect

True Experimental Design: Posttest-Only Control Group Design
R   Treatment   X   O
R   Control         O
No pretest
Strengths: • Controls selection bias • No testing effect
Weakness: • Cannot measure change over time

Quasi-Experimental Design: Pretest-Posttest Control Group Design
NR   Treatment   O   X   O
NR   Control     O       O
Strengths: • Controls for several threats to internal validity (maturation, history, testing)
Limitations: • Does not control for pretest effect • No random assignment • Does not control for selection bias
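For the pretest-posttest designs above, one simple descriptive summary is the average gain (posttest minus pretest) in each group and the difference between those gains. The Python sketch below assumes paired scores for each participant and uses hypothetical data and function names. With non-random assignment (NR), as in the quasi-experimental design, this difference can still reflect selection bias, so it should not be read as a causal estimate on its own.

```python
from statistics import mean


def mean_gain(pre, post):
    """Average within-person change (post minus pre); pre and post are paired lists."""
    if len(pre) != len(post) or not pre:
        raise ValueError("pre and post must be non-empty lists of equal length")
    return mean(after - before for before, after in zip(pre, post))


def gain_difference(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Treatment-group mean gain minus control-group mean gain (a difference-in-differences)."""
    return mean_gain(treat_pre, treat_post) - mean_gain(ctrl_pre, ctrl_post)


# Hypothetical interest scores (1-5) for four participants per group.
treat_pre, treat_post = [2, 3, 3, 2], [4, 4, 3, 3]
ctrl_pre, ctrl_post = [2, 3, 2, 3], [2, 3, 3, 3]
print(gain_difference(treat_pre, treat_post, ctrl_pre, ctrl_post))  # 0.75
```

Subtracting the control group's gain is what lets this design account for maturation and history: change that happens to both groups cancels out.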

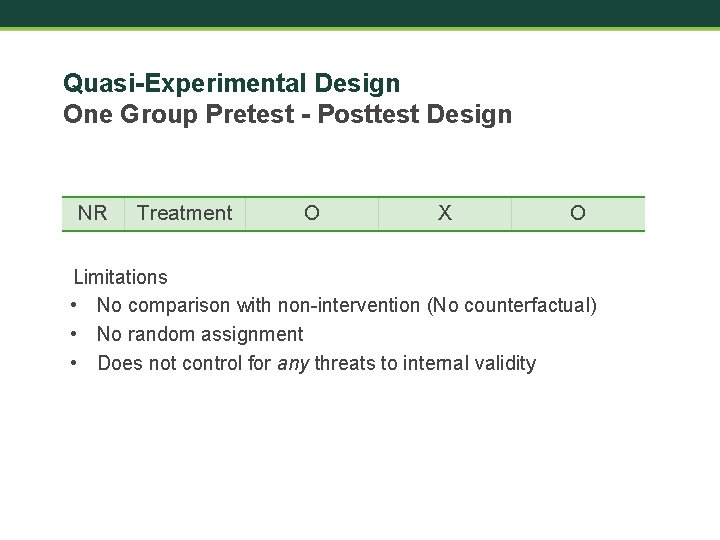

Quasi-Experimental Design: One-Group Pretest-Posttest Design
NR   Treatment   O   X   O
Limitations: • No comparison with non-intervention (no counterfactual) • No random assignment • Does not control for any threats to internal validity

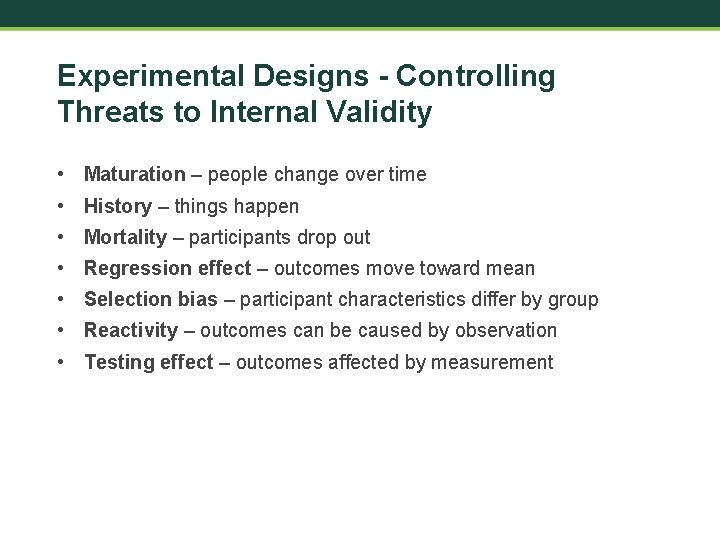

Experimental Designs - Controlling Threats to Internal Validity • Maturation – people change over time • History – things happen • Mortality – participants drop out • Regression effect – outcomes move toward mean • Selection bias – participant characteristics differ by group • Reactivity – outcomes can be caused by observation • Testing effect – outcomes affected by measurement

EVALUATION PLAN • OUTCOME OR IMPACT • INFORMATION NEEDED • SAMPLING STRATEGY • DESIGN • DATA COLLECTION METHOD • ANALYSIS • REPORTING (timing, format, audience)

Worksheet 6: Evaluation Plan (10 min) • Complete evaluation plan for a single broader impact

Informal STEM Education: Flexibility Is Essential • Not all STEM education takes place in schools or other formal settings • Everyday ISE experiences: Encountering something from the natural world (e.g., a spider in your house) and looking it up on the internet to identify it and learn something about its habits and behaviors • Science media: radio, TV, internet, etc. • Some specific designed spaces: museums, science centers, zoos, aquariums, and environmental centers • Programs for science learning: in school, community-based science-rich organizations, hobbyist groups, etc. Source: Bell et al., 2009

Issues of Using Standard Evaluation Techniques in ISE • Many or most standard techniques disrupt the ISE experience • You will need to avoid this • Find ways to measure without disruption: make measurement part of the activity • There is still an emphasis on achieving rigor using standard methods • However, that rigor may be moderated • Calls for quasi-experimental designs, deferral comparison group designs, etc. Source: Allen & Peterman, 2019

Informal Education Outcomes • Knowledge: memory, cognitive operations • Engagement: emotional, short-term motivation • Attitude: long-term engagement • Behavior: one-time or repeated measures • Skills: specific actions Source: Allen, 2008

Measuring ISE Outcomes Without Disruption • Noninvasive techniques that do not disrupt the experience • Embedded assessments; clickstream records of all actions • Surveillance cameras, cordless microphones, radio-frequency identification (RFID) tags • Voice recognition, semantic analysis • These techniques raise ethical and technical issues (AEA) • Evaluators may not be familiar with some of these techniques Source: Allen & Peterman, 2019

Flexibility in Evaluation Design in ISE • ISE requires use of a nondisruptive design • Is pretesting reasonable in ISE? Too invasive? • Sometimes goals will change over time – unlike traditional fixed goals • Adaptive designs • Noninvasive case studies and naturalistic methods • Self-reports? • Will participants report? Source: Allen, 2008

The MSU Institutional Review Board (IRB) Research that involves human subjects requires IRB review and approval. Evaluations are often exempt or are not considered human subjects research, but investigators should always apply for an IRB determination rather than assume exempt status. The Human Research Protection Program: https://hrpp.msu.edu/

How to find an evaluator • Call Miles. He can connect you with MSU, Michigan, and U.S. evaluators: – (517) 290-7554 – mcnall@msu.edu

The BI Wizard A website that will help you draft your BI plan!

Build a Broader Impacts Plan • COSEE NOW Broader Impacts Wizard – Step 1: Audience – Step 2: Budget – Step 3: Activity – Step 4: Project Description – Step 5: Evaluation

http://coseenow.net/wizard/

Your BI Evaluation Consultants Miles McNall – mcnall@msu.edu Robert Griffore – griffore@msu.edu

Wrap Up • Final Questions? (Use chat) • Workshop evaluation survey

REFERENCES • Allen, S. (2008). Evaluating exhibitions. In A. J. Friedman (Ed.), Framework for Evaluating Impacts of Informal Science Education Projects: Report from a National Science Foundation Workshop (pp. 42-56). The National Science Foundation, Directorate for Education and Human Resources, Division of Research on Learning in Formal and Informal Settings. • Allen, S., & Peterman, K. (2019). Evaluating informal STEM education: Issues and challenges in context. New Directions for Evaluation, 2019(161), 17-33. • Bell, P., Lewenstein, B., Shouse, A. W., & Feder, M. A. (Eds.). (2009). Learning Science in Informal Environments: People, Places, and Pursuits. Washington, DC: National Academies Press. • Bevan, B., Calabrese Barton, A., & Garibay, C. (2018). Broadening Perspectives on Broadening Participation in STEM. Washington, DC: Center for Advancement of Informal Science Education. • Burns, T. W., O'Connor, D. J., & Stocklmayer, S. M. (2003). Science communication: A contemporary definition. Public Understanding of Science, 12(2), 183-202.

REFERENCES • Centers for Disease Control and Prevention (n.d.). Well-being concepts. https://www.cdc.gov/hrqol/wellbeing.htm • deBruyn, J. (2018, June 1). How to nail broader impacts in your next NSF grant. American Society for Microbiology. https://www.asm.org/Articles/2018/June/how-to-nail-broader-impacts-in-your-next-nsf-grant • MacFadden, B. (2019). Broader Impacts of Science on Society. Cambridge: Cambridge University Press. • Risien, J., & Storksdieck, M. (2018). Unveiling impact identities: A path for connecting science and society. Integrative and Comparative Biology, 58(1), 58-66. • Stevens, R., Bransford, J., & Stevens, A. (2005). The LIFE Center Lifelong and Lifewide Learning Diagram. Seattle, WA: LIFE Center. http://life-slc.org/about/citationdetails.html
