Computing LHCb
Umberto Marconi, V INFN Grid Workshop, Padova, 19/12/2006

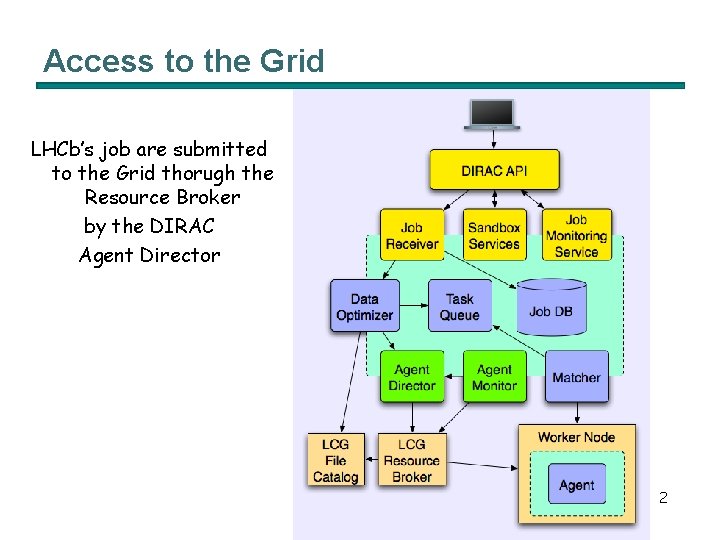

Access to the Grid
- LHCb jobs are submitted to the Grid through the Resource Broker (RB) by the DIRAC Agent Director.

Interesting new features
- The VO-View looks very promising for effectively exploiting advanced rank expressions.
- We are also testing:
  - the bulk submission capability;
  - shallow resubmission for error recovery;
  - the VOMS-Proxy extension renewal mechanism;
  - remote upload (download) of the Input (Output) Sandbox.
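To illustrate why a per-VO view matters for ranking, the sketch below ranks two CEs with the same rank expression, once on cluster-wide values and once on VO-View values. The CE names, the numbers and the `rank_ces` helper are hypothetical; the attribute names follow the GLUE 1.x schema, and `response_time_rank` mimics the JDL expression `Rank = -other.GlueCEStateEstimatedResponseTime;`.

```python
from typing import Callable, Dict, List

# Hypothetical information-system snapshot for two CEs.
# "cluster" holds CE-wide values, "vo_view" the values published for the LHCb VO only.
CES: Dict[str, Dict[str, Dict[str, int]]] = {
    "ce-a.example.org": {
        "cluster": {"GlueCEStateFreeJobSlots": 50, "GlueCEStateEstimatedResponseTime": 100},
        "vo_view": {"GlueCEStateFreeJobSlots": 0, "GlueCEStateEstimatedResponseTime": 5000},
    },
    "ce-b.example.org": {
        "cluster": {"GlueCEStateFreeJobSlots": 10, "GlueCEStateEstimatedResponseTime": 600},
        "vo_view": {"GlueCEStateFreeJobSlots": 10, "GlueCEStateEstimatedResponseTime": 600},
    },
}

def rank_ces(view: str, rank: Callable[[Dict[str, int]], float]) -> List[str]:
    """Return CE names sorted by decreasing rank, i.e. in the order the RB would prefer them."""
    return sorted(CES, key=lambda ce: rank(CES[ce][view]), reverse=True)

def response_time_rank(attrs: Dict[str, int]) -> float:
    # Equivalent of the JDL expression: Rank = -other.GlueCEStateEstimatedResponseTime;
    return -attrs["GlueCEStateEstimatedResponseTime"]

print(rank_ces("cluster", response_time_rank))  # ['ce-a.example.org', 'ce-b.example.org']
print(rank_ces("vo_view", response_time_rank))  # ['ce-b.example.org', 'ce-a.example.org']
```

With cluster-wide values the RB would favour ce-a even though LHCb has no free slots there; the VO-View values reverse the order.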

gLite RB test activities
- Preliminary tests on different RBs:
  - ibm139.cnaf.infn.it
  - cert-rb-04.cnaf.infn.it
  - rb103.cern.ch
  - rb112.cern.ch
- Tests of the RB performance.
- Evaluation of new functionalities:
  - WM-Proxy vs. the Network Server;
  - integration of the gLite RB with the LHCb DIRAC software.

gLite RB test activities (II)
- We measured how the submission time, the dispatching time and the load of the RB change with:
  - the number of submitted jobs;
  - the job type;
  - the number of submitting threads;
  - the requirements in the JDL.
- Performance results will be presented in an LHCb note in preparation.
- Thanks to Daniele Cesini for his help.
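A measurement of this kind can be organized as a small multi-threaded harness. This is only a sketch: `submit_job` is a hypothetical stand-in (a sleep) for the real submission call, and only the client-side submission time is measured; dispatching time and RB load would have to be collected on the RB side.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

def submit_job(jdl: str) -> str:
    """Hypothetical stand-in for a real submission call; returns a fake job ID."""
    time.sleep(0.01)  # simulated round trip to the RB
    return "https://rb.example.org:9000/" + str(abs(hash(jdl)) % 10**6)

def measure_submission(n_jobs: int, n_threads: int,
                       submit: Callable[[str], str] = submit_job) -> Dict[str, float]:
    """Submit n_jobs using n_threads concurrent threads and time every call."""
    def timed(i: int) -> float:
        start = time.perf_counter()
        submit(f'[ Executable = "/bin/true"; Arguments = "{i}"; ]')
        return time.perf_counter() - start

    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        durations: List[float] = list(pool.map(timed, range(n_jobs)))
    wall = time.perf_counter() - wall_start
    return {
        "jobs": n_jobs,
        "threads": n_threads,
        "mean_submit_s": statistics.mean(durations),
        "max_submit_s": max(durations),
        "throughput_jobs_per_s": n_jobs / wall,
    }

# Vary the number of submitting threads for a fixed number of jobs.
for threads in (1, 4, 8):
    print(measure_submission(n_jobs=40, n_threads=threads))
```

The same loop can be repeated over different job counts, job types or JDL requirements by changing the arguments and the `submit` callable.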

Interface to the gLite RB
- We developed the gLiteDirector Agent, the interface used by DIRAC to submit LHCb jobs to the RB.
- At present rb103.cern.ch is the RB mainly used with the DIRAC test system.
- We have shown that we can handle:
  - production jobs;
  - analysis jobs.
- LHCb makes use of the Service Availability Monitor (SAM) and FCR.
- We are also using multiple LFC mirrors through the DLI interface.
- The next steps are:
  - move from grid-proxy to voms-proxy and use the group extension;
  - use the new WM-Proxy functionalities instead of the NS ones.
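As an illustration of what a Director has to produce, the sketch below builds a gLite JDL description for a pilot job. The function `build_pilot_jdl`, the wrapper script name and the requirement values are hypothetical; the attribute names (`Executable`, `InputSandbox`, `Requirements`, `Rank`, `VirtualOrganisation`, `ShallowRetryCount`, ...) are standard JDL attributes, but the actual DIRAC agent may build its JDL differently.

```python
from typing import Dict, List, Optional

def _quote(value: str) -> str:
    """Quote a JDL string value, escaping embedded double quotes."""
    return '"' + value.replace('"', '\\"') + '"'

def _list(values: List[str]) -> str:
    """Render a JDL list of strings."""
    return "{" + ", ".join(_quote(v) for v in values) + "}"

def build_pilot_jdl(wrapper: str, args: str, requirements: str,
                    rank: str = "-other.GlueCEStateEstimatedResponseTime",
                    shallow_retries: int = 3,
                    extra: Optional[Dict[str, str]] = None) -> str:
    """Return the JDL text for one pilot job (hypothetical helper)."""
    lines = [
        f"Executable = {_quote(wrapper)};",
        f"Arguments = {_quote(args)};",
        f"StdOutput = {_quote('std.out')};",
        f"StdError = {_quote('std.err')};",
        f"InputSandbox = {_list([wrapper])};",
        f"OutputSandbox = {_list(['std.out', 'std.err'])};",
        f"VirtualOrganisation = {_quote('lhcb')};",
        # Requirements and Rank are ClassAd expressions, so they are not quoted.
        f"Requirements = {requirements};",
        f"Rank = {rank};",
        # Shallow resubmission: the WMS retries only if the job failed before the payload started.
        f"ShallowRetryCount = {shallow_retries};",
    ]
    for key, value in (extra or {}).items():
        lines.append(f"{key} = {value};")
    return "[\n  " + "\n  ".join(lines) + "\n]"

print(build_pilot_jdl(
    wrapper="pilot_wrapper.sh",  # hypothetical script name
    args="--site-check",         # hypothetical arguments
    requirements="other.GlueCEPolicyMaxCPUTime > 1000",
))
```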

Production and Analysis
- Production, reconstruction and pre-selection are scheduled, centrally managed activities.
  - Pilot Agents running on the WNs download these jobs from the central task queues.
  - Pilot Agents run with a generic identity.
- For analysis jobs there are two possible strategies to be evaluated:
  - use Pilot Agents with a centralized task queue and prioritization mechanism, as for the scheduled activities;
    - LHCb requests that glexec be available to change the job identity;
  - access the Grid directly.
  - In both cases the Ganga UI is used, with different backends.
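The pull model can be sketched as follows: jobs wait in a central, prioritized task queue, and a pilot that has started on a worker node asks for the highest-priority job its node can run. The class and field names are hypothetical, and matching is reduced to a CPU-time and platform check; the real DIRAC matcher is not reproduced here.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class Job:
    job_id: str
    priority: int   # higher value = more urgent
    cpu_time: int   # requested CPU time (arbitrary units)
    platform: str

@dataclass
class TaskQueue:
    """Central task queue; jobs are kept in a heap ordered by priority."""
    _heap: List[Tuple[int, int, Job]] = field(default_factory=list)
    _counter: "itertools.count" = field(default_factory=itertools.count)

    def add(self, job: Job) -> None:
        # heapq is a min-heap, so the priority is negated; the counter keeps FIFO order on ties.
        heapq.heappush(self._heap, (-job.priority, next(self._counter), job))

    def request_job(self, resources: Dict[str, object]) -> Optional[Job]:
        """Called by a pilot: return the best job matching the pilot's resources, if any."""
        skipped = []
        match = None
        while self._heap:
            entry = heapq.heappop(self._heap)
            job = entry[2]
            if job.cpu_time <= resources["cpu_time"] and job.platform == resources["platform"]:
                match = job
                break
            skipped.append(entry)
        for entry in skipped:  # put back the jobs this pilot could not run
            heapq.heappush(self._heap, entry)
        return match

queue = TaskQueue()
queue.add(Job("prod-1", priority=5, cpu_time=500, platform="slc4"))
queue.add(Job("reco-7", priority=9, cpu_time=2000, platform="slc4"))
queue.add(Job("presel-3", priority=7, cpu_time=800, platform="slc4"))

# A pilot on a worker node with a short CPU limit gets the best job that fits.
print(queue.request_job({"cpu_time": 1000, "platform": "slc4"}).job_id)  # presel-3
```

The highest-priority job (`reco-7`) stays queued for a pilot with enough CPU time, which is the main advantage of late binding over pushing jobs to sites.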

Storage
- Separate disk and tape SRM endpoints are already in place.
- We wish to have SRM v2.2 endpoints with at least 2 storage classes implemented: T0D1 and T1D0.
  - Available by February '07.
  - Access rights to storage according to the LFC ACLs.
  - Efficient storage namespace browsing for integrity checking (Feb '07, comes with SRM v2.2).
  - Uniform implementation across the different backends (Feb '07, comes with SRM v2.2).
- Together with Castor, we wish to have StoRM available in production at CNAF.
  - We are willing to cooperate in testing StoRM's performance and functionalities.
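The storage class names encode how many tape and disk copies are guaranteed: T1D0 is custodial on tape with disk acting only as a cache, T0D1 is a disk-only replica. A small helper that decodes the names into the SRM v2.2 retention policy and access latency could look like the sketch below; the `CLASS_FOR` data-type mapping is a hypothetical example, not LHCb's actual policy.

```python
import re
from typing import NamedTuple

class StorageClass(NamedTuple):
    tape_copies: int
    disk_copies: int

    @property
    def retention(self) -> str:
        # A tape copy makes the file custodial; otherwise it is a replica.
        return "CUSTODIAL" if self.tape_copies > 0 else "REPLICA"

    @property
    def latency(self) -> str:
        # A guaranteed disk copy means ONLINE access; otherwise NEARLINE (staged from tape).
        return "ONLINE" if self.disk_copies > 0 else "NEARLINE"

def parse_storage_class(name: str) -> StorageClass:
    """Decode a name such as 'T1D0' into (tape copies, disk copies)."""
    m = re.fullmatch(r"T(\d)D(\d)", name.strip().upper())
    if m is None:
        raise ValueError(f"not a TxDy storage class: {name!r}")
    return StorageClass(int(m.group(1)), int(m.group(2)))

# Hypothetical mapping from data type to storage class, for illustration only.
CLASS_FOR = {"RAW": "T1D0", "DST": "T0D1"}

for data_type, cls_name in CLASS_FOR.items():
    sc = parse_storage_class(cls_name)
    print(data_type, cls_name, sc.retention, sc.latency)
```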

LFC Catalogue
- Read-only LFC instances with streaming from CERN available at the Tier 1 centres.
  - Available at CNAF.
  - Some other LFC instances at T1s available before the April '07 analysis phase.
- LHCb developed efficient browsing tools for data catalogue integrity checking.
  - Integrity of the Bookkeeping DB, LFC and SE information.
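The cross-check between the three sources reduces to set comparisons on logical file names and replica locations. The sketch below uses hypothetical in-memory inputs and SE names; in practice they would come from Bookkeeping queries, LFC listings and SE namespace browsing, which is why efficient browsing is a requirement.

```python
from typing import Dict, List, Set, Tuple

def check_integrity(bookkeeping: Set[str],
                    lfc: Dict[str, Set[str]],
                    se_contents: Dict[str, Set[str]]) -> Dict[str, List]:
    """Compare Bookkeeping LFNs, LFC replicas (LFN -> {SE}) and SE contents (SE -> {LFN})."""
    catalogued = set(lfc)
    report: Dict[str, List] = {
        # Files known to the Bookkeeping but not registered in the LFC.
        "missing_in_lfc": sorted(bookkeeping - catalogued),
        # LFC entries the Bookkeeping does not know about.
        "unknown_to_bookkeeping": sorted(catalogued - bookkeeping),
        # LFC entries without any replica.
        "no_replicas": sorted(lfn for lfn, ses in lfc.items() if not ses),
    }
    # Replicas registered in the LFC but absent from the SE namespace (lost files).
    lost: List[Tuple[str, str]] = sorted(
        (lfn, se) for lfn, ses in lfc.items() for se in ses
        if lfn not in se_contents.get(se, set())
    )
    # Files present on an SE but not registered there in the LFC (dark data).
    dark: List[Tuple[str, str]] = sorted(
        (lfn, se) for se, lfns in se_contents.items() for lfn in lfns
        if se not in lfc.get(lfn, set())
    )
    report["lost_replicas"] = lost
    report["unregistered_files"] = dark
    return report

bk = {"/lhcb/data/a.dst", "/lhcb/data/b.dst"}
catalogue = {"/lhcb/data/a.dst": {"CNAF-disk"}, "/lhcb/data/c.dst": set()}
se = {"CNAF-disk": {"/lhcb/data/x.dst"}}
for problem, items in check_integrity(bk, catalogue, se).items():
    print(problem, items)
```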

COOL Oracle Conditions DB
- COOL/3D DB: instance of the read-only COOL DB (at least at phase 1 sites) for the January '07 tests.
- At all T1 sites by the March '07 calibration challenge.

FTS
- CERN-T1 and T1-T1 connections (now).
- T2-T1 connections are also required.
  - GridKa-Manno, CERN-Russia.
- Automatic pre-staging of files on top of the transfers (optimization) (useful now).
- "Fat client", i.e. a library that automatically discovers the endpoint to be used for any transfer from the source and destination endpoints (useful now).
- Failover mechanisms based on the VOBox are already in use to overcome data transfer problems.
- DST and MC data distribution amongst the Tier 1 centres.
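The "fat client" idea amounts to resolving, from the source and destination sites, which FTS endpoint should serve a transfer; the VOBox failover then keeps a failed request for a later retry. The sketch assumes a hypothetical static channel table with "*" catch-all entries and a hypothetical `transfer` callable; it does not use the real FTS client API.

```python
from collections import deque
from typing import Callable, Deque, Dict, Optional, Tuple

# Hypothetical channel table: (source, destination) -> FTS endpoint. "*" matches any site.
CHANNELS: Dict[Tuple[str, str], str] = {
    ("CERN", "CNAF"): "https://fts-cern.example.org:8443",
    ("CERN", "GRIDKA"): "https://fts-cern.example.org:8443",
    ("*", "CNAF"): "https://fts-cnaf.example.org:8443",
    ("*", "GRIDKA"): "https://fts-gridka.example.org:8443",
}

def find_endpoint(source: str, dest: str) -> Optional[str]:
    """Prefer an explicit channel, then fall back to catch-all channels."""
    for key in ((source, dest), ("*", dest), (source, "*")):
        if key in CHANNELS:
            return CHANNELS[key]
    return None

# Stand-in for failover requests persisted on a VOBox (hypothetical).
failover: Deque[Tuple[str, str, str]] = deque()

def transfer_with_failover(lfn: str, source: str, dest: str,
                           transfer: Callable[[str, str, str, str], bool]) -> bool:
    """Try the transfer via the discovered endpoint; on failure queue it for a later retry."""
    endpoint = find_endpoint(source, dest)
    if endpoint is not None and transfer(endpoint, lfn, source, dest):
        return True
    failover.append((lfn, source, dest))  # to be retried later by a VOBox agent
    return False

ok = transfer_with_failover("/lhcb/data/a.dst", "CERN", "CNAF", lambda *args: False)
print(ok, list(failover))
```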

Accounting, prioritization and monitoring
- LHCb will use its own tools.
- The centralized prioritization mechanism will be based on a replica of the MAUI scheduler algorithm.
  - GPBOX could be used to enforce glexec requests for job identity changes on site.
- Monitoring:
  - the ARDA Dashboard project is under evaluation;
  - the DIRAC monitoring system.
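MAUI's fair-share component keeps per-group usage in fixed time windows, weights older windows with a decay factor, and raises the priority of groups that are below their target share. A minimal sketch of that calculation is below; the group names, targets, usage numbers and weight are hypothetical, and the other MAUI priority components (queue time, expansion factor, ...) are omitted.

```python
from typing import Dict, List

def effective_usage(windows: List[float], decay: float) -> float:
    """Decayed usage sum; windows[0] is the most recent interval (cf. MAUI FSDECAY)."""
    return sum(u * decay ** i for i, u in enumerate(windows))

def fairshare_priority(usage: Dict[str, List[float]], targets: Dict[str, float],
                       decay: float = 0.5, weight: float = 1.0) -> Dict[str, float]:
    """Priority component = weight * (target share % - decayed usage share %)."""
    eff = {group: effective_usage(w, decay) for group, w in usage.items()}
    total = sum(eff.values()) or 1.0
    return {group: weight * (targets[group] - 100.0 * eff[group] / total) for group in usage}

# Hypothetical CPU usage per window (most recent first) and target shares in percent.
usage = {"production": [60, 50], "analysis": [20, 10], "calibration": [20, 40]}
targets = {"production": 60.0, "analysis": 30.0, "calibration": 10.0}

prio = fairshare_priority(usage, targets)
for group in sorted(prio, key=prio.get, reverse=True):
    print(f"{group:12s} {prio[group]:+.1f}")
```

Groups that have used less than their target recently (here "analysis") get the highest priority, while over-served groups are pushed down until their decayed usage falls.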
