Computer Systems Spring 2008 Lecture 28 Network Connected

- Slides: 27

Computer Systems, Spring 2008

Lecture 28: Network Connected Multiprocessors

Adapted from Mary Jane Irwin (www.cse.psu.edu/~mji) [Adapted from Computer Organization and Design, Patterson & Hennessy, © 2005]

Plus slides from Chapter 18, Parallel Processing, by Stallings

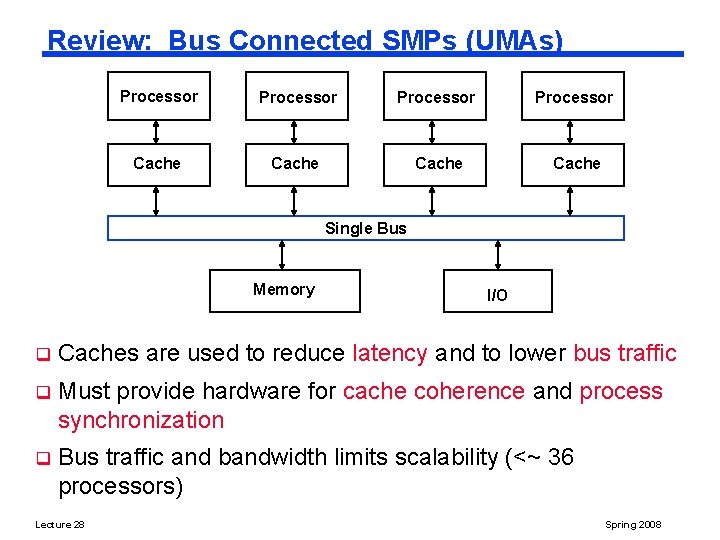

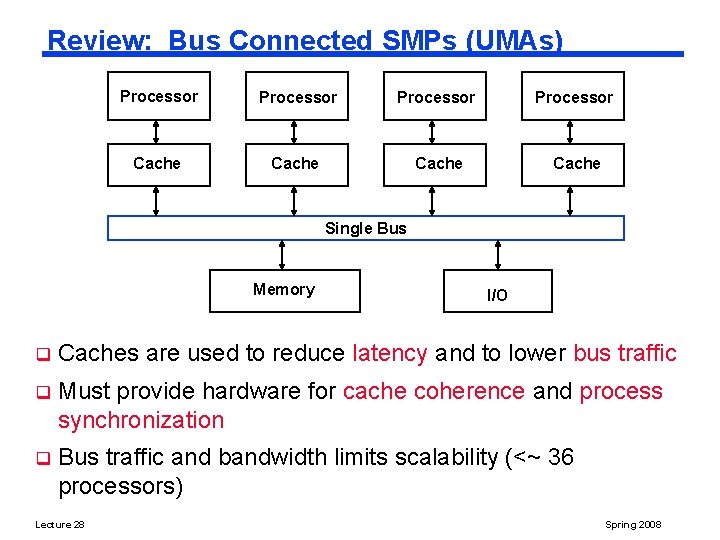

Review: Bus Connected SMPs (UMAs)

Diagram labels: Processor, Cache, Single Bus, Memory, I/O

- Caches are used to reduce latency and to lower bus traffic
- Must provide hardware for cache coherence and process synchronization
- Bus traffic and bandwidth limit scalability (< ~36 processors)

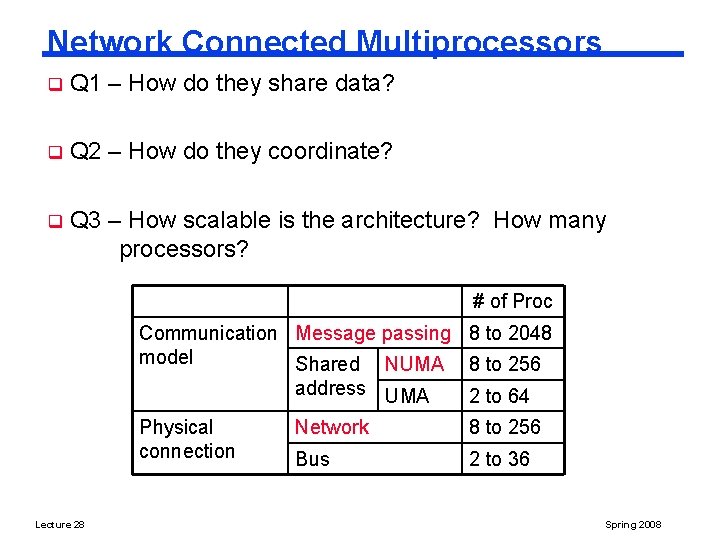

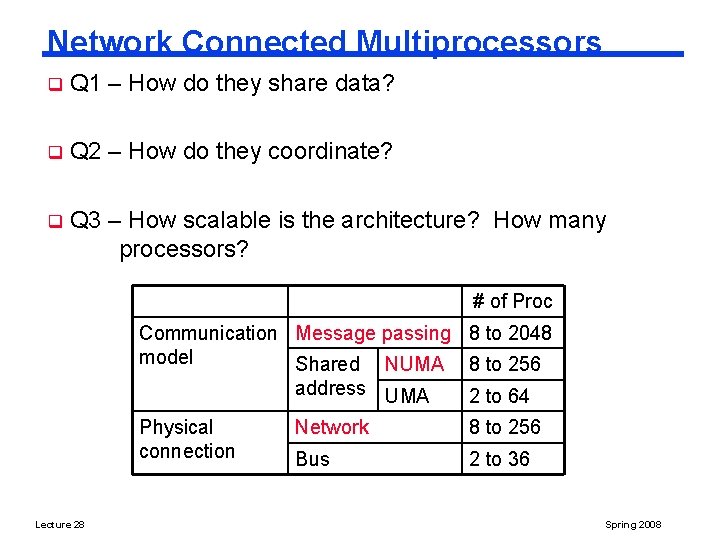

Network Connected Multiprocessors

- Q1: How do they share data?
- Q2: How do they coordinate?
- Q3: How scalable is the architecture? How many processors?

| Category | Choice | # of Proc |
|---|---|---|
| Communication model | Message passing | 8 to 2048 |
| Communication model | Shared address, NUMA | 8 to 256 |
| Communication model | Shared address, UMA | 2 to 64 |
| Physical connection | Network | 8 to 256 |
| Physical connection | Bus | 2 to 36 |

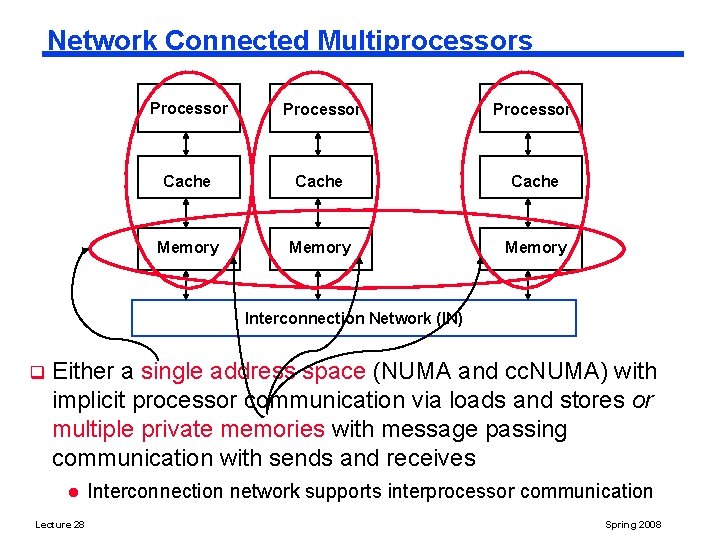

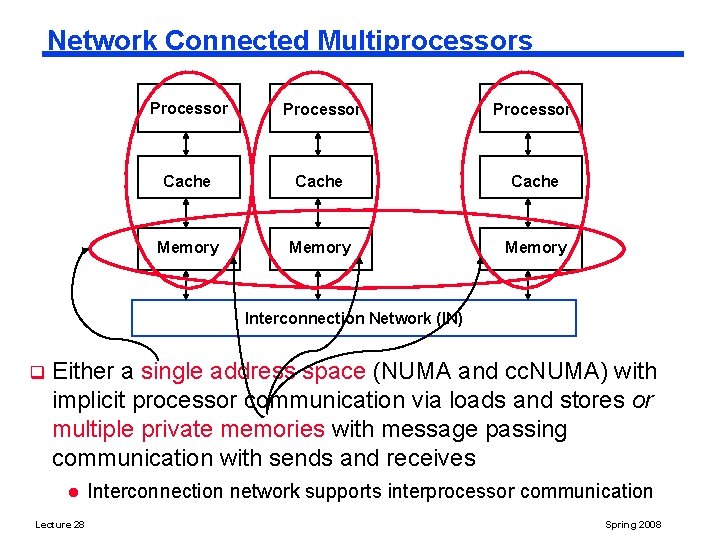

Network Connected Multiprocessors

Diagram labels: Processor, Cache, Memory, Interconnection Network (IN)

- Either a single address space (NUMA and ccNUMA) with implicit processor communication via loads and stores, or multiple private memories with message passing communication via sends and receives
  - The interconnection network supports interprocessor communication

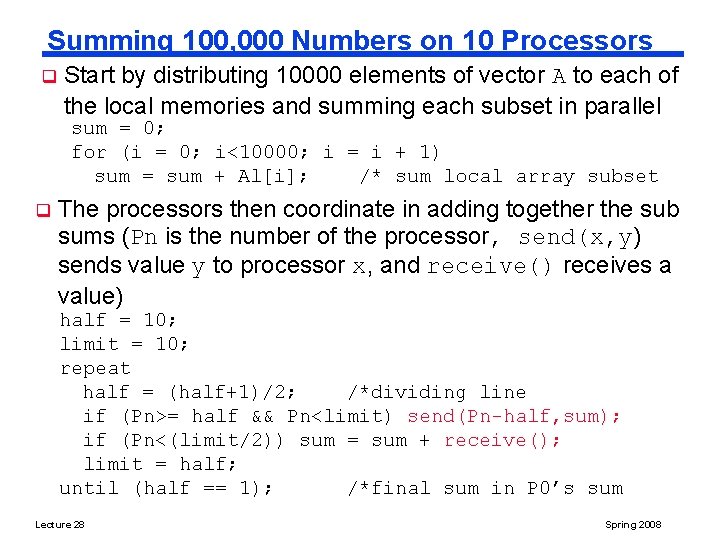

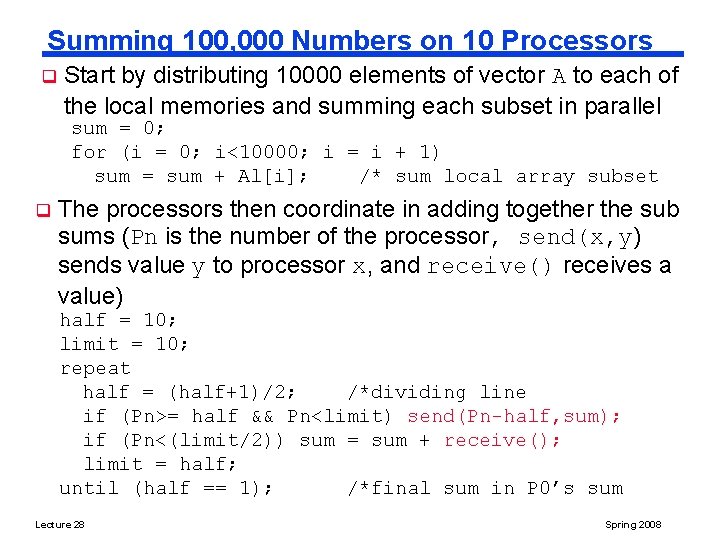

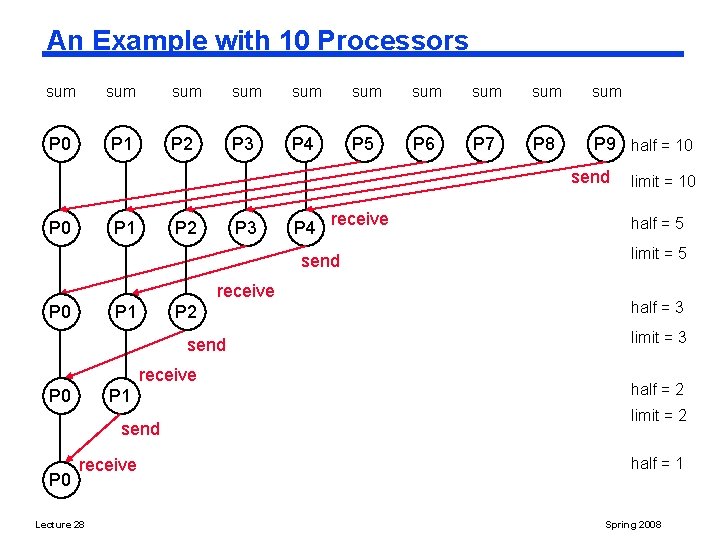

Summing 100,000 Numbers on 10 Processors

- Start by distributing 10,000 elements of vector A to each of the local memories and summing each subset in parallel

```
sum = 0;
for (i = 0; i < 10000; i = i + 1)
  sum = sum + Al[i];              /* sum local array subset */
```

- The processors then coordinate in adding together the sub sums (Pn is the number of the processor, send(x, y) sends value y to processor x, and receive() receives a value)

```
half = 10; limit = 10;
repeat
  half = (half + 1) / 2;          /* dividing line */
  if (Pn >= half && Pn < limit) send(Pn - half, sum);
  if (Pn < (limit / 2)) sum = sum + receive();
  limit = half;
until (half == 1);                /* final sum in P0's sum */
```

An Example with 10 Processors

Figure: processors P0 through P9 each hold a local sum; half = 10

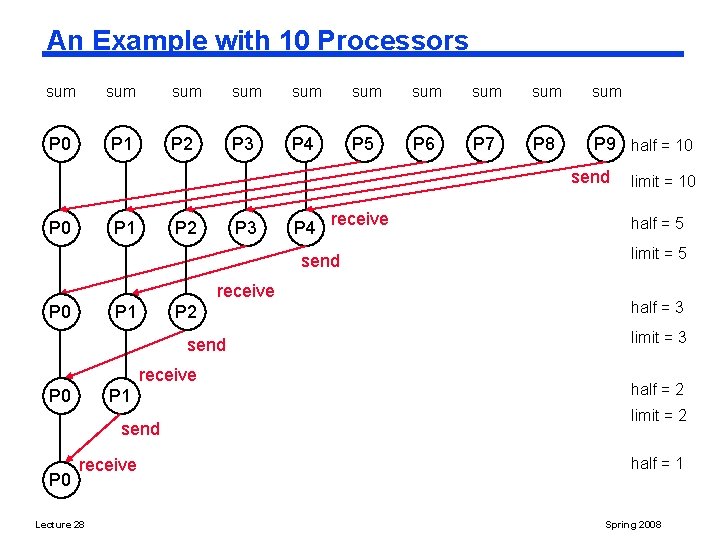

An Example with 10 Processors

Figure: processors P0 through P9 each hold a local sum, starting with half = 10 and limit = 10. In each round the upper processors send and the lower processors receive, leaving P0 to P4, then P0 to P2, then P0 and P1, then P0:

| limit | half | send | receive |
|---|---|---|---|
| 10 | 5 | P5 to P9 | P0 to P4 |
| 5 | 3 | P3, P4 | P0, P1 |
| 3 | 2 | P2 | P0 |
| 2 | 1 | P1 | P0 |
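The send/receive rounds of the coordination loop can be checked with a small single-process simulation. This is only a sketch: `tree_reduce` and its list of per-processor sums are hypothetical names for illustration, and the "messages" are a dictionary rather than real network sends.

```python
def tree_reduce(local_sums):
    """Simulate the repeat/until reduction; returns (final sum in P0, rounds)."""
    sums = list(local_sums)
    if not sums:
        raise ValueError("need at least one processor")
    half = limit = len(sums)
    rounds = []
    while True:
        half = (half + 1) // 2                     # dividing line
        # Processors half..limit-1 send their sum to processor Pn - half.
        messages = {pn - half: sums[pn] for pn in range(half, limit)}
        # Processors 0..limit/2 - 1 receive and accumulate.
        for pn in range(limit // 2):
            sums[pn] += messages[pn]
        rounds.append((limit, half, sorted(messages)))  # receivers this round
        limit = half
        if half == 1:                              # until (half == 1)
            break
    return sums[0], rounds


total, rounds = tree_reduce(range(1, 11))          # 10 processors holding 1..10
for limit, half, receivers in rounds:
    print(f"limit={limit} half={half} receivers={receivers}")
print("final sum in P0:", total)
```

With 10 processors the loop takes four rounds, and the odd-sized rounds (limit = 5 and limit = 3) leave the middle processor idle until a later round.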

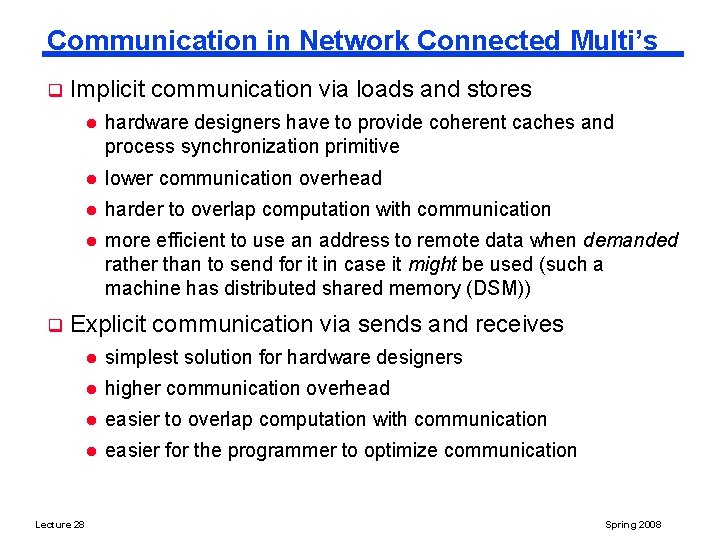

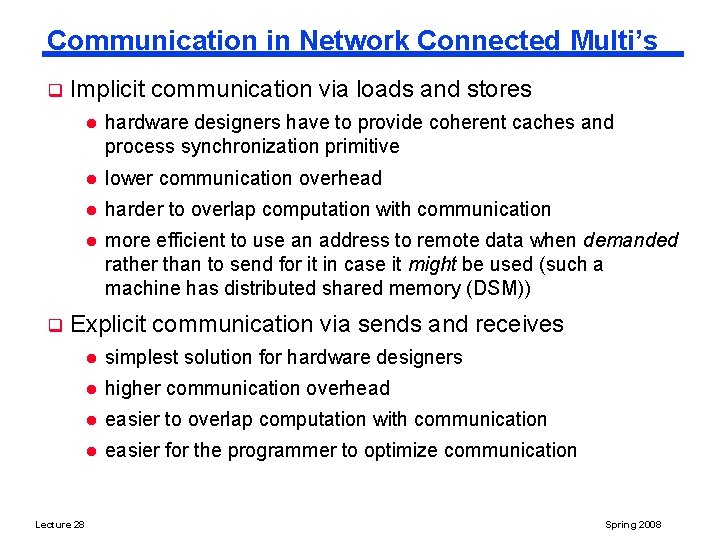

Communication in Network Connected Multi’s

- Implicit communication via loads and stores
  - hardware designers have to provide coherent caches and process synchronization primitives
  - lower communication overhead
  - harder to overlap computation with communication
  - more efficient to use an address to fetch remote data when demanded rather than to send for it in case it might be used (such a machine has distributed shared memory (DSM))
- Explicit communication via sends and receives
  - simplest solution for hardware designers
  - higher communication overhead
  - easier to overlap computation with communication
  - easier for the programmer to optimize communication

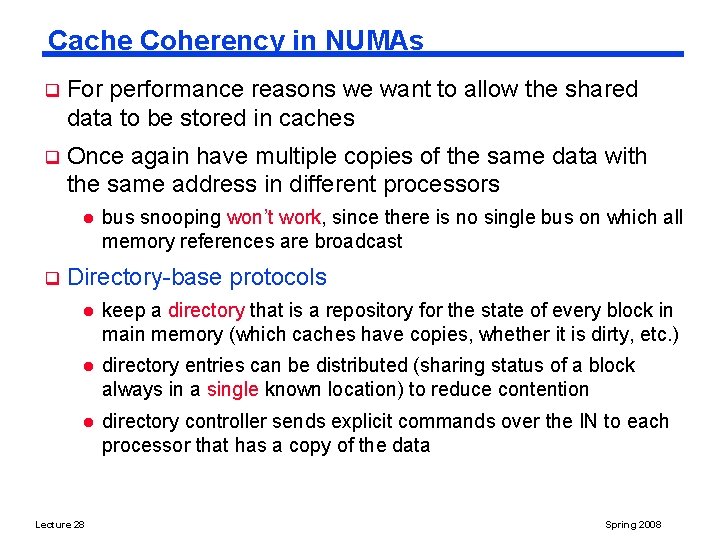

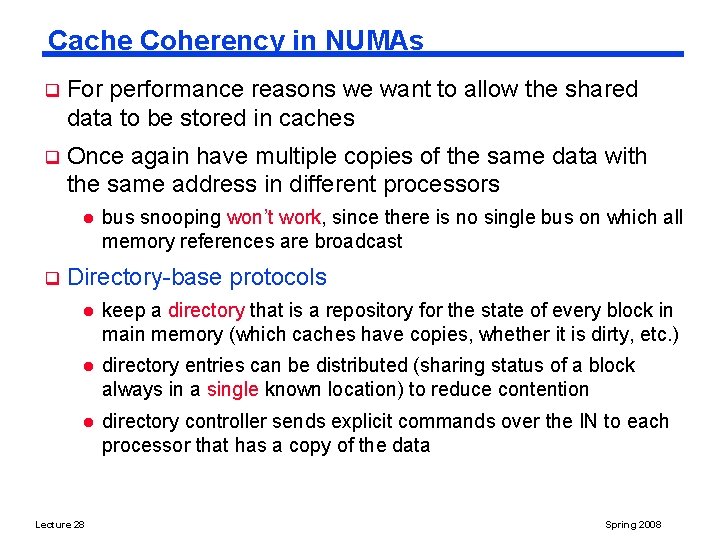

Cache Coherency in NUMAs

- For performance reasons we want to allow the shared data to be stored in caches
- Once again we have multiple copies of the same data with the same address in different processors
  - bus snooping won’t work, since there is no single bus on which all memory references are broadcast
- Directory-based protocols
  - keep a directory that is a repository for the state of every block in main memory (which caches have copies, whether it is dirty, etc.)
  - directory entries can be distributed (sharing status of a block always in a single known location) to reduce contention
  - directory controller sends explicit commands over the IN to each processor that has a copy of the data
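A toy model can make the directory idea concrete. The sketch below is an assumption-laden simplification, not the protocol of any particular machine: the `Directory` class, the command names `invalidate`, `fetch`, and `fetch_invalidate`, and the `log` standing in for messages on the IN are all hypothetical.

```python
class Directory:
    """Toy directory: per block, which caches hold a copy and who holds it dirty."""

    def __init__(self):
        self.sharers = {}  # block address -> set of processor ids with a copy
        self.owner = {}    # block address -> processor id holding a dirty copy
        self.log = []      # explicit commands "sent" over the interconnection network

    def read(self, proc, block):
        owner = self.owner.get(block)
        if owner is not None and owner != proc:
            # Another cache has the only up-to-date copy: ask it to write back.
            self.log.append(("fetch", owner, block))
            del self.owner[block]
        self.sharers.setdefault(block, set()).add(proc)

    def write(self, proc, block):
        owner = self.owner.get(block)
        # Every other cache with a copy must give it up before the write proceeds.
        for other in sorted(self.sharers.get(block, set()) - {proc}):
            command = "fetch_invalidate" if other == owner else "invalidate"
            self.log.append((command, other, block))
        self.sharers[block] = {proc}
        self.owner[block] = proc


d = Directory()
d.read(0, 0x40)
d.read(1, 0x40)
d.write(2, 0x40)   # commands go only to P0 and P1, the caches that hold a copy
d.read(0, 0x40)    # P2's dirty copy is fetched before P0 can read
print(d.log)
```

The point of the sketch is that commands go only to the processors listed in the directory entry, instead of being broadcast to every cache as with bus snooping.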

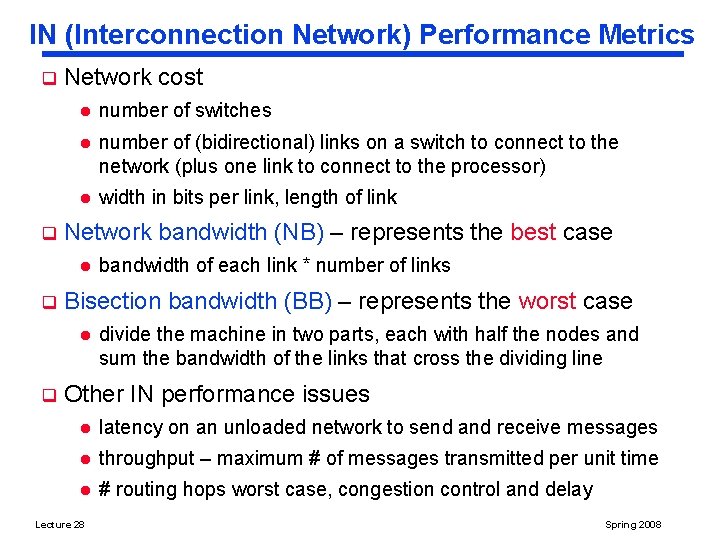

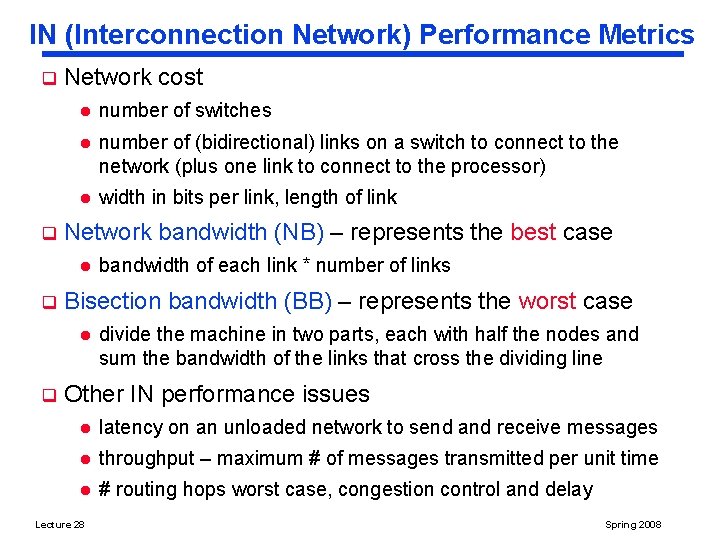

IN (Interconnection Network) Performance Metrics

- Network cost
  - number of switches
  - number of (bidirectional) links on a switch to connect to the network (plus one link to connect to the processor)
  - width in bits per link, length of link
- Network bandwidth (NB): represents the best case
  - bandwidth of each link * number of links
- Bisection bandwidth (BB): represents the worst case
  - divide the machine in two parts, each with half the nodes, and sum the bandwidth of the links that cross the dividing line
- Other IN performance issues
  - latency on an unloaded network to send and receive messages
  - throughput: maximum # of messages transmitted per unit time
  - # routing hops worst case, congestion control and delay
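The two bandwidth metrics can be computed directly from a list of links. The following is a brute-force sketch for small networks only; the function names are hypothetical, each link is assumed to have the same bandwidth, and "worst case" is read as the minimum over every way of splitting the nodes into two equal halves.

```python
from itertools import combinations


def network_bandwidth(links, link_bw=1):
    """Best case: bandwidth of each link times the number of links."""
    return link_bw * len(links)


def bisection_bandwidth(n, links, link_bw=1):
    """Worst case: fewest links crossing any split into two halves of n/2 nodes."""
    best = None
    for half in combinations(range(n), n // 2):
        side = set(half)
        crossing = sum(1 for a, b in links if (a in side) != (b in side))
        if best is None or crossing < best:
            best = crossing
    return link_bw * best


def ring(n):
    return [(i, (i + 1) % n) for i in range(n)]


def fully_connected(n):
    return list(combinations(range(n), 2))


print(network_bandwidth(ring(8)), bisection_bandwidth(8, ring(8)))
print(network_bandwidth(fully_connected(8)), bisection_bandwidth(8, fully_connected(8)))
```

The search over all balanced splits grows very quickly with the node count, so this is useful for checking small cases, not for sizing a real machine.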

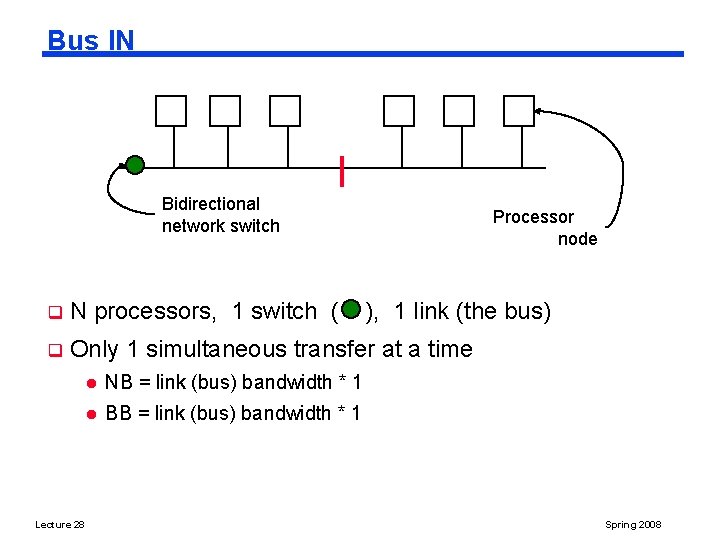

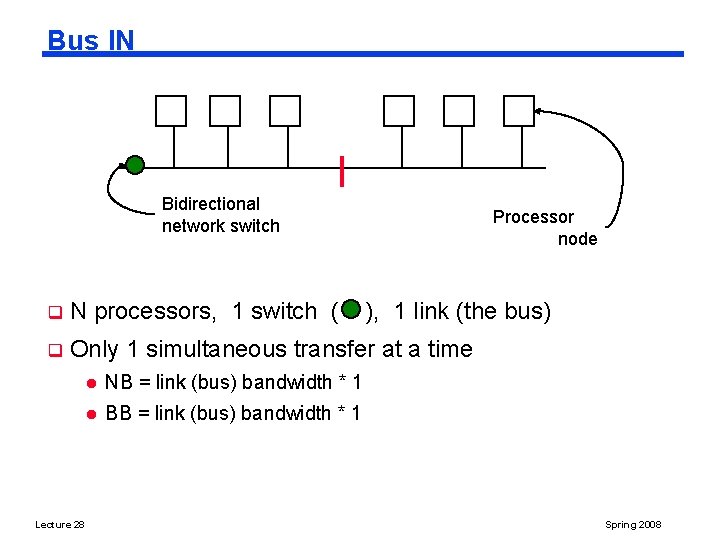

Bus IN

Figure legend: bidirectional network switch, processor node

- N processors, 1 switch, 1 link (the bus)
- Only 1 transfer at a time
  - NB = link (bus) bandwidth * 1
  - BB = link (bus) bandwidth * 1

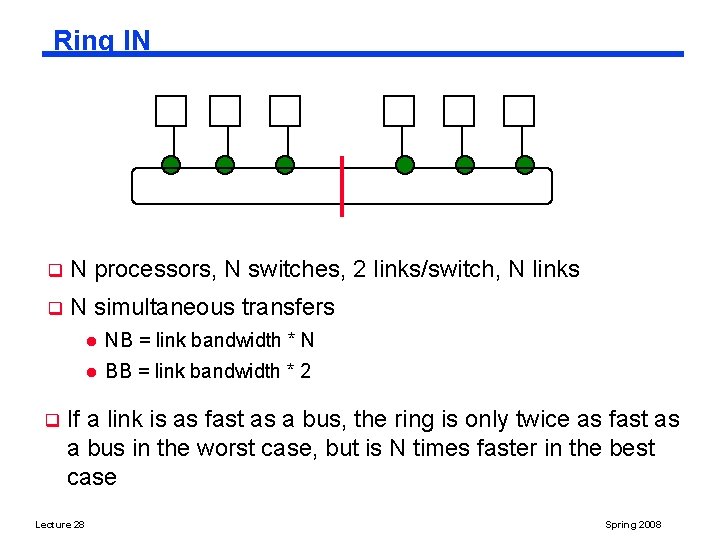

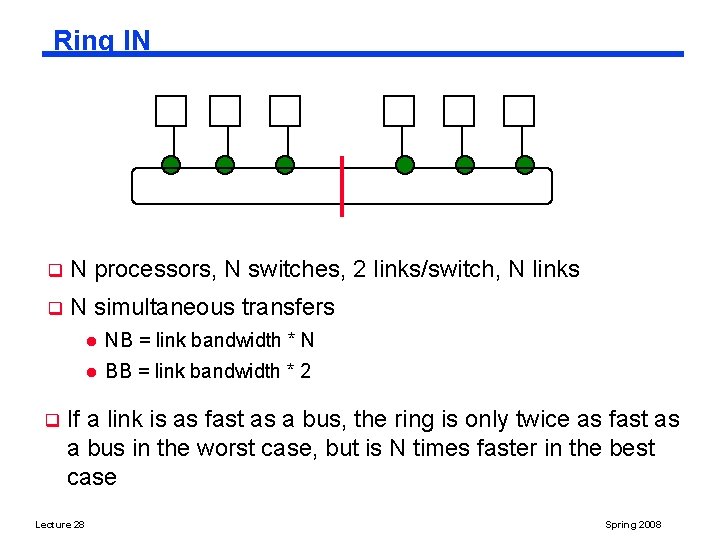

Ring IN

- N processors, N switches, 2 links/switch, N links
- N simultaneous transfers
  - NB = link bandwidth * N
  - BB = link bandwidth * 2
- If a link is as fast as a bus, the ring is only twice as fast as a bus in the worst case, but is N times faster in the best case

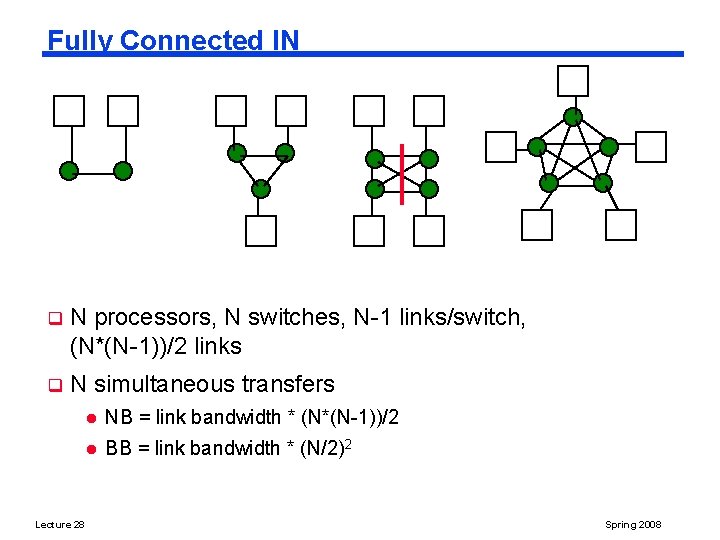

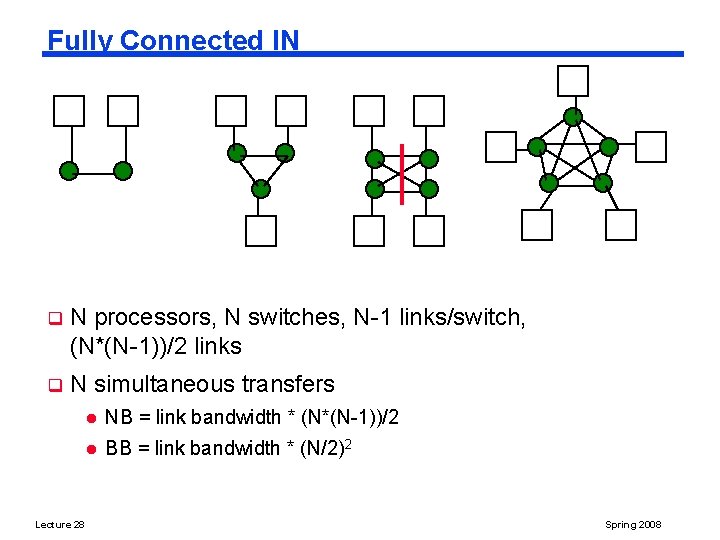

Fully Connected IN

- N processors, N switches, N-1 links/switch, (N*(N-1))/2 links
- N simultaneous transfers
  - NB = link bandwidth * (N*(N-1))/2
  - BB = link bandwidth * (N/2)^2

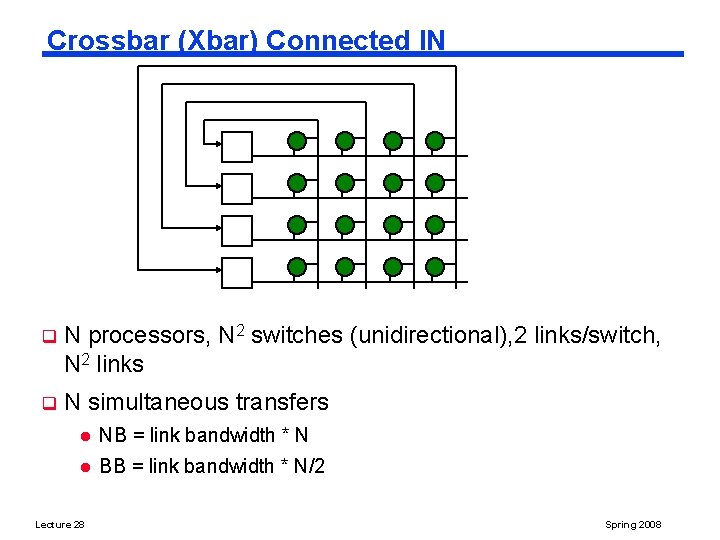

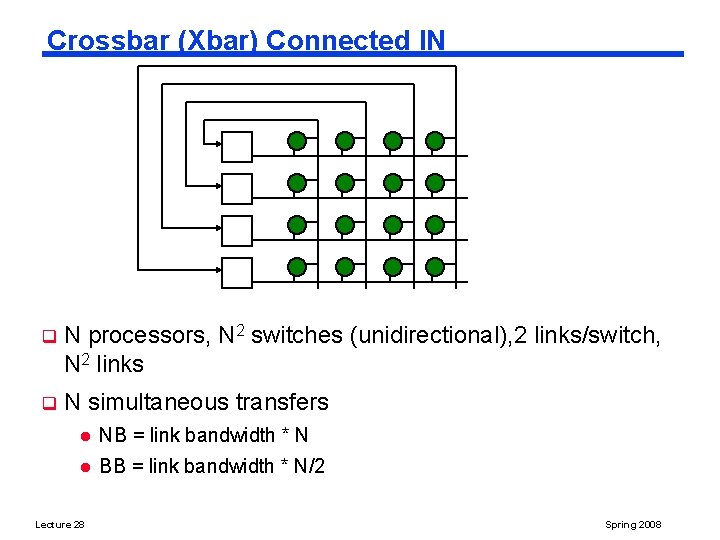

Crossbar (Xbar) Connected IN

- N processors, N^2 switches (unidirectional), 2 links/switch, N^2 links
- N simultaneous transfers
  - NB = link bandwidth * N
  - BB = link bandwidth * N/2

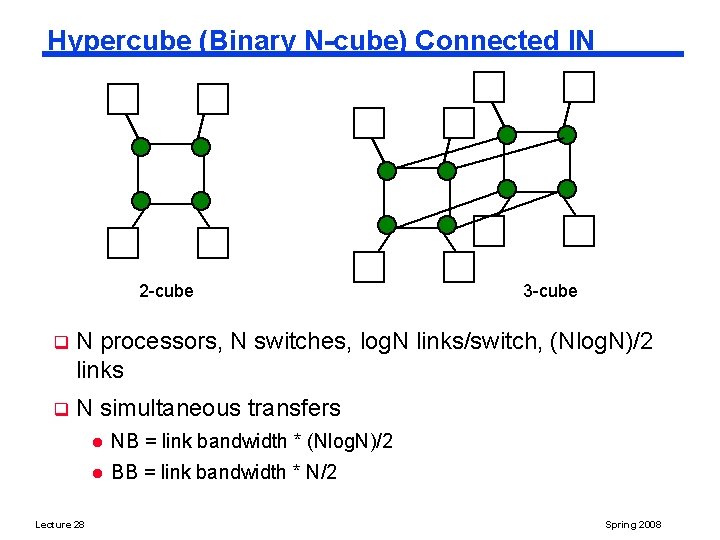

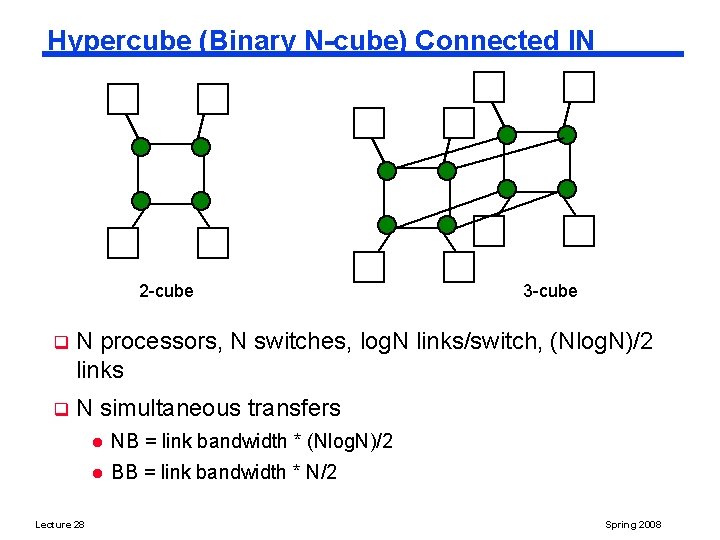

Hypercube (Binary N-cube) Connected IN

Figure: 2-cube and 3-cube

- N processors, N switches, log2(N) links/switch, (N*log2(N))/2 links
- N simultaneous transfers
  - NB = link bandwidth * (N*log2(N))/2
  - BB = link bandwidth * N/2
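The hypercube counts follow from numbering the nodes in binary: two nodes are linked exactly when their numbers differ in one bit. A minimal sketch, with hypothetical function names, that enumerates the links and checks the counts for a 3-cube and a 6-cube:

```python
def hypercube_neighbors(node, dim):
    """Neighbors of a node in a dim-cube: flip each of the dim address bits."""
    return [node ^ (1 << bit) for bit in range(dim)]


def hypercube_links(dim):
    """Every undirected link once, as (low, high) node pairs."""
    n = 1 << dim
    return [(a, b) for a in range(n) for b in hypercube_neighbors(a, dim) if a < b]


def hops(src, dst):
    """Minimum routing hops: the number of address bits that differ."""
    return bin(src ^ dst).count("1")


print(hypercube_neighbors(0, 3))   # [1, 2, 4]
print(len(hypercube_links(3)))     # 12 = (8 * 3) / 2
print(len(hypercube_links(6)))     # 192 = (64 * 6) / 2
print(hops(0, 7))                  # 3
```

Splitting the nodes on their top address bit cuts one link per node pair, which is where the N/2 bisection figure comes from.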

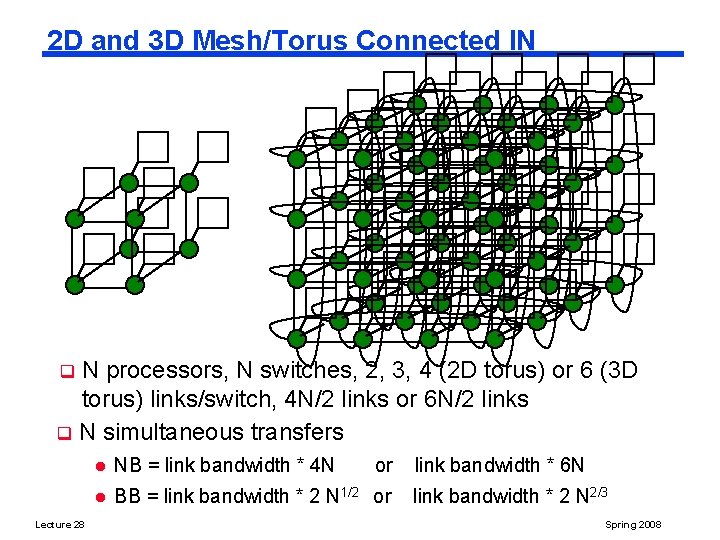

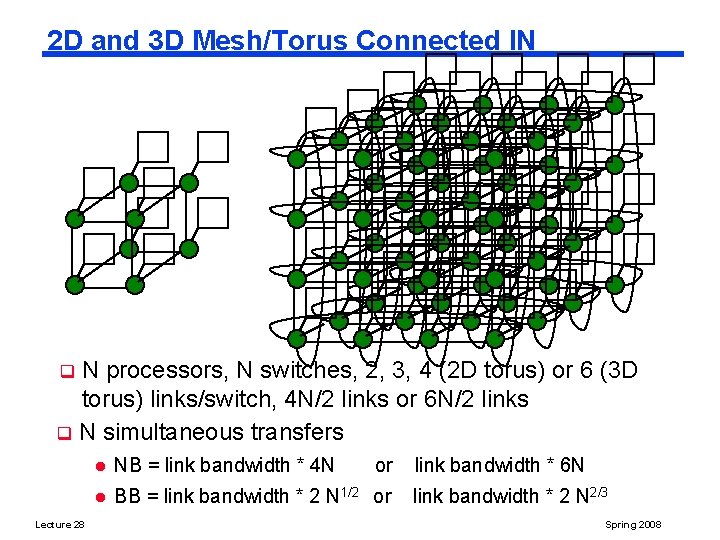

2D and 3D Mesh/Torus Connected IN

- N processors, N switches, 2, 3, 4 (2D torus) or 6 (3D torus) links/switch, 4N/2 links or 6N/2 links
- N simultaneous transfers
  - NB = link bandwidth * 4N or link bandwidth * 6N
  - BB = link bandwidth * 2 N^(1/2) or link bandwidth * 2 N^(2/3)
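The link count and bisection figure for the 2D torus can be checked by building one. This sketch assumes a square torus with at least 3 nodes per side (so the wraparound links are distinct) and uses hypothetical function names; nodes are numbered row by row.

```python
def torus_links(side):
    """Undirected links of a side x side 2D torus, each listed once."""
    links = set()
    for r in range(side):
        for c in range(side):
            node = r * side + c
            right = r * side + (c + 1) % side       # wraps around at the row end
            down = ((r + 1) % side) * side + c      # wraps around at the column end
            links.add(frozenset((node, right)))
            links.add(frozenset((node, down)))
    return links


def crossing_links(links, side):
    """Links cut when the torus is split into left and right halves."""
    left = {r * side + c for r in range(side) for c in range(side // 2)}
    return sum(1 for link in links if len(link & left) == 1)


links = torus_links(8)                 # N = 64 processors
print(len(links))                      # 128 = 4N/2
print(crossing_links(links, 8))        # 16 = 2 * N^(1/2)
```

Each row contributes two crossing links, one in the middle and one wraparound link, which gives the 2 N^(1/2) bisection term.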

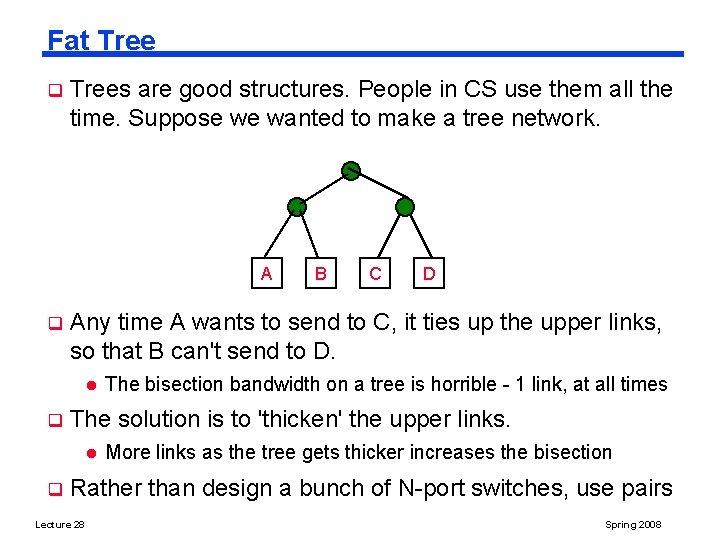

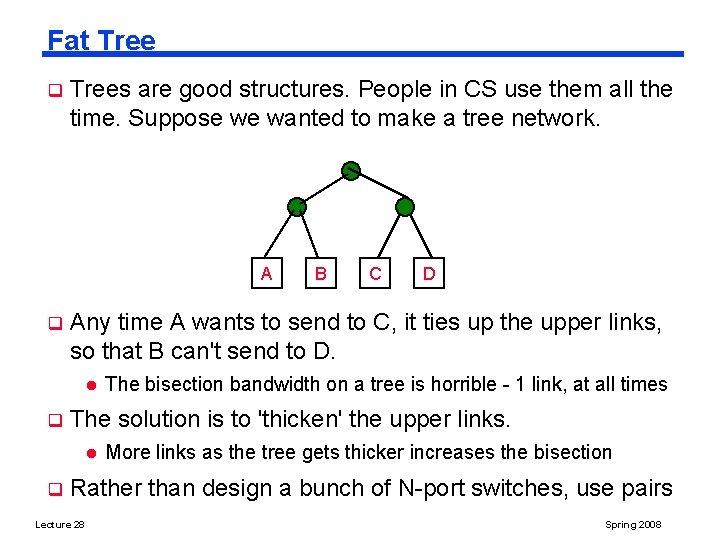

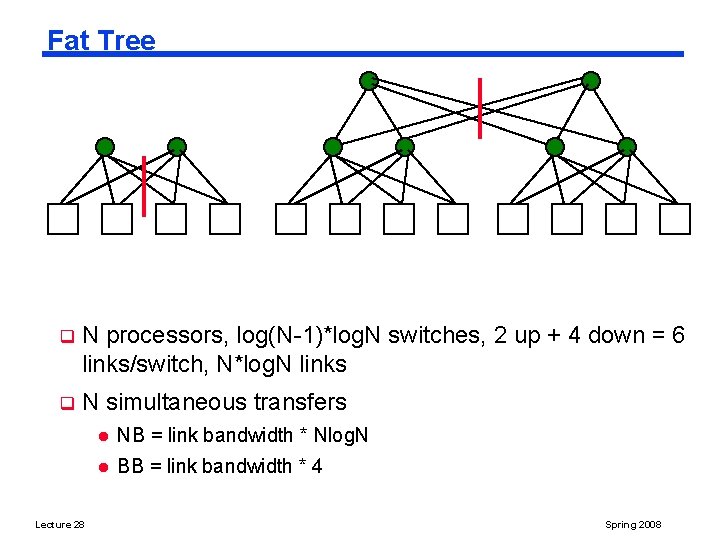

Fat Tree

Figure: a tree network with leaf nodes A, B, C, D

- Trees are good structures. People in CS use them all the time. Suppose we wanted to make a tree network.
- Any time A wants to send to C, it ties up the upper links, so that B can't send to D.
  - The bisection bandwidth on a tree is horrible: 1 link, at all times
- The solution is to 'thicken' the upper links.
  - More links as the tree gets thicker increases the bisection bandwidth
- Rather than design a bunch of N-port switches, use pairs

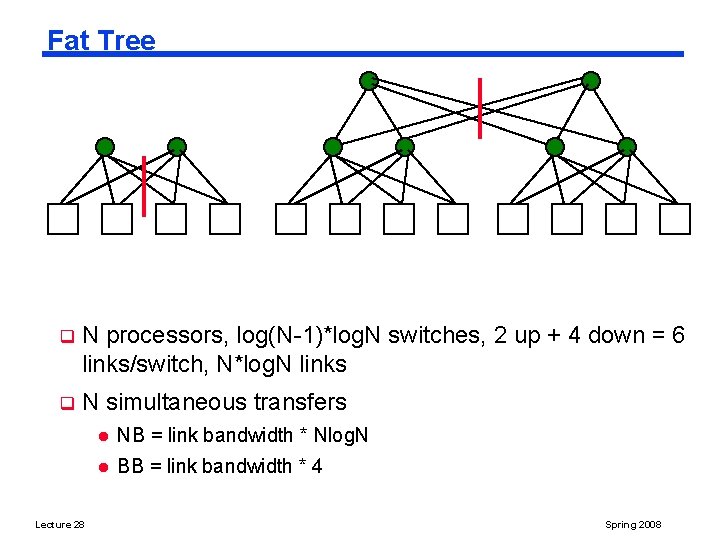

Fat Tree

- N processors, log(N-1)*log(N) switches, 2 up + 4 down = 6 links/switch, N*log(N) links
- N simultaneous transfers
  - NB = link bandwidth * N*log(N)
  - BB = link bandwidth * 4

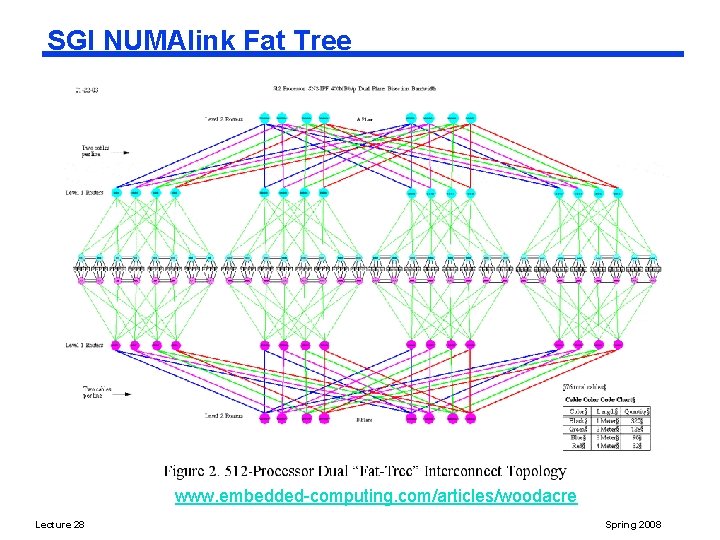

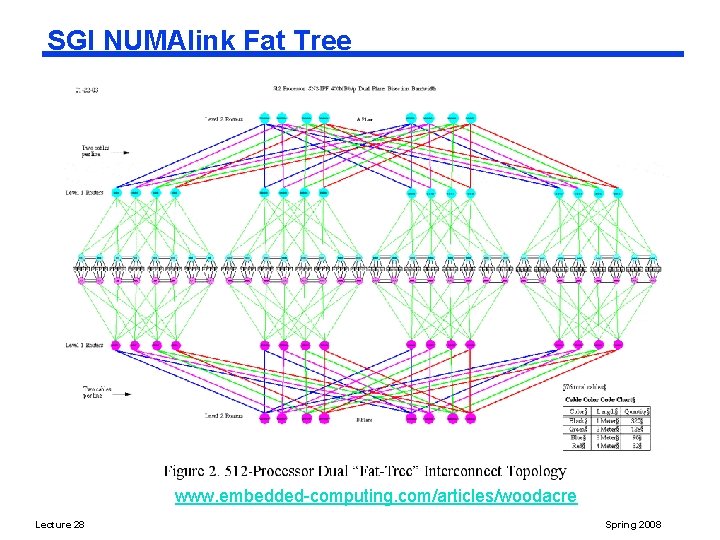

SGI NUMAlink Fat Tree

Source: www.embedded-computing.com/articles/woodacre

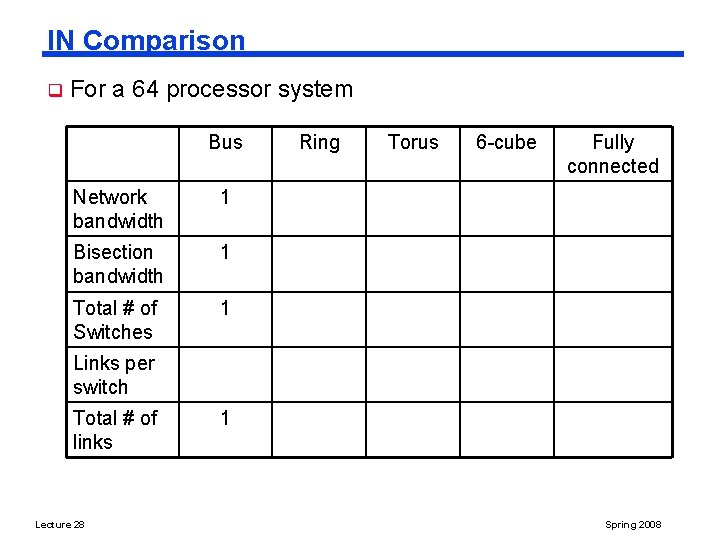

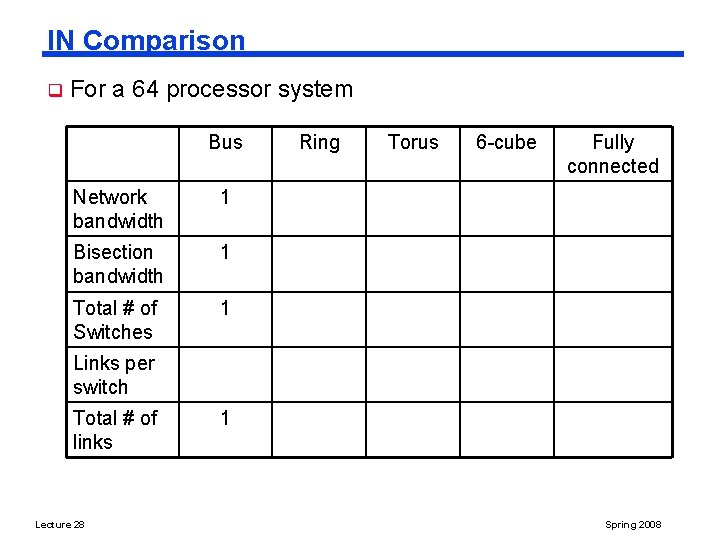

IN Comparison

- For a 64 processor system

| | Bus | Ring | Torus | 6-cube | Fully connected |
|---|---|---|---|---|---|
| Network bandwidth | 1 | | | | |
| Bisection bandwidth | 1 | | | | |
| Total # of switches | 1 | | | | |
| Links per switch | | | | | |
| Total # of links | 1 | | | | |
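One way to work through the comparison is to plug N = 64 into the NB and BB expressions given on the individual topology slides. The sketch below does only that, in units of one link's bandwidth; `in_metrics` is a hypothetical helper, the torus row assumes the 2D torus expressions as written on its slide (4N and 2 N^(1/2)), and the results are computed here, not taken from the lecture's table.

```python
import math


def in_metrics(n):
    """(network bandwidth, bisection bandwidth) per topology, in link-bandwidth units."""
    dim = int(math.log2(n))            # hypercube dimension; n assumed a power of 2
    return {
        "bus": (1, 1),
        "ring": (n, 2),
        "2D torus": (4 * n, 2 * math.isqrt(n)),
        "hypercube": (n * dim // 2, n // 2),
        "fully connected": (n * (n - 1) // 2, (n // 2) ** 2),
    }


for name, (nb, bb) in in_metrics(64).items():
    print(f"{name:16s} NB = {nb:5d}  BB = {bb:5d}")
```

For 64 processors this gives NB/BB of 64/2 for the ring, 256/16 for the 2D torus, 192/32 for the 6-cube, and 2016/1024 for the fully connected network, against 1/1 for the bus.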

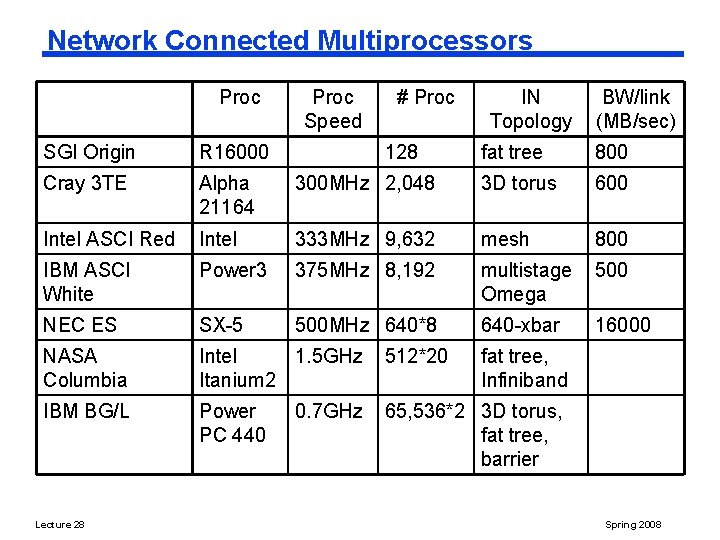

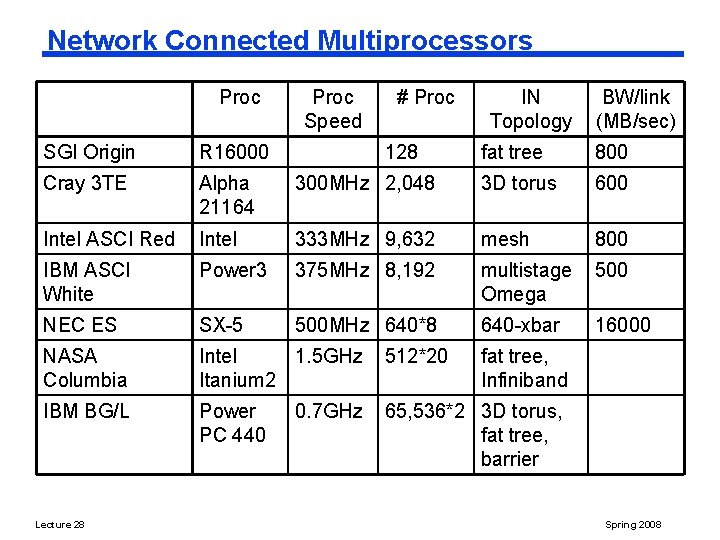

Network Connected Multiprocessors

| Machine | Proc | Proc Speed | # Proc | IN Topology | BW/link (MB/sec) |
|---|---|---|---|---|---|
| SGI Origin | R16000 | | 128 | fat tree | 800 |
| Cray T3E | Alpha 21164 | 300 MHz | 2,048 | 3D torus | 600 |
| Intel ASCI Red | Intel | 333 MHz | 9,632 | mesh | 800 |
| IBM ASCI White | Power3 | 375 MHz | 8,192 | multistage Omega | 500 |
| NEC ES | SX-5 | 500 MHz | 640*8 | 640-xbar | 16000 |
| NASA Columbia | Intel Itanium2 | 1.5 GHz | 512*20 | fat tree, Infiniband | |
| IBM BG/L | Power PC 440 | 0.7 GHz | 65,536*2 | 3D torus, fat tree, barrier | |

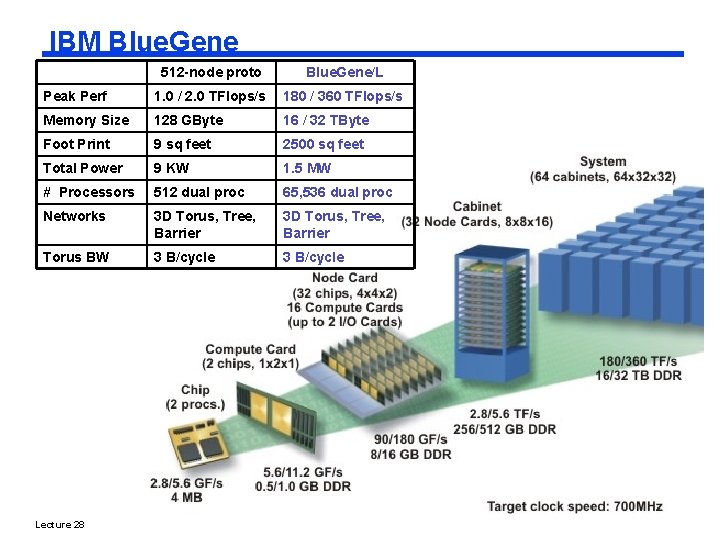

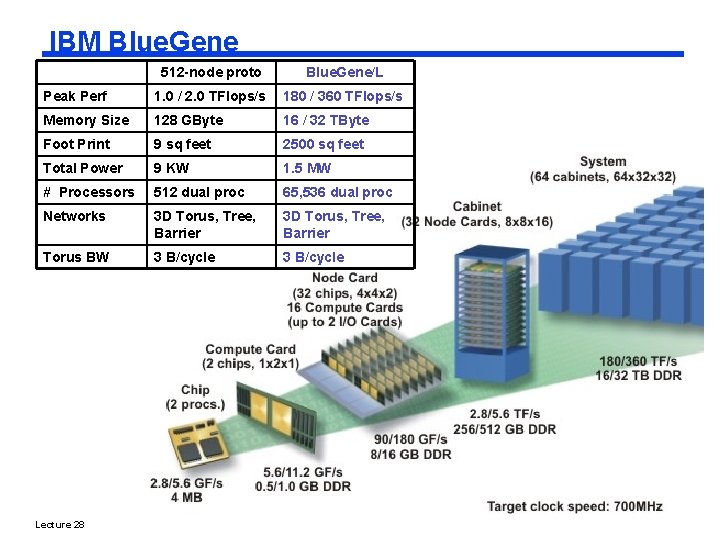

IBM BlueGene

| | 512-node proto | BlueGene/L |
|---|---|---|
| Peak Perf | 1.0 / 2.0 TFlops/s | 180 / 360 TFlops/s |
| Memory Size | 128 GByte | 16 / 32 TByte |
| Foot Print | 9 sq feet | 2500 sq feet |
| Total Power | 9 KW | 1.5 MW |
| # Processors | 512 dual proc | 65,536 dual proc |

- Networks: 3D Torus, Tree, Barrier
- Torus BW: 3 B/cycle

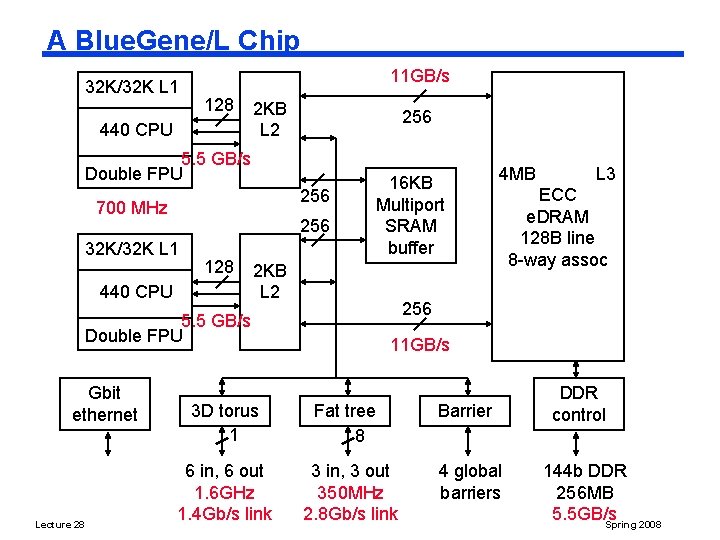

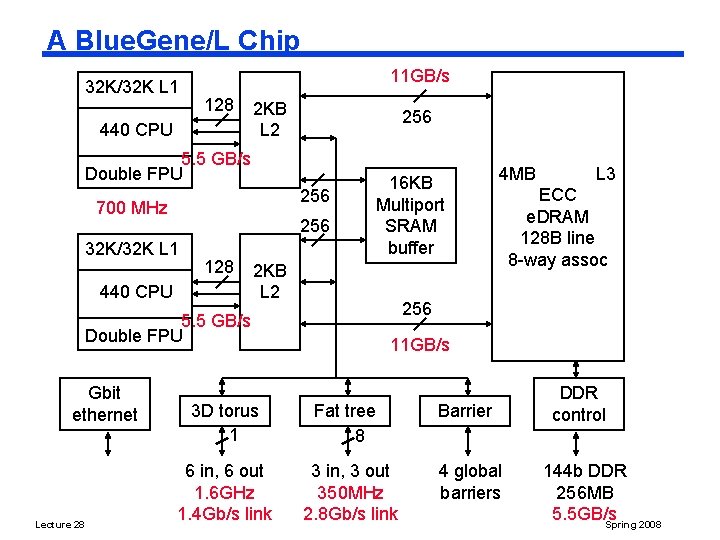

A BlueGene/L Chip

Block diagram labels:

- Two 440 CPUs at 700 MHz, each with a double FPU, 32K/32K L1 caches, and a 2KB L2
- 4MB L3: ECC eDRAM, 128B line, 8-way assoc
- 16KB multiport SRAM buffer
- 3D torus: 6 in, 6 out, 1.6 GHz, 1.4 Gb/s link
- Fat tree: 3 in, 3 out, 350 MHz, 2.8 Gb/s link
- Barrier: 4 global barriers
- Gbit ethernet
- DDR control: 144b DDR, 256MB, 5.5 GB/s
- Datapath labels: 128 and 256 (widths), 5.5 GB/s and 11 GB/s

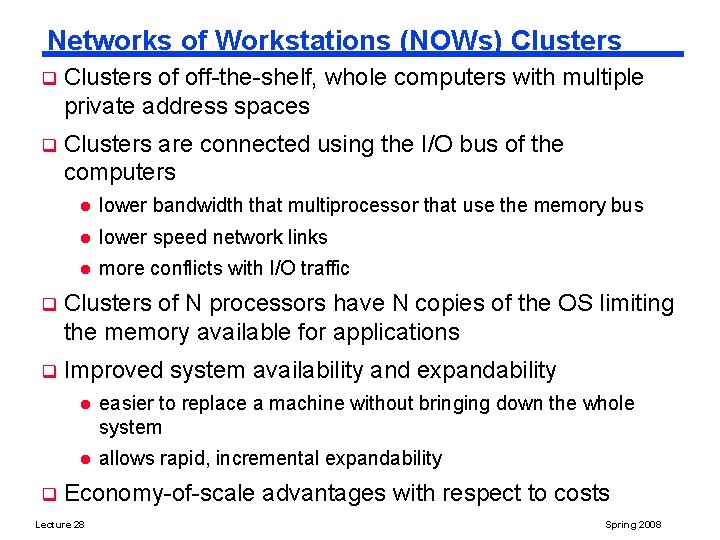

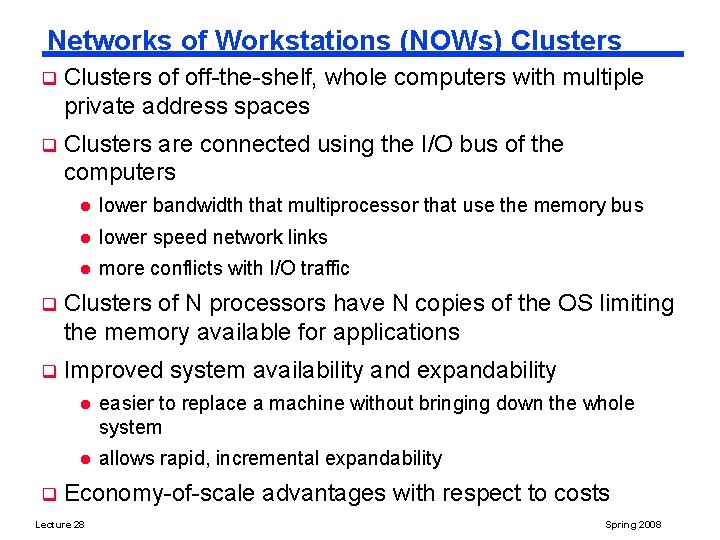

Networks of Workstations (NOWs) Clusters

- Clusters of off-the-shelf, whole computers with multiple private address spaces
- Clusters are connected using the I/O bus of the computers
  - lower bandwidth than multiprocessors that use the memory bus
  - lower speed network links
  - more conflicts with I/O traffic
- Clusters of N processors have N copies of the OS, limiting the memory available for applications
- Improved system availability and expandability
  - easier to replace a machine without bringing down the whole system
  - allows rapid, incremental expandability
- Economy-of-scale advantages with respect to costs

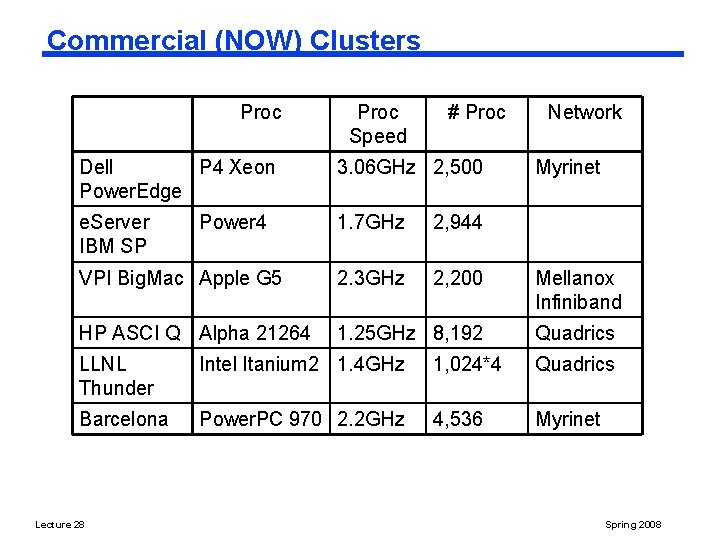

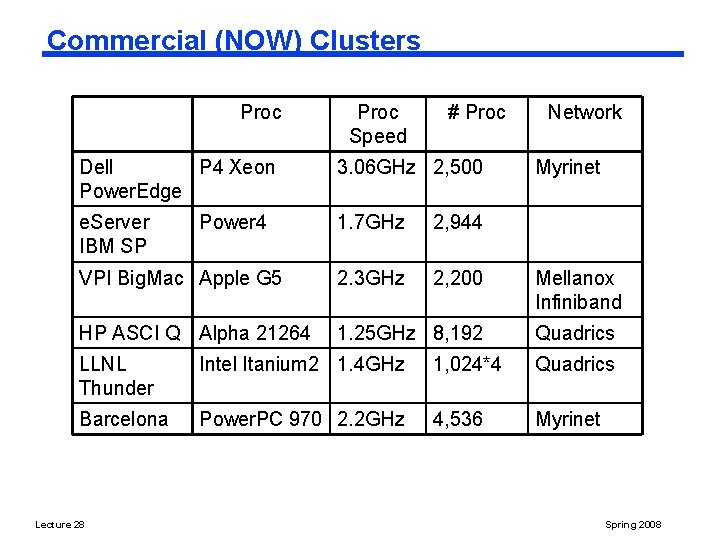

Commercial (NOW) Clusters

| Cluster | Proc | Proc Speed | # Proc | Network |
|---|---|---|---|---|
| Dell PowerEdge | P4 Xeon | 3.06 GHz | 2,500 | Myrinet |
| eServer IBM SP | Power4 | 1.7 GHz | 2,944 | |
| VPI BigMac | Apple G5 | 2.3 GHz | 2,200 | Mellanox Infiniband |
| HP ASCI Q | Alpha 21264 | 1.25 GHz | 8,192 | Quadrics |
| LLNL Thunder | Intel Itanium2 | 1.4 GHz | 1,024*4 | Quadrics |
| Barcelona | PowerPC 970 | 2.2 GHz | 4,536 | Myrinet |

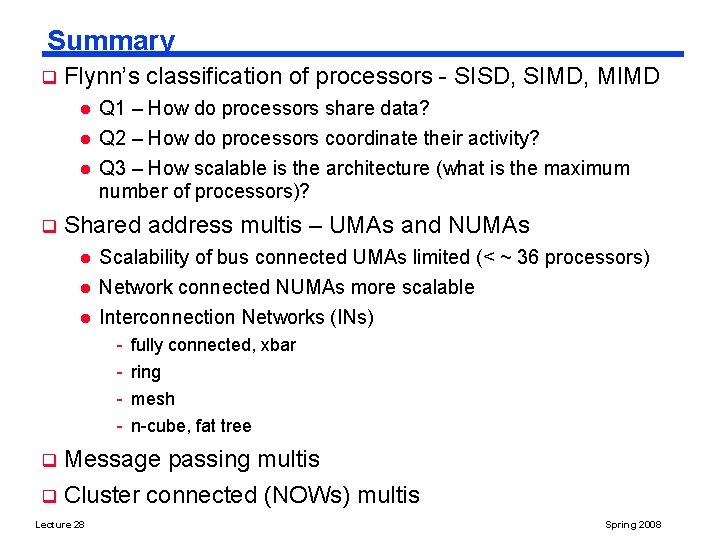

Summary

- Flynn’s classification of processors: SISD, SIMD, MIMD
  - Q1: How do processors share data?
  - Q2: How do processors coordinate their activity?
  - Q3: How scalable is the architecture (what is the maximum number of processors)?
- Shared address multis: UMAs and NUMAs
  - Scalability of bus connected UMAs limited (< ~36 processors)
  - Network connected NUMAs more scalable
  - Interconnection Networks (INs)
    - fully connected, xbar, ring, mesh, n-cube, fat tree
- Message passing multis
- Cluster connected (NOWs) multis

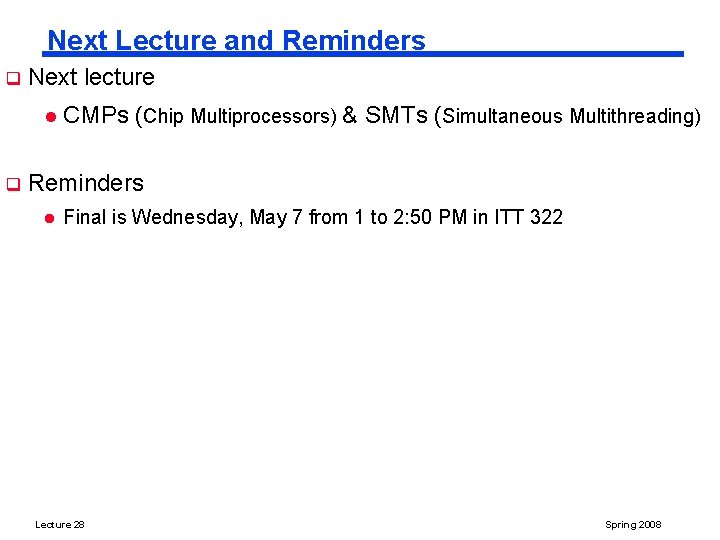

Next Lecture and Reminders

- Next lecture
  - CMPs (Chip Multiprocessors) & SMTs (Simultaneous Multithreading)
- Reminders
  - Final is Wednesday, May 7 from 1 to 2:50 PM in ITT 322