Computer Architecture: SIMD and GPUs (Part II)

Prof. Onur Mutlu, Carnegie Mellon University

A Note on This Lecture

- These slides are partly from 18-447 Spring 2013, Computer Architecture, Lecture 19: SIMD and GPUs
- Video of the part related to only SIMD and GPUs:
  - http://www.youtube.com/watch?v=dl5TZ4oao0&list=PL5PHm2jkkXmidJOd59REog9jDnPDTG6IJ&index=19

Readings for Today

- Lindholm et al., "NVIDIA Tesla: A Unified Graphics and Computing Architecture," IEEE Micro 2008.
- Fatahalian and Houston, "A Closer Look at GPUs," CACM 2008.
- See slides today for more readings (optional but recommended)

Today

- SIMD Processing
- GPU Fundamentals
- VLIW

Approaches to (Instruction-Level) Concurrency

- Pipelined execution
- Out-of-order execution
- Dataflow (at the ISA level)
- SIMD Processing
- VLIW
- Systolic Arrays
- Decoupled Access Execute

Review: SIMD Processing

- Single instruction operates on multiple data elements
  - In time or in space
- Multiple processing elements
- Time-space duality
  - Array processor: Instruction operates on multiple data elements at the same time
  - Vector processor: Instruction operates on multiple data elements in consecutive time steps

Review: SIMD Array Processing vs. VLIW

- VLIW (figure)
- Array processor (figure)

Review: Vector Processors

- A vector is a one-dimensional array of numbers
- Many scientific/commercial programs use vectors: for (i = 0; i <= 49; i++) C[i] = (A[i] + B[i]) / 2
- A vector processor is one whose instructions operate on vectors rather than scalar (single data) values
- Basic requirements
  - Need to load/store vectors -> vector registers (contain vectors)
  - Need to operate on vectors of different lengths -> vector length register (VLEN)
  - Elements of a vector might be stored apart from each other in memory -> vector stride register (VSTR)
    - Stride: distance between two elements of a vector

Review: Vector Processor Advantages

+ No dependencies within a vector
  - Pipelining, parallelization work well
  - Can have very deep pipelines, no dependencies!
+ Each instruction generates a lot of work
  - Reduces instruction fetch bandwidth
+ Highly regular memory access pattern
  - Interleaving multiple banks for higher memory bandwidth
  - Prefetching
+ No need to explicitly code loops
  - Fewer branches in the instruction sequence

Review: Vector Processor Disadvantages

-- Works (only) if parallelism is regular (data/SIMD parallelism)

++ Vector operations

-- Very inefficient if parallelism is irregular

-- How about searching for a key in a linked list?

Fisher, "Very Long Instruction Word architectures and the ELI-512," ISCA 1983.

Review: Vector Processor Limitations

-- Memory (bandwidth) can easily become a bottleneck, especially if

1. compute/memory operation balance is not maintained
2. data is not mapped appropriately to memory banks

Vector Registers

- Each vector data register holds N M-bit values
- Vector control registers: VLEN, VSTR, VMASK
- Maximum VLEN can be N
  - Maximum number of elements stored in a vector register
- Vector Mask Register (VMASK)
  - Indicates which elements of vector to operate on
  - Set by vector test instructions
    - e.g., VMASK[i] = (Vk[i] == 0)

(Figure: vector registers V0 and V1, each holding elements 0 through N-1, each element M bits wide.)

Vector Functional Units

- Use deep pipeline (=> fast clock) to execute element operations
- Simplifies control of deep pipeline because elements in vector are independent

(Figure: six-stage multiply pipeline computing V3 <- V1 * V2.)

Slide credit: Krste Asanovic

Vector Machine Organization (CRAY-1)

- CRAY-1
- Russell, "The CRAY-1 computer system," CACM 1978.
- Scalar and vector modes
- 8 64-element vector registers
- 64 bits per element
- 16 memory banks
- 8 64-bit scalar registers
- 8 24-bit address registers

Memory Banking

- Example: 16 banks; can start one bank access per cycle
- Bank latency: 11 cycles
- Can sustain 16 parallel accesses if they go to different banks

(Figure: Bank 0 through Bank 15, each with its own MDR and MAR, connected to the CPU over a data bus and an address bus.)

Slide credit: Derek Chiou

Vector Memory System

(Figure: an address generator combines Base and Stride to produce addresses for memory banks 0 through F, which connect to the vector registers.)

Slide credit: Krste Asanovic

![Scalar Code Example n For I 0 to 49 q n Ci Scalar Code Example n For I = 0 to 49 q n C[i] =](https://slidetodoc.com/presentation_image_h/ec0fa78e3f246b5994b6922a6a6beddc/image-18.jpg)

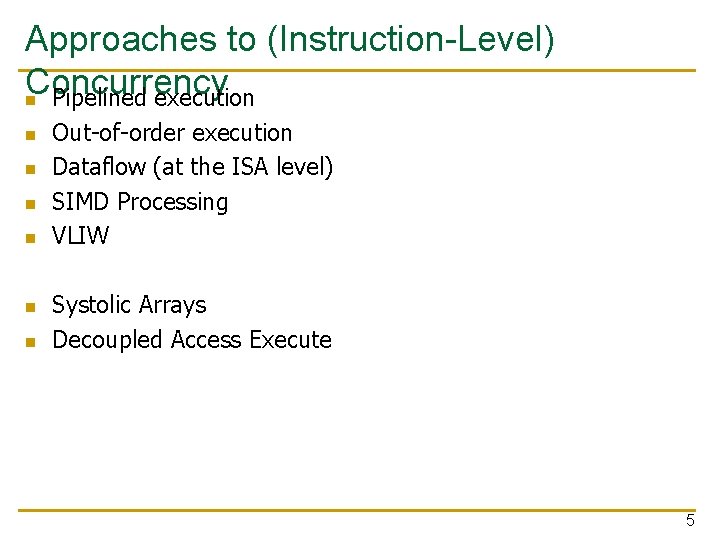

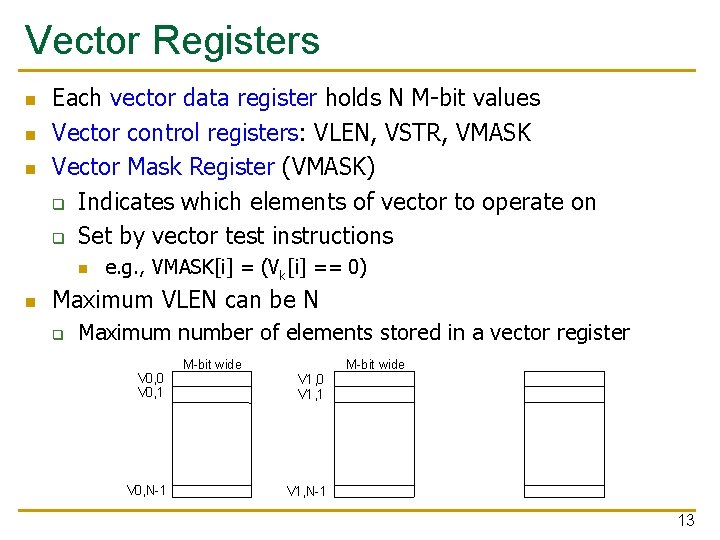

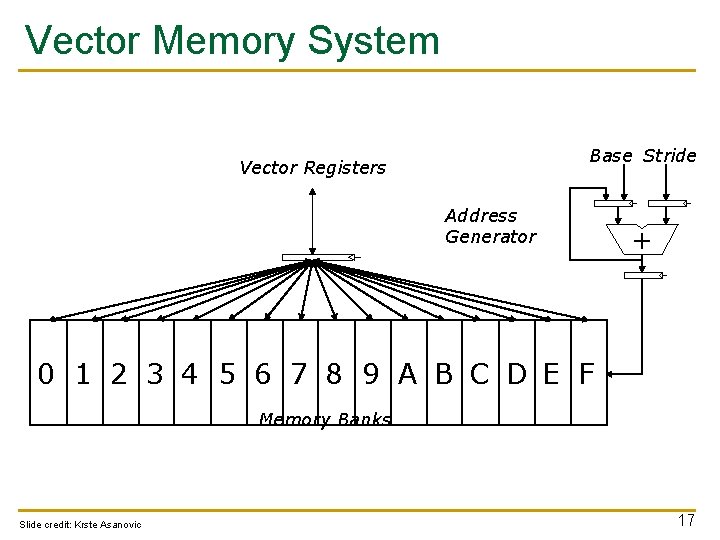

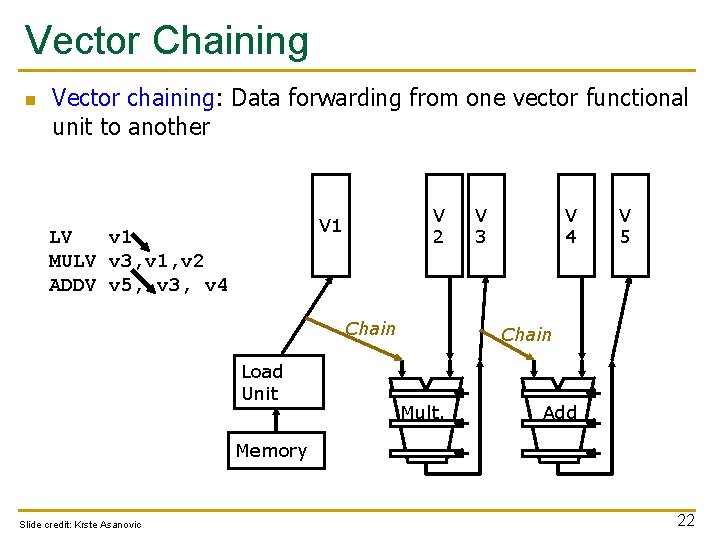

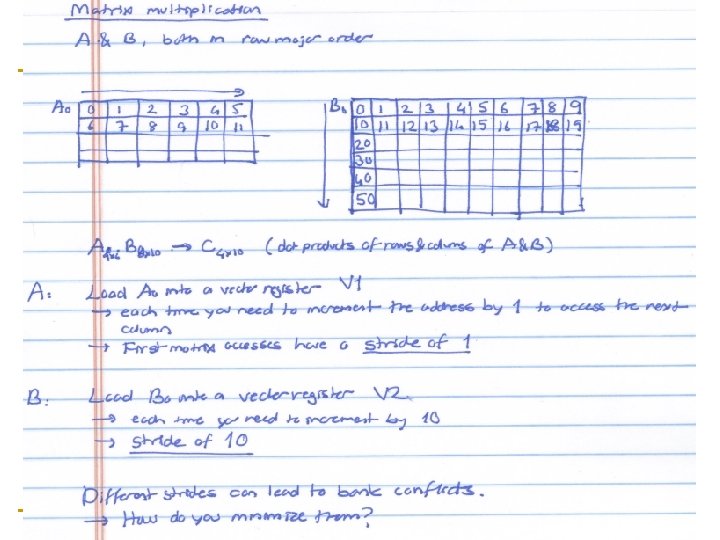

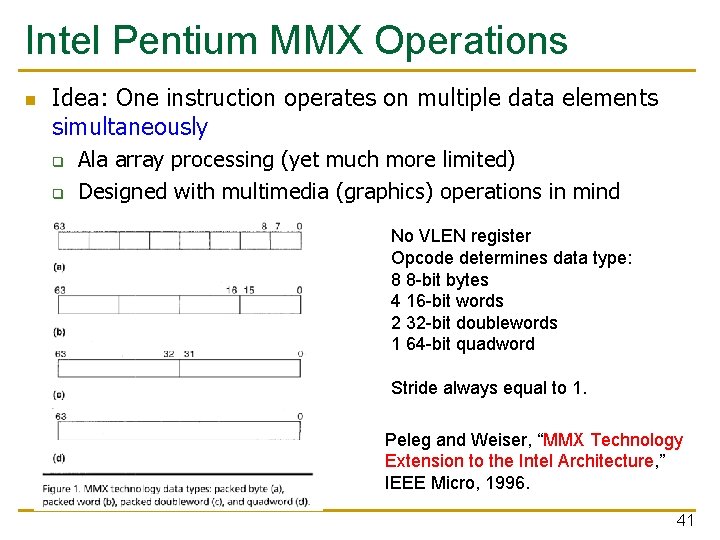

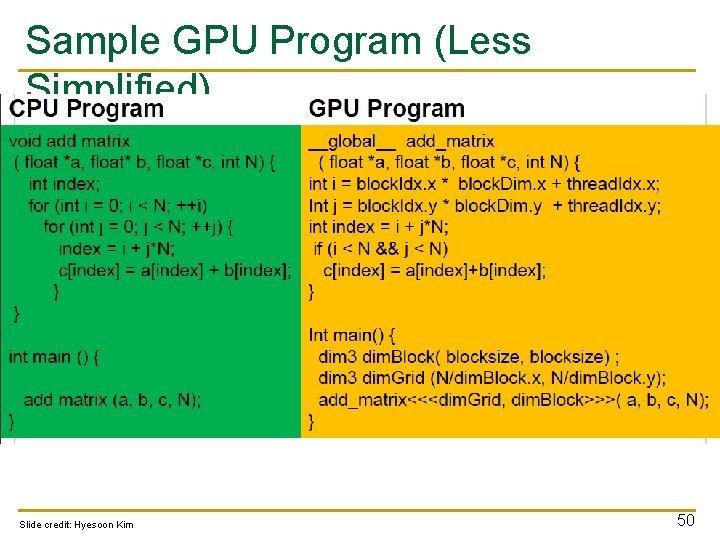

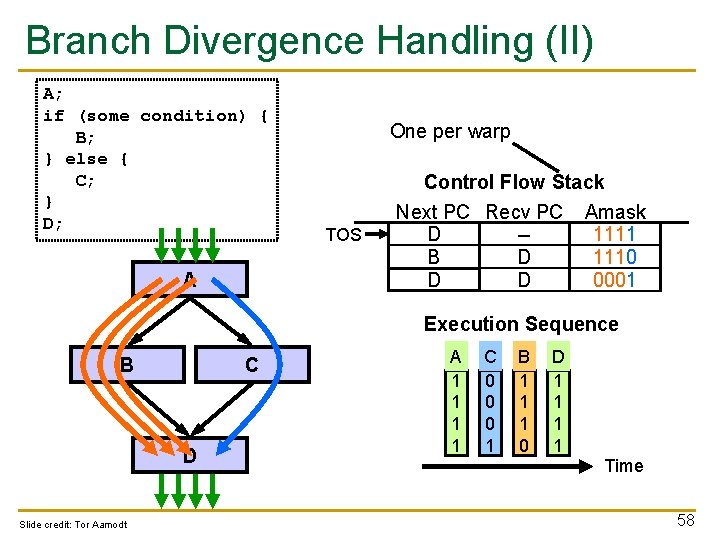

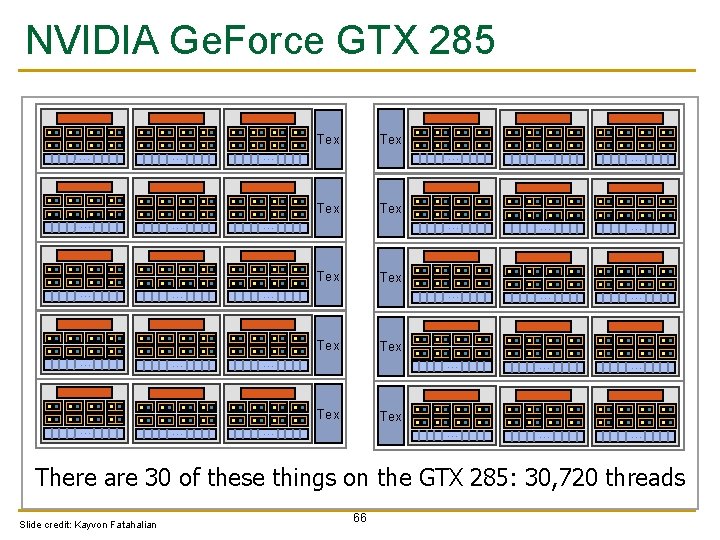

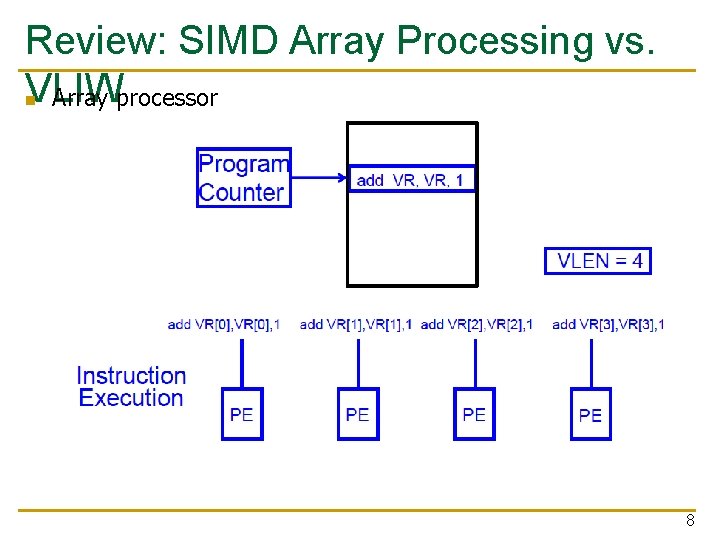

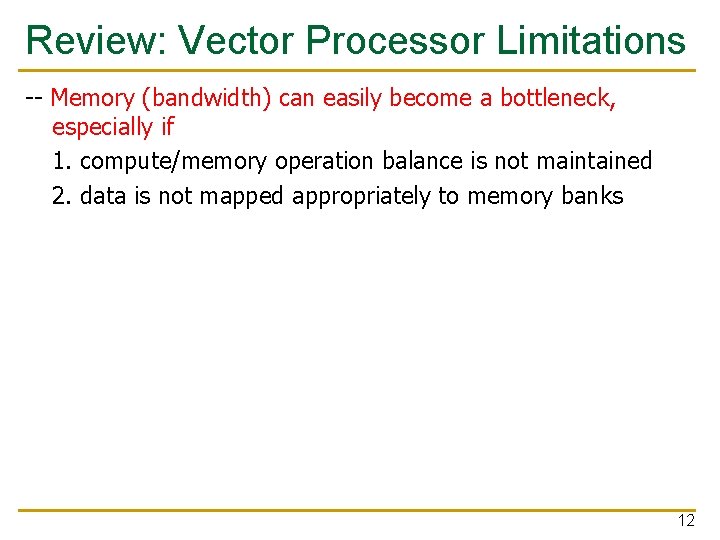

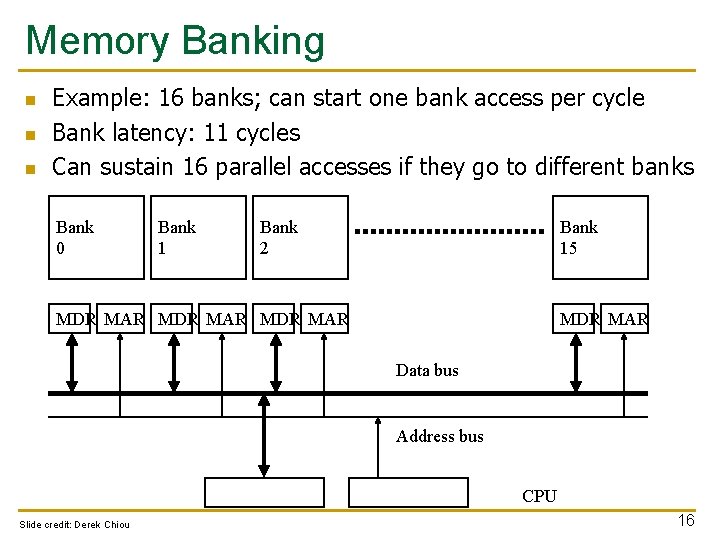

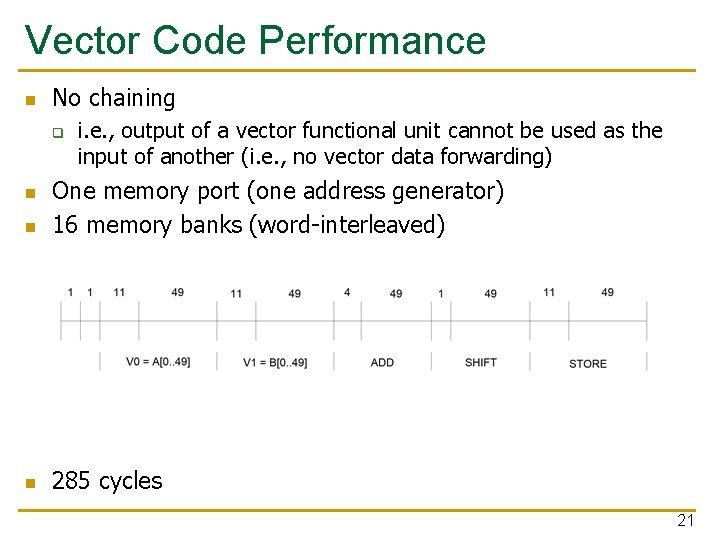

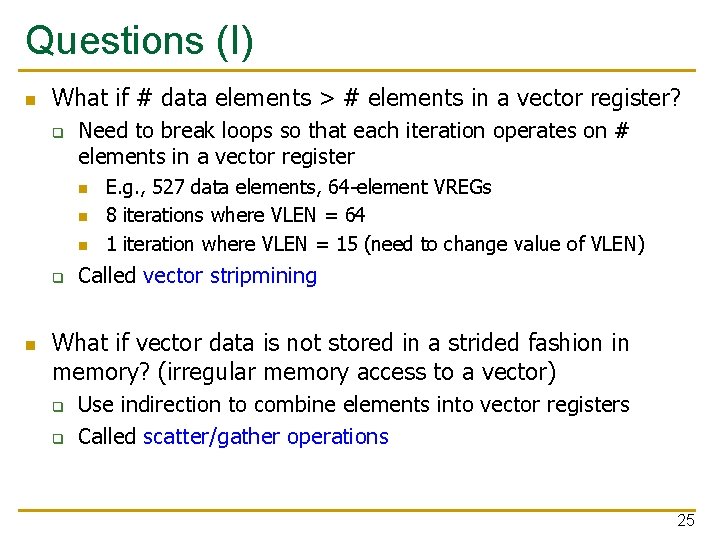

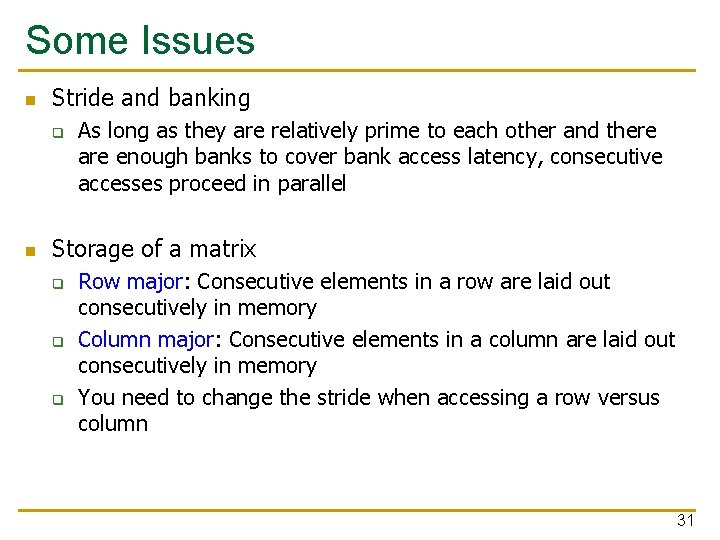

Scalar Code Example

For I = 0 to 49: C[i] = (A[i] + B[i]) / 2

Scalar code (latency of each instruction, in cycles, on the right):

        MOVI R0 = 50           1
        MOVA R1 = A            1
        MOVA R2 = B            1
        MOVA R3 = C            1
    X:  LD R4 = MEM[R1++]      11   ; autoincrement addressing
        LD R5 = MEM[R2++]      11
        ADD R6 = R4 + R5       4
        SHFR R7 = R6 >> 1      1
        ST MEM[R3++] = R7      11
        DECBNZ --R0, X         2    ; decrement and branch if NZ

304 dynamic instructions

Scalar Code Execution Time

- Scalar execution time on an in-order processor with 1 bank
  - First two loads in the loop cannot be pipelined: 2*11 cycles
  - 4 + 50*40 = 2004 cycles
- Scalar execution time on an in-order processor with 16 banks (word-interleaved)
  - First two loads in the loop can be pipelined
  - 4 + 50*30 = 1504 cycles
- Why 16 banks?
  - 11 cycle memory access latency
  - Having 16 (>11) banks ensures there are enough banks to overlap enough memory operations to cover memory latency
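
The two totals can be reproduced from the per-instruction latencies of the scalar listing. The sketch below is one reading of the arithmetic, not the lecture's own derivation: it assumes that with interleaved banks the second load issues one cycle after the first, so the pair of loads costs 11 + 1 cycles instead of 2 * 11. The function and constant names are made up for illustration.

```c
#include <assert.h>

/* Latencies in cycles, as listed next to the scalar code. */
enum {
    LAT_MOV = 1, LAT_LD = 11, LAT_ADD = 4,
    LAT_SHFR = 1, LAT_ST = 11, LAT_DECBNZ = 2
};

/* Cycles for the scalar loop on an in-order processor.
 * loads_overlap == 0: one bank, the two loads serialize (2 * 11 cycles).
 * loads_overlap != 0: interleaved banks; assumed here that the second load
 *                     issues one cycle after the first (11 + 1 cycles). */
int scalar_cycles(int iterations, int loads_overlap)
{
    assert(iterations >= 0);
    int setup = 4 * LAT_MOV;  /* MOVI plus three MOVA instructions */
    int loads = loads_overlap ? LAT_LD + 1 : 2 * LAT_LD;
    int body  = loads + LAT_ADD + LAT_SHFR + LAT_ST + LAT_DECBNZ;
    return setup + iterations * body;
}
```

With 50 iterations the loop body comes to 40 cycles for one bank and 30 cycles for 16 banks, which matches the 2004 and 1504 cycle totals.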

Vectorizable Loops

- A loop is vectorizable if each iteration is independent of any other
- For I = 0 to 49
  - C[i] = (A[i] + B[i]) / 2

Vectorized loop (latency of each instruction, in cycles, on the right; VLN is the vector length, here 50):

    MOVI VLEN = 50          1
    MOVI VSTR = 1           1
    VLD V0 = A              11 + VLN - 1
    VLD V1 = B              11 + VLN - 1
    VADD V2 = V0 + V1       4 + VLN - 1
    VSHFR V3 = V2 >> 1      1 + VLN - 1
    VST C = V3              11 + VLN - 1

7 dynamic instructions

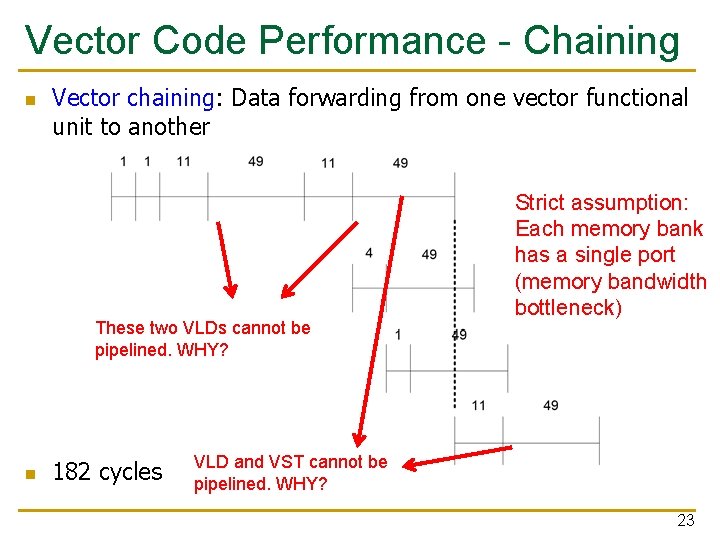

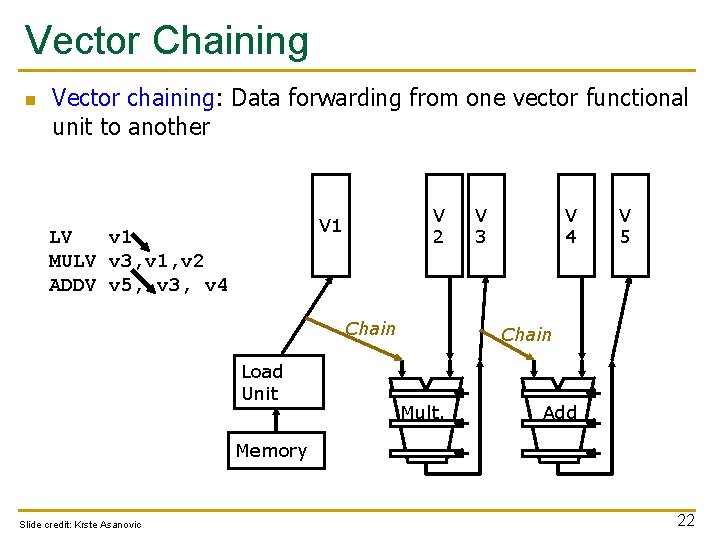

Vector Code Performance

- No chaining
  - i.e., output of a vector functional unit cannot be used as the input of another (i.e., no vector data forwarding)
- One memory port (one address generator)
- 16 memory banks (word-interleaved)
- 285 cycles
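
The 285-cycle figure is consistent with simply adding up the latencies of the seven vector instructions, since without chaining each one waits for the previous one to finish. The sketch below makes that sum explicit. It assumes VSHFR costs 1 + VLN - 1 cycles (by analogy with the 1-cycle scalar SHFR); the helper names are hypothetical.

```c
#include <assert.h>

/* One vector instruction without chaining:
 * startup latency for the first element, then one element per cycle. */
static int vec_op(int startup, int vlen)
{
    return startup + vlen - 1;
}

/* Total cycles for the vectorized loop with no chaining and one memory port. */
int vector_cycles_no_chaining(int vlen)
{
    assert(vlen >= 1);
    return 1 + 1               /* MOVI VLEN, MOVI VSTR */
         + vec_op(11, vlen)    /* VLD V0 = A           */
         + vec_op(11, vlen)    /* VLD V1 = B           */
         + vec_op(4, vlen)     /* VADD                 */
         + vec_op(1, vlen)     /* VSHFR (assumed)      */
         + vec_op(11, vlen);   /* VST                  */
}
```

For VLEN = 50 this is 2 + 60 + 60 + 53 + 50 + 60 = 285 cycles.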

Vector Chaining

Vector chaining: Data forwarding from one vector functional unit to another

    LV   v1
    MULV v3, v1, v2
    ADDV v5, v3, v4

(Figure: the load unit fills V1 from memory; V1 is chained into the multiplier, and the multiplier result V3 is chained into the adder, which writes V5.)

Slide credit: Krste Asanovic

Vector Code Performance - Chaining

- Vector chaining: Data forwarding from one vector functional unit to another
- 182 cycles
- Strict assumption: Each memory bank has a single port (memory bandwidth bottleneck)
- These two VLDs cannot be pipelined. WHY?
- VLD and VST cannot be pipelined. WHY?

Vector Code Performance - Multiple Memory Ports

- Chaining and 2 load ports, 1 store port in each bank
- 79 cycles
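
The timing diagrams for these two configurations are not reproduced here, so the following is only one accounting that arrives at the stated 182 and 79 cycles. It assumes the latencies from the vectorized listing, that with a single memory port the three memory instructions serialize while VADD and VSHFR hide under the second load, and that with separate load and store ports each dependent instruction starts as soon as its first input element arrives. Function names are hypothetical.

```c
#include <assert.h>

/* Chaining, one memory port: VLD A, VLD B and VST use the port one after
 * another; VADD and VSHFR overlap with VLD B and add no cycles
 * (assumes vlen is large enough for that, as with VLEN = 50). */
int cycles_chaining_one_port(int vlen)
{
    assert(vlen >= 1);
    int mem_op = 11 + vlen - 1;
    return 1 + 1 + 3 * mem_op;
}

/* Chaining, 2 load ports and 1 store port per bank. */
int cycles_chaining_multi_port(int vlen)
{
    assert(vlen >= 1);
    return 1 + 1             /* MOVI VLEN, MOVI VSTR                 */
         + 1                 /* VLD B issues one cycle after VLD A   */
         + 11                /* first element of B arrives           */
         + 4                 /* first VADD result                    */
         + 1                 /* first VSHFR result                   */
         + 11 + vlen - 1;    /* VST drains all elements              */
}
```

For VLEN = 50 the first function gives 2 + 3 * 60 = 182 and the second gives 2 + 1 + 11 + 4 + 1 + 60 = 79.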

Questions (I)

- What if # data elements > # elements in a vector register?
  - Need to break loops so that each iteration operates on # elements in a vector register
    - E.g., 527 data elements, 64-element VREGs
    - 8 iterations where VLEN = 64
    - 1 iteration where VLEN = 15 (need to change value of VLEN)
  - Called vector stripmining
- What if vector data is not stored in a strided fashion in memory? (irregular memory access to a vector)
  - Use indirection to combine elements into vector registers
  - Called scatter/gather operations
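
Stripmining can be sketched in plain C as an outer loop over register-sized strips. This is an illustration only: `vector_avg` stands in for the vector instruction sequence, `MAX_VLEN` plays the role of the 64-element register, and the short strip is processed last (a real compiler may choose to run it first). All names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_VLEN 64  /* elements per vector register, as in the example */

/* Stand-in for one vector instruction sequence over vlen elements. */
static void vector_avg(int *c, const int *a, const int *b, size_t vlen)
{
    assert(vlen <= MAX_VLEN);
    for (size_t i = 0; i < vlen; i++)
        c[i] = (a[i] + b[i]) / 2;
}

/* Strip-mined loop: full strips of MAX_VLEN, then one shorter strip.
 * Returns the number of strips executed. */
size_t stripmine_avg(int *c, const int *a, const int *b, size_t n)
{
    size_t strips = 0;
    for (size_t base = 0; base < n; base += MAX_VLEN) {
        size_t remaining = n - base;
        size_t vlen = remaining < MAX_VLEN ? remaining : MAX_VLEN; /* set VLEN */
        vector_avg(c + base, a + base, b + base, vlen);
        strips++;
    }
    return strips;
}
```

For 527 elements this runs 8 strips with VLEN = 64 and 1 strip with VLEN = 15, 9 strips in total.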

![GatherScatter Operations Want to vectorize loops with indirect accesses for i0 iN i Ai Gather/Scatter Operations Want to vectorize loops with indirect accesses: for (i=0; i<N; i++) A[i]](https://slidetodoc.com/presentation_image_h/ec0fa78e3f246b5994b6922a6a6beddc/image-26.jpg)

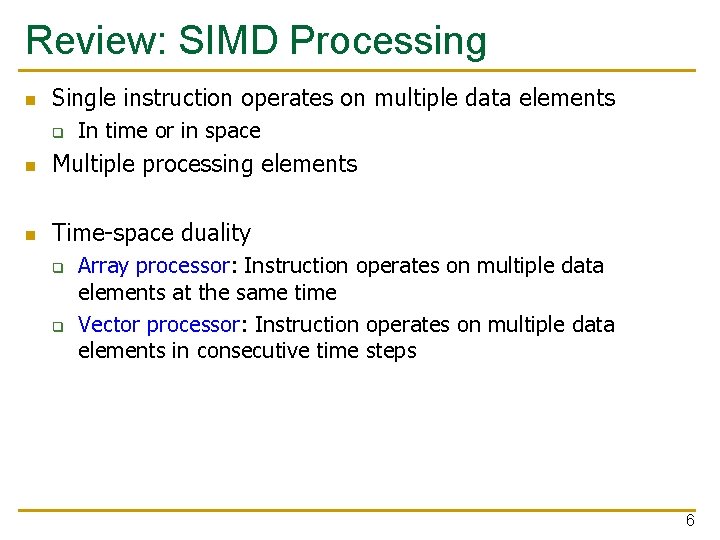

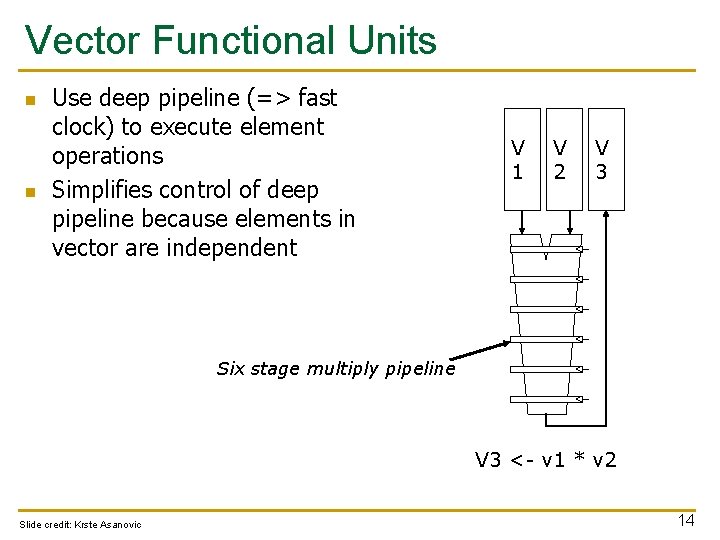

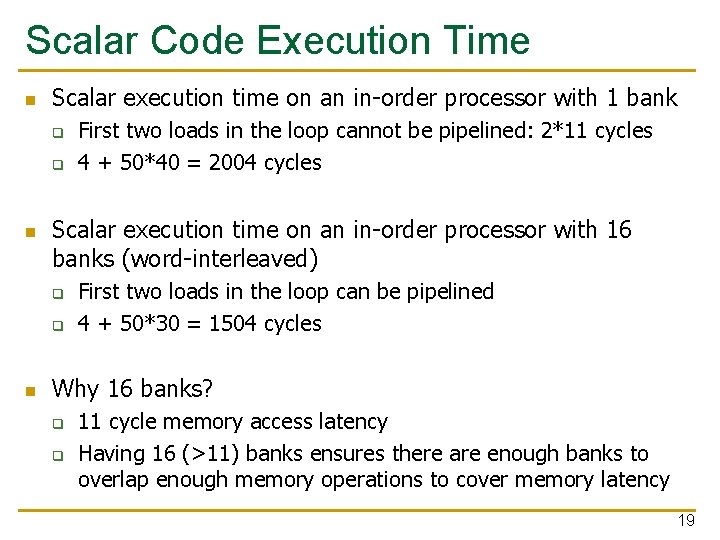

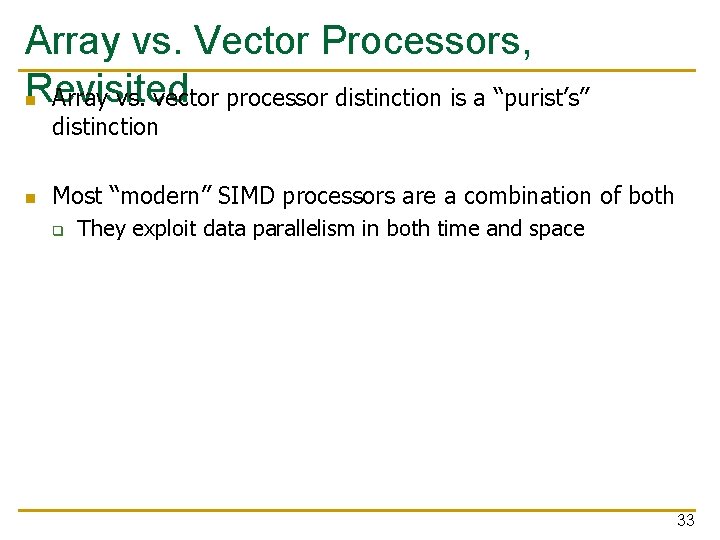

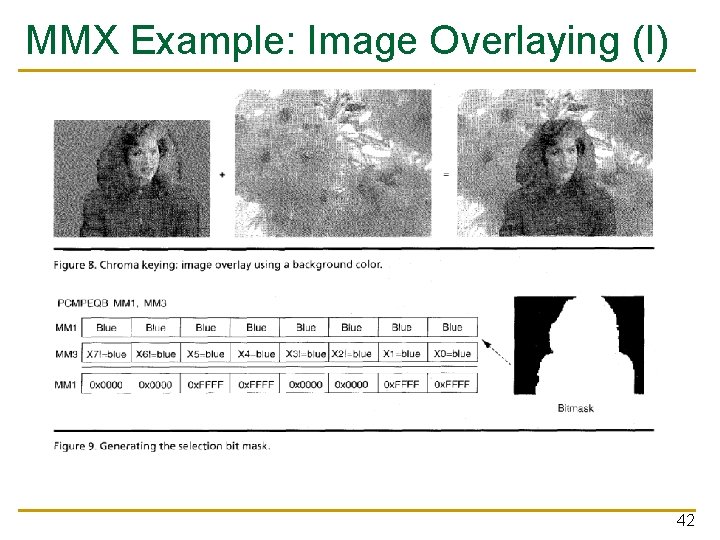

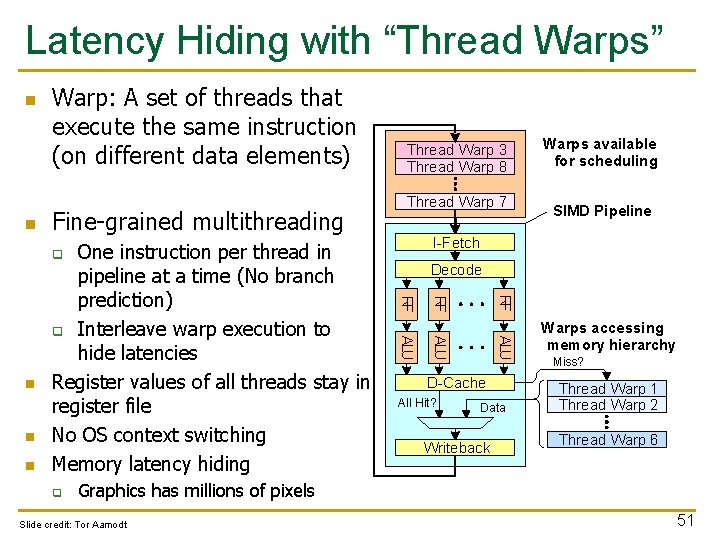

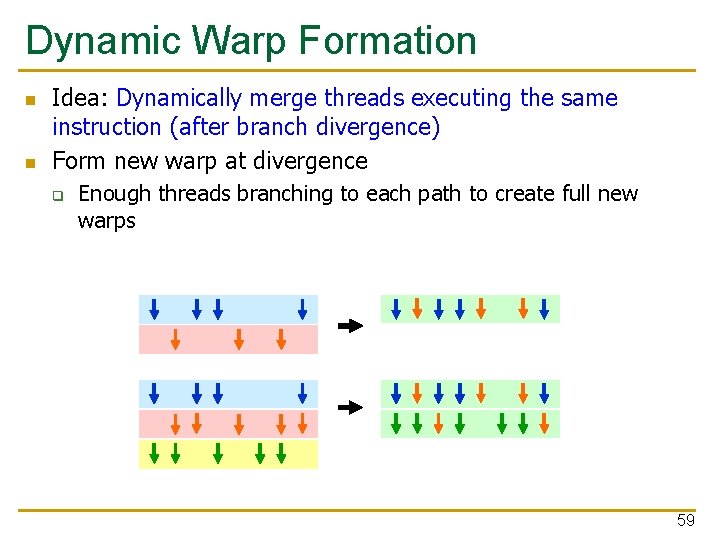

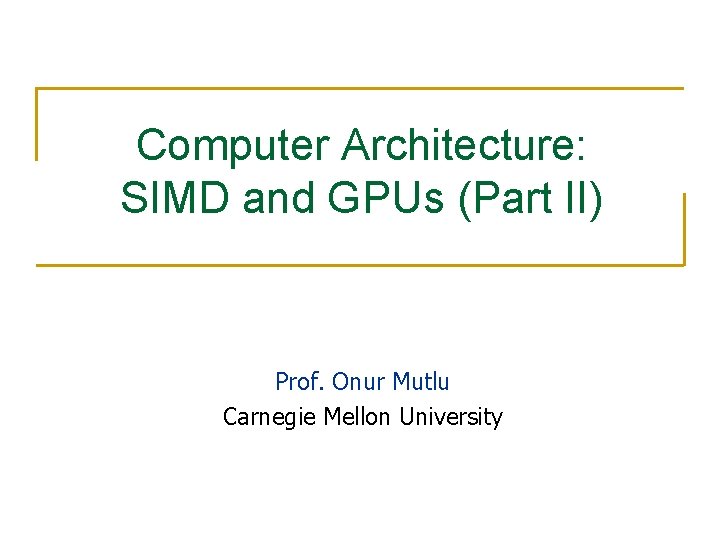

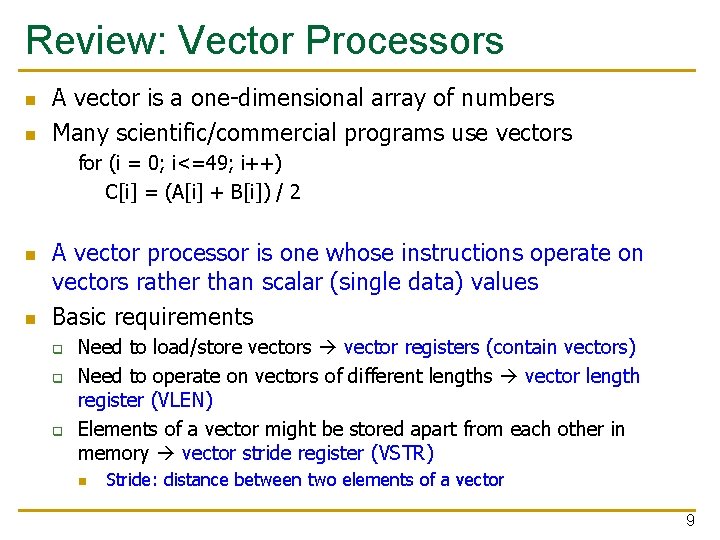

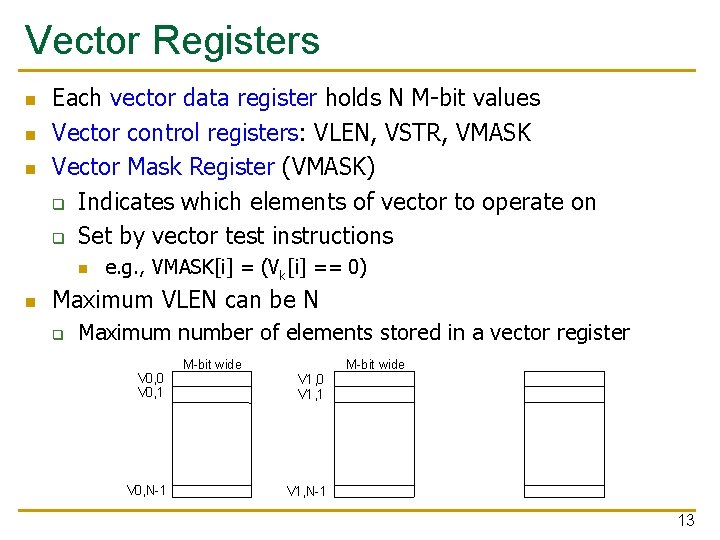

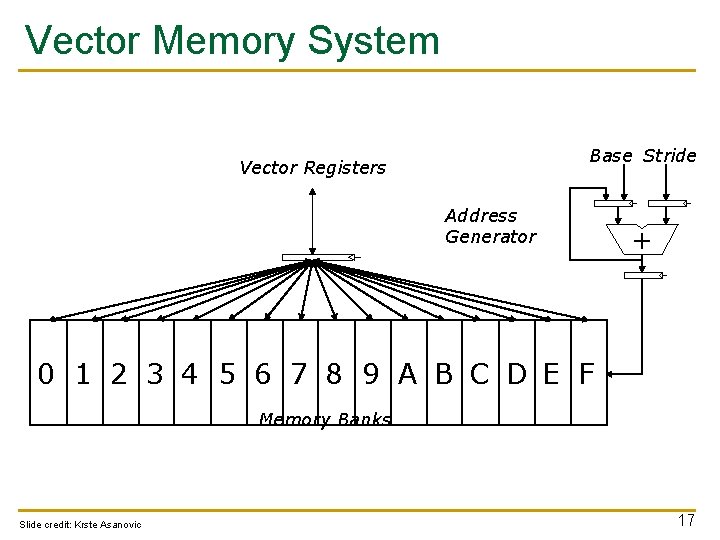

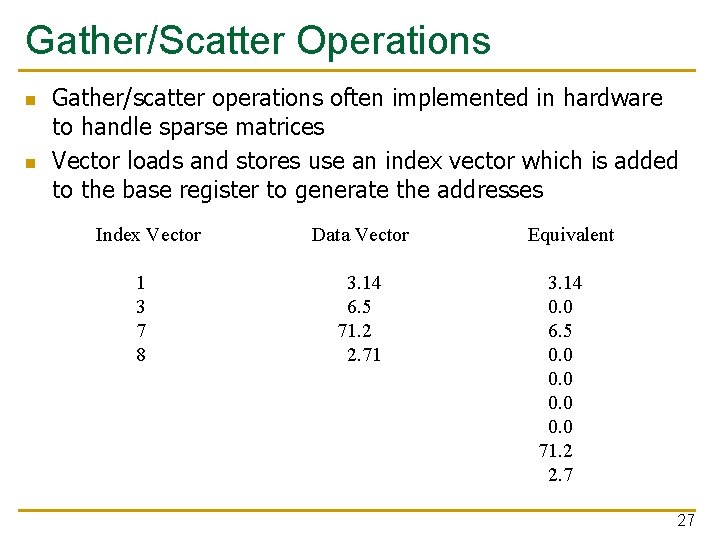

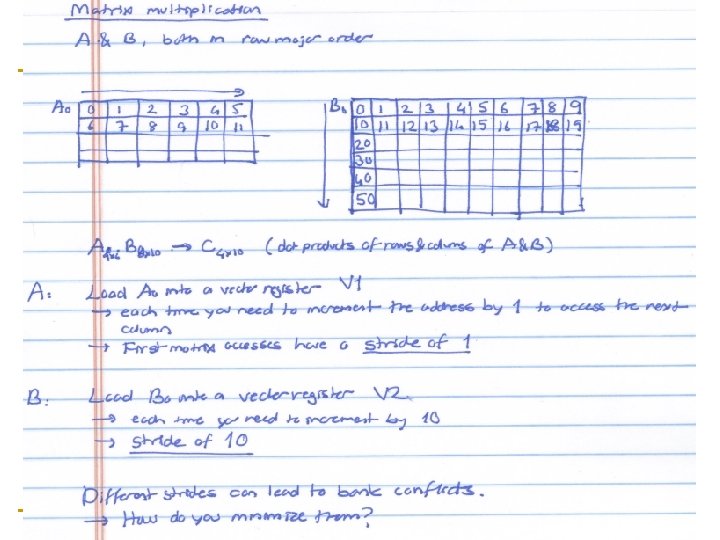

Gather/Scatter Operations

Want to vectorize loops with indirect accesses:

    for (i=0; i<N; i++)
        A[i] = B[i] + C[D[i]]

Indexed load instruction (Gather):

    LV     vD, rD        # Load indices in D vector
    LVI    vC, rC, vD    # Load indirect from rC base
    LV     vB, rB        # Load B vector
    ADDV.D vA, vB, vC    # Do add
    SV     vA, rA        # Store result

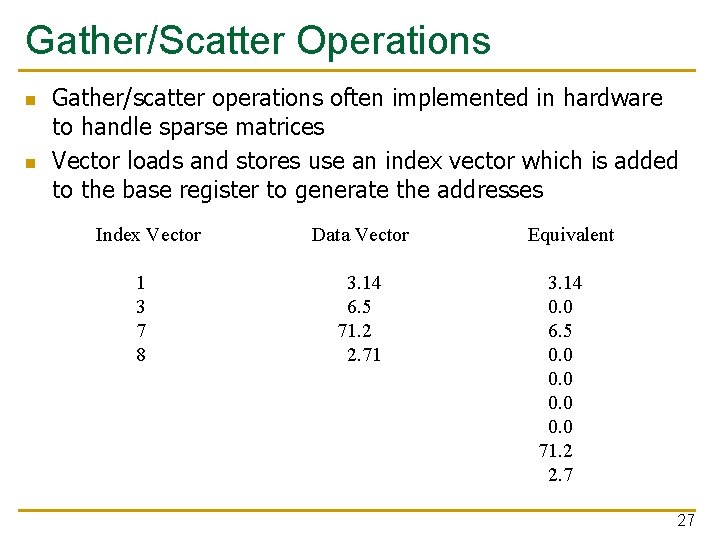

Gather/Scatter Operations

- Gather/scatter operations often implemented in hardware to handle sparse matrices
- Vector loads and stores use an index vector which is added to the base register to generate the addresses

Example (indices are 1-based):

    Index Vector   1     3     7     8
    Data Vector    3.14  6.5   71.2  2.71
    Equivalent     3.14  0.0   6.5   0.0   0.0   0.0   71.2  2.71
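
In scalar C, gather and scatter are just indexed reads and writes. The sketch below shows both directions; the function names are made up, and the indices are 0-based as usual in C, whereas the index vector in the slide example appears to be 1-based.

```c
#include <assert.h>
#include <stddef.h>

/* Gather (indexed load): dst[i] = base[idx[i]]. */
void gather(double *dst, const double *base, const size_t *idx, size_t n)
{
    assert(dst && base && idx);
    for (size_t i = 0; i < n; i++)
        dst[i] = base[idx[i]];
}

/* Scatter (indexed store): base[idx[i]] = src[i]. */
void scatter(double *base, const double *src, const size_t *idx, size_t n)
{
    assert(base && src && idx);
    for (size_t i = 0; i < n; i++)
        base[idx[i]] = src[i];
}
```

Scattering the four data values to indices 0, 2, 6, 7 of a zeroed 8-element array produces the dense "equivalent" vector, and gathering with the same indices recovers the packed data vector.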

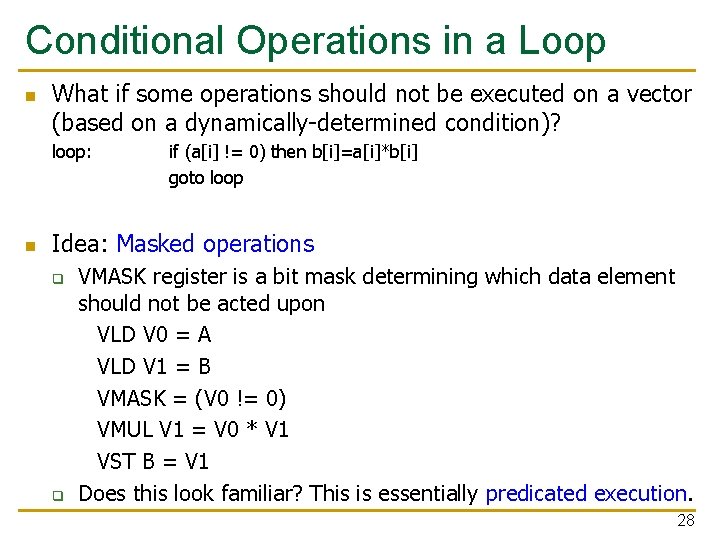

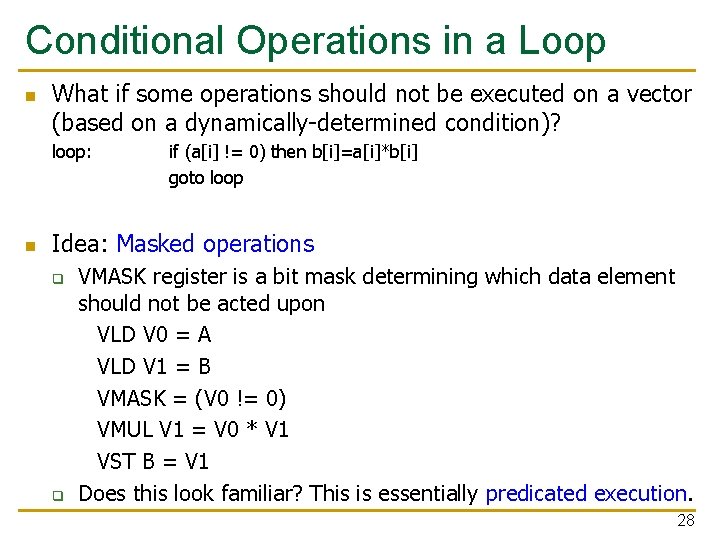

Conditional Operations in a Loop

What if some operations should not be executed on a vector (based on a dynamically-determined condition)?

    loop: if (a[i] != 0) then b[i] = a[i] * b[i]
          goto loop

Idea: Masked operations. The VMASK register is a bit mask determining which data elements should not be acted upon:

    VLD V0 = A
    VLD V1 = B
    VMASK = (V0 != 0)
    VMUL V1 = V0 * V1
    VST B = V1

Does this look familiar? This is essentially predicated execution.

![Another Example with Masking for i 0 i 64 i if ai Another Example with Masking for (i = 0; i < 64; ++i) if (a[i]](https://slidetodoc.com/presentation_image_h/ec0fa78e3f246b5994b6922a6a6beddc/image-29.jpg)

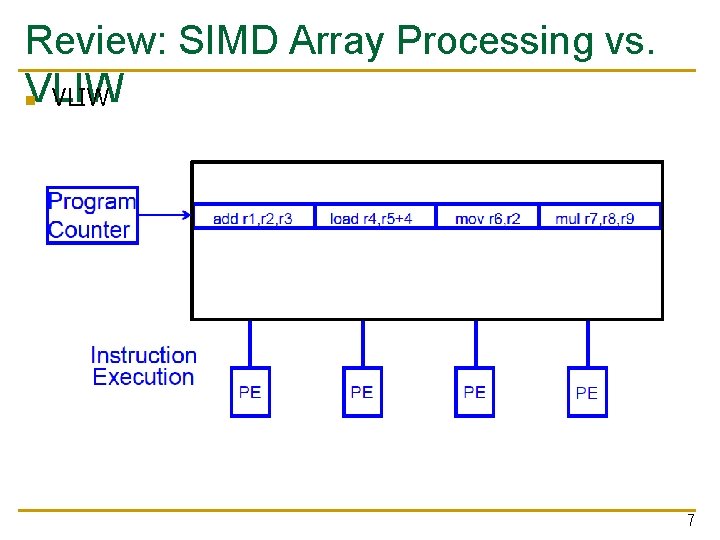

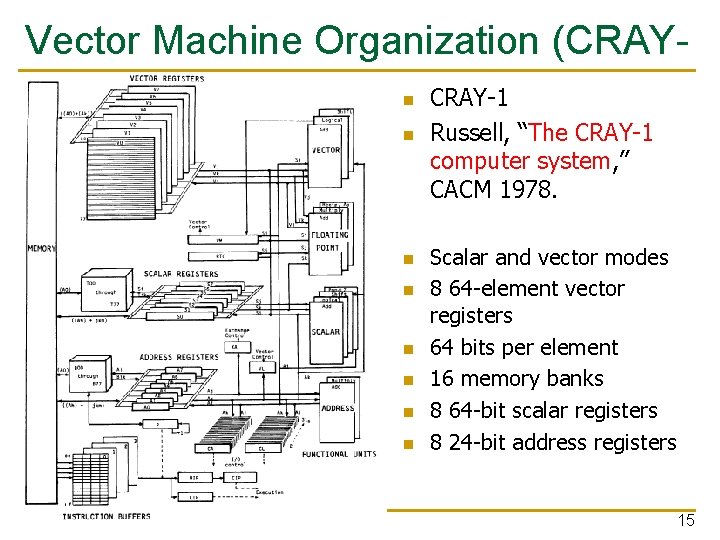

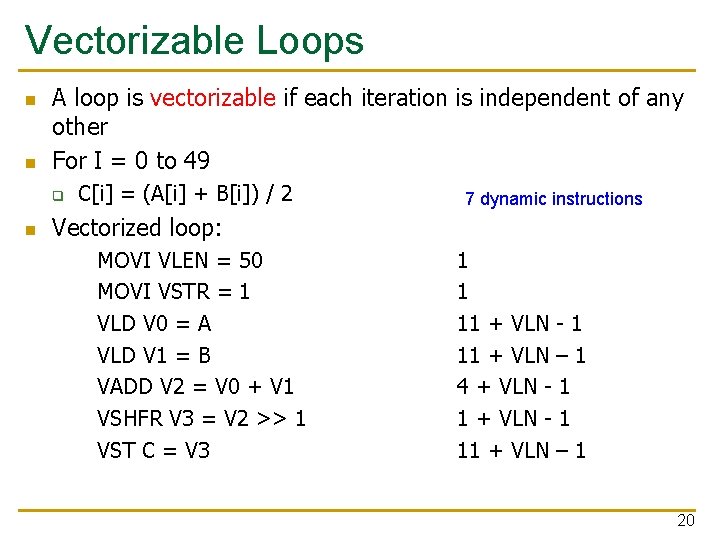

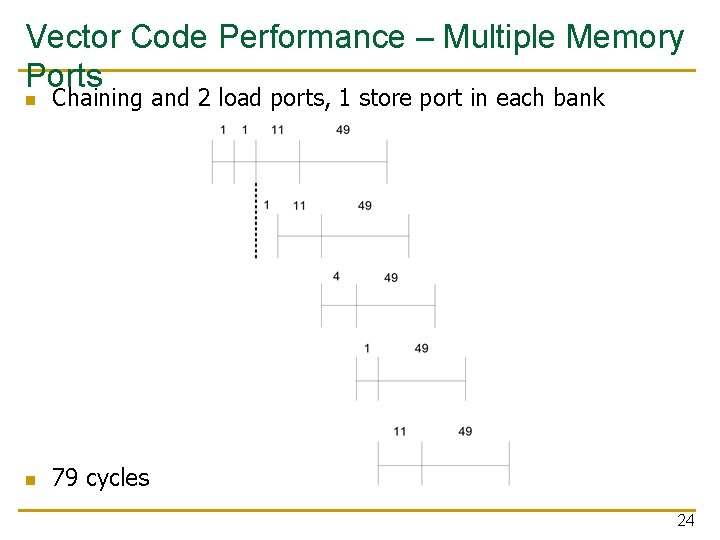

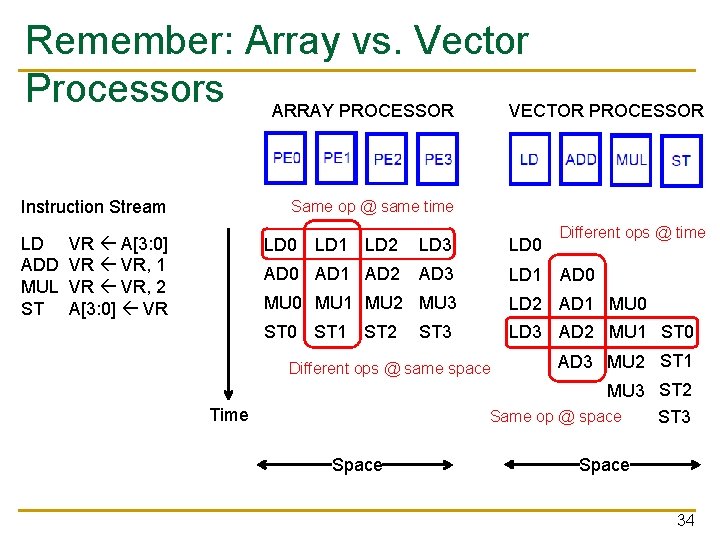

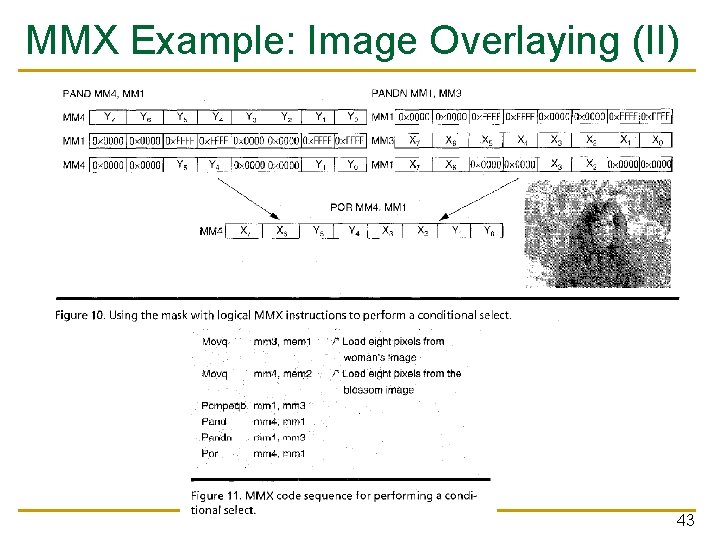

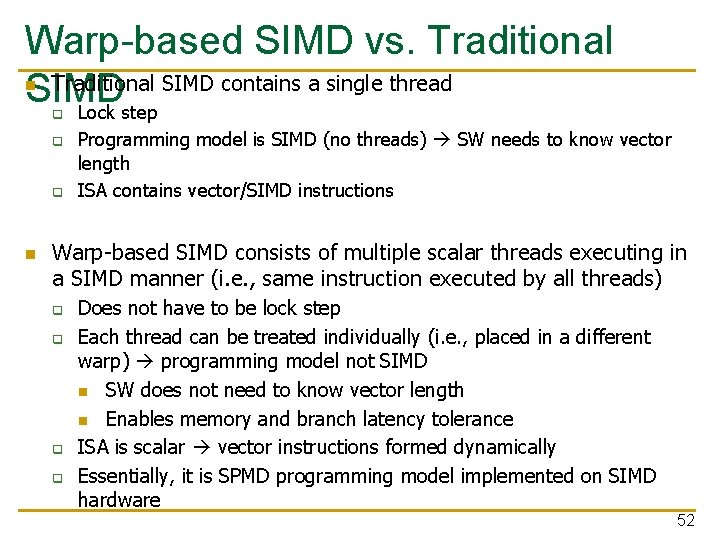

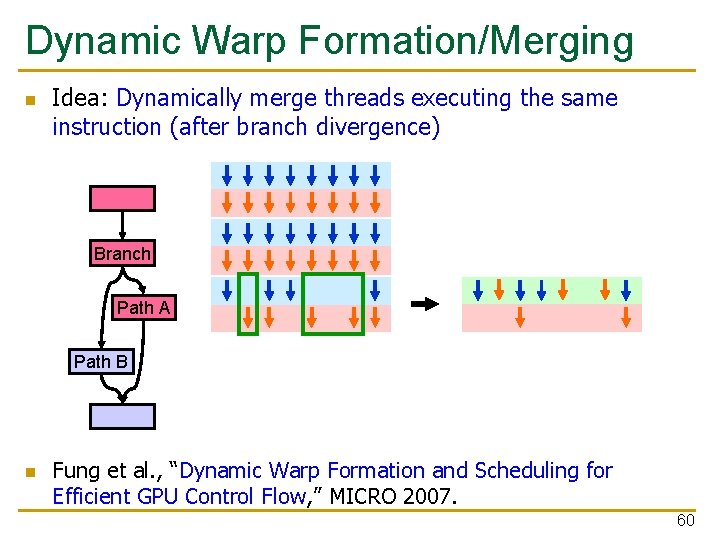

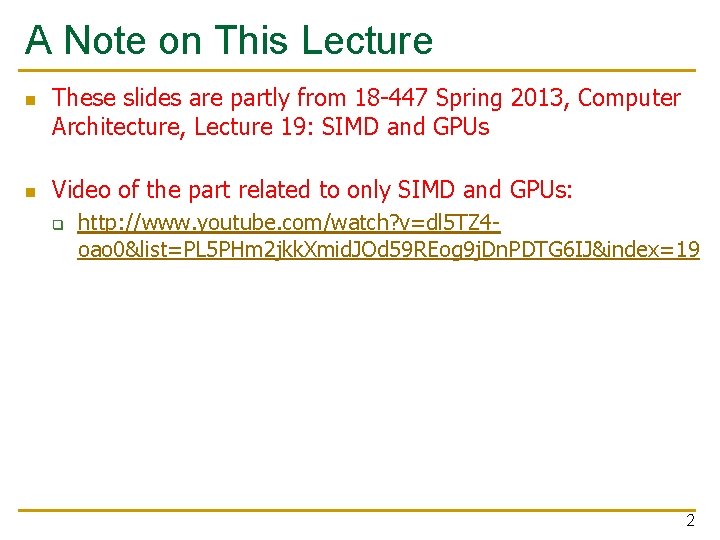

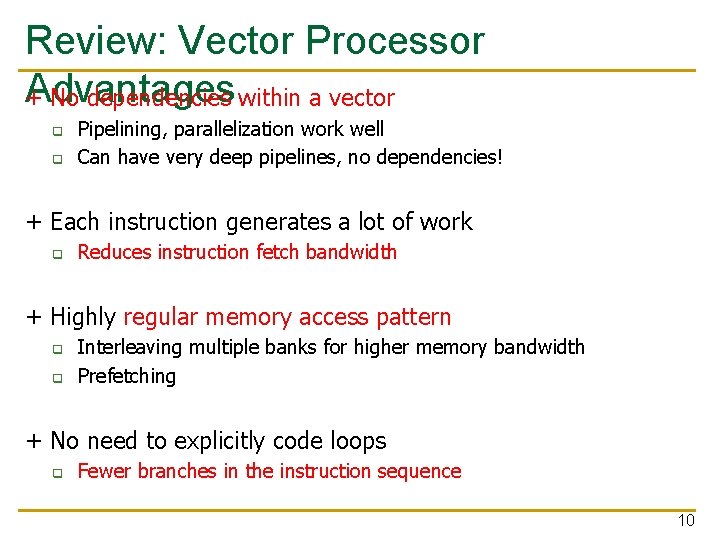

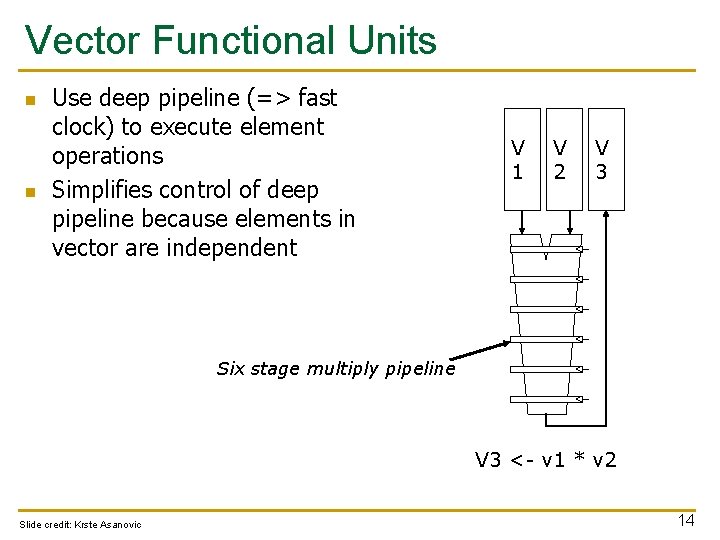

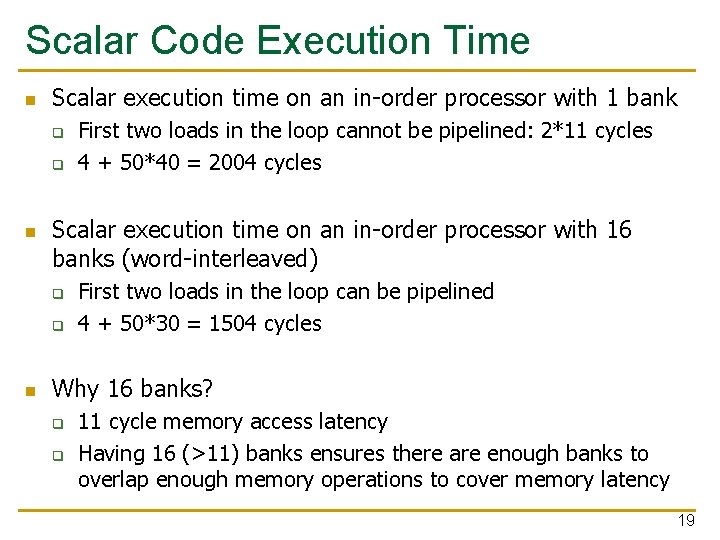

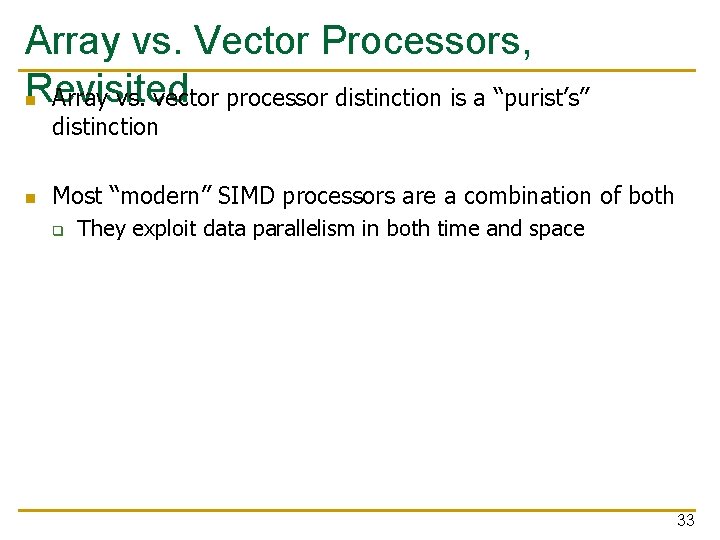

Another Example with Masking

    for (i = 0; i < 64; ++i)
        if (a[i] >= b[i]) then c[i] = a[i]
        else c[i] = b[i]

    A      1  2  3  4  -5   0  6  -7
    B      2  2  2  10 -4  -3  5  -8
    VMASK  0  1  1  0   0   1  1   1

Steps to execute loop

1. Compare A, B to get VMASK
2. Masked store of A into C
3. Complement VMASK
4. Masked store of B into C
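
The four steps can be emulated element by element in C. This is a sketch of the idea rather than of any particular ISA: `vcmp_ge` and `masked_store` are hypothetical helpers, and complementing the mask is modeled by storing where the mask bit is false.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Step 1: vector compare produces the mask. */
void vcmp_ge(bool *mask, const int *a, const int *b, size_t n)
{
    for (size_t i = 0; i < n; i++)
        mask[i] = a[i] >= b[i];
}

/* Masked store: dst[i] = src[i] only where mask[i] equals want. */
void masked_store(int *dst, const int *src, const bool *mask,
                  bool want, size_t n)
{
    for (size_t i = 0; i < n; i++)
        if (mask[i] == want)
            dst[i] = src[i];
}

/* c[i] = max(a[i], b[i]) using the four steps, for up to 64 elements. */
void vector_max(int *c, const int *a, const int *b, size_t n)
{
    bool mask[64];
    assert(n <= 64);
    vcmp_ge(mask, a, b, n);              /* 1. compare A, B to get VMASK    */
    masked_store(c, a, mask, true, n);   /* 2. masked store of A into C     */
    masked_store(c, b, mask, false, n);  /* 3-4. complement, then store B   */
}
```

Run on the eight A and B values shown above, the mask comes out as 0 1 1 0 0 1 1 1 and C holds the element-wise maximum.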

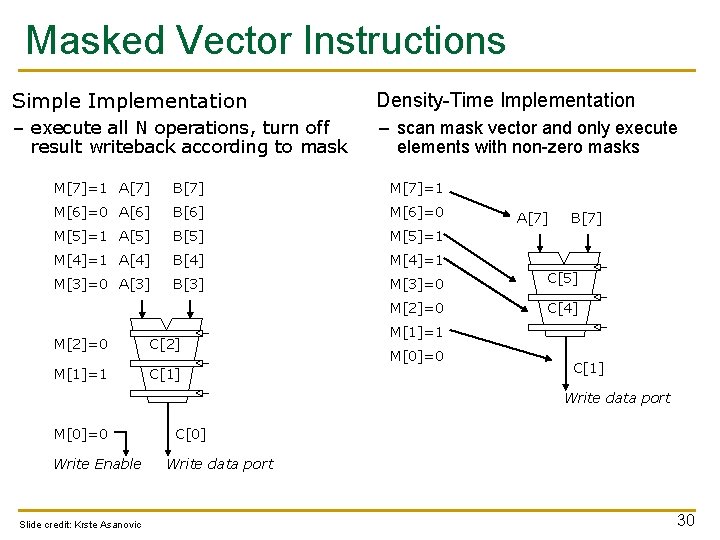

Masked Vector Instructions

- Simple Implementation: execute all N operations, turn off result writeback according to mask
- Density-Time Implementation: scan mask vector and only execute elements with non-zero masks

(Figure: both implementations shown for the mask M[7..0] = 1 0 1 1 0 0 1 0. In the simple implementation every A[i], B[i] pair flows through the pipeline and the mask drives the write enable of the write data port; in the density-time implementation only the pairs with M[i] = 1 enter the pipeline.)

Slide credit: Krste Asanovic

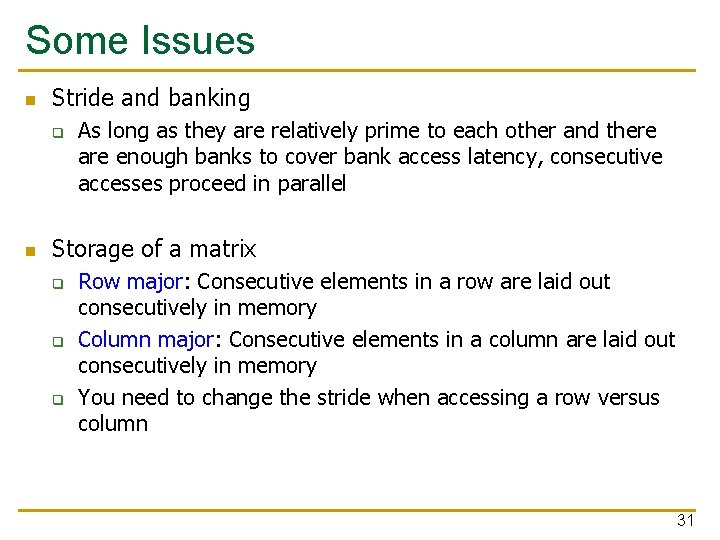

Some Issues

- Stride and banking
  - As long as the stride and the number of banks are relatively prime to each other and there are enough banks to cover bank access latency, consecutive accesses proceed in parallel
- Storage of a matrix
  - Row major: Consecutive elements in a row are laid out consecutively in memory
  - Column major: Consecutive elements in a column are laid out consecutively in memory
  - You need to change the stride when accessing a row versus column
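
The relatively-prime condition can be checked numerically. Under the assumption of word interleaving (bank = word address mod number of banks), a strided stream visits banks / gcd(stride, banks) distinct banks, so every bank is used only when the gcd is 1. The helper names below are hypothetical.

```c
#include <assert.h>

/* Greatest common divisor (Euclid's algorithm). */
static unsigned gcd(unsigned a, unsigned b)
{
    while (b != 0) {
        unsigned t = a % b;
        a = b;
        b = t;
    }
    return a;
}

/* Number of distinct banks visited by addresses 0, s, 2s, 3s, ...
 * when bank = address mod banks (word-interleaved, assumed). */
unsigned banks_touched(unsigned stride, unsigned banks)
{
    assert(stride > 0 && banks > 0);
    return banks / gcd(stride, banks);
}
```

With 16 banks, strides 1 and 3 spread accesses over all 16 banks, stride 2 uses only 8, and stride 16 hits the same bank every time, so each access has to wait for the previous one to finish.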

Array vs. Vector Processors, Revisited

- Array vs. vector processor distinction is a "purist's" distinction
- Most "modern" SIMD processors are a combination of both
  - They exploit data parallelism in both time and space

Remember: Array vs. Vector Processors

Instruction stream:

    LD  VR <- A[3:0]
    ADD VR <- VR, 1
    MUL VR <- VR, 2
    ST  A[3:0] <- VR

ARRAY PROCESSOR (time runs downward, space runs across): same op @ same time, different ops @ same space

    LD0 LD1 LD2 LD3
    AD0 AD1 AD2 AD3
    MU0 MU1 MU2 MU3
    ST0 ST1 ST2 ST3

VECTOR PROCESSOR (time runs downward, space runs across): different ops @ same time, same op @ same space

    LD0
    LD1 AD0
    LD2 AD1 MU0
    LD3 AD2 MU1 ST0
        AD3 MU2 ST1
            MU3 ST2
                ST3

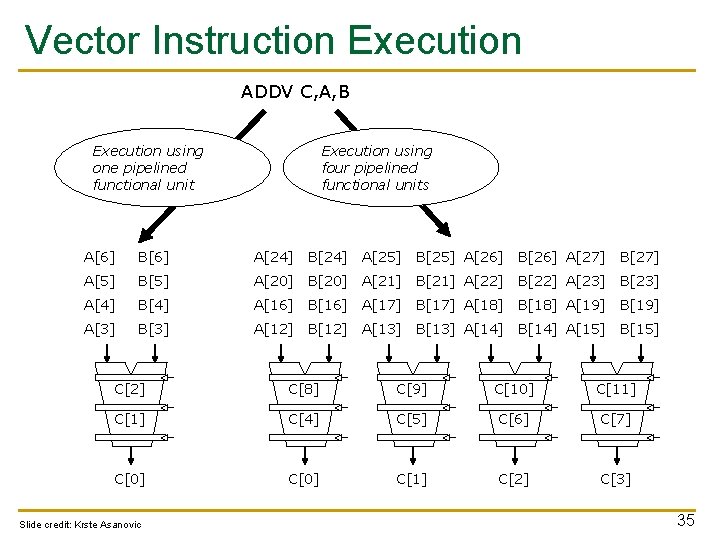

Vector Instruction Execution

ADDV C, A, B

(Figure: execution using one pipelined functional unit, where one A[i], B[i] pair enters per cycle and results C[0], C[1], C[2], ... complete one per cycle; and execution using four pipelined functional units, where four pairs enter per cycle and results complete four at a time: C[0..3], C[4..7], C[8..11], ....)

Slide credit: Krste Asanovic

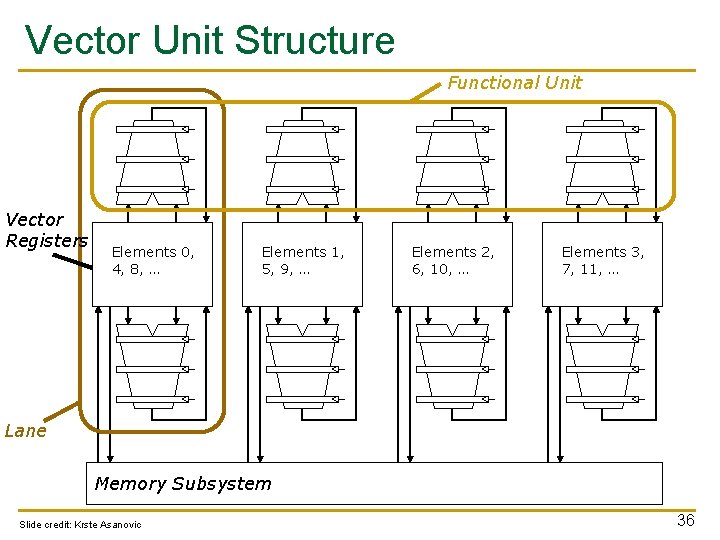

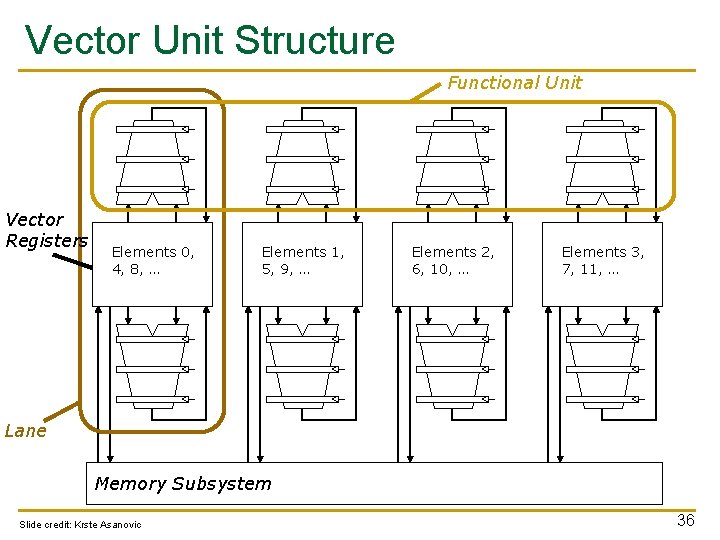

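Vector Unit Structure

(Figure: four lanes, each containing a functional unit pipeline and a slice of the vector registers, all connected to the memory subsystem. The lanes hold elements 0, 4, 8, …; elements 1, 5, 9, …; elements 2, 6, 10, …; and elements 3, 7, 11, ….)

Slide credit: Krste Asanovic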

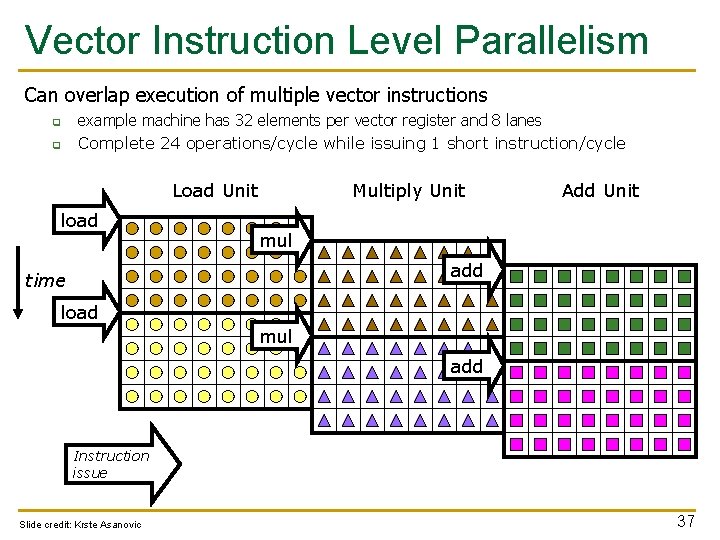

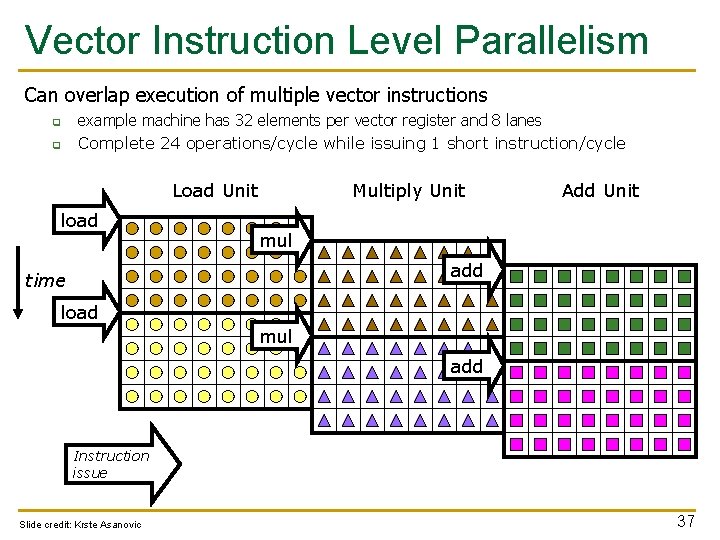

Vector Instruction Level Parallelism

- Can overlap execution of multiple vector instructions
  - Example machine has 32 elements per vector register and 8 lanes
  - Complete 24 operations/cycle (3 functional units x 8 lanes) while issuing 1 short instruction/cycle

(Figure: load, mul, and add instructions issued one per cycle; over time their execution overlaps in the Load Unit, Multiply Unit, and Add Unit.)

Slide credit: Krste Asanovic

![Automatic Code Vectorization for i0 i N i Ci Ai Bi Automatic Code Vectorization for (i=0; i < N; i++) C[i] = A[i] + B[i];](https://slidetodoc.com/presentation_image_h/ec0fa78e3f246b5994b6922a6a6beddc/image-38.jpg)

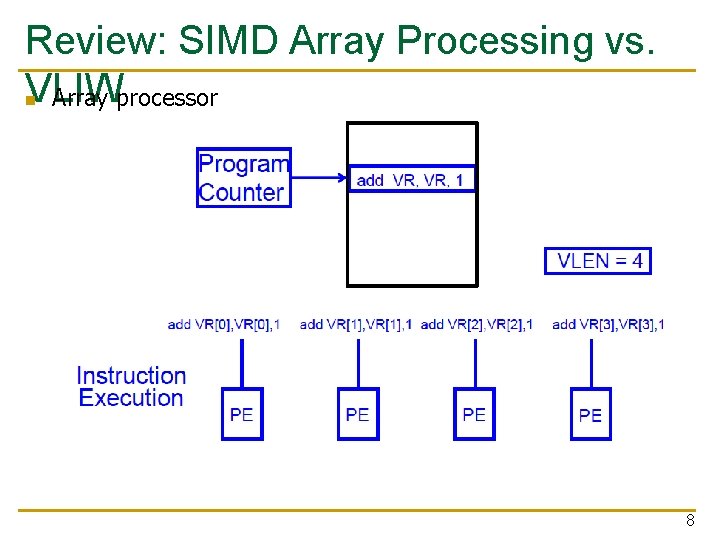

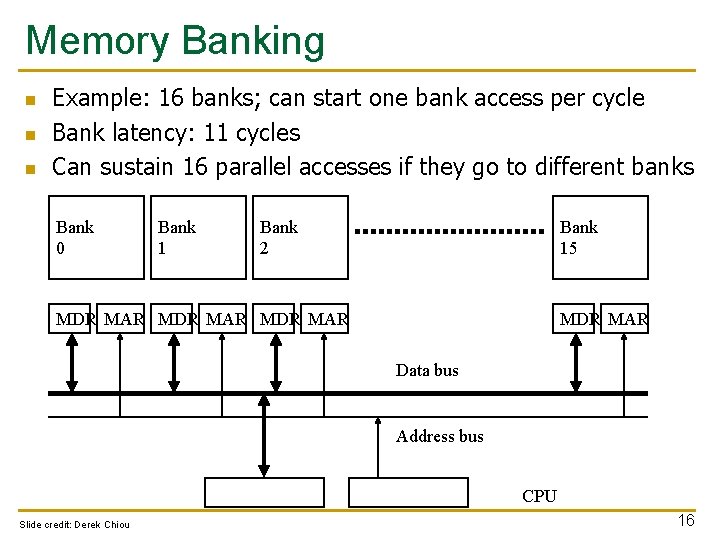

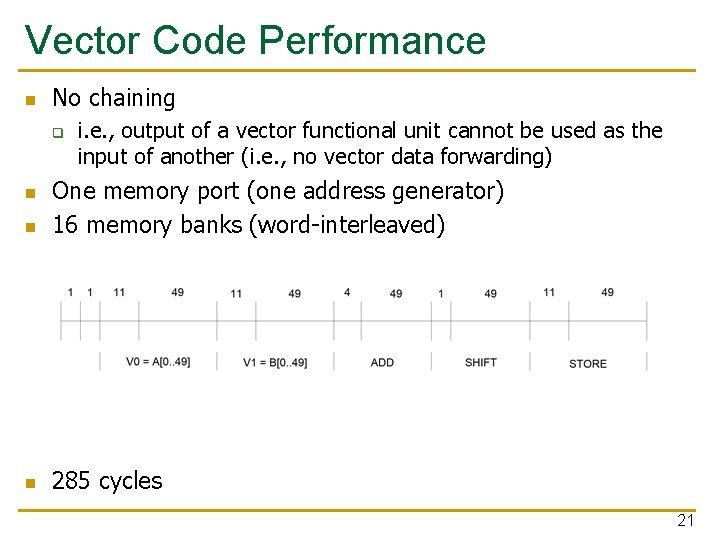

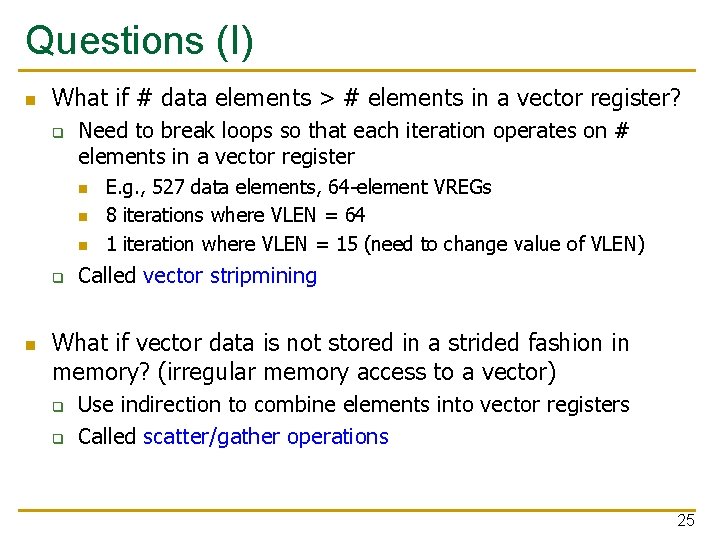

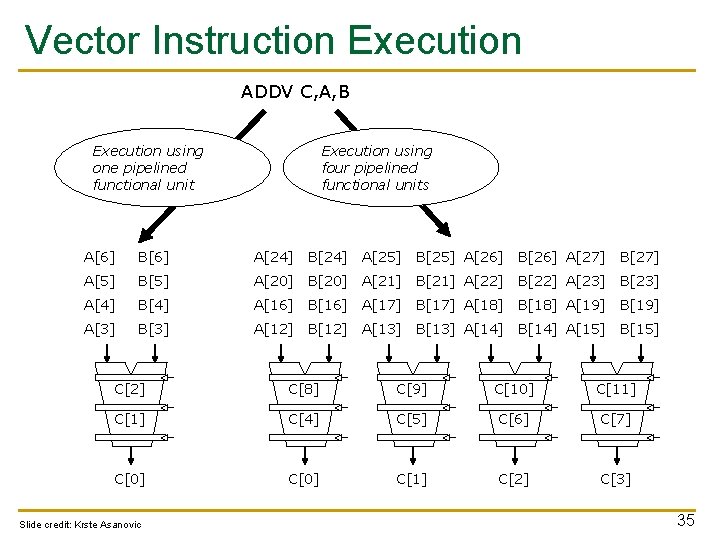

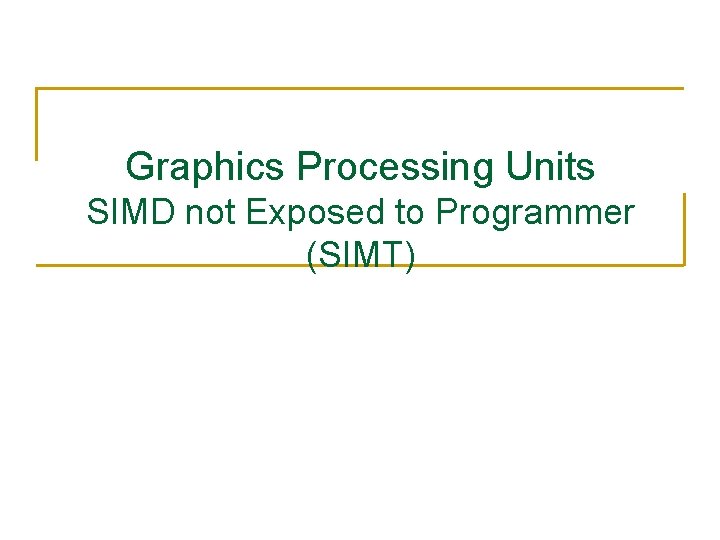

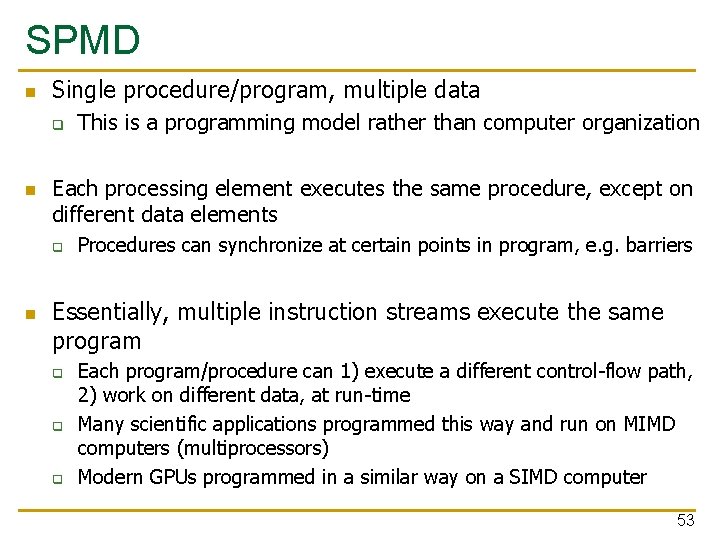

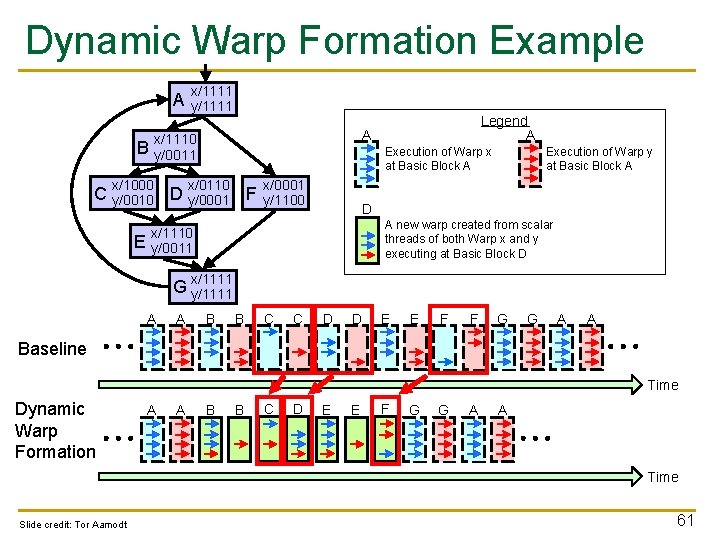

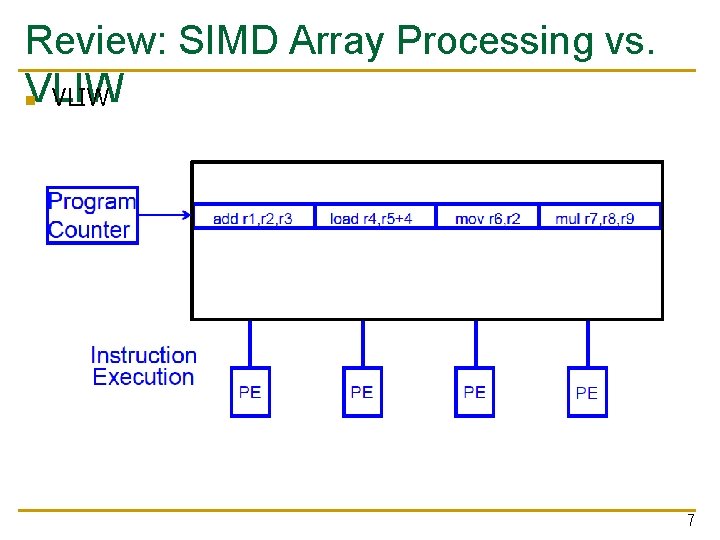

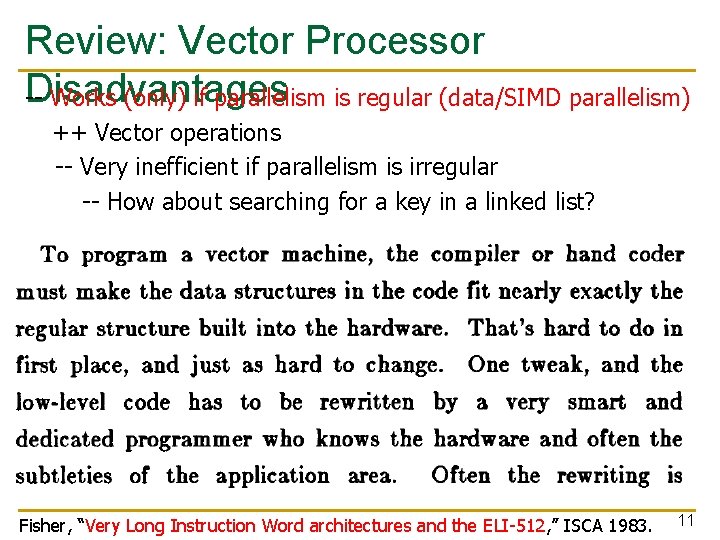

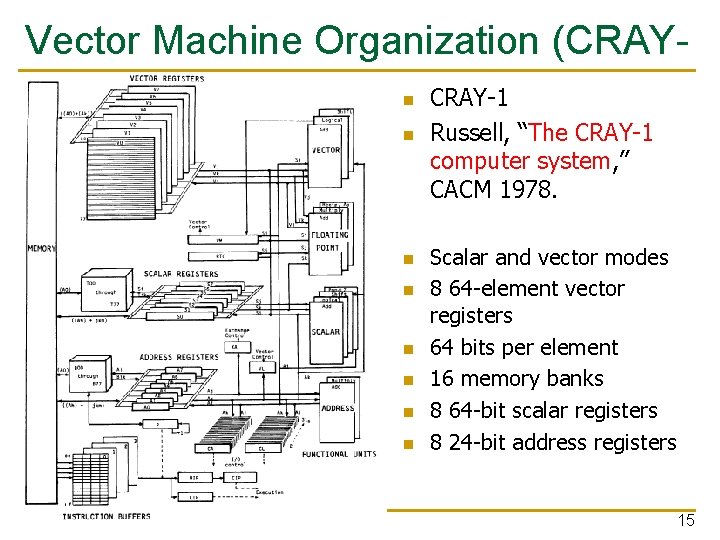

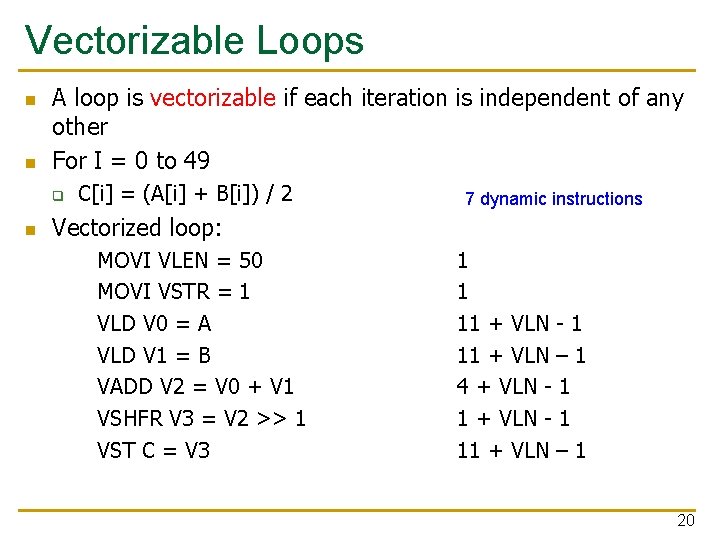

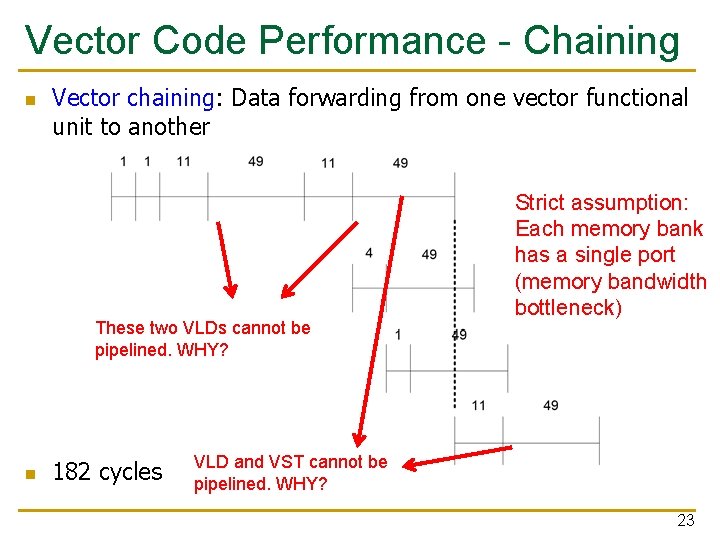

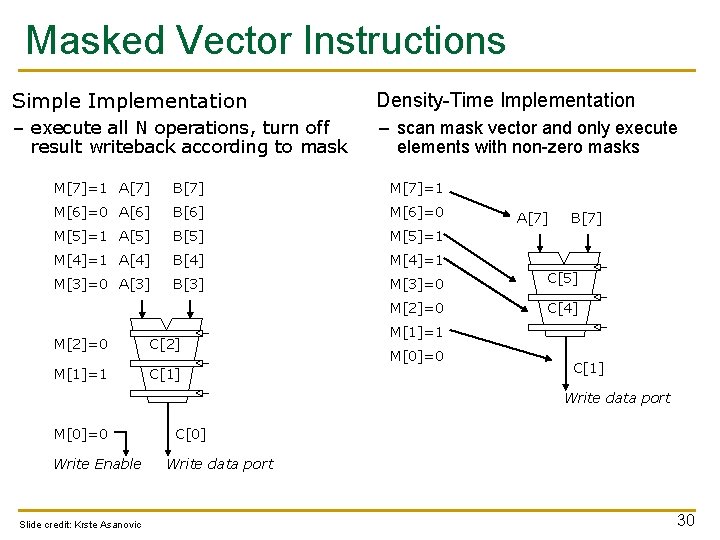

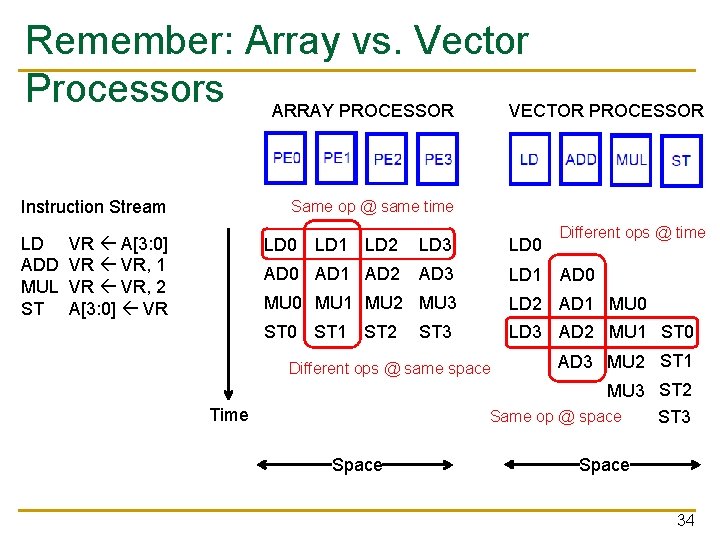

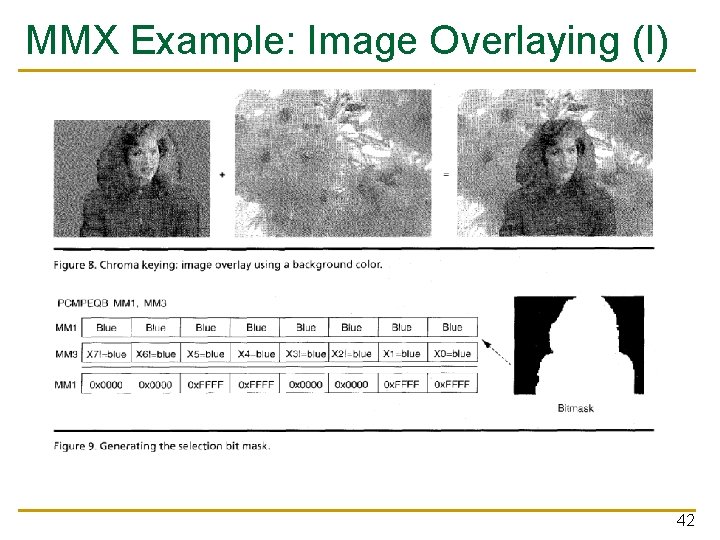

Automatic Code Vectorization

    for (i=0; i < N; i++)
        C[i] = A[i] + B[i];

(Figure: scalar sequential code runs load, load, add, store for iteration 1 and then again for iteration 2; vectorized code runs each operation as one vector instruction covering all iterations.)

- Vectorization is a compile-time reordering of operation sequencing
  - requires extensive loop dependence analysis

Slide credit: Krste Asanovic

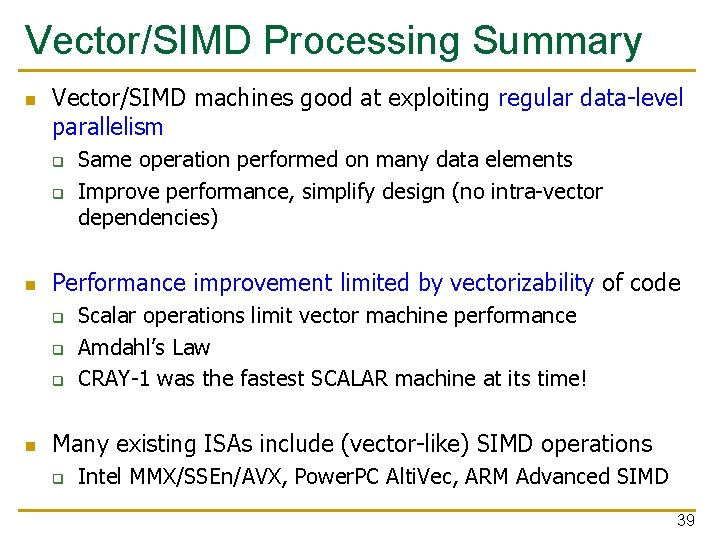

Vector/SIMD Processing Summary

- Vector/SIMD machines good at exploiting regular data-level parallelism
  - Same operation performed on many data elements
  - Improve performance, simplify design (no intra-vector dependencies)
- Performance improvement limited by vectorizability of code
  - Scalar operations limit vector machine performance
  - Amdahl's Law
  - CRAY-1 was the fastest SCALAR machine at its time!
- Many existing ISAs include (vector-like) SIMD operations
  - Intel MMX/SSEn/AVX, PowerPC AltiVec, ARM Advanced SIMD

SIMD Operations in Modern ISAs

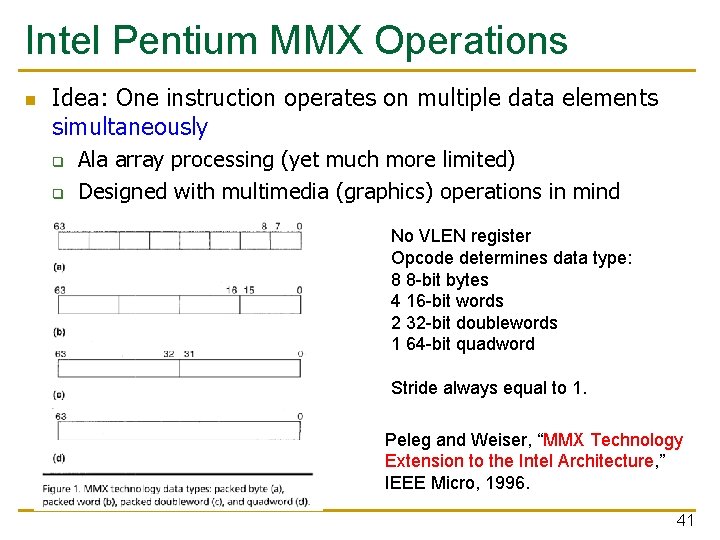

Intel Pentium MMX Operations

- Idea: One instruction operates on multiple data elements simultaneously
  - À la array processing (yet much more limited)
  - Designed with multimedia (graphics) operations in mind
- No VLEN register
- Opcode determines data type:
  - 8 8-bit bytes
  - 4 16-bit words
  - 2 32-bit doublewords
  - 1 64-bit quadword
- Stride always equal to 1.

Peleg and Weiser, "MMX Technology Extension to the Intel Architecture," IEEE Micro, 1996.

MMX Example: Image Overlaying (I)

MMX Example: Image Overlaying (II)

Graphics Processing Units: SIMD not Exposed to Programmer (SIMT)

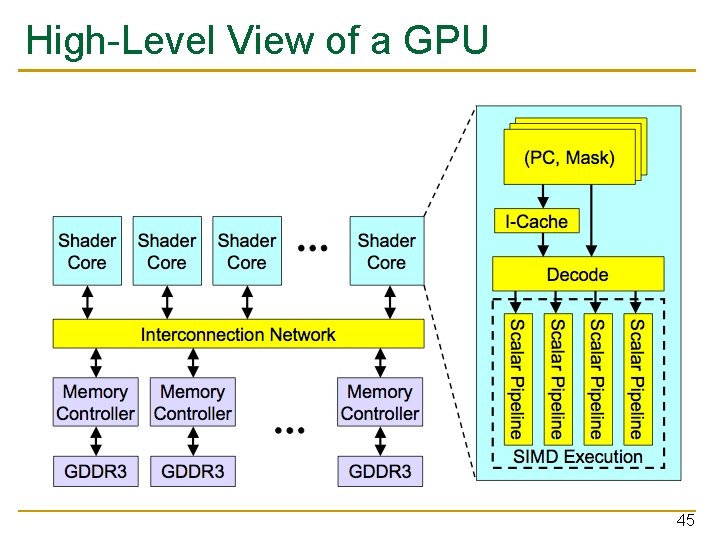

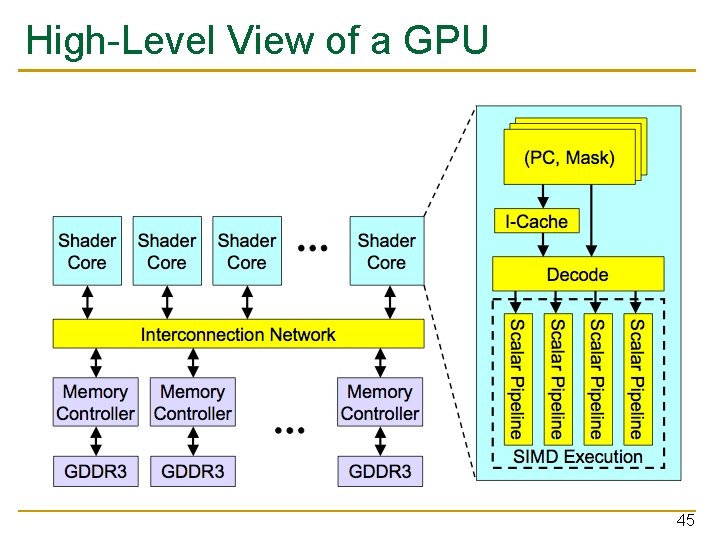

High-Level View of a GPU

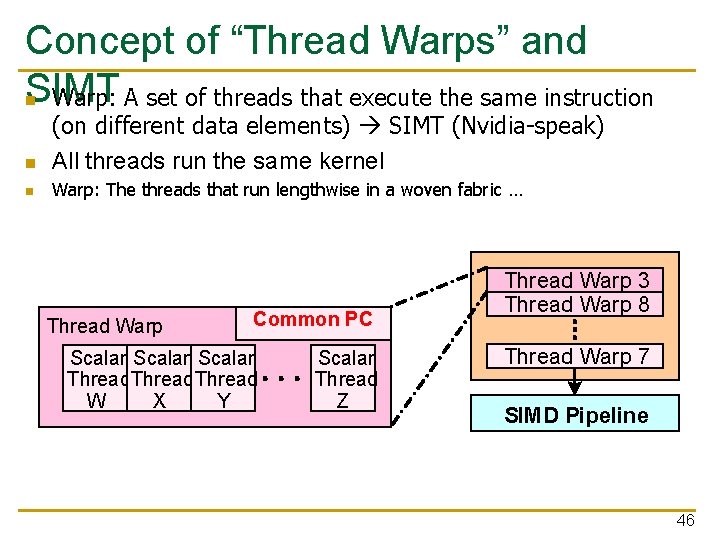

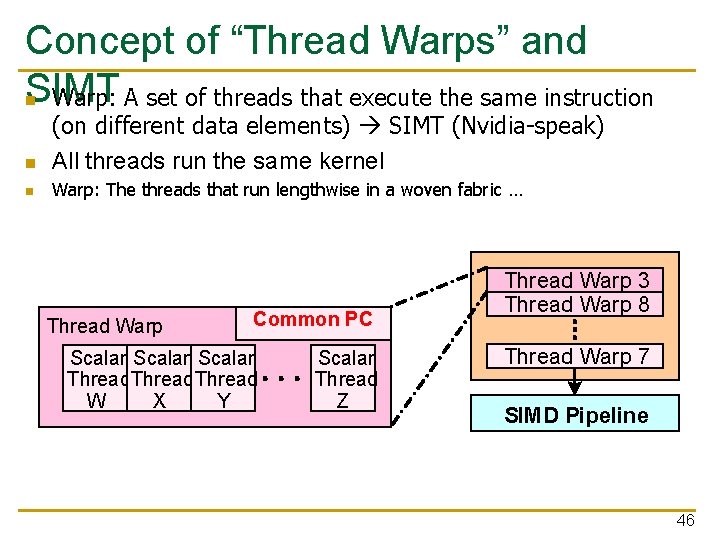

Concept of "Thread Warps" and SIMT

- Warp: A set of threads that execute the same instruction (on different data elements) -> SIMT (Nvidia-speak)
- All threads run the same kernel
- Warp: The threads that run lengthwise in a woven fabric …

(Figure: a thread warp made of scalar threads W, X, Y, Z sharing a common PC; thread warps 3, 8, and 7 feed a SIMD pipeline.)

![Loop Iterations as Threads for i0 i N i Ci Ai Loop Iterations as Threads for (i=0; i < N; i++) C[i] = A[i] +](https://slidetodoc.com/presentation_image_h/ec0fa78e3f246b5994b6922a6a6beddc/image-47.jpg)

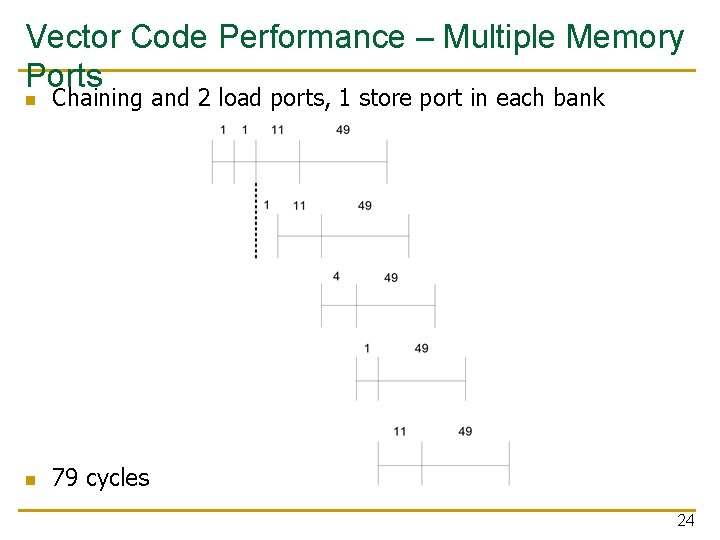

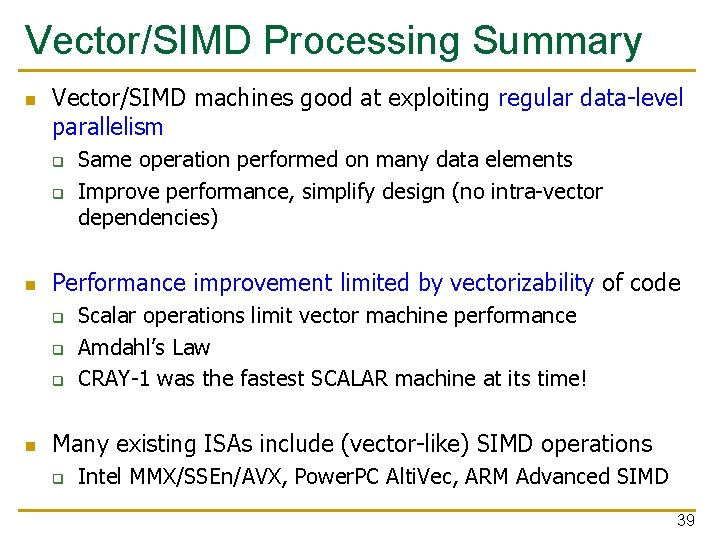

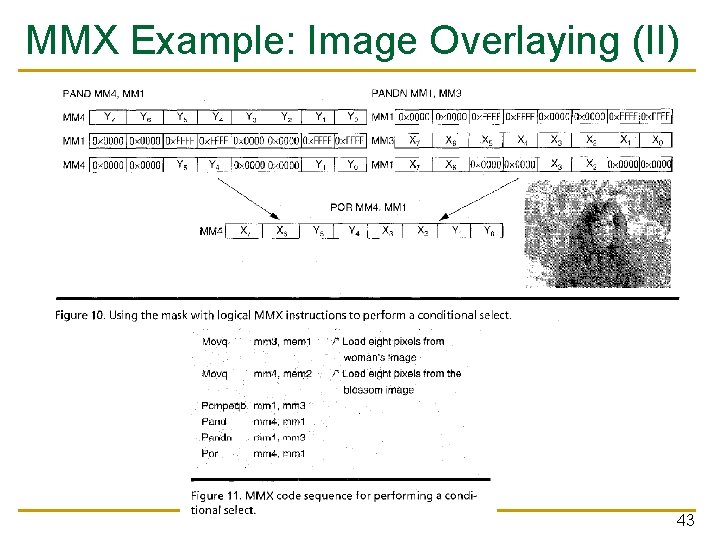

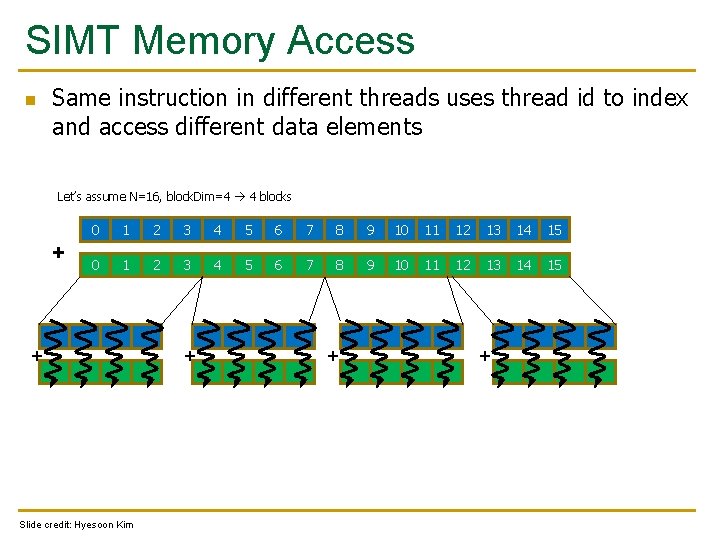

Loop Iterations as Threads

    for (i=0; i < N; i++)
        C[i] = A[i] + B[i];

(Figure: scalar sequential code runs load, load, add, store for iteration 1 and then for iteration 2; in vectorized code each vector instruction covers all iterations, with each iteration corresponding to one thread.)

Slide credit: Krste Asanovic

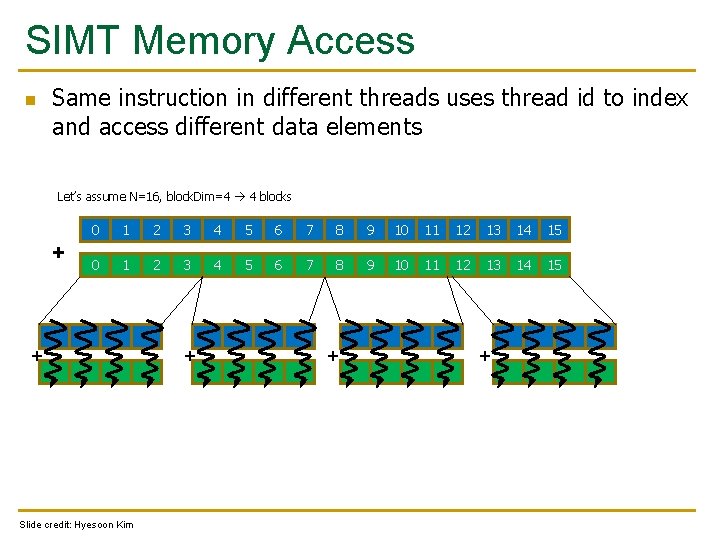

SIMT Memory Access

- Same instruction in different threads uses thread id to index and access different data elements
- Let's assume N=16, blockDim=4 -> 4 blocks

(Figure: elements 0 through 15 split into four groups of four, with one add per block.)

Slide credit: Hyesoon Kim

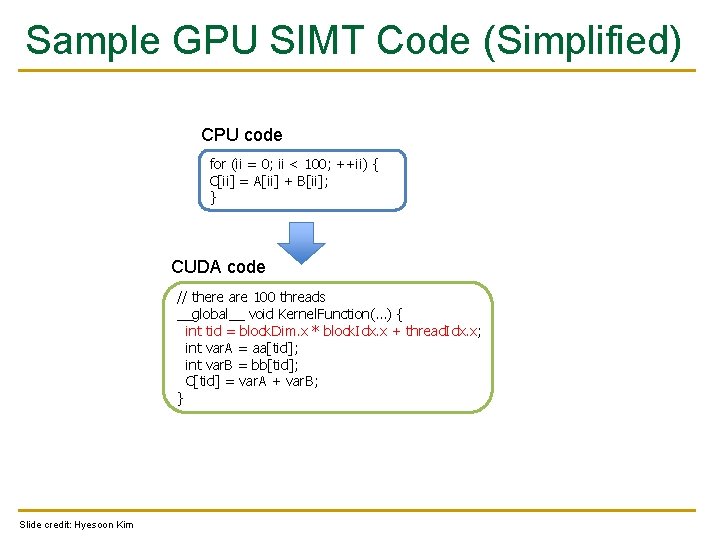

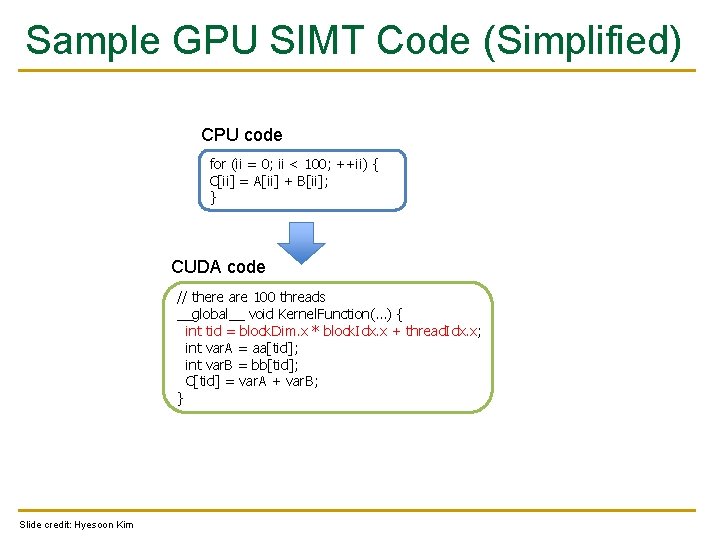

Sample GPU SIMT Code (Simplified)

CPU code:

    for (ii = 0; ii < 100; ++ii) {
        C[ii] = A[ii] + B[ii];
    }

CUDA code:

    // there are 100 threads
    __global__ void KernelFunction(…) {
        int tid = blockDim.x * blockIdx.x + threadIdx.x;
        int varA = aa[tid];
        int varB = bb[tid];
        C[tid] = varA + varB;
    }

Slide credit: Hyesoon Kim
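
To see how the thread index maps threads to elements, the kernel can be emulated on a CPU with two nested loops, one over blocks and one over threads within a block. This is a sequential sketch in plain C, not CUDA: the function names are hypothetical, the loops run one "thread" at a time, and it assumes N is a multiple of the block size, as in the N=16, blockDim=4 example.

```c
#include <assert.h>

/* Global index of a thread, mirroring
 * blockDim.x * blockIdx.x + threadIdx.x from the kernel. */
int global_thread_id(int block_idx, int block_dim, int thread_idx)
{
    assert(block_dim > 0 && thread_idx >= 0 && thread_idx < block_dim);
    return block_dim * block_idx + thread_idx;
}

/* Sequential emulation of launching n / block_dim blocks of block_dim
 * threads; every "thread" handles exactly one element.
 * Returns the number of blocks. */
int simt_vector_add(int *c, const int *a, const int *b, int n, int block_dim)
{
    assert(block_dim > 0 && n >= 0 && n % block_dim == 0);
    int num_blocks = n / block_dim;
    for (int blk = 0; blk < num_blocks; blk++) {
        for (int t = 0; t < block_dim; t++) {
            int tid = global_thread_id(blk, block_dim, t);
            c[tid] = a[tid] + b[tid];
        }
    }
    return num_blocks;
}
```

With n = 16 and block_dim = 4 there are 4 blocks, and thread 3 of block 3 handles element 15.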

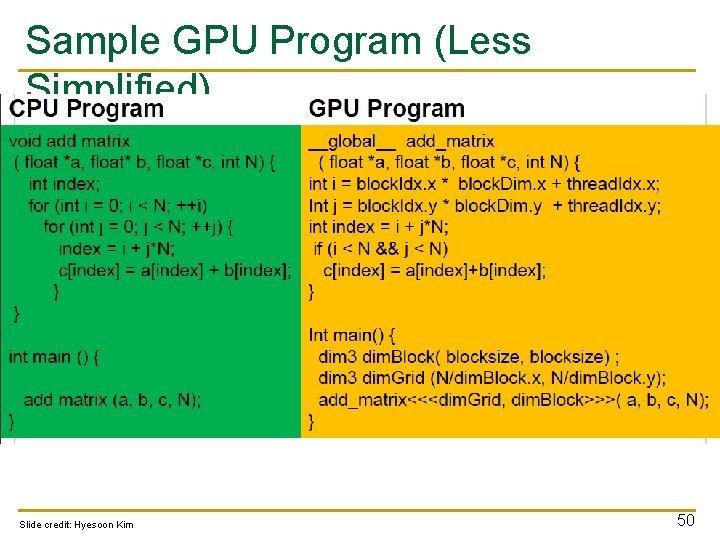

Sample GPU Program (Less Simplified)

Slide credit: Hyesoon Kim

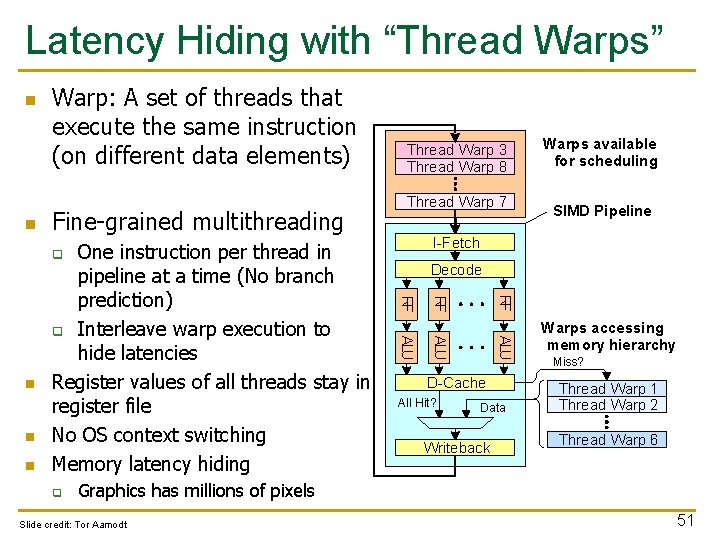

Latency Hiding with "Thread Warps"

- Warp: A set of threads that execute the same instruction (on different data elements)
- Fine-grained multithreading
  - One instruction per thread in pipeline at a time (No branch prediction)
  - Interleave warp execution to hide latencies
- Register values of all threads stay in register file
- No OS context switching
- Memory latency hiding
  - Graphics has millions of pixels

(Figure: a SIMD pipeline with I-Fetch, Decode, RF, ALU, D-Cache, and Writeback stages. Thread warps 3, 8, and 7 are available for scheduling; on a D-Cache miss, warps such as 1, 2, and 6 wait while accessing the memory hierarchy; when all threads hit, data proceeds to writeback.)

Slide credit: Tor Aamodt

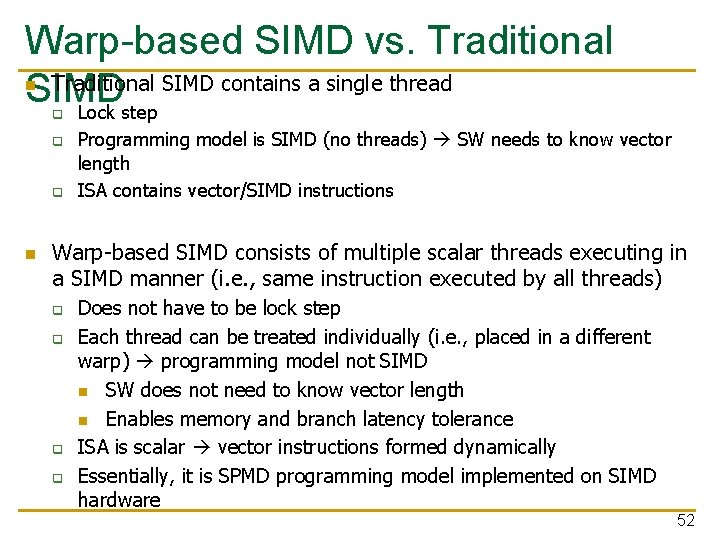

Warp-based SIMD vs. Traditional SIMD

- Traditional SIMD contains a single thread
  - Lock step
  - Programming model is SIMD (no threads) -> SW needs to know vector length
  - ISA contains vector/SIMD instructions
- Warp-based SIMD consists of multiple scalar threads executing in a SIMD manner (i.e., same instruction executed by all threads)
  - Does not have to be lock step
  - Each thread can be treated individually (i.e., placed in a different warp) -> programming model not SIMD
    - SW does not need to know vector length
    - Enables memory and branch latency tolerance
  - ISA is scalar -> vector instructions formed dynamically
  - Essentially, it is SPMD programming model implemented on SIMD hardware

SPMD

- Single procedure/program, multiple data
  - This is a programming model rather than computer organization
- Each processing element executes the same procedure, except on different data elements
  - Procedures can synchronize at certain points in program, e.g. barriers
- Essentially, multiple instruction streams execute the same program
  - Each program/procedure can 1) execute a different control-flow path, 2) work on different data, at run-time
  - Many scientific applications programmed this way and run on MIMD computers (multiprocessors)
  - Modern GPUs programmed in a similar way on a SIMD computer

We did not cover the following slides in lecture. These are for your preparation for the next lecture.

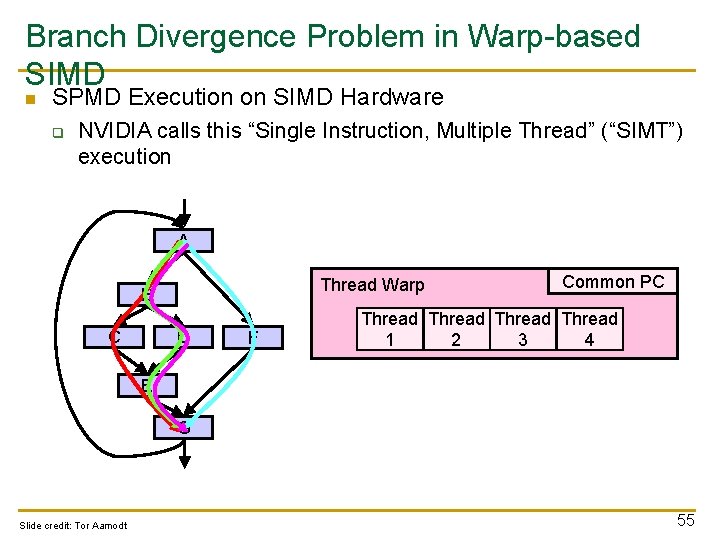

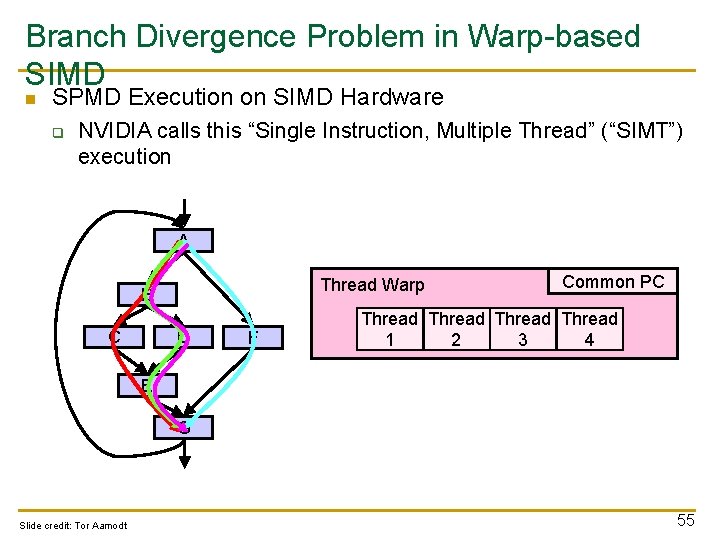

Branch Divergence Problem in Warp-based SIMD

- SPMD Execution on SIMD Hardware
  - NVIDIA calls this "Single Instruction, Multiple Thread" ("SIMT") execution

(Figure: a control flow graph with basic blocks A through G, executed by a thread warp of threads 1 to 4 that share a common PC.)

Slide credit: Tor Aamodt

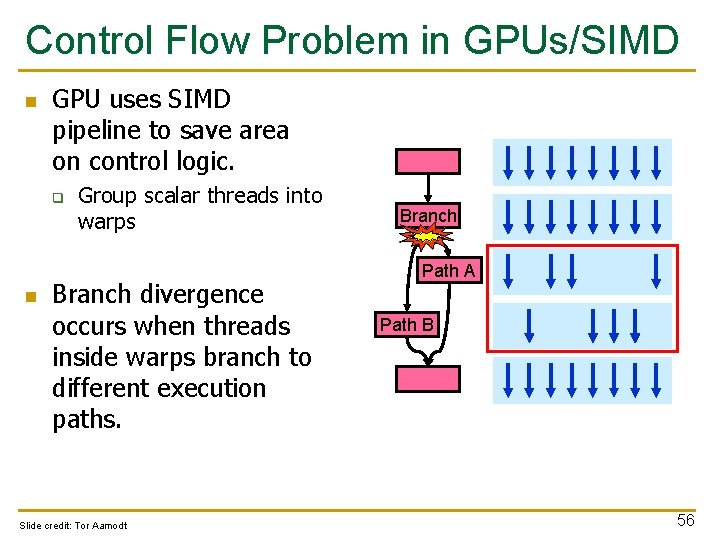

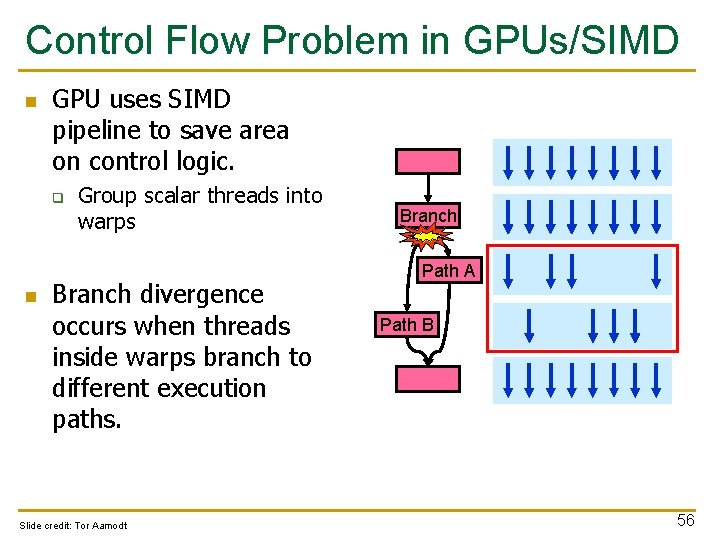

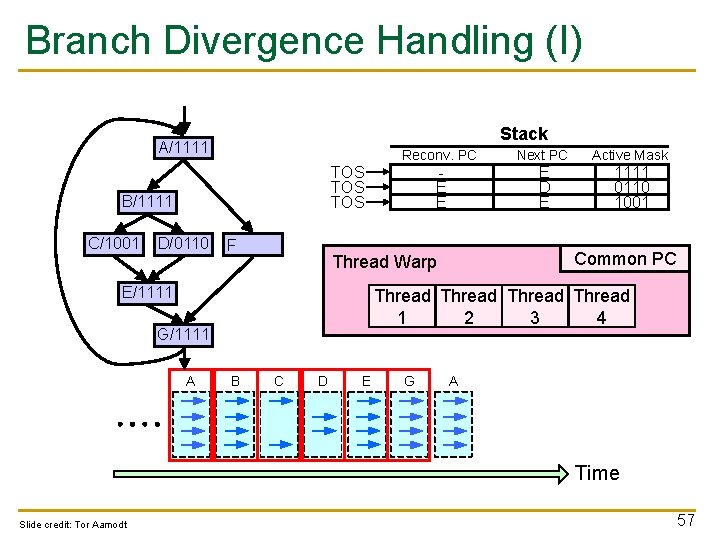

Control Flow Problem in GPUs/SIMD

- GPU uses SIMD pipeline to save area on control logic.
  - Group scalar threads into warps
- Branch divergence occurs when threads inside warps branch to different execution paths.

(Figure: a branch splitting the threads of a warp between Path A and Path B.)

Slide credit: Tor Aamodt

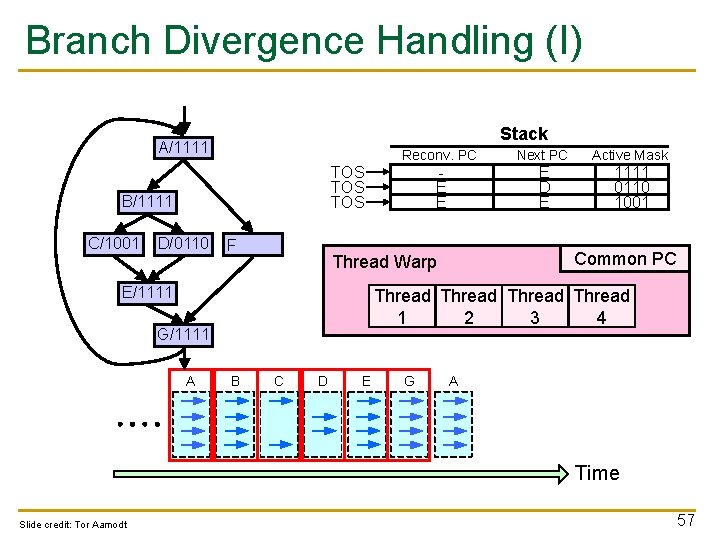

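Branch Divergence Handling (I)

(Figure: a control flow graph annotated with active masks A/1111, B/1111, C/1001, D/0110, E/1111, G/1111 for a warp of threads 1 to 4 with a common PC; a per-warp stack with columns Reconv. PC, Next PC, and Active Mask, whose top-of-stack (TOS) entry selects what executes next; and the resulting execution order over time: A, B, C, D, E, G, A.)

Slide credit: Tor Aamodt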

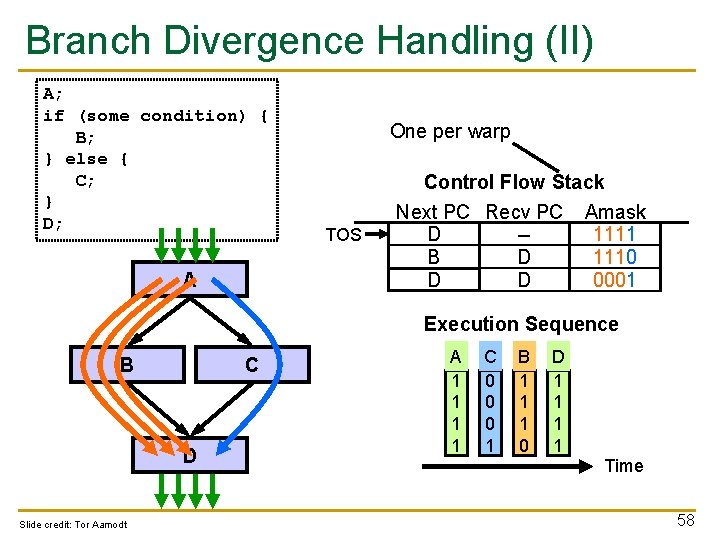

Branch Divergence Handling (II)

    A;
    if (some condition) {
        B;
    } else {
        C;
    }
    D;

Control flow stack (one per warp; the last row is the top of stack, TOS):

    Next PC   Recv PC   Amask
    D         --        1111
    B         D         1110
    C         D         0001

Execution sequence over time (active mask in parentheses): A (1111), C (0001), B (1110), D (1111)

Slide credit: Tor Aamodt
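
The stack mechanism for this single if/else can be emulated in a few lines of C. This is a deliberately reduced sketch, not a general SIMT stack: it handles only the A / if-B-else-C / D shape, assumes each path is one basic block that ends at the reconvergence point, and uses made-up names (`StackEntry`, `run_if_else`). Masks are 4-bit values, so 1110 is written 0xE.

```c
#include <assert.h>

typedef struct {
    char     next_pc;    /* basic block to execute next         */
    char     reconv_pc;  /* reconvergence point, '-' if none    */
    unsigned mask;       /* active mask for a 4-thread warp     */
} StackEntry;

/* Emulates "A; if (cond) B; else C; D;" for one 4-thread warp.
 * taken_mask has bit i set if thread i takes the 'if' path.
 * Records executed blocks and their active masks; returns the count
 * (at most 4, so the trace arrays need room for 4 entries). */
int run_if_else(unsigned taken_mask, char *trace_pc, unsigned *trace_mask)
{
    const unsigned full = 0xF;
    StackEntry stack[3];
    int tos = -1, n = 0;

    assert((taken_mask & ~full) == 0);

    /* A runs with every thread active, then the warp hits the branch. */
    trace_pc[n] = 'A';
    trace_mask[n++] = full;

    /* On divergence: the bottom entry resumes at D with the full mask;
     * one entry is pushed per path that has at least one active thread. */
    stack[++tos] = (StackEntry){ 'D', '-', full };
    if (taken_mask != 0)
        stack[++tos] = (StackEntry){ 'B', 'D', taken_mask };
    if ((full & ~taken_mask) != 0)
        stack[++tos] = (StackEntry){ 'C', 'D', full & ~taken_mask };

    /* Execute the top of stack; pop when the path reaches its
     * reconvergence PC (here: after one basic block). */
    while (tos >= 0) {
        trace_pc[n] = stack[tos].next_pc;
        trace_mask[n++] = stack[tos].mask;
        tos--;
    }
    return n;
}
```

With taken_mask = 0xE the trace is A/1111, C/0001, B/1110, D/1111, the same order as the execution sequence listed above. If all threads agree, only one path entry is pushed and the other block never runs.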

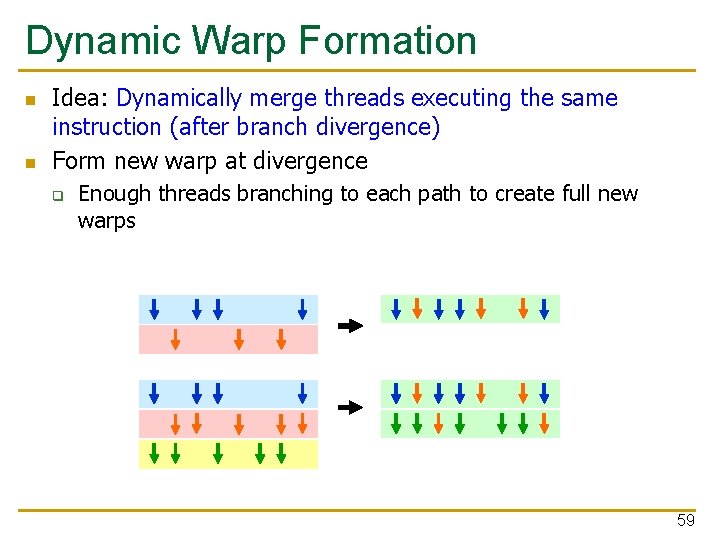

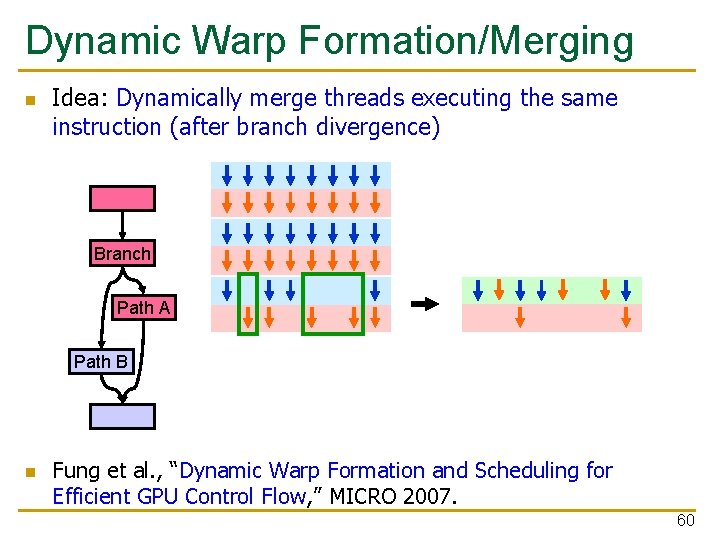

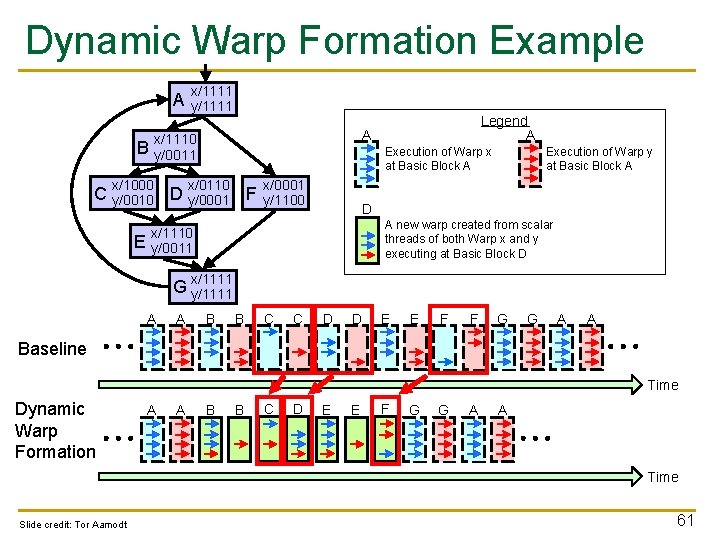

Dynamic Warp Formation

- Idea: Dynamically merge threads executing the same instruction (after branch divergence)
- Form new warp at divergence
  - Enough threads branching to each path to create full new warps

Dynamic Warp Formation/Merging

- Idea: Dynamically merge threads executing the same instruction (after branch divergence)
- Fung et al., "Dynamic Warp Formation and Scheduling for Efficient GPU Control Flow," MICRO 2007.

(Figure: a branch splitting threads between Path A and Path B.)

Dynamic Warp Formation Example

Active masks of warps x and y at each basic block:

    A   x/1111   y/1111
    B   x/1110   y/0011
    C   x/1000   y/0010
    D   x/0110   y/0001
    E   x/1110   y/0011
    F   x/0001   y/1100
    G   x/1111   y/1111

Execution over time:

    Baseline:                A A B B C C D D E E F F G G A A
    Dynamic Warp Formation:  A A B B C D E E F G G A A

Legend: each entry is the execution of one warp at that basic block; with dynamic warp formation, the single D is a new warp created from scalar threads of both warp x and warp y executing at basic block D.

Slide credit: Tor Aamodt

What About Memory Divergence?

- Modern GPUs have caches
- Ideally: Want all threads in the warp to hit (without conflicting with each other)
- Problem: One thread in a warp can stall the entire warp if it misses in the cache.
- Need techniques to
  - Tolerate memory divergence
  - Integrate solutions to branch and memory divergence

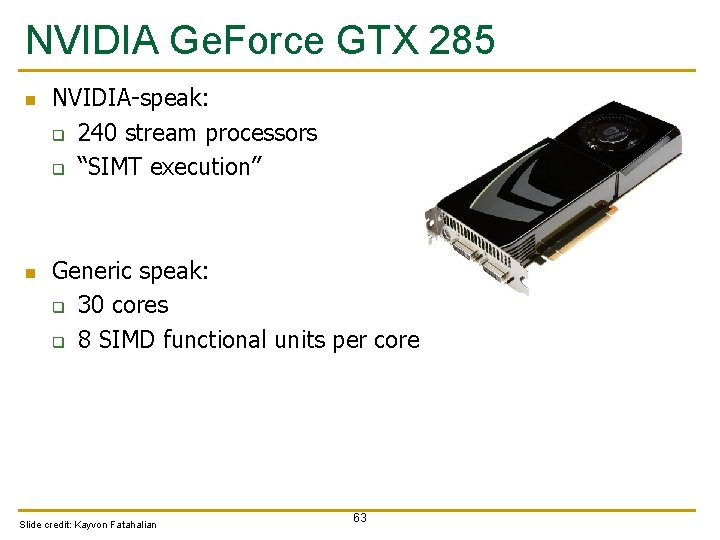

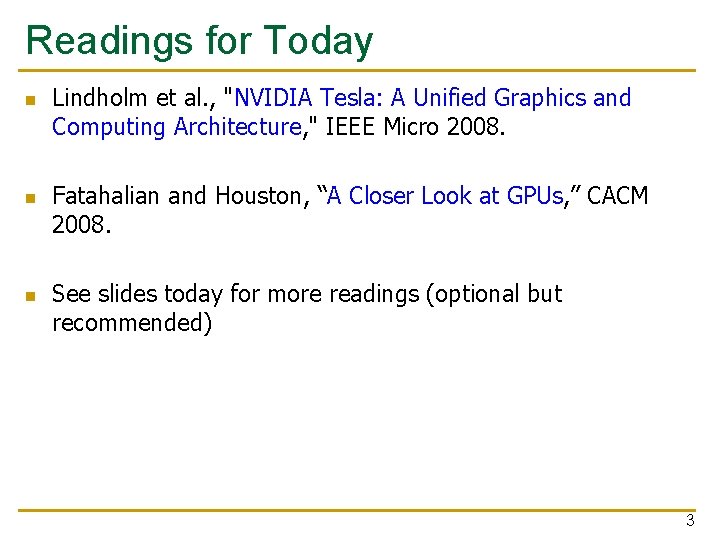

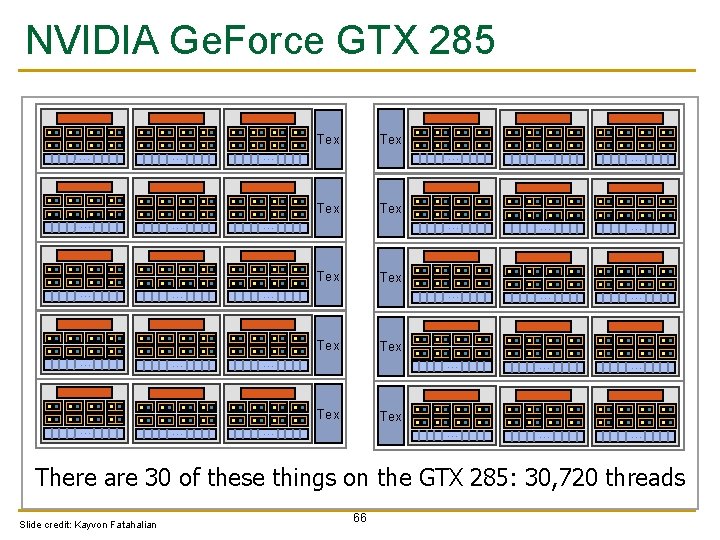

NVIDIA GeForce GTX 285

- NVIDIA-speak:
  - 240 stream processors
  - "SIMT execution"
- Generic speak:
  - 30 cores
  - 8 SIMD functional units per core

Slide credit: Kayvon Fatahalian

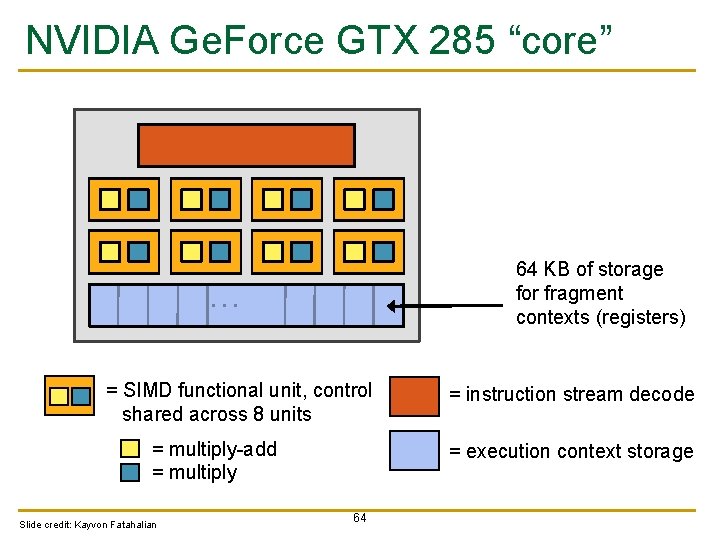

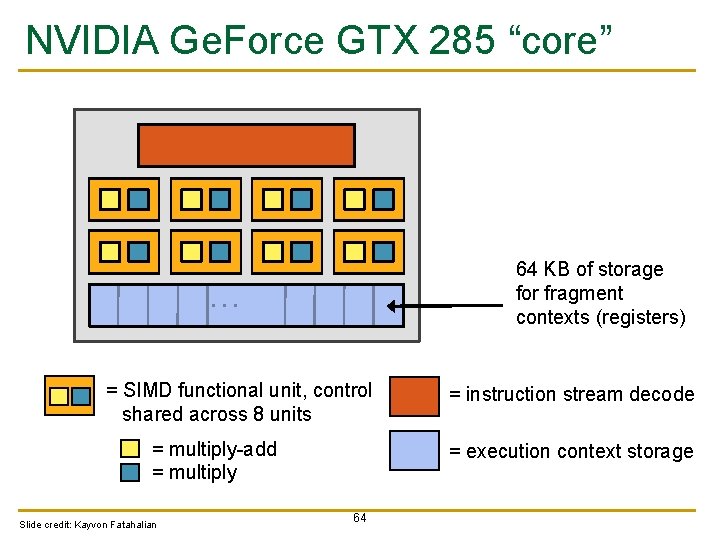

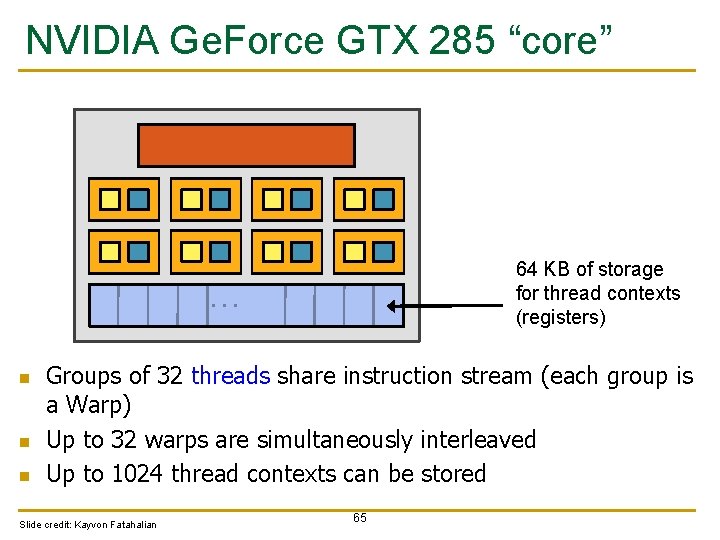

NVIDIA GeForce GTX 285 "core"

64 KB of storage for fragment contexts (registers)

(Figure legend: SIMD functional unit, control shared across 8 units; multiply-add; multiply; instruction stream decode; execution context storage.)

Slide credit: Kayvon Fatahalian

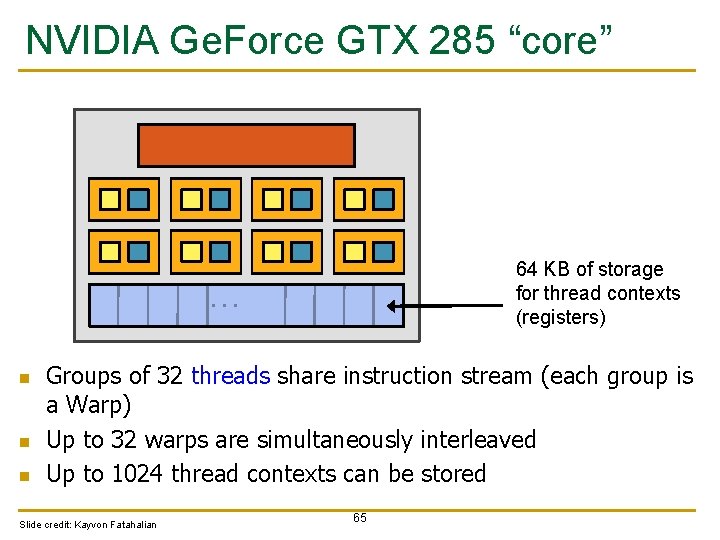

NVIDIA GeForce GTX 285 "core"

64 KB of storage for thread contexts (registers)

- Groups of 32 threads share instruction stream (each group is a Warp)
- Up to 32 warps are simultaneously interleaved
- Up to 1024 thread contexts can be stored

Slide credit: Kayvon Fatahalian

NVIDIA GeForce GTX 285

(Figure: the full chip, a grid of cores grouped around shared texture (Tex) units.)

There are 30 of these things on the GTX 285: 30,720 threads

Slide credit: Kayvon Fatahalian
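
The headline numbers on the last few slides follow from four parameters. The sketch below just multiplies them out; the `GpuConfig` struct and function names are hypothetical, and the values plugged in are the ones quoted in the slides (30 cores, 8 SIMD functional units per core, 32 threads per warp, up to 32 warps per core).

```c
#include <assert.h>

typedef struct {
    int cores;                /* "generic speak" cores             */
    int simd_units_per_core;  /* SIMD functional units per core    */
    int warp_size;            /* threads sharing one instruction   */
    int warps_per_core;       /* warps interleaved on one core     */
} GpuConfig;

/* "Stream processors" in NVIDIA-speak. */
int stream_processors(GpuConfig g)
{
    assert(g.cores > 0 && g.simd_units_per_core > 0);
    return g.cores * g.simd_units_per_core;
}

/* Thread contexts that can be resident on one core. */
int threads_per_core(GpuConfig g)
{
    assert(g.warp_size > 0 && g.warps_per_core > 0);
    return g.warp_size * g.warps_per_core;
}

/* Thread contexts resident on the whole chip. */
int total_threads(GpuConfig g)
{
    return g.cores * threads_per_core(g);
}
```

For the GTX 285 values this gives 240 stream processors, 1024 thread contexts per core, and 30,720 threads on the chip.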