Computer Architecture Project Dynamic Branch Prediction using perceptrons

Arjun Ratnam Balachandran Girish Vijayakumar
Supervised by: Tao Li
Computer Architecture Project, Fall 2006

Motivation

- Branch prediction has always been a "hot" topic.
  - About 20% of all instructions are branches.
  - Branch prediction is used to speculatively fetch and execute instructions along the predicted path.
- Correct prediction makes execution faster.
  - It saves the processor time and effort.
  - High prediction accuracy leads to faster program execution and better power management.
  - Misprediction has a high cost.
- Recent efforts focus on removing aliasing alone.
  - Little attention has gone to improving the accuracy of the prediction mechanism itself in two-level adaptive predictors.

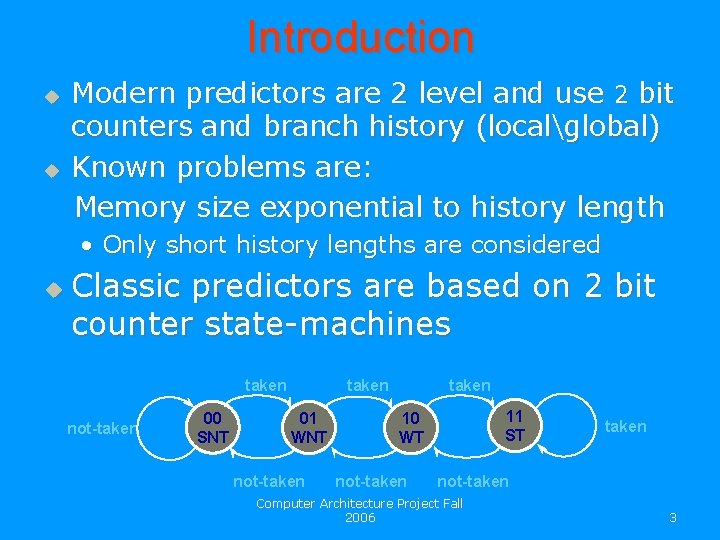

Introduction

- Modern predictors are two-level and use 2-bit counters and branch history (local/global).
- Known problems:
  - Memory size is exponential in the history length.
  - Only short history lengths are considered.
- Classic predictors are based on 2-bit counter state machines with four states: 00 SNT (strongly not taken), 01 WNT (weakly not taken), 10 WT (weakly taken), and 11 ST (strongly taken). A taken branch moves the counter one state toward ST; a not-taken branch moves it one state toward SNT.
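
The 2-bit counter described above can be sketched in C as follows. This is a minimal illustration, not code from SimpleScalar; the function names `counter_predict` and `counter_update` are hypothetical, and the state values follow the bit encodings listed on the slide.

```c
#include <assert.h>

/* Counter states, numbered by the bit encodings on the slide. */
enum { SNT = 0, WNT = 1, WT = 2, ST = 3 };

/* Predict taken (1) in the two "taken" states, not taken (0) otherwise. */
static int counter_predict(int c)
{
    assert(c >= SNT && c <= ST);
    return c >= WT;
}

/* Move one state toward the actual outcome, saturating at SNT and ST. */
static int counter_update(int c, int taken)
{
    assert(c >= SNT && c <= ST);
    if (taken)
        return c < ST ? c + 1 : ST;
    return c > SNT ? c - 1 : SNT;
}
```

Because the counter must be wrong twice in a row to flip a strong prediction, a single atypical outcome (such as a loop exit) does not change the predicted direction.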

Project Objective

- Implement a mechanism for branch prediction.
- Explore the practicability and applicability of such a mechanism and its success rates.
- Use a known neural network technology: the perceptron.
- Compare and analyze against "old" predictors using the standard SPEC 2000 benchmarks.

Project Requirements

- Develop for the SimpleScalar platform to simulate out-of-order execution (OOOE) processors.
- Run the developed predictor on accepted benchmarks.
- Implement in the C language.
- No hardware-equivalent components are needed; the implementation is software only.

Why choose perceptrons?

- Fastest learning capability among neural networks.
- Easiest to implement and tune.
- Easiest to understand.
- Low cost.

Other neural methods that can be applied to branch prediction:

- ADALINE (requires a lot of space)
- Hebb (inaccurate)
- Learning vector quantization (slow)

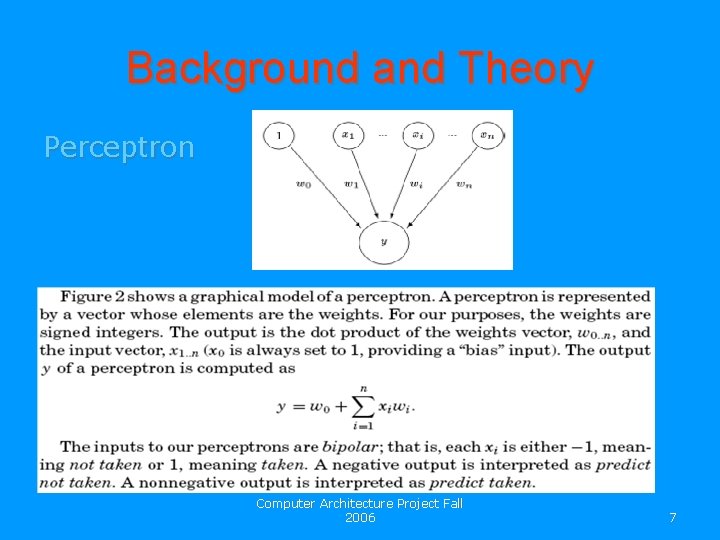

Background and Theory

Perceptron

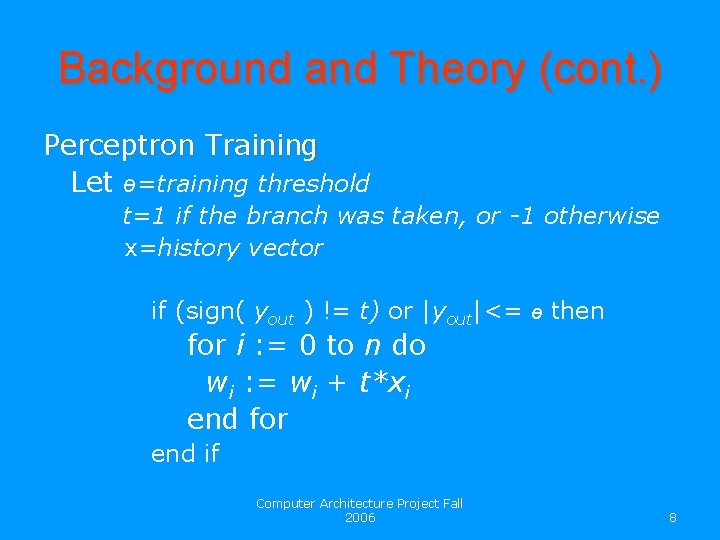

Background and Theory (cont.)

Perceptron Training

Let θ = training threshold, t = 1 if the branch was taken or -1 otherwise, and x = history vector.

    if sign(y_out) != t or |y_out| <= θ then
        for i := 0 to n do
            w_i := w_i + t * x_i
        end for
    end if
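
The training rule above translates almost directly into C. The sketch below is illustrative only: `HIST_LEN` and `perceptron_train` are hypothetical names, and it assumes that `x[0]` is fixed at 1 (the bias input) and that an output of exactly 0 counts as a "taken" prediction.

```c
#include <assert.h>
#include <stdlib.h>

#define HIST_LEN 8  /* hypothetical history length n */

/*
 * w[0] is the bias weight and x[0] is always 1.
 * x[1..n] are +1 (taken) or -1 (not taken) history entries.
 * y_out is the perceptron output computed at prediction time;
 * t is +1 if the branch was actually taken, -1 otherwise.
 */
static void perceptron_train(int w[HIST_LEN + 1], const int x[HIST_LEN + 1],
                             int y_out, int t, int theta)
{
    assert(t == 1 || t == -1);
    int predicted = (y_out >= 0) ? 1 : -1;

    /* Train on a misprediction, or when the output is not yet confident. */
    if (predicted != t || abs(y_out) <= theta) {
        for (int i = 0; i <= HIST_LEN; i++)
            w[i] += t * x[i];
    }
}
```

Each weight moves toward agreement: it is incremented when the history bit matched the outcome and decremented when it did not. Once the output magnitude exceeds the threshold and the prediction is correct, the weights stop changing.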

Development Stages

1. Studying the background
2. Learning the SimpleScalar platform
3. Evaluating the present prediction mechanism in SimpleScalar using the standard benchmarks
4. Understanding how branch prediction is handled in the SimpleScalar platform
5. Coding the perceptron predictor
6. Benchmarking (smart environment) and comparison with the old prediction mechanism

Principles

- Branch prediction needs a learning methodology; neural networks provide one based on inputs and outputs (pattern recognition).
- As the history grows, the data structures of our predictor grow linearly.
- Perceptrons are used to learn correlations between particular branch outcomes in the global history and the behavior of the current branch. These correlations are represented by weights. The larger the magnitude of a weight, the stronger the correlation (the more that particular branch in the history contributes to the prediction of the current branch).
- The input to the bias weight is always 1, so instead of learning a correlation with a previous branch outcome, the bias weight learns the bias of the branch, independent of the history.
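
For reference, the perceptron output that these weights feed into is conventionally written as a bias term plus a weighted sum over the history. The symbols follow the training rule in these slides ($w_i$, $x_i$, $y_{out}$); the bipolar encoding $x_i \in \{-1, 1\}$ (1 for taken, -1 for not taken) is an assumption, chosen to match the encoding of $t$.

$$
y_{out} = w_0 + \sum_{i=1}^{n} x_i w_i
$$

Each perceptron therefore stores $n + 1$ weights for a history of length $n$, which is why the predictor's storage grows linearly with the history length.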

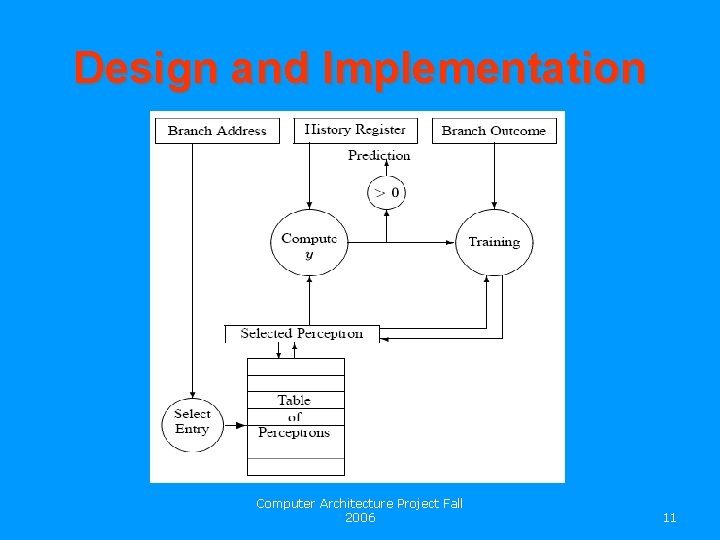

Design and Implementation

Algorithm

Fetch stage

1. The branch address is hashed to produce an index i ∈ 0..n-1 into the table of perceptrons.
2. The i-th perceptron is fetched from the table into a vector register P of weights.
3. The value of y is computed as the dot product of P and the global history register.
4. The branch is predicted not taken when y is negative, or taken otherwise.

Execution stage

1. Once the actual outcome of the branch becomes known, the training algorithm uses this outcome and the value of y to update the weights in P (training).
2. P is written back to the i-th entry in the table.
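
The two stages above can be sketched in C roughly as follows. This is not the project's SimpleScalar code: the names `NUM_PERCEPTRONS`, `HIST_LEN`, `THETA`, `index_of`, `history_input` and `sat_add` are hypothetical, the hash (`pc >> 2` modulo the table size) is a placeholder, and 8-bit saturating weights are an assumption.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

#define NUM_PERCEPTRONS 64  /* hypothetical table size n */
#define HIST_LEN        12  /* hypothetical global history length */
#define THETA           37  /* hypothetical training threshold */

static int8_t   table[NUM_PERCEPTRONS][HIST_LEN + 1]; /* [0] = bias weight */
static uint32_t ghr;  /* global history; bit 0 is the most recent outcome */

/* Placeholder hash from branch address to table index. */
static unsigned index_of(uint32_t pc) { return (pc >> 2) % NUM_PERCEPTRONS; }

/* History bit i (1-based) as a bipolar input: +1 taken, -1 not taken. */
static int history_input(int i) { return ((ghr >> (i - 1)) & 1u) ? 1 : -1; }

/* Add d to an 8-bit weight, saturating instead of wrapping. */
static int8_t sat_add(int8_t w, int d)
{
    int v = w + d;
    if (v > 127)  v = 127;
    if (v < -128) v = -128;
    return (int8_t)v;
}

/* Fetch stage: compute y; the branch is predicted taken when y >= 0. */
static int perceptron_output(uint32_t pc)
{
    const int8_t *w = table[index_of(pc)];
    int y = w[0];  /* the bias input is always 1 */
    for (int i = 1; i <= HIST_LEN; i++)
        y += history_input(i) * w[i];
    return y;
}

/* Execution stage: train in place, then shift the outcome into the history. */
static void perceptron_update(uint32_t pc, int y, int taken)
{
    assert(taken == 0 || taken == 1);
    int8_t *w = table[index_of(pc)];
    int t = taken ? 1 : -1;

    if ((y >= 0) != taken || abs(y) <= THETA) {
        w[0] = sat_add(w[0], t);
        for (int i = 1; i <= HIST_LEN; i++)
            w[i] = sat_add(w[i], t * history_input(i));
    }
    ghr = (ghr << 1) | (uint32_t)taken;
}
```

A simulator would call `perceptron_output` when the branch is fetched, keep `y` with the in-flight branch, and pass it to `perceptron_update` once the branch resolves. Training the table entry in place stands in for the "write P back" step.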

Hardware budget

Given a fixed hardware budget, three critical parameters must be tuned to achieve the best performance:

- History length: a longer history means fewer perceptrons fit in the table.
- Threshold: used to decide whether the predictor needs more training; θ = 1.93h + 14, where h is the history length.
- Representation of weights: weights are signed integers.
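
The trade-off between these parameters can be made concrete with a little arithmetic. The sketch below is an illustration under stated assumptions: the function names are hypothetical, the threshold is rounded down to an integer, and each perceptron is assumed to hold h + 1 weights (the bias plus one per history bit) of equal width.

```c
#include <assert.h>

/* Training threshold from the slide, theta = 1.93h + 14, rounded down. */
static int training_threshold(int h)
{
    assert(h >= 0);
    return (int)(1.93 * h + 14.0);
}

/* How many perceptrons of (h + 1) weights fit in a budget given in bits. */
static long perceptrons_in_budget(long budget_bits, int h, int weight_bits)
{
    assert(h >= 0 && weight_bits > 0);
    return budget_bits / ((long)(h + 1) * weight_bits);
}
```

For example, with a 4 KB budget (32768 bits) and 8-bit weights, a history length of 12 leaves room for 315 perceptrons, while a history length of 24 leaves room for only 163. A longer history gives each perceptron more context but increases aliasing between branches that share a table entry.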

Progress and plans

Tasks completed

- Study of the background of branch prediction mechanisms and neural networks.
- Learning the SimpleScalar platform.
- Understanding how branch prediction works in SimpleScalar.
- Coding the perceptron-based branch prediction mechanism (not fully completed).

Tasks ahead

- Implementing the perceptron-based branch predictor.
- Evaluating the performance of this mechanism and comparing it with the performance of the existing gshare mechanism using the SPEC 2000 benchmarks.
- Analyzing methods to improve the performance of the perceptron method.

Summary

- We are implementing a different branch prediction mechanism and comparing it with the existing ones.
- Hardware implementation of the mechanism is hard, but possible.
- A longer history in the perceptron helps produce better predictions.
- Once the implementation and comparison are finished, we will look at steps through which the perceptron prediction mechanism can be improved.
