COMPUTER ARCHITECTURE CS 6354 Branch Prediction I Samira Khan University of Virginia Sep 26, 2018 The content and concept of this course are adapted from CMU ECE 740

AGENDA • Logistics • More on multi-core • Branch Prediction

LOGISTICS • Project proposal due today • Project proposal presentation • Oct 1 and Oct 3 • Each group gets 10 minutes for presentation, 2 minutes for Q&A session

USES OF ASYMMETRY • So far: • Improvement in serial performance (sequential bottleneck) • What else can we do with asymmetry? • Energy reduction? • Energy/performance tradeoff? • Improvement in parallel portion?

USES OF CMPs • Can you think about using these ideas to improve single-threaded performance? • Implicit parallelization: thread-level speculation • Slipstream processors • Leader-follower architectures • Helper threading • Prefetching • Branch prediction • Exception handling • Redundant execution to tolerate soft (and hard?) errors

SLIPSTREAM PROCESSORS • Goal: use multiple hardware contexts to speed up single-thread execution (implicitly parallelize the program) • Idea: Divide program execution into two threads: • Advanced thread executes a reduced instruction stream, speculatively • Redundant thread uses results, prefetches, predictions generated by advanced thread and ensures correctness • Benefit: Execution time of the overall program reduces • Core idea is similar to many thread-level speculation approaches, except with a reduced instruction stream • Sundaramoorthy et al., “Slipstream Processors: Improving both Performance and Fault Tolerance,” ASPLOS 2000.

SLIPSTREAMING • “At speeds in excess of 190 m.p.h., high air pressure forms at the front of a race car and a partial vacuum forms behind it. This creates drag and limits the car’s top speed. • A second car can position itself close behind the first (a process called slipstreaming or drafting). This fills the vacuum behind the lead car, reducing its drag. And the trailing car now has less wind resistance in front (and by some accounts, the vacuum behind the lead car actually helps pull the trailing car). • As a result, both cars speed up by several m.p.h.: the two combined go faster than either can alone.”

SLIPSTREAM PROCESSORS • Detect and remove ineffectual instructions; run a shortened “effectual” version of the program (Advanced or A-stream) in one thread context • Ensure correctness by running a complete version of the program (Redundant or R-stream) in another thread context • Shortened A-stream runs fast; R-stream consumes near-perfect control and data flow outcomes from A-stream and finishes close behind • Two streams together lead to faster execution (by helping each other) than a single one alone

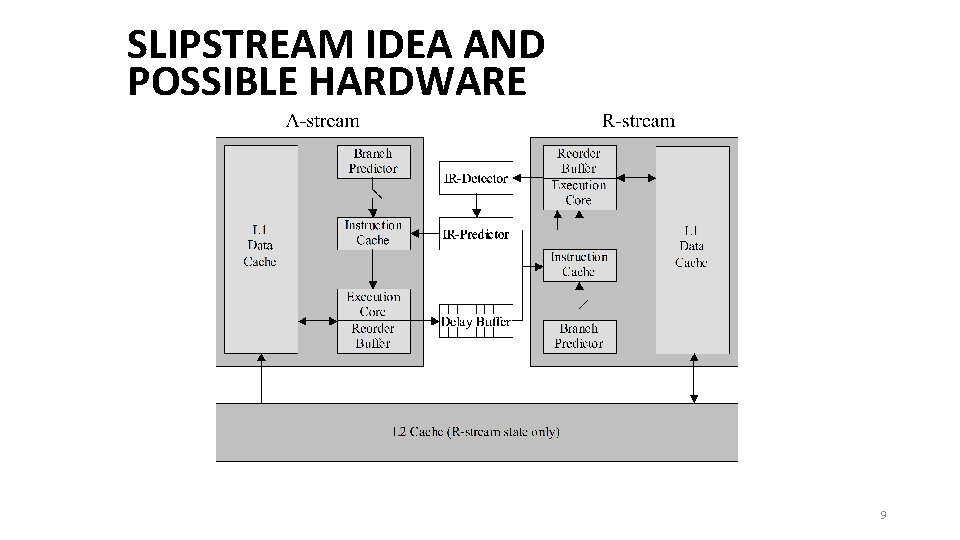

SLIPSTREAM IDEA AND POSSIBLE HARDWARE

INSTRUCTION REMOVAL IN SLIPSTREAM • IR detector • Monitors retired R-stream instructions • Detects ineffectual instructions and conveys them to the IR predictor • Ineffectual instruction examples: • dynamic instructions that repeatedly and predictably have no observable effect (e.g., unreferenced writes, non-modifying writes) • dynamic branches whose outcomes are consistently predicted correctly • IR predictor • Removes an instruction from A-stream after repeated indications from the IR detector • A-stream skips ineffectual instructions, executes everything else and inserts their results into the delay buffer • R-stream executes all instructions but uses results from the delay buffer as predictions
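
The "remove after repeated indications" behavior can be sketched as a per-instruction confidence counter. This is only an illustration under stated assumptions: the class name `IRPredictor`, the threshold of 3, and the reset-on-effectual policy are hypothetical and are not taken from the slipstream paper.

```python
from collections import defaultdict


class IRPredictor:
    """Toy model: remove an instruction from the A-stream only after
    `threshold` consecutive 'ineffectual' reports from the IR detector.
    The threshold value and the reset policy are illustrative assumptions."""

    def __init__(self, threshold=3):
        self.threshold = threshold
        self.confidence = defaultdict(int)  # pc -> consecutive ineffectual reports

    def report(self, pc, ineffectual):
        # Called by the (hypothetical) IR detector for each retired R-stream instruction.
        if ineffectual:
            self.confidence[pc] += 1
        else:
            self.confidence[pc] = 0  # any effectual instance resets confidence

    def should_remove(self, pc):
        # The A-stream skips the instruction once confidence reaches the threshold.
        return self.confidence[pc] >= self.threshold


predictor = IRPredictor(threshold=3)
for _ in range(3):
    predictor.report(0x40, ineffectual=True)
print(predictor.should_remove(0x40))  # True after three consecutive reports
predictor.report(0x40, ineffectual=False)
print(predictor.should_remove(0x40))  # False once the instruction has an effect again
```

A higher threshold removes fewer instructions but should also cause fewer IR mispredictions; the right trade-off is a design parameter this sketch does not try to settle.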

WHAT IF A-STREAM DEVIATES FROM CORRECT EXECUTION? • Why? • A-stream deviates due to incorrect removal or stale data access in L1 data cache • How to detect it? • Branch or value misprediction happens in R-stream (known as an IR misprediction) • How to recover? • Restore A-stream register state: copy values from R-stream registers using delay buffer or shared-memory exception handler • Restore A-stream memory state: invalidate A-stream L1 data cache (or speculatively written blocks by A-stream)

Slipstream Questions • How to construct the advanced thread • Original proposal: • Dynamically eliminate redundant instructions (silent stores, dynamically dead instructions) • Dynamically eliminate easy-to-predict branches • Other ways: • Dynamically ignore long-latency stalls • Static based on profiling • How to speed up the redundant thread • Original proposal: Reuse instruction results (control and data flow outcomes from the A-stream) • Other ways: Only use branch results and prefetched data as predictions

BRANCH PREDICTION

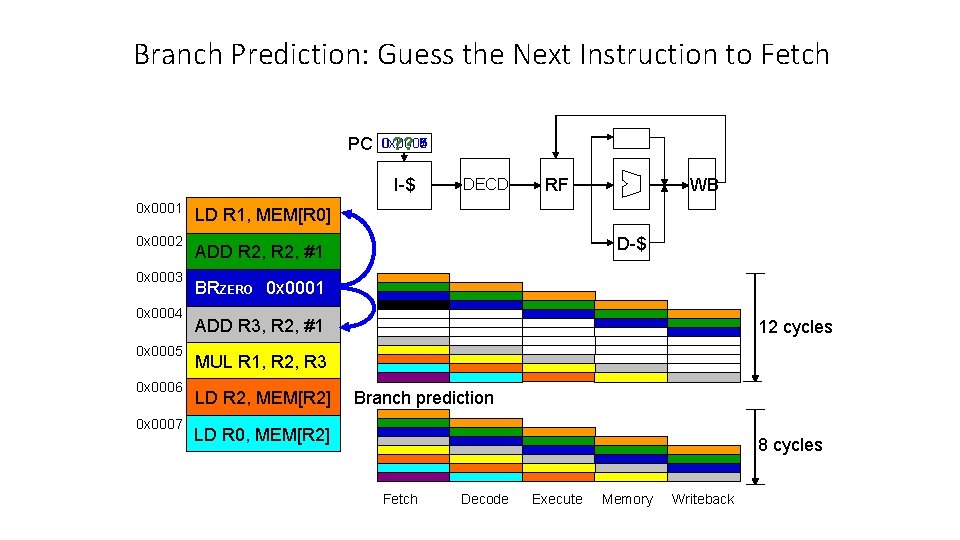

Branch Prediction: Guess the Next Instruction to Fetch • [Figure: five-stage pipeline (Fetch, Decode, Execute, Memory, Writeback) with PC, I-$, register file and D-$. The I-$ holds seven instructions at addresses 0x0001 to 0x0007: LD R1, MEM[R0]; ADD R2, #1; BRZERO 0x0001; ADD R3, R2, #1; MUL R1, R2, R3; LD R2, MEM[R2]; LD R0, MEM[R2]. The next PC after the branch is unknown (??) and is supplied by branch prediction. Figure labels: "12 cycles", "8 cycles".]

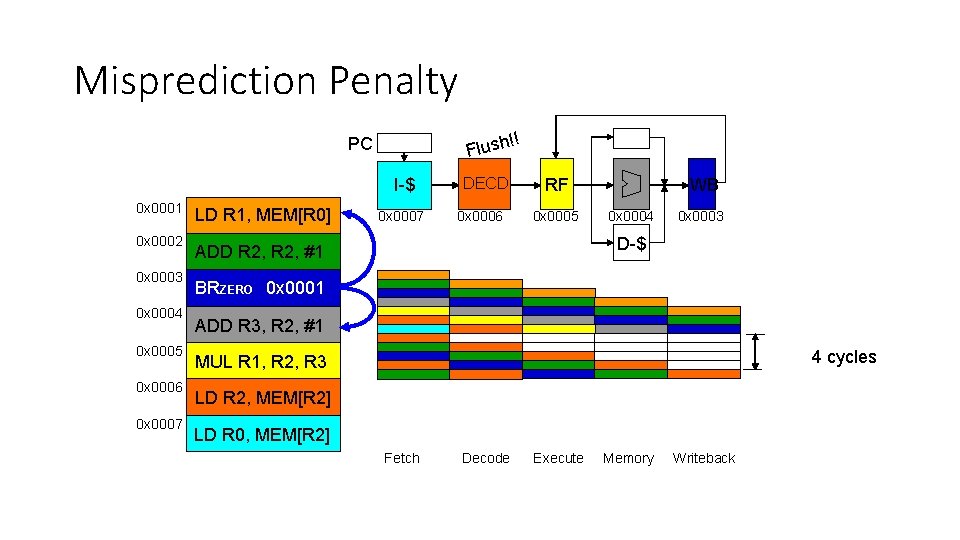

Misprediction Penalty • [Figure: the same five-stage pipeline and instruction sequence after a wrong guess. The instructions in flight (PCs 0x0003 to 0x0007) are flushed. Figure label: "4 cycles".]

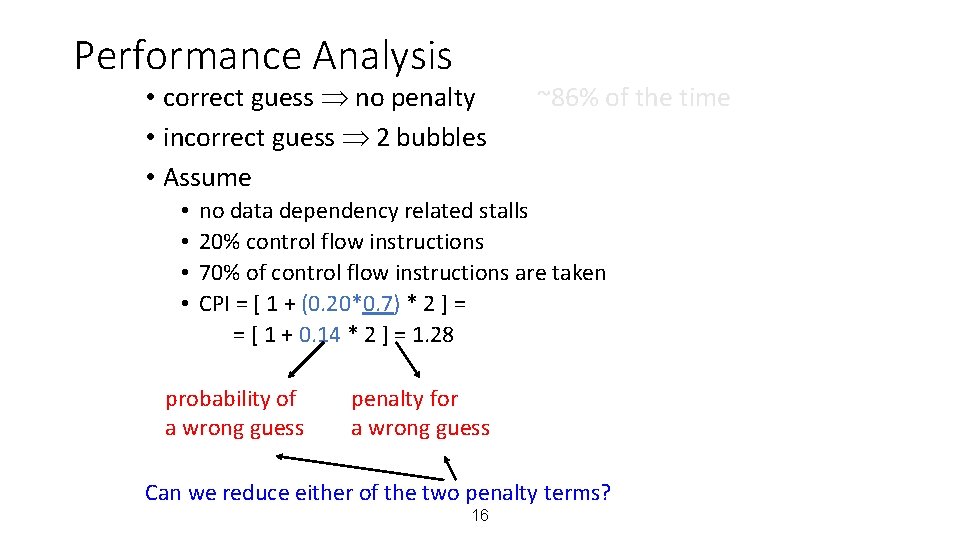

Performance Analysis • Correct guess → no penalty (~86% of the time) • Incorrect guess → 2 bubbles • Assume: • no data-dependency-related stalls • 20% control flow instructions • 70% of control flow instructions are taken • $\mathrm{CPI} = 1 + (0.20 \times 0.70) \times 2 = 1 + 0.14 \times 2 = 1.28$, where $0.14$ is the probability of a wrong guess and $2$ is the penalty for a wrong guess • Can we reduce either of the two penalty terms?
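
The CPI arithmetic on this slide can be checked with a few lines of Python. This is a minimal sketch: the function name `cpi` and its parameter names are chosen here for illustration, and the 10% misprediction rate in the second call is a made-up value used only to show how the formula responds.

```python
def cpi(branch_fraction, mispredict_rate, penalty_cycles, base_cpi=1.0):
    """CPI = base + (fraction of branches) * (misprediction rate) * penalty."""
    return base_cpi + branch_fraction * mispredict_rate * penalty_cycles


# Slide numbers: 20% branches, wrong guess on the 70% that are taken, 2 bubbles.
print(round(cpi(0.20, 0.70, 2), 2))  # 1.28
# Hypothetical predictor that is wrong on only 10% of branches.
print(round(cpi(0.20, 0.10, 2), 2))  # 1.04
```

Both factors in the penalty term appear as separate arguments, which makes it easy to see that either a lower misprediction rate or a shorter penalty reduces CPI.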

BRANCH PREDICTION • Idea: Predict the next fetch address (to be used in the next cycle) • Requires three things to be predicted at fetch stage: • Whether the fetched instruction is a branch • (Conditional) branch direction • Branch target address (if taken) • Observation: Target address remains the same for a conditional direct branch across dynamic instances • Idea: Store the target address from the previous instance and access it with the PC • Called Branch Target Buffer (BTB) or Branch Target Address Cache

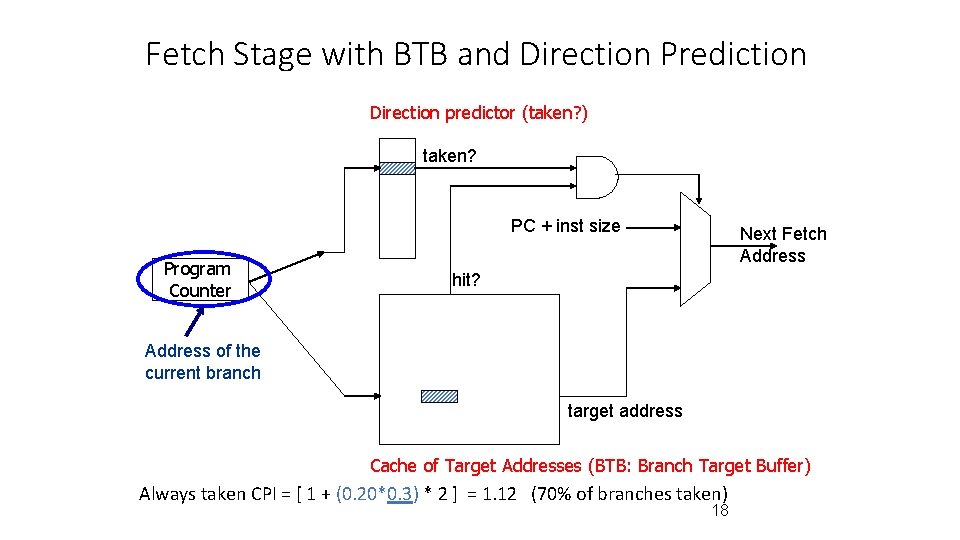

Fetch Stage with BTB and Direction Prediction • [Figure: the program counter (address of the current branch) indexes both a direction predictor (taken?) and the cache of target addresses (BTB: Branch Target Buffer). The BTB reports hit? and a target address; the next fetch address is chosen between the target address and PC + inst size.] • Always taken: $\mathrm{CPI} = 1 + (0.20 \times 0.30) \times 2 = 1.12$ (70% of branches taken)
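
The next-fetch-address selection in this figure can be sketched as follows. The sketch makes several assumptions that are not on the slide: the BTB is modelled as an unbounded Python dict rather than a finite tagged cache, the instruction size is fixed at 4 bytes, and the names `next_fetch_address`, `INST_SIZE`, and `always_taken` are hypothetical.

```python
INST_SIZE = 4  # assumed fixed instruction size in bytes


def next_fetch_address(pc, btb, predict_taken):
    """Use the BTB target on a hit that is predicted taken; otherwise fall
    through to PC + inst size.

    `btb` maps a branch PC to the target seen the last time it executed.
    `predict_taken` is any callable pc -> bool (the direction predictor)."""
    target = btb.get(pc)  # a BTB hit implies the instruction is a branch
    if target is not None and predict_taken(pc):
        return target
    return pc + INST_SIZE


btb = {0x100C: 0x1000}  # one known branch: 0x100C jumps back to 0x1000


def always_taken(pc):
    return True


print(hex(next_fetch_address(0x1004, btb, always_taken)))  # 0x1008 (BTB miss)
print(hex(next_fetch_address(0x100C, btb, always_taken)))  # 0x1000 (hit, predicted taken)
```

Swapping `always_taken` for a different callable changes only the direction decision; the BTB lookup still answers the "is it a branch" and "where does it go" questions.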

Three Things to Be Predicted • Requires three things to be predicted at fetch stage: 1. Whether the fetched instruction is a branch 2. (Conditional) branch direction 3. Branch target address (if taken) • Third (3.) can be accomplished using a BTB • Remember target address computed last time branch was executed • First (1.) can be accomplished using a BTB • If BTB provides a target address for the program counter, then it must be a branch • Or, we can store “branch metadata” bits in instruction cache/memory → partially decoded instruction stored in I-cache • Second (2.): How do we predict the direction?

HOW TO HANDLE CONTROL DEPENDENCES • Critical to keep the pipeline full with the correct sequence of dynamic instructions. • Potential solutions if the instruction is a control-flow instruction: • Stall the pipeline until we know the next fetch address • Guess the next fetch address (branch prediction) • Employ delayed branching (branch delay slot) • Eliminate control-flow instructions (predicated execution) • Fetch from both possible paths (if you know the addresses of both possible paths) (multipath execution)

DELAYED BRANCHING • Change the semantics of a branch instruction • Branch after N instructions • Branch after N cycles • Idea: Delay the execution of a branch. N instructions (delay slots) that come after the branch are always executed regardless of branch direction. • Problem: How do you find instructions to fill the delay slots? • Branch must be independent of delay slot instructions • Unconditional branch: Easier to find instructions to fill the delay slot • Conditional branch: Condition computation should not depend on instructions in delay slots → difficult to fill the delay slot

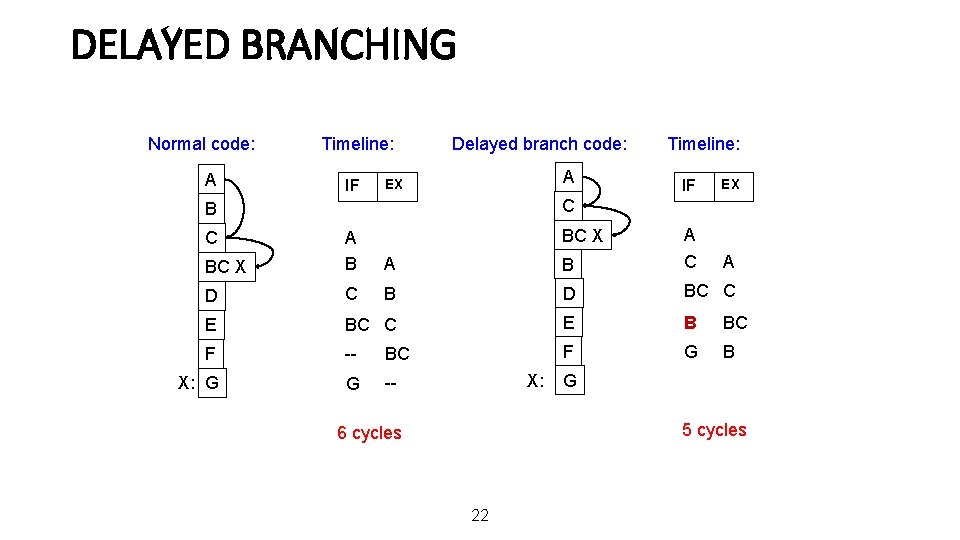

DELAYED BRANCHING • [Figure: IF/EX timelines for two versions of the same code. Normal code (A; B; C; BC X; ...; X: G) takes 6 cycles, with a bubble after the branch BC. Delayed branch code (A; C; BC X; B in the delay slot; ...; X: G) takes 5 cycles.]

DELAYED BRANCHING (III) • Advantages: + Keeps the pipeline full with useful instructions in a simple way, assuming (1) the number of delay slots equals the number of instructions needed to keep the pipeline full before the branch resolves, and (2) all delay slots can be filled with useful instructions • Disadvantages: -- Not easy to fill the delay slots (even with a 2-stage pipeline): (1) the number of delay slots increases with pipeline depth and superscalar execution width; (2) the number of delay slots should be variable with variable-latency operations. Why? -- Ties ISA semantics to hardware implementation: SPARC, MIPS have 1 delay slot. What if the pipeline implementation changes with the next design?

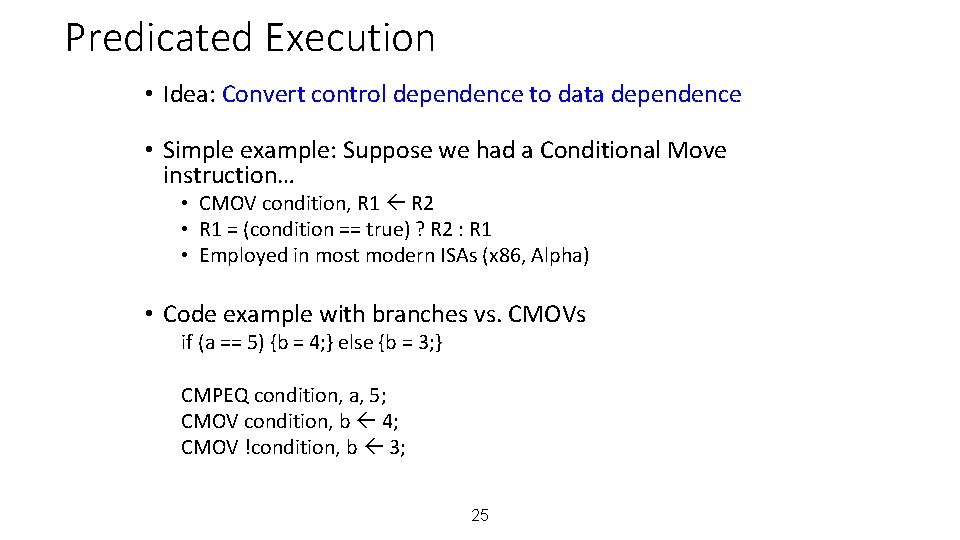

Predicated Execution • Idea: Convert control dependence to data dependence • Simple example: Suppose we had a Conditional Move instruction… • CMOV condition, R1 ← R2 • R1 = (condition == true) ? R2 : R1 • Employed in most modern ISAs (x86, Alpha) • Code example with branches vs. CMOVs • With branches: if (a == 5) {b = 4;} else {b = 3;} • With CMOVs: CMPEQ condition, a, 5; CMOV condition, b ← 4; CMOV !condition, b ← 3;
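
The equivalence between the branching code and the CMOV sequence can be sketched in Python. This models only the semantics: Python's own conditional expression is not branch-free, and the function names `cmov`, `with_branch`, and `with_cmov` are hypothetical.

```python
def cmov(condition, dst, src):
    """Conditional move semantics: return the new value of dst."""
    return src if condition else dst


def with_branch(a):
    # Control dependence: which assignment runs depends on the branch.
    if a == 5:
        b = 4
    else:
        b = 3
    return b


def with_cmov(a, b=0):
    # Data dependence: both moves are always issued; the condition selects the result.
    condition = (a == 5)            # CMPEQ condition, a, 5
    b = cmov(condition, b, 4)       # CMOV condition, b <- 4
    b = cmov(not condition, b, 3)   # CMOV !condition, b <- 3
    return b


print(all(with_branch(a) == with_cmov(a) for a in range(10)))  # True
```

Both CMOVs execute on every call, which is the "useless work" cost of predication: one of the two moves never changes `b`.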

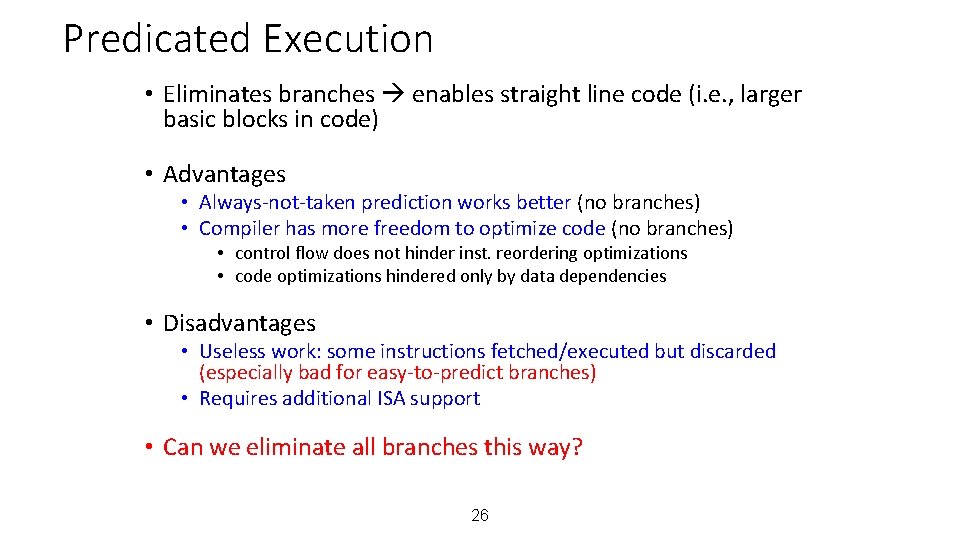

Predicated Execution • Eliminates branches → enables straight-line code (i.e., larger basic blocks in code) • Advantages • Always-not-taken prediction works better (no branches) • Compiler has more freedom to optimize code (no branches) • control flow does not hinder inst. reordering optimizations • code optimizations hindered only by data dependencies • Disadvantages • Useless work: some instructions fetched/executed but discarded (especially bad for easy-to-predict branches) • Requires additional ISA support • Can we eliminate all branches this way?

SIMPLE BRANCH DIRECTION PREDICTION SCHEMES • Compile time (static) • Always not taken • Always taken • BTFN (Backward taken, forward not taken) • Profile based (likely direction) • Run time (dynamic) • Last time prediction (single-bit)

MORE SOPHISTICATED DIRECTION PREDICTION • Compile time (static) • Always not taken • Always taken • BTFN (Backward taken, forward not taken) • Profile based (likely direction) • Program analysis based (likely direction) • Run time (dynamic) • Last time prediction (single-bit) • Two-bit counter based prediction • Two-level prediction (global vs. local) • Hybrid

STATIC BRANCH PREDICTION (I) • Always not-taken • Simple to implement: no need for BTB, no direction prediction • Low accuracy: ~30-40% • Compiler can lay out code such that the likely path is the “not-taken” path • Always taken • No direction prediction • Better accuracy: ~60-70% • Backward branches (i.e., loop branches) are usually taken • Backward branch: target address lower than branch PC • Backward taken, forward not taken (BTFN) • Predict backward (loop) branches as taken, others not-taken
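
The BTFN rule reduces to a single address comparison, as the sketch below shows. The trace is invented for illustration (a loop branch taken 9 times out of 10 and a forward branch taken 1 time out of 4), and the function names `predict_btfn` and `accuracy` are hypothetical.

```python
def predict_btfn(branch_pc, target_pc):
    """Backward taken, forward not taken: a branch whose target address is
    lower than its own PC is treated as a loop branch and predicted taken."""
    return target_pc < branch_pc


def accuracy(trace):
    """trace: iterable of (branch_pc, target_pc, actually_taken) tuples."""
    trace = list(trace)
    correct = sum(predict_btfn(pc, tgt) == taken for pc, tgt, taken in trace)
    return correct / len(trace)


# Made-up trace: loop branch at 0x20 (target 0x10) taken 9 times, then falls
# through; forward branch at 0x30 (target 0x40) not taken 3 times, taken once.
loop = [(0x20, 0x10, True)] * 9 + [(0x20, 0x10, False)]
forward = [(0x30, 0x40, False)] * 3 + [(0x30, 0x40, True)]
print(accuracy(loop + forward))  # 12 correct predictions out of 14
```

The only mispredictions on this trace are the loop exit and the one taken forward branch, which is the behavior the heuristic is built around.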

STATIC BRANCH PREDICTION (II) • Profile-based • Idea: Compiler determines likely direction for each branch using a profile run and encodes that direction as a hint bit in the branch instruction format. + Per-branch prediction (more accurate than the schemes on the previous slide); accurate if the profile is representative! -- Requires hint bits in the branch instruction format -- Accuracy depends on dynamic branch behavior: TTTTTNNNNN → 50% accuracy; TNTNTNTNTN → 50% accuracy -- Accuracy depends on the representativeness of the profile input set
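
The two 50% figures can be reproduced by scoring a single fixed hint against each outcome pattern. This is a minimal sketch: the helper names are hypothetical, the majority-vote rule stands in for whatever a real profiling compiler does, and the third pattern is an invented example of a branch that a static hint handles well.

```python
def static_hint_accuracy(outcomes, hint):
    """Fraction of dynamic instances where a fixed hint ('T' or 'N') is right."""
    return sum(o == hint for o in outcomes) / len(outcomes)


def best_static_hint(outcomes):
    """The hint a profile run would pick here: the majority direction."""
    return "T" if outcomes.count("T") >= outcomes.count("N") else "N"


for pattern in ("TTTTTNNNNN", "TNTNTNTNTN", "TTTTTTTTTN"):
    hint = best_static_hint(pattern)
    print(pattern, hint, static_hint_accuracy(pattern, hint))
```

The first two patterns score 0.5 whichever hint is chosen, because one bit per static branch cannot follow a direction that changes over time.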

STATIC BRANCH PREDICTION (III) • Program-based (or, program analysis based) • Idea: Use heuristics based on program analysis to determine the statically predicted direction • Opcode heuristic: Predict BLEZ as NT (negative integers used as error values in many programs) • Loop heuristic: Predict a branch guarding a loop execution as taken (i.e., execute the loop) • Pointer and FP comparisons: Predict not equal + Does not require profiling -- Heuristics might not be representative or good -- Requires compiler analysis and ISA support • Ball and Larus, “Branch prediction for free,” PLDI 1993. • 20% misprediction rate

STATIC BRANCH PREDICTION (IV) • Programmer-based • Idea: Programmer provides the statically predicted direction • Via pragmas in the programming language that qualify a branch as likely-taken versus likely-not-taken + Does not require profiling or program analysis + Programmer may know some branches and their program better than other analysis techniques -- Requires programming language, compiler, ISA support -- Burdens the programmer?

STATIC BRANCH PREDICTION • All previous techniques can be combined • Profile based • Program based • Programmer based • How would you do that? • What are common disadvantages of all three techniques? • Cannot adapt to dynamic changes in branch behavior • This can be mitigated by a dynamic compiler, but not at a fine granularity (and a dynamic compiler has its overheads…)
