Computer and Information Security Handbook, Chapter 43: Privacy-Enhancing Technologies

Computer and Information Security Handbook
Chapter 43: Privacy-Enhancing Technologies
Simone Fischer-Hübner, Stefan Berthold
Karlstad University, Sweden
Copyright © 2014, Elsevier Inc. All rights Reserved

The Concept of Privacy

- In most legal systems, the right to privacy applies to people only, not institutions
- Privacy can be in conflict with other human rights
- General scope of privacy laws
  - Define principles of collecting, processing, and storing personal data
- This chapter addresses informational privacy

Legal Privacy Principles

- Legitimacy of need to collect/store PII
  - Informed consent, legal obligation, or contractual agreement
- Purpose specification and purpose binding
  - Data cannot be processed in a way incompatible with the stated purpose
- Data minimization
  - Limit data collected to the minimum required

Legal Privacy Principles

- Transparency and rights of data subjects
  - Data subject must be informed of purpose and circumstances of data processing
- Security
  - Appropriate security mechanisms must be used to ensure personal data integrity, confidentiality, and availability

Classification of PETs

- Privacy-enhancing technologies (PETs)
  - Technologies that enforce legal privacy principles
- Three different classes of PETs
  - Those that minimize or avoid the collection and use of personal data
  - Those that enforce legal privacy requirements
  - Those that combine characteristics of the first two classes

Traditional Privacy Goals of PETs

- Anonymity of a subject
  - Subject is not identifiable within a set of subjects
- Unlinkability
  - Example: not being able to link the sender and recipient of a message
- Strongest privacy goal is unobservability
  - Combines undetectability and anonymity
- Pseudonymity
  - Use of pseudonyms as identifiers

Privacy Metrics

- Goal of privacy metrics
  - Quantify the effectiveness of schemes or technologies in meeting privacy goals
- Simple metric: the anonymity set
  - All subjects that may have caused an event observed by the adversary
- k-anonymity
  - Property or requirement for databases that must not leak sensitive information
- Other metrics
  - l-diversity, t-closeness
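As a rough illustration of the k-anonymity metric, the sketch below groups records by their quasi-identifier values and reports the size of the smallest group. A table is k-anonymous when every such group contains at least k records. The function name `k_anonymity`, the column names, and the sample records are hypothetical and serve only as an example.

```python
from collections import Counter

def k_anonymity(rows, quasi_identifiers):
    """Return the largest k for which the table is k-anonymous.

    rows: list of dicts, one per record.
    quasi_identifiers: column names an adversary could use for linking.
    """
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    # The smallest group of indistinguishable records determines k.
    return min(groups.values()) if groups else 0

# Hypothetical, already generalized records (illustrative data only).
records = [
    {"zip": "651**", "age": "20-29", "diagnosis": "flu"},
    {"zip": "651**", "age": "20-29", "diagnosis": "asthma"},
    {"zip": "652**", "age": "30-39", "diagnosis": "flu"},
    {"zip": "652**", "age": "30-39", "diagnosis": "diabetes"},
    {"zip": "652**", "age": "30-39", "diagnosis": "flu"},
]

k = k_anonymity(records, ["zip", "age"])
print(k)  # the smallest group here has 2 records
```

Each group of records sharing the same quasi-identifier values plays the role of an anonymity set: the larger the smallest group, the harder it is to single out one person.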

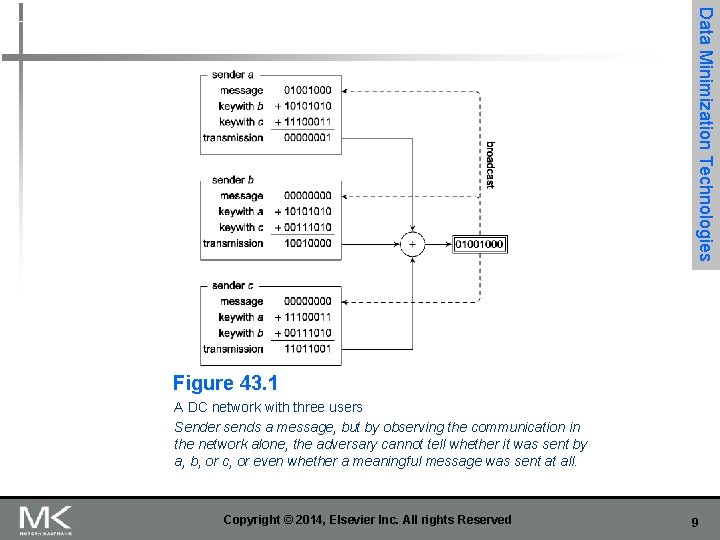

Data Minimization Technologies

- Anonymous communication
  - Users cannot be identified by their IP addresses
- Chaum’s DC network protocol
  - Anonymous communication protocol
  - Not easily used in practice

Data Minimization Technologies

Figure 43.1: A DC network with three users. A sender sends a message, but by observing the communication in the network alone, the adversary cannot tell whether it was sent by a, b, or c, or even whether a meaningful message was sent at all.
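One round of a DC network with users a, b, and c can be sketched as follows. Each pair of users shares a secret key, every user announces the XOR of the keys it holds, and the sender additionally XORs in the message. Because each key appears in exactly two announcements, the keys cancel when all announcements are combined. The function name `dc_round` and the 8-bit message width are assumptions made for this example.

```python
import secrets

def dc_round(message_bits, sender, users=("a", "b", "c"), nbits=8):
    """Simulate one DC-network round; sender=None means nobody sends."""
    # Every pair of users shares a fresh random key for this round.
    keys = {}
    for i, u in enumerate(users):
        for v in users[i + 1:]:
            keys[(u, v)] = secrets.randbits(nbits)

    announcements = {}
    for u in users:
        out = 0
        for pair, key in keys.items():
            if u in pair:
                out ^= key           # XOR of all keys this user shares
        if u == sender:
            out ^= message_bits      # only the sender adds the message
        announcements[u] = out

    # Anyone can combine the public announcements; the keys cancel out.
    combined = 0
    for out in announcements.values():
        combined ^= out
    return announcements, combined

announcements, recovered = dc_round(0b10110011, sender="b")
print(recovered == 0b10110011)
```

Each individual announcement is masked by random keys, so an observer who sees only the announcements learns the message but not which user contributed it.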

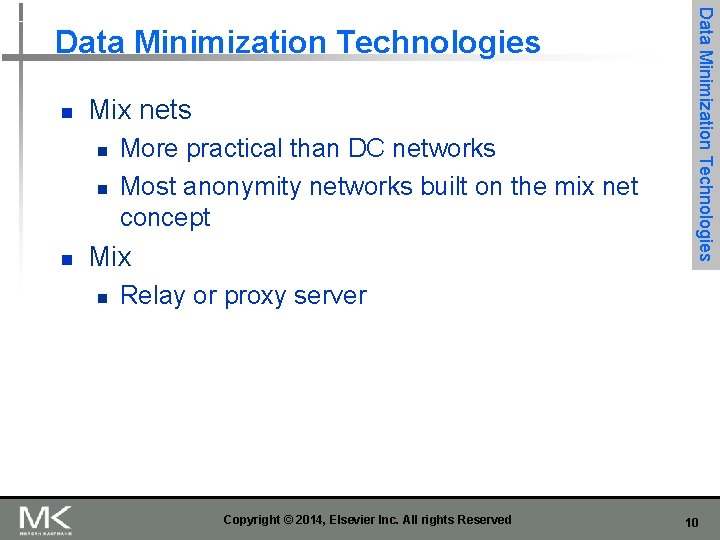

Data Minimization Technologies

- Mix nets
  - More practical than DC networks
  - Most anonymity networks are built on the mix net concept
- Mix
  - Relay or proxy server

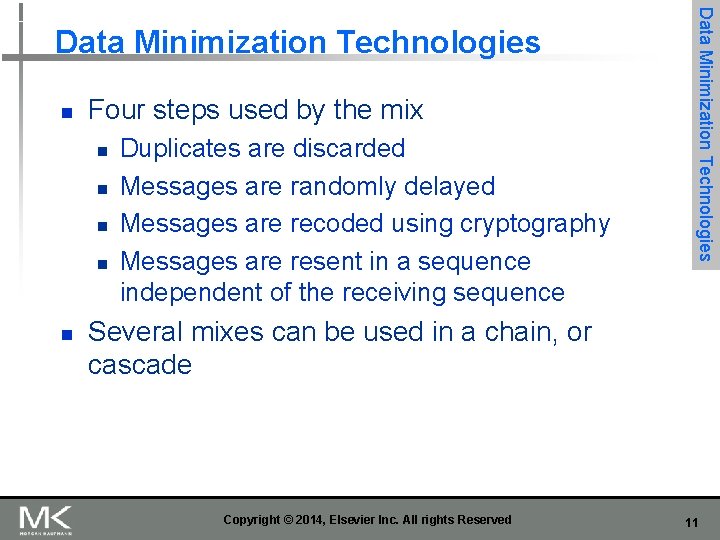

Data Minimization Technologies

- Four steps used by the mix
  - Duplicates are discarded
  - Messages are randomly delayed
  - Messages are recoded using cryptography
  - Messages are resent in a sequence independent of the receiving sequence
- Several mixes can be used in a chain, or cascade
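The four processing steps can be sketched as a small simulation. This is a simplified model under stated assumptions: the random delay is approximated by holding messages until a batch is full, and the recoding uses a toy XOR stream cipher built from SHA-256, which is for illustration only and not secure. The class `Mix`, the helper `recode`, and the key value are hypothetical names.

```python
import hashlib
import random

def recode(key: bytes, data: bytes) -> bytes:
    """Toy recoding: XOR with a SHA-256 counter keystream (NOT secure)."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))

class Mix:
    def __init__(self, key: bytes, batch_size: int):
        self.key = key
        self.batch_size = batch_size
        self.seen = set()    # digests of messages already processed
        self.pool = []       # messages waiting to be forwarded
        self.rng = random.SystemRandom()

    def receive(self, message: bytes):
        """Accept one message; return a flushed batch or an empty list."""
        digest = hashlib.sha256(message).digest()
        if digest in self.seen:          # step 1: discard duplicates
            return []
        self.seen.add(digest)
        self.pool.append(message)        # step 2: delay (modeled as batching)
        if len(self.pool) < self.batch_size:
            return []
        batch, self.pool = self.pool, []
        batch = [recode(self.key, m) for m in batch]  # step 3: recode
        self.rng.shuffle(batch)          # step 4: output order is independent
        return batch

mix = Mix(key=b"mix-secret", batch_size=3)
inputs = [recode(b"mix-secret", m) for m in (b"alpha", b"bravo", b"charlie")]
flushed = []
for m in [inputs[0], inputs[0], inputs[1], inputs[2]]:  # one replayed message
    flushed.extend(mix.receive(m))
print(sorted(flushed))
```

The replayed copy of the first message is dropped, and the three remaining messages leave the mix recoded and in shuffled order, so an observer cannot match inputs to outputs by content or by position.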

Data Minimization Technologies

Figure 43.2: Processing steps within a mix. Different recoding functions are used.

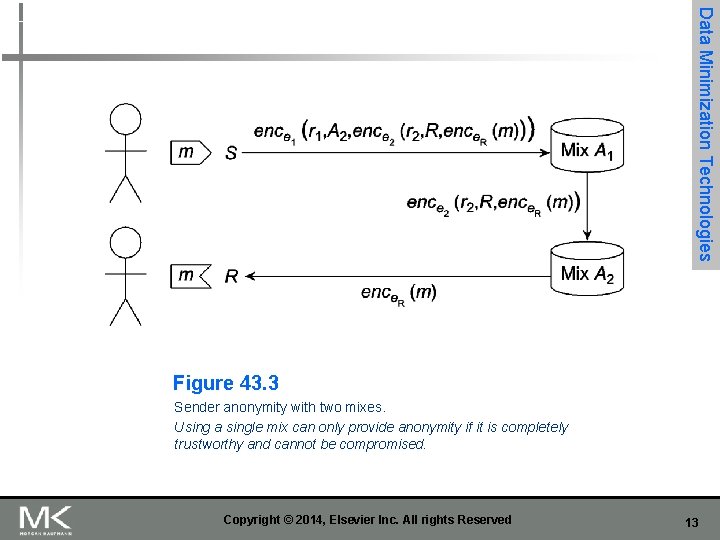

Data Minimization Technologies

Figure 43.3: Sender anonymity with two mixes. Using a single mix can only provide anonymity if it is completely trustworthy and cannot be compromised.
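A minimal sketch of why a cascade of two mixes helps: the sender wraps the message in one layer per mix, so the first mix learns only the next hop and the second mix learns only the recipient. Real mix nets use public key encryption for the layers. As a stand-in, this example assumes a key shared with each mix and a toy XOR stream cipher that is not secure. The names `wrap`, `peel`, and the route entries are hypothetical.

```python
import hashlib
import json

def xor_stream(key: bytes, data: bytes) -> bytes:
    """Toy stream cipher (SHA-256 counter keystream); illustration only."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))

def wrap(route, recipient, payload):
    """Build the layered message; route lists (mix_name, key) in forwarding order."""
    next_hop, body = recipient, payload
    for name, key in reversed(route):    # innermost layer is for the last mix
        layer = json.dumps({"next": next_hop, "body": body.hex()}).encode()
        body = xor_stream(key, layer)
        next_hop = name
    return next_hop, body                # first hop and outermost ciphertext

def peel(key, ciphertext):
    """Remove one layer; reveals only the next hop and the inner message."""
    layer = json.loads(xor_stream(key, ciphertext))
    return layer["next"], bytes.fromhex(layer["body"])

route = [("mix1", b"key-shared-with-mix1"), ("mix2", b"key-shared-with-mix2")]
first_hop, onion = wrap(route, "bob", b"hello")

hop_after_mix1, inner = peel(route[0][1], onion)    # mix1 sees only "mix2"
final_hop, message = peel(route[1][1], inner)       # mix2 sees only "bob"
print(first_hop, hop_after_mix1, final_hop, message)
```

As long as one of the two mixes is honest, no single party sees both the sender and the recipient of the message.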

Data Minimization Technologies

- AN.ON
  - Anonymity service developed and operated since the late 1990s
  - Technical University of Dresden
  - Uses a network of mixes for low-latency traffic routing
  - Messaging delays are minimal to none

Data Minimization Technologies

- Advantages of stable mix cascades
  - Mixes can be audited and certified
  - Cascades can be designed to cross national boundaries
  - Security measures focus on a small number of mixes
- Disadvantages
  - Each mix is a potential bottleneck
  - Setting up and operating mixes is expensive

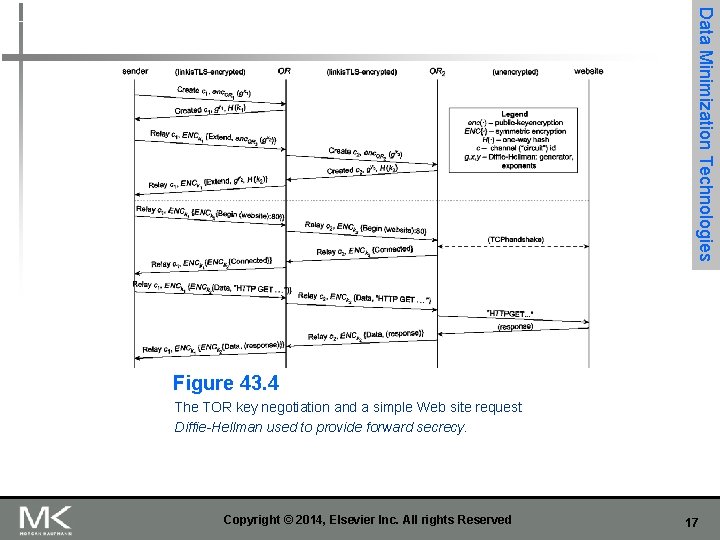

Data Minimization Technologies

- Onion routing/Tor
  - Low-latency, mix-based routing protocol
  - Developed in the 1990s at the Naval Research Laboratory
  - Provides anonymous socket connections using proxy servers
  - Uses the mix net concept of layers of public key encryption
- Tor: second generation of onion routing
  - Uses Diffie-Hellman to provide forward secrecy

Data Minimization Technologies

Figure 43.4: The Tor key negotiation and a simple Web site request. Diffie-Hellman is used to provide forward secrecy.
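The Diffie-Hellman exchange behind the forward secrecy property can be sketched as below. This is a generic textbook exchange, not Tor's actual handshake: the prime `P`, generator `G`, and the function names are assumptions chosen for a compact example, and the parameters are far too small for real use.

```python
import hashlib
import secrets

P = 2**127 - 1   # a Mersenne prime; toy-sized, not safe for real use
G = 3            # assumed generator for illustration

def keypair():
    """Create a fresh (ephemeral) private/public pair."""
    private = secrets.randbelow(P - 3) + 2    # value in [2, P - 2]
    public = pow(G, private, P)
    return private, public

def session_key(own_private, peer_public):
    """Derive a symmetric session key from the shared DH secret."""
    shared = pow(peer_public, own_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()

# The client and a relay each generate a fresh pair for this circuit.
client_private, client_public = keypair()
relay_private, relay_public = keypair()

# Only the public values cross the network; both sides get the same key.
client_key = session_key(client_private, relay_public)
relay_key = session_key(relay_private, client_public)
print(client_key == relay_key)
```

Forward secrecy follows from discarding the ephemeral private values once the session ends: a later compromise of a relay's long-term key does not reveal session keys negotiated earlier.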

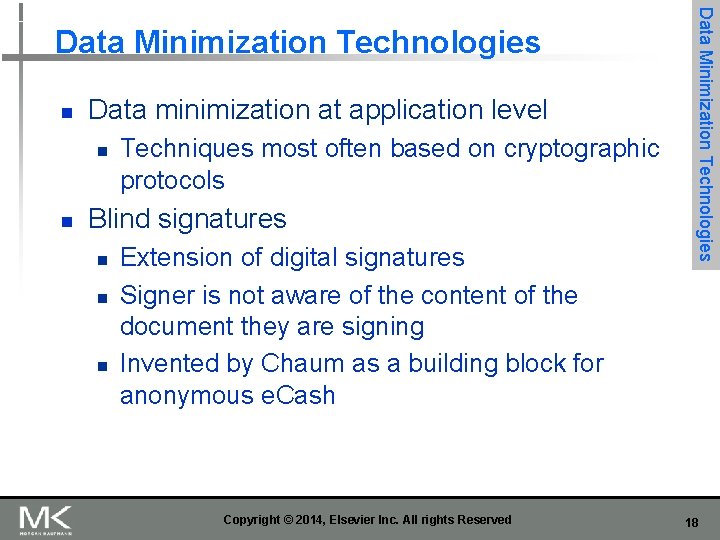

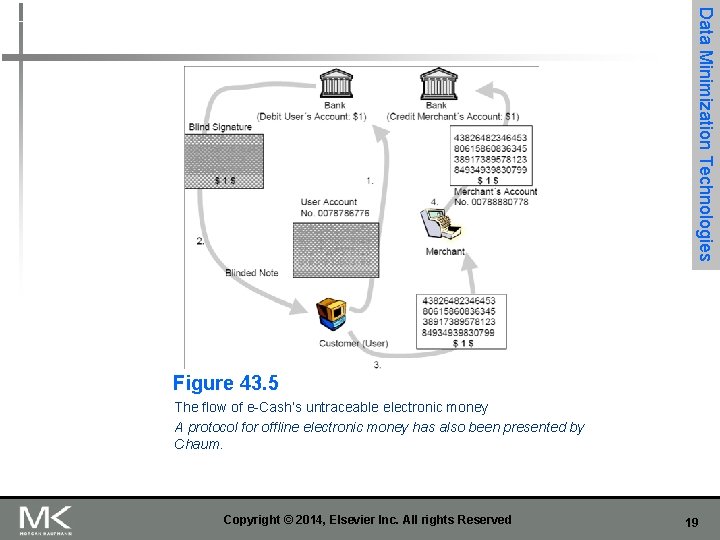

Data Minimization Technologies

- Data minimization at application level
  - Techniques most often based on cryptographic protocols
- Blind signatures
  - Extension of digital signatures
  - Signer is not aware of the content of the document they are signing
  - Invented by Chaum as a building block for anonymous eCash
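A blind signature can be illustrated with textbook RSA, a common way to present Chaum's idea. The requester multiplies the message by a random blinding factor before sending it, the signer signs the blinded value without seeing the message, and the requester removes the factor to obtain an ordinary signature. The tiny key (p = 61, q = 53) and the function names are assumptions for this example. A real system would use large keys and sign a padded hash.

```python
import math
import secrets

# Textbook RSA parameters: n = 61 * 53, e * d = 1 mod 3120. Toy-sized only.
N, E, D = 3233, 17, 2753

def blind(message):
    """Requester: hide the message with a random factor r coprime to N."""
    while True:
        r = secrets.randbelow(N - 2) + 2
        if math.gcd(r, N) == 1:
            break
    return (message * pow(r, E, N)) % N, r

def sign(blinded):
    """Signer: signs the blinded value and never sees the message."""
    return pow(blinded, D, N)

def unblind(blind_signature, r):
    """Requester: remove the blinding factor (pow with -1 needs Python 3.8+)."""
    return (blind_signature * pow(r, -1, N)) % N

def verify(message, signature):
    """Anyone: check the signature with the public key (N, E)."""
    return pow(signature, E, N) == message % N

coin = 1234                       # hypothetical coin serial number, below N
blinded, r = blind(coin)
signature = unblind(sign(blinded), r)
print(verify(coin, signature))
```

The signer cannot later link the unblinded signature to the blinded value it signed, which is what makes the signed coin untraceable.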

Data Minimization Technologies

Figure 43.5: The flow of e-Cash’s untraceable electronic money. A protocol for offline electronic money has also been presented by Chaum.

Transparency-Enhancing Tools

- Tools for end users for making personal data processing more transparent
- Four classes of transparency-enhancing tools
  - Those that provide information about the intended data collection and processing
  - Those that provide the data subject with an overview of disclosed personal data
  - Those that provide the data subject with online access to personal data
  - Those that provide counter-profiling capabilities

Transparency-Enhancing Tools

- Ex-ante TETs
  - Examples: privacy policy language tools, human-computer interaction components that make privacy policies more transparent
  - Visualization techniques based on the concept of a “nutrition label”

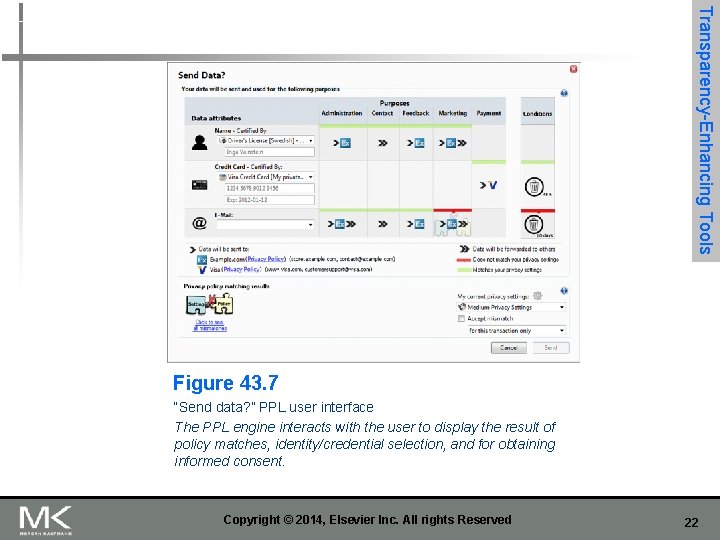

Transparency-Enhancing Tools

Figure 43.7: “Send data?” PPL user interface. The PPL engine interacts with the user to display the result of policy matches, to support identity/credential selection, and to obtain informed consent.

Transparency-Enhancing Tools

- Ex-post TETs
  - The data track: a user-side transparency tool
    - Includes history and online access functions
    - Transactions are stored at the user side or in the cloud
  - Google dashboard
    - Shows users a summary of data stored within a user account
    - Does not show how all data has been used, however
  - PrimeLife Project secure logging system

Summary

- Privacy-enhancing technologies (PETs) can be classified into three categories
- Privacy metrics attempt to quantify the concepts of anonymity, unlinkability, unobservability, and pseudonymity
- Transparency-enhancing technologies exist to make processing of personal data more transparent
- PET solutions have not been adopted widely by industry or users

- Slides: 24