Computational Lexical Semantics Om Damani, IIT Bombay

Study of Word Meaning
- Word Sense Disambiguation
- Word Similarity
- WordNet Relations
- Do we really know the meaning of "meaning"?
  - We will just take the dictionary definition as the meaning

Word Sense Disambiguation (WSD)
- WSD applications: search, ______

Sense Inventory
- WordNet, dictionary, etc.
- "Plant" in English WordNet (#senses: ??)
- Noun senses:
  - plant, works, industrial plant (buildings for carrying on industrial labor) "they built a large plant to manufacture automobiles"
  - plant, flora, plant life ((botany) a living organism lacking the power of locomotion)
  - plant (an actor situated in the audience whose acting is rehearsed but seems spontaneous to the audience)
  - plant (something planted secretly for discovery by another) "the police used a plant to trick the thieves"; "he claimed that the evidence against him was a plant"

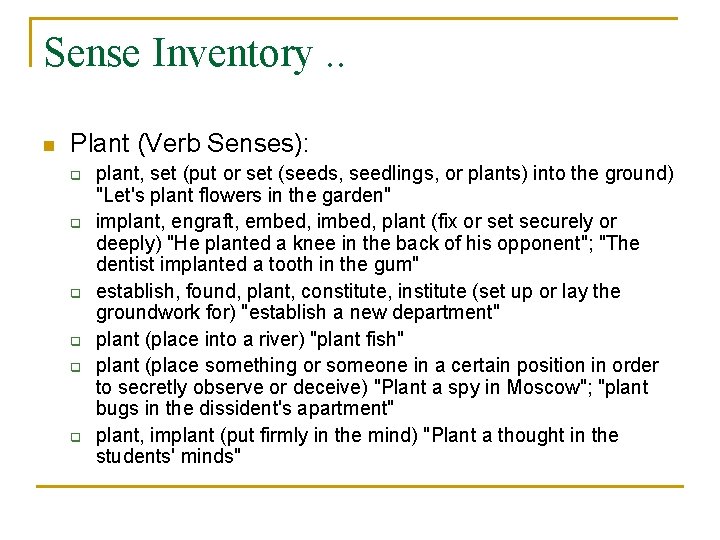

Sense Inventory (continued)
- "Plant" (verb senses):
  - plant, set (put or set (seeds, seedlings, or plants) into the ground) "Let's plant flowers in the garden"
  - implant, engraft, embed, imbed, plant (fix or set securely or deeply) "He planted a knee in the back of his opponent"; "The dentist implanted a tooth in the gum"
  - establish, found, plant, constitute, institute (set up or lay the groundwork for) "establish a new department"
  - plant (place into a river) "plant fish"
  - plant (place something or someone in a certain position in order to secretly observe or deceive) "Plant a spy in Moscow"; "plant bugs in the dissident's apartment"
  - plant, implant (put firmly in the mind) "Plant a thought in the students' minds"

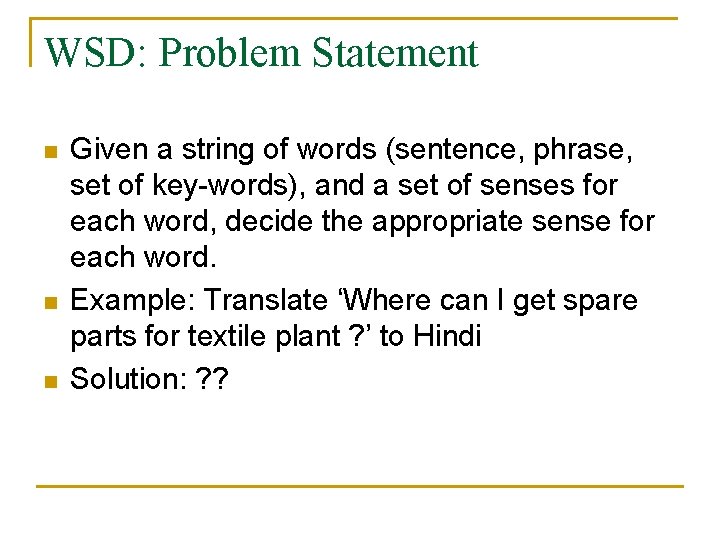

WSD: Problem Statement
- Given a string of words (sentence, phrase, set of keywords) and a set of senses for each word, decide the appropriate sense for each word.
- Example: translate 'Where can I get spare parts for textile plant?' to Hindi
- Solution: ??

Solution Approaches
- The solution depends on what resources you have:
  - Definition, gloss
  - Topic/category label for each sense definition
  - Selectional preference for each sense
  - Sense-marked corpora
  - Parallel sense-marked corpora

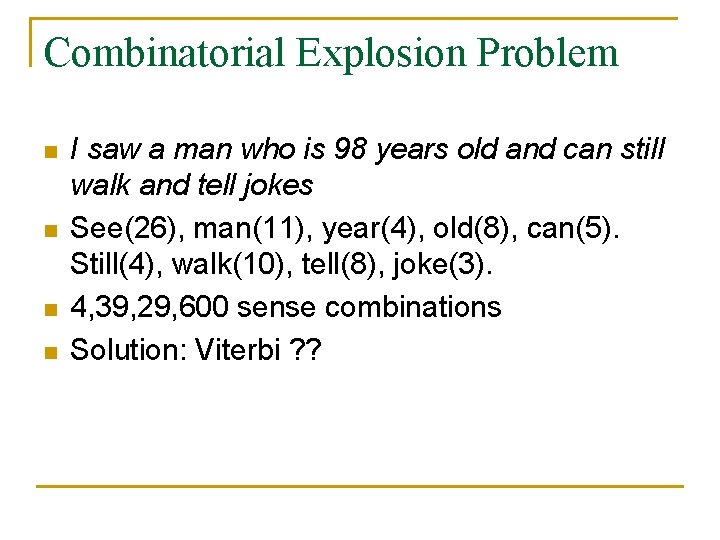

Combinatorial Explosion Problem
- "I saw a man who is 98 years old and can still walk and tell jokes"
- see(26), man(11), year(4), old(8), can(5), still(4), walk(10), tell(8), joke(3)
- 43,929,600 sense combinations
- Solution: Viterbi??
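
The number of sense combinations is simply the product of the per-word sense counts listed on the slide. A minimal sketch that recomputes it (the dictionary name `sense_counts` is only illustrative); the result is 43,929,600, which is 4,39,29,600 in Indian digit grouping:

```python
from math import prod

# Sense counts per content word, copied from the slide.
sense_counts = {
    "see": 26, "man": 11, "year": 4, "old": 8, "can": 5,
    "still": 4, "walk": 10, "tell": 8, "joke": 3,
}

# Each word's sense can be chosen independently of the others, so the
# number of joint sense assignments is the product of the counts.
combinations = prod(sense_counts.values())
print(f"{combinations:,}")  # 43,929,600
```

Enumerating all of these assignments for a single nine-word sentence is already impractical, which is why the slide asks about a dynamic-programming search such as Viterbi.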

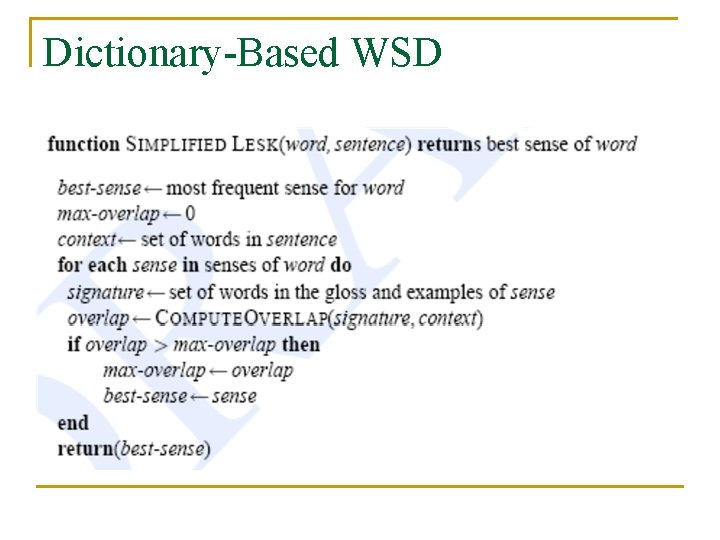

Dictionary-Based WSD

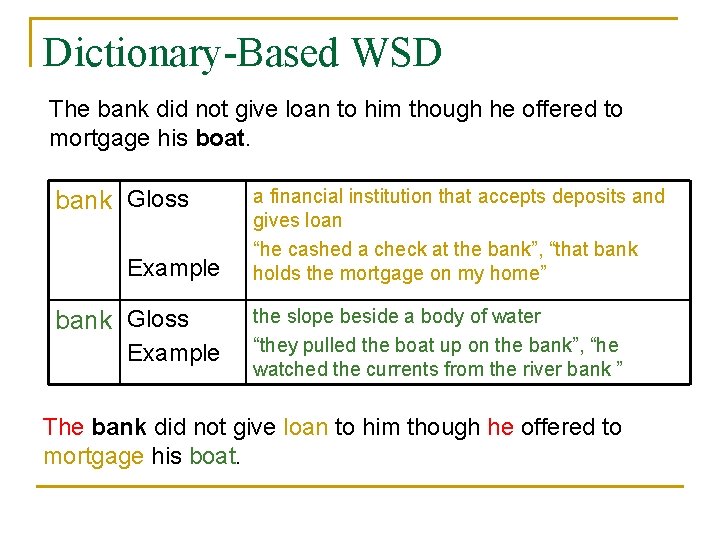

Dictionary-Based WSD

The bank did not give loan to him though he offered to mortgage his boat.

Senses of "bank":

| Gloss | Example |
| --- | --- |
| a financial institution that accepts deposits and gives loan | "he cashed a check at the bank", "that bank holds the mortgage on my home" |
| the slope beside a body of water | "they pulled the boat up on the bank", "he watched the currents from the river bank" |

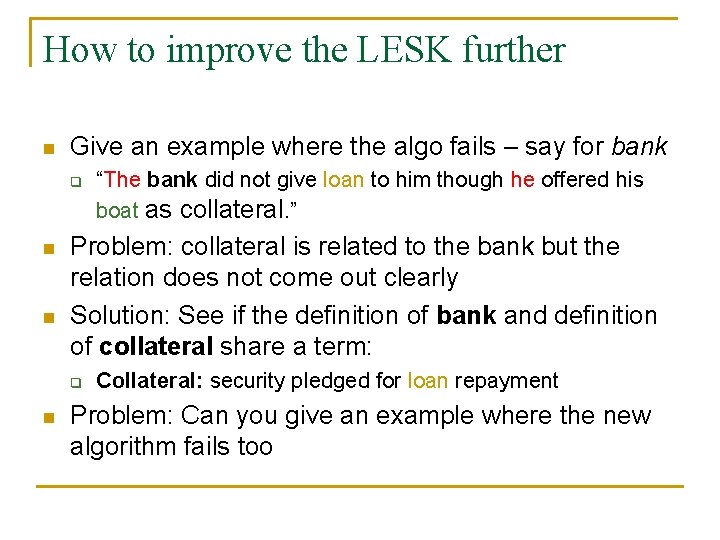

How to Improve LESK Further
- Give an example where the algorithm fails, say for bank:
  - "The bank did not give loan to him though he offered his boat as collateral."
- Problem: collateral is related to bank, but the relation does not come out clearly
- Solution: see if the definition of bank and the definition of collateral share a term
  - Collateral: security pledged for loan repayment
- Problem: can you give an example where the new algorithm fails too?

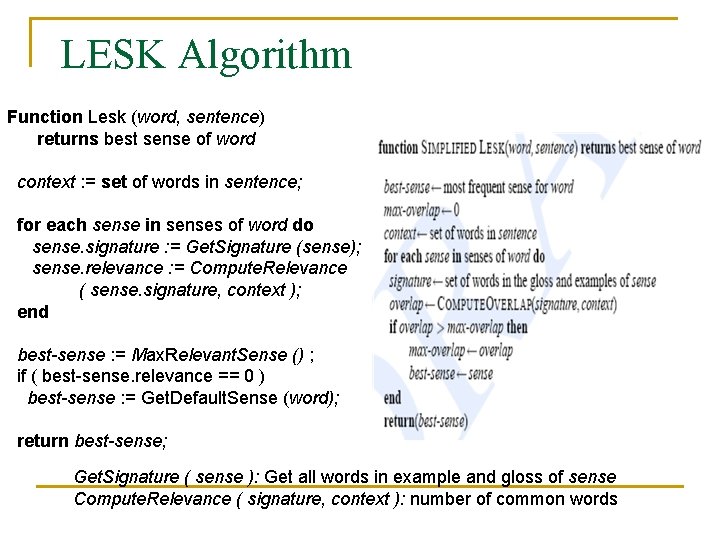

LESK Algorithm

    function Lesk(word, sentence) returns best sense of word
        context := set of words in sentence
        for each sense in senses of word do
            sense.signature := GetSignature(sense)
            sense.relevance := ComputeRelevance(sense.signature, context)
        end
        best-sense := MaxRelevantSense()
        if (best-sense.relevance == 0)
            best-sense := GetDefaultSense(word)
        return best-sense

- GetSignature(sense): get all words in the example and gloss of the sense
- ComputeRelevance(signature, context): number of common words
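
The pseudocode can be tried out on the "bank" example from the earlier slide. The following is a minimal sketch, not a reference implementation: the `SENSES` inventory holds only the two glosses quoted in these slides, the stop-word list is a hypothetical one added so that function words do not dominate the overlap, and the default sense is assumed to be the first one listed.

```python
import re

# Toy sense inventory: glosses and examples copied from the "bank" slide.
SENSES = {
    "bank": [
        {"name": "bank (financial institution)",
         "gloss": "a financial institution that accepts deposits and gives loan",
         "examples": ["he cashed a check at the bank",
                      "that bank holds the mortgage on my home"]},
        {"name": "bank (river side)",
         "gloss": "the slope beside a body of water",
         "examples": ["they pulled the boat up on the bank",
                      "he watched the currents from the river bank"]},
    ]
}

# Hypothetical stop-word list (an assumption, not part of the slides).
STOP_WORDS = {"a", "an", "the", "of", "to", "on", "at", "in", "that", "he",
              "him", "his", "my", "they", "up", "from", "and", "did", "not",
              "though"}


def tokenize(text):
    """Lower-case the text and return its set of non-stop words."""
    return {w for w in re.findall(r"[a-z]+", text.lower())
            if w not in STOP_WORDS}


def get_signature(sense):
    """All words in the gloss and examples of the sense."""
    return tokenize(sense["gloss"] + " " + " ".join(sense["examples"]))


def compute_relevance(signature, context):
    """Number of words shared by signature and context."""
    return len(signature & context)


def get_default_sense(word):
    # Assumption: the first listed sense acts as the default.
    return SENSES[word][0]


def lesk(word, sentence):
    context = tokenize(sentence) - {word}
    best_sense, best_relevance = None, 0
    for sense in SENSES[word]:
        relevance = compute_relevance(get_signature(sense), context)
        if relevance > best_relevance:
            best_sense, best_relevance = sense, relevance
    if best_relevance == 0:
        best_sense = get_default_sense(word)
    return best_sense


sentence = ("The bank did not give loan to him though he offered "
            "to mortgage his boat.")
print(lesk("bank", sentence)["name"])
```

On this sentence the financial sense shares "loan" and "mortgage" with the context, while the river sense shares only "boat", so the financial sense is selected.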

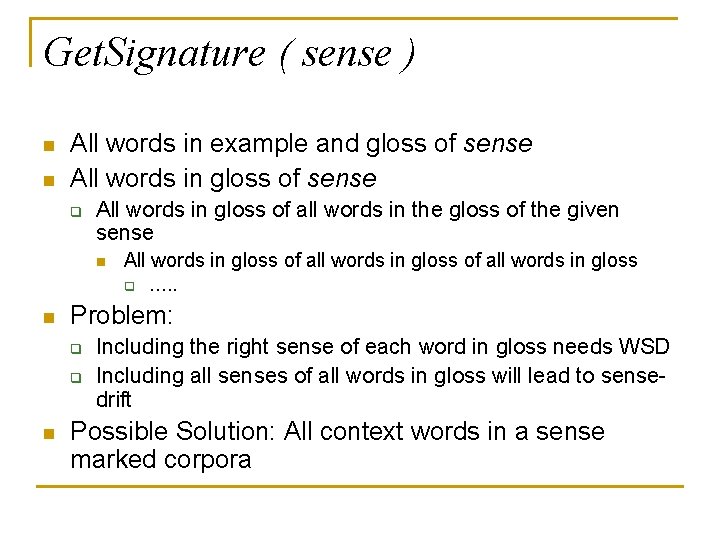

GetSignature(sense)
- All words in the example and gloss of the sense
- All words in the gloss of the sense
  - All words in the gloss of all words in the gloss of the given sense
    - All words in the gloss of all words in the gloss ...
- Problem:
  - Including the right sense of each word in the gloss needs WSD
  - Including all senses of all words in the gloss will lead to sense drift
- Possible solution: all context words in a sense-marked corpus

Ideal Signature
- For each word, get a vector of all the words in the language
- Work with a |V| x |V| matrix
- Iterate over it till it converges

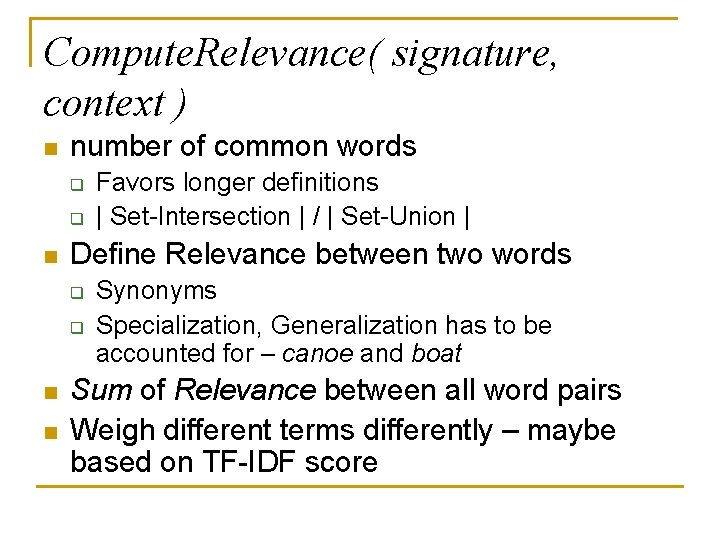

ComputeRelevance(signature, context)
- Number of common words
  - Favors longer definitions
  - |Set-Intersection| / |Set-Union|
- Define relevance between two words
  - Synonyms
  - Specialization and generalization have to be accounted for: canoe and boat
- Sum of relevance between all word pairs
- Weigh different terms differently, maybe based on TF-IDF score
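
Three of these relevance variants can be compared side by side. This is a small sketch under stated assumptions: the signatures and the `weights` table are made-up toy values (the weights merely stand in for TF-IDF scores), and the function names are illustrative.

```python
def overlap_count(signature, context):
    """Plain Lesk relevance: number of common words."""
    return len(signature & context)


def jaccard(signature, context):
    """|intersection| / |union|, which penalizes long signatures."""
    union = signature | context
    return len(signature & context) / len(union) if union else 0.0


def weighted_overlap(signature, context, weights, default=1.0):
    """Sum of per-word weights over the common words."""
    return sum(weights.get(w, default) for w in signature & context)


context = {"loan", "mortgage", "boat"}
short_sig = {"loan", "deposits"}
long_sig = {"loan", "deposits", "boat", "river", "water",
            "slope", "currents", "body"}

# The raw count prefers the longer signature ...
print(overlap_count(short_sig, context), overlap_count(long_sig, context))
# ... while the Jaccard ratio prefers the shorter one.
print(jaccard(short_sig, context), jaccard(long_sig, context))

# Hypothetical weights standing in for TF-IDF scores.
weights = {"loan": 2.5, "boat": 0.5}
print(weighted_overlap(long_sig, context, weights))
```

The raw count rewards the long signature (2 shared words against 1) simply because it contains more words, whereas the normalized score ranks the short signature higher (1/4 against 2/9).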

GetDefaultSense(word)
- The most frequent sense in a given domain
- The most frequent sense as per the topic of the document

Power of the LESK Schema
- Signature can even be a topic/domain code: finance, poetry, geophysics
- All variations of the ComputeRelevance function are still applicable

Possible Improvements
- LESK gives equal weightage to all senses; the 'right' sense should be given more weight
  - Iterative fashion: one word at a time, most certain first
  - PageRank-like algorithm
- Give more weightage to the gloss than to the example

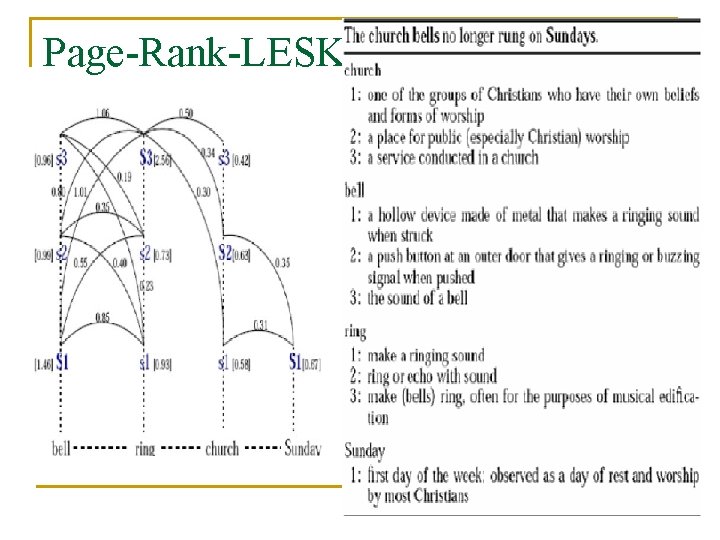

Page-Rank-LESK

Fundamental Limitation of Dictionary-Based Methods
- Depends too much on the exact word
  - Another dictionary may use a different gloss and example
  - Use the context words from a tagged corpus as the signature

Supervised Learning
- Lesk-like methods depend too much on the exact word
  - Another dictionary may use a different gloss and example
- Use a sense-tagged corpus
- Employ a machine learning algorithm

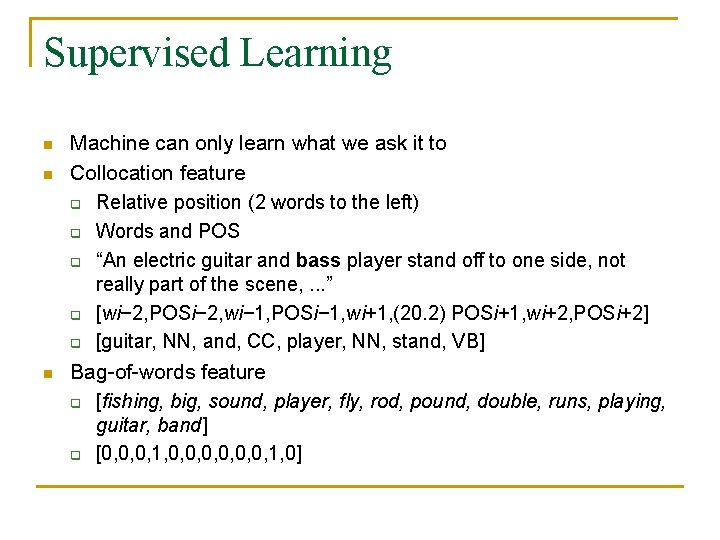

Supervised Learning
- A machine can only learn what we ask it to
- Collocation feature
  - Relative position (2 words to the left)
  - Words and POS
  - "An electric guitar and bass player stand off to one side, not really part of the scene, ..."
  - $[w_{i-2}, \mathrm{POS}_{i-2}, w_{i-1}, \mathrm{POS}_{i-1}, w_{i+1}, \mathrm{POS}_{i+1}, w_{i+2}, \mathrm{POS}_{i+2}]$
  - [guitar, NN, and, CC, player, NN, stand, VB]
- Bag-of-words feature
  - [fishing, big, sound, player, fly, rod, pound, double, runs, playing, guitar, band]
  - [0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0]
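
Both feature types can be computed mechanically once the sentence is tokenized and POS-tagged. The sketch below assumes the tags are already available (they are hand-assigned here, not produced by a tagger), and the `<pad>` symbol for positions outside the sentence is a hypothetical choice. The bag-of-words vector has one entry per vocabulary word, so it has 12 entries for the vocabulary on the slide.

```python
def collocation_features(tagged, i, window=2):
    """Words and POS tags at fixed offsets around the target at index i."""
    features = []
    for offset in range(-window, window + 1):
        if offset == 0:
            continue  # skip the target word itself
        j = i + offset
        if 0 <= j < len(tagged):
            word, pos = tagged[j]
        else:
            word, pos = "<pad>", "<pad>"  # hypothetical padding symbol
        features.extend([word, pos])
    return features


def bag_of_words_features(tokens, vocabulary):
    """Binary vector: 1 if the vocabulary word occurs in the context."""
    present = set(tokens)
    return [1 if v in present else 0 for v in vocabulary]


# Hand-tagged fragment of the example sentence (tags are an assumption).
tagged = [("An", "DT"), ("electric", "JJ"), ("guitar", "NN"), ("and", "CC"),
          ("bass", "NN"), ("player", "NN"), ("stand", "VB"), ("off", "RB"),
          ("to", "TO"), ("one", "CD"), ("side", "NN")]
target = 4  # index of "bass"

vocabulary = ["fishing", "big", "sound", "player", "fly", "rod", "pound",
              "double", "runs", "playing", "guitar", "band"]

print(collocation_features(tagged, target))
# ['guitar', 'NN', 'and', 'CC', 'player', 'NN', 'stand', 'VB']
print(bag_of_words_features([w for w, _ in tagged], vocabulary))
# [0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0]
```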

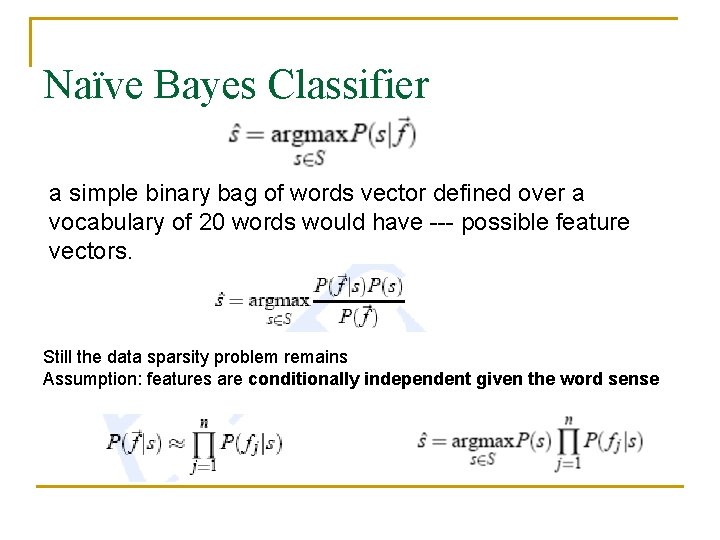

Naïve Bayes Classifier
- A simple binary bag-of-words vector defined over a vocabulary of 20 words would have --- possible feature vectors
- Still, the data sparsity problem remains
- Assumption: features are conditionally independent given the word sense
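
For reference, the standard Naïve Bayes decision rule that this independence assumption leads to can be written out as follows. The symbols are introduced here for illustration: $S$ is the set of senses of the target word, $\vec{f} = (f_1, \dots, f_n)$ is the feature vector, and $\hat{s}$ is the chosen sense.

$$
\hat{s} = \arg\max_{s \in S} P(s \mid \vec{f})
        = \arg\max_{s \in S} \frac{P(\vec{f} \mid s)\, P(s)}{P(\vec{f})}
        = \arg\max_{s \in S} P(\vec{f} \mid s)\, P(s)
        \approx \arg\max_{s \in S} P(s) \prod_{j=1}^{n} P(f_j \mid s)
$$

The denominator $P(\vec{f})$ is dropped because it is the same for every sense, and the last step is where the conditional independence assumption is used: it replaces one probability over whole feature vectors with $n$ per-feature probabilities that can be estimated from far less data.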

![Computing Naïve Bayes Probabilities](http://slidetodoc.com/presentation_image_h/c0025e665f7780f4bfd4eca77aa4dbbc/image-26.jpg)

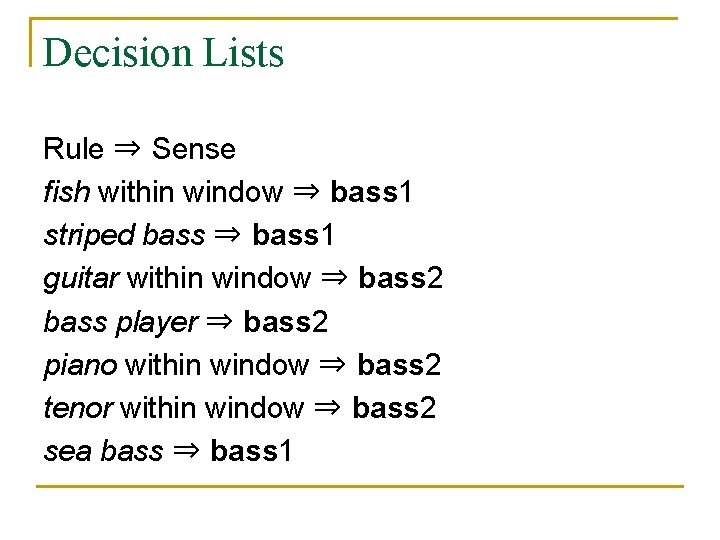

Computing Naïve Bayes Probabilities
- If a collocational feature such as $[w_{i-2} = \text{guitar}]$ occurred 3 times for sense bass¹, and sense bass¹ itself occurred 60 times in training, the MLE estimate is $P(f_j \mid s) = 3/60 = 0.05$
- It is hard for humans to examine Naïve Bayes's workings and understand its decisions. Hence, use decision lists
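
The MLE estimate is just a ratio of counts. A minimal sketch that reproduces the 3-out-of-60 figure from synthetic training data; only the bass1 counts come from the slide, while the bass2 counts and all function names are made up for illustration:

```python
from collections import Counter


def train_counts(examples):
    """examples: list of (sense, set_of_features) pairs."""
    sense_counts = Counter()
    feature_counts = Counter()
    for sense, features in examples:
        sense_counts[sense] += 1
        for f in features:
            feature_counts[(f, sense)] += 1
    return sense_counts, feature_counts


def p_sense(sense, sense_counts):
    """MLE prior: count(s) / total number of training instances."""
    return sense_counts[sense] / sum(sense_counts.values())


def p_feature_given_sense(feature, sense, sense_counts, feature_counts):
    """MLE likelihood: count(f, s) / count(s)."""
    return feature_counts[(feature, sense)] / sense_counts[sense]


GUITAR = "w[i-2]=guitar"
examples = (
    [("bass1", {GUITAR})] * 3 + [("bass1", set())] * 57     # counts from the slide
    + [("bass2", {GUITAR})] * 20 + [("bass2", set())] * 20  # hypothetical counts
)

sense_counts, feature_counts = train_counts(examples)
print(p_feature_given_sense(GUITAR, "bass1", sense_counts, feature_counts))
print(p_sense("bass1", sense_counts))
```

In practice some smoothing is usually added so that a feature never seen with a sense does not force the whole product to zero.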

Decision Lists

| Rule | Sense |
| --- | --- |
| fish within window | bass¹ |
| striped bass | bass¹ |
| guitar within window | bass² |
| bass player | bass² |
| piano within window | bass² |
| tenor within window | bass² |
| sea bass | bass¹ |
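
A decision list is applied top to bottom, and the first rule that matches decides the sense. The sketch below encodes the rules from the slide; treating the whole sentence as the "window" and returning `None` when no rule fires are simplifying assumptions.

```python
import re

# (kind, pattern, sense), in the order given on the slide.
DECISION_LIST = [
    ("window", "fish", "bass1"),
    ("collocation", "striped bass", "bass1"),
    ("window", "guitar", "bass2"),
    ("collocation", "bass player", "bass2"),
    ("window", "piano", "bass2"),
    ("window", "tenor", "bass2"),
    ("collocation", "sea bass", "bass1"),
]


def classify(sentence, rules=DECISION_LIST, default=None):
    """Return the sense of the first matching rule, else the default."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    words = set(tokens)
    text = " " + " ".join(tokens) + " "
    for kind, pattern, sense in rules:
        # Assumption: the window is the whole sentence.
        if kind == "window" and pattern in words:
            return sense
        # A collocation must occur as a contiguous word sequence.
        if kind == "collocation" and f" {pattern} " in text:
            return sense
    return default


print(classify("An electric guitar and bass player stand off to one side"))
print(classify("And it all started when fishermen decided the striped bass "
               "in Lake Mead were too skinny."))
```

Unlike the Naïve Bayes product, the rule that fired can be read off directly, which is the interpretability advantage of decision lists.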

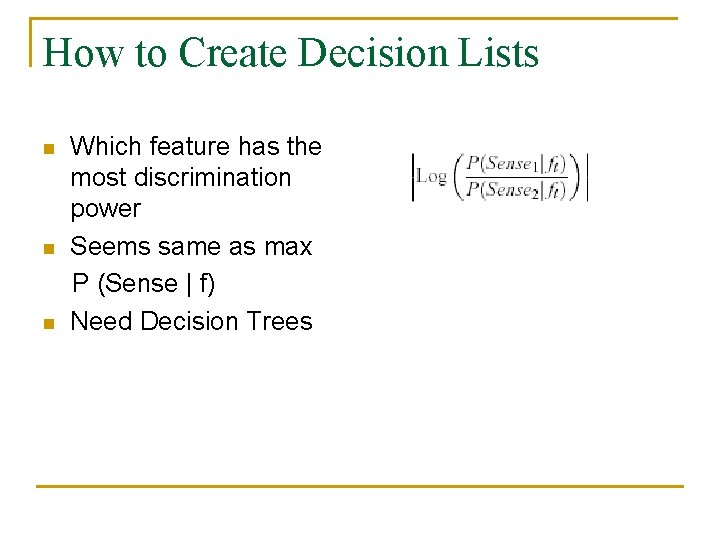

How to Create Decision Lists
- Which feature has the most discrimination power?
- Seems the same as max P(Sense | f)
- Need decision trees

Selectional Restrictions and Selectional Preferences
- "In our house, everybody has a career and none of them includes washing dishes," he says.
- In her kitchen, Ms. Chen works efficiently, cooking several simple dishes.
- wash[+WASHABLE], cook[+EDIBLE]
- Used more often for elimination than selection
- Problem: "Gold-rush fell apart in 1931, perhaps because people realized you can't eat gold for lunch if you're hungry."
- Solution: use these preferences as features/probabilities

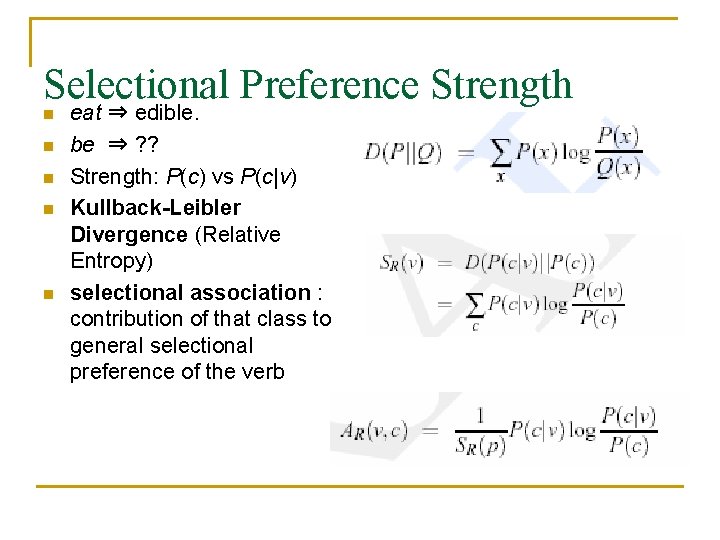

Selectional Preference Strength
- eat ⇒ edible; be ⇒ ??
- Strength: P(c) vs P(c|v)
- Kullback-Leibler divergence (relative entropy)
- Selectional association: contribution of that class to the general selectional preference of the verb
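
One common way to make these two quantities concrete is Resnik's formulation: the preference strength of a verb is the KL divergence between P(c|v) and P(c), and the selectional association of a class is its share of that sum. The sketch below follows that formulation; the class inventory and all probabilities are made-up toy values, and the function names are illustrative.

```python
from math import log2


def preference_strength(p_c_given_v, p_c):
    """KL divergence D(P(c|v) || P(c)) in bits."""
    return sum(p * log2(p / p_c[c])
               for c, p in p_c_given_v.items() if p > 0)


def selectional_association(c, p_c_given_v, p_c):
    """Contribution of class c to the verb's preference strength."""
    strength = preference_strength(p_c_given_v, p_c)
    p = p_c_given_v.get(c, 0.0)
    if strength == 0 or p == 0:
        return 0.0
    return p * log2(p / p_c[c]) / strength


# Hypothetical prior over argument classes.
p_c = {"FOOD": 0.25, "PERSON": 0.25, "ARTIFACT": 0.25, "EVENT": 0.25}
# Hypothetical class distributions of the direct object of each verb.
p_c_eat = {"FOOD": 0.5, "PERSON": 0.25, "ARTIFACT": 0.125, "EVENT": 0.125}
p_c_be = dict(p_c)  # "be" takes any class: identical to the prior

print(preference_strength(p_c_eat, p_c))  # selective verb: positive
print(preference_strength(p_c_be, p_c))   # unselective verb: zero
print(selectional_association("FOOD", p_c_eat, p_c))
print(selectional_association("ARTIFACT", p_c_eat, p_c))
```

A verb like "be", whose argument distribution matches the prior, gets strength zero, while classes that a verb prefers get positive association and classes it avoids get negative association.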

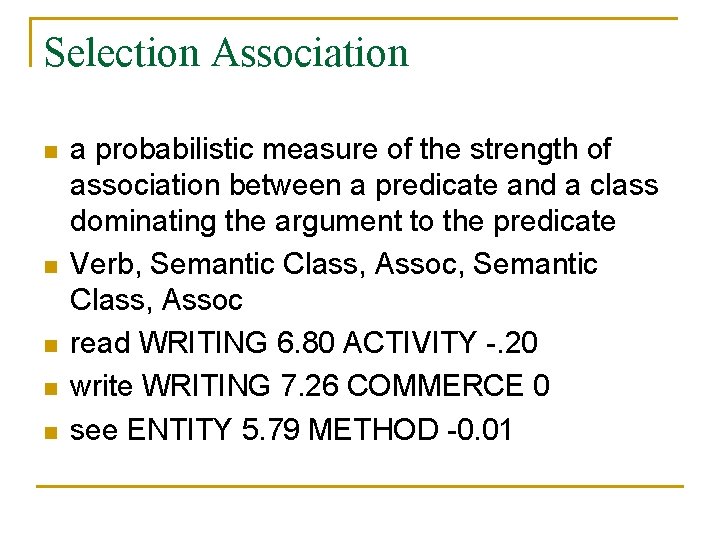

Selectional Association
- A probabilistic measure of the strength of association between a predicate and a class dominating the argument to the predicate

| Verb | Semantic class | Assoc | Semantic class | Assoc |
| --- | --- | --- | --- | --- |
| read | WRITING | 6.80 | ACTIVITY | -0.20 |
| write | WRITING | 7.26 | COMMERCE | 0 |
| see | ENTITY | 5.79 | METHOD | -0.01 |

How Do We Use Selectional Association for WSD?
- Use it as a relatedness model
- Select the sense with the highest selectional association between one of its ancestor hypernyms and the predicate

Minimally Supervised WSD
- Supervised: needs sense-tagged corpora
- Dictionary-based: needs large examples and glosses
- Supervised approaches do better but are much more expensive
- Can we get the best of both worlds?

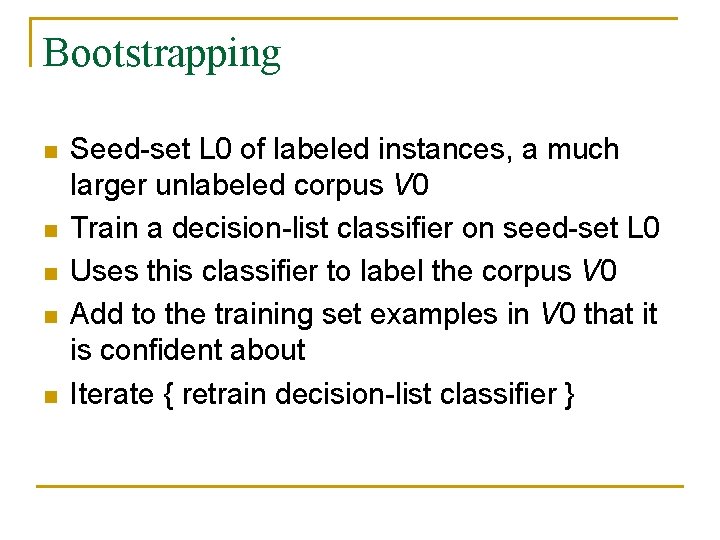

Bootstrapping
- Start with a seed set $L_0$ of labeled instances and a much larger unlabeled corpus $V_0$
- Train a decision-list classifier on the seed set $L_0$
- Use this classifier to label the corpus $V_0$
- Add to the training set the examples in $V_0$ that it is confident about
- Iterate { retrain decision-list classifier }
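
The loop can be sketched in a few lines. This is a toy illustration under several assumptions, not the procedure as originally published: rules are single context words, the confidence of a rule is a smoothed log ratio of its counts under the two senses, and `alpha`, `threshold`, and the tiny data set are all made up.

```python
from collections import Counter
from math import log

SENSES = ("bass1", "bass2")


def train_decision_list(labeled, alpha=0.1):
    """Rank context words by |log((count_1 + alpha) / (count_2 + alpha))|."""
    counts = {s: Counter() for s in SENSES}
    for words, sense in labeled:
        counts[sense].update(set(words))
    rules = []
    for w in set(counts[SENSES[0]]) | set(counts[SENSES[1]]):
        score = log((counts[SENSES[0]][w] + alpha) /
                    (counts[SENSES[1]][w] + alpha))
        rules.append((abs(score), w, SENSES[0] if score > 0 else SENSES[1]))
    rules.sort(key=lambda r: (-r[0], r[1]))  # most confident rule first
    return rules


def classify(words, rules):
    """Apply the first matching rule; return (sense, confidence)."""
    present = set(words)
    for score, w, sense in rules:
        if w in present:
            return sense, score
    return None, 0.0


def bootstrap(seed, unlabeled, threshold=1.0, max_iters=10):
    labeled, pool = list(seed), list(unlabeled)
    for _ in range(max_iters):
        rules = train_decision_list(labeled)
        confident, rest = [], []
        for words in pool:
            sense, score = classify(words, rules)
            if sense is not None and score >= threshold:
                confident.append((words, sense))
            else:
                rest.append(words)
        if not confident:
            break  # nothing new to add: stop
        labeled.extend(confident)
        pool = rest
    return train_decision_list(labeled), labeled, pool


seed = [(["fish", "bass", "lake"], "bass1"),
        (["play", "bass", "guitar"], "bass2")]
unlabeled = [["striped", "bass", "lake"], ["bass", "guitar", "band"],
             ["striped", "bass", "caught"], ["band", "bass", "solo"],
             ["bass", "unrelated"]]

rules, labeled, pool = bootstrap(seed, unlabeled)
for words, sense in labeled:
    print(sense, words)
print("still unlabeled:", pool)
```

In the first round only the sentences sharing "lake" or "guitar" with the seeds are labeled; the rules learned from them ("striped", "band") then label two more sentences in the second round, and the instance with no informative context word stays unlabeled.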

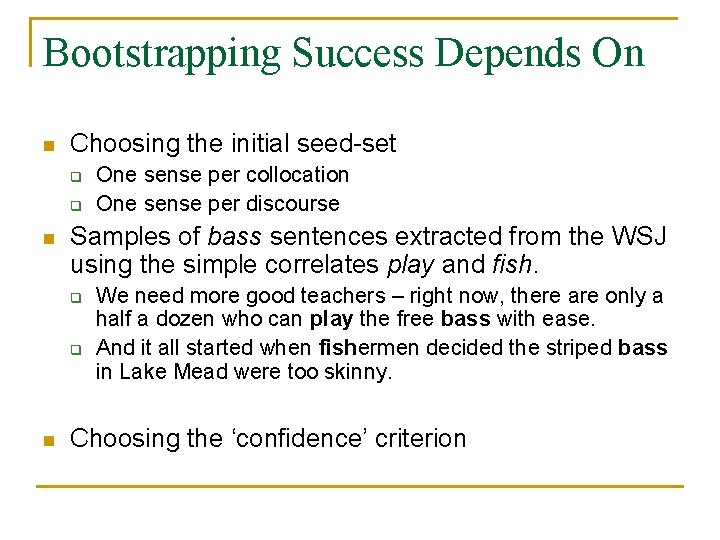

Bootstrapping Success Depends On
- Choosing the initial seed set
  - One sense per collocation
  - One sense per discourse
- Samples of bass sentences extracted from the WSJ using the simple correlates play and fish:
  - "We need more good teachers – right now, there are only a half a dozen who can play the free bass with ease."
  - "And it all started when fishermen decided the striped bass in Lake Mead were too skinny."
- Choosing the 'confidence' criterion

WSD: Summary
- It is a hard problem
- In part because it is not a well-defined problem
- Or it cannot be well-defined
- Because making sense of 'sense' is hard

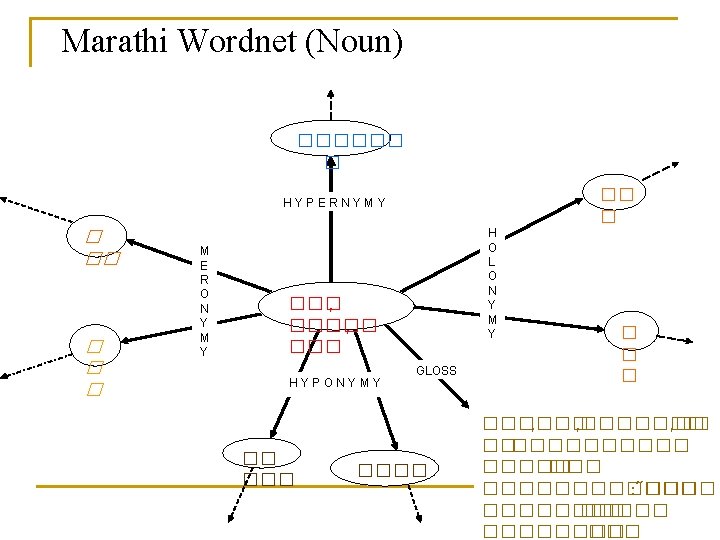

Hindi Wordnet
- Wordnet: a lexical database
- Inspired by the English WordNet
- Built conceptually
- Synset (synonym set) is the basic building block

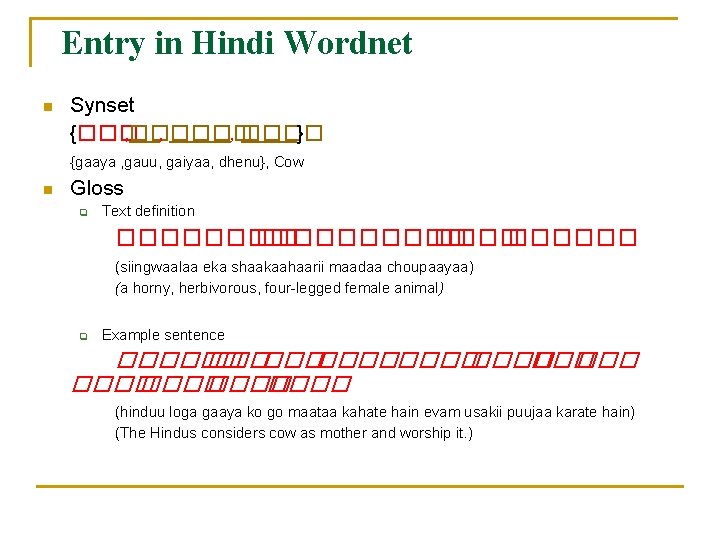

Entry in Hindi Wordnet
- Synset: {gaaya, gauu, gaiyaa, dhenu}, cow
- Gloss
  - Text definition: siingwaalaa eka shaakaahaarii maadaa choupaayaa (a horned, herbivorous, four-legged female animal)
  - Example sentence: hinduu loga gaaya ko go maataa kahate hain evam usakii puujaa karate hain (Hindus consider the cow a mother and worship it.)

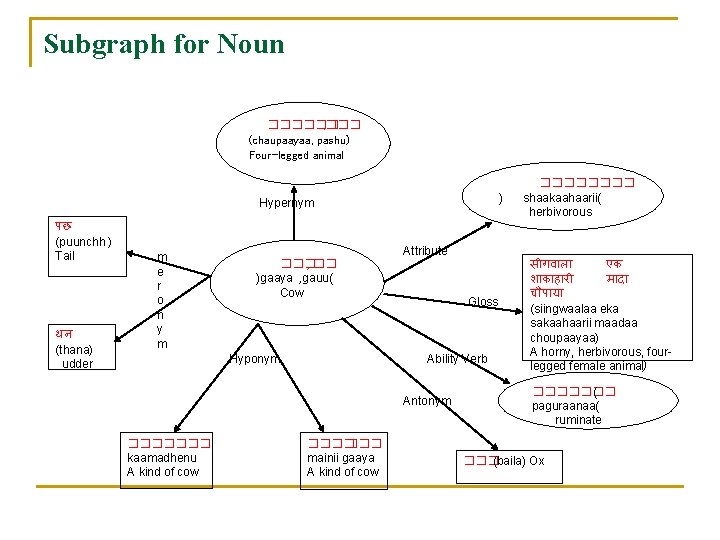

Subgraph for Noun: gaaya, gauu (cow)
- Hypernym: chaupaayaa, pashu (four-legged animal)
- Meronym: puunchh (tail); थन, thana (udder)
- Hyponym: kaamadhenu (a kind of cow); mainii gaaya (a kind of cow)
- Attribute: shaakaahaarii (herbivorous)
- Gloss: siingwaalaa eka shaakaahaarii maadaa choupaayaa (a horned, herbivorous, four-legged female animal)
- Ability verb: paguraanaa (ruminate)
- Antonym: baila (ox)

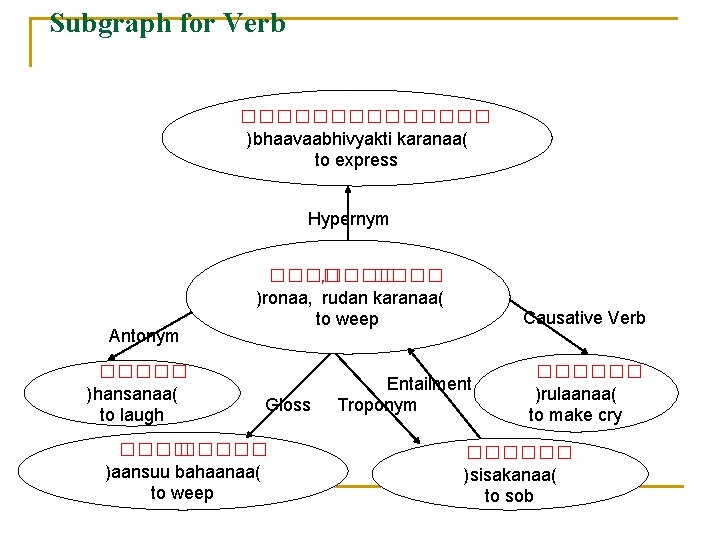

Subgraph for Verb: ronaa, rudan karanaa (to weep)
- Hypernym: bhaavaabhivyakti karanaa (to express)
- Antonym: hansanaa (to laugh)
- Gloss: aansuu bahaanaa (to weep)
- Causative verb: rulaanaa (to make cry)
- Entailment
- Troponym: sisakanaa (to sob)

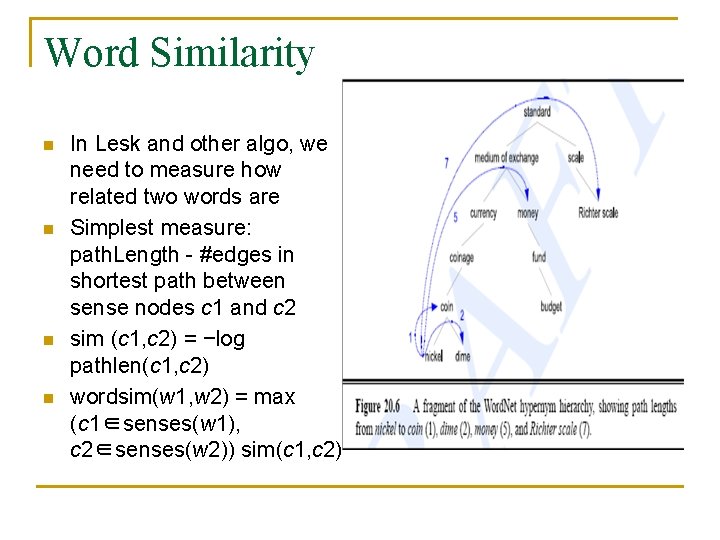

Word Similarity
- In Lesk and other algorithms, we need to measure how related two words are
- Simplest measure: path length, the number of edges in the shortest path between sense nodes $c_1$ and $c_2$

$$\mathrm{sim}(c_1, c_2) = -\log \mathrm{pathlen}(c_1, c_2)$$

$$\mathrm{wordsim}(w_1, w_2) = \max_{c_1 \in \mathrm{senses}(w_1),\; c_2 \in \mathrm{senses}(w_2)} \mathrm{sim}(c_1, c_2)$$
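
Path-length similarity only needs a shortest-path search over the hypernym graph. The sketch below uses a small hand-made fragment of a hierarchy (the edges and sense names are illustrative, not read from WordNet). One assumption to note: it takes pathlen to be the number of edges plus one, so that a sense compared with itself gives −log 1 = 0 instead of −log 0; this does not change how pairs are ranked.

```python
from collections import deque
from math import log

# Hypothetical (child, parent) hypernym edges.
HYPERNYM_EDGES = [
    ("nickel_coin", "coin"), ("dime", "coin"), ("coin", "coinage"),
    ("coinage", "currency"), ("currency", "medium_of_exchange"),
    ("money", "medium_of_exchange"), ("medium_of_exchange", "standard"),
    ("nickel_metal", "metal"),
]
# Hypothetical word-to-senses map.
SENSES = {"nickel": ["nickel_coin", "nickel_metal"],
          "dime": ["dime"], "money": ["money"]}


def build_graph(edges):
    """Undirected adjacency sets built from (child, parent) pairs."""
    graph = {}
    for child, parent in edges:
        graph.setdefault(child, set()).add(parent)
        graph.setdefault(parent, set()).add(child)
    return graph


def edge_distance(graph, start, goal):
    """Number of edges on the shortest path (BFS); None if unreachable."""
    if start == goal:
        return 0
    seen, queue = {start}, deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt == goal:
                return dist + 1
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


def path_sim(graph, c1, c2):
    d = edge_distance(graph, c1, c2)
    # Assumption: pathlen = edges + 1, so identical senses score 0.
    return float("-inf") if d is None else -log(1 + d)


def word_sim(graph, senses, w1, w2):
    """Maximum similarity over all sense pairs of the two words."""
    return max(path_sim(graph, c1, c2)
               for c1 in senses[w1] for c2 in senses[w2])


graph = build_graph(HYPERNYM_EDGES)
print(edge_distance(graph, "nickel_coin", "dime"))   # 2
print(edge_distance(graph, "nickel_coin", "money"))  # 5
print(word_sim(graph, SENSES, "nickel", "dime"))
```

In this toy graph the pair (medium_of_exchange, standard) and the pair (nickel_coin, coin) are both one edge apart and so receive the same score, even though the first pair sits much higher in the hierarchy.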

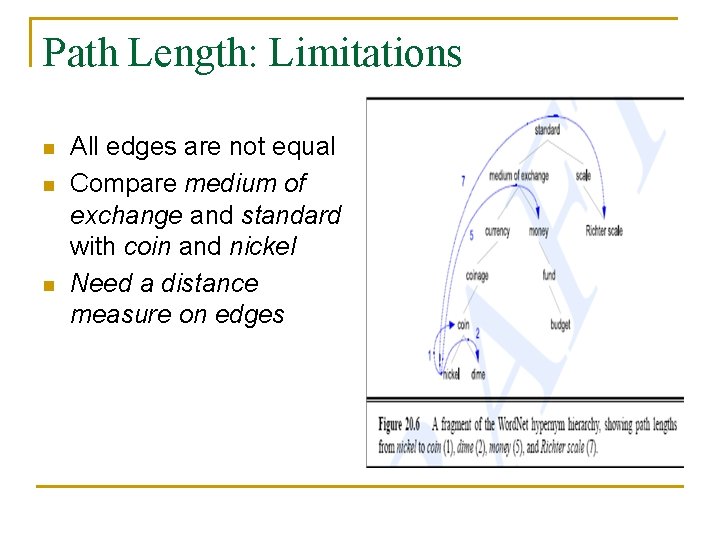

Path Length: Limitations
- All edges are not equal
- Compare medium of exchange and standard with coin and nickel
- Need a distance measure on edges

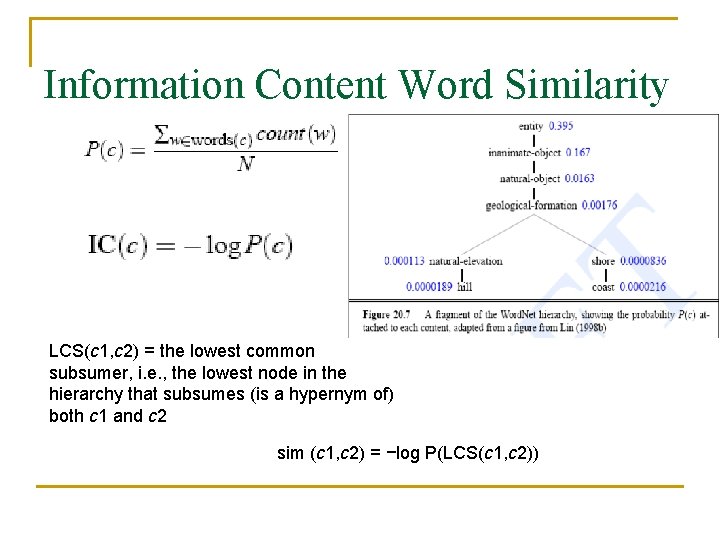

Information Content Word Similarity
- $\mathrm{LCS}(c_1, c_2)$ = the lowest common subsumer, i.e., the lowest node in the hierarchy that subsumes (is a hypernym of) both $c_1$ and $c_2$

$$\mathrm{sim}(c_1, c_2) = -\log P(\mathrm{LCS}(c_1, c_2))$$
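
A minimal sketch of this measure on a toy hierarchy. The `PARENT` links and the concept probabilities in `P` are made-up values (chosen so that a concept is at least as probable as its children combined); in practice P(c) would be estimated from corpus counts.

```python
from math import log

# Hypothetical hierarchy: child -> parent.
PARENT = {"nickel": "coin", "dime": "coin", "coin": "money",
          "budget": "money", "money": "entity"}

# Hypothetical probability that a random noun is an instance of the concept.
P = {"entity": 1.0, "money": 0.1, "coin": 0.01,
     "nickel": 0.002, "dime": 0.003, "budget": 0.02}


def ancestors(c):
    """The concept itself followed by its hypernyms up to the root."""
    chain = [c]
    while c in PARENT:
        c = PARENT[c]
        chain.append(c)
    return chain


def lcs(c1, c2):
    """Lowest common subsumer: first ancestor of c1 that also subsumes c2."""
    others = set(ancestors(c2))
    for a in ancestors(c1):
        if a in others:
            return a
    return None


def ic_sim(c1, c2):
    """sim(c1, c2) = -log P(LCS(c1, c2))."""
    return -log(P[lcs(c1, c2)])


print(lcs("nickel", "dime"), ic_sim("nickel", "dime"))
print(lcs("nickel", "budget"), ic_sim("nickel", "budget"))
```

The pair (nickel, dime) meets at the specific concept coin and scores higher than (nickel, budget), which only meets at the more general and more probable concept money.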

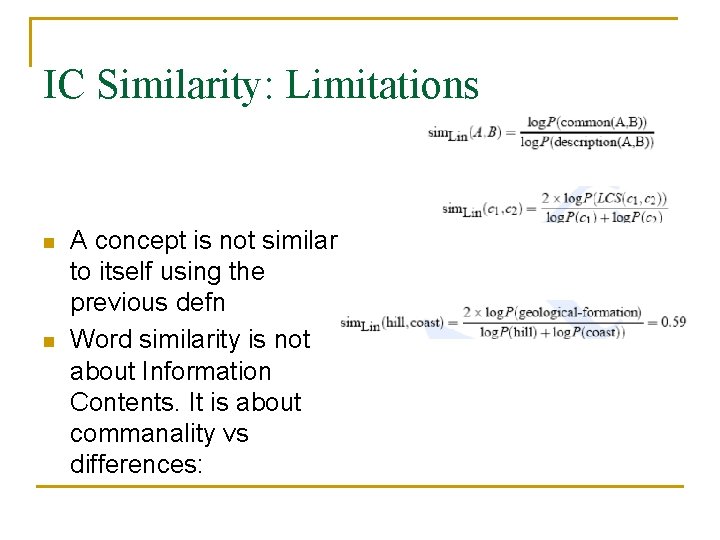

IC Similarity: Limitations
- Using the previous definition, a concept is not similar to itself
- Word similarity is not about information content. It is about commonality vs. differences:

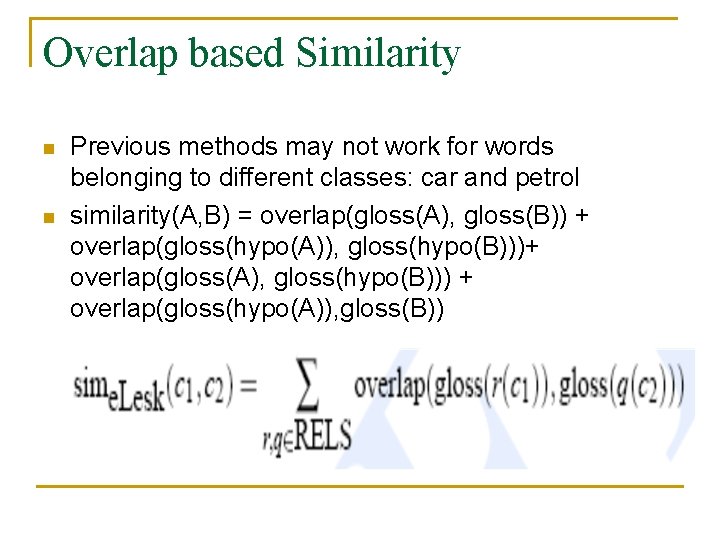

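Overlap-Based Similarity
- Previous methods may not work for words belonging to different classes: car and petrol

$$
\begin{aligned}
\mathrm{similarity}(A, B) = {} & \mathrm{overlap}(\mathrm{gloss}(A), \mathrm{gloss}(B)) \\
& + \mathrm{overlap}(\mathrm{gloss}(\mathrm{hypo}(A)), \mathrm{gloss}(\mathrm{hypo}(B))) \\
& + \mathrm{overlap}(\mathrm{gloss}(A), \mathrm{gloss}(\mathrm{hypo}(B))) \\
& + \mathrm{overlap}(\mathrm{gloss}(\mathrm{hypo}(A)), \mathrm{gloss}(B))
\end{aligned}
$$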
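
The four-term sum can be sketched directly. Everything concrete below is an assumption made for illustration: the glosses are paraphrased toy definitions rather than WordNet entries, each concept has a single made-up hyponym, `overlap` simply counts shared non-stop words, and the stop-word list is hypothetical.

```python
import re

# Hypothetical toy glosses.
GLOSS = {
    "car": "motor vehicle with four wheels propelled by an internal combustion engine",
    "petrol": "flammable liquid fuel burned in an internal combustion engine",
    "taxi": "car driven by a person whose job is to take passengers where they want to go",
    "leaded_petrol": "petrol treated with a lead compound to reduce engine knocking",
}
# Hypothetical hyponym lists.
HYPO = {"car": ["taxi"], "petrol": ["leaded_petrol"]}

STOP_WORDS = {"a", "an", "the", "with", "by", "in", "to", "of", "is",
              "whose", "where", "they"}


def content_words(text):
    return {w for w in re.findall(r"[a-z]+", text.lower())
            if w not in STOP_WORDS}


def overlap(gloss1, gloss2):
    """Assumption: overlap is the number of shared non-stop words."""
    return len(content_words(gloss1) & content_words(gloss2))


def gloss(concept):
    return GLOSS[concept]


def hypo_gloss(concept):
    """Concatenated glosses of the concept's hyponyms."""
    return " ".join(GLOSS[h] for h in HYPO.get(concept, []))


def similarity(a, b):
    return (overlap(gloss(a), gloss(b))
            + overlap(hypo_gloss(a), hypo_gloss(b))
            + overlap(gloss(a), hypo_gloss(b))
            + overlap(hypo_gloss(a), gloss(b)))


print(similarity("car", "petrol"))  # 4
```

Here the two glosses share "internal", "combustion", and "engine", and the gloss of car shares "engine" with the hyponym gloss of petrol, so the words come out as related even though they sit in different parts of the hierarchy.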

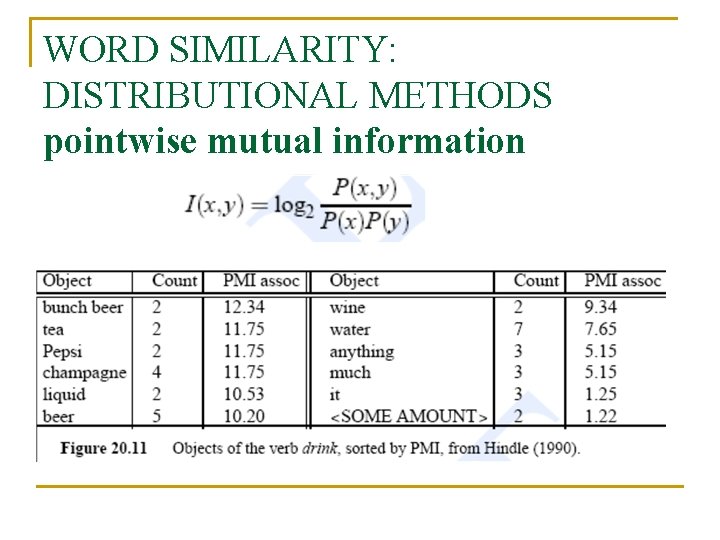

Word Similarity: Distributional Methods
- Pointwise mutual information
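
Pointwise mutual information compares how often a word and a context feature co-occur with how often they would co-occur if they were independent: PMI(w, f) = log2 [P(w, f) / (P(w) P(f))]. A minimal sketch from a table of co-occurrence counts; the counts are made-up toy values and the function name is illustrative.

```python
from math import log2

# Hypothetical co-occurrence counts: cooc[word][feature].
cooc = {
    "bass":  {"guitar": 4, "fish": 4},
    "trout": {"guitar": 0, "fish": 8},
}


def pmi(cooc, word, feature):
    """log2 of P(word, feature) / (P(word) * P(feature))."""
    total = sum(sum(row.values()) for row in cooc.values())
    p_wf = cooc[word].get(feature, 0) / total
    p_w = sum(cooc[word].values()) / total
    p_f = sum(row.get(feature, 0) for row in cooc.values()) / total
    if p_wf == 0:
        return float("-inf")  # never observed together
    return log2(p_wf / (p_w * p_f))


print(pmi(cooc, "bass", "guitar"))   # positive: more often than chance
print(pmi(cooc, "bass", "fish"))     # negative: less often than chance
print(pmi(cooc, "trout", "guitar"))  # -inf: never seen together
```

Because unseen pairs give minus infinity and rare pairs give unstable values, implementations often clip negative values to zero (positive PMI).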

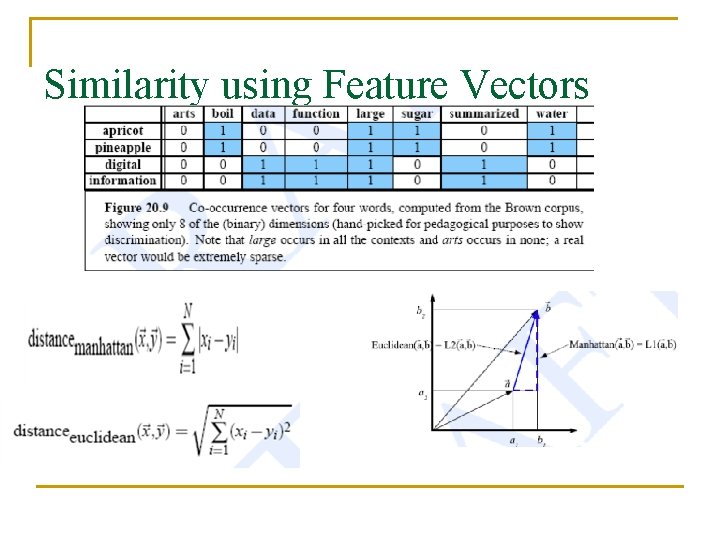

Similarity using Feature Vectors

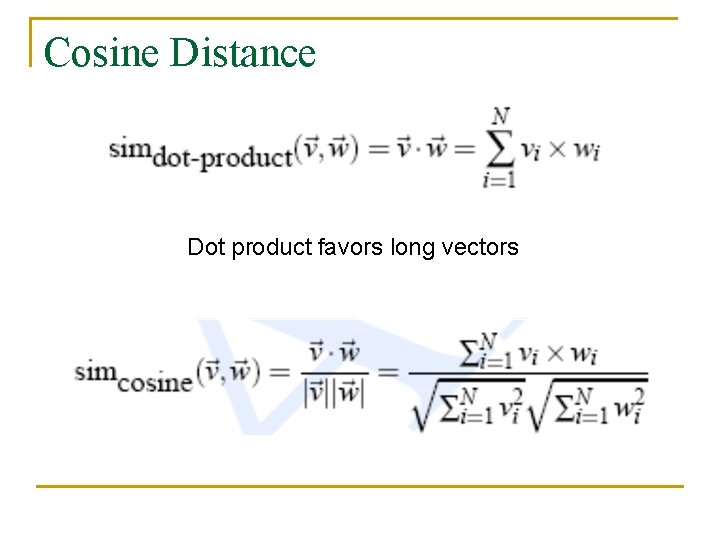

Cosine Distance
- Dot product favors long vectors
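
A small sketch of why normalization matters. The vectors are made-up toy feature vectors; the function computes the cosine of the angle between them (a similarity, so larger means closer).

```python
from math import sqrt


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cosine(u, v):
    """Dot product divided by the product of the vector lengths."""
    norm = sqrt(dot(u, u)) * sqrt(dot(v, v))
    return dot(u, v) / norm if norm else 0.0


u = [1, 2, 0]
v = [2, 4, 0]        # same direction as u
v_long = [10, 20, 0]  # same direction, five times longer than v
w = [0, 0, 3]        # orthogonal to u

# The raw dot product grows with vector length ...
print(dot(u, v), dot(u, v_long))
# ... while the cosine depends only on direction.
print(cosine(u, v), cosine(u, v_long), cosine(u, w))
```

Frequent words have long count vectors, so the raw dot product ranks them as similar to almost everything; dividing by the lengths removes that bias.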

Conclusion
- A lot of care is needed in defining similarity measures
- Impressive results can be obtained once similarity is carefully defined

Backup

Original LESK: Taking Signature of Context Words into Account for Relatedness
- Disambiguating "pine cone"
  - Neither 'pine' nor 'cone' appears in the other's definition
- pine
  - 1: an evergreen tree with needle-shaped leaves and solid wood
  - 2: waste away through sorrow or illness
- cone
  - 1: solid body which narrows to a point
  - 2: something of this shape whether solid or hollow
  - 3: fruit of certain evergreen trees

Does the Improvement Really Work?
- Problem: collateral has not one but many senses:
  - Noun: collateral (a security pledged for the repayment of a loan)
  - Adjective:
    - S: (adj) collateral, indirect (descended from a common ancestor but through different lines) "cousins are collateral relatives"; "an indirect descendant of the Stuarts"
    - S: (adj) collateral, confirmative, confirming, confirmatory, corroborative, corroboratory, substantiating, substantiative, validating, validatory, verificatory, verifying (serving to support or corroborate) "collateral evidence"
    - S: (adj) collateral (accompany, concomitant) "collateral target damage from a bombing run"
    - S: (adj) collateral (situated or running side by side) "collateral ridges of mountains"
- Solution: ??
