Computational intelligence methods for information understanding and information

- Slides: 78

Computational intelligence methods for information understanding and information management Włodzisław Duch Department of Informatics Nicolaus Copernicus University, Torun, Poland & School of Computer Engineering, Nanyang Technological University, Singapore IMS 2005, Kunming, China

Plan

What is this about?
• How to discover knowledge in data;
• how to create comprehensible models of data;
• how to evaluate new data;
• how to understand what computational intelligence (CI) methods really do.

1. AI, CI & Data Mining
2. Forms of useful knowledge
3. Integration of different methods in GhostMiner
4. Exploration & Visualization
5. Rule-based data analysis
6. Neurofuzzy models
7. Neural models, understanding what they do
8. Similarity-based models, prototype rules
9. Case studies
10. From data to expert system

AI, CI & DM

Artificial Intelligence: symbolic models of knowledge.
• Higher-level cognition: reasoning, problem solving, planning, heuristic search for solutions.
• Machine learning, inductive, rule-based methods.
• Technology: expert systems.

Computational Intelligence, Soft Computing: methods inspired by many sources:
• biology – evolutionary, immune, neural computing
• statistics, pattern recognition
• probability – Bayesian networks
• logic – fuzzy, rough …
Perception, object recognition.

Data Mining, Knowledge Discovery in Databases.
• discovery of interesting rules, knowledge => info understanding.
• building predictive data models => part of info management.

Forms of useful knowledge AI/Machine Learning camp: Neural nets are black boxes. Unacceptable! Symbolic rules forever. But. . . knowledge accessible to humans is in: • symbols and rules; • similarity to prototypes, structures, known cases; • images, visual representations. What type of explanation is satisfactory? Interesting question for cognitive scientists but. . . in different fields answers are different!

Forms of knowledge • Humans remember examples of each category and refer to such examples – as similarity-based, case based or nearest-neighbors methods do. • Humans create prototypes out of many examples – as Gaussian classifiers, RBF networks, or neurofuzzy systems modeling probability densities do. • Logical rules are the highest form of summarization of simple forms of knowledge; • Bayesian networks present complex relationships. 3 types of explanation presented here: • logic-based: symbols and rules; • exemplar-based: prototypes and similarity of structures; • visualization-based: maps, diagrams, relations. . .

GhostMiner Philosophy

GhostMiner: tools for data mining & knowledge discovery, from our lab + Fujitsu: http://www.fqspl.com.pl/ghostminer/
• Separate the process of model building (hackers) and knowledge discovery, from model use (lamers) => GhostMiner Developer & GhostMiner Analyzer (ver. 3.0 & newer)
• There is no free lunch – provide different types of tools for knowledge discovery. Decision tree, neural, neurofuzzy, similarity-based, SVM, committees.
• Provide tools for visualization of data.
• Support the process of knowledge discovery/model building and evaluating, organizing it into projects.

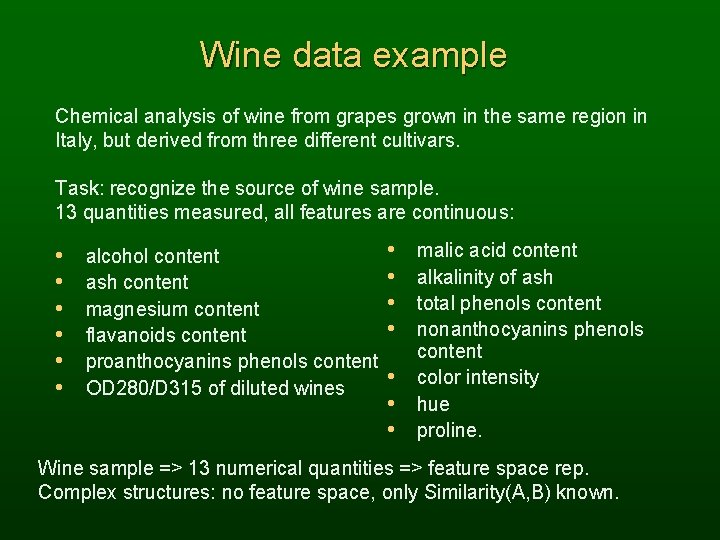

Wine data example

Chemical analysis of wine from grapes grown in the same region in Italy, but derived from three different cultivars. Task: recognize the source of wine sample. 13 quantities measured, all features are continuous:
• alcohol content
• ash content
• magnesium content
• flavanoids content
• proanthocyanins phenols content
• OD280/D315 of diluted wines
• malic acid content
• alkalinity of ash
• total phenols content
• nonanthocyanins phenols content
• color intensity
• hue
• proline

Wine sample => 13 numerical quantities => feature space representation. Complex structures: no feature space, only Similarity(A, B) known.

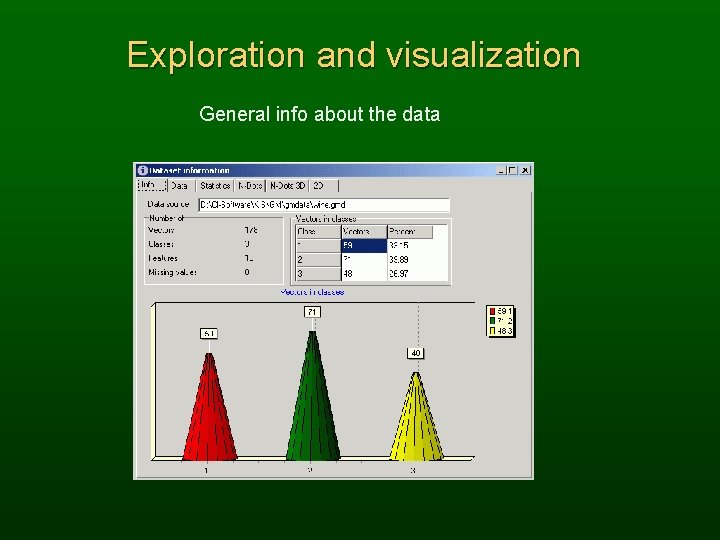

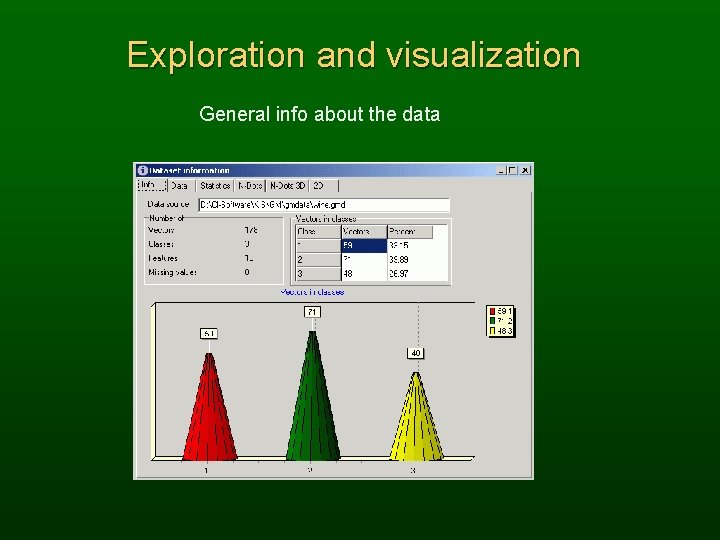

Exploration and visualization General info about the data

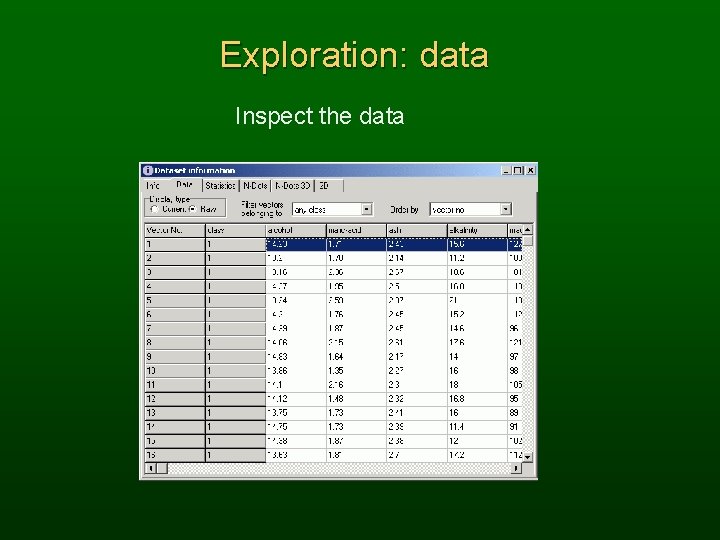

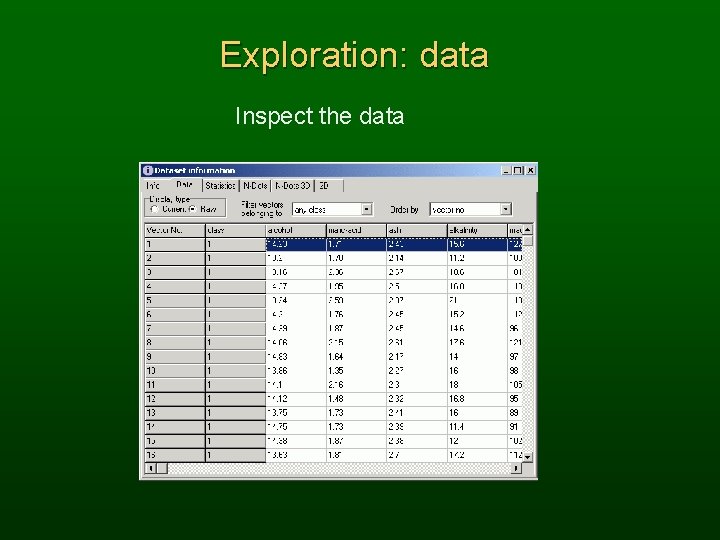

Exploration: data Inspect the data

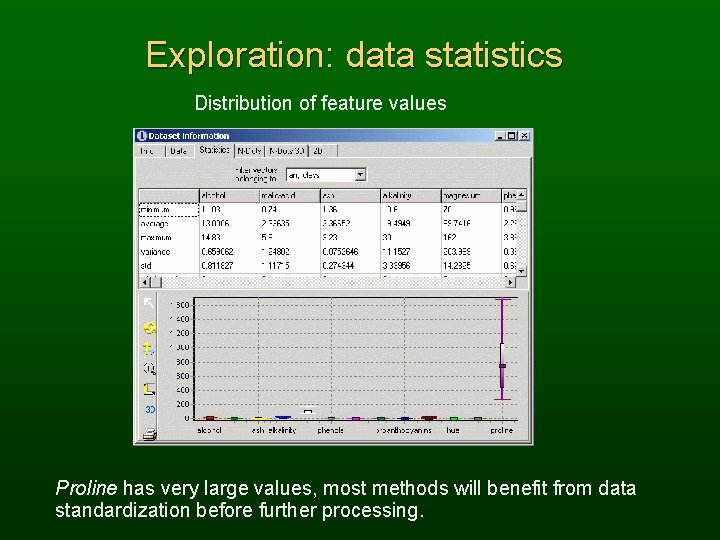

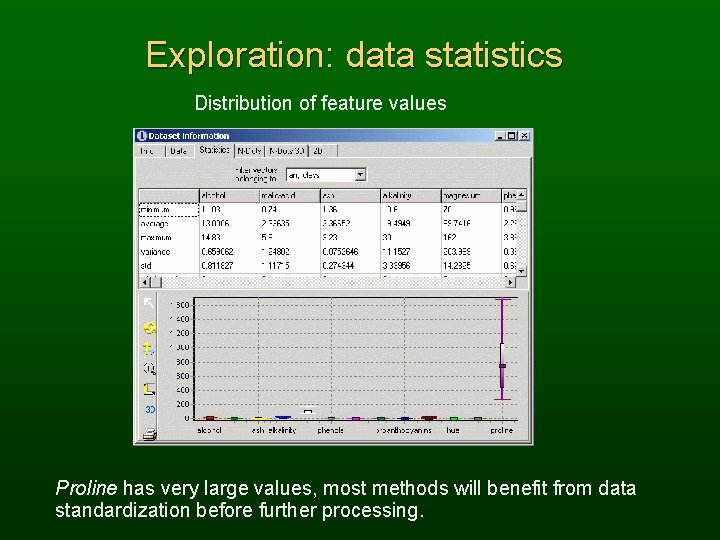

Exploration: data statistics Distribution of feature values Proline has very large values, most methods will benefit from data standardization before further processing.

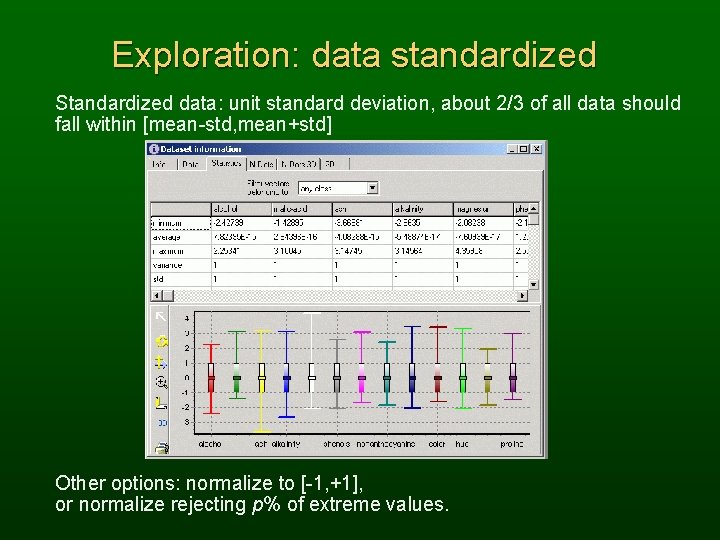

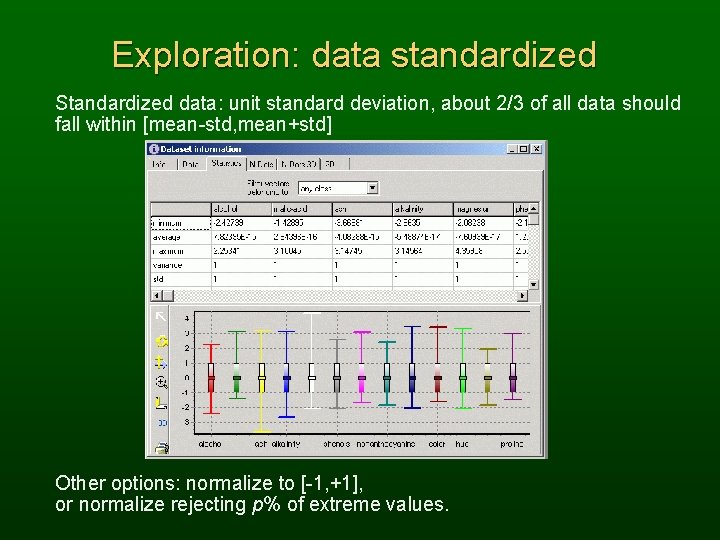

Exploration: data standardized Standardized data: unit standard deviation, about 2/3 of all data should fall within [mean-std, mean+std] Other options: normalize to [-1, +1], or normalize rejecting p% of extreme values.
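The two rescaling options named on this slide can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the function names and the sample `proline` values are made up for the example and are not taken from GhostMiner or from the real Wine column.

```python
from statistics import mean, pstdev

def standardize(values):
    """Rescale a feature to zero mean and unit standard deviation."""
    m, s = mean(values), pstdev(values)
    if s == 0:
        return [0.0 for _ in values]
    return [(v - m) / s for v in values]

def normalize(values, lo=-1.0, hi=1.0):
    """Linearly map a feature onto the interval [lo, hi]."""
    vmin, vmax = min(values), max(values)
    if vmax == vmin:
        return [(lo + hi) / 2 for _ in values]
    return [lo + (hi - lo) * (v - vmin) / (vmax - vmin) for v in values]

# Illustrative values only (hypothetical, chosen to be large like proline).
proline = [1065.0, 1050.0, 1185.0, 735.0, 520.0, 680.0]
z = standardize(proline)
u = normalize(proline)
```

After standardization a feature with very large raw values, such as proline, contributes to distances on the same scale as the other features.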

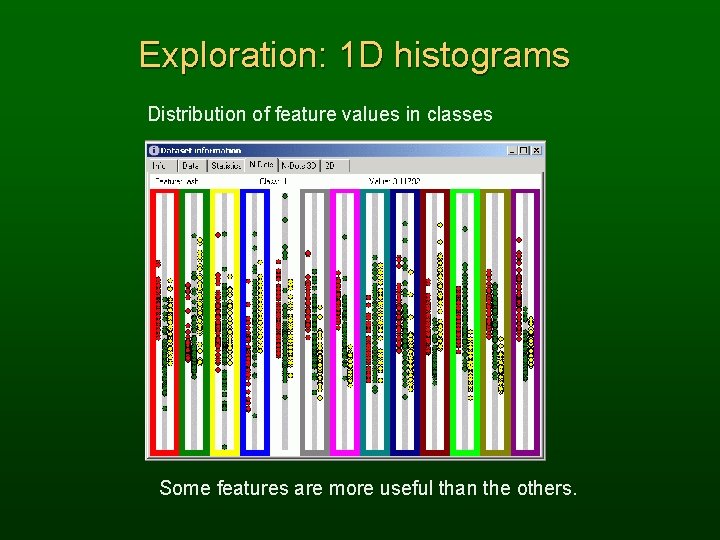

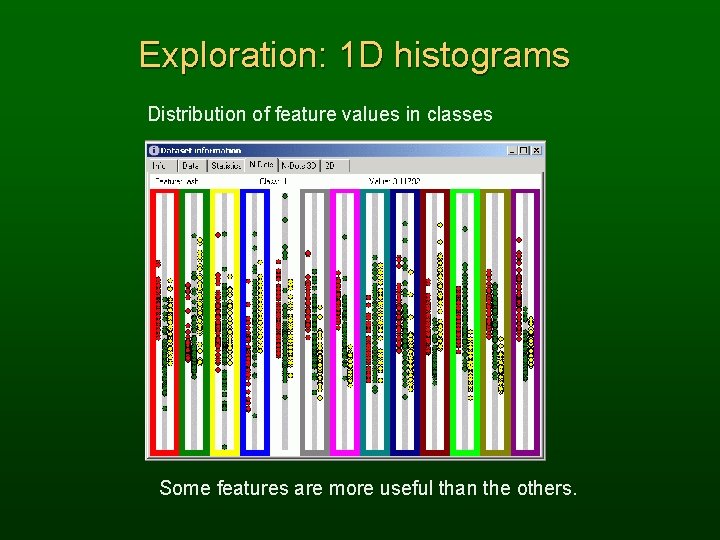

Exploration: 1D histograms Distribution of feature values in classes. Some features are more useful than the others.

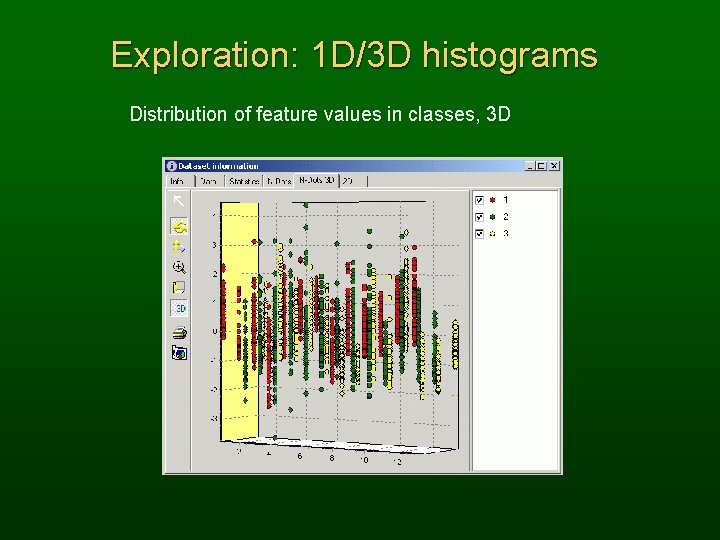

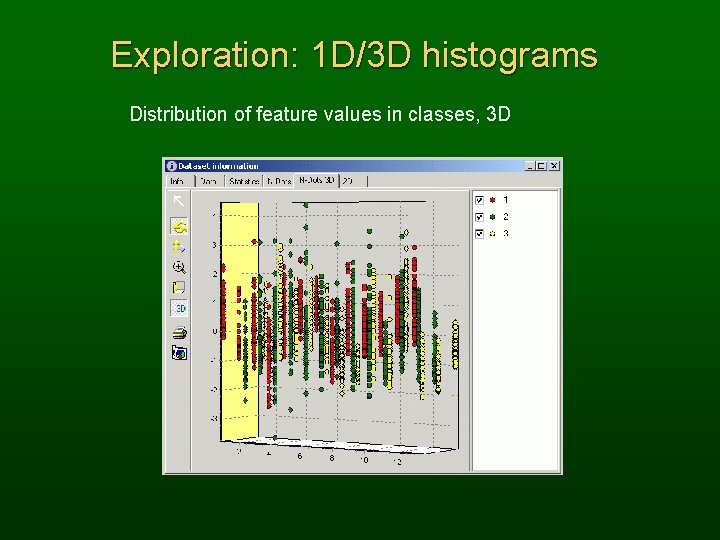

Exploration: 1D/3D histograms Distribution of feature values in classes, 3D

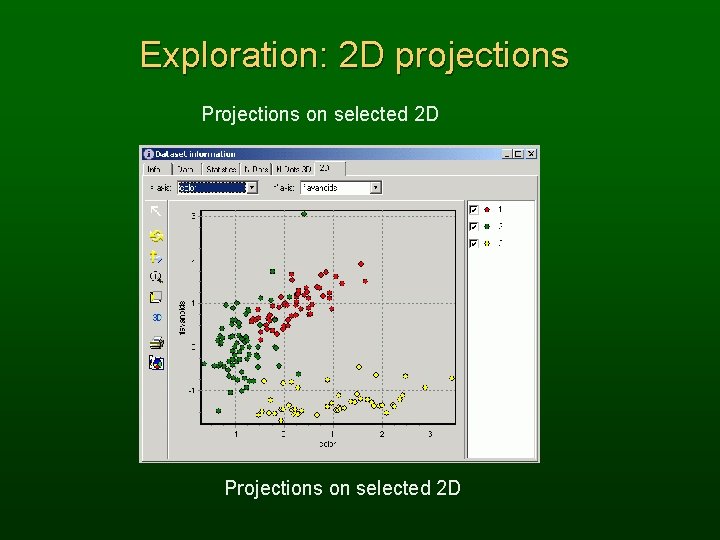

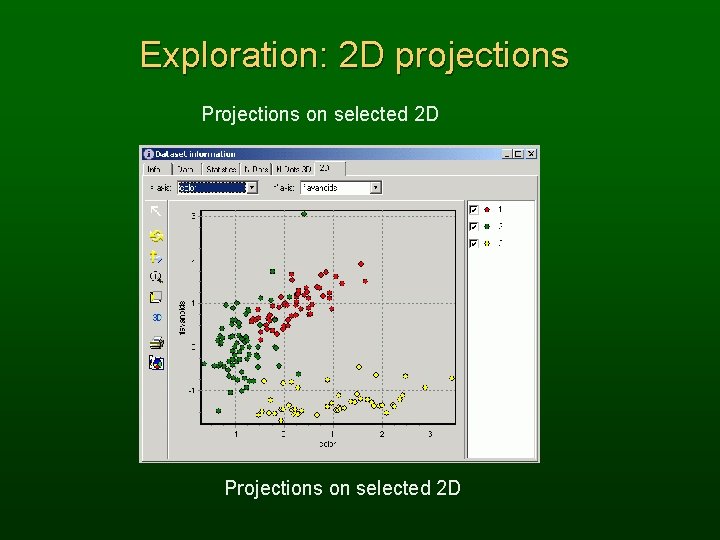

Exploration: 2D projections Projections on selected 2D

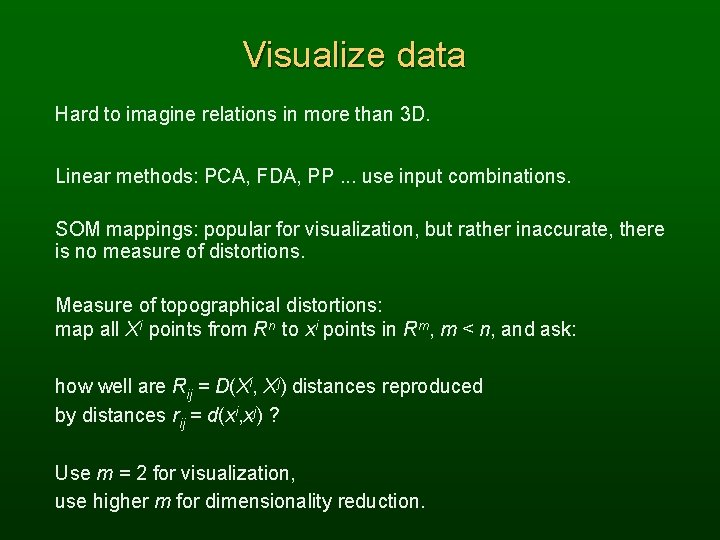

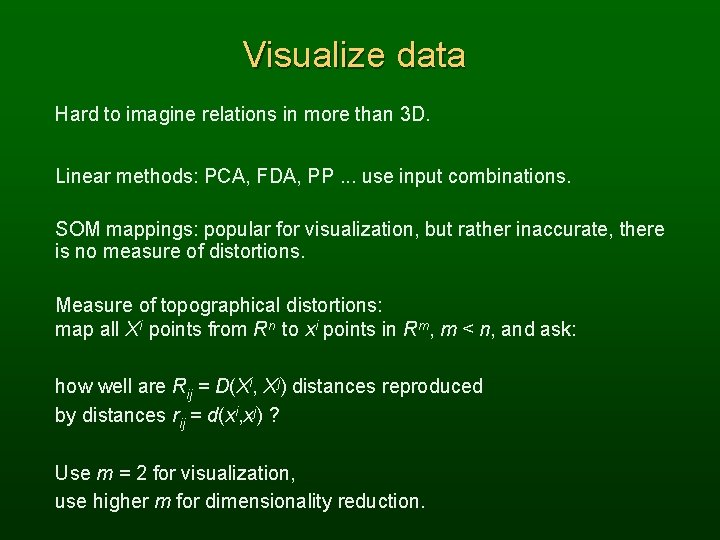

Visualize data Hard to imagine relations in more than 3D. Linear methods: PCA, FDA, PP... use input combinations. SOM mappings: popular for visualization, but rather inaccurate, there is no measure of distortions. Measure of topographical distortions: map all points $X_i$ from $R^n$ to points $x_i$ in $R^m$, $m < n$, and ask: how well are the distances $R_{ij} = D(X_i, X_j)$ reproduced by the distances $r_{ij} = d(x_i, x_j)$? Use m = 2 for visualization, use higher m for dimensionality reduction.

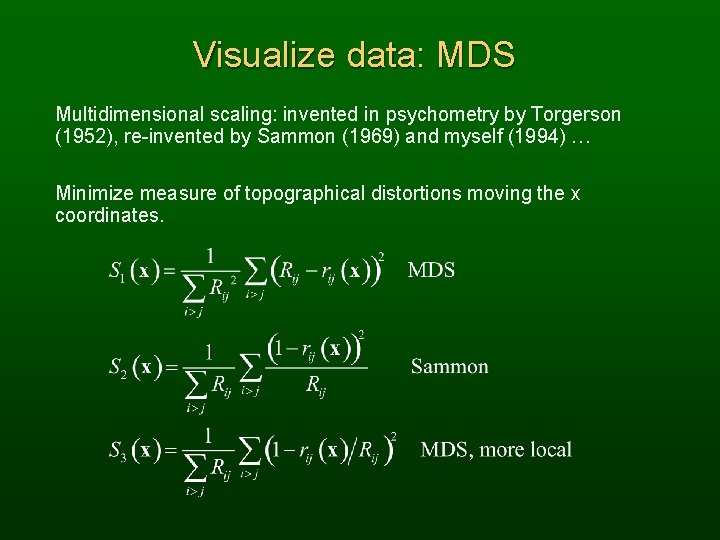

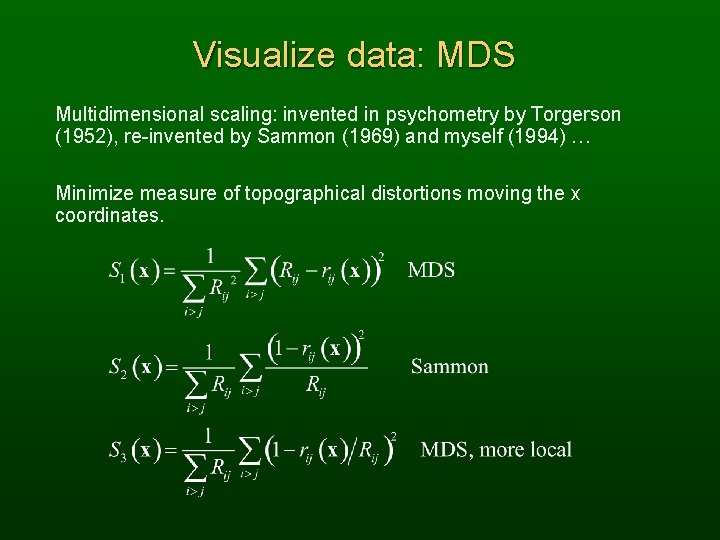

Visualize data: MDS Multidimensional scaling: invented in psychometry by Torgerson (1952), re-invented by Sammon (1969) and myself (1994) … Minimize measure of topographical distortions moving the x coordinates.
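A small sketch of "minimize the distortion by moving the x coordinates" is given below. It assumes the simplest unweighted stress, the sum over pairs of $(R_{ij} - r_{ij})^2$, and plain gradient descent; Sammon's variant and the version used in the talk may weight the terms differently. The function names, the learning rate, and the toy points are assumptions made for the illustration.

```python
import math

def dist(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def stress(X, x):
    """Sum over pairs of (R_ij - r_ij)^2: original vs. mapped distances."""
    n = len(X)
    return sum((dist(X[i], X[j]) - dist(x[i], x[j])) ** 2
               for i in range(n) for j in range(i + 1, n))

def mds_step(X, x, lr=0.02):
    """One gradient-descent step on the low-dimensional coordinates x."""
    n, m = len(x), len(x[0])
    new = [list(p) for p in x]
    for i in range(n):
        for j in range(n):
            r = dist(x[i], x[j])
            if i == j or r == 0:
                continue
            R = dist(X[i], X[j])
            for k in range(m):
                # minus the derivative of (R - r)^2 with respect to x[i][k]
                new[i][k] += lr * 2 * (R - r) * (x[i][k] - x[j][k]) / r
    return new

# Four points in 3D mapped to 2D, starting from an arbitrary fixed layout.
X = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
x = [[0.0, 0.0], [0.5, 0.0], [0.0, 0.5], [0.5, 0.5]]
initial = stress(X, x)
for _ in range(200):
    x = mds_step(X, x)
final = stress(X, x)
```

The remaining stress after convergence is itself useful: it is the measure of topographical distortion that SOM maps do not provide.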

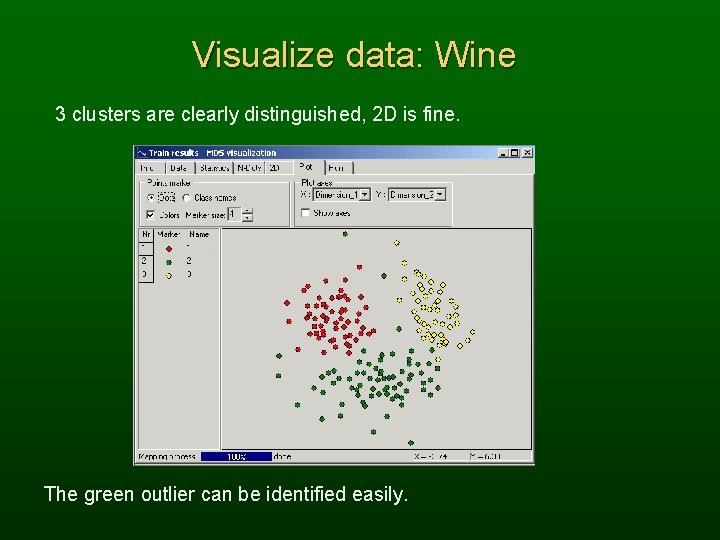

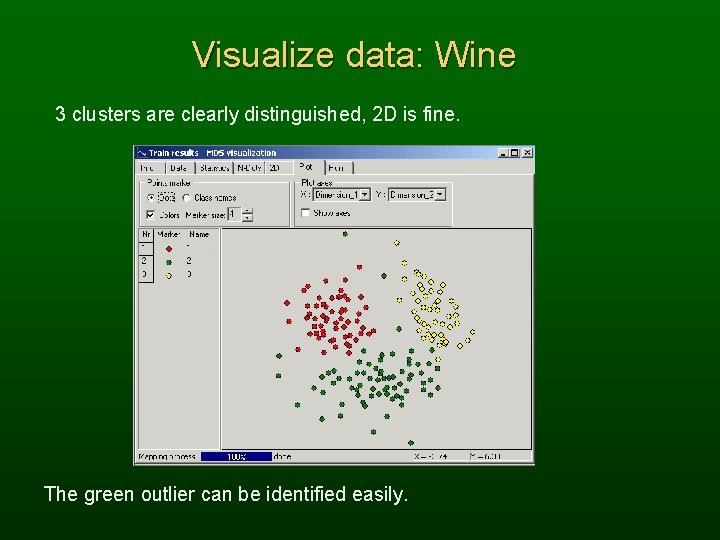

Visualize data: Wine 3 clusters are clearly distinguished, 2D is fine. The green outlier can be identified easily.

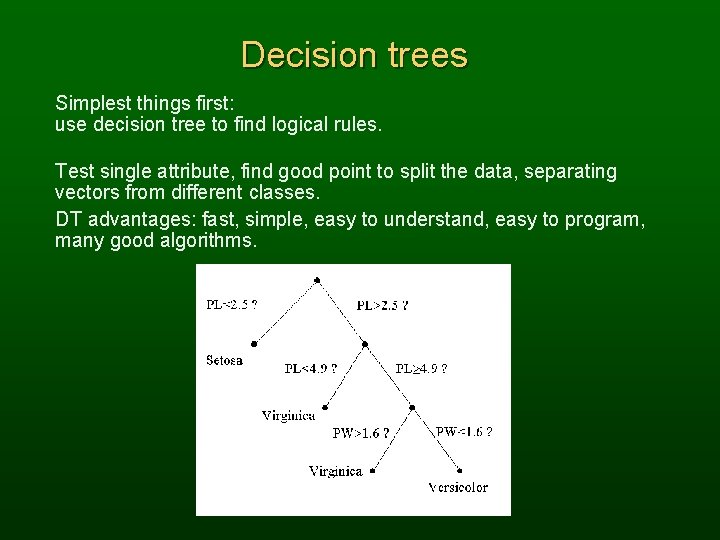

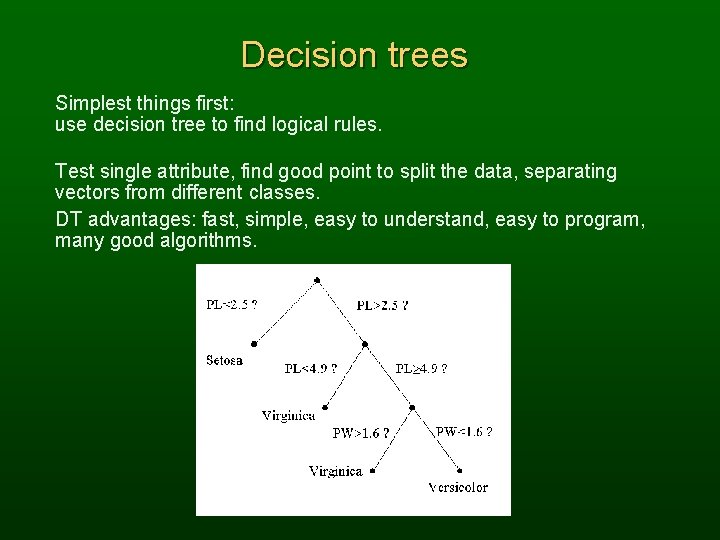

Decision trees Simplest things first: use decision tree to find logical rules. Test single attribute, find good point to split the data, separating vectors from different classes. DT advantages: fast, simple, easy to understand, easy to program, many good algorithms.

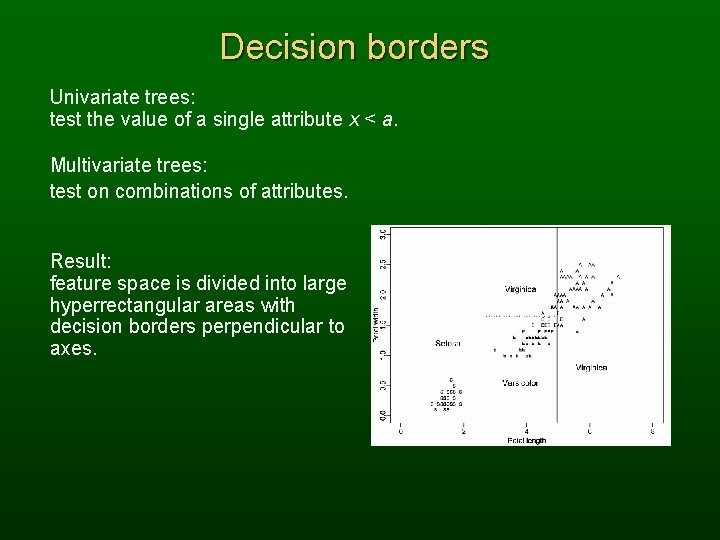

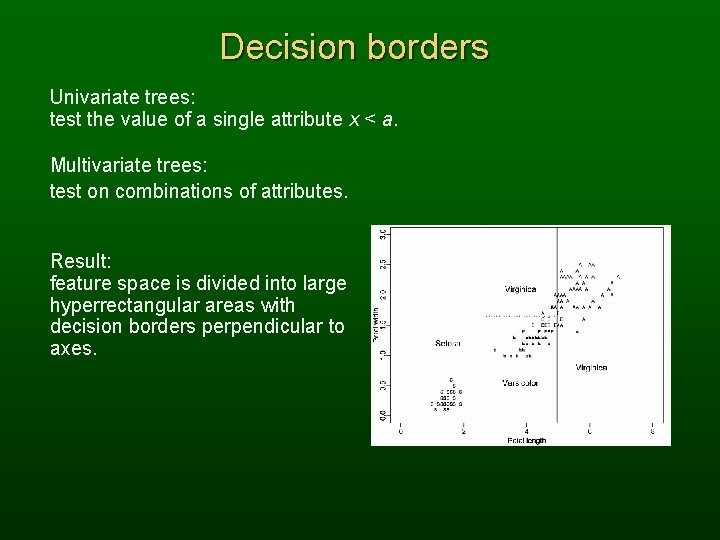

Decision borders Univariate trees: test the value of a single attribute x < a. Multivariate trees: test on combinations of attributes. Result: feature space is divided into large hyperrectangular areas with decision borders perpendicular to axes.

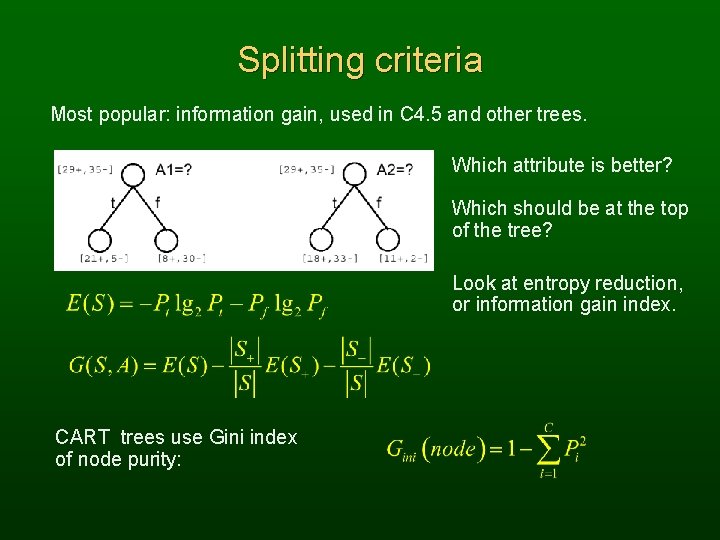

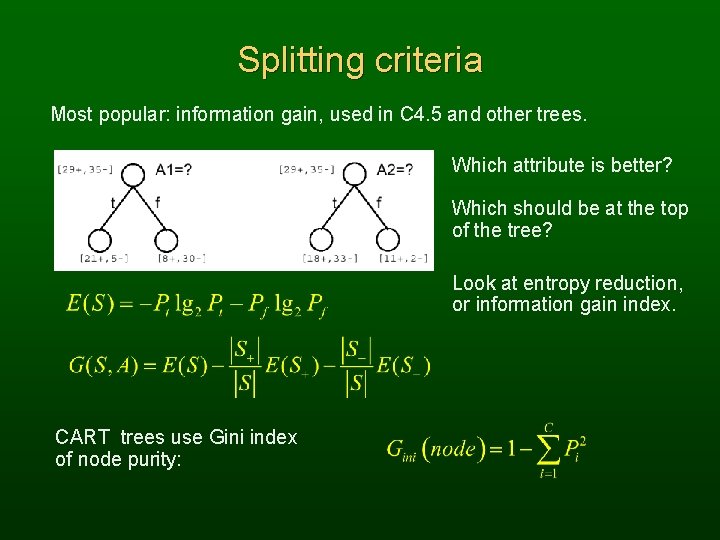

Splitting criteria Most popular: information gain, used in C4.5 and other trees. Which attribute is better? Which should be at the top of the tree? Look at entropy reduction, or information gain index. CART trees use Gini index of node purity:
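The two impurity measures mentioned here can be written down directly. The sketch below uses the textbook definitions of entropy and the Gini index and scores a single threshold split by impurity reduction; the function names are illustrative, and C4.5 itself uses a normalized variant (gain ratio) that is not shown.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (in bits) of the class distribution in a node."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def gini(labels):
    """Gini index of node impurity: 1 - sum of squared class fractions."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def split_gain(values, labels, threshold, impurity=entropy):
    """Impurity reduction obtained by the test value < threshold."""
    left = [y for v, y in zip(values, labels) if v < threshold]
    right = [y for v, y in zip(values, labels) if v >= threshold]
    n = len(labels)
    weighted = sum(len(s) / n * impurity(s) for s in (left, right) if s)
    return impurity(labels) - weighted

values = [1.0, 2.0, 3.0, 4.0]
labels = ["a", "a", "b", "b"]
best = split_gain(values, labels, 2.5)           # perfect split
worse = split_gain(values, labels, 1.5)          # leaves a mixed node
```

The attribute and threshold with the largest reduction go to the top of the tree.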

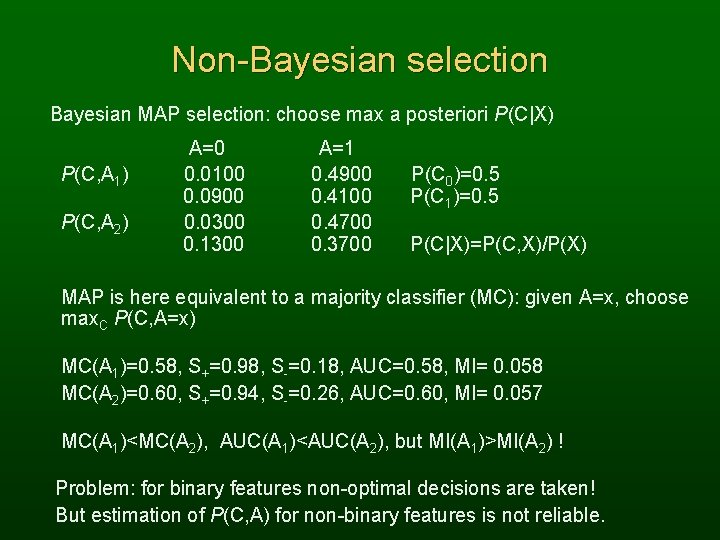

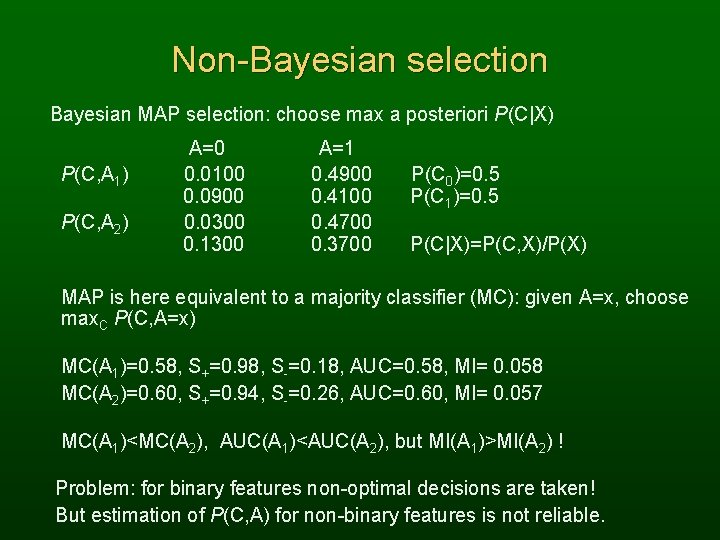

Non-Bayesian selection

Bayesian MAP selection: choose max a posteriori P(C|X)

| | P(C0, A1) | P(C1, A1) | P(C0, A2) | P(C1, A2) |
|---|---|---|---|---|
| A=0 | 0.0100 | 0.0900 | 0.0300 | 0.1300 |
| A=1 | 0.4900 | 0.4100 | 0.4700 | 0.3700 |

P(C0)=0.5, P(C1)=0.5, P(C|X)=P(C, X)/P(X)

MAP is here equivalent to a majority classifier (MC): given A=x, choose $\max_C P(C, A=x)$
MC(A1)=0.58, S+=0.98, S-=0.18, AUC=0.58, MI=0.058
MC(A2)=0.60, S+=0.94, S-=0.26, AUC=0.60, MI=0.057
MC(A1)<MC(A2), AUC(A1)<AUC(A2), but MI(A1)>MI(A2)!

Problem: for binary features non-optimal decisions are taken! But estimation of P(C, A) for non-binary features is not reliable.
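The numbers on this slide can be checked with a few lines of arithmetic. The sketch below takes the joint probabilities P(C, A) from the slide, with each pair read as (C0, C1), and computes the majority-classifier accuracy and the mutual information in bits; the dictionary layout and function names are choices made for the example.

```python
import math

# joint[a] = (P(C0, A=a), P(C1, A=a)), values from the slide
A1 = {0: (0.01, 0.09), 1: (0.49, 0.41)}
A2 = {0: (0.03, 0.13), 1: (0.47, 0.37)}

def majority_accuracy(joint):
    """Accuracy of choosing, for each value of A, the class with max P(C, A)."""
    return sum(max(pc) for pc in joint.values())

def mutual_information(joint):
    """MI(C; A) in bits, computed from the joint distribution."""
    p_c = [sum(joint[a][c] for a in joint) for c in range(2)]
    mi = 0.0
    for pc in joint.values():
        p_a = sum(pc)
        for c in range(2):
            if pc[c] > 0:
                mi += pc[c] * math.log2(pc[c] / (p_a * p_c[c]))
    return mi

mc1, mc2 = majority_accuracy(A1), majority_accuracy(A2)
mi1, mi2 = mutual_information(A1), mutual_information(A2)
```

Ranking by mutual information prefers A1, while the majority classifier is more accurate with A2, which is the mismatch the slide points out.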

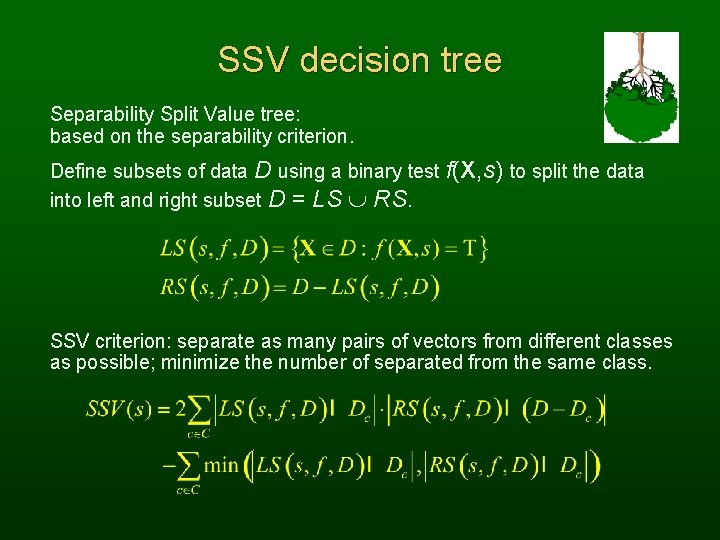

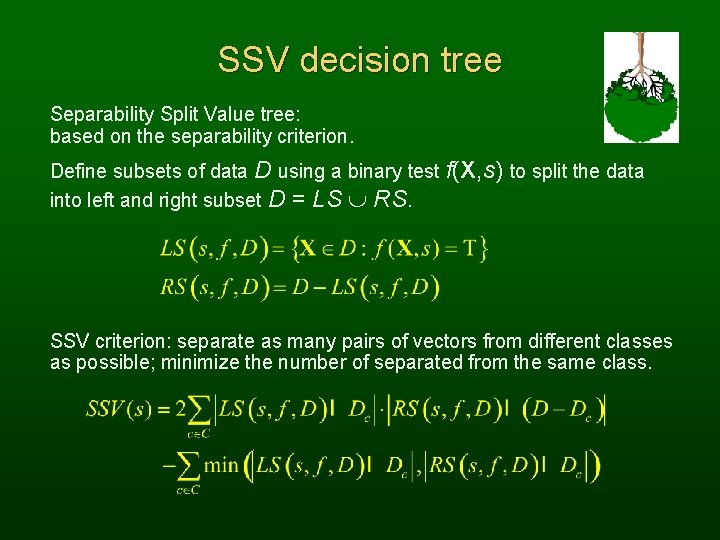

SSV decision tree Separability Split Value tree: based on the separability criterion. Define subsets of data D using a binary test f(X, s) to split the data into left and right subsets, D = LS ∪ RS. SSV criterion: separate as many pairs of vectors from different classes as possible; minimize the number of separated pairs from the same class.
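A rough sketch of such a criterion follows. It assumes one formulation: twice the number of separated pairs from different classes, minus, for each class, the smaller of its left and right counts as a penalty for splitting that class. The exact weighting is an assumption of this example, not something stated on the slide, and the function name is illustrative.

```python
from collections import Counter

def ssv(values, labels, threshold):
    """Separability of the split value < threshold (larger is better)."""
    left = Counter(y for v, y in zip(values, labels) if v < threshold)
    right = Counter(y for v, y in zip(values, labels) if v >= threshold)
    n_right = sum(right.values())
    # pairs (u in left, v in right) that belong to different classes
    separated = sum(left[c] * (n_right - right[c]) for c in left)
    # penalty for classes that end up on both sides of the split
    same_class_split = sum(min(left[c], right[c]) for c in left)
    return 2 * separated - same_class_split

values = [1.0, 2.0, 3.0, 4.0]
labels = ["a", "a", "b", "b"]
clean = ssv(values, labels, 2.5)   # separates all a-b pairs, splits no class
mixed = ssv(values, labels, 1.5)   # separates fewer pairs and splits class a
```

Scanning candidate thresholds for every feature and keeping the one with the largest value gives the split for a node.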

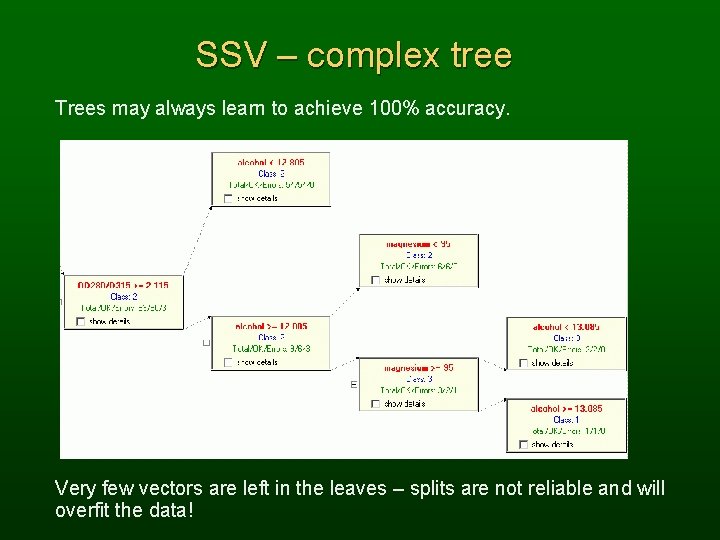

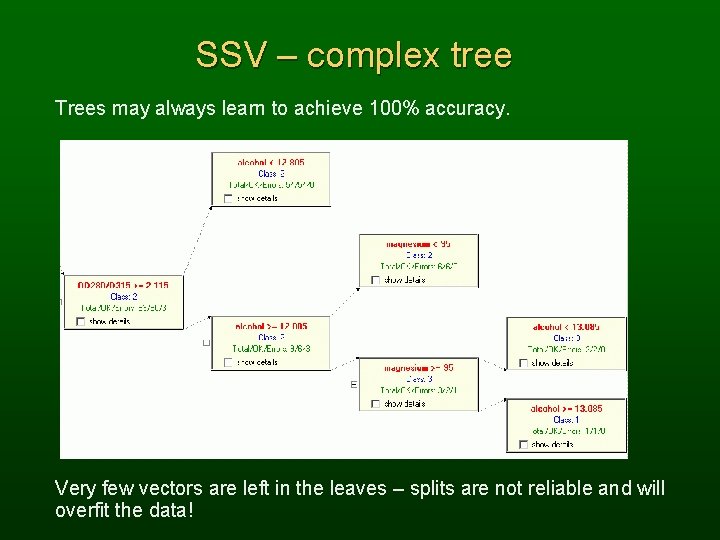

SSV – complex tree Trees may always learn to achieve 100% accuracy. Very few vectors are left in the leaves – splits are not reliable and will overfit the data!

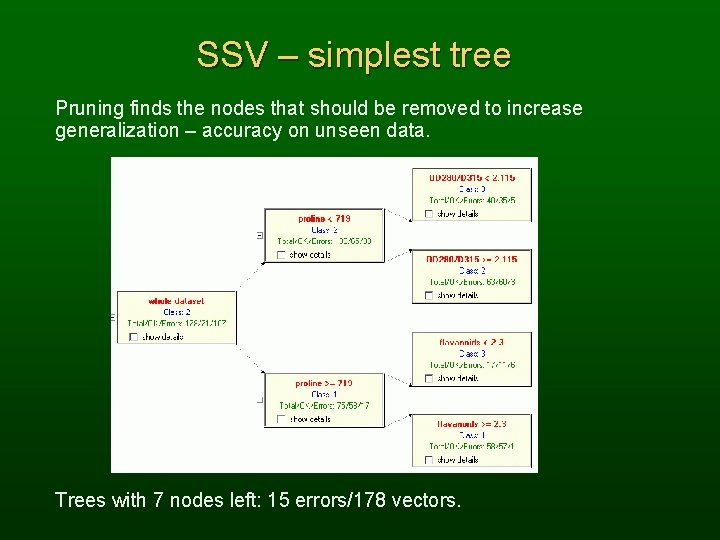

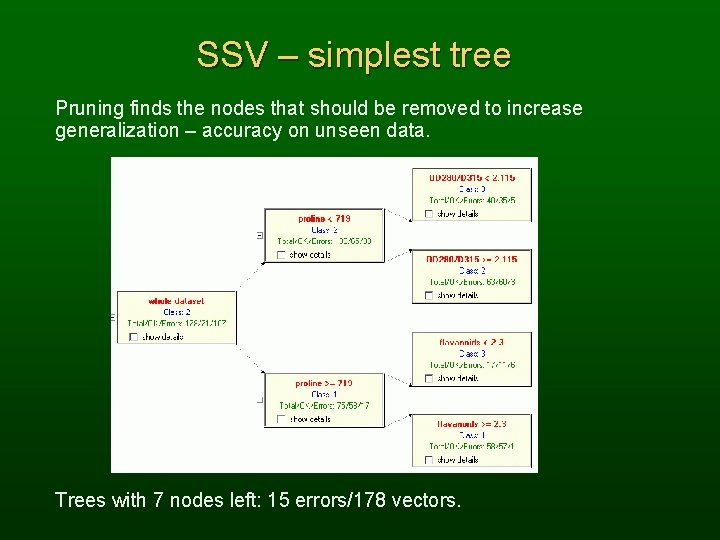

SSV – simplest tree Pruning finds the nodes that should be removed to increase generalization – accuracy on unseen data. Trees with 7 nodes left: 15 errors/178 vectors.

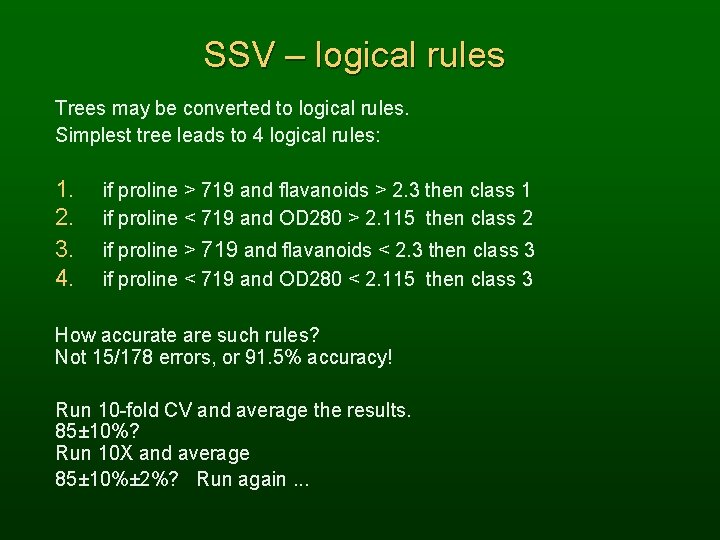

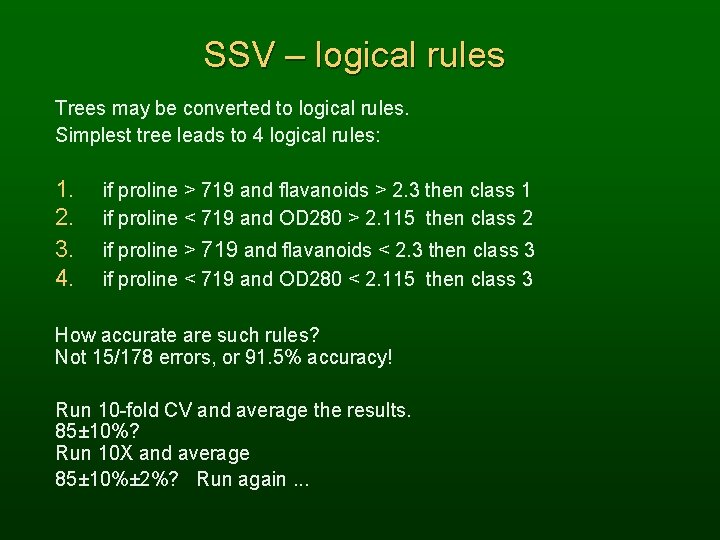

SSV – logical rules

Trees may be converted to logical rules. Simplest tree leads to 4 logical rules:
1. if proline > 719 and flavanoids > 2.3 then class 1
2. if proline < 719 and OD280 > 2.115 then class 2
3. if proline > 719 and flavanoids < 2.3 then class 3
4. if proline < 719 and OD280 < 2.115 then class 3

How accurate are such rules? Not 15/178 errors, or 91.5% accuracy! Run 10-fold CV and average the results. 85±10%? Run 10X and average 85±10%±2%? Run again...

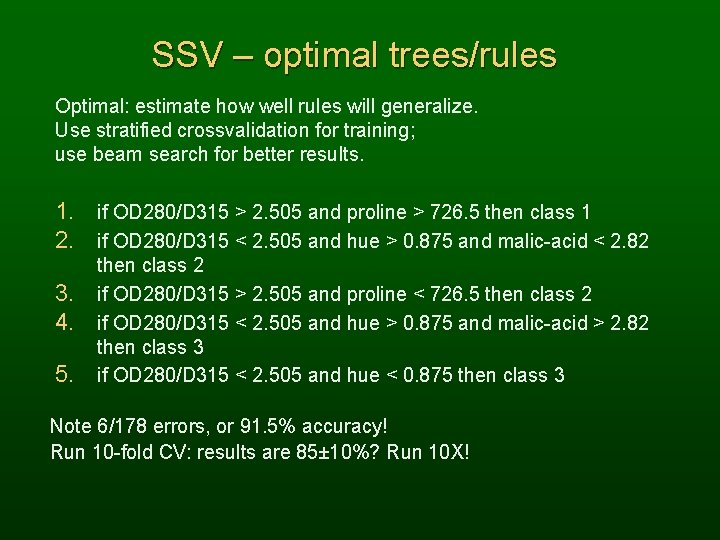

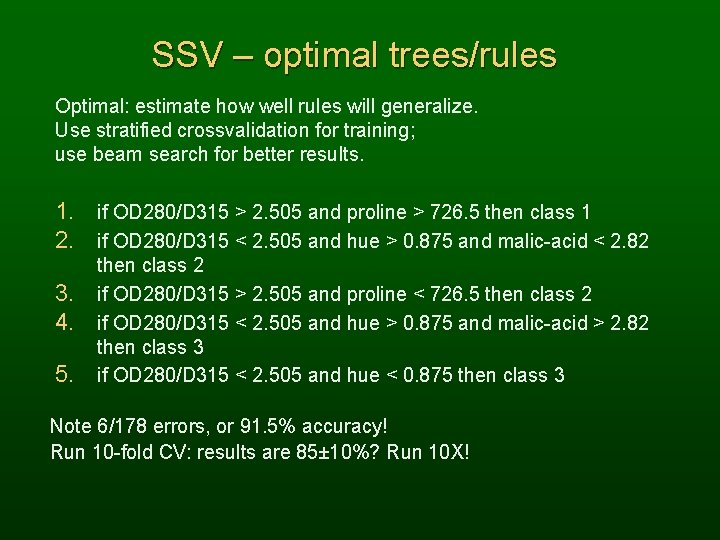

SSV – optimal trees/rules

Optimal: estimate how well rules will generalize. Use stratified crossvalidation for training; use beam search for better results.
1. if OD280/D315 > 2.505 and proline > 726.5 then class 1
2. if OD280/D315 < 2.505 and hue > 0.875 and malic-acid < 2.82 then class 2
3. if OD280/D315 > 2.505 and proline < 726.5 then class 2
4. if OD280/D315 < 2.505 and hue > 0.875 and malic-acid > 2.82 then class 3
5. if OD280/D315 < 2.505 and hue < 0.875 then class 3

Note 6/178 errors, or 96.6% accuracy on the training data! Run 10-fold CV: results are 85±10%? Run 10X!

Logical rules

Crisp logic rules: for continuous x use linguistic variables (predicate functions). $s_k(x) \equiv \mathrm{True}\,[X_k \le x \le X'_k]$, for example:
small(x) = True{x | x < 1}
medium(x) = True{x | x ∈ [1, 2]}
large(x) = True{x | x > 2}

Linguistic variables are used in crisp (propositional, Boolean) logic rules: IF small-height(X) AND has-hat(X) AND has-beard(X) THEN (X is a Brownie) ELSE IF... ELSE...

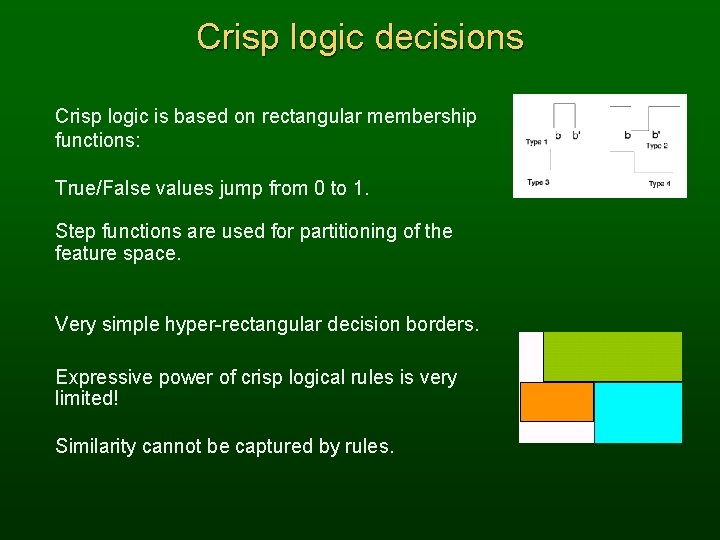

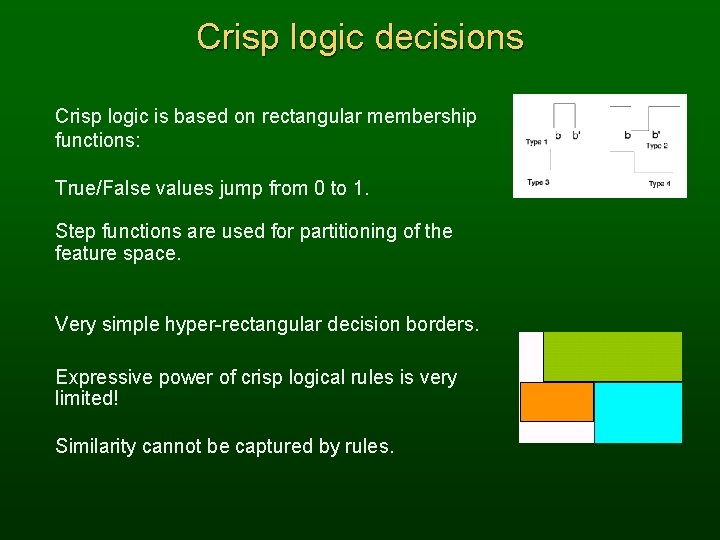

Crisp logic decisions Crisp logic is based on rectangular membership functions: True/False values jump from 0 to 1. Step functions are used for partitioning of the feature space. Very simple hyper-rectangular decision borders. Expressive power of crisp logical rules is very limited! Similarity cannot be captured by rules.

Logical rules - advantages

Logical rules, if simple enough, are preferable.
• Rules may expose limitations of black box solutions.
• Only relevant features are used in rules.
• Rules may sometimes be more accurate than NN and other CI methods.
• Overfitting is easy to control, rules usually have a small number of parameters.
• Rules forever!?

A logical rule about logical rules is: IF the number of rules is relatively small AND the accuracy is sufficiently high THEN rules may be an optimal choice.

Logical rules - limitations

Logical rules are preferred but...
• Only one class is predicted, p(Ci|X, M) = 0 or 1; such a black-and-white picture may be inappropriate in many applications.
• Discontinuous cost functions allow only non-gradient optimization methods, which are more expensive.
• Sets of rules are unstable: a small change in the dataset leads to a large change in the structure of sets of rules.
• Reliable crisp rules may reject some cases as unclassified.
• Interpretation of crisp rules may be misleading.
• Fuzzy rules remove some limitations, but are not so comprehensible.

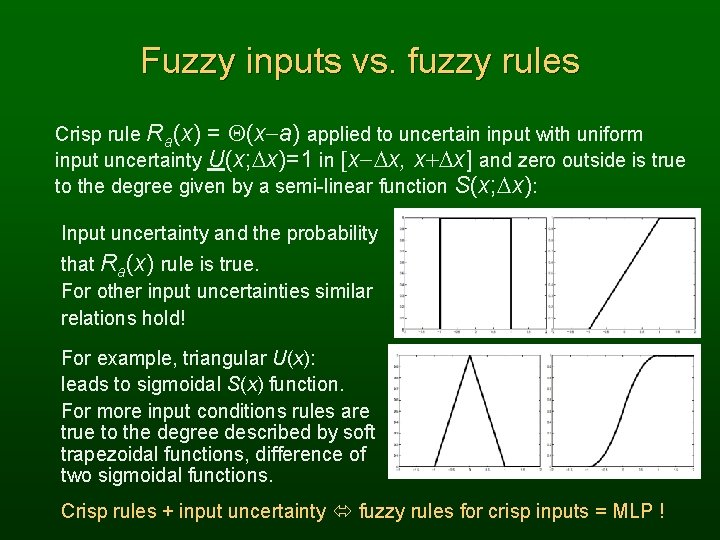

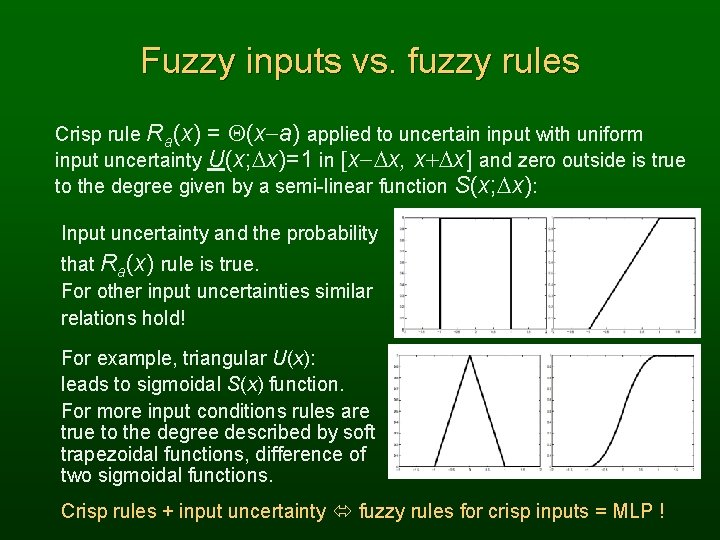

Fuzzy inputs vs. fuzzy rules Crisp rule $R_a(x) = \Theta(x-a)$ applied to uncertain input with uniform input uncertainty $U(x; \Delta x) = 1$ in $[x-\Delta x, x+\Delta x]$ and zero outside is true to the degree given by a semi-linear function $S(x; \Delta x)$: input uncertainty and the probability that the $R_a(x)$ rule is true. For other input uncertainties similar relations hold! For example, triangular U(x) leads to a sigmoidal S(x) function. For more input conditions rules are true to the degree described by soft trapezoidal functions, differences of two sigmoidal functions. Crisp rules + input uncertainty ⇔ fuzzy rules for crisp inputs = MLP!
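For the uniform case the semi-linear function can be written out explicitly: the rule x > a is true to the degree given by the fraction of the uncertainty interval that lies above a. A minimal sketch, with an illustrative function name:

```python
def rule_truth_uniform(x, a, dx):
    """Degree to which the crisp rule x > a holds when the measured value x
    is uncertain, uniformly distributed on [x - dx, x + dx]."""
    if dx == 0:
        return 1.0 if x > a else 0.0
    fraction_above = (x + dx - a) / (2 * dx)
    return min(1.0, max(0.0, fraction_above))

a, dx = 2.0, 0.5
degrees = [rule_truth_uniform(x, a, dx) for x in (1.0, 1.5, 2.0, 2.25, 2.5, 3.0)]
```

The result is 0 well below the threshold, 1 well above it, and rises linearly in between, so the crisp step is replaced by a ramp of width 2·dx.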

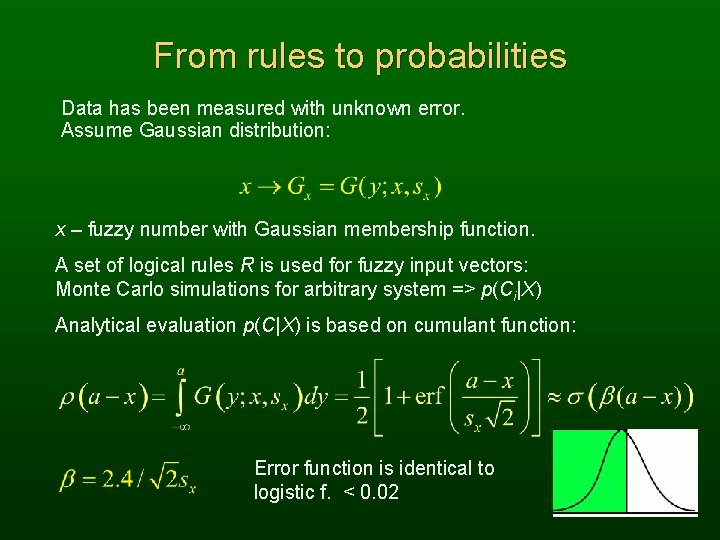

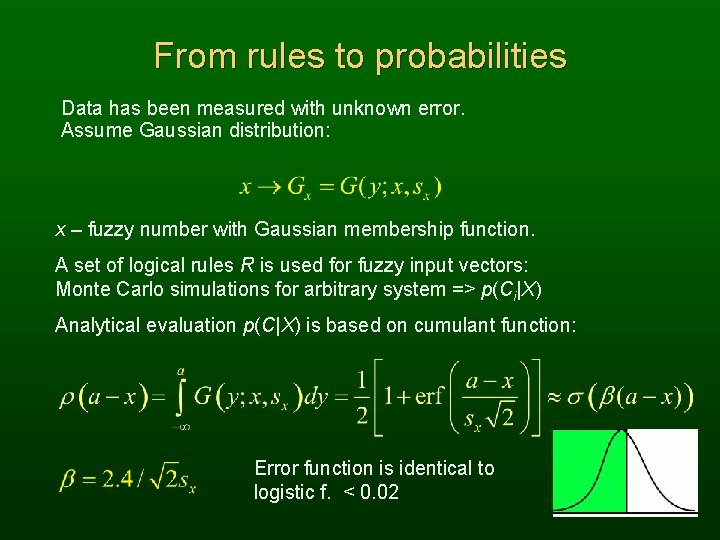

From rules to probabilities Data has been measured with unknown error. Assume Gaussian distribution: x – fuzzy number with Gaussian membership function. A set of logical rules R is used for fuzzy input vectors: Monte Carlo simulations for arbitrary system => p(Ci|X). Analytical evaluation of p(C|X) is based on the cumulant function; the error function agrees with the logistic function to within 0.02.

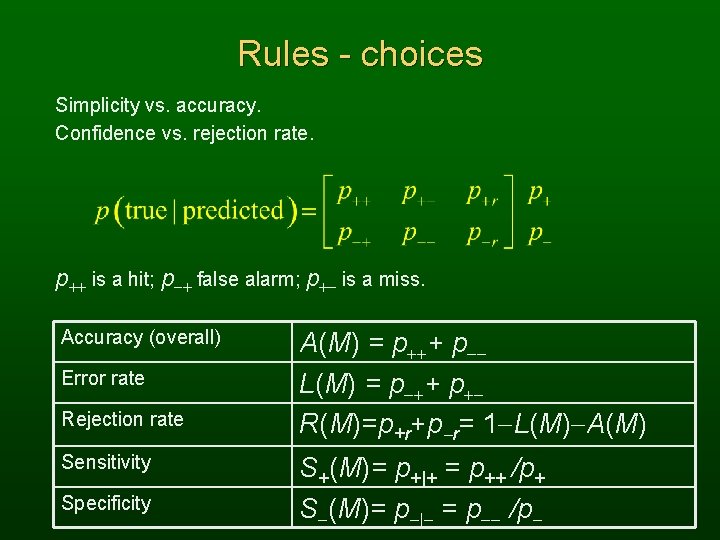

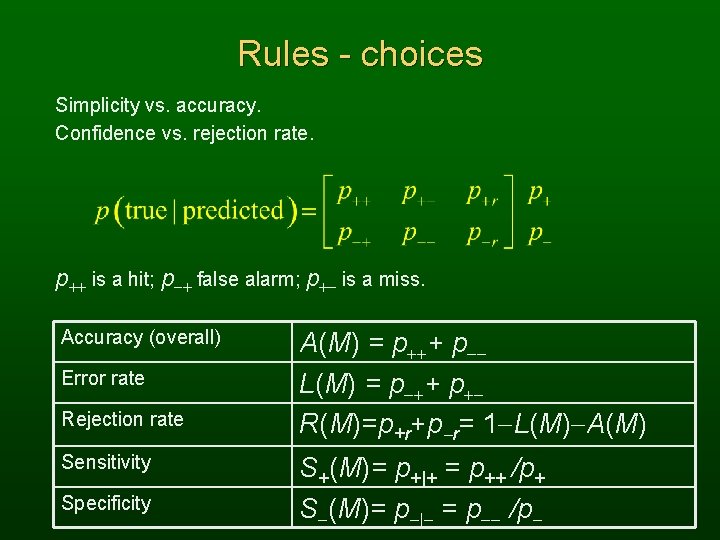

Rules - choices

Simplicity vs. accuracy. Confidence vs. rejection rate.
$p_{++}$ is a hit; $p_{-+}$ is a false alarm; $p_{+-}$ is a miss.

Accuracy (overall): $A(M) = p_{++} + p_{--}$
Error rate: $L(M) = p_{-+} + p_{+-}$
Rejection rate: $R(M) = p_{+r} + p_{-r} = 1 - L(M) - A(M)$
Sensitivity: $S_+(M) = p_{+|+} = p_{++}/p_+$
Specificity: $S_-(M) = p_{-|-} = p_{--}/p_-$
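These quantities follow directly from the joint probabilities of true class and rule outcome. The sketch below assumes the first index is the true class and the second is the prediction, with `'r'` marking rejected cases; the probabilities are made-up numbers for illustration.

```python
def rule_measures(p):
    """p[(true, predicted)] are joint probabilities; predicted 'r' = rejected."""
    p_pos = p['+', '+'] + p['+', '-'] + p['+', 'r']   # a priori P(+)
    p_neg = p['-', '-'] + p['-', '+'] + p['-', 'r']   # a priori P(-)
    return {
        "accuracy": p['+', '+'] + p['-', '-'],
        "error": p['-', '+'] + p['+', '-'],
        "rejection": p['+', 'r'] + p['-', 'r'],
        "sensitivity": p['+', '+'] / p_pos,
        "specificity": p['-', '-'] / p_neg,
        "p_pos": p_pos,
        "p_neg": p_neg,
    }

# Hypothetical outcome table (sums to 1).
p = {('+', '+'): 0.40, ('+', '-'): 0.05, ('+', 'r'): 0.05,
     ('-', '-'): 0.42, ('-', '+'): 0.03, ('-', 'r'): 0.05}
m = rule_measures(p)
```

With these numbers the rejection rate equals 1 minus error minus accuracy, and the accuracy equals the sensitivity and specificity weighted by the a priori class probabilities.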

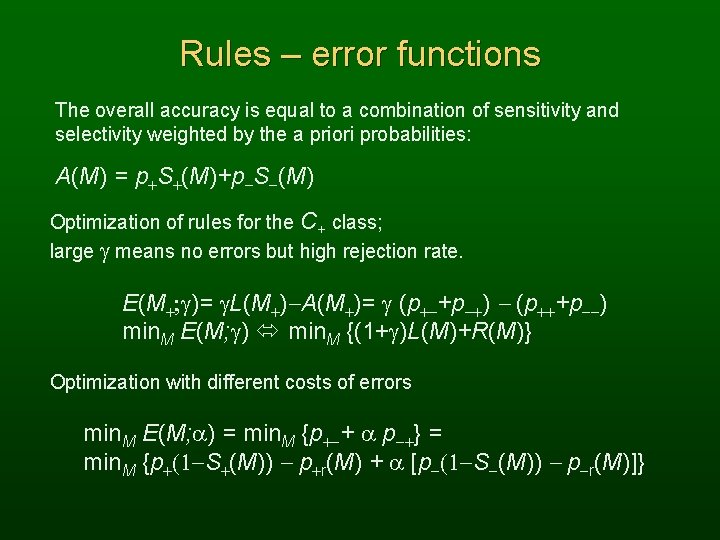

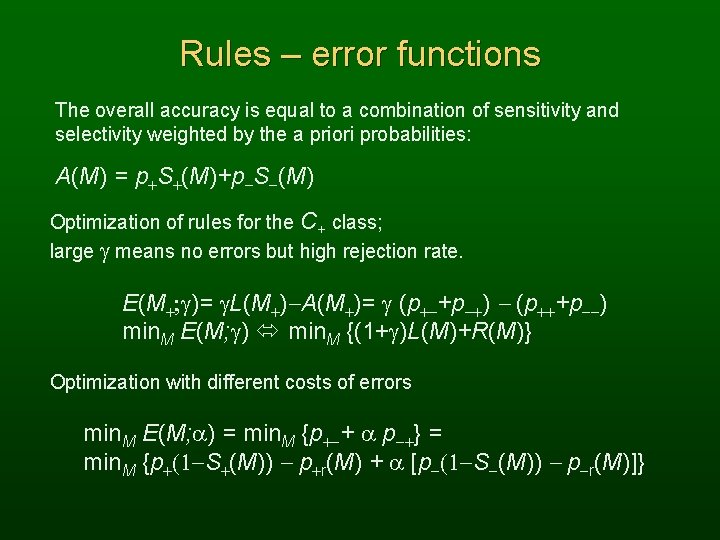

Rules – error functions

The overall accuracy is equal to a combination of sensitivity and specificity weighted by the a priori probabilities:
$A(M) = p_+ S_+(M) + p_- S_-(M)$

Optimization of rules for the $C_+$ class; large $\gamma$ means no errors but a high rejection rate.
$E(M_+; \gamma) = \gamma L(M_+) - A(M_+) = \gamma (p_{+-} + p_{-+}) - (p_{++} + p_{--})$
$\min_M E(M; \gamma) \Leftrightarrow \min_M \{(1+\gamma) L(M) + R(M)\}$

Optimization with different costs of errors:
$\min_M E(M; \alpha) = \min_M \{p_{+-} + \alpha\, p_{-+}\} = \min_M \{p_+(1 - S_+(M)) - p_{+r}(M) + \alpha [p_-(1 - S_-(M)) - p_{-r}(M)]\}$

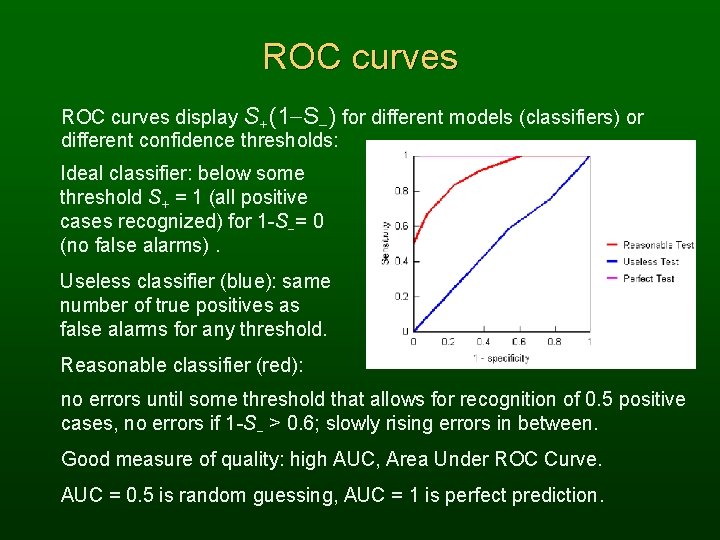

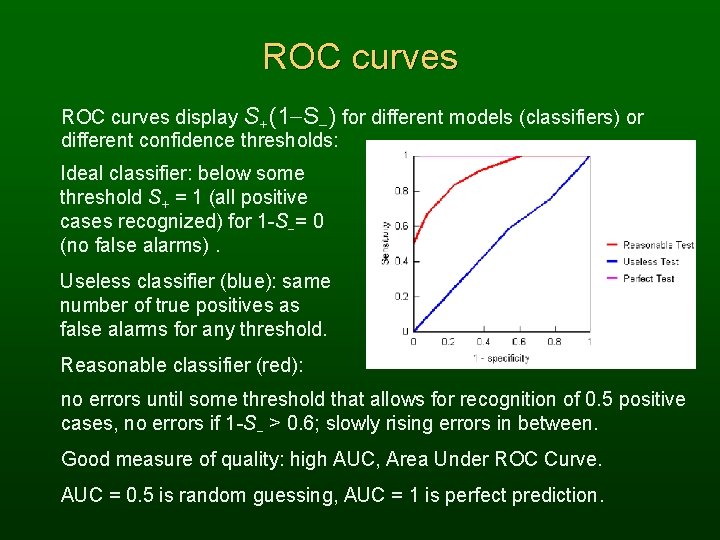

ROC curves display $S_+$ vs. $(1 - S_-)$ for different models (classifiers) or different confidence thresholds. Ideal classifier: below some threshold $S_+ = 1$ (all positive cases recognized) for $1 - S_- = 0$ (no false alarms). Useless classifier (blue): same number of true positives as false alarms for any threshold. Reasonable classifier (red): no errors until some threshold that allows for recognition of 0.5 positive cases, no errors if $1 - S_- > 0.6$; slowly rising errors in between. Good measure of quality: high AUC, Area Under ROC Curve. AUC = 0.5 is random guessing, AUC = 1 is perfect prediction.
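As a small illustration, the ROC points and the area under the curve can be computed from classifier scores by sweeping the confidence threshold. The sketch assumes binary labels (1 = positive, 0 = negative) and distinct scores; ties would need to be grouped, which is not handled here. Names and data are illustrative.

```python
def roc_points(scores, labels):
    """Return the (1 - S-, S+) points obtained by lowering the threshold."""
    pos = sum(labels)
    neg = len(labels) - pos
    points = [(0.0, 0.0)]
    tp = fp = 0
    for _, y in sorted(zip(scores, labels), key=lambda t: -t[0]):
        if y == 1:
            tp += 1
        else:
            fp += 1
        points.append((fp / neg, tp / pos))
    return points

def auc(points):
    """Area under the ROC curve by the trapezoid rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))

scores = [0.9, 0.8, 0.7, 0.6]
perfect = auc(roc_points(scores, [1, 1, 0, 0]))
partial = auc(roc_points(scores, [1, 0, 1, 0]))
reversed_order = auc(roc_points(scores, [0, 0, 1, 1]))
```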

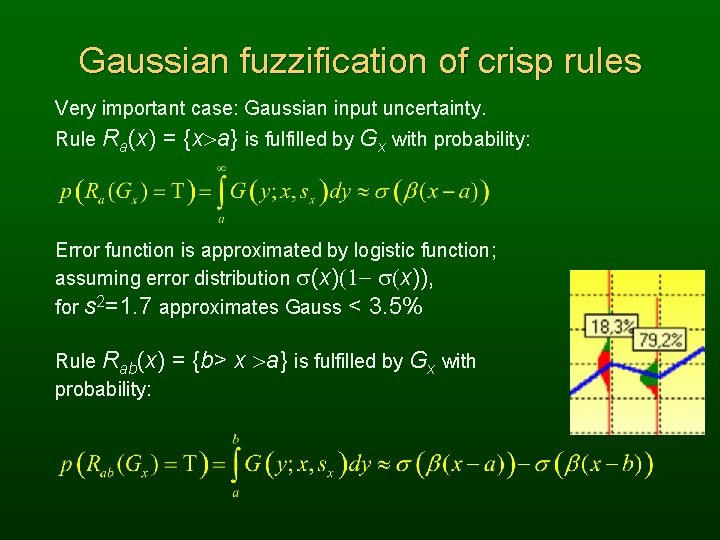

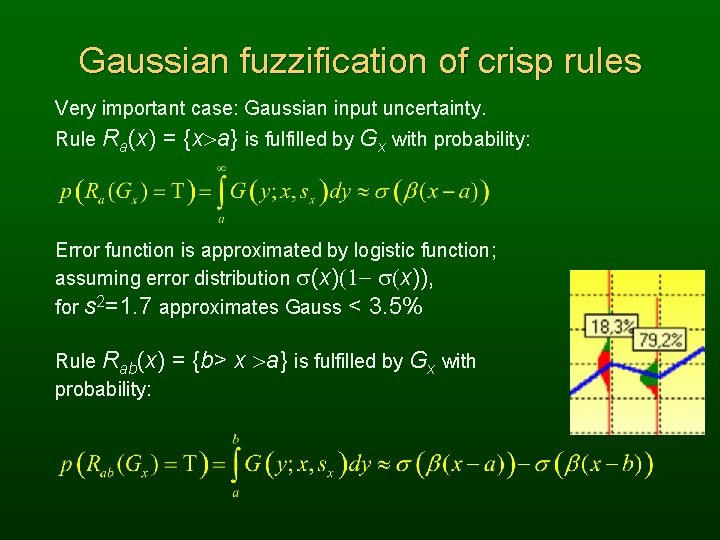

Gaussian fuzzification of crisp rules Very important case: Gaussian input uncertainty. Rule $R_a(x) = \{x > a\}$ is fulfilled by $G_x$ with probability given by the cumulative Gaussian (error) function. The error function is approximated by the logistic function; assuming error distribution $\sigma(x)(1 - \sigma(x))$, for $s^2 = 1.7$ it approximates the Gaussian to within 3.5%. Rule $R_{ab}(x) = \{b > x > a\}$ is fulfilled by $G_x$ with probability:
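The closeness of the two functions is easy to check numerically. The sketch below compares the exact Gaussian probability that x > a with a logistic function of slope 1.7/s; the slope constant is an assumption of this example (a commonly used value), and the function names are illustrative. The interval rule is then approximated by a difference of two logistic functions.

```python
import math

def p_greater_gauss(x, a, s):
    """P(value > a) when the measured x has Gaussian uncertainty with std s."""
    return 0.5 * (1.0 + math.erf((x - a) / (s * math.sqrt(2.0))))

def p_greater_logistic(x, a, s, beta=1.7):
    """Logistic approximation of the same probability (beta is assumed)."""
    return 1.0 / (1.0 + math.exp(-beta * (x - a) / s))

def p_between_logistic(x, a, b, s, beta=1.7):
    """Approximate P(a < value < b) as a difference of two logistic functions."""
    return p_greater_logistic(x, a, s, beta) - p_greater_logistic(x, b, s, beta)

a, s = 0.0, 1.0
grid = [a + t / 100.0 for t in range(-600, 601)]
max_diff = max(abs(p_greater_gauss(x, a, s) - p_greater_logistic(x, a, s))
               for x in grid)
```

On this grid the largest absolute difference stays below 0.02, so gradient-based training of sigmoidal units can stand in for the Gaussian integral.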

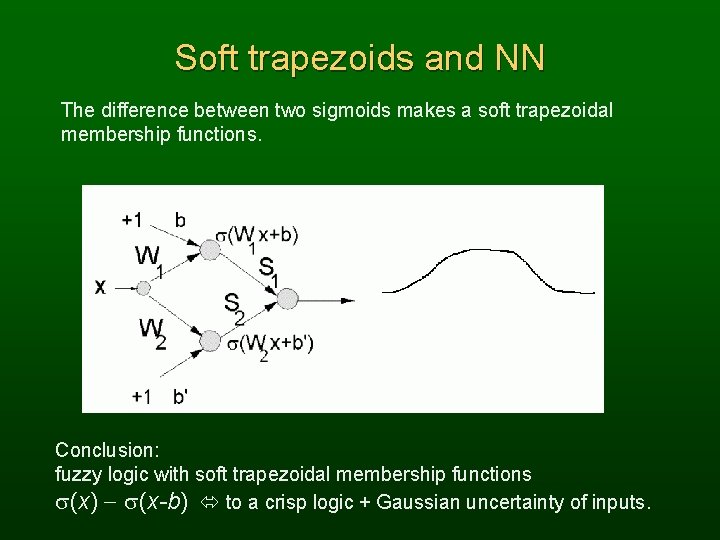

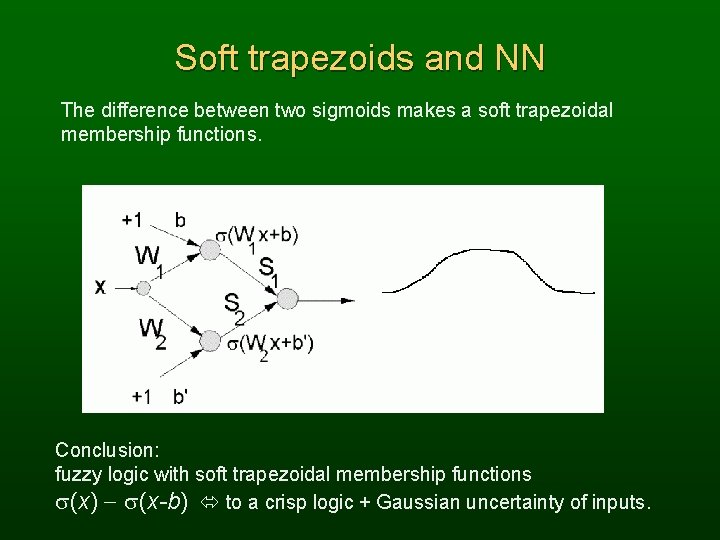

Soft trapezoids and NN The difference between two sigmoids makes a soft trapezoidal membership function. Conclusion: fuzzy logic with soft trapezoidal membership functions $\sigma(x) - \sigma(x-b)$ is equivalent to crisp logic + Gaussian uncertainty of inputs.

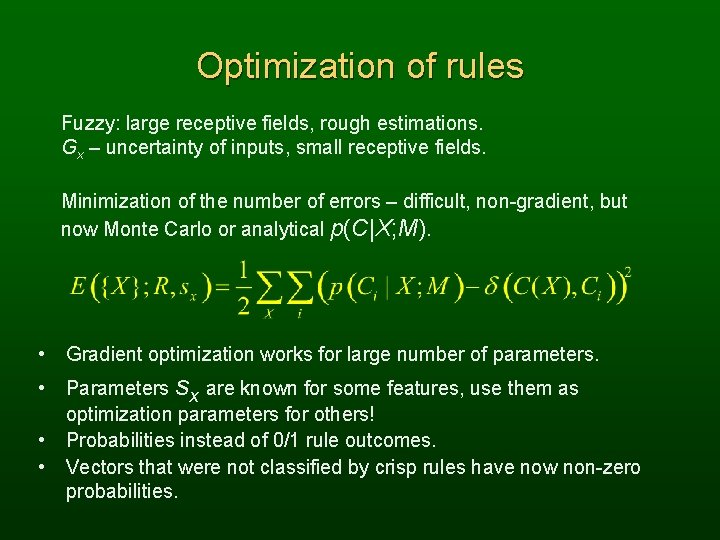

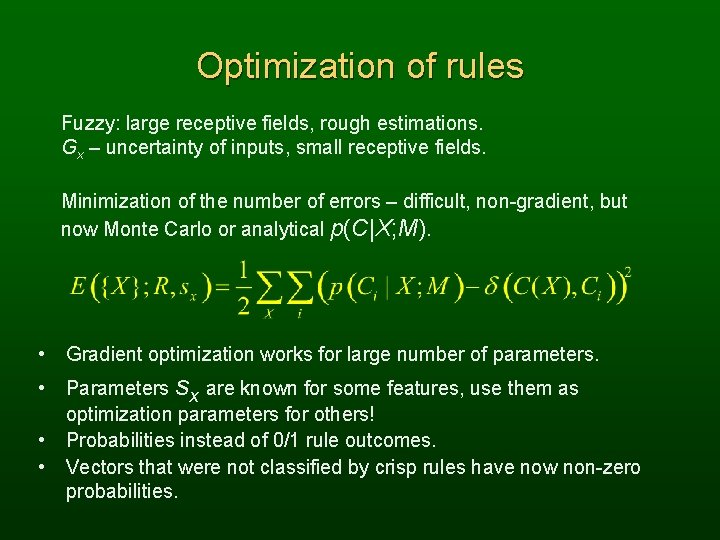

Optimization of rules

Fuzzy: large receptive fields, rough estimations. $G_x$ – uncertainty of inputs, small receptive fields. Minimization of the number of errors – difficult, non-gradient, but now Monte Carlo or analytical p(C|X; M).
• Gradient optimization works for large number of parameters.
• Parameters $s_x$ are known for some features, use them as optimization parameters for others!
• Probabilities instead of 0/1 rule outcomes.
• Vectors that were not classified by crisp rules now have non-zero probabilities.

Mushrooms

The Mushroom Guide: no simple rule for mushrooms; no rule like: ‘leaflets three, let it be’ for Poisonous Oak and Ivy. 8124 cases, 51.8% are edible, the rest non-edible. 22 symbolic attributes, up to 12 values each, equivalent to 118 logical features, or $2^{118} \approx 3 \cdot 10^{35}$ possible input vectors.

Cap Shape: bell, conical, convex, flat, knobbed, sunken
Cap Surface: fibrous, grooves, scaly, smooth
Cap Color: brown, buff, cinnamon, gray, green, pink, red, white, yellow
Bruises: bruised, not bruised
Odor: almond, anise, creosote, fishy, foul, musty, none, pungent, spicy
Spore print color: black, brown, buff, chocolate, green... yellow
Gill Attachment: attached, descending, free, notched
Gill Spacing: close, crowded, distant
Population: abundant, clustered, numerous, scattered, several, solitary
Habitat: grasses, leaves, meadows, paths, urban, waste, woods...

Mushrooms data Mushroom-3 is: edible, bell, smooth, white, bruises, almond, free, close, broad, white, enlarging, club, smooth, white, partial, white, one, pendant, black, scattered, meadows Mushroom-4 is: poisonous, convex, smooth, white, bruises, pungent, free, close, narrow, white, enlarging, equal, smooth, white, partial, white, one, pendant, black, scattered, urban Mushroom-5 is: poisonous, convex, smooth, white, bruises, pungent, free, close, narrow, pink, enlarging, equal, smooth, white, partial, white, one, pendant, black, several, urban Mushroom-8000 is: poisonous, convex, smooth, white, bruises, pungent, free, close, narrow, pink, enlarging, equal, smooth, white, partial, white, one, pendant, brown, scattered, urban. What knowledge is hidden in this data?

Mushroom rule To eat or not to eat, this is the question! Well, not any more... Safe rule for edible mushrooms found by the SSV decision tree and MLP2LN network: IF odor is none or almond or anise AND spore_print_color is not green THEN mushroom is edible. This rule makes only 48 errors, is 99.41% correct. This is why animals have such a good sense of smell! What does it tell us about odor receptors in animal noses? This rule has been quoted by > 50 encyclopedias so far!

Mushrooms rules

To eat or not to eat, this is the question! Well, not any more... A mushroom is poisonous if:
R1) odor = ¬(almond ∨ anise ∨ none); 120 errors, 98.52%
R2) spore-print-color = green; 48 errors, 99.41%
R3) odor = none ∧ stalk-surface-below-ring = scaly ∧ stalk-color-above-ring = ¬brown; 8 errors, 99.90%
R4) habitat = leaves ∧ cap-color = white; no errors!

R1 + R2 are quite stable, found even with 10% of data; R3 and R4 may be replaced by other rules, e.g.:
R'3) gill-size = narrow ∧ stalk-surface-above-ring = (silky ∨ scaly)
R'4) gill-size = narrow ∧ population = clustered

Only 5 of 22 attributes used! Simplest possible rules? 100% in CV tests - structure of this data is completely clear.

Recurrence of breast cancer Institute of Oncology, University Medical Center, Ljubljana. 286 cases, 201 no-recurrence (70.3%), 85 recurrence cases (29.7%). 9 symbolic features: age (9 bins), tumor-size (12 bins), nodes involved (13 bins), degree-malignant (1, 2, 3), area, radiation, menopause, node-caps. Example case: no-recurrence, 40-49, premeno, 25-29, 0-2, ?, 2, left, right_low, yes. Many systems were used on this data with 65-78% accuracy reported. Best single rule: IF (nodes-involved ∉ [0, 2] ∧ degree-malignant = 3) THEN recurrence ELSE no-recurrence. 77% accuracy, only trivial knowledge in the data: highly malignant cancer involving many nodes is likely to strike back.

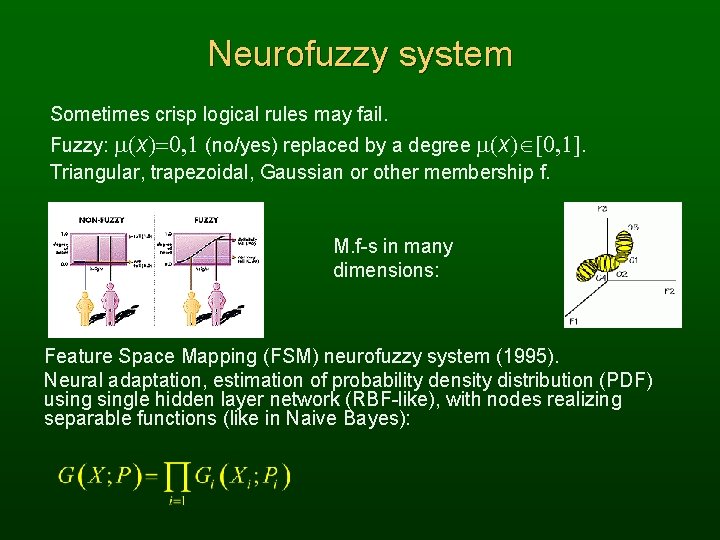

Neurofuzzy system Sometimes crisp logical rules may fail. Fuzzy: μ(x) ∈ {0, 1} (no/yes) replaced by a degree μ(x) ∈ [0, 1]. Triangular, trapezoidal, Gaussian or other membership functions. Membership functions in many dimensions: Feature Space Mapping (FSM) neurofuzzy system (1995). Neural adaptation, estimation of probability density distribution (PDF) using a single hidden layer network (RBF-like), with nodes realizing separable functions (like in Naive Bayes):

FSM Initialize using clusterization or decision trees. Triangular & Gaussian functions for fuzzy rules. Rectangular functions for crisp rules. Between 9-14 rules with triangular membership functions are created; accuracy in 10xCV tests about 96±4.5%. Similar results obtained with Gaussian functions. Rectangular functions: simple rules are created, many nearly equivalent descriptions of this data exist. If proline > 929.5 then class 1 (48 cases, 45 correct + 2 recovered by other rules). If color < 3.79285 then class 2 (63 cases, 60 correct). Interesting rules, but overall accuracy is only 88±9%.

Prototype-based rules C-rules (Crisp) are a special case of F-rules (fuzzy rules), which are a special case of P-rules (Prototype). P-rules have the form: IF $P = \arg\min_R D(X, R)$ THEN Class(X) = Class(P). D(X, R) is a dissimilarity (distance) function, determining decision borders around prototype P. P-rules are easy to interpret! F-rules may always be presented as P-rules, so this is an alternative to neurofuzzy systems. IF X=You are most similar to the P=Superman THEN You are in the Super-league. IF X=You are most similar to the P=Weakling THEN You are in the Failed-league. “Similar” may involve different features or D(X, P).
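A P-rule is a nearest-prototype decision, which takes only a few lines to sketch. The prototypes, class names, and the choice of Euclidean and Manhattan distances below are illustrative; any dissimilarity function D(X, R) can be passed in.

```python
import math

def euclidean(x, p):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, p)))

def manhattan(x, p):
    return sum(abs(a - b) for a, b in zip(x, p))

def p_rule(x, prototypes, distance=euclidean):
    """IF P = arg min_R D(X, R) THEN Class(X) = Class(P).
    prototypes is a list of (vector, class_label) pairs."""
    _, label = min(prototypes, key=lambda pc: distance(x, pc[0]))
    return label

# Hypothetical prototypes, one per class.
prototypes = [((0.0, 0.0), "Failed-league"), ((4.0, 4.0), "Super-league")]
first = p_rule((1.0, 1.0), prototypes)
second = p_rule((3.0, 5.0), prototypes, distance=manhattan)
```

Changing the distance function changes the shape of the decision borders while the rule itself stays the same.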

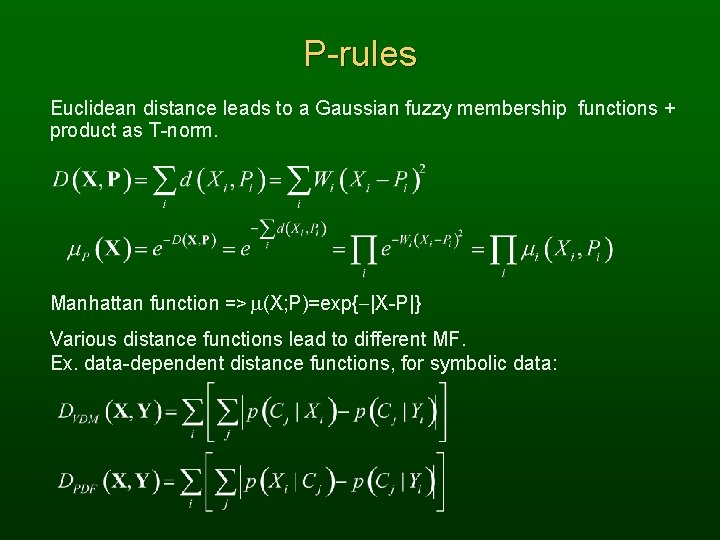

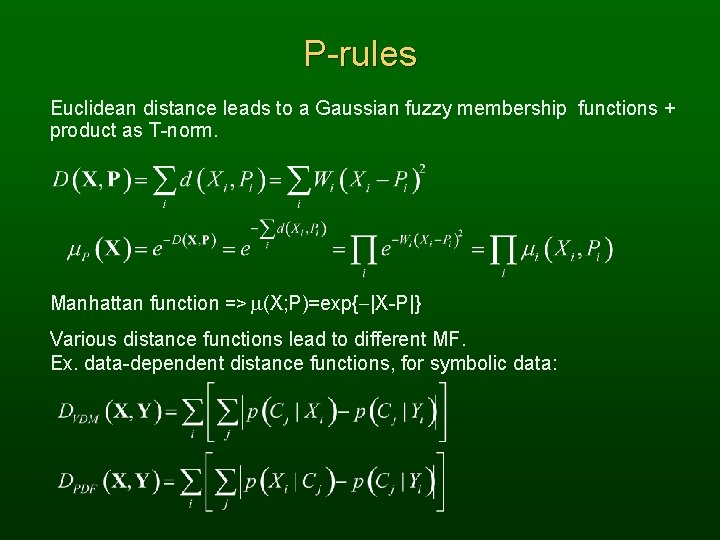

P-rules Euclidean distance leads to Gaussian fuzzy membership functions + product as T-norm. Manhattan function => μ(X; P) = exp{-|X-P|}. Various distance functions lead to different MF. Ex. data-dependent distance functions, for symbolic data:

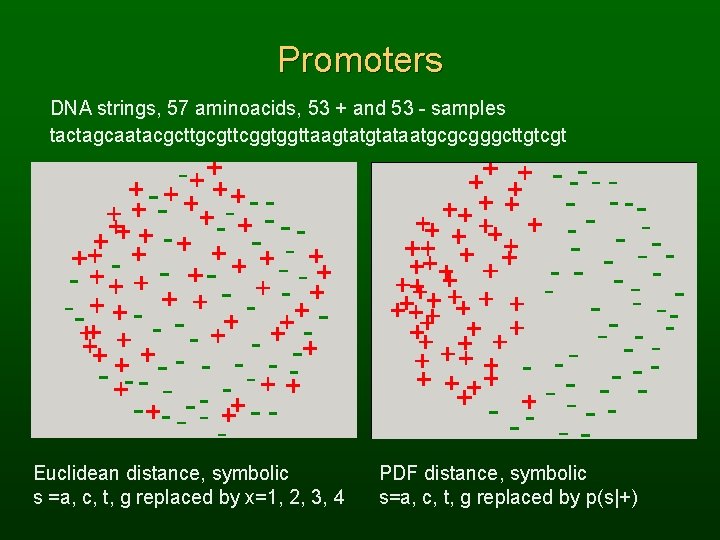

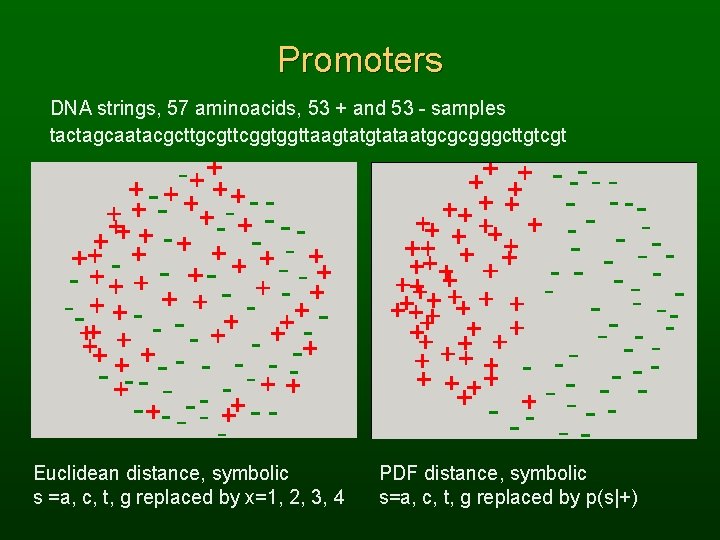

Promoters DNA strings, 57 nucleotides, 53 + and 53 - samples. Example: tactagcaatacgcttgcgttcggtggttaagtataatgcgcgggcttgtcgt. Euclidean distance, symbolic s = a, c, t, g replaced by x = 1, 2, 3, 4. PDF distance, symbolic s = a, c, t, g replaced by p(s|+).

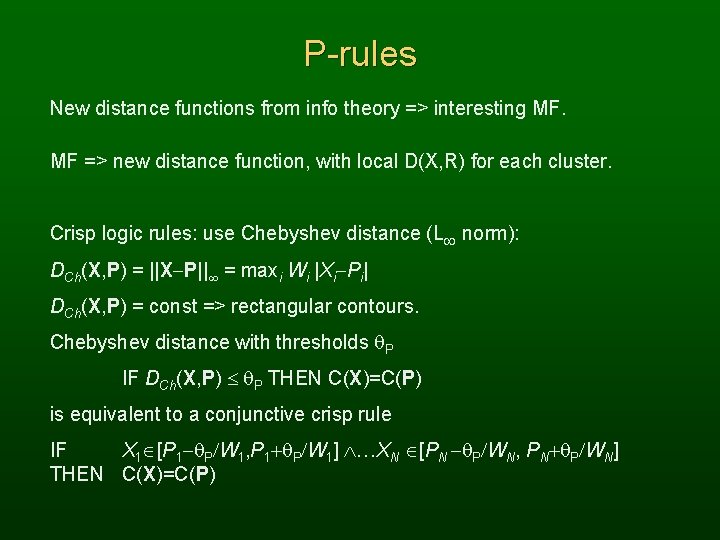

P-rules New distance functions from info theory => interesting MF. MF => new distance function, with local D(X, R) for each cluster. Crisp logic rules: use Chebyshev distance ($L_\infty$ norm): $D_{Ch}(X, P) = \|X-P\|_\infty = \max_i W_i |X_i - P_i|$; $D_{Ch}(X, P) = \mathrm{const}$ => rectangular contours. Chebyshev distance with thresholds $\theta_P$: IF $D_{Ch}(X, P) \le \theta_P$ THEN C(X) = C(P) is equivalent to a conjunctive crisp rule IF $X_1 \in [P_1 - \theta_P/W_1, P_1 + \theta_P/W_1] \wedge \dots \wedge X_N \in [P_N - \theta_P/W_N, P_N + \theta_P/W_N]$ THEN C(X) = C(P)
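The equivalence between a thresholded Chebyshev distance and a conjunction of interval conditions can be checked on a grid. In this sketch the prototype, weights, and threshold are made-up values, picked so that all arithmetic is exact in floating point; the function names are illustrative.

```python
def chebyshev(x, p, w):
    """Weighted Chebyshev (L-infinity) distance: max_i W_i |X_i - P_i|."""
    return max(wi * abs(xi - pi) for xi, pi, wi in zip(x, p, w))

def p_rule_fires(x, p, w, theta):
    return chebyshev(x, p, w) <= theta

def crisp_rule_fires(x, p, w, theta):
    """Conjunction of interval conditions X_i in [P_i - theta/W_i, P_i + theta/W_i]."""
    return all(pi - theta / wi <= xi <= pi + theta / wi
               for xi, pi, wi in zip(x, p, w))

p, w, theta = (2.0, 5.0), (1.0, 2.0), 1.0
grid = [(i * 0.25, 3.0 + j * 0.25) for i in range(17) for j in range(17)]
agree = all(p_rule_fires(x, p, w, theta) == crisp_rule_fires(x, p, w, theta)
            for x in grid)
inside = [x for x in grid if p_rule_fires(x, p, w, theta)]
```

The points accepted by the rule form a rectangle around the prototype, with half-widths theta/W_i along each axis.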

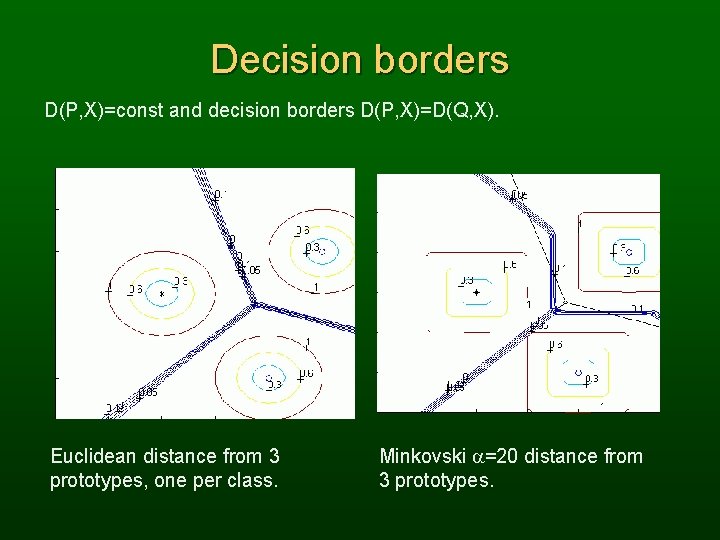

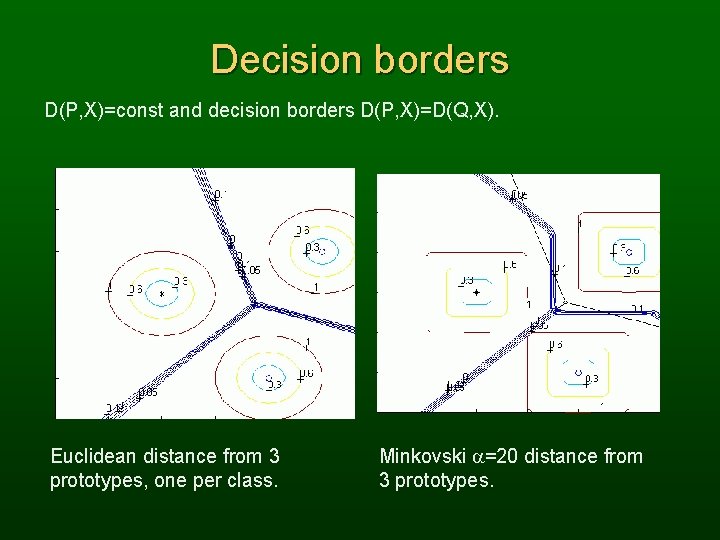

Decision borders D(P, X) = const and decision borders D(P, X) = D(Q, X). Euclidean distance from 3 prototypes, one per class. Minkowski α=20 distance from 3 prototypes.

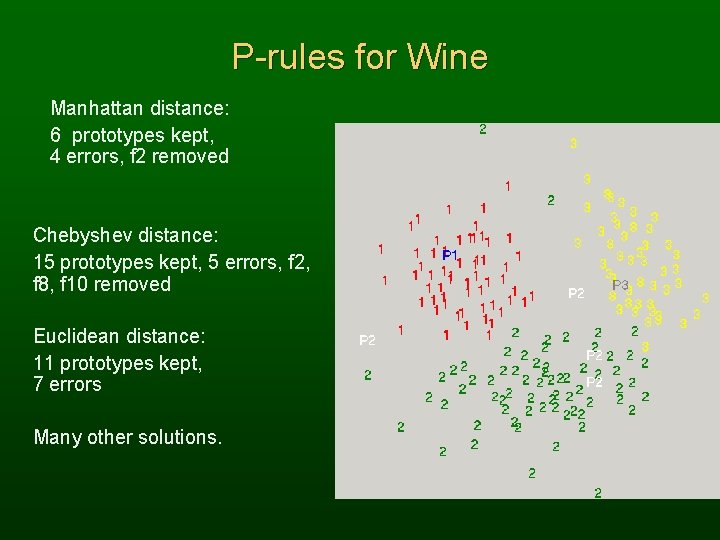

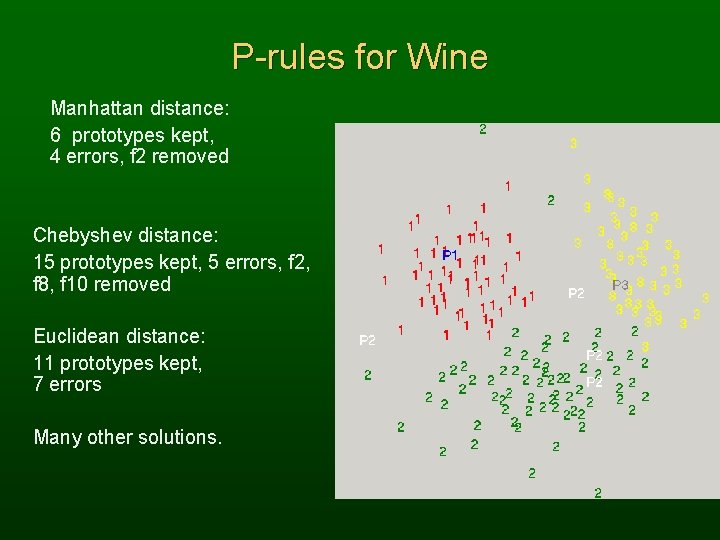

P-rules for Wine
Manhattan distance: 6 prototypes kept, 4 errors, f2 removed.
Chebyshev distance: 15 prototypes kept, 5 errors, f2, f8, f10 removed.
Euclidean distance: 11 prototypes kept, 7 errors.
Many other solutions.

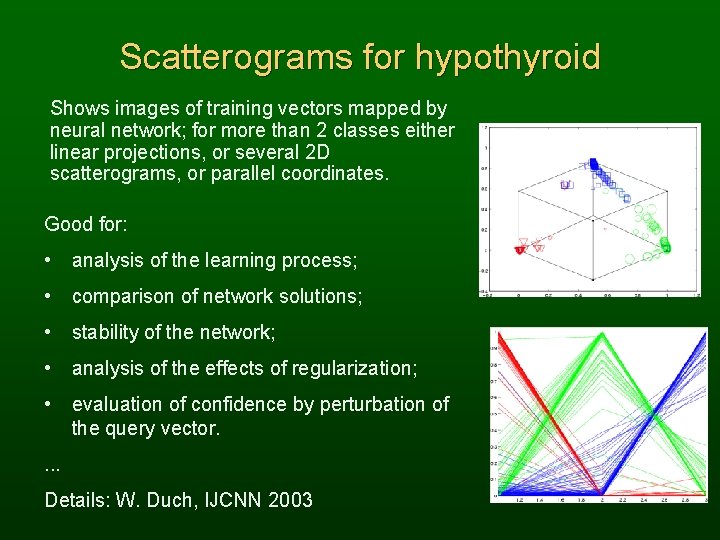

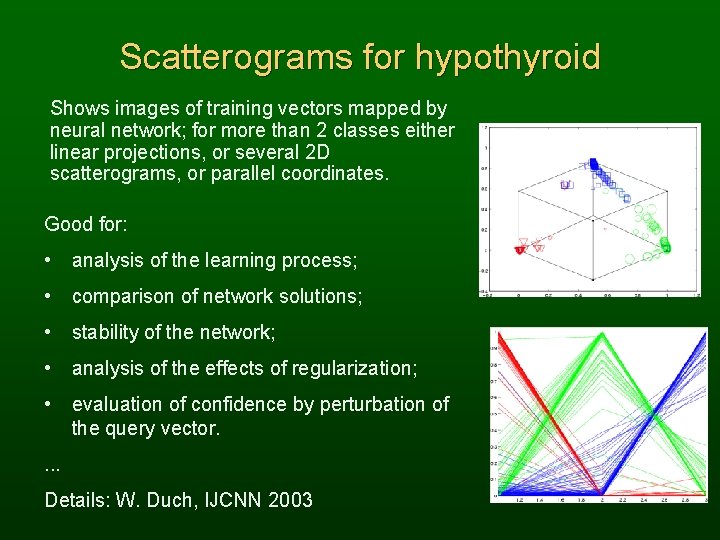

Scatterograms for hypothyroid Shows images of training vectors mapped by neural network; for more than 2 classes either linear projections, or several 2 D scatterograms, or parallel coordinates. Good for: • analysis of the learning process; • comparison of network solutions; • stability of the network; • analysis of the effects of regularization; • evaluation of confidence by perturbation of the query vector. . Details: W. Duch, IJCNN 2003

Neural networks • MLP – Multilayer Perceptrons, most popular NN models. Use soft hyperplanes for discrimination. Results are difficult to interpret, complex decision borders. Prediction, approximation: infinite number of classes. • RBF – Radial Basis Functions. RBF with Gaussian functions are equivalent to fuzzy systems with Gaussian membership functions, but … No feature selection => complex rules. Other radial functions => not separable! Use separable functions, not radial => FSM. • Many methods to convert MLP NN to logical rules.

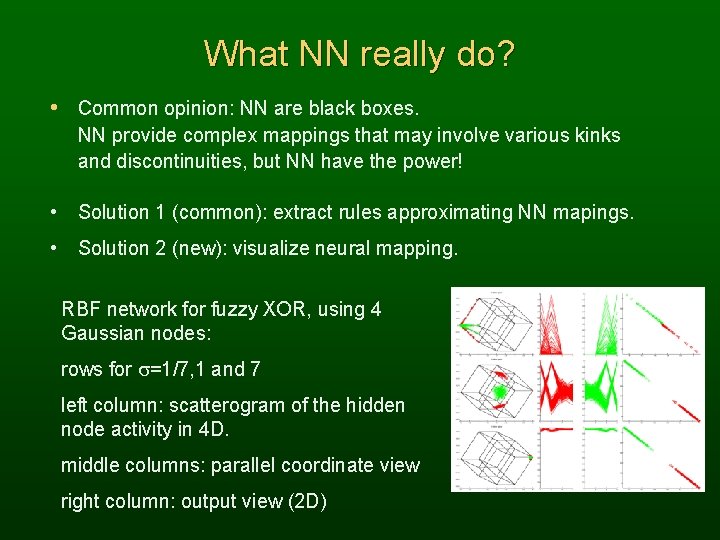

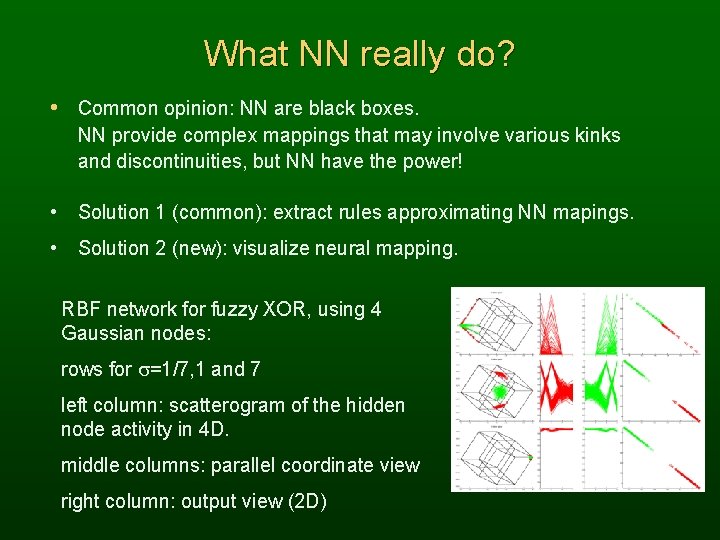

What do NN really do?
• Common opinion: NN are black boxes. NN provide complex mappings that may involve various kinks and discontinuities, but NN have the power!
• Solution 1 (common): extract rules approximating NN mappings.
• Solution 2 (new): visualize neural mapping.

RBF network for fuzzy XOR, using 4 Gaussian nodes: rows for σ = 1/7, 1 and 7; left column: scatterogram of the hidden node activity in 4D; middle columns: parallel coordinate view; right column: output view (2D).

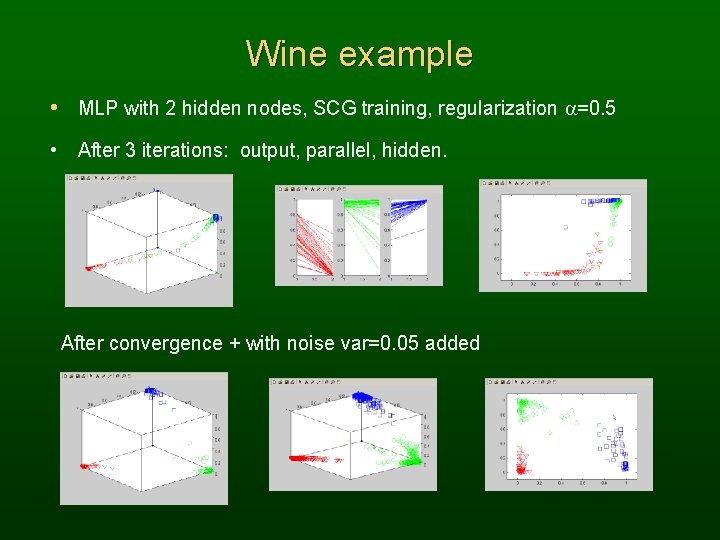

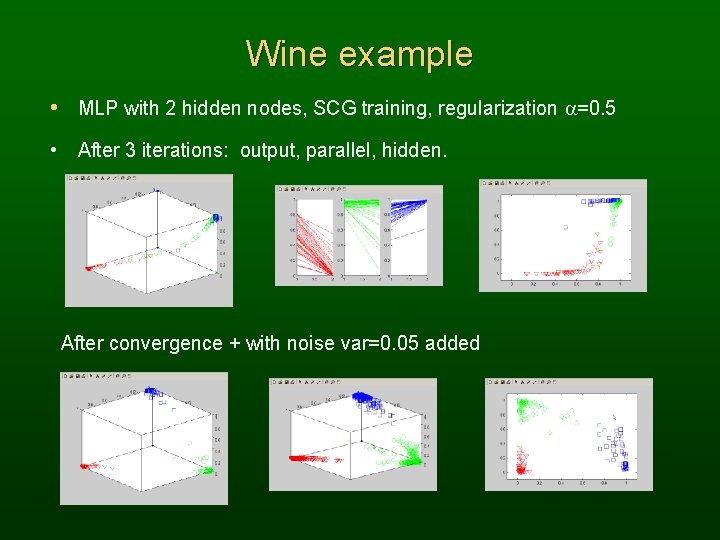

Wine example
• MLP with 2 hidden nodes, SCG training, regularization α=0.5
• After 3 iterations: output, parallel, hidden. After convergence + with noise var=0.05 added

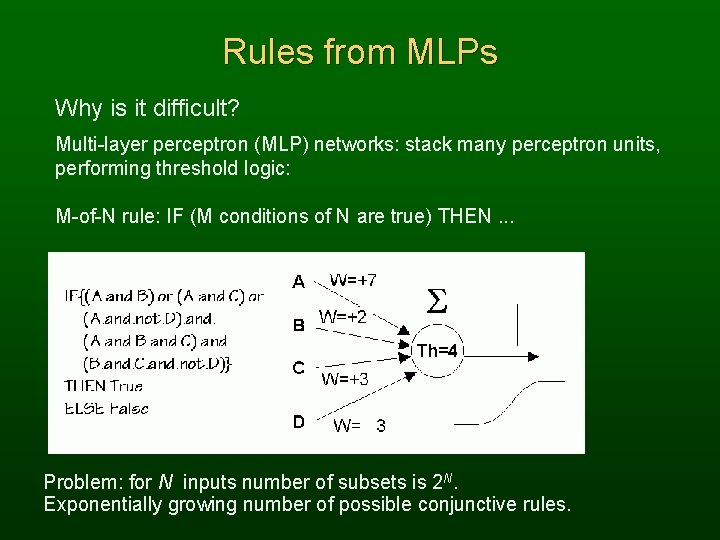

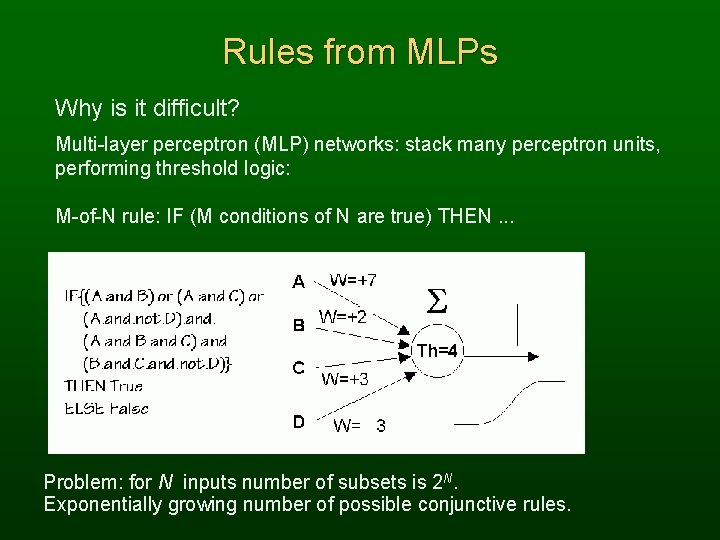

Rules from MLPs Why is it difficult? Multi-layer perceptron (MLP) networks: stack many perceptron units, performing threshold logic: M-of-N rule: IF (M conditions of N are true) THEN... Problem: for N inputs the number of subsets is $2^N$. Exponentially growing number of possible conjunctive rules.
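The gap between one threshold unit and its rule form can be made concrete. The sketch below implements an M-of-N rule as a threshold on a count and expands it into the equivalent disjunction of conjunctions; the function names are illustrative.

```python
from itertools import combinations, product

def m_of_n(conditions, m):
    """Threshold unit with unit weights: true when at least m conditions hold."""
    return sum(1 for c in conditions if c) >= m

def conjunctions(n, m):
    """Index subsets of size m; each one is a conjunctive rule."""
    return list(combinations(range(n), m))

def as_disjunction(conditions, m):
    """The same rule written as an OR over all m-element conjunctions."""
    return any(all(conditions[i] for i in subset)
               for subset in conjunctions(len(conditions), m))

n, m = 5, 3
n_rules = len(conjunctions(n, m))
n_subsets = 2 ** n
same = all(m_of_n(c, m) == as_disjunction(c, m)
           for c in product([False, True], repeat=n))
```

A single perceptron with 5 inputs already stands for 10 conjunctive rules in the 3-of-5 case, and the number of candidate input subsets doubles with every added input.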

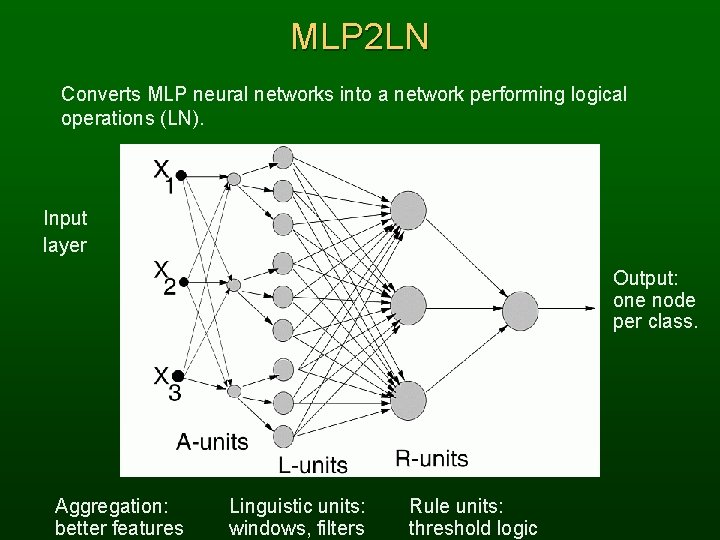

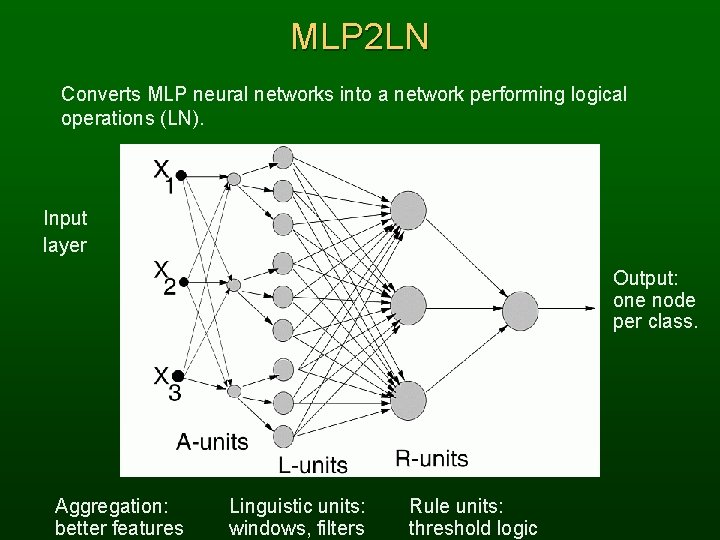

MLP2LN Converts MLP neural networks into a network performing logical operations (LN). Input layer. Aggregation: better features. Linguistic units: windows, filters. Rule units: threshold logic. Output: one node per class.

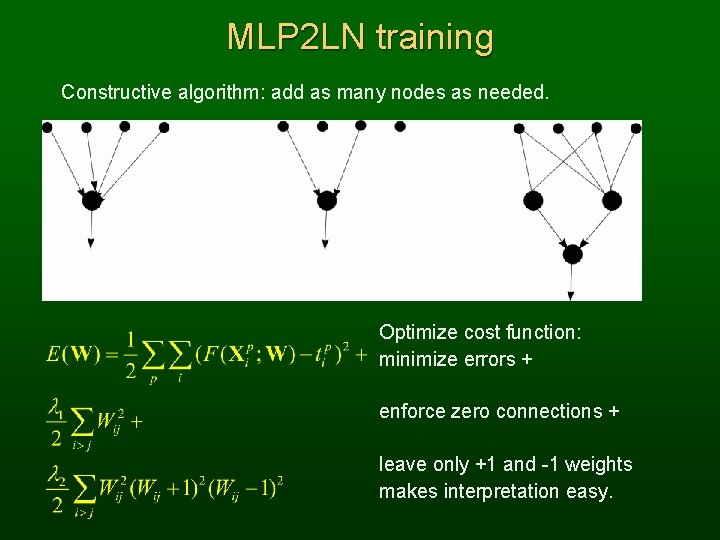

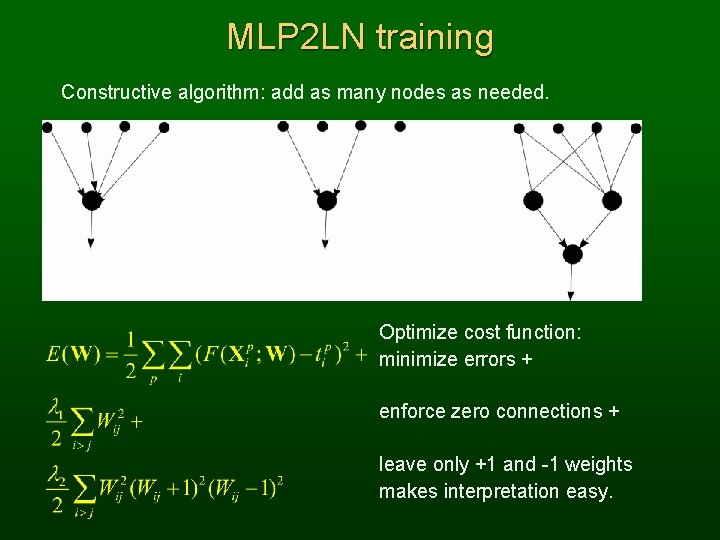

MLP2LN training Constructive algorithm: add as many nodes as needed. Optimize cost function: minimize errors + enforce zero connections + leave only +1 and -1 weights; this makes interpretation easy.

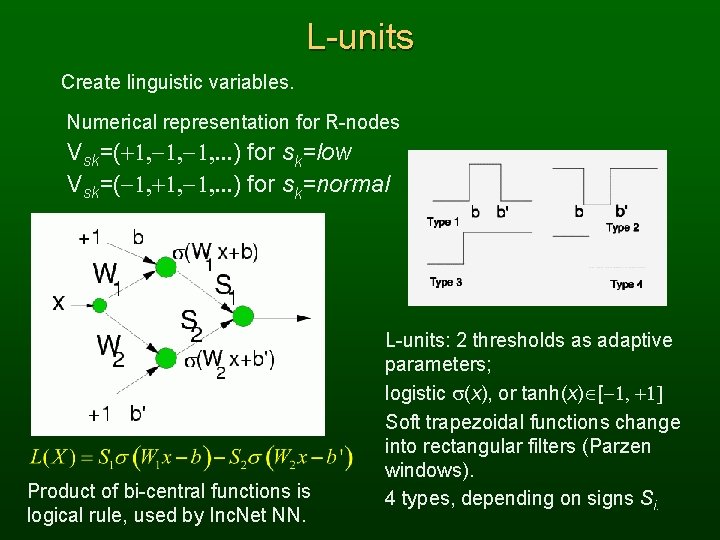

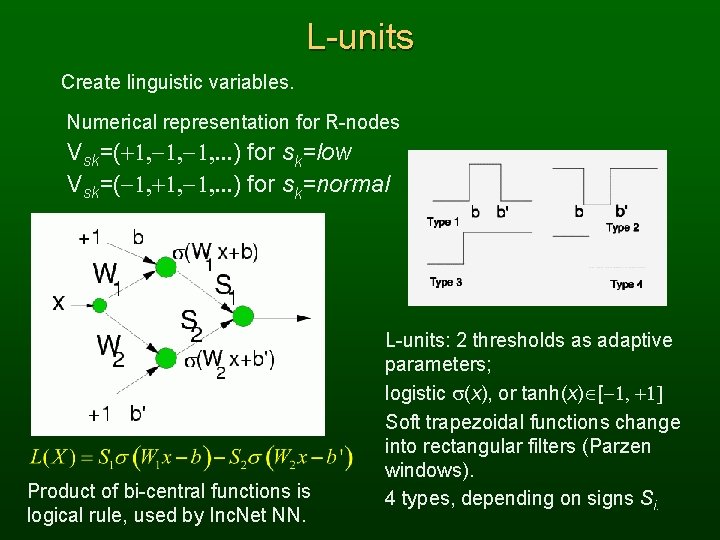

L-units Create linguistic variables. Numerical representation for R-nodes: $V_{s_k} = (+1, -1, -1, \dots)$ for $s_k$ = low, $V_{s_k} = (-1, +1, -1, \dots)$ for $s_k$ = normal. Product of bi-central functions is a logical rule, used by IncNet NN. L-units: 2 thresholds as adaptive parameters; logistic σ(x), or tanh(x) ∈ [-1, +1]. Soft trapezoidal functions change into rectangular filters (Parzen windows). 4 types, depending on signs $S_i$.

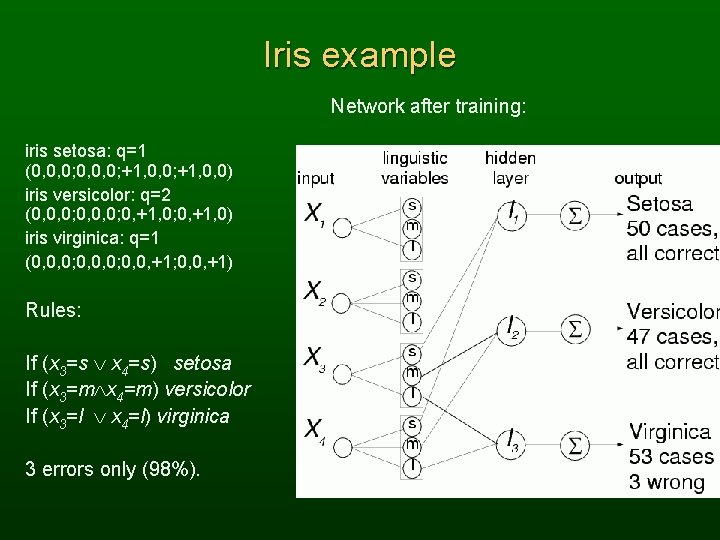

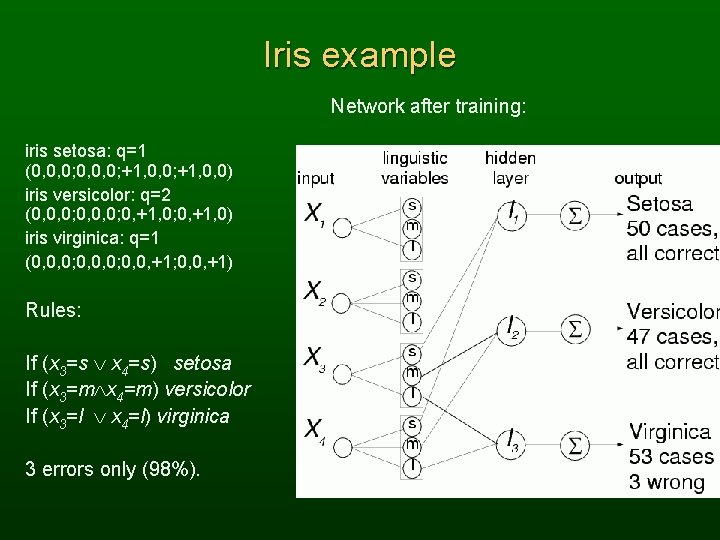

Iris example

Network after training:
iris setosa: q=1 (0, 0, 0; +1, 0, 0)
iris versicolor: q=2 (0, 0, 0; 0, +1, 0)
iris virginica: q=1 (0, 0, 0; 0, 0, +1; 0, 0, +1)

Rules:
If (x3 = s ∧ x4 = s) then setosa
If (x3 = m ∧ x4 = m) then versicolor
If (x3 = l ∧ x4 = l) then virginica
3 errors only (98%).

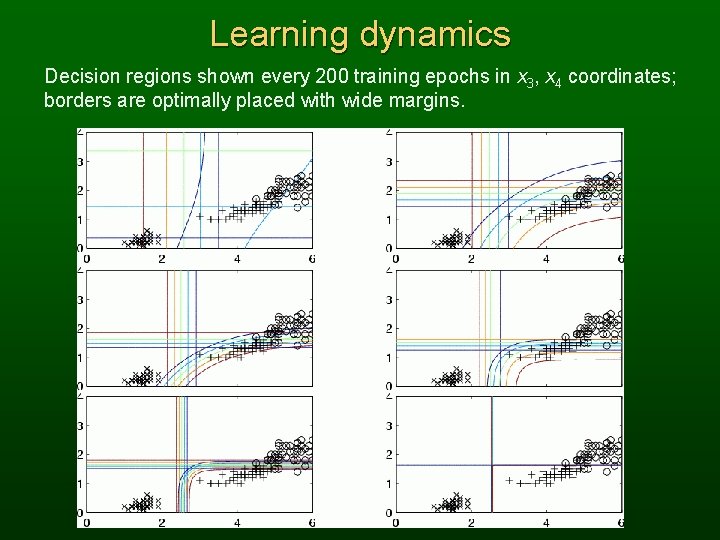

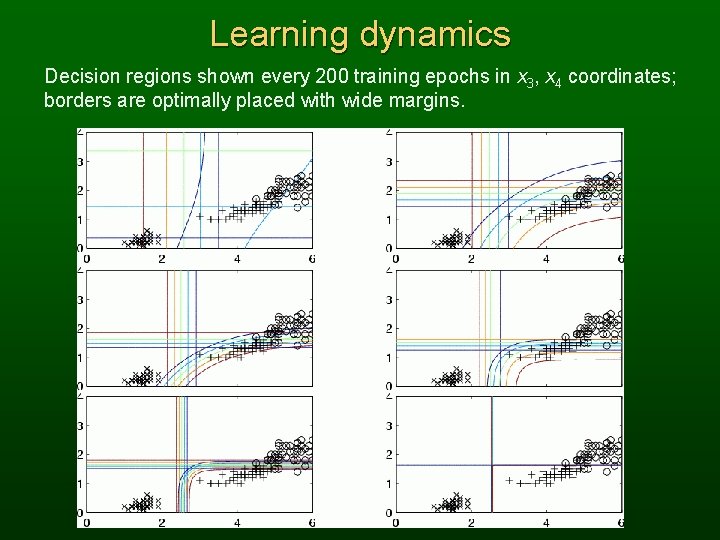

Learning dynamics Decision regions shown every 200 training epochs in x3, x4 coordinates; borders are optimally placed with wide margins.

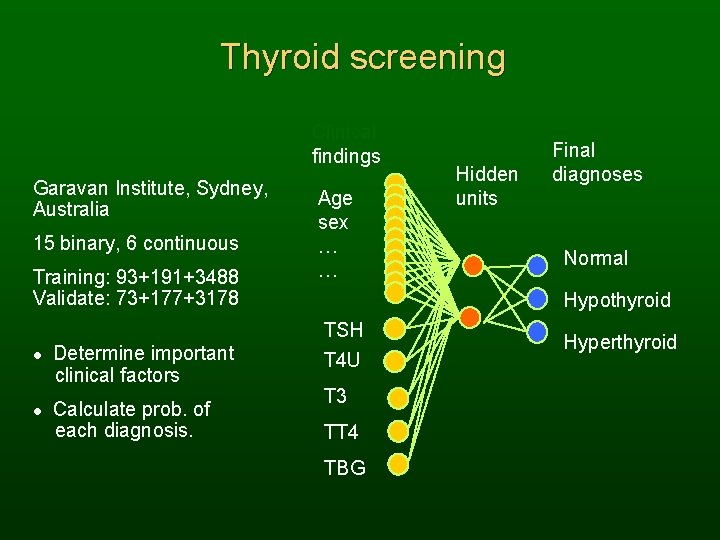

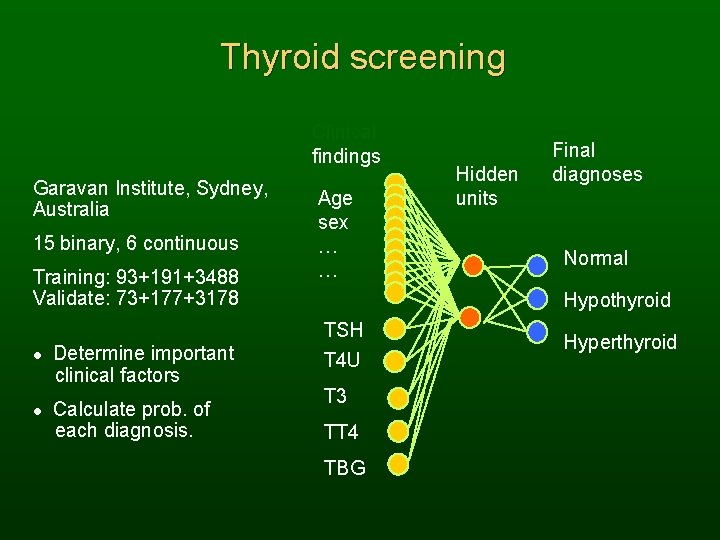

Thyroid screening

Garvan Institute, Sydney, Australia. 15 binary, 6 continuous features. Training: 93+191+3488, Validate: 73+177+3178.
• Determine important clinical factors
• Calculate prob. of each diagnosis.

[Network diagram: clinical findings (age, sex, …, TSH, T4U, T3, TT4, TBG) → hidden units → final diagnoses (normal, hypothyroid, hyperthyroid)]

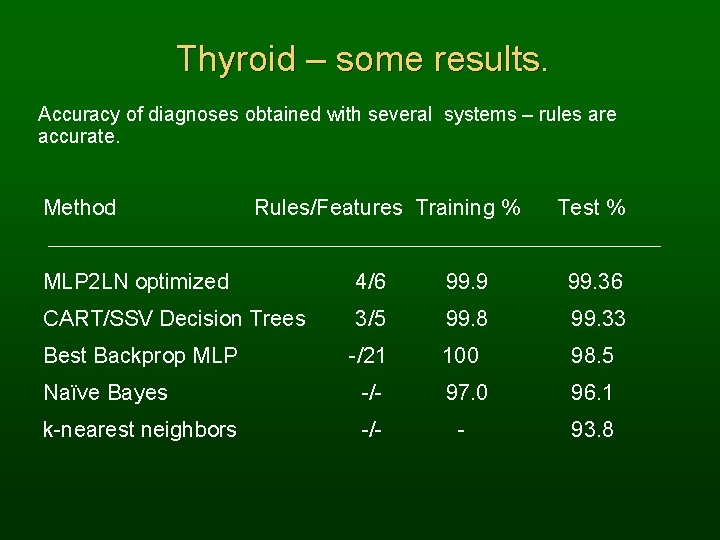

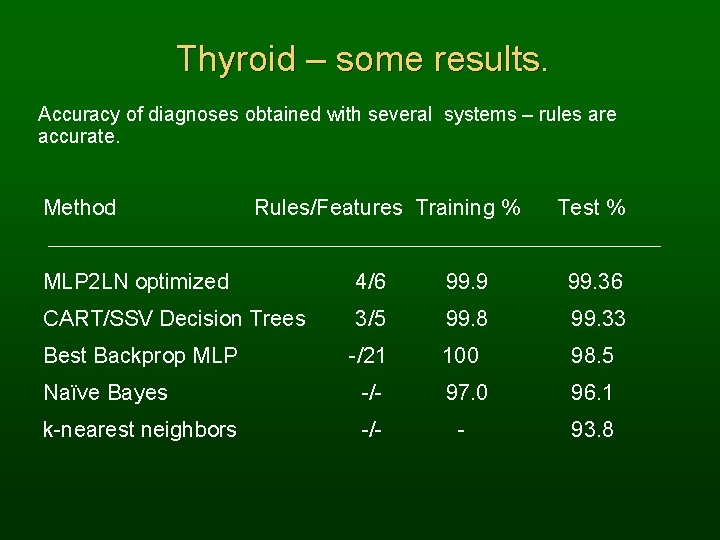

Thyroid – some results. Accuracy of diagnoses obtained with several systems – rules are accurate.

| Method | Rules/Features | Training % | Test % |
|---|---|---|---|
| MLP2LN optimized | 4/6 | 99.9 | 99.36 |
| CART/SSV Decision Trees | 3/5 | 99.8 | 99.33 |
| Best Backprop MLP | -/21 | 100 | 98.5 |
| Naïve Bayes | -/- | 97.0 | 96.1 |
| k-nearest neighbors | -/- | - | 93.8 |

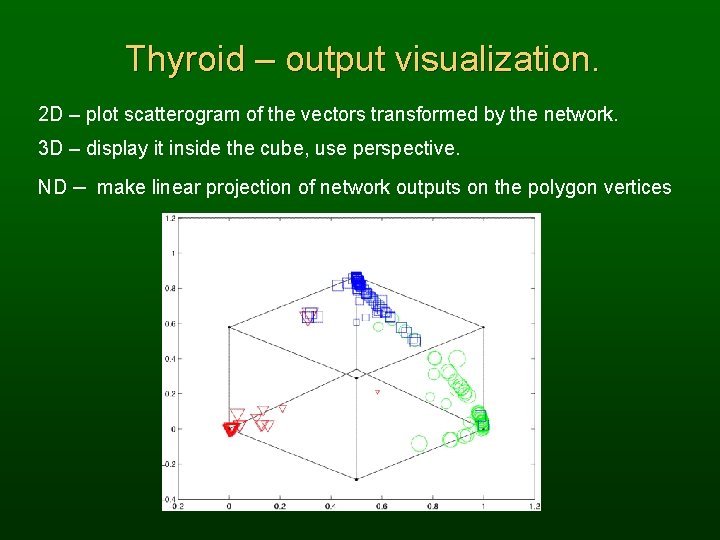

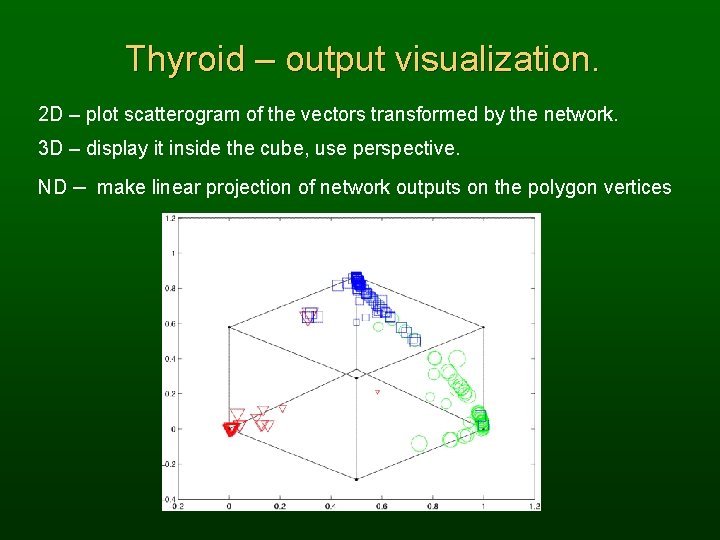

Thyroid – output visualization. 2D – plot scatterogram of the vectors transformed by the network. 3D – display it inside the cube, use perspective. ND – make linear projection of network outputs on the polygon vertices.

Feature selection Feature Extraction, Foundations and Applications. Eds. Guyon I, Gunn S, Nikravesh M, and Zadeh L, Springer Verlag, Heidelberg, 2005. NIPS 2003 competition: databases with 10,000-100,000 features, data obtained from text analysis and bioinformatics problems. Without feature selection or extraction analysis is not possible. Our InfoSel++ library (in C++) implements >20 methods for feature ranking & selection based on: mutual information, information gain, symmetrical uncertainty coefficient, asymmetric dependency coefficients, Mantaras distance using transinformation matrix, distances between probability distributions (Kolmogorov-Smirnov, Kullback-Leibler), Markov blanket, Pearson’s correlation coefficient (CC), Bayesian accuracy, etc. Such indices depend very strongly on discretization procedures; care has been taken to use unbiased probability and entropy estimators and to find appropriate discretization of continuous feature values.

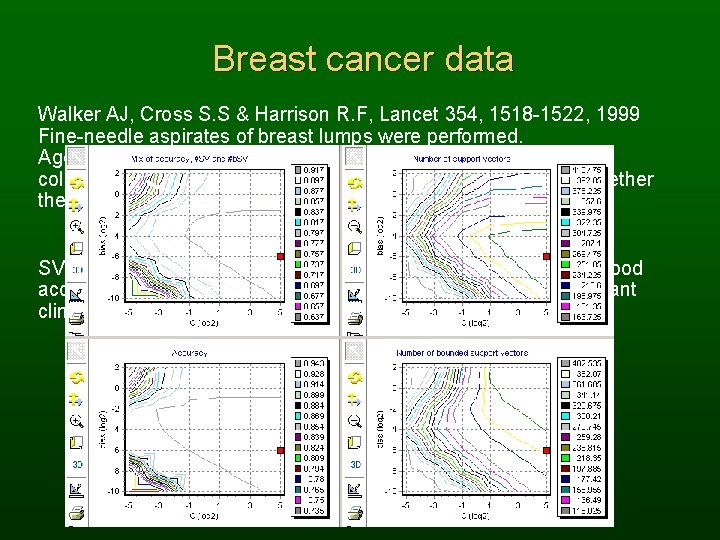

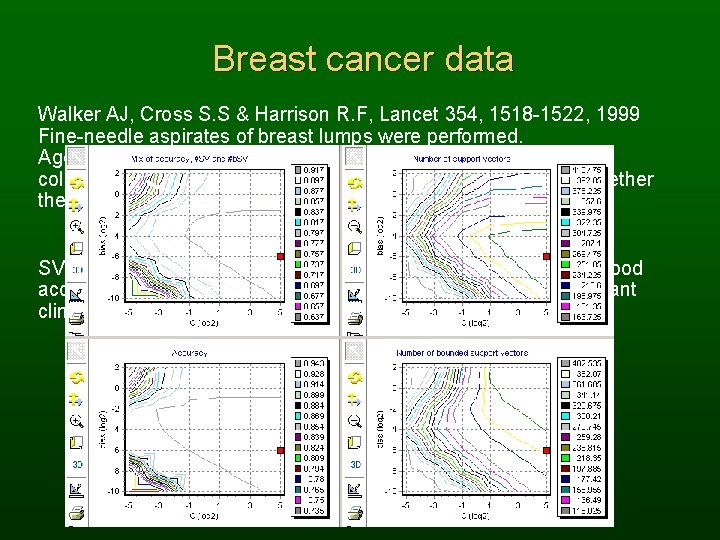

Breast cancer data Walker AJ, Cross SS & Harrison RF, Lancet 354, 1518-1522, 1999. Fine-needle aspirates of breast lumps were performed. Age plus 10 observations made by an experienced pathologist were collected for each breast cancer case; the final determination whether the cancer was malignant or benign was confirmed by biopsy. SVM with optimized Gaussian kernel gives in 10xCV tests very good accuracy, 95.49±0.29%, but gives no understanding of the important clinical factors.

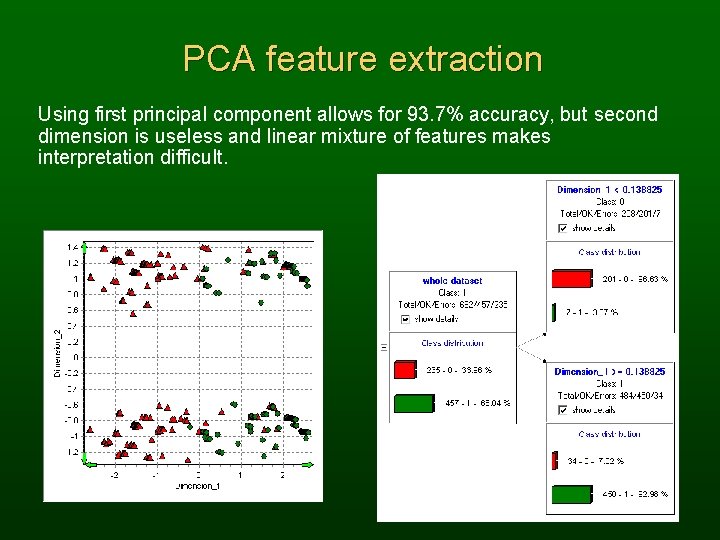

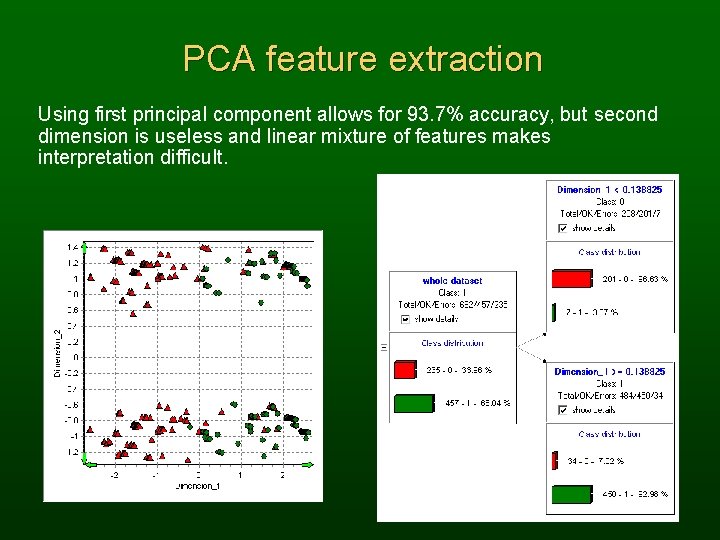

PCA feature extraction Using the first principal component allows for 93.7% accuracy, but the second dimension is useless and the linear mixture of features makes interpretation difficult.

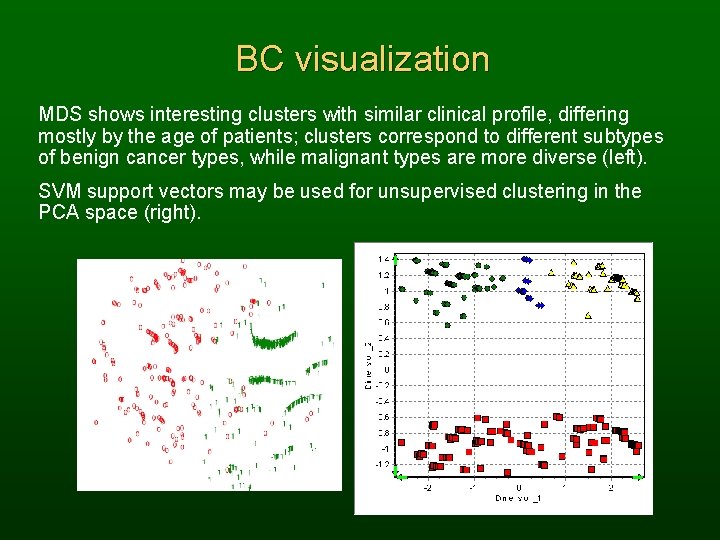

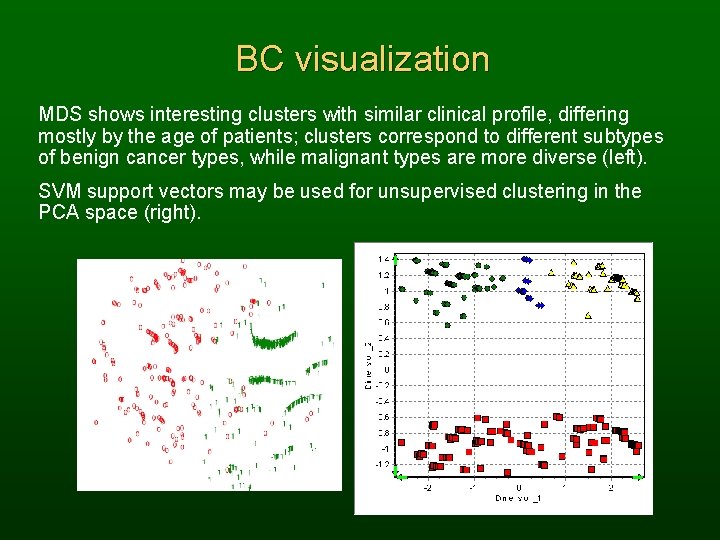

BC visualization MDS shows interesting clusters with similar clinical profile, differing mostly by the age of patients; clusters correspond to different subtypes of benign cancer types, while malignant types are more diverse (left). SVM support vectors may be used for unsupervised clustering in the PCA space (right).

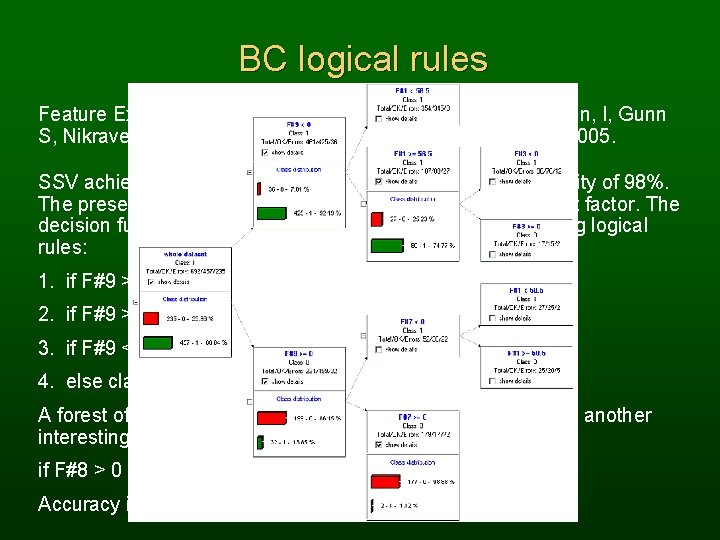

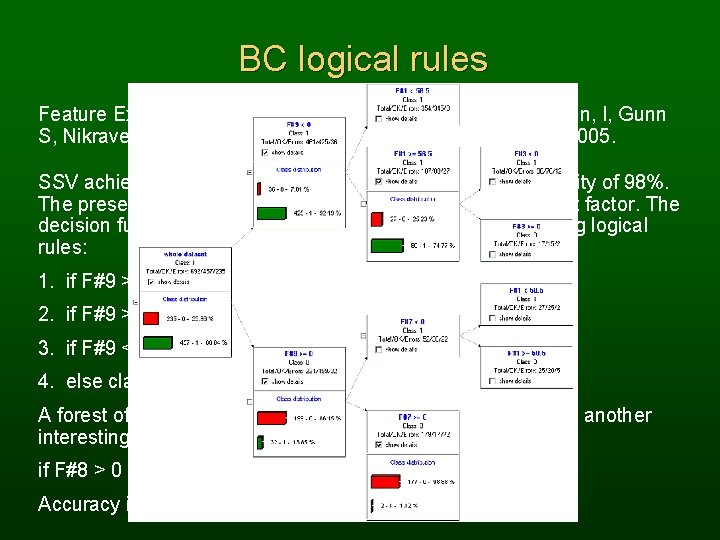

BC logical rules

Feature Extraction, Foundations and Applications. Eds. Guyon I, Gunn S, Nikravesh M, and Zadeh L, Springer Verlag, Heidelberg, 2005.

SSV achieves 95.4% accuracy, 90% sensitivity, and specificity of 98%. The presence of necrotic epithelial cells is the most important factor. The decision function can be presented in the form of the following logical rules:
1. if F#9 > 0 and F#7 > 0 then class 0
2. if F#9 > 0 and F#7 < 0 and F#1 > 50.5 then class 0
3. if F#9 < 0 and F#1 > 56.5 and F#3 > 0 then class 0
4. else class 1

A forest of trees generated for the breast cancer data reveals another interesting classification rule: if F#8 > 0 and F#7 > 0 then class 0 else class 1. Accuracy is lower, 90.6%, but the rule has 100% specificity.

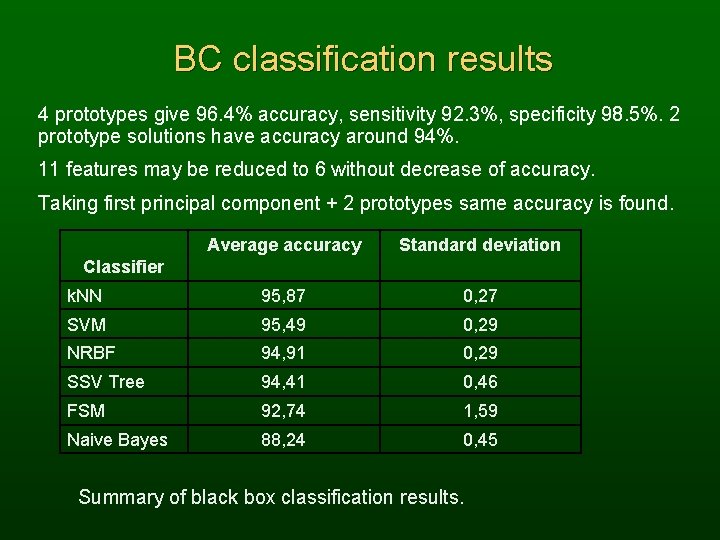

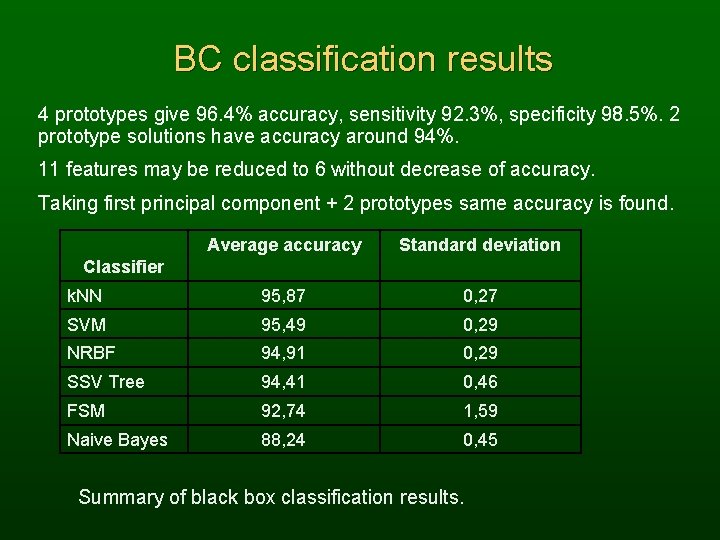

BC classification results

4 prototypes give 96.4% accuracy, sensitivity 92.3%, specificity 98.5%. 2 prototype solutions have accuracy around 94%. 11 features may be reduced to 6 without decrease of accuracy. Taking the first principal component + 2 prototypes the same accuracy is found.

Summary of black box classification results:

| Classifier | Average accuracy | Standard deviation |
|---|---|---|
| kNN | 95.87 | 0.27 |
| SVM | 95.49 | 0.29 |
| NRBF | 94.91 | 0.29 |
| SSV Tree | 94.41 | 0.46 |
| FSM | 92.74 | 1.59 |
| Naive Bayes | 88.24 | 0.45 |

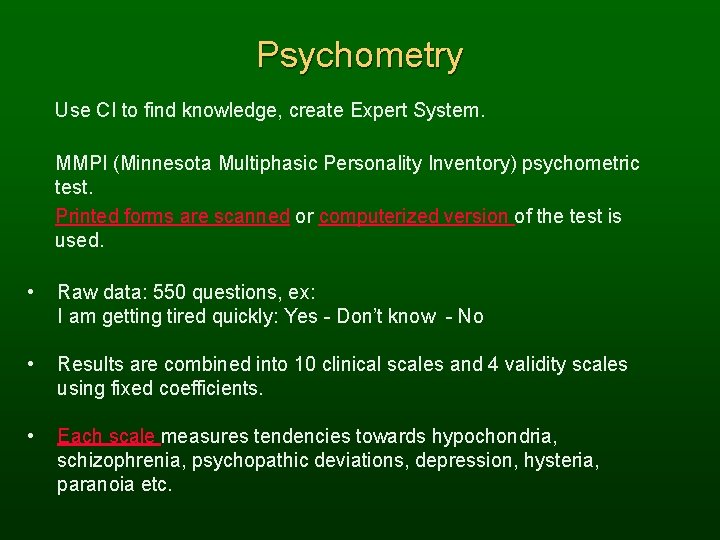

Psychometry Use CI to find knowledge, create Expert System. MMPI (Minnesota Multiphasic Personality Inventory) psychometric test. Printed forms are scanned or computerized version of the test is used. • Raw data: 550 questions, ex: I am getting tired quickly: Yes - Don’t know - No • Results are combined into 10 clinical scales and 4 validity scales using fixed coefficients. • Each scale measures tendencies towards hypochondria, schizophrenia, psychopathic deviations, depression, hysteria, paranoia etc.

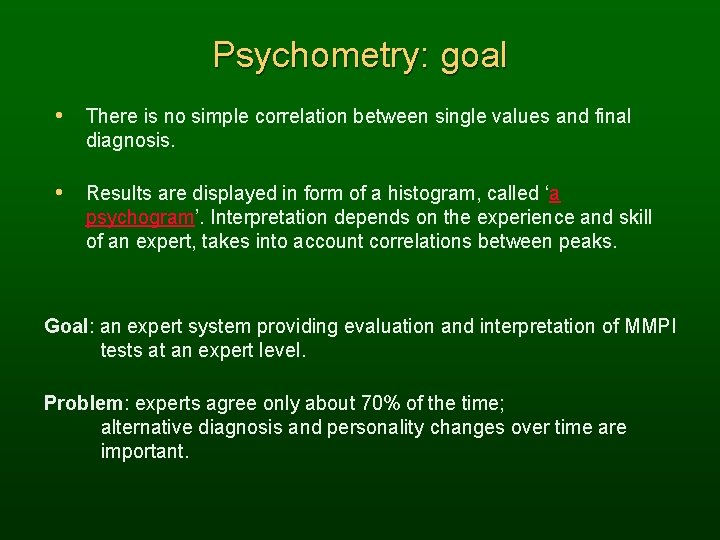

Psychometry: goal • There is no simple correlation between single values and final diagnosis. • Results are displayed in form of a histogram, called ‘a psychogram’. Interpretation depends on the experience and skill of an expert, takes into account correlations between peaks. Goal: an expert system providing evaluation and interpretation of MMPI tests at an expert level. Problem: experts agree only about 70% of the time; alternative diagnosis and personality changes over time are important.

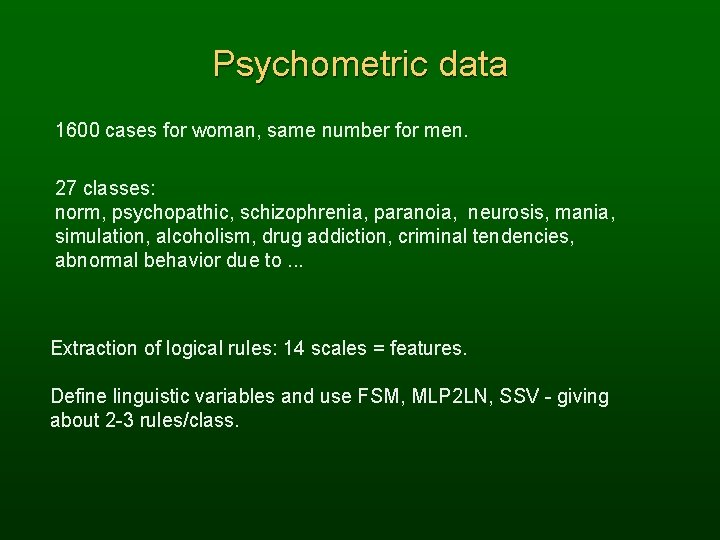

Psychometric data 1600 cases for women, same number for men. 27 classes: norm, psychopathic, schizophrenia, paranoia, neurosis, mania, simulation, alcoholism, drug addiction, criminal tendencies, abnormal behavior due to... Extraction of logical rules: 14 scales = features. Define linguistic variables and use FSM, MLP2LN, SSV - giving about 2-3 rules/class.

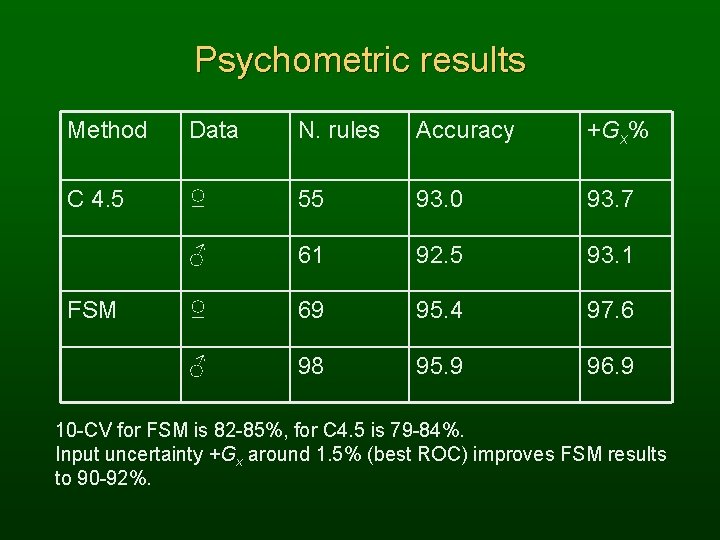

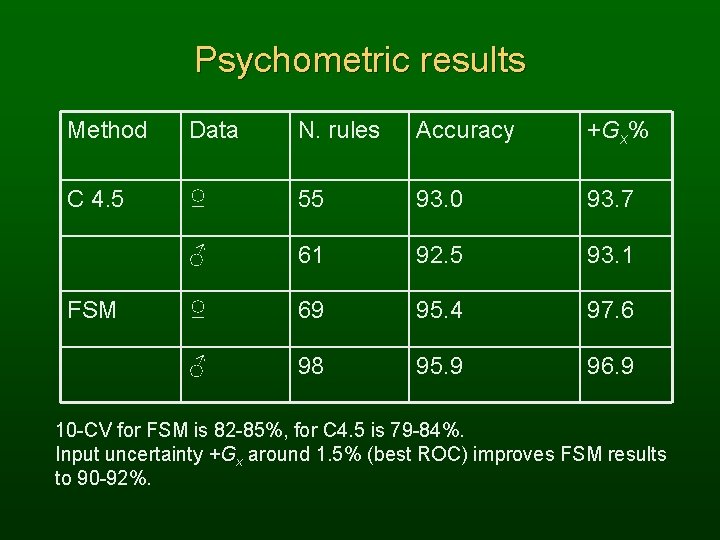

Psychometric results

| Method | Data | N. rules | Accuracy | +Gx % |
|---|---|---|---|---|
| C4.5 | ♀ | 55 | 93.0 | 93.7 |
| C4.5 | ♂ | 61 | 92.5 | 93.1 |
| FSM | ♀ | 69 | 95.4 | 97.6 |
| FSM | ♂ | 98 | 95.9 | 96.9 |

10-CV for FSM is 82-85%, for C4.5 is 79-84%. Input uncertainty +Gx around 1.5% (best ROC) improves FSM results to 90-92%.

Psychometric Expert Probabilities for different classes. For greater uncertainties more classes are predicted. Fitting the rules to the conditions: typically 3-5 conditions per rule, Gaussian distributions around measured values that fall into the rule interval are shown in green. Verbal interpretation of each case, rule and scale dependent.

Visualization Probability of classes versus input uncertainty. Detailed input probabilities around the measured values vs. change in the single scale; changes over time define ‘patients trajectory’. Interactive multidimensional scaling: zooming on the new case to inspect its similarity to other cases.

Summary Computational intelligence methods: neural, decision trees, similarity-based & other, help to understand the data. Understanding data: achieved by rules, prototypes, visualization. Small is beautiful => simple is the best! Simplest possible, but not simpler - regularization of models; accurate but not too accurate - handling of uncertainty; high confidence, but not paranoid - rejecting some cases. • Challenges: hierarchical systems – higher-order rules, missing information; discovery of theories rather than data models; reasoning in complex domains/objects; integration with image/signal analysis; applications in data mining, bioinformatics, signal processing, vision...

The End We are slowly addressing the challenges. The methods used here (+ many more) are included in GhostMiner, data mining software developed by my group in collaboration with FQS, Fujitsu Kyushu Systems: http://www.fqspl.com.pl/ghostminer/ A completely new version of these tools is being developed. Papers describing in detail some of the ideas presented here may be accessed through my home page: Google: Duch or http://www.phys.uni.torun.pl/~duch