Compiler Optimization of Scalar Value Communication Between Speculative Threads

Antonia Zhai, Christopher B. Colohan, J. Gregory Steffan and Todd C. Mowry
School of Computer Science, Carnegie Mellon University

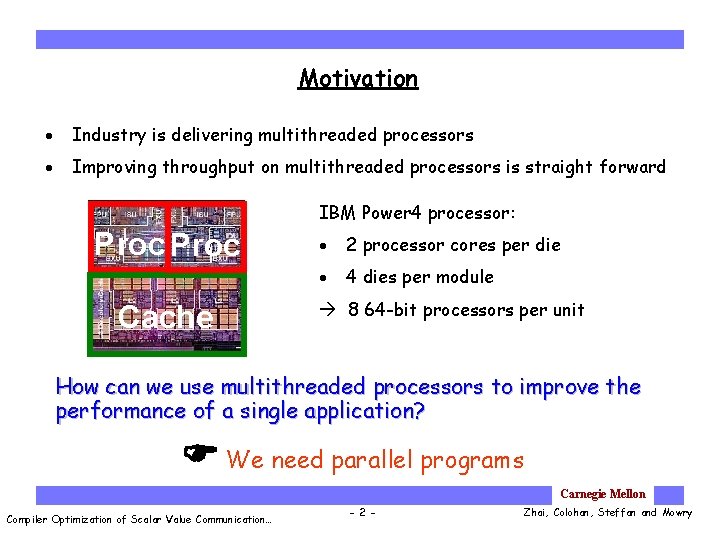

Motivation

· Industry is delivering multithreaded processors
· Improving throughput on multithreaded processors is straightforward

IBM Power4 processor:
· 2 processor cores per die
· 4 dies per module
· 8 64-bit processors per unit

How can we use multithreaded processors to improve the performance of a single application? We need parallel programs.

Automatic Parallelization

· Finding independent threads in integer programs is limited by:
  · Complex control flow
  · Ambiguous data dependences
  · Runtime inputs
· More fundamentally, parallelization is determined at compile time
· Thread-Level Speculation: detecting data dependences at runtime

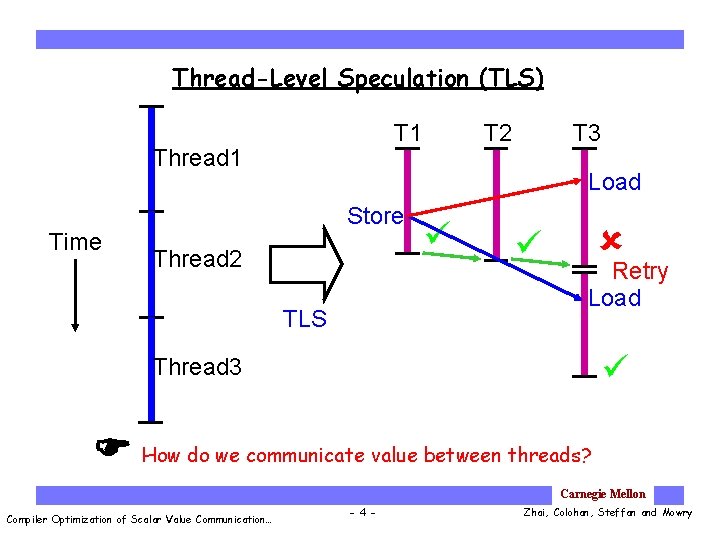

Thread-Level Speculation (TLS)

[Figure: threads T1, T2 and T3 on a time axis, with a store, a load, and a retried load under TLS.]

How do we communicate values between threads?

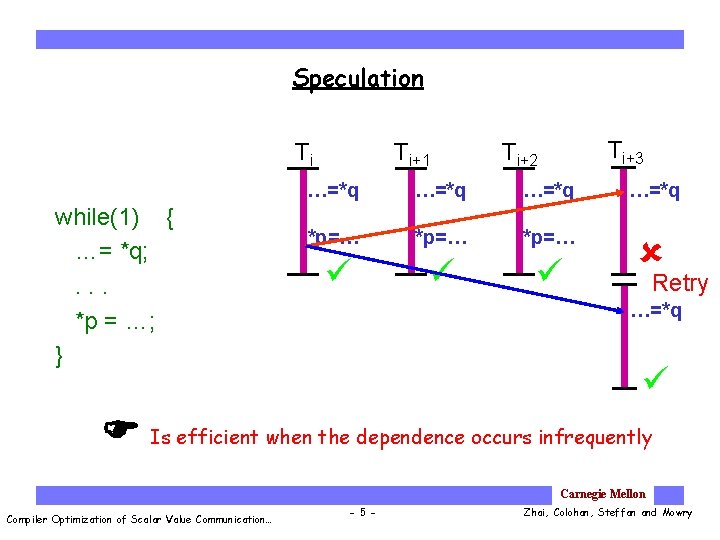

Speculation

while(1) { … = *q; … *p = …; }

[Figure: threads Ti to Ti+3 executing the loop; a load … = *q is retried after a store *p = ….]

Speculation is efficient when the dependence occurs infrequently.

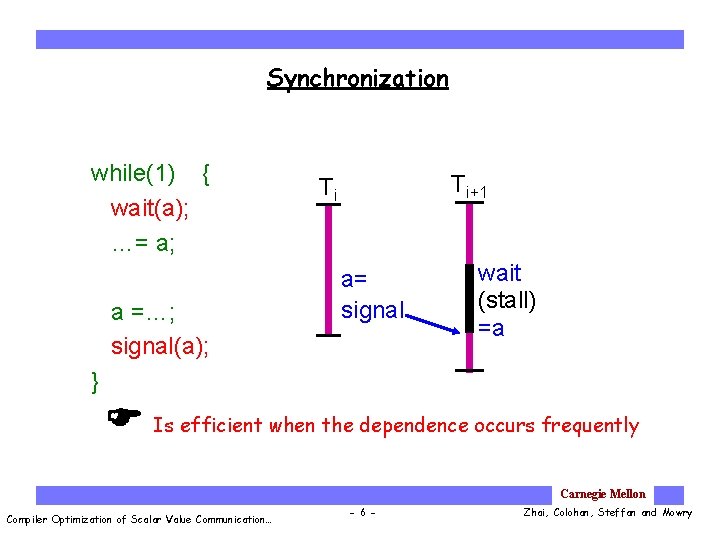

Synchronization

while(1) { wait(a); … = a; a = …; signal(a); }

[Figure: thread Ti defines a and signals; thread Ti+1 waits (stalls) until the signal arrives, then uses a.]

Synchronization is efficient when the dependence occurs frequently.

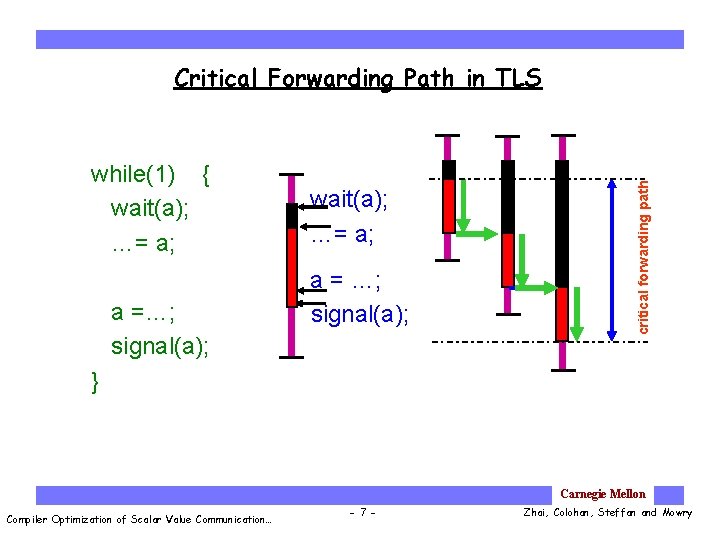

Critical Forwarding Path in TLS

while(1) { wait(a); … = a; a = …; signal(a); }

[Figure: two consecutive copies of the loop body; the code from wait(a) to signal(a) in each is labeled as the critical forwarding path.]

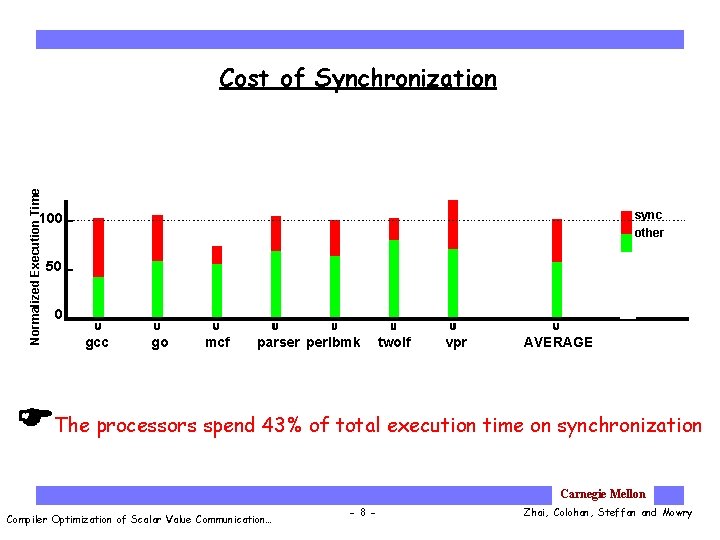

Cost of Synchronization

[Figure: normalized execution time, split into sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE.]

The processors spend 43% of total execution time on synchronization.

Outline

→ Compiler optimization
  → Optimization opportunity
  · Conservative instruction scheduling
  · Aggressive instruction scheduling
· Performance
· Conclusions

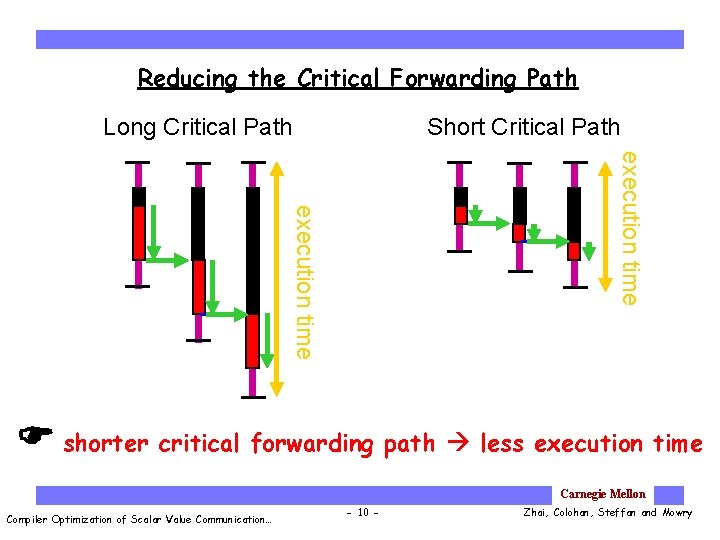

Reducing the Critical Forwarding Path

[Figure: execution timelines for a long critical path and a short critical path.]

A shorter critical forwarding path means less execution time.
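The link between path length and run time can be illustrated with a back-of-the-envelope model. This is a sketch under stated assumptions, not something taken from the talk: every epoch has the same length, every epoch has its own processor and starts at the same time, and forwarding a value is free. Under those assumptions an epoch can pass its wait only after its predecessor signals, so successive signals are at least one critical forwarding path apart. The function name `tls_exec_time` and its parameters are hypothetical.

```c
#include <assert.h>

/* Modeled completion time of n_epochs speculative epochs.
 * Assumptions (not from the slides): equal-length epochs, one processor
 * per epoch, simultaneous start, zero forwarding latency.
 * crit_path is the distance from wait to signal inside one epoch. */
long tls_exec_time(long n_epochs, long epoch_len, long crit_path)
{
    assert(n_epochs >= 1);
    assert(crit_path >= 0 && crit_path <= epoch_len);
    /* The first epoch runs unhindered; each later epoch finishes one
     * critical forwarding path after its predecessor. */
    return epoch_len + (n_epochs - 1) * crit_path;
}
```

In this model, four epochs of 100 cycles take 250 cycles with a 50-cycle critical forwarding path and 130 cycles with a 10-cycle one.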

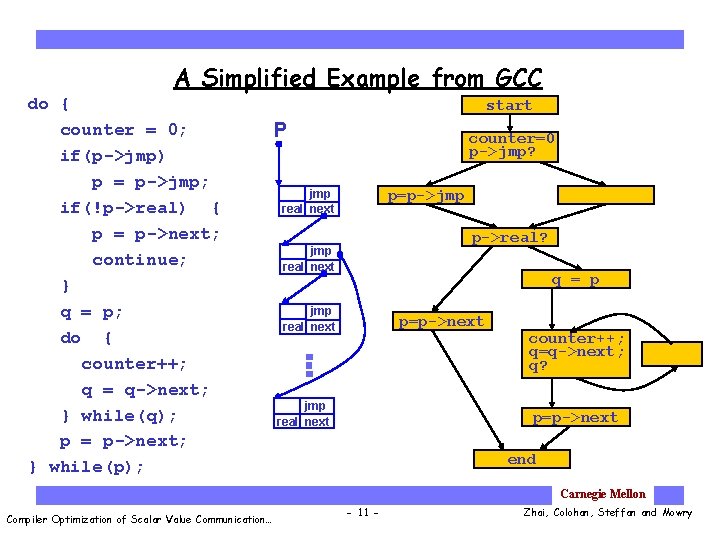

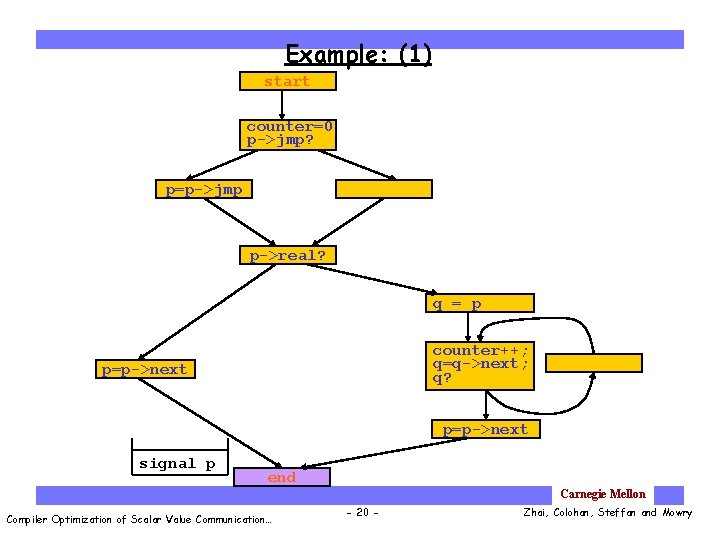

A Simplified Example from GCC

    do {
        counter = 0;
        if (p->jmp)
            p = p->jmp;
        if (!p->real) {
            p = p->next;
            continue;
        }
        q = p;
        do {
            counter++;
            q = q->next;
        } while (q);
        p = p->next;
    } while (p);

[Figure: control flow graph of the loop with nodes start, counter=0, p->jmp?, p=p->jmp, p->real?, q = p, p=p->next, counter++; q=q->next; q?, p=p->next and end, next to a linked list reached through P whose elements have jmp, real and next fields.]

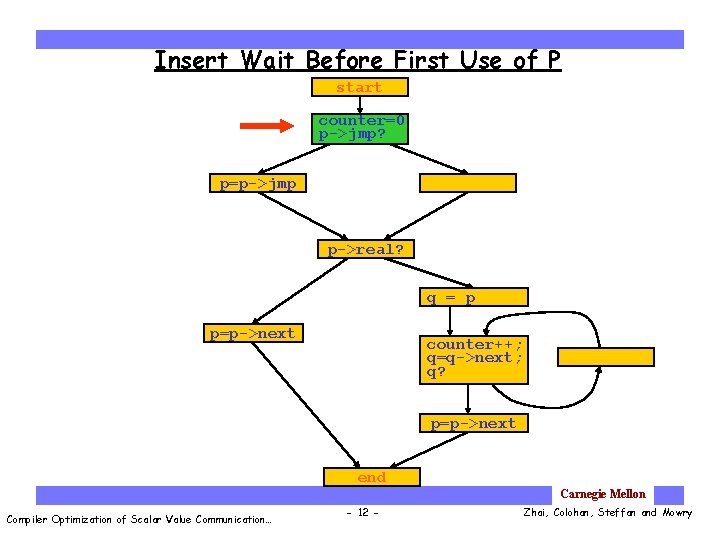

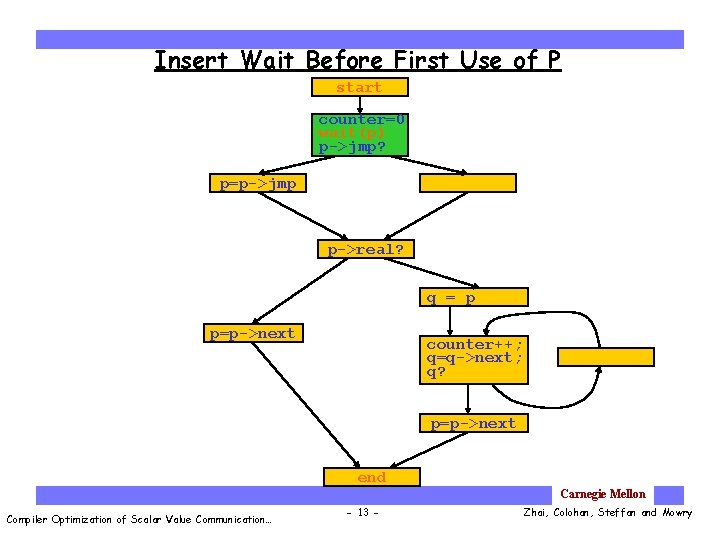

Insert Wait Before First Use of P

CFG before insertion: start | counter=0 | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | counter++; q=q->next; q? | p=p->next | end

CFG after insertion: start | counter=0 | wait(p) | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | counter++; q=q->next; q? | p=p->next | end

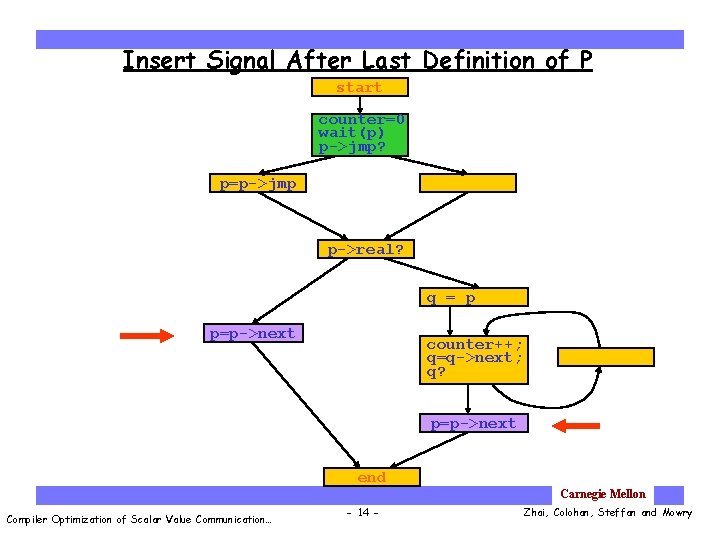

Insert Signal After Last Definition of P

CFG before insertion: start | counter=0 | wait(p) | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | counter++; q=q->next; q? | p=p->next | end

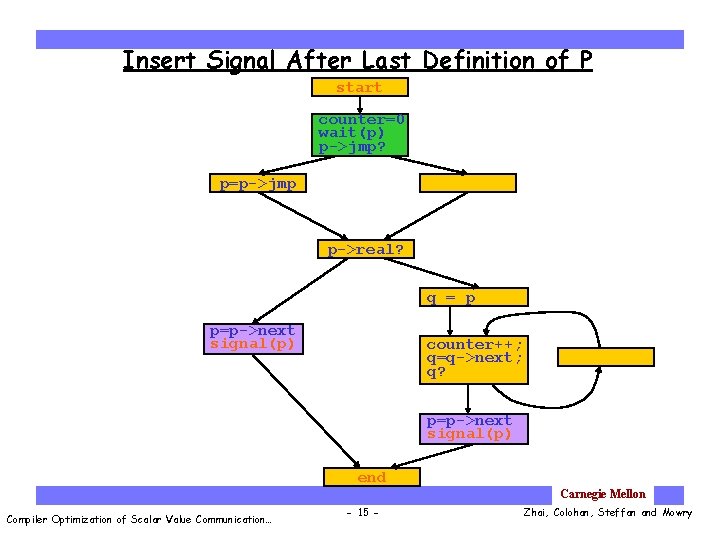

Insert Signal After Last Definition of P

CFG after insertion: start | counter=0 | wait(p) | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | signal(p) | counter++; q=q->next; q? | p=p->next | signal(p) | end
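As a rough illustration of this placement, the sketch below writes out the GCC loop with a wait before the first use of `p` and a signal after the last definition of `p` on each path. The `wait_p` and `signal_p` functions are hypothetical stand-ins for the TLS primitives, implemented here only as counters; `struct node`, `walk_naive`, `work_on_path` and the returned total are likewise assumptions added so the behavior can be observed.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical list node with the fields used on the slide. */
struct node {
    struct node *jmp;
    struct node *next;
    int real;
};

/* Counters standing in for the TLS primitives (not a real API). */
static int n_waits, n_signals, work_on_path, in_path;

static void wait_p(void) { n_waits++; in_path = 1; }
static void signal_p(struct node *p) { (void)p; n_signals++; in_path = 0; }

/* Returns the sum of all inner-loop counts so the result is checkable. */
int walk_naive(struct node *p)
{
    int total = 0;
    assert(p != NULL);
    n_waits = n_signals = work_on_path = 0;
    do {
        int counter = 0;
        struct node *q;
        wait_p();                 /* before the first use of p */
        if (p->jmp)
            p = p->jmp;
        if (!p->real) {
            p = p->next;
            signal_p(p);          /* after the last definition of p */
            continue;
        }
        q = p;
        do {
            counter++;
            if (in_path)
                work_on_path++;   /* inner-loop work between wait and signal */
            q = q->next;
        } while (q);
        p = p->next;
        signal_p(p);              /* after the last definition of p */
        total += counter;
    } while (p);
    return total;
}
```

With this placement the whole inner loop sits between the wait and the signal, so `work_on_path` grows with every inner-loop step.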

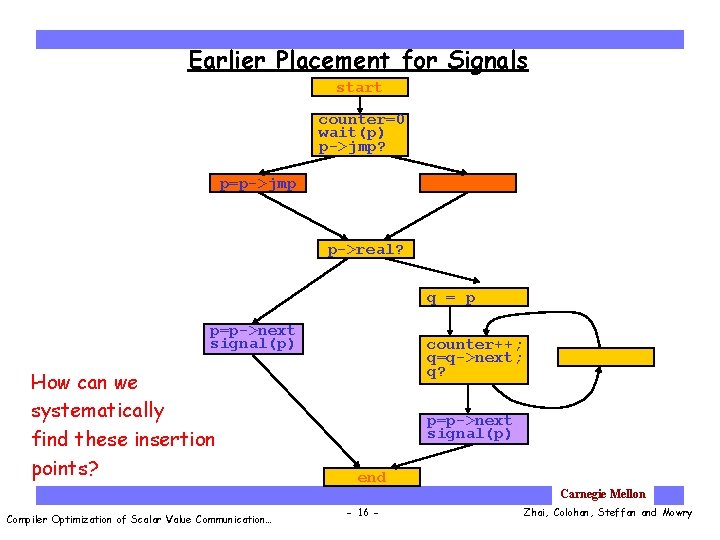

Earlier Placement for Signals

CFG: start | counter=0 | wait(p) | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | signal(p) | counter++; q=q->next; q? | p=p->next | signal(p) | end

How can we systematically find these insertion points?

Outline

→ Compiler optimization
  · Optimization opportunity
  → Conservative instruction scheduling
    → How to compute the forwarding value
    → Where to compute the forwarding value
  · Aggressive instruction scheduling
· Performance
· Conclusions

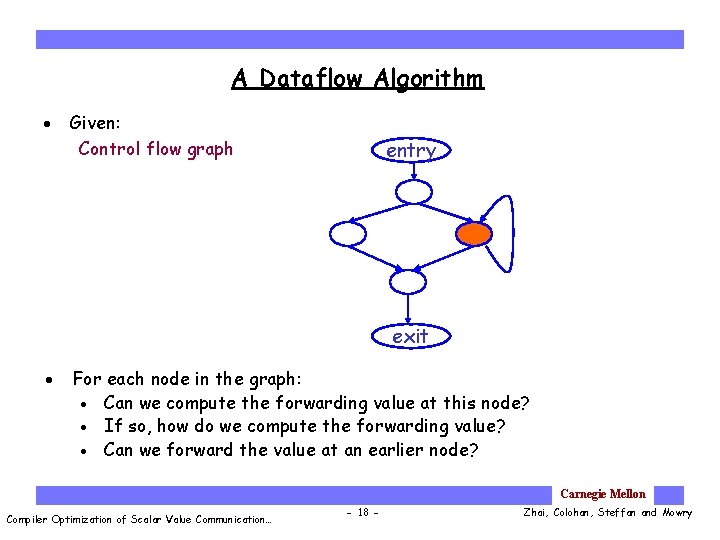

A Dataflow Algorithm

· Given: a control flow graph (entry to exit)
· For each node in the graph:
  · Can we compute the forwarding value at this node?
  · If so, how do we compute the forwarding value?
  · Can we forward the value at an earlier node?
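The first two questions can be sketched as a small backward dataflow pass. This is a deliberately simplified model and not the authors' algorithm: it tracks only the pointer `p`, it describes the forwarding value as a chain of field accesses applied to the value of `p` on entry to a node ('j' for `->jmp`, 'n' for `->next`), and it treats a node with several successors as computable only when all successors agree on the same chain. All type and function names (`cfg_node`, `fwd`, `analyze`, and so on) are hypothetical.

```c
#include <assert.h>
#include <string.h>

#define MAX_NODES 16
#define MAX_CHAIN 8

enum op { OP_NONE, OP_NEXT, OP_JMP };   /* effect of a node on p */
enum state { TOP, CHAIN, BOTTOM };      /* not yet known, computable, not computable */

struct cfg_node { enum op op; int nsucc; int succ[2]; };
struct fwd { enum state st; char chain[MAX_CHAIN]; };

/* Meet: TOP is the identity, equal chains agree, anything else is BOTTOM. */
static struct fwd meet(struct fwd a, struct fwd b)
{
    if (a.st == TOP) return b;
    if (b.st == TOP) return a;
    if (a.st == CHAIN && b.st == CHAIN && strcmp(a.chain, b.chain) == 0)
        return a;
    a.st = BOTTOM;
    a.chain[0] = '\0';
    return a;
}

/* Transfer: prepend this node's effect on p to the chain valid at its exit. */
static struct fwd transfer(enum op op, struct fwd out)
{
    struct fwd in = out;
    if (out.st != CHAIN || op == OP_NONE)
        return in;
    if (strlen(out.chain) + 2 > MAX_CHAIN) {   /* chain too long: give up */
        in.st = BOTTOM;
        in.chain[0] = '\0';
        return in;
    }
    in.chain[0] = (op == OP_NEXT) ? 'n' : 'j';
    strcpy(in.chain + 1, out.chain);
    return in;
}

/* Iterates to a fixed point; in[i] describes node i on entry. */
void analyze(const struct cfg_node *g, int n, int exit_node, struct fwd *in)
{
    int changed = 1;
    assert(n <= MAX_NODES && exit_node < n);
    for (int i = 0; i < n; i++) {
        in[i].st = TOP;
        in[i].chain[0] = '\0';
    }
    in[exit_node].st = CHAIN;            /* at the exit, forward p itself */
    while (changed) {
        changed = 0;
        for (int i = n - 1; i >= 0; i--) {
            if (i == exit_node)
                continue;
            struct fwd out = { TOP, "" };
            for (int s = 0; s < g[i].nsucc; s++)
                out = meet(out, in[g[i].succ[s]]);
            struct fwd next = transfer(g[i].op, out);
            if (next.st != in[i].st || strcmp(next.chain, in[i].chain) != 0) {
                in[i] = next;
                changed = 1;
            }
        }
    }
}
```

Encoding the GCC loop as nine nodes (entry, `p->jmp?`, `p=p->jmp`, `p->real?`, `q = p`, the inner loop, the two `p=p->next` nodes, and the exit), this model reports the chain "n" at `p->real?`, meaning the value to forward there is `p->next`, and reports no single chain at `p->jmp?`, because its two successors disagree.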

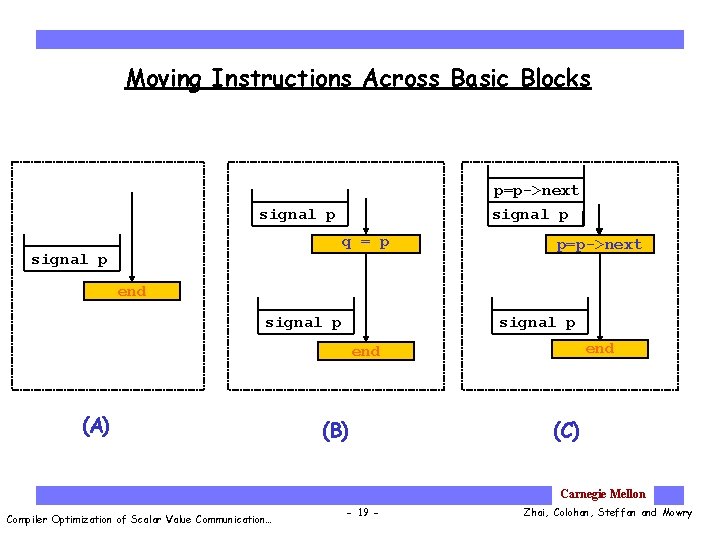

Moving Instructions Across Basic Blocks

[Figure: three panels, (A), (B) and (C), showing signal p placed relative to the nodes p=p->next, q = p and end.]

Example (1)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p->real? | q = p | counter++; q=q->next; q? | p=p->next | signal p | end

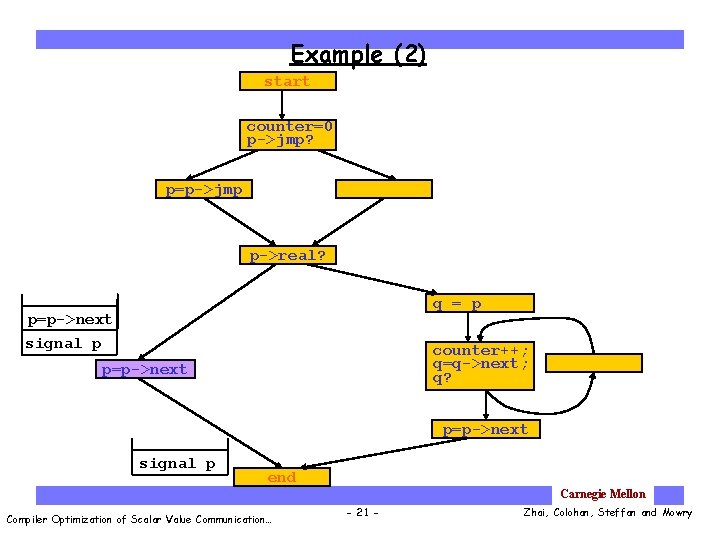

Example (2)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | signal p | counter++; q=q->next; q? | p=p->next | signal p | end

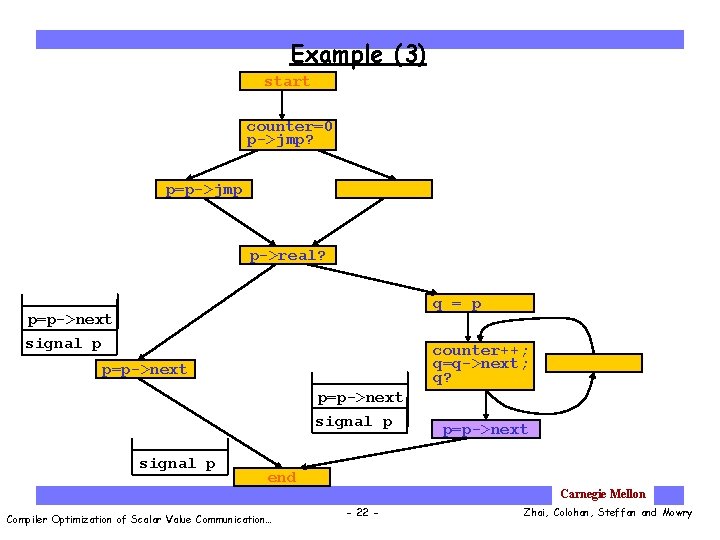

Example (3)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p->real? | q = p | p=p->next | signal p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

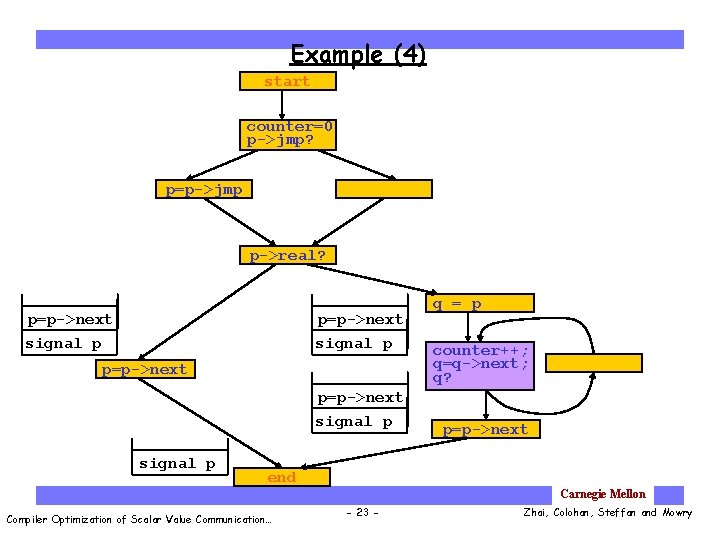

Example (4)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | end

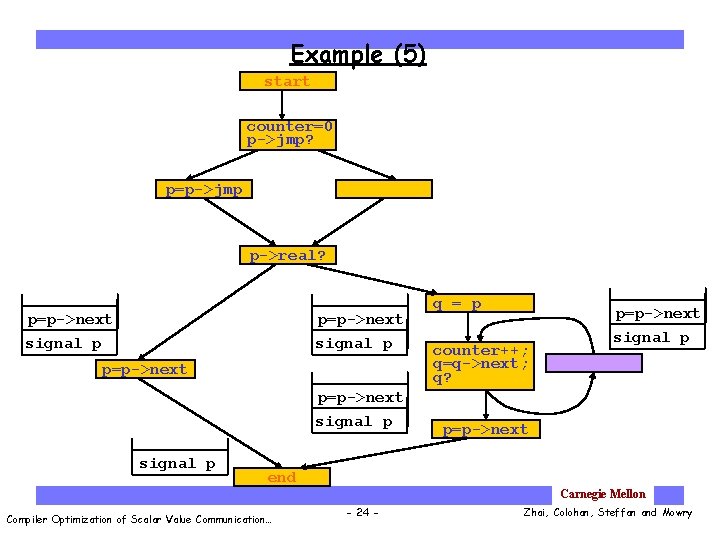

Example (5)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

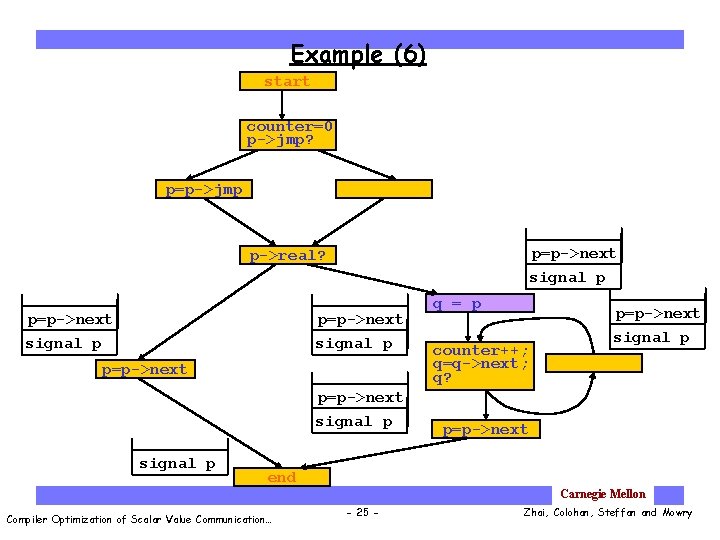

Example (6)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

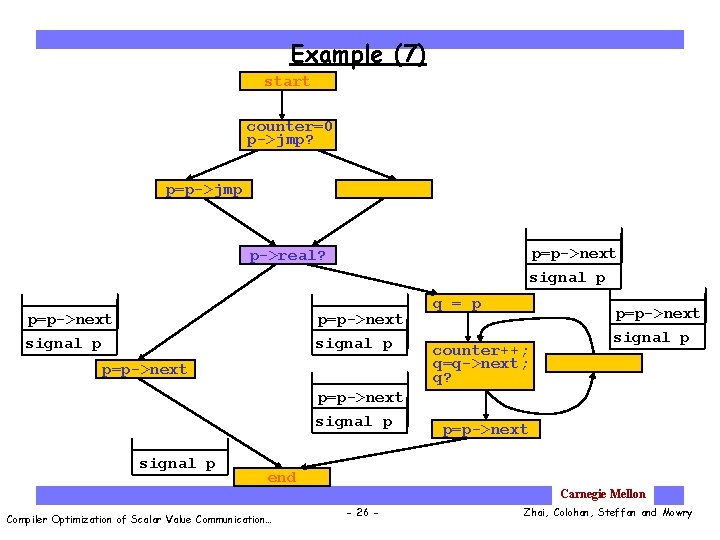

Example (7)

CFG: start | counter=0 | p->jmp? | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

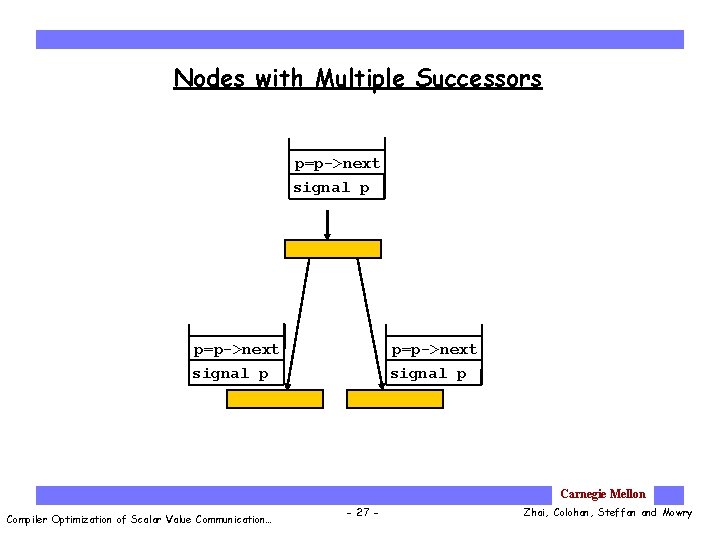

Nodes with Multiple Successors

[Figure: a node with multiple successors; labels shown: p=p->next | signal p.]

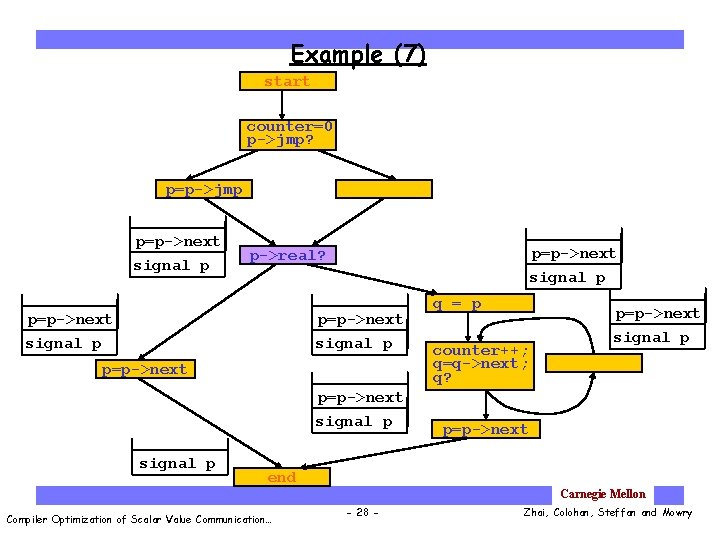


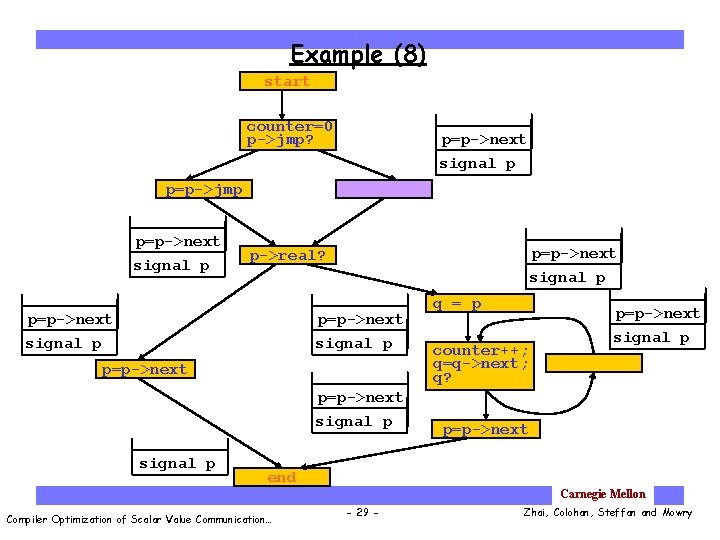

Example (8)

CFG: start | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

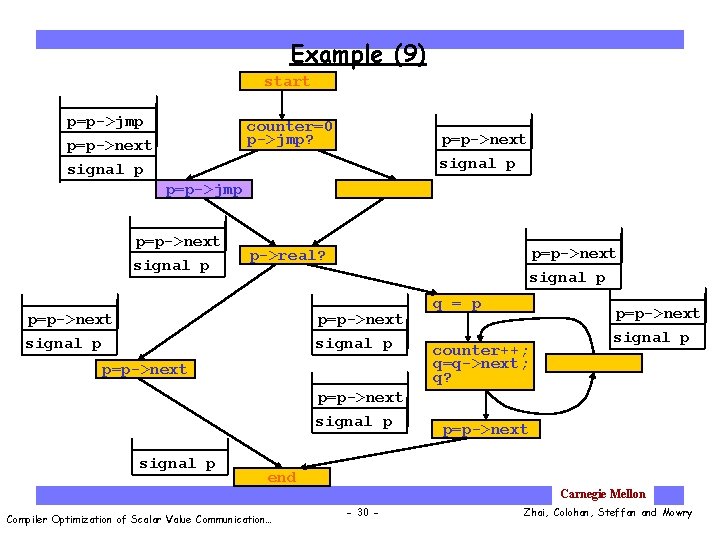

Example (9)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

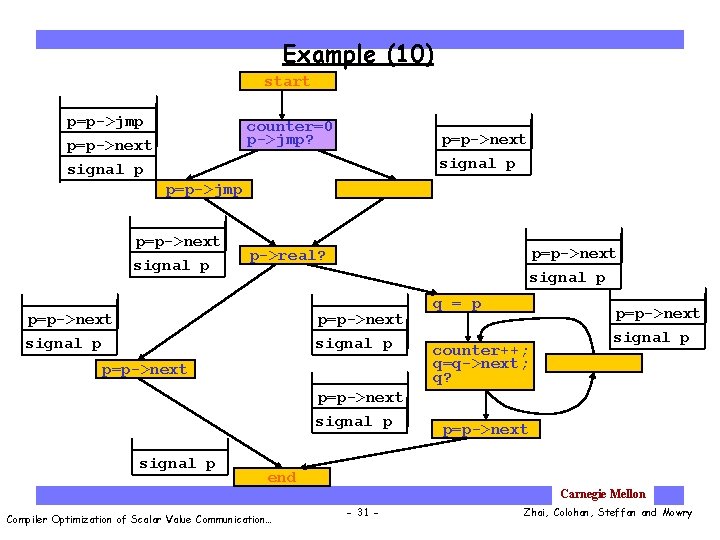

Example (10)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

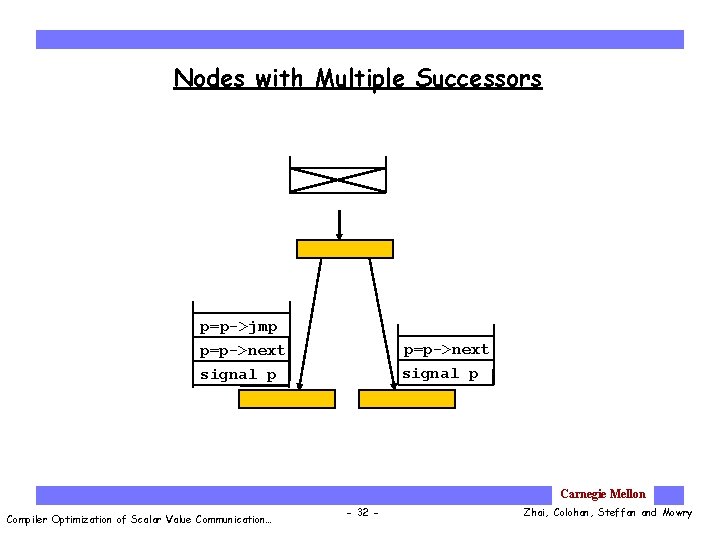

Nodes with Multiple Successors

[Figure: a node with multiple successors; labels shown: p=p->jmp | p=p->next | signal p.]

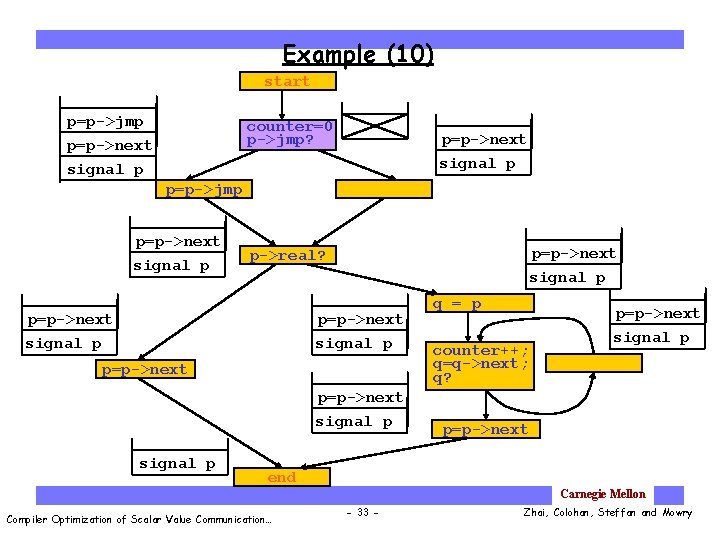


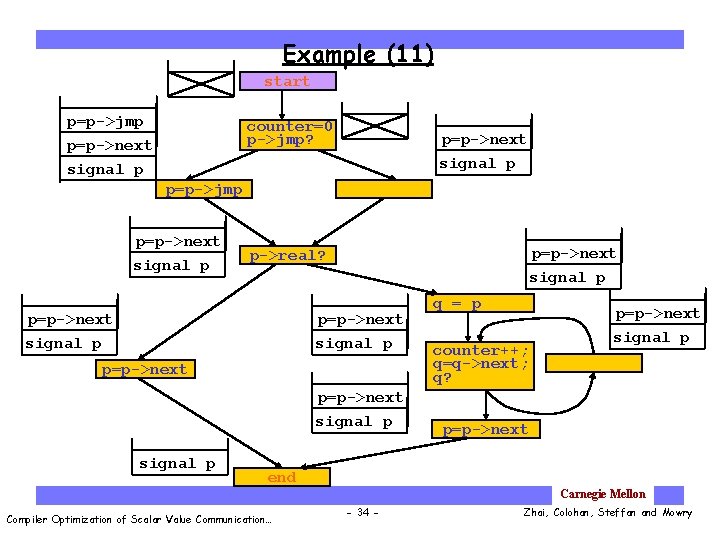

Example (11)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

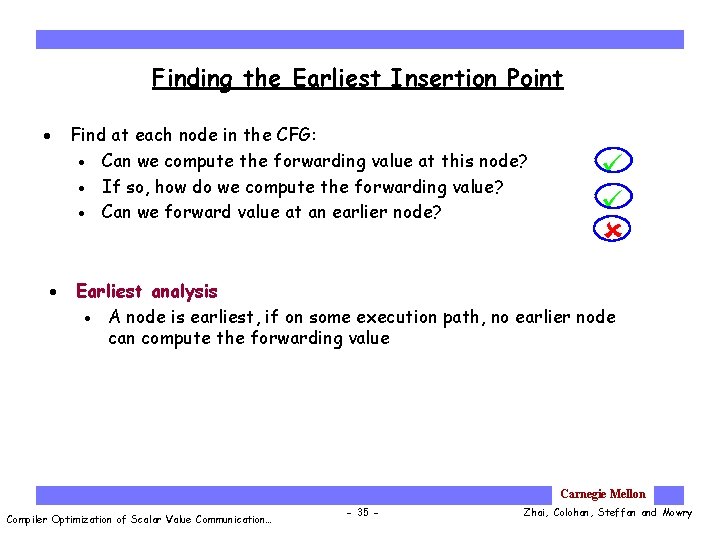

Finding the Earliest Insertion Point

· Find at each node in the CFG:
  · Can we compute the forwarding value at this node?
  · If so, how do we compute the forwarding value?
  · Can we forward the value at an earlier node?
· Earliest analysis:
  · A node is earliest if, on some execution path, no earlier node can compute the forwarding value

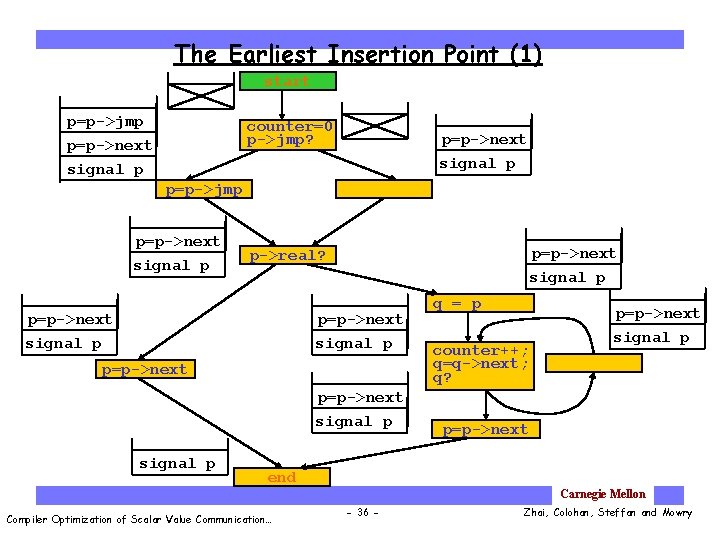

The Earliest Insertion Point (1)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

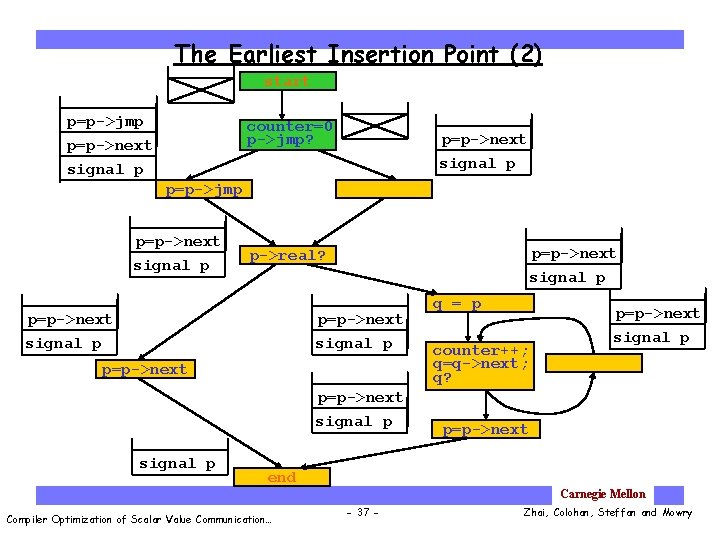

The Earliest Insertion Point (2)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

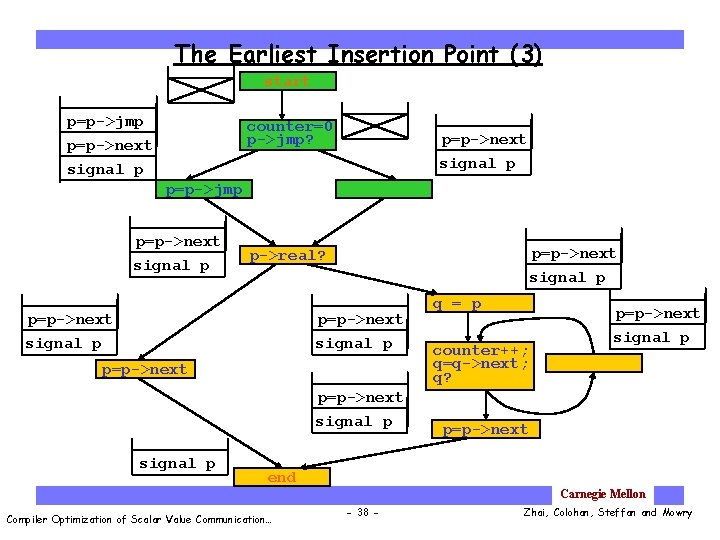

The Earliest Insertion Point (3)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

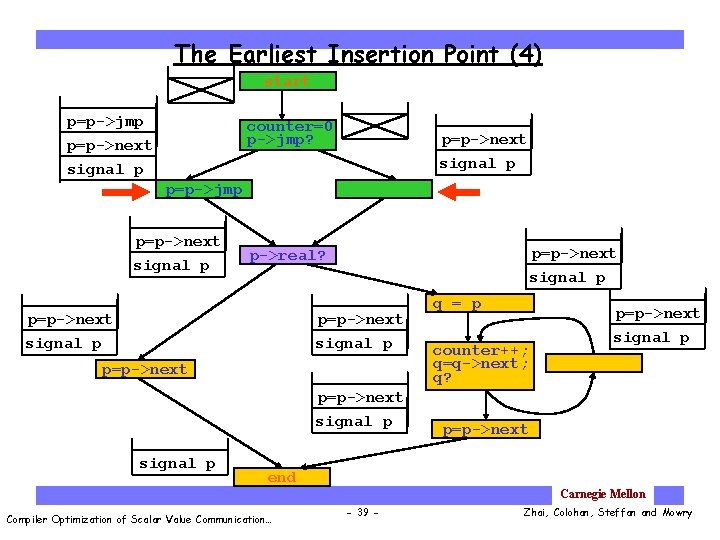

The Earliest Insertion Point (4)

CFG: start | p=p->jmp | counter=0 | p->jmp? | p=p->next | signal p | p=p->jmp | p=p->next | signal p | p->real? | p=p->next | signal p | q = p | counter++; q=q->next; q? | p=p->next | signal p | p=p->next | end

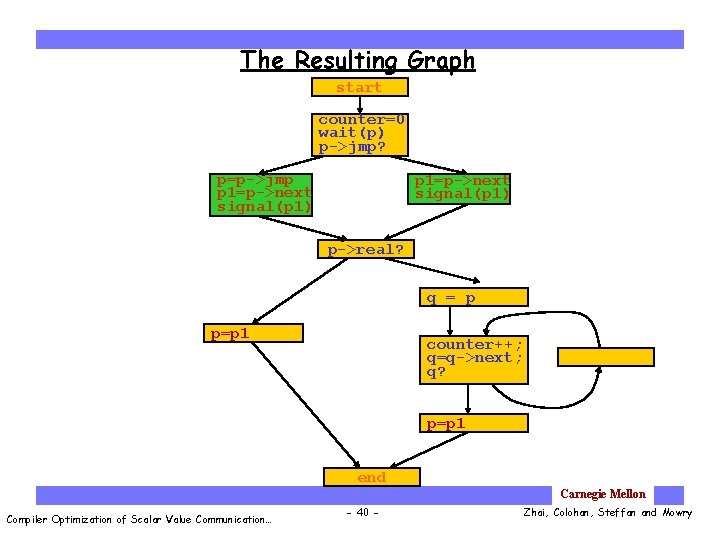

The Resulting Graph

CFG: start | counter=0 | wait(p) | p->jmp? | p=p->jmp | p1=p->next | signal(p1) | p->real? | q = p | p=p1 | counter++; q=q->next; q? | p=p1 | end
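Written back as source, the resulting graph corresponds roughly to the loop below. It is a sketch under the same kind of assumptions as any instrumented example: `wait_p` and `signal_p` are hypothetical counter-based stand-ins for the TLS primitives, and `struct node`, `walk_hoisted`, `work_on_path` and the returned total are added only to make the effect observable. The temporary `p1` follows the slide.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical list node with the fields used on the slide. */
struct node {
    struct node *jmp;
    struct node *next;
    int real;
};

/* Counters standing in for the TLS primitives (not a real API). */
static int n_waits, n_signals, work_on_path, in_path;

static void wait_p(void) { n_waits++; in_path = 1; }
static void signal_p(struct node *p) { (void)p; n_signals++; in_path = 0; }

/* Returns the sum of all inner-loop counts so the result is checkable. */
int walk_hoisted(struct node *p)
{
    int total = 0;
    assert(p != NULL);
    n_waits = n_signals = work_on_path = 0;
    do {
        int counter = 0;
        struct node *q, *p1;
        wait_p();
        if (p->jmp)
            p = p->jmp;
        p1 = p->next;             /* next iteration's p is known here */
        signal_p(p1);             /* forward it before the inner loop */
        if (!p->real) {
            p = p1;
            continue;
        }
        q = p;
        do {
            counter++;
            if (in_path)
                work_on_path++;   /* stays 0: the signal was already sent */
            q = q->next;
        } while (q);
        p = p1;
        total += counter;
    } while (p);
    return total;
}
```

The loop computes the same counts as before, but no inner-loop step runs between the wait and the signal, so `work_on_path` stays at zero.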

Outline

→ Compiler optimization
  · Optimization opportunity
  · Conservative instruction scheduling
  → Aggressive instruction scheduling
    → Speculate on control flow
    → Speculate on data dependence
· Performance
· Conclusions

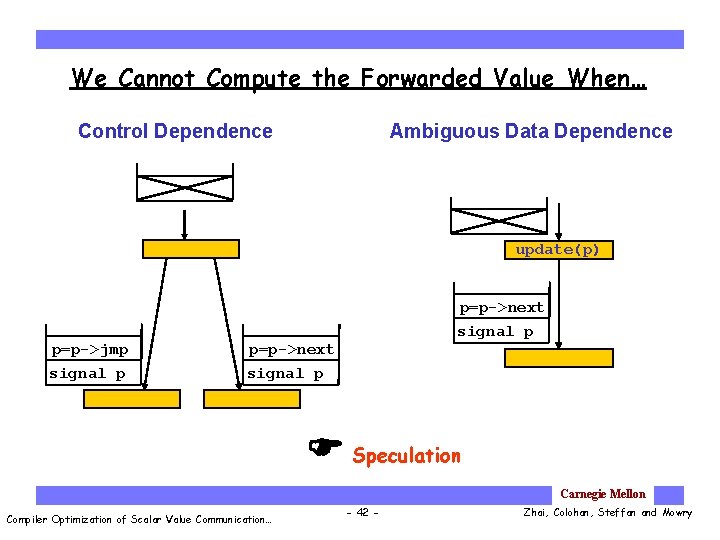

We Cannot Compute the Forwarded Value When…

· Control dependence
· Ambiguous data dependence

[Figure: labels shown: update(p); p=p->jmp | signal p; p=p->next | signal p; Speculation.]

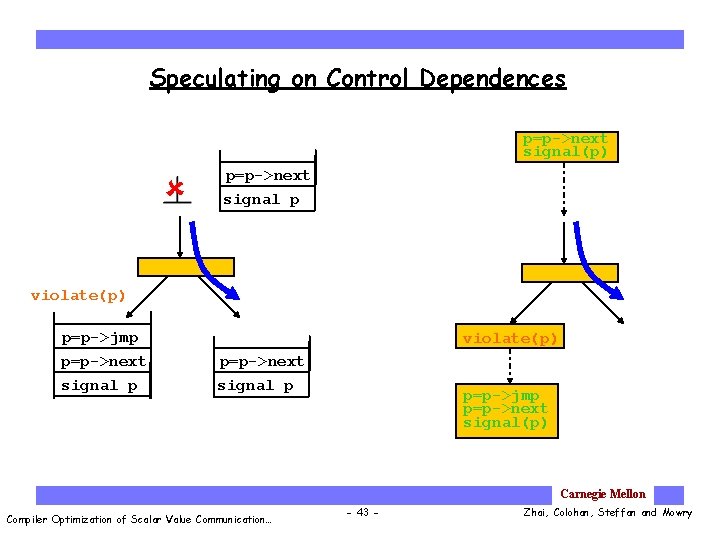

Speculating on Control Dependences

[Figure: labels shown: p=p->next | signal(p); p=p->next | signal p | violate(p) | p=p->jmp | p=p->next | signal p; violate(p) | p=p->next | signal p | p=p->jmp | p=p->next | signal(p).]
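One way to read these labels is that the signal is hoisted above the `p->jmp?` branch on the assumption that the branch is not taken, and the taken path issues `violate(p)` before forwarding a corrected value. The sketch below follows that reading. It is an interpretation, not the authors' code: `wait_p`, `signal_p` and `violate_p` are hypothetical counter-based stand-ins, and the exact semantics of `violate` in the real system are assumed.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical list node with the fields used on the slides. */
struct node {
    struct node *jmp;
    struct node *next;
    int real;
};

/* Counters standing in for the TLS primitives (not a real API). */
static int n_waits, n_signals, n_violates;

static void wait_p(void) { n_waits++; }
static void signal_p(struct node *p) { (void)p; n_signals++; }
static void violate_p(void) { n_violates++; }

/* Returns the sum of all inner-loop counts so the result is checkable. */
int walk_ctrl_spec(struct node *p)
{
    int total = 0;
    assert(p != NULL);
    n_waits = n_signals = n_violates = 0;
    do {
        int counter = 0;
        struct node *q, *p1;
        wait_p();
        p1 = p->next;             /* speculate that p->jmp is not taken */
        signal_p(p1);
        if (p->jmp) {
            violate_p();          /* speculation failed: forwarded value was wrong */
            p = p->jmp;
            p1 = p->next;
            signal_p(p1);         /* forward the corrected value */
        }
        if (!p->real) {
            p = p1;
            continue;
        }
        q = p;
        do {
            counter++;
            q = q->next;
        } while (q);
        p = p1;
        total += counter;
    } while (p);
    return total;
}
```

Each iteration that takes the `p->jmp` path costs one violation and one extra signal, so the scheme pays off only when that path is rare.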

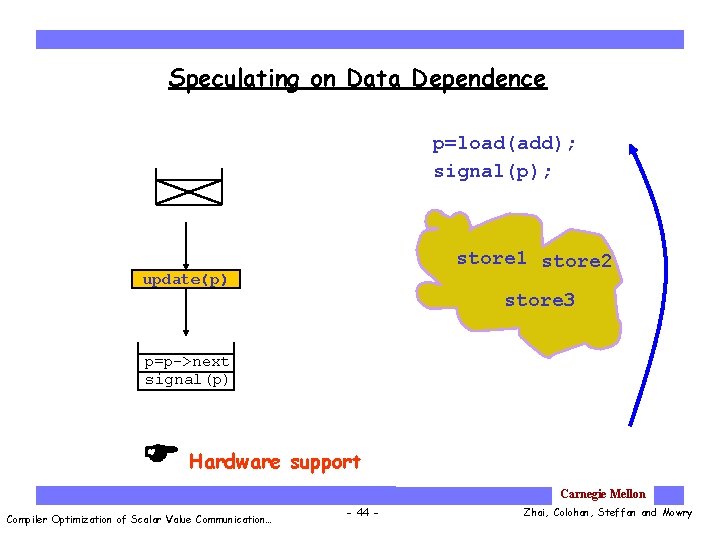

Speculating on Data Dependence

[Figure: labels shown: p=load(add); signal(p); | store1 | store2 | update(p) | store3 | p=p->next | signal(p); the speculative version, p=load(add); signal(p);, relies on hardware support.]

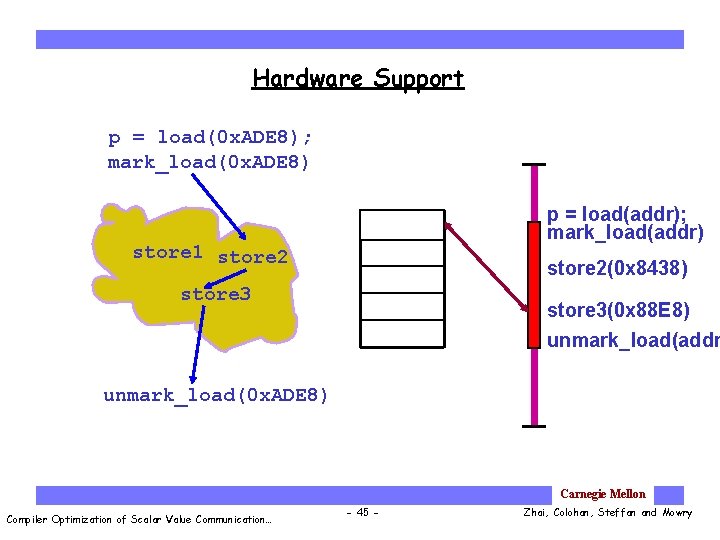

Hardware Support

[Figure: the sequence p = load(addr); mark_load(addr) | store1 | store2 | store3 | unmark_load(addr), shown with addr = 0xADE8, store2(0x8438) and store3(0x88E8); the marked address 0xADE8 is held by the hardware until unmark_load(0xADE8).]
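The behavior suggested by this slide can be modeled in software as a single watched address. The sketch below is an assumption about the mechanism, not a description of the actual hardware: a store to the marked address between `mark_load` and `unmark_load` is taken to mean the forwarded value was stale. The `load_watch` structure and the `observe_store` hook are hypothetical; only the names `mark_load` and `unmark_load` come from the slide.

```c
#include <assert.h>
#include <stdint.h>

/* Software model of one watched address; field names are hypothetical. */
struct load_watch {
    uintptr_t addr;
    int active;
    int violated;
};

/* Start watching the address that the forwarded value was loaded from. */
void mark_load(struct load_watch *w, uintptr_t addr)
{
    assert(w != 0);
    w->addr = addr;
    w->active = 1;
    w->violated = 0;
}

/* Called for every store; a hit on the watched address records a violation. */
void observe_store(struct load_watch *w, uintptr_t addr)
{
    if (w->active && w->addr == addr)
        w->violated = 1;
}

/* Stop watching; returns 1 if a conflicting store was seen, else 0. */
int unmark_load(struct load_watch *w, uintptr_t addr)
{
    int bad = w->active && w->addr == addr && w->violated;
    if (w->addr == addr)
        w->active = 0;
    return bad;
}
```

With the addresses from the slide, stores to 0x8438 and 0x88E8 leave a load marked at 0xADE8 undisturbed, so `unmark_load` reports no violation in this model.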

Outline

· Compiler optimization
  · Optimization opportunity
  · Conservative instruction scheduling
  · Aggressive instruction scheduling
→ Performance
  → Conservative instruction scheduling
  → Aggressive instruction scheduling
  → Hardware optimization for scalar value communication
  → Hardware optimization for all value communication
· Conclusions

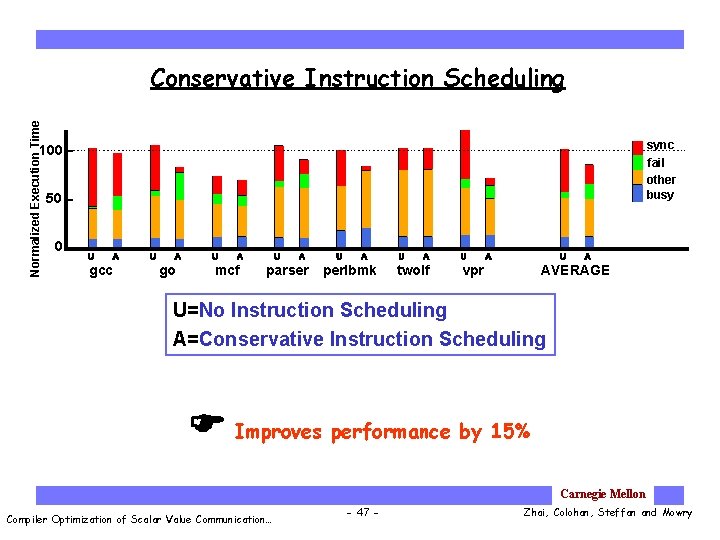

Conservative Instruction Scheduling

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. U = no instruction scheduling; A = conservative instruction scheduling.]

Conservative instruction scheduling improves performance by 15%.

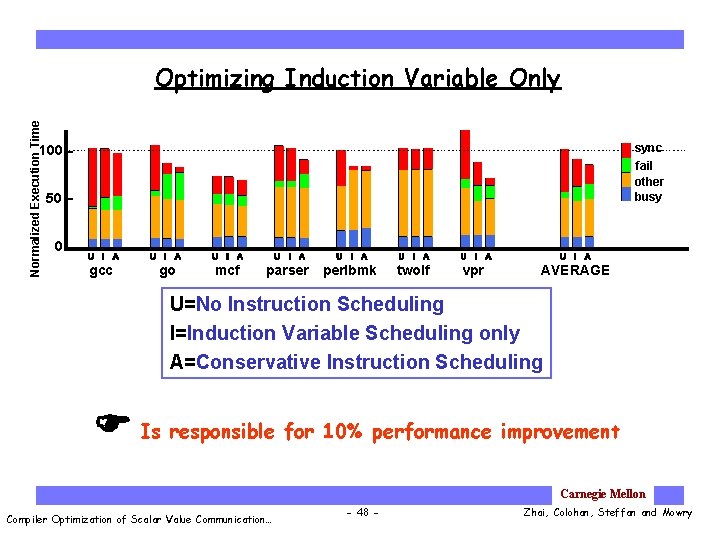

Optimizing Induction Variable Only

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. U = no instruction scheduling; I = induction variable scheduling only; A = conservative instruction scheduling.]

Induction variable scheduling is responsible for a 10% performance improvement.

![Benefits from Global Analysis](http://slidetodoc.com/presentation_image_h2/0bc1d7cfe5eabb308b919bd9b9083ca2/image-49.jpg)

Benefits from Global Analysis

· Multiscalar instruction scheduling [Vijaykumar, Thesis '98]
  · Uses local analysis to schedule instructions across basic blocks
  · Does not allow scheduling of instructions across inner loops

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc and go. M = Multiscalar scheduling; A = conservative scheduling.]

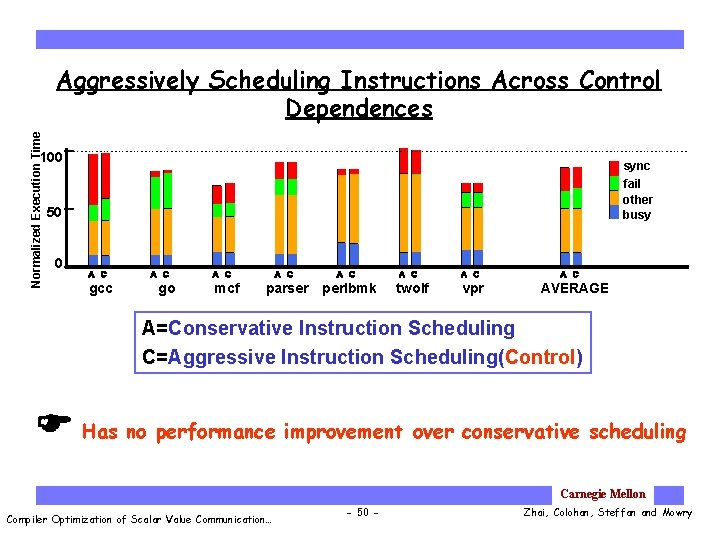

Aggressively Scheduling Instructions Across Control Dependences

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. A = conservative instruction scheduling; C = aggressive instruction scheduling (control).]

Scheduling across control dependences yields no performance improvement over conservative scheduling.

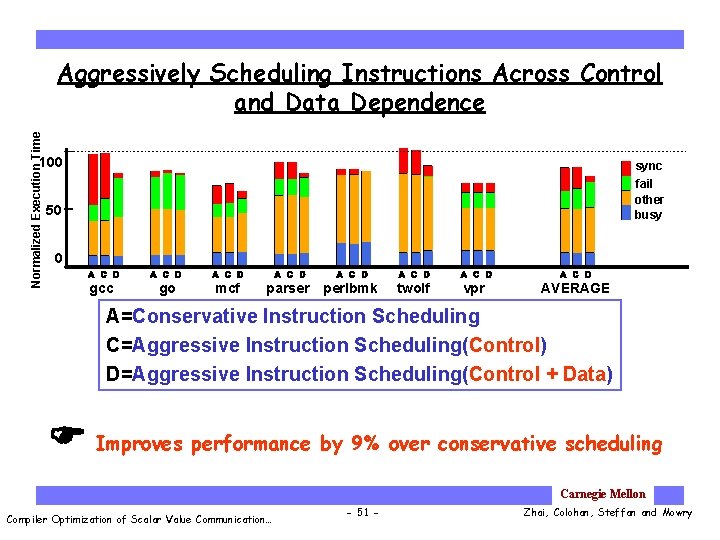

Aggressively Scheduling Instructions Across Control and Data Dependence

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. A = conservative instruction scheduling; C = aggressive instruction scheduling (control); D = aggressive instruction scheduling (control + data).]

Scheduling across both control and data dependences improves performance by 9% over conservative scheduling.

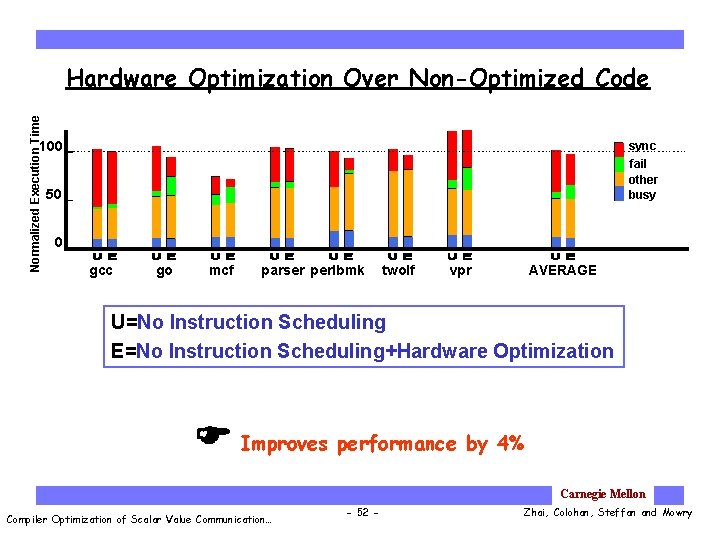

Hardware Optimization Over Non-Optimized Code

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. U = no instruction scheduling; E = no instruction scheduling + hardware optimization.]

Hardware optimization improves performance by 4%.

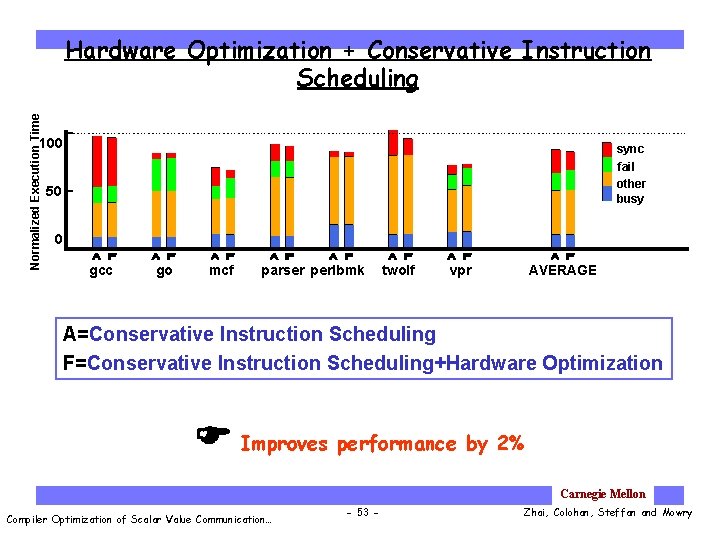

Hardware Optimization + Conservative Instruction Scheduling

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. A = conservative instruction scheduling; F = conservative instruction scheduling + hardware optimization.]

Hardware optimization improves performance by 2%.

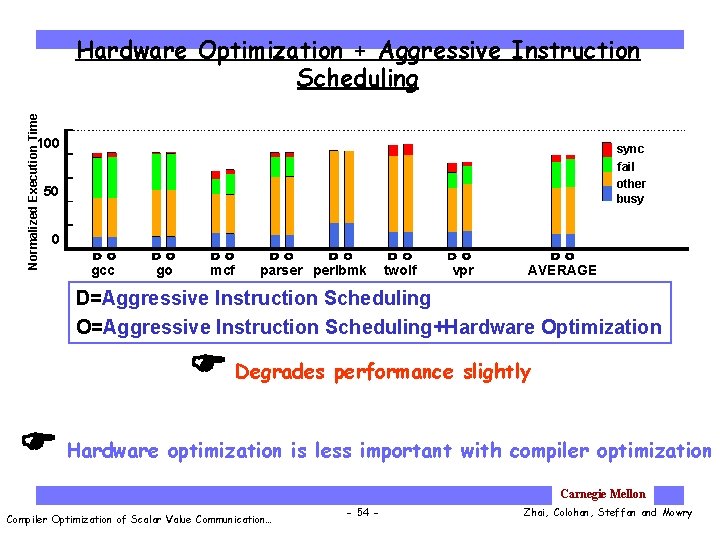

Hardware Optimization + Aggressive Instruction Scheduling

[Figure: normalized execution time, split into busy, fail, sync and other, for gcc, go, mcf, parser, perlbmk, twolf, vpr and AVERAGE. D = aggressive instruction scheduling; O = aggressive instruction scheduling + hardware optimization.]

Hardware optimization degrades performance slightly. Hardware optimization is less important with compiler optimization.

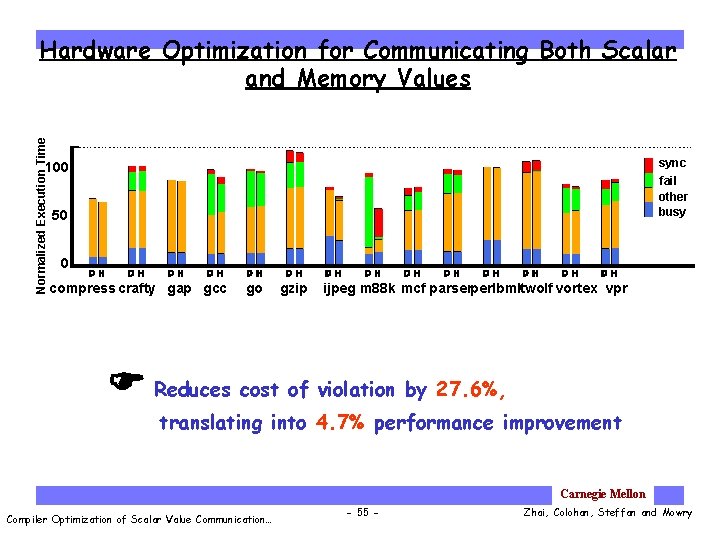

Hardware Optimization for Communicating Both Scalar and Memory Values

[Figure: normalized execution time, split into busy, fail, sync and other, for compress, crafty, gap, gcc, go, gzip, ijpeg, m88k, mcf, parser, perlbmk, twolf, vortex and vpr.]

Hardware optimization reduces the cost of violation by 27.6%, translating into a 4.7% performance improvement.

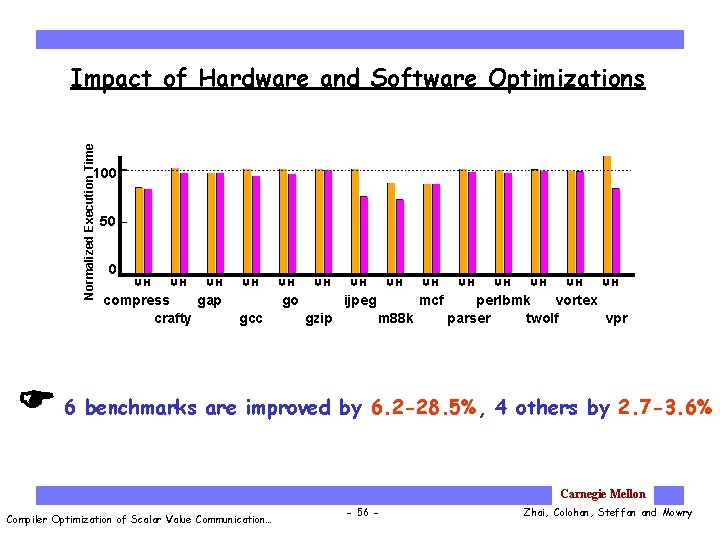

Impact of Hardware and Software Optimizations

[Figure: normalized execution time for compress, crafty, gap, gcc, go, gzip, ijpeg, m88k, mcf, parser, perlbmk, twolf, vortex and vpr.]

6 benchmarks are improved by 6.2-28.5%, 4 others by 2.7-3.6%.

Conclusions

· Critical forwarding path is an important bottleneck in TLS
· Loop induction variables serialize parallel threads
  · Can be eliminated with our instruction scheduling algorithm
· Non-inductive scalars can benefit from conservative instruction scheduling
· Aggressive instruction scheduling should be applied selectively
  · Speculating on control dependence alone is not very effective
  · Speculating on both control and data dependences can reduce synchronization significantly
  · GCC is the biggest beneficiary
· Hardware optimization is less important as the compiler schedules instructions more aggressively

Critical forwarding path is best addressed by the compiler.
