Competing For Memory Vivek Pai Kai Li Princeton

- Slides: 32

Competing For Memory Vivek Pai / Kai Li Princeton University

Mechanics

- Feedback optionally anonymous
  - No real retribution anyway
  - Do it to make me happy
- Quiz 1 Question 2 answer(s)
  - #regs != #bits
  - Registers at top of memory hierarchy
  - Lots of acceptable answers
- Last Quiz, Feedback still being digested

The Big Picture

- We’ve talked about single evictions
- Most computers are multiprogrammed
  - Single eviction decision still needed
  - New concern: allocating resources
  - How to be “fair enough” and achieve good overall throughput
- This is a competitive world: local and global resource allocation decisions

Lessons From Enhanced FIFO

- Observations
  - It’s easier to evict a clean page than a dirty page
  - Sometimes the disk and CPU are idle
- Optimization: when the system is “free”, write dirty pages back to disk, but don’t evict them
- Called flushing; often falls to the pager daemon
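The flushing idea can be sketched as a single idle-time pass. This is a minimal illustration, not an actual pager daemon: the dictionary-based page records, the `system_idle` flag, and the `write_back` callback are all hypothetical names introduced here.

```python
def flush_when_idle(pages, system_idle, write_back):
    """Write dirty pages back while the system is idle, without evicting them.

    `pages` is a list of dicts with a "dirty" key (hypothetical layout).
    Returns how many pages were cleaned.
    """
    if not system_idle:
        return 0
    flushed = 0
    for page in pages:
        if page["dirty"]:
            write_back(page)       # copy the contents to disk
            page["dirty"] = False  # the page stays resident, now clean
            flushed += 1
    return flushed
```

After a pass, every page is still in memory, but a later eviction can pick any of them without waiting for a disk write.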

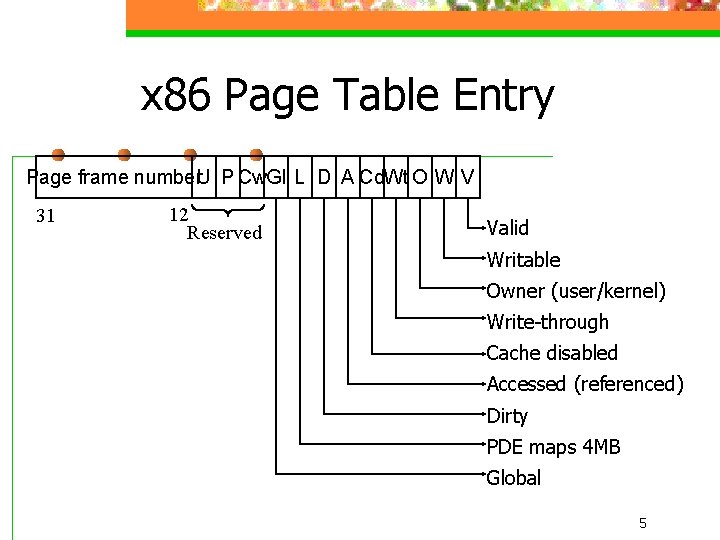

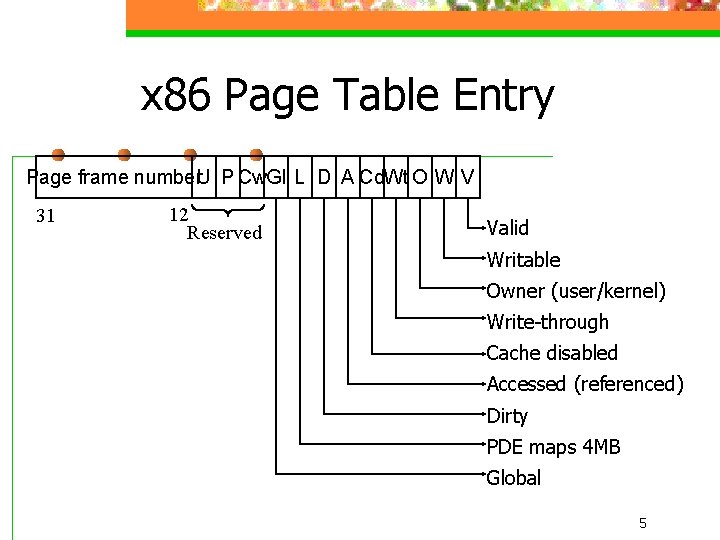

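x86 Page Table Entry

- Bits 31-12: Page frame number
- U, P, Cw (bits 11-9): Reserved
- Gl (bit 8): Global
- L (bit 7): PDE maps 4 MB
- D (bit 6): Dirty
- A (bit 5): Accessed (referenced)
- Cd (bit 4): Cache disabled
- Wt (bit 3): Write-through
- O (bit 2): Owner (user/kernel)
- W (bit 1): Writable
- V (bit 0): Valid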
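The entry layout can be expressed as bit masks. The sketch below assumes the standard 32-bit x86 bit positions (valid in bit 0 up to global in bit 8, frame number in bits 31-12); the constant and function names are made up for illustration.

```python
# Flag bits of a 32-bit x86 page table entry (names are illustrative).
PTE_VALID = 1 << 0           # V
PTE_WRITABLE = 1 << 1        # W
PTE_OWNER_USER = 1 << 2      # O: set for user, clear for kernel
PTE_WRITE_THROUGH = 1 << 3   # Wt
PTE_CACHE_DISABLED = 1 << 4  # Cd
PTE_ACCESSED = 1 << 5        # A
PTE_DIRTY = 1 << 6           # D
PTE_LARGE_PAGE = 1 << 7      # L: PDE maps 4 MB
PTE_GLOBAL = 1 << 8          # Gl
PFN_SHIFT = 12               # page frame number lives in bits 31-12


def make_pte(pfn, flags):
    """Pack a page frame number and flag bits into one 32-bit entry."""
    return ((pfn << PFN_SHIFT) | flags) & 0xFFFFFFFF


def pte_pfn(pte):
    """Extract the page frame number."""
    return pte >> PFN_SHIFT


def pte_has(pte, flag):
    """Test a single flag bit."""
    return (pte & flag) != 0
```

For example, `make_pte(0x12345, PTE_VALID | PTE_WRITABLE | PTE_DIRTY)` packs the frame number into the top 20 bits and leaves the three flags in the low byte.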

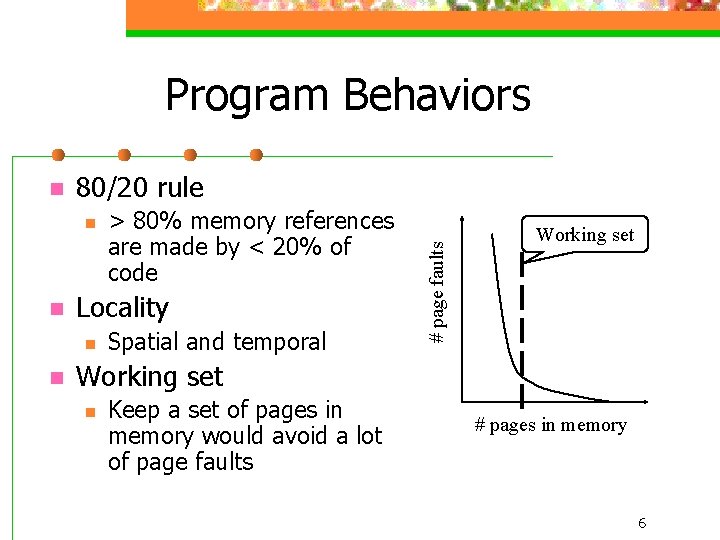

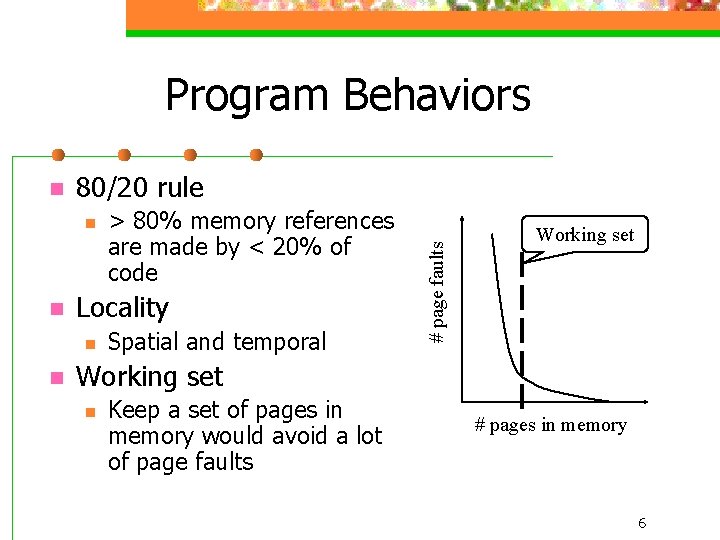

Program Behaviors

- 80/20 rule
  - > 80% of memory references are made by < 20% of the code
- Locality
  - Spatial and temporal
- Working set
  - Keeping a set of pages in memory would avoid a lot of page faults
- [Figure: # page faults versus # pages in memory]

Observations re Working Set

- Working set isn’t static
- There often isn’t a single “working set”
  - Multiple plateaus in previous curve
  - Program coding style affects working set
- Working set is hard to gauge
  - What’s the working set of an interactive program?

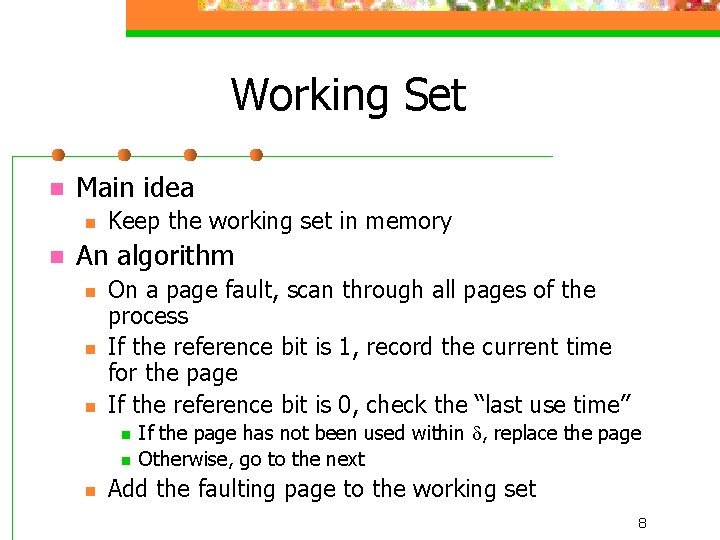

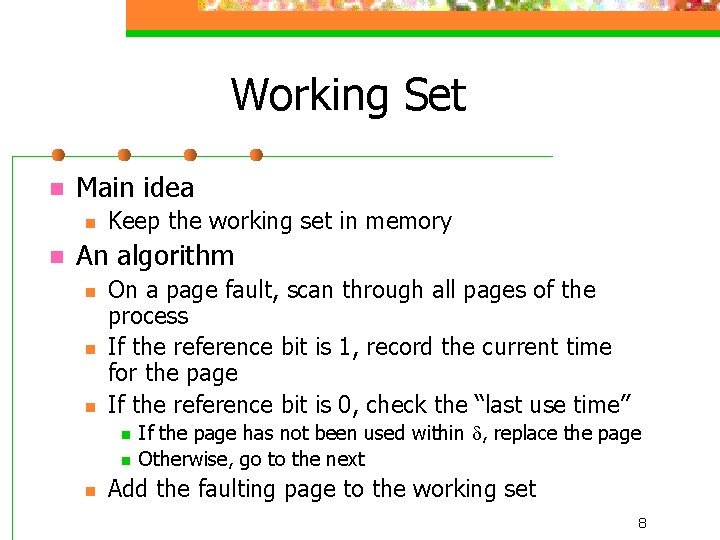

Working Set

- Main idea
  - Keep the working set in memory
- An algorithm
  - On a page fault, scan through all pages of the process
  - If the reference bit is 1, record the current time for the page
  - If the reference bit is 0, check the “last use time”
    - If the page has not been used within d, replace the page
    - Otherwise, go to the next
  - Add the faulting page to the working set
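The scan described on this slide might look like the following sketch. Two details are assumptions rather than things the slide states: the reference bit is cleared once it has been sampled, and every page older than `d` is dropped in the same scan rather than only the first one found. The `Page` record and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    referenced: bool = False  # hardware reference bit
    last_use: int = 0         # time recorded when the bit was last seen set


def working_set_fault(pages, faulting, now, d):
    """Handle one page fault for a process.

    `pages` maps page number -> Page for the resident pages of the process.
    """
    for number in list(pages):
        page = pages[number]
        if page.referenced:
            page.last_use = now
            page.referenced = False    # assumption: clear after sampling
        elif now - page.last_use > d:
            del pages[number]          # not used within d: out of the set
        # otherwise the page was used within d, so go to the next one
    pages[faulting] = Page(referenced=True, last_use=now)
    return pages
```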

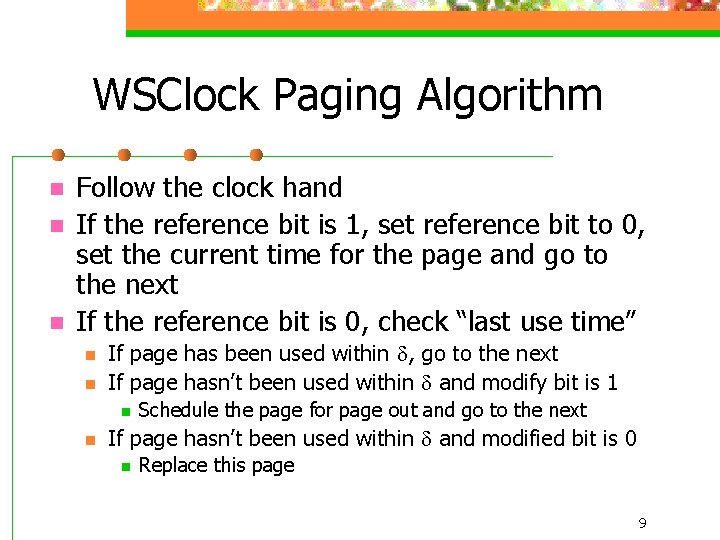

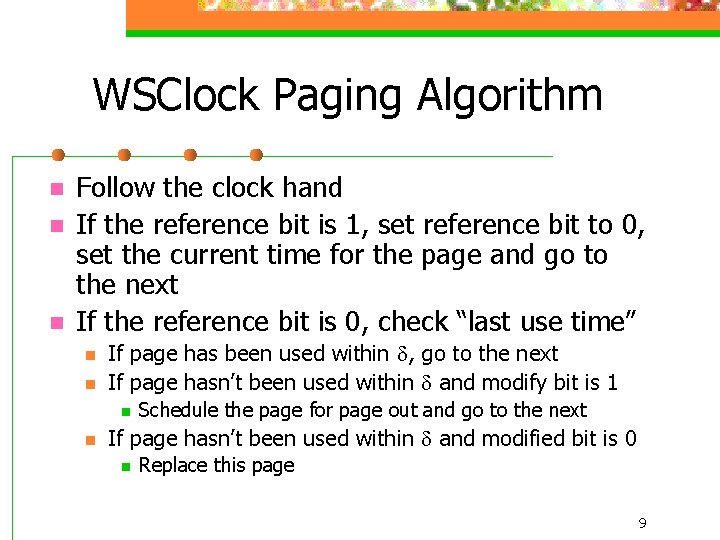

WSClock Paging Algorithm

- Follow the clock hand
- If the reference bit is 1, set the reference bit to 0, set the current time for the page, and go to the next
- If the reference bit is 0, check the “last use time”
  - If the page has been used within d, go to the next
  - If the page hasn’t been used within d and the modify bit is 1
    - Schedule the page for page out and go to the next
  - If the page hasn’t been used within d and the modify bit is 0
    - Replace this page
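One sweep of the clock hand can be sketched as below. The `Frame` record, the `schedule_pageout` callback, and the choice to give up after one full revolution are assumptions made for this illustration; the slide does not say what happens when no clean, old page is found.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    page: int
    referenced: bool = False
    modified: bool = False
    last_use: int = 0


def wsclock_pick(frames, hand, now, d, schedule_pageout):
    """Advance the hand until a victim is found.

    Returns (victim_index, new_hand), or (None, hand) after one full
    revolution without a clean page older than d.
    """
    n = len(frames)
    for _ in range(n):
        frame = frames[hand]
        if frame.referenced:
            frame.referenced = False       # second chance
            frame.last_use = now
        elif now - frame.last_use > d:
            if frame.modified:
                schedule_pageout(frame)    # write it back, keep going
            else:
                return hand, (hand + 1) % n  # clean and old: replace it
        hand = (hand + 1) % n
    return None, hand
```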

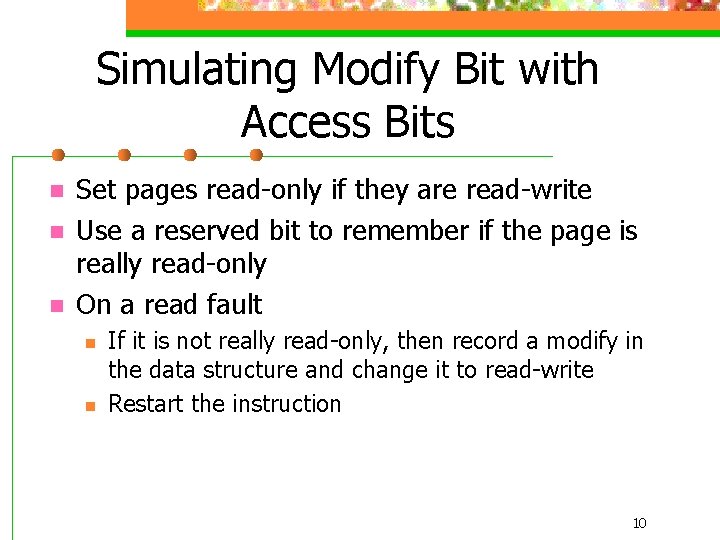

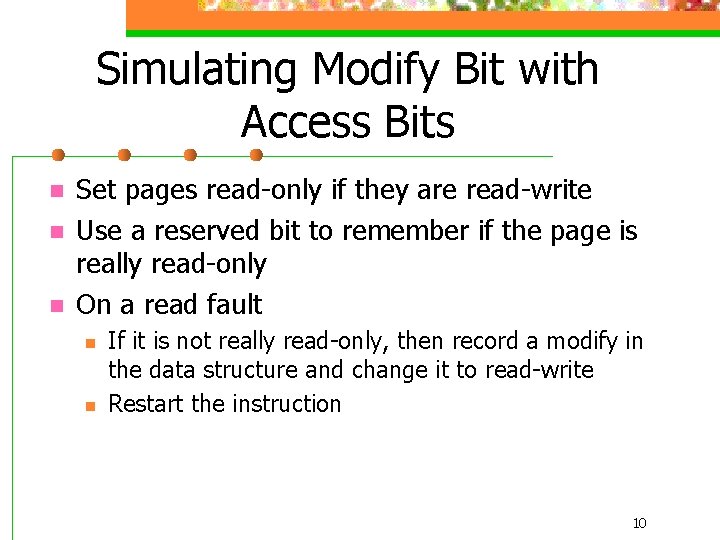

Simulating Modify Bit with Access Bits

- Set pages read-only if they are read-write
- Use a reserved bit to remember if the page is really read-only
- On a write fault (a write to a page marked read-only)
  - If it is not really read-only, then record a modify in the data structure and change it to read-write
  - Restart the instruction
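A software model of this trick is sketched here. The `SoftPTE` record and function names are hypothetical; the fault handler models the trap taken when a write hits a write-protected page.

```python
from dataclasses import dataclass


@dataclass
class SoftPTE:
    hw_writable: bool        # protection the hardware actually enforces
    really_read_only: bool   # reserved bit: the page's true protection
    modified: bool = False   # modify bit maintained in software


def arm(pte):
    """Write-protect the page so that the first write to it traps."""
    pte.hw_writable = False


def on_write_fault(pte):
    """Return True to restart the instruction, False for a real violation."""
    if pte.really_read_only:
        return False
    pte.modified = True      # record the modify
    pte.hw_writable = True   # later writes proceed without faulting
    return True
```

Only the first write after arming pays for a fault; subsequent writes run at full speed until the page is armed again.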

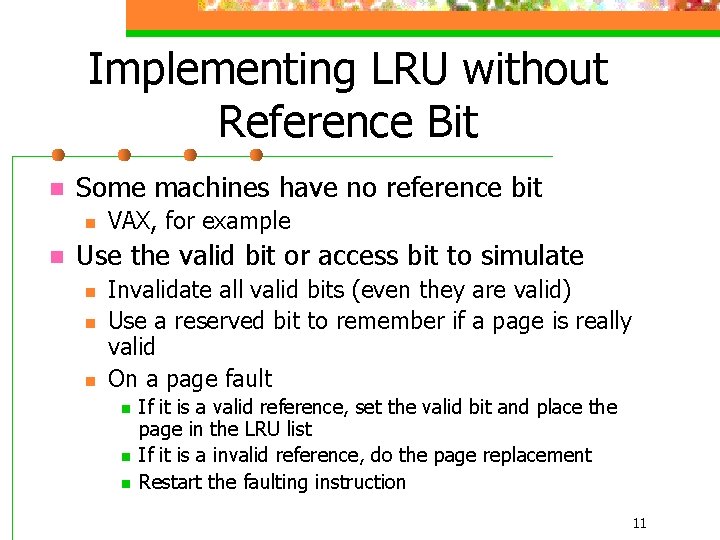

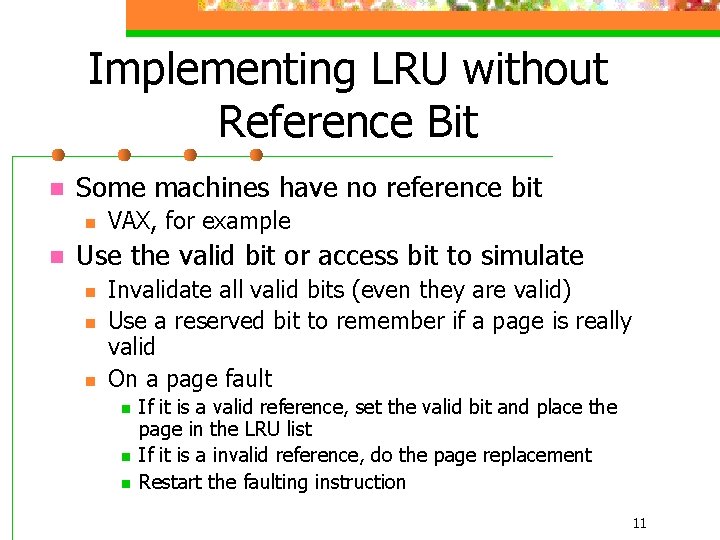

Implementing LRU without Reference Bit

- Some machines have no reference bit
  - VAX, for example
- Use the valid bit or access bit to simulate
  - Invalidate all valid bits (even if the pages are valid)
  - Use a reserved bit to remember if a page is really valid
  - On a page fault
    - If it is a valid reference, set the valid bit and place the page in the LRU list
    - If it is an invalid reference, do the page replacement
    - Restart the faulting instruction
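The same idea in miniature: the sketch below tracks the hardware valid bits and the "really valid" reserved bits as two sets. The class and its method names are hypothetical, and actual page replacement is left out.

```python
from collections import OrderedDict


class SoftRefPager:
    """Approximate LRU ordering using faults on artificially invalid pages."""

    def __init__(self):
        self.really_valid = set()  # reserved bit: page is resident
        self.hw_valid = set()      # pages the hardware treats as valid
        self.lru = OrderedDict()   # least recently faulted-in first

    def load(self, page):
        self.really_valid.add(page)

    def invalidate_all(self):
        """Clear every hardware valid bit, even for resident pages."""
        self.hw_valid.clear()

    def access(self, page):
        if page in self.hw_valid:
            return "hit"                    # no fault, nothing recorded
        if page in self.really_valid:
            self.hw_valid.add(page)         # valid reference: set the bit
            self.lru.pop(page, None)
            self.lru[page] = True           # move to the recent end
            return "soft fault"
        return "page replacement needed"    # genuinely not in memory
```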

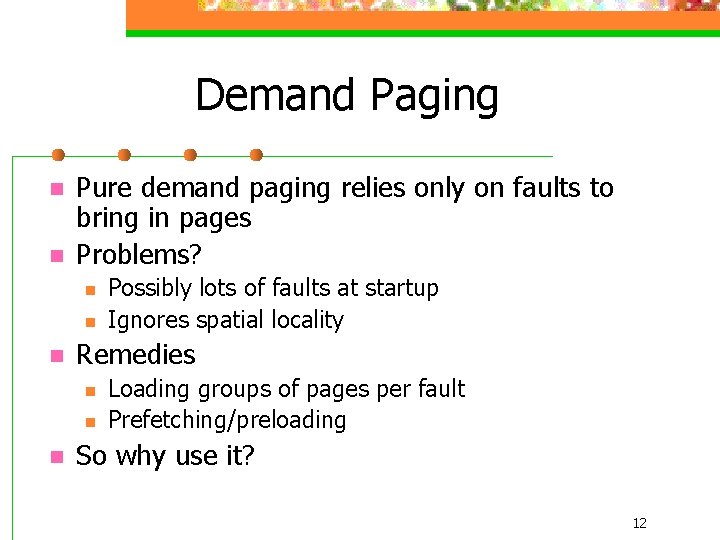

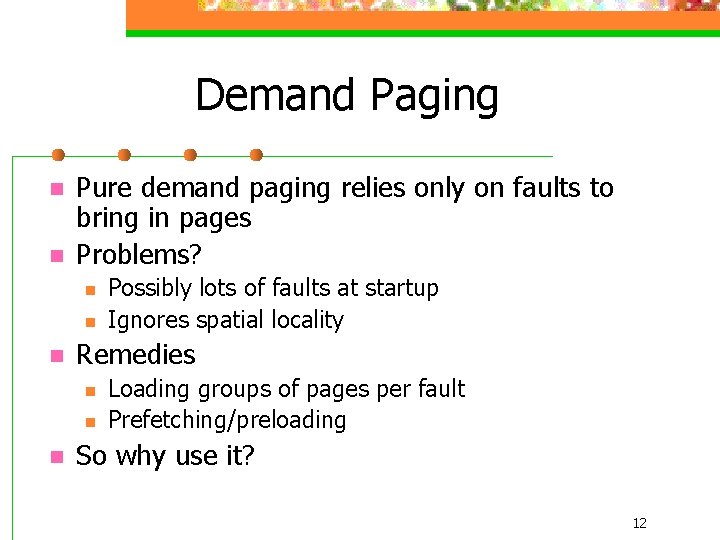

Demand Paging

- Pure demand paging relies only on faults to bring in pages
- Problems?
  - Possibly lots of faults at startup
  - Ignores spatial locality
- Remedies
  - Loading groups of pages per fault
  - Prefetching/preloading
- So why use it?

Speed and Sluggishness

- Slow is > 0.1 seconds (100 ms)
- Speedy is << 0.1 seconds
- Monitors tend to refresh at 60+ Hz, so there are < 16.7 ms between screen paints
- Disks have seek + rotational delay
  - Seek is somewhere between 7 and 16 ms
  - At 7200 rpm, one rotation = 1/120 sec, or about 8 ms; a half-rotation is about 4 ms
- Conclusion? One disk access is OK, six are bad
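The conclusion follows from simple arithmetic, worked through below using only the numbers on the slide. The helper names are made up, and the model assumes each access costs one seek plus an average half-rotation, ignoring transfer time.

```python
SEEK_MS = (7.0, 16.0)               # best and worst seek time from the slide
RPM = 7200
rotation_ms = 60_000 / RPM          # about 8.3 ms per rotation
half_rotation_ms = rotation_ms / 2  # about 4.2 ms average rotational delay


def access_ms(seek_ms):
    """Cost of one disk access: seek plus average rotational delay."""
    return seek_ms + half_rotation_ms


def total_ms(accesses):
    """(best, worst) total latency for a number of sequential accesses."""
    return tuple(accesses * access_ms(seek) for seek in SEEK_MS)
```

Under these assumptions one access costs roughly 11 to 20 ms, well under the 100 ms threshold, while six accesses cost roughly 67 to 121 ms, which reaches the "slow" range.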

Memory Pressure

- “Swap” space
  - Region of disk used to hold “overflow”
  - Contains only data pages (stack/heap/globals). Why?
  - Swap may exist as a “regular file,” but a dedicated region of disk is more common

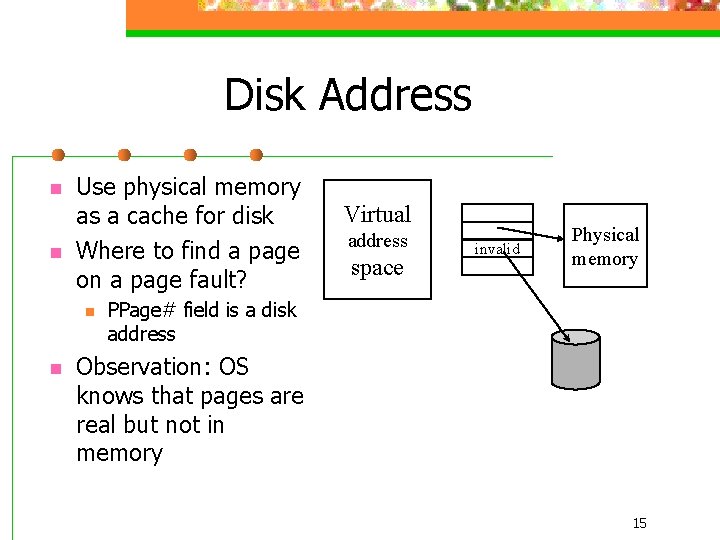

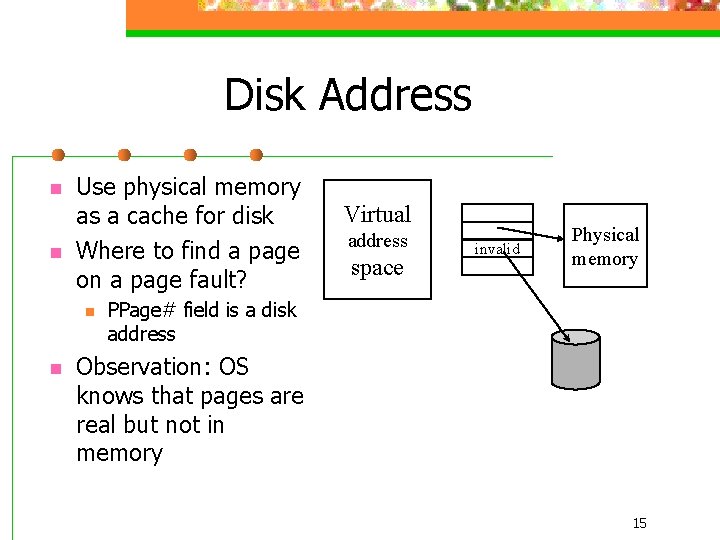

Disk Address

- Use physical memory as a cache for disk
- Where to find a page on a page fault?
  - PPage# field is a disk address
- Observation: OS knows that pages are real but not in memory
- [Figure: virtual address space with an invalid entry, and physical memory]

Imagine a Global LRU

- Global: across all processes
- Idea: when a page is needed, pick the oldest page in the system
- Problems? Process mixes?
  - Interactive processes
  - Active large-memory sweep processes
- Mitigating damage?
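A small simulation hints at the problem with process mixes. Everything here is a made-up workload: an "editor" process that keeps touching the same four pages and a "sweep" process that touches 100 pages once each, sharing 16 frames under one global LRU list.

```python
from collections import OrderedDict


def global_lru(refs, frames):
    """Replay (process, page) references; return page faults per process."""
    memory = OrderedDict()  # oldest entry first, across all processes
    faults = {}
    for ref in refs:
        proc = ref[0]
        if ref in memory:
            memory.move_to_end(ref)          # now the most recently used
        else:
            faults[proc] = faults.get(proc, 0) + 1
            if len(memory) >= frames:
                memory.popitem(last=False)   # evict the oldest page anywhere
            memory[ref] = True
    return faults


# Hypothetical workloads.
interactive = [("editor", page) for page in range(4)]
sweep = [("sweep", page) for page in range(100)]

alone = global_lru(interactive * 3, frames=16)
mixed = global_lru(interactive + sweep + interactive, frames=16)
```

Running alone, the editor faults only on its first pass. After the sweep, every editor page has been pushed out, so the editor faults on all of them again even though its own behavior did not change.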

Source of Disk Access

- VM System
  - Main memory caches; full image on disk
- Filesystem
  - Even here, caching very useful
- New competitive pressure/decisions
  - How do we allocate memory to these two?
  - How do we know we’re right?

Partitioning Memory

- Originally, specified by administrator
  - 20% used as filesystem cache by default
  - On fileservers, admin would set to 80%
- Each subsystem owned pages, replaced them
- Observation: they’re all basically pages
  - Why not let them compete?
  - Result: unified memory systems (file/VM)

File Access Efficiency

- read(fd, buf, size)
  - Buffer in process’s memory
  - Data exists in two places: filesystem cache and process’s memory
  - Known as “double buffering”
- Various scenarios
  - Many processes read same file
  - Process wants only parts of a file, but doesn’t know which parts in advance

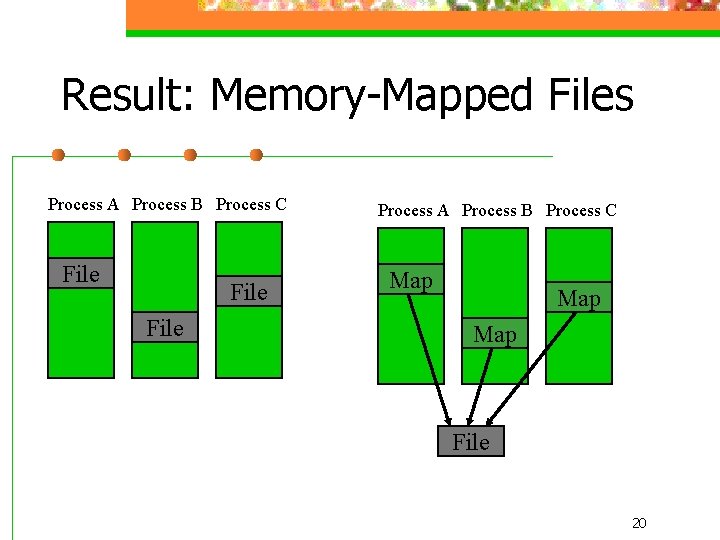

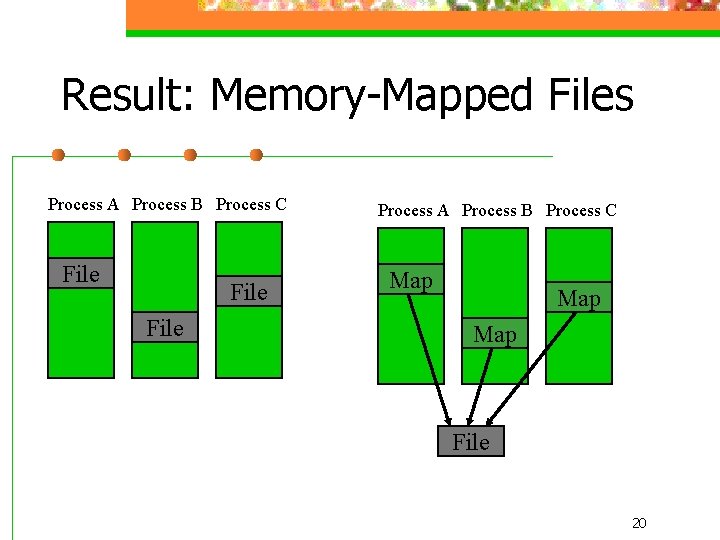

Result: Memory-Mapped Files

- [Figure: Processes A, B, and C each accessing File directly, versus Processes A, B, and C reaching File through shared maps]

Lazy Versus Eager

- Eager: do things right away
  - read(fd, buf, size): returns # bytes read
  - Bytes must be read before read completes
  - What happens if size is big?
- Lazy: do them as they’re needed
  - mmap(…): returns pointer to mapping
  - Mapping must exist before mmap completes
  - When/how are bytes read?
  - What happens if size is big?
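The contrast can be tried from Python, whose `os.read` and `mmap` modules wrap the underlying system calls. This is only a sketch on a small scratch file created for the purpose; when the operating system actually fetches the mapped bytes is up to its VM system and is not observable from this code.

```python
import mmap
import os
import tempfile

PAGE = mmap.PAGESIZE

# Scratch file of three pages, created just for this illustration.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"A" * PAGE + b"B" * PAGE + b"C" * PAGE)
    path = f.name

fd = os.open(path, os.O_RDONLY)
try:
    # Eager: all requested bytes are copied into our buffer before returning.
    eager = os.read(fd, 3 * PAGE)

    # Lazy: only the mapping is set up here; pages are typically brought
    # in when they are first touched.
    mapping = mmap.mmap(fd, 0, access=mmap.ACCESS_READ)
    lazy_byte = mapping[2 * PAGE]  # touches only the third page
    mapping.close()
finally:
    os.close(fd)
    os.unlink(path)
```

With a very large `size`, the eager call has to move every byte up front, whereas the mapping costs address space immediately and I/O only for the pages that are used.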

Semantics: How Things Behave

- What happens when
  - Two processes obtain data (read or mmap)
  - One process modifies data
  - Two processes obtain data (read or mmap)
  - A third process modifies data
  - The two processes access the data
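One way to explore these questions is to compare a buffer filled by a read with a shared mapping after the file changes. The sketch below simplifies by using two mappings inside a single process instead of separate processes, and it assumes a system where shared mappings of the same file are coherent (the usual case for local files).

```python
import mmap
import os
import tempfile

# Scratch file created just for this illustration.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"old data")
    path = f.name

fd = os.open(path, os.O_RDWR)
try:
    copy = os.read(fd, 8)                                # private snapshot
    reader = mmap.mmap(fd, 0, access=mmap.ACCESS_READ)   # shared view
    writer = mmap.mmap(fd, 0, access=mmap.ACCESS_WRITE)  # the "modifier"

    writer[0:3] = b"NEW"             # modify the file through one mapping

    seen_by_mapping = reader[0:8]    # the other mapping sees the change
    seen_by_copy = copy              # the read buffer still holds old bytes

    reader.close()
    writer.close()
finally:
    os.close(fd)
    os.unlink(path)
```

The read buffer behaves like a snapshot taken at read time, while the mapping behaves like a window onto the current file contents.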

Being Too Smart…

- Assume a unified VM/File scheme
- You’ve implemented perfect Global LRU
  - What happens on a filesystem “dump”?

Amdahl’s Law

- Gene Amdahl (IBM, then Amdahl)
- Noticed the bottlenecks to speedup
- Assume speedup affects one component
- New time = (1 - affected) + affected/speedup
- In other words, diminishing returns
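A short calculation makes the diminishing returns concrete. Here `affected` is the fraction of the original run time that the improvement applies to, the original time is normalized to 1, and the unaffected part is therefore `1 - affected`; the function names are illustrative.

```python
def amdahl_new_time(affected, speedup):
    """Normalized run time after speeding up one component."""
    return (1.0 - affected) + affected / speedup


def overall_speedup(affected, speedup):
    """Whole-program speedup implied by the new run time."""
    return 1.0 / amdahl_new_time(affected, speedup)
```

If half the time is affected, doubling that component's speed gives a new time of 0.75, and even an enormous speedup of that component can never make the whole program twice as fast.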

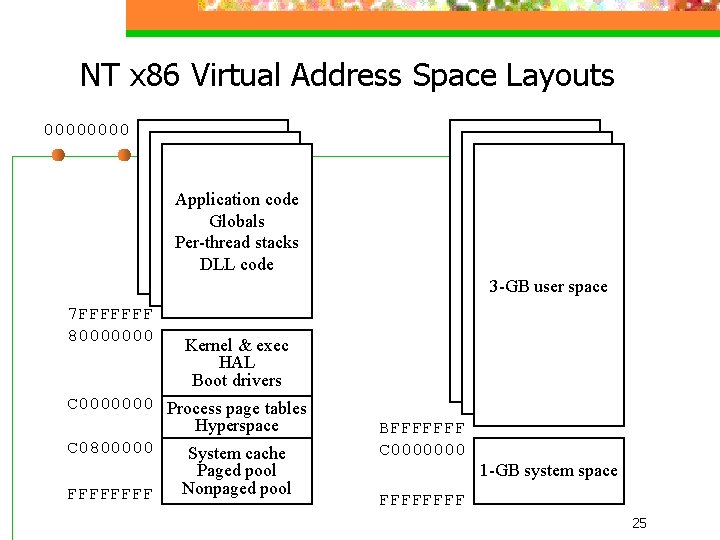

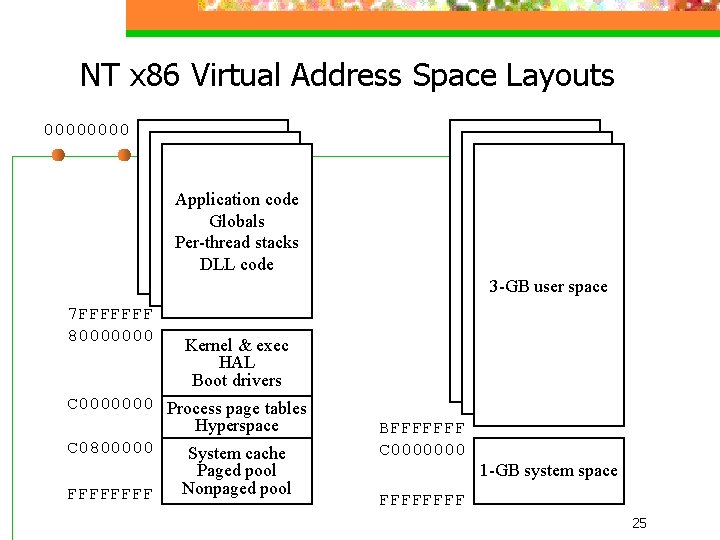

NT x86 Virtual Address Space Layouts

- First layout
  - 00000000 to 7FFFFFFF: application code, globals, per-thread stacks, DLL code
  - 80000000: kernel & exec, HAL, boot drivers
  - C0000000: process page tables, hyperspace
  - C0800000 to FFFFFFFF: system cache, paged pool, nonpaged pool
- Second layout
  - 00000000 to BFFFFFFF: 3-GB user space
  - C0000000 to FFFFFFFF: 1-GB system space

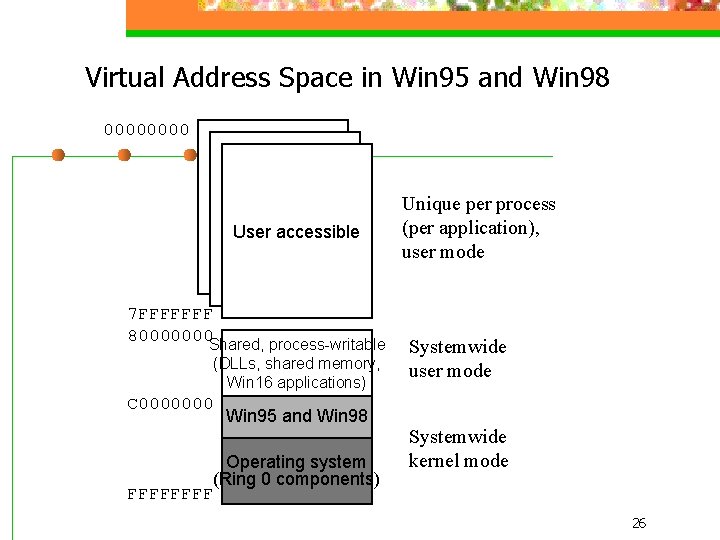

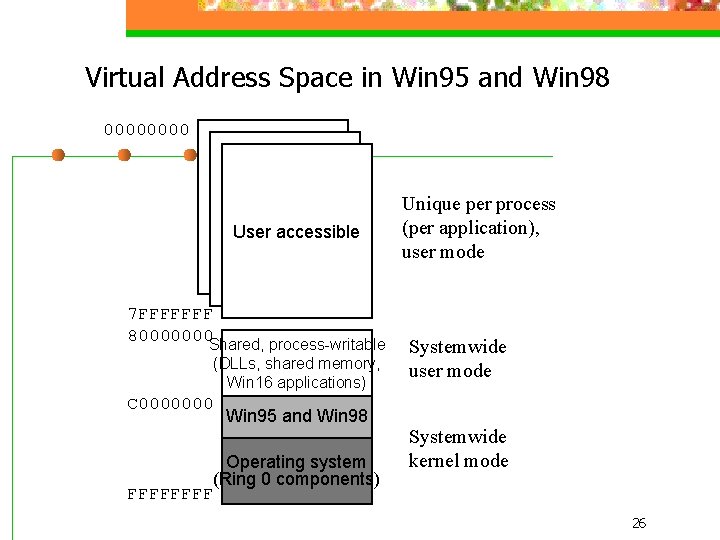

Virtual Address Space in Win 95 and Win 98

- 00000000 to 7FFFFFFF: user accessible; unique per process (per application), user mode
- 80000000 to BFFFFFFF: shared, process-writable (DLLs, shared memory, Win16 applications)
- C0000000 to FFFFFFFF: operating system (Ring 0 components); systemwide, kernel mode

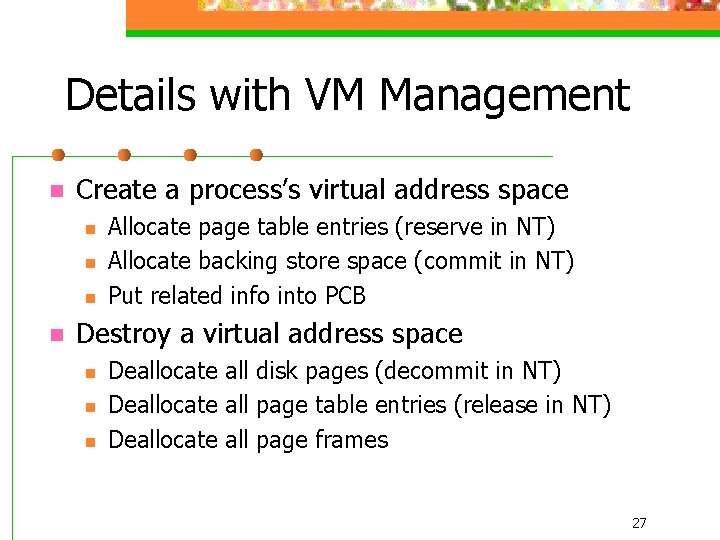

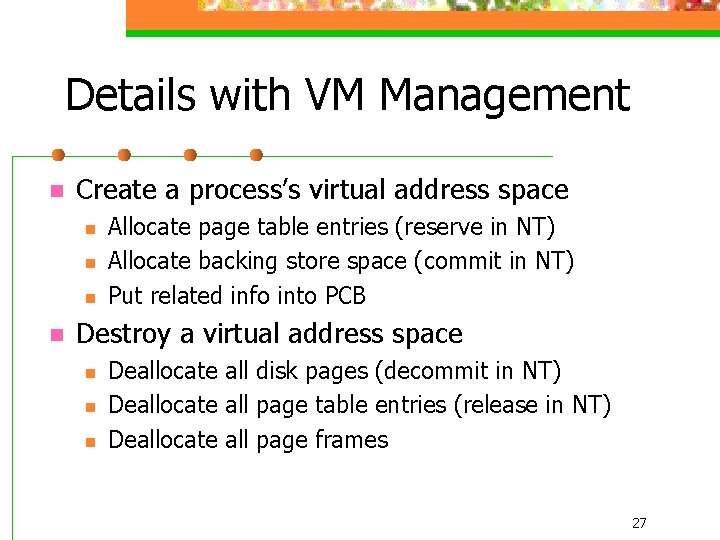

Details with VM Management

- Create a process’s virtual address space
  - Allocate page table entries (reserve in NT)
  - Allocate backing store space (commit in NT)
  - Put related info into PCB
- Destroy a virtual address space
  - Deallocate all disk pages (decommit in NT)
  - Deallocate all page table entries (release in NT)
  - Deallocate all page frames

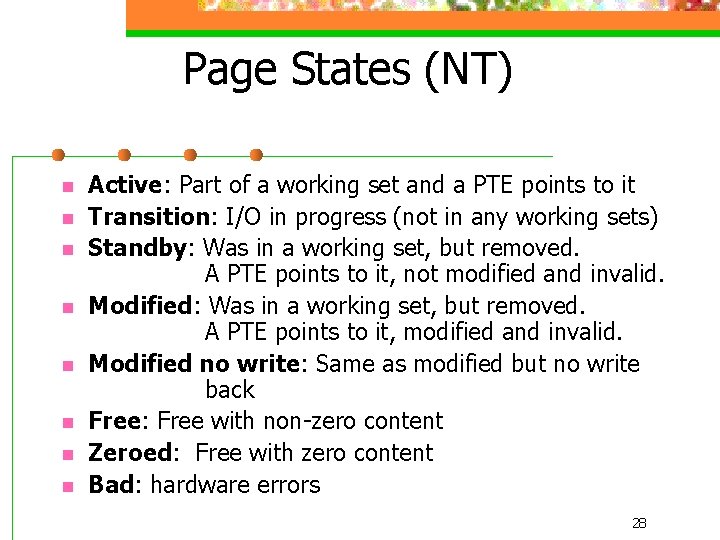

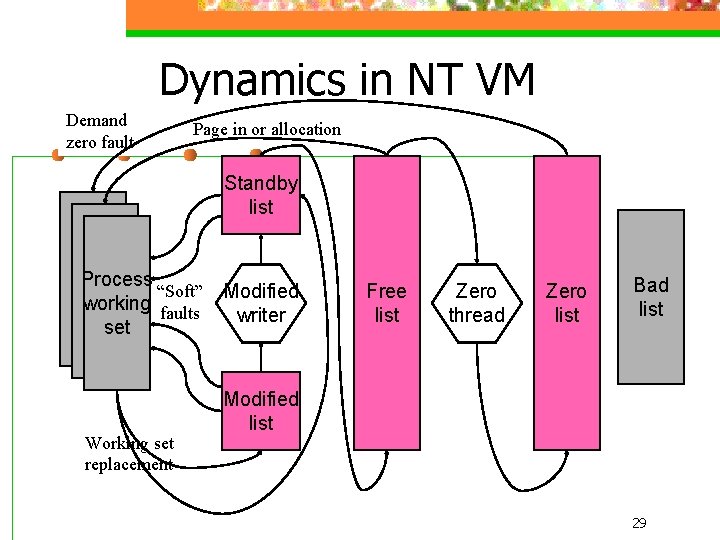

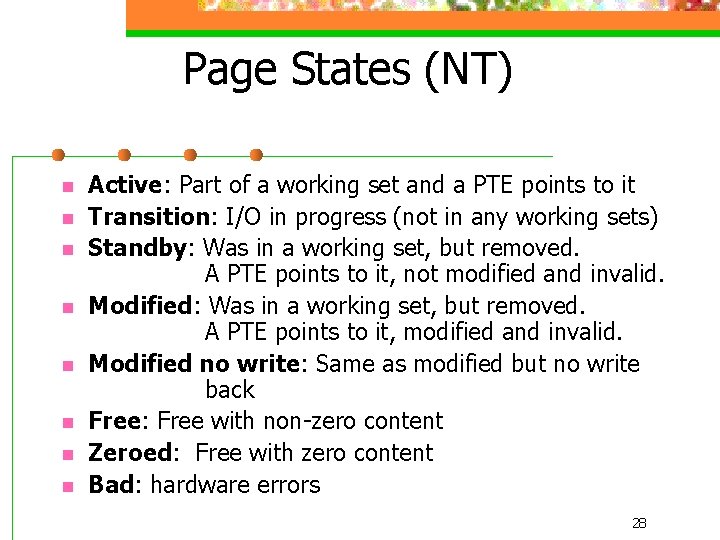

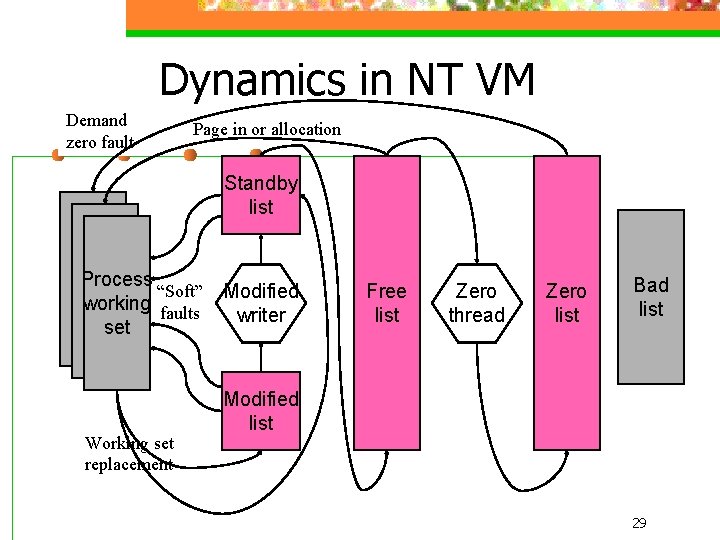

Page States (NT)

- Active: Part of a working set and a PTE points to it
- Transition: I/O in progress (not in any working sets)
- Standby: Was in a working set, but removed. A PTE points to it, not modified and invalid.
- Modified: Was in a working set, but removed. A PTE points to it, modified and invalid.
- Modified no write: Same as modified but no write back
- Free: Free with non-zero content
- Zeroed: Free with zero content
- Bad: hardware errors

Dynamics in NT VM

- [Figure: page movement among the process working set, standby list, modified list, free list, zero list, and bad list. Transition labels: demand zero fault, page in or allocation, “soft” faults, working set replacement, modified writer, zero thread.]

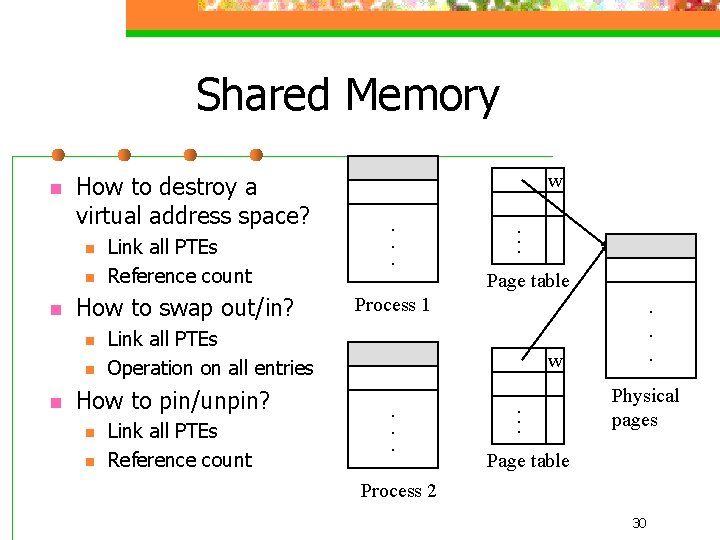

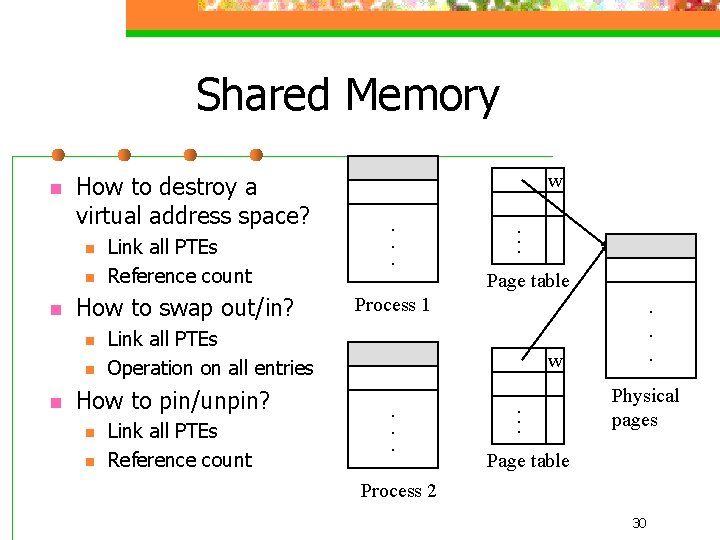

Shared Memory

- How to destroy a virtual address space?
  - Link all PTEs
  - Reference count
- How to swap out/in?
  - Link all PTEs
  - Operation on all entries
- How to pin/unpin?
  - Link all PTEs
  - Reference count
- [Figure: page tables of Process 1 and Process 2, each with a writable (w) entry pointing to the same physical page]

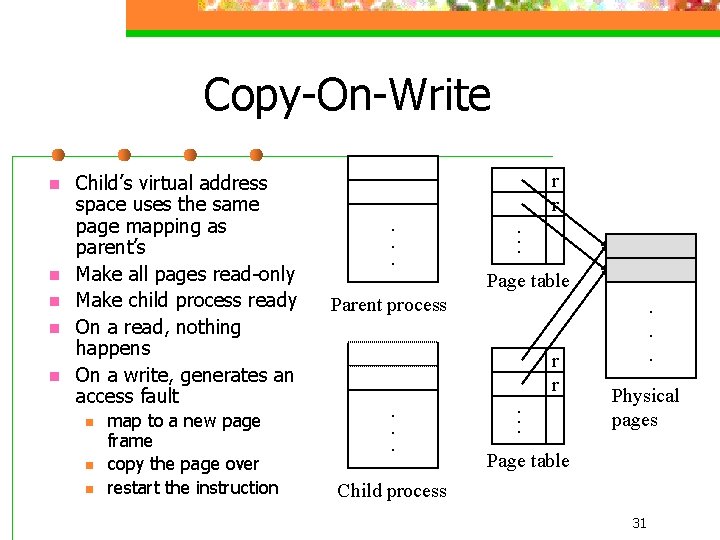

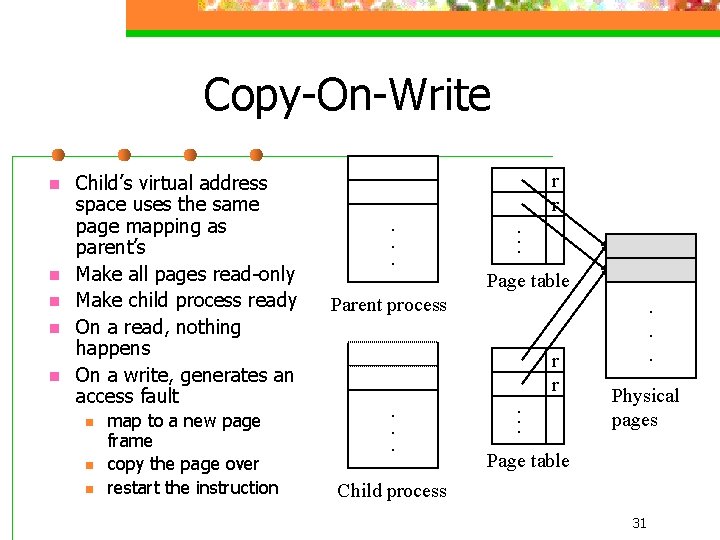

Copy-On-Write

- Child’s virtual address space uses the same page mapping as parent’s
- Make all pages read-only
- Make child process ready
- On a read, nothing happens
- On a write, generates an access fault
  - Map to a new page frame
  - Copy the page over
  - Restart the instruction
- [Figure: parent and child page tables with read-only (r) entries pointing to the same physical pages]
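The fault path can be modeled in a few lines. This is a toy sketch, not how any particular kernel does it: the `Frame` and `AddressSpace` classes are hypothetical, frames carry a reference count, and one shortcut not on the slide is included (the last remaining sharer of a frame is simply made writable instead of copying).

```python
class Frame:
    """A physical page frame with a sharing count."""

    def __init__(self, data):
        self.data = bytearray(data)
        self.refs = 1


class AddressSpace:
    def __init__(self):
        self.table = {}  # virtual page -> [frame, writable]

    def map_new(self, vpage, data):
        self.table[vpage] = [Frame(data), True]

    def fork(self):
        """Child shares every frame; both sides become read-only."""
        child = AddressSpace()
        for vpage, entry in self.table.items():
            entry[1] = False
            entry[0].refs += 1
            child.table[vpage] = [entry[0], False]
        return child

    def read(self, vpage, offset):
        return self.table[vpage][0].data[offset]  # reads never fault

    def write(self, vpage, offset, value):
        entry = self.table[vpage]
        if not entry[1]:
            self._access_fault(entry)
        entry[0].data[offset] = value  # the restarted instruction

    def _access_fault(self, entry):
        frame = entry[0]
        if frame.refs > 1:
            frame.refs -= 1
            entry[0] = Frame(frame.data)  # new frame, page copied over
        entry[1] = True                   # private now, so writable
```

After `fork`, a write by the child leaves the parent's data untouched, and the parent's frame count drops back to one.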

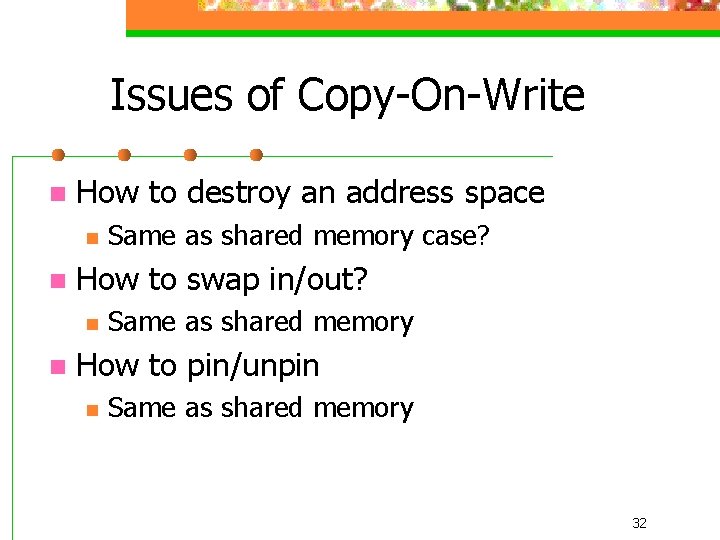

Issues of Copy-On-Write

- How to destroy an address space?
  - Same as shared memory case?
- How to swap in/out?
  - Same as shared memory
- How to pin/unpin?
  - Same as shared memory