COMP 5331 Other Data Stream Models Prepared by

COMP 5331 Other Data Stream Models Prepared by Raymond Wong Presented by Raymond Wong raywong@cse COMP 5331 1

Entire Data Streams n n n Association Clustering Classification COMP 5331 2

Clustering over data streams n n BIRCH Advantages n n Incremental Scalable COMP 5331 3

Entire Data Streams n n n Association Clustering Classification COMP 5331 4

Data Mining over Static Data 1. 2. 3. Association Clustering Classification Static Data COMP 5331 Output (Data Mining Results) 5

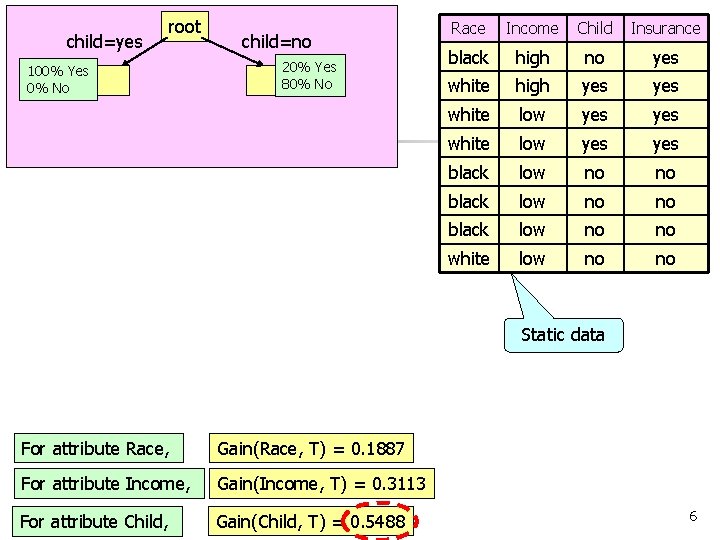

child=yes root 100% Yes 0% No child=no 20% Yes 80% No Race Income Child Insurance black high no yes white high yes yes white low yes black low no no white low no no Static data For attribute Race, Gain(Race, T) = 0. 1887 For attribute Income, Gain(Income, T) = 0. 3113 COMP 5331 For attribute Child, Gain(Child, T) = 0. 5488 6

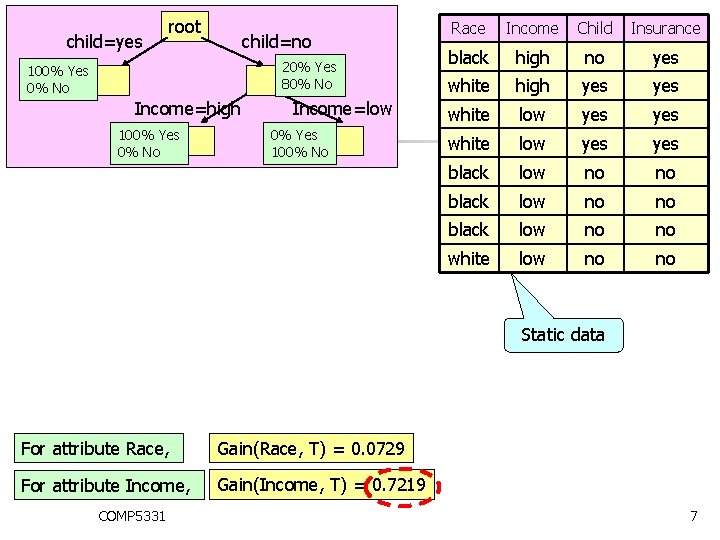

child=yes root child=no 20% Yes 80% No 100% Yes 0% No Income=high 100% Yes 0% No Income=low 0% Yes 100% No Race Income Child Insurance black high no yes white high yes yes white low yes black low no no white low no no Static data For attribute Race, Gain(Race, T) = 0. 0729 For attribute Income, Gain(Income, T) = 0. 7219 COMP 5331 7

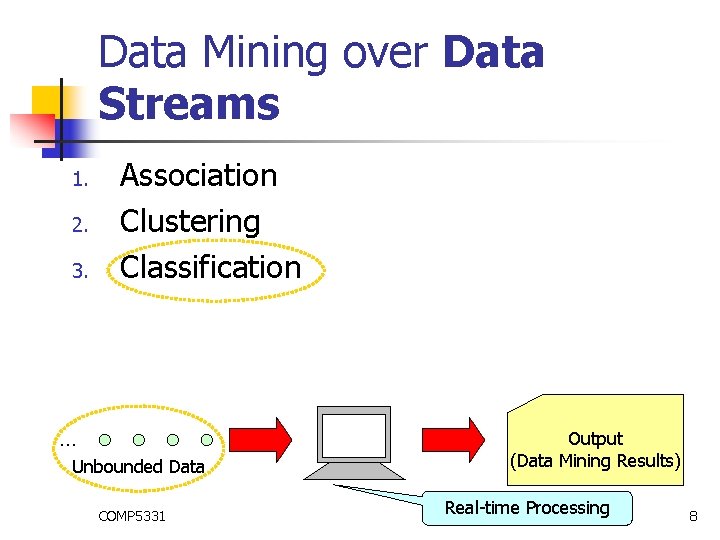

Data Mining over Data Streams 1. 2. 3. Association Clustering Classification … Unbounded Data COMP 5331 Output (Data Mining Results) Real-time Processing 8

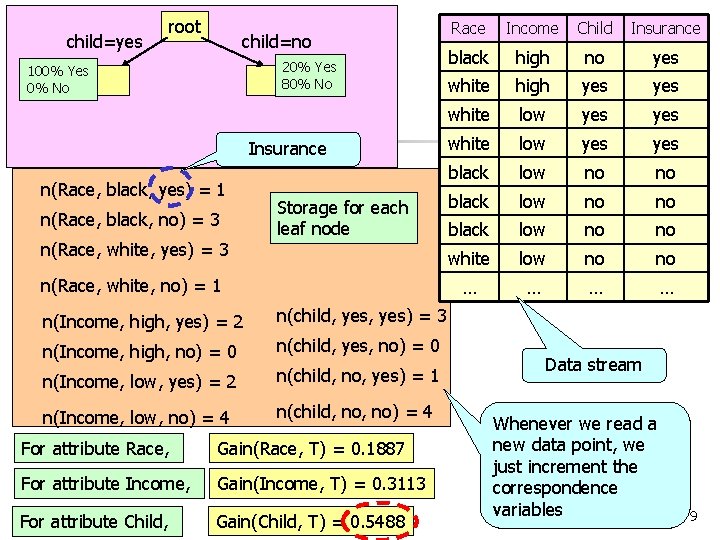

child=yes root child=no 20% Yes 80% No 100% Yes 0% No Insurance n(Race, black, yes) = 1 n(Race, black, no) = 3 n(Race, white, yes) = 3 Storage for each leaf node n(Race, white, no) = 1 n(Income, high, yes) = 2 n(child, yes) = 3 n(Income, high, no) = 0 n(child, yes, no) = 0 n(Income, low, yes) = 2 n(child, no, yes) = 1 n(Income, low, no) = 4 n(child, no) = 4 For attribute Race, Gain(Race, T) = 0. 1887 For attribute Income, Gain(Income, T) = 0. 3113 COMP 5331 For attribute Child, Gain(Child, T) = 0. 5488 Race Income Child Insurance black high no yes white high yes yes white low yes black low no no white low no no … … Data stream Whenever we read a new data point, we just increment the correspondence variables 9

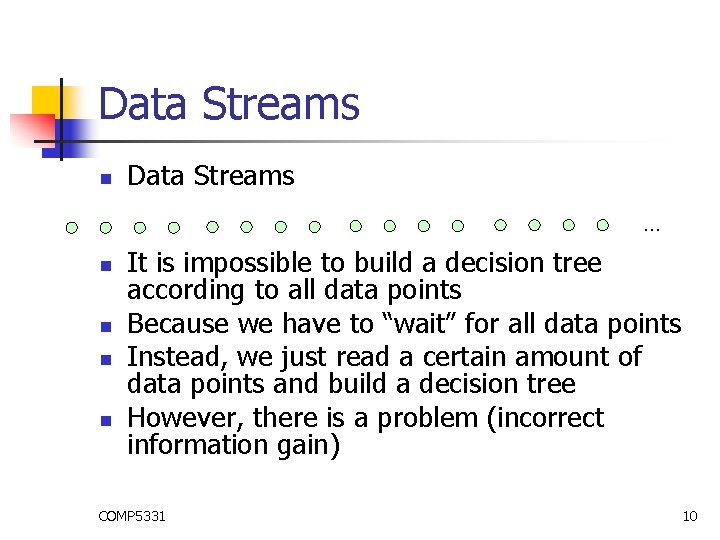

Data Streams n Data Streams … n n It is impossible to build a decision tree according to all data points Because we have to “wait” for all data points Instead, we just read a certain amount of data points and build a decision tree However, there is a problem (incorrect information gain) COMP 5331 10

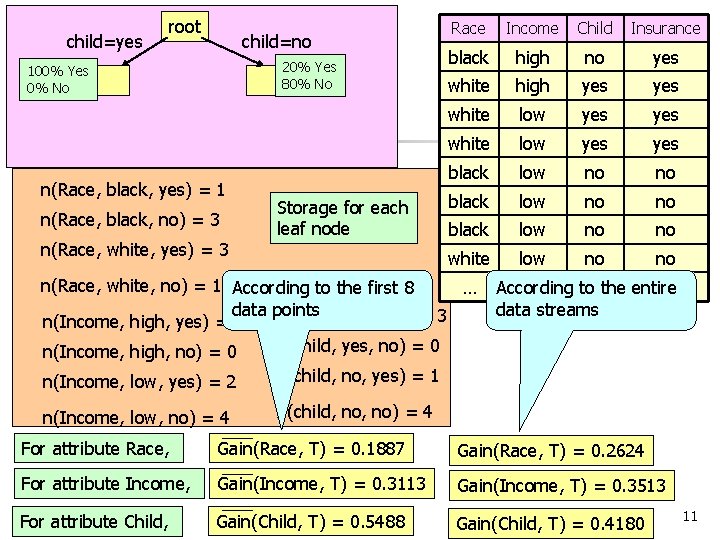

child=yes root child=no 20% Yes 80% No 100% Yes 0% No n(Race, black, yes) = 1 n(Race, black, no) = 3 n(Race, white, yes) = 3 Storage for each leaf node Race Income Child Insurance black high no yes white high yes yes white low yes black low no no white low no no n(Race, white, no) = 1 According to the first 8 … data points n(child, yes) = 3 n(Income, high, yes) = 2 n(child, yes, no) = 0 n(Income, high, no) = 0 n(Income, low, yes) = 2 n(child, no, yes) = 1 n(Income, low, no) = 4 n(child, no) = 4 … … According to…the entire data streams For attribute Race, Gain(Race, T) = 0. 1887 Gain(Race, T) = 0. 2624 For attribute Income, Gain(Income, T) = 0. 3113 Gain(Income, T) = 0. 3513 COMP 5331 For attribute Child, Gain(Child, T) = 0. 5488 Gain(Child, T) = 0. 4180 11

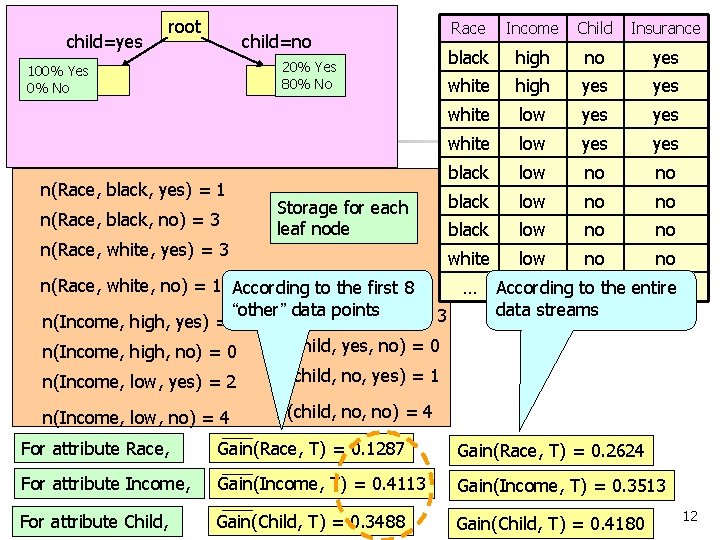

child=yes root child=no 20% Yes 80% No 100% Yes 0% No n(Race, black, yes) = 1 n(Race, black, no) = 3 n(Race, white, yes) = 3 Storage for each leaf node Race Income Child Insurance black high no yes white high yes yes white low yes black low no no white low no no n(Race, white, no) = 1 According to the first 8 … “other”n(child, data points yes, yes) = 3 n(Income, high, yes) = 2 n(child, yes, no) = 0 n(Income, high, no) = 0 n(Income, low, yes) = 2 n(child, no, yes) = 1 n(Income, low, no) = 4 n(child, no) = 4 … … According to…the entire data streams For attribute Race, Gain(Race, T) = 0. 1287 Gain(Race, T) = 0. 2624 For attribute Income, Gain(Income, T) = 0. 4113 Gain(Income, T) = 0. 3513 COMP 5331 For attribute Child, Gain(Child, T) = 0. 3488 Gain(Child, T) = 0. 4180 12

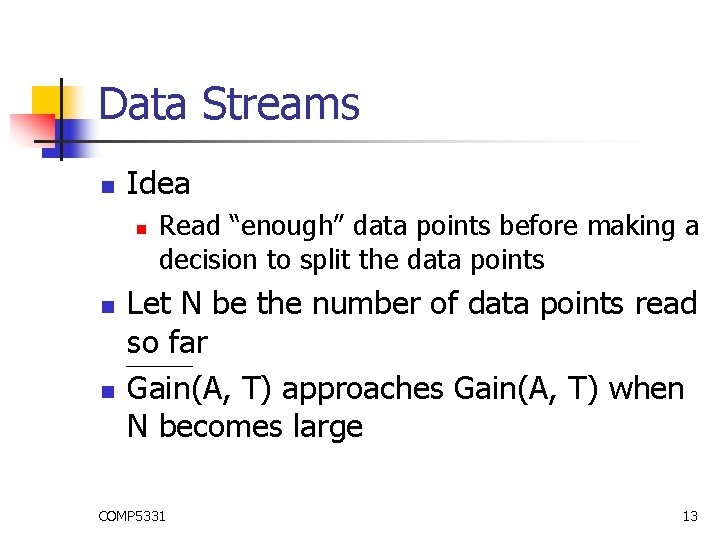

Data Streams n Idea n n n Read “enough” data points before making a decision to split the data points Let N be the number of data points read so far Gain(A, T) approaches Gain(A, T) when N becomes large COMP 5331 13

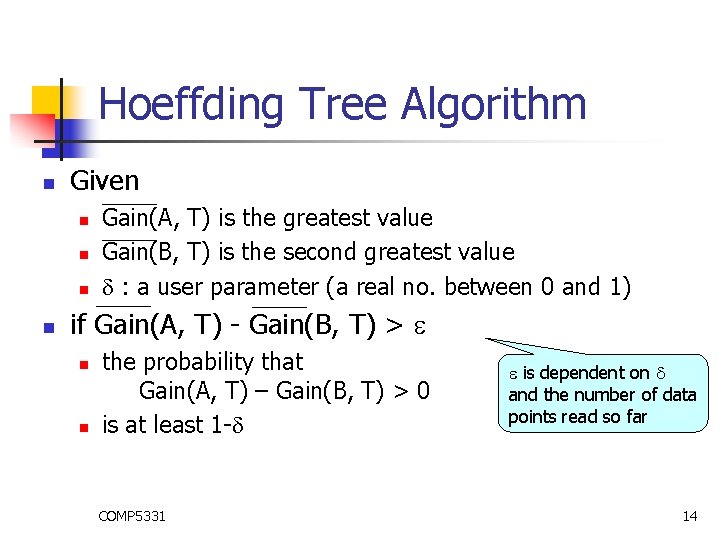

Hoeffding Tree Algorithm n Given n n Gain(A, T) is the greatest value Gain(B, T) is the second greatest value : a user parameter (a real no. between 0 and 1) if Gain(A, T) - Gain(B, T) > n n the probability that Gain(A, T) – Gain(B, T) > 0 is at least 1 - COMP 5331 is dependent on and the number of data points read so far 14

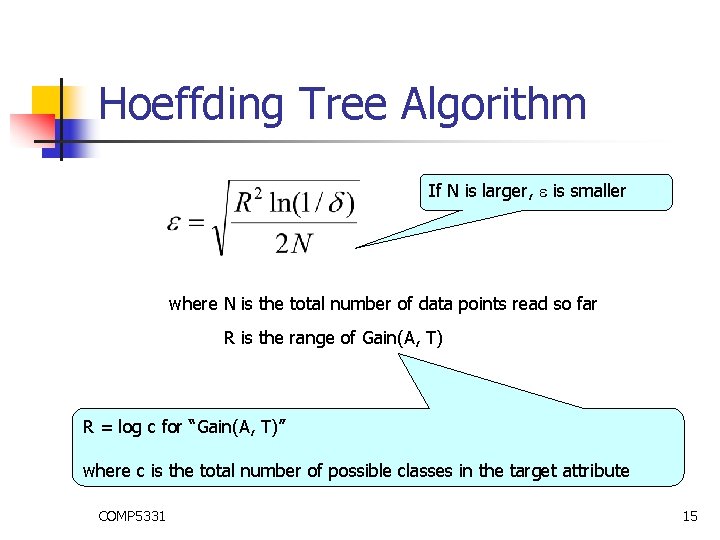

Hoeffding Tree Algorithm If N is larger, is smaller where N is the total number of data points read so far R is the range of Gain(A, T) R = log c for “Gain(A, T)” where c is the total number of possible classes in the target attribute COMP 5331 15

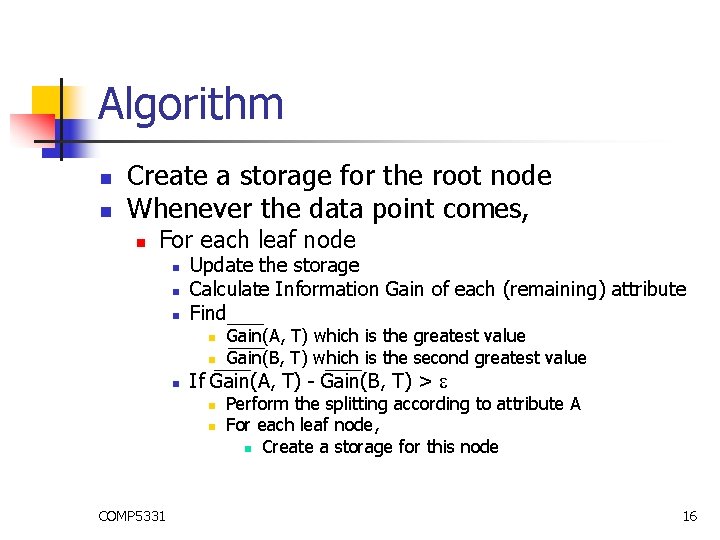

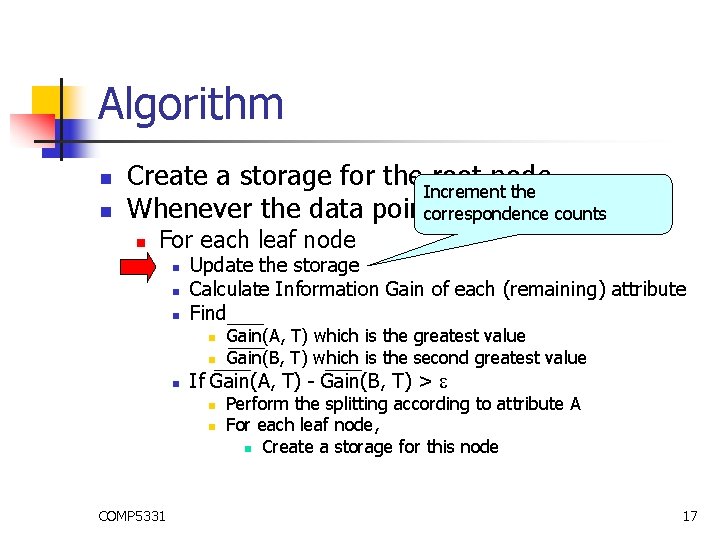

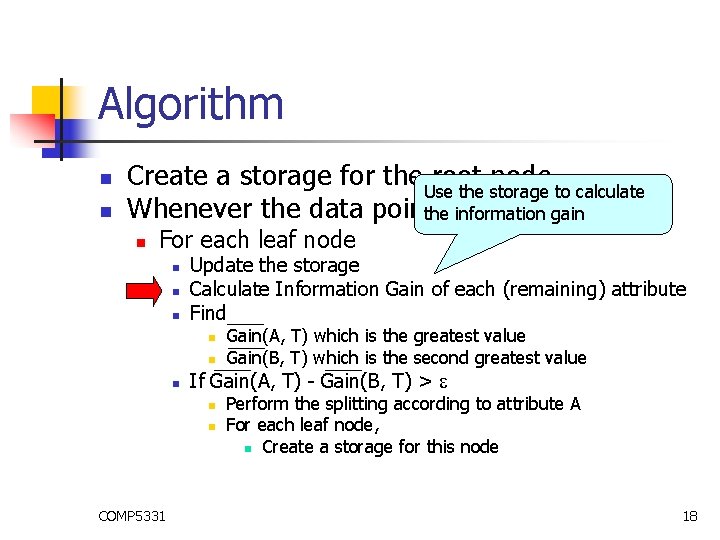

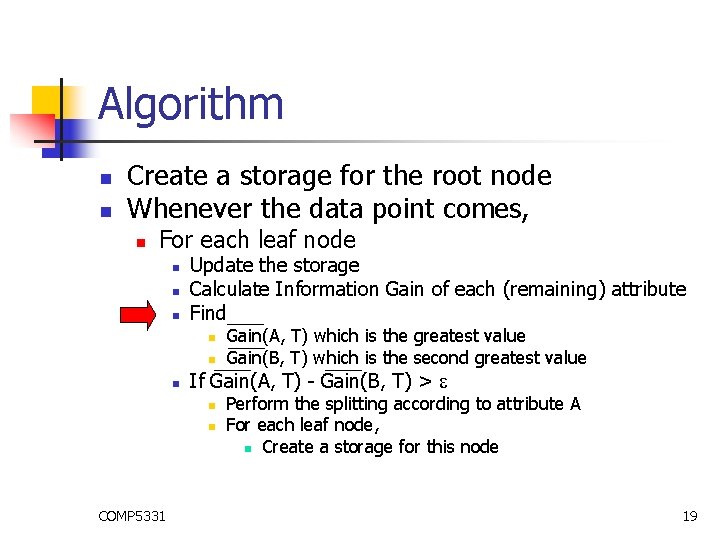

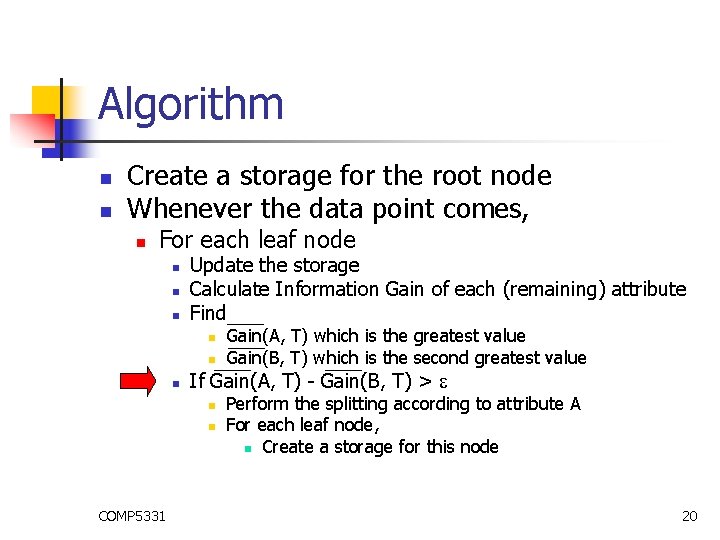

Algorithm n n Create a storage for the root node Whenever the data point comes, n For each leaf node n n n Update the storage Calculate Information Gain of each (remaining) attribute Find n n n If Gain(A, T) - Gain(B, T) > n n COMP 5331 Gain(A, T) which is the greatest value Gain(B, T) which is the second greatest value Perform the splitting according to attribute A For each leaf node, n Create a storage for this node 16

Algorithm n n Create a storage for the Increment root node the Whenever the data pointcorrespondence comes, counts n For each leaf node n n n Update the storage Calculate Information Gain of each (remaining) attribute Find n n n If Gain(A, T) - Gain(B, T) > n n COMP 5331 Gain(A, T) which is the greatest value Gain(B, T) which is the second greatest value Perform the splitting according to attribute A For each leaf node, n Create a storage for this node 17

Algorithm n n Create a storage for the Use root node the storage to calculate Whenever the data pointthecomes, information gain n For each leaf node n n n Update the storage Calculate Information Gain of each (remaining) attribute Find n n n If Gain(A, T) - Gain(B, T) > n n COMP 5331 Gain(A, T) which is the greatest value Gain(B, T) which is the second greatest value Perform the splitting according to attribute A For each leaf node, n Create a storage for this node 18

Algorithm n n Create a storage for the root node Whenever the data point comes, n For each leaf node n n n Update the storage Calculate Information Gain of each (remaining) attribute Find n n n If Gain(A, T) - Gain(B, T) > n n COMP 5331 Gain(A, T) which is the greatest value Gain(B, T) which is the second greatest value Perform the splitting according to attribute A For each leaf node, n Create a storage for this node 19

Algorithm n n Create a storage for the root node Whenever the data point comes, n For each leaf node n n n Update the storage Calculate Information Gain of each (remaining) attribute Find n n n If Gain(A, T) - Gain(B, T) > n n COMP 5331 Gain(A, T) which is the greatest value Gain(B, T) which is the second greatest value Perform the splitting according to attribute A For each leaf node, n Create a storage for this node 20

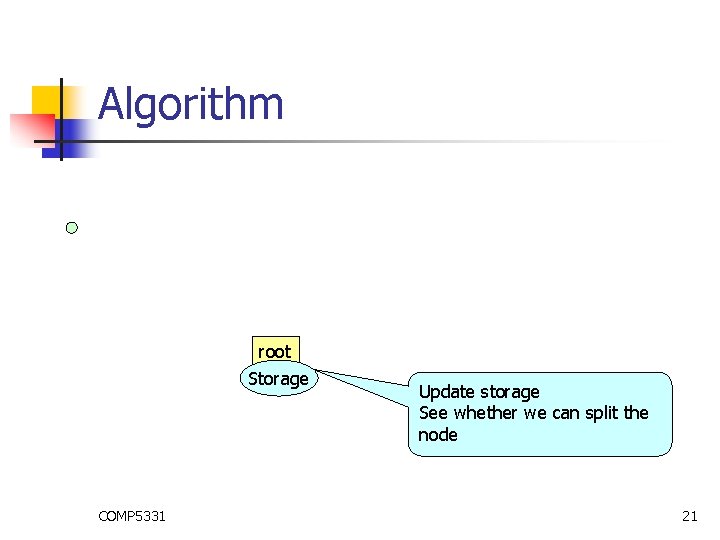

Algorithm root Storage COMP 5331 Update storage See whether we can split the node 21

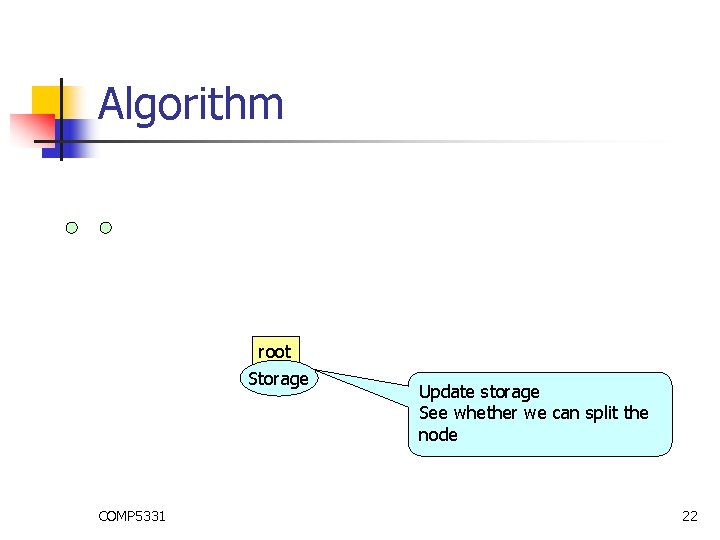

Algorithm root Storage COMP 5331 Update storage See whether we can split the node 22

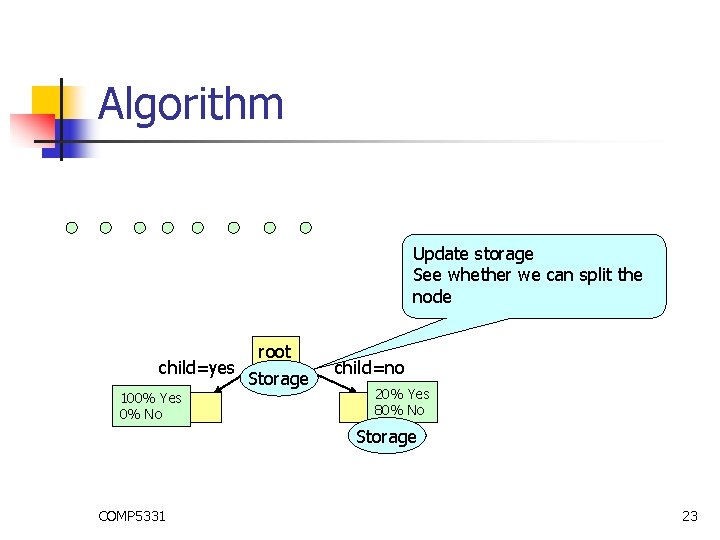

Algorithm Update storage See whether we can split the node child=yes 100% Yes 0% No root Storage child=no 20% Yes 80% No Storage COMP 5331 23

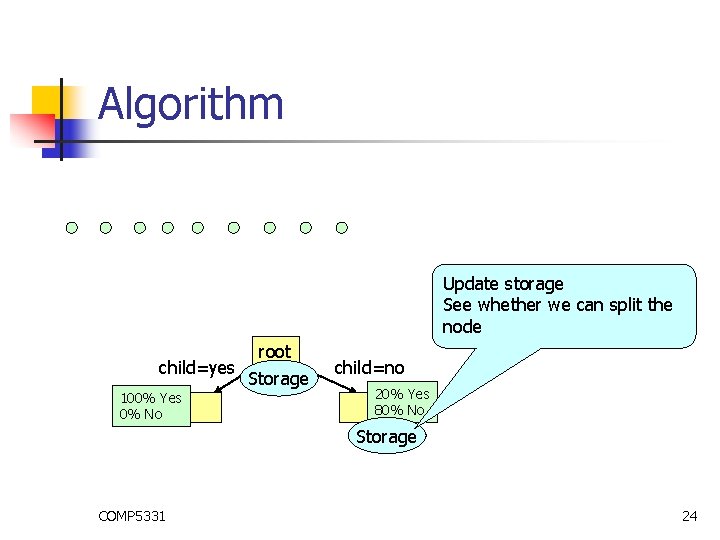

Algorithm Update storage See whether we can split the node child=yes 100% Yes 0% No root Storage child=no 20% Yes 80% No Storage COMP 5331 24

- Slides: 24