COMP 5331 Knowledge Discovery and Data Mining Acknowledgement

- Slides: 103

COMP 5331: Knowledge Discovery and Data Mining

Acknowledgement: Slides modified by Dr. Lei Chen based on the slides provided by Jiawei Han, Micheline Kamber, and Jian Pei, and slides provided by Raymond Wong and by Tan, Steinbach, and Kumar.

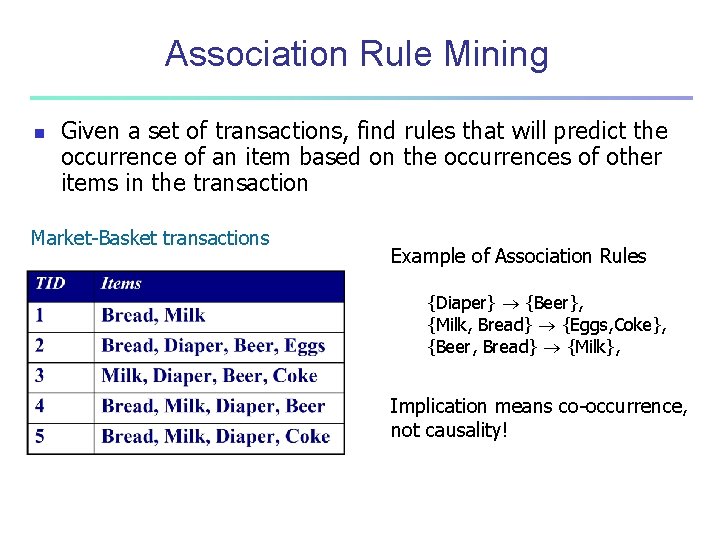

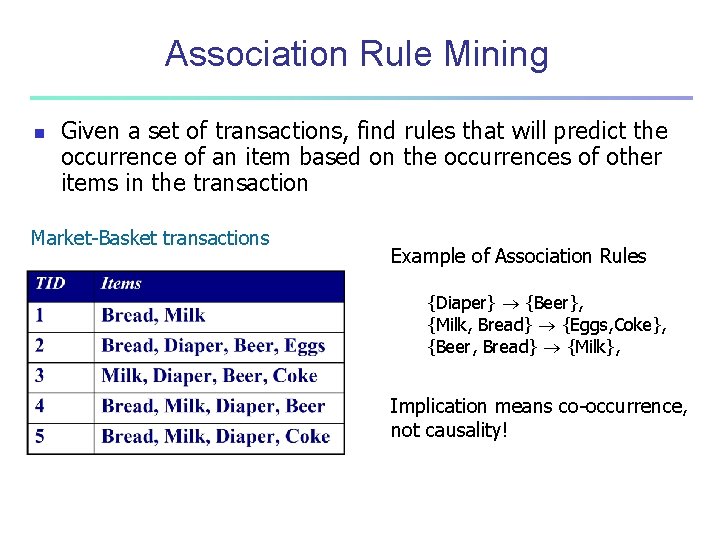

Association Rule Mining

- Given a set of transactions, find rules that will predict the occurrence of an item based on the occurrences of other items in the transaction.
- Examples of association rules (from the market-basket transactions): {Diaper} → {Beer}, {Milk, Bread} → {Eggs, Coke}, {Beer, Bread} → {Milk}.
- Implication means co-occurrence, not causality!

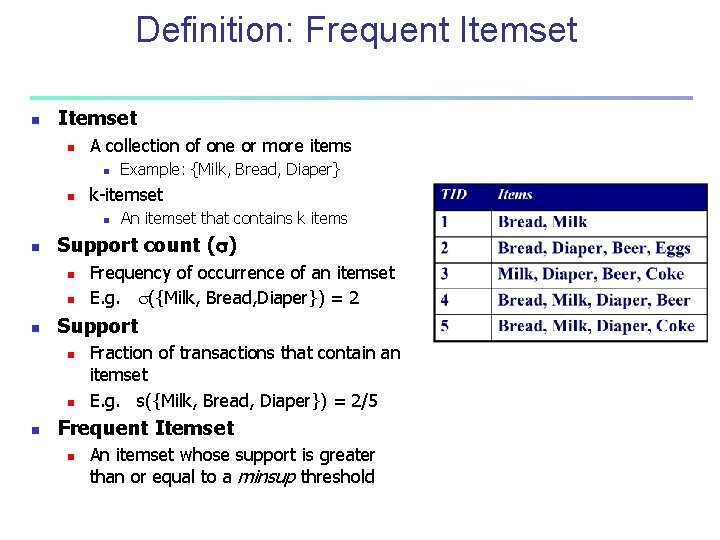

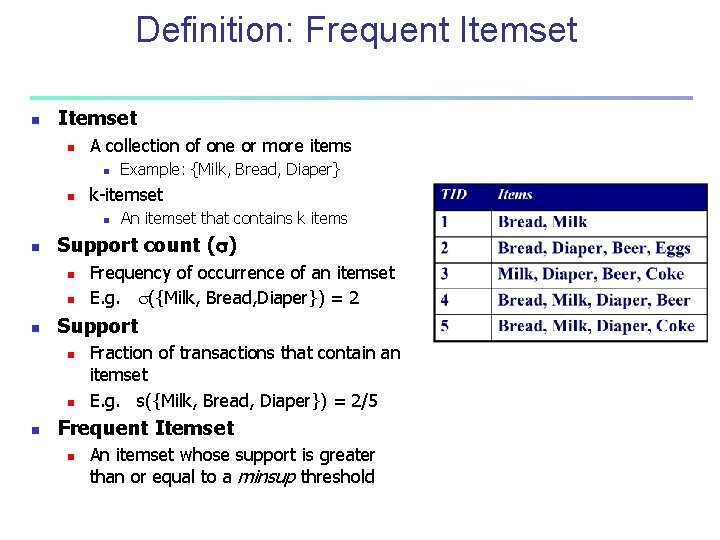

Definition: Frequent Itemset

- Itemset: a collection of one or more items. Example: {Milk, Bread, Diaper}.
- k-itemset: an itemset that contains k items.
- Support count (σ): frequency of occurrence of an itemset. E.g. σ({Milk, Bread, Diaper}) = 2.
- Support (s): fraction of transactions that contain an itemset. E.g. s({Milk, Bread, Diaper}) = 2/5.
- Frequent itemset: an itemset whose support is greater than or equal to a minsup threshold.

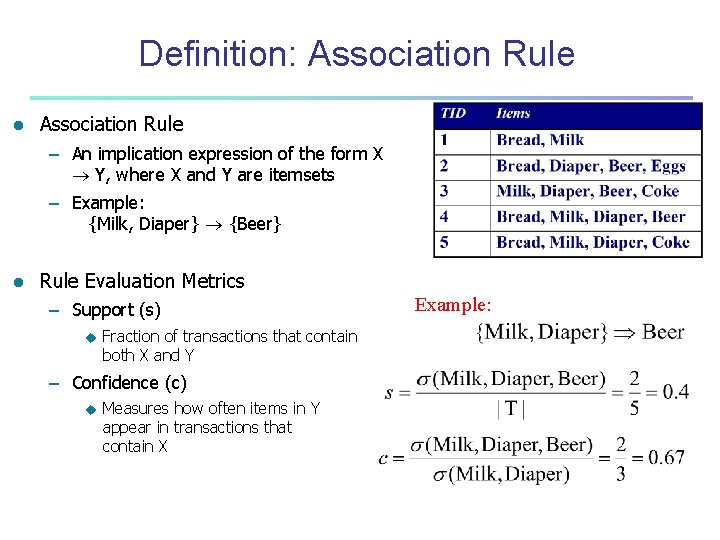

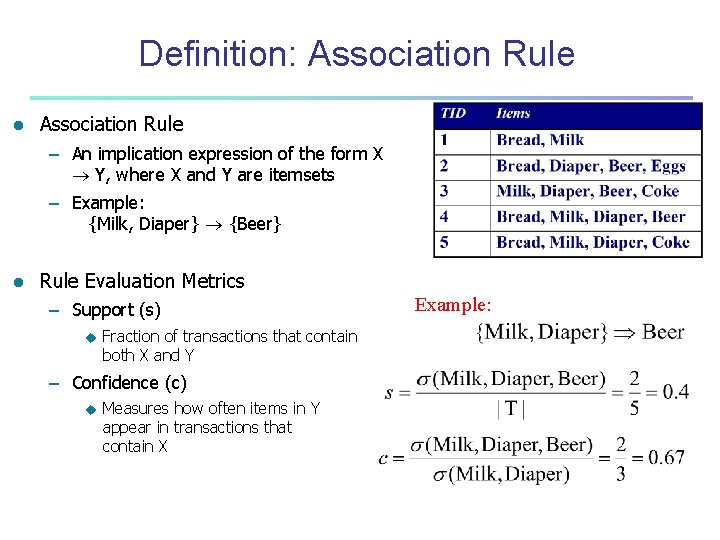

Definition: Association Rule

- Association rule: an implication expression of the form X → Y, where X and Y are itemsets. Example: {Milk, Diaper} → {Beer}.
- Rule evaluation metrics:
  - Support (s): fraction of transactions that contain both X and Y.
  - Confidence (c): measures how often items in Y appear in transactions that contain X.

Example:

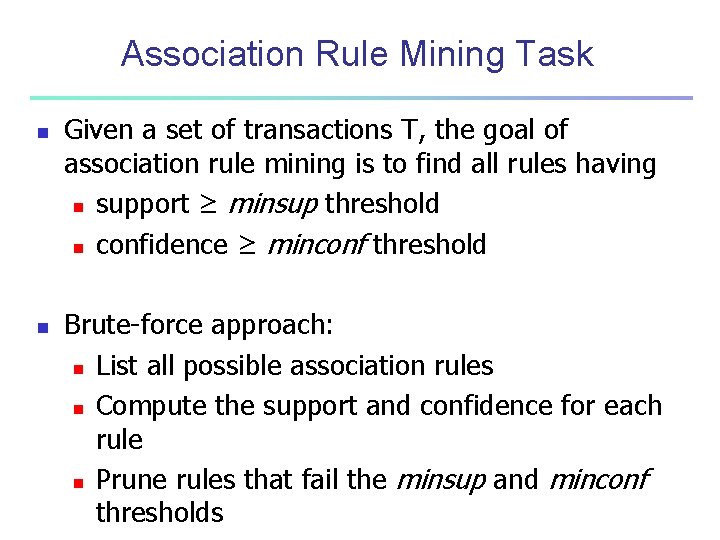

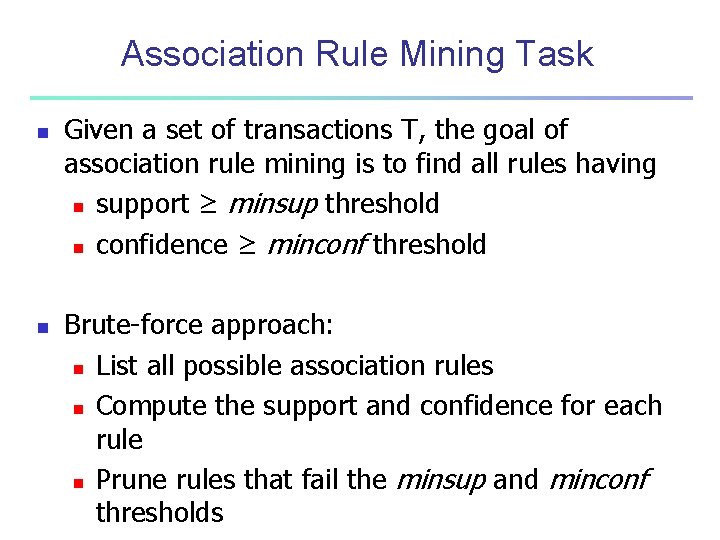

Association Rule Mining Task

- Given a set of transactions T, the goal of association rule mining is to find all rules having:
  - support ≥ minsup threshold
  - confidence ≥ minconf threshold
- Brute-force approach:
  - List all possible association rules.
  - Compute the support and confidence for each rule.
  - Prune rules that fail the minsup and minconf thresholds.

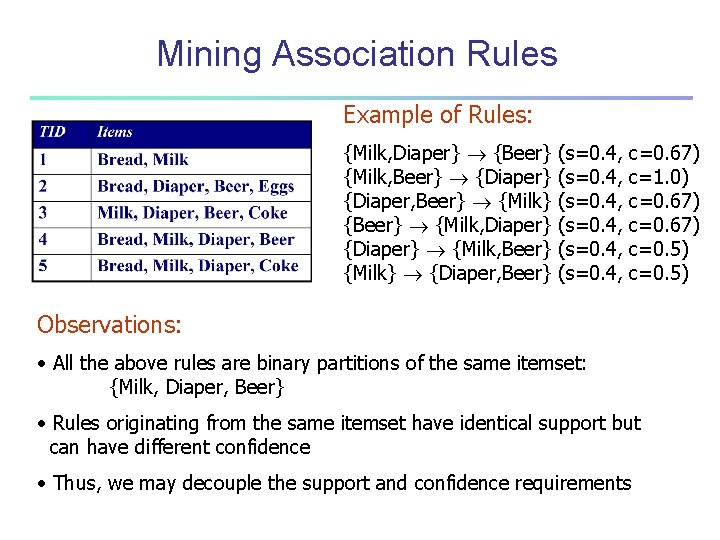

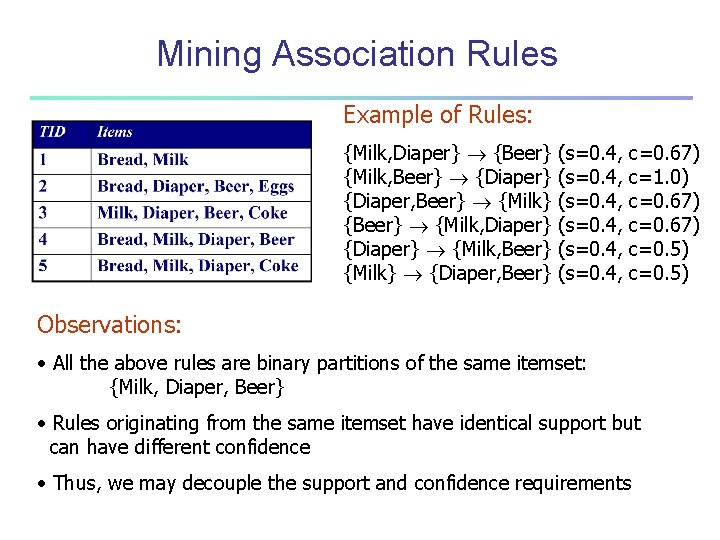

Mining Association Rules

Example of rules:

- {Milk, Diaper} → {Beer} (s=0.4, c=0.67)
- {Milk, Beer} → {Diaper} (s=0.4, c=1.0)
- {Diaper, Beer} → {Milk} (s=0.4, c=0.67)
- {Beer} → {Milk, Diaper} (s=0.4, c=0.67)
- {Diaper} → {Milk, Beer} (s=0.4, c=0.5)
- {Milk} → {Diaper, Beer} (s=0.4, c=0.5)

Observations:

- All the above rules are binary partitions of the same itemset: {Milk, Diaper, Beer}.
- Rules originating from the same itemset have identical support but can have different confidence.
- Thus, we may decouple the support and confidence requirements.
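The support and confidence figures above can be reproduced with a few lines of Python. This is a minimal sketch: the five transactions below are an assumption (the market-basket table usually shown with this example; the table itself is only in the slide image), and `support_count`, `support`, and `confidence` are illustrative helper names.

```python
# Assumed data: the five-transaction market-basket table from the slide image.
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support_count(itemset):
    """Number of transactions that contain every item of `itemset`."""
    return sum(1 for t in transactions if itemset <= t)

def support(itemset):
    """Fraction of transactions that contain `itemset`."""
    return support_count(itemset) / len(transactions)

def confidence(lhs, rhs):
    """How often `rhs` appears in transactions that contain `lhs`."""
    return support_count(lhs | rhs) / support_count(lhs)

# All six rules are binary partitions of {Milk, Diaper, Beer}.
rules = [
    ({"Milk", "Diaper"}, {"Beer"}),
    ({"Milk", "Beer"}, {"Diaper"}),
    ({"Diaper", "Beer"}, {"Milk"}),
    ({"Beer"}, {"Milk", "Diaper"}),
    ({"Diaper"}, {"Milk", "Beer"}),
    ({"Milk"}, {"Diaper", "Beer"}),
]
for lhs, rhs in rules:
    print(sorted(lhs), "->", sorted(rhs),
          f"s={support(lhs | rhs):.2f}", f"c={confidence(lhs, rhs):.2f}")
```

Every rule shares the support of {Milk, Diaper, Beer}; only the denominator of the confidence changes, which is why the two requirements can be handled separately.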

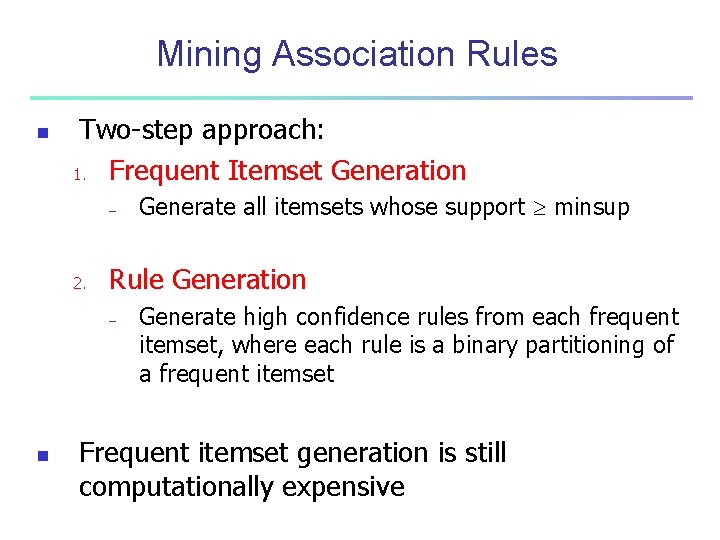

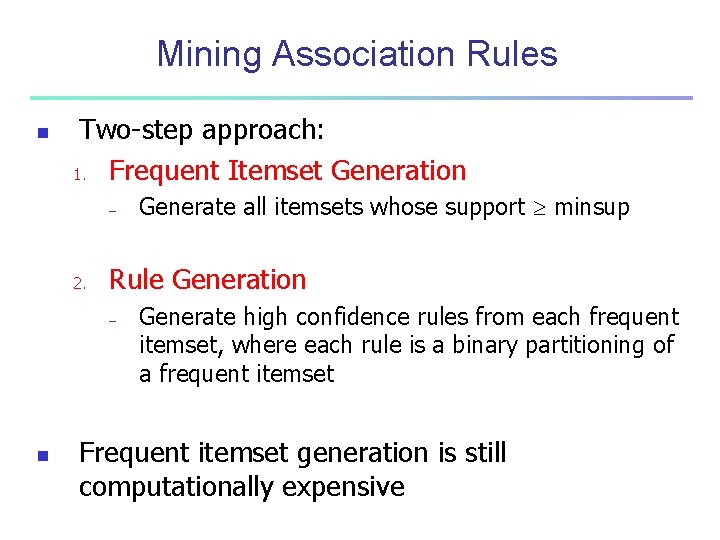

Mining Association Rules

- Two-step approach:
  1. Frequent itemset generation: generate all itemsets whose support ≥ minsup.
  2. Rule generation: generate high-confidence rules from each frequent itemset, where each rule is a binary partitioning of a frequent itemset.
- Frequent itemset generation is still computationally expensive.

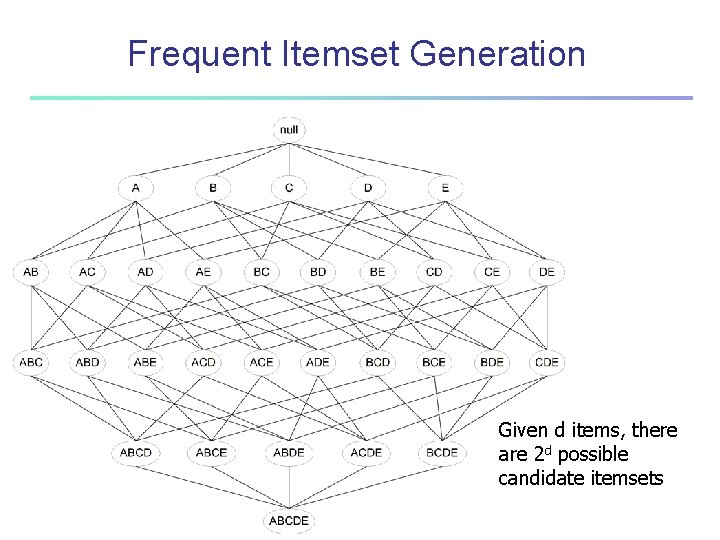

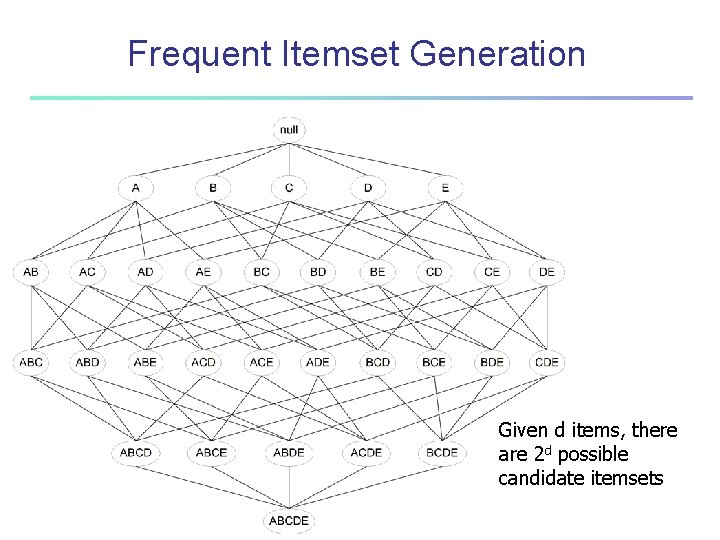

Frequent Itemset Generation

Given d items, there are $2^d$ possible candidate itemsets.

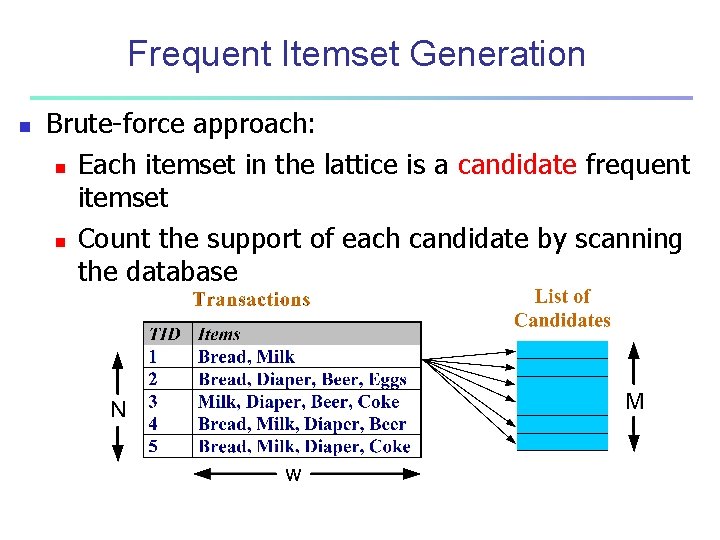

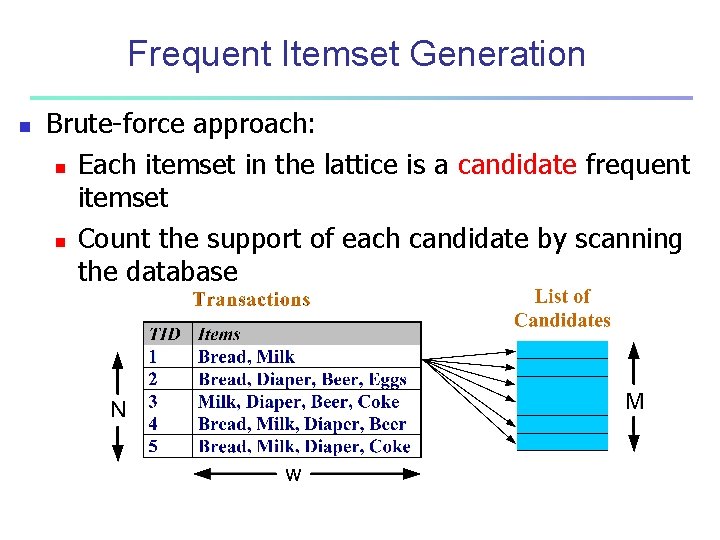

Frequent Itemset Generation

- Brute-force approach:
  - Each itemset in the lattice is a candidate frequent itemset.
  - Count the support of each candidate by scanning the database.

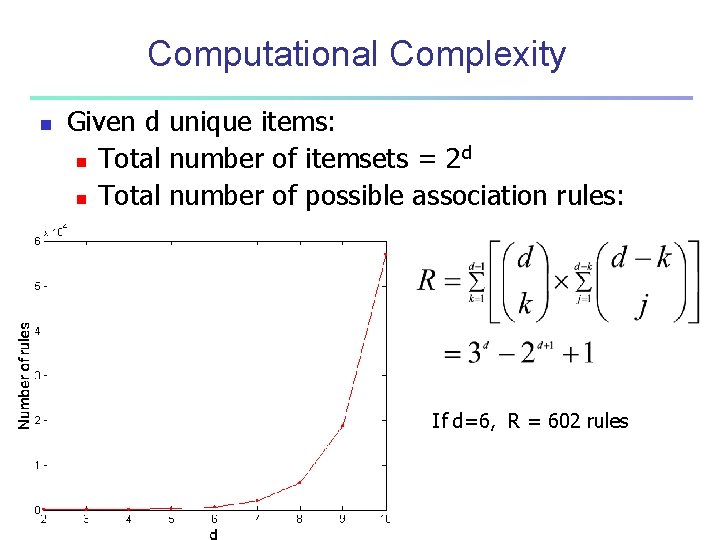

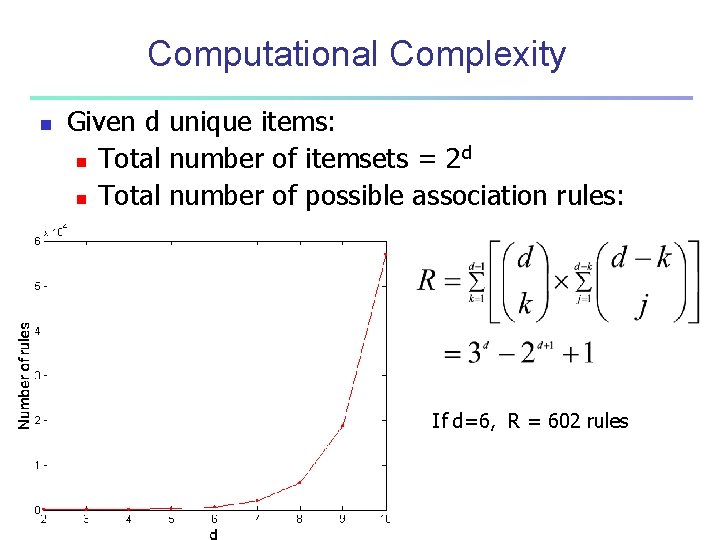

Computational Complexity

- Given d unique items:
  - Total number of itemsets = $2^d$
  - Total number of possible association rules: $R = 3^d - 2^{d+1} + 1$
  - If d = 6, R = 602 rules
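The rule count can be checked by direct enumeration. The sketch below counts every way to choose a non-empty left-hand side of k items and a non-empty right-hand side from the remaining d - k items, and compares the total with the closed form $3^d - 2^{d+1} + 1$. The function names are illustrative.

```python
from math import comb

def count_rules_brute_force(d):
    """Sum over LHS size k and RHS size j of C(d, k) * C(d - k, j)."""
    total = 0
    for k in range(1, d):                  # LHS size (leave at least 1 item for the RHS)
        for j in range(1, d - k + 1):      # RHS size
            total += comb(d, k) * comb(d - k, j)
    return total

def count_rules_closed_form(d):
    return 3**d - 2**(d + 1) + 1

for d in range(2, 8):
    assert count_rules_brute_force(d) == count_rules_closed_form(d)

print(count_rules_closed_form(6))  # 602
```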

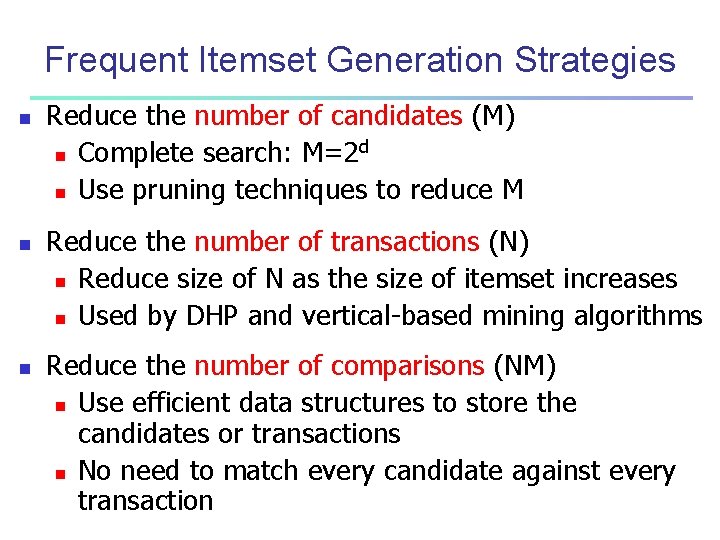

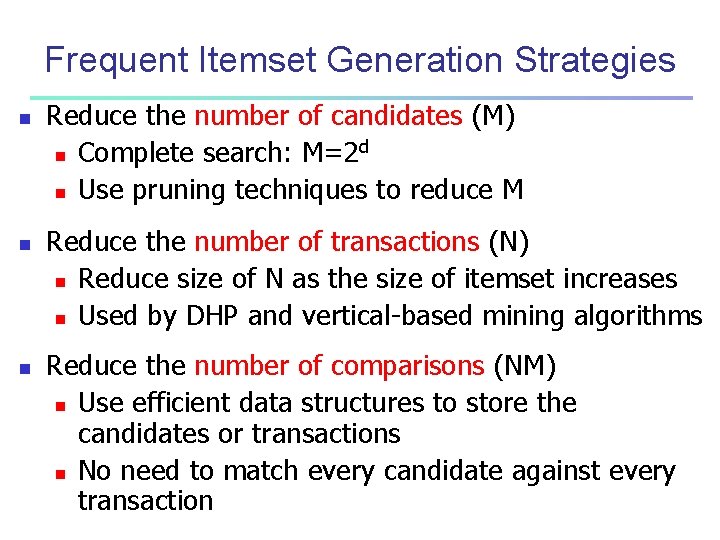

Frequent Itemset Generation Strategies

- Reduce the number of candidates (M):
  - Complete search: $M = 2^d$.
  - Use pruning techniques to reduce M.
- Reduce the number of transactions (N):
  - Reduce the size of N as the size of the itemset increases.
  - Used by DHP and vertical-based mining algorithms.
- Reduce the number of comparisons (NM):
  - Use efficient data structures to store the candidates or transactions.
  - No need to match every candidate against every transaction.

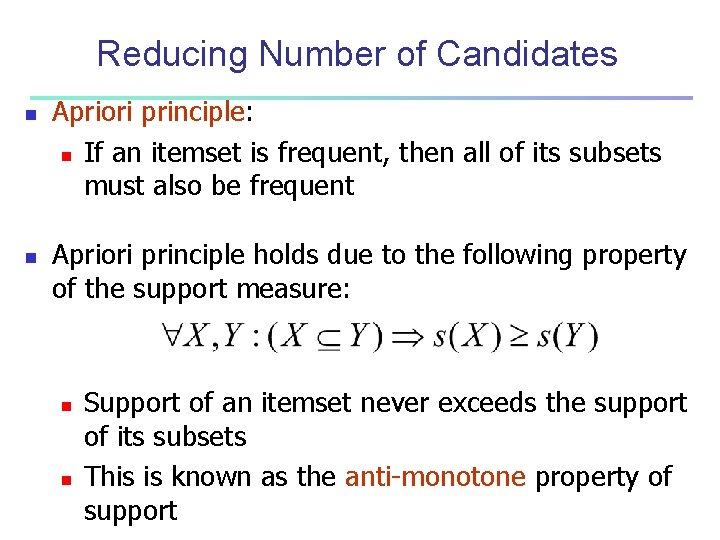

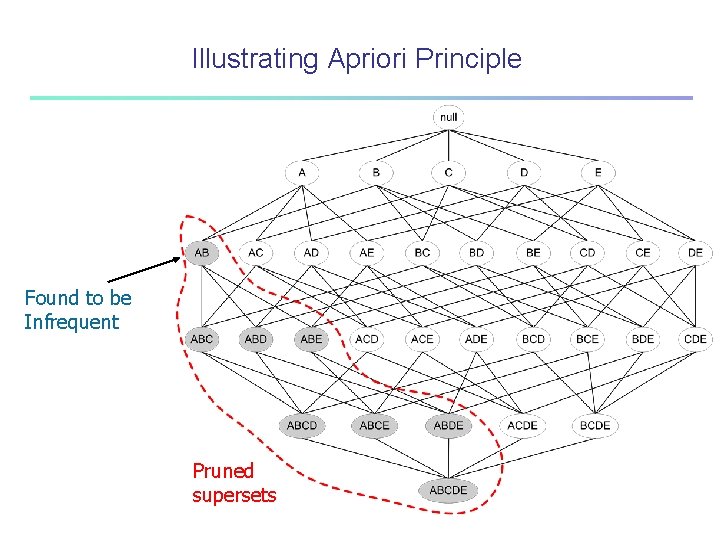

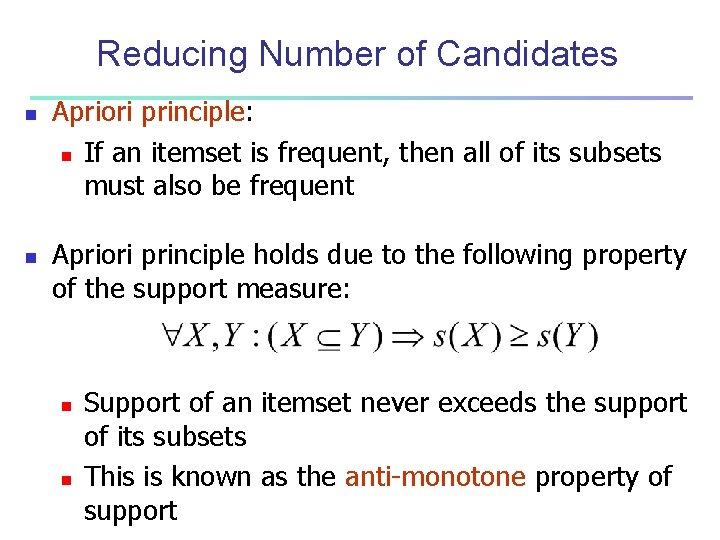

Reducing Number of Candidates

- Apriori principle: if an itemset is frequent, then all of its subsets must also be frequent.
- The Apriori principle holds due to the following property of the support measure:
  - The support of an itemset never exceeds the support of its subsets.
  - This is known as the anti-monotone property of support.

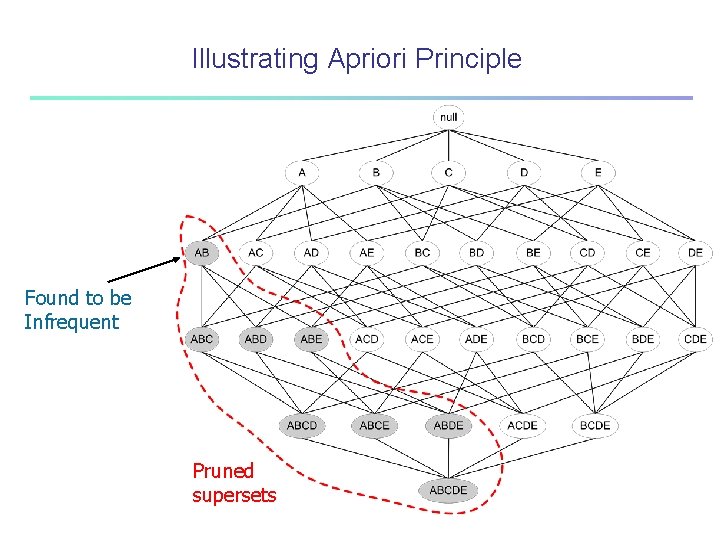

Illustrating Apriori Principle

Figure labels: found to be infrequent; pruned supersets.

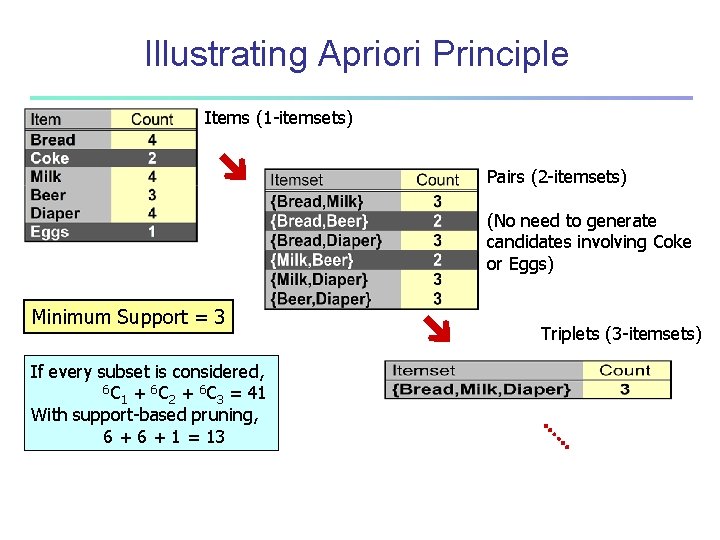

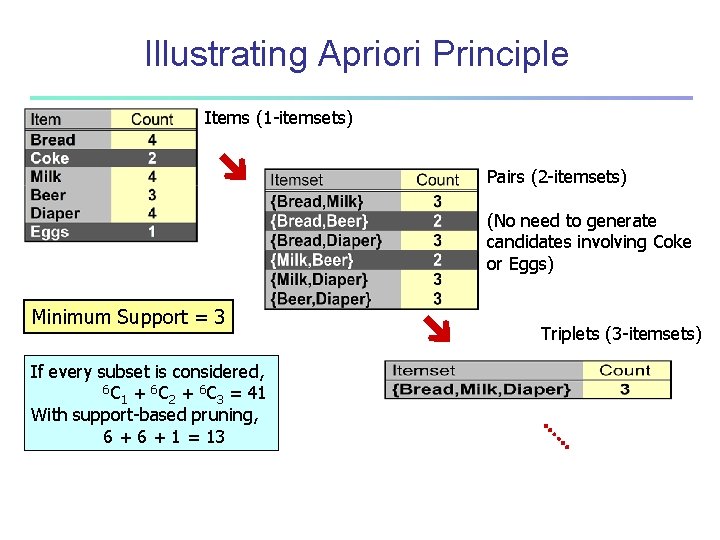

Illustrating Apriori Principle (minimum support = 3)

- The figure lists items (1-itemsets), pairs (2-itemsets), and triplets (3-itemsets). There is no need to generate candidates involving Coke or Eggs.
- If every subset is considered: $\binom{6}{1} + \binom{6}{2} + \binom{6}{3} = 6 + 15 + 20 = 41$ candidates.
- With support-based pruning: $6 + 6 + 1 = 13$ candidates.

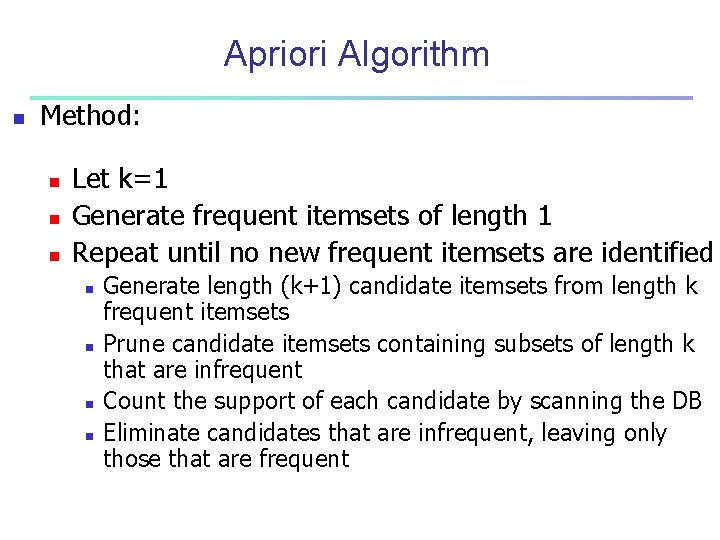

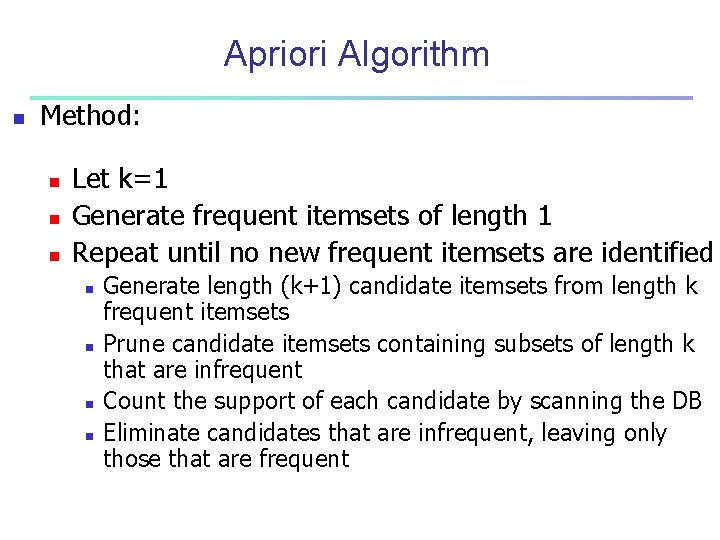

Apriori Algorithm

- Method:
  - Let k = 1.
  - Generate frequent itemsets of length 1.
  - Repeat until no new frequent itemsets are identified:
    - Generate length (k+1) candidate itemsets from length k frequent itemsets.
    - Prune candidate itemsets containing subsets of length k that are infrequent.
    - Count the support of each candidate by scanning the DB.
    - Eliminate candidates that are infrequent, leaving only those that are frequent.

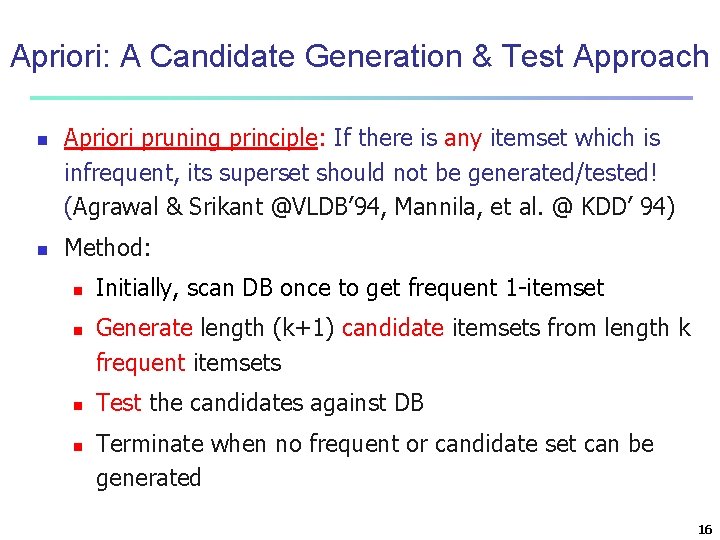

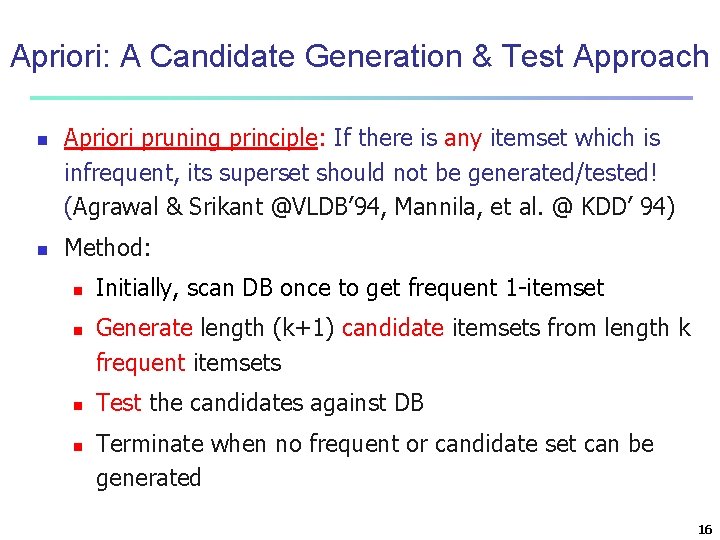

Apriori: A Candidate Generation & Test Approach

- Apriori pruning principle: if there is any itemset which is infrequent, its superset should not be generated/tested! (Agrawal & Srikant @ VLDB'94; Mannila et al. @ KDD'94)
- Method:
  - Initially, scan the DB once to get the frequent 1-itemsets.
  - Generate length (k+1) candidate itemsets from length k frequent itemsets.
  - Test the candidates against the DB.
  - Terminate when no frequent or candidate set can be generated.

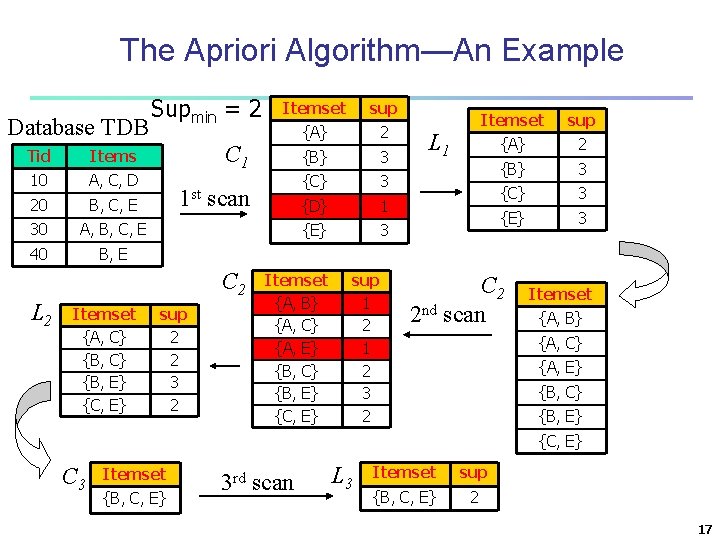

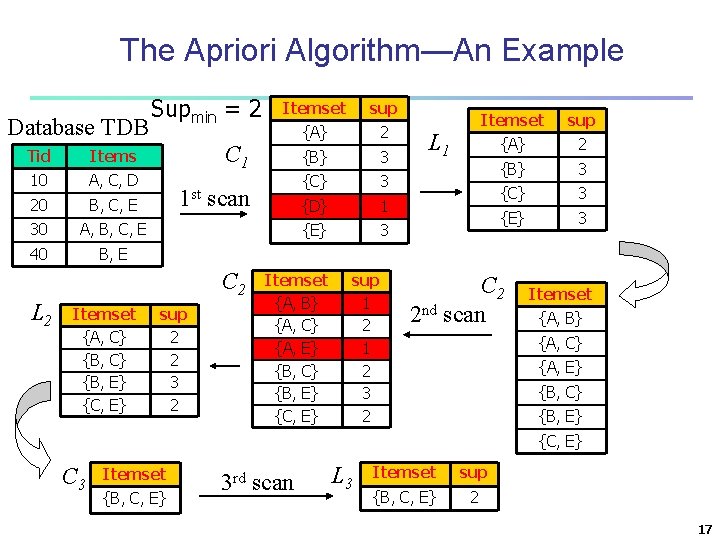

The Apriori Algorithm: An Example ($Sup_{min} = 2$)

Database TDB:

| Tid | Items |
|---|---|
| 10 | A, C, D |
| 20 | B, C, E |
| 30 | A, B, C, E |
| 40 | B, E |

- 1st scan, $C_1$ with counts: {A}: 2, {B}: 3, {C}: 3, {D}: 1, {E}: 3
- $L_1$: {A}: 2, {B}: 3, {C}: 3, {E}: 3
- $C_2$ (generated from $L_1$): {A, B}, {A, C}, {A, E}, {B, C}, {B, E}, {C, E}
- 2nd scan, $C_2$ with counts: {A, B}: 1, {A, C}: 2, {A, E}: 1, {B, C}: 2, {B, E}: 3, {C, E}: 2
- $L_2$: {A, C}: 2, {B, C}: 2, {B, E}: 3, {C, E}: 2
- $C_3$ (generated from $L_2$): {B, C, E}
- 3rd scan, $L_3$: {B, C, E}: 2
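The level-wise procedure in this example can be sketched as follows. This is a minimal, unoptimized illustration (no hash tree, supports counted by a full scan per level); the function name `apriori` and its parameters are illustrative, and the data is the TDB example with a minimum support count of 2.

```python
from itertools import combinations

def apriori(transactions, min_count):
    """Return {frequent itemset (frozenset): support count}."""
    transactions = [frozenset(t) for t in transactions]
    items = sorted({i for t in transactions for i in t})
    candidates = [frozenset([i]) for i in items]   # C1
    frequent = {}
    k = 1
    while candidates:
        # Counting step: one scan of the database per level.
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        level = {c: n for c, n in counts.items() if n >= min_count}   # Lk
        frequent.update(level)
        k += 1
        # Join step: merge pairs of frequent (k-1)-itemsets into k-itemsets.
        joined = {a | b for a in level for b in level if len(a | b) == k}
        # Prune step: drop candidates that have an infrequent (k-1)-subset.
        candidates = [
            c for c in joined
            if all(frozenset(s) in level for s in combinations(c, k - 1))
        ]
    return frequent

tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
result = apriori(tdb, min_count=2)
for itemset, n in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), n)
```

On TDB the join step produces {A, B, C}, {A, C, E}, and {B, C, E} at level 3, and the prune step keeps only {B, C, E}, matching $C_3$ above.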

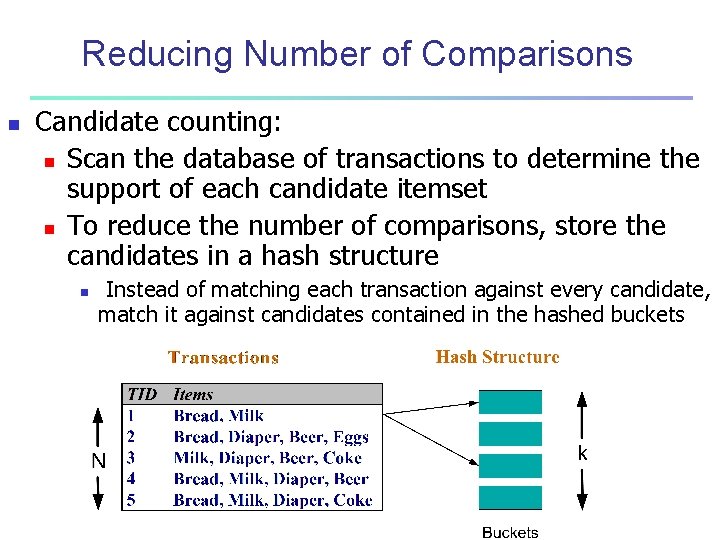

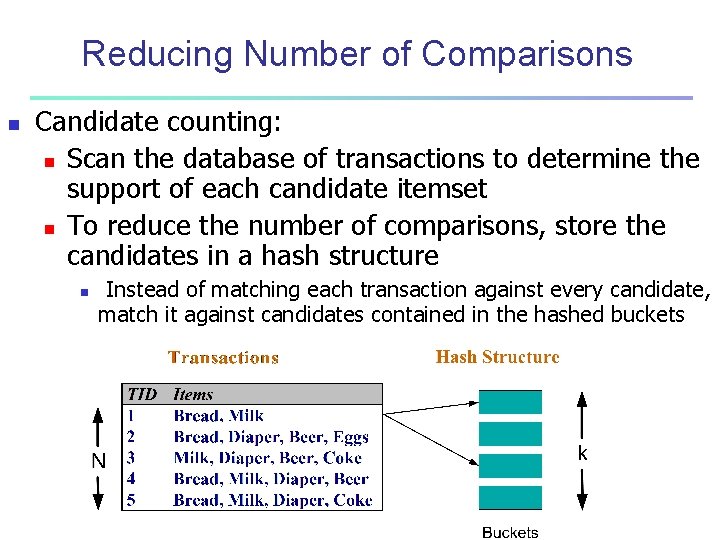

Reducing Number of Comparisons

- Candidate counting:
  - Scan the database of transactions to determine the support of each candidate itemset.
  - To reduce the number of comparisons, store the candidates in a hash structure.
  - Instead of matching each transaction against every candidate, match it against candidates contained in the hashed buckets.

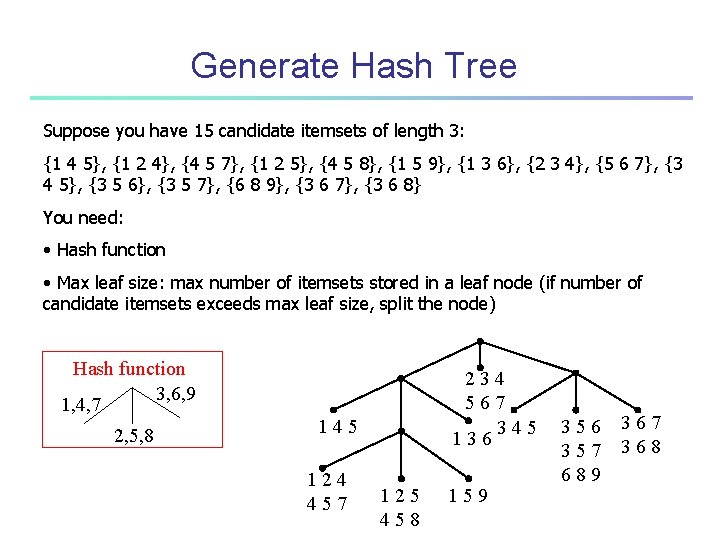

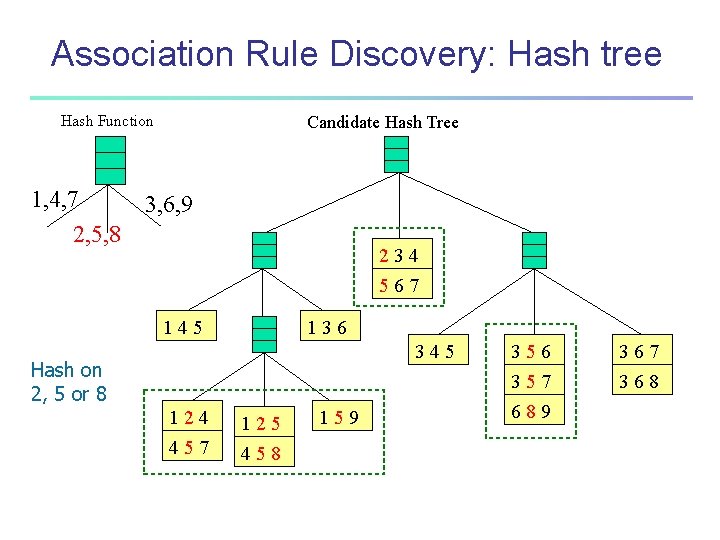

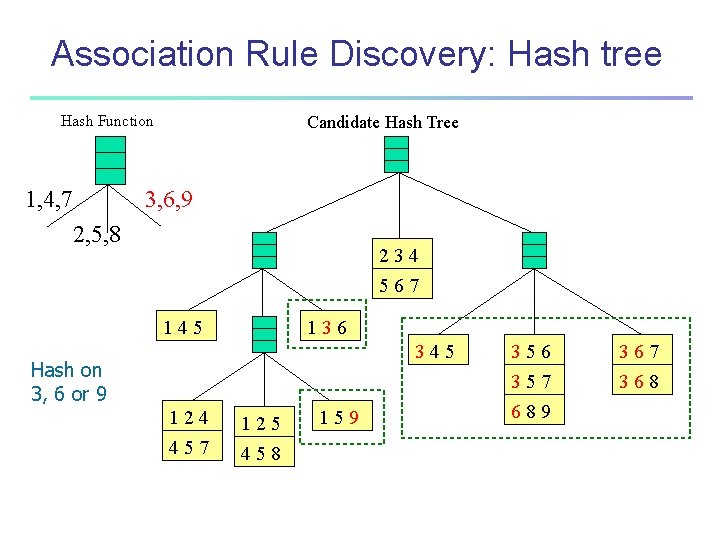

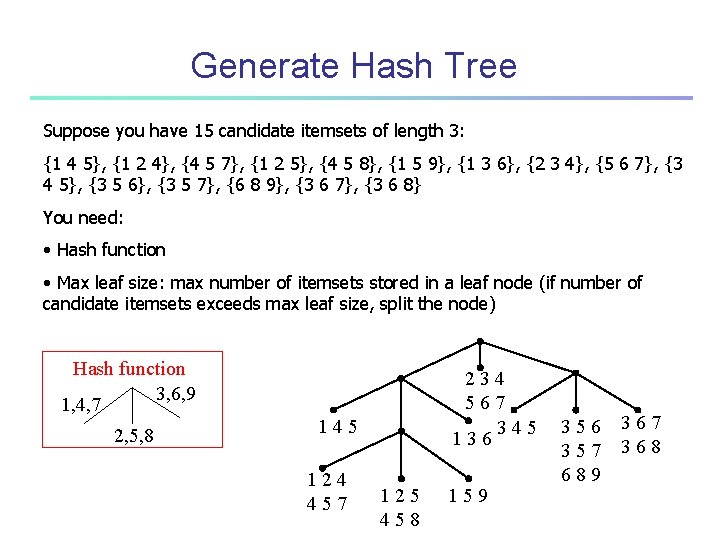

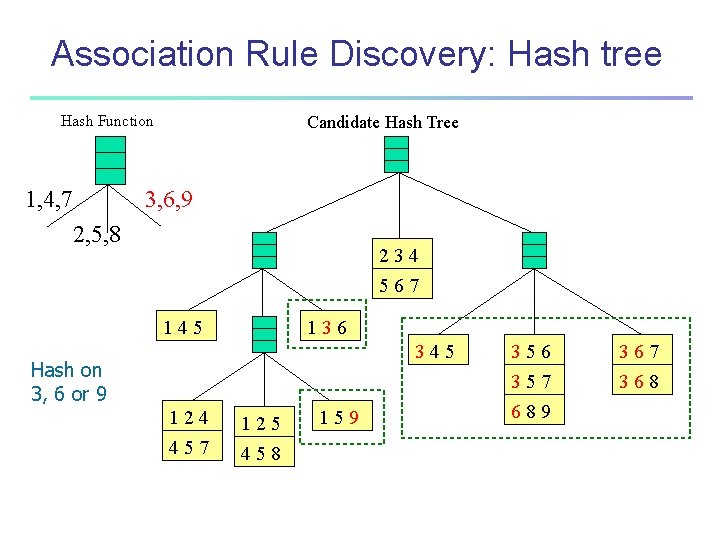

Generate Hash Tree

Suppose you have 15 candidate itemsets of length 3: {1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8}

You need:

- Hash function: items 1, 4, 7 go to one branch, items 2, 5, 8 to a second branch, and items 3, 6, 9 to a third branch.
- Max leaf size: the maximum number of itemsets stored in a leaf node (if the number of candidate itemsets exceeds the max leaf size, split the node).

Leaves of the resulting tree: {1 4 5}; {1 2 4, 4 5 7}; {1 2 5, 4 5 8}; {1 5 9}; {1 3 6}; {2 3 4, 5 6 7}; {3 4 5}; {3 5 6, 3 5 7, 6 8 9}; {3 6 7, 3 6 8}

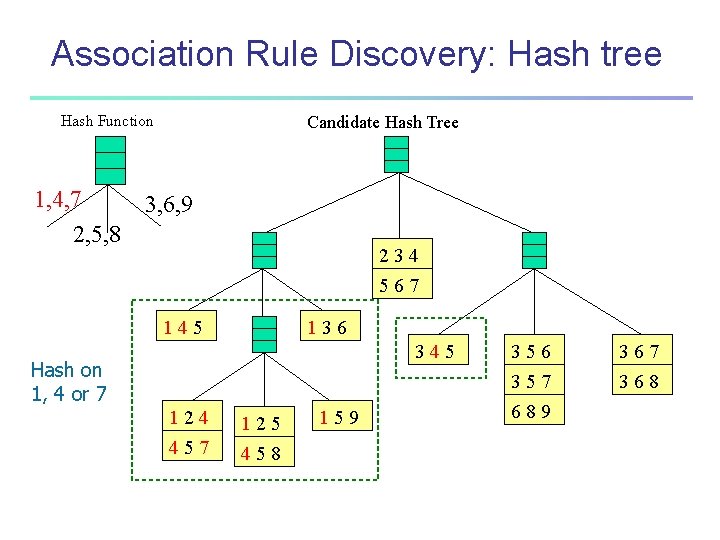

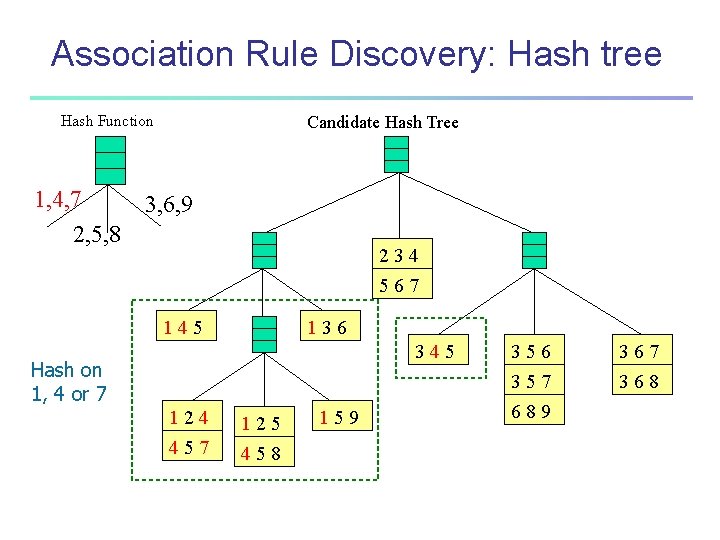

Association Rule Discovery: Hash tree

Candidate hash tree, first level: itemsets whose first item is 1, 4 or 7 follow the branch labelled "Hash on 1, 4 or 7".

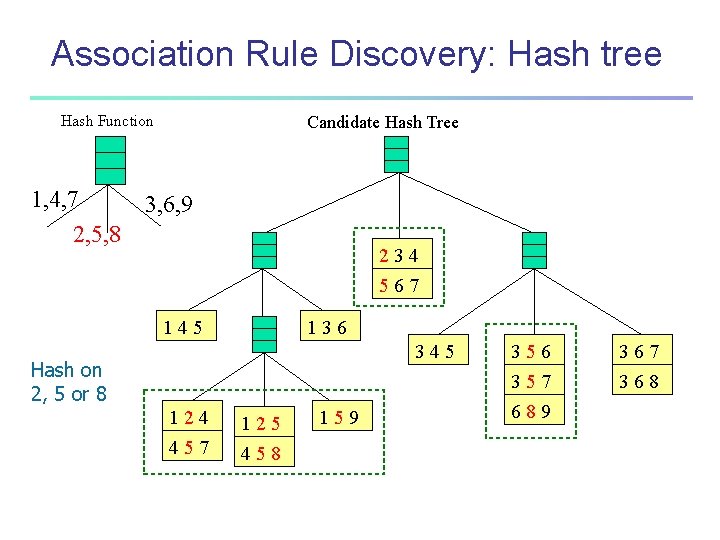

Association Rule Discovery: Hash tree

Candidate hash tree, first level: itemsets whose first item is 2, 5 or 8 follow the branch labelled "Hash on 2, 5 or 8".

Association Rule Discovery: Hash tree

Candidate hash tree, first level: itemsets whose first item is 3, 6 or 9 follow the branch labelled "Hash on 3, 6 or 9".

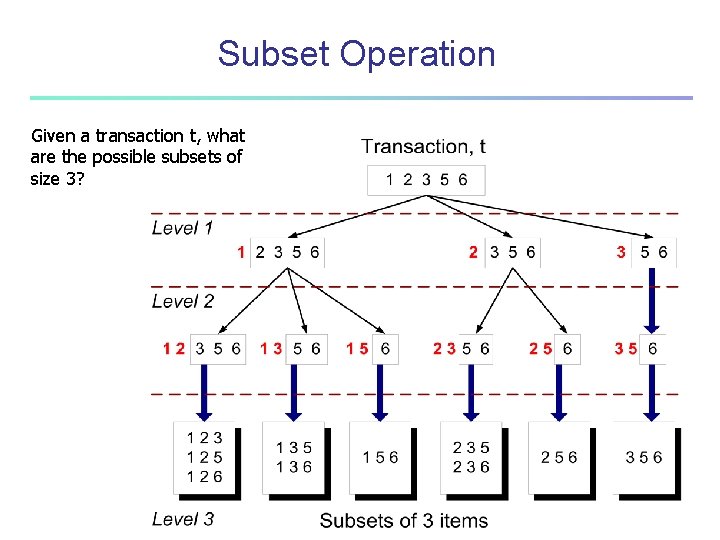

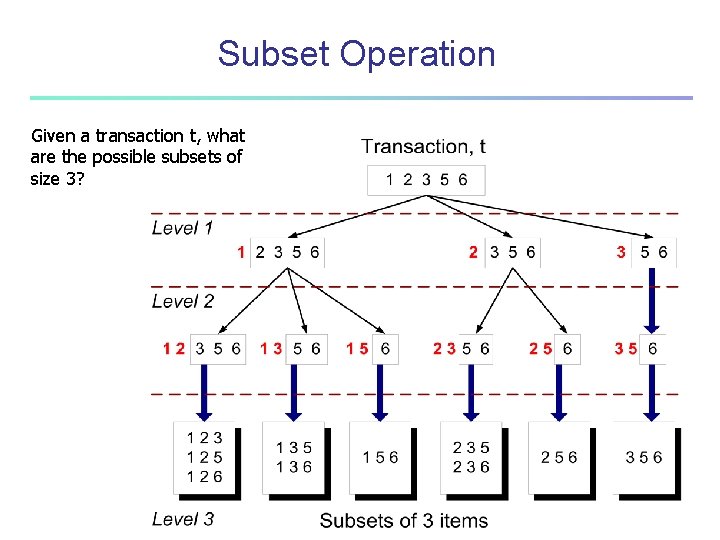

Subset Operation

Given a transaction t, what are the possible subsets of size 3?

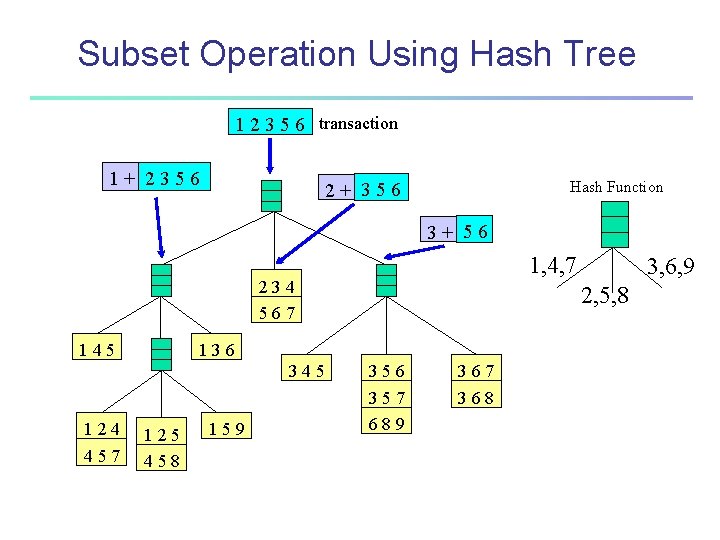

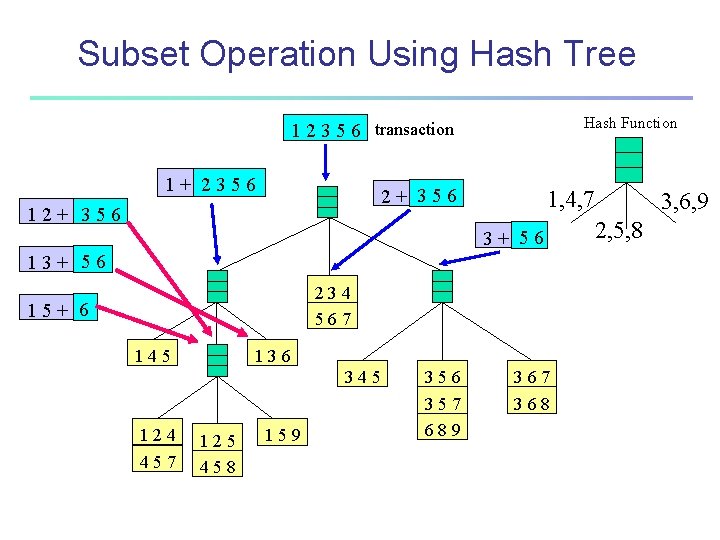

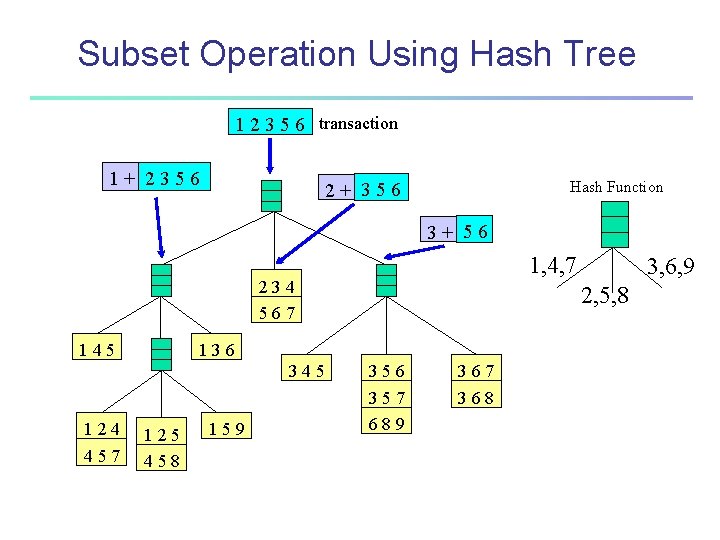

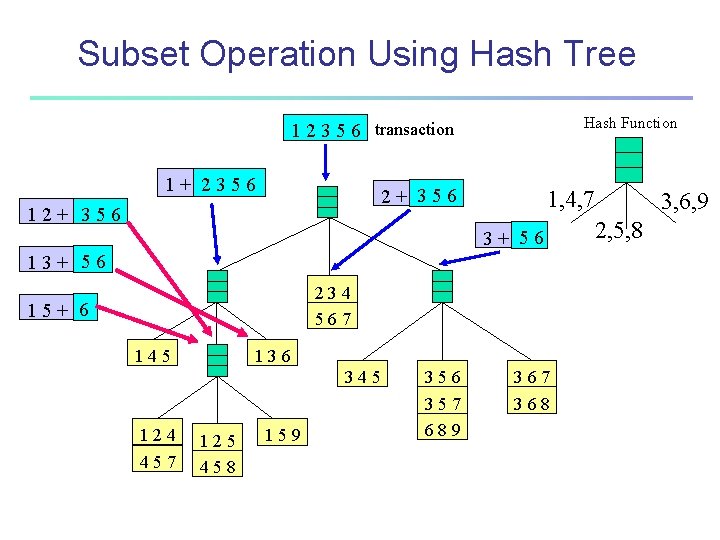

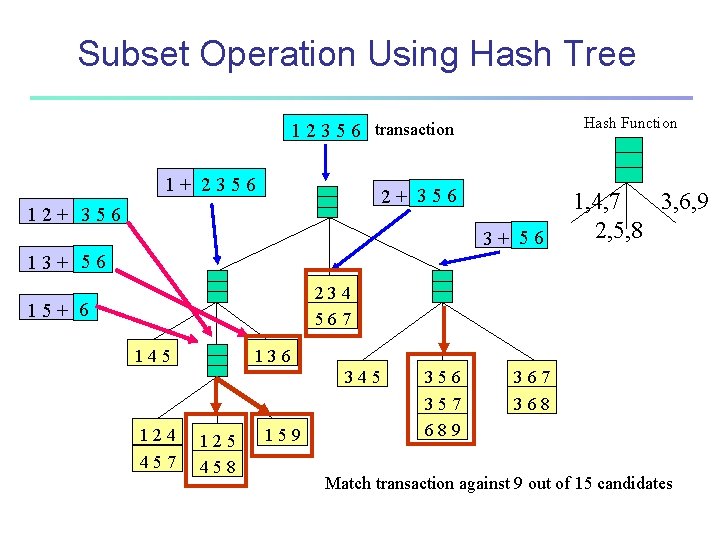

Subset Operation Using Hash Tree

Transaction: 1 2 3 5 6. At the root, hash on the first item of each possible 3-subset: 1 + {2 3 5 6}, 2 + {3 5 6}, 3 + {5 6}.

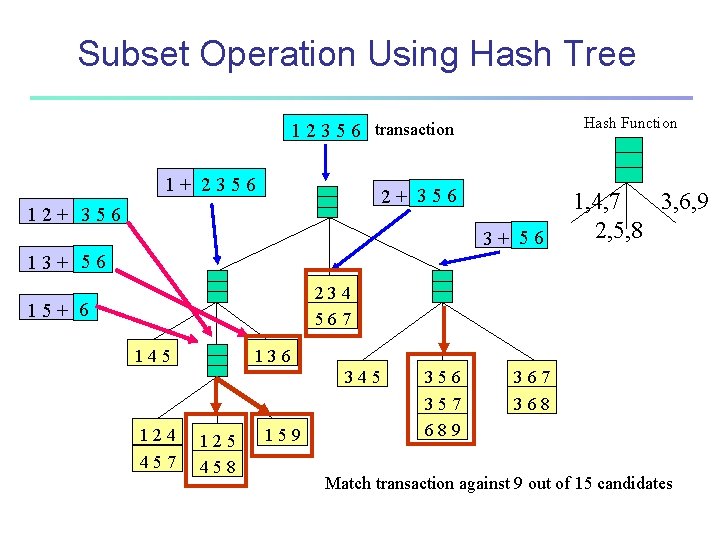

Subset Operation Using Hash Tree

Transaction: 1 2 3 5 6. At the second level, the branch for first item 1 is expanded on the second item: 1 2 + {3 5 6}, 1 3 + {5 6}, 1 5 + {6}.

Result: match the transaction against 9 out of 15 candidates.
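The hash tree and the subset operation can be sketched as below. This is an illustrative implementation under stated assumptions: the hash function is taken to be `item % 3` (which groups 1, 4, 7 / 2, 5, 8 / 3, 6, 9 as on the slide), the max leaf size is assumed to be 3, and `Node`, `insert`, and `reachable_leaves` are hypothetical names.

```python
MAX_LEAF = 3   # assumed max number of itemsets per leaf before it is split
K = 3          # candidate length

def bucket(item):
    # 1,4,7 -> 1; 2,5,8 -> 2; 3,6,9 -> 0 (same grouping as the slide)
    return item % 3

class Node:
    def __init__(self, depth):
        self.depth = depth       # index of the item this node hashes on
        self.children = None     # dict bucket -> Node once the node is internal
        self.itemsets = []       # stored candidates while the node is a leaf

def insert(node, itemset):
    if node.children is not None:                 # internal node: descend
        b = bucket(itemset[node.depth])
        if b not in node.children:
            node.children[b] = Node(node.depth + 1)
        insert(node.children[b], itemset)
        return
    node.itemsets.append(itemset)
    if len(node.itemsets) > MAX_LEAF and node.depth < K:   # split an over-full leaf
        pending, node.itemsets, node.children = node.itemsets, [], {}
        for s in pending:
            insert(node, s)

def reachable_leaves(node, t, start, found):
    """Collect the leaves a sorted transaction t can reach."""
    if node.children is None:
        if node not in found:
            found.append(node)
        return
    last = len(t) - (K - node.depth)   # leave room for the remaining items
    for i in range(start, last + 1):
        child = node.children.get(bucket(t[i]))
        if child is not None:
            reachable_leaves(child, t, i + 1, found)

candidates = [(1, 4, 5), (1, 2, 4), (4, 5, 7), (1, 2, 5), (4, 5, 8),
              (1, 5, 9), (1, 3, 6), (2, 3, 4), (5, 6, 7), (3, 4, 5),
              (3, 5, 6), (3, 5, 7), (6, 8, 9), (3, 6, 7), (3, 6, 8)]
root = Node(0)
for c in candidates:
    insert(root, c)

transaction = (1, 2, 3, 5, 6)
leaves = []
reachable_leaves(root, transaction, 0, leaves)
compared = [c for leaf in leaves for c in leaf.itemsets]
matched = sorted(c for c in compared if set(c) <= set(transaction))
print(len(compared), "of", len(candidates), "candidates compared; matched:", matched)
```

Only the candidates stored in the visited leaves are compared with the transaction; the rest of the tree is never touched.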

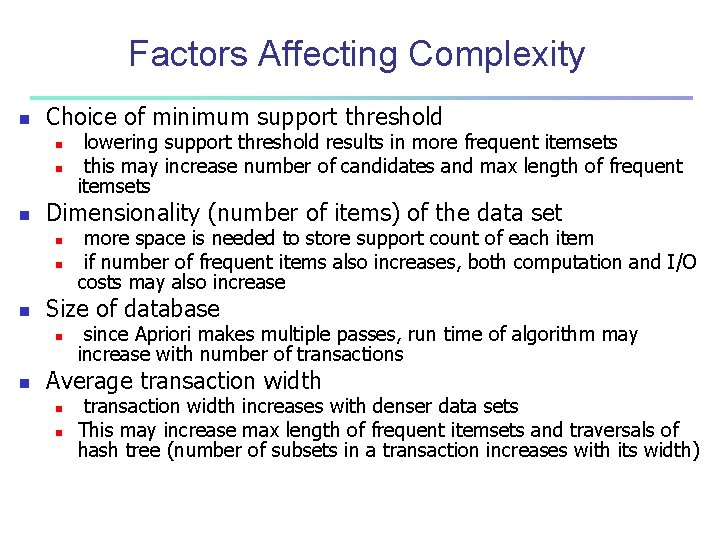

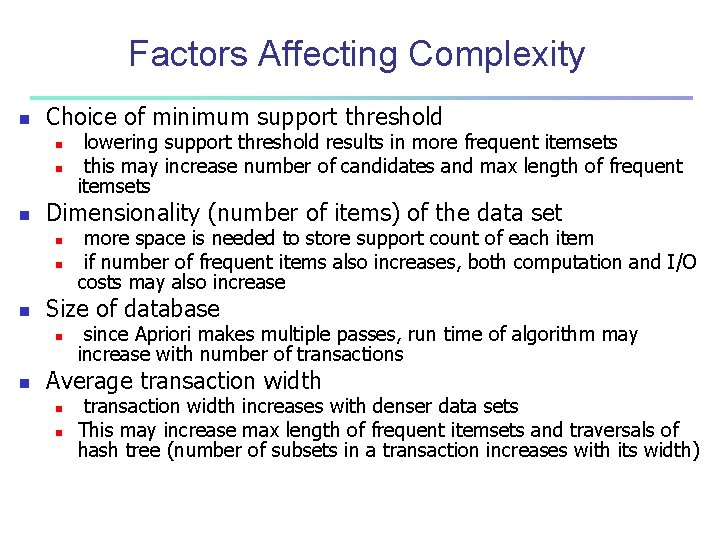

Factors Affecting Complexity

- Choice of minimum support threshold:
  - Lowering the support threshold results in more frequent itemsets.
  - This may increase the number of candidates and the max length of frequent itemsets.
- Dimensionality (number of items) of the data set:
  - More space is needed to store the support count of each item.
  - If the number of frequent items also increases, both computation and I/O costs may also increase.
- Size of database:
  - Since Apriori makes multiple passes, the run time of the algorithm may increase with the number of transactions.
- Average transaction width:
  - Transaction width increases with denser data sets.
  - This may increase the max length of frequent itemsets and the traversals of the hash tree (the number of subsets in a transaction increases with its width).

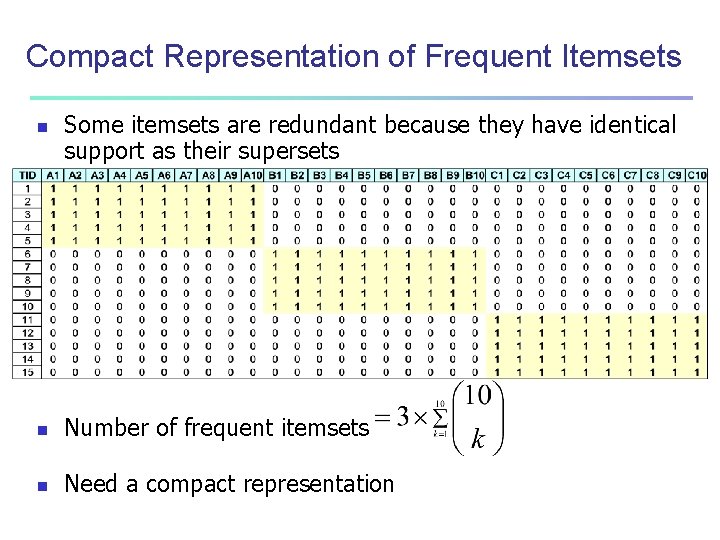

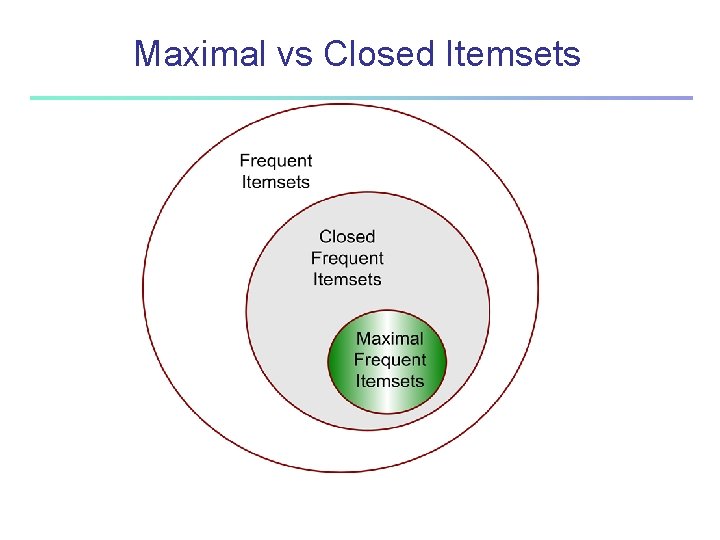

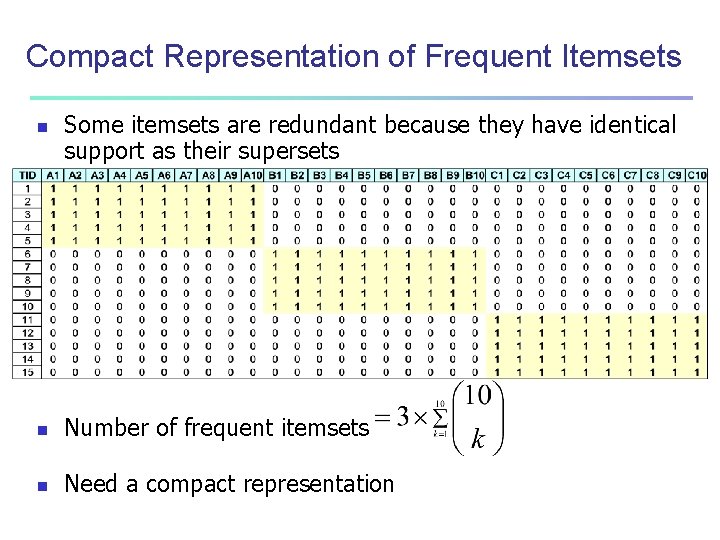

Compact Representation of Frequent Itemsets

- Some itemsets are redundant because they have identical support as their supersets.
- Number of frequent itemsets (formula shown in the figure).
- Need a compact representation.

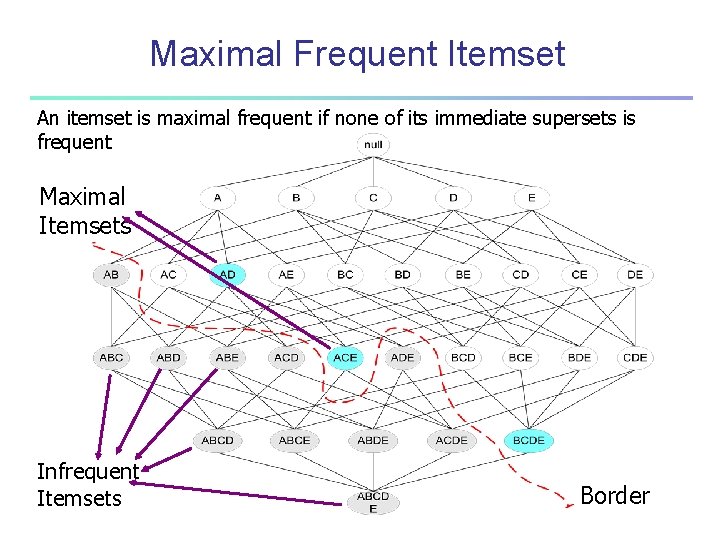

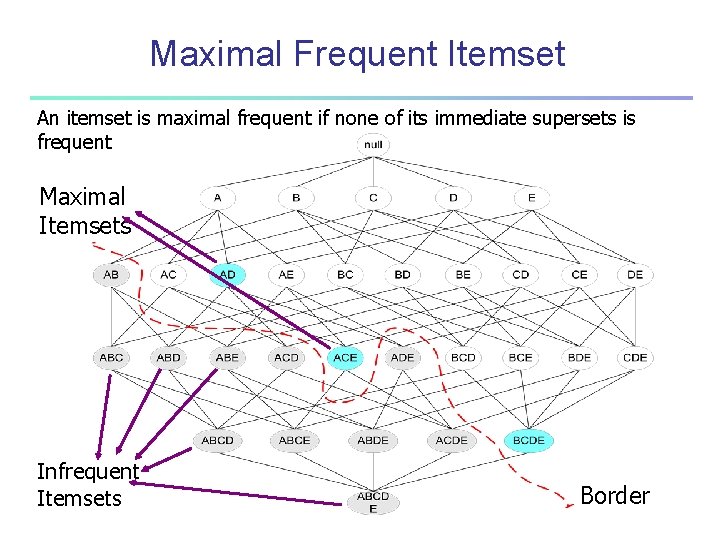

Maximal Frequent Itemset

An itemset is maximal frequent if none of its immediate supersets is frequent.

Figure labels: maximal itemsets; infrequent itemsets; border.

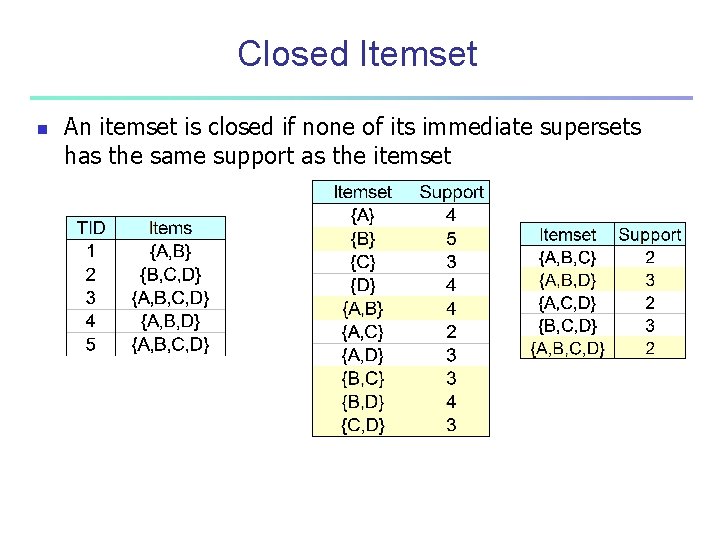

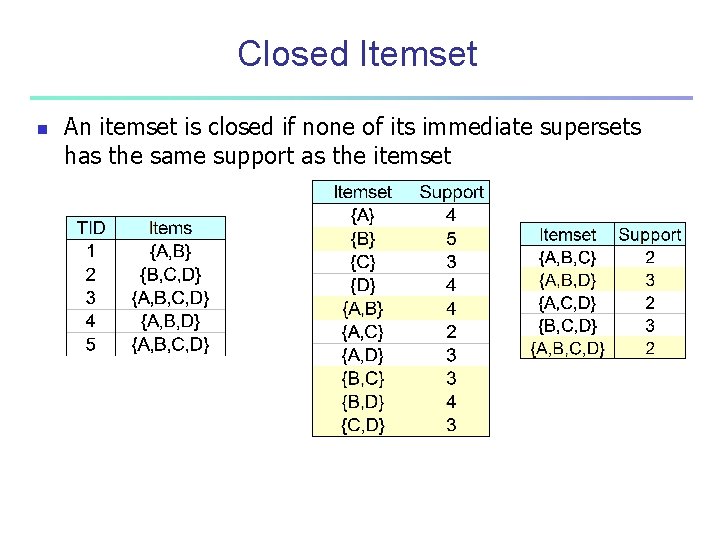

Closed Itemset

- An itemset is closed if none of its immediate supersets has the same support as the itemset.
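Both definitions can be tested directly against a small database. The sketch below reuses the four-transaction TDB from the Apriori example with a minimum support count of 2 (a choice made here for illustration; the slides use a different data set in their figures) and enumerates all itemsets by brute force.

```python
from itertools import combinations

transactions = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
MIN_COUNT = 2
items = sorted(set().union(*transactions))

def count(itemset):
    return sum(1 for t in transactions if itemset <= t)

# Support count of every non-empty itemset (fine for 5 items, 31 itemsets).
all_itemsets = [frozenset(c) for k in range(1, len(items) + 1)
                for c in combinations(items, k)]
support = {s: count(s) for s in all_itemsets}
frequent = {s for s in all_itemsets if support[s] >= MIN_COUNT}

def immediate_supersets(s):
    return [s | {i} for i in items if i not in s]

# Closed: no immediate superset has the same support.
closed = {s for s in frequent
          if all(support[sup] < support[s] for sup in immediate_supersets(s))}
# Maximal: no immediate superset is frequent.
maximal = {s for s in frequent
           if all(support[sup] < MIN_COUNT for sup in immediate_supersets(s))}

print("closed: ", sorted("".join(sorted(s)) for s in closed))
print("maximal:", sorted("".join(sorted(s)) for s in maximal))
```

Every maximal frequent itemset is also closed, so `maximal` is a subset of `closed`.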

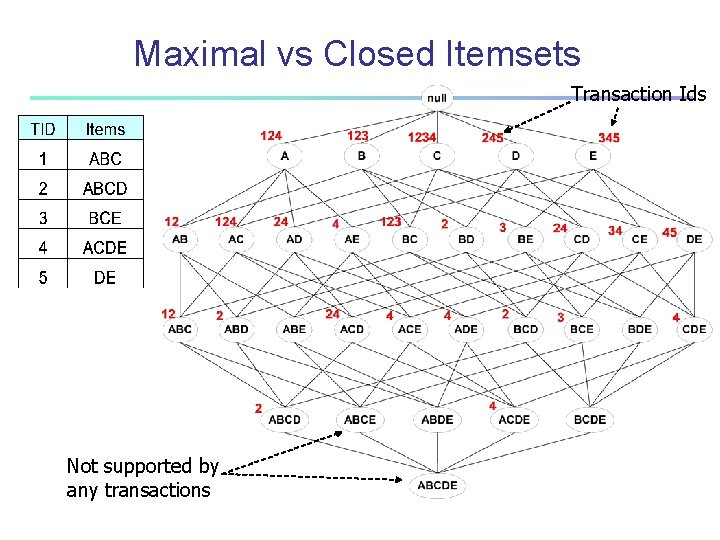

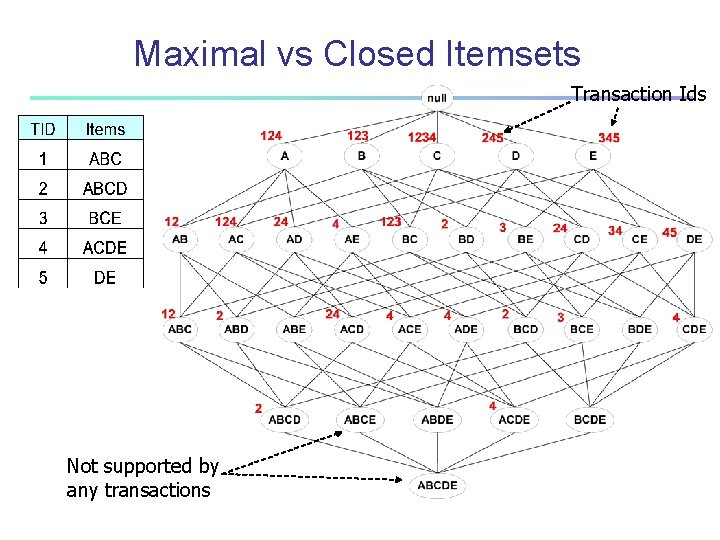

Maximal vs Closed Itemsets

Figure labels: transaction IDs; itemsets not supported by any transactions.

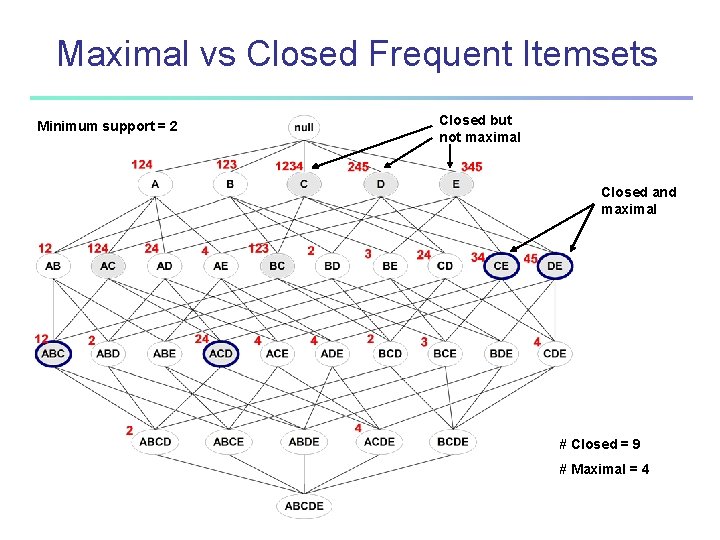

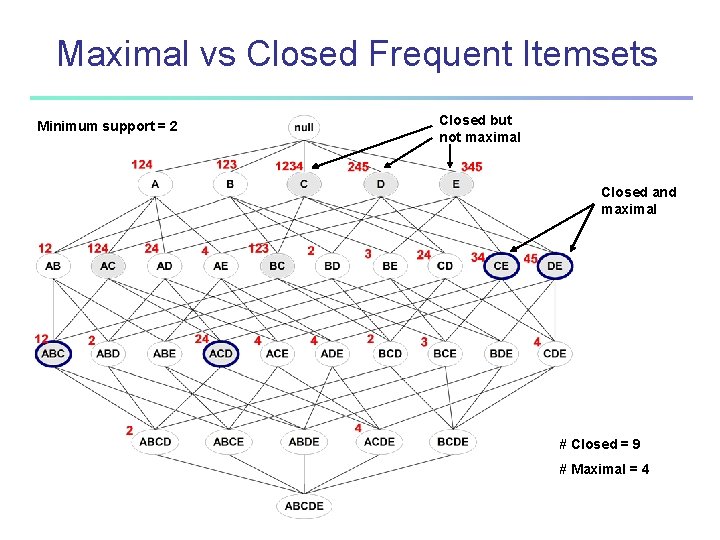

Maximal vs Closed Frequent Itemsets (minimum support = 2)

- Figure labels: closed but not maximal; closed and maximal.
- # Closed = 9
- # Maximal = 4

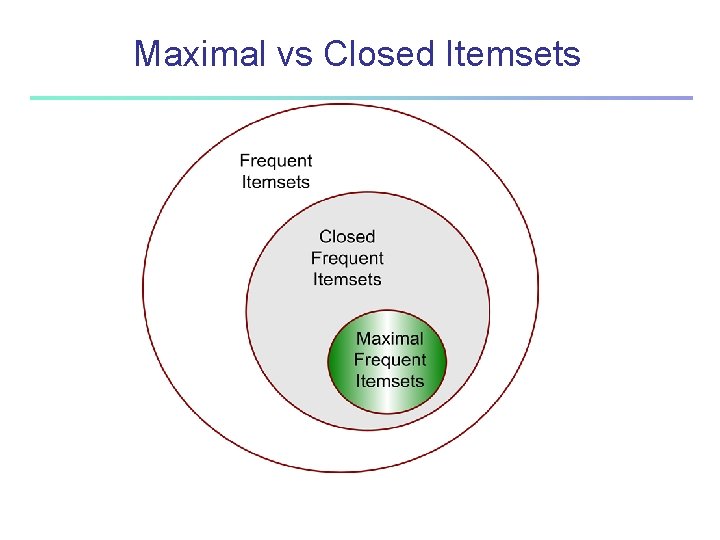

Maximal vs Closed Itemsets

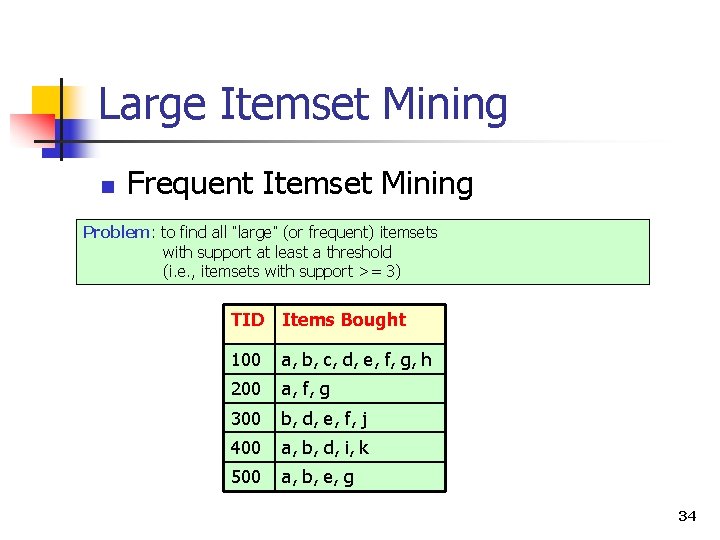

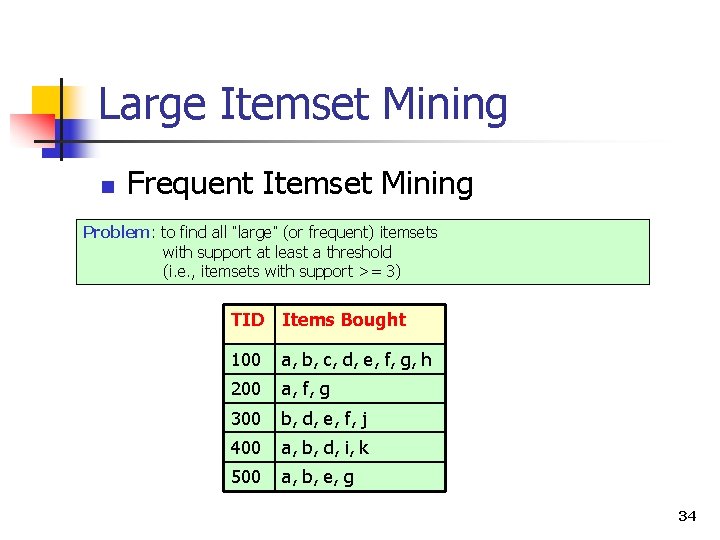

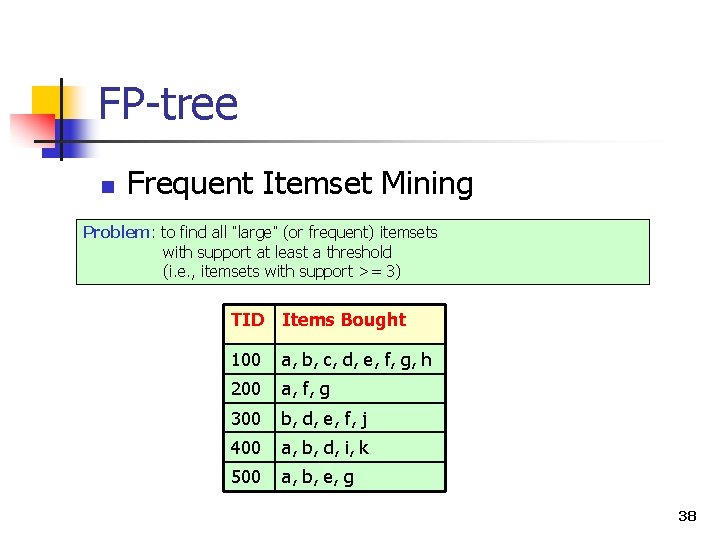

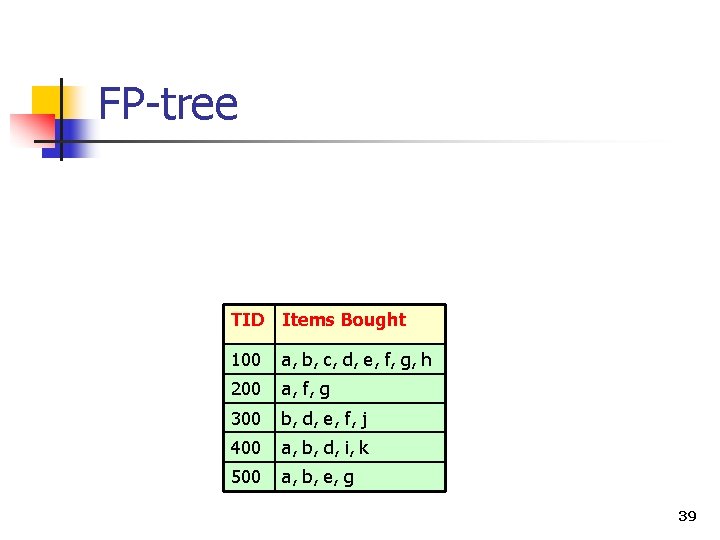

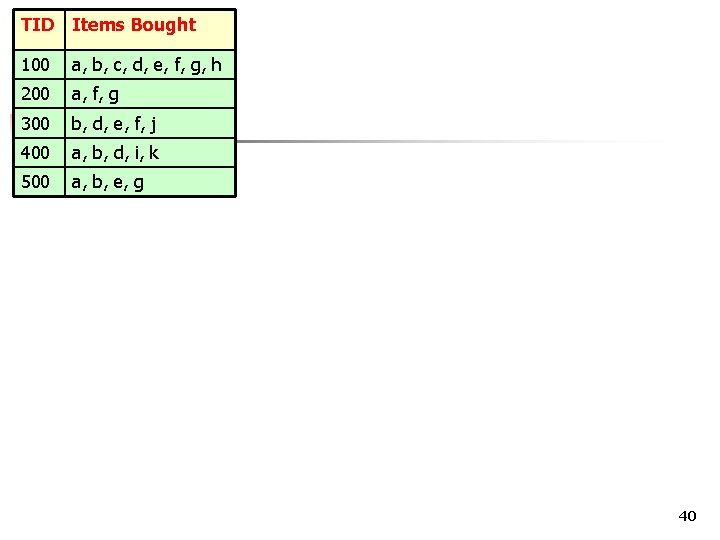

Large Itemset Mining

- Frequent itemset mining problem: find all "large" (or frequent) itemsets with support at least a threshold (i.e., itemsets with support >= 3).

| TID | Items Bought |
|---|---|
| 100 | a, b, c, d, e, f, g, h |
| 200 | a, f, g |
| 300 | b, d, e, f, j |
| 400 | a, b, d, i, k |
| 500 | a, b, e, g |

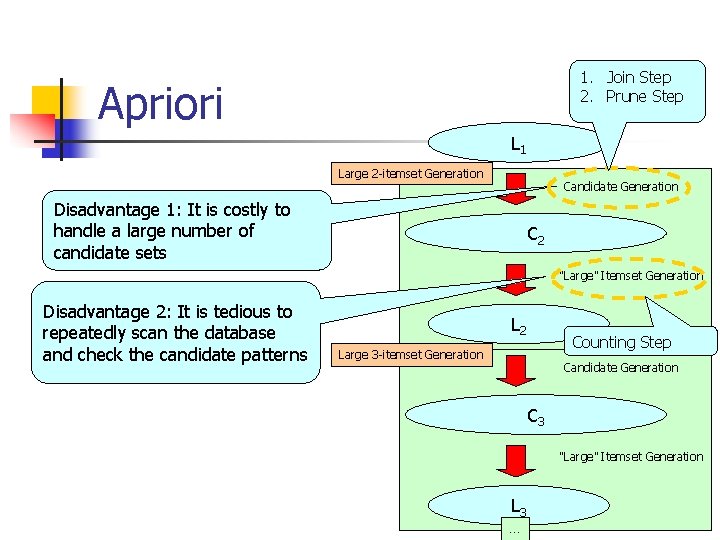

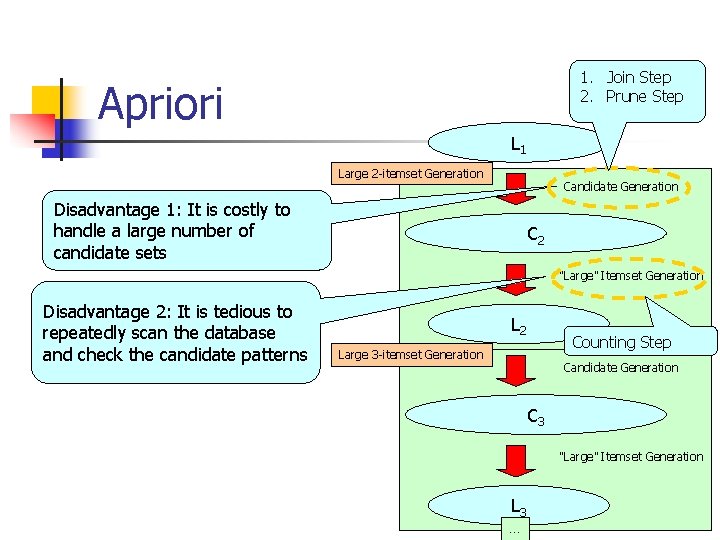

Apriori

- $L_1$ → large 2-itemset generation: candidate generation (1. join step, 2. prune step) → $C_2$ → "large" itemset generation (counting step) → $L_2$
- $L_2$ → large 3-itemset generation: candidate generation → $C_3$ → "large" itemset generation → $L_3$ → …
- Disadvantage 1: It is costly to handle a large number of candidate sets.
- Disadvantage 2: It is tedious to repeatedly scan the database and check the candidate patterns.

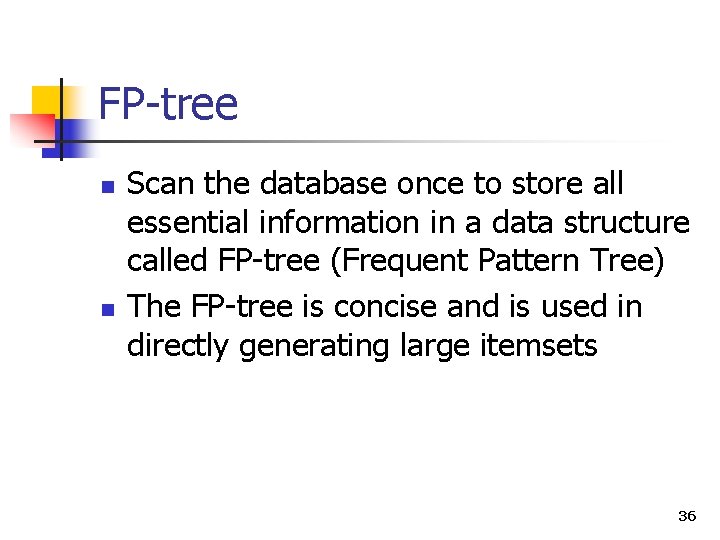

FP-tree

- Scan the database once to store all essential information in a data structure called FP-tree (Frequent Pattern Tree).
- The FP-tree is concise and is used in directly generating large itemsets.

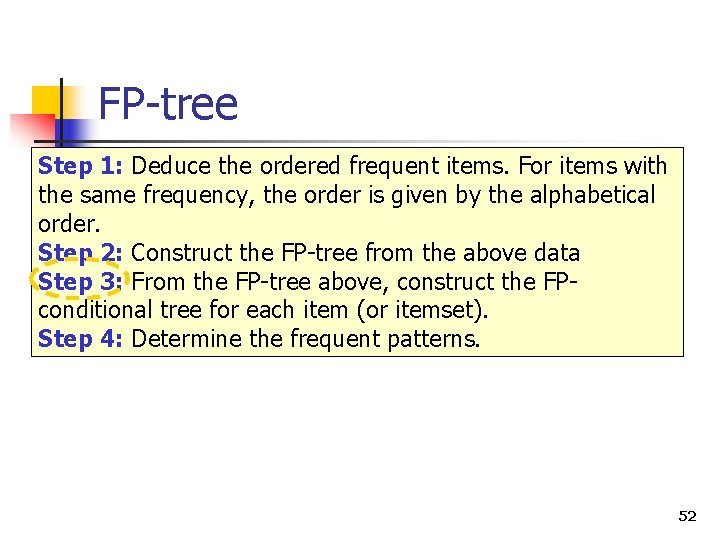

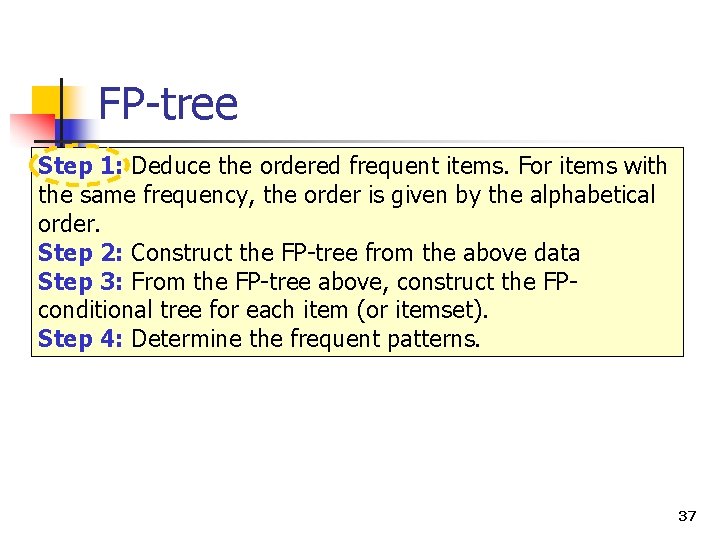

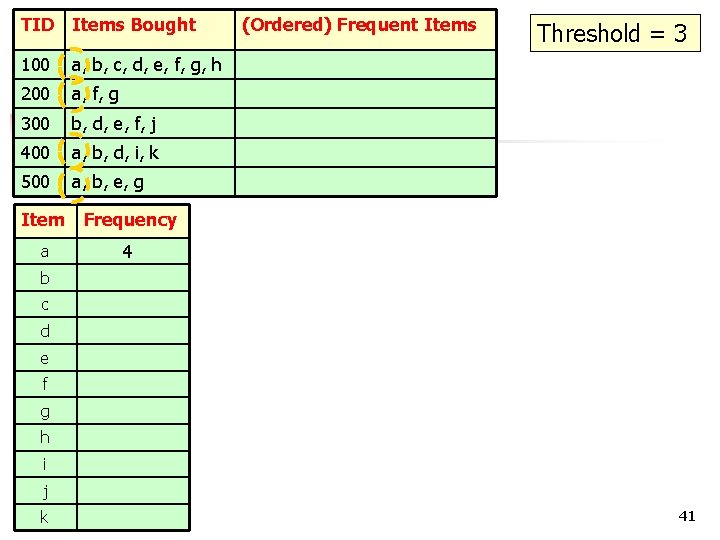

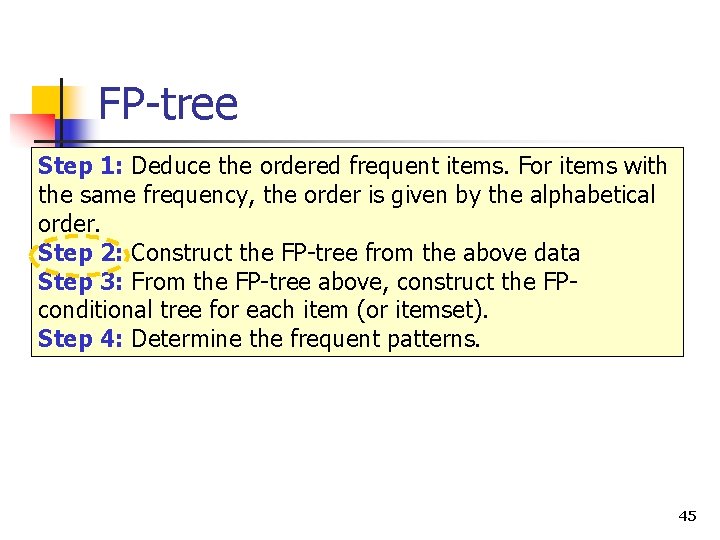

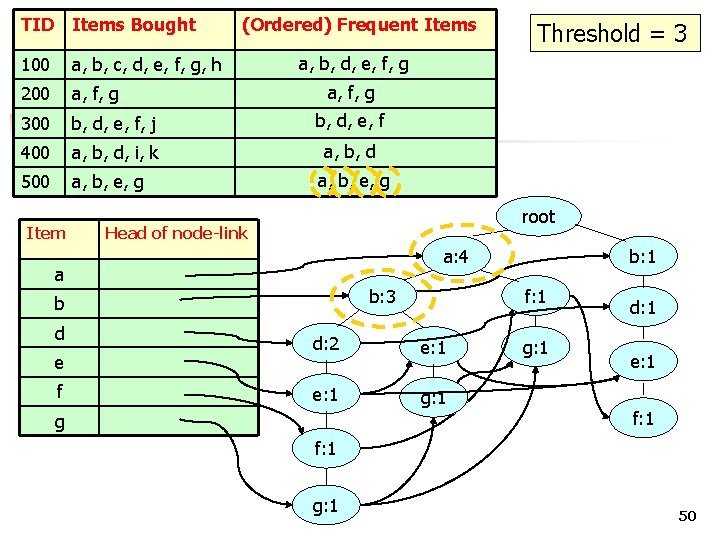

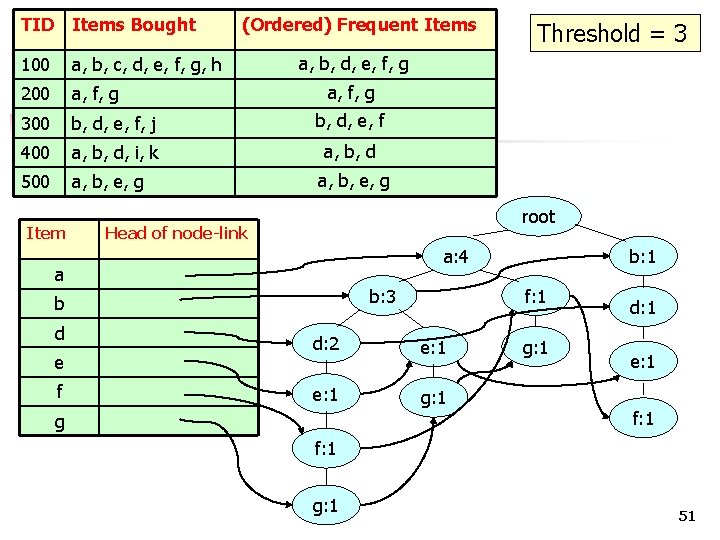

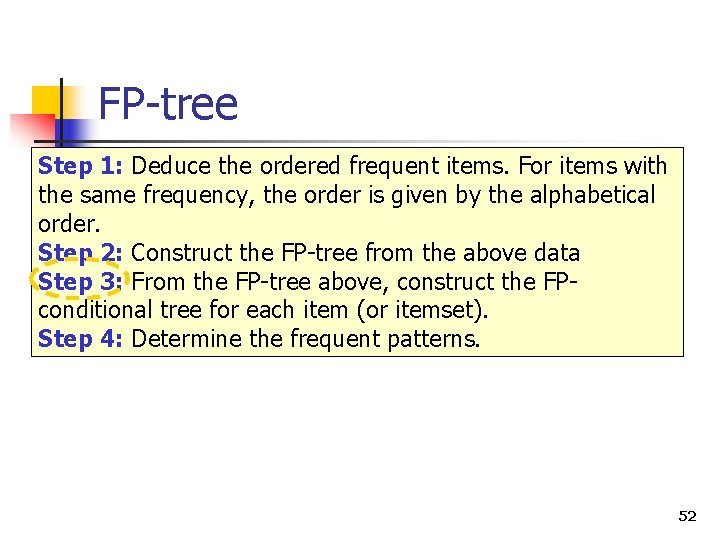

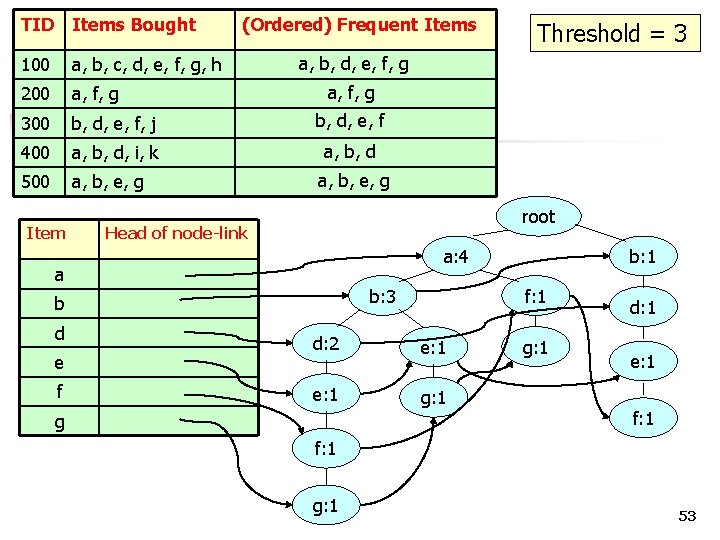

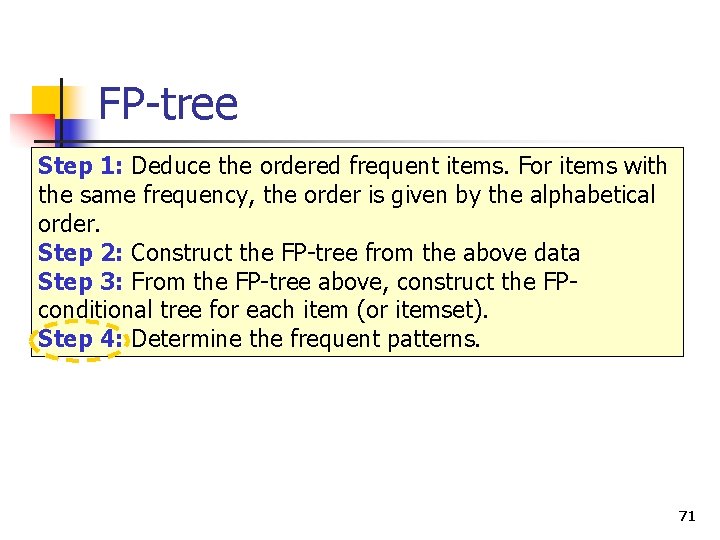

FP-tree

- Step 1: Deduce the ordered frequent items. For items with the same frequency, the order is given by the alphabetical order.
- Step 2: Construct the FP-tree from the above data.
- Step 3: From the FP-tree above, construct the FP-conditional tree for each item (or itemset).
- Step 4: Determine the frequent patterns.

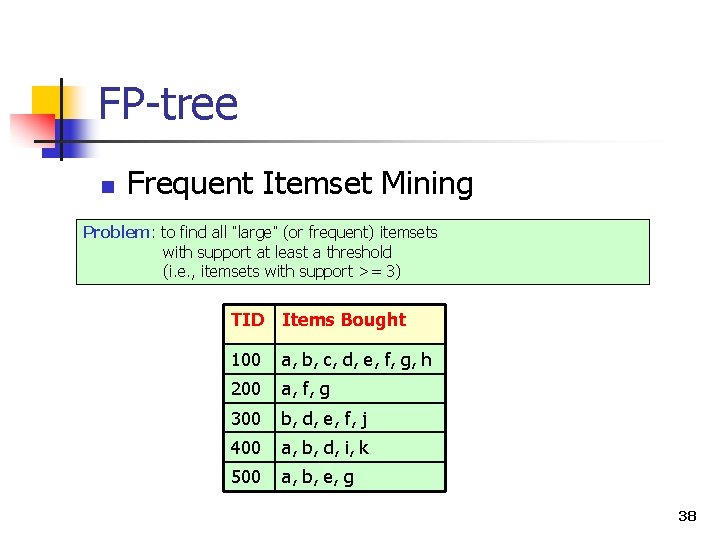


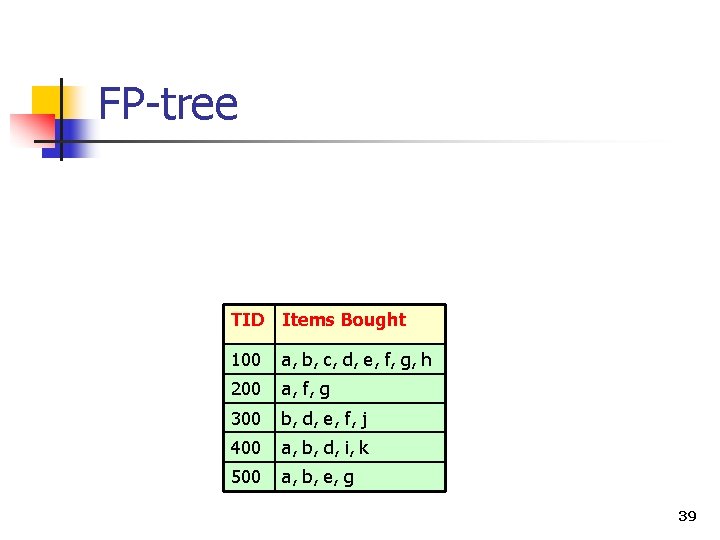


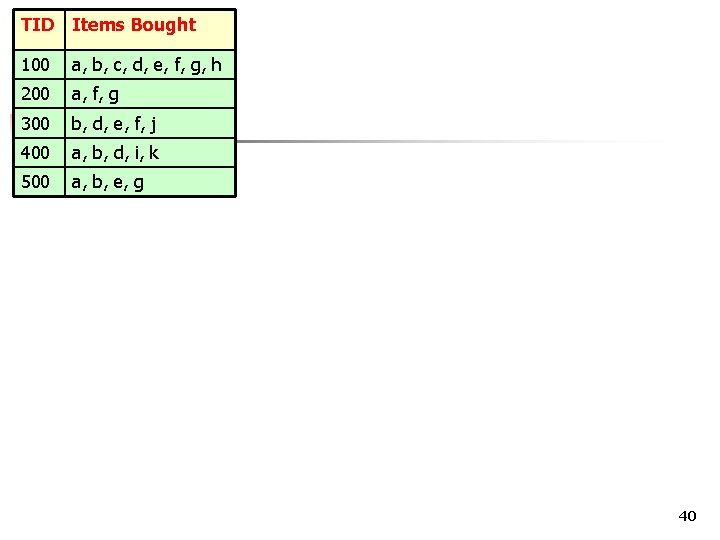


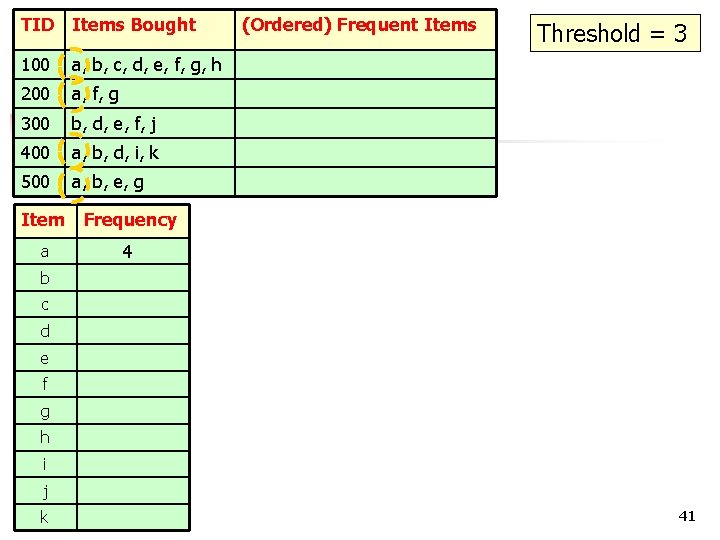

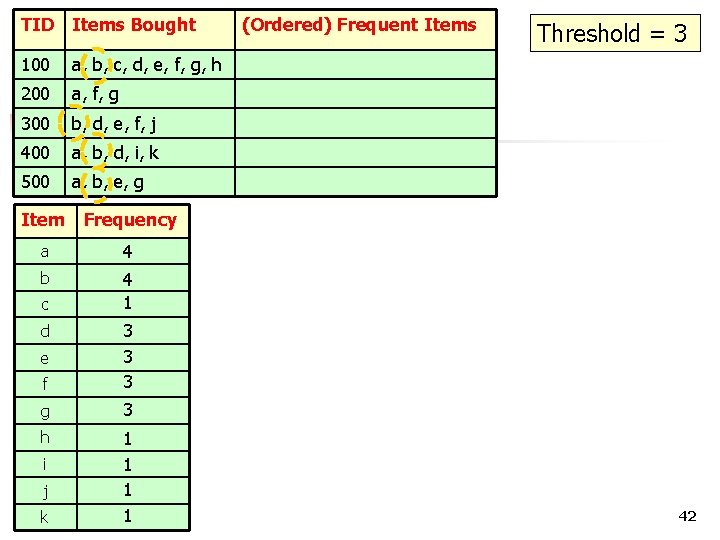

TID Items Bought 100 a, b, c, d, e, f, g, h 200 a, f, g 300 b, d, e, f, j 400 a, b, d, i, k 500 a, b, e, g Item Frequency a 4 (Ordered) Frequent Items Threshold = 3 b c d e f g h i j k COMP 5331 41

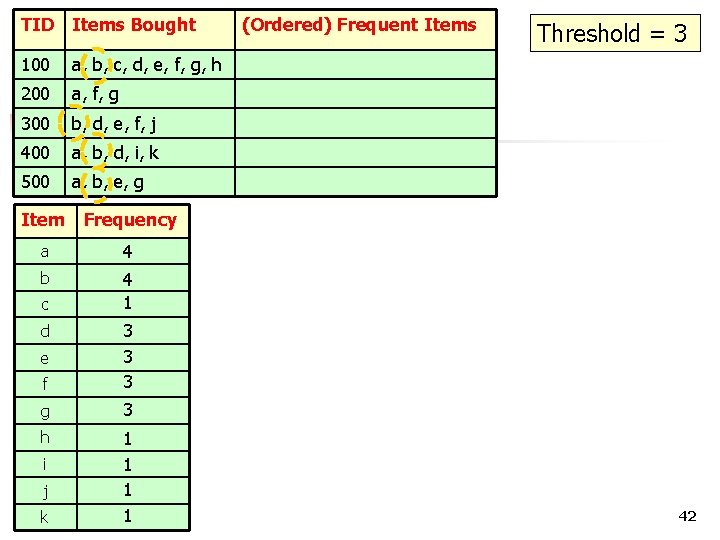

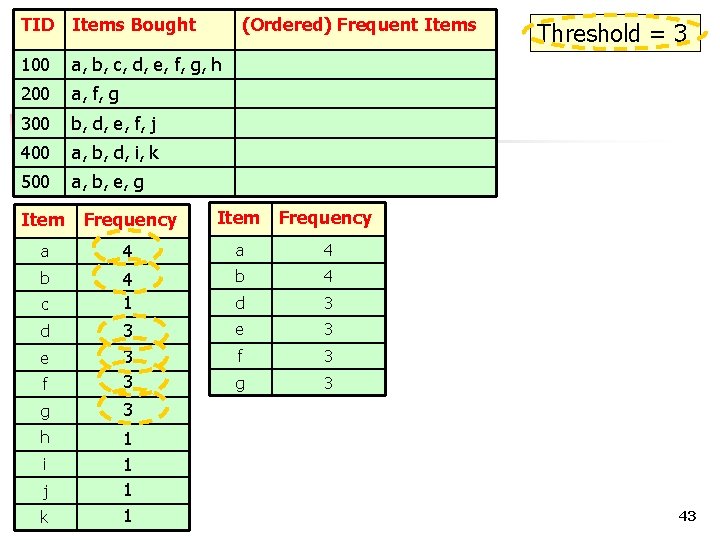

TID Items Bought 100 a, b, c, d, e, f, g, h 200 a, f, g 300 b, d, e, f, j 400 a, b, d, i, k 500 a, b, e, g Item Frequency a 4 b 4 1 c f 3 3 3 g 3 d e h i j k 1 1 1 COMP 5331 1 (Ordered) Frequent Items Threshold = 3 42

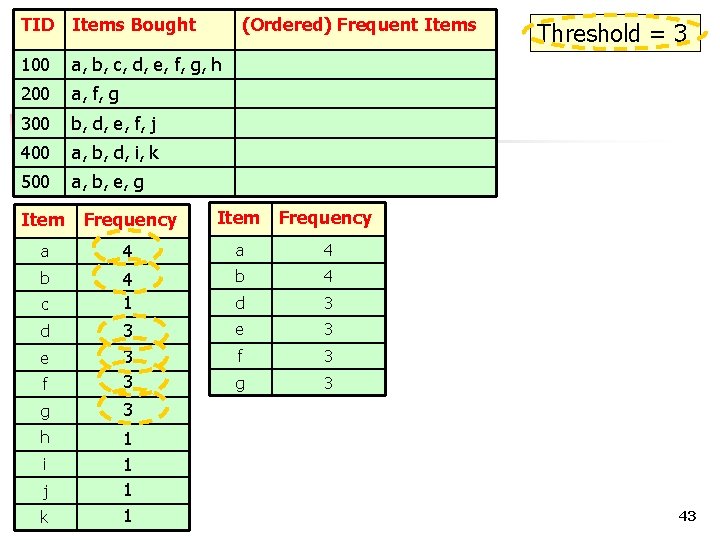

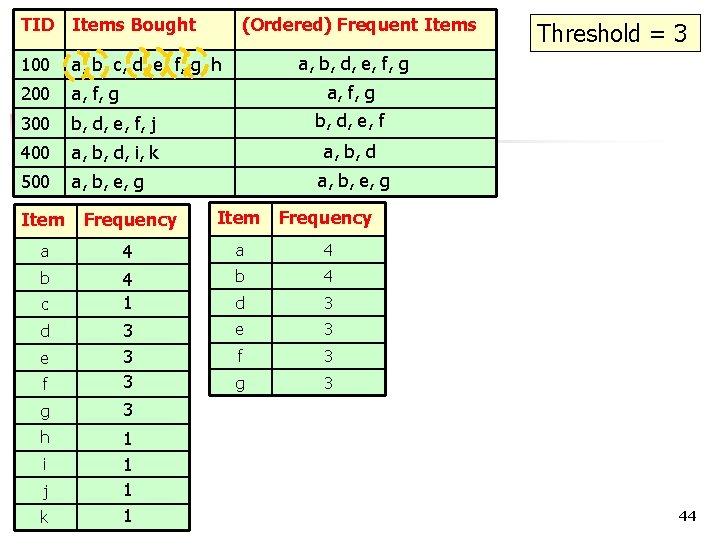

TID Items Bought (Ordered) Frequent Items 100 a, b, c, d, e, f, g, h 200 a, f, g 300 b, d, e, f, j 400 a, b, d, i, k 500 a, b, e, g Item Frequency a 4 b 4 1 b 4 d 3 e 3 f 3 3 3 g 3 c d e h i j k 1 1 1 COMP 5331 1 Threshold = 3 43

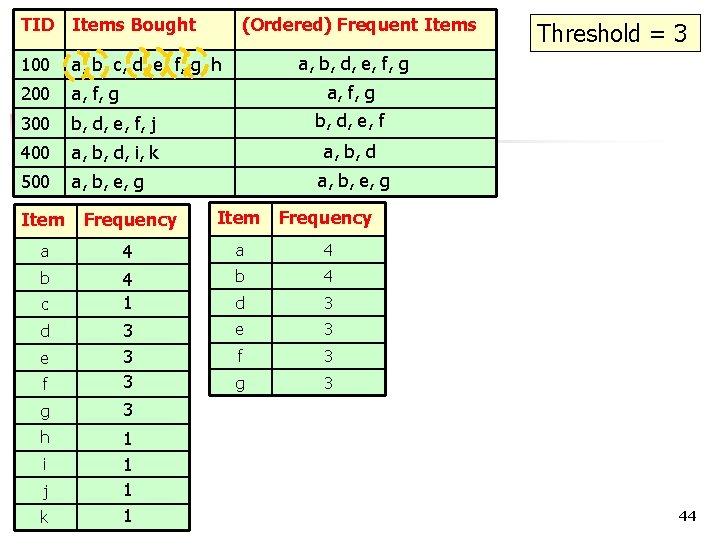

TID Items Bought (Ordered) Frequent Items 100 a, b, c, d, e, f, g, h 200 a, f, g 300 b, d, e, f, j b, d, e, f 400 a, b, d, i, k a, b, d 500 a, b, e, g a, b, d, e, f, g a, b, e, g Item Frequency a 4 b 4 1 b 4 d 3 e 3 f 3 3 3 g 3 c d e h i j k Threshold = 3 1 1 1 COMP 5331 1 44
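Step 1 can be sketched in a few lines: count item frequencies, keep the items that reach the threshold, and sort each transaction by descending frequency with alphabetical order as the tie-breaker. The variable names are illustrative.

```python
from collections import Counter

transactions = {
    100: list("abcdefgh"),
    200: list("afg"),
    300: list("bdefj"),
    400: list("abdik"),
    500: list("abeg"),
}
THRESHOLD = 3

# First scan: frequency of every item.
freq = Counter(item for items in transactions.values() for item in items)
frequent = {item: n for item, n in freq.items() if n >= THRESHOLD}

def order_key(item):
    # Higher frequency first; alphabetical order for equal frequencies.
    return (-frequent[item], item)

ordered = {
    tid: sorted((i for i in items if i in frequent), key=order_key)
    for tid, items in transactions.items()
}
for tid, items in ordered.items():
    print(tid, items)
```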


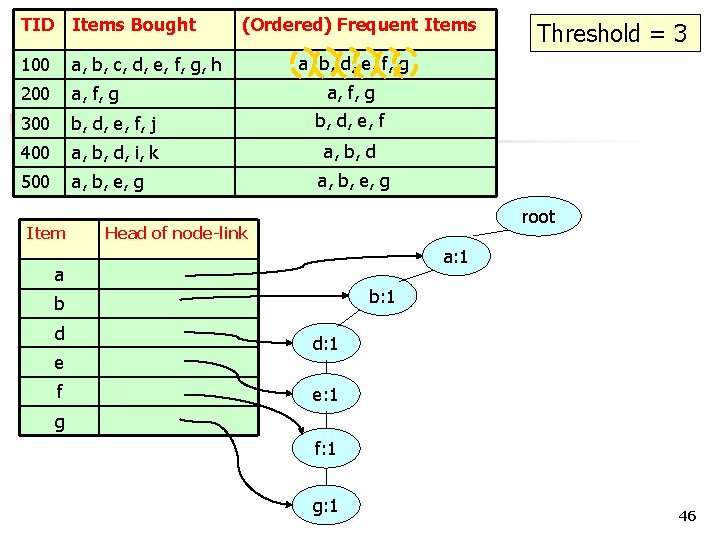

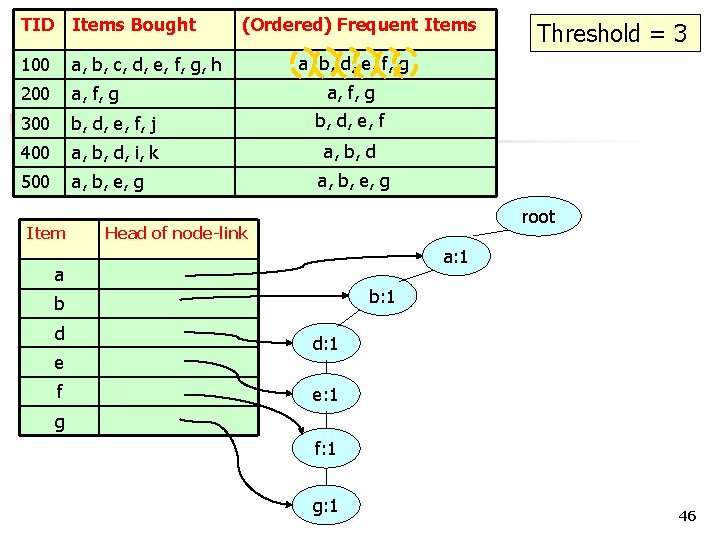

Step 2 (threshold = 3), after inserting TID 100 with ordered frequent items a, b, d, e, f, g: root → a: 1 → b: 1 → d: 1 → e: 1 → f: 1 → g: 1. The header table lists the items a, b, d, e, f, g, each with a head of node-link pointing into the tree.

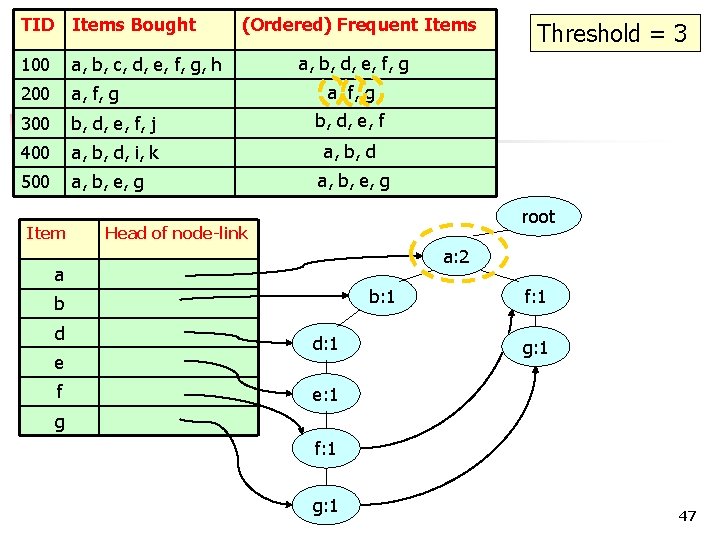

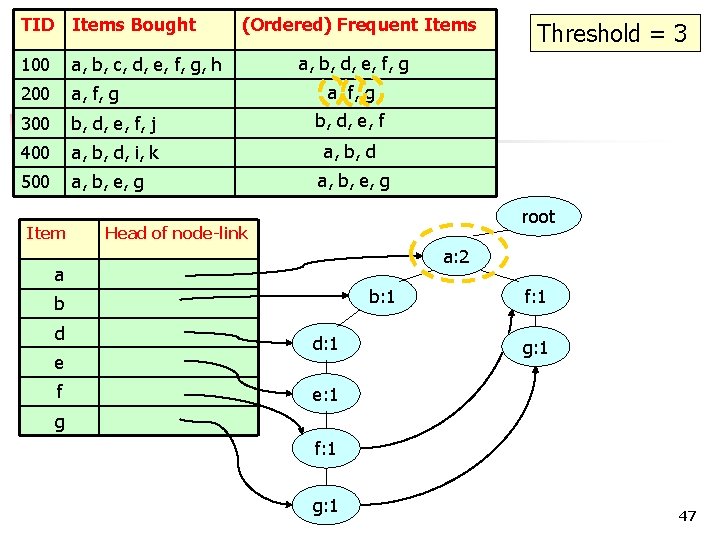

Step 2, after inserting TID 200 with ordered frequent items a, f, g: the count of a increases from 1 to 2, and a new branch a → f: 1 → g: 1 is created under a.

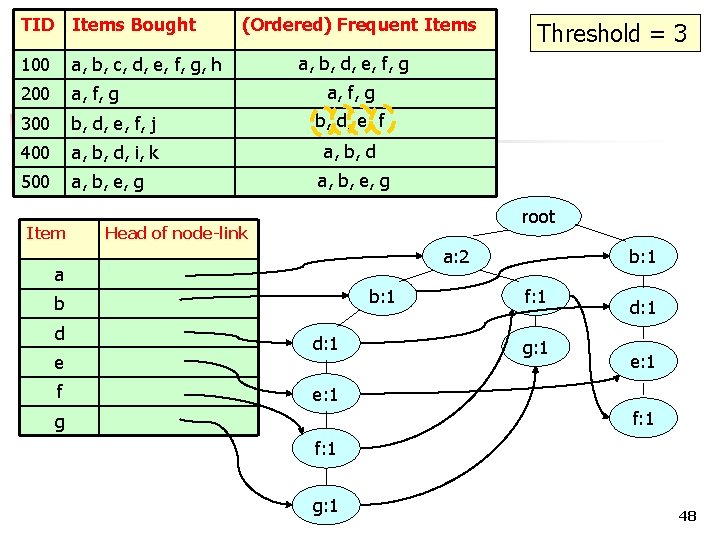

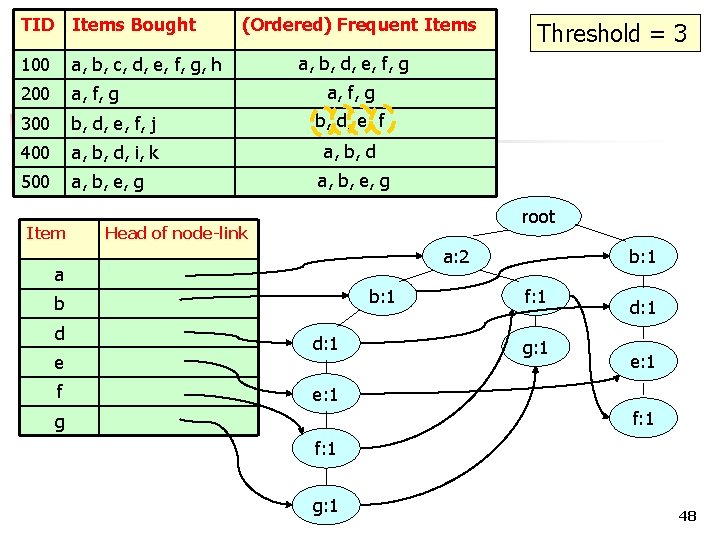

Step 2, after inserting TID 300 with ordered frequent items b, d, e, f: a new branch root → b: 1 → d: 1 → e: 1 → f: 1 is created directly under the root; the a: 2 branch is unchanged.

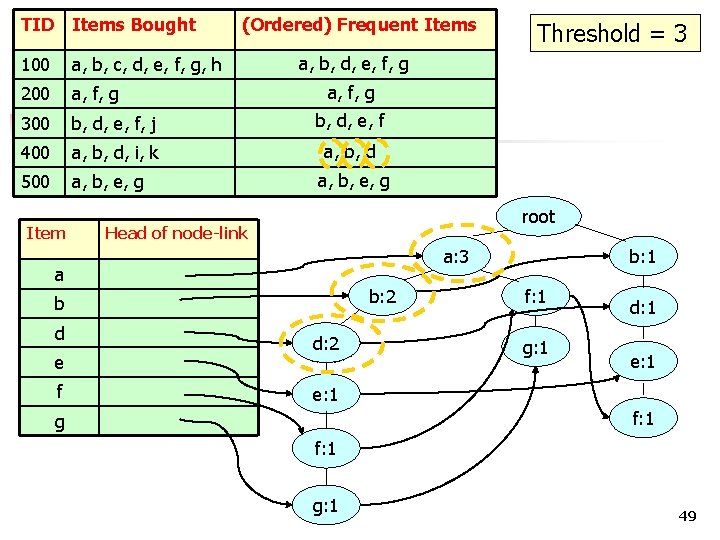

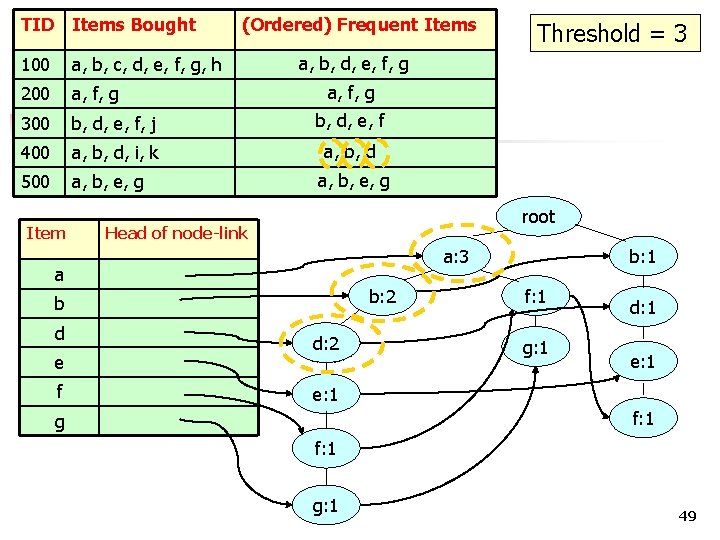

Step 2, after inserting TID 400 with ordered frequent items a, b, d: along the existing path the counts increase to a: 3 (from 2), b: 2 (from 1), and d: 2 (from 1).

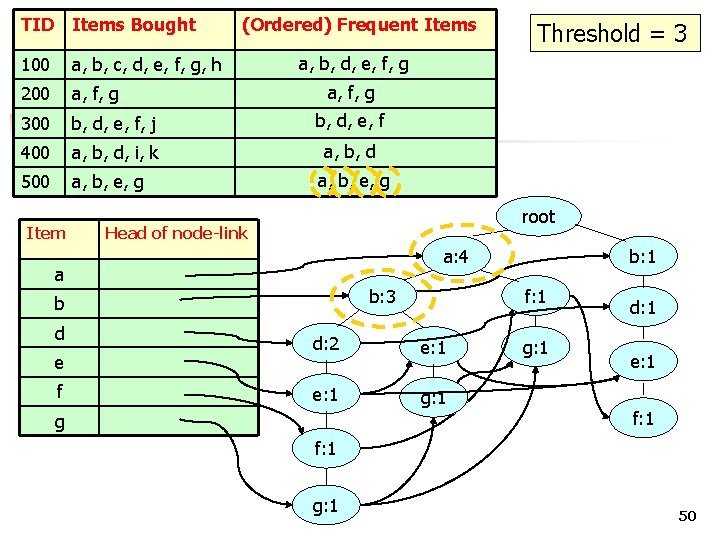

Step 2, after inserting TID 500 with ordered frequent items a, b, e, g: the counts increase to a: 4 (from 3) and b: 3 (from 2), and a new branch b → e: 1 → g: 1 is created under the b node of the a branch.

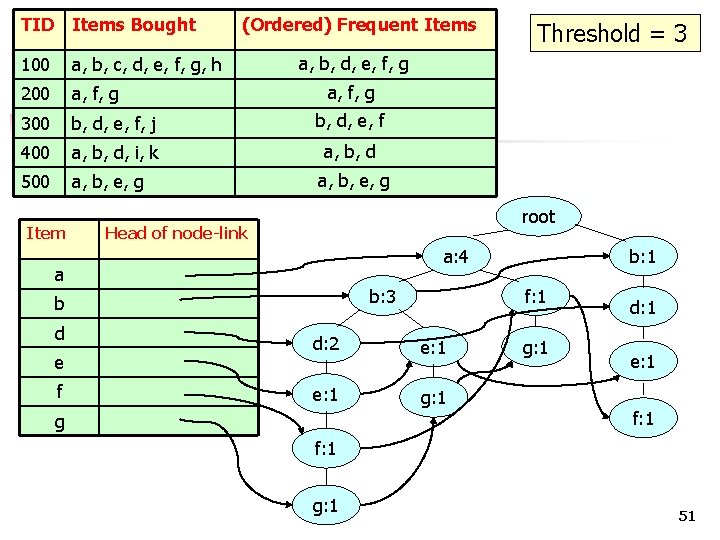

Step 2 result (all five transactions inserted, threshold = 3). The header table lists the items a, b, d, e, f, g, each with a head of node-link into the tree:

- root
  - a: 4
    - b: 3
      - d: 2
        - e: 1
          - f: 1
            - g: 1
      - e: 1
        - g: 1
    - f: 1
      - g: 1
  - b: 1
    - d: 1
      - e: 1
        - f: 1
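The construction in Step 2 can be sketched as a prefix-tree insertion. This is a minimal illustration: `FPNode` and `build_fp_tree` are hypothetical names, and the node-links of the header table are represented by a plain list of nodes per item rather than a linked chain.

```python
class FPNode:
    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}   # item -> FPNode

def build_fp_tree(ordered_transactions):
    root = FPNode(None, None)
    header = {}   # item -> list of nodes carrying that item (stands in for node-links)
    for items in ordered_transactions:
        node = root
        for item in items:
            child = node.children.get(item)
            if child is None:                      # start a new branch
                child = FPNode(item, node)
                node.children[item] = child
                header.setdefault(item, []).append(child)
            child.count += 1                       # shared prefix: just bump the count
            node = child
    return root, header

# Ordered frequent items from Step 1 (threshold = 3).
ordered_transactions = [
    ["a", "b", "d", "e", "f", "g"],   # TID 100
    ["a", "f", "g"],                  # TID 200
    ["b", "d", "e", "f"],             # TID 300
    ["a", "b", "d"],                  # TID 400
    ["a", "b", "e", "g"],             # TID 500
]
root, header = build_fp_tree(ordered_transactions)

def show(node, indent=0):
    for child in node.children.values():
        print(" " * indent + f"{child.item}: {child.count}")
        show(child, indent + 2)

show(root)
```

Inserting a transaction touches one node per frequent item it contains, which is the per-transaction cost stated later in the complexity slide.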


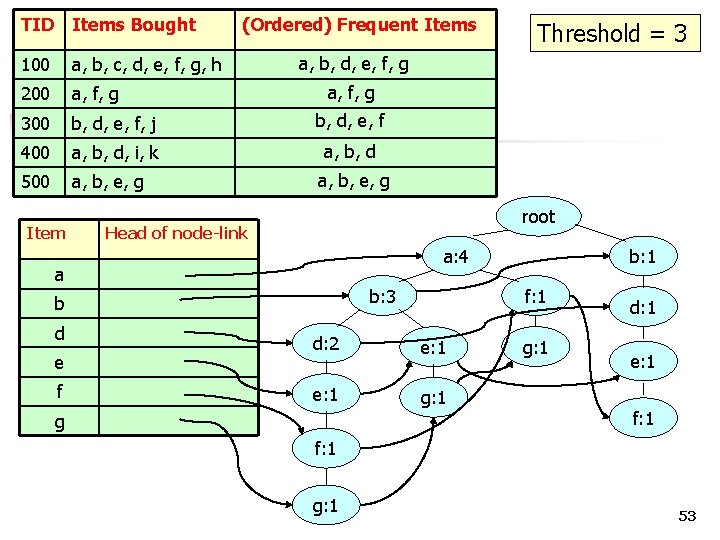


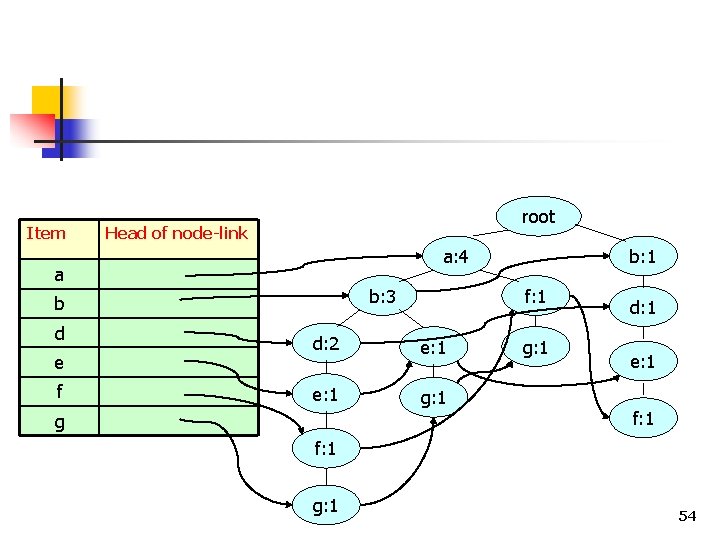

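FP-tree with its header table (items a, b, d, e, f, g, each with a head of node-link): root, with branches a: 4 → b: 3 → d: 2 → e: 1 → f: 1 → g: 1; b: 3 → e: 1 → g: 1; a: 4 → f: 1 → g: 1; and root → b: 1 → d: 1 → e: 1 → f: 1.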

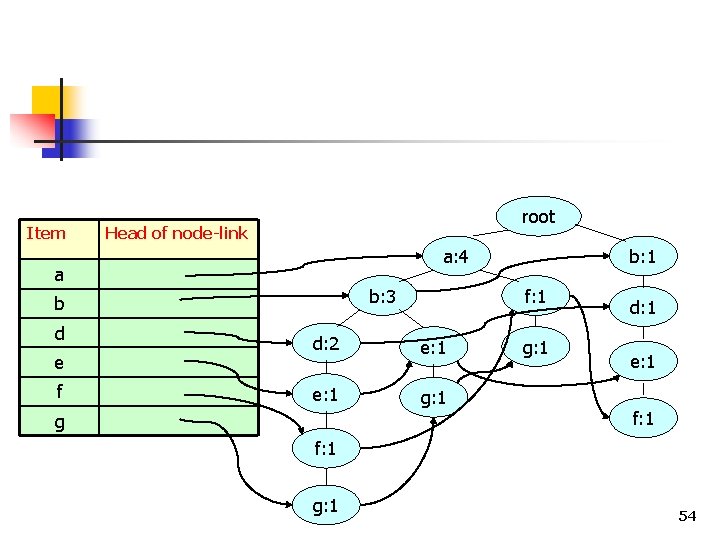

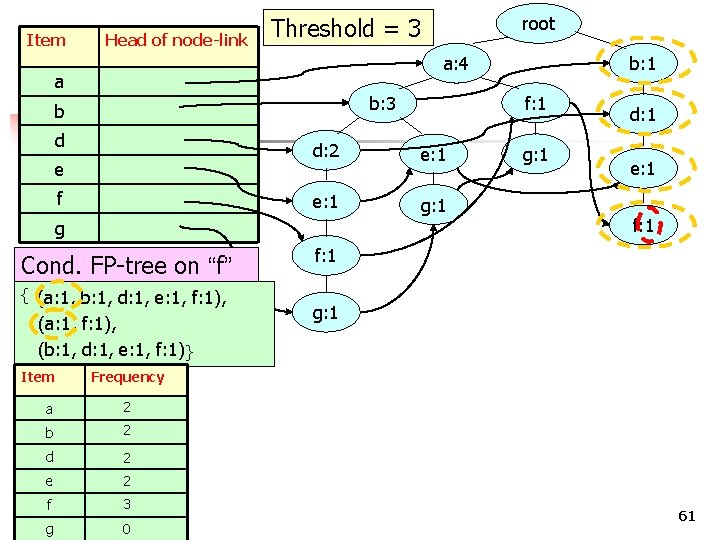

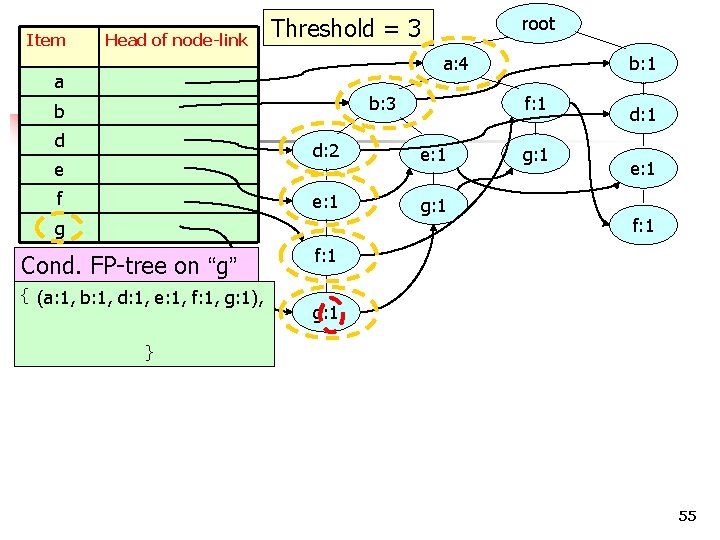

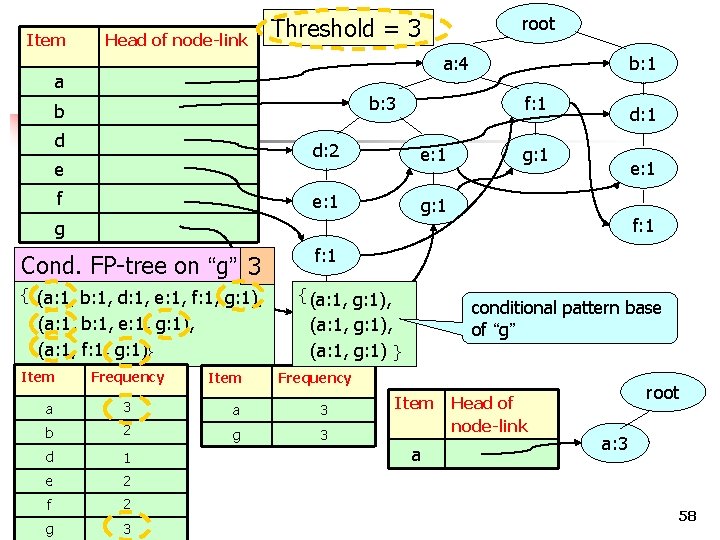

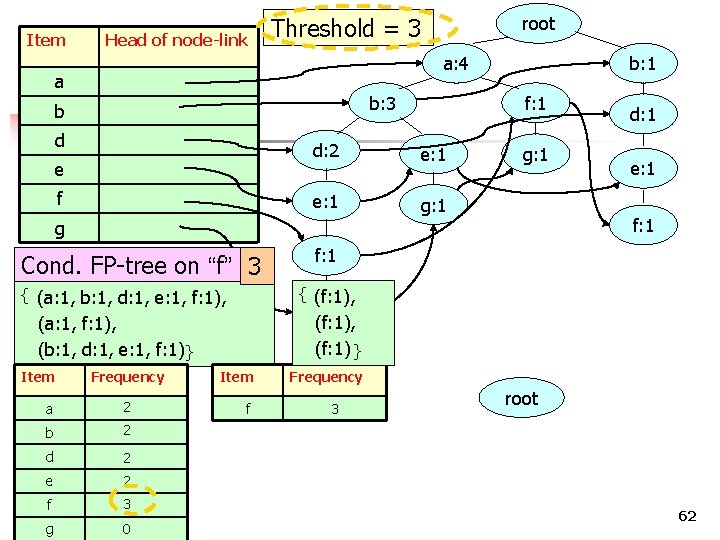

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f Cond. FP-tree on “g” { (a: 1, b: 1, d: 1, e: 1, f: 1, g: 1), f: 1 d: 2 e: 1 g: 1 g b: 1 g: 1 d: 1 e: 1 f: 1 g: 1 } 55

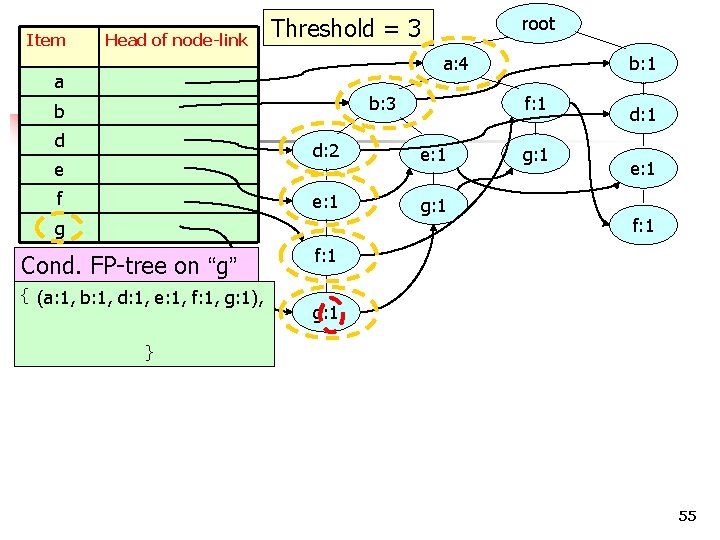

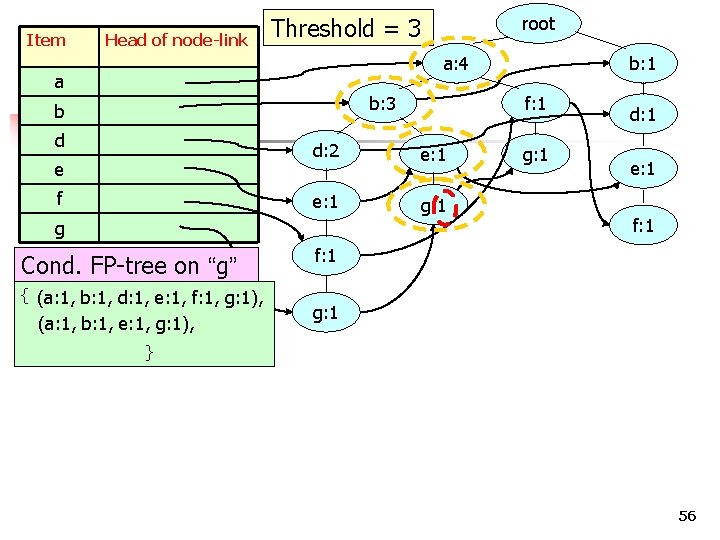

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f Cond. FP-tree on “g” { (a: 1, b: 1, d: 1, e: 1, f: 1, g: 1), (a: 1, b: 1, e: 1, g: 1), f: 1 d: 2 e: 1 g: 1 g b: 1 g: 1 d: 1 e: 1 f: 1 g: 1 } 56

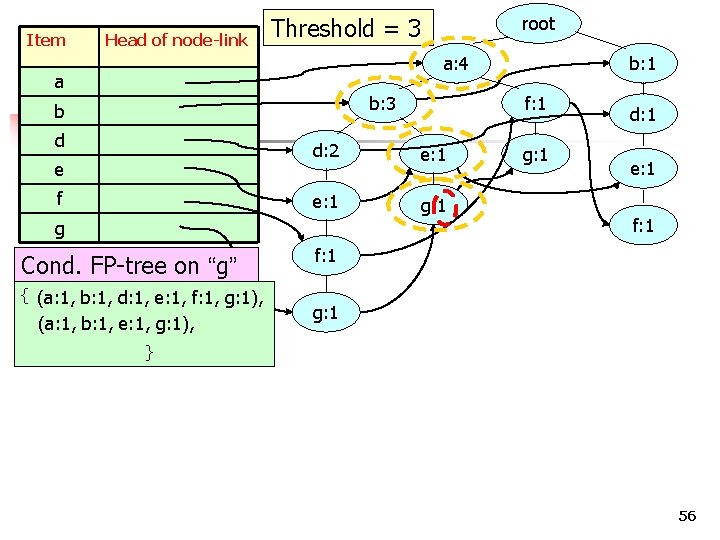

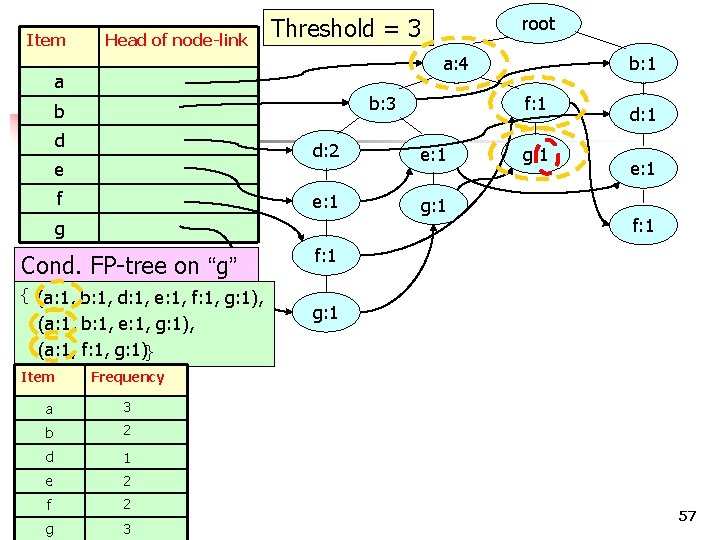

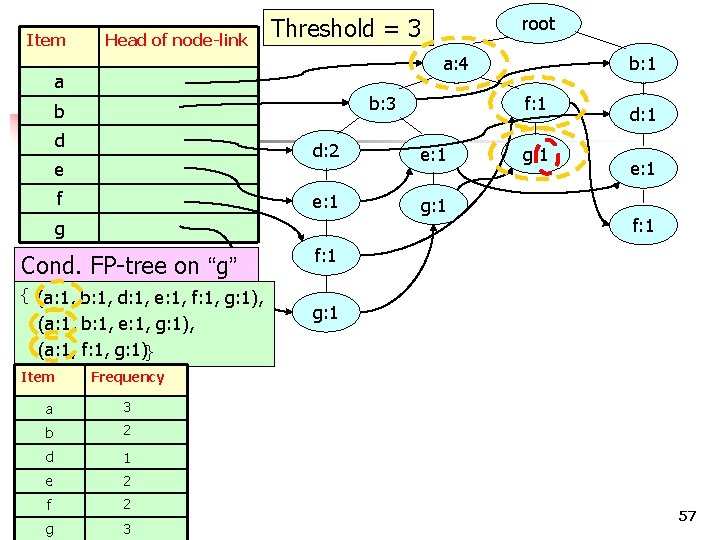

Item Head of node-link a: 4 a b: 3 b d e f Cond. FP-tree on “g” { (a: 1, b: 1, d: 1, e: 1, f: 1, g: 1), (a: 1, b: 1, e: 1, g: 1), (a: 1, f: 1, g: 1)} e: 1 g: 1 3 b 2 d 1 e 2 g g: 1 d: 1 e: 1 f: 1 g: 1 Frequency a f b: 1 f: 1 d: 2 g Item root Threshold = 3 2 COMP 5331 3 57

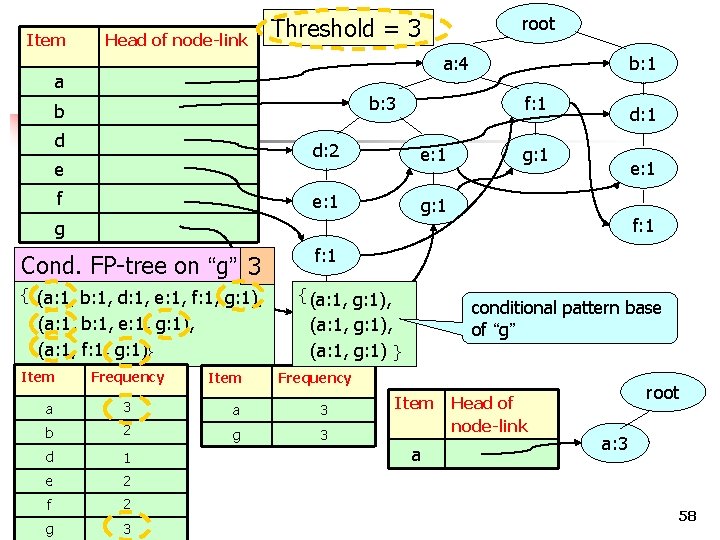

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f b: 1 f: 1 d: 2 e: 1 g: 1 d: 1 g: 1 e: 1 f: 1 g Cond. FP-tree on “g” 3 { (a: 1, b: 1, d: 1, e: 1, f: 1, g: 1), (a: 1, b: 1, e: 1, g: 1), (a: 1, f: 1, g: 1)} Item Frequency Item f: 1 { (a: 1, g: 1), Frequency a 3 b 2 g 3 d 1 e 2 f g 2 COMP 5331 3 conditional pattern base of “g” g: 1 (a: 1, g: 1), (a: 1, g: 1) } Item a Head of node-link root a: 3 58

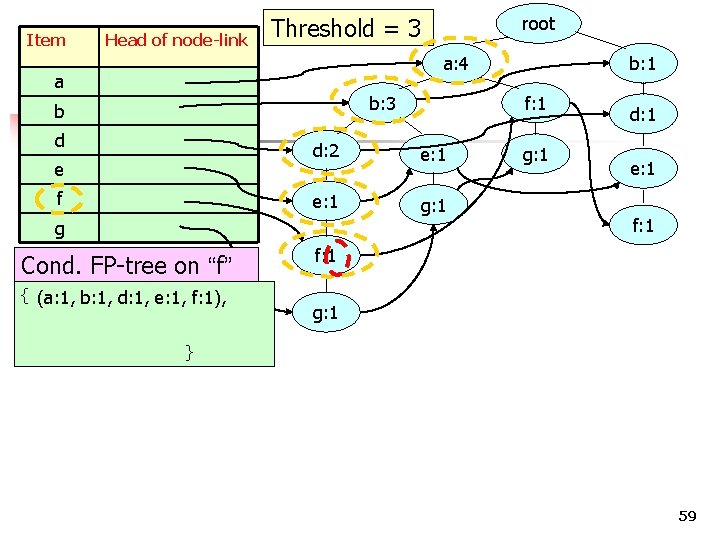

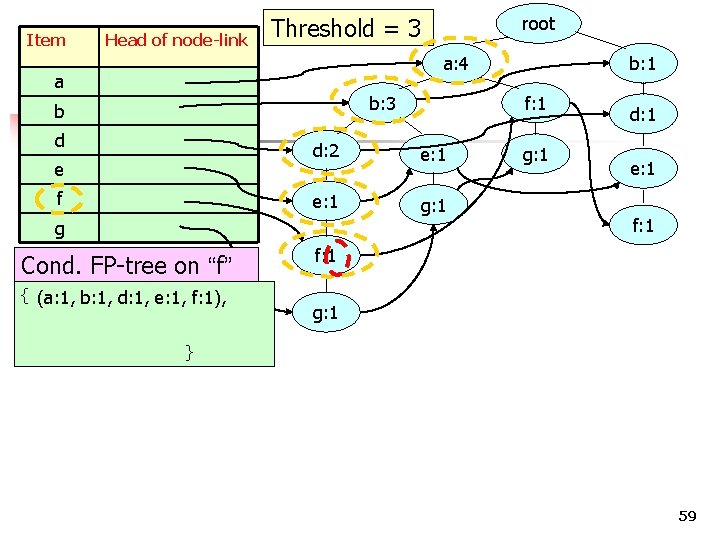

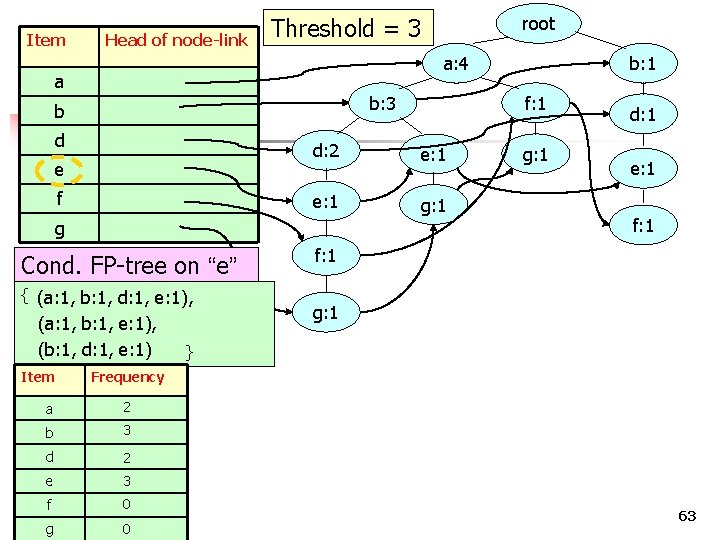

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f Cond. FP-tree on “f” { (a: 1, b: 1, d: 1, e: 1, f: 1), f: 1 d: 2 e: 1 g: 1 g b: 1 g: 1 d: 1 e: 1 f: 1 g: 1 } 59

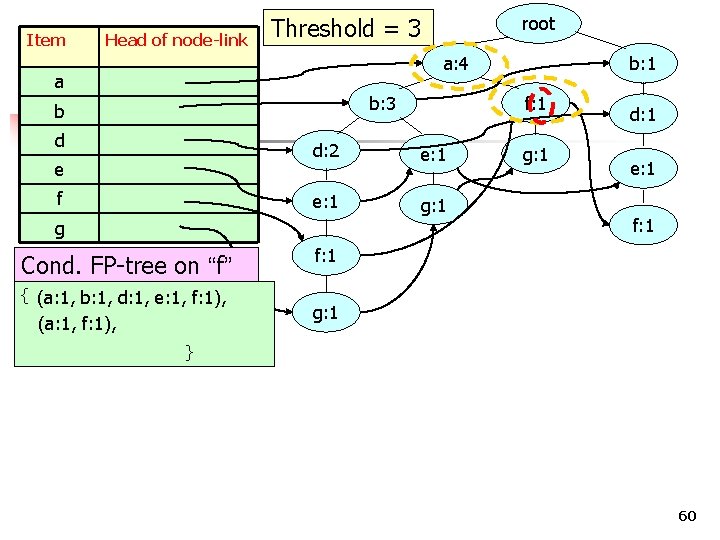

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f Cond. FP-tree on “f” { (a: 1, b: 1, d: 1, e: 1, f: 1), (a: 1, f: 1), f: 1 d: 2 e: 1 g: 1 g b: 1 g: 1 d: 1 e: 1 f: 1 g: 1 } 60

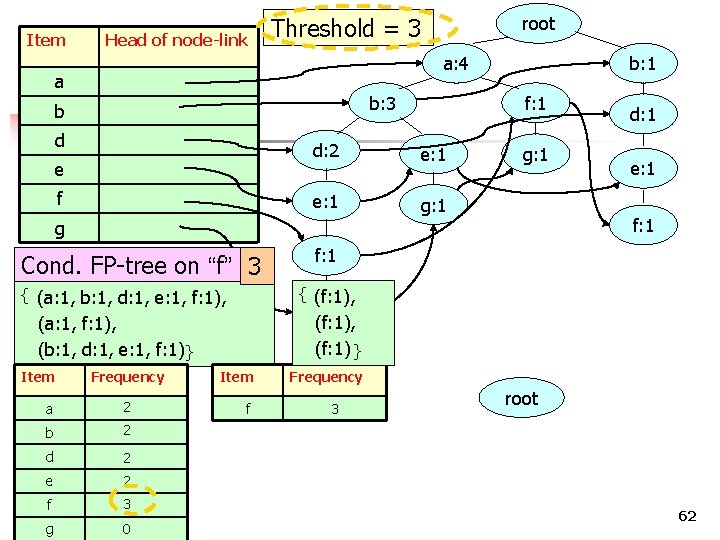

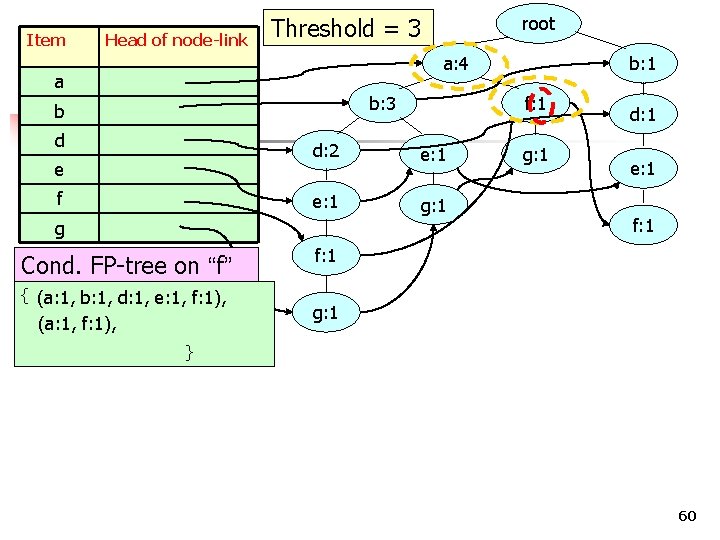

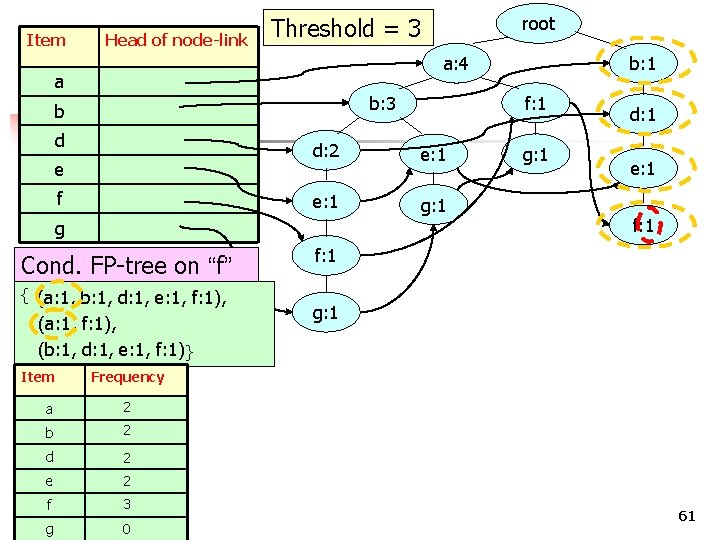

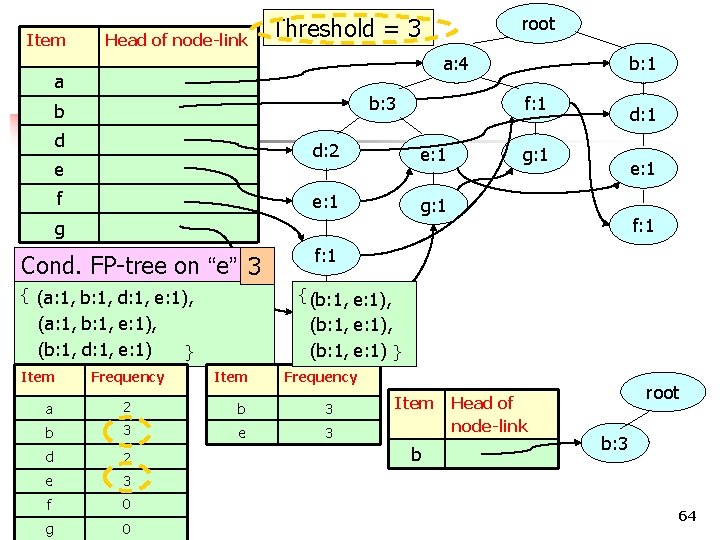

Item Head of node-link a: 4 a b: 3 b d e f Cond. FP-tree on “f” { (a: 1, b: 1, d: 1, e: 1, f: 1), (a: 1, f: 1), (b: 1, d: 1, e: 1, f: 1) } e: 1 g: 1 2 b 2 d 2 e 2 g g: 1 d: 1 e: 1 f: 1 g: 1 Frequency a f b: 1 f: 1 d: 2 g Item root Threshold = 3 3 COMP 5331 0 61

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f b: 1 f: 1 d: 2 e: 1 g: 1 (f: 1), (f: 1) } (a: 1, f: 1), (b: 1, d: 1, e: 1, f: 1) } Frequency a 2 b 2 d 2 e 2 f g 3 COMP 5331 0 f: 1 { (f: 1), { (a: 1, b: 1, d: 1, e: 1, f: 1), Item e: 1 f: 1 g Cond. FP-tree on “f” 3 d: 1 Item f Frequency 3 root 62

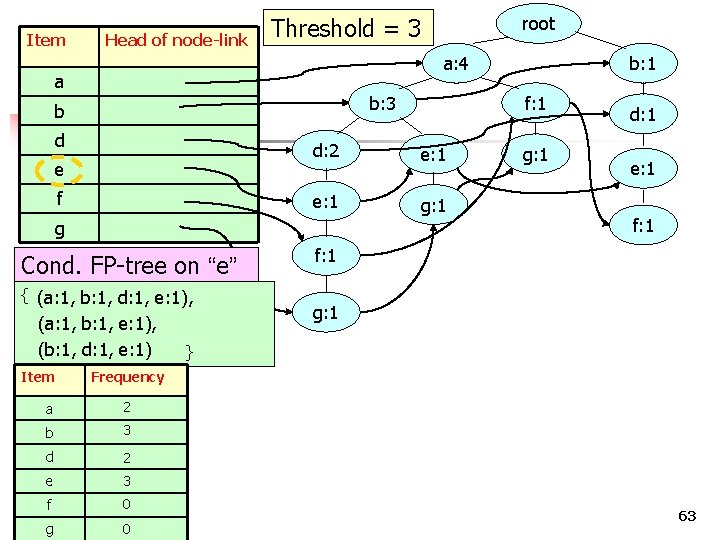

Cond. FP-tree on “e” (support 3, threshold = 3)

- Conditional pattern base of “e”: { (a: 1, b: 1, d: 1, e: 1), (a: 1, b: 1, e: 1), (b: 1, d: 1, e: 1) }
- Item frequencies in the pattern base: a: 2, b: 3, d: 2, e: 3, f: 0, g: 0
- After removing infrequent items: { (b: 1, e: 1), (b: 1, e: 1), (b: 1, e: 1) }
- Conditional FP-tree: root → b: 3 (header table: b)

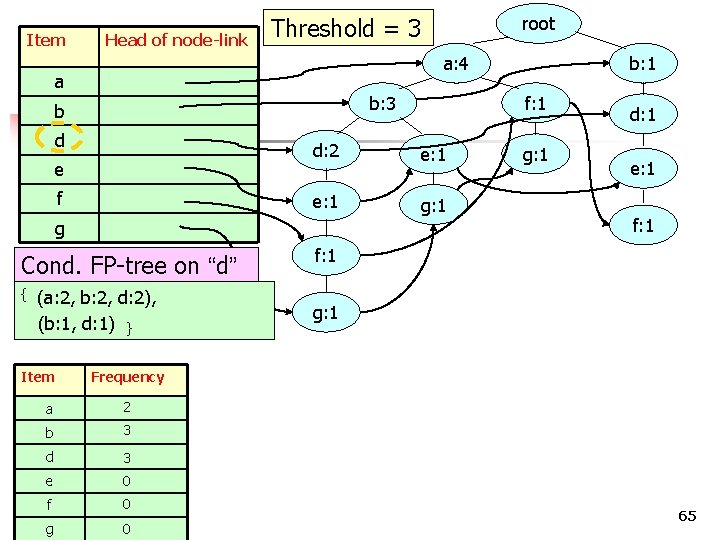

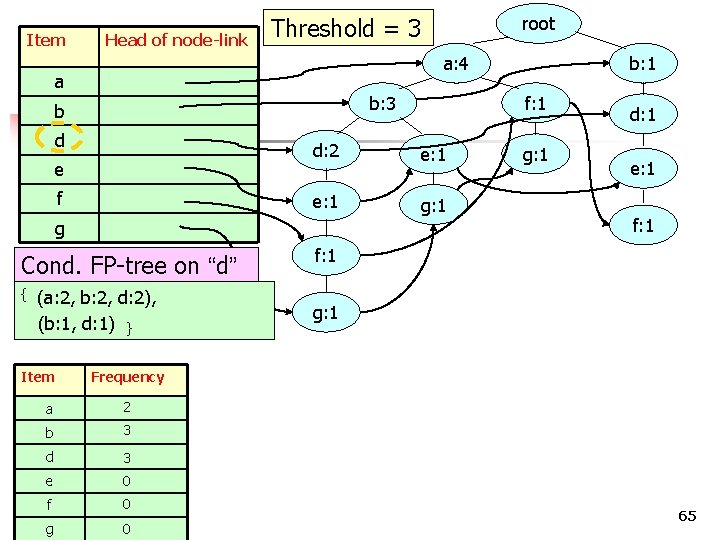

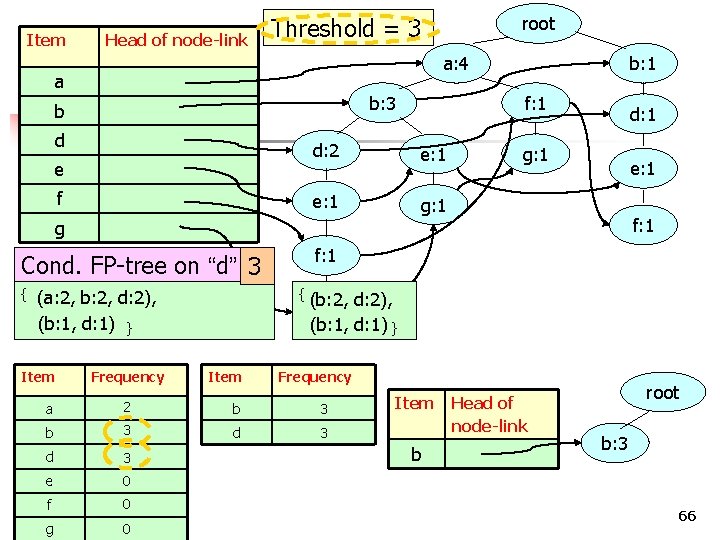

Item Head of node-link a: 4 a b: 3 b d e f Cond. FP-tree on “d” (a: 2, b: 2, d: 2), (b: 1, d: 1) } Item e: 1 g: 1 2 b 3 d 3 e 0 g g: 1 d: 1 e: 1 f: 1 g: 1 Frequency a f b: 1 f: 1 d: 2 g { root Threshold = 3 0 COMP 5331 0 65

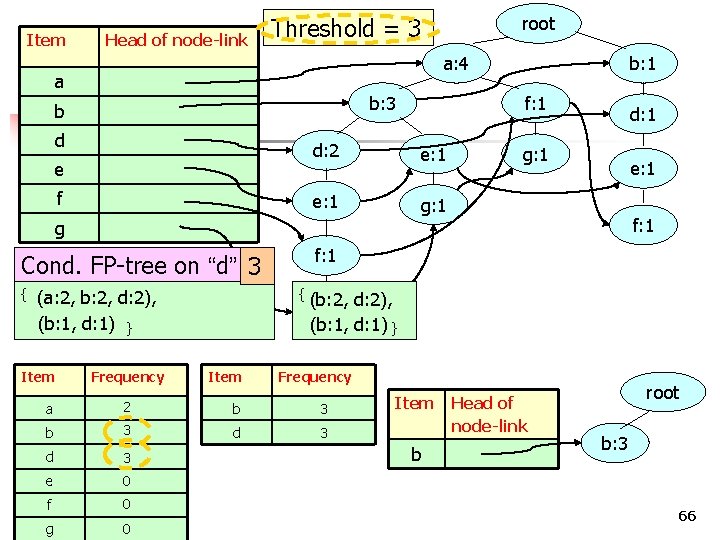

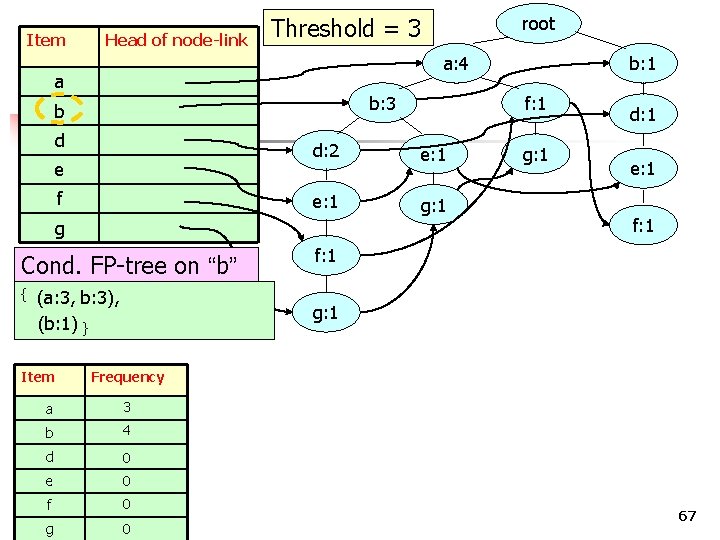

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f b: 1 f: 1 d: 2 e: 1 g: 1 d: 1 g: 1 e: 1 f: 1 g Cond. FP-tree on “d” 3 { { (b: 2, (a: 2, b: 2, d: 2), (b: 1, d: 1) } Item Frequency f: 1 d: 2), g: 1 (b: 1, d: 1) } Item Frequency a 2 b 3 d 3 e 0 f g 0 COMP 5331 0 Item b Head of node-link root b: 3 66

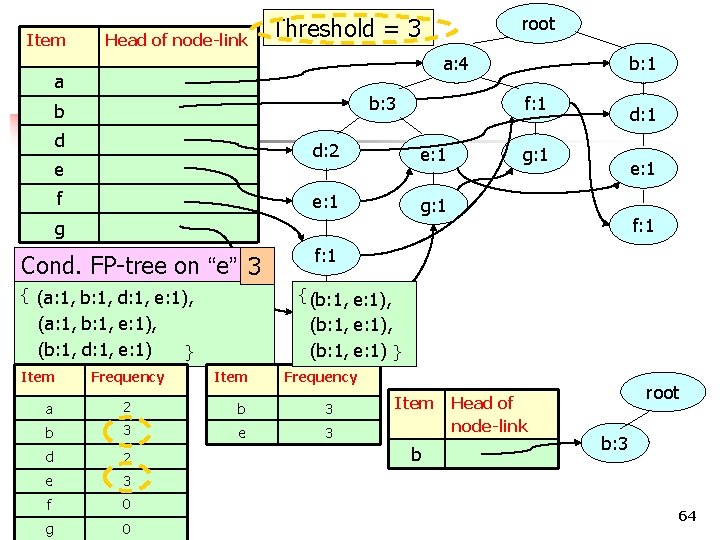

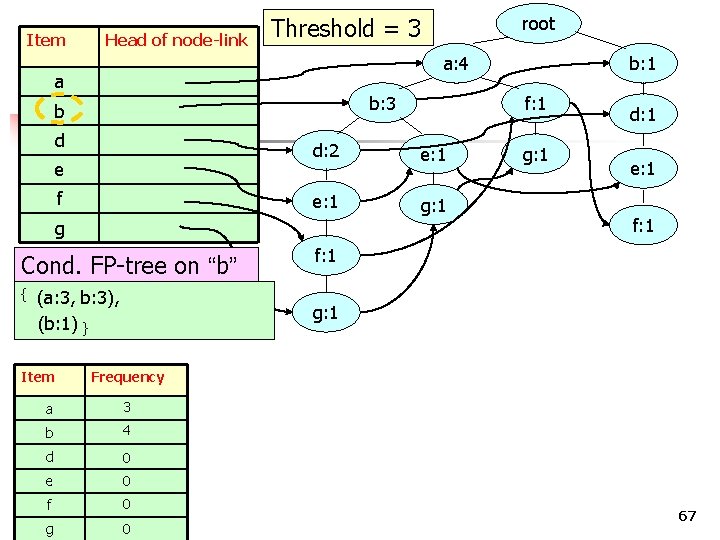

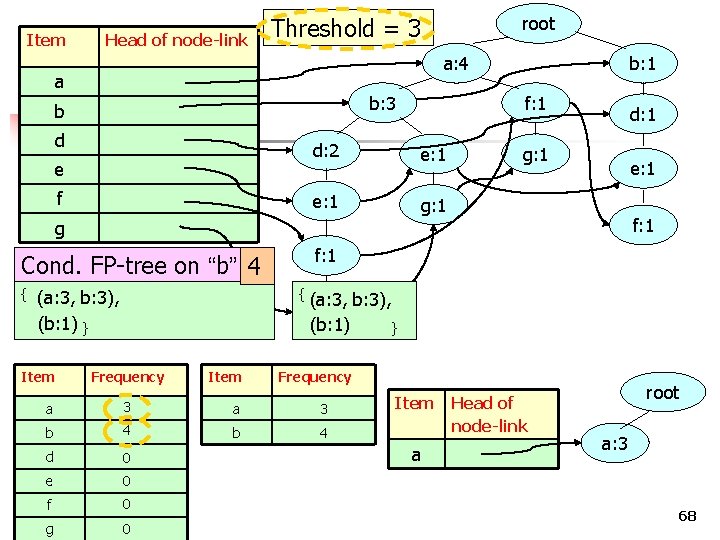

Item Head of node-link a: 4 a b: 3 b d e f Cond. FP-tree on “b” (a: 3, b: 3), (b: 1) } Item e: 1 g: 1 e: 1 f: 1 Frequency 3 b 4 d 0 e 0 g g: 1 d: 1 g: 1 a f b: 1 f: 1 d: 2 g { root Threshold = 3 0 COMP 5331 0 67

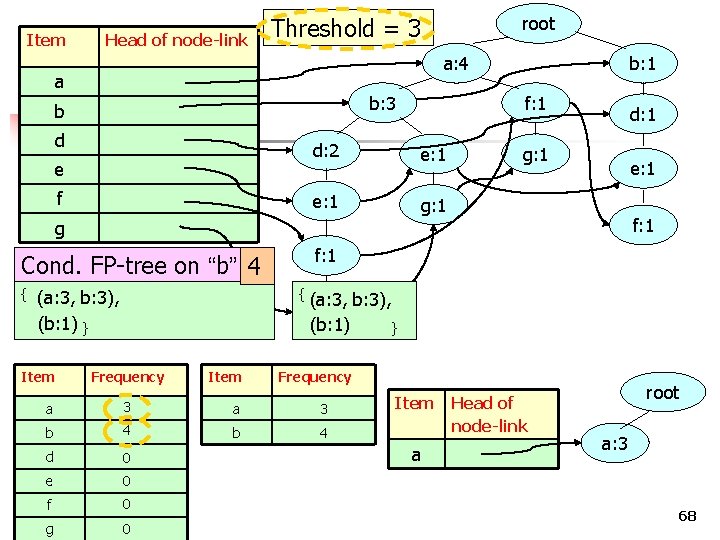

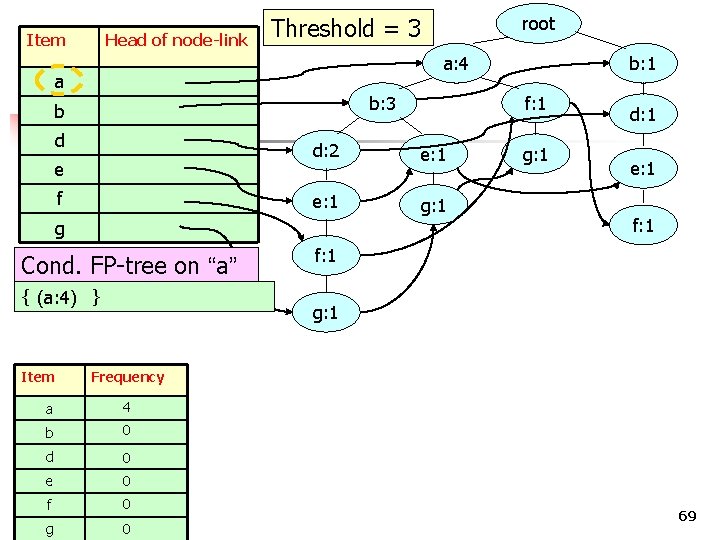

Item Head of node-link root Threshold = 3 a: 4 a b: 3 b d e f b: 1 f: 1 d: 2 e: 1 g: 1 d: 1 g: 1 e: 1 f: 1 g Cond. FP-tree on “b” 4 { { (a: 3, b: 3), (b: 1) } Item f: 1 g: 1 (b: 1) Frequency Item 3 a 3 b 4 d 0 e 0 g 0 COMP 5331 0 } Frequency a f b: 3), Item a Head of node-link root a: 3 68

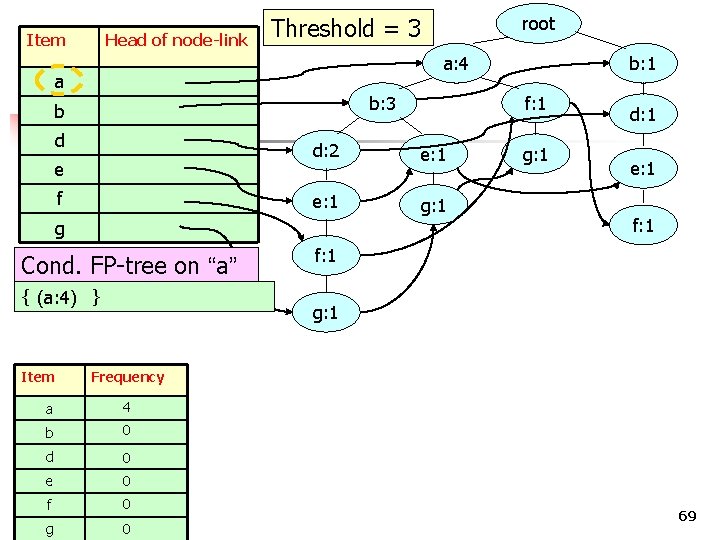

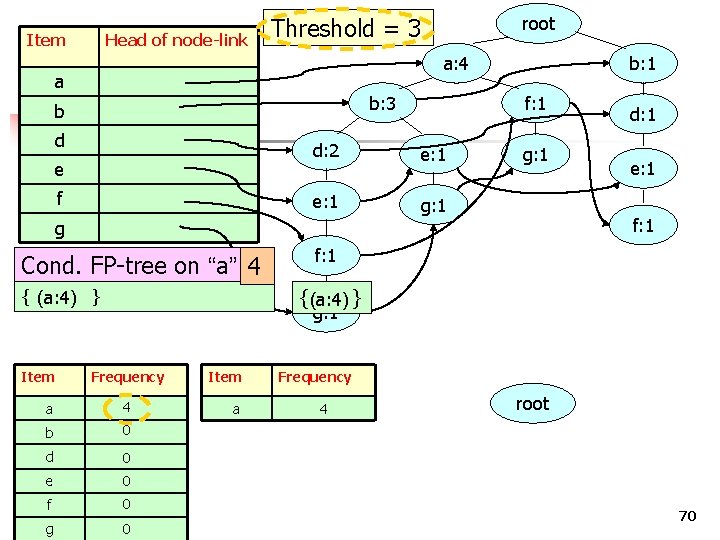

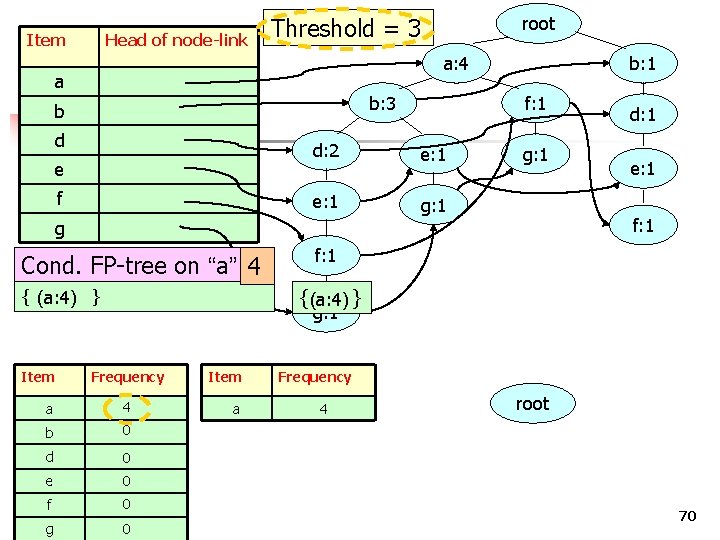

Cond. FP-tree on “a” (support 4, threshold = 3)

- Conditional pattern base of “a”: { (a: 4) }
- Item frequencies in the pattern base: a: 4, b: 0, d: 0, e: 0, f: 0, g: 0
- Conditional FP-tree: root only (no item other than a remains)


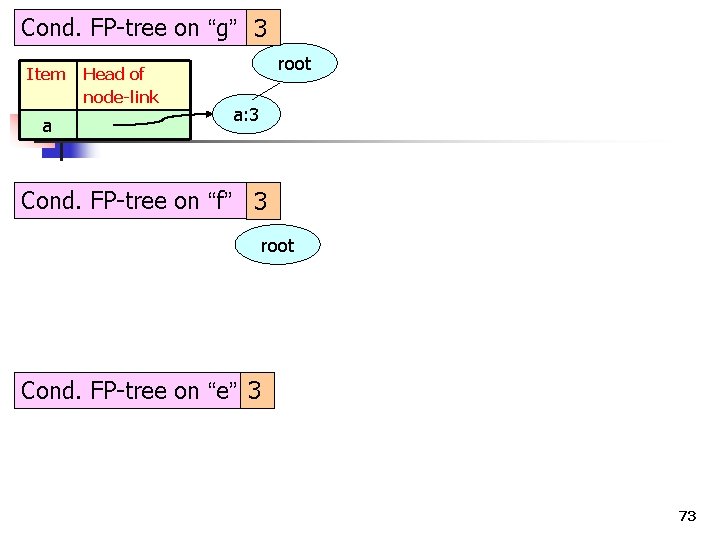

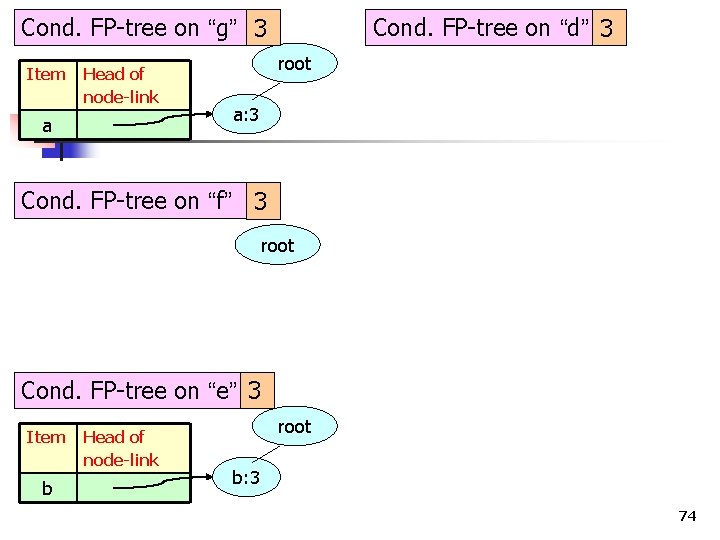

Cond. FP-tree on “g” 3 72

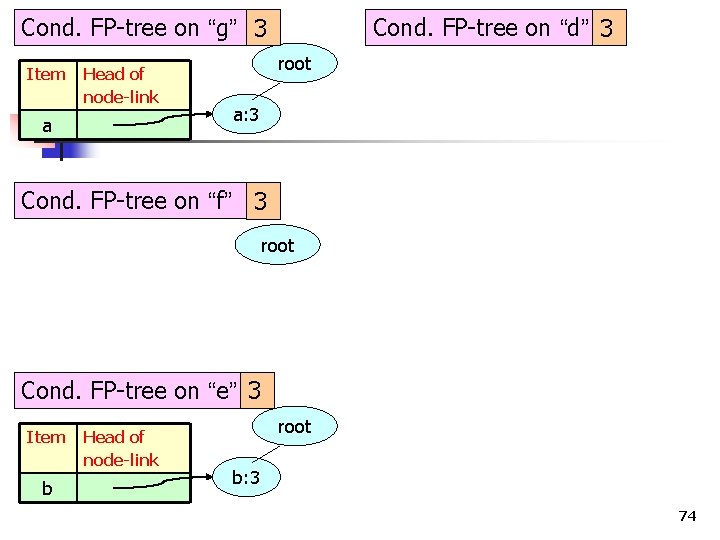

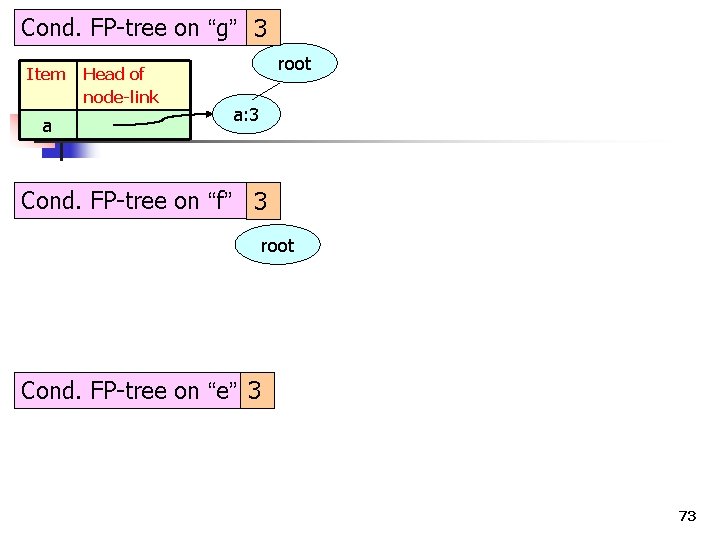

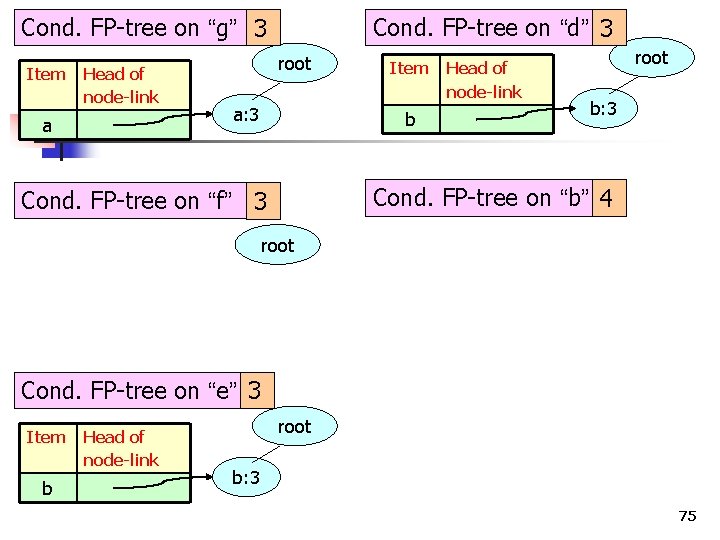

Cond. FP-tree on “g” 3 Item a Head of node-link root a: 3 Cond. FP-tree on “f” 3 root Cond. FP-tree on “e” 3 73

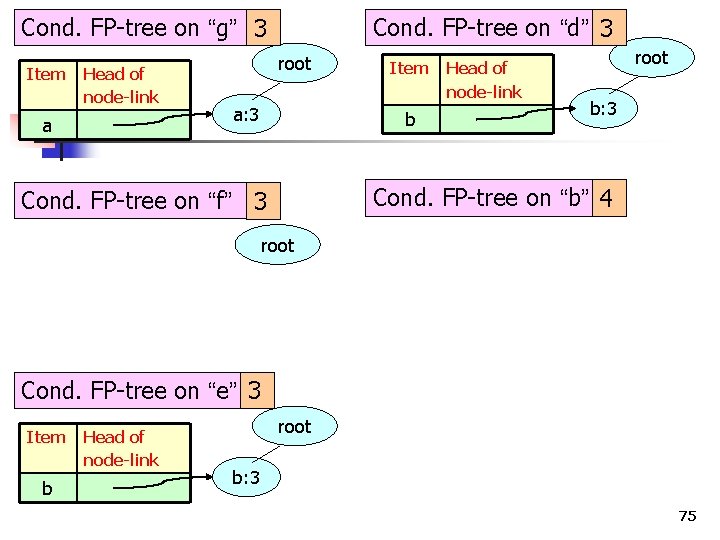

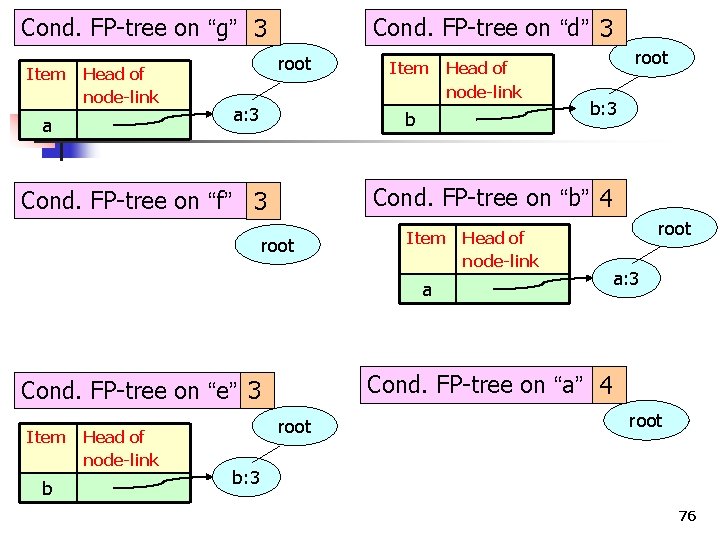

Cond. FP-tree on “g” 3 Item Head of node-link a Cond. FP-tree on “d” 3 root a: 3 Cond. FP-tree on “f” 3 root Cond. FP-tree on “e” 3 Item b Head of node-link root b: 3 74

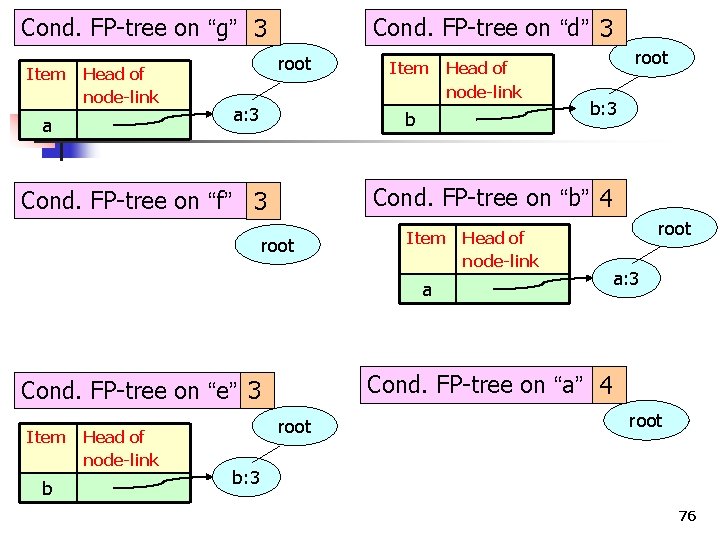

Cond. FP-tree on “g” 3 Item Head of node-link a Cond. FP-tree on “d” 3 root a: 3 Item b Head of node-link root b: 3 Cond. FP-tree on “b” 4 Cond. FP-tree on “f” 3 root Cond. FP-tree on “e” 3 Item b Head of node-link root b: 3 75

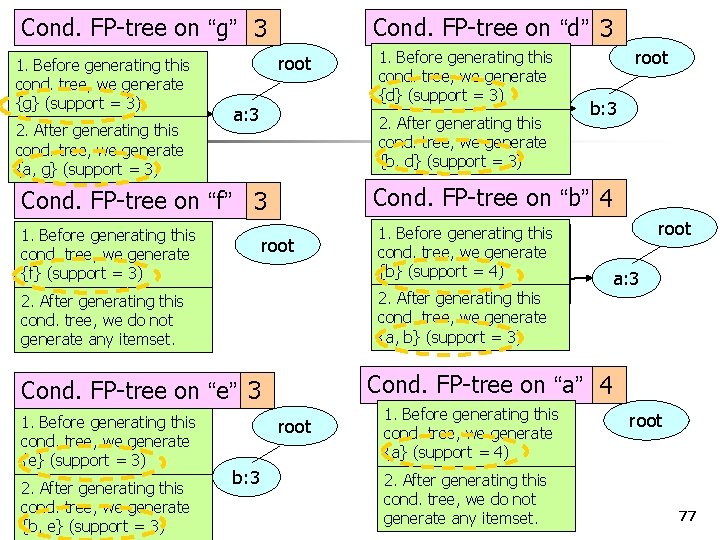

Cond. FP-tree on “g” 3 Item Head of node-link a Cond. FP-tree on “d” 3 root a: 3 Item Head of node-link b root b: 3 Cond. FP-tree on “b” 4 Cond. FP-tree on “f” 3 root Item Head of node-link a b Head of node-link a: 3 Cond. FP-tree on “a” 4 Cond. FP-tree on “e” 3 Item root b: 3 76

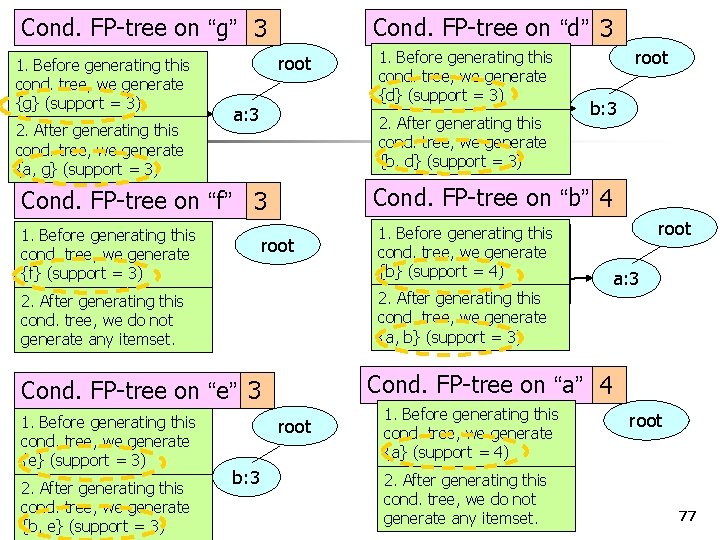

Cond. FP-tree on “g” 3 1. Before generating this Item Head of cond. tree, we generate node-link {g} (support = 3) a generating this 2. After cond. tree, we generate {a, g} (support = 3) Cond. FP-tree on “d” 3 root a: 3 b generating this 2. After 1. Before generating this cond. tree, we generate {f} (support = 3) b: 3 Cond. FP-tree on “b” 4 root 1. Before Item generating Head of this cond. tree, we generate node-link {b} (support = 4) a 2. After generating this cond. tree, we do not generate any itemset. root a: 3 cond. tree, we generate {a, b} (support = 3) Cond. FP-tree on “a” 4 Cond. FP-tree on “e” 3 2. After b generating this cond. tree, we generate COMP 5331 {b, e} (support = 3) root cond. tree, we generate {b, d} (support = 3) Cond. FP-tree on “f” 3 1. Before generating this Itemtree, Head of cond. we generate node-link {e} (support = 3) 1. Before generating this Itemtree, Head of cond. we generate node-link {d} (support = 3) root b: 3 1. Before generating this cond. tree, we generate {a} (support = 4) 2. After generating this cond. tree, we do not generate any itemset. root 77

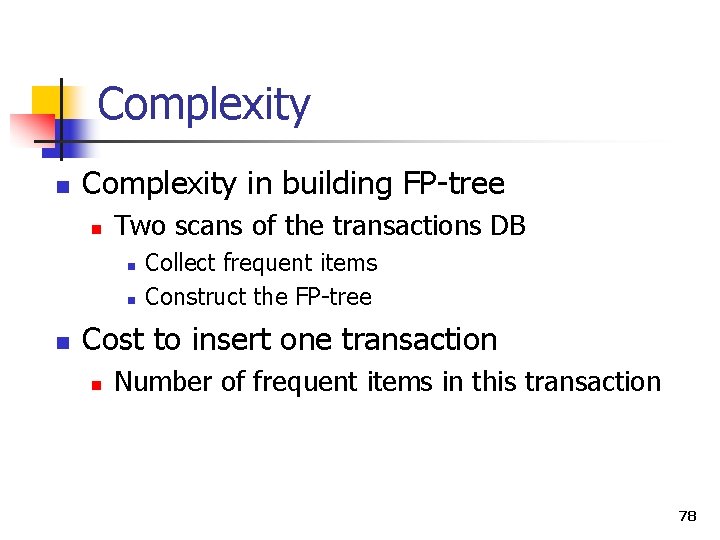

Complexity

- Complexity in building the FP-tree:
  - Two scans of the transaction DB:
    - Collect frequent items.
    - Construct the FP-tree.
  - Cost to insert one transaction: the number of frequent items in this transaction.

Size of the FP-tree

- The size of the FP-tree is bounded by the overall occurrences of the frequent items in the database.

Height of the Tree

- The height of the tree is bounded by the maximum number of frequent items in any transaction in the database.

Compression

- With respect to the total number of items stored, is the FP-tree more compressed compared with the original database?

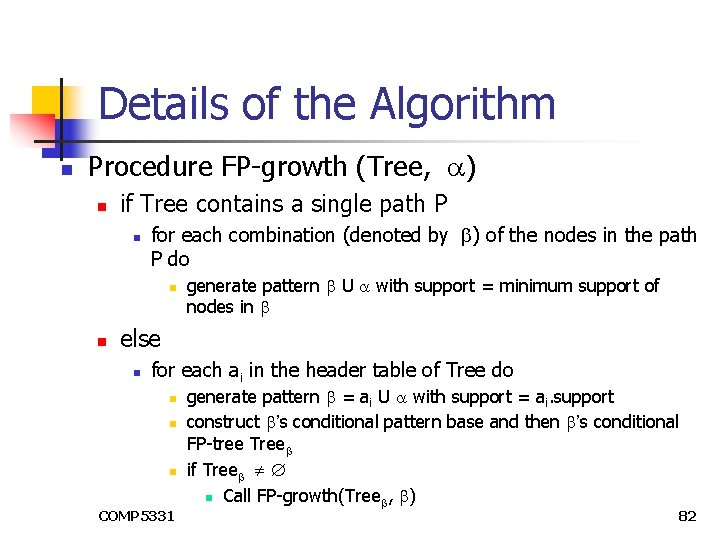

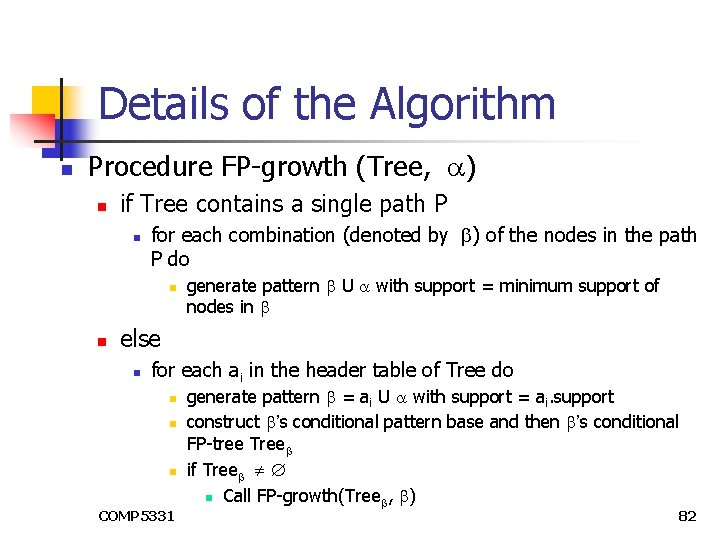

Details of the Algorithm

- Procedure FP-growth(Tree, α)
  - if Tree contains a single path P
    - for each combination (denoted by β) of the nodes in the path P do
      - generate pattern β ∪ α with support = minimum support of nodes in β
  - else
    - for each $a_i$ in the header table of Tree do
      - generate pattern β = $a_i$ ∪ α with support = $a_i$.support
      - construct β's conditional pattern base and then β's conditional FP-tree $Tree_β$
      - if $Tree_β$ ≠ ∅
        - call FP-growth($Tree_β$, β)
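The recursion can be illustrated with a simplified pattern-growth sketch. To keep it short, it departs from the procedure above in two labelled ways: it works directly on conditional pattern bases (lists of ordered prefixes with counts) instead of materialising each conditional FP-tree, and it omits the single-path shortcut. The function name `fp_growth` and its parameters are illustrative.

```python
from collections import defaultdict

def fp_growth(pattern_base, suffix, min_count, out):
    """pattern_base: list of (ordered item tuple, count); suffix: frozenset."""
    counts = defaultdict(int)
    for items, n in pattern_base:
        for item in items:
            counts[item] += n
    for item, total in counts.items():
        if total < min_count:
            continue                       # infrequent in this conditional base
        pattern = suffix | {item}
        out[pattern] = total               # generate pattern = item ∪ suffix
        # Conditional pattern base of `pattern`: the prefix before `item`
        # in every path that contains it, carrying the path's count.
        conditional = [(items[:items.index(item)], n)
                       for items, n in pattern_base if item in items]
        fp_growth(conditional, pattern, min_count, out)

# Ordered frequent items of the running example (threshold = 3).
base = [
    (("a", "b", "d", "e", "f", "g"), 1),
    (("a", "f", "g"), 1),
    (("b", "d", "e", "f"), 1),
    (("a", "b", "d"), 1),
    (("a", "b", "e", "g"), 1),
]
patterns = {}
fp_growth(base, frozenset(), 3, patterns)
for p, n in sorted(patterns.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(p), n)
```

The sketch relies on every transaction listing its items in the same global order (the Step 1 order), so each itemset is generated exactly once, starting from its last item.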

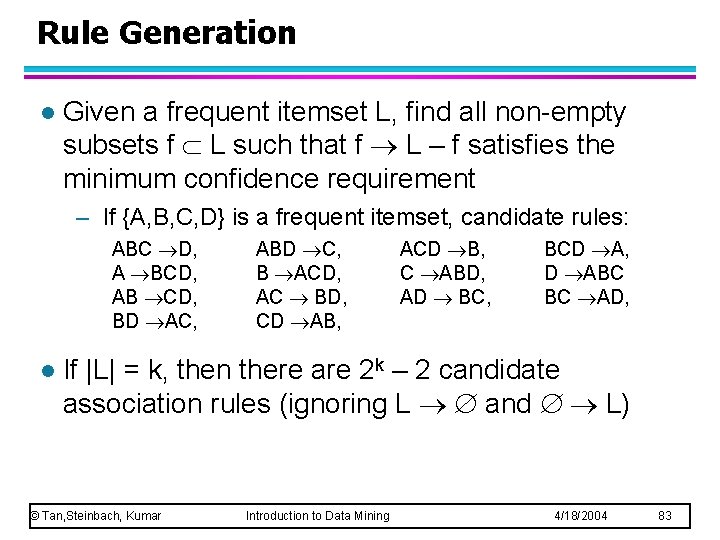

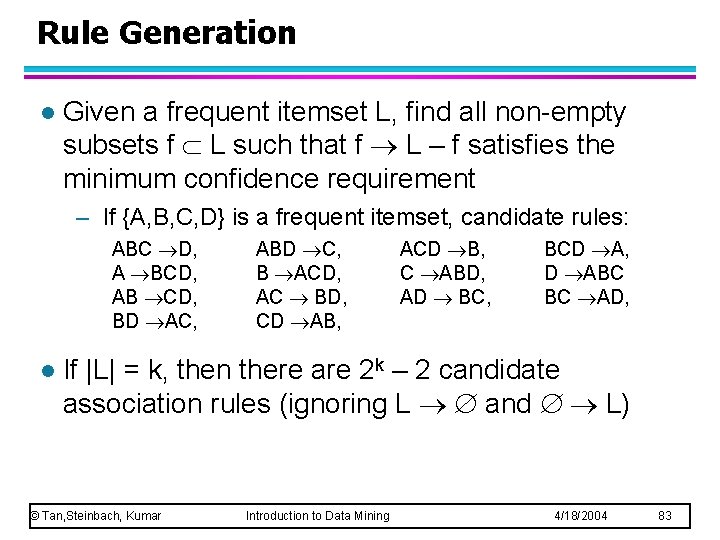

Rule Generation

- Given a frequent itemset L, find all non-empty subsets f ⊂ L such that f → L − f satisfies the minimum confidence requirement.
- If {A, B, C, D} is a frequent itemset, the candidate rules are:
  - ABC → D, ABD → C, ACD → B, BCD → A
  - A → BCD, B → ACD, C → ABD, D → ABC
  - AB → CD, AC → BD, AD → BC, BC → AD, BD → AC, CD → AB
- If |L| = k, then there are $2^k - 2$ candidate association rules (ignoring L → ∅ and ∅ → L).

© Tan, Steinbach, Kumar, Introduction to Data Mining, 4/18/2004

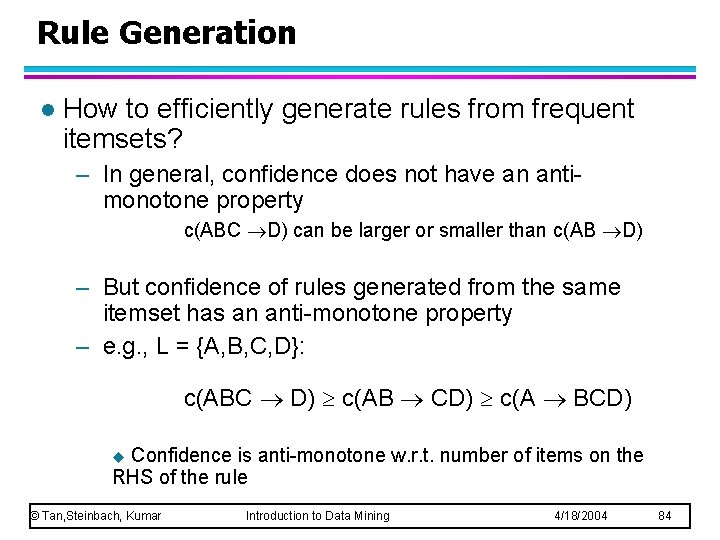

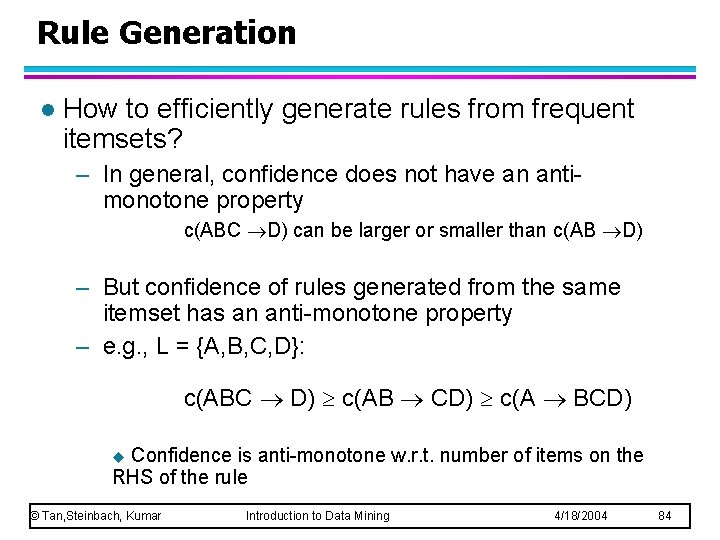

Rule Generation

- How to efficiently generate rules from frequent itemsets?
  - In general, confidence does not have an anti-monotone property: c(ABC → D) can be larger or smaller than c(AB → D).
  - But the confidence of rules generated from the same itemset has an anti-monotone property, e.g., for L = {A, B, C, D}: c(ABC → D) ≥ c(AB → CD) ≥ c(A → BCD).
  - Confidence is anti-monotone w.r.t. the number of items on the RHS of the rule.

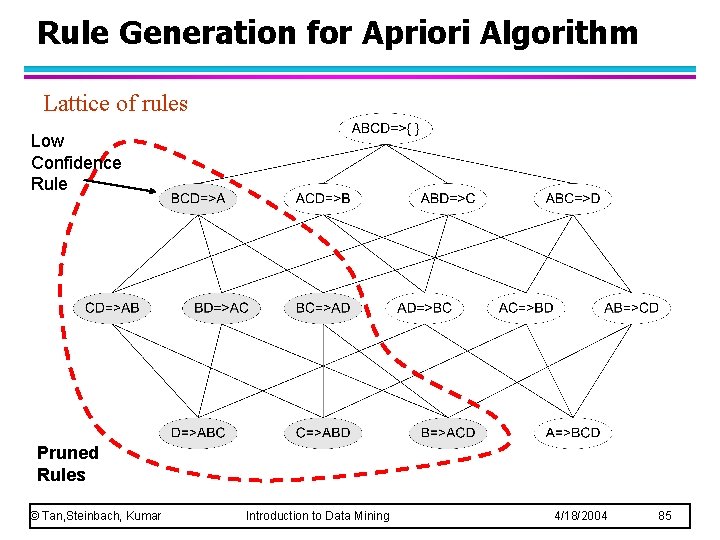

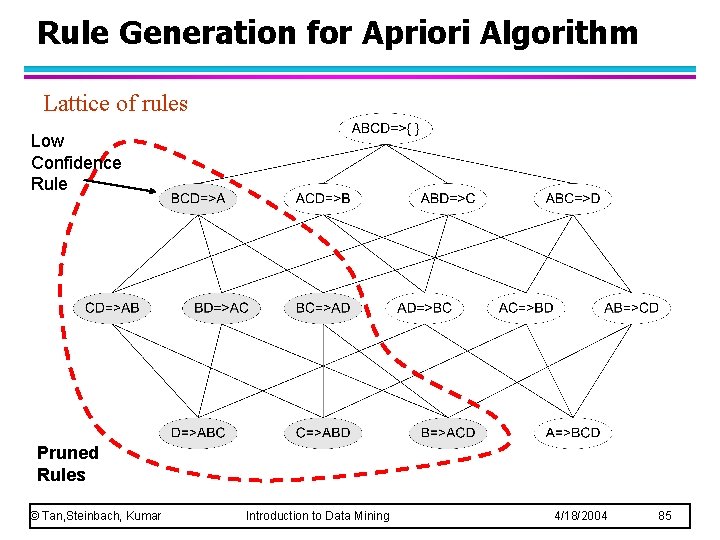

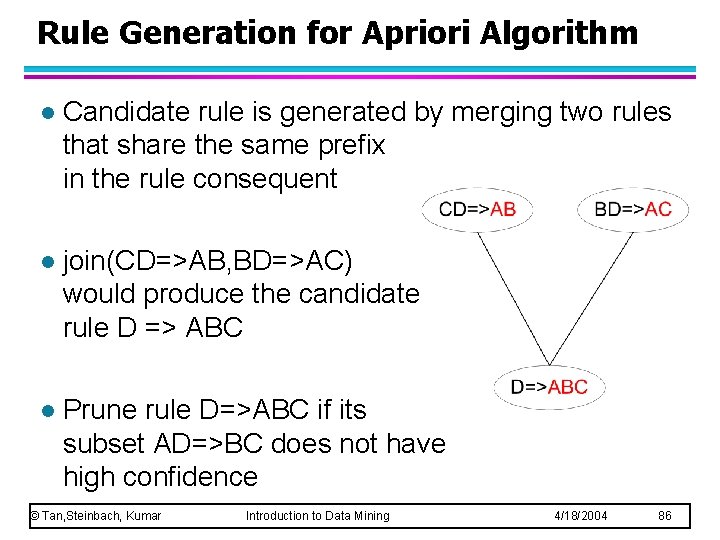

Rule Generation for Apriori Algorithm

Figure labels: lattice of rules; low-confidence rule; pruned rules.

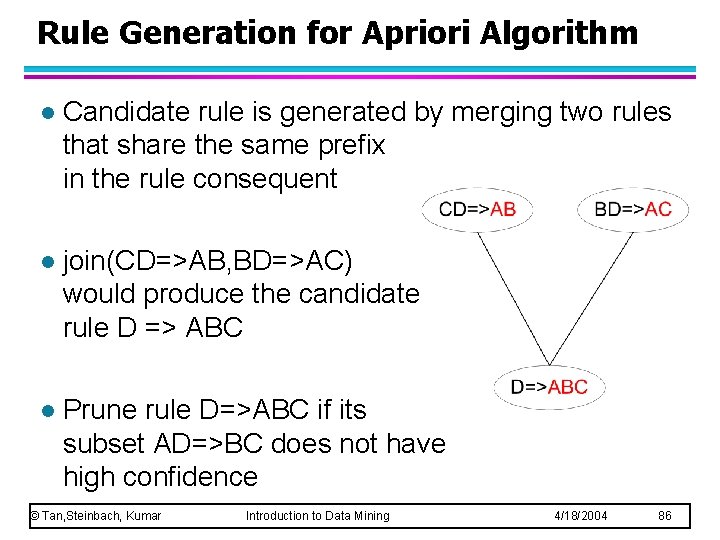

Rule Generation for Apriori Algorithm

- A candidate rule is generated by merging two rules that share the same prefix in the rule consequent.
- join(CD=>AB, BD=>AC) would produce the candidate rule D => ABC.
- Prune rule D=>ABC if its subset AD=>BC does not have high confidence.
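Level-wise rule generation can be sketched as follows. The example reuses the TDB database from the Apriori example and its frequent itemset {B, C, E}; `gen_rules` is an illustrative name. As a simplification, larger consequents are formed by merging any two surviving consequents rather than only those sharing a prefix; anti-monotonicity of confidence guarantees that any extra candidates produced this way simply fail the confidence test.

```python
from itertools import combinations

transactions = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]

def count(itemset):
    return sum(1 for t in transactions if itemset <= t)

def gen_rules(itemset, min_conf):
    """Return [(lhs, rhs, confidence)] for rules lhs -> rhs built from `itemset`."""
    itemset = frozenset(itemset)
    rules = []
    consequents = [frozenset([i]) for i in itemset]   # start with 1-item consequents
    while consequents:
        kept = []
        for rhs in consequents:
            lhs = itemset - rhs
            if not lhs:
                continue                               # empty antecedent: not a rule
            conf = count(itemset) / count(lhs)
            if conf >= min_conf:
                rules.append((lhs, rhs, conf))
                kept.append(rhs)                       # only survivors are extended
        size = len(kept[0]) + 1 if kept else 0
        consequents = list({a | b for a, b in combinations(kept, 2)
                            if len(a | b) == size})
    return rules

for lhs, rhs, conf in gen_rules({"B", "C", "E"}, min_conf=0.8):
    print(sorted(lhs), "=>", sorted(rhs), round(conf, 2))
```

With min_conf = 0.8 the rule BE => C fails at the first level, so no consequent containing C is ever examined again for this itemset.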

Pattern Evaluation

- Association rule algorithms tend to produce too many rules:
  - Many of them are uninteresting or redundant.
  - Redundant if {A, B, C} → {D} and {A, B} → {D} have the same support & confidence.
- Interestingness measures can be used to prune/rank the derived patterns.
- In the original formulation of association rules, support & confidence are the only measures used.

Application of Interestingness Measures

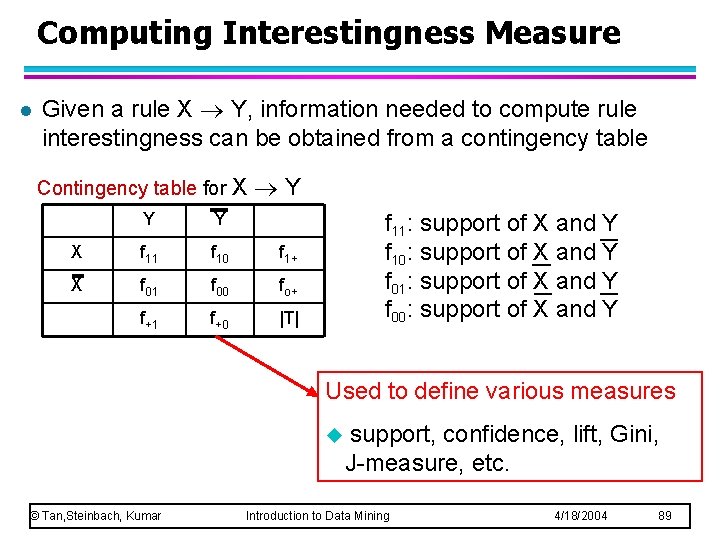

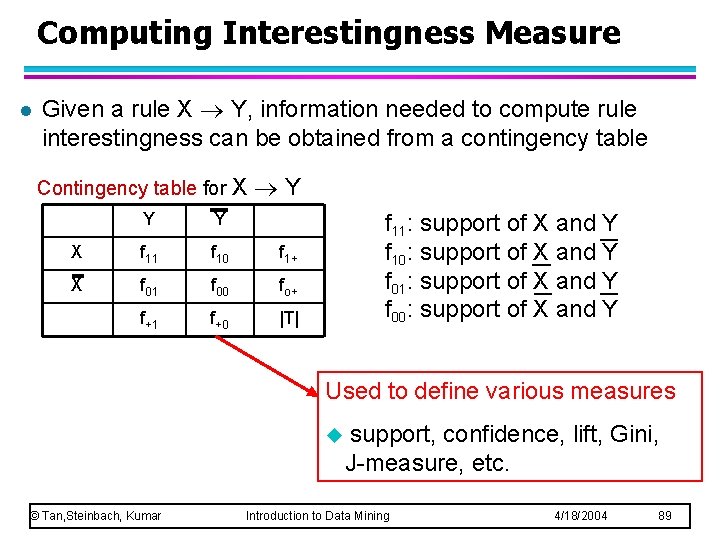

Computing Interestingness Measure

- Given a rule X → Y, the information needed to compute rule interestingness can be obtained from a contingency table.

Contingency table for X → Y:

| | Y | ¬Y | |
|---|---|---|---|
| X | $f_{11}$ | $f_{10}$ | $f_{1+}$ |
| ¬X | $f_{01}$ | $f_{00}$ | $f_{0+}$ |
| | $f_{+1}$ | $f_{+0}$ | \|T\| |

- $f_{11}$: support of X and Y
- $f_{10}$: support of X and ¬Y
- $f_{01}$: support of ¬X and Y
- $f_{00}$: support of ¬X and ¬Y

Used to define various measures: support, confidence, lift, Gini, J-measure, etc.

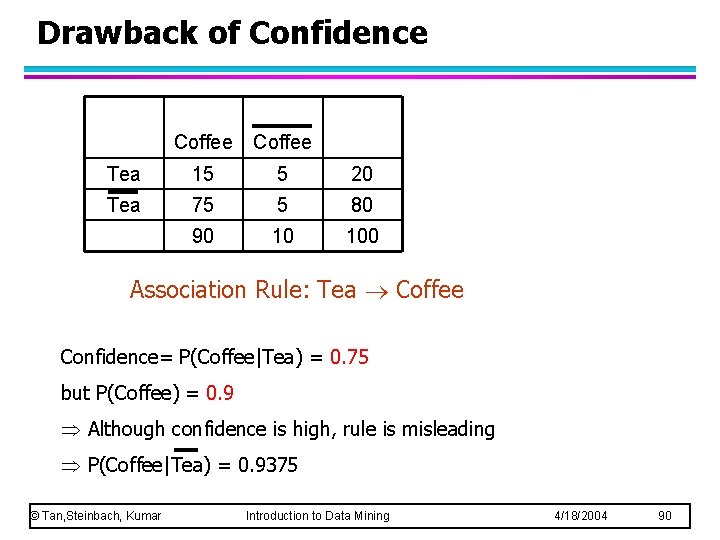

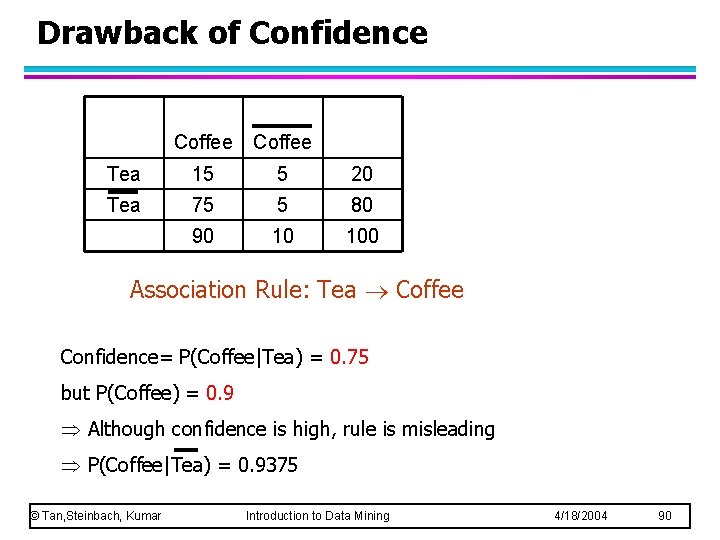

Drawback of Confidence

| | Coffee | ¬Coffee | Total |
|---|---|---|---|
| Tea | 15 | 5 | 20 |
| ¬Tea | 75 | 5 | 80 |
| Total | 90 | 10 | 100 |

Association rule: Tea → Coffee

- Confidence = P(Coffee | Tea) = 15/20 = 0.75, but P(Coffee) = 0.9.
- Although confidence is high, the rule is misleading: P(Coffee | ¬Tea) = 75/80 = 0.9375.

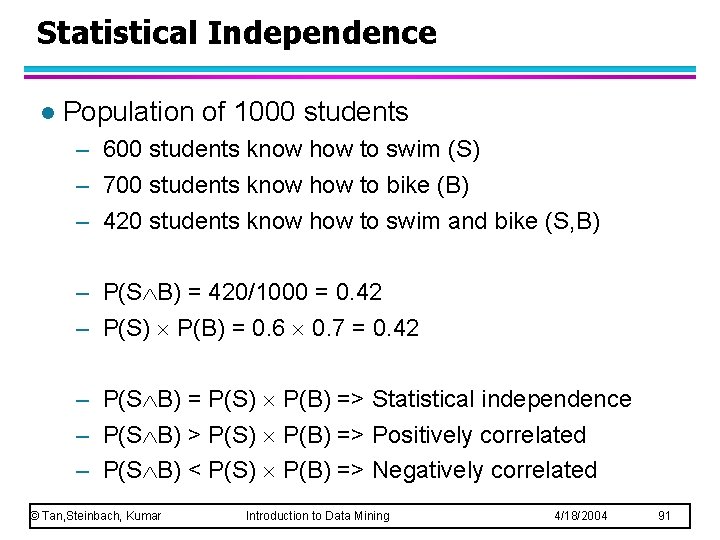

Statistical Independence

- Population of 1000 students:
  - 600 students know how to swim (S).
  - 700 students know how to bike (B).
  - 420 students know how to swim and bike (S, B).
  - P(S ∧ B) = 420/1000 = 0.42
  - P(S) × P(B) = 0.6 × 0.7 = 0.42
- P(S ∧ B) = P(S) × P(B) => statistical independence
- P(S ∧ B) > P(S) × P(B) => positively correlated
- P(S ∧ B) < P(S) × P(B) => negatively correlated

Statistical-based Measures

- Measures that take into account statistical dependence

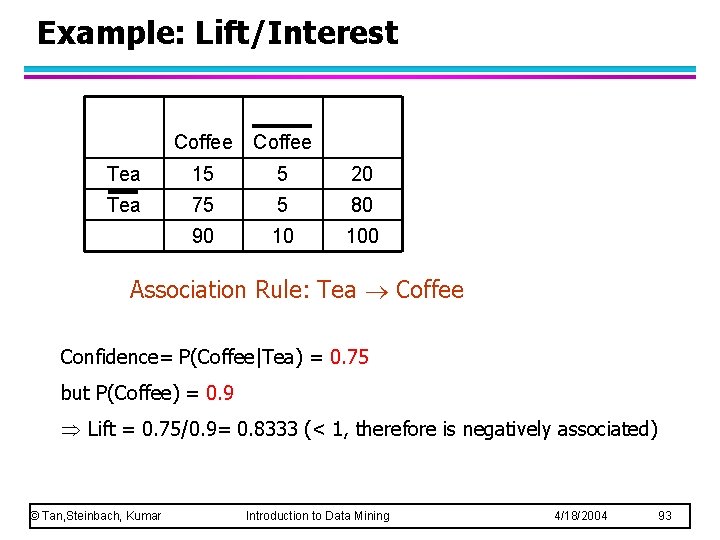

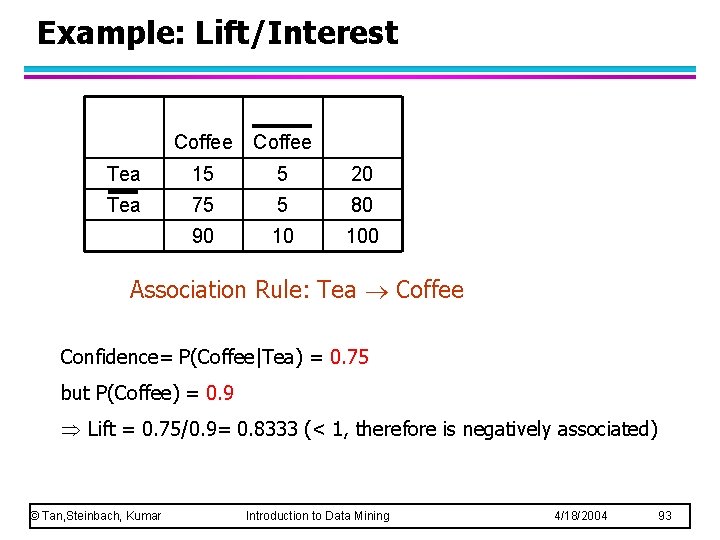

Example: Lift/Interest

| | Coffee | ¬Coffee | Total |
|---|---|---|---|
| Tea | 15 | 5 | 20 |
| ¬Tea | 75 | 5 | 80 |
| Total | 90 | 10 | 100 |

Association rule: Tea → Coffee

- Confidence = P(Coffee | Tea) = 0.75, but P(Coffee) = 0.9.
- Lift = 0.75/0.9 = 0.8333 (< 1, therefore Tea and Coffee are negatively associated).
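The same numbers follow directly from the four cells of the contingency table. A minimal sketch, with `confidence_and_lift` as an illustrative helper taking the cells $f_{11}$, $f_{10}$, $f_{01}$, $f_{00}$:

```python
def confidence_and_lift(f11, f10, f01, f00):
    """Confidence and lift of X -> Y from a 2x2 contingency table."""
    n = f11 + f10 + f01 + f00
    confidence = f11 / (f11 + f10)     # P(Y | X)
    p_y = (f11 + f01) / n              # P(Y)
    return confidence, confidence / p_y

# Tea -> Coffee: f11 = Tea and Coffee, f10 = Tea only,
# f01 = Coffee only, f00 = neither.
conf, lift = confidence_and_lift(15, 5, 75, 5)
print(round(conf, 4), round(lift, 4))  # 0.75 0.8333
```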

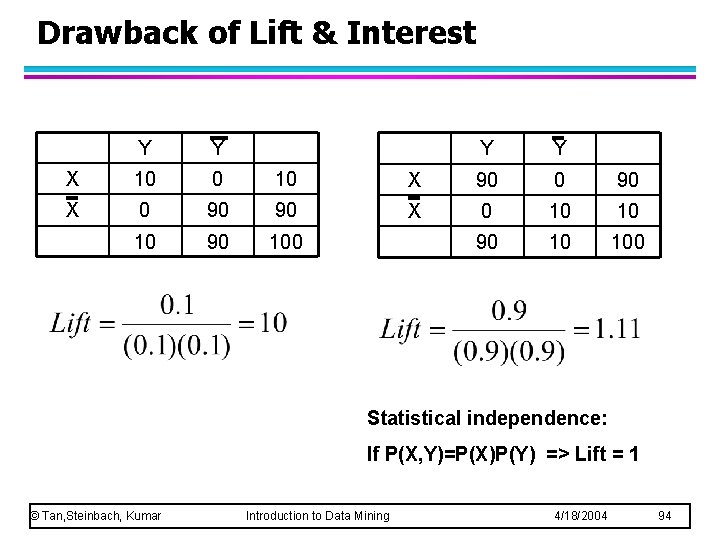

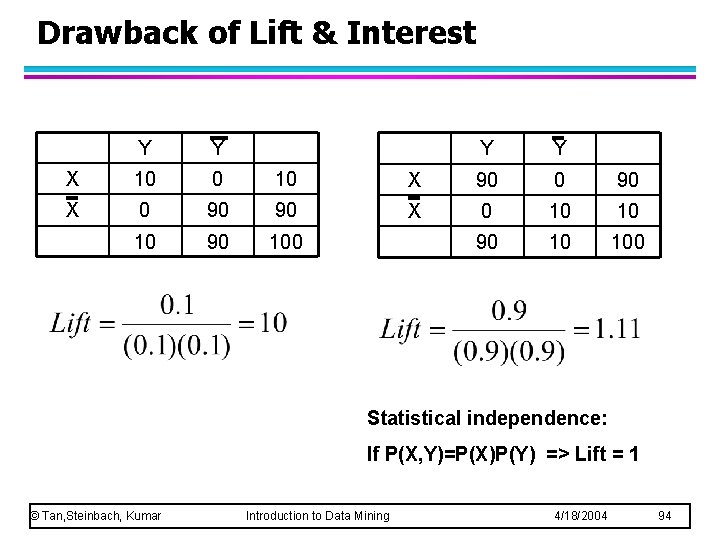

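Drawback of Lift & Interest

First table:

| | Y | ¬Y | Total |
|---|---|---|---|
| X | 10 | 0 | 10 |
| ¬X | 0 | 90 | 90 |
| Total | 10 | 90 | 100 |

Second table:

| | Y | ¬Y | Total |
|---|---|---|---|
| X | 90 | 0 | 90 |
| ¬X | 0 | 10 | 10 |
| Total | 90 | 10 | 100 |

Statistical independence: if P(X, Y) = P(X) P(Y), then Lift = 1.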
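Computing lift for the two tables makes the drawback concrete: X and Y always occur together in both, yet the table in which the pair is rare receives a much larger lift. A minimal sketch (`lift` is an illustrative helper):

```python
def lift(f11, f10, f01, f00):
    """Lift = P(X, Y) / (P(X) * P(Y)), computed from the table cells."""
    n = f11 + f10 + f01 + f00
    return f11 * n / ((f11 + f10) * (f11 + f01))

first = lift(10, 0, 0, 90)    # X and Y co-occur in 10% of transactions
second = lift(90, 0, 0, 10)   # X and Y co-occur in 90% of transactions
print(round(first, 2), round(second, 2))  # 10.0 1.11
```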

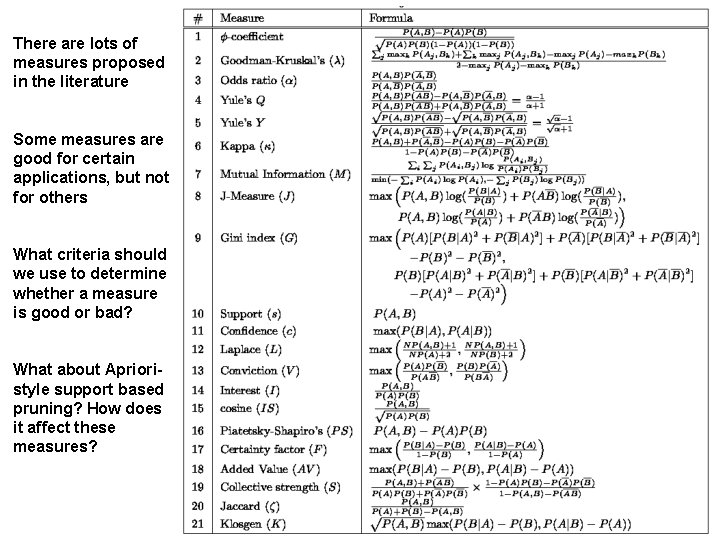

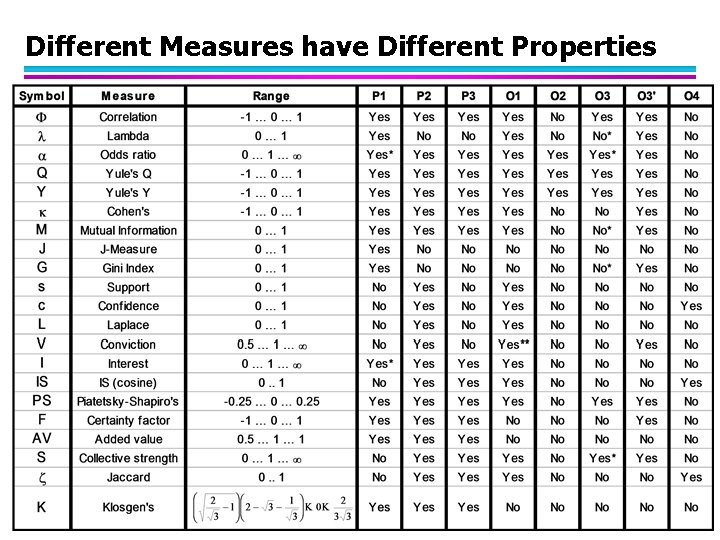

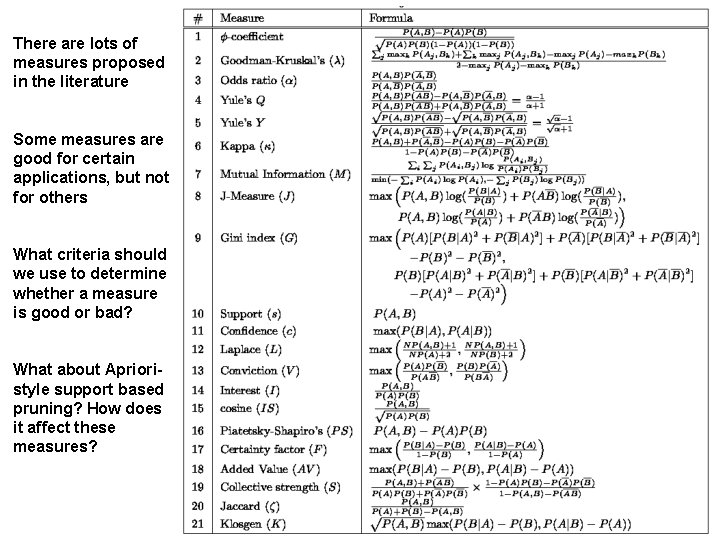

- There are lots of measures proposed in the literature.
- Some measures are good for certain applications, but not for others.
- What criteria should we use to determine whether a measure is good or bad?
- What about Apriori-style support-based pruning? How does it affect these measures?

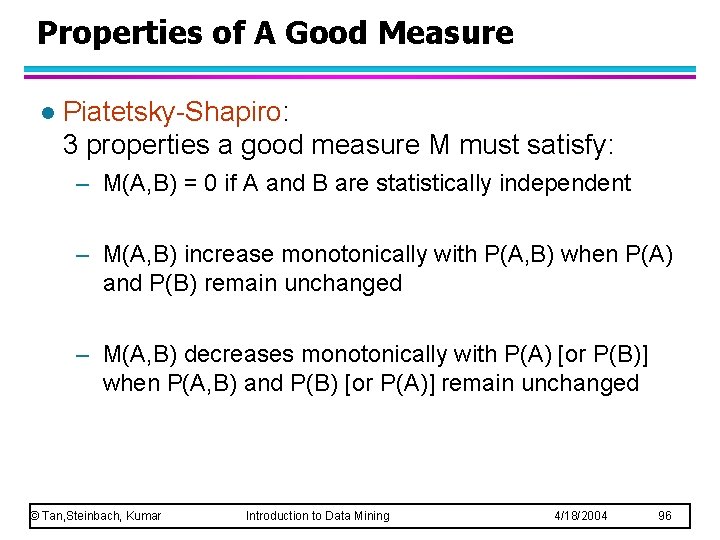

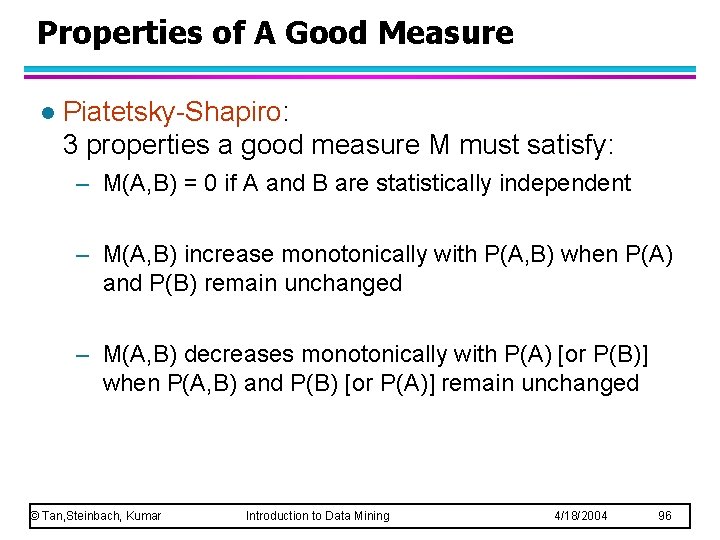

Properties of A Good Measure

- Piatetsky-Shapiro: 3 properties a good measure M must satisfy:
  - M(A, B) = 0 if A and B are statistically independent.
  - M(A, B) increases monotonically with P(A, B) when P(A) and P(B) remain unchanged.
  - M(A, B) decreases monotonically with P(A) [or P(B)] when P(A, B) and P(B) [or P(A)] remain unchanged.

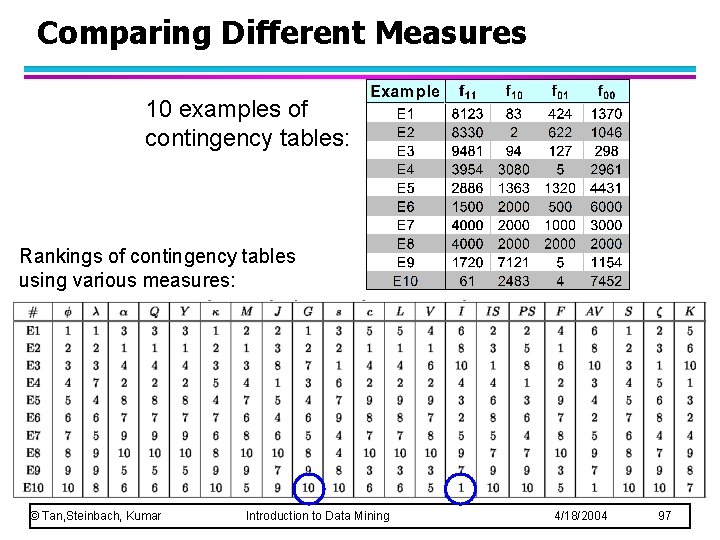

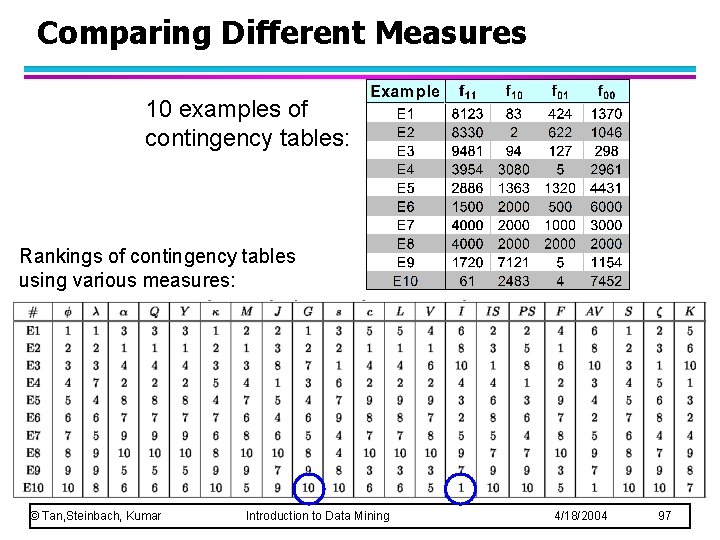

Comparing Different Measures

- 10 examples of contingency tables (see figure).
- Rankings of the contingency tables using various measures (see figure).

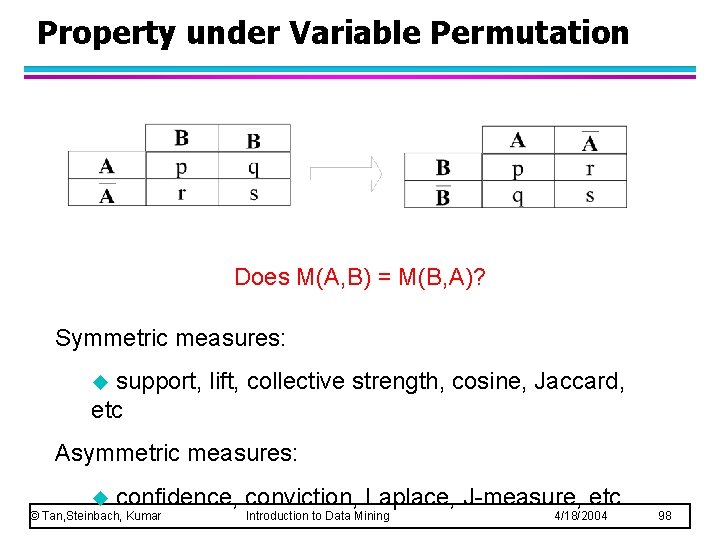

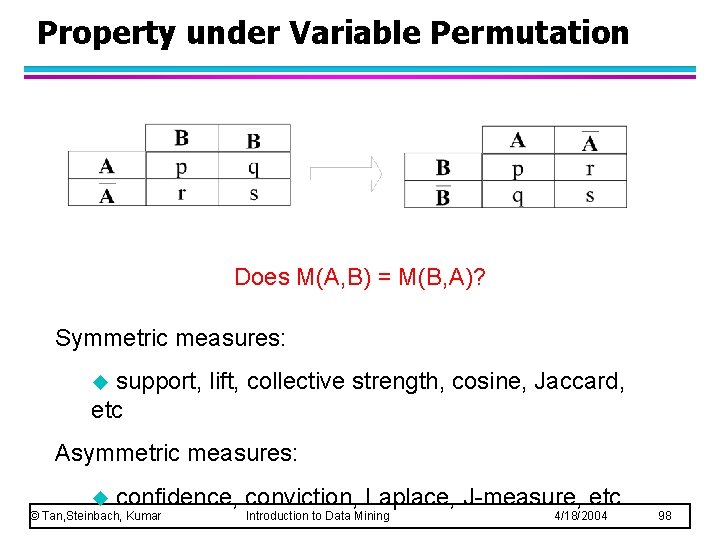

Property under Variable Permutation

Does M(A, B) = M(B, A)?

- Symmetric measures: support, lift, collective strength, cosine, Jaccard, etc.
- Asymmetric measures: confidence, conviction, Laplace, J-measure, etc.

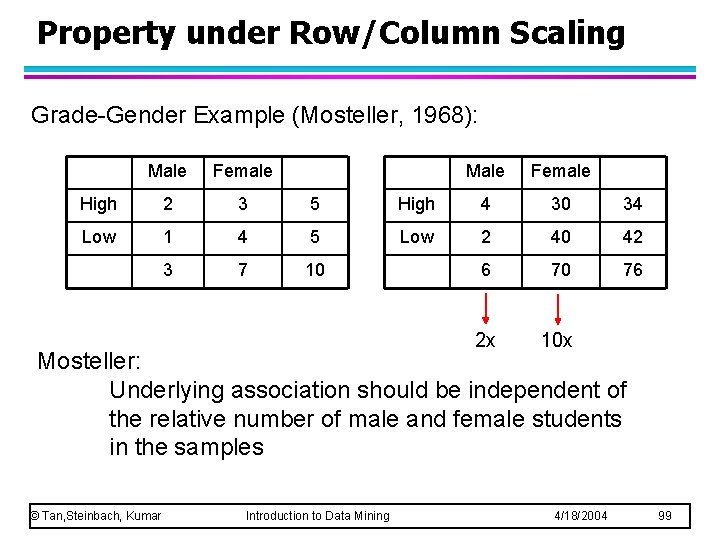

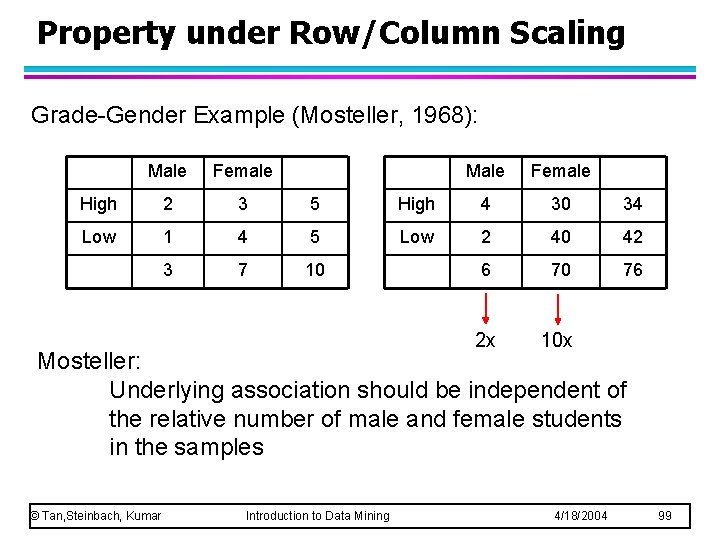

Property under Row/Column Scaling

Grade-Gender Example (Mosteller, 1968):

| | Male | Female | Total |
|---|---|---|---|
| High | 2 | 3 | 5 |
| Low | 1 | 4 | 5 |
| Total | 3 | 7 | 10 |

After scaling the Male column by 2x and the Female column by 10x:

| | Male | Female | Total |
|---|---|---|---|
| High | 4 | 30 | 34 |
| Low | 2 | 40 | 42 |
| Total | 6 | 70 | 76 |

Mosteller: the underlying association should be independent of the relative number of male and female students in the samples.

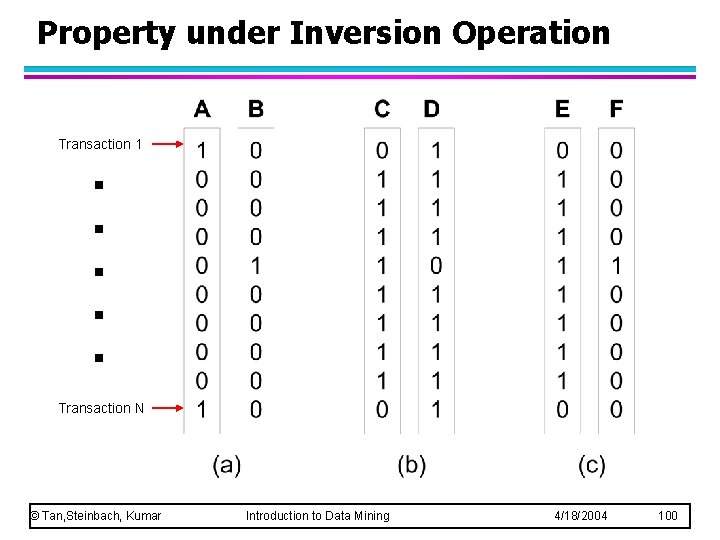

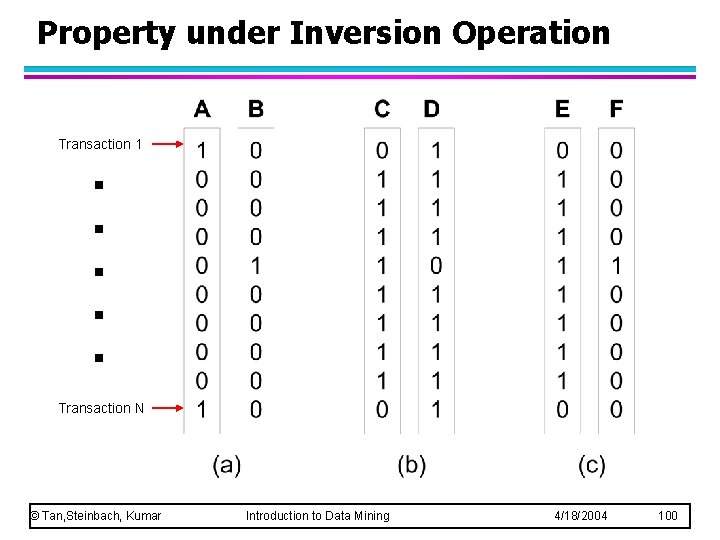

Property under Inversion Operation

Figure: item vectors over Transaction 1 … Transaction N.

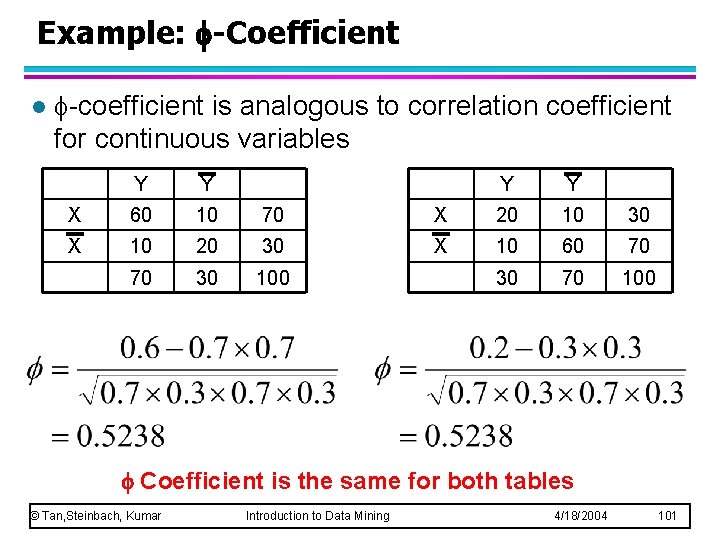

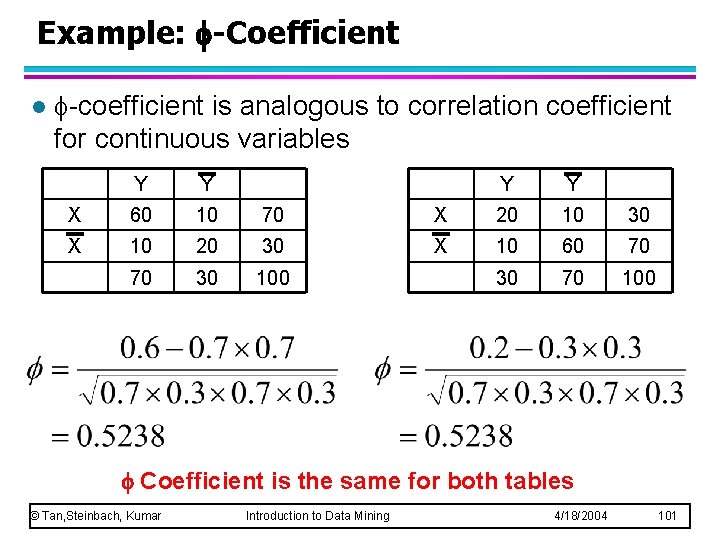

Example: φ-Coefficient

- The φ-coefficient is analogous to the correlation coefficient for continuous variables.

First table:

| | Y | ¬Y | Total |
|---|---|---|---|
| X | 60 | 10 | 70 |
| ¬X | 10 | 20 | 30 |
| Total | 70 | 30 | 100 |

Second table:

| | Y | ¬Y | Total |
|---|---|---|---|
| X | 20 | 10 | 30 |
| ¬X | 10 | 60 | 70 |
| Total | 30 | 70 | 100 |

The φ-coefficient is the same for both tables.
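The claim can be checked numerically using the standard definition of the φ-coefficient for a 2x2 table, $\phi = (f_{11} f_{00} - f_{10} f_{01}) / \sqrt{f_{1+} f_{0+} f_{+1} f_{+0}}$. A minimal sketch (`phi` is an illustrative helper):

```python
from math import sqrt

def phi(f11, f10, f01, f00):
    """phi-coefficient of a 2x2 contingency table."""
    row1, row0 = f11 + f10, f01 + f00   # f1+, f0+
    col1, col0 = f11 + f01, f10 + f00   # f+1, f+0
    return (f11 * f00 - f10 * f01) / sqrt(row1 * row0 * col1 * col0)

first = phi(60, 10, 10, 20)
second = phi(20, 10, 10, 60)
print(round(first, 4), round(second, 4))  # 0.5238 0.5238
```

Swapping the roles of presence and absence (60 ↔ 20) leaves φ unchanged, which is why the measure cannot distinguish the two tables.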

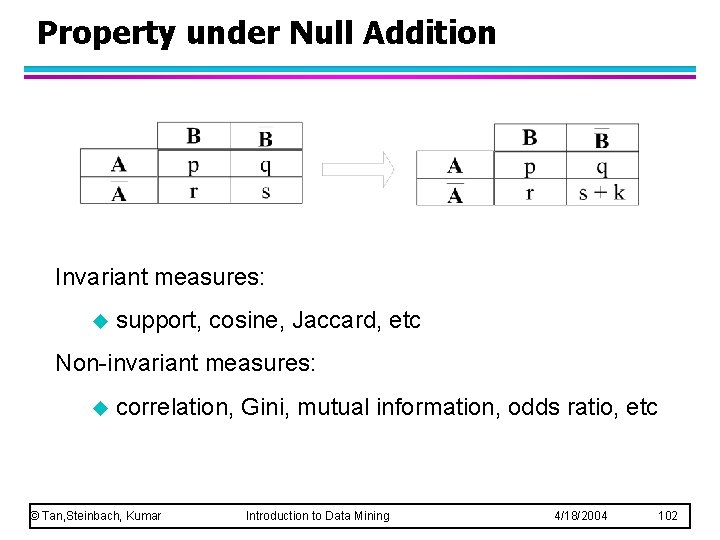

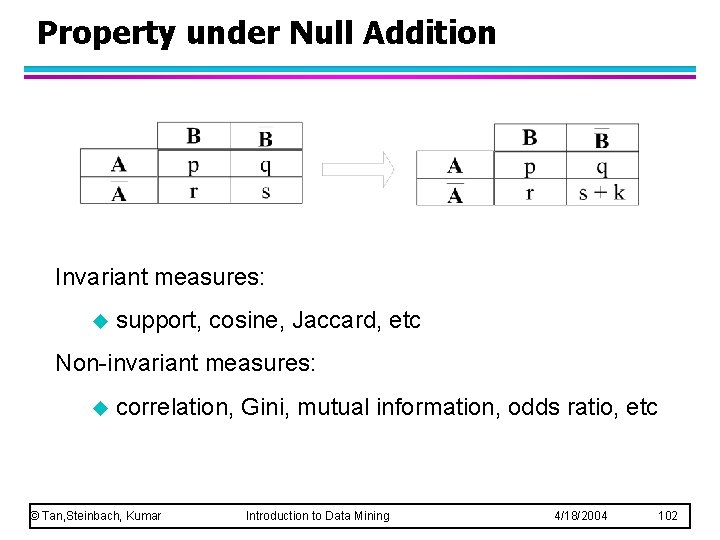

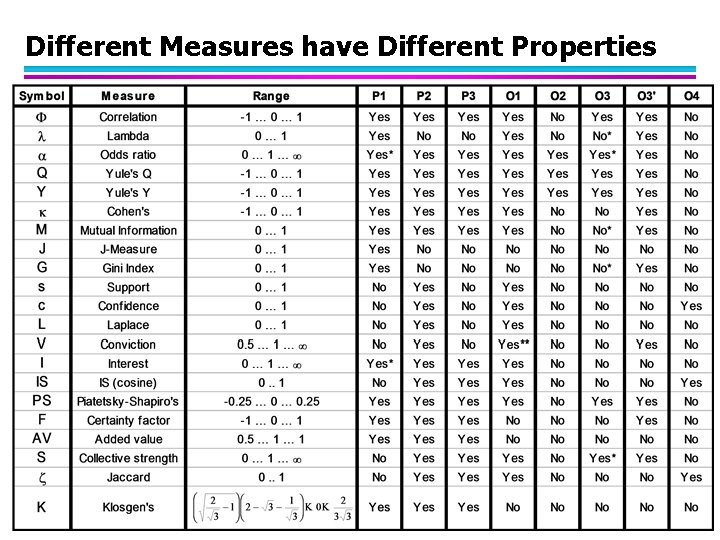

Property under Null Addition

- Invariant measures: support, cosine, Jaccard, etc.
- Non-invariant measures: correlation, Gini, mutual information, odds ratio, etc.

Different Measures have Different Properties