Communicating Efficiently Coding Theory

The Problem

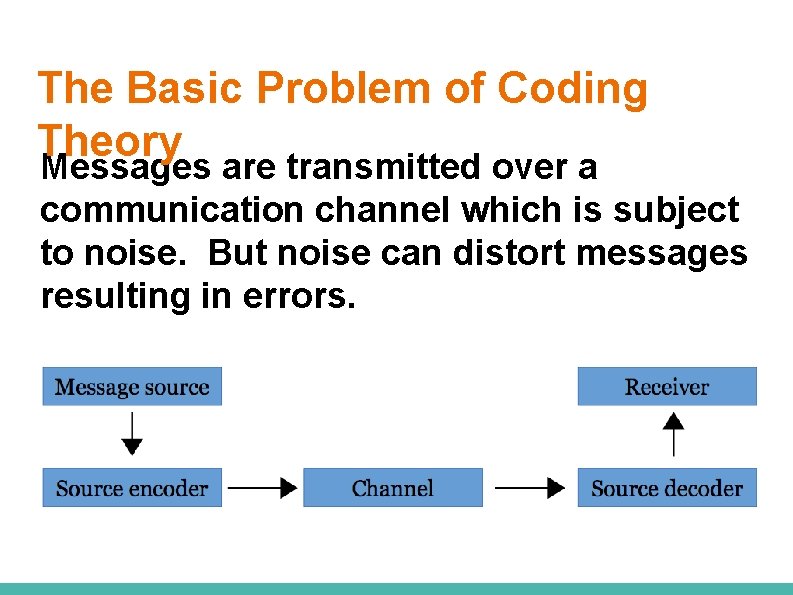

Sending data from one place to another (through a channel) may introduce errors.
● How do you detect such errors?
● How do you correct them?

Mind Test
“It tkaes a geart dael of cuoarge to satnd up to yuor enimees, but a geart dael mroe to satnd up to yuor freidns.” - Dumbledore

Your Brain is a Code-cracking Machine.
For emaxlpe, it deson’t mttaer in waht oredr the ltteers in a wrod aepapr, the olny iprmoatnt tihng is taht the frist and lsat ltteer are in the rghit pcale. The rset can be a toatl mses and you can sitll raed it wouthit pobelrm.

● S1M1L4RLY, Y0UR M1ND 15 R34D1NG 7H15 4U70M471C4LLY W17H0U7 3V3N 7H1NK1NG 4B0U7 17.
● http://www.livescience.com/18392-reading-jumbled-words.html

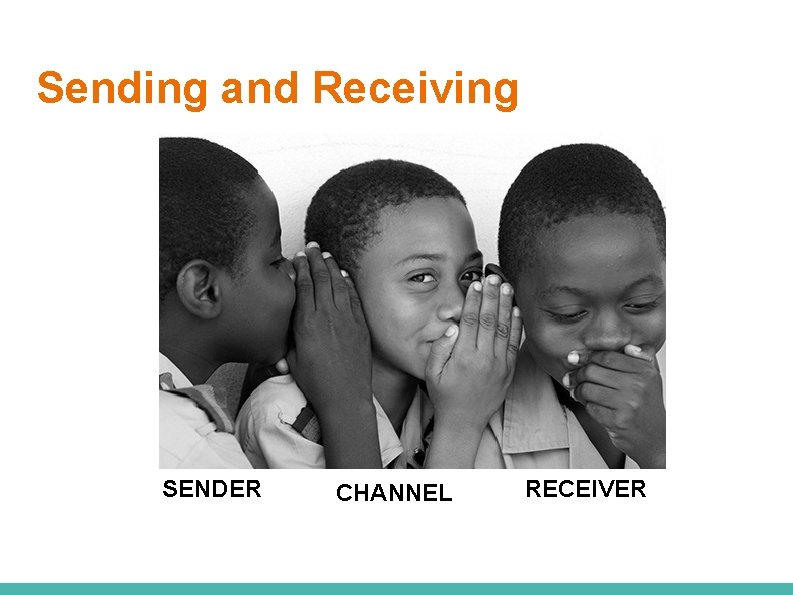

Sending and Receiving
SENDER → CHANNEL → RECEIVER

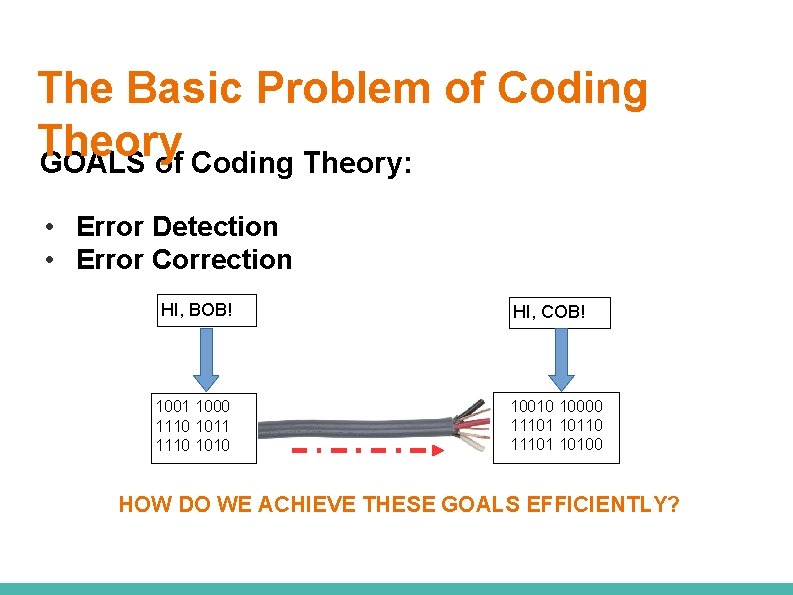

The Basic Problem of Coding Theory
Messages are transmitted over a communication channel that is subject to noise. Noise can distort messages, resulting in errors.

GOALS of Coding Theory:
• Error Detection
• Error Correction
HI, BOB! 1001 1000 1110 1011 1110 1010
HI, COB! 10010 10000 11101 10110 11101 10100
HOW DO WE ACHIEVE THESE GOALS EFFICIENTLY?

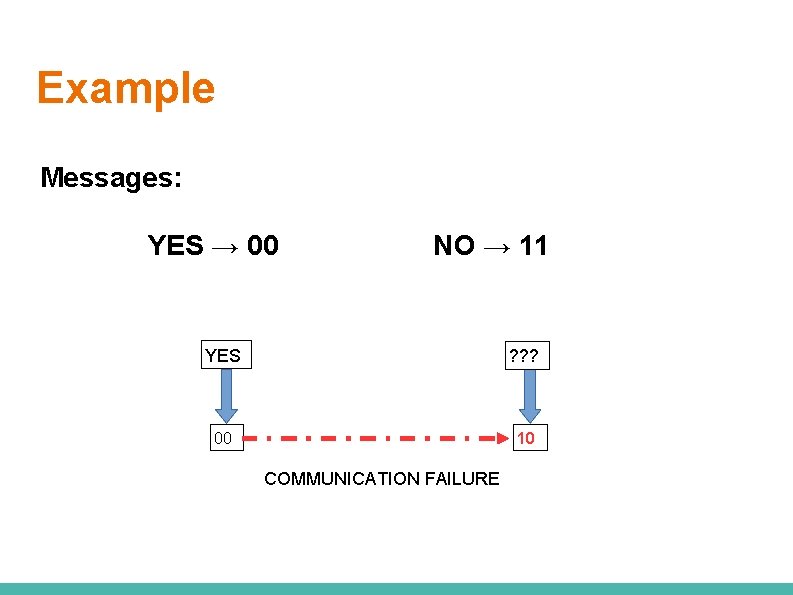

Example
Messages: YES → 00, NO → 11
The sender transmits YES as 00, but the receiver gets 10, which is neither 00 nor 11: ? ? ? COMMUNICATION FAILURE

What is coding theory?

CODING THEORY deals with the design of error-correcting codes for the reliable transmission of information across noisy channels.

Imperfect Transmission
Data is usually transferred in the form of a series of 0s and 1s. However, transmission of data is not perfect.

Some Causes
An electric surge, cross-contamination from another data stream, or human error can easily change some of the 0s to 1s and vice versa.

Noise in Communication
Information media, such as communication systems and data storage devices, are not perfectly reliable in practice because of noise and other forms of interference.

Applications

Applications of Coding Theory
• Transmission of pictures from distant space
• Quality of sound in CDs
• Establishment of computer networks
• Communication through telephone lines
• Messaging through wireless communication

When photographs are transmitted to Earth from deep space, error-control codes are used to guard against the noise caused by atmospheric interruptions.

Compact discs (CDs) use error-control codes so that a CD player can read data from a CD even if the data has been corrupted by imperfections on the disc.

Tasks of Coding Theory

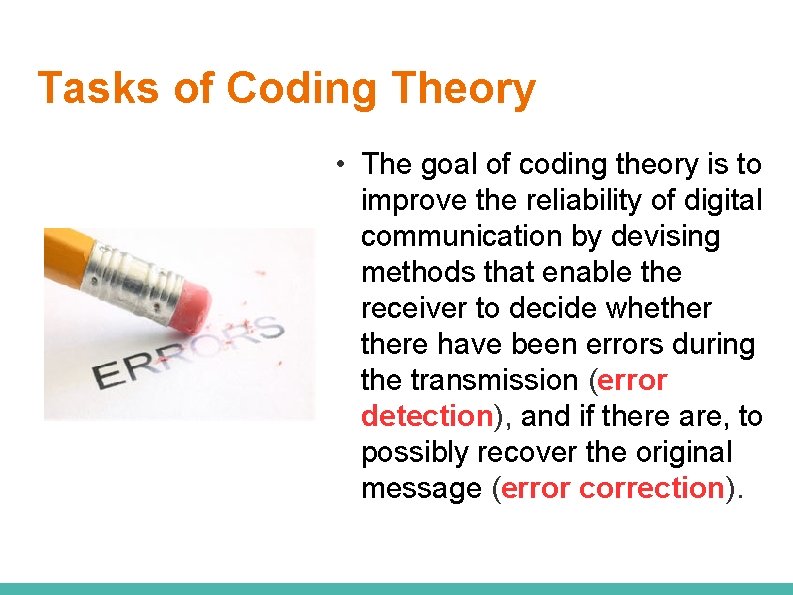

The goal of coding theory is to improve the reliability of digital communication by devising methods that enable the receiver to decide whether there have been errors during transmission (error detection) and, if so, possibly to recover the original message (error correction).

Terms to Understand

Source Coding
Source coding converts the output of a message source, such as a data terminal or the human voice, into a code suitable for transmission through the channel. The source encoder transforms the source output into a sequence of symbols, which we call a “message”. An example of source coding is the ASCII code.

Terminologies
Codeword: a string of 0s and 1s representing an actual message.
Stop → 000
Go → 101
Wait → 110

In this example, 000, 101, and 110 are codewords; 100, 111, 001, 010, and 011 are NOT codewords.
The LENGTH OF A CODEWORD is the number of binary digits it has.

Code: the collection or set of all codewords.
EXAMPLE: For Stop → 000, Go → 101, Wait → 110, the code is C = {000, 101, 110}.

Encode: a “message” becomes a “codeword”.
STOP → SOURCE ENCODER → 000

Decode: a “received word” is converted back into a “message”.
000 → SOURCE DECODER → STOP

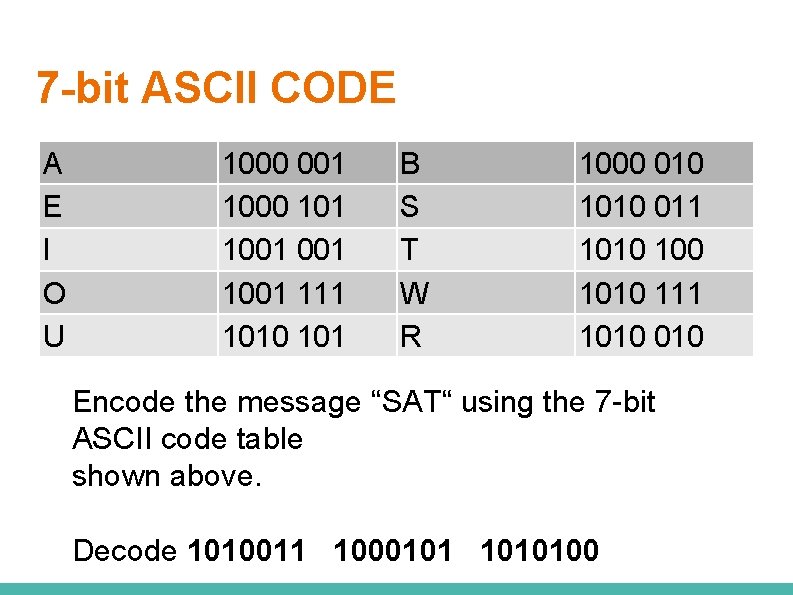

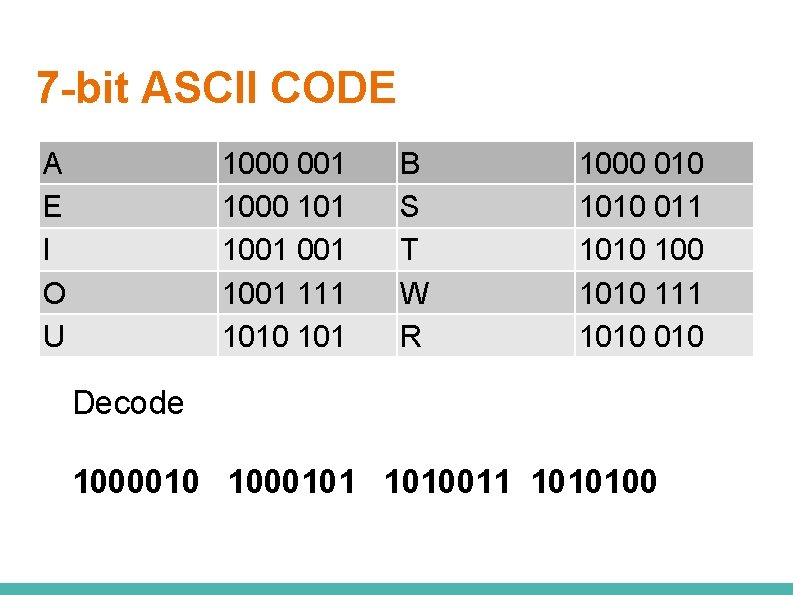

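7-bit ASCII Code

| Letter | Code | Letter | Code |
|---|---|---|---|
| A | 1000001 | B | 1000010 |
| E | 1000101 | S | 1010011 |
| I | 1001001 | T | 1010100 |
| O | 1001111 | W | 1010111 |
| U | 1010101 | R | 1010010 |

Encode the message “SAT” using the 7-bit ASCII code table shown above.
Decode 1010011 1000101 1010100.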

Decode 1000010 1000101 1010011 1010100.
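One way to check these exercises is a minimal Python sketch that relies on Python's built-in `ord`/`chr`, which agree with standard 7-bit ASCII for these capital letters. The helper names `encode_ascii` and `decode_ascii` are hypothetical and chosen only for illustration.

```python
def encode_ascii(message: str) -> list[str]:
    """Encode each character as its 7-bit ASCII code, written as a string of 0s and 1s."""
    return [format(ord(ch), "07b") for ch in message]


def decode_ascii(words: list[str]) -> str:
    """Decode a list of 7-bit binary strings back into characters."""
    return "".join(chr(int(word, 2)) for word in words)


print(encode_ascii("SAT"))
print(decode_ascii(["1010011", "1000101", "1010100"]))
print(decode_ascii(["1000010", "1000101", "1010011", "1010100"]))
```

The `"07b"` format pads every code to exactly seven binary digits, which matches the table.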

Simple Codes

Parity Check Codes
The simplest form of error detection is parity, where a single bit is appended to a bit string. A bit string has odd parity if the number of 1s in the string is odd, and even parity if the number of 1s is even. Even parity is more common, but both are used.
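The rule for choosing the appended bit fits in a few lines of Python. This is a minimal sketch that assumes bit strings are written as Python strings of `"0"` and `"1"`. The names `parity_bit` and `add_parity` are illustrative, not from the slides.

```python
def parity_bit(bits: str, even: bool = True) -> str:
    """Return the bit that gives `bits` even (default) or odd parity once appended."""
    ones = bits.count("1")
    if even:
        # Even parity: add a 1 only when the count of 1s is currently odd.
        return "1" if ones % 2 == 1 else "0"
    # Odd parity: add a 1 only when the count of 1s is currently even.
    return "0" if ones % 2 == 1 else "1"


def add_parity(bits: str, even: bool = True) -> str:
    """Append a parity check digit to a bit string."""
    return bits + parity_bit(bits, even)


for word in ["000", "100", "010", "110"]:
    print(word, "->", add_parity(word), "(even)", add_parity(word, even=False), "(odd)")
```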

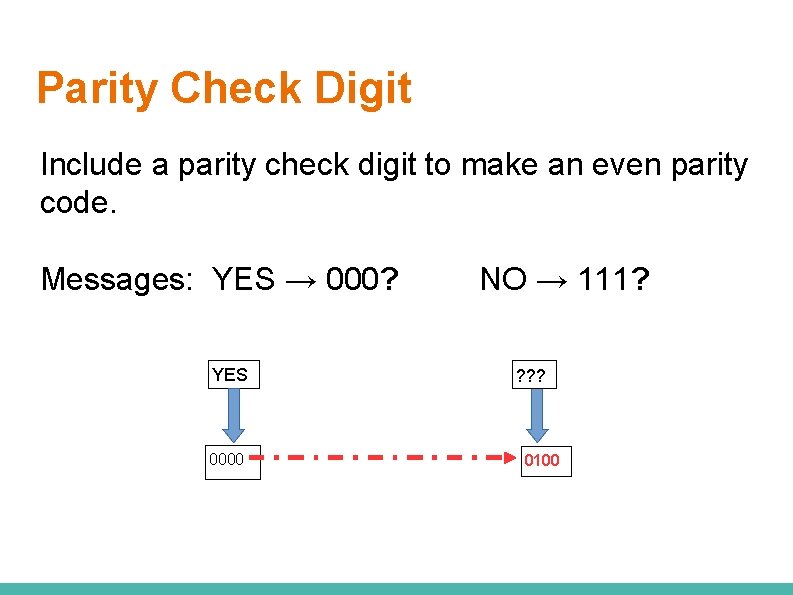

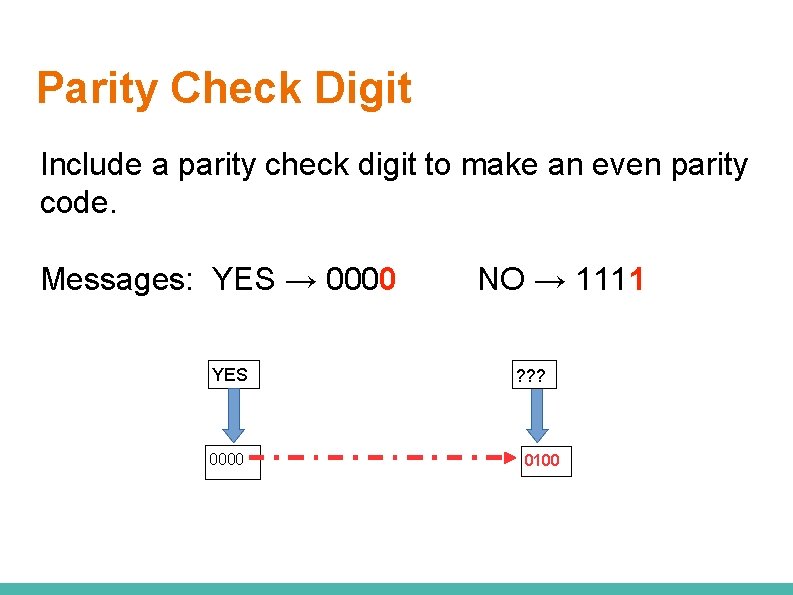


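Parity Check Digit
Include a parity check digit to make an even parity code.
Messages: YES → 0000, NO → 1111
The sender transmits YES as 0000, but the receiver gets 0100 ? ? ?
Since 0100 has an odd number of 1s, it is not a codeword, so the receiver knows that an error occurred.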
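On the receiving side, the check reduces to counting 1s. The following is a minimal sketch, assuming the YES/NO even parity code above. `has_even_parity` is a hypothetical helper name.

```python
def has_even_parity(word: str) -> bool:
    """True if the word contains an even number of 1s."""
    return word.count("1") % 2 == 0


codewords = {"0000": "YES", "1111": "NO"}
received = "0100"

if has_even_parity(received):
    # Parity passes, but the word may still fail to be a codeword.
    print("Parity check passed:", codewords.get(received, "not a codeword"))
else:
    print("Error detected: parity check failed for", received)
```

A single parity bit detects any odd number of flipped bits but misses an even number. For example, two flips can turn 0000 into 0011, which still has even parity.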

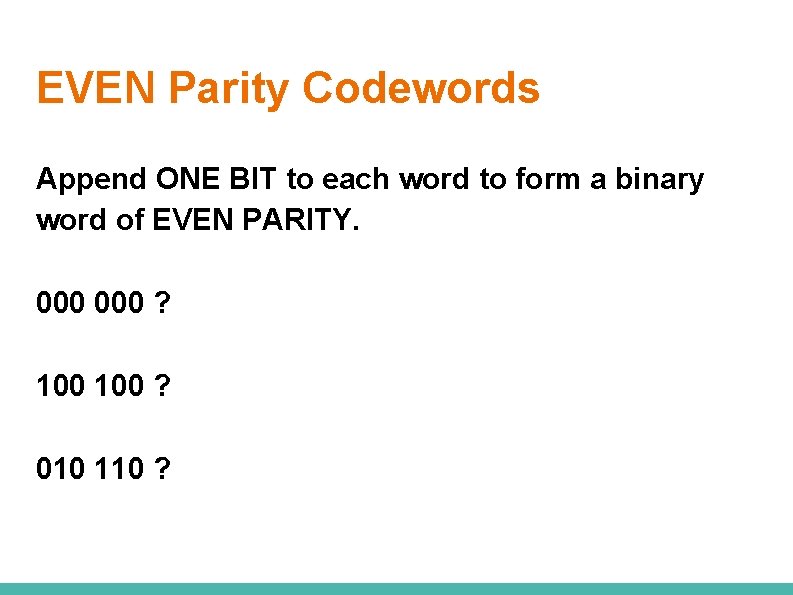

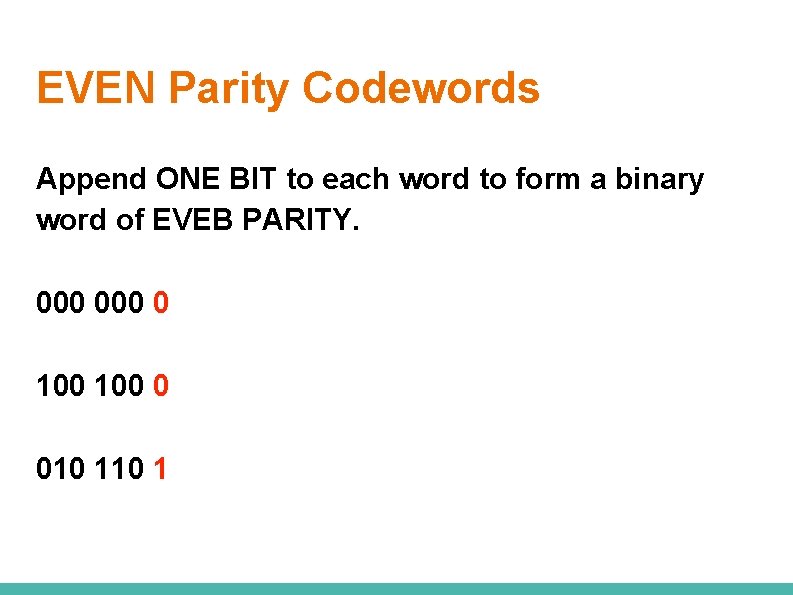


EVEN Parity Codewords
Append ONE BIT to each word to form a binary word of EVEN PARITY.
000 → 0000
100 → 1001
010 → 0101
110 → 1100

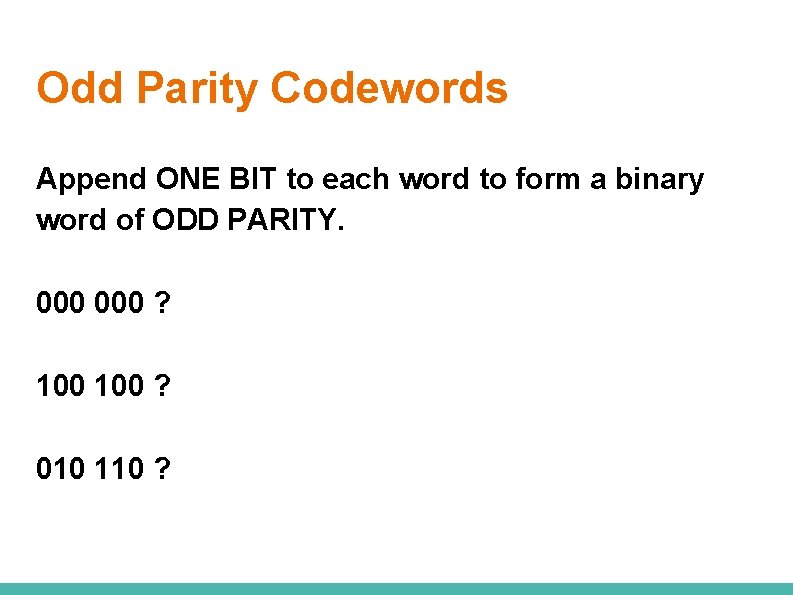

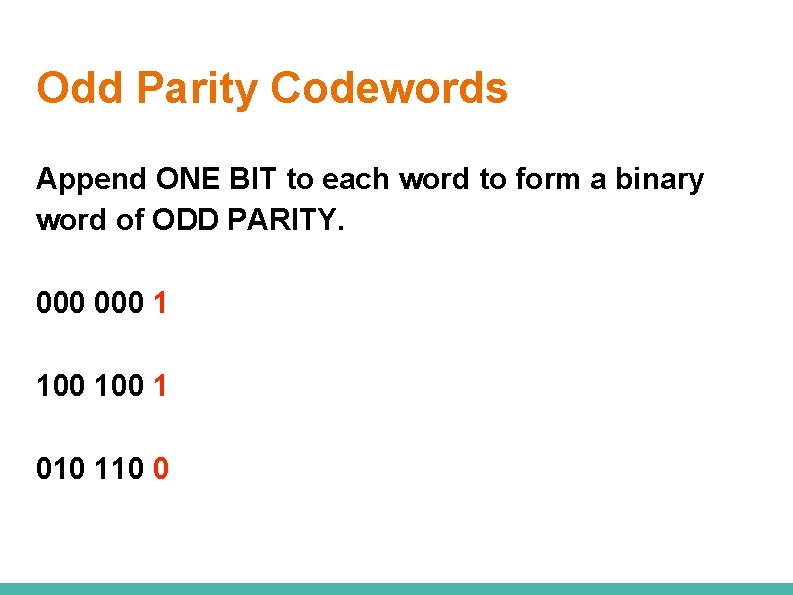


Odd Parity Codewords
Append ONE BIT to each word to form a binary word of ODD PARITY.
000 → 0001
100 → 1000
010 → 0100
110 → 1101

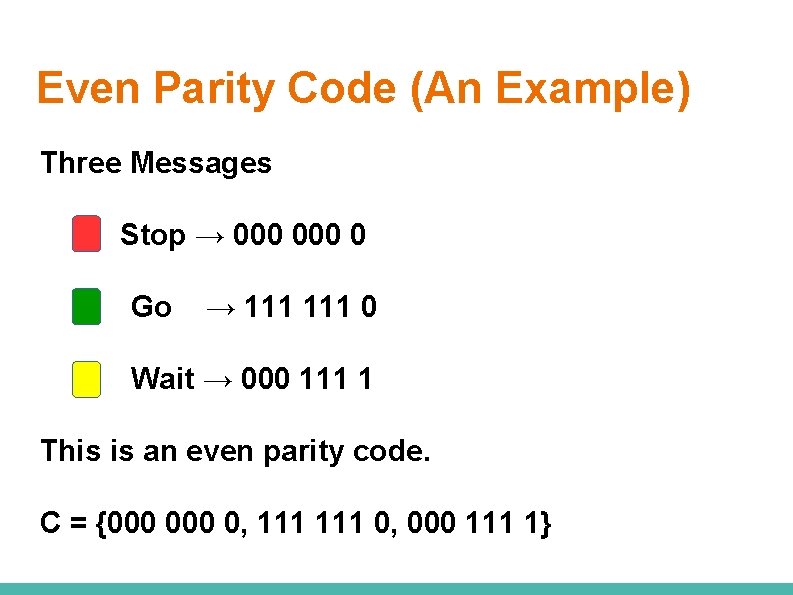

Even Parity Code (An Example)
Three messages:
Stop → 0000
Go → 1111
Wait → 0001111
Each codeword contains an even number of 1s, so this is an even parity code: C = {0000, 1111, 0001111}.

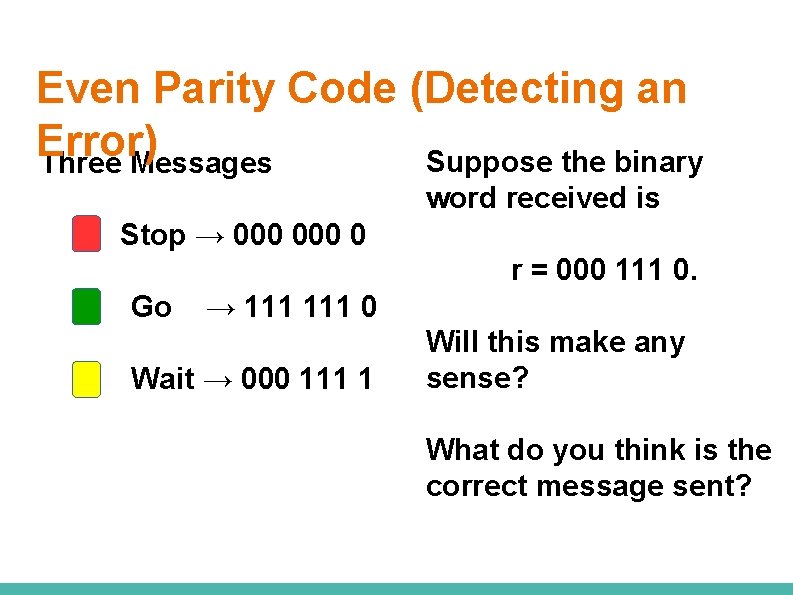

Even Parity Code (Detecting an Error)
Using the same three messages, suppose the binary word received is r = 0001110. Does this make any sense? What do you think is the correct message sent?

Odd Parity Code (An Example)
Write all codewords in an odd parity code of length 4.
• Identify all binary words of length 3.
• How many are there?
• Add a parity check digit to each binary word to create all codewords of length 4.

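Odd Parity Code of Length 4

| Binary word | Codeword |
|---|---|
| 000 | 0001 |
| 001 | 0010 |
| 010 | 0100 |
| 011 | 0111 |
| 100 | 1000 |
| 101 | 1011 |
| 110 | 1101 |
| 111 | 1110 |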
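To generate the full code mechanically, enumerate all 2^n binary words of length n with `itertools.product` and append a parity digit to each one. This is a sketch; `parity_code` is an illustrative name, and the same function covers even parity when `even=True`.

```python
from itertools import product


def parity_code(n: int, even: bool = True) -> list[str]:
    """All codewords of length n + 1 formed by adding a parity digit to every binary word of length n."""
    code = []
    for bits in product("01", repeat=n):
        word = "".join(bits)
        ones = word.count("1")
        # Choose the check digit so the total number of 1s has the wanted parity.
        check = ones % 2 if even else 1 - ones % 2
        code.append(word + str(check))
    return code


odd_code = parity_code(3, even=False)
print(len(odd_code), odd_code)
```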

Repetition Codes
The simplest possible error-correcting code is the repetition code. For instance, to send the message 1010, we could repeat each bit a fixed number of times, say r = 3 times, and obtain 111 000 111 000.

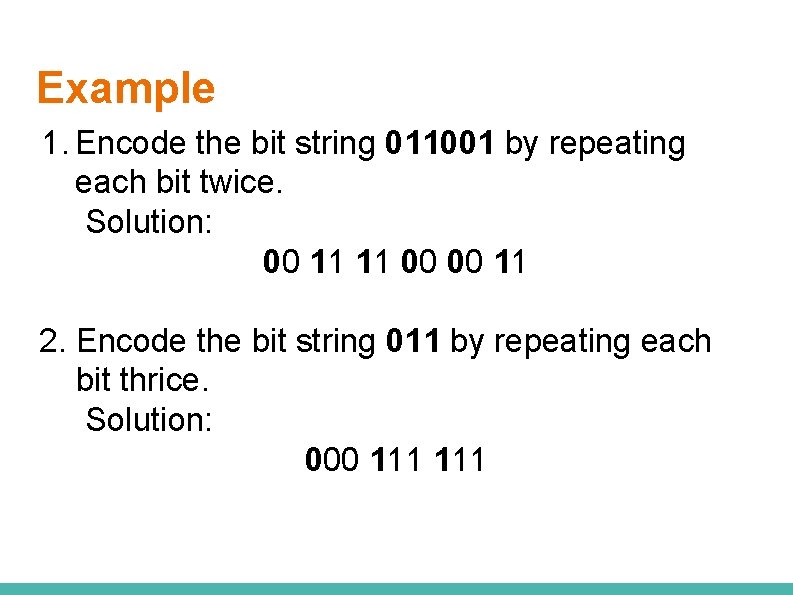

Example
1. Encode the bit string 011001 by repeating each bit twice.
Solution: 00 11 11 00 00 11
2. Encode the bit string 011 by repeating each bit three times.
Solution: 000 111 111
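Repetition encoding is a one-liner in Python. This is a minimal sketch, assuming the message is a string of `"0"`/`"1"` characters. `repeat_encode` is a hypothetical name, and the output omits the grouping spaces used on the slides.

```python
def repeat_encode(bits: str, r: int) -> str:
    """Repetition code: repeat every bit of the message r times."""
    return "".join(bit * r for bit in bits)


print(repeat_encode("1010", 3))
print(repeat_encode("011001", 2))
print(repeat_encode("011", 3))
```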

Majority decoding
Consider a code where each bit is repeated 3 times. Suppose we want to transmit the bit string 0110100. If no error is made during transmission, the receiver gets 000 111 111 000 111 000 000.

Now assume that the receiver gets 000 111 110 000 111 000 010. Has there been any error in this transmitted word? How do you correct it?

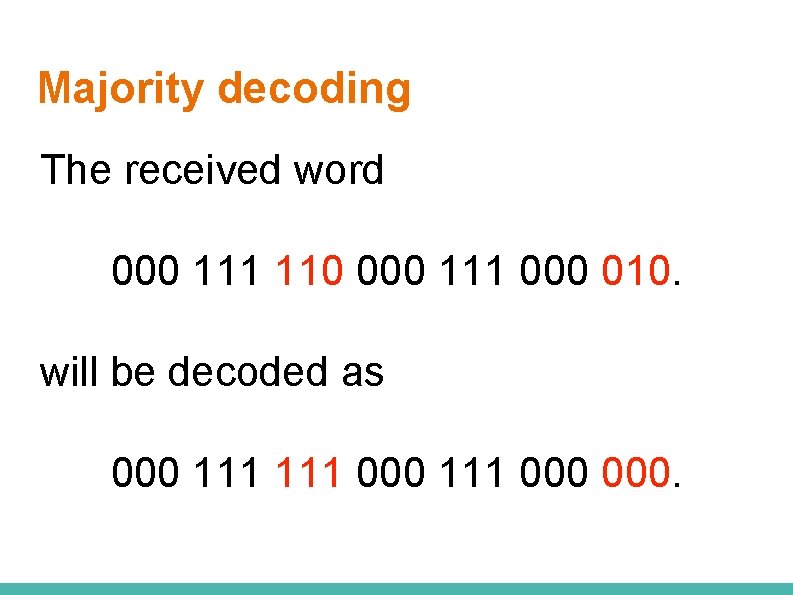

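Each block of three bits is decoded to the bit that appears in the majority of its positions. The received word 000 111 110 000 111 000 010 is therefore corrected to 000 111 111 000 111 000 000 and decoded as the message 0110100.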
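Majority decoding can be sketched as follows. The sketch assumes an odd repetition factor r (so no block can tie) and a received word written without spaces. `majority_decode` is an illustrative name.

```python
def majority_decode(received: str, r: int) -> str:
    """Decode a repetition code by majority vote over each block of r bits (r odd)."""
    if r % 2 == 0 or len(received) % r != 0:
        raise ValueError("r must be odd and divide the length of the received word")
    message = []
    for i in range(0, len(received), r):
        block = received[i:i + r]
        # A block decodes to 1 when more than half of its bits are 1.
        message.append("1" if block.count("1") > r // 2 else "0")
    return "".join(message)


received = "000111110000111000010"  # 000 111 110 000 111 000 010 without spaces
print(majority_decode(received, 3))
```

With r = 3, this corrects one flipped bit per block. Two flips in the same block outvote the correct bit and produce a wrong decoding.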

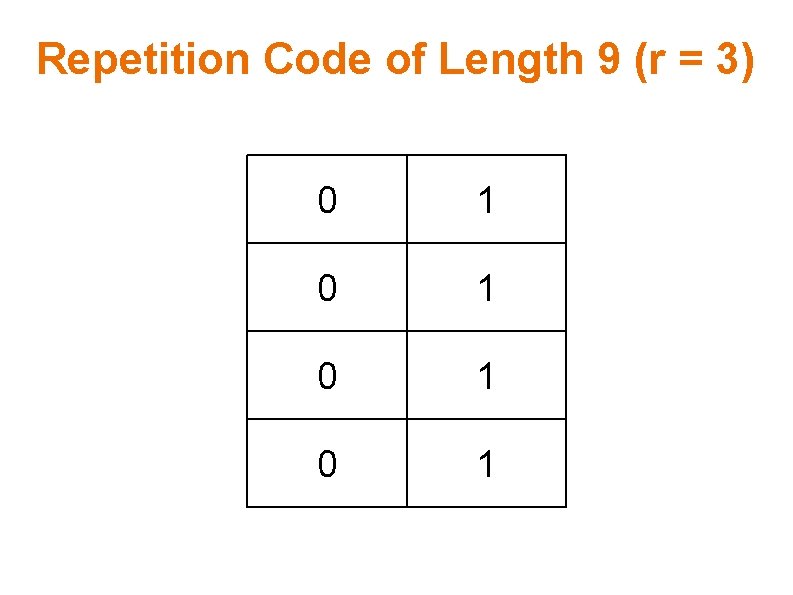

Repetition Code (An Example)
Write all codewords in a repetition code of length 9 with r = 3.
• Identify all binary words of length 3.
• How many are there?
• Repeat every bit in each binary word 3 times to form the corresponding codeword.

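Repetition Code of Length 9 (r = 3)

| Binary word | Codeword |
|---|---|
| 000 | 000 000 000 |
| 001 | 000 000 111 |
| 010 | 000 111 000 |
| 011 | 000 111 111 |
| 100 | 111 000 000 |
| 101 | 111 000 111 |
| 110 | 111 111 000 |
| 111 | 111 111 111 |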
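The same enumeration can be automated by combining `itertools.product` with bit repetition. In this sketch, `repetition_code` is a hypothetical helper name. It takes k, the message length, and r, the repetition factor, so the codeword length is k·r.

```python
from itertools import product


def repetition_code(k: int, r: int) -> list[str]:
    """All codewords obtained by repeating each bit of every length-k binary word r times."""
    return ["".join(bit * r for bit in bits) for bits in product("01", repeat=k)]


code = repetition_code(3, 3)
print(len(code))
for word in code:
    print(word)
```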

Error Detection and Error Correction

Hamming Distance
The Hamming distance between two bit strings of the same length is the number of positions in which their corresponding bits differ. If x and y are two bit strings, their Hamming distance is denoted by d(x, y).
What is d(0010, 1100)? What is d(101011, 111111)?
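The definition translates directly into code: compare the strings position by position and count mismatches. The sketch below uses an illustrative function name and rejects strings of unequal length, because the distance is only defined for equal lengths.

```python
def hamming_distance(x: str, y: str) -> int:
    """Number of positions at which two equal-length bit strings differ."""
    if len(x) != len(y):
        raise ValueError("Hamming distance is defined only for strings of equal length")
    return sum(a != b for a, b in zip(x, y))


print(hamming_distance("0010", "1100"))
print(hamming_distance("101011", "111111"))
```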

Nearest Neighbor Decoding
• Suppose that when a codeword x from a code C is sent, the bit string y is received.
• If the transmission was error-free, then y is the same as x. If there are transmission errors, then y differs from x. How can we correct the errors, that is, how can we recover x?

One approach is to compute the Hamming distance between y and each codeword in C. To decode y, we then take the codeword at minimum Hamming distance from y, provided that such a codeword is unique.

If the distance between the closest codewords in C is large enough and sufficiently few errors were made in transmission, this codeword should be x. This type of decoding is called nearest neighbor decoding.
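Here is a minimal sketch of nearest neighbor decoding as described above. The function names are hypothetical, and the code assumes all words have the same length. When two or more codewords tie for the smallest distance, the sketch returns `None`, following the "if such a codeword is unique" condition.

```python
from typing import Optional


def hamming_distance(x: str, y: str) -> int:
    """Number of positions at which two equal-length bit strings differ."""
    if len(x) != len(y):
        raise ValueError("words must have equal length")
    return sum(a != b for a, b in zip(x, y))


def nearest_neighbor_decode(y: str, code: list[str]) -> Optional[str]:
    """Return the unique codeword closest to y, or None when several codewords tie."""
    ranked = sorted((hamming_distance(y, c), c) for c in code)
    if len(ranked) > 1 and ranked[0][0] == ranked[1][0]:
        return None  # no unique nearest codeword, so decoding fails
    return ranked[0][1]


C = ["00000000", "00001111", "11111111"]
print(nearest_neighbor_decode("11111010", C))
```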

Example
Given the code C = {00000000, 00001111, 11111111}, decode the received word y = 11111010 using the nearest neighbor decoding process.

We find the Hamming distance between the received word y and each codeword x in C.

d(y, 00000000) = ?
d(y, 00001111) = ?
d(y, 11111111) = ?

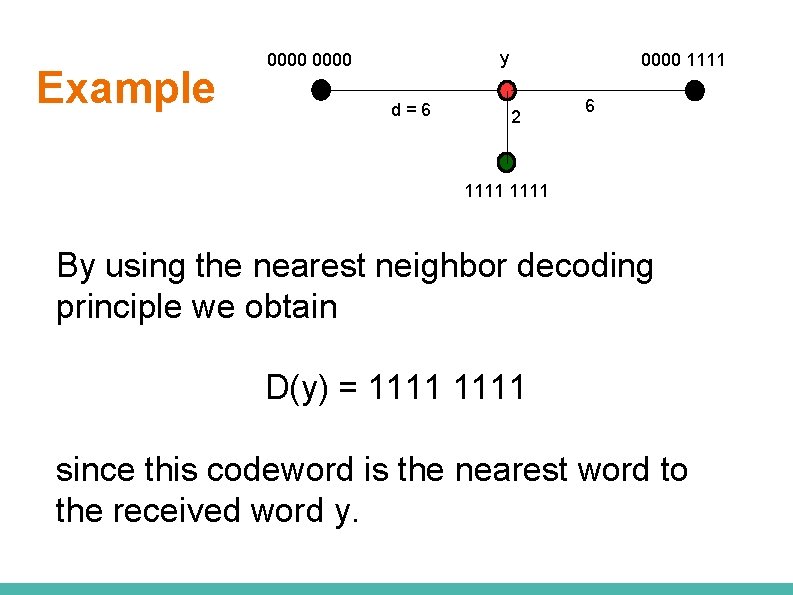

d(y, 00000000) = 6
d(y, 00001111) = 6
d(y, 11111111) = 2
By the nearest neighbor decoding principle, D(y) = 11111111, since this codeword is the nearest to the received word y.

Minimum Distance of a Code
The minimum distance of a code is the smallest distance between any two distinct codewords in the code.

Example: Given C = {111, 110, 011}, we have d(111, 110) = 1, d(111, 011) = 1, and d(110, 011) = 2. Therefore, the minimum distance of C is d(C) = 1.
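The minimum distance is the smallest Hamming distance over all pairs of distinct codewords. In Python, `itertools.combinations` yields each unordered pair once. This sketch assumes the codewords are distinct and of equal length; `minimum_distance` is an illustrative name.

```python
from itertools import combinations


def hamming_distance(x: str, y: str) -> int:
    """Number of positions at which two equal-length bit strings differ."""
    return sum(a != b for a, b in zip(x, y))


def minimum_distance(code: list[str]) -> int:
    """Smallest Hamming distance between any two distinct codewords."""
    return min(hamming_distance(x, y) for x, y in combinations(code, 2))


print(minimum_distance(["111", "110", "011"]))
```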

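Detection and Correction Capability of a Code
Let C be a set of codewords and let 2e + 1 be its minimum Hamming distance. Then:
• it is possible to detect up to 2e errors;
• it is possible to correct up to e errors.
More generally, a code with minimum distance d can detect up to d − 1 errors and correct up to ⌊(d − 1)/2⌋ errors.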
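The two bounds can be computed from the minimum distance alone. This sketch uses the general form d − 1 (detect) and ⌊(d − 1)/2⌋ (correct). When d = 2e + 1, these reduce to 2e and e. The function name `capability` is hypothetical.

```python
def capability(d: int) -> tuple[int, int]:
    """Return (max errors detected, max errors corrected) for minimum distance d."""
    if d < 1:
        raise ValueError("minimum distance must be at least 1")
    return d - 1, (d - 1) // 2


for d in range(1, 6):
    detect, correct = capability(d)
    print(f"d = {d}: detects up to {detect}, corrects up to {correct}")
```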

Error Detection and Error Correction
Suppose a code has minimum distance 2e + 1 = 15. How many errors can this code detect? How many errors can this code correct?

Suppose a code has minimum distance 20. Since 20 is even, it is not of the form 2e + 1. How many errors can this code detect? How many errors can this code correct?

Detection and Correction Capability of a Code

| Minimum Distance | Error Detection/Correction |
|---|---|
| 1 | No detection possible |
| 2 | 1-error detecting |
| 3 | 2-error detecting / 1-error correcting |
| 4 | 3-error detecting / 1-error correcting |
| 5 | 4-error detecting / 2-error correcting |
