Collective Vision: Using Extremely Large Photograph Collections

- Slides: 16

Collective Vision: Using Extremely Large Photograph Collections
Mark Lenz
Camera.Net Seminar
University of Wisconsin – Madison
February 2, 2010
Acknowledgments: These slides combine and modify slides provided by Yantao Zheng et al. (National University of Singapore/Google)

Last Time
• Distributed Collaboration
• Google Goggles
  – Personal object recognition
• World-Wide Landmark Recognition
  – Building the engine

Today
• World-Wide Landmark Recognition
  – Querying the engine
• Building Rome in a Day
  – Distributed matching and reconstruction
• Discussion

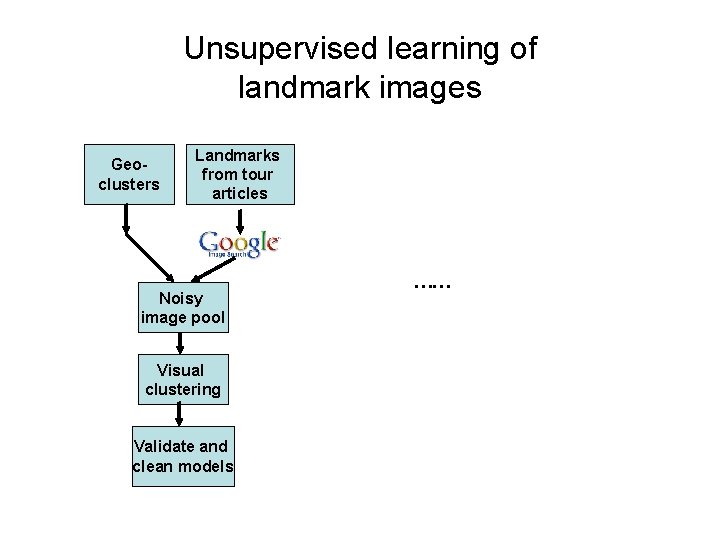

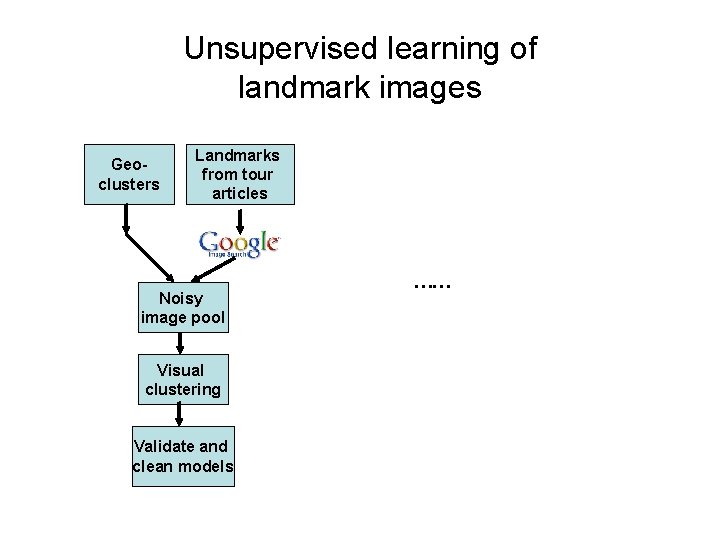

Unsupervised learning of landmark images
• Geoclusters
• Landmarks from tour articles
• Noisy image pool
• Visual clustering
• Validate and clean models ……

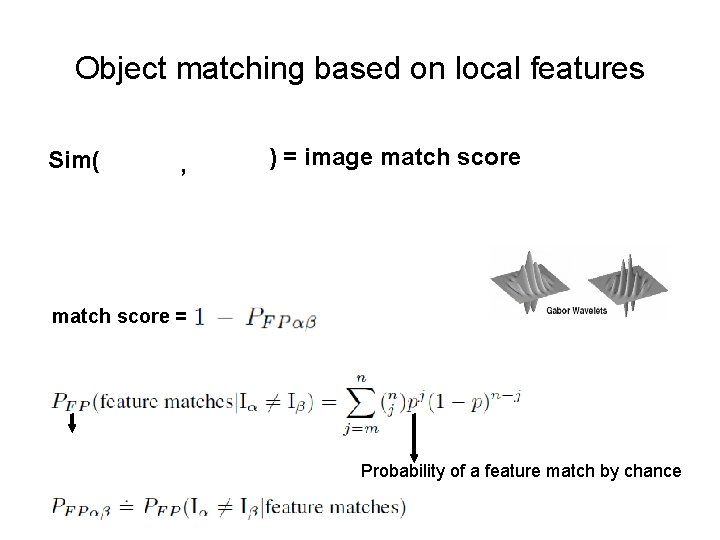

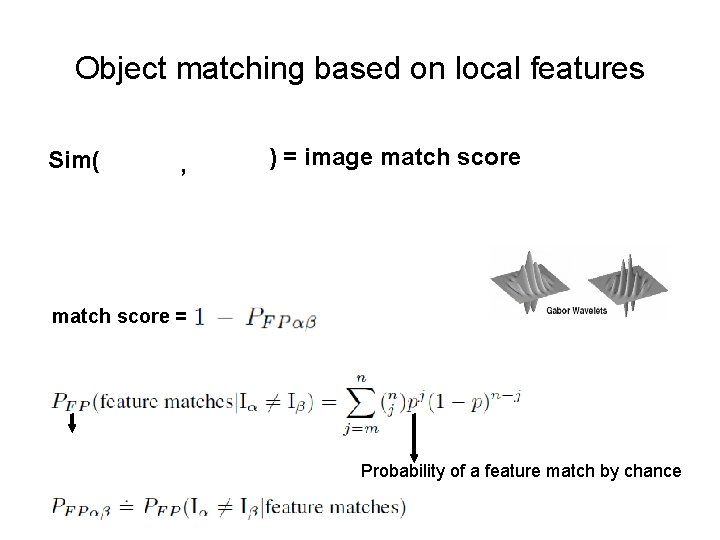

Object matching based on local features
• Sim(image A, image B) = image match score
• [symbol missing] = probability of a feature match by chance

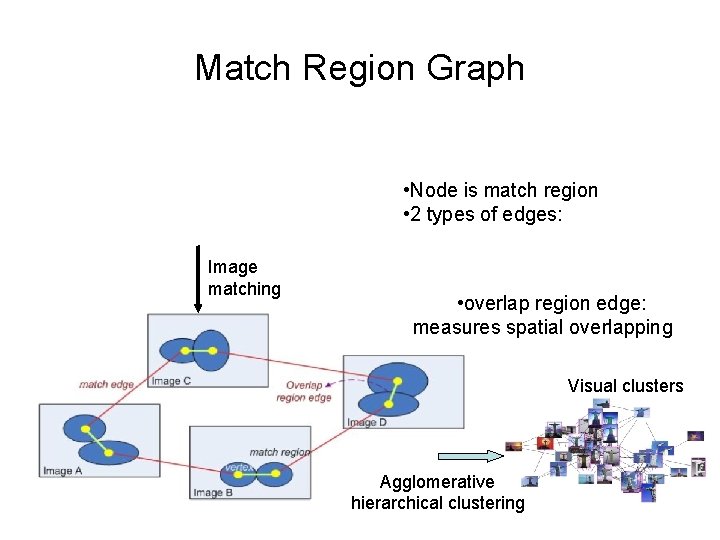

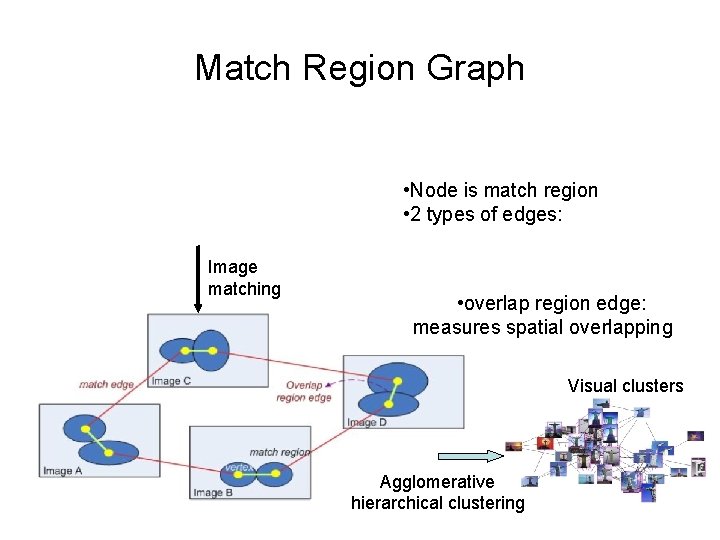

Match Region Graph
• Node is a match region
• 2 types of edges: Image matching
• Overlap region edge: measures spatial overlap
Visual clusters
• Agglomerative hierarchical clustering
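The slide names agglomerative hierarchical clustering but not the linkage rule or the stopping criterion. A minimal sketch, assuming single linkage and a distance cutoff (`stop_at` is a hypothetical parameter), with integers standing in for match regions:

```python
from itertools import combinations

def agglomerative(items, distance, stop_at):
    """Merge the two closest clusters until the closest pair is farther than stop_at.

    Assumption: single linkage (cluster distance = smallest pairwise distance).
    The slide does not say which linkage the authors used.
    """
    clusters = [[x] for x in items]  # start with one cluster per item

    def linkage(a, b):
        return min(distance(x, y) for x in a for y in b)

    while len(clusters) > 1:
        # Find the pair of clusters with the smallest linkage distance.
        i, j = min(
            combinations(range(len(clusters)), 2),
            key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]]),
        )
        if linkage(clusters[i], clusters[j]) > stop_at:
            break  # remaining clusters are too far apart to merge
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]  # safe because i < j
    return clusters

# Toy data: integers on a line, distance = absolute difference.
toy_clusters = agglomerative([0, 1, 2, 50, 51], lambda a, b: abs(a - b), stop_at=5)
print(toy_clusters)
```

In the landmark setting the distance would come from the edge weights of the match region graph; the toy distance here is only a placeholder.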

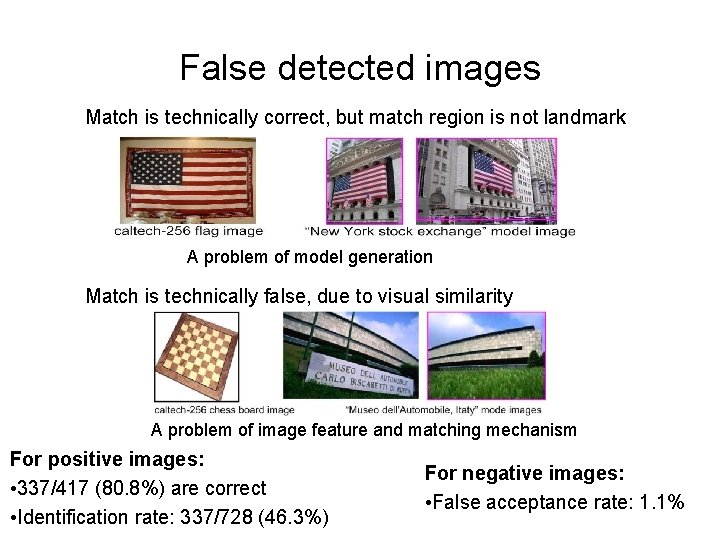

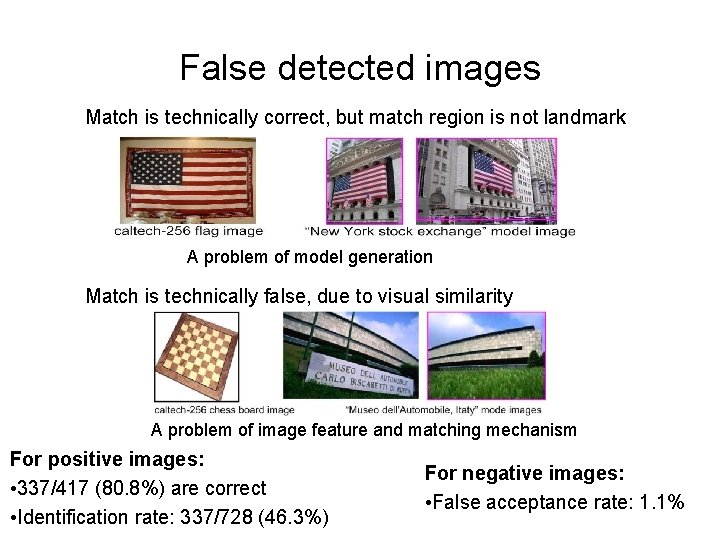

False detected images
• Match is technically correct, but match region is not a landmark
  – A problem of model generation
• Match is technically false, due to visual similarity
  – A problem of image features and matching mechanism
For positive images:
• 337/417 (80.8%) are correct
• Identification rate: 337/728 (46.3%)
For negative images:
• False acceptance rate: 1.1%
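The two percentages for positive images use the same numerator with different denominators. A short check of the arithmetic, reading 417 as the number of positive queries for which some landmark was returned and 728 as all positive queries (that reading is an assumption; the slide gives only the fractions):

```python
correct = 337      # positive queries matched to the right landmark
returned = 417     # assumed: positive queries for which a landmark was returned
positives = 728    # assumed: all positive query images

accuracy_of_returned = correct / returned    # share of returned answers that are right
identification_rate = correct / positives    # share of all positives identified correctly

print(f"{accuracy_of_returned:.1%}")   # → 80.8%
print(f"{identification_rate:.1%}")    # → 46.3%
```

Under that reading, the gap between the two figures comes from positive queries for which nothing was returned.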

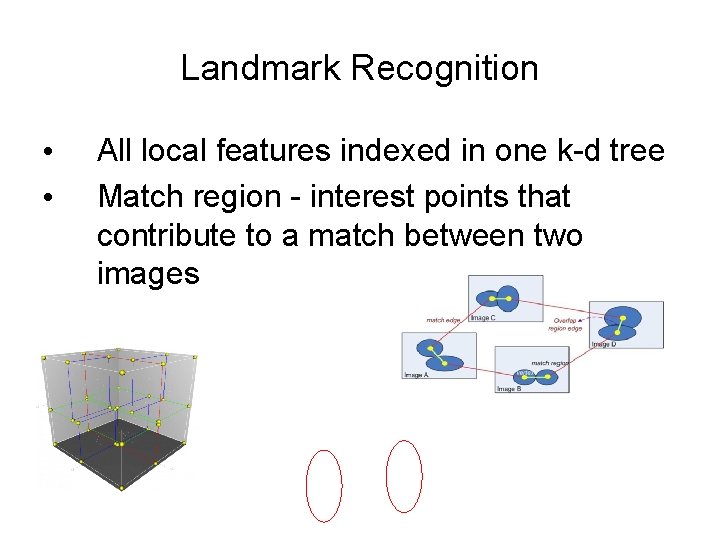

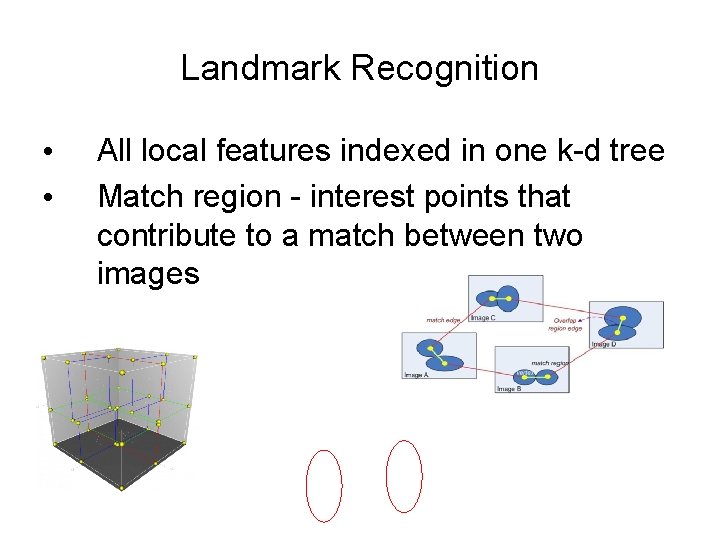

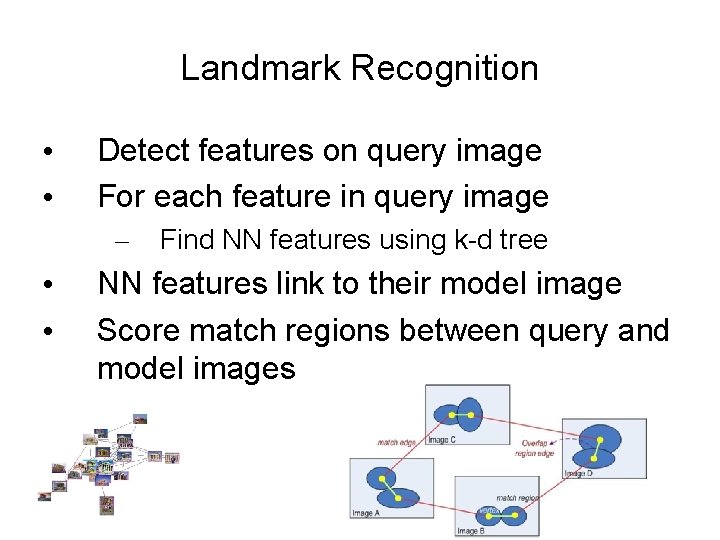

Landmark Recognition
• All local features indexed in one k-d tree
• Match region: interest points that contribute to a match between two images

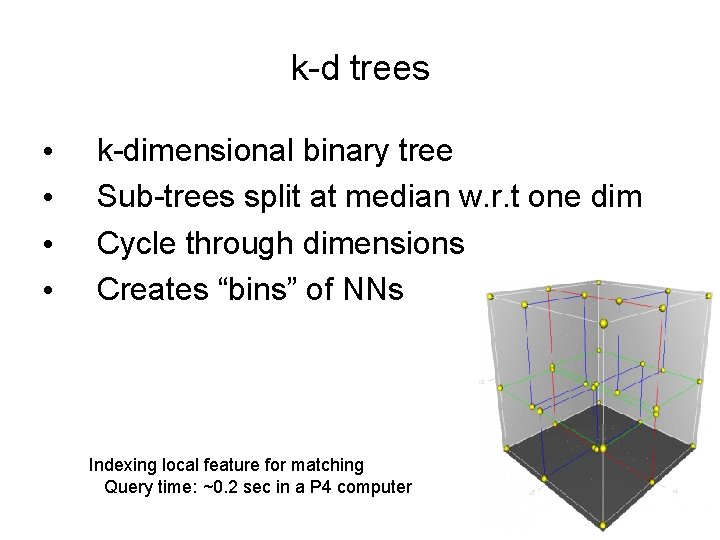

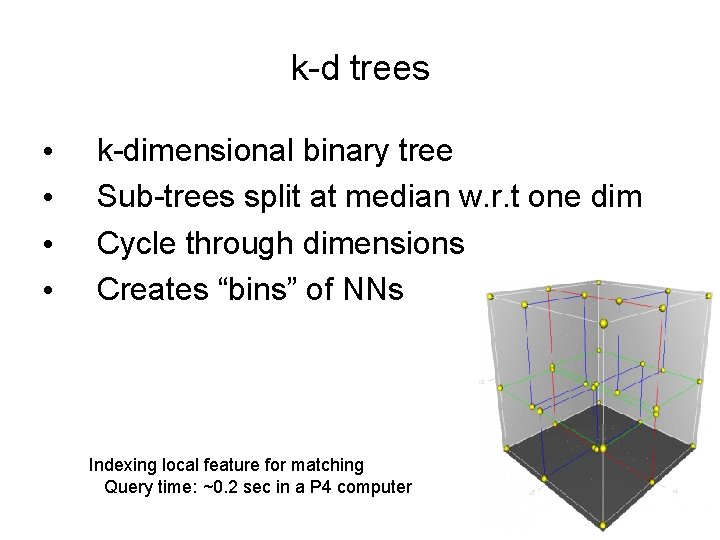

k-d trees
• k-dimensional binary tree
• Sub-trees split at the median w.r.t. one dimension
• Cycle through dimensions
• Creates “bins” of NNs
Indexing local features for matching
• Query time: ~0.2 sec on a P4 computer
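The construction described above (split at the median of one dimension, cycle through dimensions) can be sketched in a few lines. This is an illustrative toy with exact nearest-neighbour search on 2-D points, not the engine's index; high-dimensional descriptors are usually searched with approximate variants.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Point = Tuple[float, ...]

@dataclass
class Node:
    point: Point
    axis: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def build(points: Sequence[Point], depth: int = 0) -> Optional[Node]:
    if not points:
        return None
    axis = depth % len(points[0])                 # cycle through dimensions
    pts = sorted(points, key=lambda p: p[axis])
    mid = len(pts) // 2                           # split at the median on this axis
    return Node(pts[mid], axis,
                build(pts[:mid], depth + 1),
                build(pts[mid + 1:], depth + 1))

def sq_dist(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(node: Optional[Node], query: Point, best: Optional[Point] = None) -> Optional[Point]:
    if node is None:
        return best
    if best is None or sq_dist(query, node.point) < sq_dist(query, best):
        best = node.point
    diff = query[node.axis] - node.point[node.axis]
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = nearest(near, query, best)
    if diff ** 2 < sq_dist(query, best):          # far side may still hold a closer point
        best = nearest(far, query, best)
    return best

points = [(2, 3), (5, 4), (9, 6), (4, 7), (8, 1), (7, 2)]
tree = build(points)
print(nearest(tree, (9, 2)))   # → (8, 1)
```

The pruning test is what makes the tree faster than a linear scan: a sub-tree is skipped whenever the splitting plane is farther away than the best match found so far.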

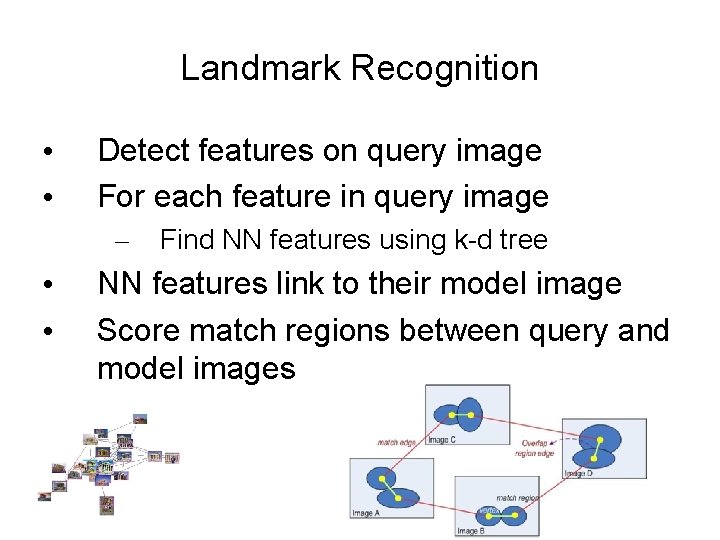

Landmark Recognition
• Detect features on query image
• For each feature in query image
  – Find NN features using k-d tree
  – NN features link to their model image
• Score match regions between query and model images
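The query loop above can be sketched as follows. To keep the example self-contained, a brute-force search stands in for the k-d tree lookup, and `max_sq_dist` is a hypothetical acceptance threshold that the slides do not specify. The descriptors and model names are toy values.

```python
from collections import defaultdict

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def match_query(query_features, index, max_sq_dist):
    """Group query features by the model image their nearest neighbour belongs to.

    index: list of (descriptor, model_id) pairs, i.e. every indexed feature
    links back to its model image.
    Returns {model_id: [indices of the query features that matched it]}.
    """
    matches = defaultdict(list)
    for qi, q in enumerate(query_features):
        # Brute-force NN; the engine would query the k-d tree here instead.
        desc, model_id = min(index, key=lambda entry: sq_dist(q, entry[0]))
        if sq_dist(q, desc) <= max_sq_dist:      # hypothetical distance cutoff
            matches[model_id].append(qi)
    return dict(matches)

index = [
    ((0.0, 0.0), "model_a"),
    ((1.0, 0.0), "model_a"),
    ((10.0, 10.0), "model_b"),
]
query = [(0.1, 0.0), (0.9, 0.1), (5.0, 5.0)]
result = match_query(query, index, max_sq_dist=0.5)
print(result)   # → {'model_a': [0, 1]}
```

The per-model lists are the input to the scoring step: each one records which query interest points landed in that model image.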

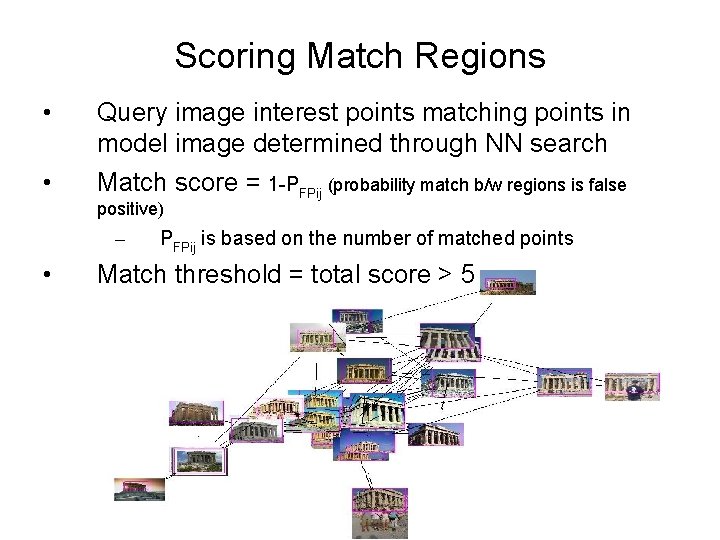

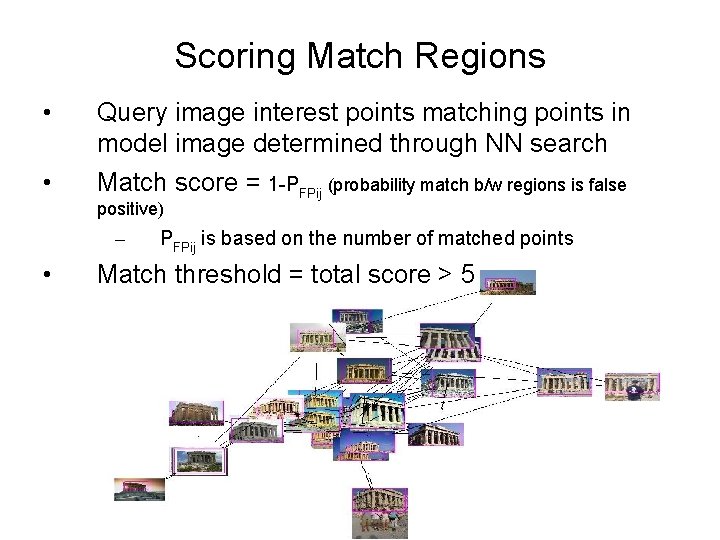

Scoring Match Regions
• Query image interest points matching points in the model image are determined through NN search
• Match score = 1 - PFPij (the probability that the match between regions is a false positive)
  – PFPij is based on the number of matched points
• Match threshold: total score > 5
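The slide states only that PFPij depends on the number of matched points. As an illustration, the sketch below assumes a binomial model: each of `n_features` points matches by chance with probability `p_chance`, and PFP is the probability of seeing at least `n_matched` such chance matches. The model and all three parameter names are assumptions, not the authors' formula.

```python
from math import comb

def p_false_positive(n_features, n_matched, p_chance):
    """Assumed model: binomial tail P(at least n_matched chance matches)."""
    return sum(
        comb(n_features, k) * p_chance ** k * (1 - p_chance) ** (n_features - k)
        for k in range(n_matched, n_features + 1)
    )

def match_score(n_features, n_matched, p_chance):
    # Match score = 1 - PFP, as on the slide.
    return 1.0 - p_false_positive(n_features, n_matched, p_chance)

def passes_threshold(scores, threshold=5.0):
    # Slide: accept when the total score exceeds 5.
    return sum(scores) > threshold

few = match_score(100, 5, 0.05)     # few matched points: low score
many = match_score(100, 20, 0.05)   # many matched points: score near 1
print(few < many)                   # → True
```

Because each score is at most 1, a total above 5 requires matches against at least six regions, which fits the intuition that a query should match multiple model images.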

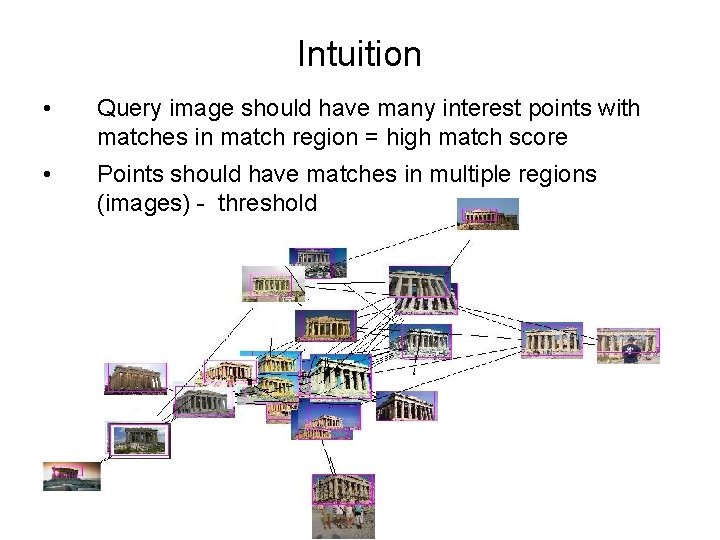

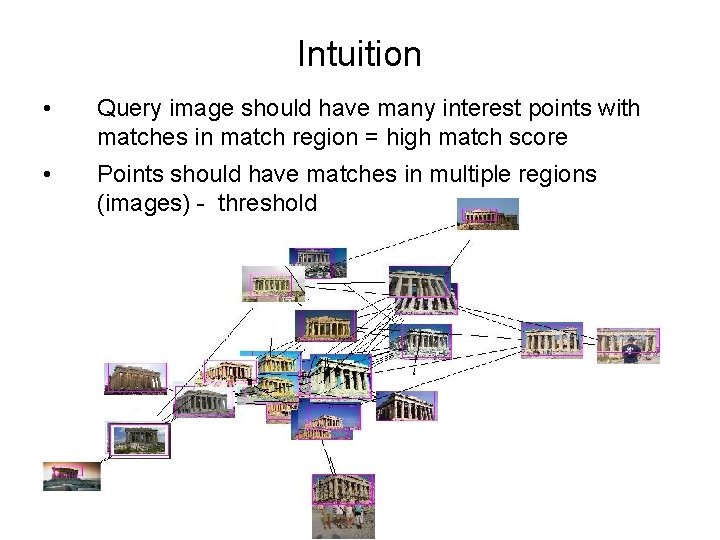

Intuition
• Query image should have many interest points with matches in the match region = high match score
• Points should have matches in multiple regions (images): threshold

Building Rome in a Day
• Use photos from photo-sharing websites to build 3D models of cities
• Web photos are less structured than automated image capture (e.g. aerial)
• Increased efficiency through distributed computations

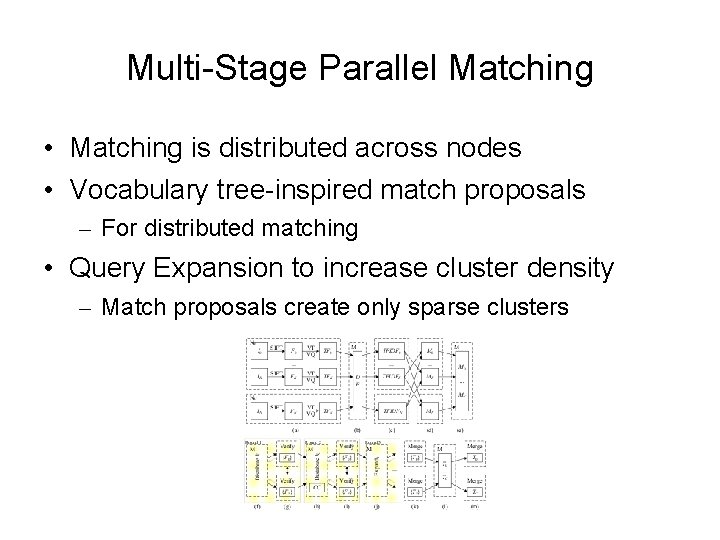

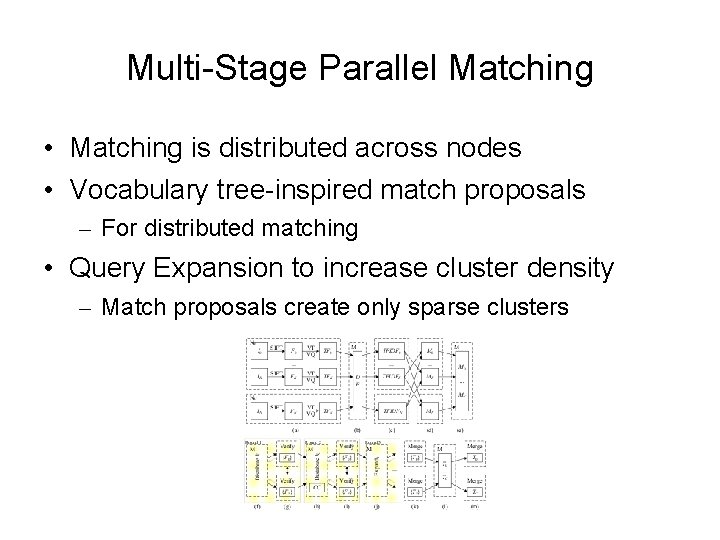

Multi-Stage Parallel Matching
• Matching is distributed across nodes
• Vocabulary tree-inspired match proposals
  – For distributed matching
• Query Expansion to increase cluster density
  – Match proposals create only sparse clusters
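One way to picture query expansion on a sparse match graph: if image A matches B and B matches C, then A and C are worth proposing for verification too. The sketch below assumes that two-hop reading; the function name and the integer image IDs are illustrative only.

```python
def expand_proposals(verified_edges):
    """Propose unverified image pairs that share a verified neighbour.

    verified_edges: iterable of (image_a, image_b) pairs that already matched.
    Returns a set of (a, b) pairs with a < b that are two hops apart.
    """
    neighbours = {}
    for a, b in verified_edges:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)

    verified = {frozenset(edge) for edge in verified_edges}
    proposals = set()
    for nbrs in neighbours.values():
        # Any two neighbours of the same image are candidates for a direct match.
        for a in nbrs:
            for b in nbrs:
                if a < b and frozenset((a, b)) not in verified:
                    proposals.add((a, b))
    return proposals

chain = [(1, 2), (2, 3), (3, 4)]
print(sorted(expand_proposals(chain)))   # → [(1, 3), (2, 4)]
```

Each proposed pair would still go through feature matching and verification; expansion only decides which pairs are worth the cost.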

Conclusion
• Distributed Collaboration
• Google Goggles
  – Personal object recognition
• World-Wide Landmark Recognition
• Building Rome in a Day
  – Distributed matching and reconstruction

Thoughts for Discussion
• Geo-clustering to filter out seldom-traveled/photographed sites
• Match region graph for view comparison
• Pre-tag landmarks such as exits
• Augmented reality
• Distributed matching of features
• Ad-hoc wireless network range
• Other thoughts...