Collaborative capturing and interpretation of interactions Y Sumi

Collaborative capturing and interpretation of interactions Y. Sumi, I. Sadanori, T. Matsuguchi, S. Fels, and K. Mase Pervasive 2004 Workshop on Memory and Sharing of Experiences, pp. 1-7, 2004.

Overview • Introduction • Capturing interactions by multiple sensors • Related works • Implementation • Interpreting interactions • Video summary • Corpus viewer: Tool for analyzing interaction patterns • Conclusions

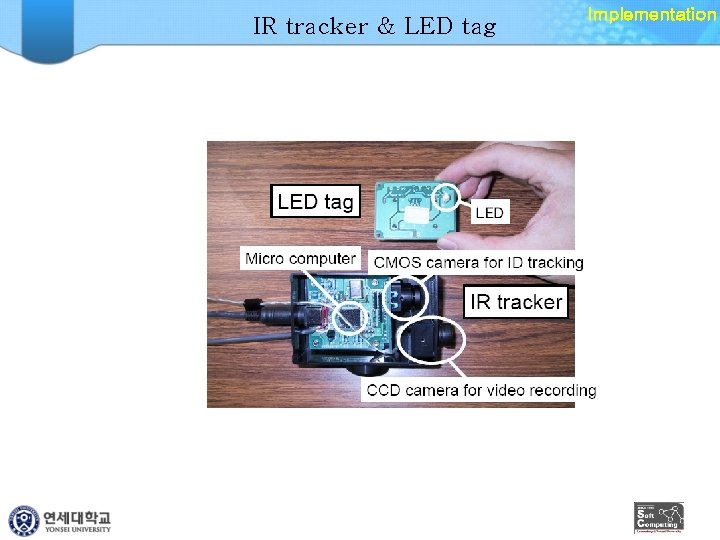

Introduction • Interaction corpus – Action highlights • Generate diary – Social protocols of human interactions • Sensors – Video cameras, microphone and physiological sensors • ID tags – LED tag: infrared LED – IR tracker: • Infrared signal tracking device • Position and identity

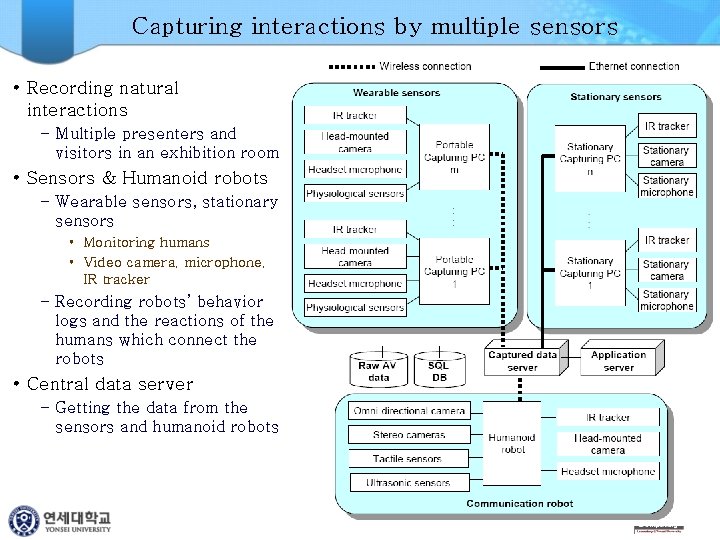

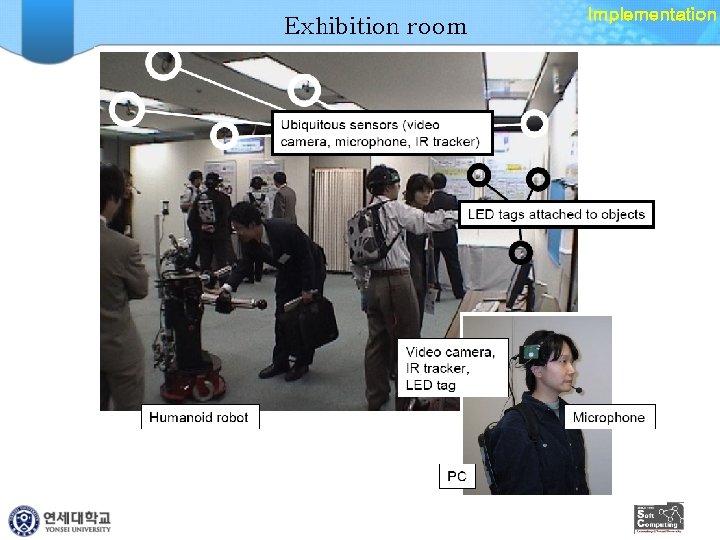

Capturing interactions by multiple sensors • Recording natural interactions – Multiple presenters and visitors in an exhibition room • Sensors & humanoid robots – Wearable sensors, stationary sensors • Monitoring humans • Video camera, microphone, IR tracker – Recording robots’ behavior logs and the reactions of the humans who interact with the robots • Central data server – Collecting the data from the sensors and humanoid robots

Related works • Smart environment – Supporting humans in a room – Smart Rooms, Intelligent Room, Aware Home, KidsRoom and EasyLiving – Recognition of human behavior and understanding of the human’s intention • Wearable systems – Collecting personal daily activities – Intelligent recording system • Video summary systems – The physical quantity of video data captured by fixed cameras

Exhibition room Implementation

IR tracker & LED tag Implementation

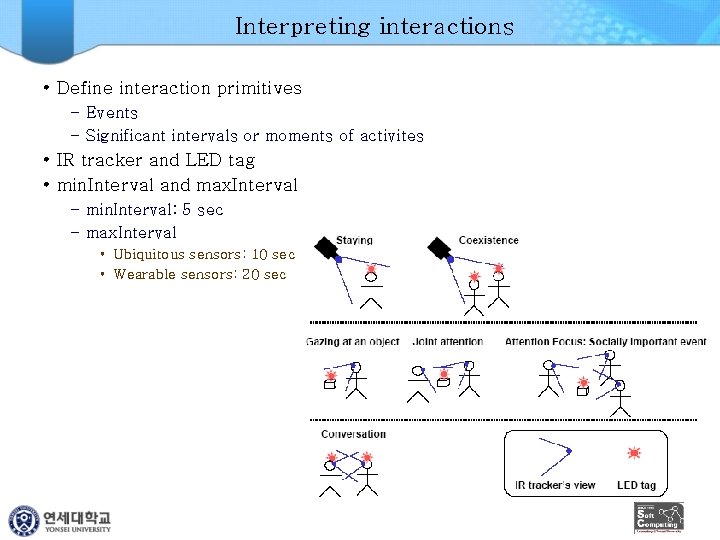

Interpreting interactions • Define interaction primitives – Events – Significant intervals or moments of activities • IR tracker and LED tag • minInterval and maxInterval – minInterval: 5 sec – maxInterval • Ubiquitous sensors: 10 sec • Wearable sensors: 20 sec
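The slide gives only the two thresholds, so the following is a minimal sketch of one plausible reading, not the authors' implementation. It assumes consecutive IR-tracker sightings of the same LED tag belong to one event while the gap between them is at most maxInterval, and that an event is kept only if it lasts at least minInterval. The function name `segment_events` and the sample timestamps are hypothetical.

```python
from typing import List, Tuple

MIN_INTERVAL = 5.0          # seconds, from the slide
MAX_INTERVAL_UBIQ = 10.0    # seconds, ubiquitous (stationary) sensors
MAX_INTERVAL_WEAR = 20.0    # seconds, wearable sensors


def segment_events(timestamps: List[float],
                   max_interval: float,
                   min_interval: float = MIN_INTERVAL) -> List[Tuple[float, float]]:
    """Group sighting timestamps (in seconds) into (start, end) events.

    Assumed rule: a gap larger than max_interval closes the current
    event; events shorter than min_interval are discarded.
    """
    events: List[Tuple[float, float]] = []
    if not timestamps:
        return events
    ordered = sorted(timestamps)
    start = prev = ordered[0]
    for t in ordered[1:]:
        if t - prev > max_interval:
            # Gap too long: close the running event if it is long enough.
            if prev - start >= min_interval:
                events.append((start, prev))
            start = t
        prev = t
    # Close the final running event.
    if prev - start >= min_interval:
        events.append((start, prev))
    return events


# Hypothetical sightings of one LED tag by one IR tracker.
sightings = [0, 2, 4, 6, 30, 31, 50, 58, 66]
ubiquitous_events = segment_events(sightings, MAX_INTERVAL_UBIQ)
wearable_events = segment_events(sightings, MAX_INTERVAL_WEAR)
```

With the larger wearable threshold, the short burst at 30 to 31 seconds is merged into the later sightings instead of being dropped, which shows how the choice of maxInterval changes the resulting events.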

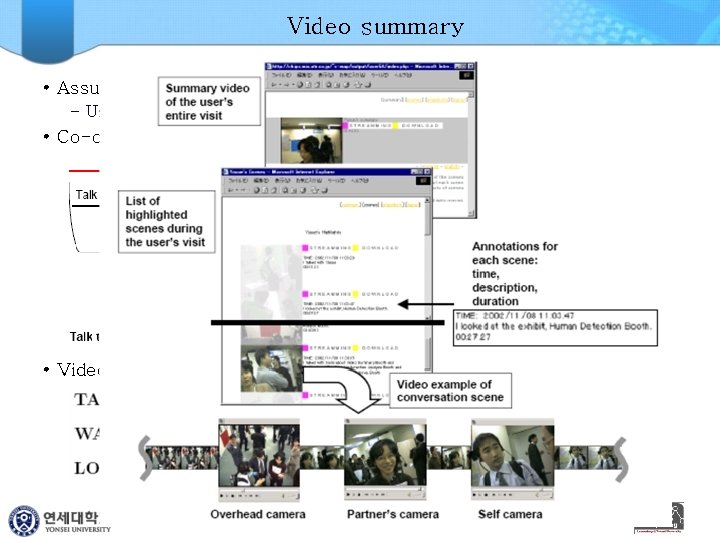

Video summary • Assumptions – User, Booth • Co-occurrences • Video summarization

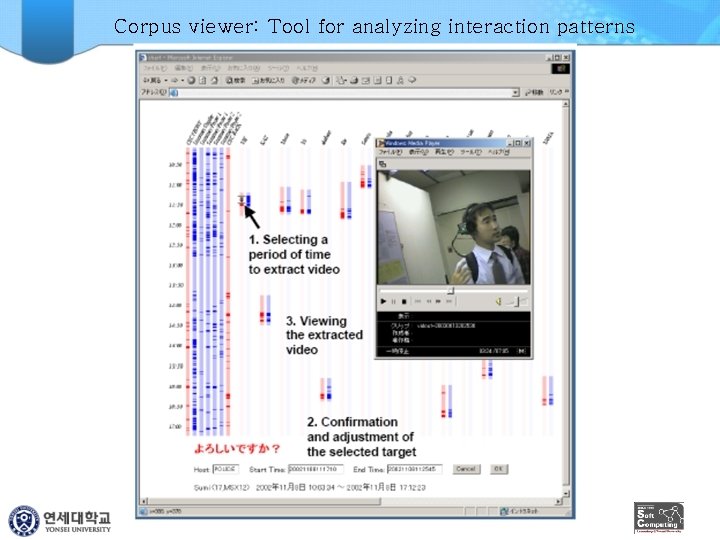

Corpus viewer: Tool for analyzing interaction patterns

Conclusions • Method to build an interaction corpus using multiple sensors • Segment and interpret interactions from huge data • Provide a video summary • Help social scientists

Using context and similarity for face and location identification M. Davis, M. Smith, F. Stentiford, A. Bamidele, J. Canny, N. Good, S. King and R. Janakiraman Proceedings of the IS&T/SPIE 18th Annual Symposium on Electronic Imaging Science and Technology Internet Imaging VII, 2006.

Overview • Introduction • System Overview • Content Analysis • Experimental Data • Experimental Design • Evaluation • Discussion and Results • Conclusions & Future Work

Introduction • New approach to the unsolved problem of image content recognition – Mobile media capture, context-sensing, programmable computation and networking in the form of the nearly ubiquitous cameraphone • Cameraphone – Platform for multimedia computing – Combines analysis of automatically gathered contextual metadata with media content analysis • Contextual metadata – Temporal – Spatial – Social • Face recognition and place recognition • Precision of face recognition – PCA 40%, SFA 50% • Precision of location recognition – Color histogram 30%, CVA 50%, contextual metadata and CVA 67%

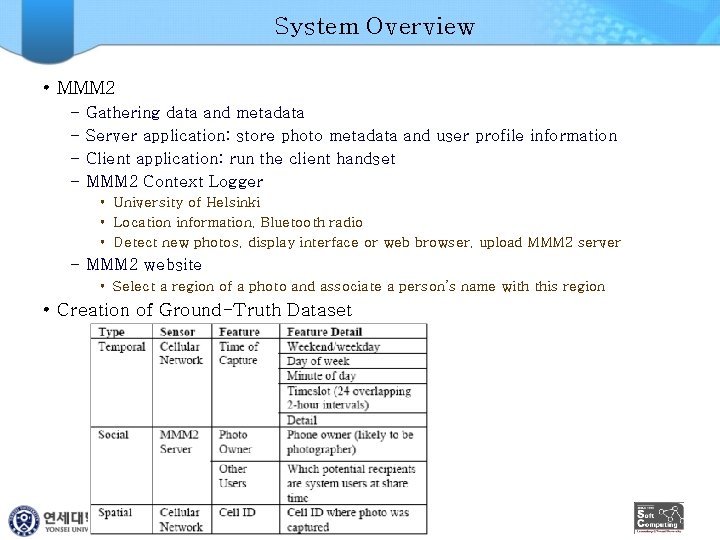

System Overview • MMM2 – Gathering data and metadata – Server application: stores photo metadata and user profile information – Client application: runs on the client handset – MMM2 Context Logger • University of Helsinki • Location information, Bluetooth radio • Detects new photos, displays interface or web browser, uploads to MMM2 server – MMM2 website • Select a region of a photo and associate a person’s name with this region • Creation of Ground-Truth Dataset

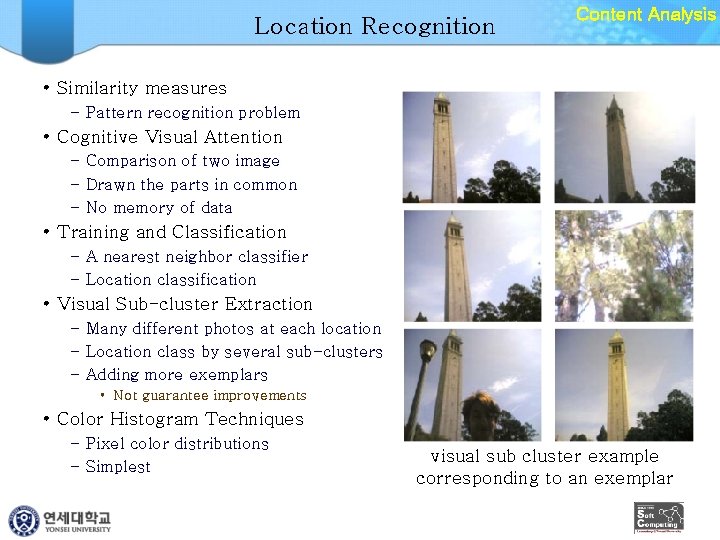

Location Recognition Content Analysis • Similarity measures – Pattern recognition problem • Cognitive Visual Attention – Comparison of two images – Finds the parts they have in common – No memory of data • Training and Classification – A nearest neighbor classifier – Location classification • Visual Sub-cluster Extraction – Many different photos at each location – Location class represented by several sub-clusters – Adding more exemplars • Does not guarantee improvements • Color Histogram Techniques – Pixel color distributions – Simplest visual sub-cluster example corresponding to an exemplar
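To make the color-histogram and nearest-neighbor bullets concrete, here is a minimal sketch under stated assumptions: images are plain lists of RGB tuples, the histogram is a coarse joint RGB histogram, and distance is L1. The bin count, the distance measure, the function names and the location labels are all illustrative choices, not taken from the paper.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel in 0..255


def color_histogram(pixels: Sequence[Pixel], bins: int = 4) -> List[float]:
    """Normalized joint RGB histogram with bins**3 cells."""
    hist = [0.0] * (bins ** 3)
    for r, g, b in pixels:
        # Map each channel value 0..255 to a bin index 0..bins-1.
        ri, gi, bi = r * bins // 256, g * bins // 256, b * bins // 256
        hist[ri * bins * bins + gi * bins + bi] += 1.0
    total = len(pixels) or 1
    return [h / total for h in hist]


def l1_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of absolute differences between two histograms."""
    return sum(abs(x - y) for x, y in zip(a, b))


def classify_location(query: Sequence[float],
                      exemplars: List[Tuple[str, List[float]]]) -> str:
    """Return the label of the exemplar histogram nearest to the query."""
    label, _ = min(exemplars, key=lambda e: l1_distance(query, e[1]))
    return label


# Hypothetical exemplars: one mostly red scene, one mostly green scene.
exemplars = [
    ("library", color_histogram([(250, 10, 10)] * 10)),
    ("lawn", color_histogram([(10, 250, 10)] * 10)),
]
query = color_histogram([(240, 20, 5)] * 8 + [(10, 250, 10)] * 2)
predicted = classify_location(query, exemplars)
```

Each location can hold several exemplars (the sub-clusters on the slide) simply by appending more `(label, histogram)` pairs with the same label.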

Face Recognition & GPS Content Analysis • PCA – Eigenface principle – Short training time – Best accuracy • LDA+PCA – LDA: Multiple images training • Bayesian MAP & ML – Maximum a posteriori (MAP), maximum likelihood (ML) – Difference or similarity between two photos • SFA (Sparse Factor Analysis) – Y: a vector of (partially) observed values, X: latent vector representing user preference, m: “model” predicting user behavior, N: noise function • GPS Clustering – Suitable format – K-means and farthest-first clustering
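The GPS clustering bullet names farthest-first clustering without detail. The sketch below shows the standard farthest-first traversal for picking cluster centers, followed by nearest-center assignment. Treating (latitude, longitude) pairs as planar points with Euclidean distance is a simplifying assumption made here, and the function names and sample coordinates are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (latitude, longitude), treated as planar


def farthest_first_centers(points: Sequence[Point], k: int) -> List[Point]:
    """Pick up to k centers by farthest-first traversal.

    Starts from points[0], then repeatedly adds the point whose distance
    to its nearest already-chosen center is largest.
    """
    if not points or k <= 0:
        return []
    centers = [points[0]]
    # nearest[i] = distance from points[i] to its closest chosen center
    nearest = [math.dist(p, centers[0]) for p in points]
    while len(centers) < min(k, len(points)):
        i = max(range(len(points)), key=nearest.__getitem__)
        centers.append(points[i])
        nearest = [min(d, math.dist(p, points[i]))
                   for d, p in zip(nearest, points)]
    return centers


def assign_clusters(points: Sequence[Point],
                    centers: Sequence[Point]) -> List[int]:
    """Index of the nearest center for every point."""
    return [min(range(len(centers)), key=lambda c: math.dist(p, centers[c]))
            for p in points]


# Hypothetical GPS fixes forming three loose groups.
fixes = [(0.0, 0.0), (0.0, 1.0), (10.0, 10.0), (10.0, 11.0), (20.0, 0.0)]
centers = farthest_first_centers(fixes, 3)
labels = assign_clusters(fixes, centers)
```

The chosen centers could also serve as starting points for K-means, the other method named on the slide.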

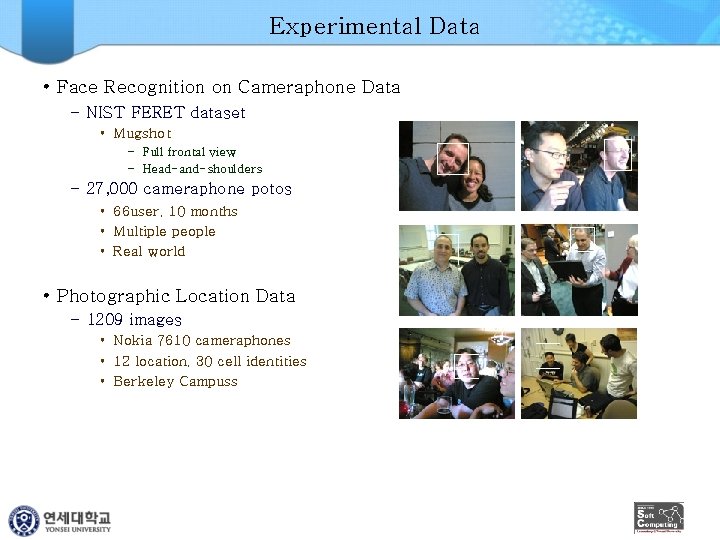

Experimental Data • Face Recognition on Cameraphone Data – NIST FERET dataset • Mugshot – Full frontal view – Head-and-shoulders – 27,000 cameraphone photos • 66 users, 10 months • Multiple people • Real world • Photographic Location Data – 1209 images • Nokia 7610 cameraphones • 12 locations, 30 cell identities • Berkeley campus

Experimental Design • Training gallery – Hand-labeled with the names – Min of distances between all images in the photo and training gallery image k • SFA model – Training • Contextual metadata and the face recognizer outputs • Contextual metadata only – Evaluation • Precision-recall plots for each of the computer vision algorithms – Time • Training time: 2 minutes • Training for the Bayesian classifiers: 7 hours • PCA and LDA classifiers: less than 10 minutes • Face recognition for 4 algorithms: less than 1 minute
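As an illustration of how the points of a precision-recall plot can be computed, the sketch below ranks predictions by confidence and reports precision and recall at each cut-off. The input format (a confidence score plus a correctness flag per prediction) and the function name are assumptions for this example, not the paper's evaluation code.

```python
from typing import List, Sequence, Tuple


def precision_recall_points(scored: Sequence[Tuple[float, bool]],
                            total_relevant: int
                            ) -> List[Tuple[float, float, float]]:
    """Return (confidence, precision, recall) after each ranked prediction.

    scored holds (confidence, is_correct) pairs; total_relevant is the
    number of true matches that a perfect recognizer would return.
    """
    if total_relevant <= 0:
        raise ValueError("total_relevant must be positive")
    ranked = sorted(scored, key=lambda s: s[0], reverse=True)
    points = []
    correct = 0
    for n, (confidence, is_correct) in enumerate(ranked, start=1):
        if is_correct:
            correct += 1
        # Precision: correct / returned so far; recall: correct / all relevant.
        points.append((confidence, correct / n, correct / total_relevant))
    return points


# Hypothetical recognizer output: four predictions, two true matches exist.
curve = precision_recall_points(
    [(0.9, True), (0.4, False), (0.7, True), (0.8, False)],
    total_relevant=2,
)
```

Plotting recall on the x-axis against precision on the y-axis for each algorithm gives one curve per recognizer.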

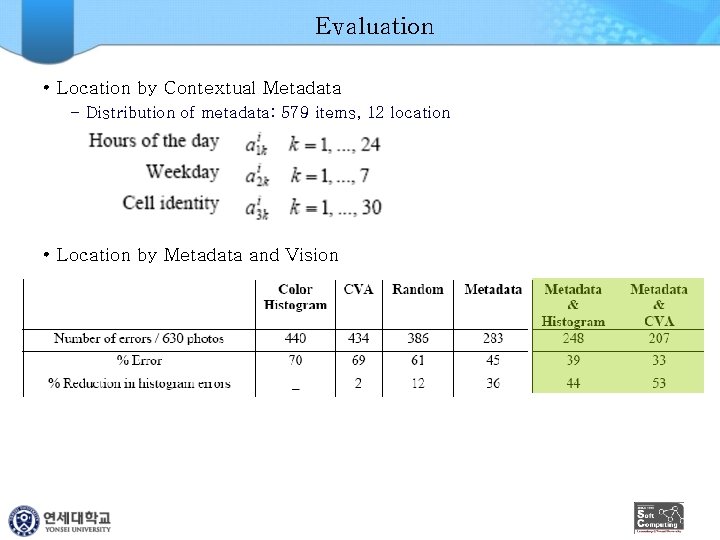

Evaluation • Location by Contextual Metadata – Distribution of metadata: 579 items, 12 locations • Location by Metadata and Vision

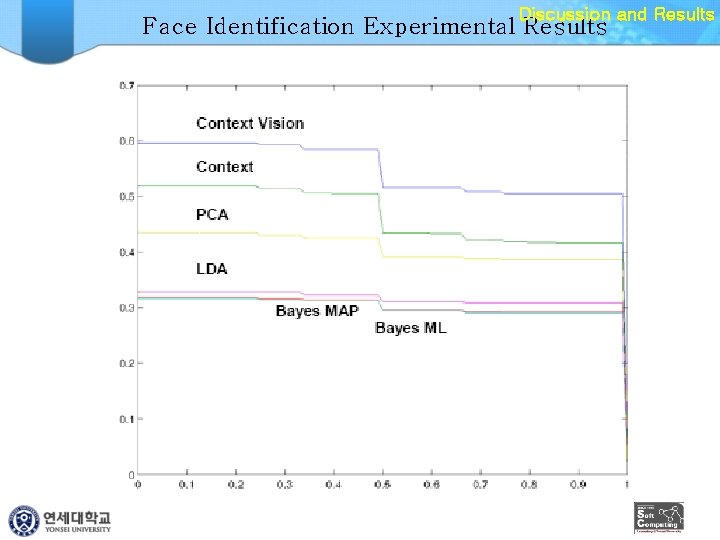

Discussion and Results Face Identification Experimental Results

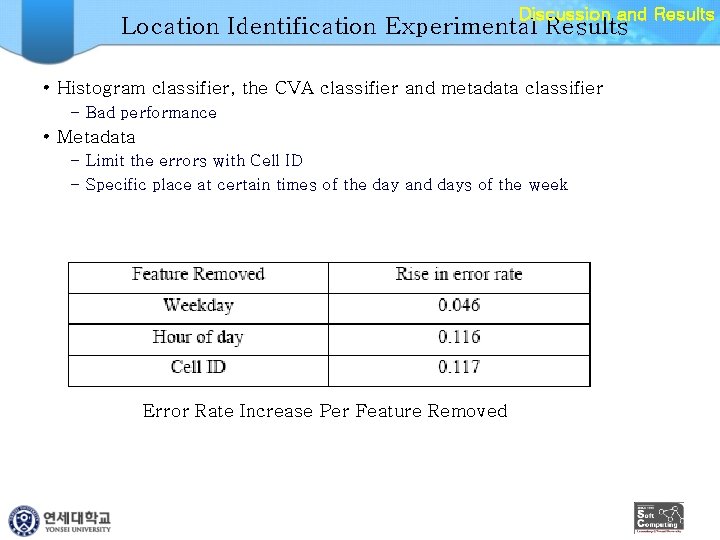

Discussion and Results Location Identification Experimental Results • Histogram classifier, the CVA classifier and metadata classifier – Bad performance • Metadata – Limit the errors with Cell ID – Specific place at certain times of the day and days of the week • Error rate increase per feature removed

Conclusions & Future Work • New approach to the automatic identification of human faces and locations in mobile images • Combination of attributes – Contextual metadata – Image processing • Torso-matching • Context-aware location recognition research
