Clustering

Paolo Ferragina, Dipartimento di Informatica, Università di Pisa

Chapters 16 and 17

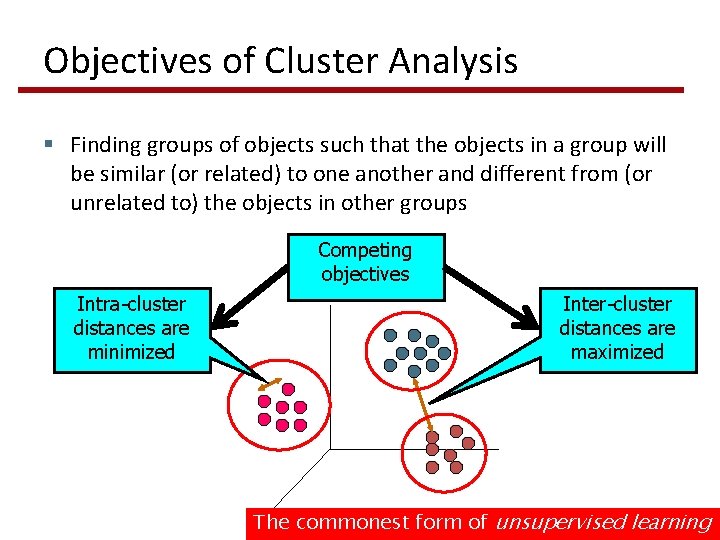

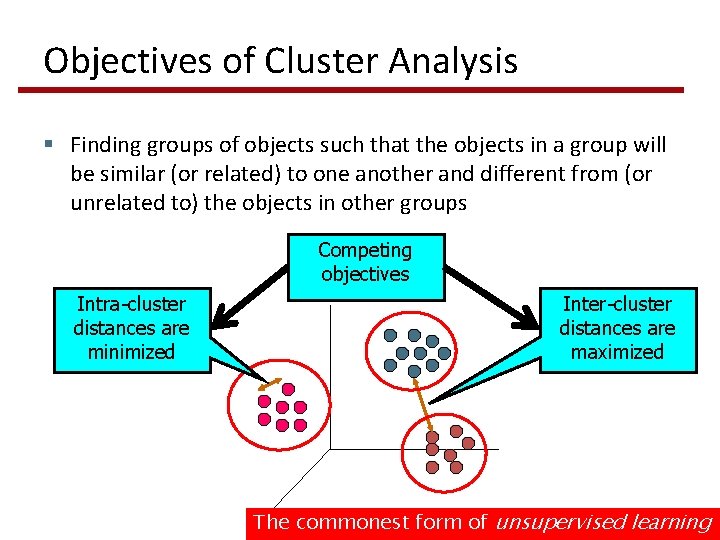

Objectives of Cluster Analysis

§ Find groups of objects such that the objects in a group are similar (or related) to one another and different from (or unrelated to) the objects in other groups.
§ Two competing objectives:
  § intra-cluster distances are minimized;
  § inter-cluster distances are maximized.
§ Clustering is the most common form of unsupervised learning.

Google News: automatic clustering gives an effective news presentation metaphor

Sec. 16.1 For improving search recall

§ Cluster hypothesis: documents in the same cluster behave similarly with respect to relevance to information needs.
§ Therefore, to improve search recall:
  § cluster the docs in the corpus a priori;
  § when a query matches a doc D, also return the other docs in the cluster containing D.
§ The hope: the query “car” will also return docs containing “automobile”.
§ Clustering can also be used to speed up the search operation.
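A minimal sketch of the recall-expansion idea, assuming the a-priori clustering is available as a plain dict from doc id to cluster id. The function names `build_cluster_lists` and `expand_with_clusters` and the toy data are hypothetical, not part of the original material.

```python
from collections import defaultdict

def build_cluster_lists(cluster_of):
    """A priori step: invert doc -> cluster into cluster -> [docs]."""
    members = defaultdict(list)
    for doc, cluster in cluster_of.items():
        members[cluster].append(doc)
    return members

def expand_with_clusters(matches, cluster_of, members):
    """Query time: return each matched doc followed by its cluster mates,
    without duplicates and preserving the original ranking order."""
    result, seen = [], set()
    for doc in matches:
        for d in [doc] + members[cluster_of[doc]]:
            if d not in seen:
                seen.add(d)
                result.append(d)
    return result

cluster_of = {"d1": 0, "d2": 0, "d3": 1, "d4": 1}   # hypothetical clustering
members = build_cluster_lists(cluster_of)
print(expand_with_clusters(["d1"], cluster_of, members))  # ['d1', 'd2']
```

The cluster lists are built once, offline, so the query-time cost is proportional to the size of the returned clusters.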

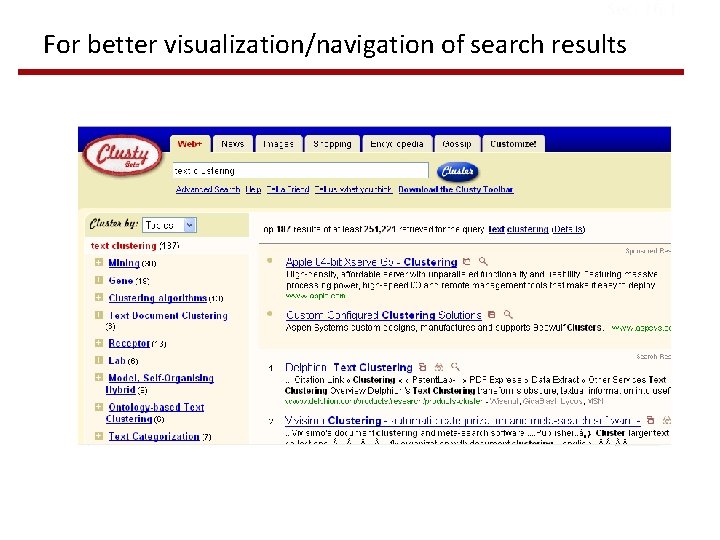

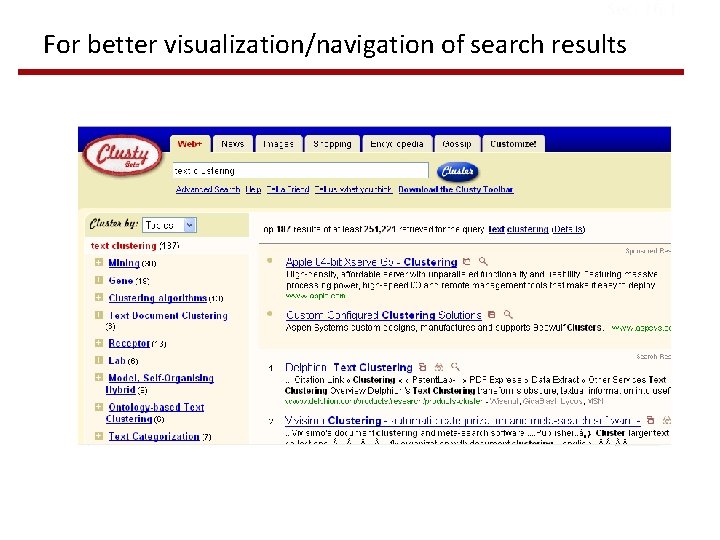

Sec. 16.1 For better visualization/navigation of search results

Sec. 16.2 Issues for clustering

§ Representation for clustering
  § Document representation: vector space? Normalization?
  § Need a notion of similarity/distance
§ How many clusters?
  § Fixed a priori?
  § Completely data driven?

Notion of similarity/distance

§ Ideal: semantic similarity.
§ Practical: term-statistical similarity
  § Docs as vectors.
  § We will use cosine similarity.
  § For many algorithms, it is easier to think in terms of a distance (rather than a similarity) between docs.
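A small sketch of cosine similarity on dense term-weight vectors, together with one common way to turn it into a distance, 1 - cos. The function names and toy vectors are hypothetical. For length-normalized vectors this distance is half the squared Euclidean distance, because ‖x - y‖² = 2 - 2·cos(x, y), which is what lets normalized docs be clustered with Euclidean-based methods such as K-means.

```python
import math

def cosine_similarity(x, y):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    return dot / (norm_x * norm_y)

def cosine_distance(x, y):
    """Turn similarity into a distance: 0 for vectors pointing the same way."""
    return 1.0 - cosine_similarity(x, y)

doc_a = [1.0, 2.0, 0.0]   # hypothetical term weights
doc_b = [2.0, 4.0, 0.0]   # same direction as doc_a, twice as long
doc_c = [0.0, 0.0, 3.0]   # shares no terms with doc_a
print(cosine_distance(doc_a, doc_b))  # close to 0.0 (up to rounding)
print(cosine_distance(doc_a, doc_c))  # 1.0
```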

Clustering Algorithms

§ Flat algorithms
  § Create a set of clusters
  § Usually start with a random (partial) partitioning
  § Refine it iteratively
  § K-means clustering
§ Hierarchical algorithms
  § Create a hierarchy of clusters (dendrogram)
  § Bottom-up, agglomerative
  § Top-down, divisive

Hard vs. soft clustering

§ Hard clustering: each document belongs to exactly one cluster.
  § More common and easier to do.
§ Soft clustering: each document can belong to more than one cluster.
  § Makes more sense for applications like creating browsable hierarchies.
  § News is a good example.
  § Search results are another example.

Flat & Partitioning Algorithms

§ Given: a set of n documents and the number K.
§ Find: a partition into K clusters that optimizes the chosen partitioning criterion.
§ Globally optimal: requires exhaustively enumerating all partitions, which is intractable for many objective functions.
§ Locally optimal: effective heuristic methods, such as the K-means and K-medoids algorithms.
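To see why exhaustive enumeration is hopeless: the number of ways to partition n docs into K non-empty clusters is the Stirling number of the second kind S(n, K), which satisfies S(n, K) = S(n-1, K-1) + K·S(n-1, K). A small sketch of that recurrence (the function name `num_partitions` is hypothetical):

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def num_partitions(n, k):
    """Stirling number of the second kind S(n, k): ways to split n items
    into k non-empty, unlabeled groups."""
    if n == k:
        return 1          # includes S(0, 0) = 1
    if n == 0 or k == 0:
        return 0
    # Item n either forms a new singleton group (S(n-1, k-1) ways)
    # or joins one of the k groups of a partition of the other n-1 items.
    return num_partitions(n - 1, k - 1) + k * num_partitions(n - 1, k)

print(num_partitions(10, 3))    # 9330
print(num_partitions(100, 5))   # more than 10**67: far too many to enumerate
```

Even 100 docs and 5 clusters already rule out brute force, which is why the locally optimal heuristics are used.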

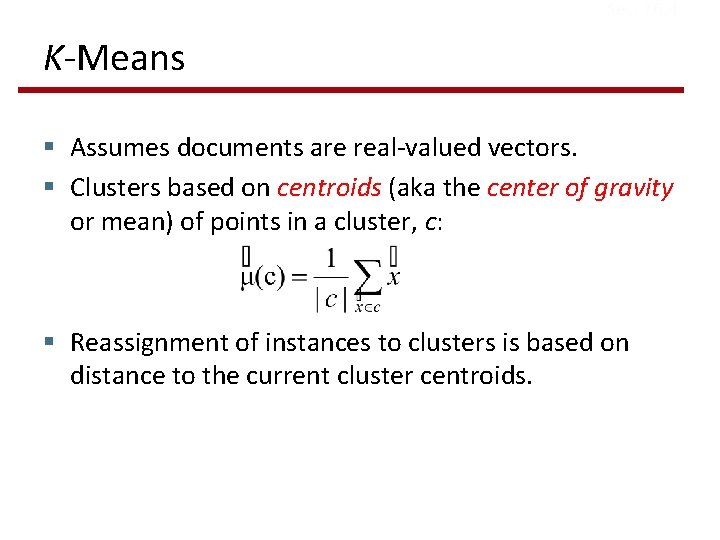

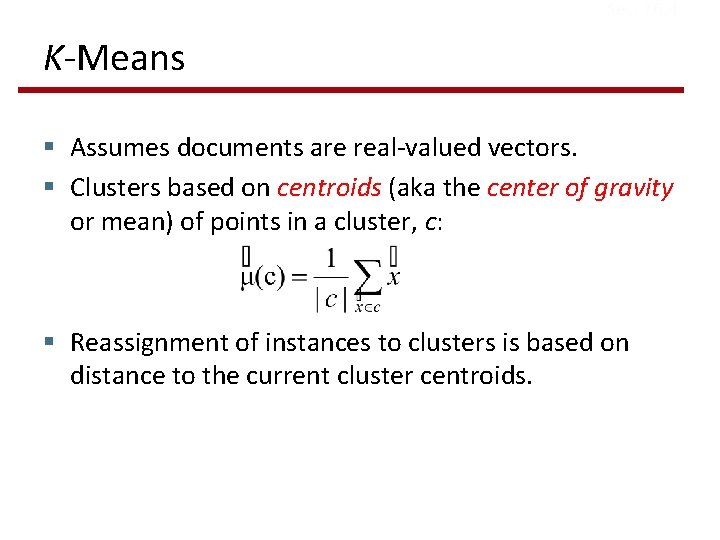

Sec. 16.4 K-Means

§ Assumes documents are real-valued vectors.
§ Clusters are based on the centroid (aka the center of gravity or mean) of the points in a cluster c:

$$\vec{\mu}(c) = \frac{1}{|c|} \sum_{\vec{x} \in c} \vec{x}$$

§ Reassignment of instances to clusters is based on distance to the current cluster centroids.

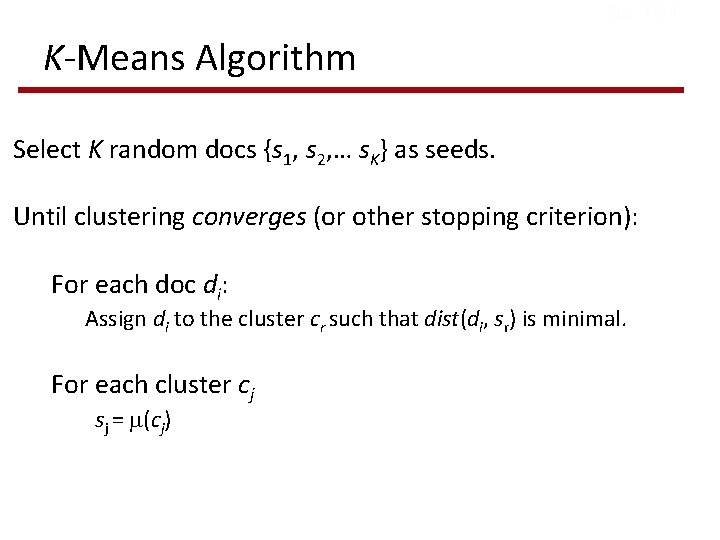

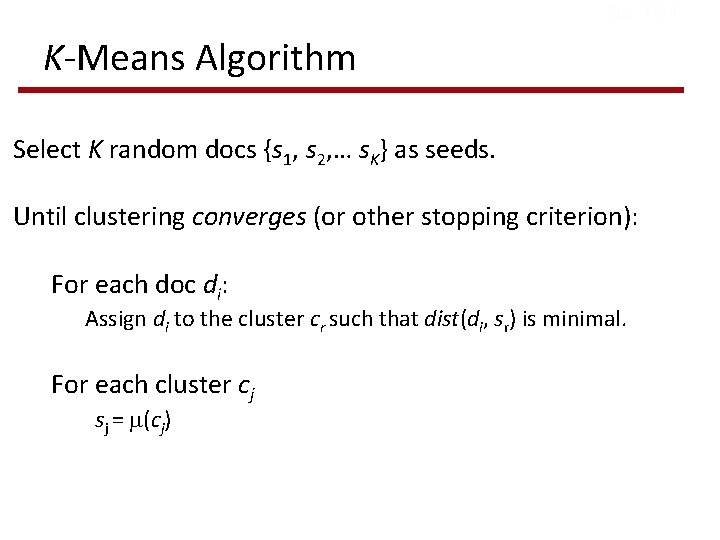

Sec. 16.4 K-Means Algorithm

Select K random docs {s1, s2, …, sK} as seeds.
Until clustering converges (or another stopping criterion is met):
  For each doc di:
    Assign di to the cluster cr such that dist(di, sr) is minimal.
  For each cluster cj:
    sj = μ(cj)   (move the seed to the centroid of cj)
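A minimal, runnable sketch of the loop above in plain Python, assuming squared Euclidean distance on dense vectors. It stops after a fixed number of iterations or when the doc partition no longer changes. The names `kmeans`, `centroid`, `squared_dist` and the rule that an empty cluster keeps its previous seed are our own choices, not prescribed by the slides.

```python
import random

def squared_dist(x, y):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, y))

def centroid(points):
    """Component-wise mean (center of gravity) of a non-empty list of vectors."""
    return [sum(coords) / len(points) for coords in zip(*points)]

def kmeans(docs, k, max_iter=100, rng=None):
    """Basic K-means; returns (cluster index of each doc, final seeds)."""
    rng = rng or random.Random(0)
    # Select K random docs as seeds.
    seeds = [list(d) for d in rng.sample(docs, k)]
    assignment = None
    for _ in range(max_iter):  # stop after a fixed number of iterations...
        # Assign each doc to the cluster whose seed is closest.
        new_assignment = [
            min(range(k), key=lambda r: squared_dist(d, seeds[r])) for d in docs
        ]
        if new_assignment == assignment:  # ...or when the partition is unchanged
            break
        assignment = new_assignment
        # Move each seed to the centroid of its cluster: s_j = mu(c_j).
        for j in range(k):
            members = [d for d, c in zip(docs, assignment) if c == j]
            if members:  # an empty cluster keeps its previous seed
                seeds[j] = centroid(members)
    return assignment, seeds

docs = [[1.0, 1.0], [1.5, 2.0], [1.0, 1.5], [8.0, 8.0], [9.0, 8.5], [8.5, 9.0]]
labels, centers = kmeans(docs, k=2)
print(labels)  # the first three docs share one label, the last three the other
```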

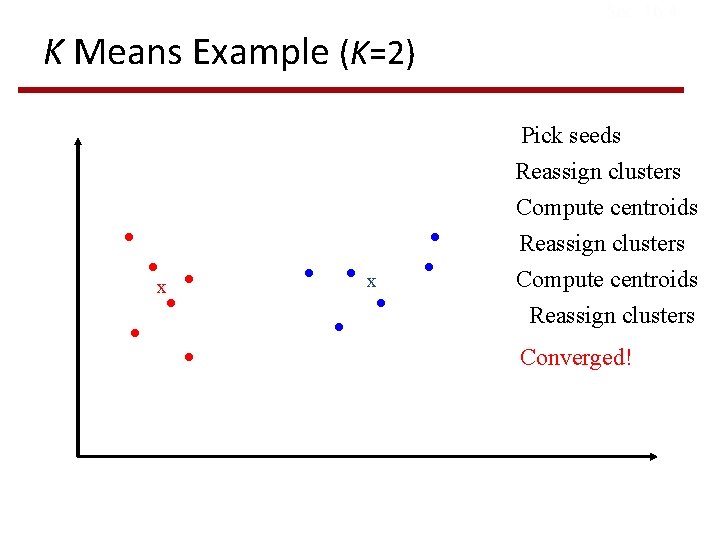

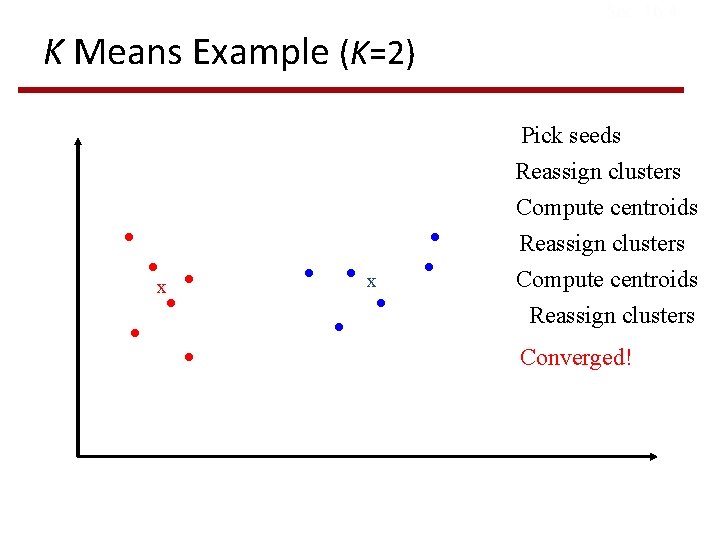

Sec. 16.4 K-Means Example (K=2)

[Figure: pick seeds (x) → reassign clusters → compute centroids → reassign clusters → converged!]

Sec. 16.4 Termination conditions

§ Several possibilities:
  § A fixed number of iterations.
  § Doc partition unchanged.
  § Centroid positions don’t change.

Sec. 16.4 Convergence

§ Why should the K-means algorithm ever reach a fixed point?
§ K-means is a special case of a general procedure known as the Expectation Maximization (EM) algorithm.
  § EM is known to converge.
  § The number of iterations could be large,
  § but in practice it usually isn’t.

Sec. 16.4 Convergence of K-Means

§ Define the goodness measure of a cluster c as the sum of squared distances from the cluster centroid:

$$G(c, s) = \sum_{d_i \in c} \lVert \vec{d}_i - \vec{s}_c \rVert^2 \qquad G(C, s) = \sum_{c \in C} G(c, s)$$

§ Reassignment monotonically decreases G (it never increases it).
§ K-means is a coordinate descent algorithm (it optimizes one component at a time). At any step we have some value of G(C, s):
  1) Fix s, optimize C: assign each doc to the closest centroid, so G(C', s) ≤ G(C, s).
  2) Fix C', optimize s: take the new centroids, so G(C', s') ≤ G(C', s) ≤ G(C, s).
§ The new cost is never larger than the original one; since there is only a finite number of possible clusterings, K-means eventually reaches a (local) minimum.
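The two coordinate-descent steps can be checked numerically. This sketch, on hypothetical random 2-D data, alternates "fix s, optimize C" and "fix C, optimize s", records G(C, s) after every half-step, and asserts that it never increases (a tiny tolerance only absorbs floating-point rounding). The helper names `cost`, `assign` and `recenter` are our own.

```python
import random

def sq_dist(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))

def cost(docs, assignment, seeds):
    """G(C, s): sum of squared distances of every doc to the seed of its cluster."""
    return sum(sq_dist(d, seeds[c]) for d, c in zip(docs, assignment))

def assign(docs, seeds):
    """Step 1 (fix s, optimize C): send every doc to its closest seed."""
    return [min(range(len(seeds)), key=lambda r: sq_dist(d, seeds[r])) for d in docs]

def recenter(docs, assignment, seeds):
    """Step 2 (fix C, optimize s): move each seed to its cluster's centroid."""
    new_seeds = []
    for j, old in enumerate(seeds):
        members = [d for d, c in zip(docs, assignment) if c == j]
        if members:
            new_seeds.append([sum(x) / len(members) for x in zip(*members)])
        else:
            new_seeds.append(old)  # empty cluster: keep the old seed
    return new_seeds

rng = random.Random(42)
docs = [[rng.random(), rng.random()] for _ in range(200)]  # hypothetical data
seeds = [list(d) for d in rng.sample(docs, 4)]
assignment = assign(docs, seeds)
costs = [cost(docs, assignment, seeds)]
for _ in range(10):
    seeds = recenter(docs, assignment, seeds)
    costs.append(cost(docs, assignment, seeds))   # after optimizing s
    assignment = assign(docs, seeds)
    costs.append(cost(docs, assignment, seeds))   # after optimizing C

# G never increases (the tolerance only absorbs floating-point rounding).
assert all(b <= a + 1e-9 for a, b in zip(costs, costs[1:]))
```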

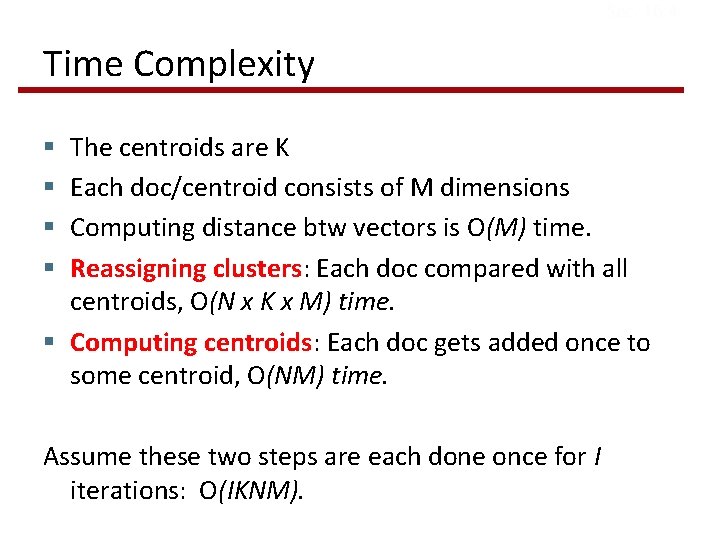

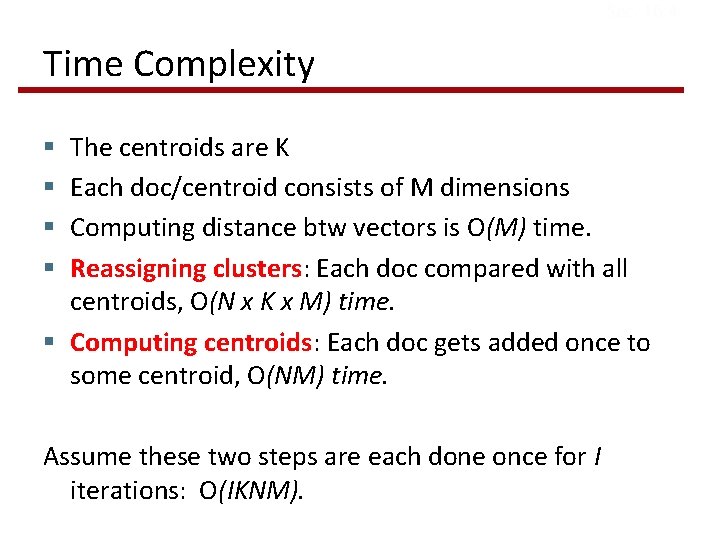

Sec. 16.4 Time Complexity

§ There are K centroids, and each doc/centroid consists of M dimensions.
§ Computing the distance between two vectors takes O(M) time.
§ Reassigning clusters: each doc is compared with all centroids, O(N × K × M) time.
§ Computing centroids: each doc gets added once to some centroid, O(NM) time.
§ Assuming these two steps are each done once per iteration, for I iterations the total is O(IKNM).
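A back-of-the-envelope instance of the bound, with sizes that are purely hypothetical (dense vectors, no sparsity exploited):

```latex
% Hypothetical sizes: I = 10, K = 10^2, N = 10^6, M = 10^3
\underbrace{I \cdot K \cdot N \cdot M}_{\text{reassignment}}
  = 10 \cdot 10^{2} \cdot 10^{6} \cdot 10^{3} = 10^{12},
\qquad
\underbrace{I \cdot N \cdot M}_{\text{centroids}}
  = 10 \cdot 10^{6} \cdot 10^{3} = 10^{10}
```

The reassignment step dominates by a factor of K, which is why the total is written as O(IKNM).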

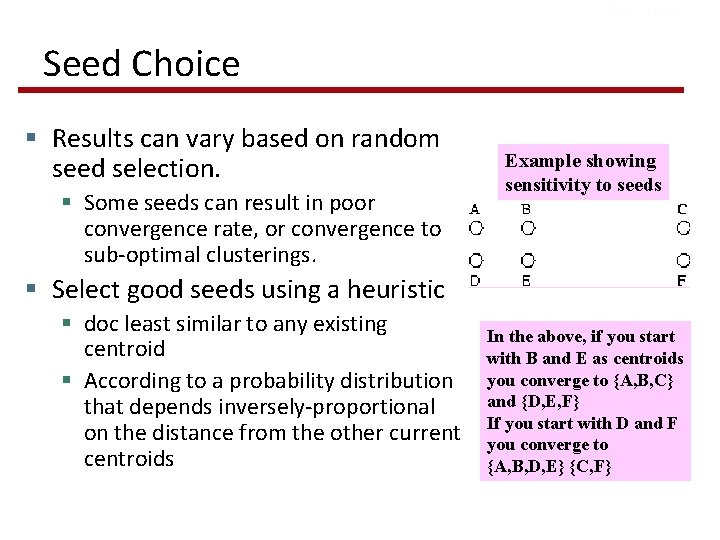

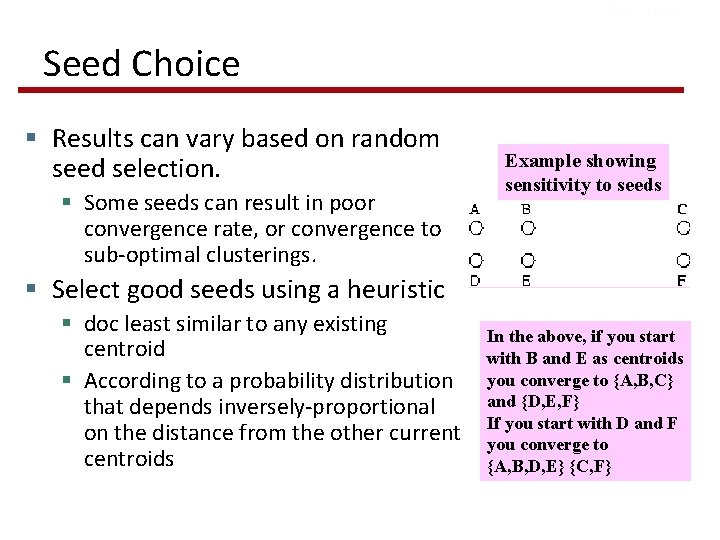

Sec. 16.4 Seed Choice

§ Results can vary based on random seed selection.
§ Some seeds can result in a poor convergence rate, or in convergence to sub-optimal clusterings.
§ Select good seeds using a heuristic:
  § the doc least similar to any existing centroid;
  § a doc drawn according to a probability distribution that grows with its distance from the closest current centroid.

Example showing sensitivity to seeds: in the figure above, if you start with B and E as centroids you converge to {A, B, C} and {D, E, F}; if you start with D and F you converge to {A, B, D, E} and {C, F}.
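The probabilistic heuristic is best known as k-means++ seeding, where each new seed is drawn with probability proportional to its squared distance from the closest seed chosen so far. A sketch under that assumption (squared Euclidean distance, hypothetical function name `kmeanspp_seeds`):

```python
import random

def kmeanspp_seeds(docs, k, rng=None):
    """k-means++ seeding: the first seed is uniform; each later seed is drawn
    with probability proportional to its squared distance from the closest
    seed chosen so far."""
    rng = rng or random.Random(0)
    seeds = [rng.choice(docs)]
    while len(seeds) < k:
        # Squared distance of every doc to its nearest current seed.
        d2 = [min(sum((a - b) ** 2 for a, b in zip(d, s)) for s in seeds)
              for d in docs]
        if sum(d2) == 0:
            raise ValueError("fewer than k distinct docs")
        # Already-chosen docs have weight 0, so they are never drawn again.
        seeds.append(rng.choices(docs, weights=d2, k=1)[0])
    return seeds

docs = [[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0], [20.0, 0.0]]
print(kmeanspp_seeds(docs, 3))
```

Far-away docs are likely, but not certain, to be picked; the deterministic "least similar doc" heuristic would instead always take the farthest one, which makes it more sensitive to outliers.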

How Many Clusters?

§ Number of clusters K is given:
  § partition n docs into a predetermined number of clusters.
§ Finding the “right” number of clusters is part of the problem.
§ One can usually take an algorithm for one flavor and convert it to the other.

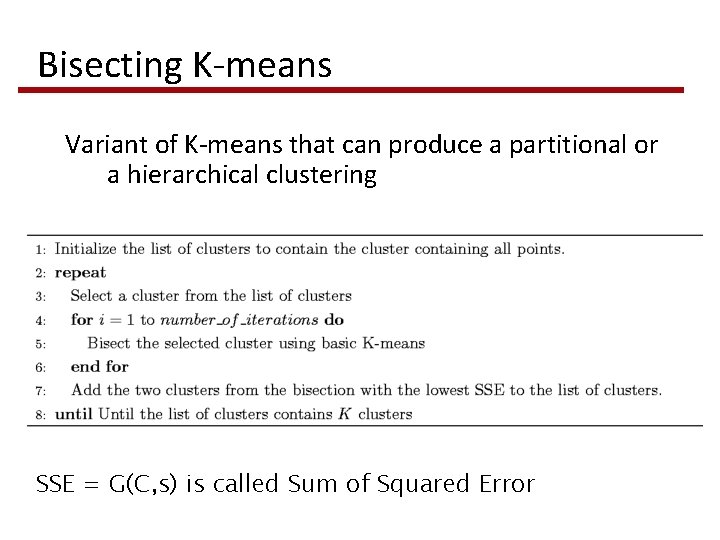

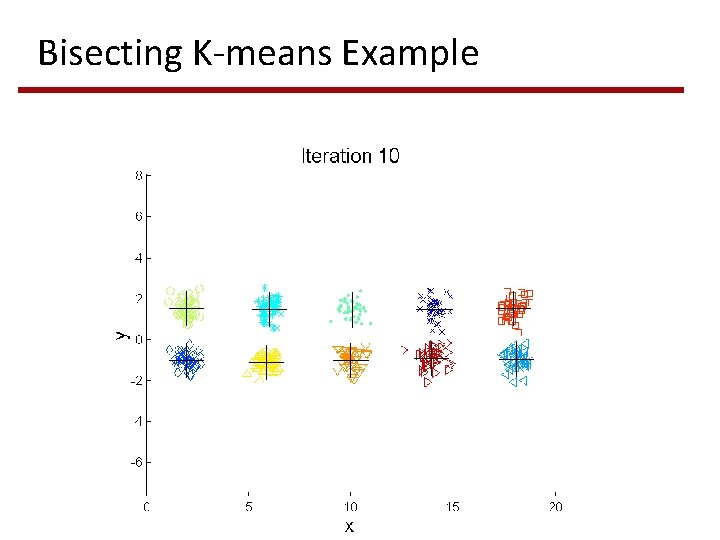

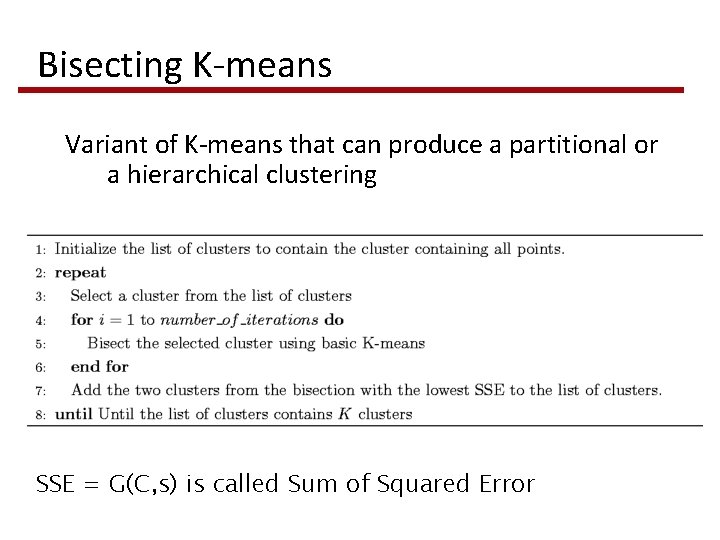

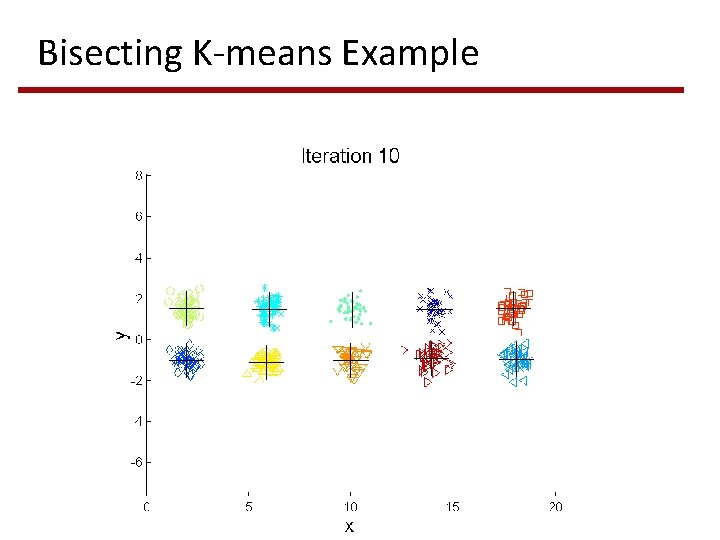

Bisecting K-means

§ A variant of K-means that can produce a partitional or a hierarchical clustering.
§ SSE = G(C, s) is called the Sum of Squared Error.
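The slide gives only the idea, so this is a sketch of the commonly described procedure under our own assumptions: start from one all-inclusive cluster, repeatedly pick the cluster with the largest SSE, bisect it with basic 2-means a few times, and keep the bisection with the lowest total SSE, until K clusters exist. Euclidean distance, the fixed number of 2-means iterations, and all names are hypothetical choices.

```python
import random

def sq_dist(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))

def mean(points):
    return [sum(c) / len(points) for c in zip(*points)]

def sse(points):
    """Sum of squared errors of one cluster w.r.t. its own centroid."""
    m = mean(points)
    return sum(sq_dist(p, m) for p in points)

def two_means(points, rng, iters=20):
    """Basic K-means with K=2 on one cluster; None if a side ends up empty."""
    a, b = rng.sample(points, 2)
    for _ in range(iters):
        left, right = [], []
        for p in points:
            (left if sq_dist(p, a) <= sq_dist(p, b) else right).append(p)
        if not left or not right:
            return None
        a, b = mean(left), mean(right)
    return left, right

def bisecting_kmeans(points, k, trials=5, seed=0):
    rng = random.Random(seed)
    clusters = [list(points)]            # start with one all-inclusive cluster
    while len(clusters) < k:
        target = max(clusters, key=sse)  # split the cluster with the largest SSE
        if len(target) < 2:
            break
        best = None
        for _ in range(trials):          # keep the bisection with the lowest SSE
            split = two_means(target, rng)
            if split and (best is None or
                          sse(split[0]) + sse(split[1]) < sse(best[0]) + sse(best[1])):
                best = split
        if best is None:
            break                        # no valid bisection found
        clusters.remove(target)
        clusters.extend(best)
    return clusters

pts = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10], [20, 0], [21, 0], [20, 1]]
for c in bisecting_kmeans(pts, 3):
    print(c)
```

Recording which cluster was split at each step yields the hierarchical version; stopping at K clusters yields the partitional one.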

Bisecting K-means Example

K-means

Pros
§ Simple.
§ Fast for low-dimensional data.
§ It can find pure sub-clusters if a large number of clusters is specified (at the cost of over-partitioning).

Cons
§ K-means has problems with non-globular clusters and with clusters of different sizes and densities.
§ K-means will not identify outliers.
§ K-means is restricted to data that has the notion of a center (centroid).

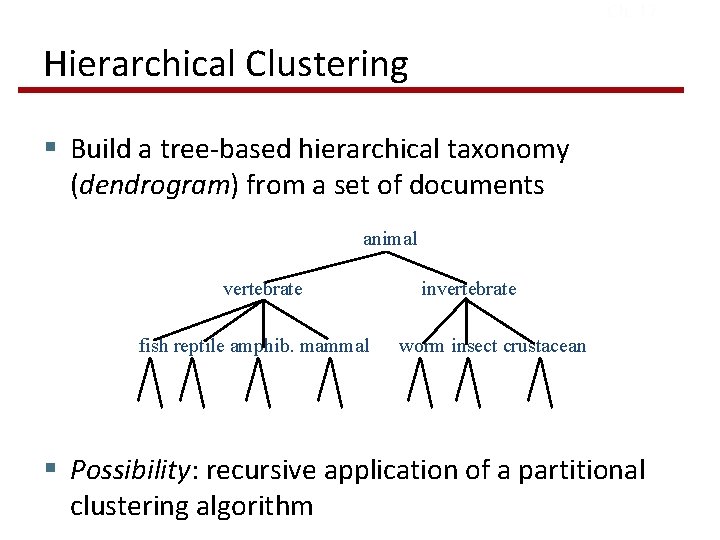

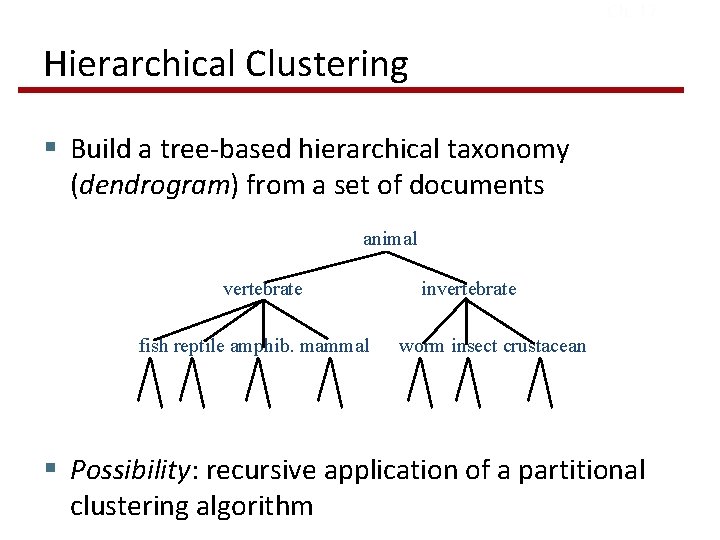

Ch. 17 Hierarchical Clustering

§ Build a tree-based hierarchical taxonomy (dendrogram) from a set of documents.

[Figure: example taxonomy: animal → vertebrate (fish, reptile, amphib., mammal) and invertebrate (worm, insect, crustacean)]

§ One possibility: recursive application of a partitional clustering algorithm.

Strengths of Hierarchical Clustering

§ No assumption of any particular number of clusters.
  § Any desired number of clusters can be obtained by ‘cutting’ the dendrogram at the proper level.
§ The clusters may correspond to meaningful taxonomies.
  § Example in biological sciences (e.g., animal kingdom, phylogeny reconstruction, …).

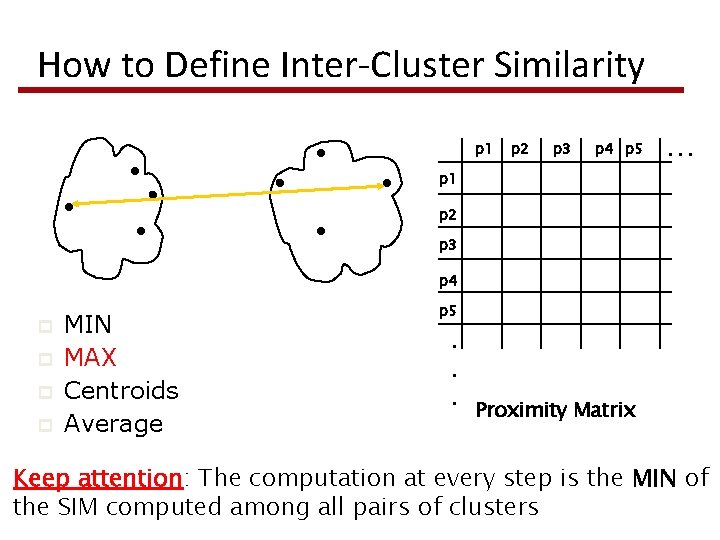

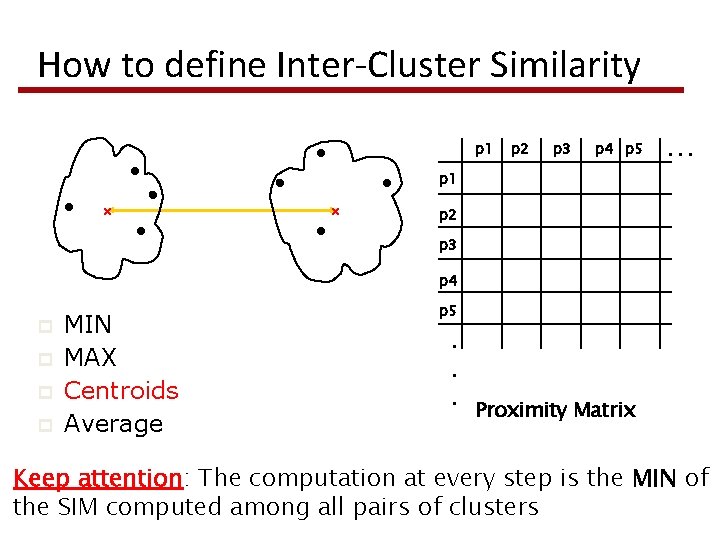

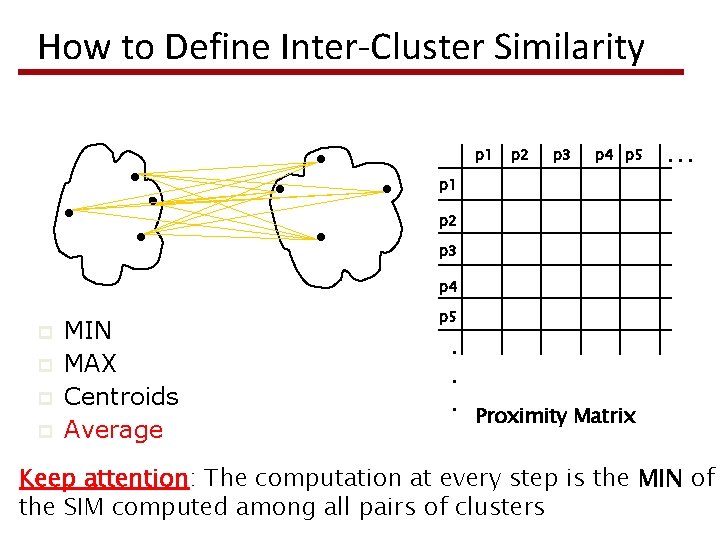

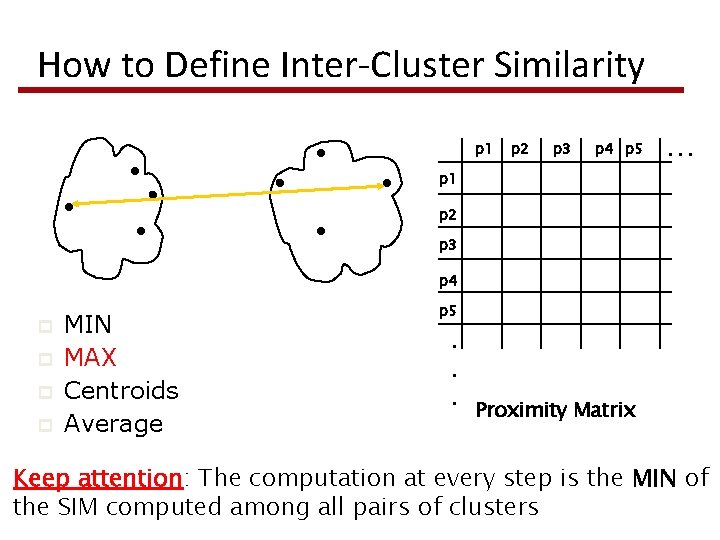

Sec. 17.1 Hierarchical Agglomerative Clustering (HAC)

§ Starts with each doc in a separate cluster.
§ Then repeatedly joins the most similar pair of clusters, until there is only one cluster.
§ Keep in mind: at every step the similarity is computed for all pairs of clusters, and the pair with the MAX similarity (equivalently, the MIN distance) is merged.
§ The history of merging forms a binary tree or hierarchy.
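A deliberately naive sketch of HAC, assuming clusters are lists of points and `sim` is any point-similarity function (in the usage below, the negated 1-D distance, a hypothetical choice). Every step rescans all pairs and merges the pair with the largest cluster similarity; single link is the default linkage here. The names `hac` and `single_link` are our own.

```python
def single_link(a, b, sim):
    """Similarity of two clusters = similarity of their closest points."""
    return max(sim(x, y) for x in a for y in b)

def hac(points, sim, linkage=single_link):
    """Naive HAC: start from singletons, merge the most similar pair until one
    cluster is left; return the merge history (the dendrogram, bottom-up)."""
    clusters = [[p] for p in points]
    history = []
    while len(clusters) > 1:
        # Scan all pairs and keep the one with MAX cluster similarity.
        i, j = max(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]], sim),
        )
        history.append((clusters[i], clusters[j]))
        merged = clusters[i] + clusters[j]
        # Delete j first (j > i) so that index i is still valid.
        del clusters[j]
        del clusters[i]
        clusters.append(merged)
    return history

for left, right in hac([1.0, 2.0, 6.0, 7.5], sim=lambda x, y: -abs(x - y)):
    print(left, "+", right)
# [1.0] + [2.0]
# [6.0] + [7.5]
# [1.0, 2.0] + [6.0, 7.5]
```

Rescanning all pairs is simple but slow; practical implementations keep the proximity matrix and update it after each merge.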

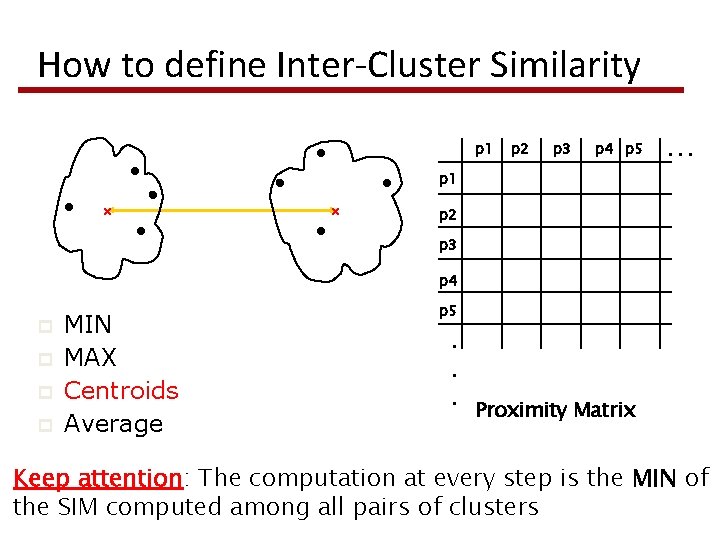

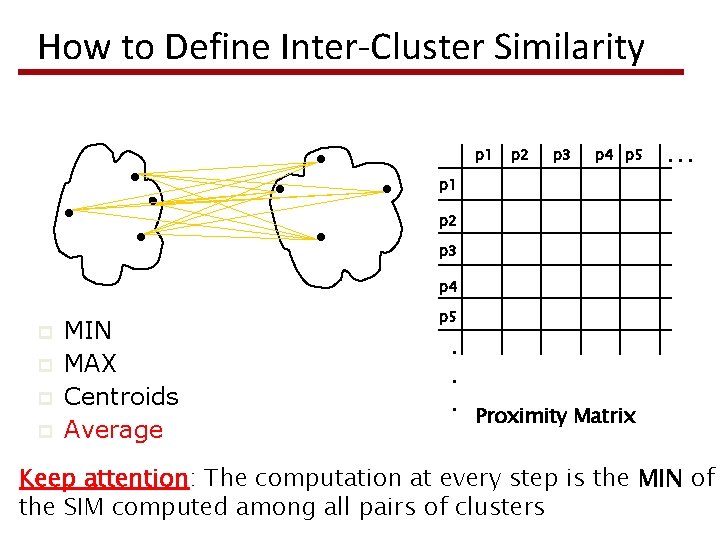

Sec. 17.2 Similarity of a pair of clusters

§ Single-link: similarity of the closest points.
§ Complete-link: similarity of the farthest points.
§ Centroid: similarity between the centroids.
§ Average-link: average similarity between all pairs of items in the two clusters.
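The four definitions can be written as interchangeable functions of two clusters and a point similarity `sim`. This sketch assumes points are coordinate tuples and, purely for illustration, uses the negated Euclidean distance as `sim`, so "most similar" means "closest"; all names are hypothetical.

```python
import math

def single_link(a, b, sim):
    """Similarity of the closest (most similar) pair of points."""
    return max(sim(x, y) for x in a for y in b)

def complete_link(a, b, sim):
    """Similarity of the farthest (least similar) pair of points."""
    return min(sim(x, y) for x in a for y in b)

def average_link(a, b, sim):
    """Average similarity over all |a| * |b| cross-cluster pairs."""
    return sum(sim(x, y) for x in a for y in b) / (len(a) * len(b))

def centroid_link(a, b, sim):
    """Similarity between the two cluster centroids."""
    def mean(c):
        return [sum(coords) / len(c) for coords in zip(*c)]
    return sim(mean(a), mean(b))

def neg_euclidean(x, y):
    # Hypothetical similarity: negated Euclidean distance.
    return -math.dist(x, y)

a = [(0.0, 0.0), (0.0, 1.0)]
b = [(3.0, 0.0), (4.0, 0.0)]
for f in (single_link, complete_link, average_link, centroid_link):
    print(f.__name__, f(a, b, neg_euclidean))
```

Any of these can be passed as the linkage of an HAC loop; only the rule for comparing two clusters changes.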

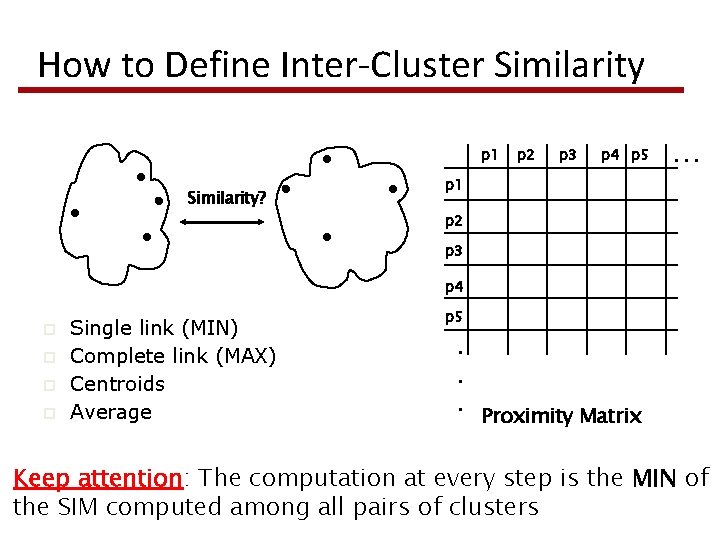

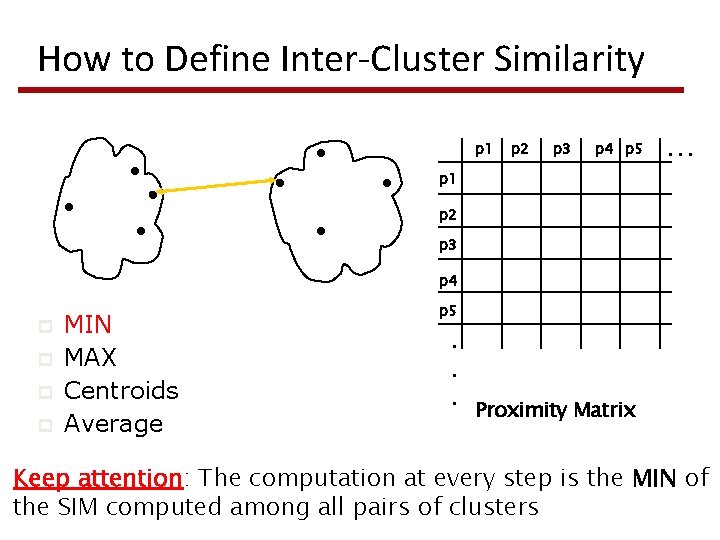

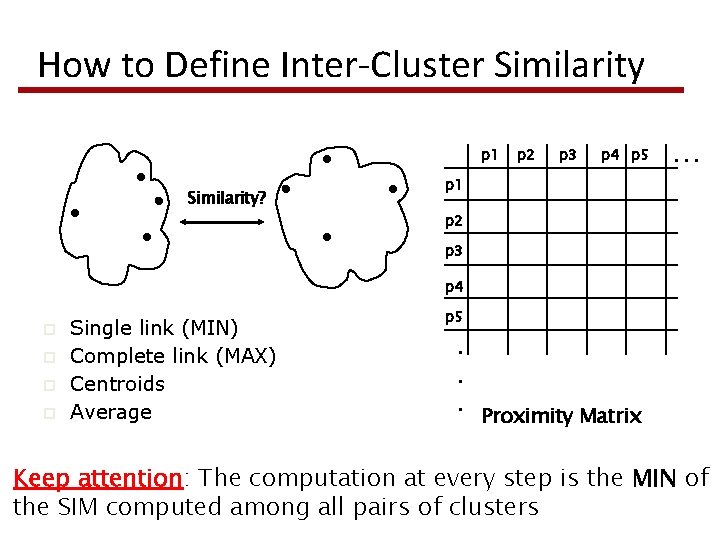

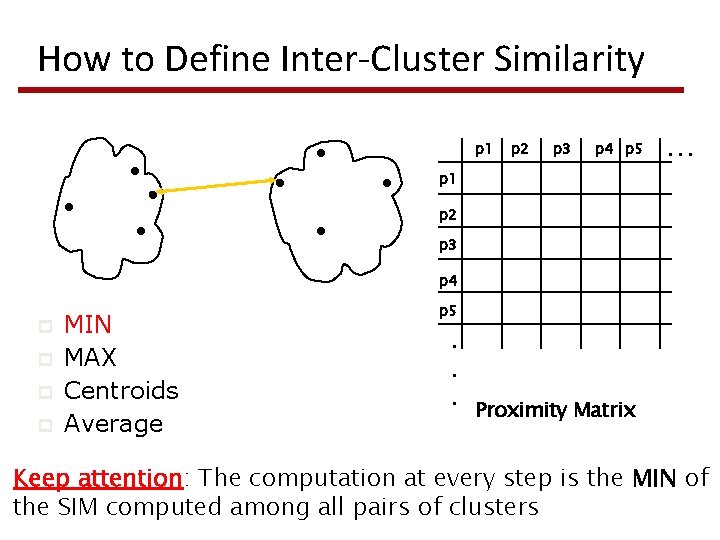

How to Define Inter-Cluster Similarity

[Figure: points p1, p2, p3, p4, p5, … and their proximity matrix; options: Single link (MIN), Complete link (MAX), Centroids, Average]

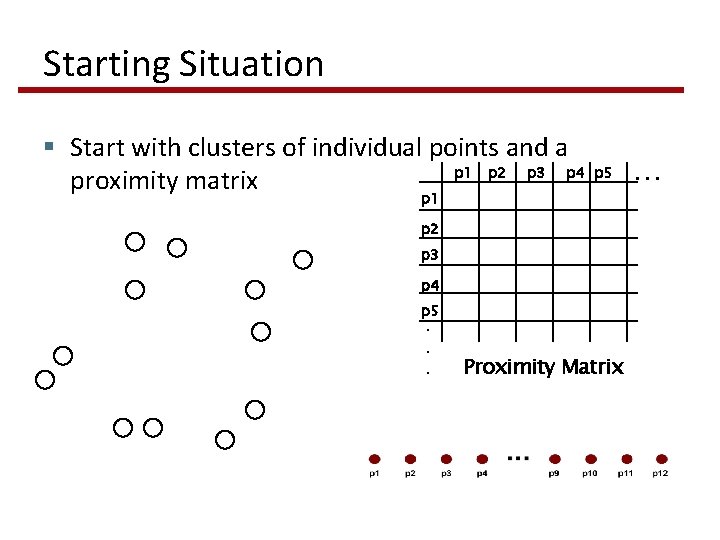

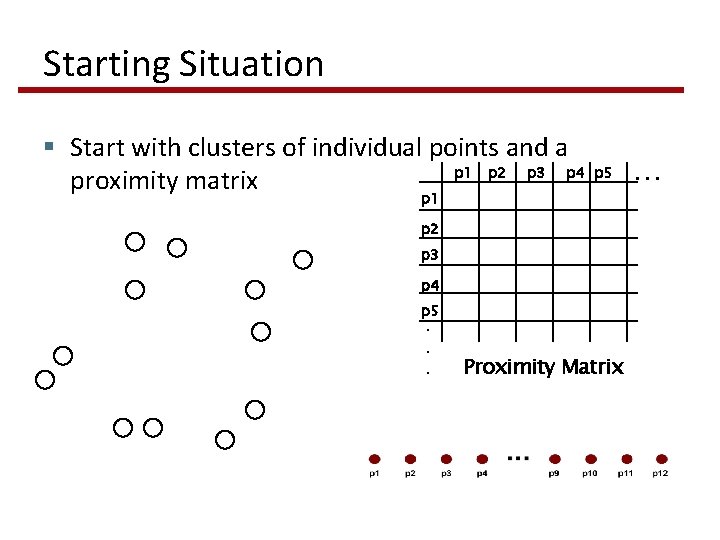

Starting Situation

§ Start with clusters of individual points and a proximity matrix.

[Figure: points p1, …, p5 and their proximity matrix]

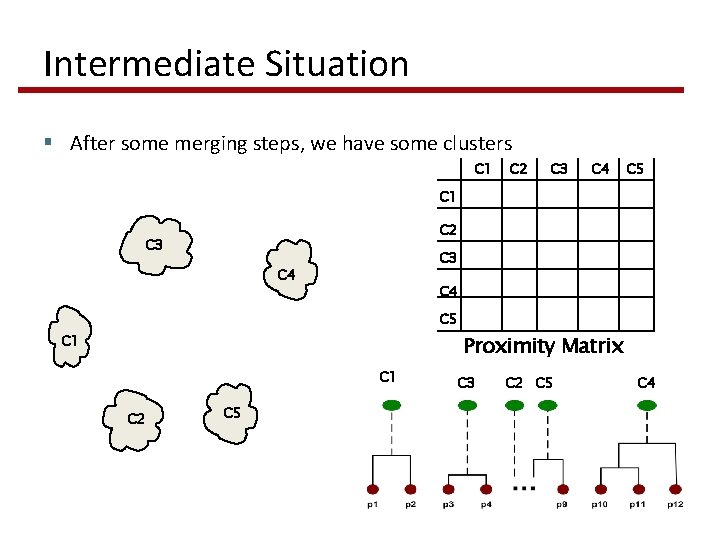

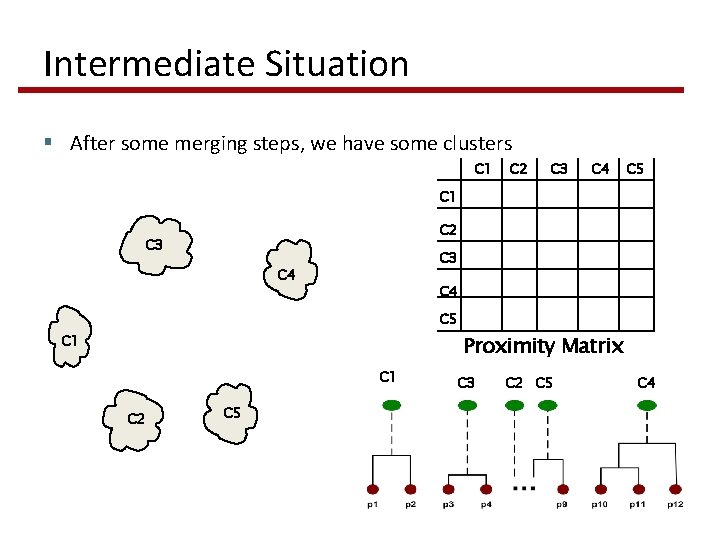

Intermediate Situation

§ After some merging steps, we have some clusters.

[Figure: clusters C1, …, C5 and their proximity matrix]

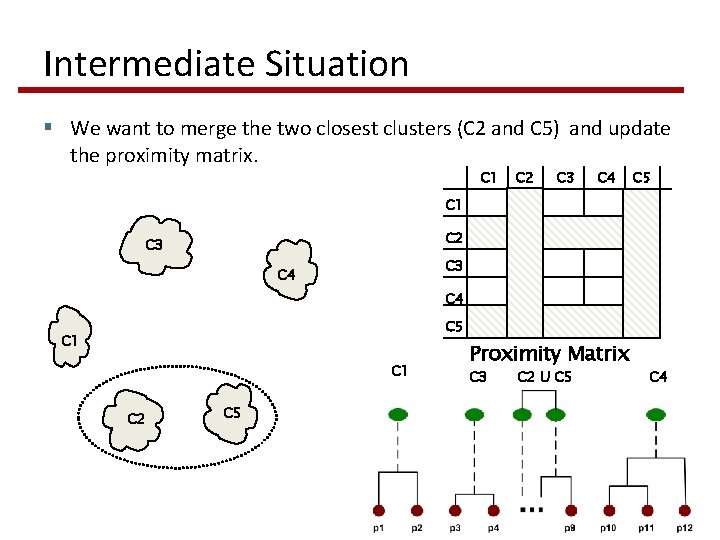

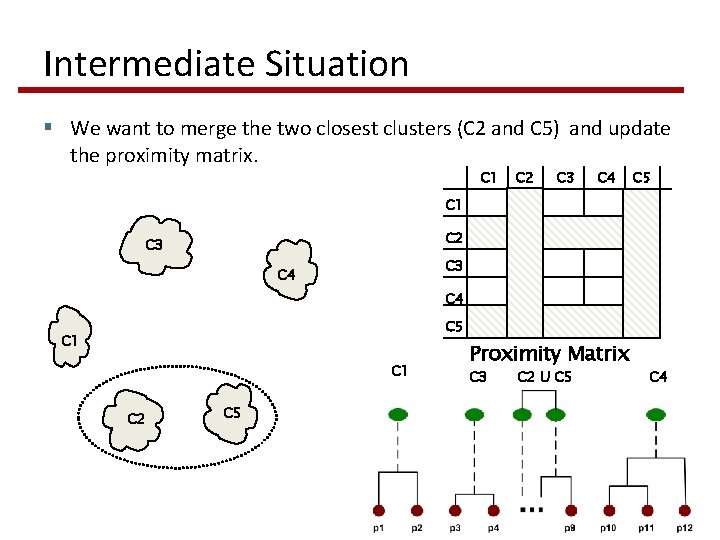

Intermediate Situation

§ We want to merge the two closest clusters (C2 and C5) and update the proximity matrix.

[Figure: clusters C1, …, C5 with C2 and C5 about to be merged, and the proximity matrix]

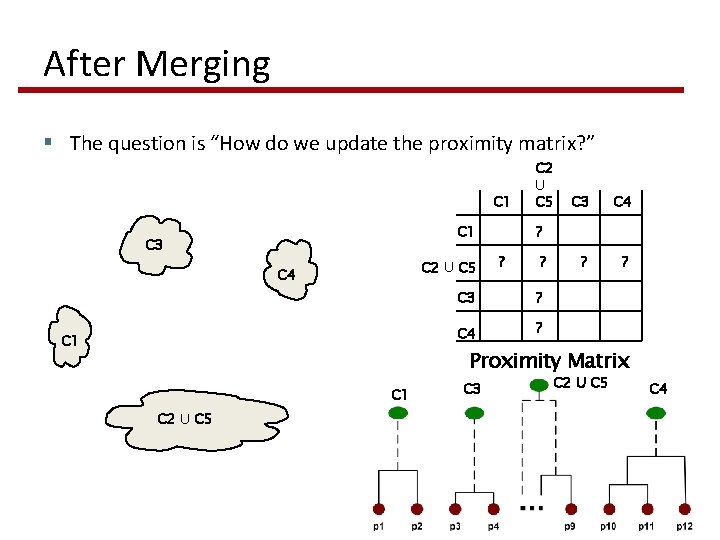

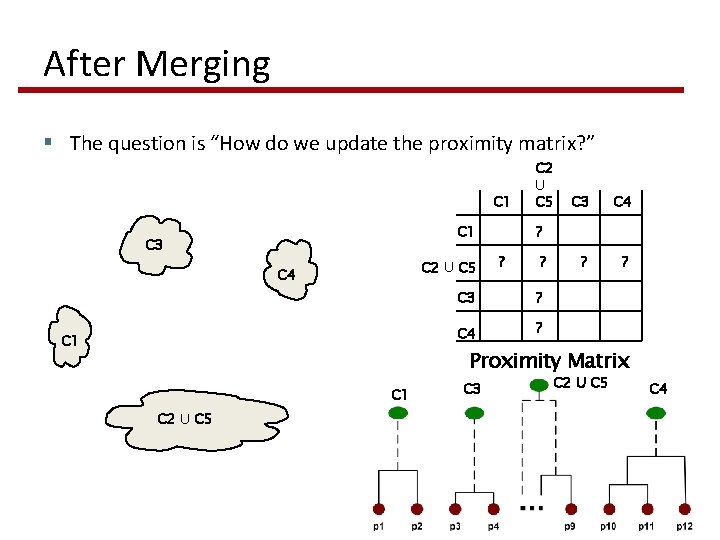

After Merging

§ The question is: “How do we update the proximity matrix?”

[Figure: proximity matrix over C1, C2 ∪ C5, C3, C4, with the row and column of C2 ∪ C5 marked “?”]
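When proximities are stored as distances, one standard answer is to recompute only the row and column of the merged cluster from the old entries: MIN keeps the smaller of the two old distances, MAX the larger, and group average takes their size-weighted mean (these are special cases of the Lance-Williams update; centroid linkage is left out here). A sketch assuming a dict-of-dicts distance matrix; the function name and the numbers are hypothetical.

```python
def merge_and_update(dist, sizes, i, j, method="single"):
    """Merge clusters i and j of a symmetric distance matrix (dict of dicts),
    keep the merged cluster under key i, and remove key j."""
    for k in dist:
        if k in (i, j):
            continue
        dki, dkj = dist[k][i], dist[k][j]
        if method == "single":      # MIN: distance of the closest pair
            new = min(dki, dkj)
        elif method == "complete":  # MAX: distance of the farthest pair
            new = max(dki, dkj)
        elif method == "average":   # mean over all pairs, weighted by sizes
            new = (sizes[i] * dki + sizes[j] * dkj) / (sizes[i] + sizes[j])
        else:
            raise ValueError(f"unknown method: {method}")
        dist[k][i] = dist[i][k] = new
    sizes[i] += sizes[j]
    del sizes[j]
    del dist[j]
    for row in dist.values():
        row.pop(j, None)

# Hypothetical distances among four current clusters.
dist = {
    "C1": {"C2": 4.0, "C3": 6.0, "C5": 5.0},
    "C2": {"C1": 4.0, "C3": 3.0, "C5": 1.0},
    "C3": {"C1": 6.0, "C2": 3.0, "C5": 2.0},
    "C5": {"C1": 5.0, "C2": 1.0, "C3": 2.0},
}
sizes = {"C1": 1, "C2": 1, "C3": 1, "C5": 1}
merge_and_update(dist, sizes, "C2", "C5")   # "C2" now stands for C2 U C5
print(dist["C2"])  # {'C1': 4.0, 'C3': 2.0}
```

Each merge therefore costs time linear in the number of clusters, instead of recomputing every entry from the raw points.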

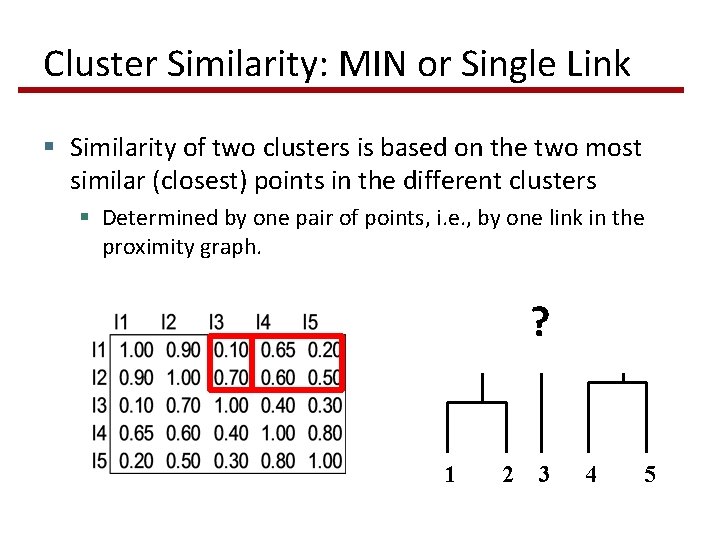

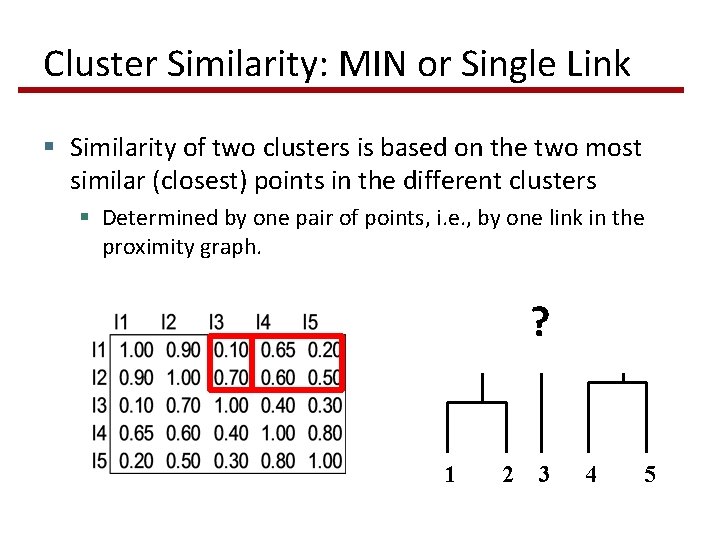

Cluster Similarity: MIN or Single Link

§ The similarity of two clusters is based on the two most similar (closest) points in the different clusters.
  § It is determined by one pair of points, i.e., by one link in the proximity graph.

[Figure: single-link example on points 1 to 5]

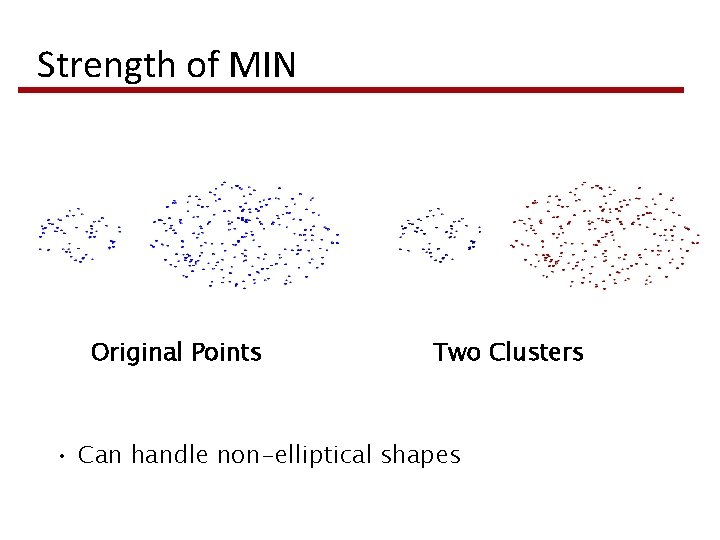

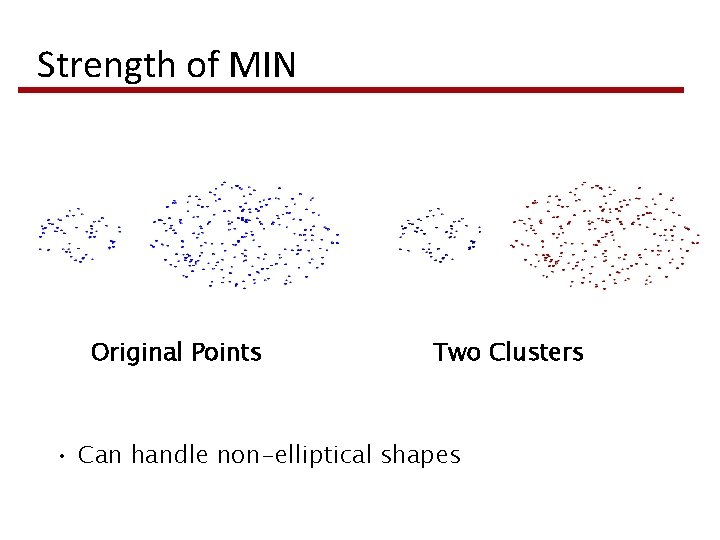

Strength of MIN

[Figure: original points; two clusters]

• Can handle non-elliptical shapes.

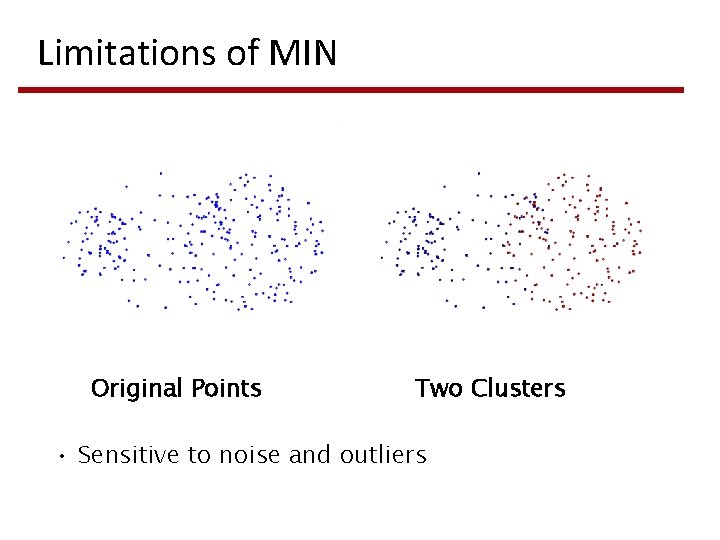

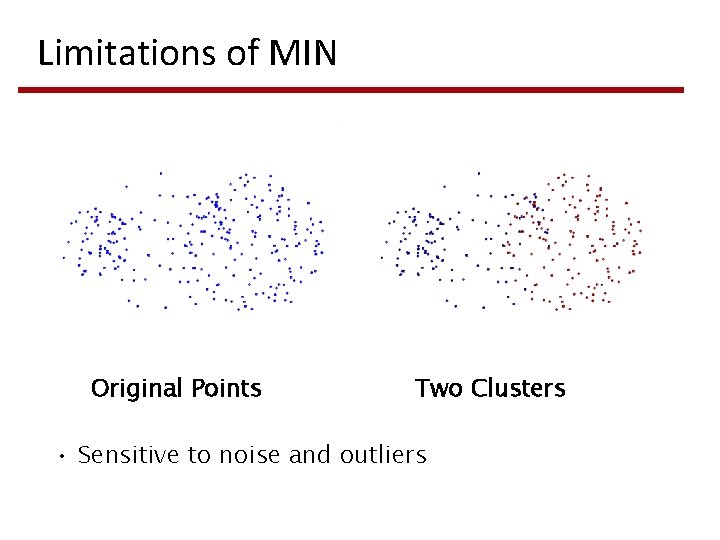

Limitations of MIN

[Figure: original points; two clusters]

• Sensitive to noise and outliers.

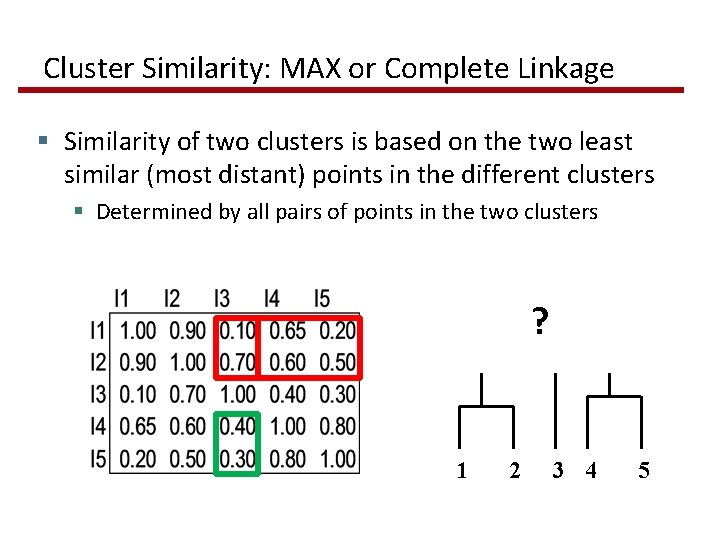

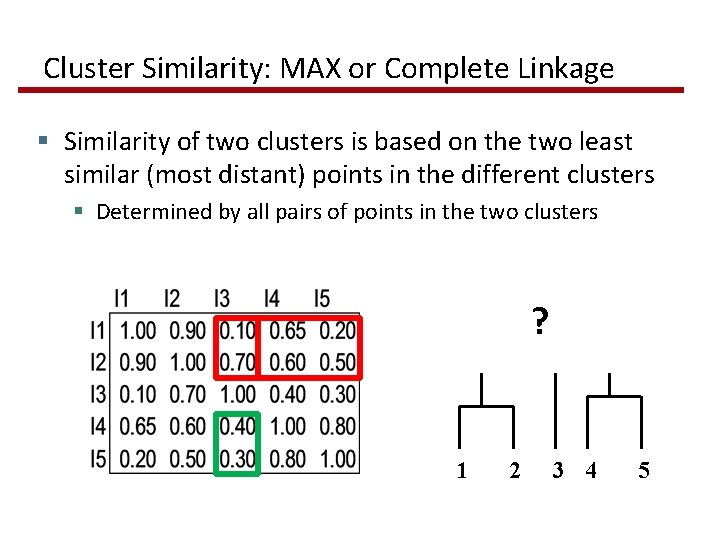

Cluster Similarity: MAX or Complete Linkage

§ The similarity of two clusters is based on the two least similar (most distant) points in the different clusters.
  § It is determined by all pairs of points in the two clusters.

[Figure: complete-link example on points 1 to 5]

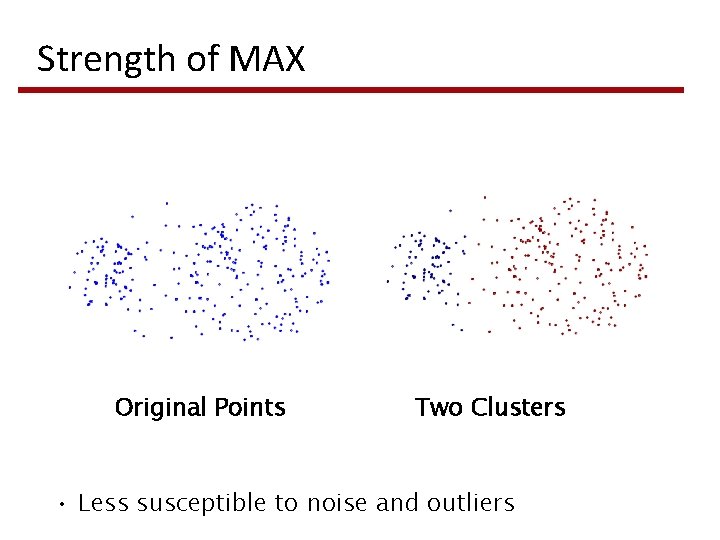

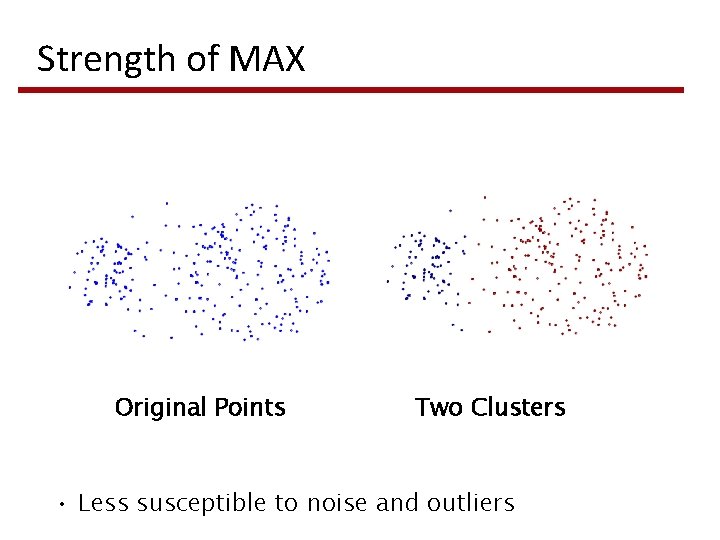

Strength of MAX

[Figure: original points; two clusters]

• Less susceptible to noise and outliers.

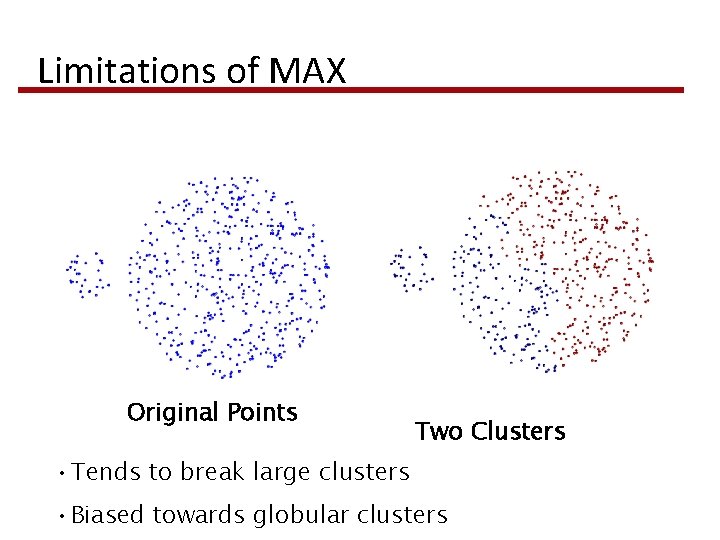

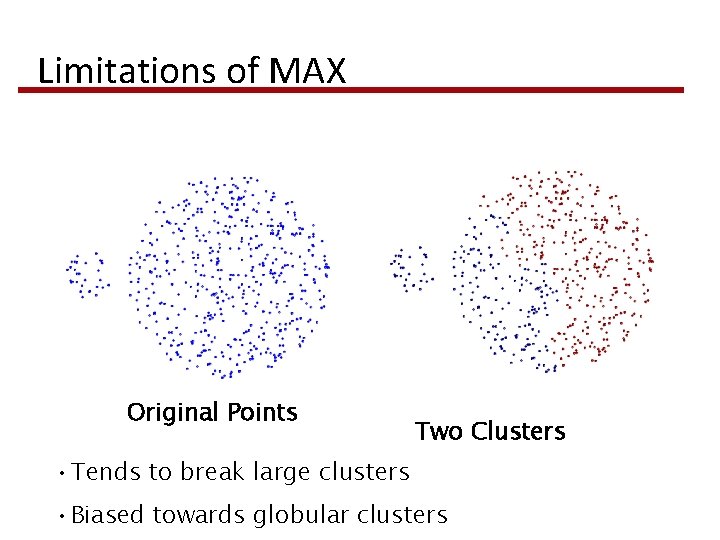

Limitations of MAX

[Figure: original points; two clusters]

• Tends to break large clusters.
• Biased towards globular clusters.

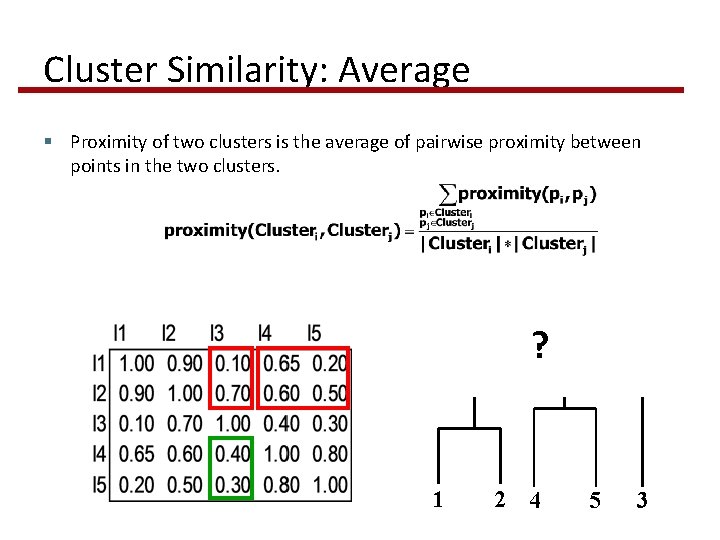

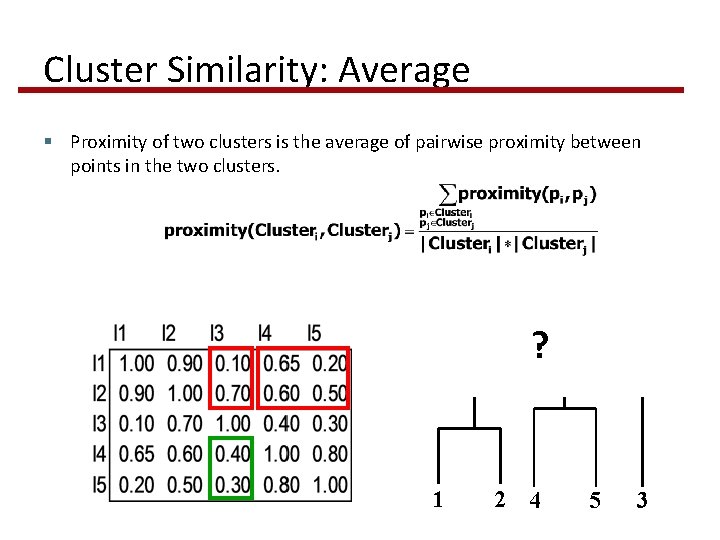

Cluster Similarity: Average

§ The proximity of two clusters is the average of the pairwise proximities between points in the two clusters.

[Figure: average-link example on points 1 to 5]

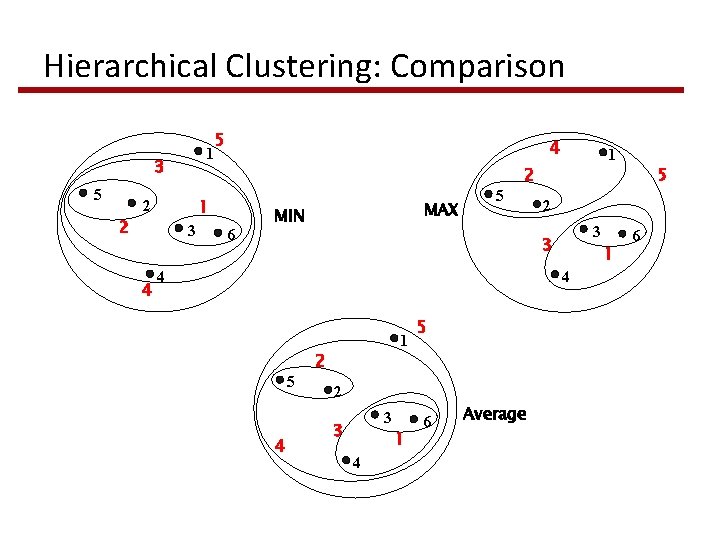

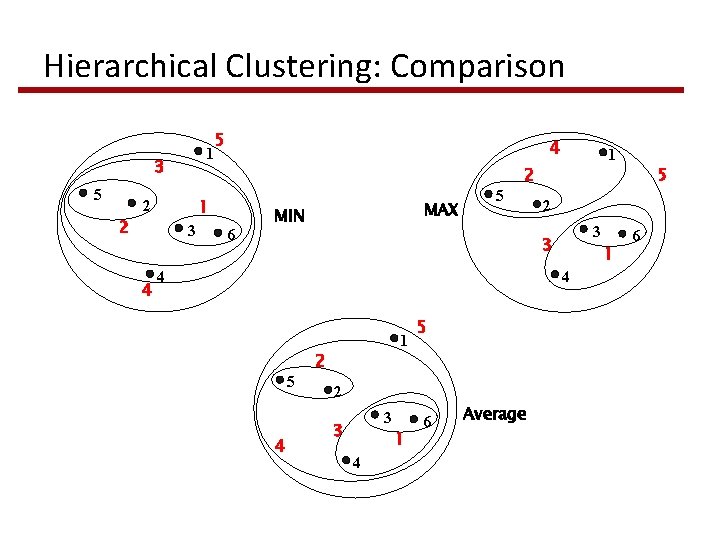

Hierarchical Clustering: Comparison

[Figure: nested clusters and dendrograms for the same six points under MIN, MAX and Average linkage]

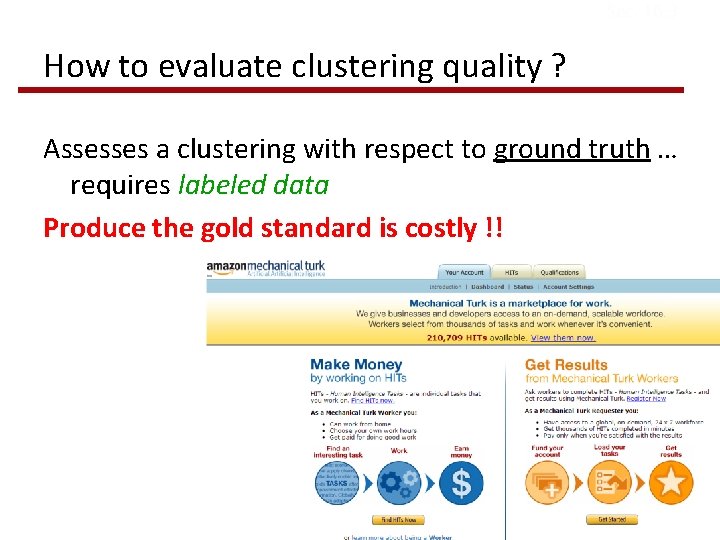

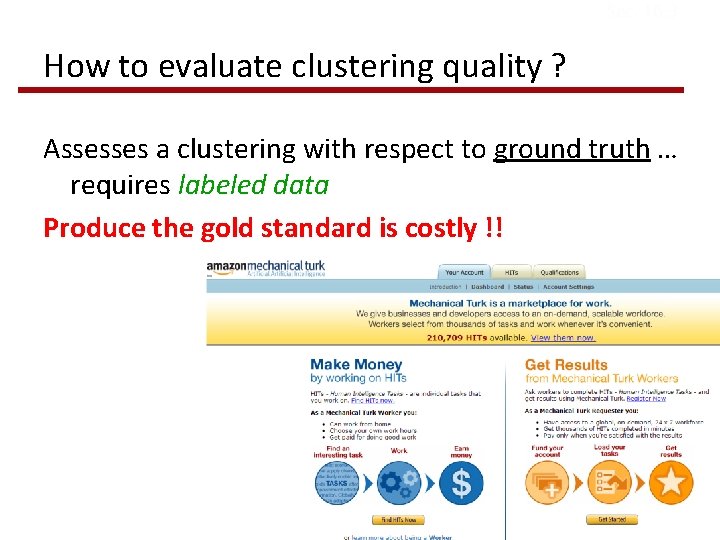

Sec. 16.3 How to evaluate clustering quality?

§ Assess a clustering with respect to ground truth … this requires labeled data.
§ Producing the gold standard is costly!