Clustering methods Part 4 Clustering Cost function Pasi


Clustering methods: Part 4. Clustering cost function. Pasi Fränti, 29.4.2014. Speech and Image Processing Unit, School of Computing, University of Eastern Finland

Data types

- Numeric
- Binary
- Categorical
- Text
- Time series

Part I: Numeric data

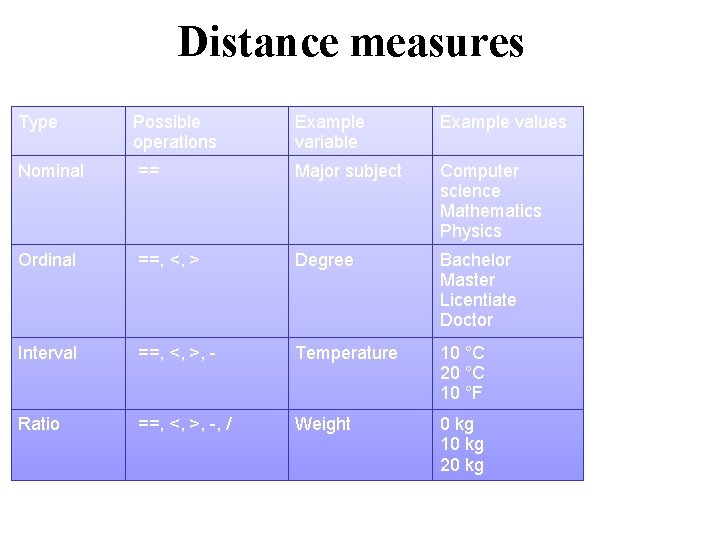

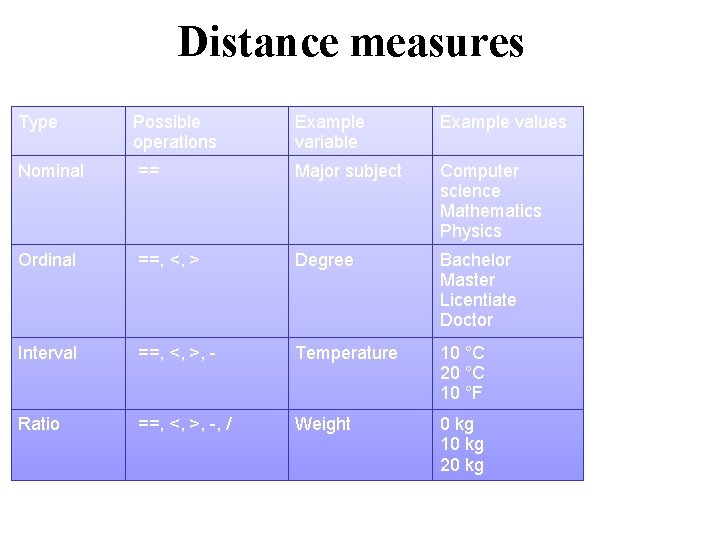

Distance measures

| Type | Possible operations | Example variable | Example values |
|---|---|---|---|
| Nominal | == | Major subject | Computer science, Mathematics, Physics |
| Ordinal | ==, <, > | Degree | Bachelor, Master, Licentiate, Doctor |
| Interval | ==, <, >, - | Temperature | 10 °C, 20 °C, 10 °F |
| Ratio | ==, <, >, -, / | Weight | 0 kg, 10 kg, 20 kg |

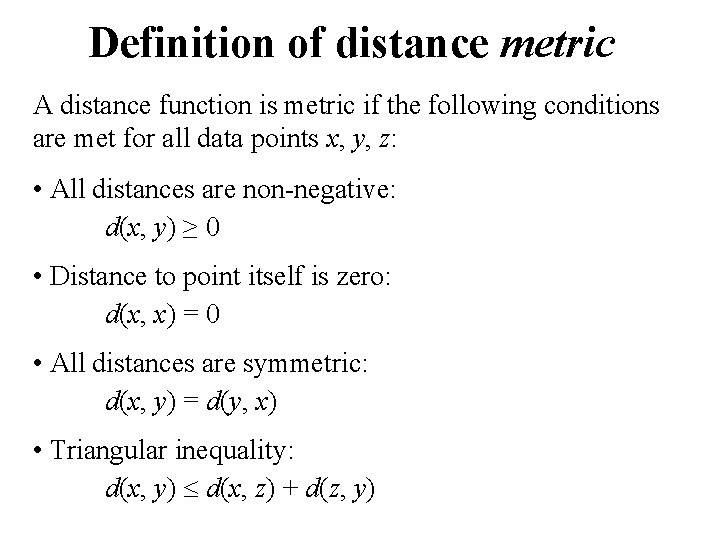

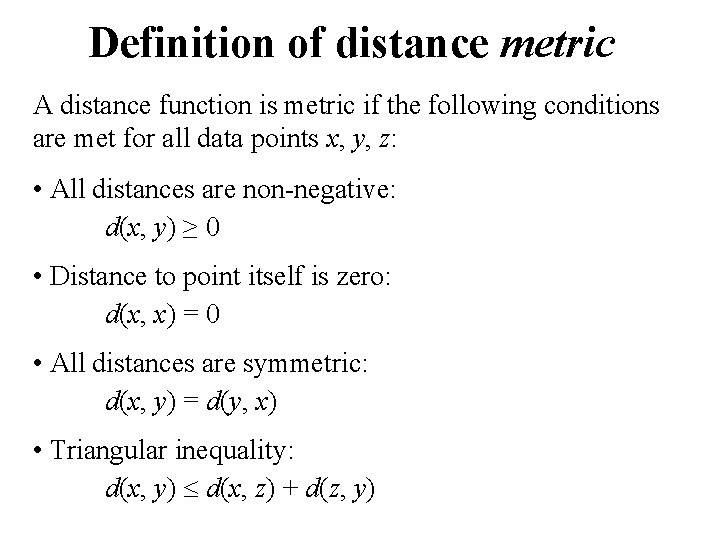

Definition of distance metric

A distance function is a metric if the following conditions are met for all data points x, y, z:

- All distances are non-negative: d(x, y) ≥ 0
- The distance from a point to itself is zero: d(x, x) = 0
- All distances are symmetric: d(x, y) = d(y, x)
- Triangle inequality: d(x, y) ≤ d(x, z) + d(z, y)

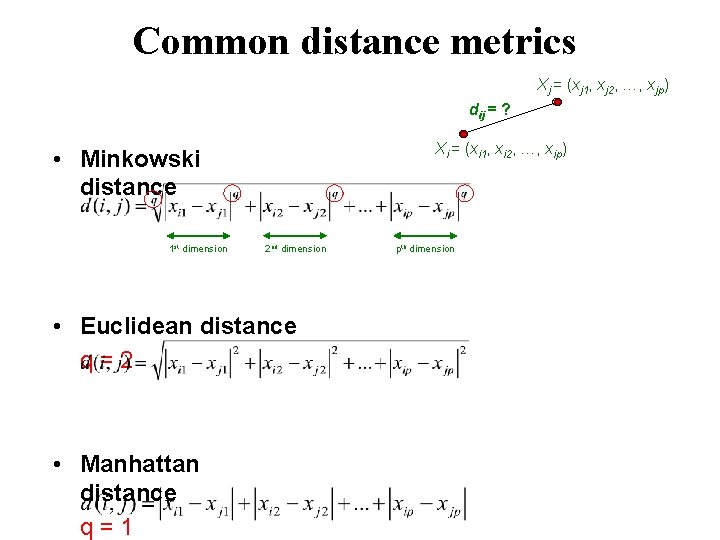

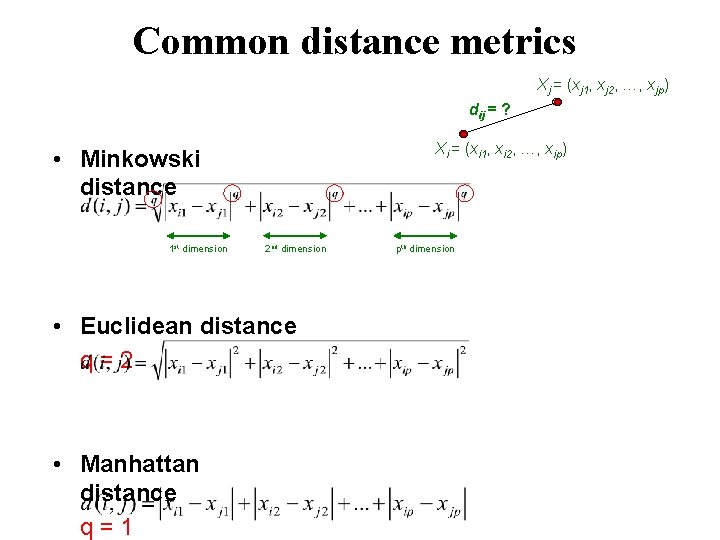

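Common distance metrics

For two p-dimensional vectors $X_i = (x_{i1}, x_{i2}, \dots, x_{ip})$ and $X_j = (x_{j1}, x_{j2}, \dots, x_{jp})$, the distance $d_{ij}$ is accumulated over all dimensions, from the 1st to the pth:

- Minkowski distance: $d_{ij} = \left( \sum_{k=1}^{p} |x_{ik} - x_{jk}|^{q} \right)^{1/q}$
- Euclidean distance (q = 2): $d_{ij} = \sqrt{\sum_{k=1}^{p} (x_{ik} - x_{jk})^{2}}$
- Manhattan distance (q = 1): $d_{ij} = \sum_{k=1}^{p} |x_{ik} - x_{jk}|$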

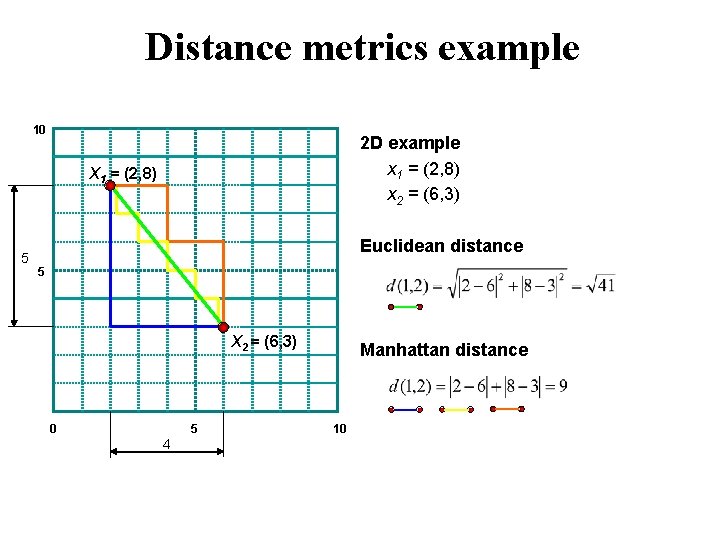

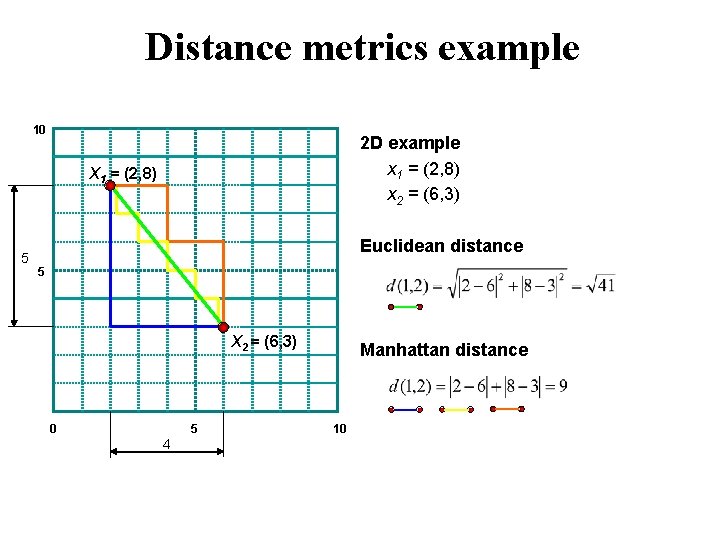

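Distance metrics example

2D example: x1 = (2, 8), x2 = (6, 3). The coordinate differences are 4 and 5, so the Manhattan distance is 4 + 5 = 9 and the Euclidean distance is √(4² + 5²) = √41 ≈ 6.40.

[Figure: x1 and x2 plotted on a 0 to 10 grid, with the Euclidean and Manhattan paths between them]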
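The 2D example can be checked with a few lines of Python. This is a minimal sketch; the function name `minkowski` is an illustrative choice, not something from the slides.

```python
def minkowski(x, y, q):
    """Minkowski distance of order q between two equal-length vectors."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same dimension")
    return sum(abs(a - b) ** q for a, b in zip(x, y)) ** (1.0 / q)


x1 = (2, 8)
x2 = (6, 3)

manhattan = minkowski(x1, x2, 1)  # |2 - 6| + |8 - 3| = 9
euclidean = minkowski(x1, x2, 2)  # sqrt(16 + 25) = sqrt(41), about 6.40
print(manhattan, euclidean)
```

Setting q = 1 or q = 2 in the same function gives the Manhattan and Euclidean distances, which is why both are usually treated as special cases of the Minkowski distance.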

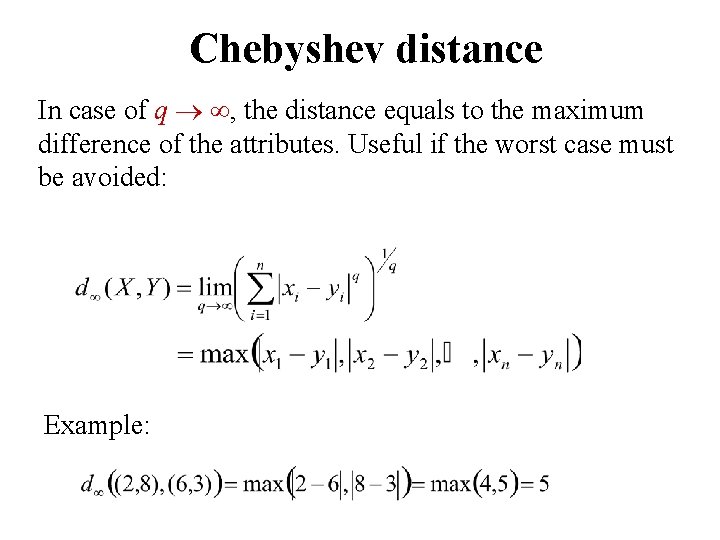

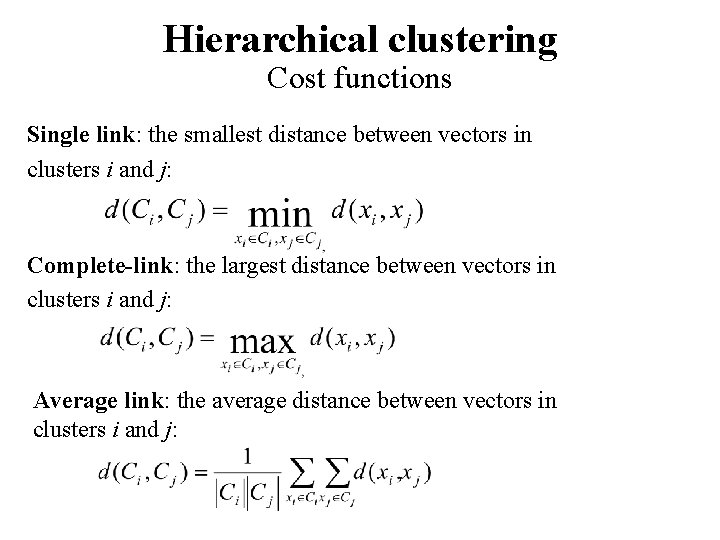

Chebyshev distance

In the case q → ∞, the distance equals the maximum difference of the attributes. Useful if the worst case must be avoided. Example:
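A small sketch of the limit behaviour, reusing the points (2, 8) and (6, 3) from the 2D example. The helper names are illustrative assumptions; the loop only shows that the Minkowski distance approaches the maximum attribute difference as q grows.

```python
def chebyshev(x, y):
    """Maximum absolute attribute difference (the q -> infinity case)."""
    return max(abs(a - b) for a, b in zip(x, y))


def minkowski(x, y, q):
    """Minkowski distance of order q, used here only to show the limit."""
    return sum(abs(a - b) ** q for a, b in zip(x, y)) ** (1.0 / q)


x1, x2 = (2, 8), (6, 3)
print(chebyshev(x1, x2))  # 5, the larger of the differences 4 and 5

# The Minkowski distance shrinks towards the Chebyshev value as q increases.
for q in (1, 2, 10, 100):
    print(q, minkowski(x1, x2, q))
```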

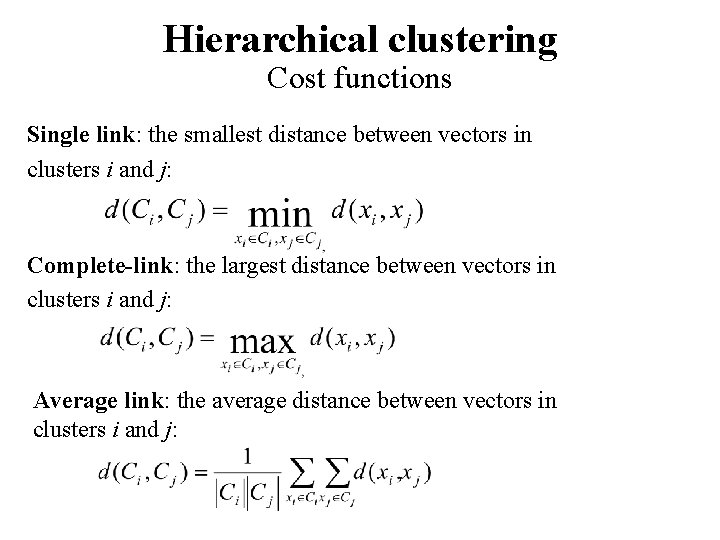

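Hierarchical clustering: cost functions

- Single link: the smallest distance between vectors in clusters i and j: $d(C_i, C_j) = \min_{x \in C_i,\, y \in C_j} d(x, y)$
- Complete link: the largest distance between vectors in clusters i and j: $d(C_i, C_j) = \max_{x \in C_i,\, y \in C_j} d(x, y)$
- Average link: the average distance between vectors in clusters i and j: $d(C_i, C_j) = \frac{1}{|C_i|\,|C_j|} \sum_{x \in C_i} \sum_{y \in C_j} d(x, y)$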
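The three linkage criteria differ only in how they aggregate the pairwise distances between two clusters. The sketch below makes that explicit; the cluster contents and function names are made up for illustration.

```python
from itertools import product
from math import sqrt


def euclidean(a, b):
    return sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))


def pairwise(ci, cj, dist):
    """All distances between a vector of cluster ci and a vector of cluster cj."""
    return [dist(a, b) for a, b in product(ci, cj)]


def single_link(ci, cj, dist=euclidean):
    return min(pairwise(ci, cj, dist))


def complete_link(ci, cj, dist=euclidean):
    return max(pairwise(ci, cj, dist))


def average_link(ci, cj, dist=euclidean):
    d = pairwise(ci, cj, dist)
    return sum(d) / len(d)


# Hypothetical clusters on a line; the pairwise distances are 3, 5, 2 and 4.
ci = [(0.0, 0.0), (1.0, 0.0)]
cj = [(3.0, 0.0), (5.0, 0.0)]
print(single_link(ci, cj), complete_link(ci, cj), average_link(ci, cj))
```

In an agglomerative algorithm, the pair of clusters with the smallest value of the chosen criterion is merged at each step.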

Single Link

Complete Link

Average Link

![Cost function example Theodoridis Koutroumbas 2006 1 Data Set x 1 1 1 x Cost function example [Theodoridis, Koutroumbas, 2006] 1 Data Set x 1 1. 1 x](https://slidetodoc.com/presentation_image_h2/5d38da17ca53e0903ae938f964361e1c/image-14.jpg)

Cost function example [Theodoridis, Koutroumbas, 2006]

Data set: seven points x1, x2, …, x7 on a line, with spacings 1, 1.1, 1.2, 1.3, 1.4 and 1.5 between consecutive points.

[Figure: single-link and complete-link dendrograms over x1 … x7]

Part II: Binary data

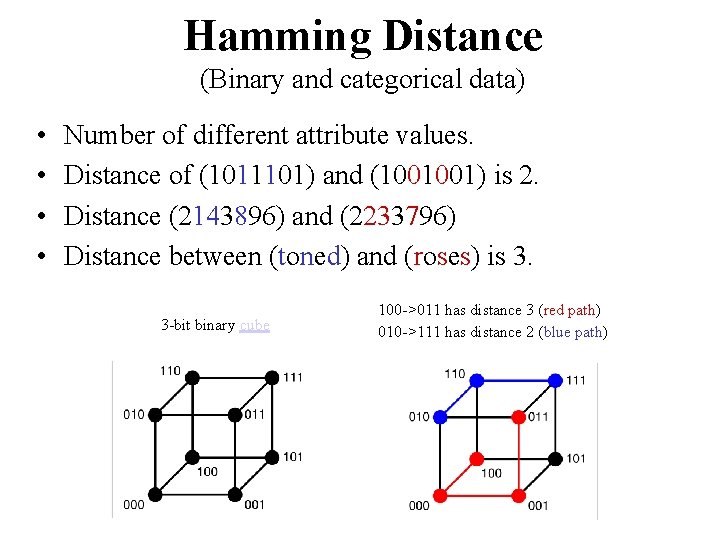

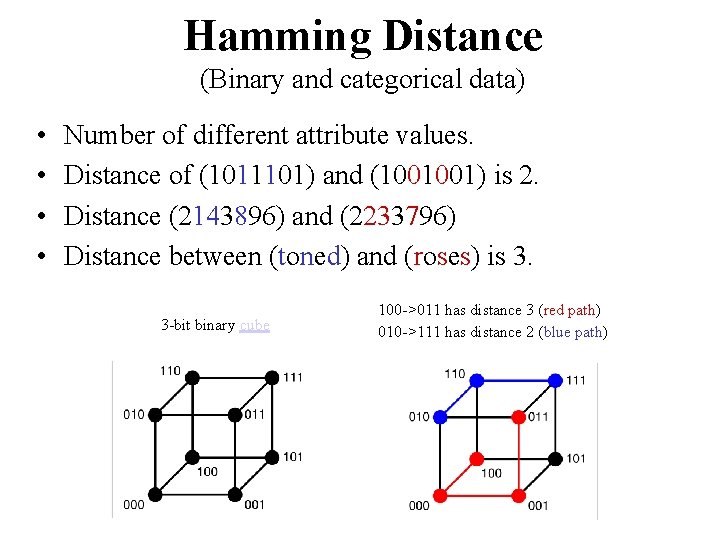

Hamming distance (binary and categorical data)

- Number of different attribute values.
- Distance between (1011101) and (1001001) is 2.
- Distance between (2143896) and (2233796) is 3.
- Distance between (toned) and (roses) is 3.

[Figure: 3-bit binary cube. 100 -> 011 has distance 3 (red path); 010 -> 111 has distance 2 (blue path)]
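The examples on this slide can be reproduced with a short sketch that counts differing positions. The function name `hamming` is an illustrative choice.

```python
def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return sum(x != y for x, y in zip(a, b))


print(hamming("1011101", "1001001"))  # 2
print(hamming("2143896", "2233796"))  # 3
print(hamming("toned", "roses"))      # 3
print(hamming("100", "011"))          # 3
print(hamming("010", "111"))          # 2
```

Because it only tests equality of attribute values, the same function applies to binary vectors, categorical tuples and strings.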

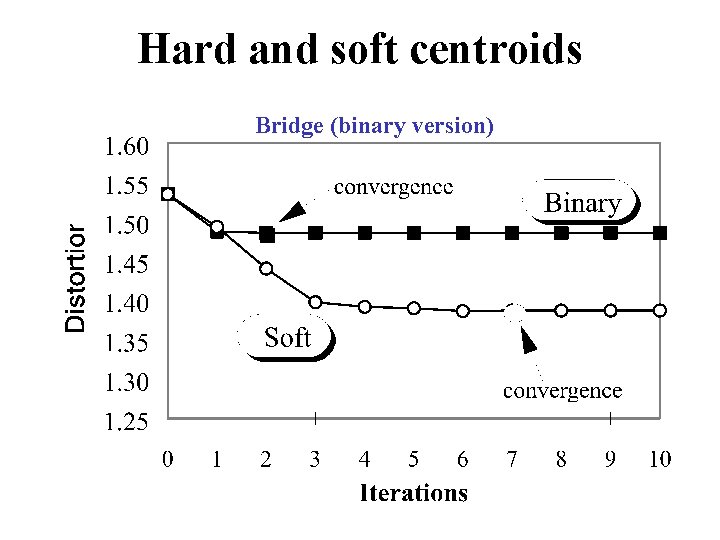

Hard thresholding of centroid (0.40, 0.60, 0.75, 0.20, 0.45, 0.25)
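As a sketch of what hard thresholding does to this soft centroid: each component is rounded to 0 or 1. The threshold of 0.5 and the rule that a value exactly at the threshold maps to 1 are assumptions made for this example.

```python
def hard_threshold(centroid, threshold=0.5):
    """Turn a soft (real-valued) centroid into a binary one.

    Assumption: components at or above the threshold become 1, others 0.
    """
    return [1 if c >= threshold else 0 for c in centroid]


soft = [0.40, 0.60, 0.75, 0.20, 0.45, 0.25]
print(hard_threshold(soft))  # [0, 1, 1, 0, 0, 0]
```

The thresholded vector discards how close each component was to 0.5, which is the information a soft centroid keeps.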

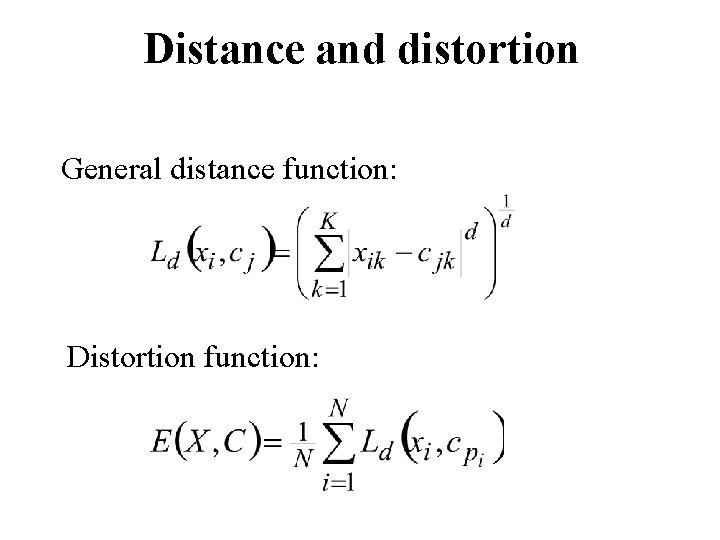

Hard and soft centroids Bridge (binary version)

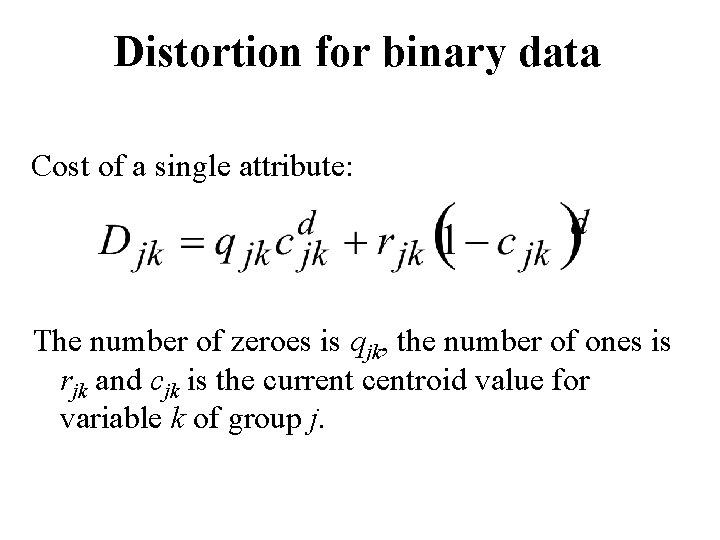

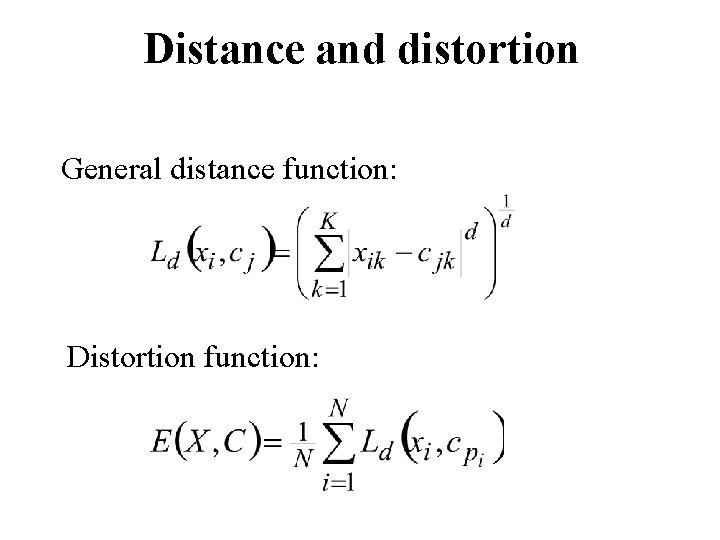

Distance and distortion General distance function: Distortion function:

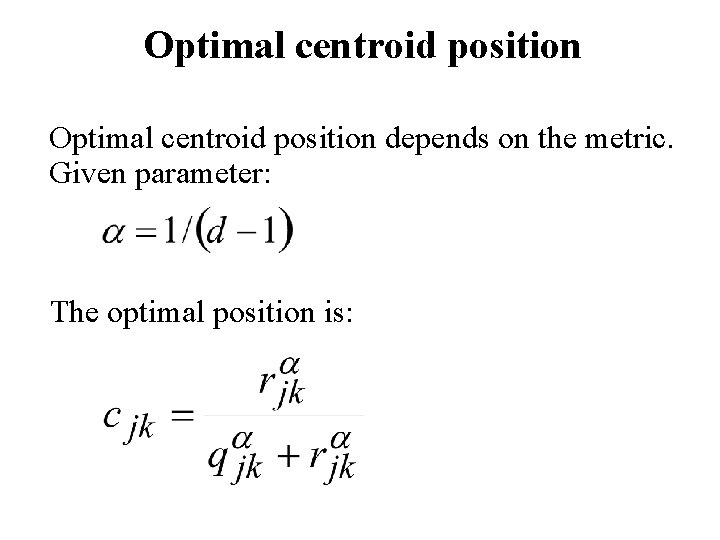

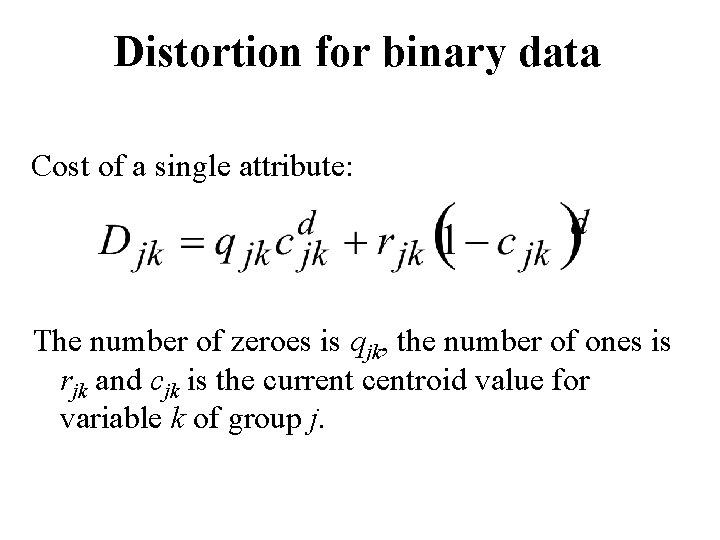

Distortion for binary data Cost of a single attribute: The number of zeroes is qjk, the number of ones is rjk and cjk is the current centroid value for variable k of group j.

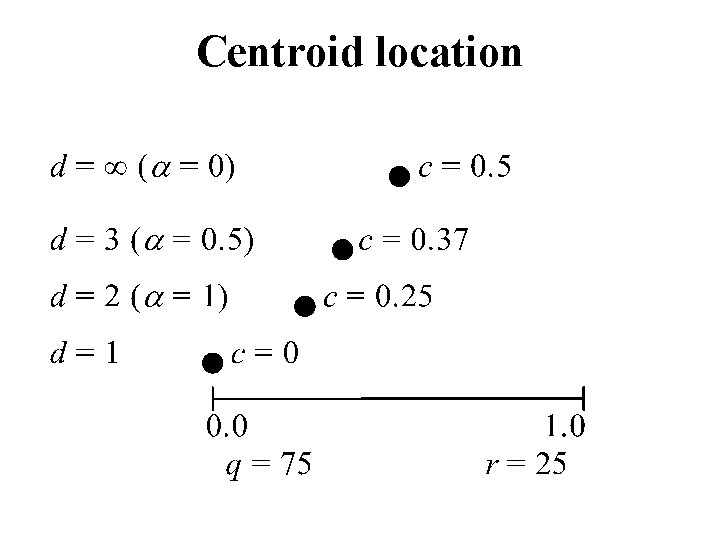

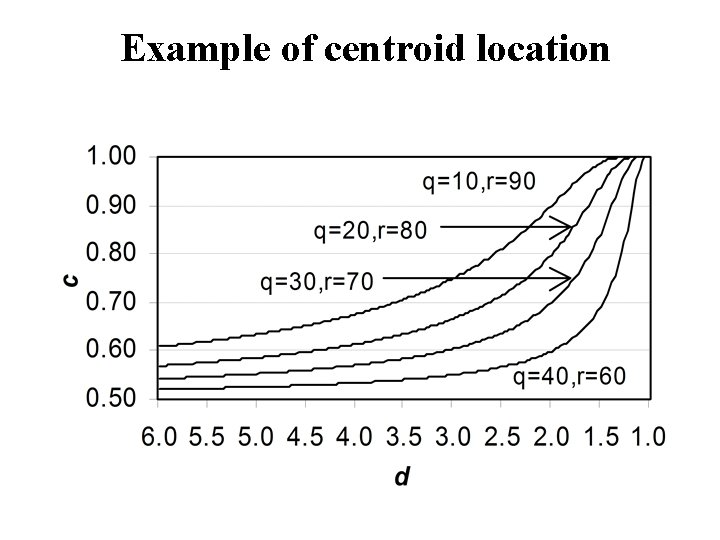

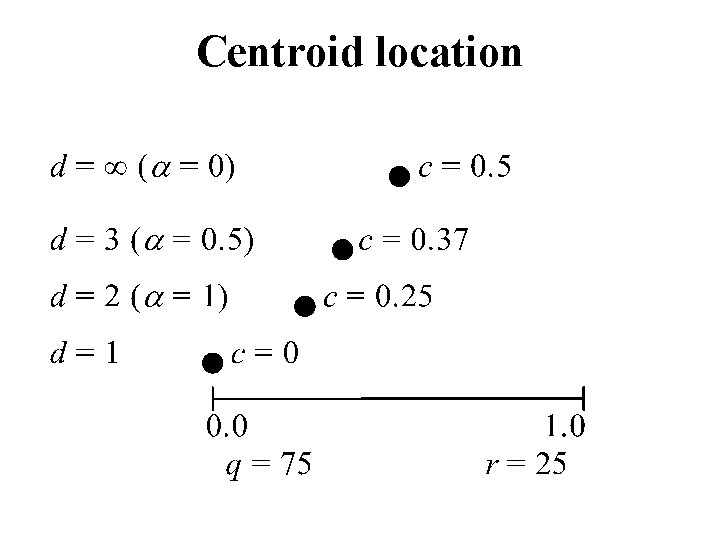

Optimal centroid position depends on the metric. Given parameter: The optimal position is:

Example of centroid location

Centroid location

Part III: Categorical data

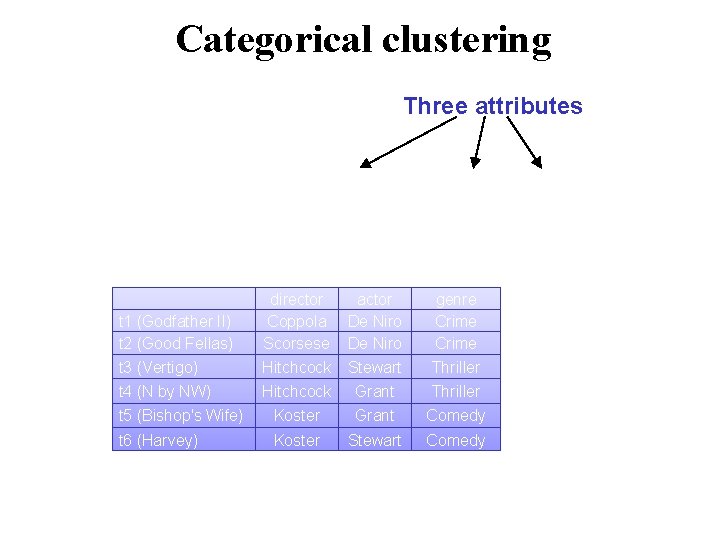

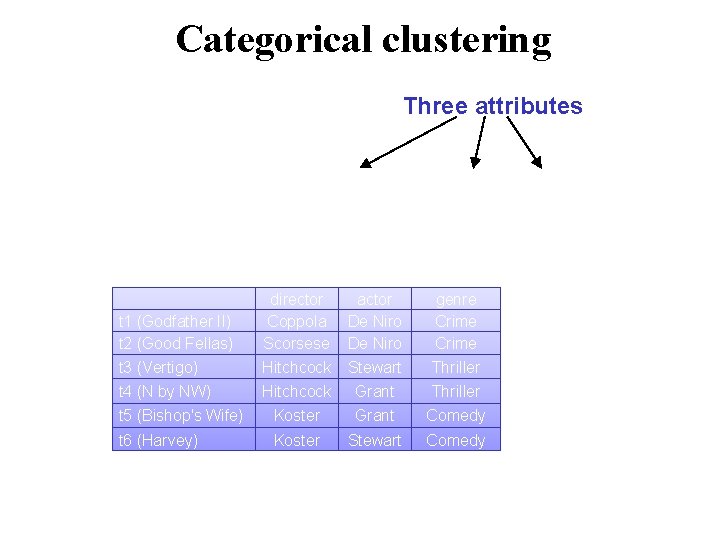

Categorical clustering: three attributes

| | director | actor | genre |
|---|---|---|---|
| t1 (Godfather II) | Coppola | De Niro | Crime |
| t2 (Good Fellas) | Scorsese | De Niro | Crime |
| t3 (Vertigo) | Hitchcock | Stewart | Thriller |
| t4 (N by NW) | Hitchcock | Grant | Thriller |
| t5 (Bishop's Wife) | Koster | Grant | Comedy |
| t6 (Harvey) | Koster | Stewart | Comedy |

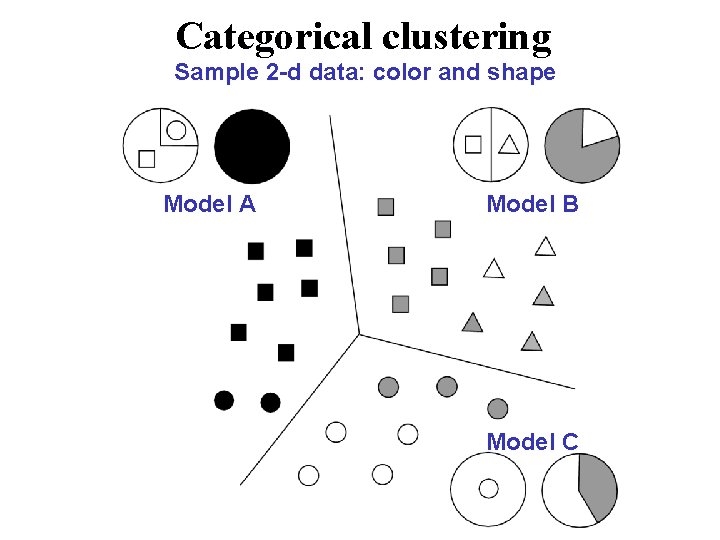

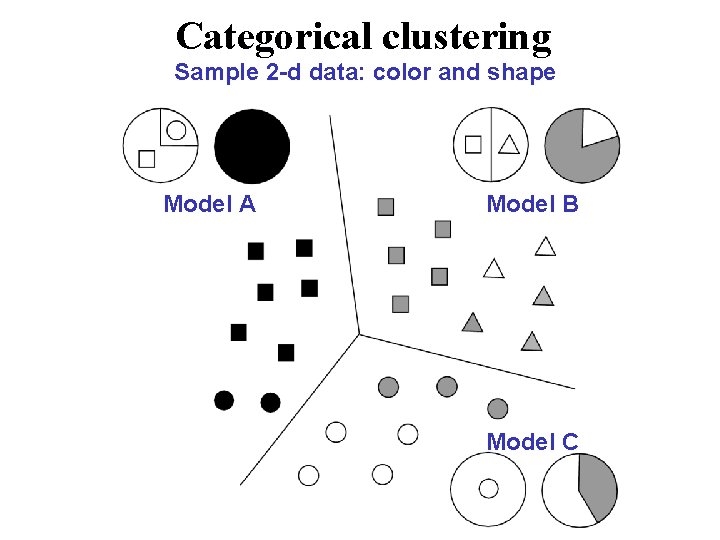

Categorical clustering. Sample 2-d data: color and shape. Model A, Model B, Model C

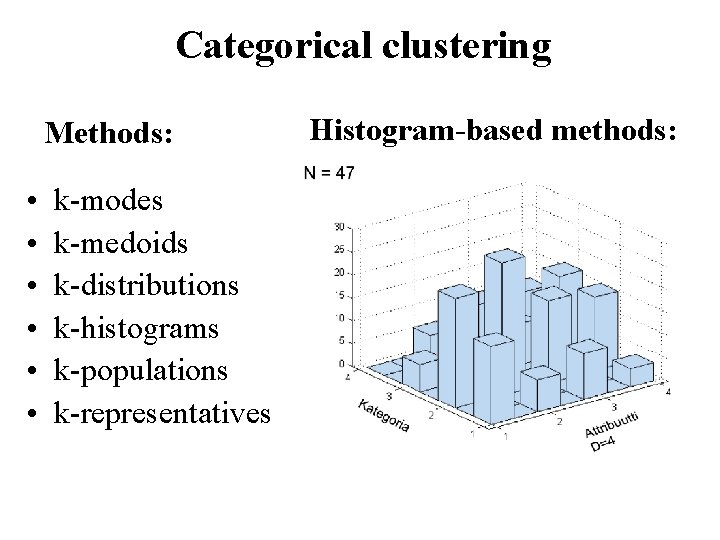

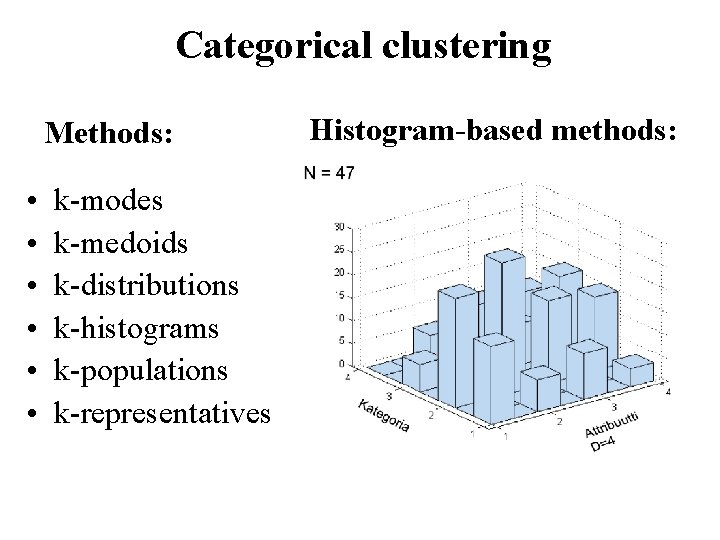

Categorical clustering methods:

- k-modes
- k-medoids
- Histogram-based methods:
  - k-distributions
  - k-histograms
  - k-populations
  - k-representatives

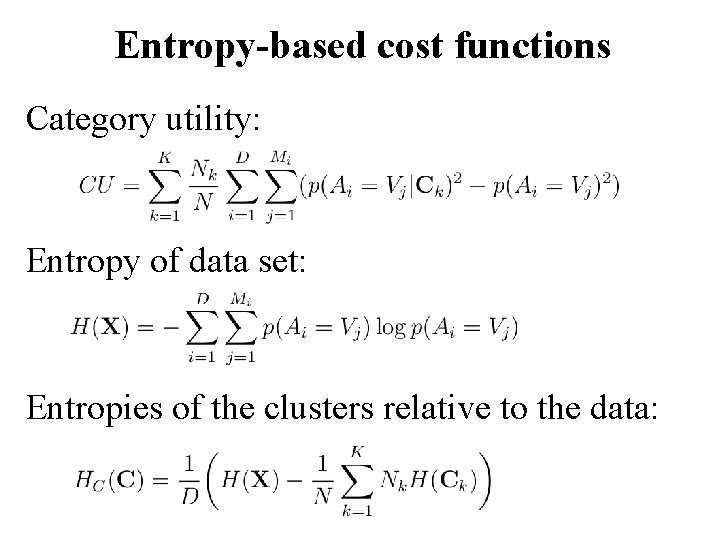

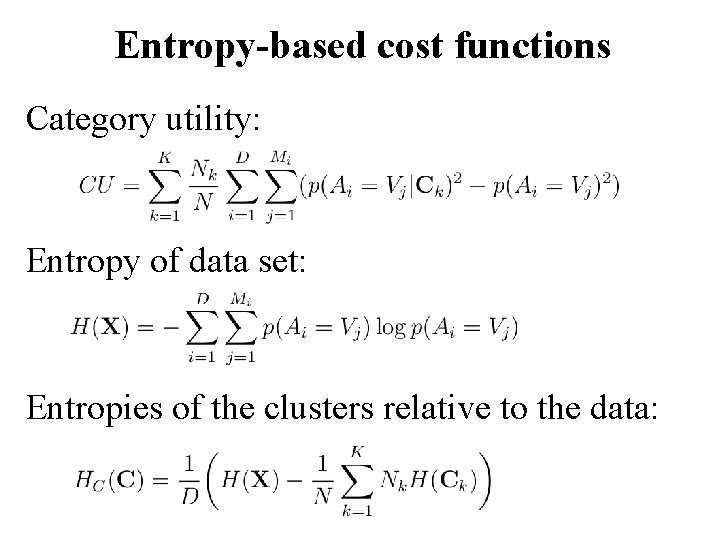

Entropy-based cost functions Category utility: Entropy of data set: Entropies of the clusters relative to the data:

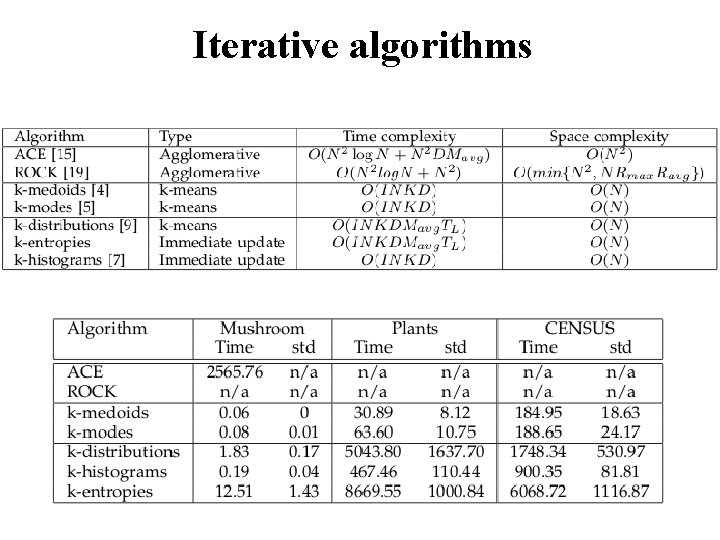

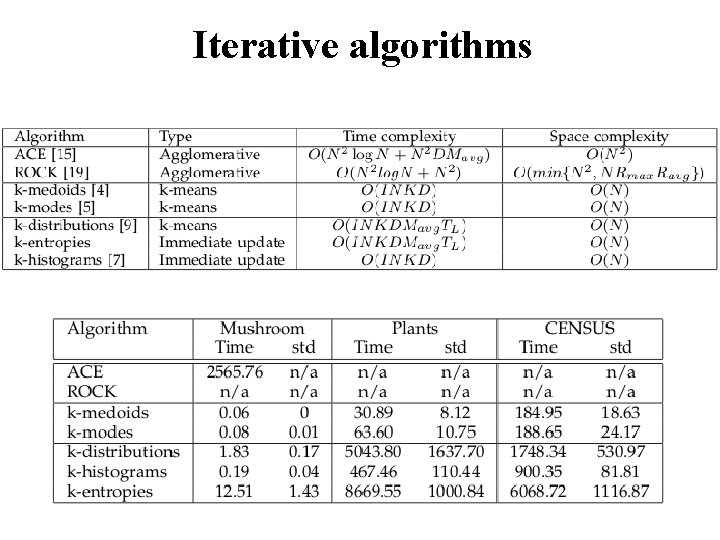

Iterative algorithms

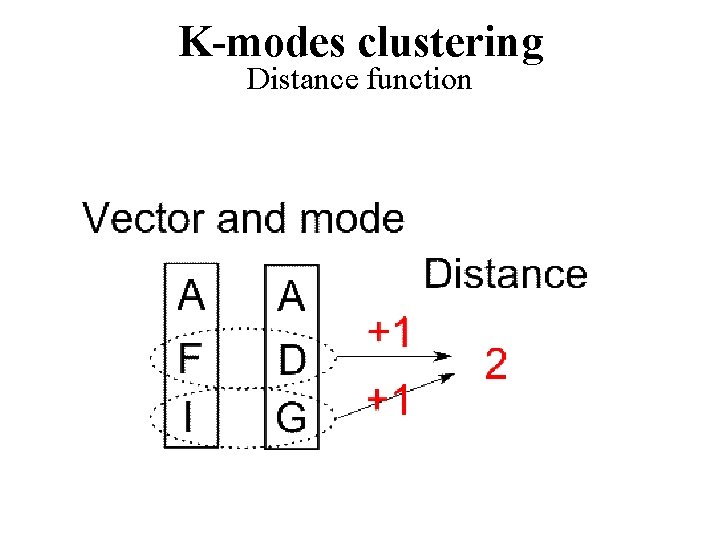

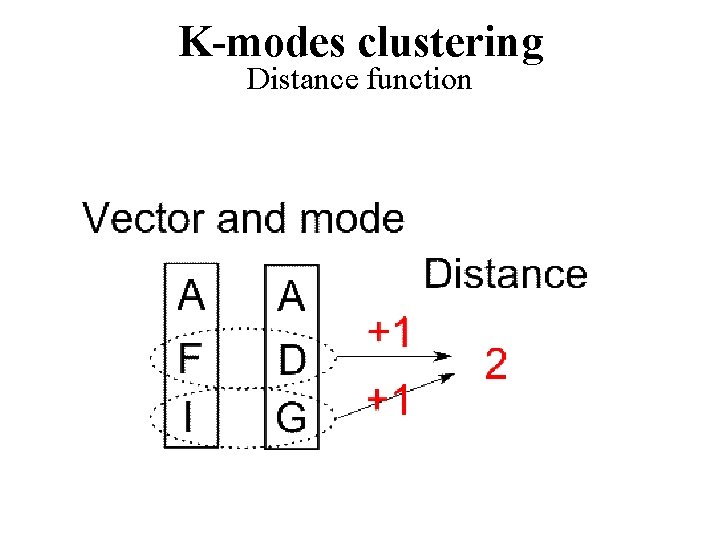

K-modes clustering Distance function

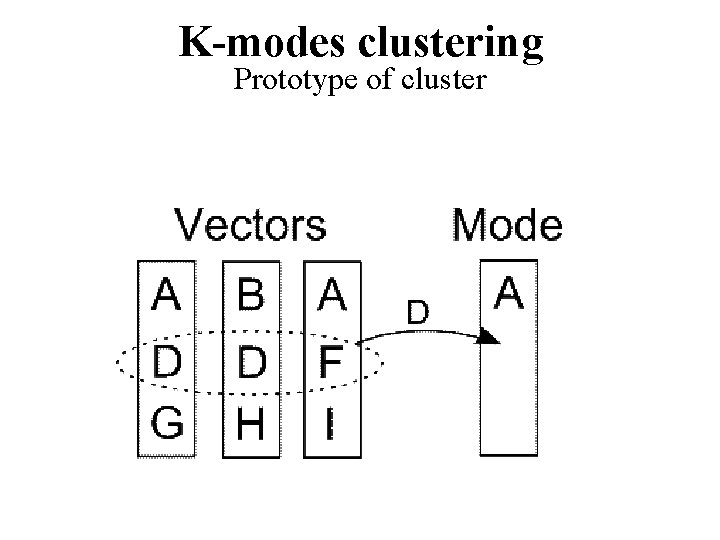

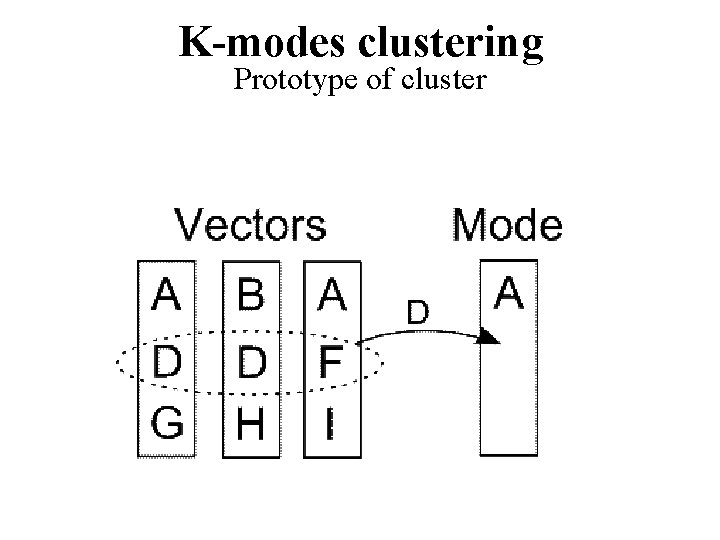

K-modes clustering Prototype of cluster

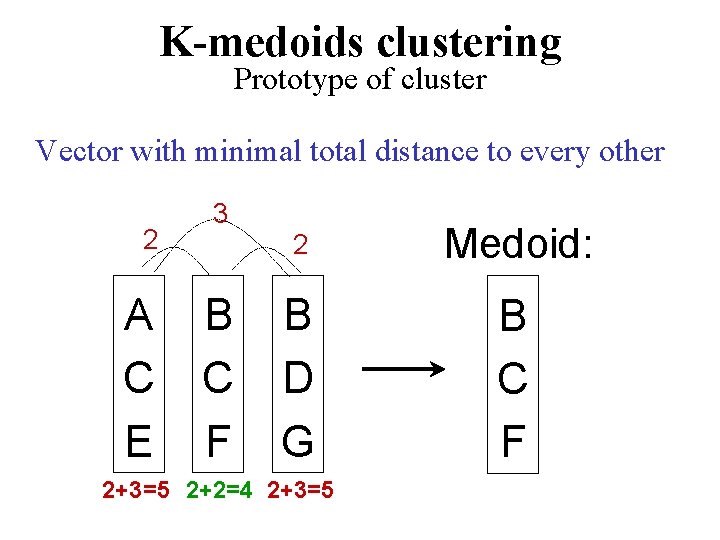

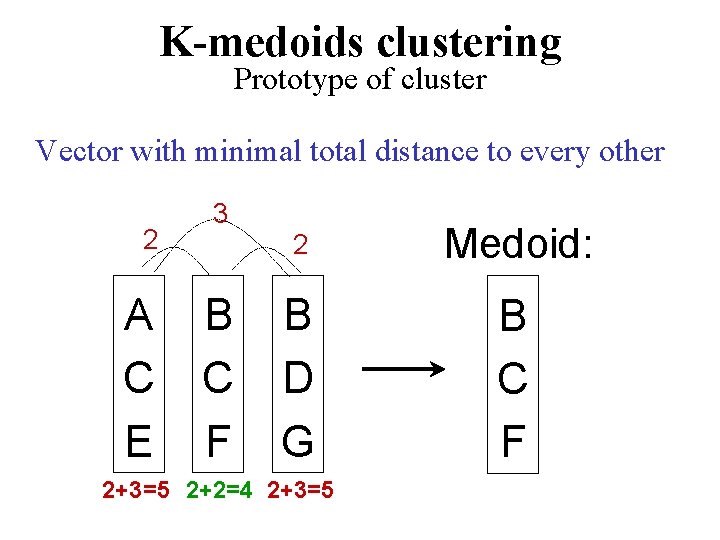

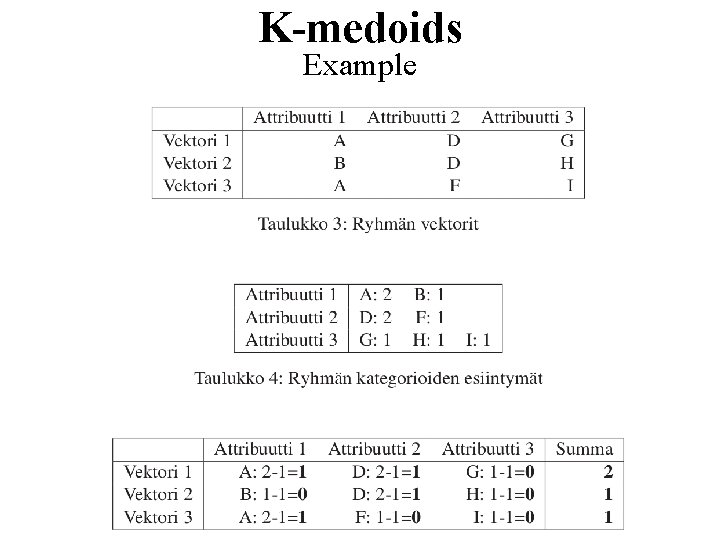

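K-medoids clustering: prototype of cluster

The medoid is the vector with minimal total distance to every other vector in the cluster. Example with three vectors:

| Vector | Distances to the other two | Total |
|---|---|---|
| (A, C, E) | 2 + 3 | 5 |
| (B, C, F) | 2 + 2 | 4 |
| (B, D, G) | 2 + 3 | 5 |

Medoid: (B, C, F)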
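The medoid of this three-vector example can be computed directly. The sketch below assumes the Hamming distance between categorical vectors, which is consistent with the pairwise distances 2, 3 and 2 on the slide; the function names are illustrative.

```python
def hamming(a, b):
    """Number of attributes in which two categorical vectors differ."""
    return sum(x != y for x, y in zip(a, b))


def medoid(vectors, dist=hamming):
    """Vector with the smallest total distance to all vectors in the cluster."""
    return min(vectors, key=lambda c: sum(dist(c, v) for v in vectors))


cluster = [("A", "C", "E"), ("B", "C", "F"), ("B", "D", "G")]

# Total distance from each vector to the others: 5, 4 and 5.
for v in cluster:
    print(v, sum(hamming(v, u) for u in cluster))

print(medoid(cluster))  # ('B', 'C', 'F')
```

Unlike a mode or a centroid, the medoid is always one of the actual data vectors, so it needs only a distance function and no averaging.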

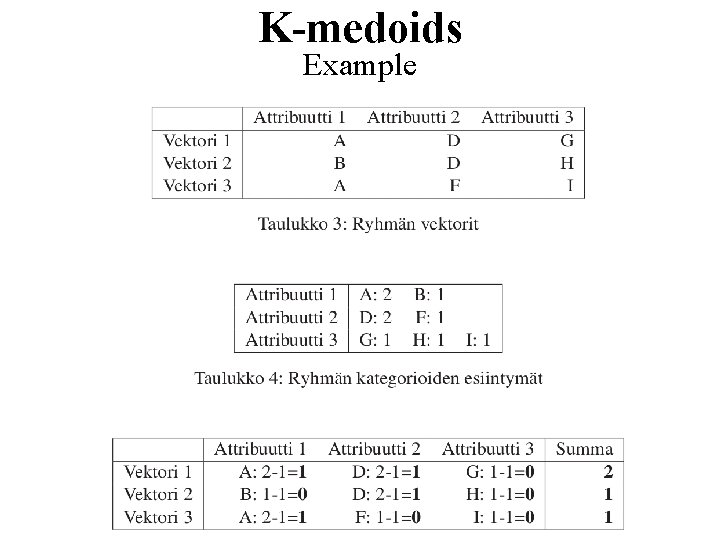

K-medoids Example

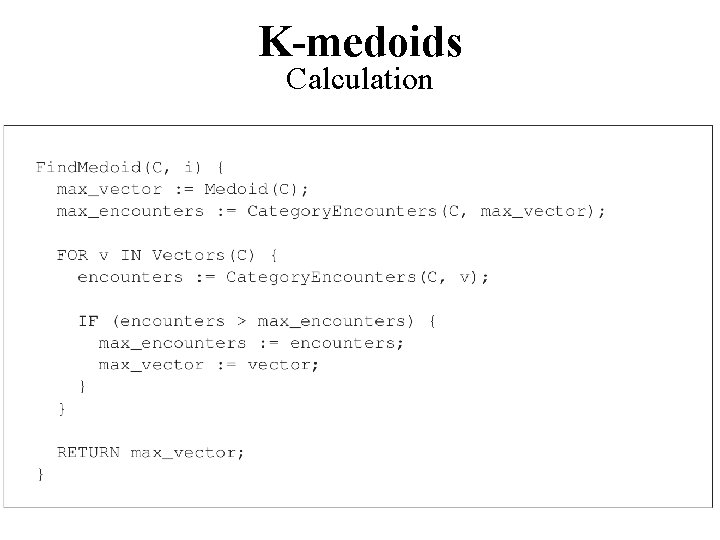

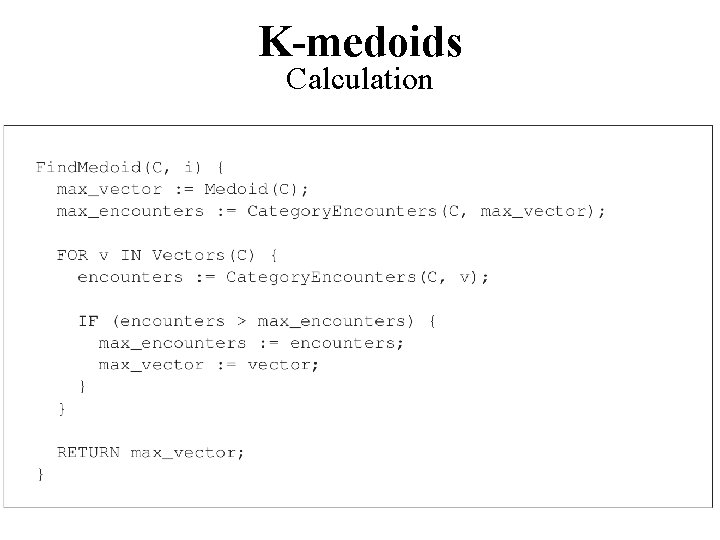

K-medoids Calculation

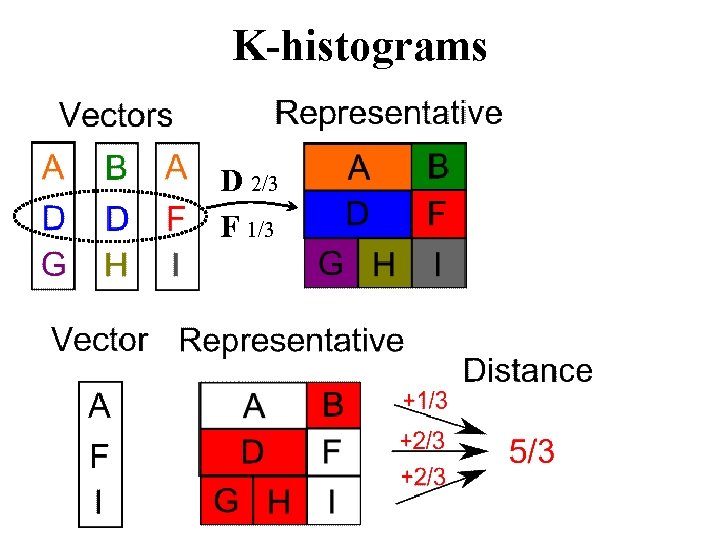

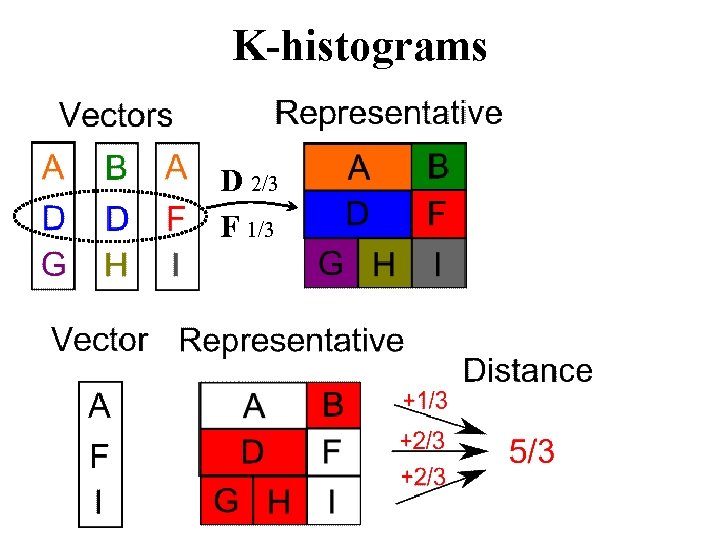

K-histograms D 2/3 F 1/3

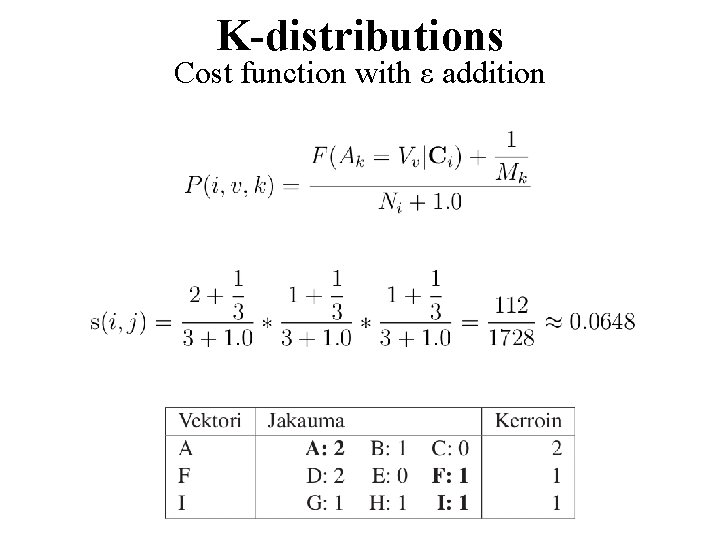

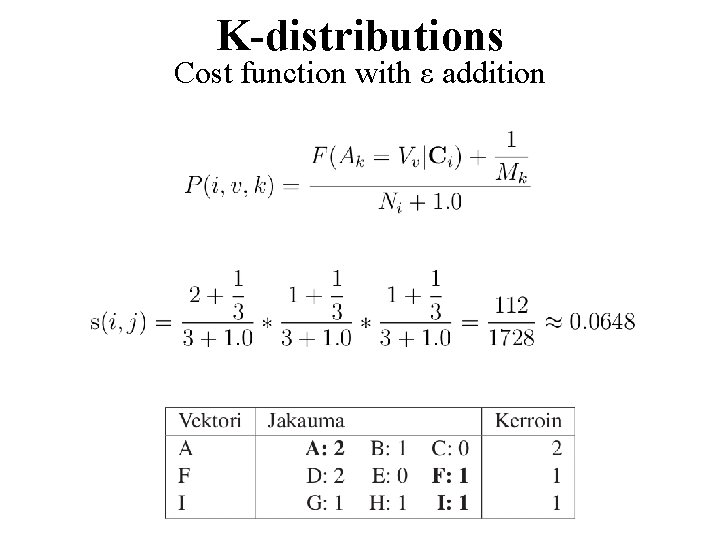

K-distributions Cost function with ε addition

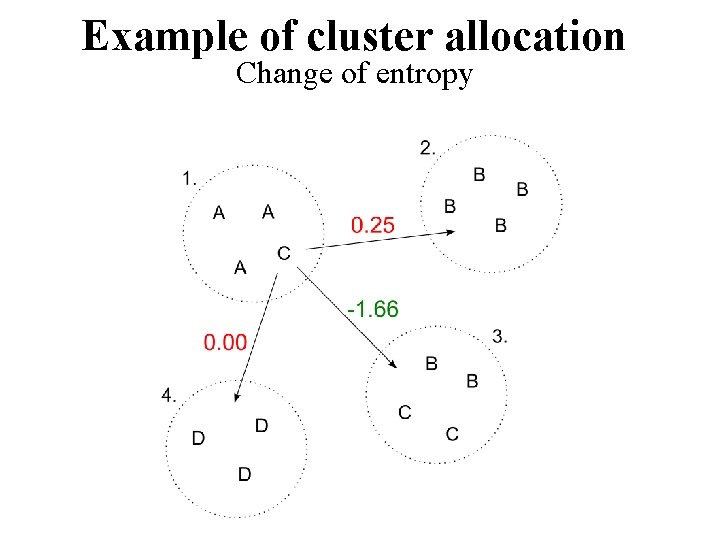

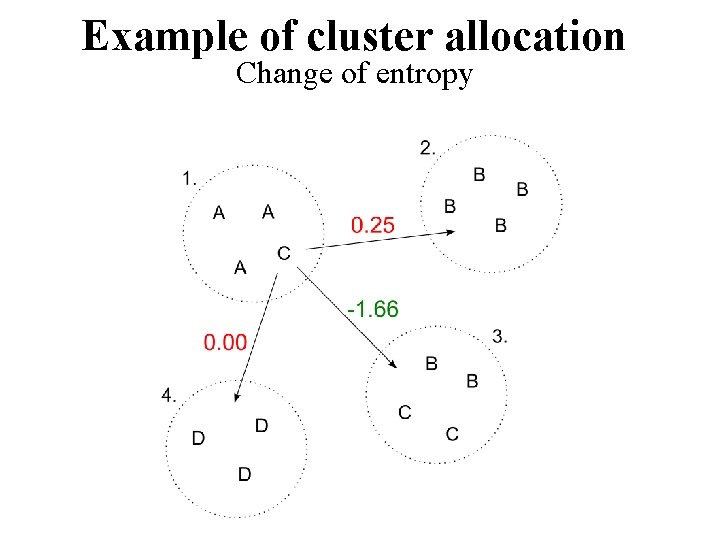

Example of cluster allocation Change of entropy

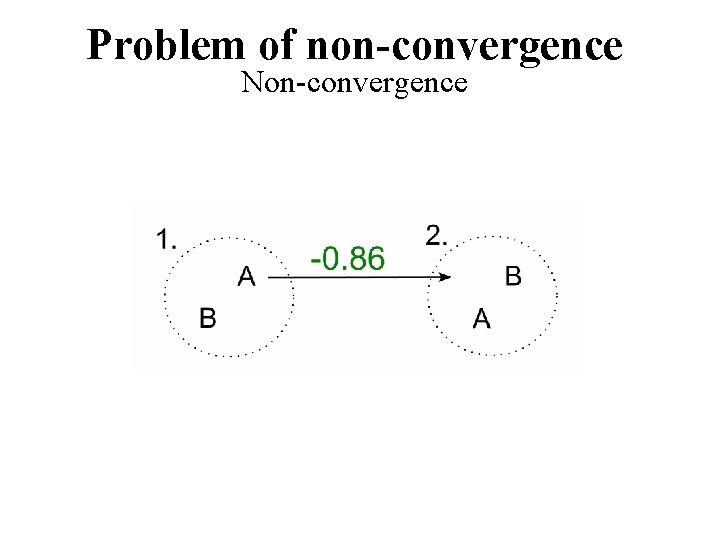

Problem of non-convergence

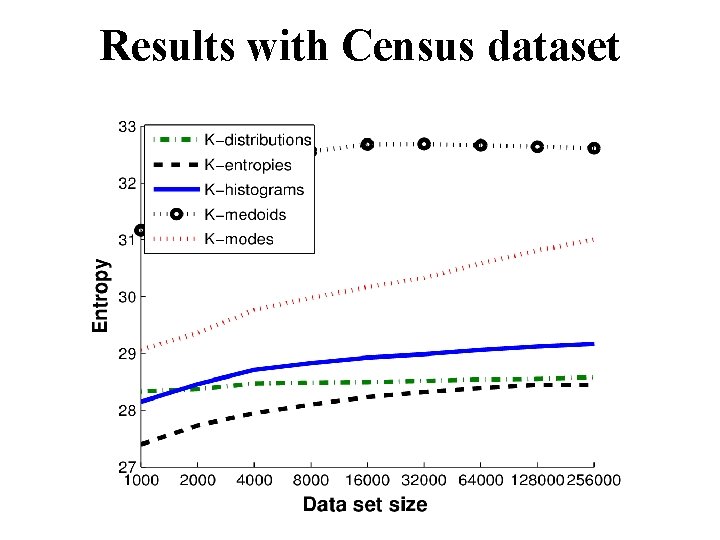

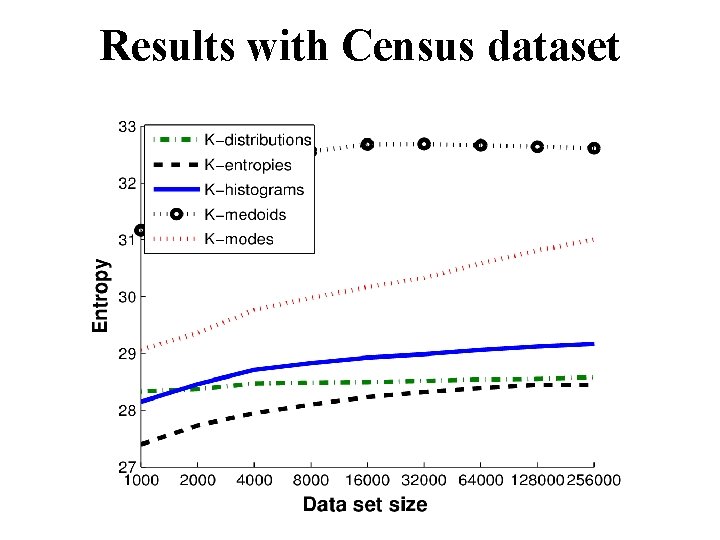

Results with Census dataset

Part IV: Text data

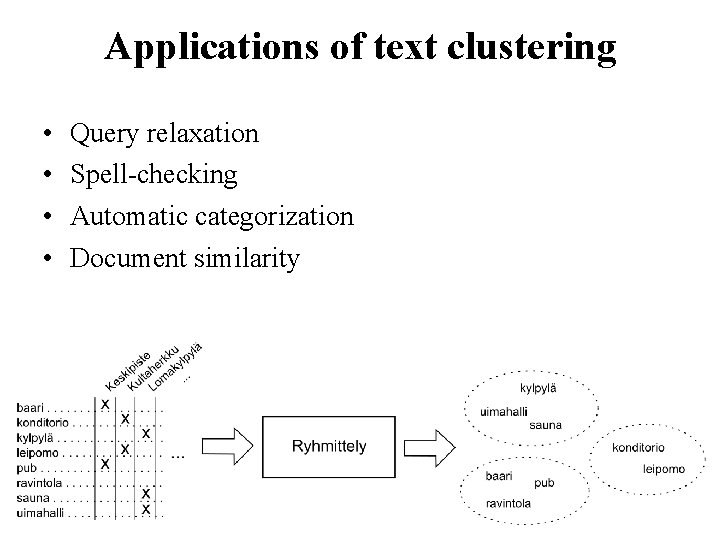

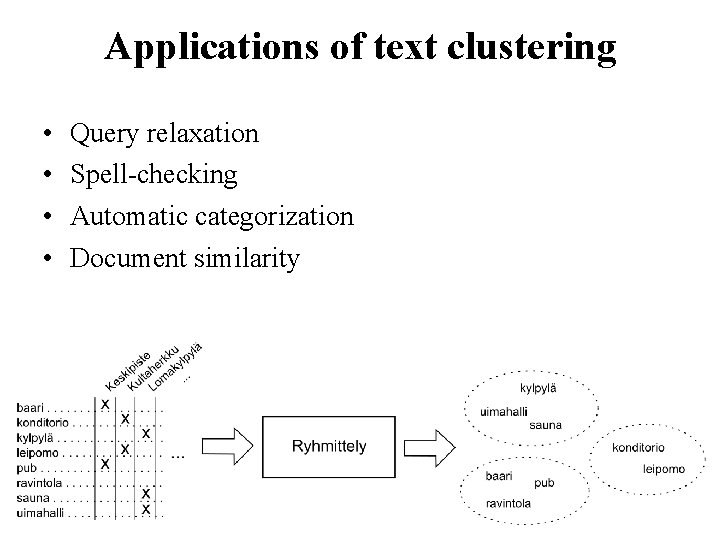

Applications of text clustering

- Query relaxation
- Spell-checking
- Automatic categorization
- Document similarity

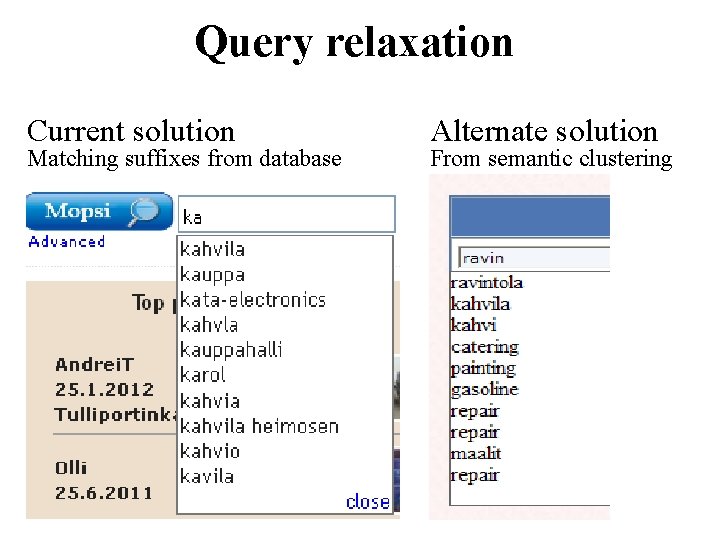

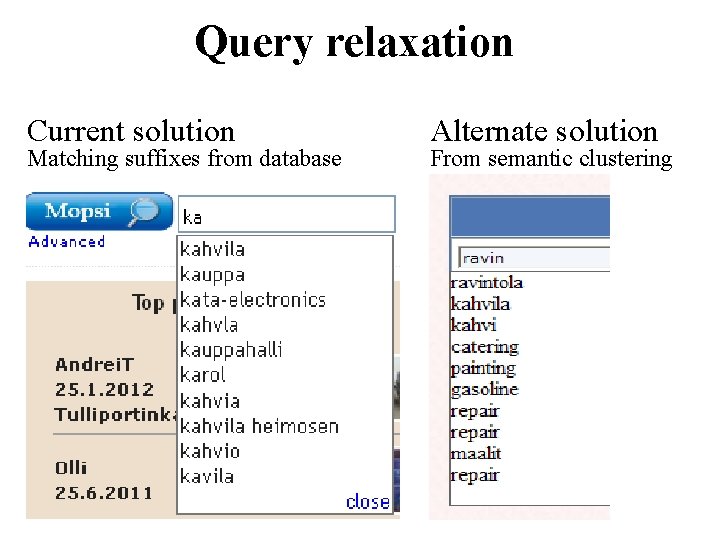

Query relaxation

- Current solution: matching suffixes from database
- Alternate solution: from semantic clustering

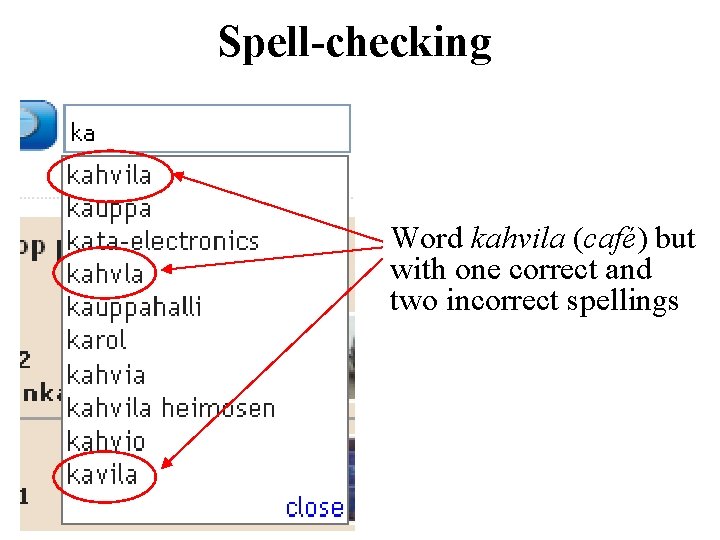

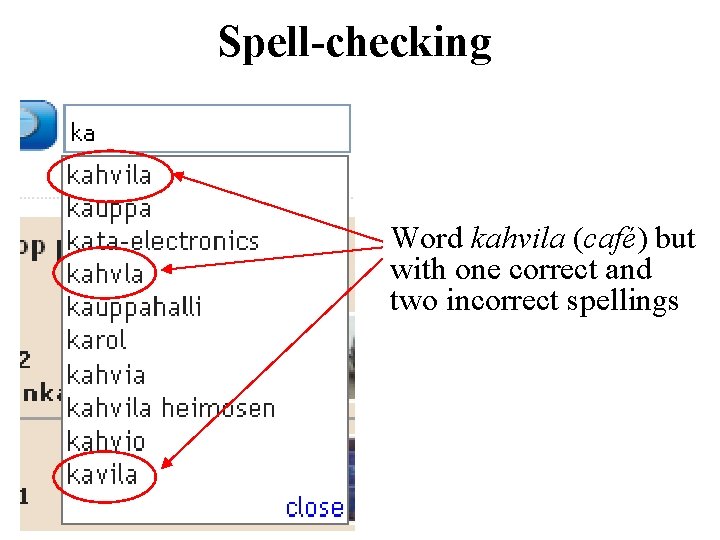

Spell-checking Word kahvila (café) but with one correct and two incorrect spellings

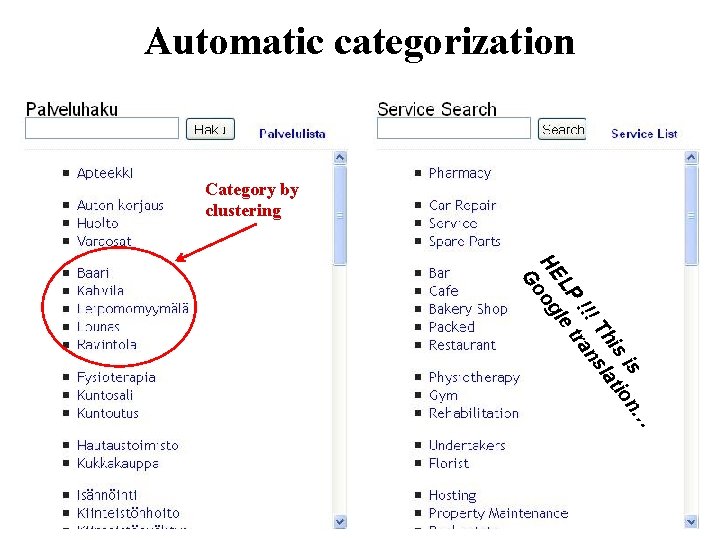

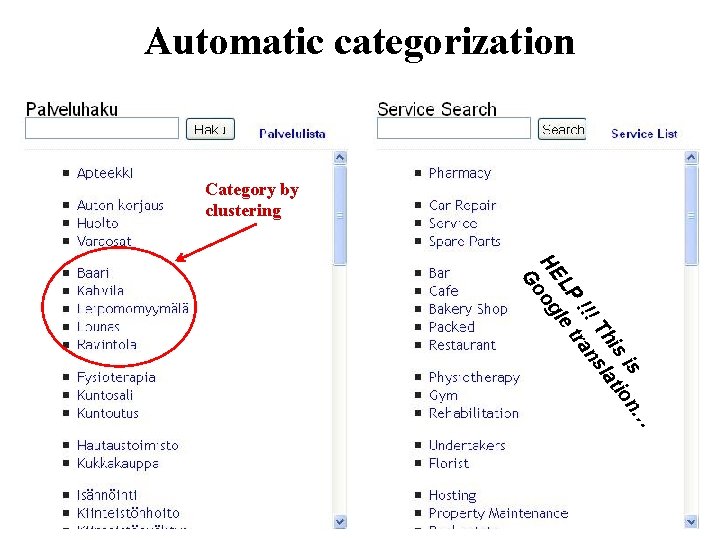

Automatic categorization: category by clustering

[Figure annotation: "This is Google translation … HELP!!!"]

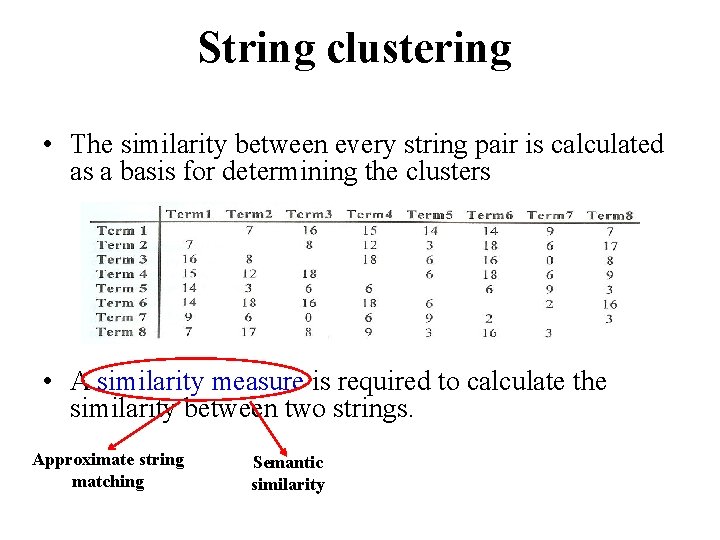

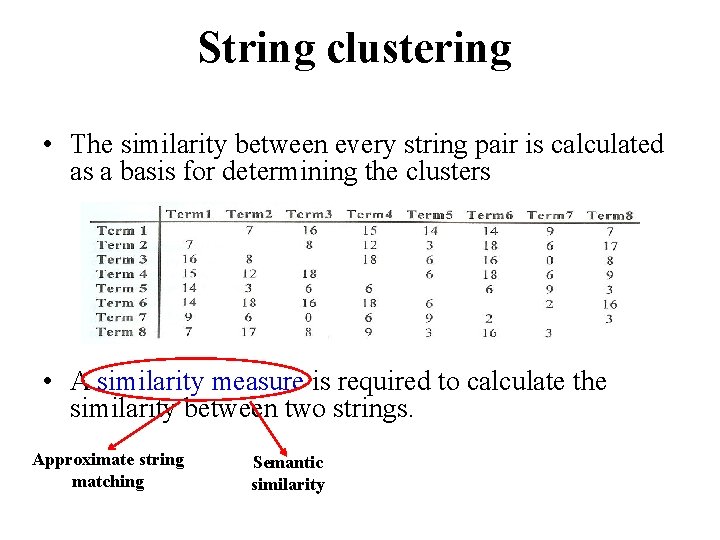

String clustering

- The similarity between every string pair is calculated as a basis for determining the clusters.
- A similarity measure is required to calculate the similarity between two strings:
  - Approximate string matching
  - Semantic similarity

Document clustering

- Motivation:
  - Group related documents based on their content
  - No predefined training set (taxonomy)
  - Generate a taxonomy at runtime
- Clustering process:
  - Data preprocessing: remove stop words, stem, feature extraction and lexical analysis
  - Define cost function
  - Perform clustering

Exact string matching

- Given a text string T of length n and a pattern string P of length m, the exact string matching problem is to find all occurrences of P in T.
- Example: T = "AGCTTGA", P = "GCT"
- Applications:
  - Searching keywords in a file
  - Search engines (like Google)
  - Database searching
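A minimal sketch of exact matching on the slide's example, using Python's built-in `str.find` rather than a dedicated algorithm such as Knuth-Morris-Pratt. The function name `find_all` is an illustrative choice.

```python
def find_all(text, pattern):
    """Return the start index of every occurrence of pattern in text.

    Overlapping occurrences are reported, because the search resumes one
    position after each match.
    """
    positions = []
    start = text.find(pattern)
    while start != -1:
        positions.append(start)
        start = text.find(pattern, start + 1)
    return positions


print(find_all("AGCTTGA", "GCT"))  # [1]
```

Indices are 0-based, so the single occurrence of "GCT" in "AGCTTGA" starts at position 1.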

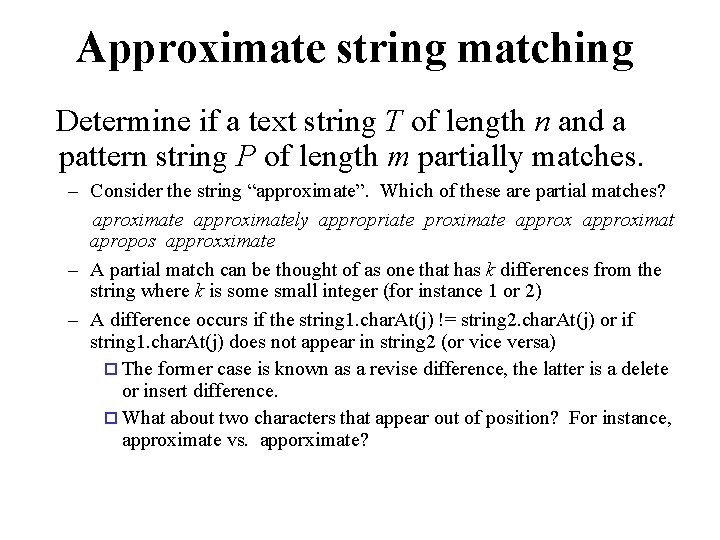

Approximate string matching

Determine whether a text string T of length n and a pattern string P of length m partially match.

- Consider the string "approximate". Which of these are partial matches? aproximate, approximately, appropriate, proximate, approximat, apropos, approxximate
- A partial match can be thought of as one that has k differences from the string, where k is some small integer (for instance 1 or 2).
- A difference occurs if `string1.charAt(j) != string2.charAt(j)`, or if `string1.charAt(j)` does not appear in string2 (or vice versa).
  - The former case is known as a revise difference, the latter is a delete or insert difference.
  - What about two characters that appear out of position? For instance, approximate vs. apporximate?

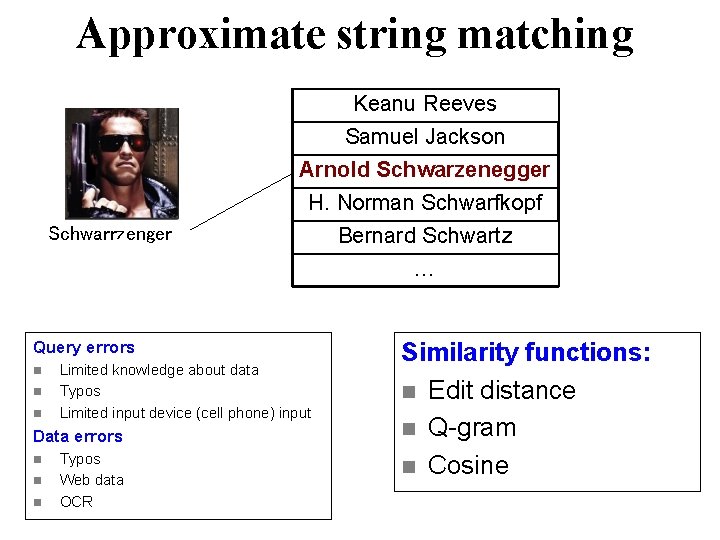

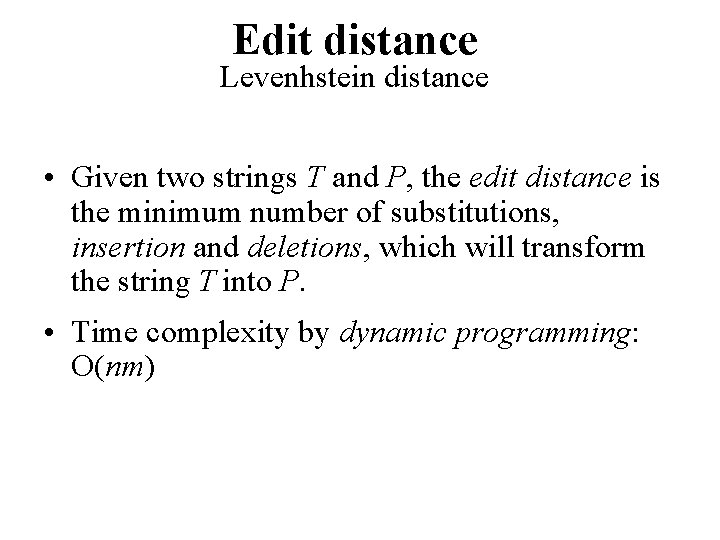

Approximate string matching

Example data: Keanu Reeves, Samuel Jackson, Arnold Schwarzenegger, H. Norman Schwarfkopf, Bernard Schwartz, … Example query: Schwarrzenger

- Query errors:
  - Limited knowledge about data
  - Typos
  - Limited input device (cell phone) input
- Data errors:
  - Typos
  - Web data
  - OCR
- Similarity functions:
  - Edit distance
  - Q-gram
  - Cosine

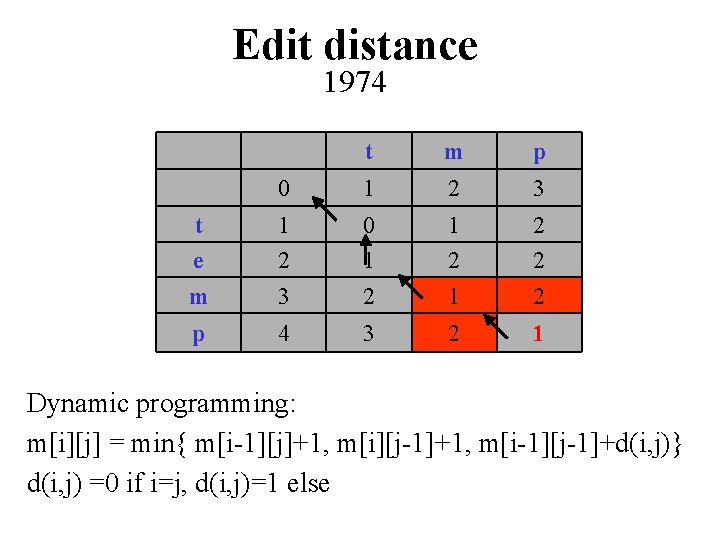

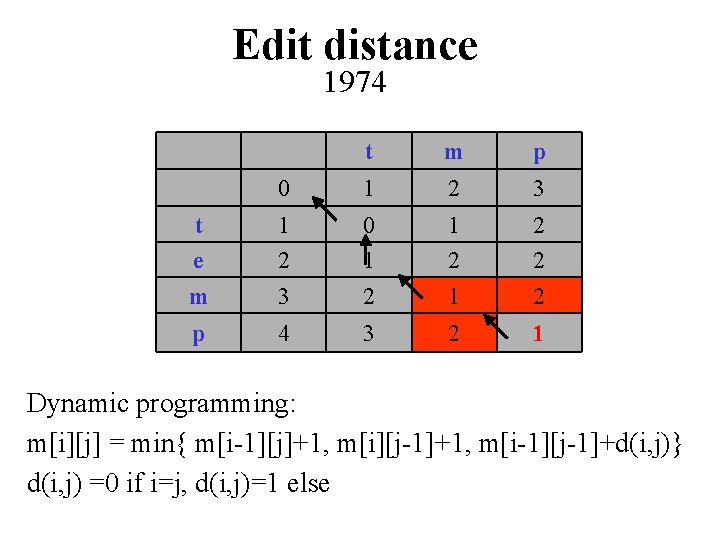

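Edit distance (Levenshtein distance)

- Given two strings T and P, the edit distance is the minimum number of substitutions, insertions and deletions that transform the string T into P.
- Time complexity by dynamic programming: O(nm)

Edit distance, 1974

Example: "temp" (rows) against "tmp" (columns). The bottom-right cell gives the edit distance, 1.

| | | t | m | p |
|---|---|---|---|---|
| | 0 | 1 | 2 | 3 |
| t | 1 | 0 | 1 | 2 |
| e | 2 | 1 | 1 | 2 |
| m | 3 | 2 | 1 | 2 |
| p | 4 | 3 | 2 | 1 |

Dynamic programming: m[i][j] = min{ m[i-1][j] + 1, m[i][j-1] + 1, m[i-1][j-1] + d(i, j) }, where d(i, j) = 0 if the i-th character of the first string equals the j-th character of the second, and d(i, j) = 1 otherwise.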
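The recurrence translates almost line by line into code. This is a sketch of the standard O(nm) dynamic programming table; the name `edit_distance` and the variable names are illustrative.

```python
def edit_distance(t, p):
    """Levenshtein distance between strings t and p by dynamic programming."""
    n, m = len(t), len(p)
    # table[i][j] = edit distance between t[:i] and p[:j]
    table = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        table[i][0] = i  # delete all i characters of t[:i]
    for j in range(m + 1):
        table[0][j] = j  # insert all j characters of p[:j]
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if t[i - 1] == p[j - 1] else 1
            table[i][j] = min(
                table[i - 1][j] + 1,         # deletion
                table[i][j - 1] + 1,         # insertion
                table[i - 1][j - 1] + cost,  # match or substitution
            )
    return table[n][m]


print(edit_distance("temp", "tmp"))  # 1
```

The table has (n + 1)(m + 1) cells and each is filled in constant time, which gives the O(nm) complexity.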

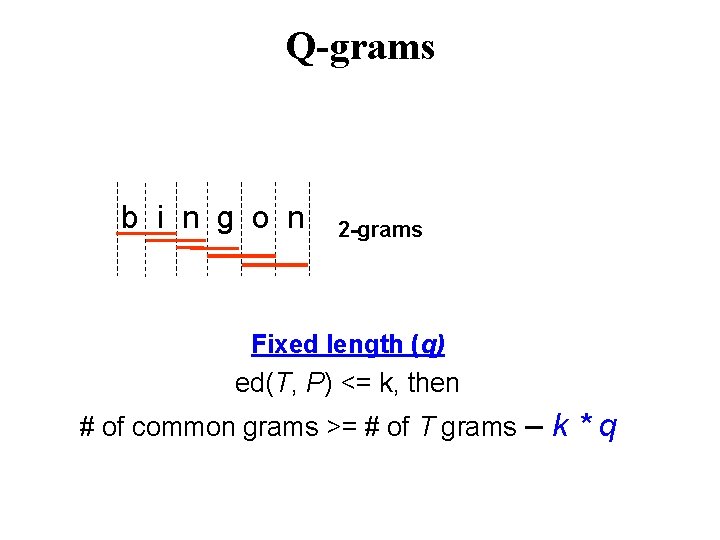

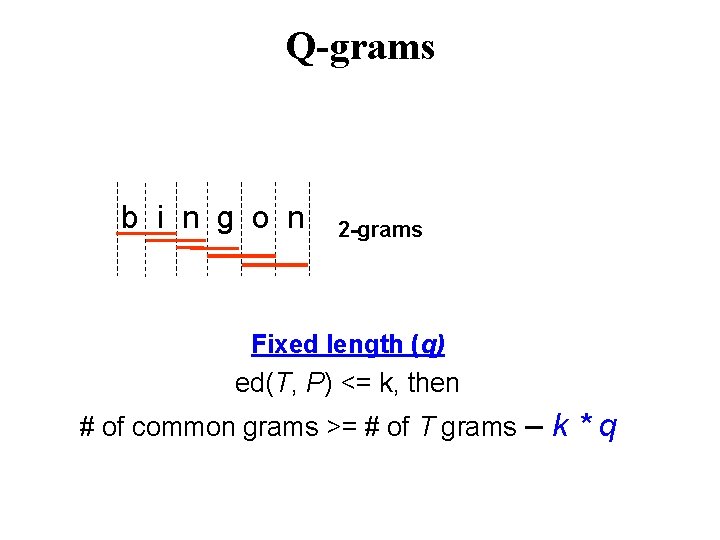

Q-grams

- Substrings of fixed length q, for example the 2-grams of "bingo".
- If ed(T, P) ≤ k, then # of common grams ≥ # of T grams − k · q.

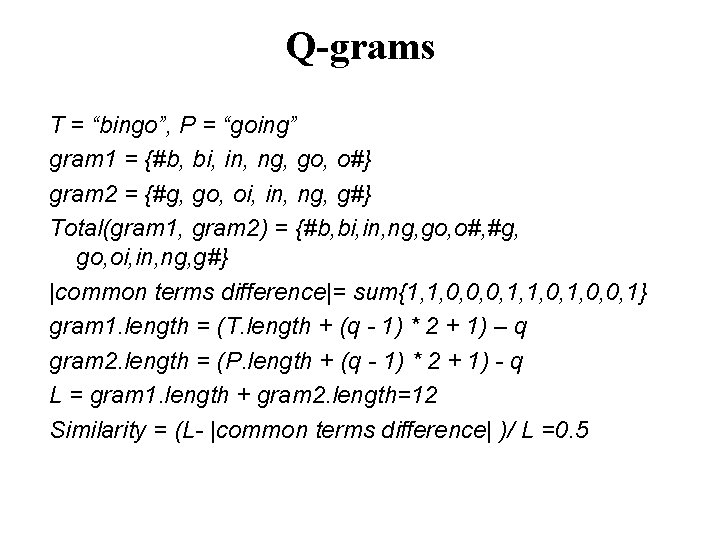

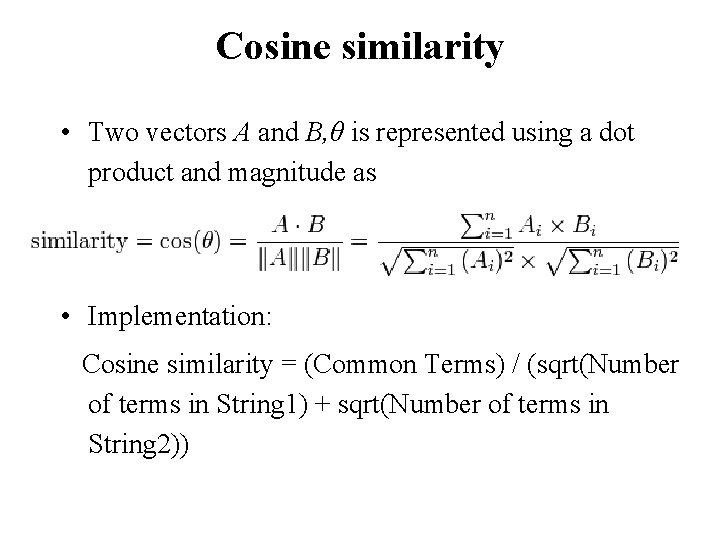

Q-grams

T = "bingo", P = "going", q = 2

- gram1 = {#b, bi, in, ng, go, o#}
- gram2 = {#g, go, oi, in, ng, g#}
- Total(gram1, gram2) = {#b, bi, in, ng, go, o#, #g, go, oi, in, ng, g#}
- |common terms difference| = sum{1, 1, 0, 0, 0, 1, 1, 0, 1, 0, 0, 1} = 6
- gram1.length = (T.length + (q - 1) * 2 + 1) - q = 6
- gram2.length = (P.length + (q - 1) * 2 + 1) - q = 6
- L = gram1.length + gram2.length = 12
- Similarity = (L - |common terms difference|) / L = (12 - 6) / 12 = 0.5
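The q-gram similarity of this example can be sketched as follows. It assumes that strings are padded with q − 1 `#` characters on each side and that the "common terms difference" counts grams occurring in one string but not the other. That is one reading of the slide, and the function names are illustrative.

```python
from collections import Counter


def qgrams(s, q=2, pad="#"):
    """List of q-grams of s after padding both ends with q - 1 pad characters."""
    padded = pad * (q - 1) + s + pad * (q - 1)
    return [padded[i:i + q] for i in range(len(padded) - q + 1)]


def qgram_similarity(t, p, q=2):
    """(L - difference) / L, where L is the total number of grams in both strings."""
    g1, g2 = Counter(qgrams(t, q)), Counter(qgrams(p, q))
    total = sum(g1.values()) + sum(g2.values())
    if total == 0:
        return 1.0  # assumption: two strings with no grams count as identical
    difference = sum(abs(g1[g] - g2[g]) for g in set(g1) | set(g2))
    return (total - difference) / total


print(qgrams("bingo"))                     # ['#b', 'bi', 'in', 'ng', 'go', 'o#']
print(qgram_similarity("bingo", "going"))  # 0.5
```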

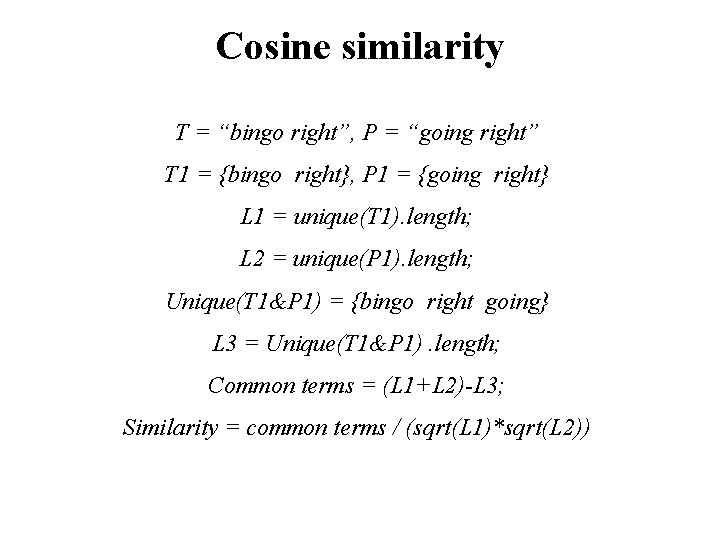

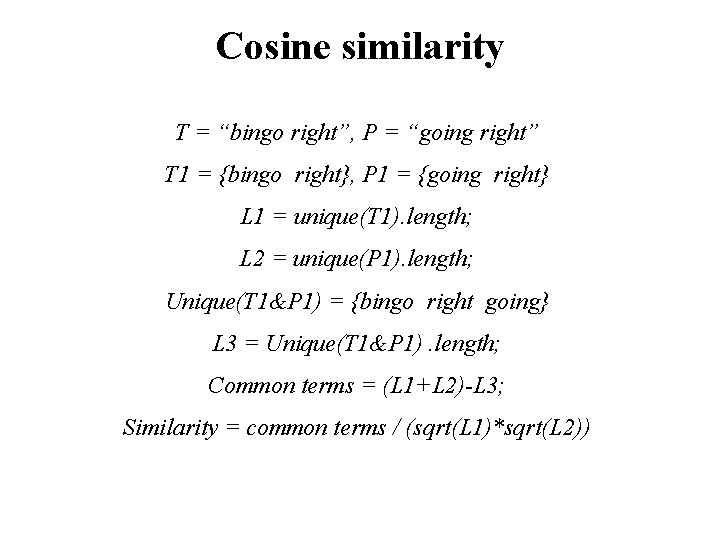

Cosine similarity

- For two vectors A and B, the angle θ between them is expressed using the dot product and the magnitudes as $\cos\theta = \dfrac{A \cdot B}{\|A\|\,\|B\|}$
- Implementation: Cosine similarity = (Common terms) / (sqrt(Number of terms in String 1) * sqrt(Number of terms in String 2))

Cosine similarity

T = "bingo right", P = "going right"

- T1 = {bingo, right}, P1 = {going, right}
- L1 = unique(T1).length = 2; L2 = unique(P1).length = 2
- Unique(T1 & P1) = {bingo, right, going}; L3 = Unique(T1 & P1).length = 3
- Common terms = (L1 + L2) - L3 = 1
- Similarity = common terms / (sqrt(L1) * sqrt(L2)) = 1 / 2 = 0.5
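A sketch of the term-based cosine similarity from this example. It assumes that terms are whitespace-separated words and that only unique terms are counted; the function name is illustrative.

```python
from math import sqrt


def cosine_similarity(t, p):
    """Cosine similarity over the sets of unique words in two strings."""
    t1, p1 = set(t.split()), set(p.split())
    if not t1 or not p1:
        return 0.0  # assumption: an empty string is similar to nothing
    # Common terms via the slide's identity (L1 + L2) - L3.
    common = len(t1) + len(p1) - len(t1 | p1)
    return common / (sqrt(len(t1)) * sqrt(len(p1)))


# One common term out of two terms in each string: 1 / (sqrt(2) * sqrt(2)), about 0.5
print(cosine_similarity("bingo right", "going right"))
```

With binary term vectors, the dot product is the number of common terms and each magnitude is the square root of the number of terms, so this matches the vector formula.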

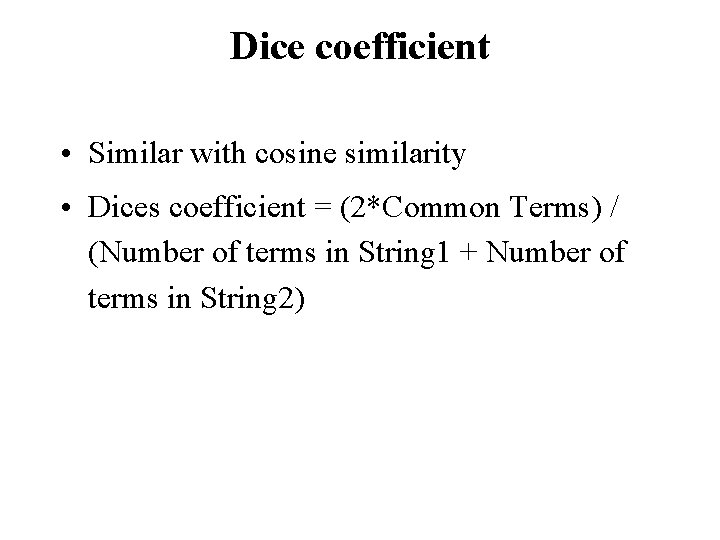

Dice coefficient

- Similar to cosine similarity
- Dice's coefficient = (2 * Common terms) / (Number of terms in String 1 + Number of terms in String 2)
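For comparison, a sketch of Dice's coefficient under the assumption that terms are unique whitespace-separated words. The example strings "bingo right" and "going right" are the ones used in the cosine similarity example.

```python
def dice_coefficient(t, p):
    """Dice's coefficient over the sets of unique words in two strings."""
    t1, p1 = set(t.split()), set(p.split())
    total = len(t1) + len(p1)
    if total == 0:
        return 0.0  # assumption: two empty strings are given similarity 0
    return 2 * len(t1 & p1) / total


print(dice_coefficient("bingo right", "going right"))  # 2 * 1 / (2 + 2) = 0.5
```

The only difference from the cosine form is the denominator: an arithmetic mean of the term counts instead of a geometric mean, so the two agree when both strings have the same number of terms.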

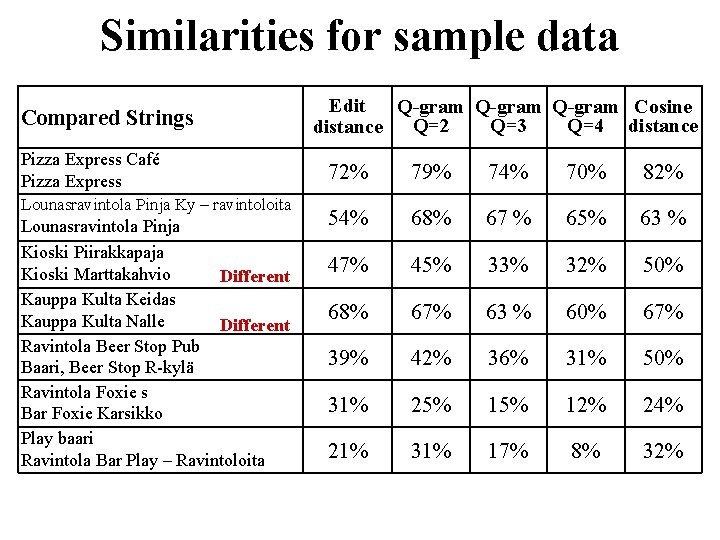

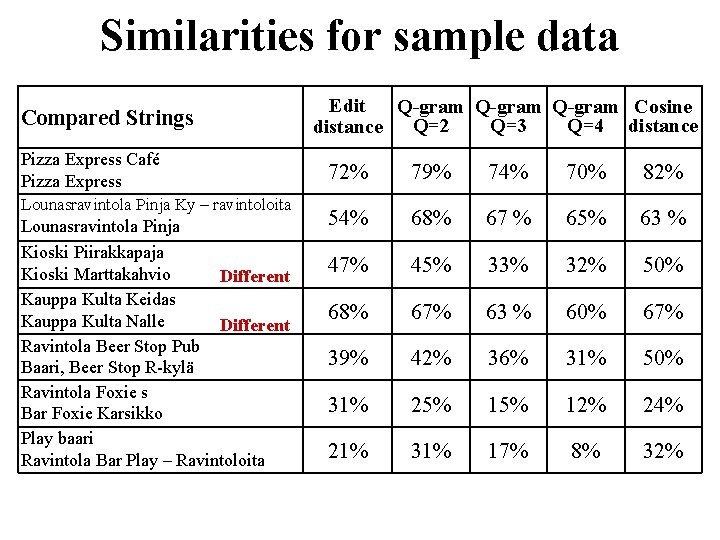

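Similarities for sample data

| String 1 | String 2 | Edit distance | Q-gram, Q=2 | Q-gram, Q=3 | Q-gram, Q=4 | Cosine |
|---|---|---|---|---|---|---|
| Pizza Express Café | Pizza Express | 72% | 79% | 74% | 70% | 82% |
| Lounasravintola Pinja Ky – ravintoloita | Lounasravintola Pinja | 54% | 68% | 67% | 65% | 63% |
| Kioski Piirakkapaja | Kioski Marttakahvio (different) | 47% | 45% | 33% | 32% | 50% |
| Kauppa Kulta Keidas | Kauppa Kulta Nalle (different) | 68% | 67% | 63% | 60% | 67% |
| Ravintola Beer Stop Pub | Baari, Beer Stop R-kylä | 39% | 42% | 36% | 31% | 50% |
| Ravintola Foxie's Bar | Foxie Karsikko | | | | | |
| Play baari | Ravintola Bar Play – Ravintoloita | 21% | 31% | 17% | 8% | 32% |

For the pair Ravintola Foxie's Bar / Foxie Karsikko, only four values are available (31%, 25%, 12%, 24%), and their columns cannot be determined.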

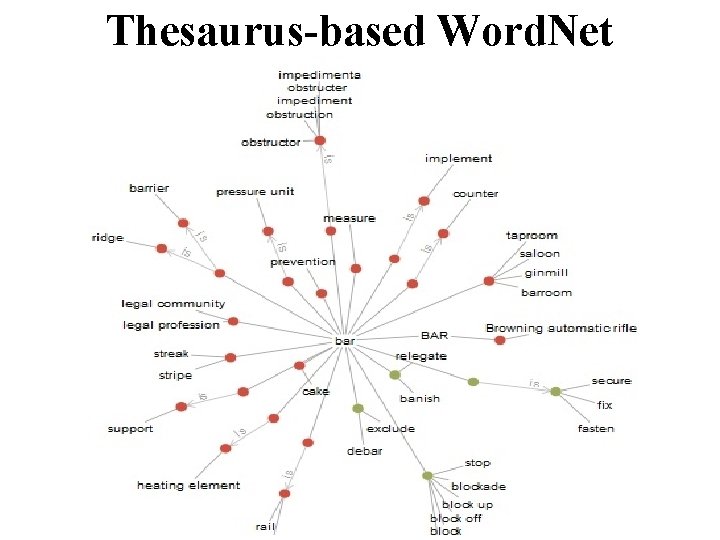

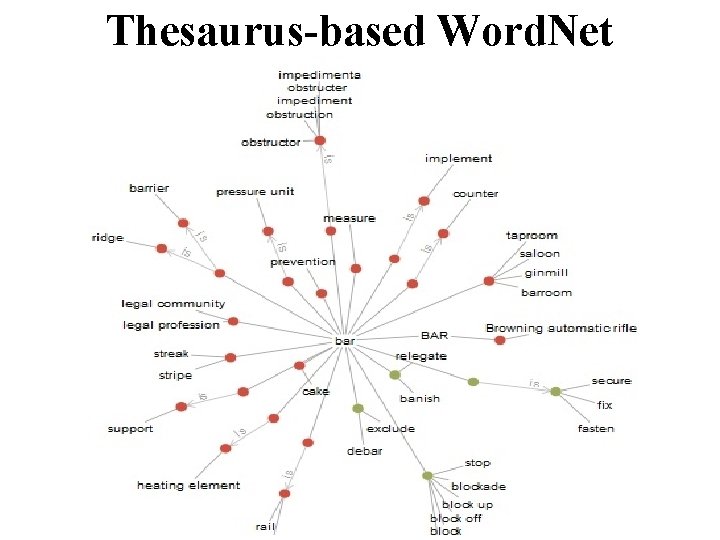

Thesaurus-based: WordNet

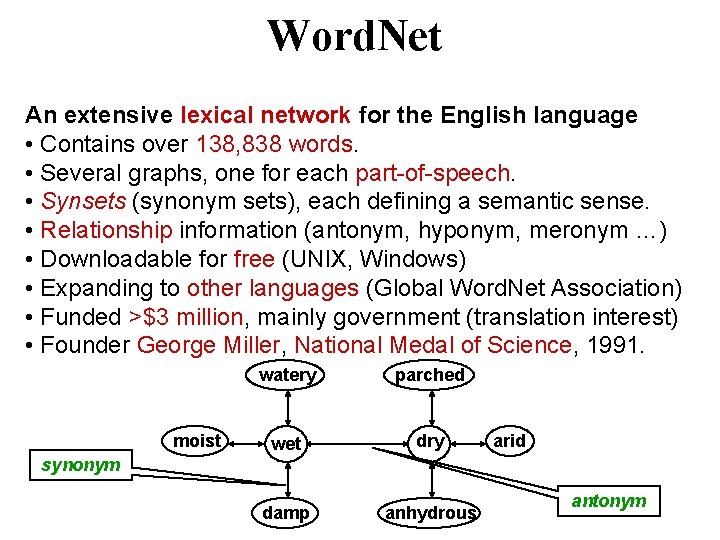

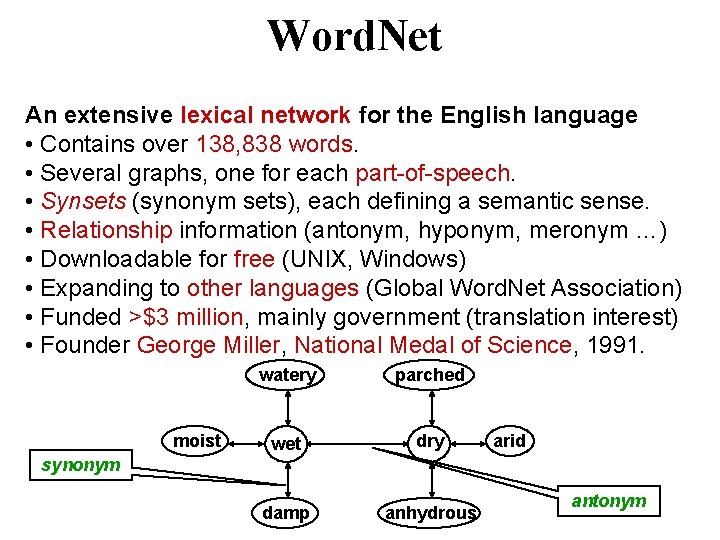

WordNet: an extensive lexical network for the English language

- Contains over 138,838 words.
- Several graphs, one for each part-of-speech.
- Synsets (synonym sets), each defining a semantic sense.
- Relationship information (antonym, hyponym, meronym …)
- Downloadable for free (UNIX, Windows)
- Expanding to other languages (Global WordNet Association)
- Funded >$3 million, mainly government (translation interest)
- Founder George Miller, National Medal of Science, 1991.

[Figure: synonym and antonym relations among the words wet, moist, damp, watery, dry, parched, arid and anhydrous]

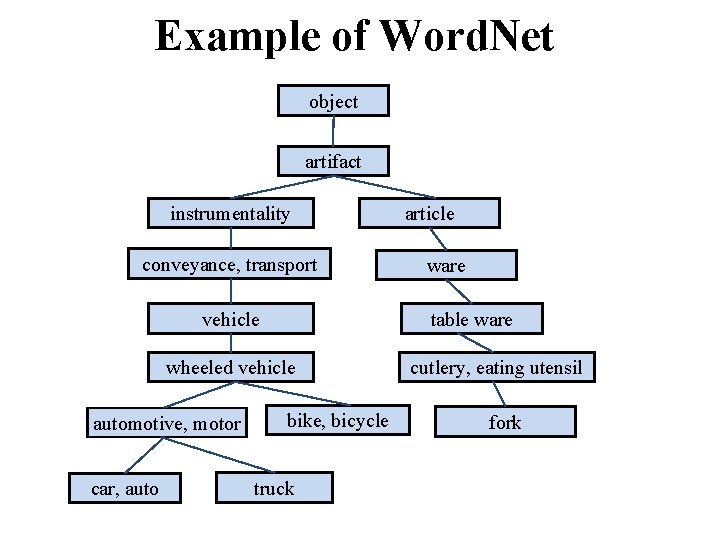

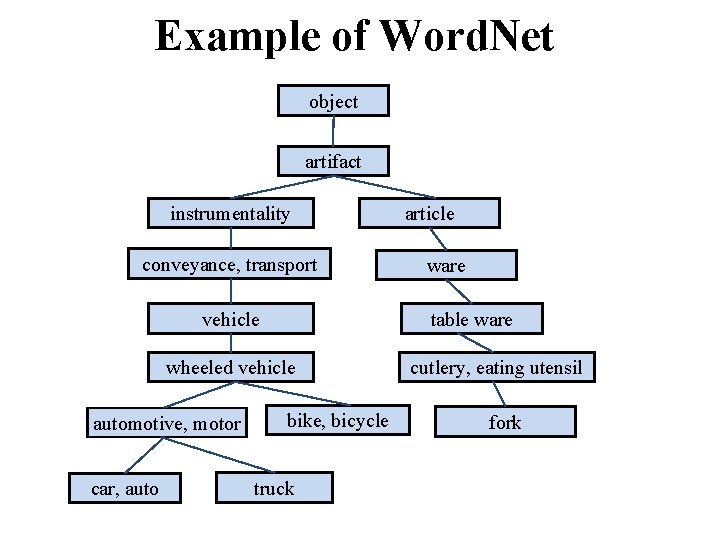

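Example of WordNet

[Figure: part of the WordNet hierarchy]

- object → artifact → instrumentality → conveyance, transport → vehicle → wheeled vehicle
  - wheeled vehicle → automotive, motor → car, auto
  - wheeled vehicle → automotive, motor → truck
  - wheeled vehicle → bike, bicycle
- object → artifact → article → ware → table ware → cutlery, eating utensil → fork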

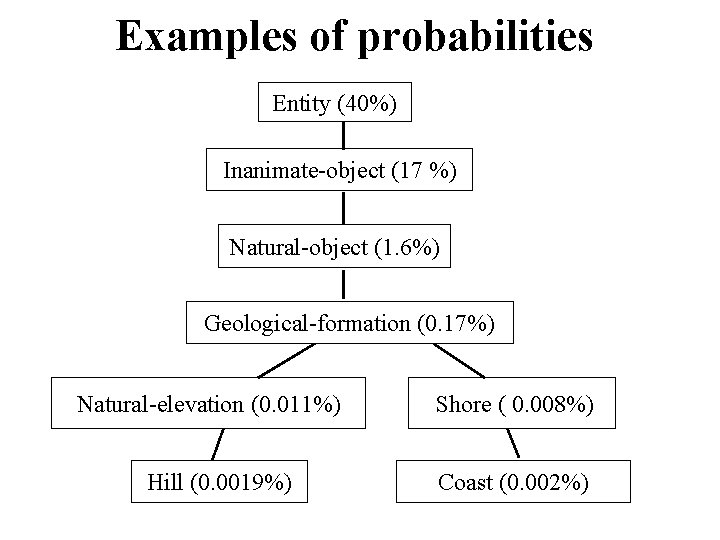

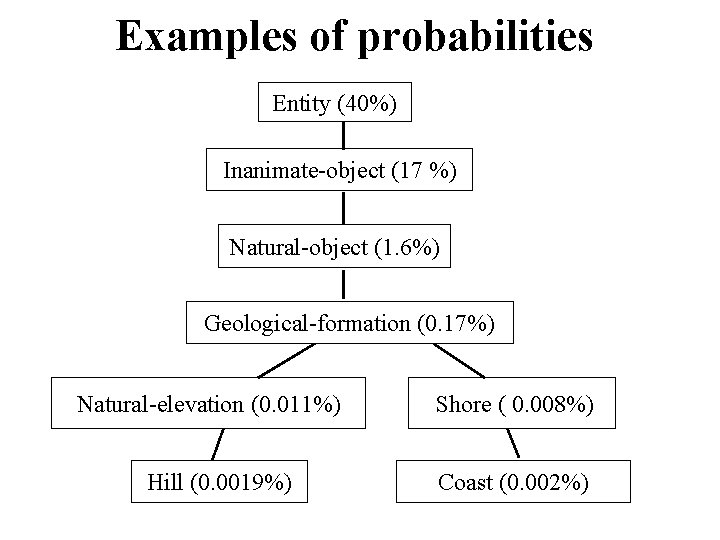

Examples of probabilities

- Entity (40%)
- Inanimate-object (17%)
- Natural-object (1.6%)
- Geological-formation (0.17%)
- Natural-elevation (0.011%)
- Hill (0.0019%)
- Shore (0.008%)
- Coast (0.002%)

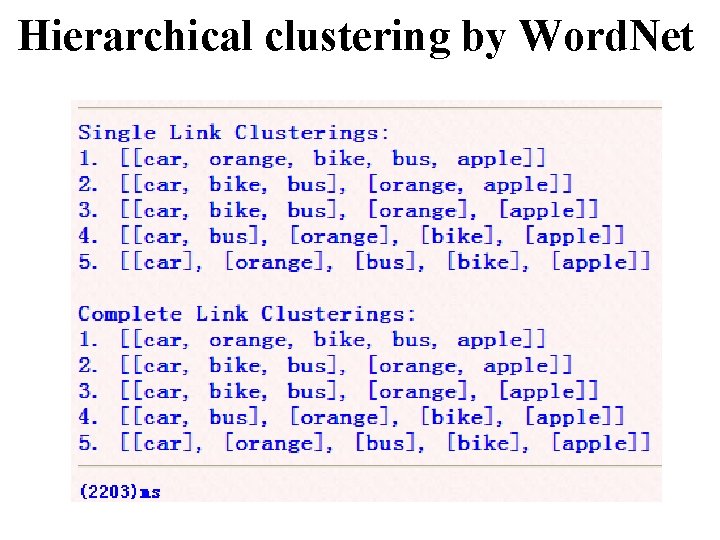

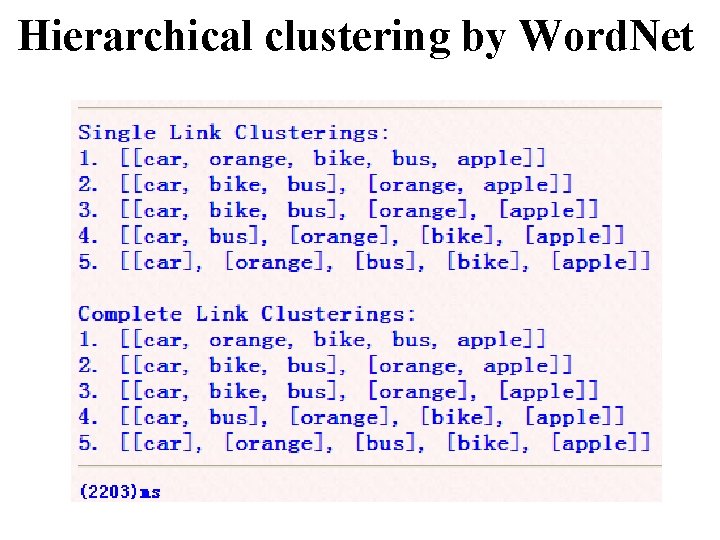

Hierarchical clustering by WordNet

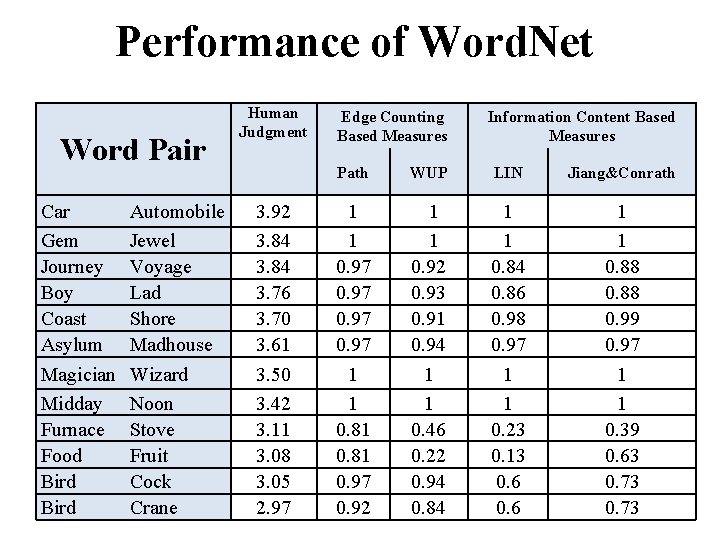

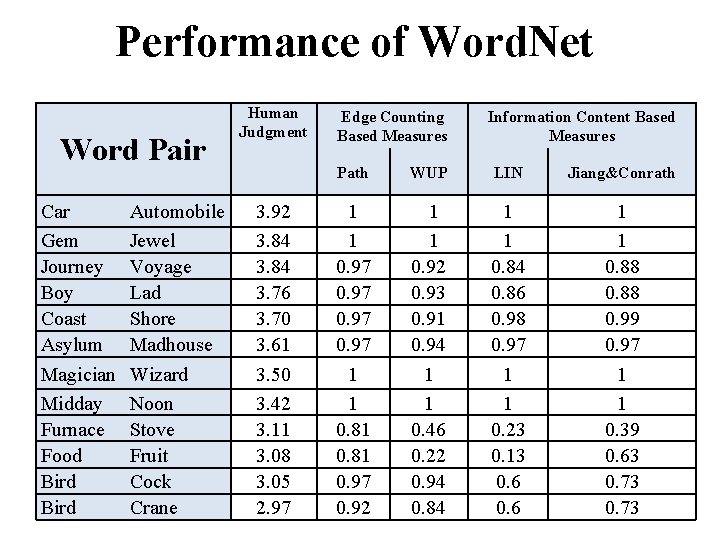

Performance of WordNet

- Word pairs: Car–Automobile, Gem–Jewel, Journey–Voyage, Boy–Lad, Coast–Shore, Asylum–Madhouse, Magician–Wizard, Midday–Noon, Furnace–Stove, Food–Fruit, Bird–Cock, Bird–Crane
- Human judgment: 3.92, 3.84, 3.76, 3.70, 3.61, 3.50, 3.42, 3.11, 3.08, 3.05, 2.97
- Edge counting based measures: Path, WUP
- Information content based measures: LIN, Jiang & Conrath
- Measure values (row and column alignment not recoverable): 1, 1, 0.97, 1, 1, 0.81, 0.97, 0.92, 1, 1, 0.92, 0.93, 0.91, 0.94, 1, 1, 0.46, 0.22, 0.94, 0.84, 1, 1, 0.84, 0.86, 0.98, 0.97, 1, 1, 0.23, 0.13, 0.6, 1, 1, 0.88, 0.99, 0.97, 1, 1, 0.39, 0.63, 0.73

Part V: Time series

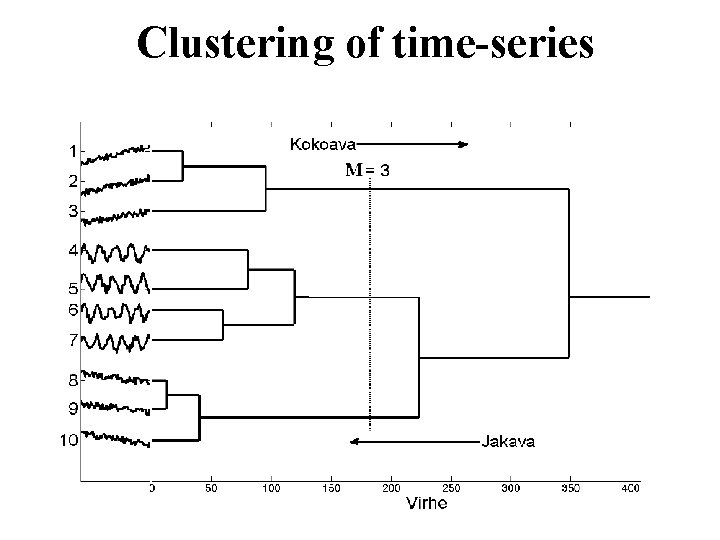

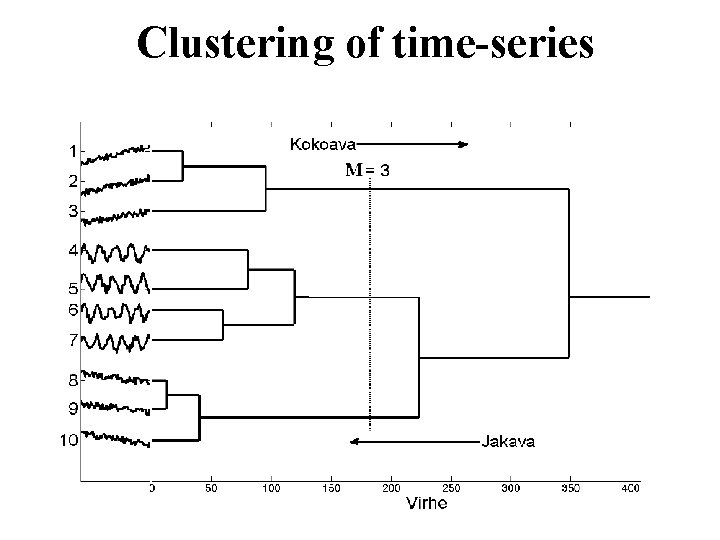

Clustering of time-series

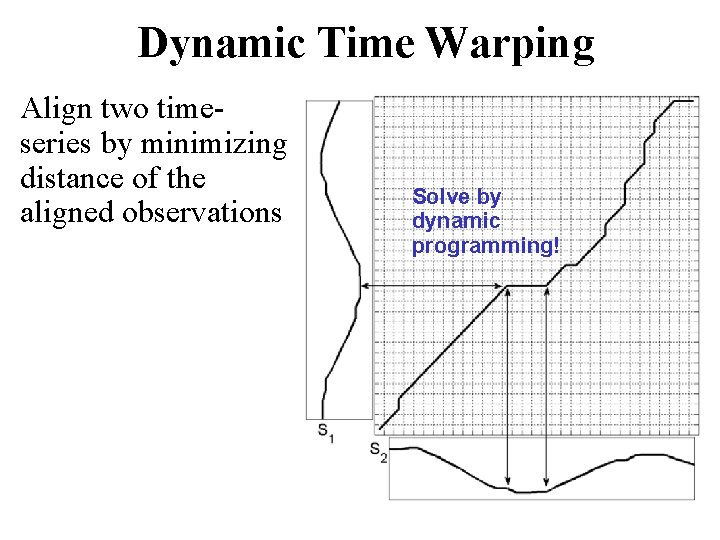

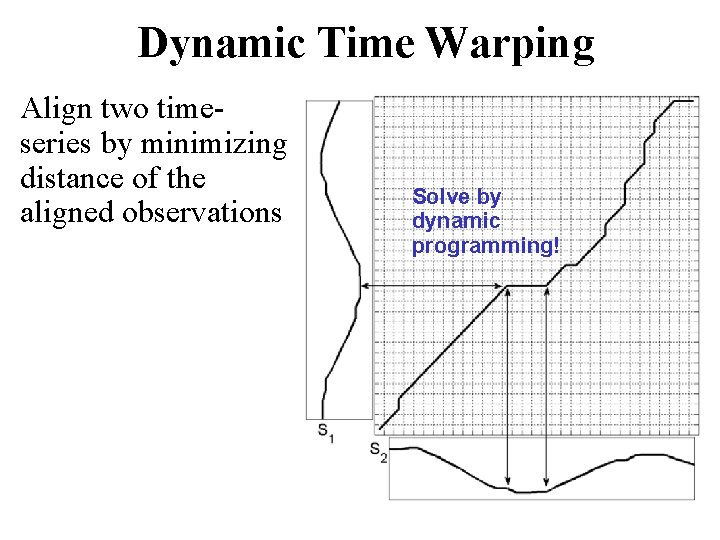

Dynamic Time Warping

- Align two time series by minimizing the distance of the aligned observations.
- Solve by dynamic programming!
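A minimal sketch of dynamic time warping by dynamic programming. It assumes one-dimensional series, the absolute difference as the local cost, and no warping-window constraint; none of these choices are stated on the slide.

```python
def dtw_distance(a, b):
    """Total cost of the best monotonic alignment between series a and b."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = best alignment cost of a[:i] and b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # a[i-1] is aligned with b[j-1] again
                cost[i][j - 1],      # b[j-1] is aligned with a[i-1] again
                cost[i - 1][j - 1],  # both series advance
            )
    return cost[n][m]


# The repeated 2 in the second series is absorbed by the warping.
print(dtw_distance([1, 2, 3], [1, 2, 2, 3]))  # 0.0
```

The structure mirrors the edit distance table: each cell extends the cheapest of three neighbouring alignments, so the running time is proportional to the product of the two series lengths.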

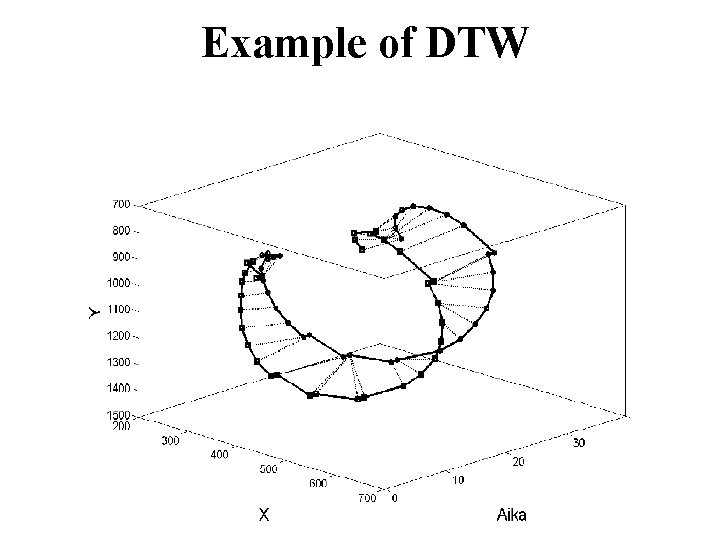

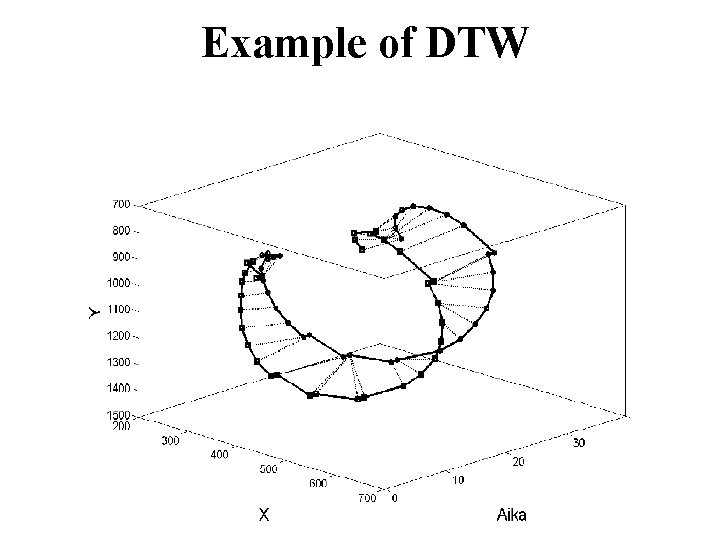

Example of DTW

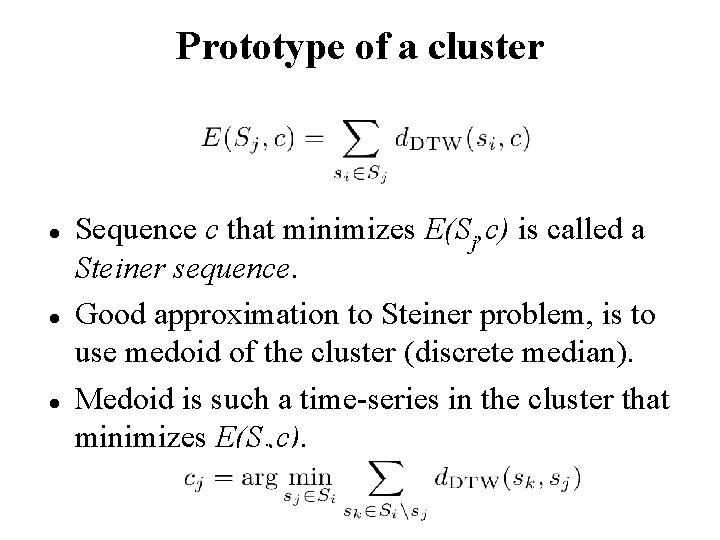

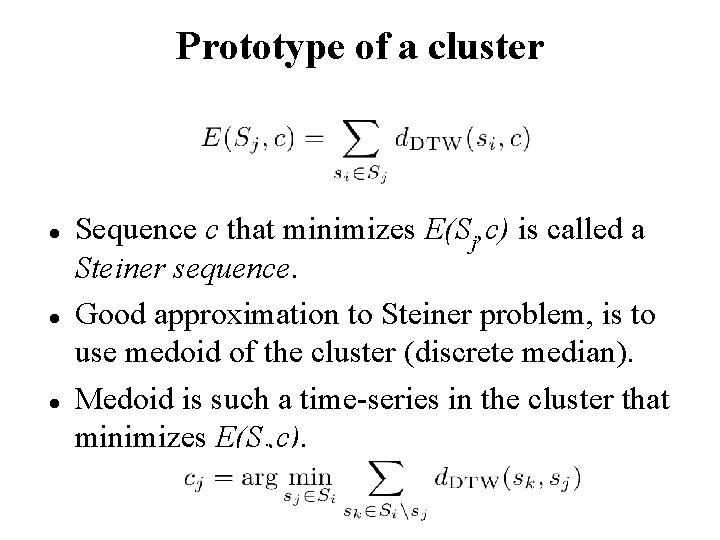

Prototype of a cluster

- The sequence c that minimizes E(Sj, c) is called a Steiner sequence.
- A good approximation to the Steiner problem is to use the medoid of the cluster (discrete median).
- The medoid is the time series in the cluster that minimizes E(Sj, c).

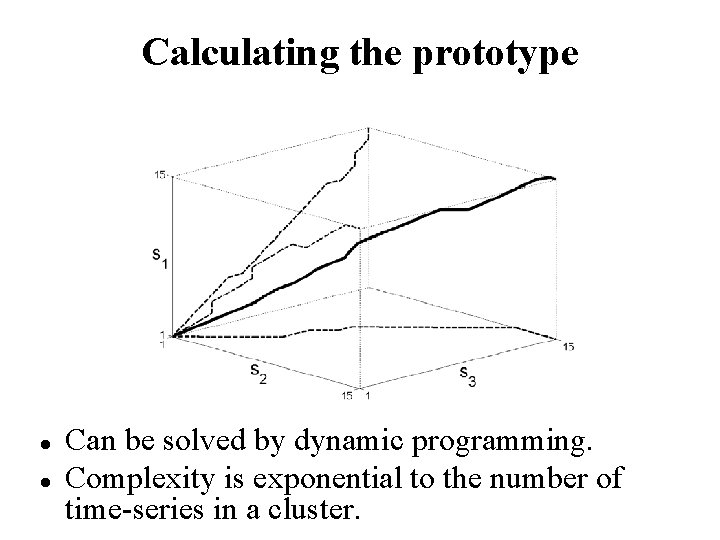

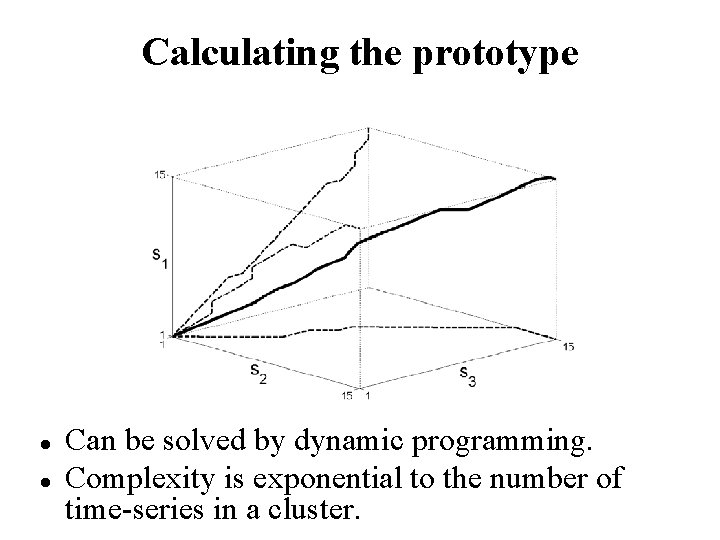

Calculating the prototype

Can be solved by dynamic programming. The complexity is exponential in the number of time series in a cluster.

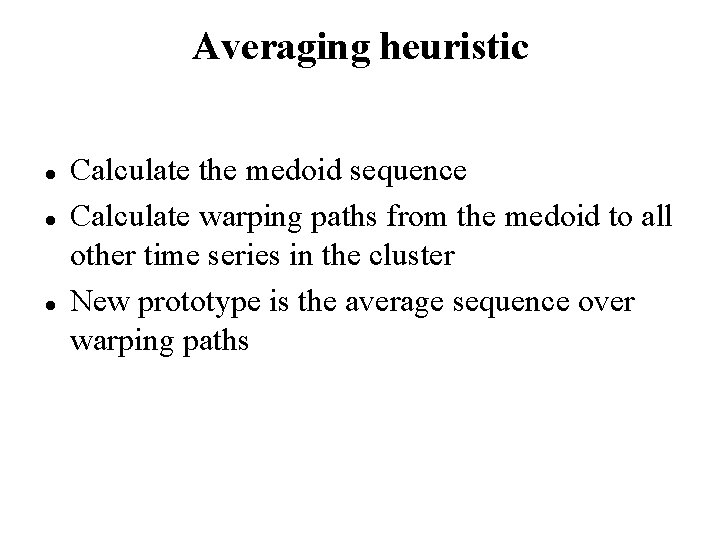

Averaging heuristic

1. Calculate the medoid sequence.
2. Calculate warping paths from the medoid to all other time series in the cluster.
3. The new prototype is the average sequence over the warping paths.

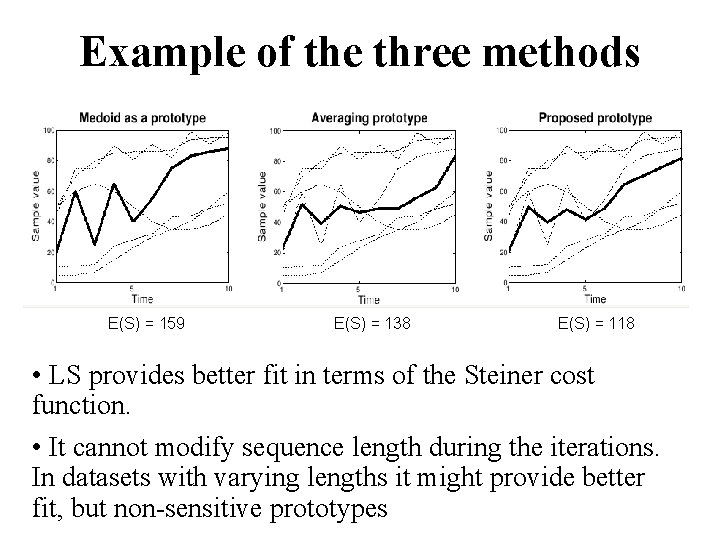

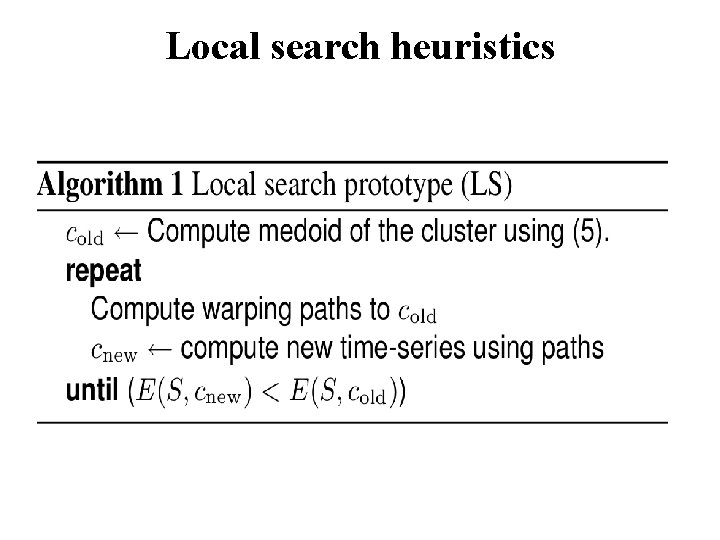

Local search heuristics

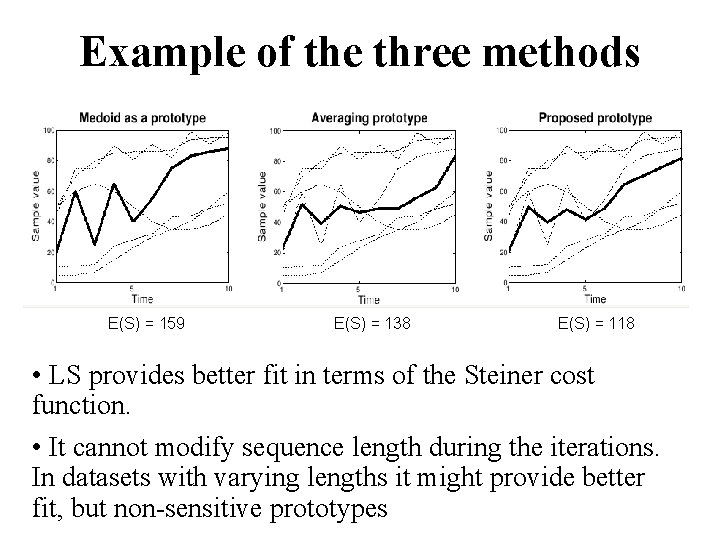

Example of the three methods

[Figure: prototypes produced by the three methods, with E(S) = 159, E(S) = 138 and E(S) = 118]

- LS provides better fit in terms of the Steiner cost function.
- It cannot modify sequence length during the iterations. In datasets with varying lengths it might provide better fit, but non-sensitive prototypes.

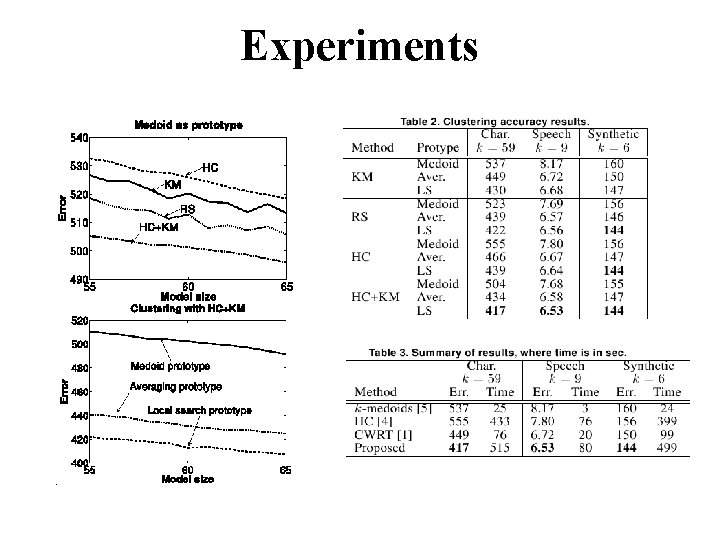

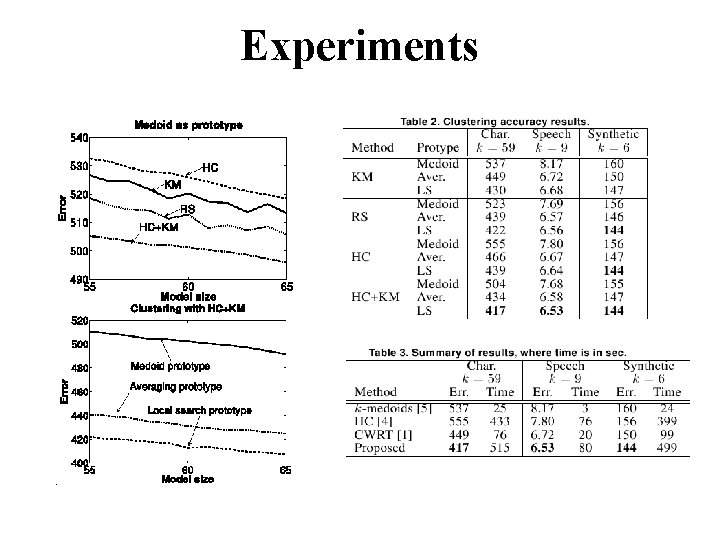

Experiments

Part VI: Other clustering problems

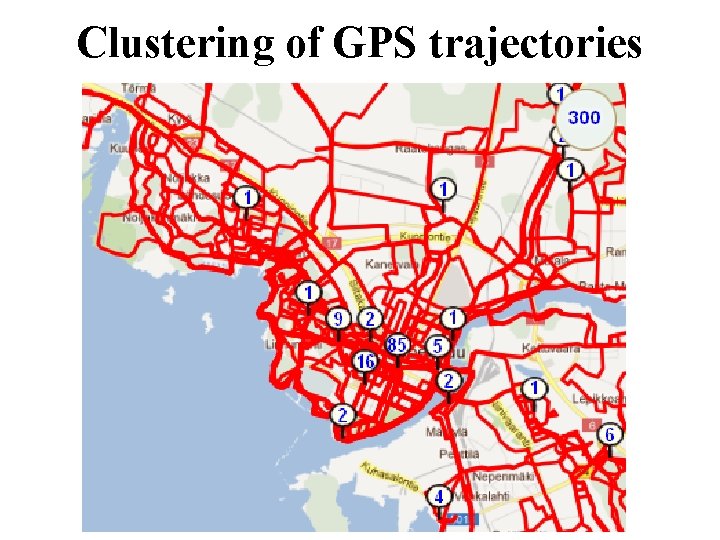

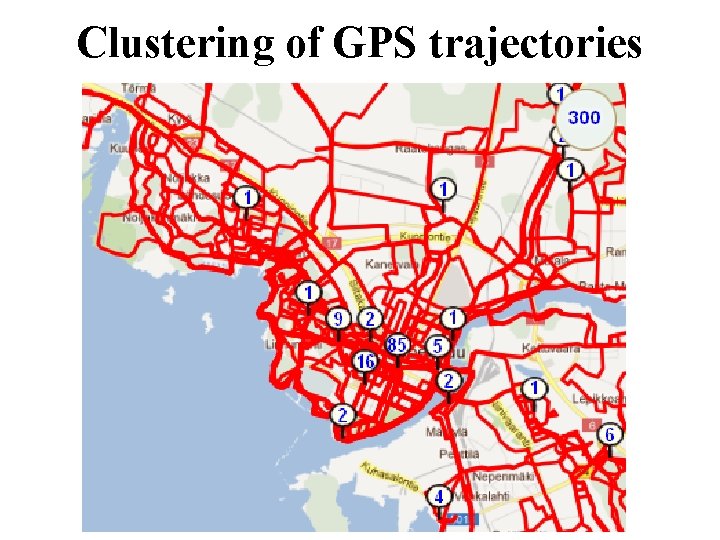

Clustering of GPS trajectories

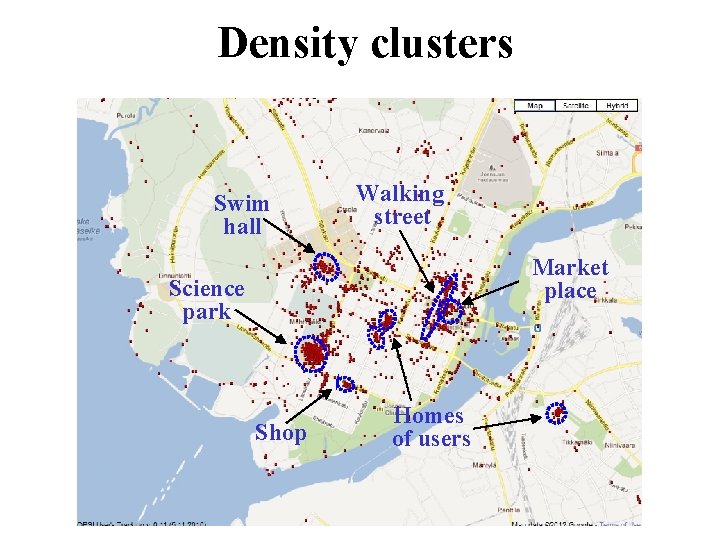

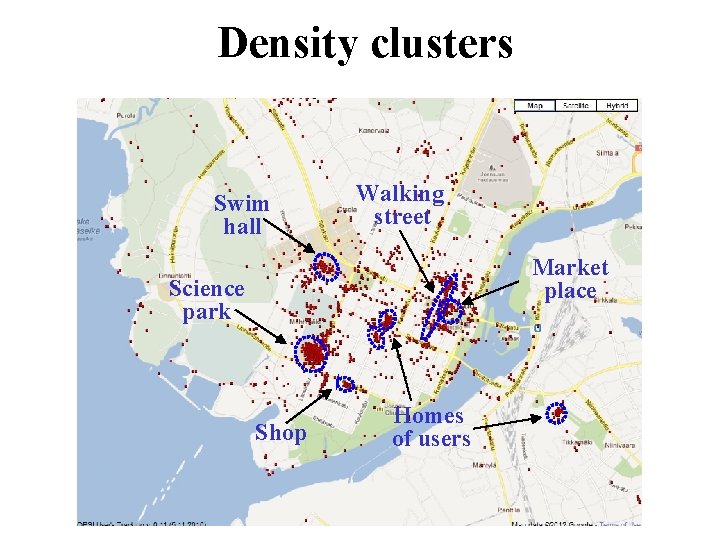

Density clusters

[Figure labels: Swim hall, Walking street, Market place, Science park, Shop, Homes of users]

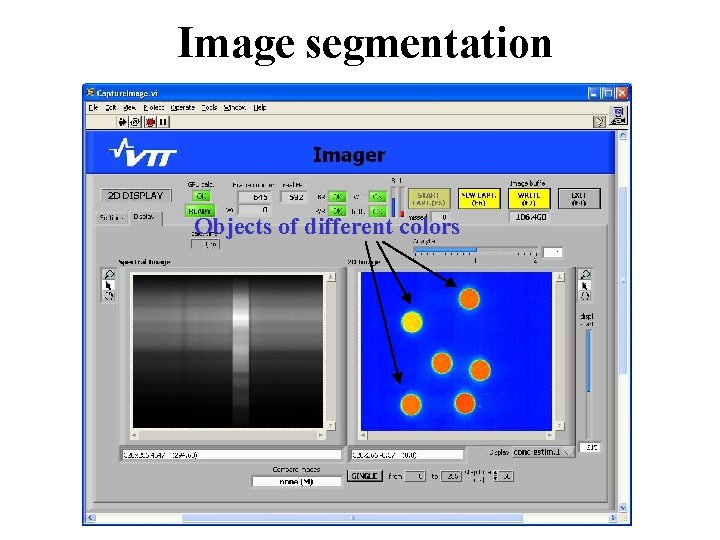

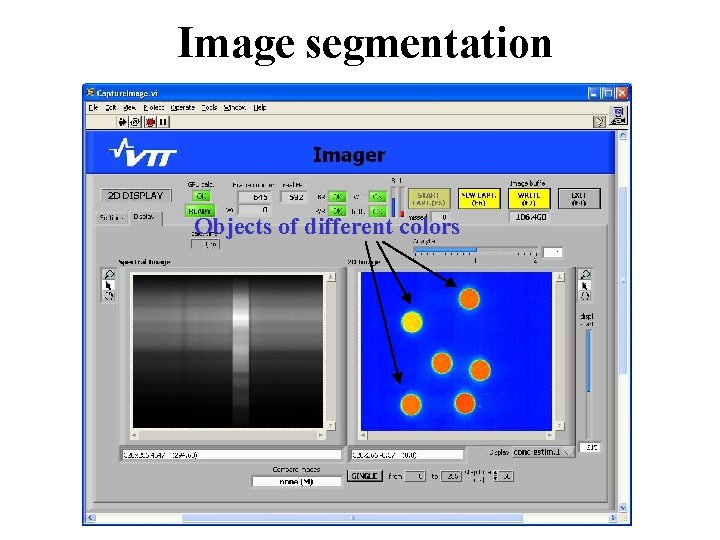

Image segmentation Objects of different colors

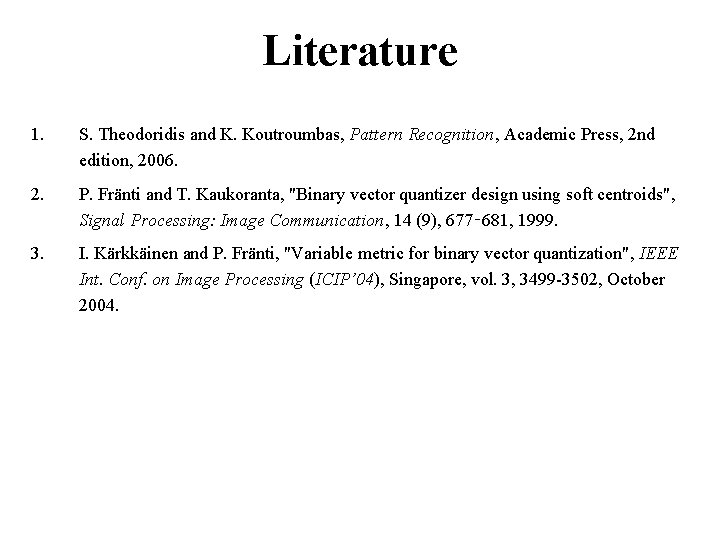

Literature

1. S. Theodoridis and K. Koutroumbas, Pattern Recognition, Academic Press, 2nd edition, 2006.
2. P. Fränti and T. Kaukoranta, "Binary vector quantizer design using soft centroids", Signal Processing: Image Communication, 14 (9), 677-681, 1999.
3. I. Kärkkäinen and P. Fränti, "Variable metric for binary vector quantization", IEEE Int. Conf. on Image Processing (ICIP'04), Singapore, vol. 3, 3499-3502, October 2004.

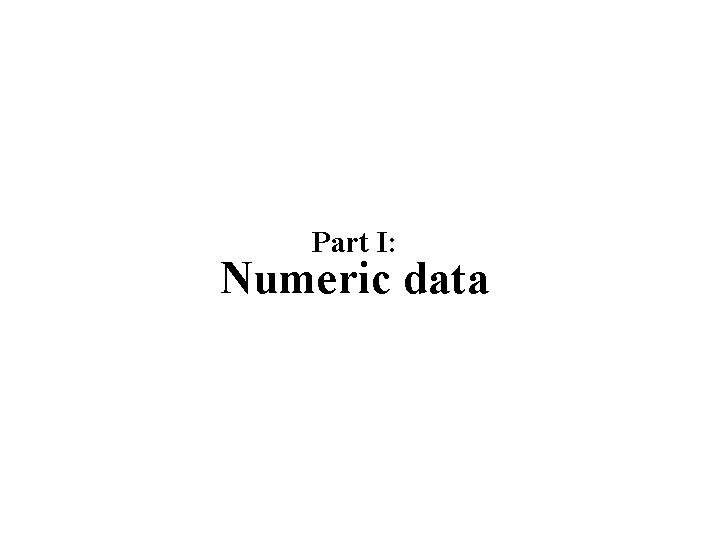

Literature

- Modified k-modes + k-histograms: M. Ng, M. J. Li, J. Z. Huang and Z. He, "On the Impact of Dissimilarity Measure in k-Modes Clustering Algorithm", IEEE Trans. on Pattern Analysis and Machine Intelligence, 29 (3), 503-507, March 2007.
- ACE: K. Chen and L. Liu, "The 'Best k' for entropy-based categorical data clustering", Int. Conf. on Scientific and Statistical Database Management (SSDBM'2005), pp. 253-262, Berkeley, USA, 2005.
- ROCK: S. Guha, R. Rastogi and K. Shim, "Rock: A robust clustering algorithm for categorical attributes", Information Systems, Vol. 25, No. 5, pp. 345-366, 200x.
- K-medoids: L. Kaufman and P. J. Rousseeuw, Finding groups in data: an introduction to cluster analysis, John Wiley & Sons, New York, 1990.
- K-modes: Z. Huang, "Extensions to k-means algorithm for clustering large data sets with categorical values", Data Mining and Knowledge Discovery, Vol. 2, No. 3, pp. 283-304, 1998.
- K-distributions: Z. Cai, D. Wang and L. Jiang, "K-Distributions: A New Algorithm for Clustering Categorical Data", Int. Conf. on Intelligent Computing (ICIC 2007), pp. 436-443, Qingdao, China, 2007.
- K-histograms: Zengyou He, Xiaofei Xu, Shengchun Deng and Bin Dong, "K-Histograms: An Efficient Clustering Algorithm for Categorical Dataset", CoRR, abs/cs/0509033, http://arxiv.org/abs/cs/0509033, 2005.