Clustering IV

Outline • Impossibility theorem for clustering • Density-based clustering and subspace clustering • Bi-clustering or co-clustering • Validating clustering results • Randomization tests

General form of impossibility results • Define a set of simple axioms (properties) that a computational task should satisfy • Prove that there does not exist an algorithm that can simultaneously satisfy all the axioms, i.e., an impossibility result

Computational task: clustering • A clustering function operates on a set X of n points, X = {1, 2, …, n} • Distance function d: X × X → R with d(i, j) ≥ 0, d(i, j) = d(j, i), and d(i, j) = 0 if and only if i = j • Clustering function f: f(X, d) = Γ, where Γ is a partition of X

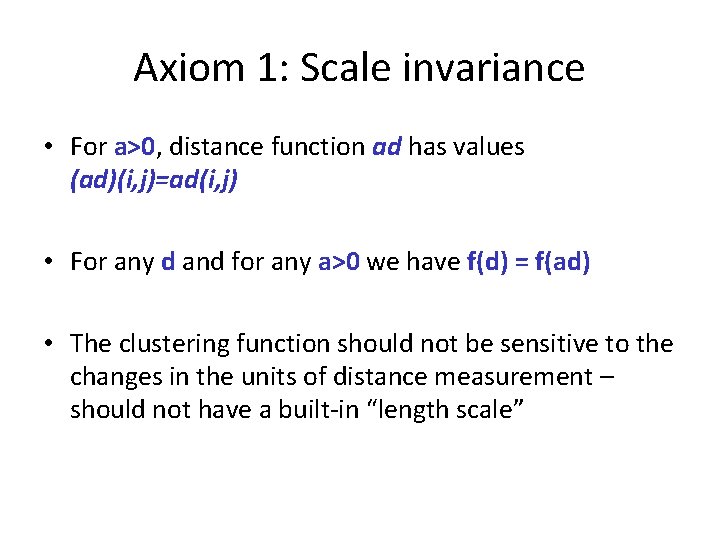

Axiom 1: Scale invariance • For a>0, distance function ad has values (ad)(i, j)=ad(i, j) • For any d and for any a>0 we have f(d) = f(ad) • The clustering function should not be sensitive to the changes in the units of distance measurement – should not have a built-in “length scale”

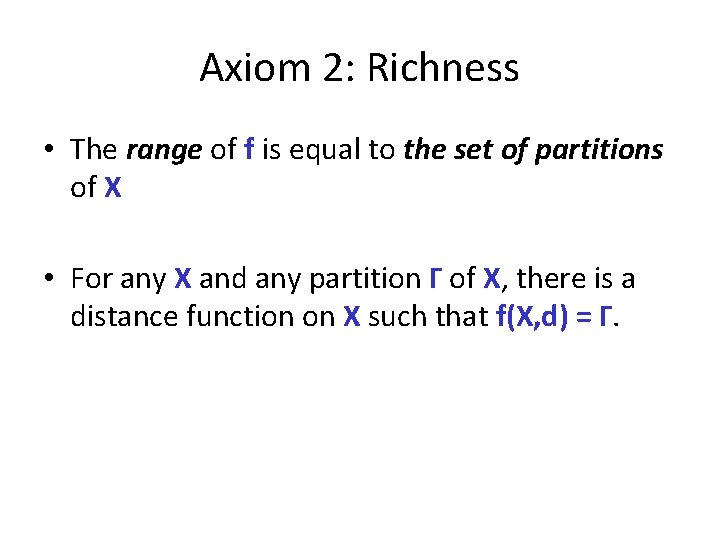

Axiom 2: Richness • The range of f is equal to the set of partitions of X • For any X and any partition Γ of X, there is a distance function on X such that f(X, d) = Γ.

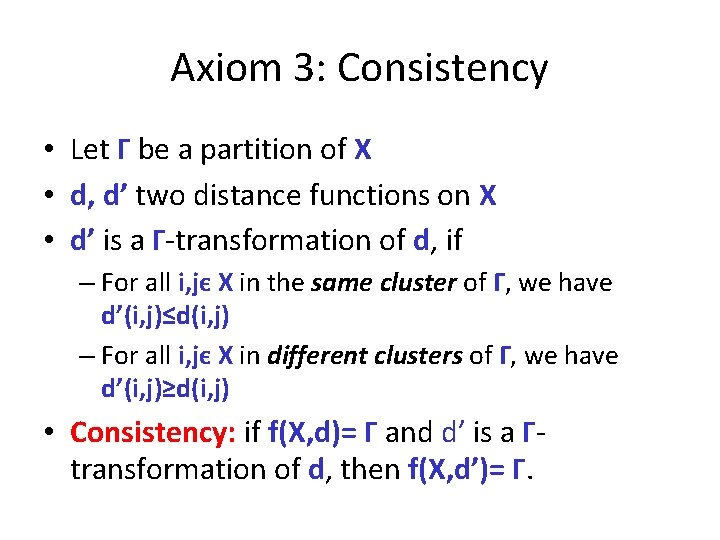

Axiom 3: Consistency • Let Γ be a partition of X • d, d’ two distance functions on X • d’ is a Γ-transformation of d if – For all i, j ∈ X in the same cluster of Γ, we have d’(i, j) ≤ d(i, j) – For all i, j ∈ X in different clusters of Γ, we have d’(i, j) ≥ d(i, j) • Consistency: if f(X, d) = Γ and d’ is a Γ-transformation of d, then f(X, d’) = Γ

Axiom 3: Consistency • Intuition: Shrinking distances between points inside a cluster and expanding distances between points in different clusters does not change the result
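The definition of a Γ-transformation can be checked mechanically. The following is a minimal sketch, assuming distances are stored as a symmetric list of lists and the partition as a list of sets; the function name `is_gamma_transformation` and the example matrices are illustrative and not part of the original slides.

```python
from itertools import combinations

def is_gamma_transformation(d, d_new, partition):
    """Return True if d_new is a Gamma-transformation of d.

    Within-cluster distances may only shrink (or stay equal),
    between-cluster distances may only grow (or stay equal).
    """
    # Map every point to the index of its cluster in the partition.
    label = {p: c for c, cluster in enumerate(partition) for p in cluster}
    for i, j in combinations(sorted(label), 2):
        same_cluster = label[i] == label[j]
        if same_cluster and d_new[i][j] > d[i][j]:
            return False
        if not same_cluster and d_new[i][j] < d[i][j]:
            return False
    return True

# Hypothetical example: points 0 and 1 share a cluster, point 2 is alone.
partition = [{0, 1}, {2}]
d = [[0, 2, 5], [2, 0, 6], [5, 6, 0]]
shrunk = [[0, 1, 7], [1, 0, 6], [7, 6, 0]]   # inside shrinks, outside grows
bad = [[0, 3, 5], [3, 0, 6], [5, 6, 0]]      # inside distance grows

ok = is_gamma_transformation(d, shrunk, partition)
not_ok = is_gamma_transformation(d, bad, partition)
```

A consistent clustering function f with f(X, d) = Γ would have to return the same Γ for `shrunk`, while nothing is required of it for `bad`.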

Examples • Single-link agglomerative clustering • Repeatedly merge clusters whose closest points are at minimum distance • Continue until a stopping criterion is met – k-cluster stopping criterion: continue until there are k clusters – distance-r stopping criterion: continue until all distances between clusters are larger than r – scale-a stopping criterion: let d* be the maximum pairwise distance; continue until all distances between clusters are larger than ad*

Examples (cont.) • Single-link agglomerative clustering with k-cluster stopping criterion does not satisfy the richness axiom • Single-link agglomerative clustering with distance-r stopping criterion does not satisfy the scale-invariance axiom • Single-link agglomerative clustering with scale-a stopping criterion does not satisfy the consistency axiom
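The three stopping criteria can be compared with a small single-link sketch. It is an illustration under stated assumptions: a naive O(n³) merge loop, points on a line, and the helper names `single_link`, `k_cluster`, `distance_r` and `scale_a` are all made up for this example.

```python
from itertools import combinations

def single_link(points, dist, stop):
    """Merge the two closest clusters until stop(clusters, gap) is true.

    gap is the smallest single-link distance between two current clusters.
    """
    clusters = [frozenset([p]) for p in points]
    while len(clusters) > 1:
        gap, a, b = min(
            ((min(dist(p, q) for p in c1 for q in c2), c1, c2)
             for c1, c2 in combinations(clusters, 2)),
            key=lambda t: t[0],
        )
        if stop(clusters, gap):
            break
        clusters = [c for c in clusters if c not in (a, b)] + [a | b]
    return set(clusters)

def k_cluster(k):
    return lambda clusters, gap: len(clusters) <= k

def distance_r(r):
    return lambda clusters, gap: gap > r

def scale_a(a, d_max):
    return lambda clusters, gap: gap > a * d_max

points = [0, 1, 10, 11]
d = lambda p, q: abs(p - q)
d3 = lambda p, q: 3 * abs(p - q)          # same data, distances scaled by 3

by_r = single_link(points, d, distance_r(2))
by_r_scaled = single_link(points, d3, distance_r(2))
by_scale = single_link(points, d, scale_a(0.5, 11))
by_scale_scaled = single_link(points, d3, scale_a(0.5, 33))
by_k = single_link(points, d, k_cluster(2))
```

On this toy input the distance-r criterion returns two clusters for d but four singletons for 3d, which is the failure of scale invariance; the scale-a criterion returns the same partition for both, and the k-cluster criterion can never output a partition with a number of clusters other than k, which is the failure of richness.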

Centroid-based clustering and consistency • k-centroid clustering: – Choose the set S ⊆ X of k centroids for which ∑_{i ∈ X} min_{j ∈ S} d(i, j) is minimized – The partition of X is defined by assigning each element of X to the centroid that is closest to it • Theorem: for every k ≥ 2 and for n sufficiently large relative to k, the k-centroid clustering function does not satisfy the consistency property

k-centroid clustering and the consistency axiom • Intuition of the proof • Let k = 2 and X be partitioned into parts Y and Z • d(i, j) ≤ r for every i, j ∈ Y • d(i, j) ≤ ε, with ε < r, for every i, j ∈ Z • d(i, j) > r for every i ∈ Y and j ∈ Z • Split part Y into subparts Y1 and Y2 • Shrink distances in Y1 appropriately • What is the result of this shrinking?

Impossibility theorem • For n≥ 2, there is no clustering function that satisfies all three axioms of scale-invariance, richness and consistency

Impossibility theorem (proof sketch) • A partition Γ’ is a refinement of partition Γ if each cluster C’ ∈ Γ’ is included in some cluster C ∈ Γ • There is a partial order between partitions: Γ’ ≤ Γ • Antichain of partitions: a collection of partitions such that no partition is a refinement of another • Theorem: if a clustering function f satisfies scale-invariance and consistency, then the range of f is an antichain • For n ≥ 2 the set of all partitions of X is not an antichain (the partition into singletons refines every other partition), so such an f cannot also satisfy richness

What does an impossibility result really mean • Suggests a technical underpinning for the difficulty in unifying the initial, informal concept of clustering • Highlights basic trade-offs that are inherent to the clustering problem • Distinguishes how clustering methods resolve these tradeoffs (by looking at the methods not only at an operational level)

Density-Based Clustering Methods • Clustering based on density (local cluster criterion), such as density-connected points • Major features: – Discover clusters of arbitrary shape – Handle noise – One scan – Need density parameters as termination condition • Several interesting studies: – DBSCAN: Ester et al. (KDD’96) – OPTICS: Ankerst et al. (SIGMOD’99) – DENCLUE: Hinneburg & Keim (KDD’98) – CLIQUE: Agrawal et al. (SIGMOD’98)

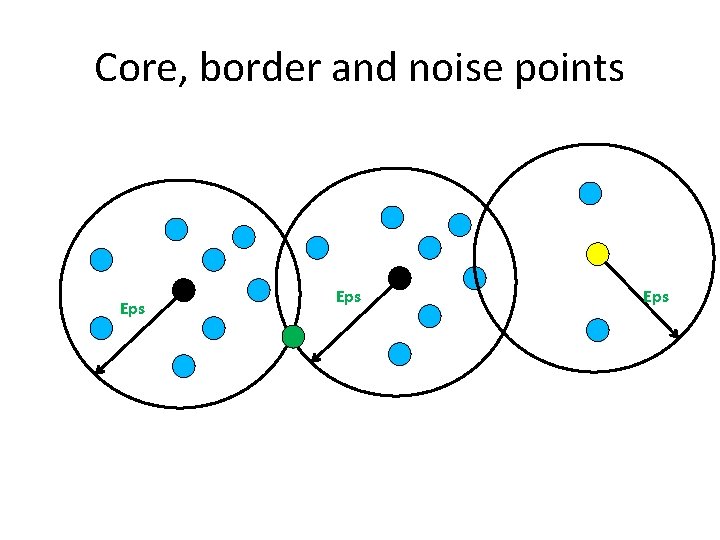

Classification of points in density-based clustering • Core points: interior points of a density-based cluster. A point p is a core point if, for distance Eps: – |N_Eps(p)| ≥ MinPts, where N_Eps(p) = {q | dist(p, q) ≤ Eps} • Border points: not a core point but within the neighborhood of a core point (a border point can be in the neighborhoods of many core points) • Noise points: neither a core nor a border point

Core, border and noise points (figure: example points with their Eps-neighborhoods)

DBSCAN: The Algorithm – Label all points as core, border, or noise points – Eliminate noise points – Put an edge between all core points that are within Eps of each other – Make each group of connected core points into a separate cluster – Assign each border point to one of the clusters of its associated core points
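The steps above can be sketched in a few lines. This is a simplified illustration, assuming a brute-force O(n²) neighborhood search and that a border point joins the first cluster that reaches it; the function name `dbscan` and its parameters `eps` and `min_pts` are chosen here to mirror Eps and MinPts.

```python
def dbscan(points, dist, eps, min_pts):
    """Return one label per point: a cluster index, or None for noise."""
    n = len(points)
    # Eps-neighborhood of every point (a point is in its own neighborhood).
    neigh = [[j for j in range(n) if dist(points[i], points[j]) <= eps]
             for i in range(n)]
    core = [len(neigh[i]) >= min_pts for i in range(n)]
    labels = [None] * n
    cluster = 0
    for i in range(n):
        if not core[i] or labels[i] is not None:
            continue
        # Grow a new cluster from an unlabeled core point.
        labels[i] = cluster
        stack = [i]
        while stack:
            p = stack.pop()            # p is always a core point
            for q in neigh[p]:
                if labels[q] is None:
                    labels[q] = cluster
                    if core[q]:        # only core points keep expanding
                        stack.append(q)
        cluster += 1
    return labels

points = [0, 1, 2, 3, 10, 11, 12, 20]
labels = dbscan(points, lambda p, q: abs(p - q), eps=1, min_pts=3)
```

In this toy run the points 1, 2 and 11 are core points, 0, 3, 10 and 12 are border points, and 20 is noise.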

Time and space complexity of DBSCAN • For a dataset X consisting of n points, the time complexity of DBSCAN is O(n × time to find the points in the Eps-neighborhood) • Worst case O(n²) • In low-dimensional spaces O(n log n); efficient data structures (e.g., kd-trees) allow for efficient retrieval of all points within a given distance of a specified point

Strengths and weaknesses of DBSCAN • Resistant to noise • Finds clusters of arbitrary shapes and sizes • Difficulty in identifying clusters with varying densities • Problems in high-dimensional spaces; notion of density unclear • Can be computationally expensive when the computation of nearest neighbors is expensive

Generic density-based clustering on a grid • Define a set of grid cells • Assign objects to appropriate cells and compute the density of each cell • Eliminate cells that have density below a given threshold τ • Form clusters from “contiguous” (adjacent) groups of dense cells

Questions • How do we define the grid? • How do we measure the density of a grid cell? • How do we deal with multidimensional data?

Clustering High-Dimensional Data • Clustering high-dimensional data – Many applications: text documents, DNA micro-array data – Major challenges: • Many irrelevant dimensions may mask clusters • Distance measure becomes meaningless—due to equi-distance • Clusters may exist only in some subspaces • Methods – Feature transformation: only effective if most dimensions are relevant • PCA & SVD useful only when features are highly correlated/redundant – Feature selection: wrapper or filter approaches • useful to find a subspace where the data have nice clusters – Subspace-clustering: find clusters in all the possible subspaces • CLIQUE

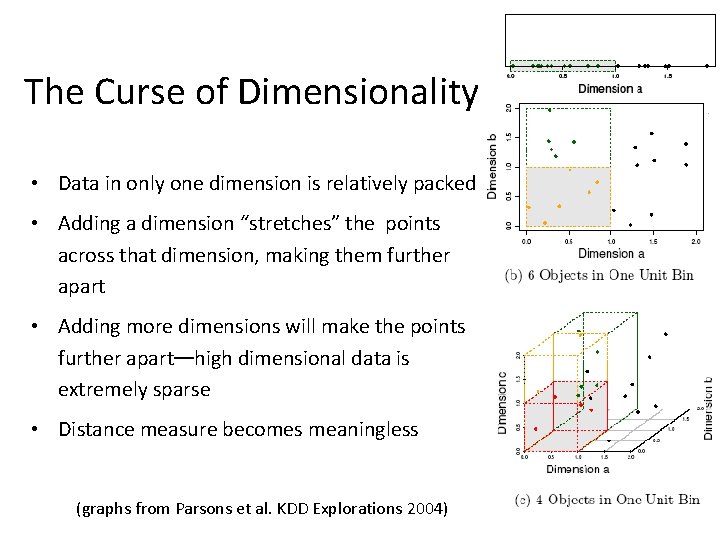

The Curse of Dimensionality • Data in only one dimension is relatively packed • Adding a dimension “stretches” the points across that dimension, making them further apart • Adding more dimensions makes the points even further apart: high-dimensional data is extremely sparse • Distance measure becomes meaningless (graphs from Parsons et al., SIGKDD Explorations 2004)

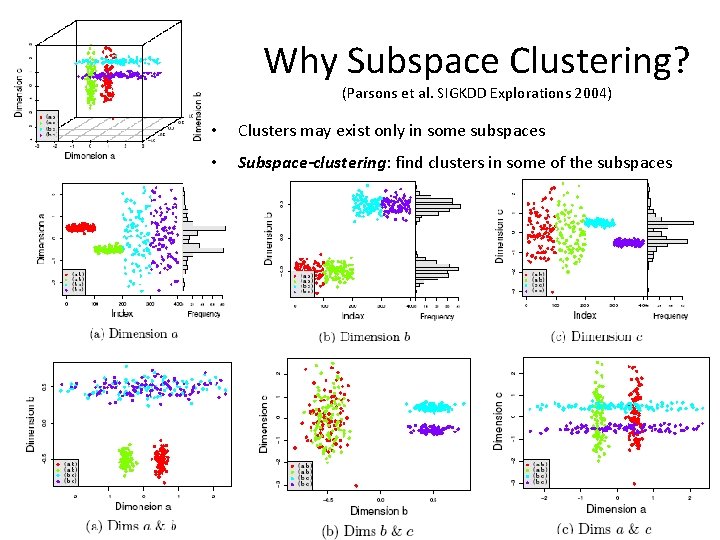

Why Subspace Clustering? (Parsons et al. SIGKDD Explorations 2004) • Clusters may exist only in some subspaces • Subspace-clustering: find clusters in some of the subspaces

CLIQUE (Clustering In QUEst) • Agrawal, Gehrke, Gunopulos, Raghavan (SIGMOD’98) • Automatically identifies subspaces of a high-dimensional data space that allow better clustering than the original space • CLIQUE can be considered as both density-based and grid-based – It partitions each dimension into the same number of equal-length intervals – It partitions an m-dimensional data space into non-overlapping rectangular units – A unit is dense if the fraction of total data points contained in the unit exceeds an input threshold τ – A cluster is a maximal set of connected dense units within a subspace

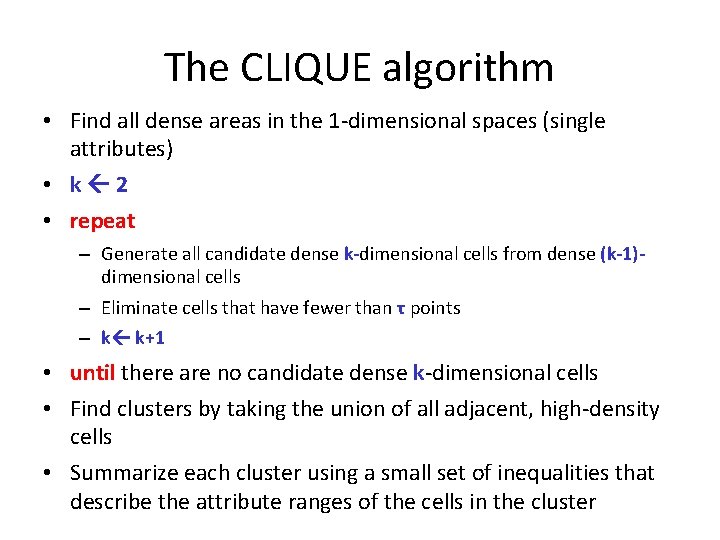

The CLIQUE algorithm • Find all dense areas in the 1-dimensional spaces (single attributes) • k ← 2 • repeat – Generate all candidate dense k-dimensional cells from dense (k−1)-dimensional cells – Eliminate cells that have fewer than τ points – k ← k+1 • until there are no candidate dense k-dimensional cells • Find clusters by taking the union of all adjacent, high-density cells • Summarize each cluster using a small set of inequalities that describe the attribute ranges of the cells in the cluster
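The bottom-up search for dense cells can be sketched as follows. This is an illustration only, assuming every attribute lies in the same range [lo, hi), τ is given as a point count, and the final steps (joining adjacent cells into clusters and summarizing them) are left out; the name `clique_dense_units` and the toy data are hypothetical.

```python
from collections import Counter
from itertools import combinations

def clique_dense_units(data, n_intervals, lo, hi, tau):
    """Return {subspace (tuple of dims): set of dense cells} level by level."""
    width = (hi - lo) / n_intervals

    def interval(x):
        return min(int((x - lo) / width), n_intervals - 1)

    grid = [tuple(interval(x) for x in row) for row in data]
    n_dims = len(data[0])

    def counts(dims):
        # Number of points falling into each cell of the given subspace.
        return Counter(tuple(g[d] for d in dims) for g in grid)

    # Dense cells in the 1-dimensional subspaces.
    level = {}
    for d in range(n_dims):
        units = {u for u, c in counts((d,)).items() if c >= tau}
        if units:
            level[(d,)] = units

    dense, k = {}, 2
    while level:
        dense.update(level)
        next_level = {}
        for dims in combinations(range(n_dims), k):
            subs = [dims[:p] + dims[p + 1:] for p in range(k)]
            if any(s not in level for s in subs):
                continue   # some (k-1)-dim projection has no dense cell

            def is_candidate(u):
                # Every (k-1)-dim projection of the cell must be dense.
                return all(u[:p] + u[p + 1:] in level[s]
                           for p, s in enumerate(subs))

            units = {u for u, c in counts(dims).items()
                     if is_candidate(u) and c >= tau}
            if units:
                next_level[dims] = units
        level, k = next_level, k + 1
    return dense

data = [(1, 1), (1.5, 1.2), (1.2, 1.8), (5, 8), (8, 3)]
dense = clique_dense_units(data, n_intervals=5, lo=0, hi=10, tau=3)
```

The candidate test is the Apriori-style pruning that the monotonicity property justifies: a k-dimensional cell is only counted if all of its (k−1)-dimensional projections were already found dense.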

CLIQUE: Monotonicity property • “If a set of points forms a density-based cluster in k dimensions (attributes), then the same set of points is also part of a density-based cluster in all possible subsets of those dimensions”

Strengths and weaknesses of CLIQUE • Automatically finds subspaces of the highest dimensionality such that high-density clusters exist in those subspaces • Insensitive to the order of records in the input and does not presume some canonical data distribution • Scales linearly with the size of the input and has good scalability as the number of dimensions in the data increases • Weakness: the accuracy of the clustering result may be sacrificed for the simplicity of the method

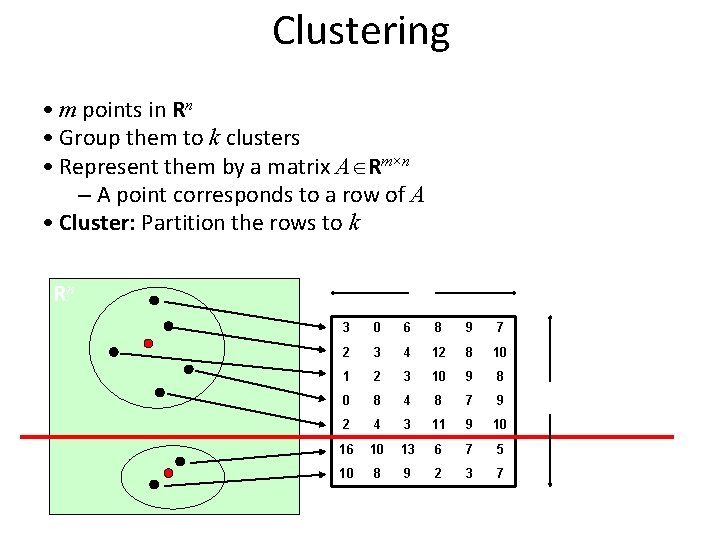

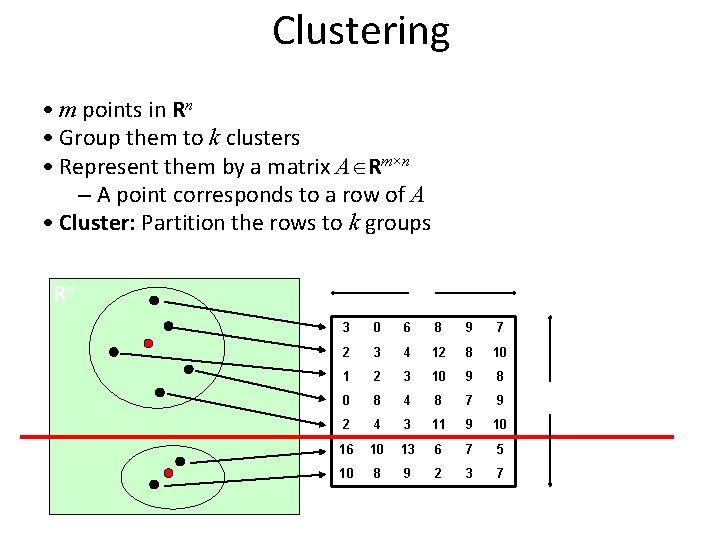

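Clustering • m points in R^n • Group them into k clusters • Represent them by a matrix A ∈ R^{m×n} – A point corresponds to a row of A • Cluster: partition the rows into k groups • Example (m = 7, n = 6):

$$A = \begin{pmatrix} 3 & 0 & 6 & 8 & 9 & 7 \\ 2 & 3 & 4 & 12 & 8 & 10 \\ 1 & 2 & 3 & 10 & 9 & 8 \\ 0 & 8 & 4 & 8 & 7 & 9 \\ 2 & 4 & 3 & 11 & 9 & 10 \\ 16 & 10 & 13 & 6 & 7 & 5 \\ 10 & 8 & 9 & 2 & 3 & 7 \end{pmatrix}$$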

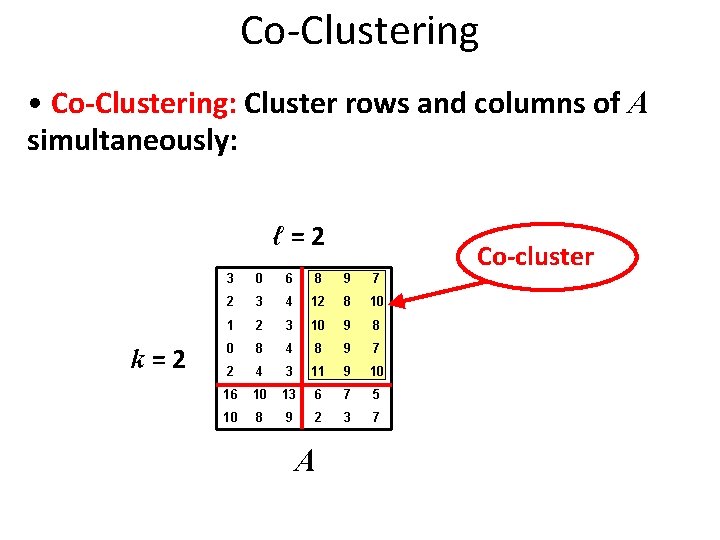

Co-Clustering • Co-Clustering: cluster rows and columns of A simultaneously (figure: the matrix A with k = 2 row clusters and ℓ = 2 column clusters; each block is a co-cluster)

Motivation: Sponsored Search • Ads are the main revenue source for search engines • Advertisers bid on keywords • A user makes a query • Show ads of advertisers that are relevant and have high bids • The user clicks on an ad or not

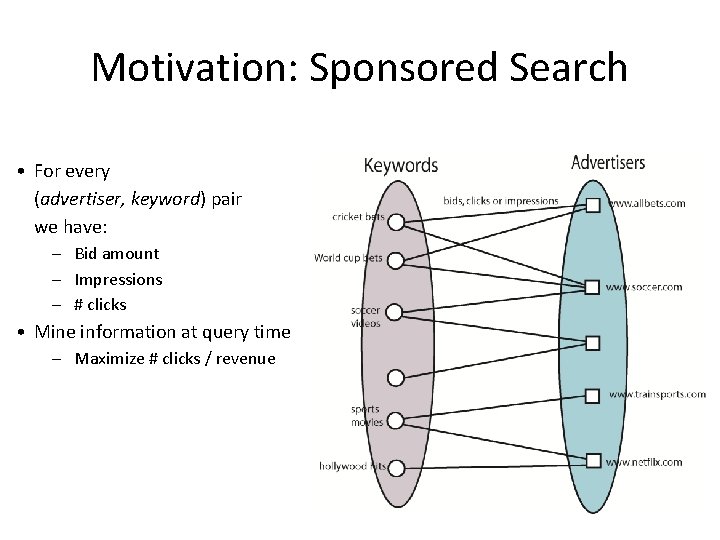

Motivation: Sponsored Search • For every (advertiser, keyword) pair we have: – Bid amount – Impressions – # clicks • Mine information at query time – Maximize # clicks / revenue

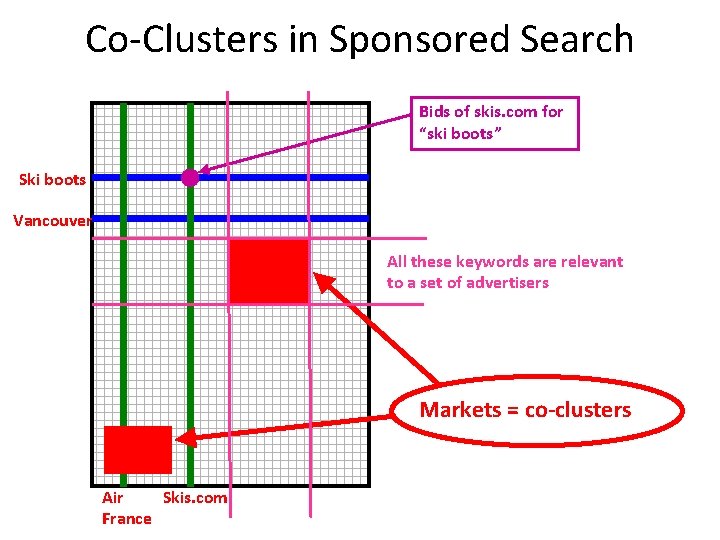

Co-Clusters in Sponsored Search (figure: matrix of advertisers, e.g., Skis.com and Air France, against keywords, e.g., “ski boots” and “Vancouver”) • An entry is a bid, e.g., the bid of skis.com for “ski boots” • All these keywords are relevant to a set of advertisers • Markets = co-clusters

Co-Clustering in Sponsored Search Applications: • Keyword suggestion – Recommend to advertisers other relevant keywords • Broad matching / market expansion – Include more advertisers to a query • Isolate submarkets – Important for economists – Apply different advertising approaches • Build taxonomies of advertisers / keywords

Motivation: Biology • Gene-expression data in computational biology – need simultaneous characterizations of genes

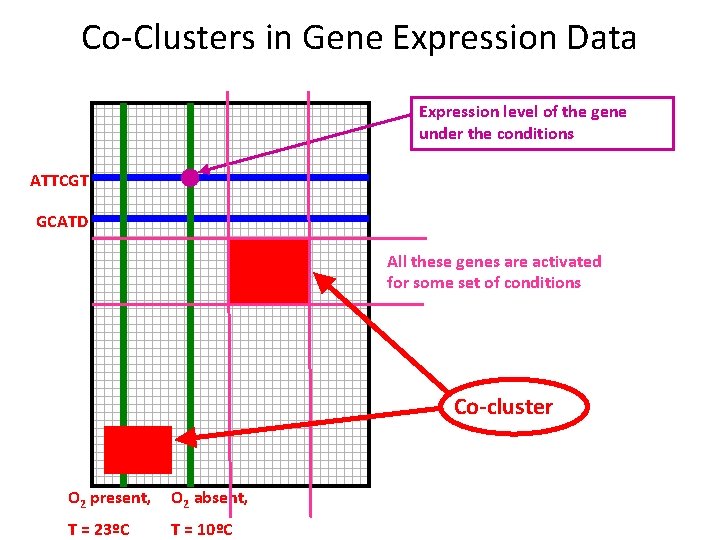

Co-Clusters in Gene Expression Data (figure: matrix of genes, e.g., ATTCGT and GCATD, against conditions, e.g., O2 present, O2 absent, T = 23ºC, T = 10ºC) • An entry is the expression level of the gene under the conditions • All these genes are activated for some set of conditions • Co-cluster

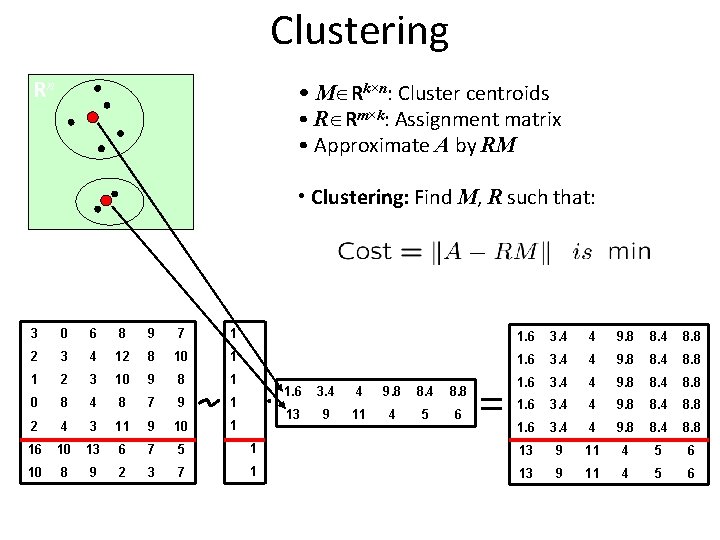

Clustering • M ∈ R^{k×n}: cluster centroids • R ∈ R^{m×k}: assignment matrix • Approximate A by RM • Clustering: find M, R such that ‖A − RM‖ is minimized • Example (k = 2; rows 1 to 5 of A form one cluster, rows 6 and 7 the other):

$$R = \begin{pmatrix} 1 & 0 \\ 1 & 0 \\ 1 & 0 \\ 1 & 0 \\ 1 & 0 \\ 0 & 1 \\ 0 & 1 \end{pmatrix}, \qquad M = \begin{pmatrix} 1.6 & 3.4 & 4 & 9.8 & 8.4 & 8.8 \\ 13 & 9 & 11 & 4 & 5 & 6 \end{pmatrix}$$

Each row of RM is the centroid of the cluster that the corresponding row of A belongs to.
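The factorization can be reproduced numerically for the example matrix. This is a minimal sketch, assuming the row assignment is already known and that the approximation error is measured by the squared Frobenius norm (the slides leave the norm open); all function names are illustrative.

```python
A = [
    [3, 0, 6, 8, 9, 7],
    [2, 3, 4, 12, 8, 10],
    [1, 2, 3, 10, 9, 8],
    [0, 8, 4, 8, 7, 9],
    [2, 4, 3, 11, 9, 10],
    [16, 10, 13, 6, 7, 5],
    [10, 8, 9, 2, 3, 7],
]
row_labels = [0, 0, 0, 0, 0, 1, 1]   # assumed given by some row clustering

def centroid_matrix(A, labels, k):
    """M: row c is the mean of the rows of A assigned to cluster c."""
    M = []
    for c in range(k):
        rows = [row for row, lab in zip(A, labels) if lab == c]
        M.append([sum(col) / len(rows) for col in zip(*rows)])
    return M

def assignment_matrix(labels, k):
    """R: entry (i, c) is 1 if row i belongs to cluster c, else 0."""
    return [[1 if lab == c else 0 for c in range(k)] for lab in labels]

def matmul(X, Y):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]

def squared_error(A, B):
    return sum((a - b) ** 2 for ra, rb in zip(A, B) for a, b in zip(ra, rb))

M = centroid_matrix(A, row_labels, 2)
R = assignment_matrix(row_labels, 2)
RM = matmul(R, M)
cost = squared_error(A, RM)
```

The helper assumes every cluster is non-empty; an empty cluster would need separate handling.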

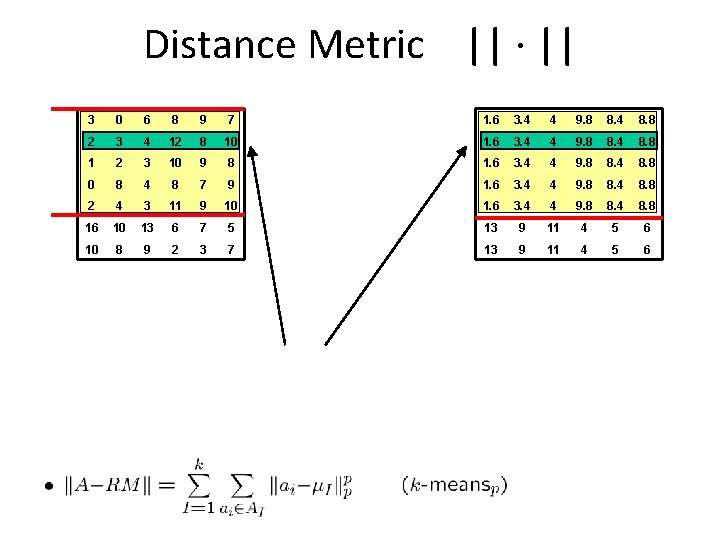

Distance Metric ‖·‖ • A_I: the rows of A that belong to row cluster I (figure: A shown side by side with its approximation RM)

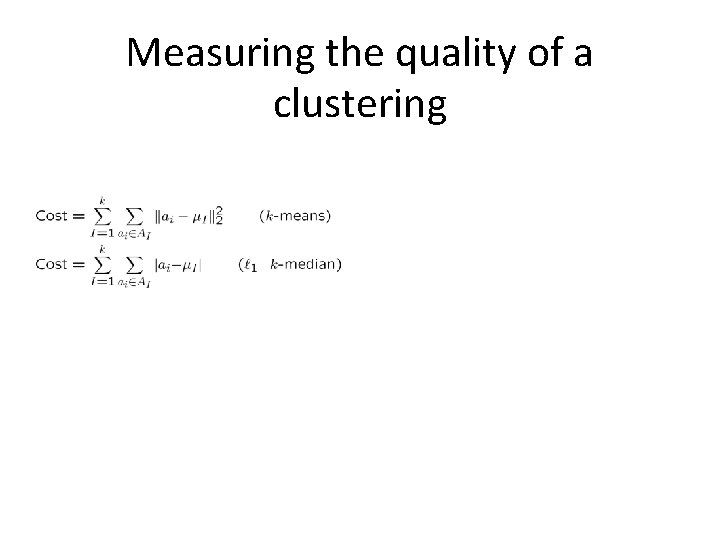

Measuring the quality of a clustering • Theorem. For any k > 0, p ≥ 1 there is a 24-approximation. • Proof. Similar to general k-median, relaxed triangle inequality.

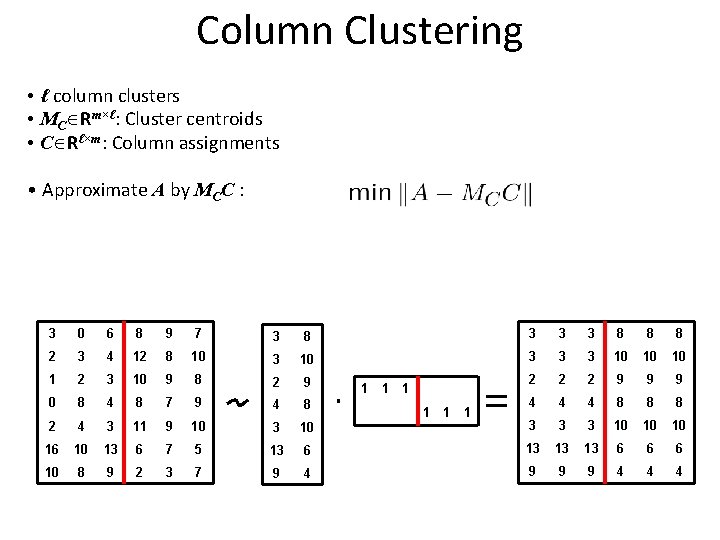

Column Clustering • ℓ column clusters • M_C ∈ R^{m×ℓ}: cluster centroids • C ∈ R^{ℓ×n}: column assignments • Approximate A by M_C C • Example (ℓ = 2; columns 1 to 3 of A form one cluster, columns 4 to 6 the other):

$$M_C = \begin{pmatrix} 3 & 8 \\ 3 & 10 \\ 2 & 9 \\ 4 & 8 \\ 3 & 10 \\ 13 & 6 \\ 9 & 4 \end{pmatrix}, \qquad C = \begin{pmatrix} 1 & 1 & 1 & 0 & 0 & 0 \\ 0 & 0 & 0 & 1 & 1 & 1 \end{pmatrix}$$

Each column of M_C C is the centroid of the cluster that the corresponding column of A belongs to.

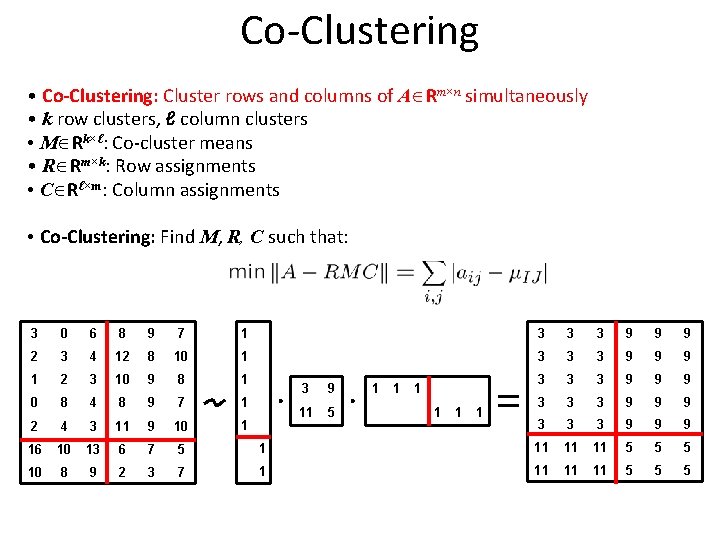

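Co-Clustering • Co-Clustering: cluster rows and columns of A ∈ R^{m×n} simultaneously • k row clusters, ℓ column clusters • M ∈ R^{k×ℓ}: co-cluster means • R ∈ R^{m×k}: row assignments • C ∈ R^{ℓ×n}: column assignments • Co-Clustering: find M, R, C such that ‖A − RMC‖ is minimized • Example (k = 2, ℓ = 2; R assigns rows 1 to 5 and rows 6 to 7 to the two row clusters, C assigns columns 1 to 3 and columns 4 to 6 to the two column clusters):

$$M = \begin{pmatrix} 3 & 9 \\ 11 & 5 \end{pmatrix}, \qquad RMC = \begin{pmatrix} 3 & 3 & 3 & 9 & 9 & 9 \\ 3 & 3 & 3 & 9 & 9 & 9 \\ 3 & 3 & 3 & 9 & 9 & 9 \\ 3 & 3 & 3 & 9 & 9 & 9 \\ 3 & 3 & 3 & 9 & 9 & 9 \\ 11 & 11 & 11 & 5 & 5 & 5 \\ 11 & 11 & 11 & 5 & 5 & 5 \end{pmatrix}$$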

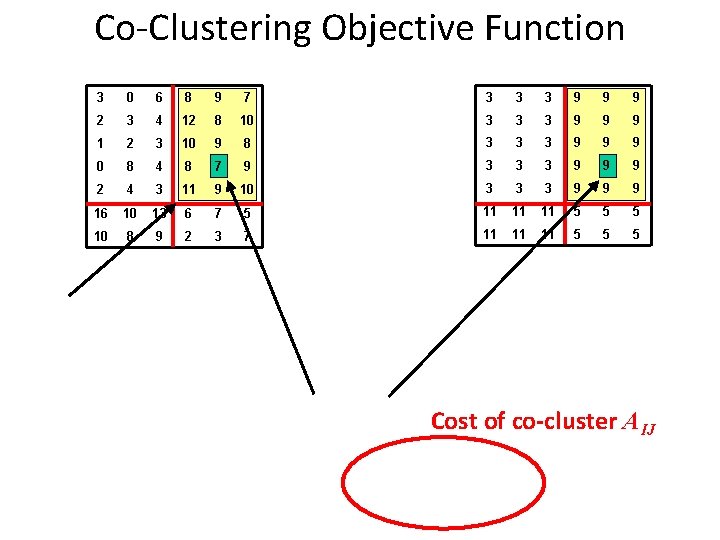

Co-Clustering Objective Function • Cost of co-cluster A_IJ (figure: A shown side by side with its approximation RMC)

Some Background • A.k.a.: biclustering, block clustering, … • Many objective functions in co-clustering – This is one of the easier ones – Others factor out row-column averages (priors) – Others are based on information-theoretic ideas (e.g., KL divergence) • A lot of existing work, but mostly heuristic – k-means style, alternating between rows and columns – Spectral techniques

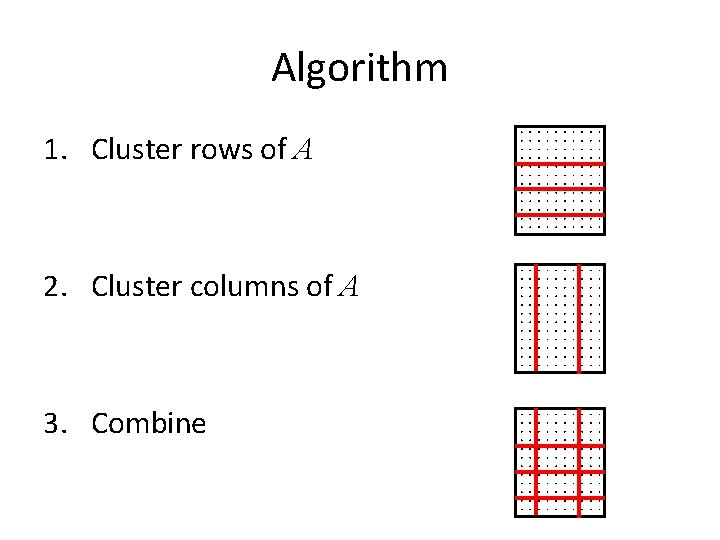

Algorithm 1. Cluster rows of A 2. Cluster columns of A 3. Combine

Properties of the algorithm • Theorem 1. The algorithm with optimal row/column clusterings is a 3-approximation to the co-clustering optimum. • Theorem 2. For the ℓ₂ norm, the algorithm with optimal row/column clusterings is a 2-approximation.
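The combine step of the three-step algorithm can be illustrated on the example matrix. This is a sketch under assumptions: the row and column labels stand in for the output of steps 1 and 2 (any row and column clustering routine could produce them), and the combine step is taken to be the mean of each block, which is the natural choice for squared error; the function names are hypothetical.

```python
A = [
    [3, 0, 6, 8, 9, 7],
    [2, 3, 4, 12, 8, 10],
    [1, 2, 3, 10, 9, 8],
    [0, 8, 4, 8, 7, 9],
    [2, 4, 3, 11, 9, 10],
    [16, 10, 13, 6, 7, 5],
    [10, 8, 9, 2, 3, 7],
]
row_labels = [0, 0, 0, 0, 0, 1, 1]   # step 1: assumed row clustering
col_labels = [0, 0, 0, 1, 1, 1]      # step 2: assumed column clustering

def block_means(A, row_labels, col_labels, k, l):
    """Step 3 (combine): M[I][J] is the mean of co-cluster (I, J)."""
    sums = [[0.0] * l for _ in range(k)]
    counts = [[0] * l for _ in range(k)]
    for i, row in enumerate(A):
        for j, value in enumerate(row):
            sums[row_labels[i]][col_labels[j]] += value
            counts[row_labels[i]][col_labels[j]] += 1
    return [[sums[I][J] / counts[I][J] for J in range(l)] for I in range(k)]

def reconstruct(M, row_labels, col_labels):
    """The matrix RMC: every entry is replaced by its co-cluster mean."""
    return [[M[I][J] for J in col_labels] for I in row_labels]

M = block_means(A, row_labels, col_labels, 2, 2)
RMC = reconstruct(M, row_labels, col_labels)
```

With these labels the block means come out as the 2×2 matrix of co-cluster means shown on the co-clustering slide.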

Outline • Impossibility theorem for clustering [Jon Kleinberg, An impossibility theorem for clustering, NIPS 2002] • Bi-clustering or co-clustering • Subspace clustering and density-based clustering • Validating clustering results

Cluster Validity • For supervised classification we have a variety of measures to evaluate how good our model is – Accuracy, precision, recall • For cluster analysis, the analogous question is how to evaluate the “goodness” of the resulting clusters • But “clusters are in the eye of the beholder”! • Then why do we want to evaluate them? – To avoid finding patterns in noise – To compare clustering algorithms – To compare two sets of clusters – To compare two clusters

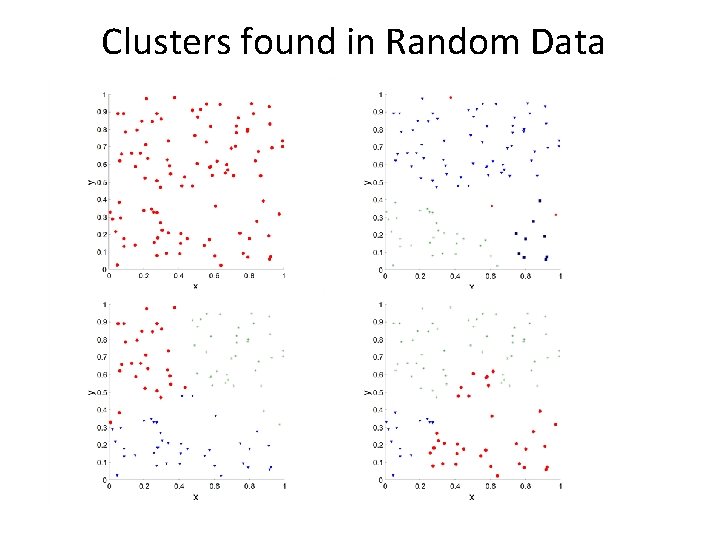

Clusters found in Random Data (figure panels: Random Points, K-means, DBSCAN, Complete Link)

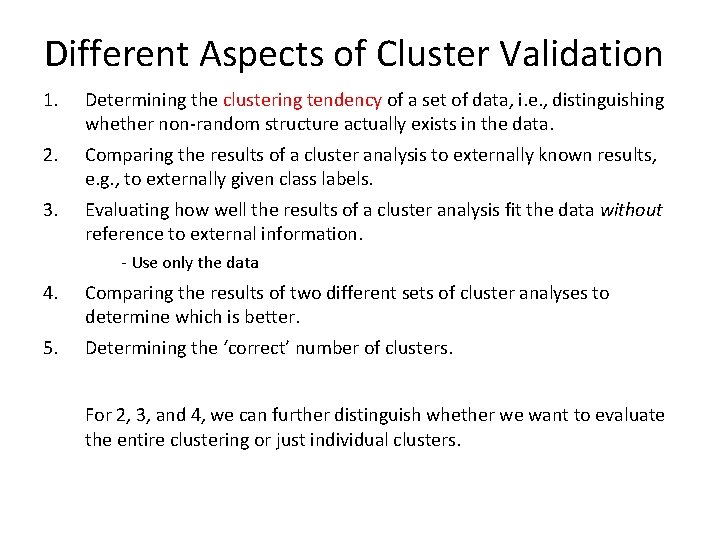

Different Aspects of Cluster Validation 1. Determining the clustering tendency of a set of data, i.e., distinguishing whether non-random structure actually exists in the data. 2. Comparing the results of a cluster analysis to externally known results, e.g., to externally given class labels. 3. Evaluating how well the results of a cluster analysis fit the data without reference to external information. – Use only the data 4. Comparing the results of two different sets of cluster analyses to determine which is better. 5. Determining the “correct” number of clusters. • For 2, 3, and 4, we can further distinguish whether we want to evaluate the entire clustering or just individual clusters.

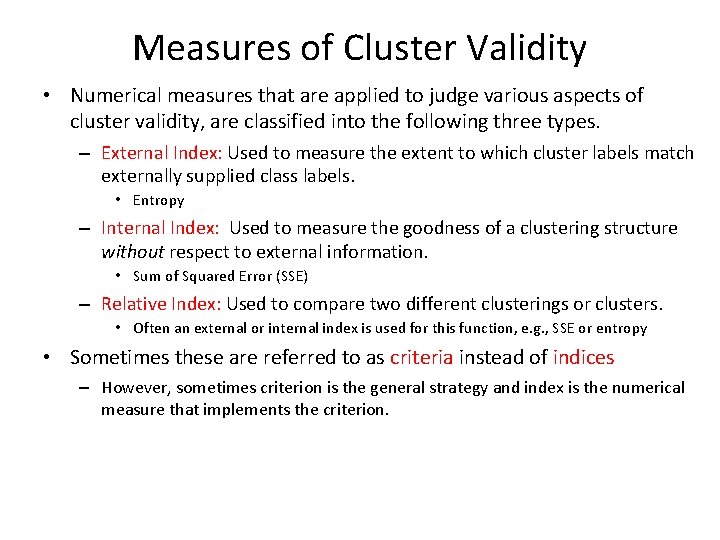

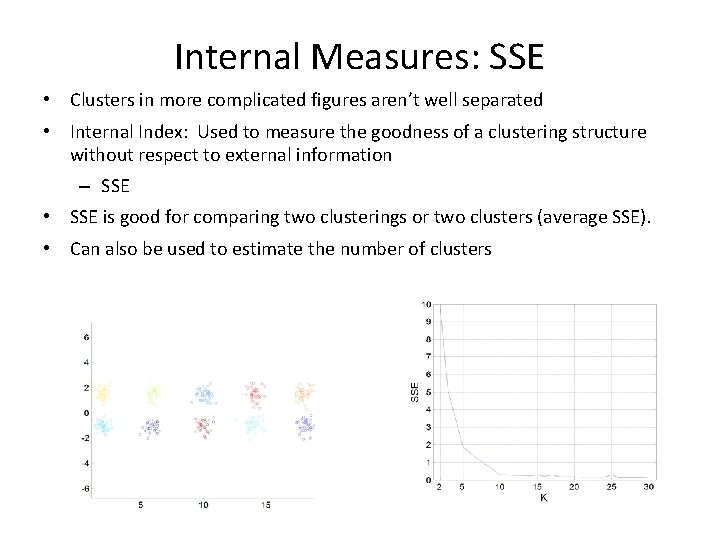

Measures of Cluster Validity • Numerical measures that are applied to judge various aspects of cluster validity are classified into the following three types: – External Index: used to measure the extent to which cluster labels match externally supplied class labels (e.g., entropy) – Internal Index: used to measure the goodness of a clustering structure without respect to external information (e.g., Sum of Squared Error, SSE) – Relative Index: used to compare two different clusterings or clusters; often an external or internal index is used for this function, e.g., SSE or entropy • Sometimes these are referred to as criteria instead of indices – However, sometimes criterion is the general strategy and index is the numerical measure that implements the criterion.

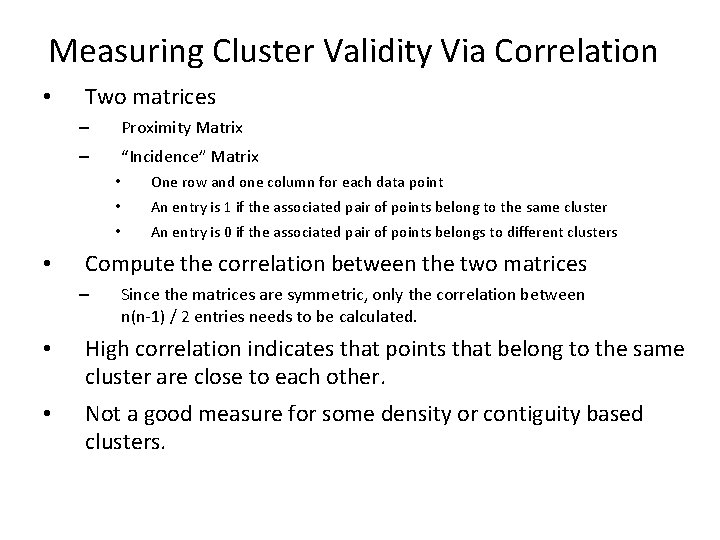

Measuring Cluster Validity Via Correlation • Two matrices – Proximity matrix – “Incidence” matrix: one row and one column for each data point; an entry is 1 if the associated pair of points belongs to the same cluster and 0 if the pair belongs to different clusters • Compute the correlation between the two matrices – Since the matrices are symmetric, only the correlation between n(n−1)/2 entries needs to be calculated • High correlation indicates that points that belong to the same cluster are close to each other • Not a good measure for some density-based or contiguity-based clusters
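The correlation between the two matrices can be computed directly from the n(n−1)/2 point pairs. The sketch below is illustrative and assumes the proximity matrix holds distances rather than similarities, so a good clustering gives a strongly negative value (with similarities the sign flips); `pearson` and `validity_correlation` are names chosen for this example.

```python
from itertools import combinations
from math import sqrt

def pearson(xs, ys):
    """Plain Pearson correlation of two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def validity_correlation(points, labels, dist):
    """Correlate pairwise distances with the incidence matrix entries."""
    pairs = list(combinations(range(len(points)), 2))   # upper triangle only
    proximity = [dist(points[i], points[j]) for i, j in pairs]
    incidence = [1 if labels[i] == labels[j] else 0 for i, j in pairs]
    return pearson(proximity, incidence)

points = [0, 1, 10, 11]
d = lambda p, q: abs(p - q)
good = validity_correlation(points, [0, 0, 1, 1], d)   # natural grouping
poor = validity_correlation(points, [0, 1, 0, 1], d)   # mixed grouping
```

The function is undefined when all pairs fall in the same cluster or all distances are equal, because one of the variances is then zero.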

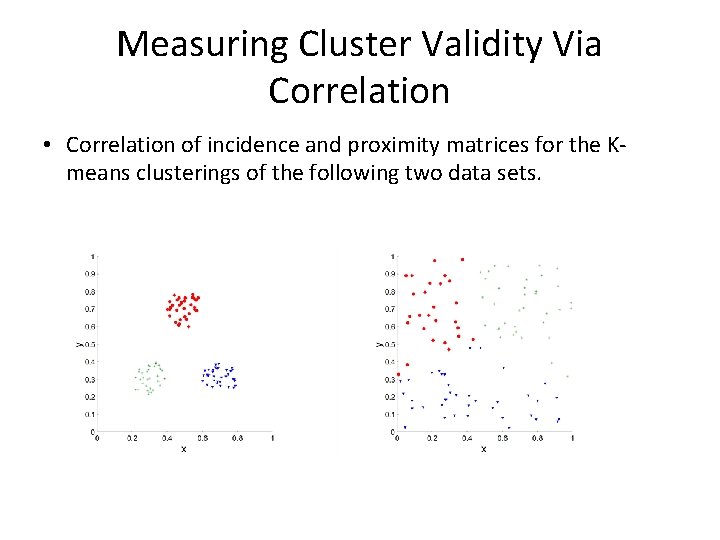

Measuring Cluster Validity Via Correlation • Correlation of incidence and proximity matrices for the K-means clusterings of the following two data sets: Corr = 0.9235 and Corr = 0.5810

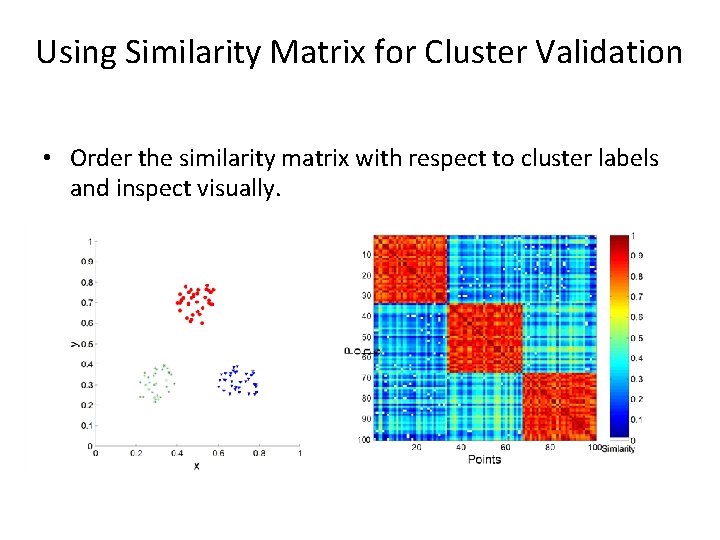

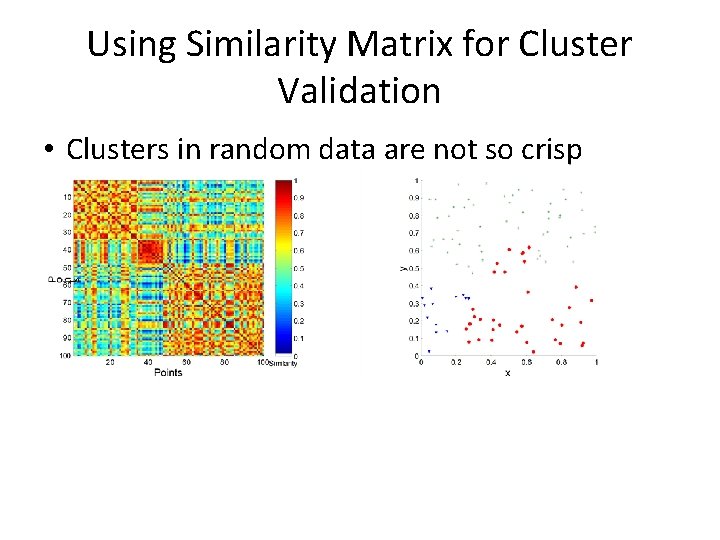

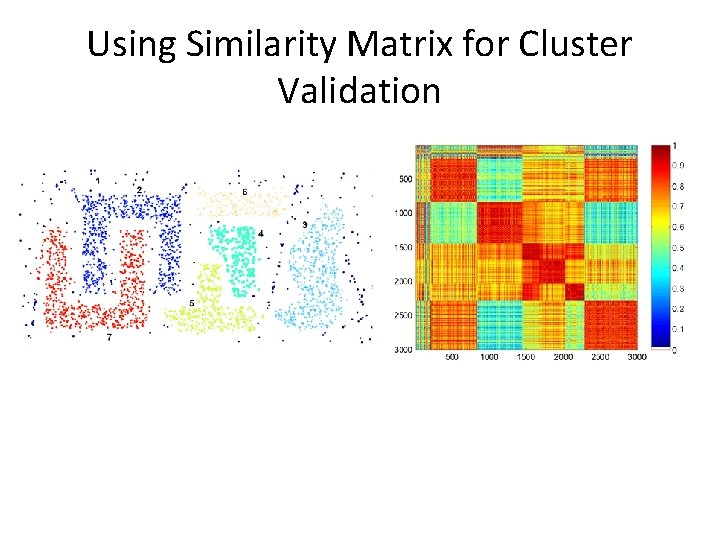

Using Similarity Matrix for Cluster Validation • Order the similarity matrix with respect to cluster labels and inspect visually.

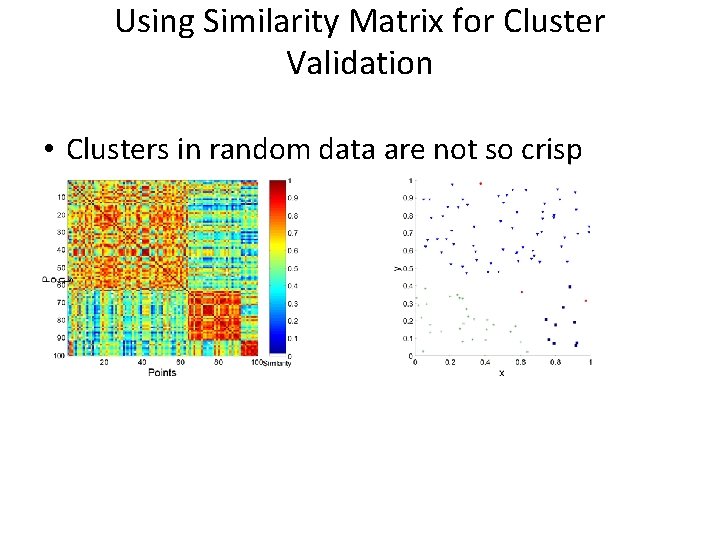

Using Similarity Matrix for Cluster Validation • Clusters in random data are not so crisp (figure: DBSCAN)

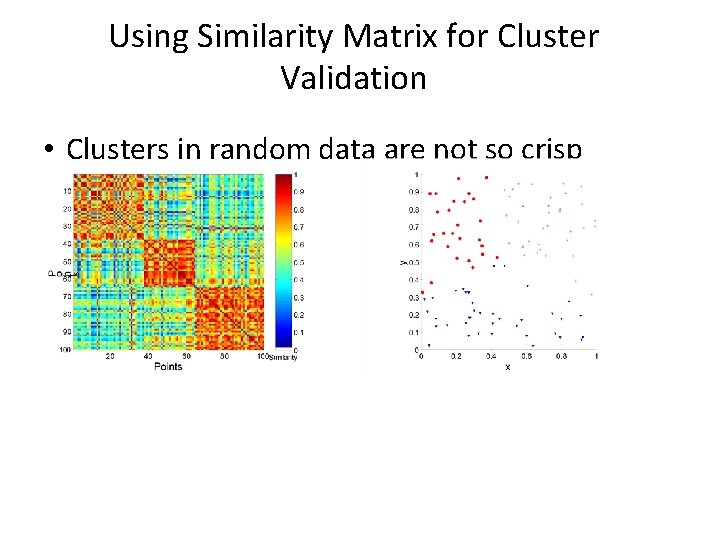

Using Similarity Matrix for Cluster Validation • Clusters in random data are not so crisp (figure: K-means)

Using Similarity Matrix for Cluster Validation • Clusters in random data are not so crisp (figure: Complete Link)

Using Similarity Matrix for Cluster Validation (figure: DBSCAN)

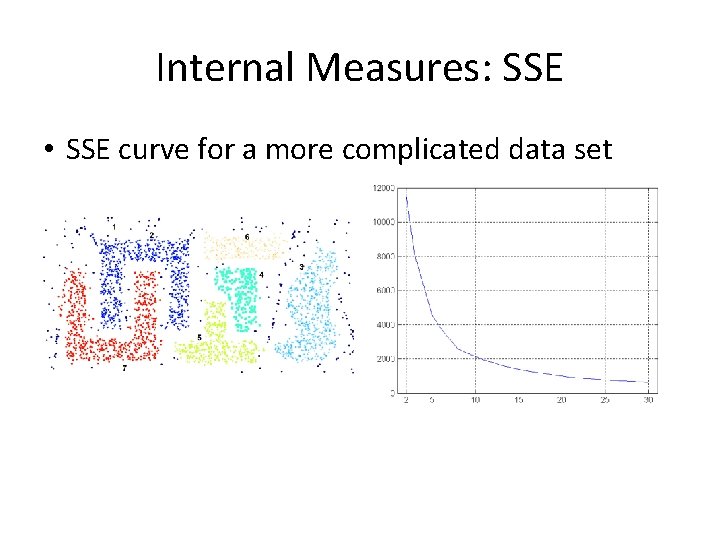

Internal Measures: SSE • Clusters in more complicated figures aren’t well separated • Internal Index: Used to measure the goodness of a clustering structure without respect to external information – SSE • SSE is good for comparing two clusterings or two clusters (average SSE). • Can also be used to estimate the number of clusters

Internal Measures: SSE • SSE curve for a more complicated data set (figure: SSE of clusters found using K-means)

Framework for Cluster Validity • Need a framework to interpret any measure – For example, if our measure of evaluation has the value 10, is that good, fair, or poor? • Statistics provide a framework for cluster validity – The more “atypical” a clustering result is, the more likely it represents valid structure in the data – Can compare the values of an index that result from random data or clusterings to those of a clustering result: if the value of the index is unlikely, then the cluster results are valid – These approaches are more complicated and harder to understand • For comparing the results of two different sets of cluster analyses, a framework is less necessary – However, there is the question of whether the difference between two index values is significant

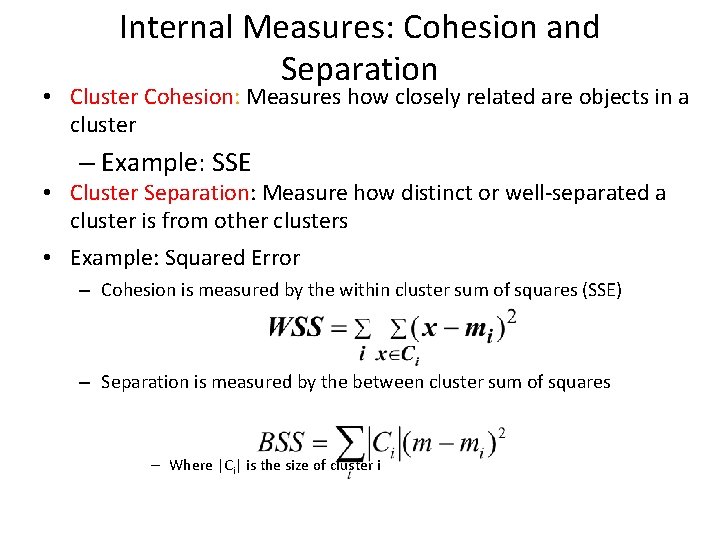

Internal Measures: Cohesion and Separation • Cluster cohesion: measures how closely related the objects in a cluster are – Example: SSE • Cluster separation: measures how distinct or well-separated a cluster is from other clusters • Example: squared error – Cohesion is measured by the within-cluster sum of squares (SSE): WSS = ∑_i ∑_{x ∈ C_i} (x − m_i)² – Separation is measured by the between-cluster sum of squares: BSS = ∑_i |C_i| (m − m_i)² – where |C_i| is the size of cluster i, m_i is the centroid of cluster i, and m is the overall mean
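Cohesion and separation under squared error can be computed as below. The sketch assumes points are tuples of coordinates and uses the within-cluster and between-cluster sums of squares (called `wss` and `bss` here, names chosen for this example) around cluster centroids and the overall mean; the four-point data set is a toy example.

```python
def mean(vectors):
    """Coordinate-wise mean of a list of equal-length tuples."""
    return [sum(coords) / len(vectors) for coords in zip(*vectors)]

def sq_dist(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))

def wss_bss(points, labels):
    """Return (within-cluster SSE, between-cluster sum of squares)."""
    overall = mean(points)
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    wss = bss = 0.0
    for members in clusters.values():
        centroid = mean(members)
        wss += sum(sq_dist(p, centroid) for p in members)      # cohesion
        bss += len(members) * sq_dist(centroid, overall)       # separation
    return wss, bss

points = [(1,), (2,), (4,), (5,)]
two_clusters = wss_bss(points, [0, 0, 1, 1])
one_cluster = wss_bss(points, [0, 0, 0, 0])
```

In both runs the two quantities add up to the same total sum of squares, so lowering the within-cluster SSE raises the between-cluster sum by the same amount.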

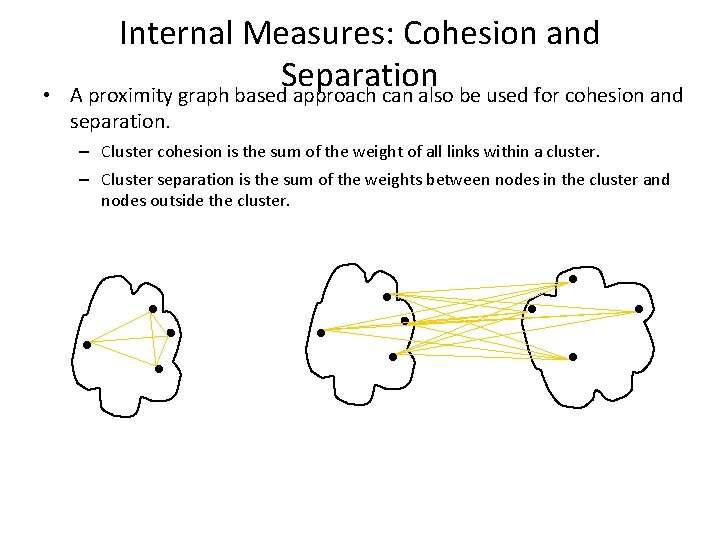

Internal Measures: Cohesion and Separation • A proximity-graph-based approach can also be used for cohesion and separation – Cluster cohesion is the sum of the weights of all links within a cluster – Cluster separation is the sum of the weights of the links between nodes in the cluster and nodes outside the cluster (figure: cohesion and separation edges)

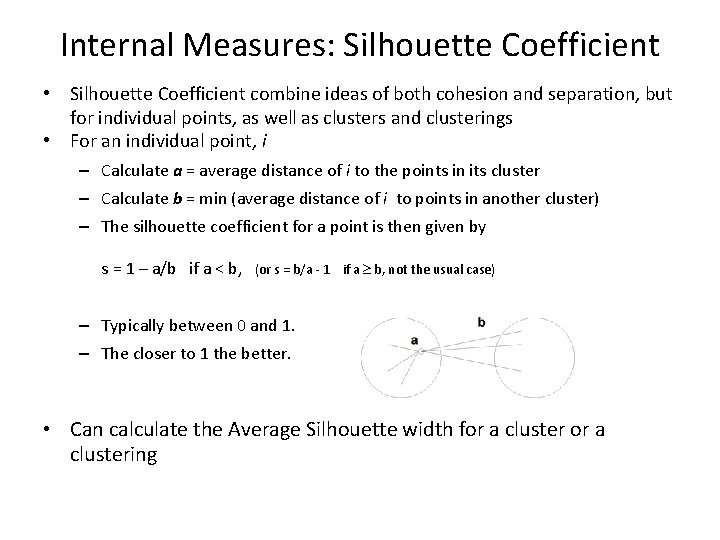

Internal Measures: Silhouette Coefficient • The silhouette coefficient combines ideas of both cohesion and separation, for individual points as well as for clusters and clusterings • For an individual point i – Calculate a = average distance of i to the points in its cluster – Calculate b = min (average distance of i to the points in another cluster) – The silhouette coefficient for the point is then given by s = 1 − a/b if a < b (or s = b/a − 1 if a ≥ b, not the usual case) – Typically between 0 and 1 – The closer to 1 the better • Can calculate the average silhouette width for a clustering
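The per-point definition translates almost line by line into code. This is a minimal sketch assuming at least two clusters, that a is averaged over the other points of the cluster (excluding i itself), and that a point alone in its cluster gets s = 0 by convention; the function names are illustrative.

```python
def silhouette(i, points, labels, dist):
    """Silhouette coefficient of point i, following the a/b definition."""
    own = [dist(points[i], points[j]) for j in range(len(points))
           if labels[j] == labels[i] and j != i]
    if not own:
        return 0.0                      # convention for singleton clusters
    a = sum(own) / len(own)
    # Average distance from i to every other cluster, keyed by cluster label.
    others = {}
    for j, lab in enumerate(labels):
        if lab != labels[i]:
            others.setdefault(lab, []).append(dist(points[i], points[j]))
    b = min(sum(v) / len(v) for v in others.values())
    # Assumes a and b are not both zero (no exact duplicates across clusters).
    return 1 - a / b if a < b else b / a - 1

def average_silhouette(points, labels, dist):
    return sum(silhouette(i, points, labels, dist)
               for i in range(len(points))) / len(points)

points = [0, 1, 10, 11]
labels = [0, 0, 1, 1]
d = lambda p, q: abs(p - q)
s0 = silhouette(0, points, labels, d)
avg = average_silhouette(points, labels, d)
```

For point 0 in this example a = 1 and b = 10.5, so s = 1 − 1/10.5.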

Final Comment on Cluster Validity • “The validation of clustering structures is the most difficult and frustrating part of cluster analysis. Without a strong effort in this direction, cluster analysis will remain a black art accessible only to those true believers who have experience and great courage.” (Algorithms for Clustering Data, Jain and Dubes)

Assessing the significance of clustering (and other data mining) results • Data X and algorithm A • Beautiful result A(X) • But: what does it mean? • How to determine whether the result is really interesting or just due to chance?

Examples • Pattern discovery: frequent itemsets or association rules • From data X we can find a collection of nice patterns • Significance of individual patterns is sometimes straightforward to test • What about the whole collection of patterns? Is it surprising to see such a collection?

Examples • In clustering or mixture modeling: we always get a result • How to test if the whole idea of components/clusters in the data is good?

Classical methods

Randomization methods • Goal in assessing the significance of results: could the result have occurred by chance? • Randomization methods: create datasets that somehow reflect the characteristics of the true data

Randomization • Create randomized versions of the data X • X1, X2, …, Xk • Run algorithm A on these, producing results A(X1), A(X2), …, A(Xk) • Check if the result A(X) on the real data is somehow different from these • Empirical p-value: the fraction of randomized datasets Xi for which the result A(Xi) is (say) larger than A(X) • If the empirical p-value is small, then there is something interesting in the data
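The randomization procedure can be sketched generically. Everything specific here is an assumption for illustration: the result A(X) is reduced to a single number (`statistic`), the null model shuffles the second column of a two-column dataset, "larger" is taken as "at least as large", and the names `empirical_p_value` and `shuffle_second_column` are made up.

```python
import random

def empirical_p_value(X, statistic, randomize, k=200, seed=0):
    """Fraction of randomized datasets whose statistic is >= the real one."""
    rng = random.Random(seed)          # fixed seed for repeatable runs
    real = statistic(X)
    hits = sum(statistic(randomize(X, rng)) >= real for _ in range(k))
    return hits / k

def shuffle_second_column(X, rng):
    """Hypothetical null model: keep both column marginals, break the pairing."""
    ys = [y for _, y in X]
    rng.shuffle(ys)
    return [(x, y) for (x, _), y in zip(X, ys)]

def cross_product(X):
    """Toy statistic: large when the two columns move together."""
    return sum(x * y for x, y in X)

X = [(i, i) for i in range(20)]        # strongly structured toy data
p = empirical_p_value(X, cross_product, shuffle_second_column)
```

A common variant uses (hits + 1) / (k + 1) so that the estimate is never exactly zero; the choice of null model (what `randomize` preserves) determines what a small p-value actually means.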

Questions • How is the data randomized? • Can the sample X1, X2, …, Xk be computed efficiently? • Can the values A(X1), A(X2), …, A(Xk) be computed efficiently?

How to randomize? • How are the datasets Xi generated? • Randomly from a “null model” / “null hypothesis”