Cloud Computing Skepticism

Abhishek Verma, Saurabh Nangia

Outline

- Cloud computing hype
- Cynicism
- MapReduce vs. parallel DBMS
- Cost of a cloud
- Discussion

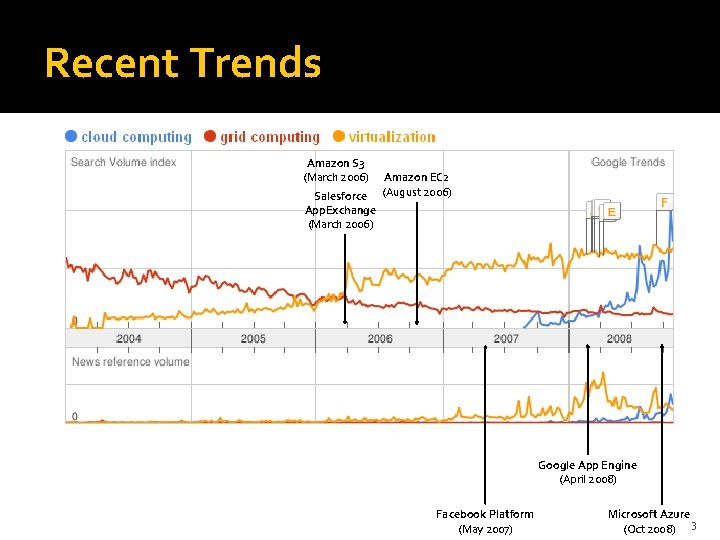

Recent Trends

- Amazon S3 (March 2006)
- Salesforce AppExchange (March 2006)
- Amazon EC2 (August 2006)
- Google App Engine (April 2008)
- Facebook Platform (May 2007)
- Microsoft Azure (Oct 2008)

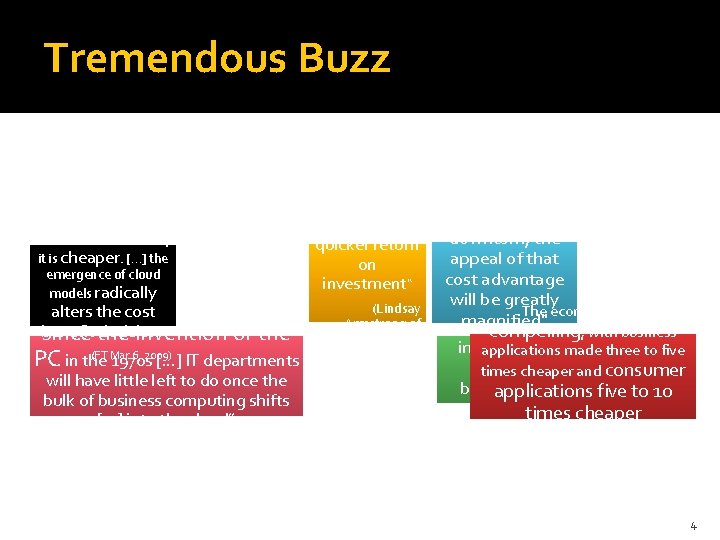

Tremendous Buzz

- “Cloud computing achieves a quicker return on investment” (Lindsay Armstrong of salesforce.com, Dec 2008)
- “Not only is it faster and more flexible, it is cheaper. […] the emergence of cloud models radically alters the cost benefit decision” (FT, Mar 6, 2009)
- “Revolution, the biggest upheaval since the invention of the PC in the 1970s […] IT departments will have little left to do once the bulk of business computing shifts […] into the cloud” (Nicholas Carr, 2008)
- “Economic downturn, the appeal of that cost advantage will be greatly magnified” (IDC, 2008)
- “No less influential than e-business” (Gartner, 2008)
- “The economics are compelling, with business applications made three to five times cheaper and consumer applications five to 10 times cheaper” (Merrill Lynch, May 2008)

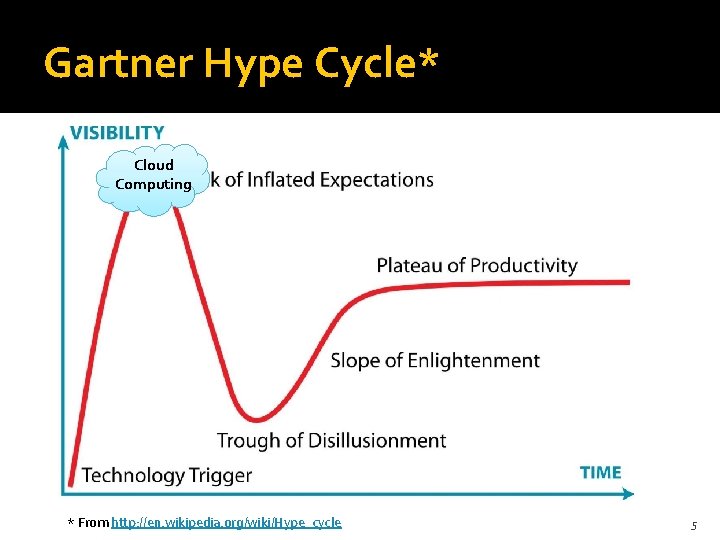

Gartner Hype Cycle*: Cloud Computing

*From http://en.wikipedia.org/wiki/Hype_cycle

Blind Men and an Elephant

Definition of Cloud Computing

“Cloud computing is simply a buzzword used to repackage grid computing and utility computing, both of which have existed for decades.” (whatis.com)

“The interesting thing about cloud computing is that we’ve redefined cloud computing to include everything that we already do. […] The computer industry is the only industry that is more fashion-driven than women’s fashion. Maybe I’m an idiot, but I have no idea what anyone is talking about. What is it? It’s complete gibberish. It’s insane. When is this idiocy going to stop?”

Larry Ellison, during Oracle’s Analyst Day

From http://blogs.wsj.com/biztech/2008/09/25/larry-ellisons-brilliant-anti-cloud-computing-rant/

From http://geekandpoke.typepad.com/geekandpoke/2009/03/let-the-clouds-make-your-life-easier.html

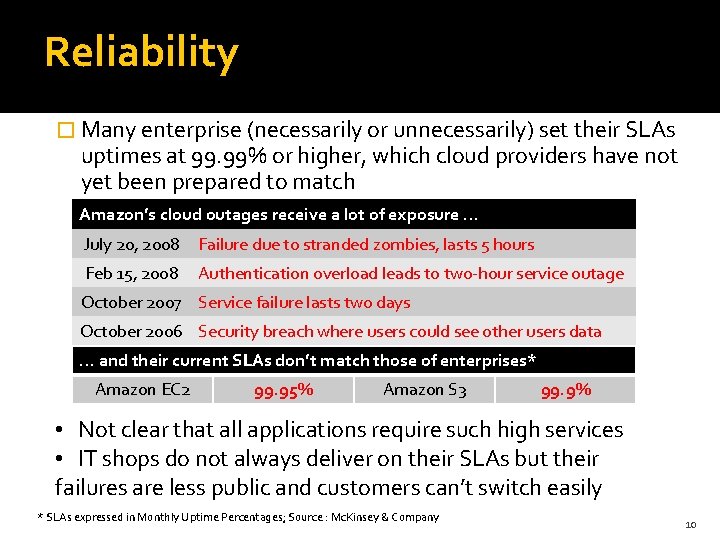

Reliability

- Many enterprises (necessarily or unnecessarily) set their SLA uptimes at 99.99% or higher, which cloud providers have not yet been prepared to match
- Amazon’s cloud outages receive a lot of exposure…
  - July 20, 2008: failure due to stranded zombies, lasts 5 hours
  - Feb 15, 2008: authentication overload leads to a two-hour service outage
  - October 2007: service failure lasts two days
  - October 2006: security breach where users could see other users’ data
- …and their current SLAs don’t match those of enterprises*
  - Amazon EC2: 99.95%
  - Amazon S3: 99.9%
- Not clear that all applications require such high service levels
- IT shops do not always deliver on their SLAs, but their failures are less public and customers can’t switch easily

*SLAs expressed in monthly uptime percentages; source: McKinsey & Company
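To make the SLA percentages concrete, the sketch below converts a monthly uptime percentage into the downtime it still allows. It assumes a 30-day month (real SLAs define the measurement window and exclusions in their own terms). Under that assumption 99.99% leaves about 4.3 minutes, 99.95% about 21.6 minutes and 99.9% about 43.2 minutes per month, so a single 5-hour (300-minute) outage breaks all three.

```python
# Convert a monthly uptime percentage into the downtime it permits.
# Assumption: a 30-day month; real SLAs define their own measurement period.
MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(uptime_pct: float,
                             month_minutes: int = MINUTES_PER_30_DAY_MONTH) -> float:
    """Minutes of downtime per month still compatible with `uptime_pct`."""
    return month_minutes * (100.0 - uptime_pct) / 100.0


if __name__ == "__main__":
    for label, pct in [("Enterprise target", 99.99),
                       ("Amazon EC2 SLA", 99.95),
                       ("Amazon S3 SLA", 99.9)]:
        print(f"{label:18s} {pct:6.2f}% -> {allowed_downtime_minutes(pct):5.1f} min/month")
    # The July 20, 2008 outage alone lasted 5 hours.
    print("5-hour outage:", 5 * 60, "min")
```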

A Comparison of Approaches to Large-Scale Data Analysis*

Andrew Pavlo, Erik Paulson, Alexander Rasin, Daniel J. Abadi, David J. DeWitt, Samuel Madden, Michael Stonebraker

To appear in SIGMOD ’09

*Basic ideas from “MapReduce: A major step backwards”, D. DeWitt and M. Stonebraker

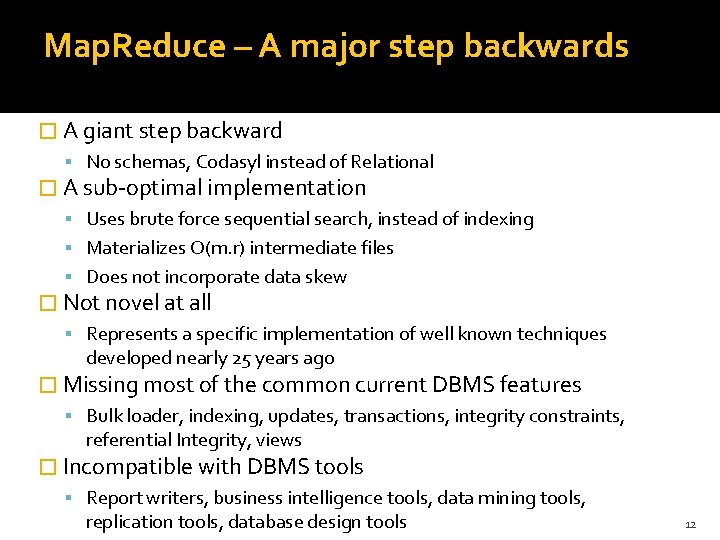

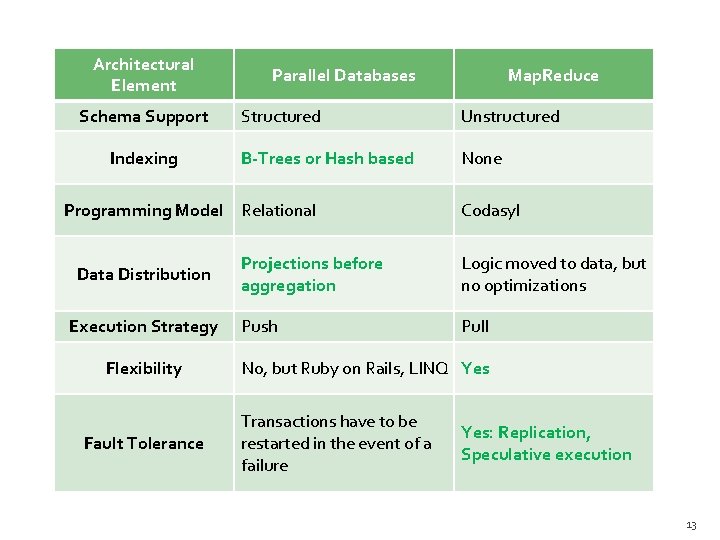

MapReduce – A major step backwards

- A giant step backward
  - No schemas; Codasyl instead of relational
- A sub-optimal implementation
  - Uses brute-force sequential search instead of indexing
  - Materializes O(m·r) intermediate files (m map tasks × r reduce tasks)
  - Does not incorporate data skew
- Not novel at all
  - Represents a specific implementation of well-known techniques developed nearly 25 years ago
- Missing most of the common current DBMS features
  - Bulk loader, indexing, updates, transactions, integrity constraints, referential integrity, views
- Incompatible with DBMS tools
  - Report writers, business intelligence tools, data mining tools, replication tools, database design tools
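The O(m·r) figure follows from how MapReduce moves data: each of the m map tasks partitions its output into r pieces, one per reducer, and each reducer then pulls its piece from every map task. The toy, in-memory word count below makes that explicit. It is a sketch of the model, not of any real framework; actual systems differ in how they lay partitions out on disk, and CRC32 is an assumed stand-in for the framework's partitioning hash.

```python
import zlib
from collections import defaultdict


def partition(key: str, num_reducers: int) -> int:
    """Deterministic hash partitioner (CRC32 is an illustrative choice)."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def map_phase(splits, map_fn, num_reducers):
    """One map task per split; each task materializes one partition per reducer,
    so m map tasks produce m * r intermediate partitions in total."""
    files = {}  # (map_task, reducer) -> list of (key, value) pairs
    for m, split in enumerate(splits):
        buckets = defaultdict(list)
        for record in split:
            for key, value in map_fn(record):
                buckets[partition(key, num_reducers)].append((key, value))
        for r in range(num_reducers):
            files[(m, r)] = buckets[r]  # written even when empty
    return files


def reduce_phase(files, num_maps, num_reducers, reduce_fn):
    """Each reducer pulls its partition from every map task, groups by key, reduces."""
    results = {}
    for r in range(num_reducers):
        grouped = defaultdict(list)
        for m in range(num_maps):
            for key, value in files[(m, r)]:
                grouped[key].append(value)
        for key, values in grouped.items():
            results[key] = reduce_fn(key, values)
    return results


def word_count_map(line):
    for word in line.split():
        yield word, 1


if __name__ == "__main__":
    splits = [["a b a", "c"], ["b c c"], ["a"]]  # m = 3 map tasks
    files = map_phase(splits, word_count_map, num_reducers=4)
    print(len(files))  # m * r = 12 intermediate partitions
    print(reduce_phase(files, 3, 4, lambda k, vs: sum(vs)))
```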

| Architectural Element | Parallel Databases | MapReduce |
|---|---|---|
| Schema Support | Structured | Unstructured |
| Indexing | B-Trees or hash based | None |
| Programming Model | Relational | Codasyl |
| Data Distribution | Projections before aggregation | Logic moved to data, but no optimizations |
| Execution Strategy | Push | Pull |
| Flexibility | No, but Ruby on Rails, LINQ | Yes |
| Fault Tolerance | Transactions have to be restarted in the event of a failure | Yes: replication, speculative execution |

MapReduce II*

- MapReduce didn't kill our dog, steal our car, or try and date our daughters.
- MapReduce is not a database system, so don't judge it as one
  - Both analyze and perform computations on huge datasets
- MapReduce has excellent scalability; the proof is Google's use
  - Does it scale linearly? No scientific evidence
- MapReduce is cheap and databases are expensive
- We are the old guard trying to defend our turf/legacy from the young turks
  - Propagation of ideas between sub-disciplines is very slow and sketchy
  - Very little information is passed from generation to generation

*http://www.databasecolumn.com/2008/01/mapreduce-continued.html

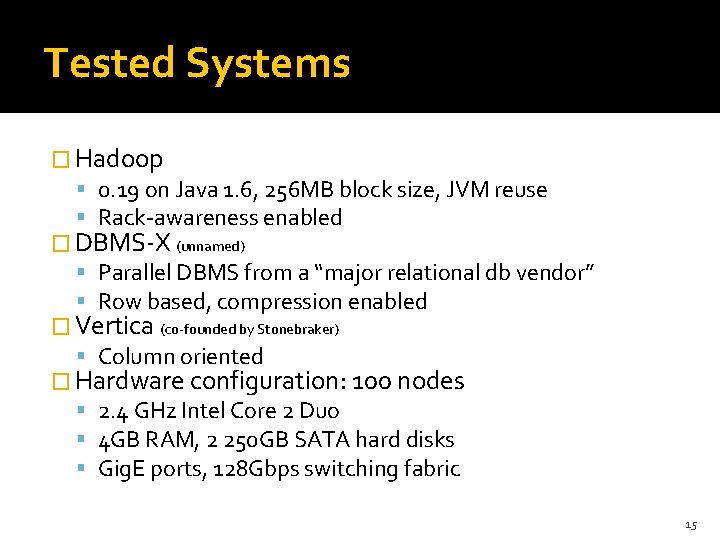

Tested Systems

- Hadoop 0.19 on Java 1.6, 256 MB block size, JVM reuse
  - Rack-awareness enabled
- DBMS-X (unnamed)
  - Parallel DBMS from a “major relational db vendor”
  - Row-based, compression enabled
- Vertica (co-founded by Stonebraker)
  - Column-oriented
- Hardware configuration: 100 nodes
  - 2.4 GHz Intel Core 2 Duo
  - 4 GB RAM, 2 × 250 GB SATA hard disks
  - GigE ports, 128 Gbps switching fabric

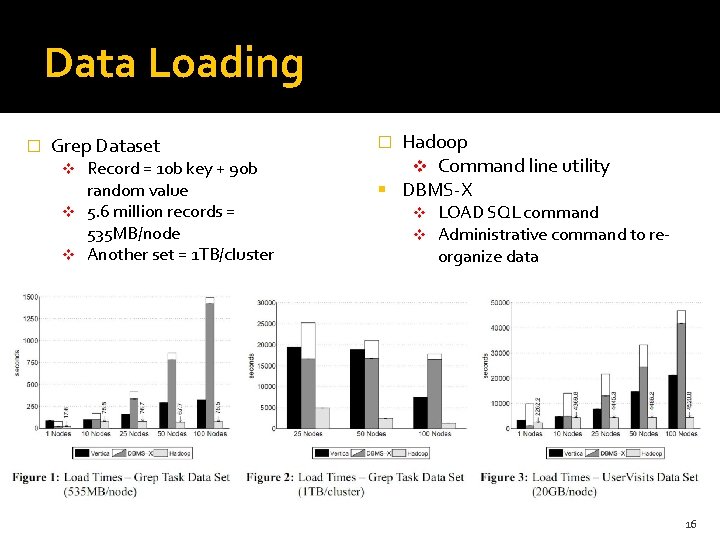

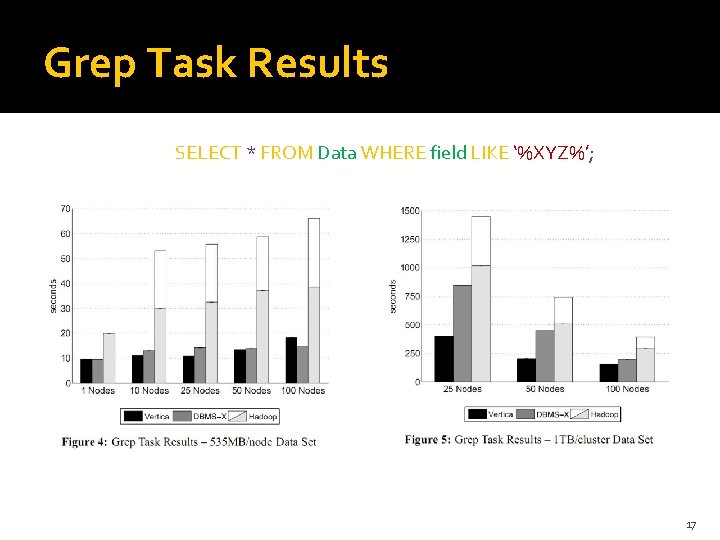

Data Loading

- Grep dataset
  - Record = 10-byte key + 90-byte random value
  - 5.6 million records = 535 MB/node
  - Another set = 1 TB/cluster
- Hadoop
  - Command-line utility
- DBMS-X
  - LOAD SQL command
  - Administrative command to reorganize data
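A sketch of the grep dataset's record format: a 10-byte key plus a 90-byte value gives 100-byte records, so 5.6 million records come to 560,000,000 bytes, about 534 MiB, which matches the ~535 MB per node above. The zero-padded numeric keys and the uppercase/digit alphabet are assumptions made for illustration, not the benchmark's exact generator.

```python
import random
import string

KEY_BYTES, VALUE_BYTES = 10, 90  # record layout from the benchmark description
ALPHABET = string.ascii_uppercase + string.digits  # assumption: 1-byte ASCII chars


def make_record(rng: random.Random, key_id: int) -> str:
    """One 100-byte record: zero-padded 10-digit key + 90 random characters."""
    key = f"{key_id:0{KEY_BYTES}d}"
    value = "".join(rng.choice(ALPHABET) for _ in range(VALUE_BYTES))
    return key + value


def dataset_size_bytes(num_records: int) -> int:
    return num_records * (KEY_BYTES + VALUE_BYTES)


if __name__ == "__main__":
    rng = random.Random(42)
    print(make_record(rng, 1))
    per_node = dataset_size_bytes(5_600_000)
    print(per_node, "bytes =", round(per_node / 2**20), "MiB per node")
```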

Grep Task Results

```sql
SELECT * FROM Data WHERE field LIKE '%XYZ%';
```

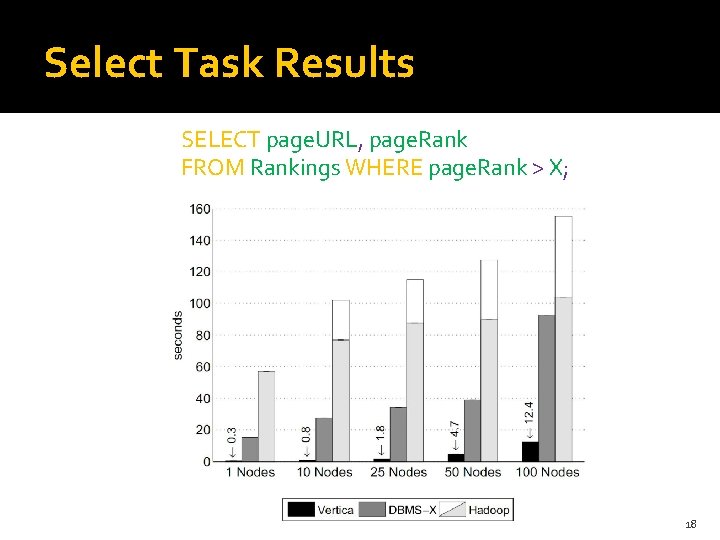
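The grep task is a pure scan, which is how a MapReduce job runs it: a map function checks every record and no reduce step is needed. Because the pattern starts with a wildcard, `LIKE '%XYZ%'` cannot use an ordinary B-tree index either, so the parallel DBMSs also scan here. The sketch assumes the record layout described for the benchmark (10-byte key, then a 90-byte value) and searches only the value field; the sample records are made up.

```python
from typing import Iterable, Iterator

KEY_BYTES = 10    # records start with a 10-byte key
PATTERN = "XYZ"   # the search string from the SQL query


def grep_map(records: Iterable[str], pattern: str = PATTERN) -> Iterator[str]:
    """Map-only grep: examine every record and emit those whose value field
    contains `pattern` (the equivalent of WHERE field LIKE '%XYZ%')."""
    for record in records:
        value = record[KEY_BYTES:]  # skip the key
        if pattern in value:
            yield record


if __name__ == "__main__":
    records = [
        "0000000001" + "A" * 40 + "XYZ" + "B" * 47,  # 10 + 90 bytes, matches
        "0000000002" + "C" * 90,                      # no match
    ]
    for hit in grep_map(records):
        print(hit[:KEY_BYTES])
```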

Select Task Results

```sql
SELECT pageURL, pageRank FROM Rankings WHERE pageRank > X;
```

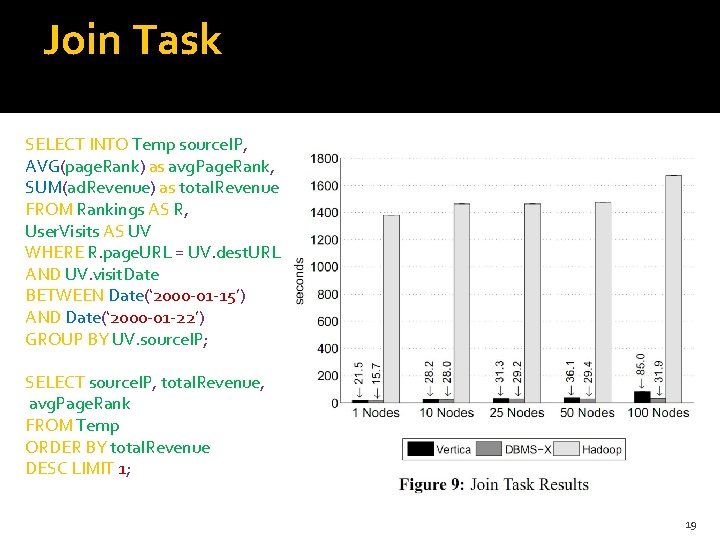
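The select task is where an index pays off. The sketch contrasts a brute-force scan with an ordered index on `pageRank`; a sorted list searched with `bisect` stands in for the B-tree a parallel DBMS would maintain, so both the data structure and the sample rows are illustrative assumptions. The index locates the first qualifying row in O(log n) and returns the rest without examining any non-matching rows.

```python
import bisect
from typing import List, Tuple

Row = Tuple[str, int]  # (pageURL, pageRank)


def select_scan(rankings: List[Row], x: int) -> List[Row]:
    """Brute-force sequential scan: test every row against pageRank > x."""
    return [row for row in rankings if row[1] > x]


class RankIndex:
    """Toy ordered index on pageRank (a sorted list standing in for a B-tree)."""

    def __init__(self, rankings: List[Row]) -> None:
        self.rows = sorted(rankings, key=lambda row: row[1])
        self.ranks = [row[1] for row in self.rows]

    def select_greater_than(self, x: int) -> List[Row]:
        start = bisect.bisect_right(self.ranks, x)  # first position with rank > x
        return self.rows[start:]


if __name__ == "__main__":
    rankings = [("a.com", 5), ("b.com", 20), ("c.com", 11), ("d.com", 10)]
    print(select_scan(rankings, 10))
    print(RankIndex(rankings).select_greater_than(10))
```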

Join Task

```sql
SELECT INTO Temp sourceIP,
       AVG(pageRank) AS avgPageRank,
       SUM(adRevenue) AS totalRevenue
FROM Rankings AS R, UserVisits AS UV
WHERE R.pageURL = UV.destURL
  AND UV.visitDate BETWEEN Date('2000-01-15') AND Date('2000-01-22')
GROUP BY UV.sourceIP;

SELECT sourceIP, totalRevenue, avgPageRank
FROM Temp
ORDER BY totalRevenue DESC
LIMIT 1;
```
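For readers more comfortable with code than SQL, here is an in-memory Python equivalent of the two join-task statements: filter visits to the date range, hash-join them to `Rankings` on the URL, aggregate per `sourceIP`, and keep the top revenue. It assumes `pageURL` is unique in `Rankings` and that all data fits in memory, so it illustrates the query's semantics, not how Hadoop or the DBMSs execute it. The sample rows are made up.

```python
from collections import defaultdict
from datetime import date


def join_task(rankings, user_visits, start=date(2000, 1, 15), end=date(2000, 1, 22)):
    """rankings:    iterable of (pageURL, pageRank), pageURL assumed unique
    user_visits: iterable of (sourceIP, destURL, visitDate, adRevenue)
    Returns (sourceIP, totalRevenue, avgPageRank) for the top-revenue IP, or None."""
    rank_by_url = dict(rankings)  # build side of a hash join on pageURL
    totals = defaultdict(float)
    rank_sums = defaultdict(float)
    counts = defaultdict(int)
    for source_ip, dest_url, visit_date, ad_revenue in user_visits:
        if not (start <= visit_date <= end):  # BETWEEN is inclusive
            continue
        if dest_url not in rank_by_url:       # inner join drops unmatched visits
            continue
        totals[source_ip] += ad_revenue
        rank_sums[source_ip] += rank_by_url[dest_url]
        counts[source_ip] += 1
    if not totals:
        return None
    best_ip = max(totals, key=totals.get)     # ORDER BY totalRevenue DESC LIMIT 1
    return best_ip, totals[best_ip], rank_sums[best_ip] / counts[best_ip]


if __name__ == "__main__":
    rankings = [("u1", 10), ("u2", 30)]
    visits = [
        ("1.1.1.1", "u1", date(2000, 1, 16), 5.0),
        ("1.1.1.1", "u2", date(2000, 1, 20), 1.0),
        ("2.2.2.2", "u2", date(2000, 1, 18), 4.0),
        ("3.3.3.3", "u1", date(2000, 2, 1), 100.0),  # outside the date range
    ]
    print(join_task(rankings, visits))
```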

Concluding Remarks

- DBMS-X was 3.2 times and Vertica 2.3 times faster than Hadoop
- Parallel DBMSs win because of
  - B-tree indices to speed the execution of selection operations
  - novel storage mechanisms (e.g., column orientation)
  - aggressive compression techniques with the ability to operate directly on compressed data
  - sophisticated parallel algorithms for querying large amounts of relational data
- Ease of installation and use
- Fault tolerance?
- Loading data?

The Cost of a Cloud: Research Problems in Data Center Networks

Albert Greenberg, James Hamilton, David A. Maltz, Parveen Patel

MSR Redmond

Presented by: Saurabh Nangia

Overview

- Cost of cloud service
- Improving low utilization
  - Network agility
  - Incentives for resource consumption
  - Geo-distributed network of DCs

Cost of a Cloud?

- Where does the cost go in today’s cloud service data centers?

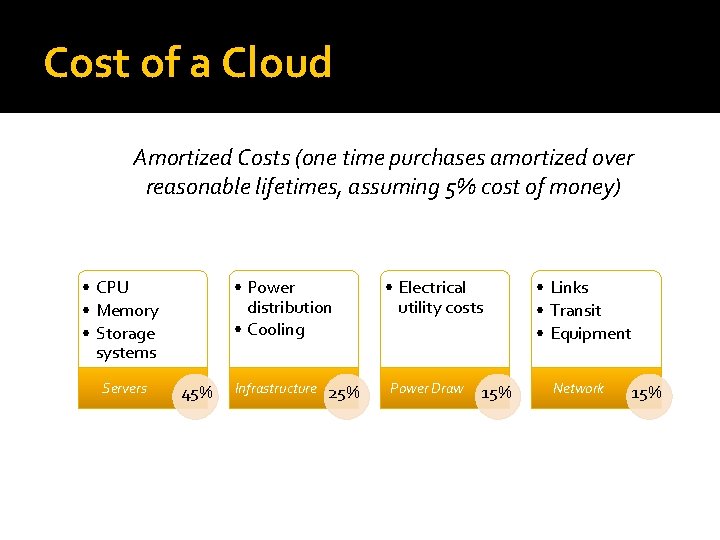

Cost of a Cloud

Amortized costs (one-time purchases amortized over reasonable lifetimes, assuming 5% cost of money):

| Amortized Cost | Component | Sub-Components |
|---|---|---|
| 45% | Servers | CPU, memory, storage systems |
| 25% | Infrastructure | Power distribution, cooling |
| 15% | Power draw | Electrical utility costs |
| 15% | Network | Links, transit, equipment |

Are Clouds any different?

- Can existing solutions for the enterprise data center work for cloud service data centers?

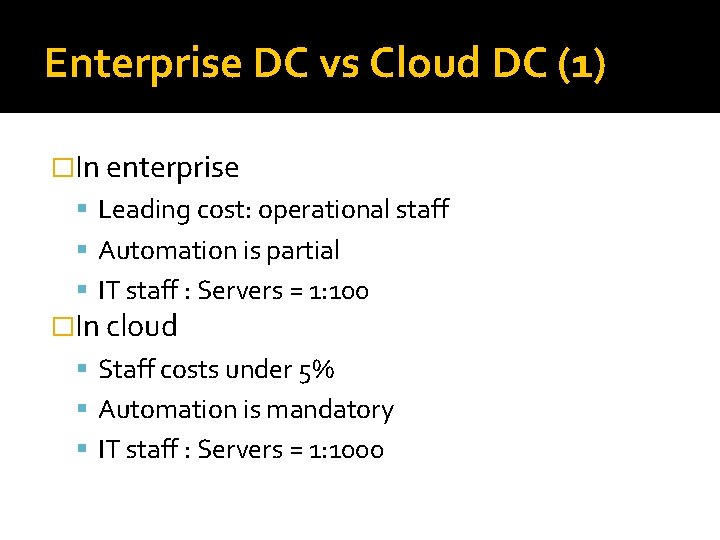

Enterprise DC vs Cloud DC (1)

- In the enterprise
  - Leading cost: operational staff
  - Automation is partial
  - IT staff : servers = 1:100
- In the cloud
  - Staff costs under 5%
  - Automation is mandatory
  - IT staff : servers = 1:1000

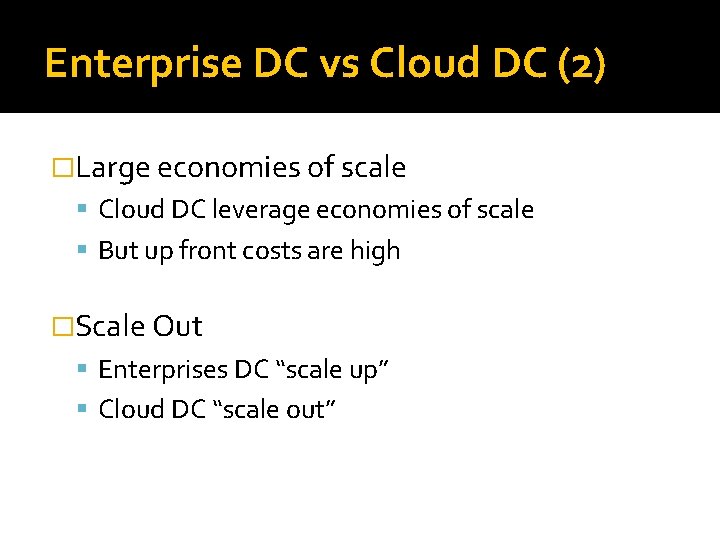

Enterprise DC vs Cloud DC (2)

- Large economies of scale
  - Cloud DCs leverage economies of scale
  - But up-front costs are high
- Scale out
  - Enterprise DCs “scale up”
  - Cloud DCs “scale out”

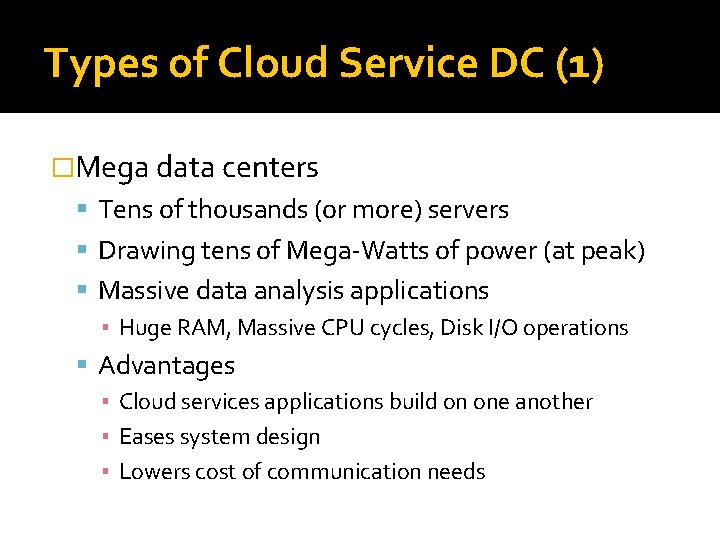

Types of Cloud Service DC (1)

- Mega data centers
  - Tens of thousands (or more) servers
  - Drawing tens of megawatts of power (at peak)
  - Massive data analysis applications
    - Huge RAM, massive CPU cycles, disk I/O operations
  - Advantages
    - Cloud service applications build on one another
    - Eases system design
    - Lowers cost of communication needs

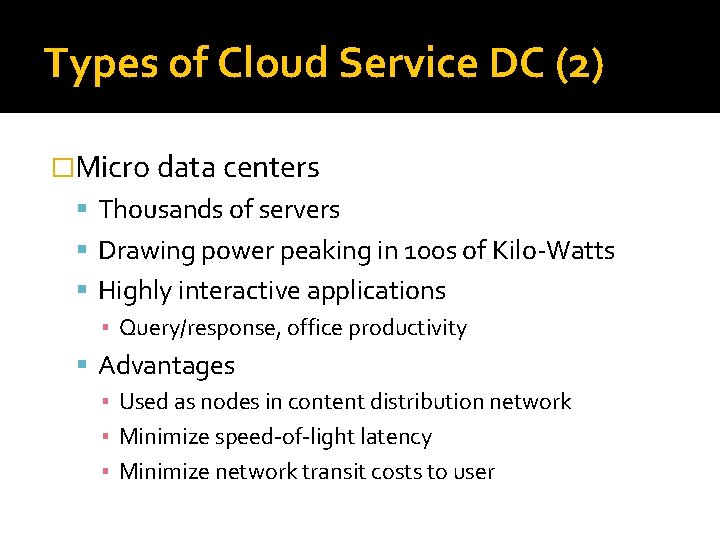

Types of Cloud Service DC (2)

- Micro data centers
  - Thousands of servers
  - Drawing power peaking in the 100s of kilowatts
  - Highly interactive applications
    - Query/response, office productivity
  - Advantages
    - Used as nodes in a content distribution network
    - Minimize speed-of-light latency
    - Minimize network transit costs to users

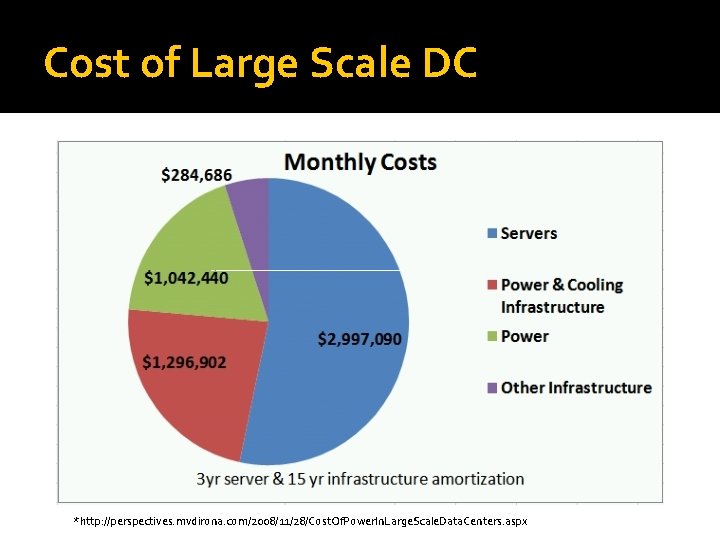

Cost Breakdown

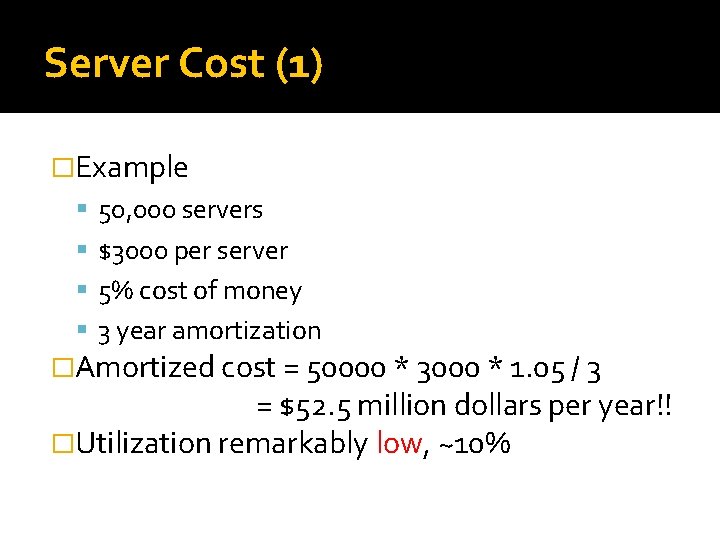

Server Cost (1)

- Example
  - 50,000 servers
  - $3,000 per server
  - 5% cost of money
  - 3-year amortization
- Amortized cost = 50,000 × $3,000 × 1.05 / 3 = $52.5 million per year!!
- Utilization remarkably low, ~10%
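The server-cost arithmetic can be reproduced in a few lines. The helper names are illustrative; the first formula mirrors the slide, which applies the 5% cost of money once rather than compounding it over the three years. The second helper spreads the same annual cost over only the servers doing useful work: at ~10% utilization that is about $10,500 per useful server-year, against $1,050 per installed server-year.

```python
def amortized_cost_per_year(num_servers: int, price_per_server: float,
                            cost_of_money: float, years: int) -> float:
    """Slide's model: purchase price plus one application of the cost of money,
    spread evenly over the amortization period."""
    return num_servers * price_per_server * (1 + cost_of_money) / years


def cost_per_useful_server_year(annual_cost: float, num_servers: int,
                                utilization: float) -> float:
    """Annual cost divided by the server-equivalents actually doing work."""
    return annual_cost / (num_servers * utilization)


if __name__ == "__main__":
    annual = amortized_cost_per_year(50_000, 3_000, 0.05, 3)
    print(f"${annual / 1e6:.1f}M per year")
    print(f"${annual / 50_000:,.0f} per installed server-year")
    print(f"${cost_per_useful_server_year(annual, 50_000, 0.10):,.0f} per useful server-year")
```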

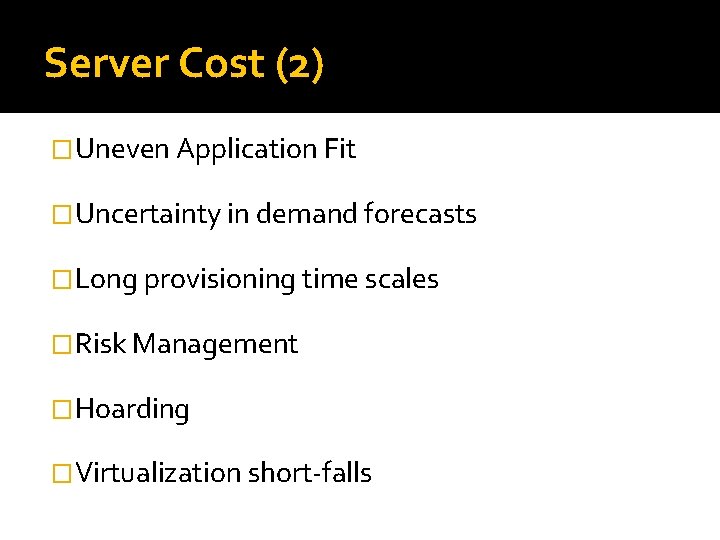

Server Cost (2)

Causes of low utilization:

- Uneven application fit
- Uncertainty in demand forecasts
- Long provisioning time scales
- Risk management
- Hoarding
- Virtualization short-falls

Reducing Server Cost

- Solution: agility to dynamically grow and shrink resources to meet demand, and to draw those resources from the best location
- Barrier: the network
  - Increases fragmentation of resources
  - Therefore, low server utilization

Infrastructure Cost

- Infrastructure is the overhead of a cloud DC
- Facilities dedicated to
  - Consistent power delivery
  - Evacuating heat
- Large-scale generators, transformers, UPS
- Amortized cost: $18.4 million per year!!
  - Infrastructure cost: $200M
  - 5% cost of money
  - 15-year amortization

Reducing Infrastructure Cost

- Reason for the high cost: the requirement to deliver consistent power
- Relaxing that requirement implies scaling out
- Deploy larger numbers of smaller data centers
  - Resilience at the data center level
  - Layers of redundancy within each data center can be stripped out (no UPS & generators)
- Geo-diverse deployment of micro data centers
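The case for stripping redundancy out of individual sites rests on simple probability: if sites fail independently, several cheaper, less reliable data centers can together be more available than one. The sketch computes the chance that at least k of n sites are up. Both the independence assumption (real failures can be correlated, for example by a regional power event) and the 99% per-site availability in the example are assumptions, not figures from the paper.

```python
from math import comb


def prob_at_least_k_up(n: int, k: int, site_availability: float) -> float:
    """P(at least k of n sites are up), assuming sites fail independently."""
    a = site_availability
    return sum(comb(n, i) * a**i * (1 - a) ** (n - i) for i in range(k, n + 1))


if __name__ == "__main__":
    # Hypothetical: each stripped-down site (no UPS or generators) is up 99% of the time.
    for n, k in [(1, 1), (2, 1), (3, 2), (5, 3)]:
        print(f"at least {k} of {n} sites up: {prob_at_least_k_up(n, k, 0.99):.6f}")
```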

Power

- Power Usage Effectiveness (PUE) = (Total Facility Power) / (IT Equipment Power)
- Typically PUE ~ 1.7
  - Inefficient facilities: PUE of 2.0 to 3.0
  - Leading facilities: PUE of 1.2
- Amortized cost = $9.3 million per year!!
  - PUE: 1.7
  - $0.07 per kWh
  - 50,000 servers, each drawing 180 W on average
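A sketch of the power arithmetic using the slide's parameters (50,000 servers averaging 180 W, PUE 1.7, $0.07/kWh), assuming the load runs all 8,760 hours of a year. It comes to roughly $9.4 million, in line with the slide's $9.3 million. The same function shows how the facility's PUE moves the bill: about $6.6 million at a leading facility's 1.2 and about $11.0 million at 2.0.

```python
HOURS_PER_YEAR = 24 * 365  # assumes the load runs around the clock


def annual_power_cost(num_servers: int, avg_watts: float, pue: float,
                      dollars_per_kwh: float) -> float:
    """Yearly utility bill: average IT load scaled up by PUE to total facility power."""
    it_kw = num_servers * avg_watts / 1000.0
    facility_kw = it_kw * pue  # PUE = total facility power / IT equipment power
    return facility_kw * HOURS_PER_YEAR * dollars_per_kwh


if __name__ == "__main__":
    for pue in (1.2, 1.7, 2.0):
        cost = annual_power_cost(50_000, 180, pue, 0.07)
        print(f"PUE {pue}: ${cost / 1e6:.1f}M per year")
```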

Reducing Power Costs

- Decreasing power costs also decreases the need for infrastructure spending
- Goal: energy proportionality
  - A server running at N% load consumes N% power
- Hardware innovation
  - High-efficiency power supplies
  - Voltage regulation modules
- Reduce the amount of cooling for the data center
  - Equipment failure rates increase with temperature
  - Make the network more mesh-like & resilient
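To see why energy proportionality matters at the ~10% utilization quoted earlier in the deck, the sketch compares the energy-proportional ideal with a common linear power model (a fixed idle draw plus a load-dependent part). The 100 W idle and 200 W peak figures are hypothetical, chosen only for illustration: at 10% load the linear server draws 110 W where a proportional one would draw 20 W.

```python
def linear_power(util: float, idle_watts: float, peak_watts: float) -> float:
    """Non-proportional model: fixed idle draw plus a part that grows with load."""
    return idle_watts + (peak_watts - idle_watts) * util


def proportional_power(util: float, peak_watts: float) -> float:
    """Energy-proportional ideal: N% load draws N% of peak power."""
    return peak_watts * util


if __name__ == "__main__":
    IDLE_W, PEAK_W = 100, 200  # hypothetical server power figures
    for util in (0.1, 0.5, 1.0):
        print(f"{util:.0%} load: linear {linear_power(util, IDLE_W, PEAK_W):.0f} W, "
              f"proportional {proportional_power(util, PEAK_W):.0f} W")
```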

Network

- Capital cost of networking gear
  - Switches, routers and load balancers
- Wide area networking
  - Peering: traffic handed off to ISPs for end users
  - Inter-data-center links between geo-distributed DCs
  - Regional facilities (backhaul, metro-area connectivity, co-location space) to reach interconnection sites
- Back-of-the-envelope calculations are difficult

Reducing Network Costs

- Sensitive to site selection & industry dynamics
- Solution:
  - Clever design of peering & transit strategies
  - Optimal placement of micro & mega DCs
  - Better design of services (partitioning state)
  - Better data partitioning & replication

Perspective

- On is better than off
  - Servers should be engaged in revenue production
  - Challenge: agility
- Build in resilience at the systems level
  - Strip out layers of redundancy inside each DC, and instead use other DCs to mask a DC failure
  - Challenge: systems software & network research

Cost of Large-Scale DCs*

*http://perspectives.mvdirona.com/2008/11/28/CostOfPowerInLargeScaleDataCenters.aspx

Solutions!

Improving DC efficiency

- Increasing network agility
- Appropriate incentives to shape resource consumption
- Joint optimization of network & DC resources
- New mechanisms for geo-distributing state

Agility

- Any server can be dynamically assigned to any service anywhere in the DC
- Conventional DCs
  - Fragment network & server capacity
  - Limit dynamic growth and shrinkage of server pools

Networking in Current DC

- A DC network carries two types of traffic
  - Between external end systems & internal servers
  - Between internal servers
- Load balancer
- Virtual IP address (VIP)
- Direct IP address (DIP)
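A minimal sketch of how a load balancer can map a VIP to its pool of DIPs. The hash-on-5-tuple policy is an assumption for illustration (real load balancers offer several policies); it keeps every packet of a connection on the same DIP. All addresses are hypothetical, drawn from documentation and private ranges.

```python
import zlib
from typing import Dict, List, Tuple

# (src_ip, src_port, dst_ip, dst_port, protocol); dst_ip is the VIP the client used
Flow = Tuple[str, int, str, int, str]


class LoadBalancer:
    """Toy VIP -> DIP load balancer that pins each flow to one DIP by hashing
    its 5-tuple, so every packet of a connection reaches the same server."""

    def __init__(self) -> None:
        self.pools: Dict[str, List[str]] = {}  # VIP -> DIPs serving it

    def add_pool(self, vip: str, dips: List[str]) -> None:
        self.pools[vip] = list(dips)

    def pick_dip(self, flow: Flow) -> str:
        dips = self.pools[flow[2]]  # look up the pool for the flow's VIP
        key = "|".join(str(field) for field in flow).encode("utf-8")
        return dips[zlib.crc32(key) % len(dips)]


if __name__ == "__main__":
    lb = LoadBalancer()
    lb.add_pool("192.0.2.10", ["10.0.1.5", "10.0.2.7", "10.0.3.9"])
    print(lb.pick_dip(("198.51.100.7", 51515, "192.0.2.10", 80, "tcp")))
```

With plain modulo hashing, adding or removing a DIP remaps most existing flows; consistent hashing is a common remedy when pools change often.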

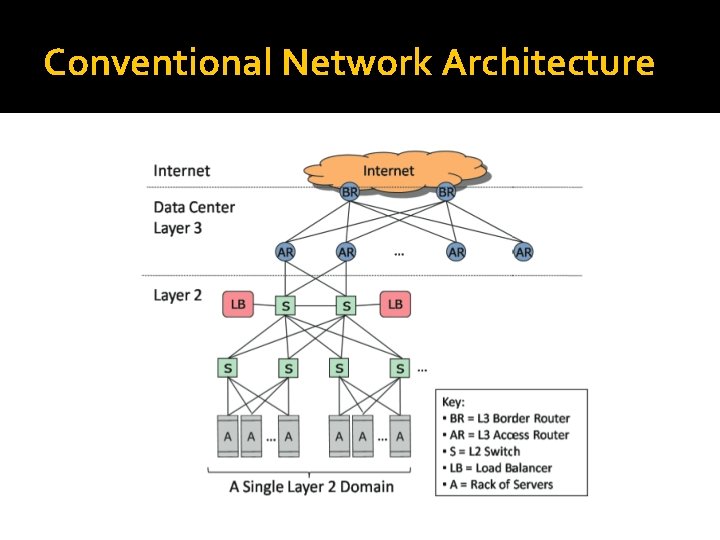

Conventional Network Architecture

Problems (1)

- Static network assignment
  - Individual applications mapped to specific physical switches & routers
  - Advantage: performance & security isolation
  - Disadvantage: works against agility
    - Policy-overloaded (traffic, security, performance)
    - VLAN spanning concentrates traffic on links high in the tree

Problems (2)

- Load balancing techniques
  - Destination NAT
    - All DIPs in a VIP's pool must be in the same layer 2 domain
    - Under-utilization & fragmentation
  - Source NAT
    - Servers can be spread across layer 2 domains
    - But the server never sees the client IP
    - The client IP is required for data mining & response customization
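The difference between the two NAT styles is which header fields the load balancer rewrites. The hypothetical `Packet` type below keeps only source and destination IPs: destination NAT changes only the destination, so the server still sees the client, while source NAT also replaces the source with the load balancer's own address, which is exactly what hides the client IP from the server. Addresses are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_ip: str


def destination_nat(pkt: Packet, dip: str) -> Packet:
    """Rewrite only VIP -> DIP. The server sees the real client address, but its
    replies must be routed back through the load balancer to be un-NATed, which
    in practice keeps the DIPs in the load balancer's layer 2 domain."""
    return replace(pkt, dst_ip=dip)


def source_nat(pkt: Packet, dip: str, lb_ip: str) -> Packet:
    """Rewrite both addresses. Replies return to the load balancer on their own,
    so DIPs can sit anywhere, but the server now sees lb_ip instead of the client."""
    return replace(pkt, src_ip=lb_ip, dst_ip=dip)


if __name__ == "__main__":
    client_pkt = Packet(src_ip="198.51.100.7", dst_ip="192.0.2.10")
    print(destination_nat(client_pkt, "10.0.1.5"))
    print(source_nat(client_pkt, "10.0.1.5", "10.0.0.1"))
```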

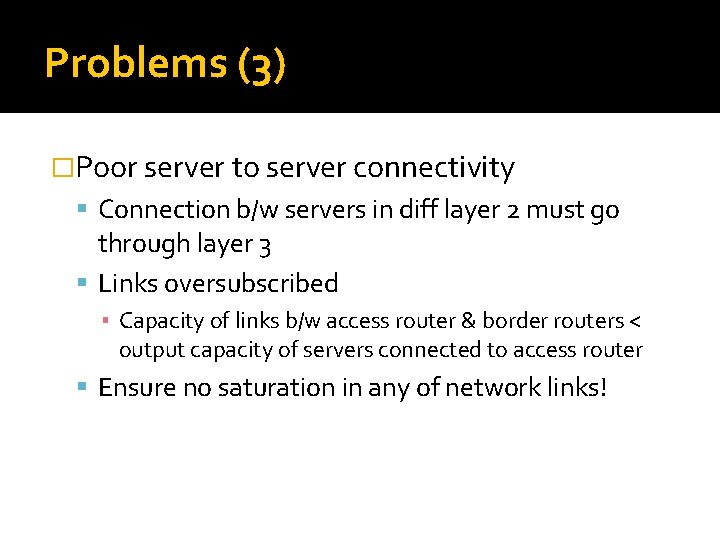

Problems (3)

- Poor server-to-server connectivity
  - Connections between servers in different layer 2 domains must go through layer 3
  - Links are oversubscribed
    - Capacity of the links between access routers & border routers < output capacity of the servers connected to the access router
  - Must ensure no saturation in any of the network links!
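Oversubscription can be quantified as the ratio between what the servers below an access router can send and what its uplinks can carry. The figures used below (2,000 servers with 1 Gbps ports behind two 10 Gbps uplinks) are hypothetical; they give a 100:1 ratio, or about 10 Mbps per server when all of them send across the uplinks at once.

```python
def oversubscription_ratio(num_servers: int, server_gbps: float,
                           num_uplinks: int, uplink_gbps: float) -> float:
    """Servers' aggregate output capacity divided by the access router's uplink capacity."""
    return (num_servers * server_gbps) / (num_uplinks * uplink_gbps)


def worst_case_share_mbps(num_servers: int, num_uplinks: int, uplink_gbps: float) -> float:
    """Uplink bandwidth per server (Mbps) if every server sends through the uplinks at once."""
    return num_uplinks * uplink_gbps * 1000 / num_servers


if __name__ == "__main__":
    # Hypothetical access router: 2,000 servers at 1 Gbps, two 10 Gbps uplinks.
    print(f"{oversubscription_ratio(2_000, 1, 2, 10):.0f}:1 oversubscription")
    print(f"{worst_case_share_mbps(2_000, 2, 10):.0f} Mbps per server at worst")
```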

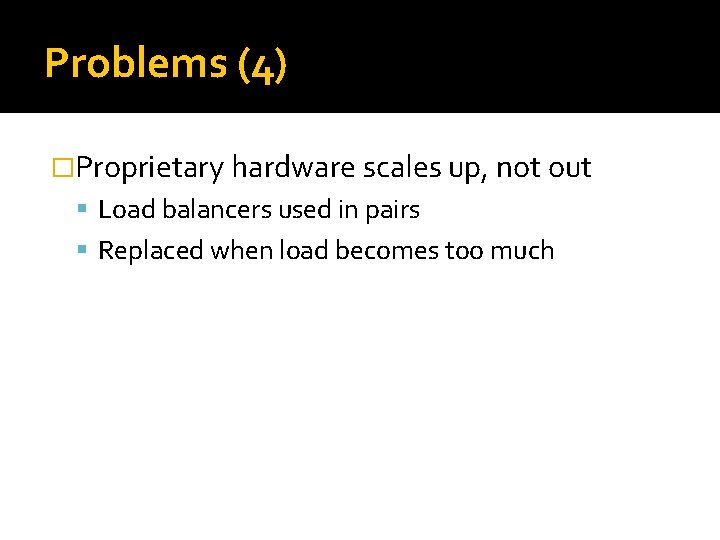

Problems (4)

- Proprietary hardware scales up, not out
  - Load balancers used in pairs
  - Replaced when the load becomes too much

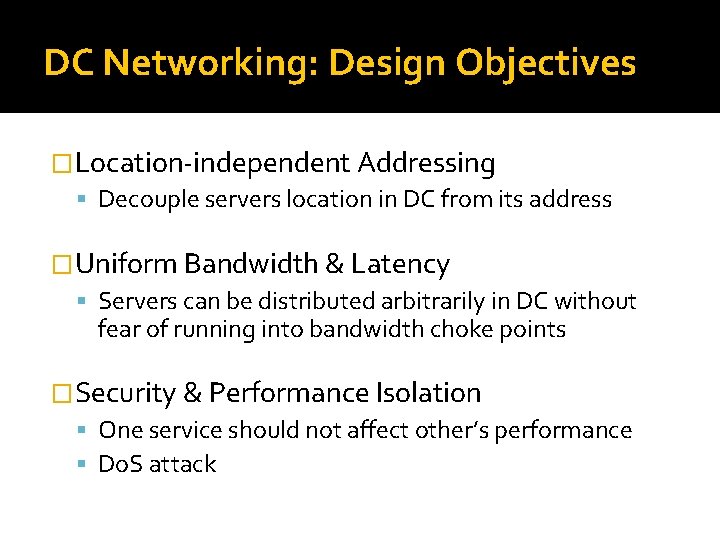

DC Networking: Design Objectives

- Location-independent addressing
  - Decouple a server's location in the DC from its address
- Uniform bandwidth & latency
  - Servers can be distributed arbitrarily in the DC without fear of running into bandwidth choke points
- Security & performance isolation
  - One service should not affect another's performance
  - DoS attacks
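Location-independent addressing is usually achieved with a level of indirection: a server keeps a stable address, and a directory maps that address to wherever the server currently sits. The class below is a hypothetical, minimal in-memory version of such a directory (the names and switch labels are invented), meant only to show that reassigning a server changes the mapping, not the address clients use.

```python
from typing import Dict


class Directory:
    """Hypothetical directory: stable server address -> current physical location."""

    def __init__(self) -> None:
        self._location: Dict[str, str] = {}

    def register(self, address: str, location: str) -> None:
        self._location[address] = location

    def move(self, address: str, new_location: str) -> None:
        # Reassigning a server only updates the mapping; its address is unchanged.
        if address not in self._location:
            raise KeyError(address)
        self._location[address] = new_location

    def resolve(self, address: str) -> str:
        """Where packets for `address` should currently be delivered."""
        return self._location[address]


if __name__ == "__main__":
    d = Directory()
    d.register("10.0.0.5", "switch-3")
    d.move("10.0.0.5", "switch-17")  # server reassigned elsewhere in the DC
    print(d.resolve("10.0.0.5"))
```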

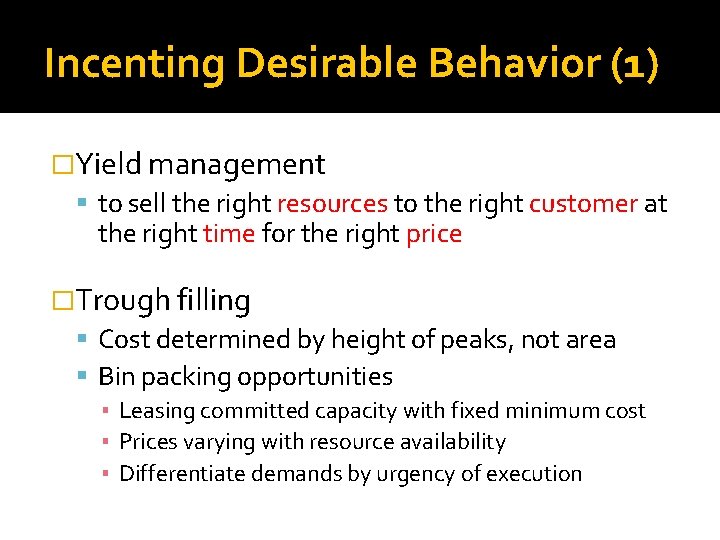

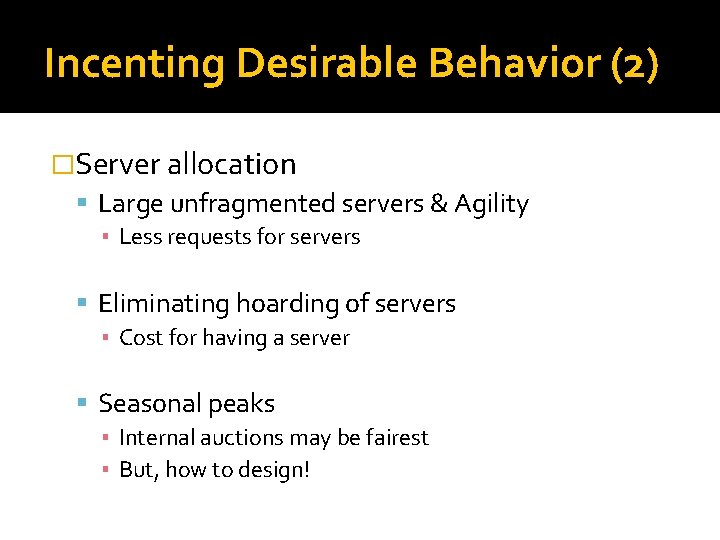

Incenting Desirable Behavior (1)

- Yield management: sell the right resources to the right customer at the right time for the right price
- Trough filling
  - Cost is determined by the height of the peaks, not the area under the curve
  - Bin-packing opportunities
    - Leasing committed capacity with a fixed minimum cost
    - Prices varying with resource availability
    - Differentiating demands by urgency of execution
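Because cost is driven by the peak, work that can wait (batch jobs, for example) is best placed in the troughs. The greedy sketch below fills the least-loaded hours first, never letting any hour exceed the existing peak, and reports whatever deferrable work does not fit. The hourly load figures are hypothetical; with them, 120 units of deferrable work fit entirely under the existing peak of 100, raising average use of the provisioned capacity from about 62% to about 82%.

```python
from typing import List, Tuple


def fill_troughs(load: List[int], deferrable: int) -> Tuple[List[int], int]:
    """Greedy trough filling: add deferrable work to the least-loaded hours first,
    never pushing any hour above the existing peak (which drives cost).
    Returns the new hourly load and the amount of work that did not fit."""
    peak = max(load)
    new_load = list(load)
    remaining = deferrable
    for hour in sorted(range(len(load)), key=lambda h: load[h]):
        if remaining <= 0:
            break
        take = min(peak - new_load[hour], remaining)
        new_load[hour] += take
        remaining -= take
    return new_load, remaining


if __name__ == "__main__":
    hourly = [40, 30, 60, 100, 90, 50]  # hypothetical servers in use per hour
    filled, left_over = fill_troughs(hourly, 120)
    print(filled, left_over, max(filled))
    print(f"{sum(hourly) / (max(hourly) * len(hourly)):.0%} -> "
          f"{sum(filled) / (max(filled) * len(filled)):.0%} of peak capacity used")
```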

Incenting Desirable Behavior (2)

- Server allocation
  - Large unfragmented server pools & agility
    - Fewer requests for servers
  - Eliminating hoarding of servers
    - Charge a cost for holding a server
  - Seasonal peaks
    - Internal auctions may be fairest
    - But how should they be designed?

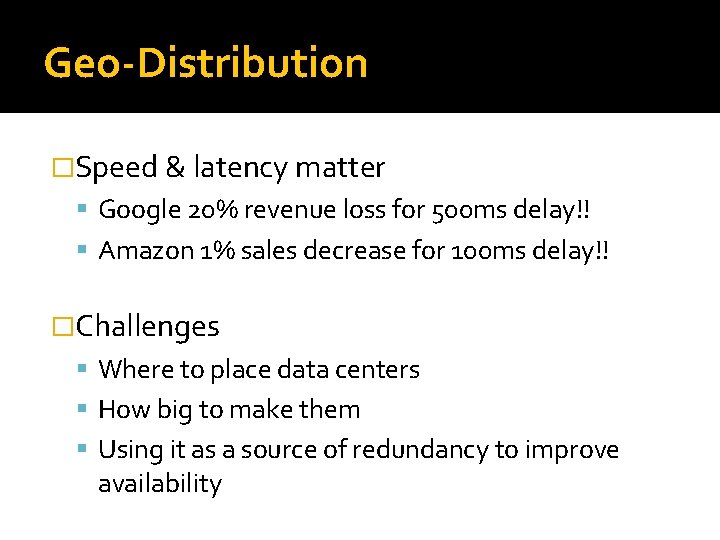

Geo-Distribution

- Speed & latency matter
  - Google: 20% revenue loss for a 500 ms delay!!
  - Amazon: 1% sales decrease for a 100 ms delay!!
- Challenges
  - Where to place data centers
  - How big to make them
  - Using geo-distribution as a source of redundancy to improve availability
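Part of why placement matters is physics: light in optical fiber covers roughly 200 km per millisecond, which sets a floor on round-trip time that no amount of server capacity can remove. The sketch computes that floor; the distances are hypothetical, and real paths add routing detours, queuing and processing time on top. A 10,000 km path alone costs at least 100 ms round trip, the delay Amazon associated with a 1% sales drop.

```python
FIBER_KM_PER_MS = 200.0  # light in optical fiber: roughly 200 km per millisecond


def min_rtt_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time over a fiber path of the given
    one-way length; routing detours, queuing and processing only add to it."""
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    for km in (100, 1_000, 4_000, 10_000):  # hypothetical user-to-DC distances
        print(f"{km:>6} km -> at least {min_rtt_ms(km):5.1f} ms round trip")
```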

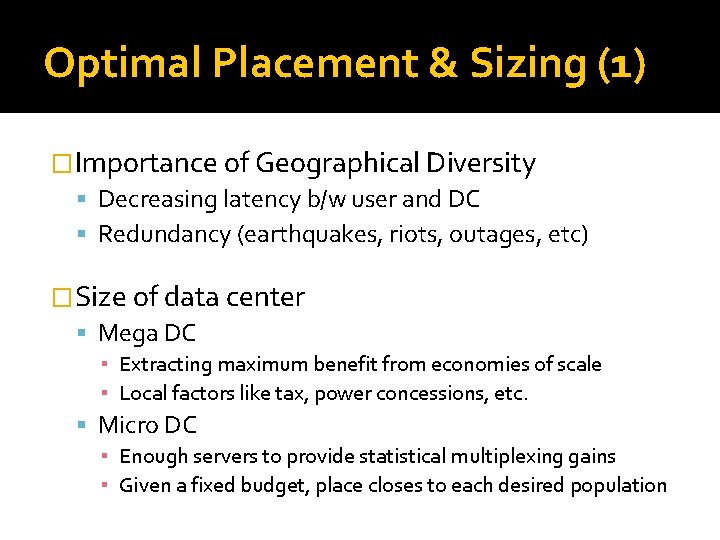

Optimal Placement & Sizing (1)

- Importance of geographical diversity
  - Decreasing latency between users and the DC
  - Redundancy (earthquakes, riots, outages, etc.)
- Size of data center
  - Mega DC
    - Extracting maximum benefit from economies of scale
    - Local factors like tax, power concessions, etc.
  - Micro DC
    - Enough servers to provide statistical multiplexing gains
    - Given a fixed budget, place as close as possible to each desired population
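Statistical multiplexing gains mean that services whose peaks occur at different times need less capacity when they share one pool than when each is provisioned for its own peak. The hourly demands below are hypothetical and deliberately simple; with them, separate provisioning needs 110 servers while a shared pool needs only 70.

```python
from typing import List


def capacity_separate(demands: List[List[int]]) -> int:
    """Servers needed if every service is provisioned for its own peak."""
    return sum(max(series) for series in demands)


def capacity_pooled(demands: List[List[int]]) -> int:
    """Servers needed if all services share one pool sized for the combined peak."""
    return max(sum(hour) for hour in zip(*demands))


if __name__ == "__main__":
    demands = [
        [10, 40, 20, 10],  # hypothetical service A, servers needed per hour
        [30, 10, 20, 40],  # hypothetical service B
        [20, 20, 30, 20],  # hypothetical service C
    ]
    print(capacity_separate(demands), capacity_pooled(demands))
```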

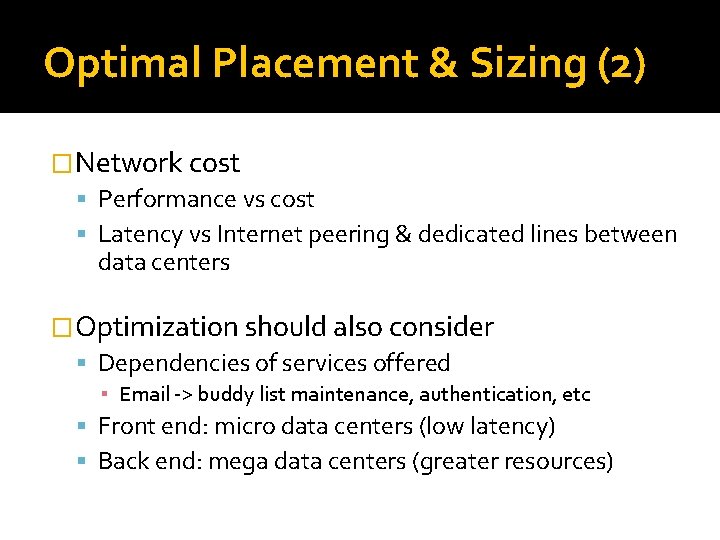

Optimal Placement & Sizing (2)

- Network cost
  - Performance vs. cost
  - Latency vs. Internet peering & dedicated lines between data centers
- Optimization should also consider
  - Dependencies of the services offered
    - Email → buddy list maintenance, authentication, etc.
  - Front end: micro data centers (low latency)
  - Back end: mega data centers (greater resources)

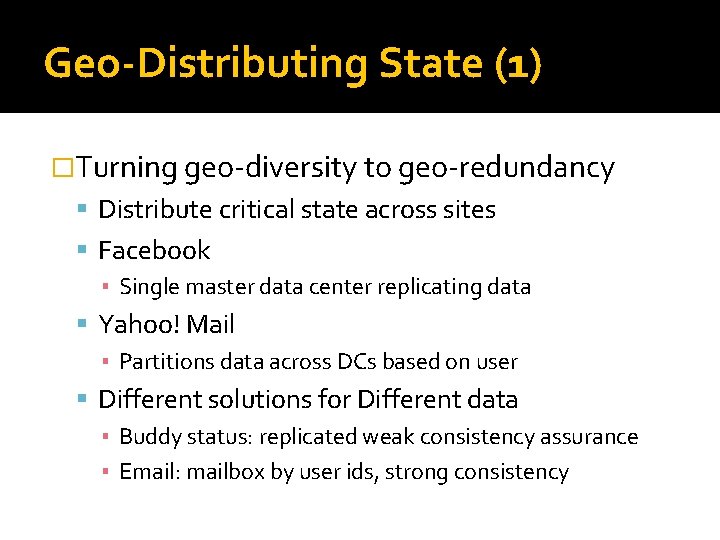

Geo-Distributing State (1)

- Turning geo-diversity into geo-redundancy
  - Distribute critical state across sites
  - Facebook
    - Single master data center replicating data
  - Yahoo! Mail
    - Partitions data across DCs based on user
  - Different solutions for different data
    - Buddy status: replicated, weak consistency assurance
    - Email: mailboxes partitioned by user ID, strong consistency
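One way to partition per-user state such as mailboxes across data centers is to derive each user's home site from a stable hash of the user ID, so every front end agrees where the authoritative copy lives. This is a sketch only: the site names are hypothetical, and the slide does not say how Yahoo! Mail actually assigns users (a production system might instead place users near their region and keep an explicit directory). SHA-256 is used rather than Python's built-in `hash()` because the latter is randomized per process for strings.

```python
import hashlib
from typing import Sequence

DATA_CENTERS = ("dc-west", "dc-east", "dc-europe")  # hypothetical site names


def home_dc(user_id: str, data_centers: Sequence[str] = DATA_CENTERS) -> str:
    """Map a user to the data center holding that user's mailbox.
    A stable hash means every front end computes the same answer."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return data_centers[int.from_bytes(digest[:8], "big") % len(data_centers)]


if __name__ == "__main__":
    for user in ("alice", "bob", "carol"):
        print(user, "->", home_dc(user))
```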

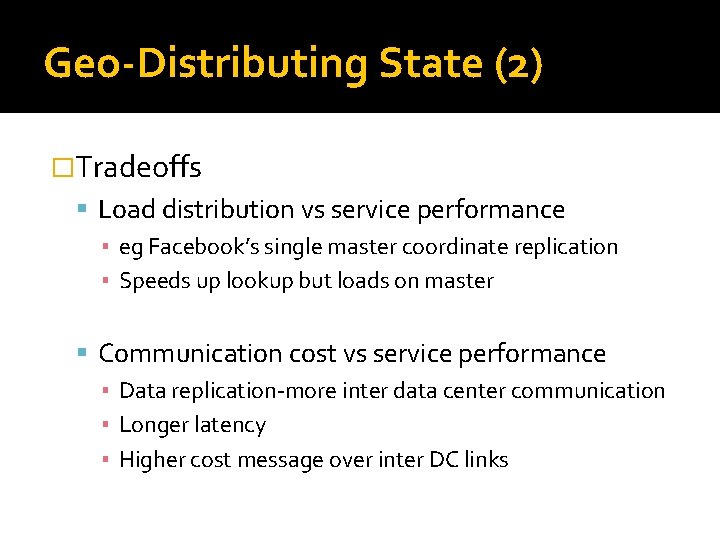

Geo-Distributing State (2)

- Trade-offs
  - Load distribution vs. service performance
    - e.g., Facebook's single master coordinates replication
    - Speeds up lookups but puts load on the master
  - Communication cost vs. service performance
    - Data replication means more inter-data-center communication
    - Longer latency
    - Higher-cost messages over inter-DC links

Summary

- Data center costs
  - Server, infrastructure, power, networking
- Improving efficiency
  - Network agility
  - Resource consumption shaping
  - Geo-diversifying DCs

Opinions

- Richard Stallman, GNU founder
  - Cloud computing is a trap
  - “…cloud computing was simply a trap aimed at forcing more people to buy into locked, proprietary systems that would cost them more and more over time.”
  - “It's stupidity. It's worse than stupidity: it's a marketing hype campaign.”

- Open Cloud Manifesto: a document put together by IBM, Cisco, AT&T, Sun Microsystems and over 50 others to promote interoperability
  - “Cloud providers must not use their market position to lock customers into their particular platforms and limit their choice of providers.”
  - Failed? Google, Amazon, Salesforce and Microsoft, four very big players in the area, are notably absent from the list of supporters

- Larry Ellison, Oracle founder
  - “fashion-driven” and “complete gibberish”
  - “What is it? … Is it 'Oh, I am going to access data on a server on the Internet.' That is cloud computing?”
  - “Then there is a definition: What is cloud computing? It is using a computer that is out there. That is one of the definitions: 'That is out there.' These people who are writing this crap are out there. They are insane. I mean it is the stupidest.”

- Sam Johnston, strategic consultant specializing in cloud computing
  - Oracle would naturally badmouth cloud computing, as it has the potential to disrupt their entire business
  - “Who needs a database server when you can buy cloud storage like electricity and let someone else worry about the details? Not me, that's for sure - unless I happen to be one of a dozen or so big providers who are probably using open source tech anyway.”

- Marc Benioff, head of salesforce.com
  - “Cloud computing isn't just candyfloss thinking – it's the future. If it isn't, I don't know what is. We're in it. You're going to see this model dominate our industry.”
  - Is data really safe in the cloud? “All complex systems have planned and unplanned downtime. The reality is we are able to provide higher levels of reliability and availability than most companies could provide on their own,” says Benioff

- John Chambers, Cisco Systems’ CEO
  - “a security nightmare”
  - “cloud computing was inevitable, but that it would shake up the way that networks are secured…”

- James Hamilton, VP, Amazon Web Services
  - “any company not fully understanding cloud computing economics and not having cloud computing as a tool to deploy where it makes sense is giving up a very valuable competitive edge”
  - “No matter how large the IT group, if I led the team, I would be experimenting with cloud computing and deploying where it makes sense”

To Cloud or Not to Cloud?

References

- “Clearing the air on cloud computing”, McKinsey & Company
- http://geekandpoke.typepad.com/
- “Clearing the Air - Adobe Air, Google Gears and Microsoft Mesh”, Farhad Javidi
- http://en.wikipedia.org/wiki/Hype_cycle
- “A Comparison of Approaches to Large-Scale Data Analysis”, Pavlo et al.
- “MapReduce: A major step backwards”, D. DeWitt and M. Stonebraker

- Slides: 69