Classroom Assessment Validity and Bias in Assessment

Classroom Assessment: Validity
• A characteristic of the inferences made from test scores; not a characteristic of the test itself.
• Requires a well-defined assessment domain (or construct) and, typically, a sampling strategy for selecting elements from that domain.

Validity: A Matter of Degree
• Validity often depends on the situation.
• For example, a history test…
  – Might have high validity for inferring students’ knowledge of events leading up to the American Revolution.
  – But might have less validity for inferring whether students can reason and apply their knowledge to current or future events.

Validity Is Related to the Learning Targets
A good question to ask yourself is: Do scores on the assessment allow me to make inferences that are directly related to performance on the learning targets I am trying to assess?
• Do the learning targets address only facts and verbal knowledge? Or
• Do the learning targets address higher-order outcomes such as reasoning and application?

Validity Requires Evidence
• Validity is concerned with the collection of evidence to support an argument that a particular score-based inference is the correct inference.
• While validity is best viewed as a unitary concept, there are several ways of collecting evidence to support arguments for validity.
  – Content evidence.
  – Criterion evidence.
  – Construct evidence.

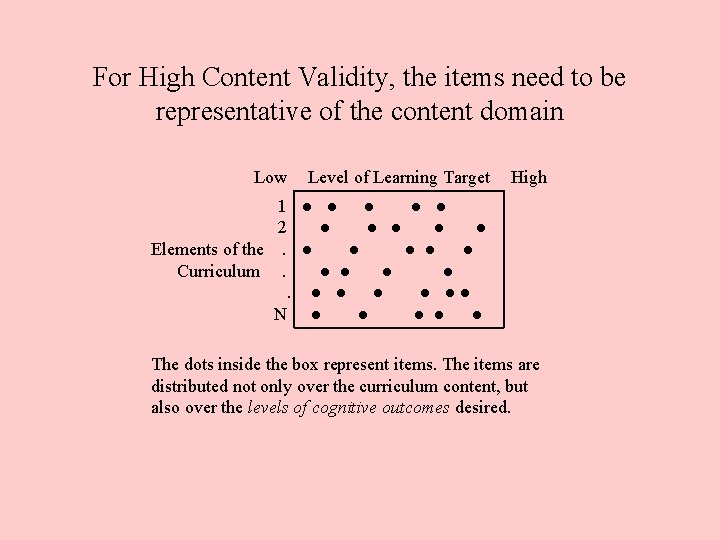

Content-related Evidence of Validity
• Important question: Are the items or tasks sampled truly representative of the assessment domain?
  – Is there sufficient sampling of the content?
  – Is there sufficient sampling of the type of learning desired?

More on Content-related Evidence of Validity
• An important source of evidence for classroom tests.
• Generally enhanced by the use of learning targets or assessment domain specifications.
  – Declarative knowledge specifications.
  – Procedural knowledge specifications.
• Again, representative sampling is key.

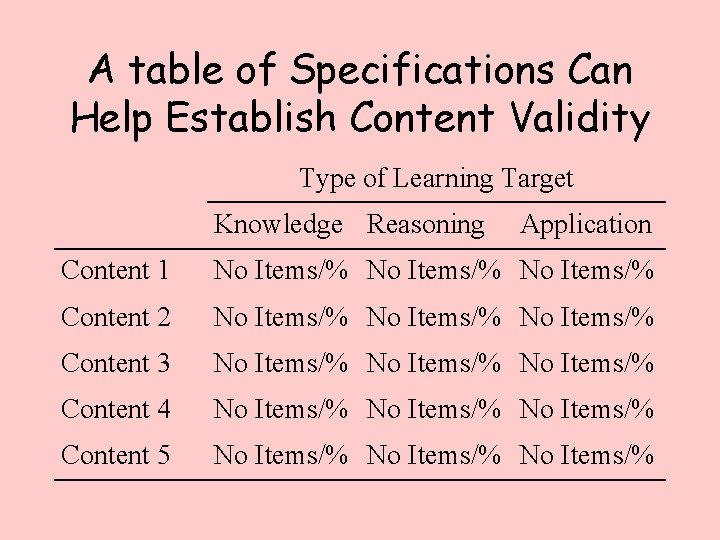

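A Table of Specifications Can Help Establish Content Validity

| Content | Knowledge | Reasoning | Application |
|---|---|---|---|
| Content 1 | No. Items/% | No. Items/% | No. Items/% |
| Content 2 | No. Items/% | No. Items/% | No. Items/% |
| Content 3 | No. Items/% | No. Items/% | No. Items/% |
| Content 4 | No. Items/% | No. Items/% | No. Items/% |
| Content 5 | No. Items/% | No. Items/% | No. Items/% |

The Knowledge, Reasoning, and Application columns are the types of learning target. Each cell records the number of items and their percentage of the test.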
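
One way to fill in such a table is to tag each item with its content area and type of learning target, then count. The sketch below is only an illustration: the item list and the function name `table_of_specifications` are hypothetical and do not come from the slides.

```python
from collections import Counter

# Hypothetical item tags: (content area, type of learning target) for each item.
items = [
    ("Content 1", "Knowledge"),
    ("Content 1", "Reasoning"),
    ("Content 2", "Knowledge"),
    ("Content 2", "Application"),
    ("Content 3", "Reasoning"),
    ("Content 4", "Knowledge"),
    ("Content 5", "Application"),
    ("Content 5", "Application"),
]

def table_of_specifications(tagged_items):
    """Return {(content, target): (item count, percent of the whole test)}."""
    counts = Counter(tagged_items)
    total = len(tagged_items)
    return {cell: (n, 100.0 * n / total) for cell, n in counts.items()}

spec = table_of_specifications(items)
for (content, target), (n, pct) in sorted(spec.items()):
    print(f"{content:10s} {target:12s} {n} items / {pct:.1f}%")
```

Cells that never appear in the output have no items at all, which is a quick signal that part of the domain has not been sampled.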

For High Content Validity, the Items Need to Be Representative of the Content Domain
[Figure: a box of dots plotted against two axes, Level of Learning Target (Low to High) and Elements of the Curriculum (1, 2, …, N).]
The dots inside the box represent items. The items are distributed not only over the curriculum content, but also over the levels of cognitive outcomes desired.

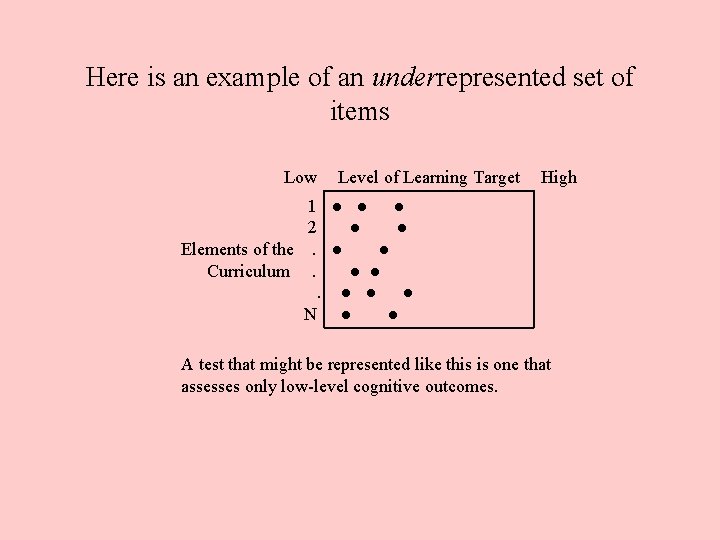

Here Is an Example of an Underrepresented Set of Items
[Figure: a box of dots plotted against two axes, Level of Learning Target (Low to High) and Elements of the Curriculum (1, 2, …, N).]
A test that might be represented like this is one that assesses only low-level cognitive outcomes.

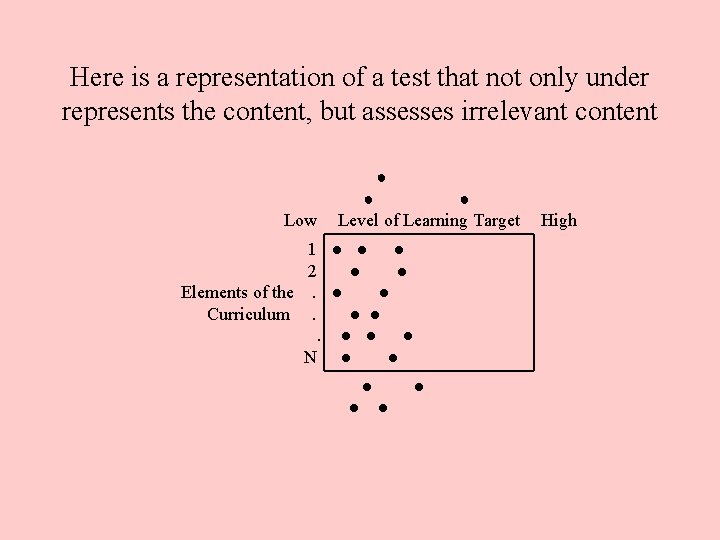

Here Is a Representation of a Test That Not Only Underrepresents the Content, but Also Assesses Irrelevant Content
[Figure: dots plotted against two axes, Level of Learning Target (Low to High) and Elements of the Curriculum (1, 2, …, N).]
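
The two problems pictured in these diagrams can be checked mechanically once the assessment domain is written down. The following is a minimal sketch under stated assumptions: the domain is taken to be every combination of a content area and a type of learning target, and the names `domain`, `test_items`, and `audit_items` are hypothetical.

```python
from itertools import product

# Hypothetical assessment domain: every (content, learning target) combination.
contents = ["Content 1", "Content 2", "Content 3"]
targets = ["Knowledge", "Reasoning", "Application"]
domain = set(product(contents, targets))

# Hypothetical test: only Knowledge items, plus one item from outside the domain.
test_items = [
    ("Content 1", "Knowledge"),
    ("Content 2", "Knowledge"),
    ("Content 3", "Knowledge"),
    ("Content 9", "Knowledge"),  # not part of the defined domain
]

def audit_items(items, domain):
    """Return (domain cells with no items, items that fall outside the domain)."""
    covered = set(items) & domain
    missing = domain - covered                              # underrepresentation
    irrelevant = [it for it in items if it not in domain]   # irrelevant content
    return missing, irrelevant

missing, irrelevant = audit_items(test_items, domain)
print(f"{len(missing)} of {len(domain)} domain cells have no items")
print("items outside the domain:", irrelevant)
```

A count of empty cells says nothing about how many items each covered cell deserves; that weighting is a judgment the teacher makes when writing the specifications.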

Criterion-related Evidence of Validity: Two Types
• Concurrent validity.
  – Correlate scores on new test with scores on established test.
  – Correlate scores on classroom test with what teacher already knows about students.
• Predictive validity.
  – Correlate current test scores with scores collected at a later date.
  – Example: SAT.
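
Both types of criterion-related evidence come down to computing a correlation between two sets of scores for the same students. As a rough sketch, the Pearson correlation can be computed as follows; the score lists are invented for illustration, and `pearson_r` is a hypothetical helper rather than anything named in the slides.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one list has no variance")
    return sxy / math.sqrt(sxx * syy)

# Hypothetical scores for the same six students on two measures.
new_test = [12, 15, 9, 20, 17, 11]
established_test = [48, 55, 40, 70, 62, 45]

r = pearson_r(new_test, established_test)
print(f"correlation between new and established test: {r:.3f}")
```

For predictive evidence the second list would simply hold scores collected at a later date. With a class-sized sample, the coefficient is a rough indication rather than a precise estimate.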

Construct-related Evidence of Validity
• The gathering of evidence to support an assertion that inferences based on a test are valid.
• Entails testing hypotheses involving the construct (e.g., learning, anxiety, attitude, etc.) being assessed and performance on the assessment.

More Construct-related Evidence of Validity
• Three approaches:
  – Intervention studies. Hypothesis: students will perform higher on the test following an instructional intervention.
  – Differential-population studies. Hypothesis: different populations will perform differently on the assessment.
  – Related measures studies. Hypothesis: students will perform similarly on similar tasks.
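
The intervention-study hypothesis can be examined with matched scores from before and after instruction. The sketch below assumes one pre-score and one post-score per student and uses a paired t statistic, which is one common choice and not something the slides prescribe; the data and the name `paired_gain` are hypothetical.

```python
import math
import statistics

def paired_gain(pre, post):
    """Return (mean gain, paired t statistic) for matched pre/post scores."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched score pairs")
    gains = [after - before for before, after in zip(pre, post)]
    mean_gain = statistics.mean(gains)
    sd = statistics.stdev(gains)  # sample standard deviation of the gains
    if sd == 0:
        raise ValueError("all gains are identical; t is undefined")
    t_stat = mean_gain / (sd / math.sqrt(len(gains)))
    return mean_gain, t_stat

# Hypothetical scores for five students before and after the intervention.
pre_scores = [10, 12, 9, 14, 11]
post_scores = [14, 15, 13, 15, 16]

mean_gain, t_stat = paired_gain(pre_scores, post_scores)
print(f"mean gain = {mean_gain:.2f}, t = {t_stat:.2f}")
```

A positive gain is consistent with the hypothesis, but on its own it does not rule out other explanations such as practice effects.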

When Collecting Evidence of Validity, Note the Following
• Structured (formal) assessments are likely to yield more valid inferences than unstructured (informal) assessments.
• Major focus: Accuracy of assessment-based inferences.
• Major sources of invalidity:
  – Construct underrepresentation.
  – Construct irrelevance.

Two Important Relationships Between Reliability and Validity
• Reliability is a necessary but not sufficient condition for validity.
• Validity necessarily implies reliability.
  – Pam Moss, notwithstanding.
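
The "necessary but not sufficient" relationship has a standard formal counterpart in classical test theory. It is not stated in the slides and is offered here only as supporting background. If $r_{XY}$ is the observed correlation between test $X$ and criterion $Y$, and $r_{XX'}$ and $r_{YY'}$ are their reliabilities, then:

$$
r_{XY} \le \sqrt{r_{XX'} \, r_{YY'}} \le \sqrt{r_{XX'}}
$$

For example, a test with reliability $0.64$ cannot correlate above $\sqrt{0.64} = 0.80$ with any criterion. The bound limits validity from above only, so a highly reliable test can still support poor inferences.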

A Final Word
Validity, or our notion of validity, applies to all manner of assessments. This includes scores (or grades) placed on report cards. The question to ask is, “Are the inferences to be drawn from a report card grade valid?”

End
