Classifying Job Execution Using Deep Learning

Ashfaq Munshi, Saeed Bidhendi, Faramarz Munshi

March 6, 2018

Overview

• Background and Motivation
• Multivariate Time Series (MTS) Classification
• Generalize Across Clusters
• Conclusion

© Pepperdata, Inc.

Background and Motivation

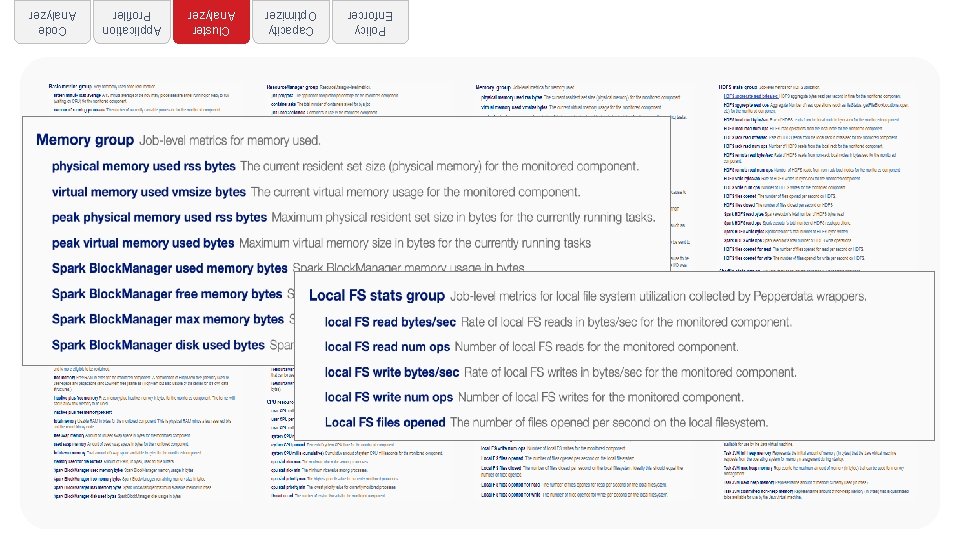

Policy Enforcer, Capacity Optimizer, Cluster Analyzer, Application Profiler, Code Analyzer

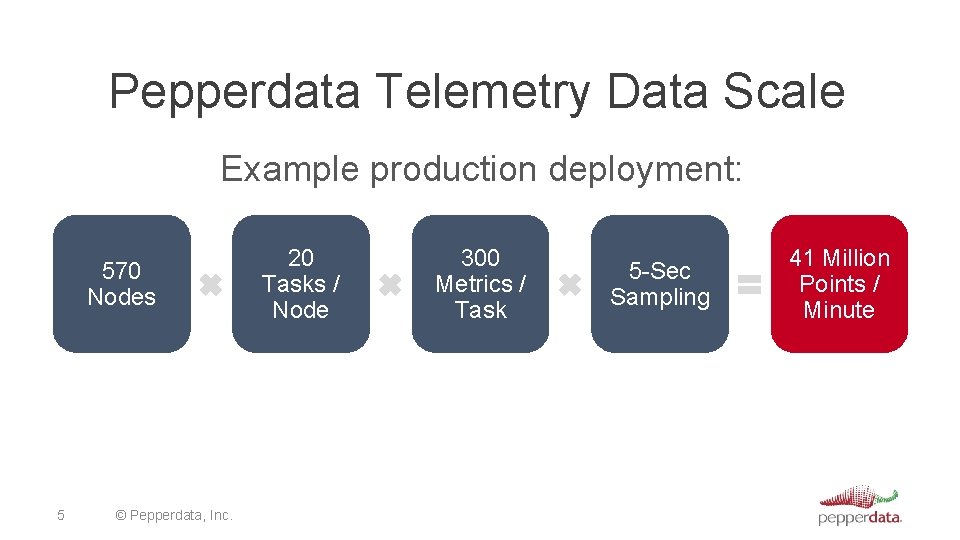

Pepperdata Telemetry Data Scale

Example production deployment:

• 570 nodes
• 20 tasks / node
• 300 metrics / task
• 5-second sampling
• 41 million points / minute
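The per-minute figure follows directly from the other four numbers on the slide. The short check below reproduces the arithmetic; the variable names are illustrative only and do not come from the deck.

```python
# Figures quoted on the slide.
nodes = 570
tasks_per_node = 20
metrics_per_task = 300
sampling_interval_s = 5

# Points produced by one sampling pass over the whole deployment.
points_per_sample = nodes * tasks_per_node * metrics_per_task

# A 5-second interval gives 12 sampling passes per minute.
samples_per_minute = 60 // sampling_interval_s

points_per_minute = points_per_sample * samples_per_minute
print(f"{points_per_minute:,} points per minute")  # 41,040,000
```
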

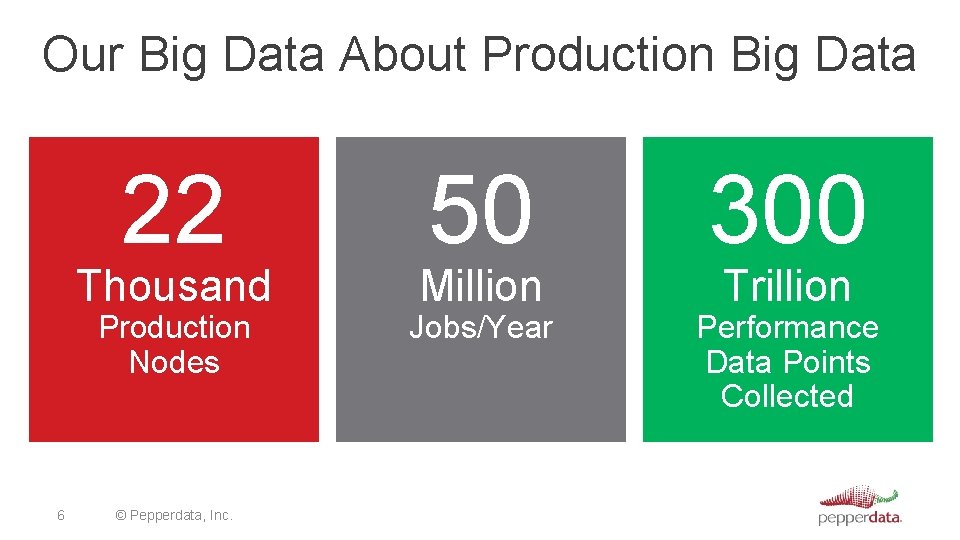

Our Big Data About Production Big Data

• 22 thousand production nodes
• 50 million jobs / year
• 300 trillion performance data points collected

Problem

Build a model that labels jobs on any cluster into meaningful groups that are structurally similar.

What is Structural Similarity?

• Jobs that have similar time series for
  • CPU
  • Memory
  • Network
  • HDFS reads
• Captures runtime characteristics and resource use

Example of Structural Similarity

Why?

• Better scheduling
  • Queues
  • Hints for scheduler
• Understanding job characteristics
  • Variations in run-time
  • Effects of queue assignments
  • Effects of hardware and system differences
  • …
• Compare jobs across different clusters

Our Approach

• Build a deep learning model for job similarity
• Essentially a multivariate time series (MTS) classification problem
• Use customer-labeled data as training data
• Generalize across clusters
  • Merge labels across different customers
  • Generate a final set of labels across all clusters / customers

Multivariate Time Series

Previous Approaches

• Two recent approaches from the literature
  • Use an ESN (“Echo State Network”) to map MTS into state clouds [Wang, Liu 2015]
  • Use Dynamic Time Warping with Mahalanobis distance metric [Mei, Liu, Wang, Gao 2016]
• Dataset is from UCI, a small subset of UCR and others
  • Number of series: ~10 K
  • Data points per series: ~200

Time Series and Images

• Map the time series into
  • Gramian Angular Summation Fields
  • Gramian Angular Difference Fields
  • Markov Transition Fields
• Feed images into a tiled CNN for classification [Wang & Oates, 2015]
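Of the three encodings listed above, the Markov Transition Field is the one the later slides do not describe. The sketch below is a minimal NumPy reading of the usual construction: bin the values by quantile, estimate first-order transition probabilities between bins, then spread those probabilities over every pair of time steps. The function name, the `n_bins` default, and the handling of empty rows are assumptions made for illustration, not details from the deck.

```python
import numpy as np

def markov_transition_field(x, n_bins=4):
    """Return an (n, n) image whose (i, j) entry is the estimated
    probability of moving from the bin of x[i] to the bin of x[j]."""
    x = np.asarray(x, dtype=float)

    # Interior quantile edges; digitize then yields bin ids 0..n_bins-1.
    edges = np.quantile(x, np.linspace(0, 1, n_bins + 1)[1:-1])
    bins = np.digitize(x, edges)

    # Count transitions between consecutive time steps.
    counts = np.zeros((n_bins, n_bins))
    for a, b in zip(bins[:-1], bins[1:]):
        counts[a, b] += 1

    # Row-normalize; rows with no outgoing transitions stay at zero (assumption).
    row_sums = counts.sum(axis=1, keepdims=True)
    probs = np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)

    # Look up the transition probability for every pair of time steps.
    return probs[bins[:, None], bins[None, :]]

mtf = markov_transition_field([1, 2, 3, 4, 1, 2, 3, 4])
print(mtf.shape)
```
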

![Gramian Angular Fields](http://slidetodoc.com/presentation_image_h/291d9880012f33a40425046d2bdd047b/image-15.jpg)

Gramian Angular Fields

• Normalize the time series into [-1, 1]
• Transform to polar coordinates

[Wang & Oates, 2015]
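The two steps above can be sketched in a few lines of NumPy. After rescaling to [-1, 1], each value is read as the cosine of an angle; the summation field is then cos(φᵢ + φⱼ) and the difference field is sin(φᵢ − φⱼ). The function names and the treatment of a constant series (mapped to all zeros) are assumptions for this illustration.

```python
import numpy as np

def rescale(x):
    """Min-max rescale a series into [-1, 1]."""
    x = np.asarray(x, dtype=float)
    lo, hi = x.min(), x.max()
    if hi == lo:
        # Constant series: an arbitrary choice made for this sketch.
        return np.zeros_like(x)
    return (2 * x - hi - lo) / (hi - lo)

def gramian_angular_fields(x):
    """Return (GASF, GADF) images for a 1-D series."""
    # Clip guards against round-off pushing values just outside [-1, 1].
    scaled = np.clip(rescale(x), -1.0, 1.0)
    phi = np.arccos(scaled)  # angular coordinate of the polar encoding
    gasf = np.cos(phi[:, None] + phi[None, :])
    gadf = np.sin(phi[:, None] - phi[None, :])
    return gasf, gadf

gasf, gadf = gramian_angular_fields([0.0, 1.0, 2.0, 4.0])
print(gasf.shape, gadf.shape)
```

The summation field is symmetric and the difference field is antisymmetric with a zero diagonal, which is a quick sanity check on any implementation.
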

![Example GADF Image](http://slidetodoc.com/presentation_image_h/291d9880012f33a40425046d2bdd047b/image-16.jpg)

Example GADF Image [Wang & Oates, 2015]

Our “Off the Shelf” Approach (PD)

• Make the TS for each variable the same length by zero padding
• Convert each TS into a GADF image
• Interpolate any missing data points in the image using linear interpolation on the image
• Stack the images for the variables
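A rough sketch of the padding, conversion, and stacking steps is shown below. Several details are assumptions, since the slide does not specify them: series are padded at the end, the target length is the longest series in the job, and the images are stacked along a trailing channel axis. The interpolation step is omitted, and the variable names in the example job are hypothetical.

```python
import numpy as np

def zero_pad(x, length):
    """Pad a series with trailing zeros up to `length` (padding side is an assumption)."""
    x = np.asarray(x, dtype=float)
    out = np.zeros(length)
    out[: len(x)] = x
    return out

def gadf(x):
    """Gramian Angular Difference Field of a 1-D series."""
    lo, hi = x.min(), x.max()
    scaled = np.zeros_like(x) if hi == lo else (2 * x - hi - lo) / (hi - lo)
    phi = np.arccos(np.clip(scaled, -1.0, 1.0))
    return np.sin(phi[:, None] - phi[None, :])

def job_to_image_stack(series_by_variable):
    """Turn one job's per-variable series into an (n, n, n_variables) array."""
    length = max(len(s) for s in series_by_variable.values())
    images = [gadf(zero_pad(s, length)) for s in series_by_variable.values()]
    return np.stack(images, axis=-1)

# Hypothetical job with four variables of unequal length.
job = {
    "cpu": [0.2, 0.9, 0.4],
    "virtual_memory": [1.0, 1.5, 1.2, 1.1, 1.3],
    "hdfs_reads": [10, 0, 5, 7],
    "network_ops": [3, 3, 4, 8, 1],
}
stack = job_to_image_stack(job)
print(stack.shape)
```
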

Our “Off the Shelf” Approach (PD)

• Use Google’s pre-trained CNN (Inception v3)
• Embed into a 2,048-dimensional vector space
• Train an MLP
  • 2 hidden layers (50 nodes each)
  • ReLU activation
  • Dropout for regularization (0.1, 0.2)
  • Softmax final layer
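The classifier head described above is small enough to sketch as a plain NumPy forward pass. This is an illustration of the layer shapes only: the weights are random, no training loop is shown, the embeddings are random stand-ins for the CNN output, the class count of 17 is an arbitrary example, and the assignment of the 0.1 and 0.2 dropout rates to the first and second hidden layers is an assumption.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_layer(n_in, n_out):
    """Random weights and zero biases for one dense layer."""
    return rng.normal(0.0, np.sqrt(2.0 / n_in), (n_in, n_out)), np.zeros(n_out)

def relu(z):
    return np.maximum(z, 0.0)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def dropout(a, rate, training):
    """Inverted dropout: active only while training."""
    if not training or rate == 0.0:
        return a
    mask = rng.random(a.shape) >= rate
    return a * mask / (1.0 - rate)

def mlp_forward(x, params, rates=(0.1, 0.2), training=False):
    (w1, b1), (w2, b2), (w3, b3) = params
    h1 = dropout(relu(x @ w1 + b1), rates[0], training)
    h2 = dropout(relu(h1 @ w2 + b2), rates[1], training)
    return softmax(h2 @ w3 + b3)

n_classes = 17  # illustrative value, not taken from the model description
params = [init_layer(2048, 50), init_layer(50, 50), init_layer(50, n_classes)]
embeddings = rng.normal(size=(4, 2048))  # stand-in for 2,048-d CNN embeddings
probs = mlp_forward(embeddings, params)
print(probs.shape)
```
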

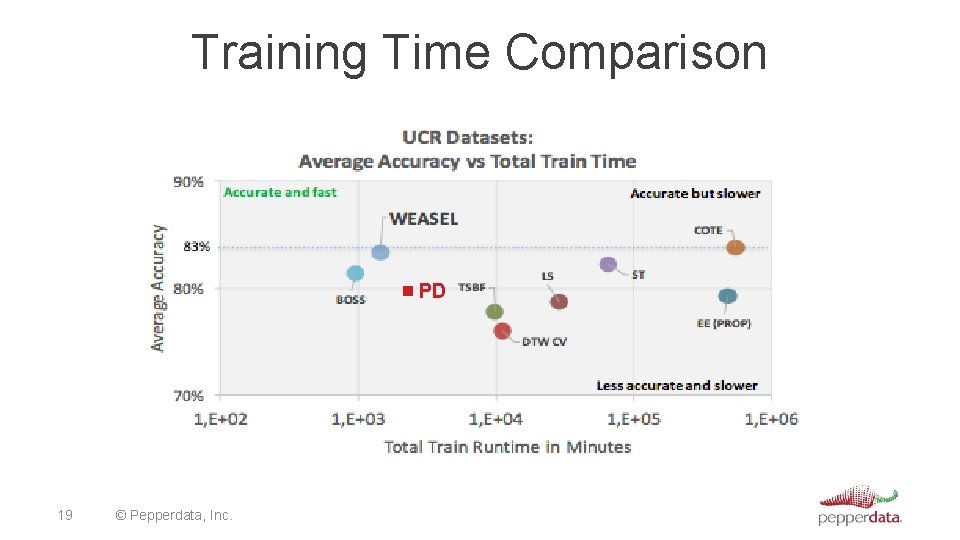

Training Time Comparison

[Chart: training time comparison; series shown include PD]

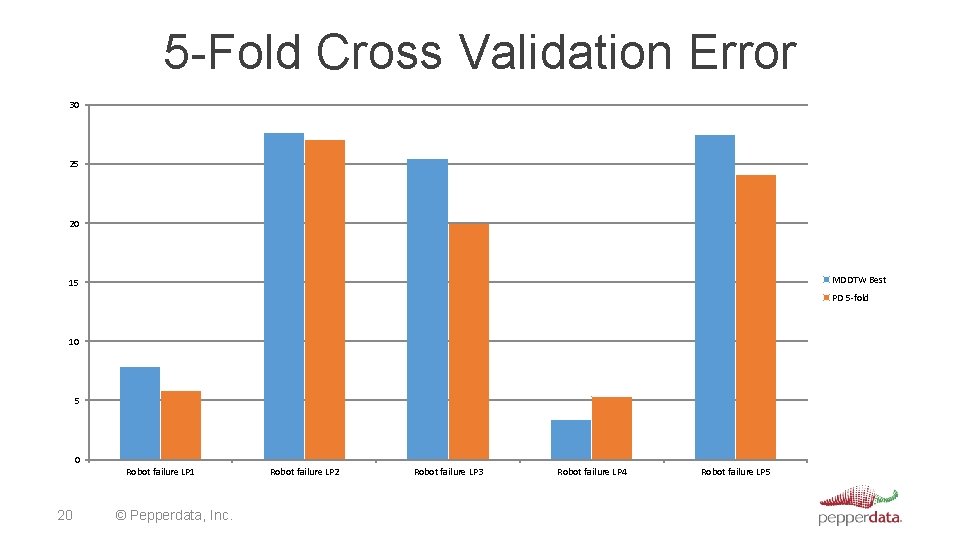

5-Fold Cross Validation Error

[Bar chart: cross-validation error (axis 0 to 30) on the Robot failure LP1 to LP5 datasets; series: MDDTW Best, PD 5-fold]

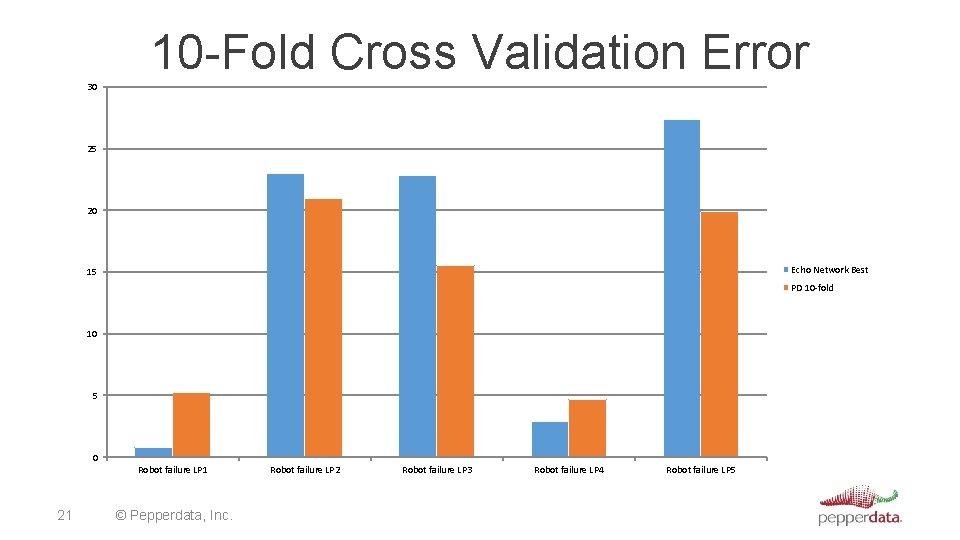

10-Fold Cross Validation Error

[Bar chart: cross-validation error (axis 0 to 30) on the Robot failure LP1 to LP5 datasets; series: Echo Network Best, PD 10-fold]
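For readers unfamiliar with the metric in the two charts above, the sketch below shows how a k-fold cross-validation error is typically computed. It is a generic illustration, not the evaluation code behind the charts: the nearest-centroid classifier is a simple stand-in for the real model, the data is synthetic, and reporting the error as a percentage is an assumption based on the chart axis.

```python
import numpy as np

def k_fold_error(features, labels, k, fit, predict, seed=0):
    """Mean misclassification rate (in percent) over k held-out folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(labels)), k)
    errors = []
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        model = fit(features[train_idx], labels[train_idx])
        wrong = predict(model, features[test_idx]) != labels[test_idx]
        errors.append(wrong.mean())
    return 100.0 * float(np.mean(errors))

# Stand-in classifier: assign each point to the nearest class centroid.
def fit_centroids(x, y):
    classes = np.unique(y)
    return classes, np.stack([x[y == c].mean(axis=0) for c in classes])

def predict_centroids(model, x):
    classes, centroids = model
    dists = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[dists.argmin(axis=1)]

# Synthetic, well-separated two-class data.
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(0, 0.1, (20, 2)), rng.normal(5, 0.1, (20, 2))])
y = np.array([0] * 20 + [1] * 20)

err = k_fold_error(x, y, 5, fit_centroids, predict_centroids)
print(f"5-fold error: {err:.2f}%")
```
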

PD Data

• Four variables:
  • CPU, Virtual Memory, HDFS reads, Network Ops
• Each time series collected over one week
• 10 data points to 10 K+ data points
• Missing data
• Extremely noisy
• For periods longer than a week, data is much larger
• Sampling rate is the same for all TS

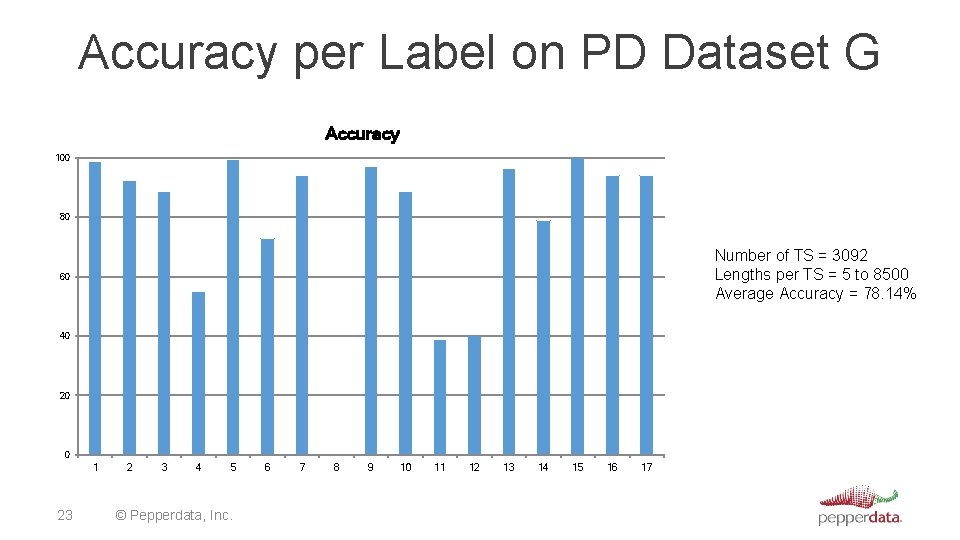

Accuracy per Label on PD Dataset G

• Number of TS = 3092
• Lengths per TS = 5 to 8500
• Average accuracy = 78.14%

[Bar chart: accuracy (axis 0 to 100) for labels 1 to 17]

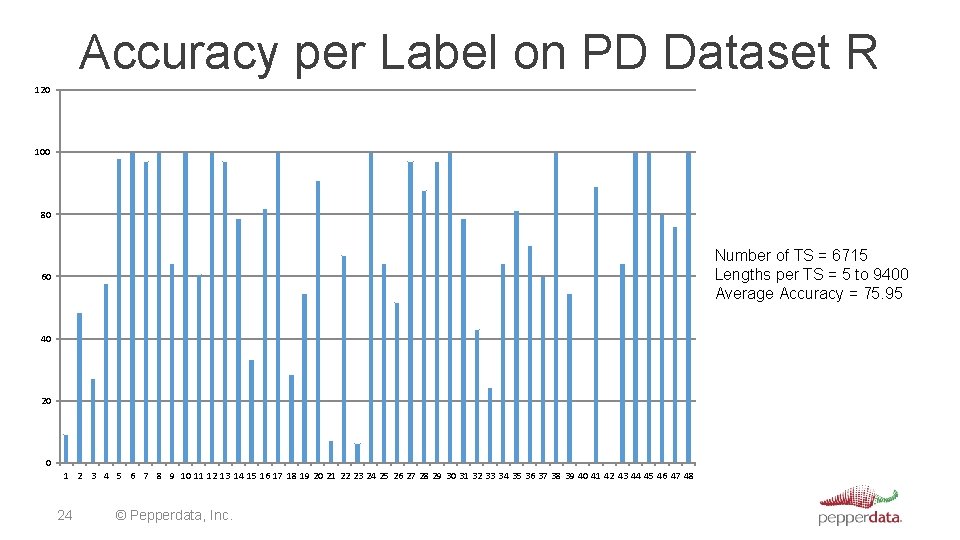

Accuracy per Label on PD Dataset R

• Number of TS = 6715
• Lengths per TS = 5 to 9400
• Average accuracy = 75.95%

[Bar chart: accuracy (axis 0 to 120) for labels 1 to 48]
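Per-label accuracy, as plotted for Datasets G and R, can be computed as the share of each label's time series that the model classifies correctly. The sketch below uses made-up labels. It also shows that the mean of the per-label values and the overall accuracy can differ; the slides do not say which of the two the reported average refers to.

```python
import numpy as np

def per_label_accuracy(true_labels, predicted_labels):
    """Percent of items of each true label that were predicted correctly."""
    true_labels = np.asarray(true_labels)
    predicted = np.asarray(predicted_labels)
    return {
        label.item(): 100.0 * float((predicted[true_labels == label] == label).mean())
        for label in np.unique(true_labels)
    }

# Made-up example: label 1 has one mistake out of four, label 2 has none.
true = [1, 1, 1, 1, 2, 2]
pred = [1, 1, 1, 2, 2, 2]

acc = per_label_accuracy(true, pred)
mean_of_labels = sum(acc.values()) / len(acc)
overall = 100.0 * float(np.mean(np.asarray(true) == np.asarray(pred)))

print(acc)             # {1: 75.0, 2: 100.0}
print(mean_of_labels)  # 87.5
```
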

Generalizing Across Clusters

Problem

Given a set of labels for each cluster, generate a new set of labels that have high accuracy across all the clusters.

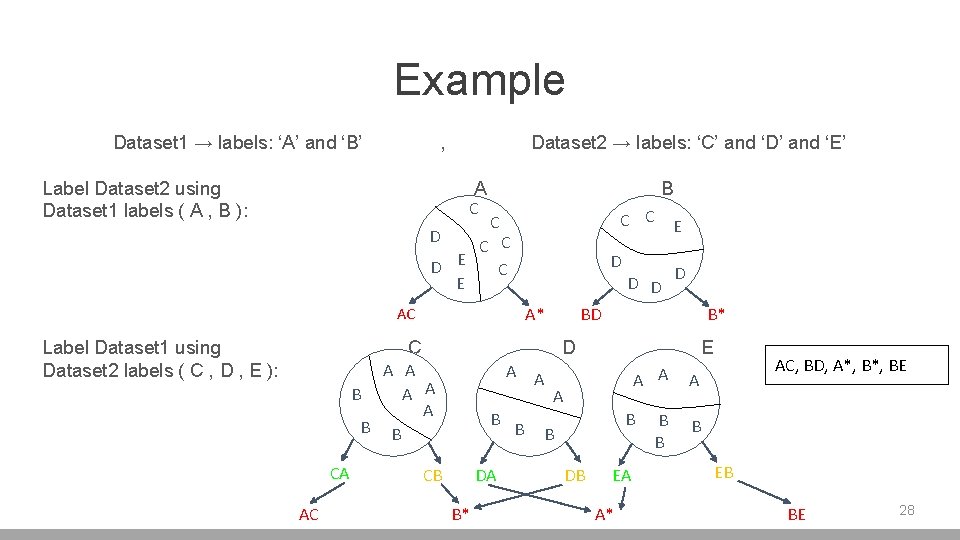

Merging Algorithm

• Input
  • Dataset 1 with labels ‘A’, ‘B’
  • Dataset 2 with labels ‘C’, ‘D’, ‘E’
• Output
  • A unified set of labels across both datasets

Example

• Dataset 1 → labels: ‘A’ and ‘B’
• Dataset 2 → labels: ‘C’, ‘D’, and ‘E’
• Label Dataset 2 using Dataset 1 labels (A, B)
• Label Dataset 1 using Dataset 2 labels (C, D, E)
• Merged labels: AC, BD, A*, BE

[Diagram: items of each dataset shown with their original and cross-assigned labels; pair labels shown include CA, CB, DA, DB, EA, EB, AC, BD, BE, A*, and B*]
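The slide gives the inputs and the outcome of the merge but not the rule that connects them. The sketch below is one hypothetical reading, offered only to make the example concrete. It assumes each job ends up with a (Dataset 1 label, Dataset 2 label) pair, one label being its own and the other assigned by the other dataset's classifier. Pairs seen at least `min_support` times become a merged label, and rarer pairs fall back to a starred Dataset 1 label. The function name, the threshold, the meaning of the star, and the sample pairs are all assumptions, not the authors' algorithm.

```python
from collections import Counter

def merge_labels(pairs, min_support=2):
    """Map each (dataset1_label, dataset2_label) pair to a merged label.

    Frequent pairs become e.g. 'AC'; infrequent pairs become e.g. 'A*'.
    """
    counts = Counter(pairs)
    merged = []
    for d1_label, d2_label in pairs:
        if counts[(d1_label, d2_label)] >= min_support:
            merged.append(d1_label + d2_label)
        else:
            merged.append(d1_label + "*")
    return merged

# Hypothetical cross-labeled jobs from both datasets.
pairs = [
    ("A", "C"), ("A", "C"), ("A", "D"),
    ("B", "D"), ("B", "D"), ("B", "E"), ("B", "E"),
]
merged = merge_labels(pairs)
print(sorted(set(merged)))  # ['A*', 'AC', 'BD', 'BE']
```

With these made-up pairs the sketch yields the same four merged labels that the slide lists, but other rules could produce that set as well.
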

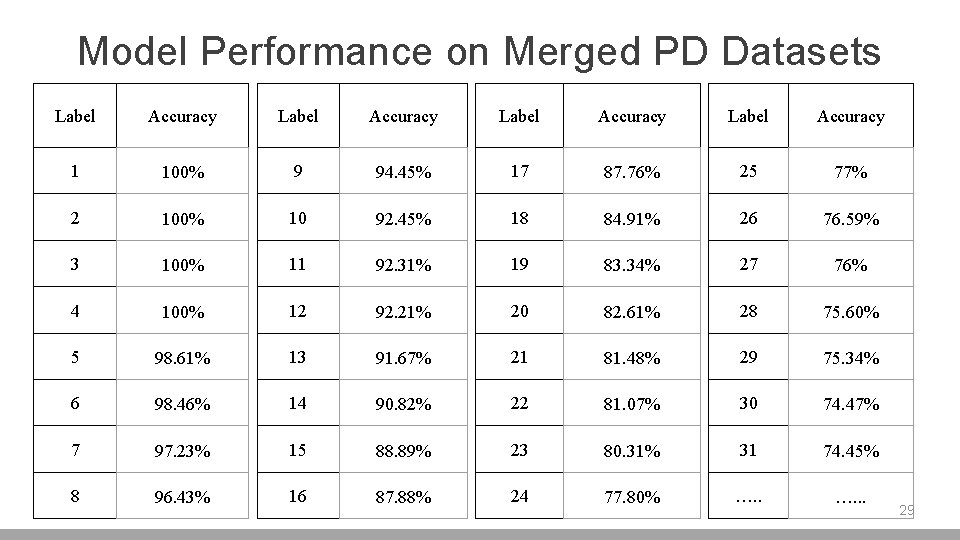

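Model Performance on Merged PD Datasets

| Label | Accuracy | Label | Accuracy | Label | Accuracy | Label | Accuracy |
|---|---|---|---|---|---|---|---|
| 1 | 100% | 9 | 94.45% | 17 | 87.76% | 25 | 77% |
| 2 | 100% | 10 | 92.45% | 18 | 84.91% | 26 | 76.59% |
| 3 | 100% | 11 | 92.31% | 19 | 83.34% | 27 | 76% |
| 4 | 100% | 12 | 92.21% | 20 | 82.61% | 28 | 75.60% |
| 5 | 98.61% | 13 | 91.67% | 21 | 81.48% | 29 | 75.34% |
| 6 | 98.46% | 14 | 90.82% | 22 | 81.07% | 30 | 74.47% |
| 7 | 97.23% | 15 | 88.89% | 23 | 80.31% | 31 | 74.45% |
| 8 | 96.43% | 16 | 87.88% | 24 | 77.80% | … | … |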

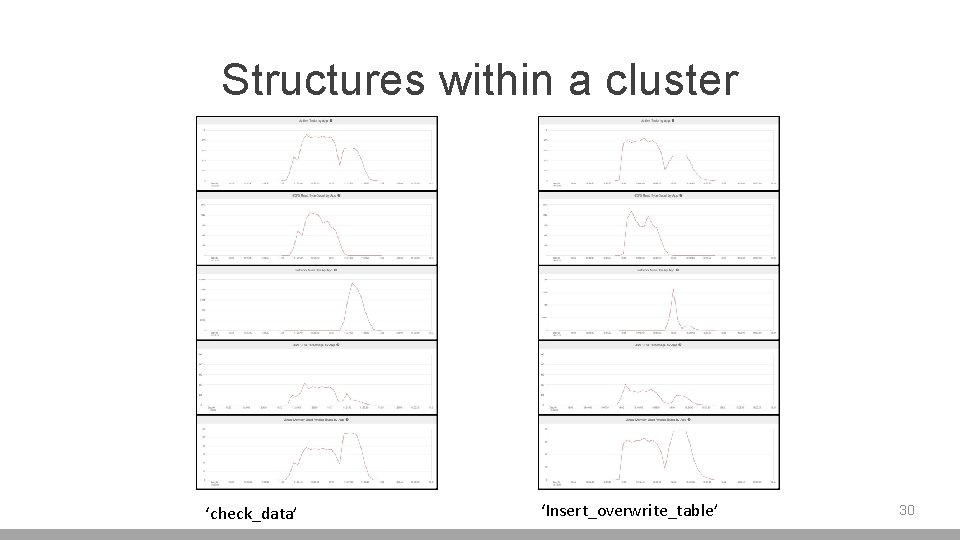

Structures within a cluster

‘check_data’ and ‘Insert_overwrite_table’

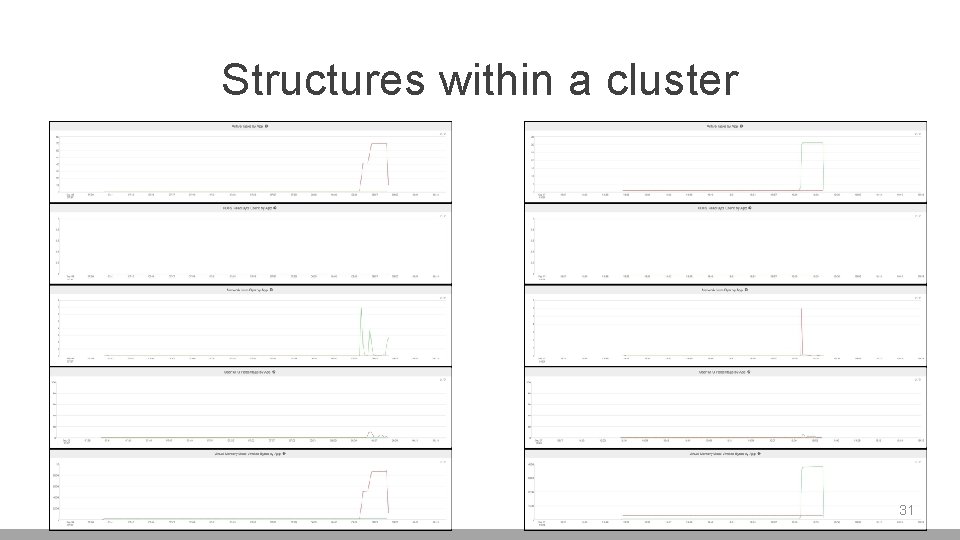

Structures within a cluster

Structures between clusters

Results

Across all of PD customer clusters, 44 labels have accuracy over 50% using the merge algorithm.

Next Steps

Do these 44 labels really describe distinct job types on Big Data clusters? If so, what does this imply for scheduling and resource allocation?

Thank You
