Classical Situation (figure labels: heaven, hell) • World deterministic • State observable

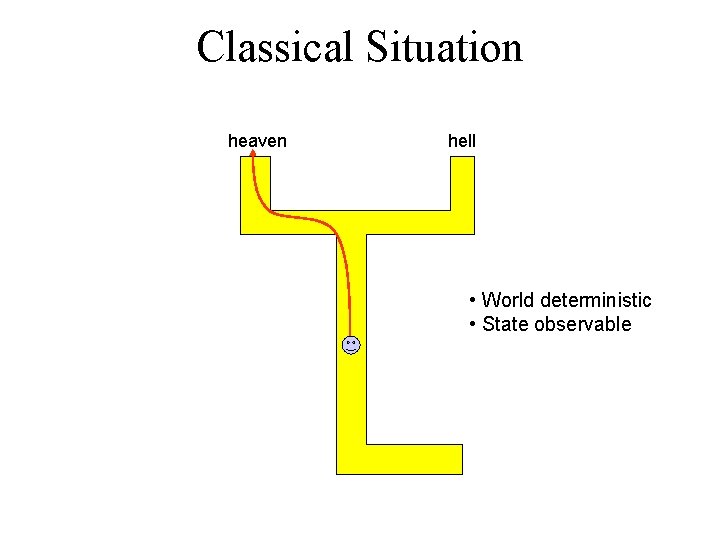


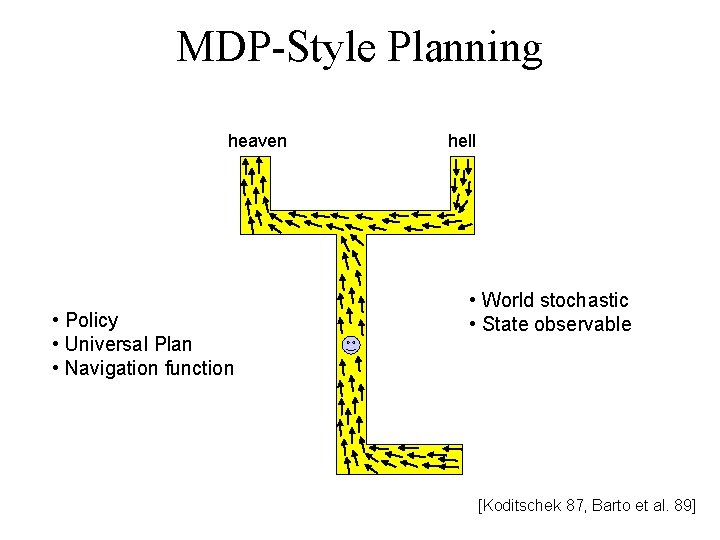

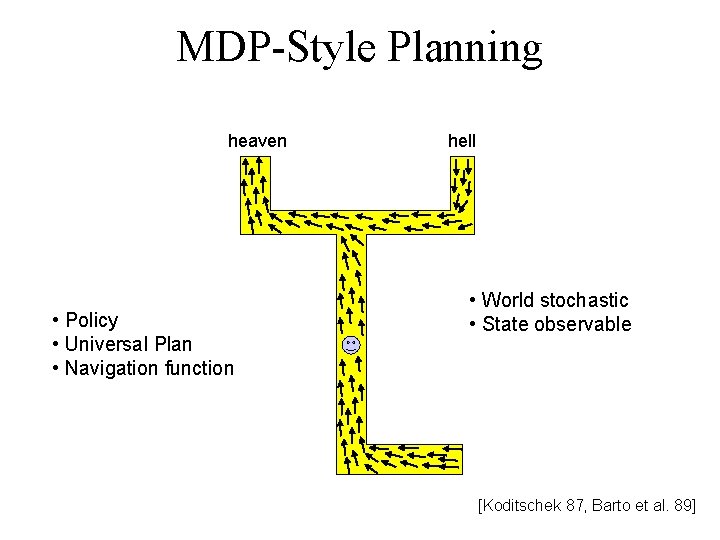

MDP-Style Planning (figure labels: heaven, hell) • Policy • Universal plan • Navigation function • World stochastic • State observable [Koditschek 87, Barto et al. 89]

![Stochastic, Partially Observable: heaven? hell? sign [Sondik 72] [Littman/Cassandra/Kaelbling 97]](http://slidetodoc.com/presentation_image_h2/02484e4d567b605aff57e6f5ae8aeda0/image-3.jpg)

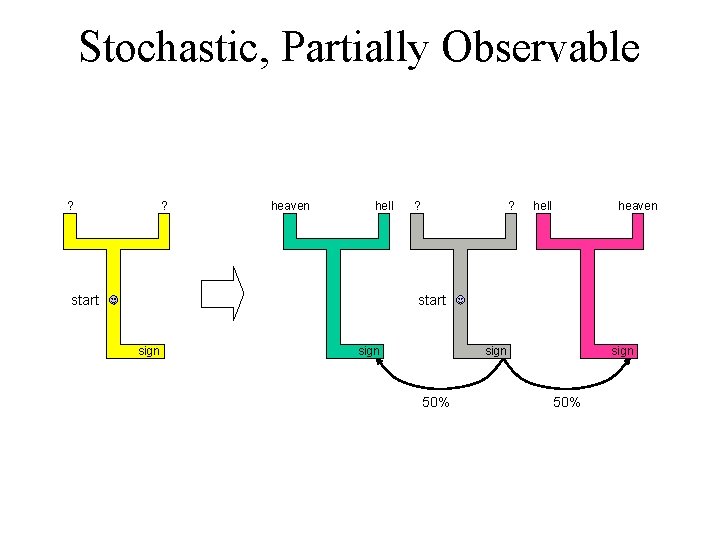

Stochastic, Partially Observable heaven? hell? sign [Sondik 72] [Littman/Cassandra/Kaelbling 97]

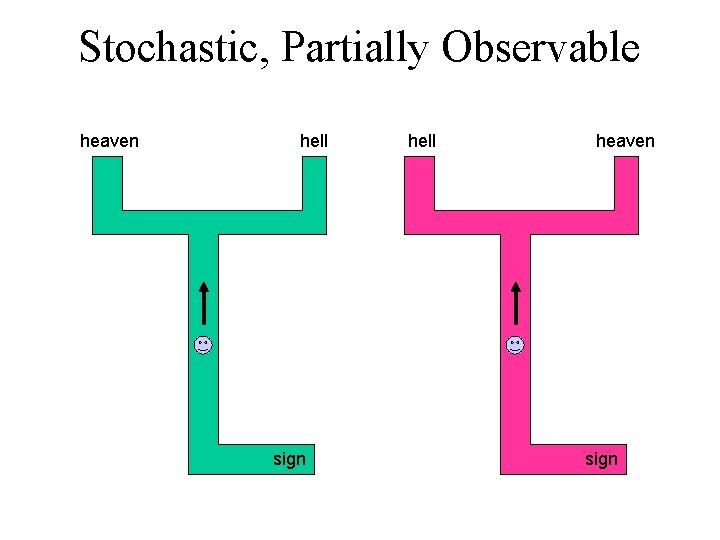

Stochastic, Partially Observable (figure: two worlds with heaven and hell swapped, each with a sign)

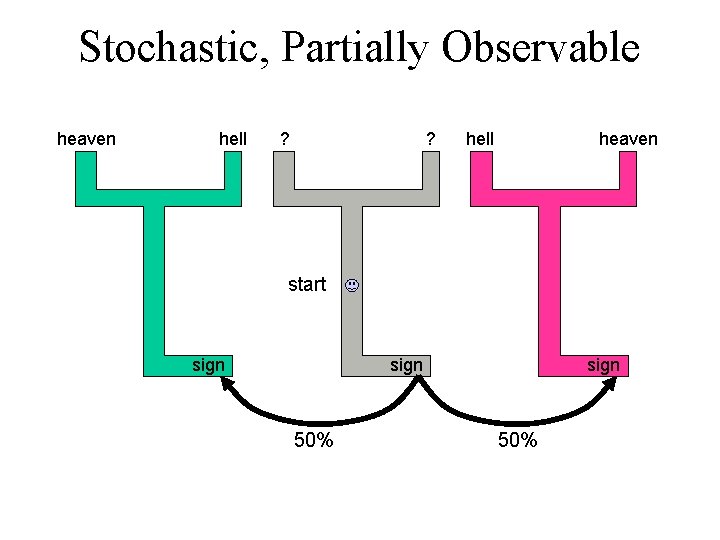

Stochastic, Partially Observable (figure: two worlds with heaven and hell swapped, unknown cells marked "?", a start position and a sign; 50%)

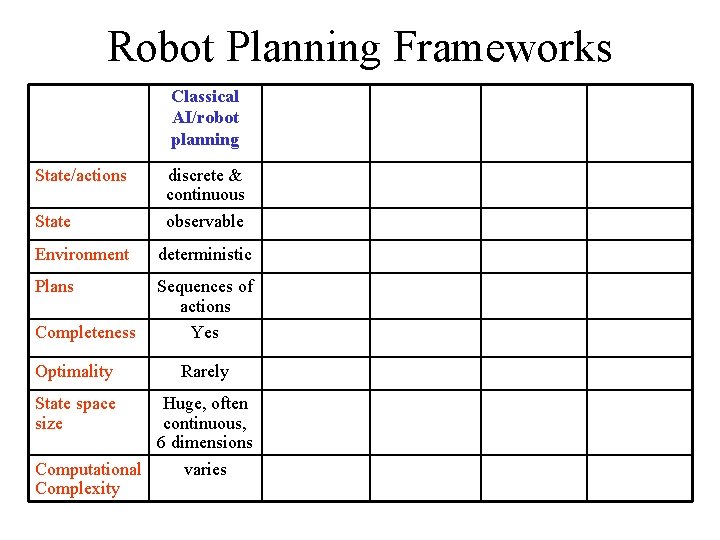

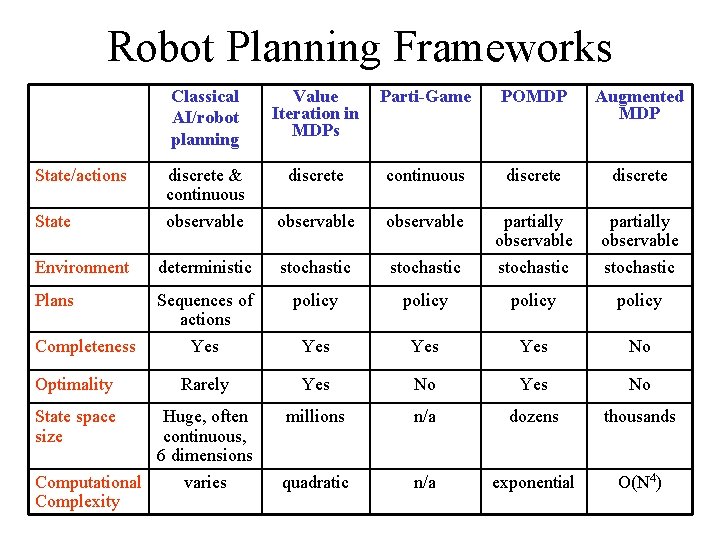

Robot Planning Frameworks

| | Classical AI/robot planning |
|---|---|
| State/actions | discrete & continuous |
| State | observable |
| Environment | deterministic |
| Plans | sequences of actions |
| Completeness | Yes |
| Optimality | Rarely |
| State space size | Huge, often continuous, 6 dimensions |
| Computational complexity | varies |


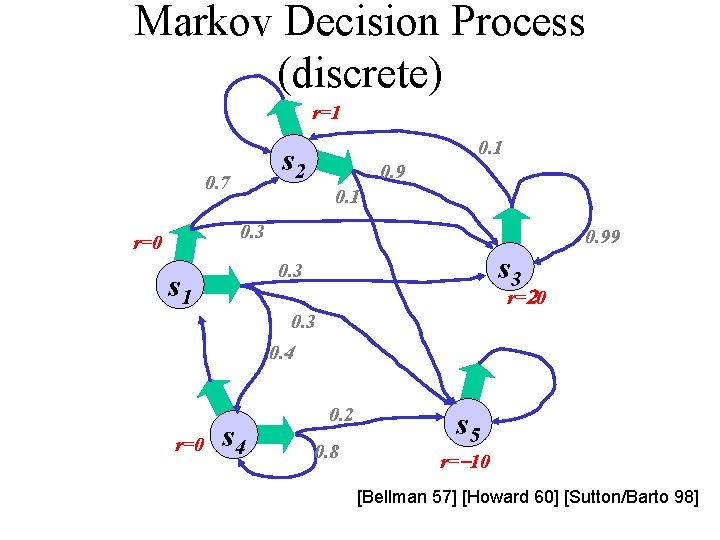

Markov Decision Process (discrete) [Bellman 57] [Howard 60] [Sutton/Barto 98] (figure: five states s1 to s5 with rewards 1, 0, 20, 0, and -10, connected by stochastic transitions labeled with their probabilities)

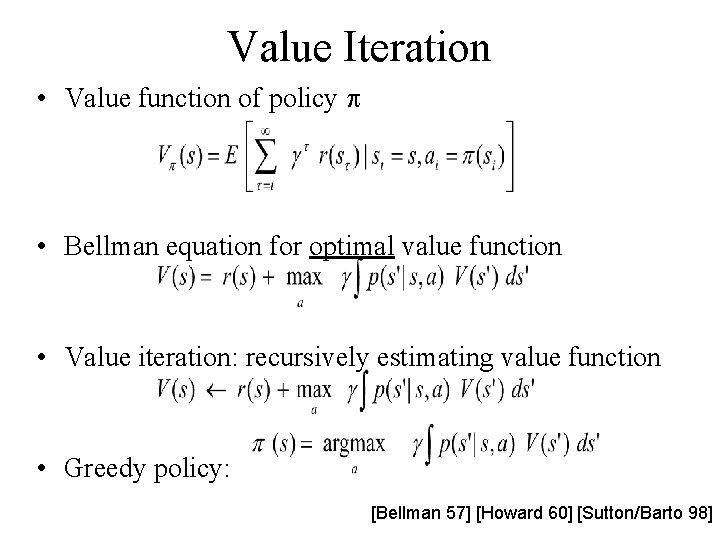

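Value Iteration [Bellman 57] [Howard 60] [Sutton/Barto 98]

- Value function of policy π (γ is the discount factor): $V^{\pi}(s) = E\left[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid s_0 = s, \pi\right]$
- Bellman equation for the optimal value function: $V^{*}(s) = \max_{a} \left[ r(s,a) + \gamma \sum_{s'} V^{*}(s')\, p(s' \mid s, a) \right]$
- Value iteration (recursively estimating the value function): $V_{k+1}(s) \leftarrow \max_{a} \left[ r(s,a) + \gamma \sum_{s'} V_{k}(s')\, p(s' \mid s, a) \right]$
- Greedy policy: $\pi(s) = \arg\max_{a} \left[ r(s,a) + \gamma \sum_{s'} V(s')\, p(s' \mid s, a) \right]$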
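As a minimal sketch of the value-iteration and greedy-policy steps, the Python below applies the Bellman backup to a small dictionary-based MDP until the values stop changing. The two-state example, its `stay`/`go` actions, its rewards, and all helper names are illustrative assumptions, not the MDP drawn in the slide figure.

```python
from typing import Dict, List, Tuple

# P[s][a] lists (probability, next_state) pairs; R[s][a] is the reward r(s, a).
Transitions = Dict[str, Dict[str, List[Tuple[float, str]]]]
Rewards = Dict[str, Dict[str, float]]


def q_value(s: str, a: str, V: Dict[str, float], P: Transitions, R: Rewards, gamma: float) -> float:
    """One-step lookahead: r(s, a) + gamma * sum over s' of V(s') * p(s' | s, a)."""
    return R[s][a] + gamma * sum(p * V[s_next] for p, s_next in P[s][a])


def value_iteration(P: Transitions, R: Rewards, gamma: float = 0.9,
                    tol: float = 1e-8, max_iters: int = 10_000) -> Dict[str, float]:
    """Apply the Bellman backup to every state until the largest change is below tol."""
    V = {s: 0.0 for s in P}
    for _ in range(max_iters):
        V_new = {s: max(q_value(s, a, V, P, R, gamma) for a in P[s]) for s in P}
        delta = max(abs(V_new[s] - V[s]) for s in P)
        V = V_new
        if delta < tol:
            break
    return V


def greedy_policy(V: Dict[str, float], P: Transitions, R: Rewards, gamma: float = 0.9) -> Dict[str, str]:
    """In each state, pick the action with the best one-step lookahead under V."""
    return {s: max(P[s], key=lambda a: q_value(s, a, V, P, R, gamma)) for s in P}


# Hypothetical two-state MDP (not the one in the slide figure).
P_EXAMPLE: Transitions = {
    "s1": {"stay": [(1.0, "s1")], "go": [(0.9, "s2"), (0.1, "s1")]},
    "s2": {"stay": [(1.0, "s2")], "go": [(1.0, "s1")]},
}
R_EXAMPLE: Rewards = {
    "s1": {"stay": 0.0, "go": 0.0},
    "s2": {"stay": 1.0, "go": 0.0},
}

if __name__ == "__main__":
    V = value_iteration(P_EXAMPLE, R_EXAMPLE)
    print(V, greedy_policy(V, P_EXAMPLE, R_EXAMPLE))
```

With γ = 0.9 this example should settle at V(s2) = 1/(1 - 0.9) = 10 and V(s1) = 8.1/0.91 ≈ 8.90, and the greedy policy moves from s1 to s2 and then stays there.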

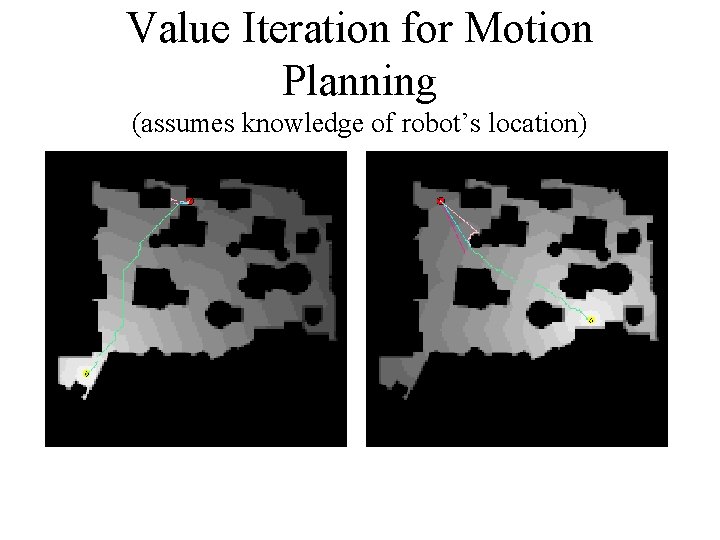

Value Iteration for Motion Planning (assumes knowledge of robot’s location)
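Value iteration for motion planning can be sketched on an occupancy grid, with the robot's cell assumed known as the slide states. Beyond the slide, this assumes a 4-connected grid, a cost of -1 per step, an absorbing goal cell, and a hypothetical motion model in which each intended move succeeds with probability 0.8 and otherwise leaves the robot where it is. The grid encoding and function names are illustrative.

```python
import numpy as np

FREE, WALL, GOAL = 0, 1, 2
MOVES = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # north, south, west, east


def neighbor(grid, r, c, dr, dc):
    """Cell reached by an intended move; walls and the map border block motion."""
    nr, nc = r + dr, c + dc
    if 0 <= nr < grid.shape[0] and 0 <= nc < grid.shape[1] and grid[nr, nc] != WALL:
        return nr, nc
    return r, c


def plan_values(grid, p_success=0.8, step_cost=-1.0, iters=500):
    """Value iteration: a move succeeds with p_success, otherwise the robot stays put."""
    V = np.zeros(grid.shape)
    for _ in range(iters):
        V_new = V.copy()
        for r in range(grid.shape[0]):
            for c in range(grid.shape[1]):
                if grid[r, c] != FREE:
                    continue  # the goal is absorbing (value 0); walls are never entered
                V_new[r, c] = max(
                    step_cost + p_success * V[neighbor(grid, r, c, dr, dc)] + (1 - p_success) * V[r, c]
                    for dr, dc in MOVES
                )
        V = V_new
    return V


def greedy_path(grid, V, start, max_len=100):
    """Follow the intended move whose target cell has the highest value."""
    path = [start]
    r, c = start
    while grid[r, c] != GOAL and len(path) < max_len:
        r, c = max((neighbor(grid, r, c, dr, dc) for dr, dc in MOVES), key=lambda rc: V[rc])
        path.append((r, c))
    return path


GRID = np.array([[0, 0, 0, 2],
                 [0, 1, 0, 0],
                 [0, 0, 0, 0]])

if __name__ == "__main__":
    V = plan_values(GRID)
    print(np.round(V, 2))
    print(greedy_path(GRID, V, (2, 0)))
```

Under this motion model a free cell at grid distance d from the goal converges to the value -d/0.8, so always stepping toward the highest-valued neighbor traces a shortest path to the goal.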

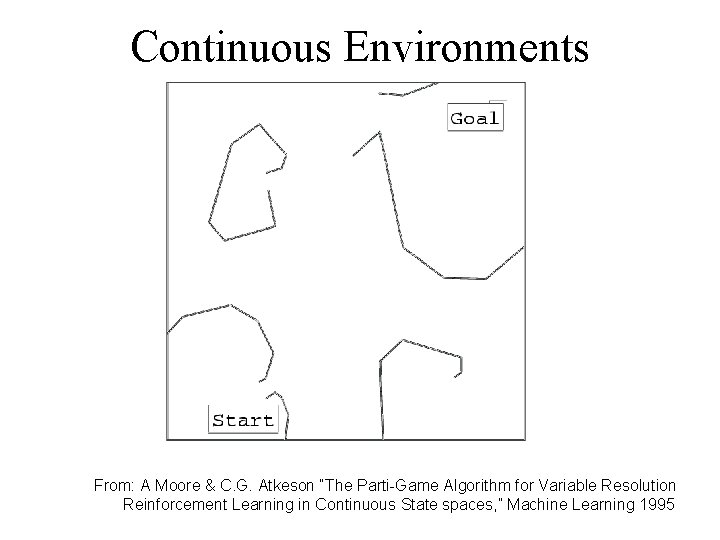

Continuous Environments. From: A. Moore and C. G. Atkeson, “The Parti-Game Algorithm for Variable Resolution Reinforcement Learning in Continuous State Spaces,” *Machine Learning*, 1995.

![Approximate Cell Decomposition [Latombe 91]](http://slidetodoc.com/presentation_image_h2/02484e4d567b605aff57e6f5ae8aeda0/image-12.jpg)

Approximate Cell Decomposition [Latombe 91] (figure from Moore & Atkeson, 1995)

![Parti-Game [Moore 96]](http://slidetodoc.com/presentation_image_h2/02484e4d567b605aff57e6f5ae8aeda0/image-13.jpg)

Parti-Game [Moore 96] (figure from Moore & Atkeson, 1995)

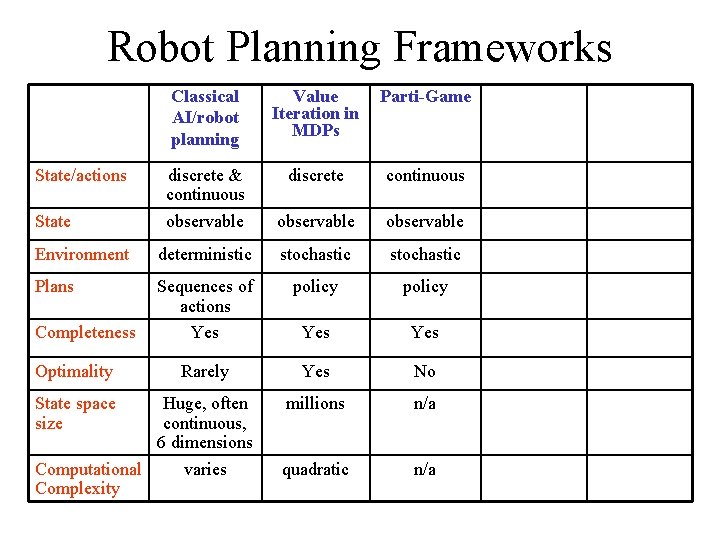

Robot Planning Frameworks

| | Classical AI/robot planning | Value Iteration in MDPs | Parti-Game |
|---|---|---|---|
| State/actions | discrete & continuous | discrete | continuous |
| State | observable | observable | observable |
| Environment | deterministic | stochastic | stochastic |
| Plans | sequences of actions | policy | policy |
| Completeness | Yes | Yes | Yes |
| Optimality | Rarely | Yes | No |
| State space size | Huge, often continuous, 6 dimensions | millions | n/a |
| Computational complexity | varies | quadratic | n/a |

Stochastic, Partially Observable (figure: two worlds with heaven and hell swapped, each with a start position and unknown cells marked "?", a sign; 50%)

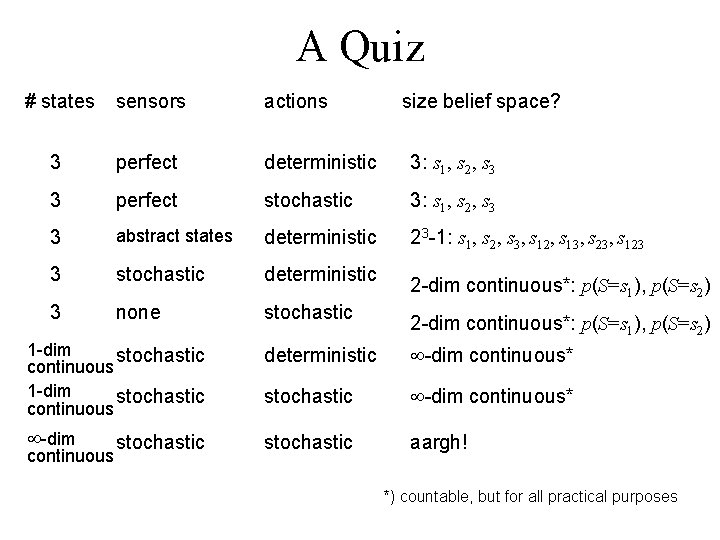

A Quiz

| # states | sensors | actions | size of belief space? |
|---|---|---|---|
| 3 | perfect | deterministic | 3: s1, s2, s3 |
| 3 | perfect | stochastic | 3: s1, s2, s3 |
| 3 | abstract states | deterministic | 2³ - 1: s1, s2, s3, s12, s13, s23, s123 |
| 3 | stochastic | deterministic | 2-dim continuous\*: p(S=s1), p(S=s2) |
| 3 | none | stochastic | 2-dim continuous\*: p(S=s1), p(S=s2) |
| 1-dim continuous | stochastic | deterministic | ∞-dim continuous\* |
| 1-dim continuous | stochastic | stochastic | ∞-dim continuous\* |
| ∞-dim continuous | stochastic | stochastic | aargh! |

\*) Countable, but for all practical purposes continuous.
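The three-state rows of the quiz can be checked by counting. A minimal sketch, assuming that "abstract states" sensing means the robot's knowledge is a set of possible states (so any non-empty subset can be a belief), and that stochastic sensing or actions make the belief a probability vector over the states. The function names are illustrative.

```python
from itertools import combinations


def abstract_belief_states(states):
    """Deterministic actions, set-valued sensing: every non-empty subset of the
    states is a possible belief, giving 2**n - 1 of them."""
    return [frozenset(c) for k in range(1, len(states) + 1) for c in combinations(states, k)]


def belief_simplex_dim(n_states):
    """Stochastic sensing or actions: a belief is a probability vector over n states.
    It must sum to one, so it has n - 1 free parameters, e.g. p(S=s1) and p(S=s2)."""
    return n_states - 1


if __name__ == "__main__":
    beliefs = abstract_belief_states(["s1", "s2", "s3"])
    print(len(beliefs), sorted(sorted(b) for b in beliefs))
    print(belief_simplex_dim(3))
```

For three states this gives the 7 = 2³ - 1 abstract beliefs s1, s2, s3, s12, s13, s23, s123 and a 2-dimensional probability simplex, matching the table.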

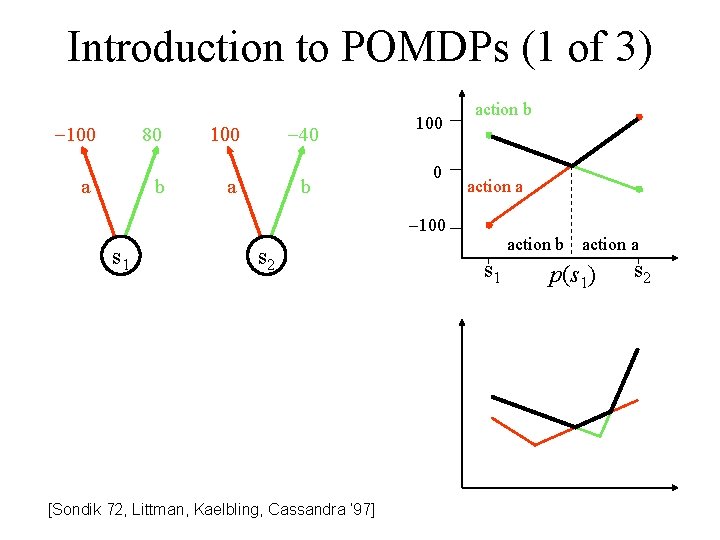

Introduction to POMDPs (1 of 3) [Sondik 72, Littman, Kaelbling, Cassandra ’97] (figure: states s1 and s2; actions a and b with state-dependent payoffs of -100, 80, 100, and -40; expected payoff of action a and action b plotted against p(s1))
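The key observation of the first POMDP slide is that, for a two-state problem, the expected payoff of an action is linear in the belief p(s1), so the best expected payoff is the upper envelope of those lines: piecewise linear and convex. A minimal sketch with hypothetical payoffs; the pairing of numbers to states and actions below is an illustrative assumption, not a reading of the figure.

```python
# Hypothetical payoffs (reward in s1, reward in s2) for two terminal actions.
PAYOFFS = {"a": (-100.0, 100.0), "b": (80.0, -40.0)}


def expected_payoff(p1, payoff):
    """Expected payoff when p(s1) = p1 and p(s2) = 1 - p1; linear in p1."""
    r1, r2 = payoff
    return p1 * r1 + (1.0 - p1) * r2


def value(p1):
    """Best expected payoff: the upper envelope of the lines (piecewise linear, convex)."""
    return max(expected_payoff(p1, r) for r in PAYOFFS.values())


def best_action(p1):
    """Action whose line is on top at belief p1."""
    return max(PAYOFFS, key=lambda a: expected_payoff(p1, PAYOFFS[a]))


if __name__ == "__main__":
    for p1 in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(p1, best_action(p1), value(p1))
```

With these numbers the lines 100 - 200 p1 and 120 p1 - 40 cross at p1 = 0.4375, so `a` is best below that belief and `b` above it.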

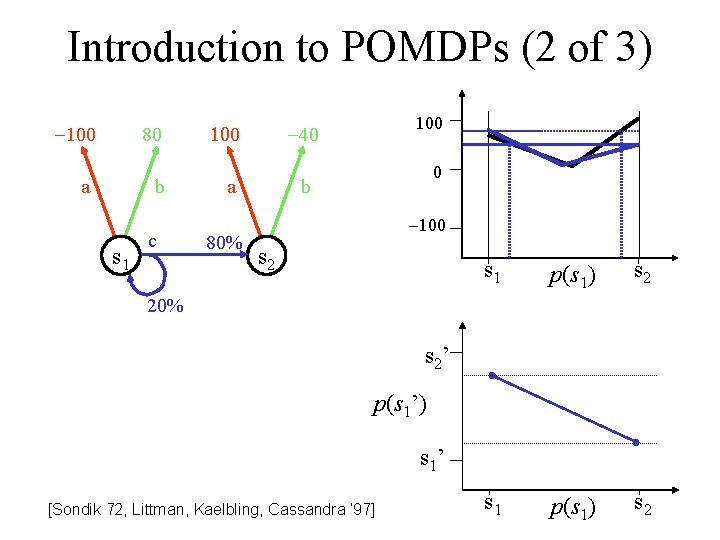

Introduction to POMDPs (2 of 3) [Sondik 72, Littman, Kaelbling, Cassandra ’97] (figure: adds an action c with stochastic outcome, 80% / 20%; the belief p(s1) before c is mapped to p(s1′) after c)
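An action such as c that moves the state stochastically changes the belief through the prediction step of a Bayes filter. A minimal sketch, assuming a hypothetical transition matrix: the 80%/20% split echoes the figure, but which transition carries which probability is an assumption.

```python
import numpy as np

# Hypothetical transition matrix for action c: T_C[i, j] = p(s_j' | s_i, c).
# The 80%/20% split echoes the figure; this exact assignment is an assumption.
T_C = np.array([[0.2, 0.8],
                [0.8, 0.2]])


def predict(belief, T):
    """Prediction step: b'(s') = sum over s of p(s' | s, c) * b(s)."""
    return np.asarray(belief, dtype=float) @ T


def predicted_p1(p1, T=T_C):
    """p(s1') as a function of p(s1); affine because prediction is a matrix product."""
    return predict([p1, 1.0 - p1], T)[0]


if __name__ == "__main__":
    for p1 in (0.0, 0.5, 1.0):
        print(p1, predicted_p1(p1))
```

Here p(s1′) = 0.8 - 0.6 p(s1) is affine in p(s1), so the value of acting optimally after c, a piecewise-linear convex function evaluated at p(s1′), is again piecewise linear and convex in p(s1).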

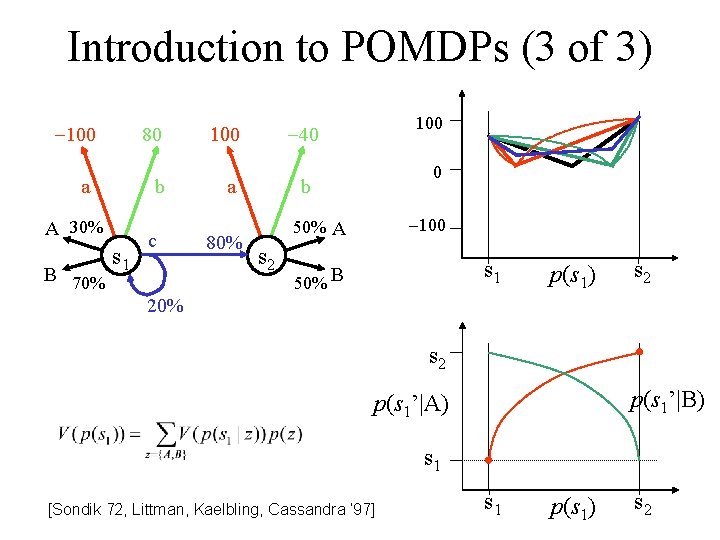

Introduction to POMDPs (3 of 3) [Sondik 72, Littman, Kaelbling, Cassandra ’97] (figure: adds observations A and B with probabilities 30% / 70% and 50% / 50%; the posterior beliefs p(s1′ | A) and p(s1′ | B) are plotted against p(s1))
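Observations update the belief by Bayes rule, and each observation occurs with a probability that itself depends on the belief. A minimal sketch, assuming (as the figure appears to show, though the exact assignment is a guess) that s1 yields A with 30% and B with 70%, and s2 yields A and B with 50% each.

```python
import numpy as np

# O[i, k] = p(o_k | s_i): rows s1, s2; columns observations A, B.
# Assumed reading of the figure: s1 -> A 30% / B 70%, s2 -> A 50% / B 50%.
O = np.array([[0.3, 0.7],
              [0.5, 0.5]])


def observation_probs(belief, O):
    """p(o | b) = sum over s of p(o | s) * b(s)."""
    return np.asarray(belief, dtype=float) @ O


def correct(belief, O, k):
    """Bayes rule: b'(s) is proportional to p(o_k | s) * b(s)."""
    unnormalized = np.asarray(belief, dtype=float) * O[:, k]
    return unnormalized / unnormalized.sum()


if __name__ == "__main__":
    b = np.array([0.5, 0.5])
    print(observation_probs(b, O), correct(b, O, 0), correct(b, O, 1))
```

Averaging the two posteriors weighted by p(A | b) and p(B | b) recovers the prior belief, which is a quick consistency check on the update.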

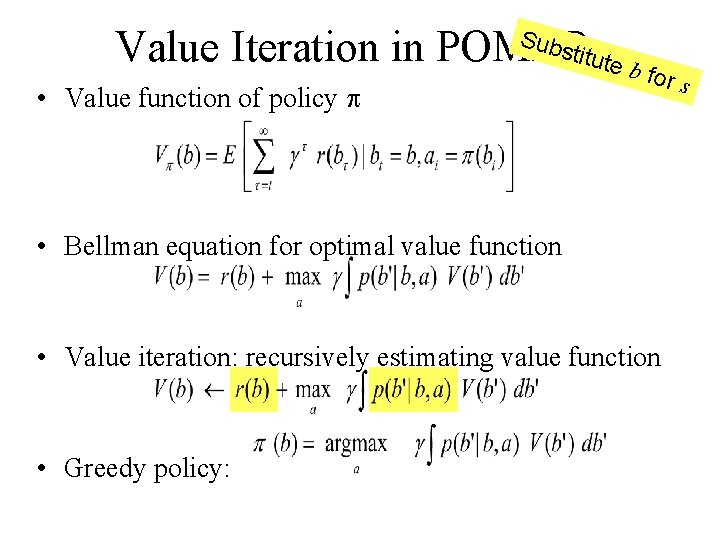

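Value Iteration in POMDPs (substitute b for s)

- Value function of policy π: $V^{\pi}(b) = E\left[\sum_{t=0}^{\infty} \gamma^{t} r_{t} \mid b_0 = b, \pi\right]$
- Bellman equation for the optimal value function: $V^{*}(b) = \max_{a} \left[ r(b,a) + \gamma \int V^{*}(b')\, p(b' \mid b, a)\, db' \right]$
- Value iteration (recursively estimating the value function): $V_{k+1}(b) \leftarrow \max_{a} \left[ r(b,a) + \gamma \int V_{k}(b')\, p(b' \mid b, a)\, db' \right]$
- Greedy policy: $\pi(b) = \arg\max_{a} \left[ r(b,a) + \gamma \int V(b')\, p(b' \mid b, a)\, db' \right]$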

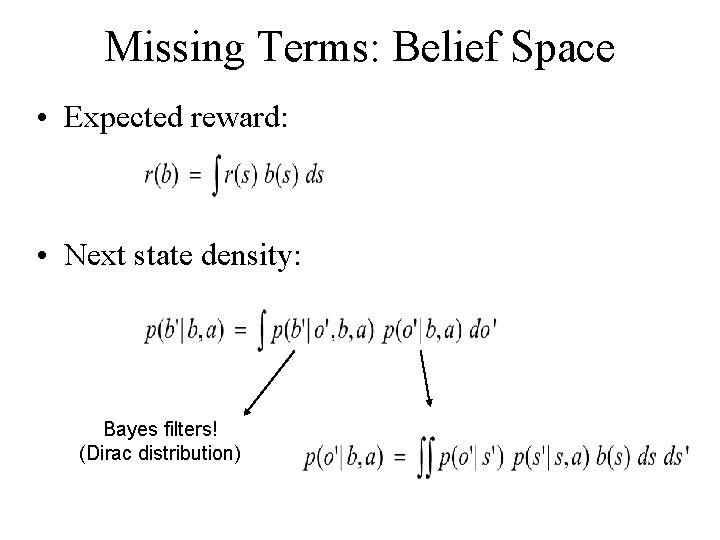

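Missing Terms: Belief Space

- Expected reward: $r(b,a) = \int r(s,a)\, b(s)\, ds$
- Next state density: $p(b' \mid b, a) = \int p(b' \mid b, a, o)\, p(o \mid b, a)\, do$, with $p(o \mid b, a) = \int p(o \mid s') \int p(s' \mid s, a)\, b(s)\, ds\, ds'$. The posterior $b'$ for given $b$, $a$, and $o$ is computed by a Bayes filter, so $p(b' \mid b, a, o)$ is a Dirac distribution.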
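For discrete states both missing terms are short computations. The sketch below, with hypothetical matrices and function names, returns the expected reward r(b, a) and the distribution over next beliefs as a list of (p(o | b, a), b′) pairs: each b′ is the Bayes-filter posterior for one observation, so the next-belief density is a weighted sum of Dirac spikes.

```python
import numpy as np


def expected_reward(belief, R, a):
    """r(b, a) = sum over s of b(s) * r(s, a), with R[s, a] a reward table."""
    return float(np.asarray(belief, dtype=float) @ R[:, a])


def next_belief_distribution(belief, T, O):
    """Distribution over next beliefs for one action, as (p(o | b, a), b'_o) pairs.

    T[s, s'] = p(s' | s, a) and O[s', o] = p(o | s'). Each b'_o is the Bayes-filter
    posterior, so p(b' | b, a) puts mass p(o | b, a) on the single point b'_o.
    """
    predicted = np.asarray(belief, dtype=float) @ T   # prediction step
    outcomes = []
    for o in range(O.shape[1]):
        joint = predicted * O[:, o]                   # p(s', o | b, a)
        p_o = joint.sum()
        if p_o > 0:
            outcomes.append((float(p_o), joint / p_o))  # correction step
    return outcomes


# Hypothetical 2-state, 2-observation model for a single action.
T_EX = np.array([[0.9, 0.1], [0.2, 0.8]])
O_EX = np.array([[0.7, 0.3], [0.4, 0.6]])
R_EX = np.array([[5.0, -1.0], [0.0, 2.0]])

if __name__ == "__main__":
    b = np.array([0.6, 0.4])
    print(expected_reward(b, R_EX, 0))
    for p_o, b_next in next_belief_distribution(b, T_EX, O_EX):
        print(p_o, b_next)
```

The observation probabilities sum to one, and the probability-weighted mean of the posteriors equals the predicted belief.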

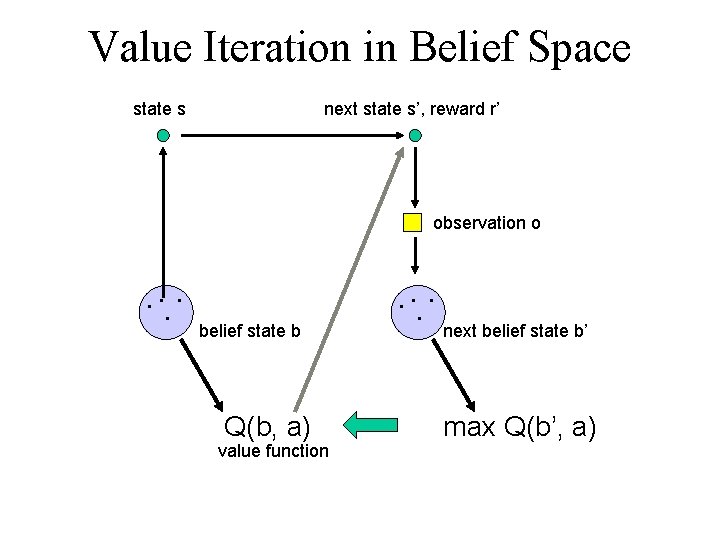

Value Iteration in Belief Space (diagram: state s, next state s′ with reward r′, and observation o; belief state b with value Q(b, a); next belief state b′ with value max Q(b′, a))
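One simple way to run the belief-space backup Q(b, a) = r(b, a) + γ Σ_o p(o | b, a) V(b′) for a two-state POMDP is to store V on a grid over p(s1) and interpolate at the next beliefs. This is a grid-based approximation swapped in for illustration, not Sondik's exact method (which represents V as a maximum of linear functions); the argument layout and names are assumptions.

```python
import numpy as np


def belief_value_iteration(T, O, R, gamma=0.95, n_grid=101, iters=200):
    """Grid-based value iteration for a two-state POMDP; a belief is p = p(s1).

    T[a] is a 2x2 matrix p(s' | s, a), O[a] a 2 x n_obs matrix p(o | s'), and
    R[s, a] the reward. V lives on an evenly spaced grid over p and is linearly
    interpolated at next beliefs that fall between grid points.
    """
    grid = np.linspace(0.0, 1.0, n_grid)
    V = np.zeros(n_grid)
    n_actions = R.shape[1]
    for _ in range(iters):
        Q = np.empty((n_grid, n_actions))
        for i, p in enumerate(grid):
            b = np.array([p, 1.0 - p])
            for a in range(n_actions):
                q = b @ R[:, a]                    # expected reward r(b, a)
                predicted = b @ T[a]
                for o in range(O[a].shape[1]):
                    joint = predicted * O[a][:, o]
                    p_o = joint.sum()              # p(o | b, a)
                    if p_o > 0:
                        q += gamma * p_o * np.interp(joint[0] / p_o, grid, V)
                Q[i, a] = q                        # Q(b, a)
        V = Q.max(axis=1)                          # V(b) = max over a of Q(b, a)
    return grid, V, Q


if __name__ == "__main__":
    I2 = np.eye(2)
    O_flat = np.full((2, 2), 0.5)                  # uninformative observations
    R_t = np.array([[1.0, 0.0], [0.0, 1.0]])
    grid, V, Q = belief_value_iteration([I2, I2], [O_flat, O_flat], R_t, gamma=0.5, iters=80)
    print(V[::25])
```

With an identity transition model and uninformative observations the belief never changes, so the sketch should reproduce V(p) = max(p, 1 - p)/(1 - γ); informative observations are where the lookahead over next beliefs starts to matter.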

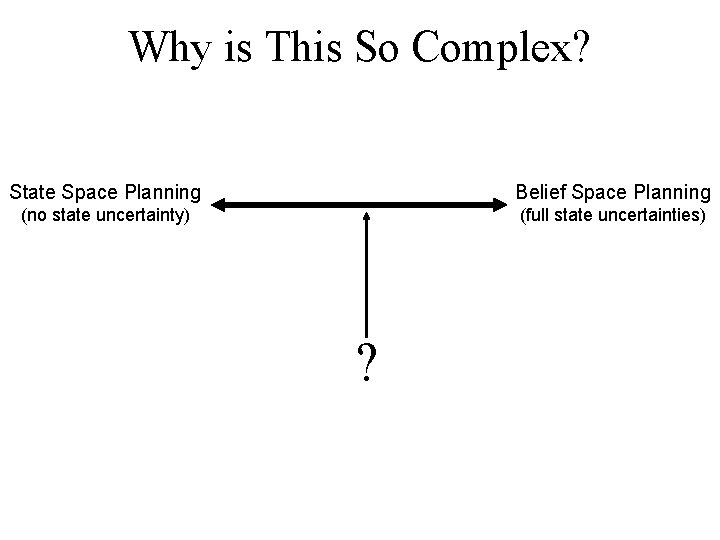

Why Is This So Complex? State-space planning (no state uncertainty) vs. belief-space planning (full state uncertainty)

![Augmented MDPs: uncertainty (entropy) vs. conventional state space [Roy et al, 98/99]](http://slidetodoc.com/presentation_image_h2/02484e4d567b605aff57e6f5ae8aeda0/image-24.jpg)

Augmented MDPs: the conventional state space augmented with an uncertainty (entropy) dimension [Roy et al., 98/99]
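The slide's axes suggest that an augmented MDP plans over a point in the conventional state space plus the entropy of the belief, instead of over the full belief. A minimal sketch of that compression, assuming the point is the most likely state of a discrete belief; the function name is illustrative.

```python
import numpy as np


def augmented_state(belief):
    """Compress a belief over discrete states into (most likely state, entropy in nats)."""
    b = np.asarray(belief, dtype=float)
    nz = b[b > 0]                      # treat 0 * log 0 as 0
    entropy = float(-(nz * np.log(nz)).sum())
    return int(np.argmax(b)), entropy


if __name__ == "__main__":
    print(augmented_state([0.0, 1.0, 0.0]))
    print(augmented_state([0.25, 0.25, 0.25, 0.25]))
```

The compressed state space has only one dimension more than the conventional one, which is what keeps planning tractable, at the price of discarding the rest of the belief's shape.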

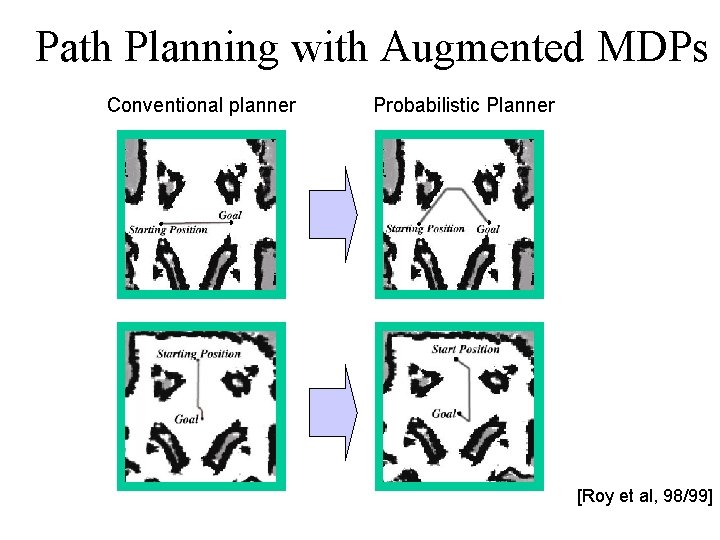

Path Planning with Augmented MDPs (figure: conventional planner vs. probabilistic planner; information gain) [Roy et al., 98/99]

Robot Planning Frameworks

| | Classical AI/robot planning | Value Iteration in MDPs | Parti-Game | POMDP | Augmented MDP |
|---|---|---|---|---|---|
| State/actions | discrete & continuous | discrete | continuous | discrete | discrete |
| State | observable | observable | observable | partially observable | partially observable |
| Environment | deterministic | stochastic | stochastic | stochastic | stochastic |
| Plans | sequences of actions | policy | policy | policy | policy |
| Completeness | Yes | Yes | Yes | Yes | No |
| Optimality | Rarely | Yes | No | Yes | No |
| State space size | Huge, often continuous, 6 dimensions | millions | n/a | dozens | thousands |
| Computational complexity | varies | quadratic | n/a | exponential | O(N⁴) |

- Slides: 26