Cisco Unified Computing System: Nuts & Bolts

Sean Hicks, Managing Principal - Compute & Storage, General DataTech, L.P.

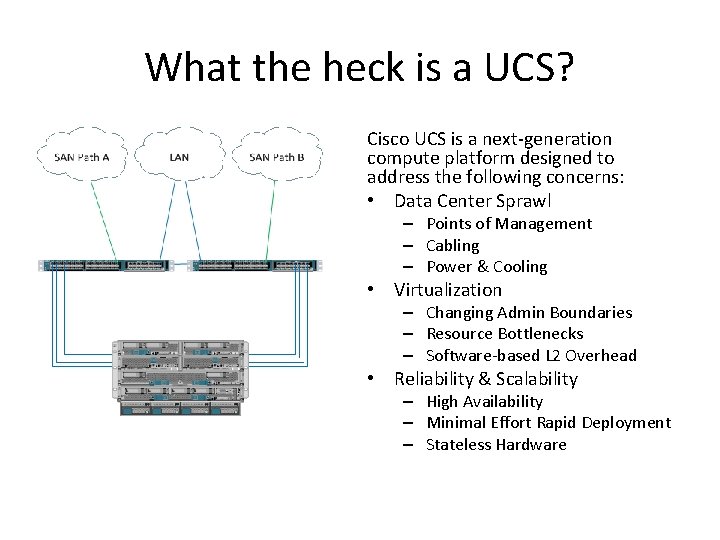

What the heck is a UCS?
Cisco UCS is a next-generation compute platform designed to address the following concerns:
• Data Center Sprawl
  – Points of Management
  – Cabling
  – Power & Cooling
• Virtualization
  – Changing Admin Boundaries
  – Resource Bottlenecks
  – Software-based L2 Overhead
• Reliability & Scalability
  – High Availability
  – Minimal Effort Rapid Deployment
  – Stateless Hardware

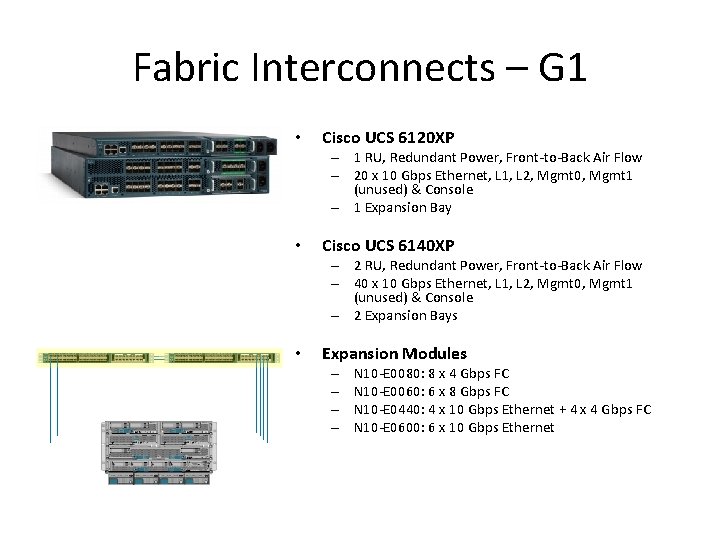

Fabric Interconnects – G1
• Cisco UCS 6120XP
  – 1 RU, Redundant Power, Front-to-Back Air Flow
  – 20 x 10 Gbps Ethernet, L1, L2, Mgmt0, Mgmt1 (unused) & Console
  – 1 Expansion Bay
• Cisco UCS 6140XP
  – 2 RU, Redundant Power, Front-to-Back Air Flow
  – 40 x 10 Gbps Ethernet, L1, L2, Mgmt0, Mgmt1 (unused) & Console
  – 2 Expansion Bays
• Expansion Modules
  – N10-E0080: 8 x 4 Gbps FC
  – N10-E0060: 6 x 8 Gbps FC
  – N10-E0440: 4 x 10 Gbps Ethernet + 4 x 4 Gbps FC
  – N10-E0600: 6 x 10 Gbps Ethernet

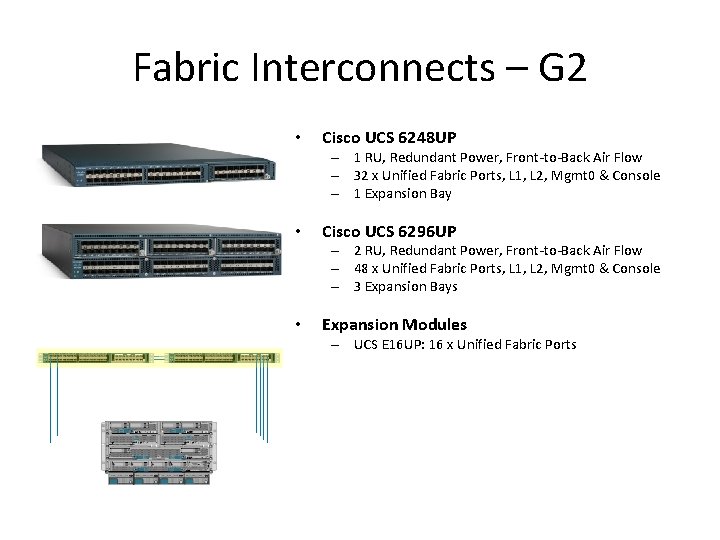

Fabric Interconnects – G2
• Cisco UCS 6248UP
  – 1 RU, Redundant Power, Front-to-Back Air Flow
  – 32 x Unified Fabric Ports, L1, L2, Mgmt0 & Console
  – 1 Expansion Bay
• Cisco UCS 6296UP
  – 2 RU, Redundant Power, Front-to-Back Air Flow
  – 48 x Unified Fabric Ports, L1, L2, Mgmt0 & Console
  – 3 Expansion Bays
• Expansion Modules
  – UCS E16UP: 16 x Unified Fabric Ports

End-Host Virtualization
In its default configuration (End-Host mode), a Fabric Interconnect appears to the network as a server with a bunch of NICs, much like a Hypervisor. It is not a true Ethernet switch!
• Does not participate in Spanning Tree elections. Allows for rapid L2 link establishment and multiple active uplinks.
• Does not learn northbound MAC addresses. Makes intelligent forwarding decisions based on learned (internal server) versus unlearned (external network) MAC addresses.
• Traversing frames are forwarded/dropped based on pinning traffic to interfaces. Déjà vu check, reverse path forwarding check, inter-uplink port blocking and bcast/mcast link election defeat the purpose of Spanning Tree between the FI and the northbound switch.
• Server-to-server in same VLAN is the only locally switched traffic.
(Figure labels: Ethernet Switch Visio Stencil; Fabric Interconnect Visio Stencil. Callout: "You see, Kyle?! Totally different!!!")

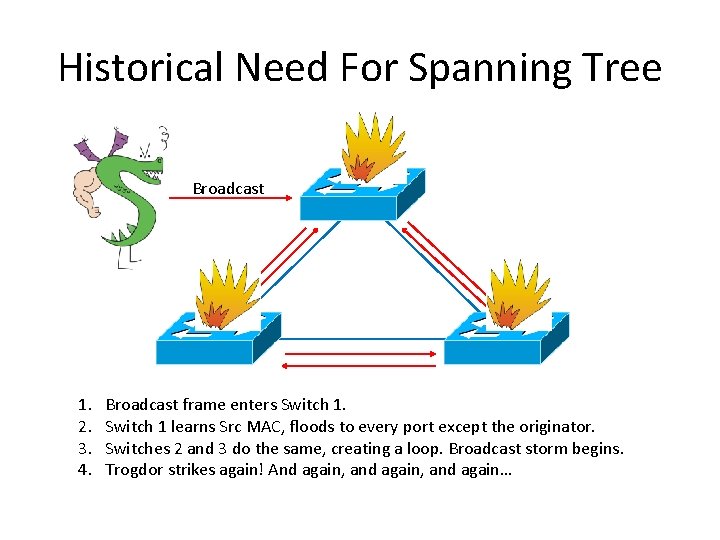

Historical Need For Spanning Tree
1. Broadcast frame enters Switch 1. Learns Src MAC, floods to every port except the originator.
2. Switches 2 and 3 do the same, creating a loop.
3. Broadcast storm begins.
4. Trogdor strikes again! And again, and again…

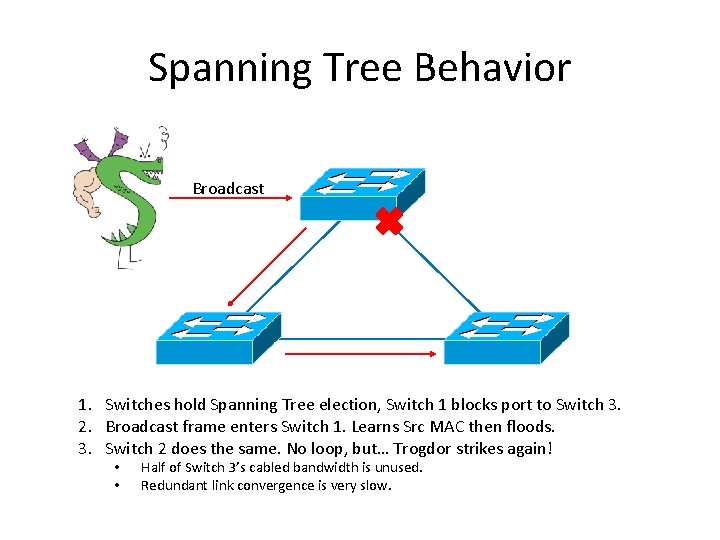

Spanning Tree Behavior
1. Switches hold Spanning Tree election, Switch 1 blocks port to Switch 3.
2. Broadcast frame enters Switch 1. Learns Src MAC then floods.
3. Switch 2 does the same. No loop, but… Trogdor strikes again!
• Half of Switch 3's cabled bandwidth is unused.
• Redundant link convergence is very slow.
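The loop and its Spanning Tree fix can be sketched as a small flooding simulation. This is a toy model rather than switch firmware: the three-switch triangle, the hop cap and the `flood` helper are all assumptions made for illustration.

```python
from collections import deque

def flood(links, start, max_hops=10):
    """Flood one broadcast from `start`; return how many frame copies were sent.

    links: set of frozenset({a, b}) pairs describing active inter-switch links.
    Every switch re-floods a frame out each link except the one it arrived on.
    max_hops caps the run, because a looped topology never stops on its own.
    """
    neighbors = {}
    for link in links:
        a, b = tuple(link)
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    copies = 0
    queue = deque([(start, None, 0)])  # (switch, arrived_from, hops so far)
    while queue:
        switch, arrived_from, hops = queue.popleft()
        if hops == max_hops:
            continue  # stop following this copy once the cap is reached
        for peer in neighbors.get(switch, ()):
            if peer != arrived_from:
                copies += 1
                queue.append((peer, switch, hops + 1))
    return copies

# Hypothetical topology: Switches 1, 2 and 3 cabled in a triangle.
triangle = {frozenset({1, 2}), frozenset({2, 3}), frozenset({1, 3})}
# Spanning Tree blocks the Switch 1 <-> Switch 3 link.
blocked = triangle - {frozenset({1, 3})}

looped_copies = flood(triangle, start=1)
tree_copies = flood(blocked, start=1)
```

With the loop in place the copy count grows with whatever hop cap you choose, which is the broadcast storm. With one link blocked the flood ends after two copies, at the cost of leaving that link idle.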

EHV Bcast/Mcast Behavior
1. Broadcast frame enters Switch 1. Learns Src MAC and floods.
2. Broadcast frame enters Switch 3. Learns Src MAC and floods.
3. FI sees unlearned Bcast on Bcast/Mcast LAN Uplink, forwards to Server Ports.
4. FI sees unlearned Bcast on any other LAN Uplink, drops the frame.
• All LAN Uplinks on FI are active and utilized for pinned MAC addresses.
• MACs dynamically and rapidly re-pin for LAN Uplink state change.

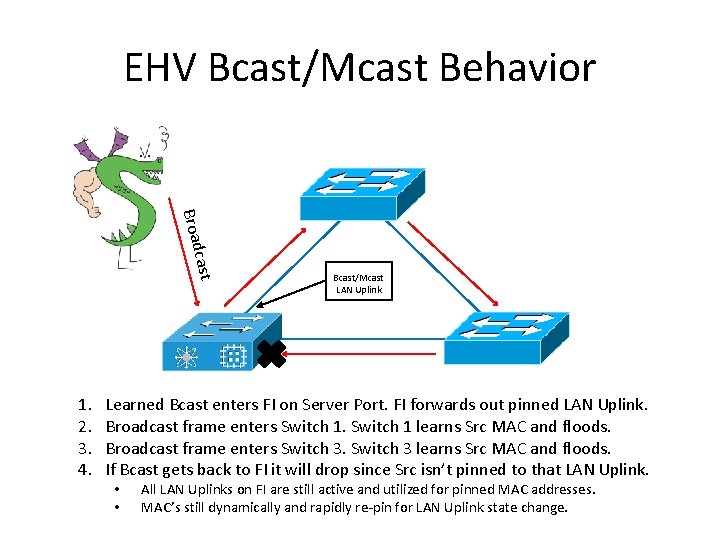

EHV Bcast/Mcast Behavior
1. Learned Bcast enters FI on Server Port. FI forwards out pinned LAN Uplink.
2. Broadcast frame enters Switch 1. Learns Src MAC and floods.
3. Broadcast frame enters Switch 3. Learns Src MAC and floods.
4. If Bcast gets back to FI it will drop since Src isn't pinned to that LAN Uplink.
• All LAN Uplinks on FI are still active and utilized for pinned MAC addresses.
• MACs still dynamically and rapidly re-pin for LAN Uplink state change.
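The uplink behavior described on the two slides above can be approximated in a few lines. This is a simplified sketch, not UCS Manager logic: the `EndHostFI` class, its method names, the MAC addresses and the uplink names are hypothetical, and the re-pin policy (move everything to the first surviving uplink) is an assumption.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"

class EndHostFI:
    """Toy model of a Fabric Interconnect in End-Host mode."""

    def __init__(self, uplinks, bcast_uplink):
        self.uplinks = list(uplinks)
        self.bcast_uplink = bcast_uplink  # the elected Bcast/Mcast LAN Uplink
        self.pinned = {}                  # learned server MAC -> LAN Uplink

    def pin(self, server_mac, uplink):
        self.pinned[server_mac] = uplink

    def from_server(self, src_mac):
        """A frame from a server port always leaves on its pinned uplink."""
        return self.pinned[src_mac]

    def from_uplink(self, src_mac, dst_mac, uplink):
        """Decide what to do with a frame arriving from the network."""
        if src_mac in self.pinned:
            return "drop"  # our own server's frame came back around
        if dst_mac == BROADCAST:
            # Unlearned broadcast: accept on the elected uplink only.
            return "forward" if uplink == self.bcast_uplink else "drop"
        # Unicast: accept only on the uplink the destination is pinned to.
        return "forward" if self.pinned.get(dst_mac) == uplink else "drop"

    def uplink_down(self, failed):
        """Re-pin everything on a failed uplink (assumes one uplink survives)."""
        self.uplinks.remove(failed)
        survivor = self.uplinks[0]
        for mac, uplink in self.pinned.items():
            if uplink == failed:
                self.pinned[mac] = survivor
        if self.bcast_uplink == failed:
            self.bcast_uplink = survivor

fi = EndHostFI(uplinks=["uplink-1", "uplink-2"], bcast_uplink="uplink-1")
fi.pin("00:25:b5:00:00:0a", "uplink-2")
```

Because every decision comes from the pinning table, no uplink has to be blocked to stay loop-free, which is why all of them can carry traffic at once.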

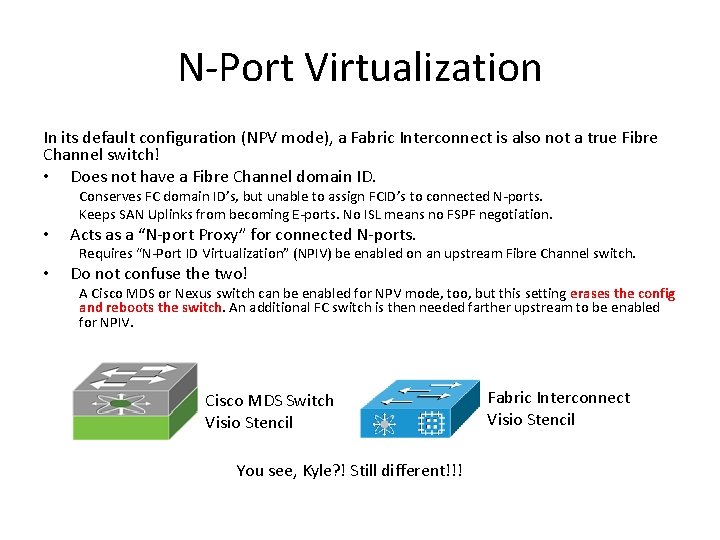

N-Port Virtualization
In its default configuration (NPV mode), a Fabric Interconnect is also not a true Fibre Channel switch!
• Does not have a Fibre Channel domain ID. Conserves FC domain IDs, but unable to assign FCIDs to connected N-ports. Keeps SAN Uplinks from becoming E-ports. No ISL means no FSPF negotiation.
• Acts as an "N-port Proxy" for connected N-ports. Requires "N-Port ID Virtualization" (NPIV) be enabled on an upstream Fibre Channel switch.
• Do not confuse the two! A Cisco MDS or Nexus switch can be enabled for NPV mode, too, but this setting erases the config and reboots the switch. An additional FC switch is then needed farther upstream to be enabled for NPIV.
(Figure labels: Cisco MDS Switch Visio Stencil; Fabric Interconnect Visio Stencil. Callout: "You see, Kyle?! Still different!!!")

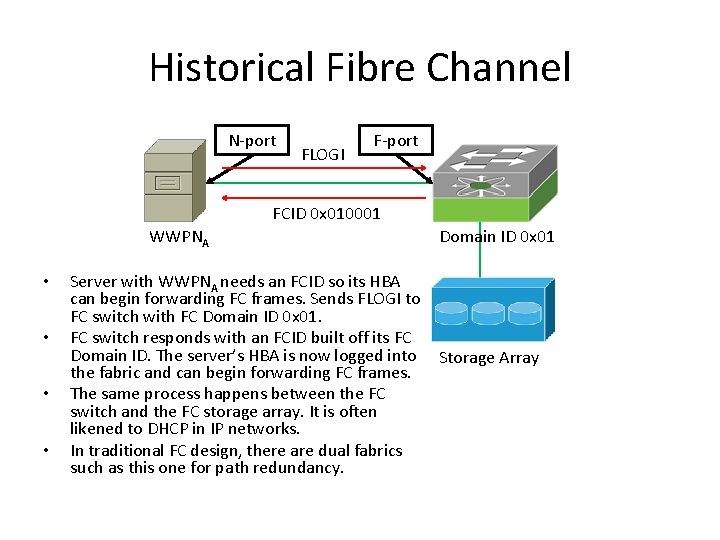

Historical Fibre Channel
• Server with WWPN A needs an FCID so its HBA can begin forwarding FC frames. Sends FLOGI to FC switch with FC Domain ID 0x01. FC switch responds with an FCID built off its FC Domain ID. The server's HBA is now logged into the fabric and can begin forwarding FC frames.
• The same process happens between the FC switch and the FC storage array. It is often likened to DHCP in IP networks. In traditional FC design, there are dual fabrics such as this one for path redundancy.
(Figure labels: N-port; F-port; FLOGI; FCID 0x010001; WWPN A; Domain ID 0x01; Storage Array)
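To see how the FCID in the figure is "built off" the Domain ID, it helps to split the 24-bit address into its three one-byte fields (Domain, Area, Port). The helper name `split_fcid` is made up for this sketch.

```python
def split_fcid(fcid):
    """Split a 24-bit Fibre Channel ID into (domain, area, port) bytes."""
    domain = (fcid >> 16) & 0xFF  # top byte: the switch's Domain ID
    area = (fcid >> 8) & 0xFF     # middle byte
    port = fcid & 0xFF            # low byte
    return domain, area, port

domain, area, port = split_fcid(0x010001)
```

For the FCID shown on the slide, the top byte comes out as 0x01, matching the switch's Domain ID.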

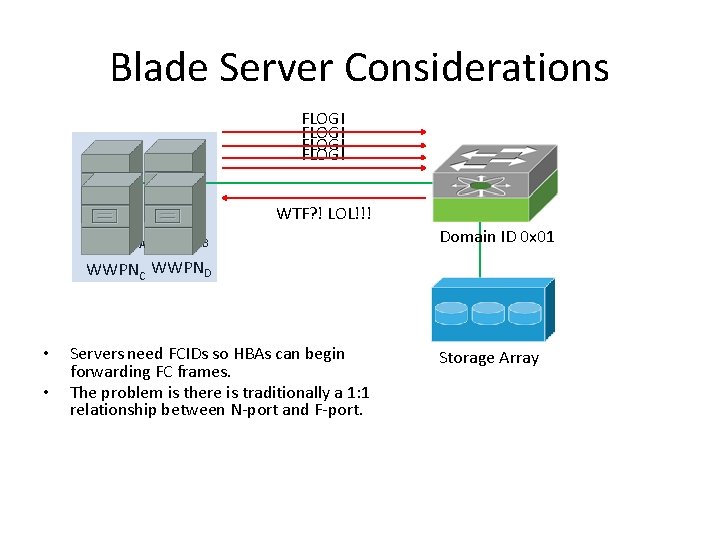

Blade Server Considerations
• Servers need FCIDs so HBAs can begin forwarding FC frames.
• The problem is there is traditionally a 1:1 relationship between N-port and F-port.
(Figure labels: FLOGI; WWPN A; WWPN B; WWPN C; WWPN D; Domain ID 0x01; Storage Array. Callout: "WTF?! LOL!!!")

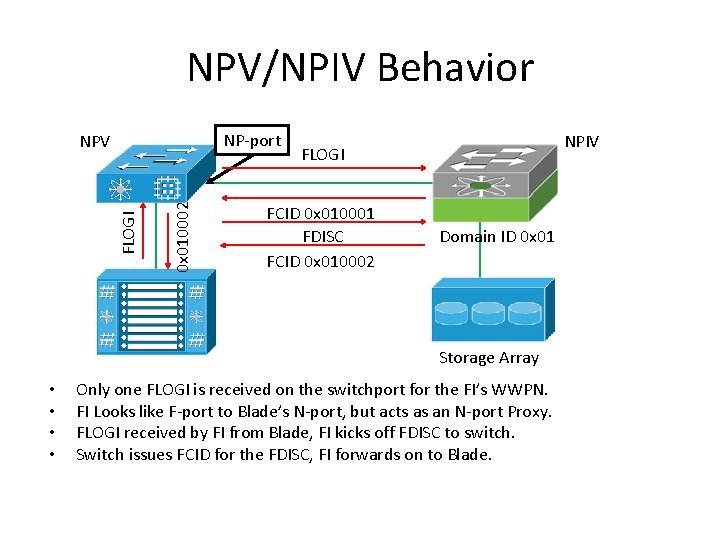

NPV/NPIV Behavior
• Only one FLOGI is received on the switchport for the FI's WWPN. FI looks like F-port to Blade's N-port, but acts as an N-port Proxy.
• FLOGI received by FI from Blade, FI kicks off FDISC to switch. Switch issues FCID for the FDISC, FI forwards on to Blade.
(Figure labels: NP-port; NPV; NPIV; FLOGI; FCID 0x010001; FDISC; FCID 0x010002; Domain ID 0x01; Storage Array)
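The FLOGI-to-FDISC hand-off can be modeled with two small classes. Treat this as a conceptual sketch only: the class names, the WWPN strings and the sequential FCID allocation are assumptions chosen so the output lines up with the FCIDs in the figure.

```python
class NpivSwitch:
    """Upstream FC switch with NPIV enabled; it owns the Domain ID."""

    def __init__(self, domain_id):
        self.domain_id = domain_id
        self.next_port = 1
        self.logins = []  # (login type, WWPN, FCID) in arrival order

    def _assign(self, kind, wwpn):
        # Simplification: area byte stays 0, port byte counts up from 1.
        fcid = (self.domain_id << 16) | self.next_port
        self.next_port += 1
        self.logins.append((kind, wwpn, fcid))
        return fcid

    def flogi(self, wwpn):
        return self._assign("FLOGI", wwpn)

    def fdisc(self, wwpn):
        return self._assign("FDISC", wwpn)


class NpvFabricInterconnect:
    """FI in NPV mode: no Domain ID of its own, proxies blade logins."""

    def __init__(self, wwpn, upstream):
        self.upstream = upstream
        # The only FLOGI the upstream switchport ever sees.
        self.fcid = upstream.flogi(wwpn)

    def flogi_from_blade(self, blade_wwpn):
        # The blade sent a FLOGI; the FI converts it to an FDISC upstream
        # and hands the resulting FCID back to the blade.
        return self.upstream.fdisc(blade_wwpn)


switch = NpivSwitch(domain_id=0x01)
fi = NpvFabricInterconnect(wwpn="fi-wwpn", upstream=switch)
blade_fcid = fi.flogi_from_blade("wwpn-a")
```

Every additional blade login becomes another FDISC on the same uplink, which is how one physical F-port ends up serving many N-ports.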

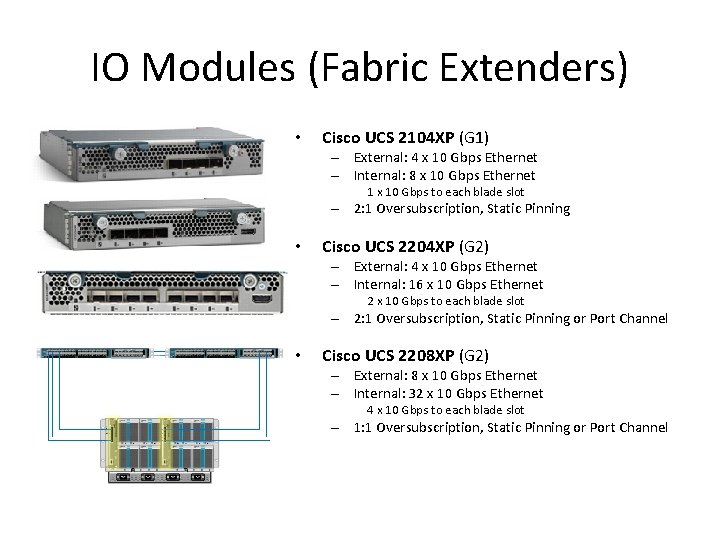

IO Modules (Fabric Extenders)
• Cisco UCS 2104XP (G1)
  – External: 4 x 10 Gbps Ethernet
  – Internal: 8 x 10 Gbps Ethernet, 1 x 10 Gbps to each blade slot
  – 2:1 Oversubscription, Static Pinning
• Cisco UCS 2204XP (G2)
  – External: 4 x 10 Gbps Ethernet
  – Internal: 16 x 10 Gbps Ethernet, 2 x 10 Gbps to each blade slot
  – 2:1 Oversubscription, Static Pinning or Port Channel
• Cisco UCS 2208XP (G2)
  – External: 8 x 10 Gbps Ethernet
  – Internal: 32 x 10 Gbps Ethernet, 4 x 10 Gbps to each blade slot
  – 1:1 Oversubscription, Static Pinning or Port Channel
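The oversubscription figures are easier to read alongside the arithmetic. The sketch below computes two ratios from the port counts listed above. The listed 2:1, 2:1 and 1:1 values match the second calculation, which assumes each of the eight blade slots drives a single 10 Gbps lane; that is a guess at how the slide counted, not something it states. Counting every internal port gives a higher ratio for the G2 modules.

```python
from fractions import Fraction

# Port counts taken from the list above (all ports are 10 Gbps).
IOMS = {
    "2104XP": {"external": 4, "internal": 8},
    "2204XP": {"external": 4, "internal": 16},
    "2208XP": {"external": 8, "internal": 32},
}
BLADE_SLOTS = 8  # half-width slots in one chassis

def port_ratio(model):
    """Oversubscription if every internal port is counted."""
    iom = IOMS[model]
    return Fraction(iom["internal"], iom["external"])

def one_lane_ratio(model):
    """Oversubscription if each blade slot uses one 10 Gbps lane (assumption)."""
    return Fraction(BLADE_SLOTS, IOMS[model]["external"])

summary = {m: (port_ratio(m), one_lane_ratio(m)) for m in IOMS}
```

Either way, the ratio only applies when every external port is cabled to the FI; cabling fewer uplinks raises it.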

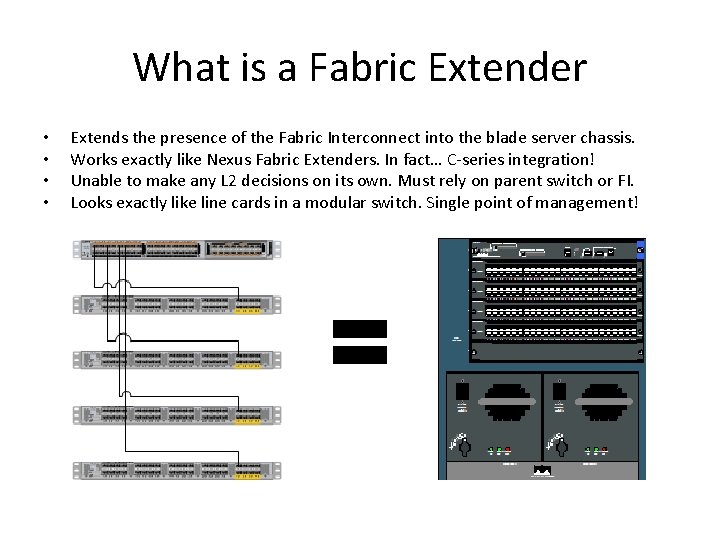

What is a Fabric Extender?
• Extends the presence of the Fabric Interconnect into the blade server chassis.
• Works exactly like Nexus Fabric Extenders. In fact… C-series integration!
• Unable to make any L2 decisions on its own. Must rely on parent switch or FI.
• Looks exactly like line cards in a modular switch. Single point of management!

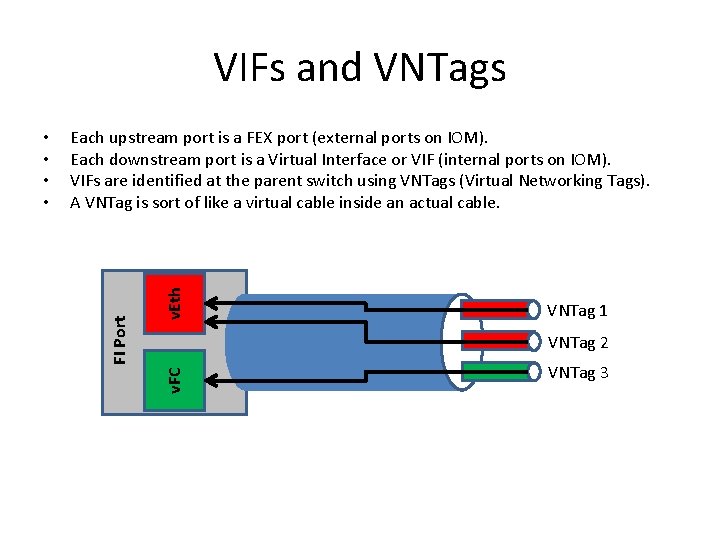

VIFs and VNTags
• Each upstream port is a FEX port (external ports on IOM). Each downstream port is a Virtual Interface or VIF (internal ports on IOM).
• VIFs are identified at the parent switch using VNTags (Virtual Networking Tags). A VNTag is sort of like a virtual cable inside an actual cable.
(Figure labels: vEth; vFC; FI Port; VNTag 1; VNTag 2; VNTag 3)
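The "virtual cable inside an actual cable" idea can be illustrated with a toy demultiplexer. This is purely conceptual: it does not reproduce the real VNTag header layout, and the class names, tag numbers and interface names are hypothetical.

```python
class ParentSwitch:
    """Holds all forwarding state; the Fabric Extender holds none."""

    def __init__(self):
        self.vif_by_tag = {}  # tag -> logical interface on the parent

    def register(self, tag, interface):
        self.vif_by_tag[tag] = interface

    def receive(self, tagged_frame):
        tag, payload = tagged_frame
        # The tag alone tells the parent which virtual interface this is.
        return self.vif_by_tag[tag], payload


class FabricExtender:
    """Makes no L2 decision: tags each frame and sends it upstream."""

    def __init__(self, parent):
        self.parent = parent

    def from_vif(self, tag, payload):
        return self.parent.receive((tag, payload))


fi = ParentSwitch()
fi.register(1, "vEth-example-1")
fi.register(2, "vEth-example-2")
fi.register(3, "vFC-example-1")

iom = FabricExtender(fi)
interface, payload = iom.from_vif(3, b"fc-frame")
```

Several tagged flows share one physical FEX port, yet each arrives at the parent as if it had its own dedicated cable.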

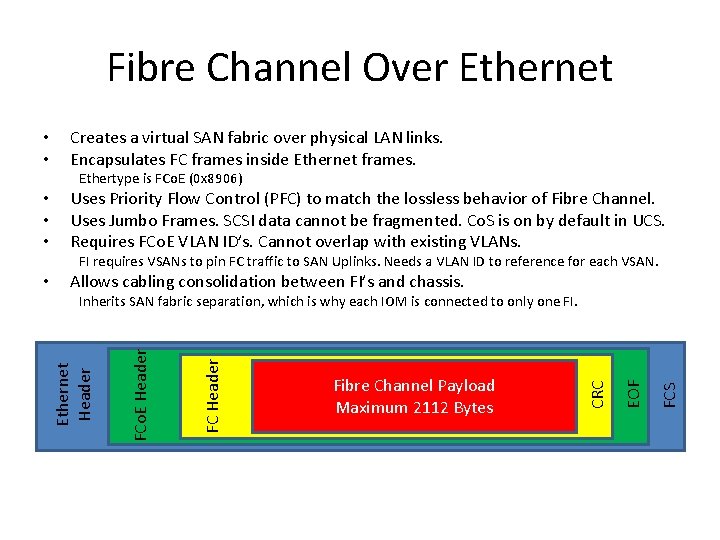

Fibre Channel Over Ethernet
• Creates a virtual SAN fabric over physical LAN links.
• Encapsulates FC frames inside Ethernet frames. Ethertype is FCoE (0x8906).
• Uses Priority Flow Control (PFC) to match the lossless behavior of Fibre Channel.
• Uses Jumbo Frames. SCSI data cannot be fragmented. CoS is on by default in UCS.
• Requires FCoE VLAN IDs. Cannot overlap with existing VLANs. FI requires VSANs to pin FC traffic to SAN Uplinks. Needs a VLAN ID to reference for each VSAN.
• Allows cabling consolidation between FIs and chassis.
• Inherits SAN fabric separation, which is why each IOM is connected to only one FI.
(Figure: frame layout, outermost first: Ethernet Header | FCoE Header | FC Header | Fibre Channel Payload, Maximum 2112 Bytes | CRC | EOF | FCS)
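A quick byte count shows why jumbo frames are needed. Only the 2112-byte payload limit comes from the slide; the other field sizes are the commonly cited FCoE framing values and should be read as assumptions to check against the standard, as should the 802.1Q tag included here.

```python
# Field sizes in bytes. Only FC_PAYLOAD_MAX comes from the slide;
# the rest are commonly cited values (assumptions for this sketch).
ETHERNET_HEADER = 14   # destination MAC, source MAC, Ethertype 0x8906
VLAN_TAG = 4           # 802.1Q tag carrying the FCoE VLAN and CoS
FCOE_HEADER = 14       # version, reserved bits, start-of-frame
FC_HEADER = 24
FC_PAYLOAD_MAX = 2112
FC_CRC = 4
FCOE_TRAILER = 4       # end-of-frame plus reserved padding
ETHERNET_FCS = 4

STANDARD_MAX_FRAME = 1522  # 1500-byte MTU plus header, VLAN tag and FCS

def max_fcoe_frame():
    """Largest FCoE frame on the wire under the sizes assumed above."""
    return (ETHERNET_HEADER + VLAN_TAG + FCOE_HEADER + FC_HEADER
            + FC_PAYLOAD_MAX + FC_CRC + FCOE_TRAILER + ETHERNET_FCS)

needs_jumbo = max_fcoe_frame() > STANDARD_MAX_FRAME
```

Since a full-size FC frame cannot be fragmented, every link that carries FCoE has to accept the whole encapsulated frame in one piece.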

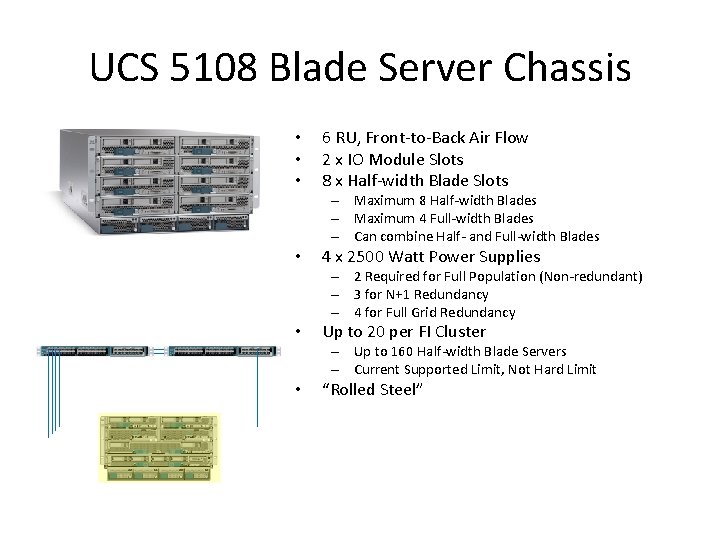

UCS 5108 Blade Server Chassis
• 6 RU, Front-to-Back Air Flow
• 2 x IO Module Slots
• 8 x Half-width Blade Slots
  – Maximum 8 Half-width Blades
  – Maximum 4 Full-width Blades
  – Can combine Half- and Full-width Blades
• 4 x 2500 Watt Power Supplies
  – 2 Required for Full Population (Non-redundant)
  – 3 for N+1 Redundancy
  – 4 for Full Grid Redundancy
• Up to 20 per FI Cluster
  – Up to 160 Half-width Blade Servers
  – Current Supported Limit, Not Hard Limit
• "Rolled Steel"

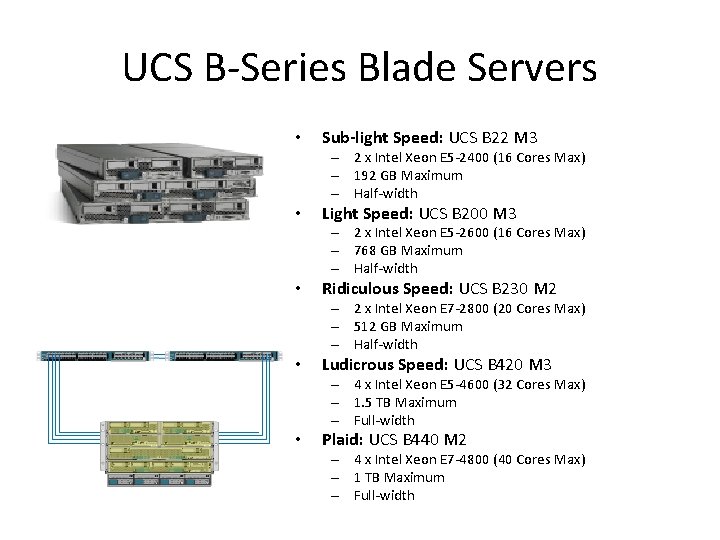

UCS B-Series Blade Servers
• Sub-light Speed: UCS B22 M3
  – 2 x Intel Xeon E5-2400 (16 Cores Max)
  – 192 GB Maximum
  – Half-width
• Light Speed: UCS B200 M3
  – 2 x Intel Xeon E5-2600 (16 Cores Max)
  – 768 GB Maximum
  – Half-width
• Ridiculous Speed: UCS B230 M2
  – 2 x Intel Xeon E7-2800 (20 Cores Max)
  – 512 GB Maximum
  – Half-width
• Ludicrous Speed: UCS B420 M3
  – 4 x Intel Xeon E5-4600 (32 Cores Max)
  – 1.5 TB Maximum
  – Full-width
• Plaid: UCS B440 M2
  – 4 x Intel Xeon E7-4800 (40 Cores Max)
  – 1 TB Maximum
  – Full-width

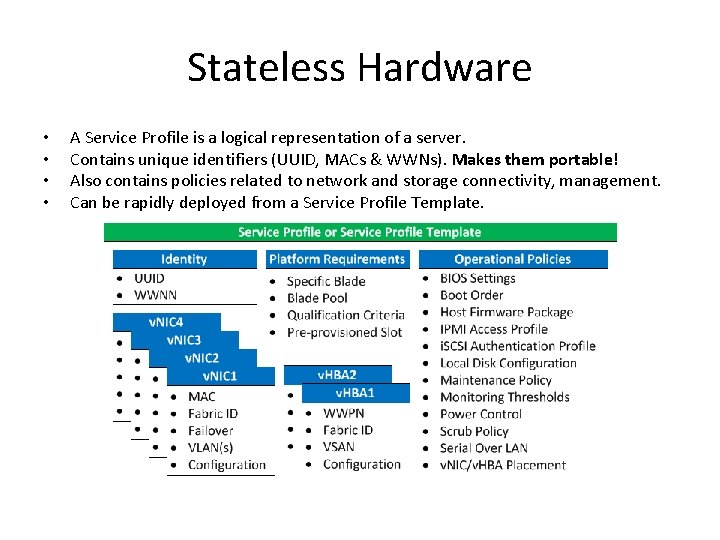

Stateless Hardware
• A Service Profile is a logical representation of a server.
• Contains unique identifiers (UUID, MACs & WWNs). Makes them portable!
• Also contains policies related to network and storage connectivity, management.
• Can be rapidly deployed from a Service Profile Template.
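One way to picture a Service Profile is as a plain data object that carries identity and policy, with the blade as an attribute that can change. The classes, field names, policy keys and slot labels below are illustrative assumptions, not the UCS Manager object model.

```python
from dataclasses import dataclass, field
from typing import Optional
from uuid import uuid4

@dataclass
class ServiceProfile:
    """Identity and policy for a server, independent of any blade."""
    name: str
    uuid: str
    macs: list
    wwns: list
    policies: dict = field(default_factory=dict)
    blade: Optional[str] = None  # slot currently associated, if any

@dataclass
class ServiceProfileTemplate:
    """Shared policies from which profiles are stamped out."""
    policies: dict

    def instantiate(self, name, mac, wwn):
        return ServiceProfile(
            name=name,
            uuid=str(uuid4()),
            macs=[mac],
            wwns=[wwn],
            policies=dict(self.policies),  # each profile gets its own copy
        )

def associate(profile, blade):
    """Move the profile (and with it the identity) onto a blade."""
    profile.blade = blade
    return profile

template = ServiceProfileTemplate(policies={"boot": "san", "vlan": 100})
web01 = template.instantiate("web01", "00:25:b5:00:00:01", "20:00:00:25:b5:00:00:01")
identity_before = (web01.uuid, tuple(web01.macs), tuple(web01.wwns))

associate(web01, "chassis-1/slot-3")
associate(web01, "chassis-2/slot-5")  # e.g. after a hardware failure
identity_after = (web01.uuid, tuple(web01.macs), tuple(web01.wwns))
```

The identifiers travel with the profile rather than the hardware, so the network and SAN see the same server after it moves to a different blade.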

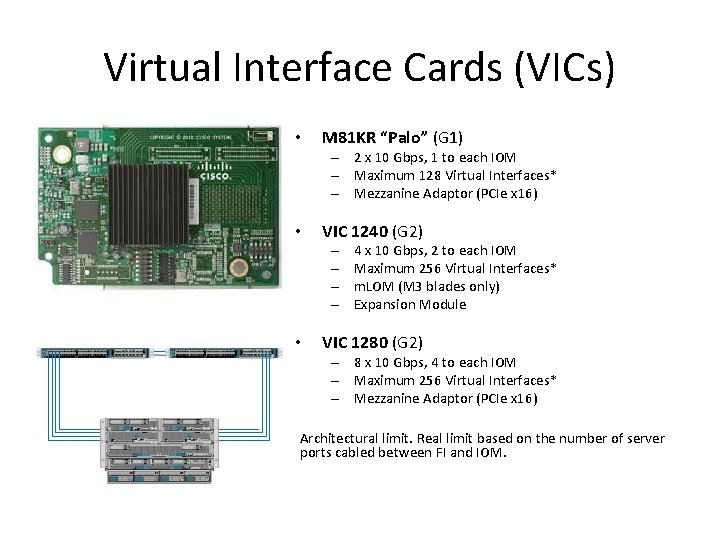

Virtual Interface Cards (VICs)
• M81KR "Palo" (G1)
  – 2 x 10 Gbps, 1 to each IOM
  – Maximum 128 Virtual Interfaces*
  – Mezzanine Adaptor (PCIe x16)
• VIC 1240 (G2)
  – 4 x 10 Gbps, 2 to each IOM
  – Maximum 256 Virtual Interfaces*
  – mLOM (M3 blades only) Expansion Module
• VIC 1280 (G2)
  – 8 x 10 Gbps, 4 to each IOM
  – Maximum 256 Virtual Interfaces*
  – Mezzanine Adaptor (PCIe x16)
* Architectural limit. Real limit based on the number of server ports cabled between FI and IOM.

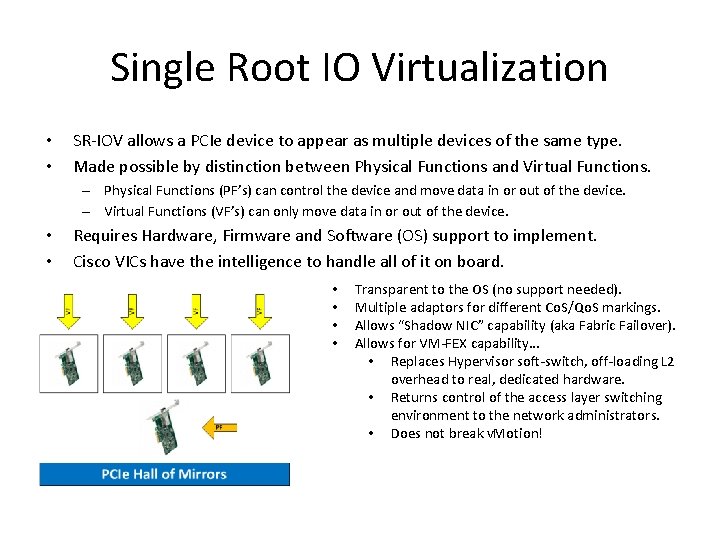

Single Root IO Virtualization
• SR-IOV allows a PCIe device to appear as multiple devices of the same type.
• Made possible by distinction between Physical Functions and Virtual Functions.
  – Physical Functions (PFs) can control the device and move data in or out of the device.
  – Virtual Functions (VFs) can only move data in or out of the device.
• Requires Hardware, Firmware and Software (OS) support to implement.
• Cisco VICs have the intelligence to handle all of it on board.
  – Transparent to the OS (no support needed).
  – Multiple adaptors for different CoS/QoS markings.
  – Allows "Shadow NIC" capability (aka Fabric Failover).
  – Allows for VM-FEX capability…
    • Replaces Hypervisor soft-switch, off-loading L2 overhead to real, dedicated hardware.
    • Returns control of the access layer switching environment to the network administrators.
    • Does not break vMotion!

Cable Less, Scale Rapidly
• 2 Fabric Interconnects
• 1 Chassis, 8 Blades, 2 Cables
• 2 Chassis, 16 Blades, 4 Cables
• 3 Chassis, 24 Blades, 6 Cables
• 4 Chassis, 32 Blades, 8 Cables
• 5 Chassis, 40 Blades, 10 Cables
• 6 Chassis, 48 Blades, 12 Cables
• 13 Chassis, 104 Blades, 26 Cables
• 20 Chassis, 160 Blades, 40 Cables
Assumes bandwidth requirement of 2 x 10 Gbps to each UCS 5108.
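The table above follows a simple rule: eight blades per chassis, and one 10 Gbps cable from each of the two IOMs. A short sketch, with hypothetical names, reproduces it and lets you try other link counts.

```python
BLADES_PER_CHASSIS = 8   # half-width blades in a UCS 5108
IOMS_PER_CHASSIS = 2     # one IOM per fabric
MAX_CHASSIS = 20         # supported limit per FI cluster, per the slides

def scale(chassis, links_per_iom=1):
    """Blade and cable counts for a number of fully populated chassis."""
    if not 1 <= chassis <= MAX_CHASSIS:
        raise ValueError("chassis count must be between 1 and 20")
    return {
        "blades": chassis * BLADES_PER_CHASSIS,
        "cables": chassis * IOMS_PER_CHASSIS * links_per_iom,
    }

rows = [scale(n) for n in (1, 2, 3, 4, 5, 6, 13, 20)]
```

Raising `links_per_iom` models a chassis that needs more than 2 x 10 Gbps; the blade count stays the same while the cable count multiplies.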

Single Point of Management
The pair of Fabric Interconnects represents a single UCS Manager domain. Every connected physical blade server chassis is actually a module in a single logical blade server chassis. Every component in this larger, logical chassis is configured, operated and monitored via the pair of Fabric Interconnects. Their Mgmt0 ports and the Cluster IP address must be in the same broadcast domain.

High Availability
The Fabric Interconnects negotiate high availability for the cluster IP address of the UCS Manager domain through a primary-subordinate election over their L1 and L2 ports (known as cluster links) and through the connected chassis (a failsafe mechanism to prevent management split brain if the cluster links are lost). The dual fabric, active-active design provides LAN and SAN connectivity to every blade in every chassis even if one of the Fabric Interconnects malfunctions. HA for UCS Manager is unavailable until the first connected chassis is acknowledged.

Questions & Answers
Contact Information:
shicks@gdt.com
(214) 857-6281
http://www.linkedin.com/in/seanphicks
