CHEP 2000 -- Padova

PetaByte Storage Facility at RHIC
Razvan Popescu - Brookhaven National Laboratory

Who are we?
- Relativistic Heavy-Ion Collider @ BNL
  - Four experiments: Phenix, Star, Phobos, Brahms.
  - 1.5 PB per year.
  - ~500 MB/sec.
  - >20,000 SpecInt95.
- Startup in May 2000 at 50% capacity and ramp up to nominal parameters in 1 year.

Overview
- Data Types:
  - Raw: very large volume (1.2 PB/yr), average bandwidth (50 MB/s).
  - DST: average volume (500 TB), large bandwidth (200 MB/s).
  - mDST: low volume (<100 TB), large bandwidth (400 MB/s).
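As a rough cross-check of the figures above, a yearly volume can be converted into the sustained rate it implies. This is a minimal sketch, assuming decimal units (1 PB = 10^15 bytes) and continuous year-round running, neither of which the slides state; the function name is made up for illustration.

```python
# Assumption: decimal units and a full 365-day year of continuous data taking.
SECONDS_PER_YEAR = 365 * 24 * 3600

def average_rate_mb_s(volume_pb_per_year: float) -> float:
    """Sustained rate (MB/s) needed to write the given yearly volume."""
    bytes_per_year = volume_pb_per_year * 1e15
    return bytes_per_year / SECONDS_PER_YEAR / 1e6

raw_rate = average_rate_mb_s(1.2)    # raw data volume quoted on the slide
total_rate = average_rate_mb_s(1.5)  # total yearly volume quoted earlier

print(f"raw:   {raw_rate:.1f} MB/s")
print(f"total: {total_rate:.1f} MB/s")
```

Under these assumptions the raw stream averages roughly 38 MB/s, which sits below the 50 MB/s raw bandwidth quoted on the slide, as one would expect if the accelerator does not run all year.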

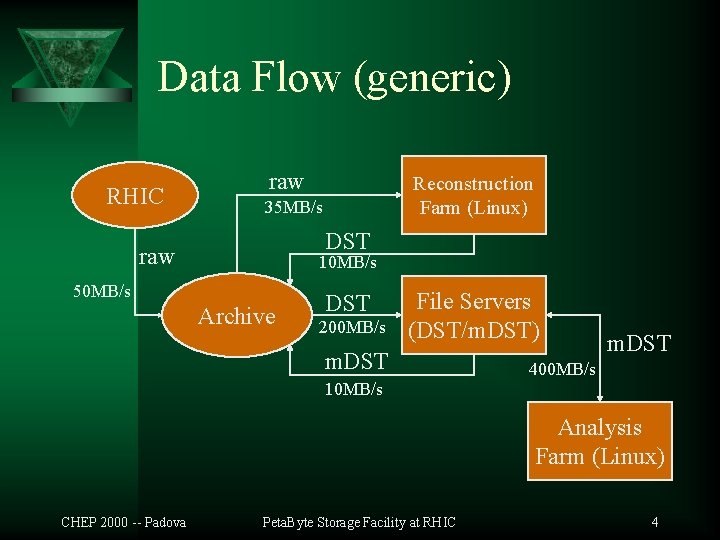

Data Flow (generic)
Diagram labels: RHIC; raw; Reconstruction Farm (Linux); 35 MB/s; DST; raw; 10 MB/s; 50 MB/s; Archive; DST; 200 MB/s; File Servers (DST/mDST); mDST; 400 MB/s; 10 MB/s; Analysis Farm (Linux).

The Data Store
- HPSS (ver. 4.1.1 patch level 2)
  - Deployed in 1998.
  - After overcoming some growing pains we consider the present implementation successful.
  - One major/total reconfiguration to adapt to new hardware (and to our improved understanding of the system).
  - Flexible enough for our needs. One thing missing: a preemptable priority scheme.
  - Very high performance.

The HPSS Archive
- Constraints - large capacity & high bandwidth:
  - Two types of tape technology: SD-3 (best $/GB) & 9840 (best $/MB/s).
  - Two tape layer hierarchies. Easy management of the migration.
- Reliable and fast disk storage:
  - FC attached RAID disk.
- Platform compatible with HPSS:
  - IBM, SUN, SGI.

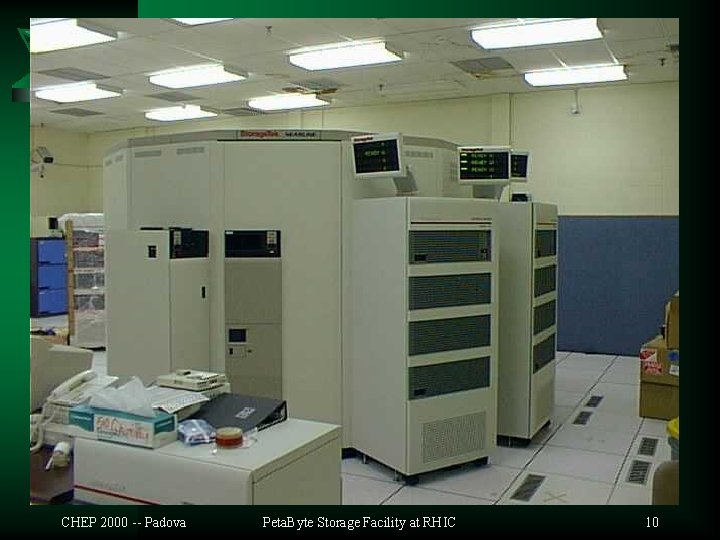

Present Resources
- Tape Storage:
  - (1) STK Powderhorn silo (6000 cart.)
  - (11) SD-3 (Redwood) drives.
  - (10) 9840 (Eagle) drives.
- Disk Storage:
  - ~8 TB of RAID disk.
    - 1 TB for HPSS cache.
    - 7 TB Unix workspace.
- Servers:
  - (5) RS/6000 H50/70 for HPSS.
  - (6) E450 & E4000 for file serving and data mining.

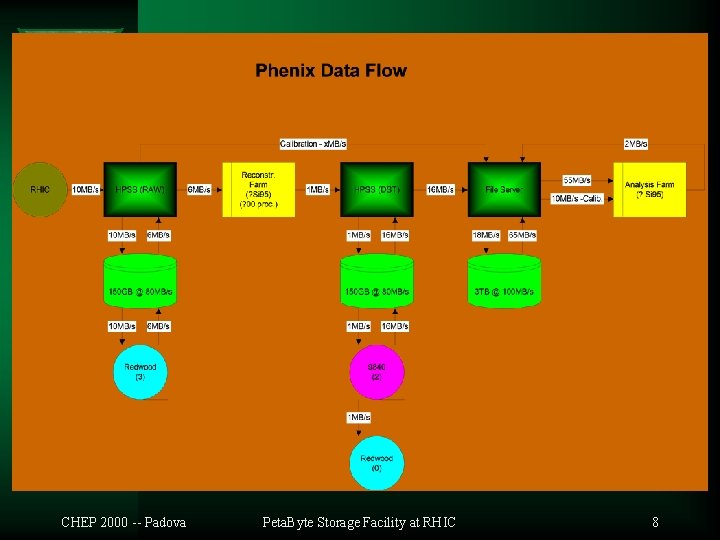


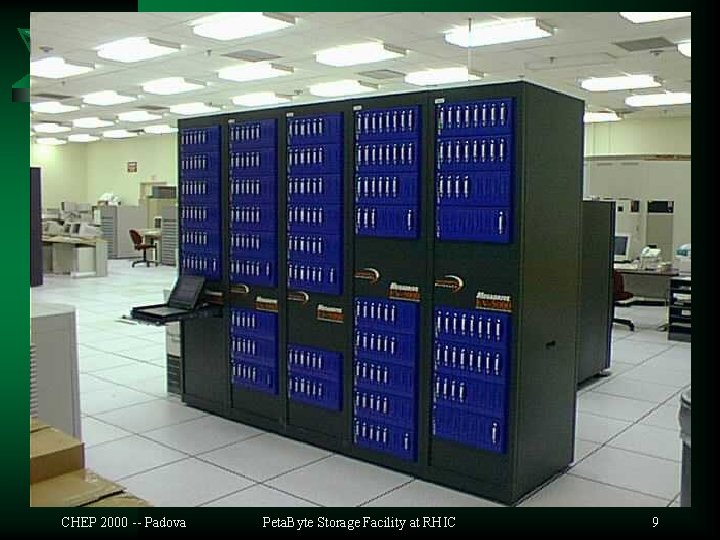



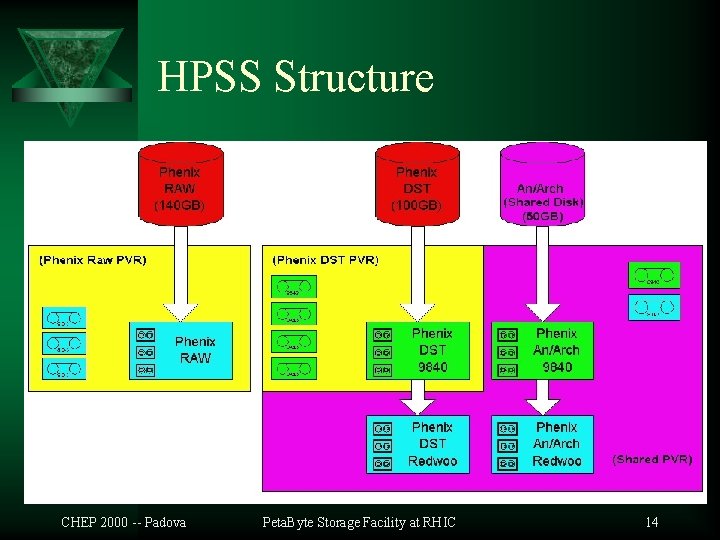

HPSS Structure
- (1) Core Server:
  - RS/6000 Model H50
  - 4 x CPU
  - 2 GB RAM
  - Fast Ethernet (control)
  - OS mirrored storage for metadata (6 pv.)

HPSS Structure
- (3) Movers:
  - RS/6000 Model H70
  - 4 x CPU
  - 1 GB RAM
  - Fast Ethernet (control)
  - Gigabit Ethernet (data) (1500 & 9000 MTU)
  - 2 x FC attached RAID - 300 GB - disk cache
  - (3-4) SD-3 "Redwood" tape transports
  - (3-4) 9840 "Eagle" tape transports

HPSS Structure
- Guarantee availability of resources for a specific user group -> separate resources -> separate PVRs & movers. One mover per user group -> total exposure to single-machine failure.
- Guarantee availability of resources for the Data Acquisition stream -> separate hierarchies. Result: 2 PVR & 2 COS & 1 Mvr per group.

HPSS Structure

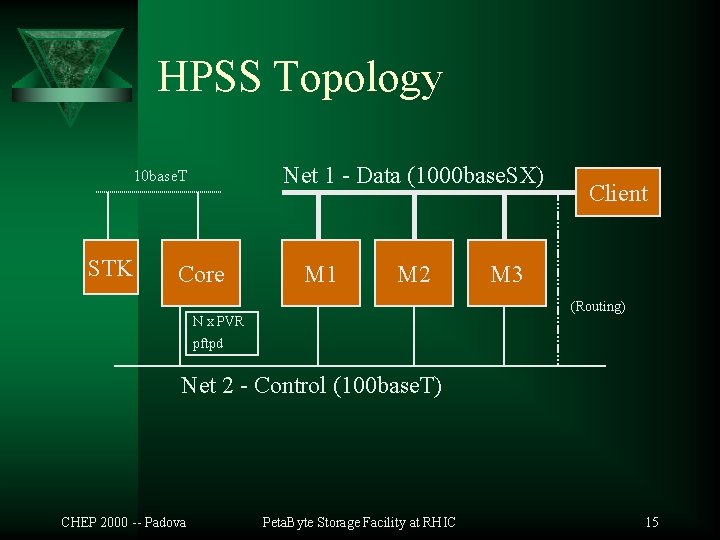

HPSS Topology
Diagram labels: Net 1 - Data (1000baseSX); 10baseT; STK; Core; M1; M2; Client; M3; (Routing); N x PVR; pftpd; Net 2 - Control (100baseT).

HPSS Performance
- 80 MB/sec for the disk subsystem.
- ~1 CPU per 40 MB/sec for TCPIP Gbit traffic @ 1500 MTU or 90 MB/sec @ 9000 MTU.
- >9 MB/sec per SD-3 transport.
- ~10 MB/sec per 9840 transport.
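The per-transport rates above make it easy to size the tape side of a mover. The following is a minimal sketch, assuming the quoted rates add linearly across drives (the slides do not say whether they do); the 50 MB/s target is the raw-data bandwidth from the Overview slide, and the function names are hypothetical.

```python
import math

# Per-transport rates quoted on the slide (MB/s); the SD-3 figure is a lower bound.
SD3_MB_S = 9.0
T9840_MB_S = 10.0

def transports_needed(target_mb_s: float, per_drive_mb_s: float) -> int:
    """Smallest number of drives whose combined streaming rate meets the target."""
    return math.ceil(target_mb_s / per_drive_mb_s)

def mover_tape_rate(n_sd3: int, n_9840: int) -> float:
    """Aggregate tape rate (MB/s) of one mover, assuming rates add linearly."""
    return n_sd3 * SD3_MB_S + n_9840 * T9840_MB_S

print(transports_needed(50, SD3_MB_S))    # 6 SD-3 drives for a 50 MB/s stream
print(transports_needed(50, T9840_MB_S))  # 5 9840 drives for the same stream
print(mover_tape_rate(4, 4))              # 76.0 MB/s with 4 drives of each type
```

With four drives of each type, one mover's aggregate tape rate comes out close to the 80 MB/sec quoted for the disk subsystem.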

I/O Intensive Systems
- Mining and Analysis systems.
- High I/O & moderate CPU usage.
- To avoid large network traffic merge file servers with HPSS movers:
  - Major problem with HPSS support on non-AIX platforms.
  - Several (Sun) SMP machines or Large (SGI) Modular System.

Problems
- Short lifecycle of the SD-3 heads.
  - ~500 hours < 2 months @ average usage. (6 of 10 drives in 10 months).
  - Built a monitoring tool to try to predict transport failure (based on soft error frequency).
- Low throughput interface (F/W) for SD-3: high slot consumption.
- SD-3 production discontinued?!
- 9840 ???
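The slides give no details of the monitoring tool, so the following is only an illustrative sketch of the general idea: track soft errors per hour of use for each transport over a sliding window and flag drives whose rate exceeds a threshold. The class name, window size, threshold, and drive labels are all hypothetical and not taken from the talk.

```python
from collections import deque

class SoftErrorMonitor:
    """Hypothetical sliding-window monitor of soft errors per hour of drive use."""

    def __init__(self, window: int = 5, threshold: float = 3.0):
        self.window = window        # number of recent samples kept per drive
        self.threshold = threshold  # soft errors per hour that marks a suspect
        self.samples = {}           # drive name -> deque of (errors, hours)

    def record(self, drive: str, soft_errors: int, hours_in_use: float) -> None:
        """Store one sample; the oldest sample drops out once the window is full."""
        queue = self.samples.setdefault(drive, deque(maxlen=self.window))
        queue.append((soft_errors, hours_in_use))

    def error_rate(self, drive: str) -> float:
        """Soft errors per hour over the retained samples (0.0 if no usage)."""
        queue = self.samples.get(drive)
        if not queue:
            return 0.0
        errors = sum(e for e, _ in queue)
        hours = sum(h for _, h in queue)
        return errors / hours if hours > 0 else 0.0

    def suspects(self) -> list:
        """Drives whose recent error rate exceeds the threshold, sorted by name."""
        return sorted(d for d in self.samples if self.error_rate(d) > self.threshold)

monitor = SoftErrorMonitor(window=3, threshold=2.0)
monitor.record("sd3-01", soft_errors=1, hours_in_use=10.0)
monitor.record("sd3-02", soft_errors=30, hours_in_use=10.0)
print(monitor.suspects())
```

A real tool would need to read the soft error counts from the drive or HPSS logs; that step is left out because the source does not describe it.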

Issues
- Tested the two tape layer hierarchies:
  - Cartridge based migration.
  - Manually scheduled reclaim.
- Work with large files. Preferable ~1 GB. Tolerable >200 MB.
  - Is this true with 9840 tape transports?
- Don't even think about NFS. Wait for DFS/GPFS?
  - We use exclusively pftp.
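One way to see why large files are preferred on tape is a simple fixed-overhead model: every file access pays a constant cost (mount, positioning) before data streams at the drive rate. This is a sketch under stated assumptions, not a measurement from the talk: the 60-second overhead is a made-up illustrative value, and the 9 MB/s streaming rate is the SD-3 lower bound quoted on the performance slide.

```python
def effective_rate_mb_s(file_mb: float,
                        stream_mb_s: float = 9.0,
                        overhead_s: float = 60.0) -> float:
    """Effective rate when each file pays a fixed overhead before streaming.

    overhead_s is a hypothetical per-file cost; the slides give no such figure.
    """
    transfer_s = file_mb / stream_mb_s
    return file_mb / (overhead_s + transfer_s)

for size_mb in (10, 200, 1000):
    print(f"{size_mb:5d} MB -> {effective_rate_mb_s(size_mb):.2f} MB/s")
```

Whatever the true overhead is, the model shows the effective rate rising with file size and approaching the streaming rate only for files whose transfer time dominates the fixed cost.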

Issues
- Guarantee availability of resources for specific user groups:
  - Separate PVRs & movers.
  - Total exposure to single-machine failure!
- Reliability:
  - Distribute resources across movers -> share movers (acceptable?).
  - Inter-mover traffic:
    - 1 CPU per 40 MB/sec TCPIP per adapter: Expensive!!!
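The cost of inter-mover traffic can be put in numbers using the figures from the performance and structure slides. This is a minimal sketch, assuming CPU cost scales linearly with rate, that "1 CPU per 90 MB/sec" is the intended reading of the 9000 MTU figure, and that each mover has the 4 CPUs listed for the H70; the function name is hypothetical.

```python
# MB/s of TCP/IP Gigabit traffic that one CPU can drive, keyed by MTU,
# as read from the performance slide (the 9000 MTU value is an interpretation).
MB_S_PER_CPU = {1500: 40.0, 9000: 90.0}

def cpu_fraction(rate_mb_s: float, mtu: int, cpus_per_mover: int = 4) -> float:
    """Fraction of one mover's CPUs consumed by TCP/IP traffic at the given rate."""
    cpus_used = rate_mb_s / MB_S_PER_CPU[mtu]
    return cpus_used / cpus_per_mover

# Forwarding the full 80 MB/s disk-subsystem rate to another mover:
print(cpu_fraction(80, 1500))  # 0.5 of the mover at 1500 MTU
print(round(cpu_fraction(80, 9000), 3))
```

Because the cost is quoted per adapter, the same fraction would be spent again on the receiving mover, which is why the slide calls inter-mover traffic expensive.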

Inter-Mover Traffic Solutions
- Affinity.
  - Limited applicability.
- Diskless hierarchies (not for DFS/GPFS).
  - Not for SD-3. Not enough tests on 9840.
- High performance networking: SP switch. (This is your friend.)
  - IBM only.
- Lighter protocol: HIPPI.
  - Expensive hardware.
- Multiply attached storage (SAN). Most promising! See STK's talk. Requires HPSS modifications.

Summary
- HPSS works for us.
- Buy an SP2 and the SP switch.
  - Simplified admin. Fast interconnect. Ready for GPFS.
- Keep an eye on STK's SAN/RAIT.
- Avoid SD-3. (not a risk anymore)
- Avoid small file access. At least for the moment.

Thank you!
Razvan Popescu
popescu@bnl.gov
