CHEP 2000, Padova, Feb 2000, David R. Quarrie

Operational Experience with the BABAR Database

David R. Quarrie
Lawrence Berkeley National Laboratory
for the BABAR Computing Group
DRQuarrie@LBL.GOV

Acknowledgements

D. Quarrie (5), T. Adye (6), A. Adesanya (7), J-N. Albert (4), J. Becla (7), D. Brown (5), C. Bulfon (3), I. Gaponenko (5), S. Gowdy (5), A. Hanushevsky (7), A. Hasan (7), Y. Kolomensky (2), S. Mukhortov (1), S. Patton (5), G. Svarovski (1), A. Trunov (7), G. Zioulas (7), for the BABAR Computing Group

- (1) Budker Institute of Nuclear Physics, Russia
- (2) California Institute of Technology, USA
- (3) INFN, Rome, Italy
- (4) Lab de l'Accelerateur Lineaire, France
- (5) Lawrence Berkeley National Laboratory, USA
- (6) Rutherford Appleton Laboratory, UK
- (7) Stanford Linear Accelerator Center, USA

Introduction

- Many other talks describe other aspects of the BABAR Database
  - A. Adesanya, An interactive browser for BABAR databases
  - J. Becla, Improving Performance of Object Oriented Databases, BABAR Case Studies
  - I. Gaponenko, An Overview of the BABAR Conditions Database
  - A. Hanushevsky, Practical Security in Large-Scale Distributed Object Oriented Databases
  - A. Hanushevsky, Disk Cache Management in Large-Scale Object Oriented Databases
  - E. Leonardi, Distributing Data around the BABAR collaboration's Objectivity Federations
  - S. Patton, Schema migration for BABAR Objectivity Federations
  - G. Zioulas, The BABAR Online Databases
- Focus on some of the operational aspects
  - Lessons learnt during 12 months of production running

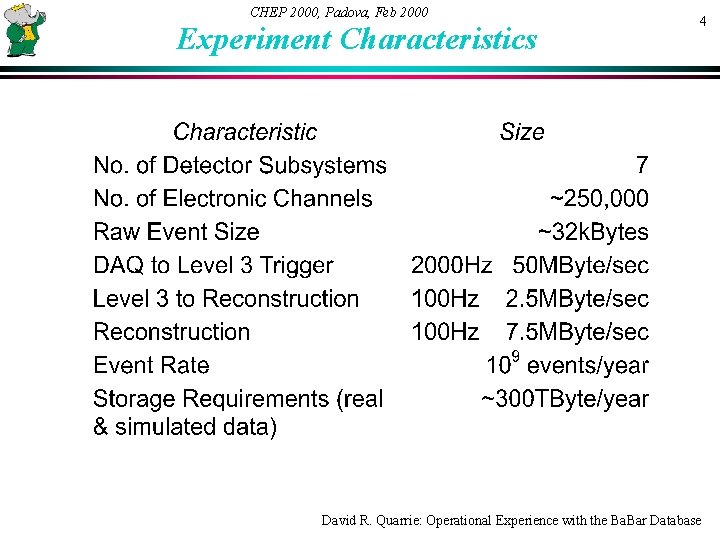

Experiment Characteristics

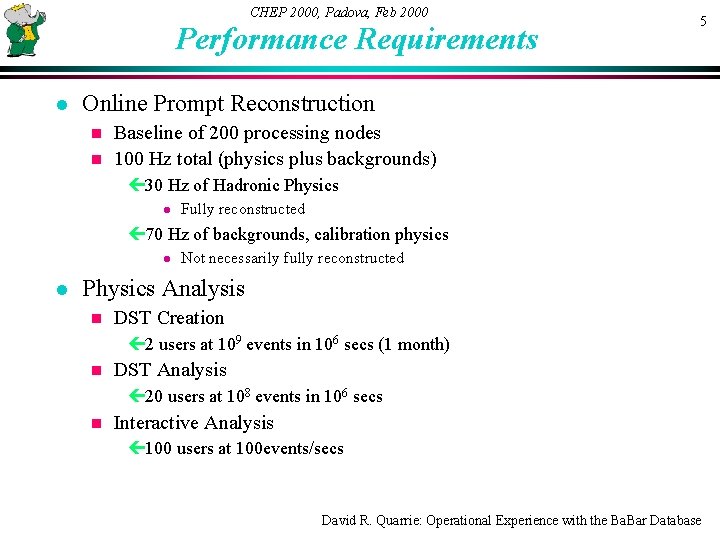

Performance Requirements

- Online Prompt Reconstruction
  - Baseline of 200 processing nodes
  - 100 Hz total (physics plus backgrounds)
    - 30 Hz of hadronic physics
      - Fully reconstructed
    - 70 Hz of backgrounds, calibration physics
      - Not necessarily fully reconstructed
- Physics Analysis
  - DST Creation
    - 2 users at 10^9 events in 10^6 secs (1 month)
  - DST Analysis
    - 20 users at 10^8 events in 10^6 secs
  - Interactive Analysis
    - 100 users at 100 events/sec
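
The aggregate rates implied by these requirements can be worked out with a few lines of arithmetic. This is only a sketch: it assumes the "109", "108" and "106" on the slide are flattened exponents (10^9 events, 10^8 events, 10^6 seconds), and the function and variable names are illustrative, not taken from any BABAR software.

```python
# Aggregate event rates implied by the stated requirements.
# Assumption: the slide's "109"/"108"/"106" mean 10^9, 10^8 and 10^6.

def aggregate_rate_hz(users, events_per_user, seconds):
    """Total events per second when all users run concurrently."""
    return users * events_per_user / seconds

dst_creation_hz = aggregate_rate_hz(2, 1e9, 1e6)    # 2 users, 10^9 events each
dst_analysis_hz = aggregate_rate_hz(20, 1e8, 1e6)   # 20 users, 10^8 events each
interactive_hz = 100 * 100                          # 100 users at 100 events/sec

# Online Prompt Reconstruction: 100 Hz input shared across 200 nodes.
opr_per_node_hz = 100 / 200
opr_seconds_per_event = 1 / opr_per_node_hz

print(dst_creation_hz, dst_analysis_hz, interactive_hz, opr_seconds_per_event)
```

Under that reading, DST creation and DST analysis each amount to the same aggregate read rate, and each reconstruction node has a budget of a couple of seconds per event at the design input rate.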

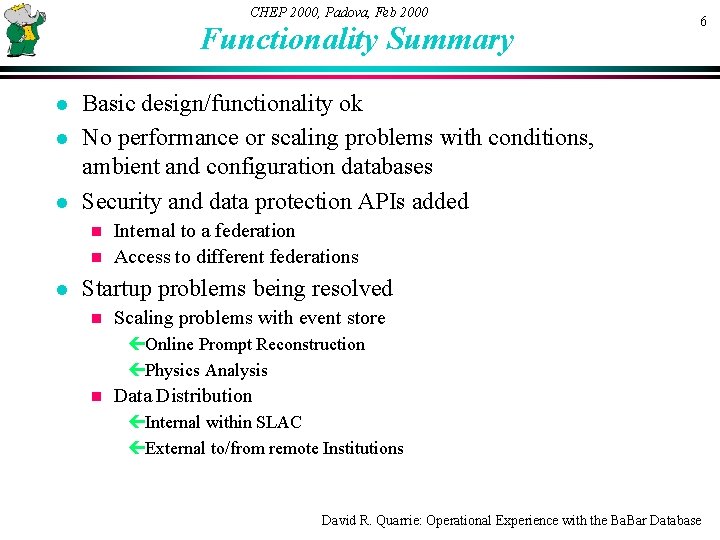

Functionality Summary

- Basic design/functionality ok
- No performance or scaling problems with conditions, ambient and configuration databases
- Security and data protection APIs added
  - Internal to a federation
  - Access to different federations
- Startup problems being resolved
  - Scaling problems with event store
    - Online Prompt Reconstruction
    - Physics Analysis
  - Data Distribution
    - Internal within SLAC
    - External to/from remote institutions

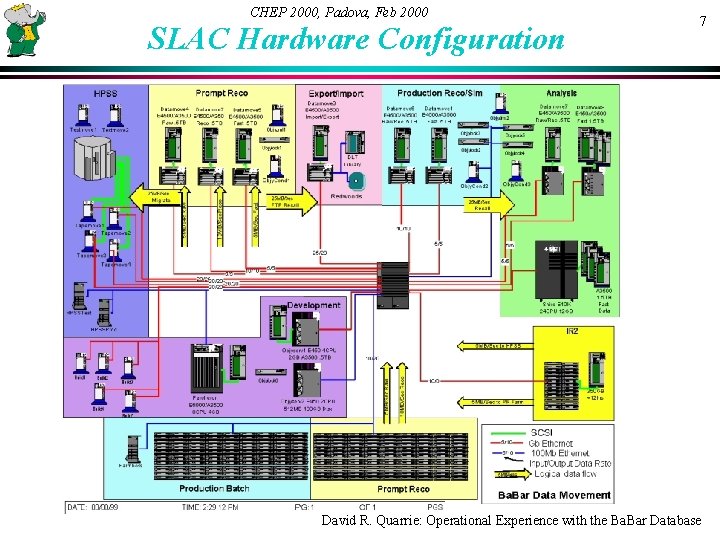

SLAC Hardware Configuration

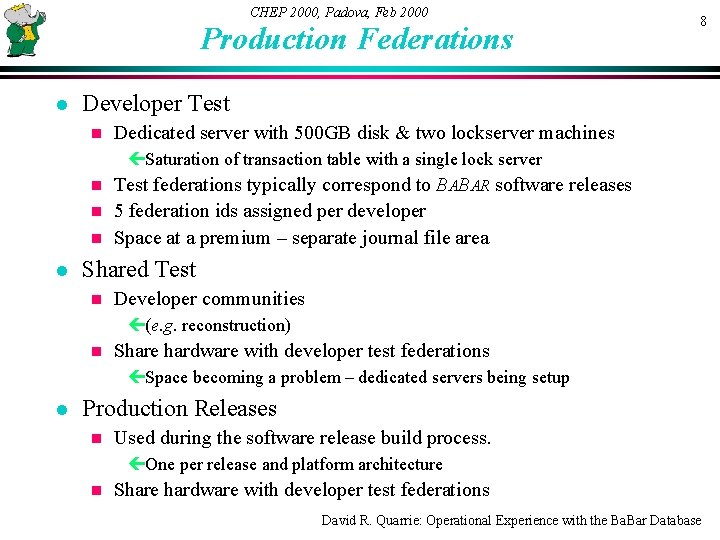

Production Federations

- Developer Test
  - Dedicated server with 500 GB disk & two lockserver machines
    - Saturation of transaction table with a single lock server
  - Test federations typically correspond to BABAR software releases
  - 5 federation ids assigned per developer
  - Space at a premium; separate journal file area
- Shared Test
  - Developer communities
    - (e.g. reconstruction)
  - Share hardware with developer test federations
    - Space becoming a problem; dedicated servers being set up
- Production Releases
  - Used during the software release build process
    - One per release and platform architecture
  - Share hardware with developer test federations

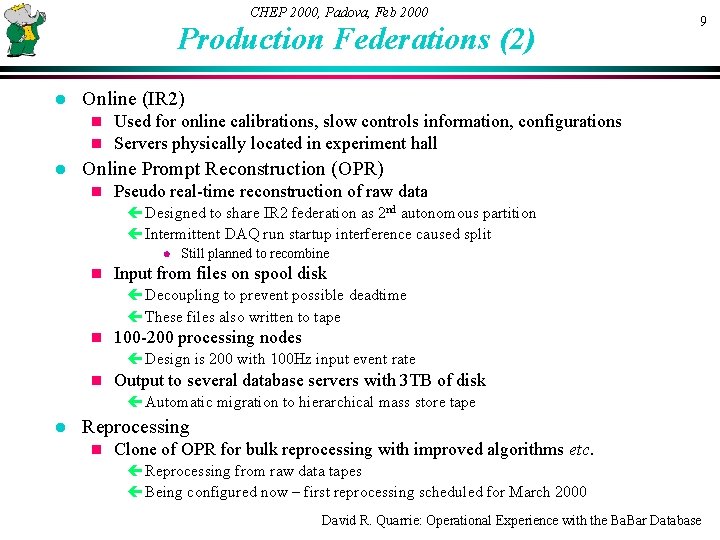

Production Federations (2)

- Online (IR2)
  - Used for online calibrations, slow controls information, configurations
  - Servers physically located in experiment hall
- Online Prompt Reconstruction (OPR)
  - Pseudo real-time reconstruction of raw data
    - Designed to share IR2 federation as 2nd autonomous partition
    - Intermittent DAQ run startup interference caused split
      - Still planned to recombine
  - Input from files on spool disk
    - Decoupling to prevent possible deadtime
    - These files also written to tape
  - 100-200 processing nodes
    - Design is 200 with 100 Hz input event rate
  - Output to several database servers with 3 TB of disk
    - Automatic migration to hierarchical mass store tape
- Reprocessing
  - Clone of OPR for bulk reprocessing with improved algorithms etc.
    - Reprocessing from raw data tapes
    - Being configured now; first reprocessing scheduled for March 2000

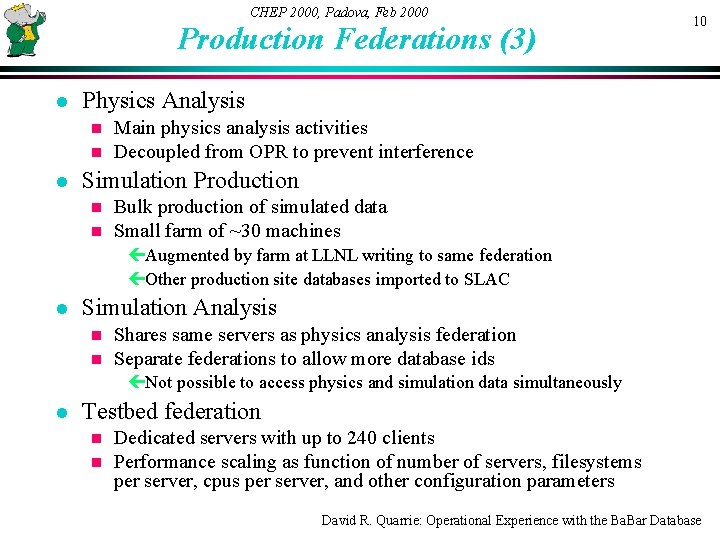

Production Federations (3)

- Physics Analysis
  - Main physics analysis activities
  - Decoupled from OPR to prevent interference
- Simulation Production
  - Bulk production of simulated data
  - Small farm of ~30 machines
    - Augmented by farm at LLNL writing to same federation
    - Other production site databases imported to SLAC
- Simulation Analysis
  - Shares same servers as physics analysis federation
  - Separate federations to allow more database ids
    - Not possible to access physics and simulation data simultaneously
- Testbed federation
  - Dedicated servers with up to 240 clients
  - Performance scaling as function of number of servers, filesystems per server, cpus per server, and other configuration parameters

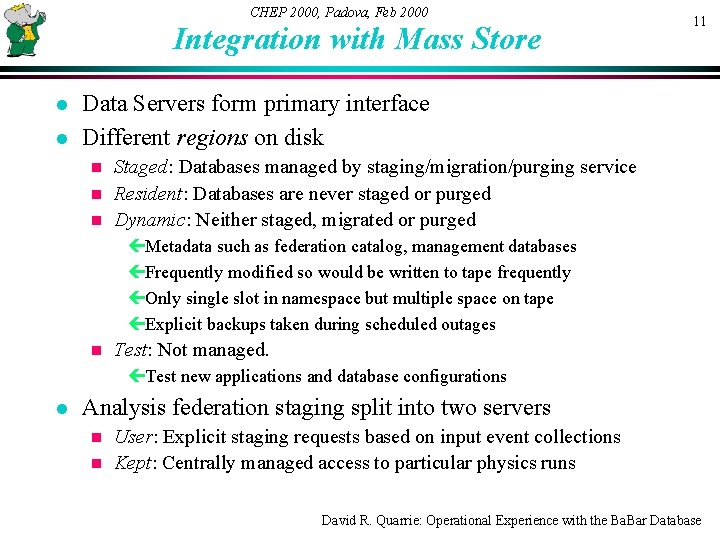

Integration with Mass Store

- Data Servers form primary interface
- Different regions on disk
  - Staged: Databases managed by staging/migration/purging service
  - Resident: Databases are never staged or purged
  - Dynamic: Neither staged, migrated nor purged
    - Metadata such as federation catalog, management databases
    - Frequently modified so would be written to tape frequently
    - Only single slot in namespace but multiple space on tape
    - Explicit backups taken during scheduled outages
  - Test: Not managed
    - Test new applications and database configurations
- Analysis federation staging split into two servers
  - User: Explicit staging requests based on input event collections
  - Kept: Centrally managed access to particular physics runs
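
The four disk regions can be summarised as a small policy table. The sketch below is illustrative only: the class, field and function names are hypothetical, and the `migrated=True` setting for the Resident region is an assumption inferred from the contrast with the Dynamic region, since the slide only says Resident databases are never staged or purged.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RegionPolicy:
    staged: bool    # may be read back from tape onto disk
    migrated: bool  # copied to tape by the automatic service
    purged: bool    # may be removed from disk to free space

# Hypothetical encoding of the regions named on the slide.
REGIONS = {
    "staged":   RegionPolicy(staged=True,  migrated=True,  purged=True),
    "resident": RegionPolicy(staged=False, migrated=True,  purged=False),  # migrated: assumption
    "dynamic":  RegionPolicy(staged=False, migrated=False, purged=False),
    "test":     RegionPolicy(staged=False, migrated=False, purged=False),  # not managed at all
}

def regions_not_written_to_tape(regions):
    """Names of regions the automatic migration service never touches."""
    return sorted(name for name, policy in regions.items() if not policy.migrated)

print(regions_not_written_to_tape(REGIONS))
```

Listing the unmigrated regions makes it clear why the Dynamic region relies on explicit backups during scheduled outages.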

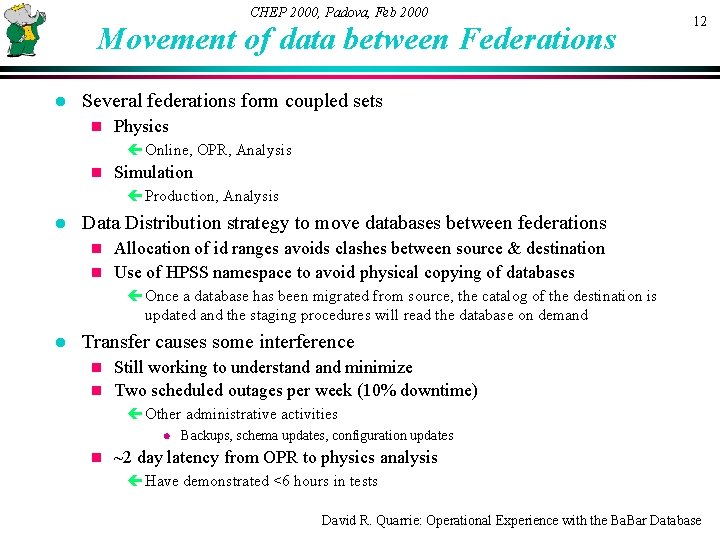

Movement of data between Federations

- Several federations form coupled sets
  - Physics
    - Online, OPR, Analysis
  - Simulation
    - Production, Analysis
- Data Distribution strategy to move databases between federations
  - Allocation of id ranges avoids clashes between source & destination
  - Use of HPSS namespace to avoid physical copying of databases
    - Once a database has been migrated from source, the catalog of the destination is updated and the staging procedures will read the database on demand
- Transfer causes some interference
  - Still working to understand and minimize
  - Two scheduled outages per week (10% downtime)
    - Other administrative activities
      - Backups, schema updates, configuration updates
  - ~2 day latency from OPR to physics analysis
    - Have demonstrated <6 hours in tests
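
The idea of allocating id ranges so that a database keeps a unique id when it moves between coupled federations can be sketched as follows. The federation names, block size and helper functions are hypothetical and chosen only for illustration; the actual BABAR allocation scheme is not described in these slides.

```python
from itertools import combinations

def allocate_id_ranges(federations, block_size, first_id=1):
    """Give each federation its own contiguous, inclusive block of database ids."""
    ranges = {}
    start = first_id
    for name in federations:
        ranges[name] = (start, start + block_size - 1)
        start += block_size
    return ranges

def ranges_overlap(a, b):
    """True if two inclusive (low, high) ranges share at least one id."""
    return a[0] <= b[1] and b[0] <= a[1]

def owner_of(db_id, ranges):
    """Federation whose block contains db_id, or None if unallocated."""
    for name, (low, high) in ranges.items():
        if low <= db_id <= high:
            return name
    return None

# Hypothetical coupled set: ids created in one federation never clash in another.
ranges = allocate_id_ranges(["online", "opr", "analysis"], block_size=10000)
clashes = [(a, b) for a, b in combinations(ranges, 2)
           if ranges_overlap(ranges[a], ranges[b])]
print(ranges, clashes)
```

Because the blocks are disjoint, a database created in the source federation can be attached to the destination catalog without renumbering.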

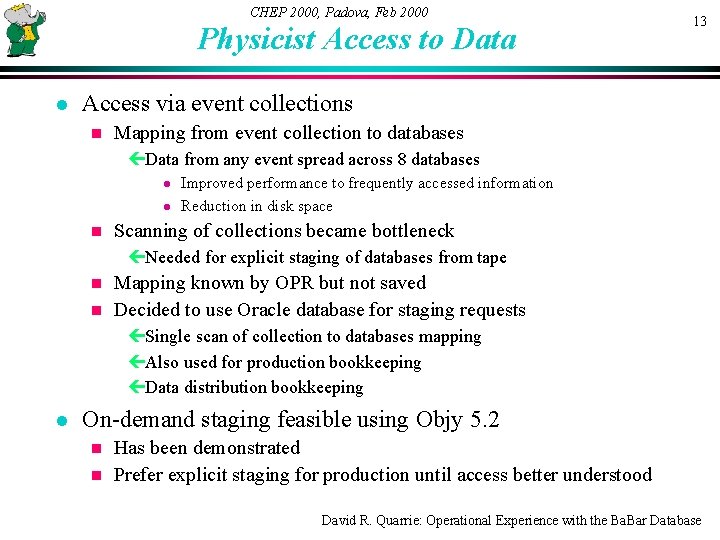

Physicist Access to Data

- Access via event collections
  - Mapping from event collection to databases
    - Data from any event spread across 8 databases
      - Improved performance to frequently accessed information
      - Reduction in disk space
  - Scanning of collections became bottleneck
    - Needed for explicit staging of databases from tape
  - Mapping known by OPR but not saved
  - Decided to use Oracle database for staging requests
    - Single scan of collection to databases mapping
    - Also used for production bookkeeping
    - Data distribution bookkeeping
- On-demand staging feasible using Objy 5.2
  - Has been demonstrated
  - Prefer explicit staging for production until access better understood
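
The benefit of scanning a collection once and saving the collection-to-databases mapping in a relational table can be illustrated with a small sketch. It uses Python's built-in `sqlite3` as a stand-in for the Oracle database mentioned above; the table layout, function names and database names are all hypothetical.

```python
import sqlite3

def record_mapping(conn, collection, event_databases):
    """Scan a collection once and store the distinct databases it touches."""
    distinct = sorted({db for dbs in event_databases for db in dbs})
    conn.executemany(
        "INSERT OR IGNORE INTO collection_db (collection, db_name) VALUES (?, ?)",
        [(collection, db) for db in distinct],
    )
    conn.commit()

def staging_request(conn, collection):
    """Look up the databases to stage without rescanning the collection."""
    rows = conn.execute(
        "SELECT db_name FROM collection_db WHERE collection = ? ORDER BY db_name",
        (collection,),
    )
    return [row[0] for row in rows]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE collection_db ("
    "collection TEXT, db_name TEXT, PRIMARY KEY (collection, db_name))"
)

# Hypothetical collection: each event refers to several databases.
events = [("db_tag_01", "db_aod_01"), ("db_tag_01", "db_aod_02")]
record_mapping(conn, "run1234_hadrons", events)
print(staging_request(conn, "run1234_hadrons"))
```

After the single scan, every later staging request for the same collection is a table lookup rather than another pass over the events.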

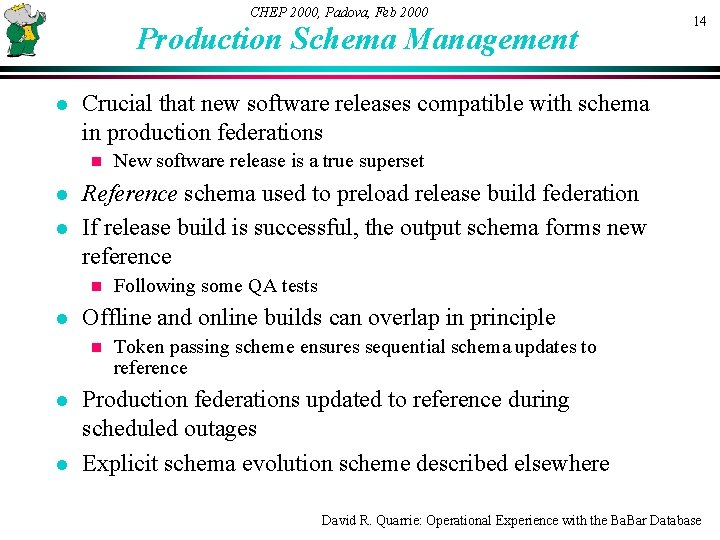

Production Schema Management

- Crucial that new software releases are compatible with schema in production federations
  - New software release is a true superset
- Reference schema used to preload release build federation
- If release build is successful, the output schema forms new reference
  - Following some QA tests
- Offline and online builds can overlap in principle
  - Token passing scheme ensures sequential schema updates to reference
- Production federations updated to reference during scheduled outages
- Explicit schema evolution scheme described elsewhere
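
The two rules on this slide, that a release's schema must be a true superset of the reference and that updates to the reference are serialised by a token, can be sketched as below. The slides do not describe how the token scheme is implemented, so the class, method names and schema representation (a set of class-name/version pairs) are assumptions made purely for illustration.

```python
def is_superset(candidate, reference):
    """True if every entry of the reference schema is also in the candidate."""
    return set(reference) <= set(candidate)

class ReferenceSchema:
    """Hypothetical reference schema guarded by a single update token."""

    def __init__(self, classes):
        self.classes = frozenset(classes)
        self.token_holder = None

    def acquire(self, build):
        """Hand the token to a build; refuse if another build holds it."""
        if self.token_holder is not None:
            return False
        self.token_holder = build
        return True

    def publish(self, build, output_schema):
        """Replace the reference if the build's schema is a superset, then release."""
        if self.token_holder != build:
            raise RuntimeError("build does not hold the schema token")
        accepted = is_superset(output_schema, self.classes)
        if accepted:
            self.classes = frozenset(output_schema)
        self.token_holder = None
        return accepted

reference = ReferenceSchema({("EventHeader", 1), ("Track", 1)})
offline_got_token = reference.acquire("offline-build")
online_got_token = reference.acquire("online-build")  # must wait for the token
offline_accepted = reference.publish(
    "offline-build", {("EventHeader", 1), ("Track", 1), ("Cluster", 1)}
)
print(offline_got_token, online_got_token, offline_accepted)
```

Overlapping builds can therefore proceed in parallel, but only one at a time can turn its output schema into the new reference.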

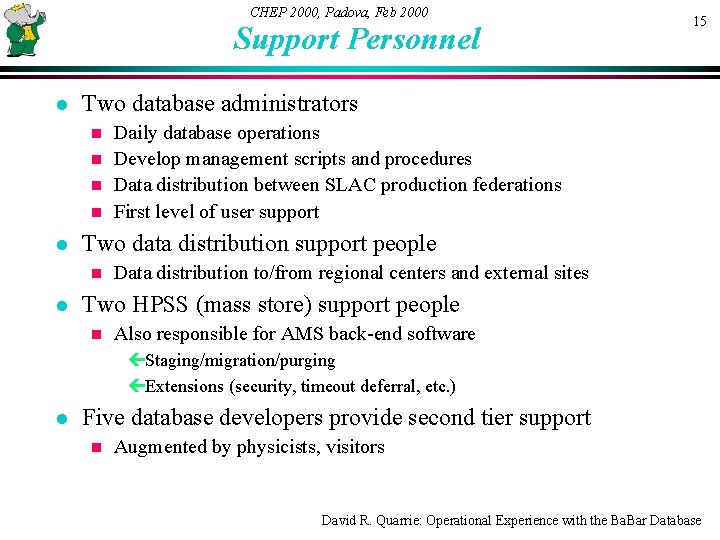

Support Personnel

- Two database administrators
  - Daily database operations
  - Develop management scripts and procedures
  - Data distribution between SLAC production federations
  - First level of user support
- Two data distribution support people
  - Data distribution to/from regional centers and external sites
- Two HPSS (mass store) support people
  - Also responsible for AMS back-end software
    - Staging/migration/purging
    - Extensions (security, timeout deferral, etc.)
- Five database developers provide second tier support
  - Augmented by physicists, visitors

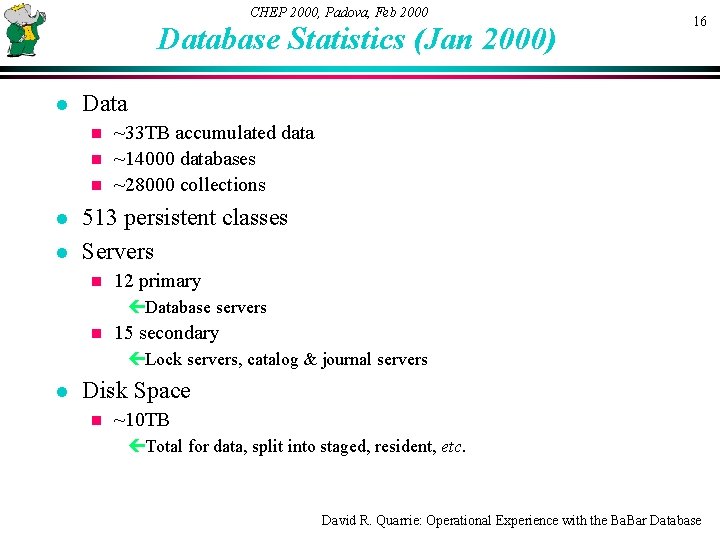

Database Statistics (Jan 2000)

- Data
  - ~33 TB accumulated data
  - ~14000 databases
  - ~28000 collections
- 513 persistent classes
- Servers
  - 12 primary
    - Database servers
  - 15 secondary
    - Lock servers, catalog & journal servers
- Disk Space
  - ~10 TB
    - Total for data, split into staged, resident, etc.

Database Statistics (2)

- Sites
  - >30 sites using Objectivity
    - USA, UK, France, Italy, Germany, Russia
- Users
  - ~655 licensees
    - People who have signed the license agreement
  - ~430 users
    - People who have created a test federation
  - ~90 simultaneous users at SLAC
    - Monitoring distributed oolockmon statistics
  - ~60 developers
    - Have created or modified a persistent class
    - A wide range of expertise
      - 10-15 experts

Ongoing and Future Operational Activities

- Improved performance
  - Both hardware and software improvements
  - Reduced payload per event
  - Design goals almost met
- Improved automation
  - Less burden on the support staff
- Reduced downtime and latency
  - Outage level <10%
  - Latency <6 hours
- Large file handling issues
  - Problems handling 10 GB database files for external distribution
- Better cleanup after problems
- Remerge online and OPR federations

Conclusions

- Basic design and technology ok
  - No killer problems
- Initial performance problems being overcome
  - Ongoing process
  - Design goals almost met
- Still learning how to manage a large system
  - Multiple federations
  - Multiple servers
  - Multiple sites
  - Large user community
  - Large developer community
- Still more to automate
  - Many manual procedures
- More features on the way
  - Multi-dimensional indexing
  - Parallel iteration

- Slides: 19