Chebyshev, Hoeffding, Chernoff

![Histogram 1: X = 2*Bin(300, 1/2) - 300, E[X] = 0](http://slidetodoc.com/presentation_image_h2/61da5519bb7c2c5a4d5c7d9850d4f028/image-2.jpg)

Histogram 1: X = 2*Bin(300, 1/2) - 300, E[X] = 0

![Histogram 2: Y = 2*Bin(30, 1/2) - 30, E[Y] = 0](http://slidetodoc.com/presentation_image_h2/61da5519bb7c2c5a4d5c7d9850d4f028/image-3.jpg)

Histogram 2: Y = 2*Bin(30, 1/2) - 30, E[Y] = 0

![Histogram 3: Z = 4*Bin(10, 1/4) - 10, E[Z] = 0](http://slidetodoc.com/presentation_image_h2/61da5519bb7c2c5a4d5c7d9850d4f028/image-4.jpg)

Histogram 3: Z = 4*Bin(10, 1/4) - 10, E[Z] = 0

![Histogram 4: W = 0, E[W] = 0](http://slidetodoc.com/presentation_image_h2/61da5519bb7c2c5a4d5c7d9850d4f028/image-5.jpg)

Histogram 4: W = 0, E[W] = 0

A natural question:

- Is there a good parameter that allows us to distinguish between these distributions?
- Is there a way to measure the spread?

Variance and Standard Deviation

- The variance of X, denoted by Var(X), is the mean squared deviation of X from its expected value $\mu = E(X)$: $\mathrm{Var}(X) = E[(X-\mu)^2]$.
- The standard deviation of X, denoted by SD(X), is the square root of the variance of X.
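As a rough illustration of how the variance separates the four histograms above, the sketch below computes the mean and variance of X, Y, Z and W exactly from their probability mass functions. The helper names (`binomial_pmf`, `affine`, `mean`, `variance`) are made up for this example and are not part of the slides.

```python
from fractions import Fraction
from math import comb

def binomial_pmf(n, p):
    """Return {k: P(Bin(n, p) = k)} using exact rational arithmetic."""
    return {k: comb(n, k) * p**k * (1 - p)**(n - k) for k in range(n + 1)}

def affine(pmf, scale, shift):
    """Distribution of scale*K + shift when K has the given pmf."""
    return {scale * k + shift: prob for k, prob in pmf.items()}

def mean(pmf):
    return sum(x * prob for x, prob in pmf.items())

def variance(pmf):
    # Definition from the slide: mean squared deviation from the mean.
    mu = mean(pmf)
    return sum((x - mu) ** 2 * prob for x, prob in pmf.items())

half, quarter = Fraction(1, 2), Fraction(1, 4)
histograms = {
    "X": affine(binomial_pmf(300, half), 2, -300),
    "Y": affine(binomial_pmf(30, half), 2, -30),
    "Z": affine(binomial_pmf(10, quarter), 4, -10),
    "W": {0: Fraction(1)},
}

for name, pmf in histograms.items():
    print(name, "mean =", mean(pmf), "variance =", variance(pmf))
```

All four means are 0, while the variances come out as 300, 30, 30 and 0. The variance therefore tells X and W apart from the rest, but not Y from Z.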

![Computational Formula for Variance](http://slidetodoc.com/presentation_image_h2/61da5519bb7c2c5a4d5c7d9850d4f028/image-8.jpg)

Computational Formula for Variance

Claim: $\mathrm{Var}(X) = E[X^2] - E[X]^2$.

Proof: with $\mu = E[X]$,

$$
\begin{aligned}
E[(X-\mu)^2] &= E[X^2 - 2\mu X + \mu^2] \\
&= E[X^2] - 2\mu E[X] + \mu^2 \\
&= E[X^2] - 2\mu^2 + \mu^2 \\
&= E[X^2] - E[X]^2.
\end{aligned}
$$
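A quick numerical check of the computational formula, using a fair six-sided die as an assumed example (the die does not appear in the slides). Both ways of computing the variance should agree exactly.

```python
from fractions import Fraction

# Fair six-sided die: each face has probability 1/6 (illustrative choice).
die = {face: Fraction(1, 6) for face in range(1, 7)}

mu = sum(x * p for x, p in die.items())

# Definition: E[(X - mu)^2]
var_definition = sum((x - mu) ** 2 * p for x, p in die.items())

# Computational formula: E[X^2] - E[X]^2
second_moment = sum(x * x * p for x, p in die.items())
var_shortcut = second_moment - mu ** 2

print(var_definition, var_shortcut)  # prints: 35/12 35/12
```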

Properties of Variance and SD

1. Claim: $\mathrm{Var}(X) \ge 0$. Pf: $\mathrm{Var}(X) = \sum_x (x-\mu)^2 P(X=x) \ge 0$.
2. Claim: $\mathrm{Var}(X) = 0$ iff $P[X=\mu] = 1$.

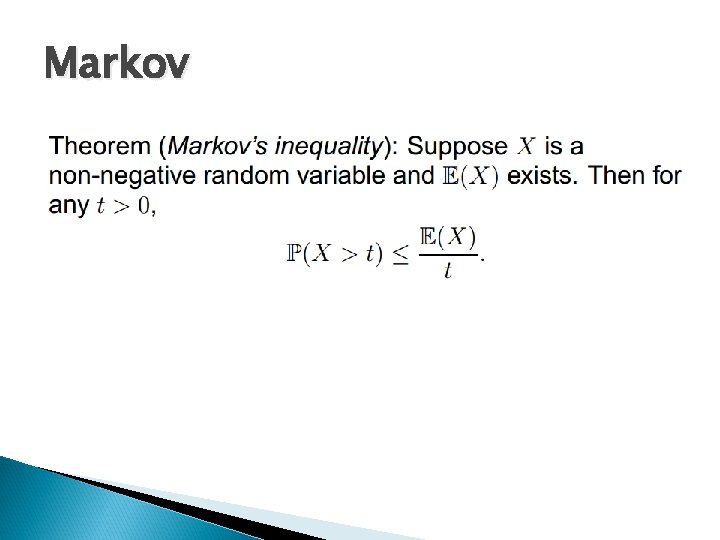

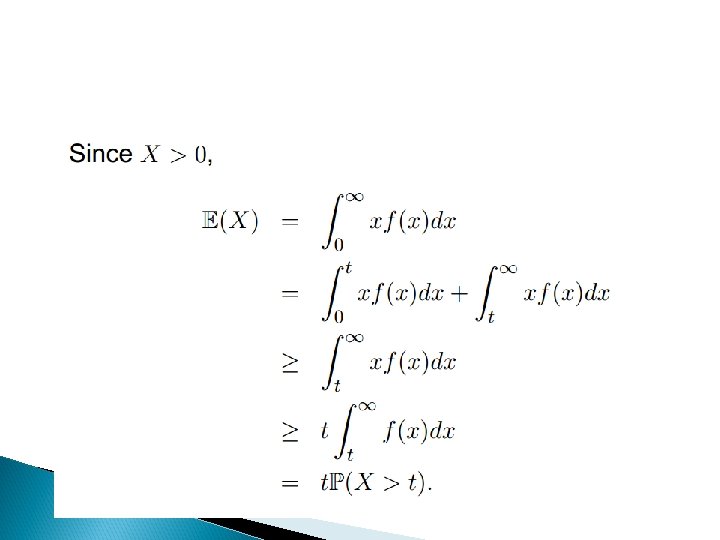

Markov

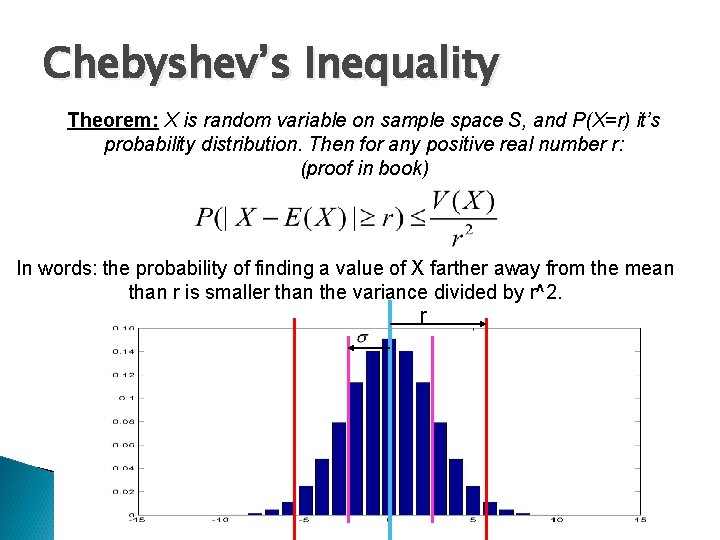

Chebyshev’s Inequality

Theorem: Let X be a random variable on a sample space S with probability distribution P(X = x). Then for any positive real number r:

$$P(|X - E(X)| \ge r) \le \frac{V(X)}{r^2}$$

(proof in book)

In words: the probability of finding a value of X at least r away from the mean is at most the variance divided by $r^2$.

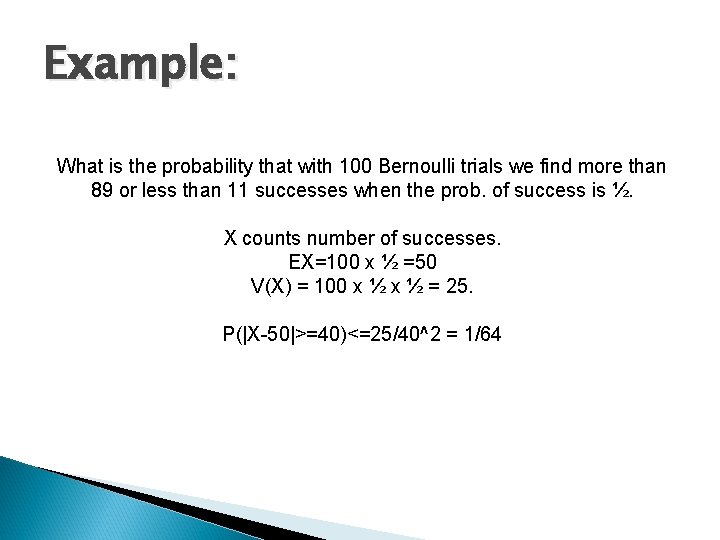

Example: What is the probability that in 100 Bernoulli trials we find more than 89 or fewer than 11 successes when the probability of success is ½? Let X count the number of successes. Then $E(X) = 100 \times \tfrac12 = 50$ and $V(X) = 100 \times \tfrac12 \times \tfrac12 = 25$, so $P(|X-50| \ge 40) \le 25/40^2 = 1/64$.
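To get a feel for how loose the bound in this example is, the following sketch compares it with the exact binomial tail probability. The variable names are chosen for illustration only.

```python
from fractions import Fraction
from math import comb

n = 100                      # number of fair Bernoulli trials
mean = Fraction(n, 2)        # E(X) = 50
variance = Fraction(n, 4)    # V(X) = 25
r = 40                       # deviation from the mean

# Exact P(|X - 50| >= 40): sum the binomial pmf over k <= 10 and k >= 90.
exact = Fraction(
    sum(comb(n, k) for k in range(n + 1) if abs(k - mean) >= r),
    2 ** n,
)

# Chebyshev: P(|X - E(X)| >= r) <= V(X) / r^2
chebyshev_bound = variance / r ** 2

print("exact tail      =", float(exact))
print("Chebyshev bound =", chebyshev_bound, "=", float(chebyshev_bound))
```

The bound 1/64 holds, but the exact tail is many orders of magnitude smaller. Chebyshev uses only the mean and the variance, so it cannot exploit the rapid decay of the binomial tails.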

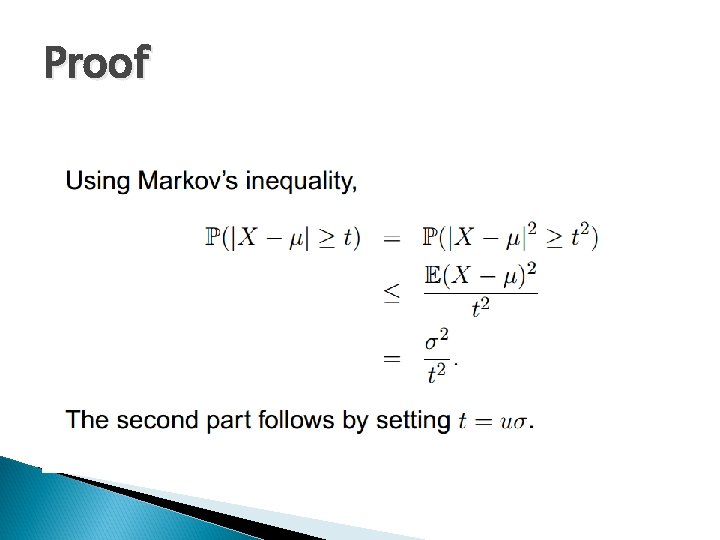

Proof

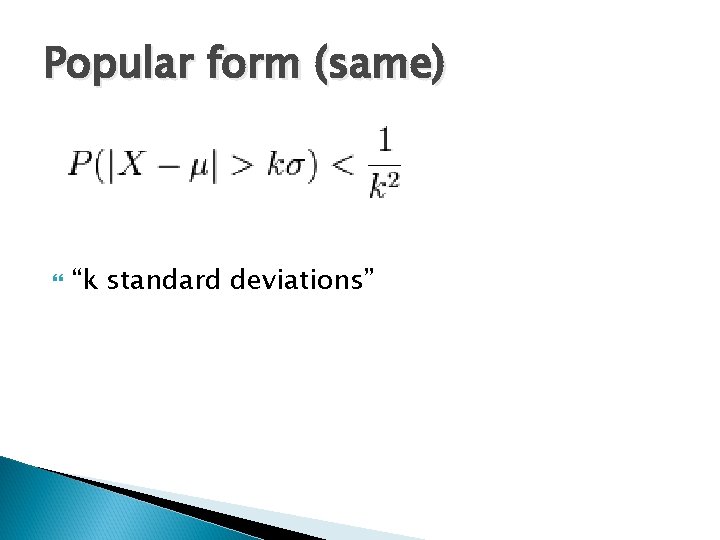

Popular form (same) “k standard deviations”

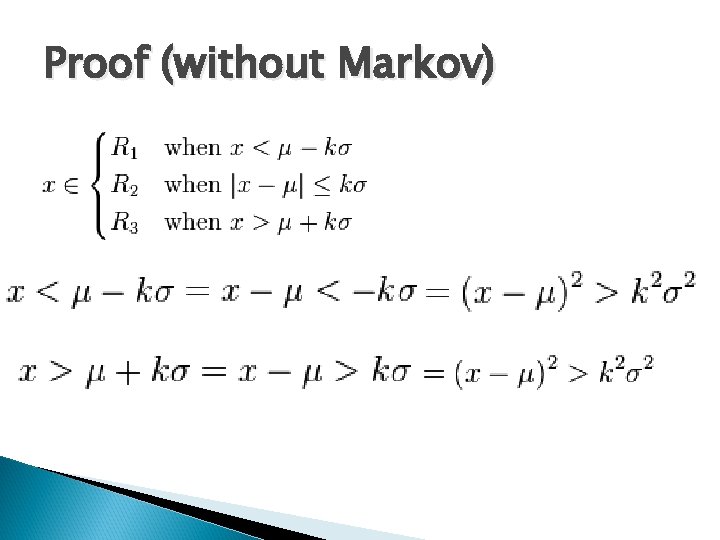

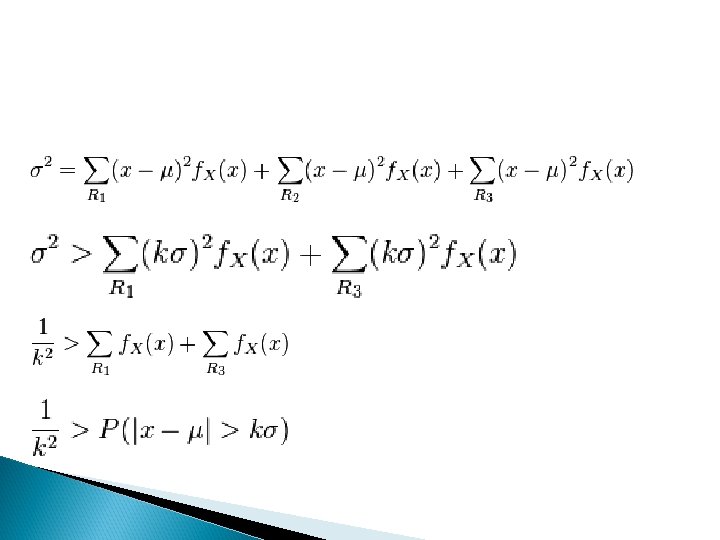

Proof (without Markov)

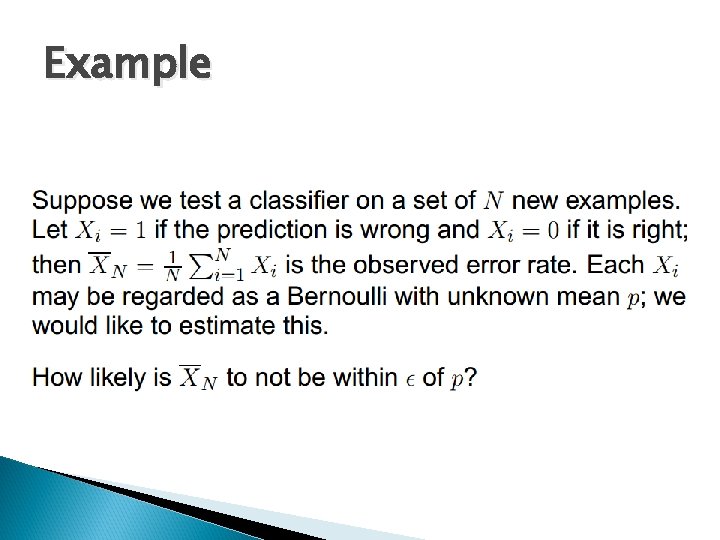

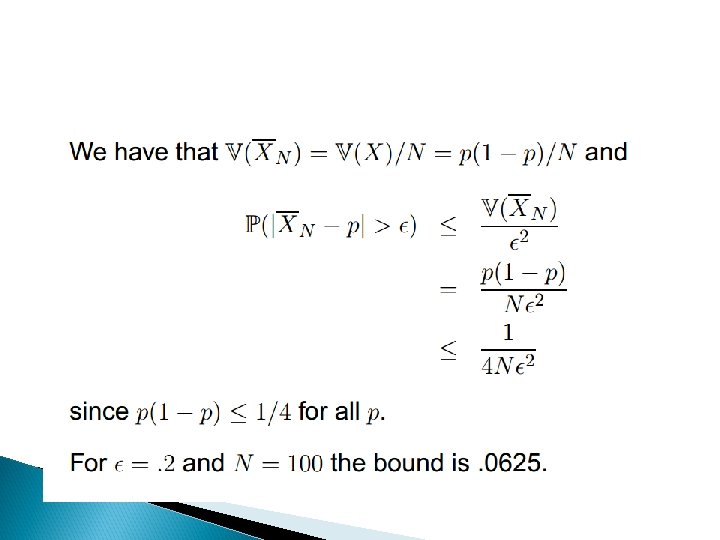

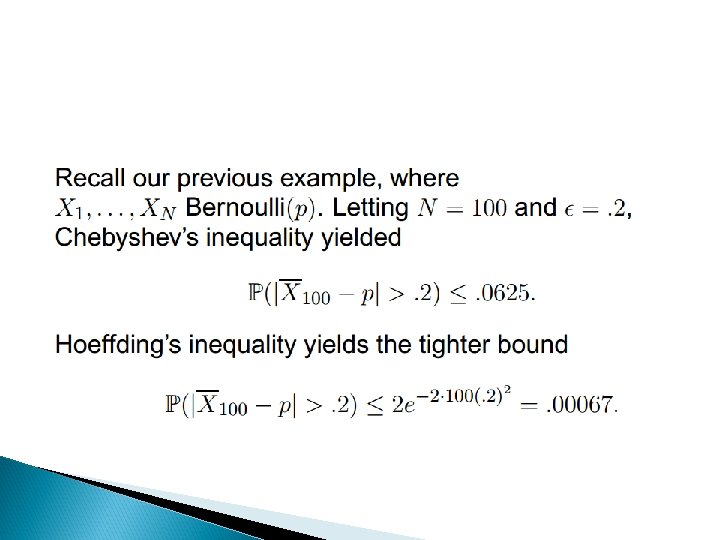

Example

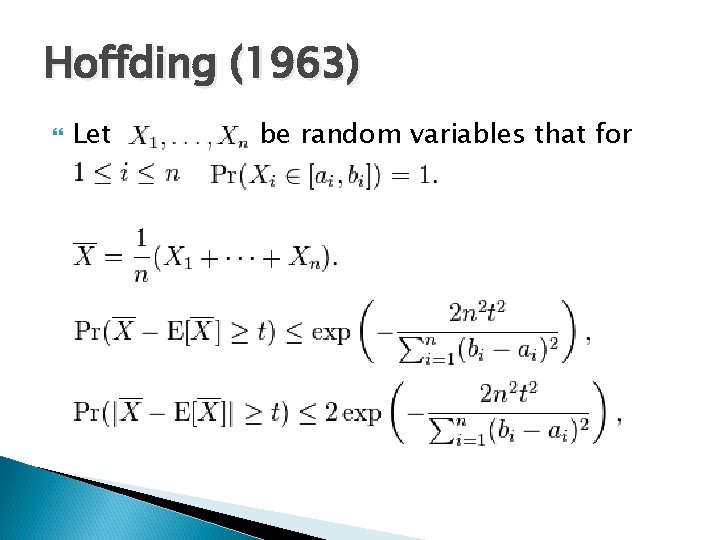

Hoeffding (1963): Let $X_1, \dots, X_n$ be independent random variables such that $a_i \le X_i \le b_i$ for $i = 1, \dots, n$.

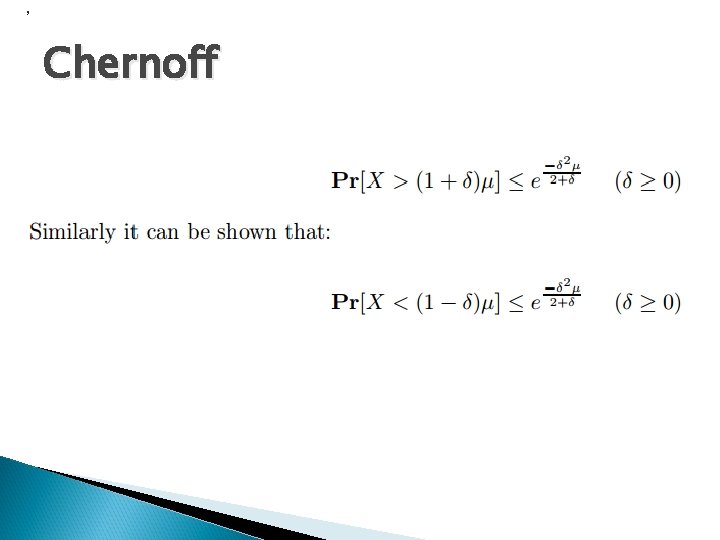

Chernoff

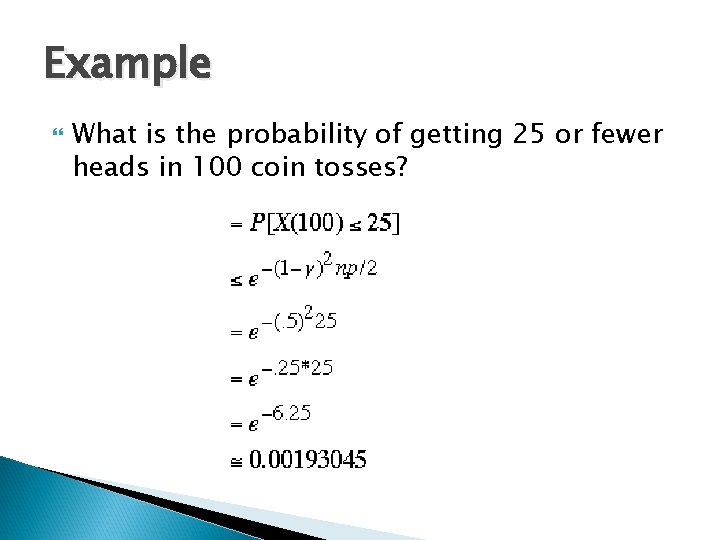

Example What is the probability of getting 25 or fewer heads in 100 coin tosses?
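One way to explore this example is to compare the exact probability with the three bounds named in the title. The sketch assumes commonly quoted textbook forms, which may differ from the versions on the slide images: Hoeffding for n independent variables in [0, 1], $P(S - E[S] \le -t) \le e^{-2t^2/n}$, and the multiplicative Chernoff lower-tail bound $P(S \le (1-\delta)\mu) \le e^{-\delta^2 \mu / 2}$.

```python
from math import comb, exp

n, threshold = 100, 25       # 100 fair coin tosses, at most 25 heads
mu = n / 2                   # expected number of heads
t = mu - threshold           # deviation below the mean (25)

# Exact P(X <= 25) for X ~ Bin(100, 1/2).
exact = sum(comb(n, k) for k in range(threshold + 1)) / 2 ** n

# Chebyshev bounds the two-sided tail P(|X - 50| >= 25), hence also the lower tail.
chebyshev = (n / 4) / t ** 2

# Hoeffding (assumed form for variables in [0, 1]): exp(-2 t^2 / n).
hoeffding = exp(-2 * t ** 2 / n)

# Chernoff (assumed multiplicative form): exp(-delta^2 * mu / 2), with delta = t / mu.
delta = t / mu
chernoff = exp(-delta ** 2 * mu / 2)

for name, value in [("exact", exact), ("Chebyshev", chebyshev),
                    ("Chernoff", chernoff), ("Hoeffding", hoeffding)]:
    print(f"{name:10s} {value:.3e}")
```

Under these assumed forms Chebyshev gives 0.04, while the exponential bounds are far smaller and much closer to the exact value.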
