Charm Tutorial Presented by Abhinav Bhatele Chao Mei

Charm++ Tutorial
Presented by: Abhinav Bhatele, Chao Mei, Aaron Becker

Overview
• Introduction
– Virtualization
– Data Driven Execution
– Object-based Parallelization
• Charm++ features
– Chares and Chare Arrays
– Parameter Marshalling
– Structured Dagger Construct
• Adaptive MPI
• Load Balancing
• Tools
– Parallel Debugger
– Projections
– LiveViz
• Conclusion

Outline
• Introduction
• Charm++ features
– Chares and Chare Arrays
– Parameter Marshalling
• Structured Dagger Construct
• Adaptive MPI
• Tools
– Parallel Debugger
– Projections
• Load Balancing
• LiveViz
• Conclusion

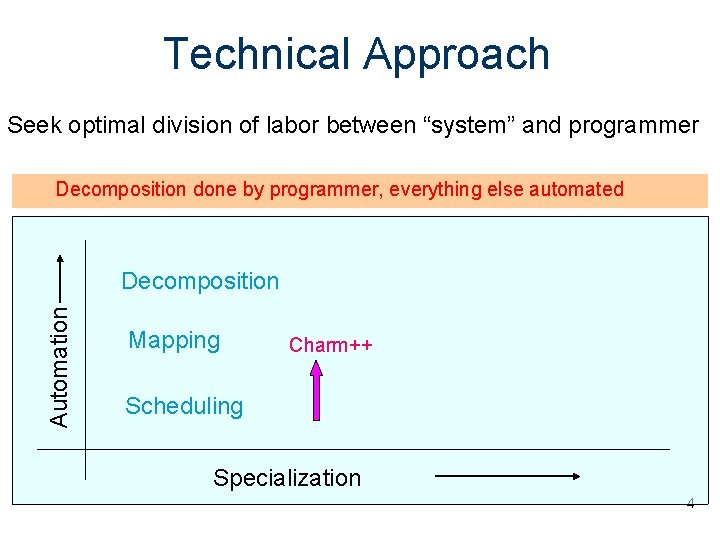

Technical Approach
• Seek optimal division of labor between “system” and programmer
• Decomposition done by programmer, everything else automated
[Figure labels: Automation, Specialization, Decomposition, Mapping, Scheduling, Charm++]

Virtualization: Object-based Decomposition
• Divide the computation into a large number of pieces
– Independent of the number of processors
– Typically larger than the number of processors
• Let the system map objects to processors

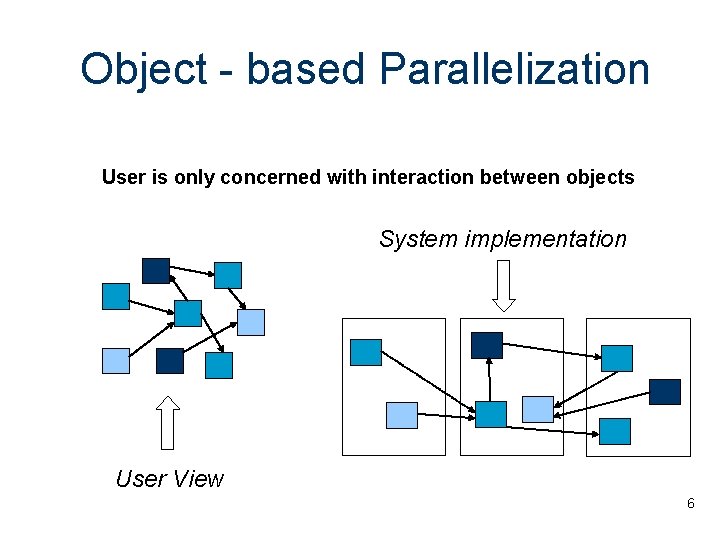

Object-based Parallelization
• User is only concerned with interaction between objects
[Figure labels: System implementation, User View]

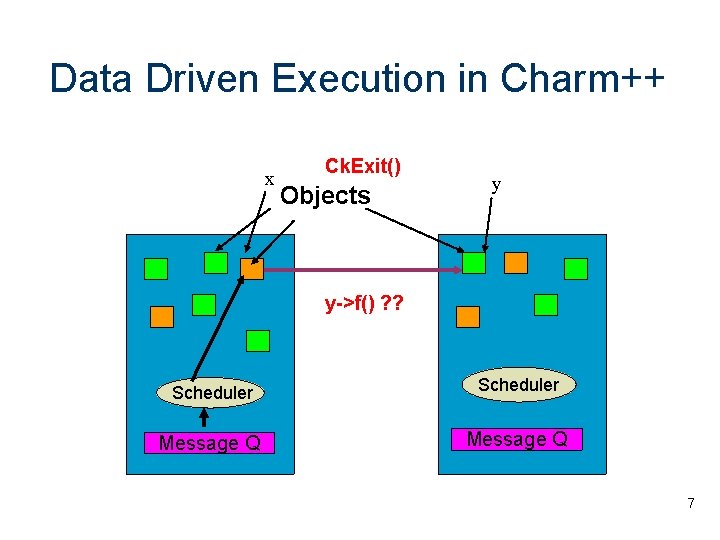

Data Driven Execution in Charm++
[Figure labels: Objects x and y, y->f(), CkExit(), Scheduler, Message Q]
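The scheduler-and-queue picture can be mimicked in a few lines of plain C++. This is only a sketch of the idea, not Charm++ code: `Scheduler`, `Message`, and `runDemo` are hypothetical names, and a real runtime also handles remote delivery and termination.

```cpp
#include <functional>
#include <queue>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for a message: a deferred method invocation.
using Message = std::function<void()>;

// Toy scheduler for one processor: objects run only when a message
// addressed to them is taken off the queue.
class Scheduler {
public:
    void enqueue(Message m) { queue_.push(std::move(m)); }

    // Deliver messages until the queue drains; returns how many were run.
    int run() {
        int processed = 0;
        while (!queue_.empty()) {
            Message m = std::move(queue_.front());
            queue_.pop();
            m();  // the "entry method" executes here
            ++processed;
        }
        return processed;
    }

private:
    std::queue<Message> queue_;
};

// Object x sends an asynchronous message to object y, then keeps going.
std::vector<std::string> runDemo() {
    Scheduler sched;
    std::vector<std::string> log;
    auto yF = [&log]() { log.push_back("y.f"); };
    auto xStart = [&]() {
        log.push_back("x.start");
        sched.enqueue(yF);  // like y->f(): queued, not called directly
        log.push_back("x.start returns");
    };
    sched.enqueue(xStart);
    sched.run();
    return log;
}
```

Note that `x.start` finishes before `y.f` runs: the invocation is only a queued message until the scheduler picks it up.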

Chares – Concurrent Objects
• Can be dynamically created on any available processor
• Can be accessed from remote processors
• Send messages to each other asynchronously
• Contain “entry methods”

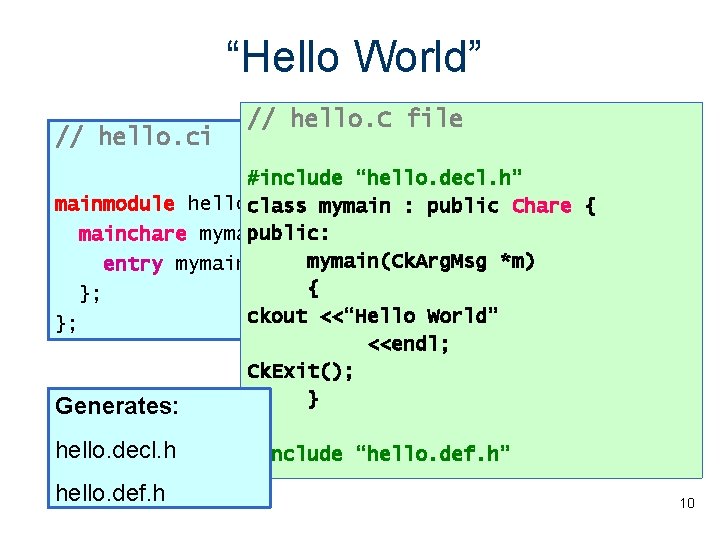

“Hello World”

    // hello.ci file
    mainmodule hello {
      mainchare mymain {
        entry mymain(CkArgMsg *m);
      };
    };

    // hello.C file
    #include "hello.decl.h"
    class mymain : public Chare {
    public:
      mymain(CkArgMsg *m)
      {
        ckout << "Hello World" << endl;
        CkExit();
      }
    };
    #include "hello.def.h"

The .ci file generates: hello.decl.h, hello.def.h

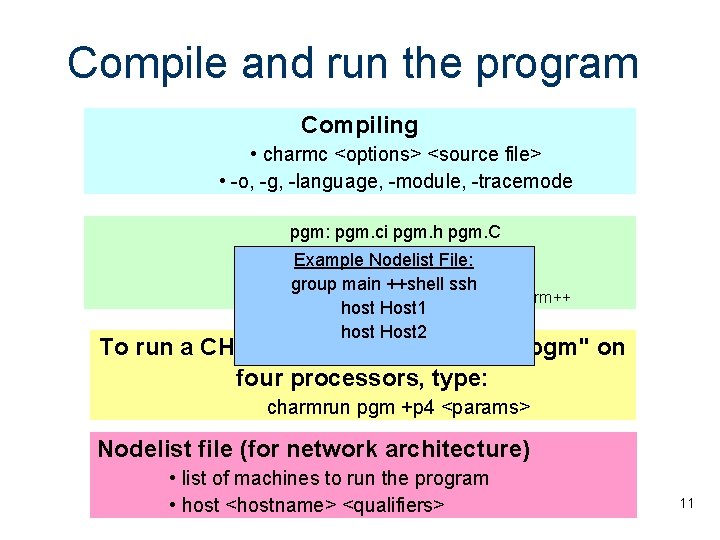

Compile and run the program
Compiling
• charmc <options> <source file>
• -o, -g, -language, -module, -tracemode
Example:

    pgm: pgm.ci pgm.h pgm.C
        charmc pgm.ci
        charmc pgm.C
        charmc -o pgm pgm.o -language charm++

To run a CHARM++ program named ``pgm'' on four processors, type:

    charmrun pgm +p4 <params>

Nodelist file (for network architecture)
• list of machines to run the program
• host <hostname> <qualifiers>
Example Nodelist File:

    group main ++shell ssh
    host Host1
    host Host2

Charm++ solution: Proxy classes
• Proxy class generated for each chare class
– For instance, CProxy_Y is the proxy class generated for chare class Y.
– Proxy objects know where the real object is
– Methods invoked on this object simply put the data in an “envelope” and send it out to the destination
• Given a proxy p, you can invoke methods
– p.method(msg);

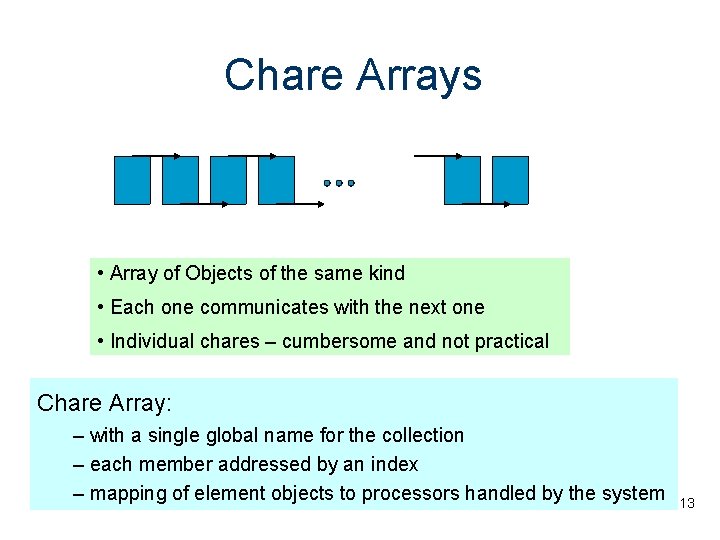

Chare Arrays
• Array of objects of the same kind
• Each one communicates with the next one
• Individual chares: cumbersome and not practical
Chare Array:
– with a single global name for the collection
– each member addressed by an index
– mapping of element objects to processors handled by the system

![Chare Arrays](http://slidetodoc.com/presentation_image_h2/93d9ced407da38da38964d2315e68a1d/image-14.jpg)

Chare Arrays
[Figure: User’s view: A[0], A[1], A[2], A[3], A[..]. System view: A[0], A[1]]

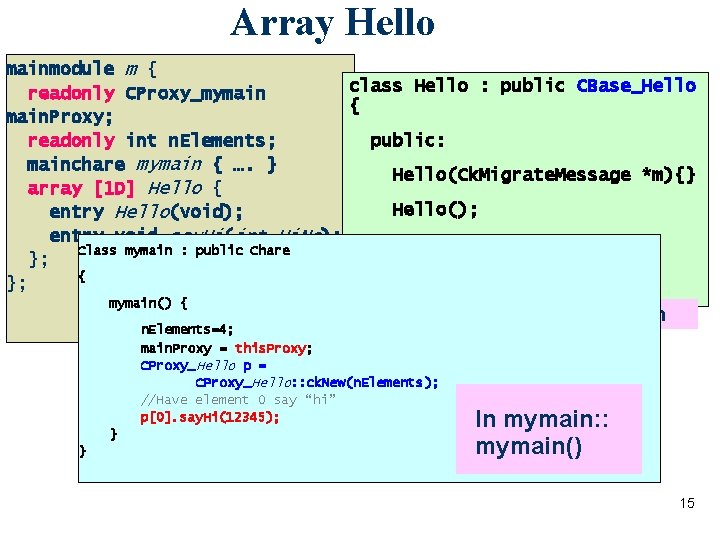

Array Hello

(.ci) file:

    mainmodule m {
      readonly CProxy_mymain mainProxy;
      readonly int nElements;
      mainchare mymain { …. }
      array [1D] Hello {
        entry Hello(void);
        entry void sayHi(int HiNo);
      };
    };

Class Declaration:

    class Hello : public CBase_Hello
    {
    public:
      Hello(CkMigrateMessage *m){}
      Hello();
      void sayHi(int hiNo);
    };

In mymain::mymain():

    class mymain : public Chare
    {
      mymain() {
        nElements=4;
        mainProxy = thisProxy;
        CProxy_Hello p = CProxy_Hello::ckNew(nElements);
        //Have element 0 say “hi”
        p[0].sayHi(12345);
      }
    };

Array Hello

    void Hello::sayHi(int hiNo)
    {
      ckout << hiNo << "from element" << thisIndex << endl;
      if (thisIndex < nElements-1)
        //Pass the hello on:
        thisProxy[thisIndex+1].sayHi(hiNo+1);
      else
        //We've been around once-- we're done.
        mainProxy.done();
    }

    void mymain::done(void){
      CkExit();
    }

Annotations: thisIndex is the element index, thisProxy is the array proxy, and mainProxy and nElements are read-only.

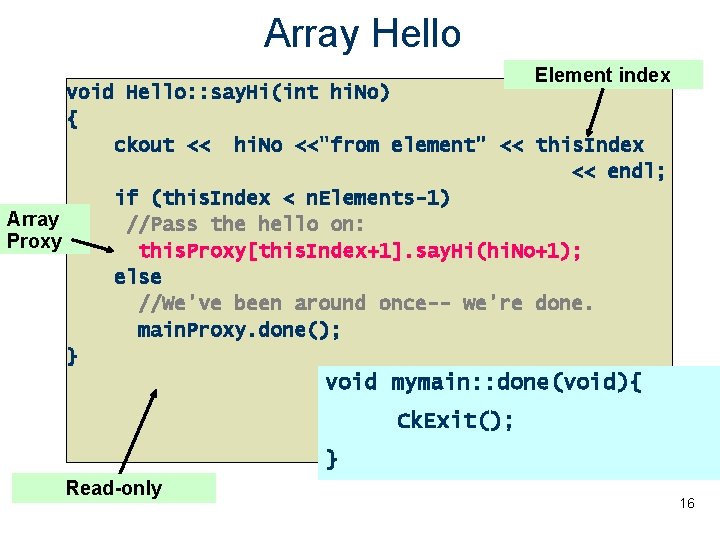

Sorting numbers
• Sort n integers in increasing order.
• Create n chares, each keeping one number.
• In every odd iteration, chare 2i swaps with chare 2i+1 if required.
• In every even iteration, chare 2i swaps with chare 2i-1 if required.
• After each iteration, all chares report to the mainchare.
• After everybody reports, the mainchare signals the next iteration.
• Sorting completes in n iterations.
[Figure: Even round, Odd round]
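The alternating rounds described here are the classic odd-even transposition sort. As a sanity check on the algorithm itself, a sequential sketch in plain C++ (no chares, no messages) might look like the following. The function name `oddEvenSort` and the 0-based round numbering are assumptions made for illustration.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Sequential sketch of odd-even transposition sort.
// In round r (0-based), position i is compared with i+1 for every i
// that has the same parity as r; n rounds are enough for n values.
void oddEvenSort(std::vector<int>& a) {
    const std::size_t n = a.size();
    for (std::size_t round = 0; round < n; ++round) {
        for (std::size_t i = round % 2; i + 1 < n; i += 2) {
            if (a[i] > a[i + 1]) {
                std::swap(a[i], a[i + 1]);  // the "swap if required" step
            }
        }
    }
}
```

In the parallel version each compare-and-swap inside a round is independent of the others, which is why every pair of neighboring chares can act at the same time.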

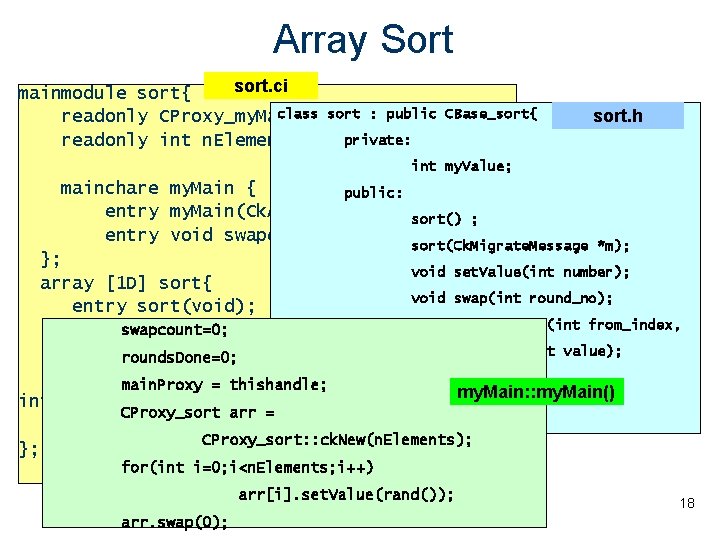

Array Sort

sort.ci:

    mainmodule sort{
      readonly CProxy_myMain mainProxy;
      readonly int nElements;
      mainchare myMain {
        entry myMain(CkArgMsg *m);
        entry void swapdone(void);
      };
      array [1D] sort{
        entry sort(void);
        entry void setValue(int myvalue);
        entry void swap(int round_no);
        entry void swapReceive(int from_index, int value);
      };
    };

sort.h:

    class sort : public CBase_sort{
    private:
      int myValue;
    public:
      sort() ;
      sort(CkMigrateMessage *m);
      void setValue(int number);
      void swap(int round_no);
      void swapReceive(int from_index, int value);
    };

myMain::myMain():

    swapcount=0;
    roundsDone=0;
    mainProxy = thishandle;
    CProxy_sort arr = CProxy_sort::ckNew(nElements);
    for(int i=0; i<nElements; i++)
      arr[i].setValue(rand());
    arr.swap(0);

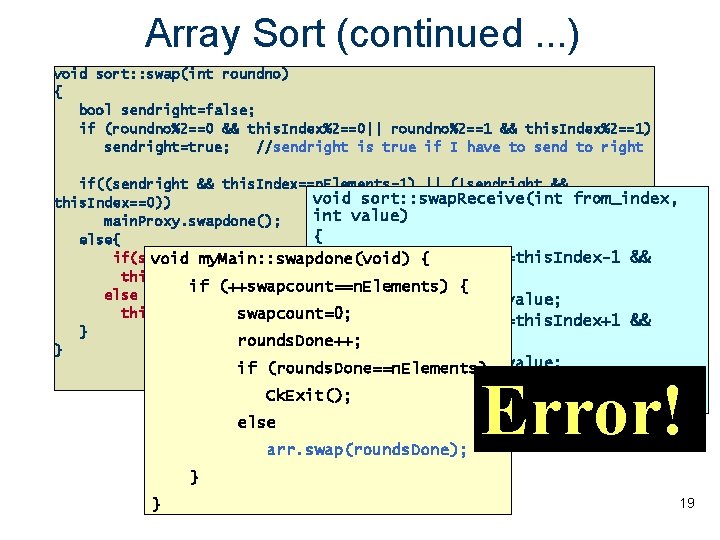

Array Sort (continued...)

    void sort::swap(int roundno)
    {
      bool sendright=false;
      if (roundno%2==0 && thisIndex%2==0 || roundno%2==1 && thisIndex%2==1)
        sendright=true; //sendright is true if I have to send to right
      if((sendright && thisIndex==nElements-1) || (!sendright && thisIndex==0))
        mainProxy.swapdone();
      else{
        if(sendright)
          thisProxy[thisIndex+1].swapReceive(thisIndex, myValue);
        else
          thisProxy[thisIndex-1].swapReceive(thisIndex, myValue);
      }
    }

    void sort::swapReceive(int from_index, int value)
    {
      if(from_index==thisIndex-1 && value>myValue)
        myValue=value;
      if(from_index==thisIndex+1 && value<myValue)
        myValue=value;
      mainProxy.swapdone();
    }

    void myMain::swapdone(void) {
      if (++swapcount==nElements) {
        swapcount=0;
        roundsDone++;
        if (roundsDone==nElements)
          CkExit();
        else
          arr.swap(roundsDone);
      }
    }

Error!! (this version has a bug)

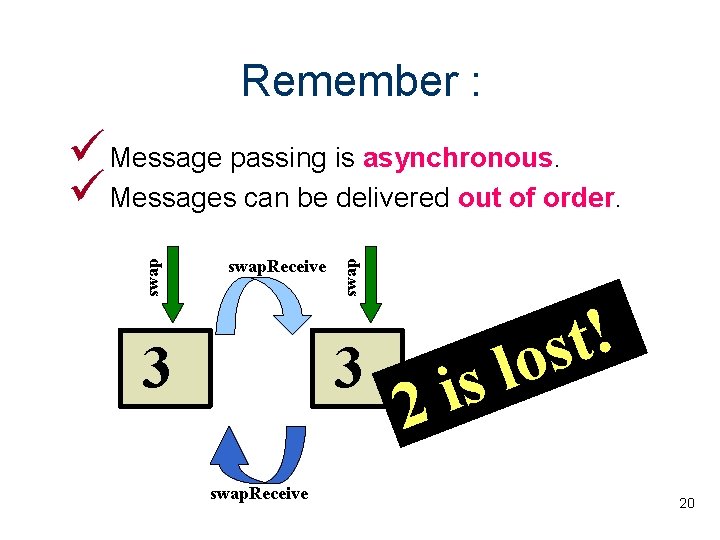

Remember:
• Message passing is asynchronous.
• Messages can be delivered out of order.
[Figure labels: swap, swapReceive, values 3 and 2, “2 is lost!”]

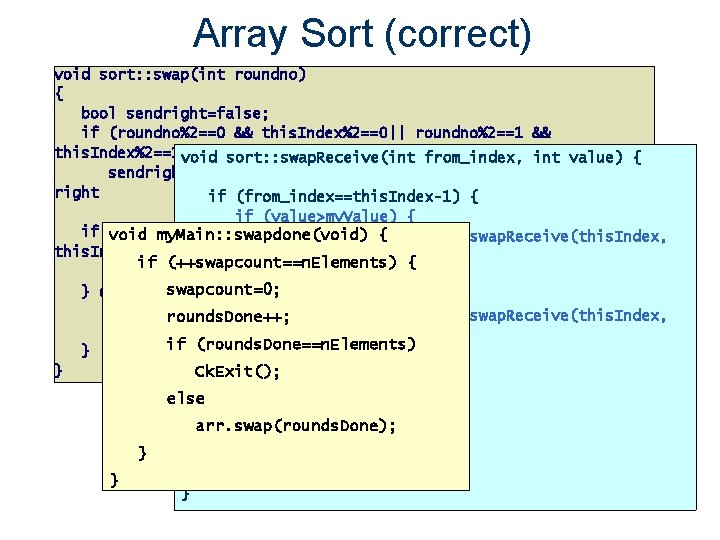

Array Sort (correct)

    void sort::swap(int roundno)
    {
      bool sendright=false;
      if (roundno%2==0 && thisIndex%2==0 || roundno%2==1 && thisIndex%2==1)
        sendright=true; //sendright is true if I have to send to right
      if ((sendright && thisIndex==nElements-1) || (!sendright && thisIndex==0))
      {
        mainProxy.swapdone();
      } else {
        if (sendright)
          thisProxy[thisIndex+1].swapReceive(thisIndex, myValue);
      }
    }

    void sort::swapReceive(int from_index, int value) {
      if (from_index==thisIndex-1) {
        if (value>myValue) {
          thisProxy[thisIndex-1].swapReceive(thisIndex, myValue);
          myValue=value;
        } else {
          thisProxy[thisIndex-1].swapReceive(thisIndex, value);
        }
      } else if (from_index==thisIndex+1)
        myValue=value;
      mainProxy.swapdone();
    }

    void myMain::swapdone(void) {
      if (++swapcount==nElements) {
        swapcount=0;
        roundsDone++;
        if (roundsDone==nElements)
          CkExit();
        else
          arr.swap(roundsDone);
      }
    }

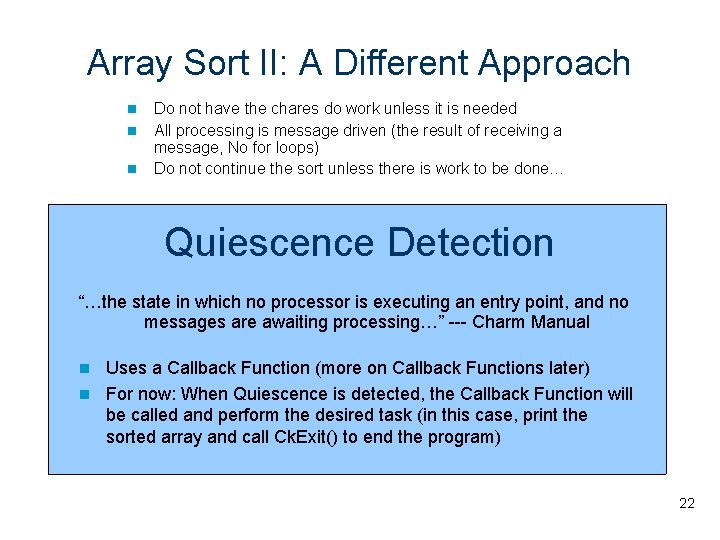

Array Sort II: A Different Approach
• Do not have the chares do work unless it is needed
– All processing is message driven (the result of receiving a message; no for loops)
– Do not continue the sort unless there is work to be done…
• Quiescence Detection
– “…the state in which no processor is executing an entry point, and no messages are awaiting processing…” (Charm Manual)
– Uses a Callback Function (more on Callback Functions later)
– For now: when quiescence is detected, the Callback Function will be called and perform the desired task (in this case, print the sorted array and call CkExit() to end the program)

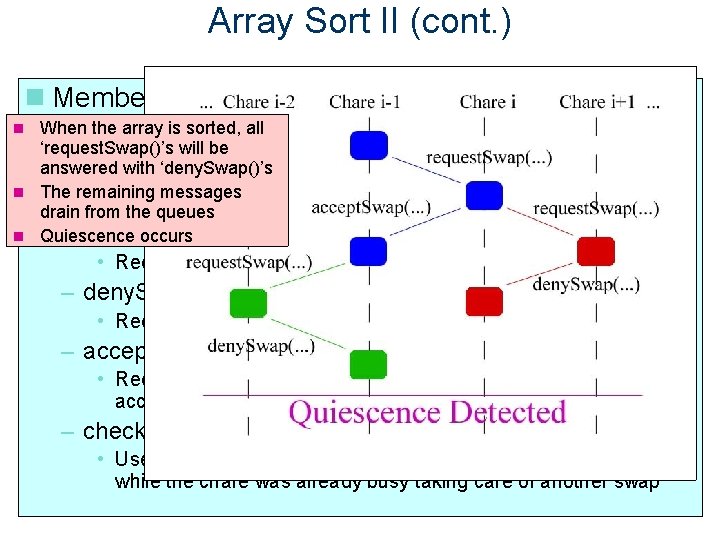

Array Sort II (cont.)
Member Functions
– initSwapSequenceWith(int index)
  • Received when the receiving chare should perform a swap with the chare at index (used to start the sort, each chare told to check both of its neighbors)
– requestSwap(int reqIndex, int value)
  • Received when chare at reqIndex wants to swap values
– denySwap(int index)
  • Received in response to requestSwap() call… request is denied
– acceptSwap(int index, int value)
  • Received in response to requestSwap() call… request is accepted
– checkForPending()
  • Used to check if a request for a swap was received and buffered while the chare was already busy taking care of another swap
Callouts:
• When a chare requests a swap with a neighboring chare, it will receive either an accept or a deny in return. What happens next depends on the response…
• When the swap is accepted, the two chares involved in the swap must check their other neighbors. More messages are queued.
• When a swap is denied, no more processing is done. No further messages are queued.
• When the array is sorted, all ‘requestSwap()’s will be answered with ‘denySwap()’s. The remaining messages drain from the queues. Quiescence occurs.

Array Sort II (cont.)

    Main::Main(CkArgMsg *m) {
      ///// CODE REMOVED TO SAVE ROOM : Read Command Line Parameters /////

      ///// Setup the Quiescence Detection /////
      CkCallback callback(CkIndex_Main::quiescenseHandler(), thishandle);
      CkStartQD(callback);

      ///// Start the Computation /////
      // Print out a message to let the user know the computation is about to start
      CkPrintf("Running Bubble on %d processors for %d elements...\n", CkNumPes(), nElements);

      // Set the main proxy to this chare
      mainProxy = thishandle;

      // Create the array of chares (each element being a number in the array)
      arr = CProxy_Bubble::ckNew(nElements);

      // Tell each element in the array to check its neighbors
      for (int i = 1; i < nElements; i+=2) {
        if (i > 0) arr[i].initSwapSequenceWith(i - 1);
        if (i < nElements - 1) arr[i].initSwapSequenceWith(i + 1);
      }
    }

    void Bubble::initSwapSequenceWith(int index) {
      ///// Verify the Parameter /////
      if (index < 0 || index >= nElements || index == thisIndex)
        return; // Do Nothing

      ///// Initiate the Swap Sequence /////
      // Check to see if this element is already in a swap sequence
      if (isSwappingWith >= 0) {
        if (index == isSwappingWith || index == pendingInitIndex)
          return;
        // Buffer the index for later
        pendingInitIndex = index;
        // This is all that can be done for now so just return
        return;
      }

      // Flag this element as being in a swap sequence
      isSwappingWith = index;

      // Initiate Swap Sequence with the specified index
      thisProxy[index].requestSwap(thisIndex, myValue);
    }

    void Bubble::requestSwap(int reqIndex, int value) {
      ///// CODE REMOVED TO SAVE ROOM : Verify the Parameters /////

      ///// Process the Request /////
      // Check to see if there is a situation where two neighbors are both sending requestSwap()
      // messages to each other at the same time. If so, one of them (either one) can drop/ignore
      // the request.
      if (reqIndex == isSwappingWith) {
        // Have one element ignore the requestSwap() and have the other handle it
        if (thisIndex % 2 == 0) {
          isSwappingWith = -1; // The odd index will understand that a response is not going to come
        } else {
          return; // The even index is going to ignore the request for a swap
        }
      }

      // Check to see if this element is already taking part in a swap sequence
      if (isSwappingWith >= 0) {
        // Buffer the index and value for later
        pendingRequestIndex = reqIndex;
        pendingRequestValue = value;
        // Finished for now... This request will be processed later...
        return;
      }

      // Check to see if this value should be swapped
      if ((reqIndex < thisIndex && value > myValue) || (reqIndex > thisIndex && value < myValue)) {
        // A Swap is Needed, inform reqIndex, swap and exit swapping sequence
        thisProxy[reqIndex].acceptSwap(thisIndex, myValue); // Inform reqIndex
        myValue = value; // Swap

        // If there isn't a pending request/init with the other neighbor for this element
        // then make a request with the other neighbor to see if a swap is needed now that this
        // element has a new value
        int oni = thisIndex + ((thisIndex > reqIndex) ? 1 : -1);
        if (oni >= 0 && oni < nElements && pendingRequestIndex != oni && pendingInitIndex != oni) {
          isSwappingWith = oni;
          thisProxy[oni].requestSwap(thisIndex, myValue);
        }
      } else {
        // No Swap is Needed, inform reqIndex and exit swapping sequence
        thisProxy[reqIndex].denySwap(thisIndex); // Inform reqIndex
      }

      // Check to see if there any pending items as long as this element is not already
      // trying to swap with another element
      if (isSwappingWith < 0)
        checkForPending();
    }

    void Bubble::denySwap(int index) {
      // Finished with the swap so exit the swap sequence
      isSwappingWith = -1;

      // Check to see if there any pending items
      checkForPending();
    }

    void Bubble::acceptSwap(int index, int value) {
      // Swap is needed so replace myValue with the value of the other element
      myValue = value;

      // Since the value of this element has just changed,
      // request a swap with the other neighbor (as long as there is not a pending request or init
      // already in the works for the other neighbor) and set isSwappingWith accordingly.
      int oni = thisIndex + ((thisIndex > index) ? 1 : -1);
      if (oni >= 0 && oni < nElements && pendingRequestIndex != oni && pendingInitIndex != oni) {
        isSwappingWith = oni;
        thisProxy[oni].requestSwap(thisIndex, myValue);
      } else {
        isSwappingWith = -1;
      }

      // Check to see if there any pending items
      if (isSwappingWith < 0)
        checkForPending();
    }

    void Bubble::checkForPending() {
      // Check to see if there is a pending initiate swap
      if (pendingInitIndex >= 0) {
        // There is a pending initiate swap... resend request to self
        // (Note: initSwapSequenceWith() does not call this function so it is
        // safe to do a standard call to it from here.)
        initSwapSequenceWith(pendingInitIndex);
        pendingInitIndex = -1;
      }

      // Check to see if there is a pending request for a swap
      if (pendingRequestIndex >= 0) {
        // There is a pending request for a swap... resend request to self
        // (Note: This function clears pendingRequestIndex and pendingRequestValue
        // before making the call to requestSwap() so when requestSwap() calls this
        // function at the end, execution will not enter this if statement
        // a second time which means there will not be an infinite loop of calls back
        // and forth between the two functions as one might think at first glance.
        // Also note that isSwappingWith will be -1 if this function is called.)
        int tempIndex = pendingRequestIndex;
        int tempValue = pendingRequestValue;
        pendingRequestIndex = -1;
        pendingRequestValue = -1;
        thisProxy[thisIndex].requestSwap(tempIndex, tempValue);
      }
    }

    void Bubble::displayValue() {
      if (thisIndex == 0)
        CkPrintf("\n");
      CkPrintf("Final -- Bubble[%06d].displayValue() - myValue = %d\n", thisIndex, myValue);
      fflush(stdout);
      if (thisIndex < nElements - 1)
        thisProxy[thisIndex + 1].displayValue();
      else
        mainProxy.done();
    }

    void Main::quiescenseHandler() {
      // After all the activity has stopped, start the final sequence of printing
      // the array and exiting the program
      arr[0].displayValue();
    }

    void Main::done(void) {
      CkPrintf("All Done\n");
      CkExit();
    }

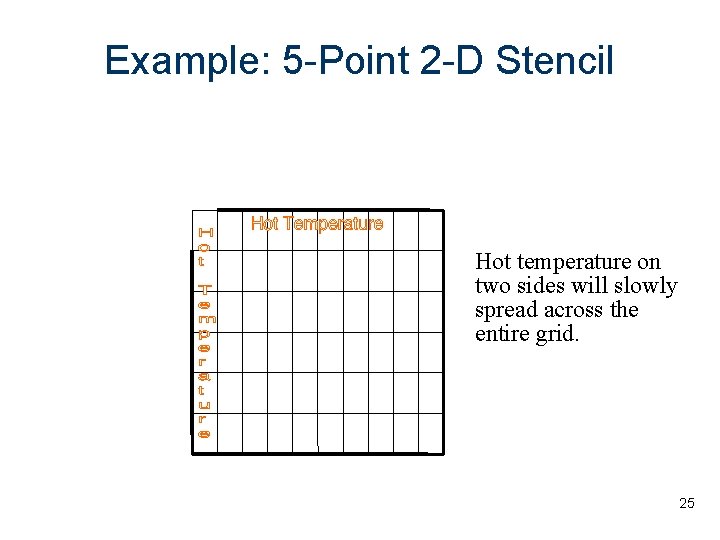

Example: 5-Point 2-D Stencil
Hot temperature on two sides will slowly spread across the entire grid.

Example: 5-Point 2-D Stencil
• Input: 2D array of values with boundary conditions
• In each iteration, each array element is computed as the average of itself and its neighbors (average over 5 points)
• Iterations are repeated until some threshold difference value is reached
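Before parallelizing, the 5-point update can be written sequentially in plain C++. This is a minimal sketch under stated assumptions: the names `Grid`, `jacobiSweep`, and `relax` are hypothetical, boundary cells are held fixed, and the stopping rule compares the largest per-cell change with the threshold.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

using Grid = std::vector<std::vector<double>>;

// One sweep: every interior cell becomes the average of itself and its
// four neighbors. Boundary cells are copied unchanged.
// Returns the largest absolute change of any cell.
double jacobiSweep(const Grid& in, Grid& out) {
    double maxChange = 0.0;
    out = in;
    for (std::size_t i = 1; i + 1 < in.size(); ++i) {
        for (std::size_t j = 1; j + 1 < in[i].size(); ++j) {
            out[i][j] = (in[i][j] + in[i - 1][j] + in[i + 1][j] +
                         in[i][j - 1] + in[i][j + 1]) / 5.0;
            maxChange = std::max(maxChange, std::fabs(out[i][j] - in[i][j]));
        }
    }
    return maxChange;
}

// Repeat sweeps until the change drops below the threshold or the
// iteration budget runs out. Returns the number of sweeps performed.
int relax(Grid& grid, double threshold, int maxIters) {
    Grid next;
    int iters = 0;
    while (iters < maxIters) {
        const double change = jacobiSweep(grid, next);
        grid.swap(next);
        ++iters;
        if (change < threshold) break;
    }
    return iters;
}
```

Reading from `in` and writing to `out` keeps every update based on the previous iteration's values, which is what lets the cells be computed in any order, or in parallel.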

Parallel Solution!

Parallel Solution!
• Slice up the 2D array into sets of columns
• Chare = computations in one set
• At the end of each iteration
– Chares exchange boundaries
– Determine maximum change in computation
• Output result at each step or when threshold is reached
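One simple way to slice N columns among K chares is a contiguous block partition. The sketch below is an assumption for illustration (the slides do not say how the sets of columns are chosen), and `columnRange` is a hypothetical helper.

```cpp
#include <cstddef>
#include <utility>

// Half-open column range [first, second) owned by chare k, when N columns
// are split as evenly as possible among K chares (K must be at least 1).
std::pair<std::size_t, std::size_t> columnRange(std::size_t N, std::size_t K,
                                                std::size_t k) {
    return {k * N / K, (k + 1) * N / K};
}
```

With this layout chare k only needs the last column of chare k-1 and the first column of chare k+1, which are the boundaries exchanged after each iteration.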

Arrays as Parameters
• An array cannot be passed as a pointer
• Specify the length of the array in the interface file
– entry void bar(int n, double arr[n])
– n is the size of arr[]

![Stencil Code](http://slidetodoc.com/presentation_image_h2/93d9ced407da38da38964d2315e68a1d/image-30.jpg)

Stencil Code

    void Ar1::doWork(int sendersID, int n, double arr[])
    {
      maxChange = 0.0;
      if (sendersID == thisIndex-1)
        { leftmsg = 1; }   //set boolean to indicate we received the left message
      else if (sendersID == thisIndex+1)
        { rightmsg = 1; }  //set boolean to indicate we received the right message
      // Rest of the code on a following slide
      …
    }

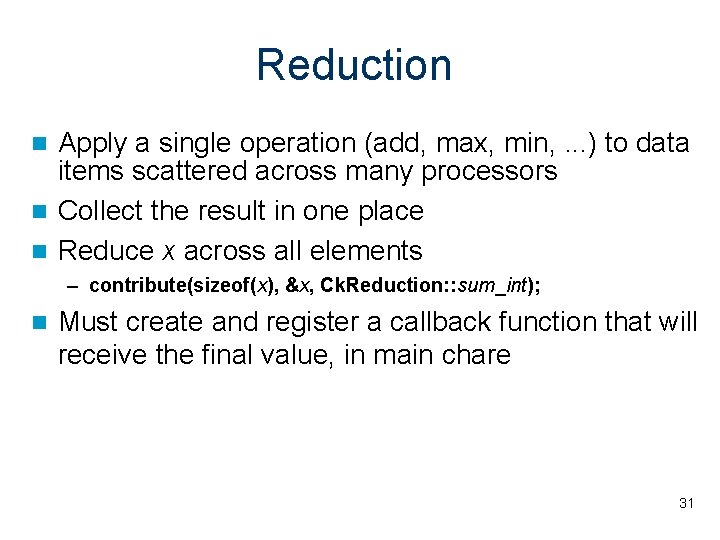

Reduction
• Apply a single operation (add, max, min, ...) to data items scattered across many processors
• Collect the result in one place
• Reduce x across all elements
– contribute(sizeof(x), &x, CkReduction::sum_int);
• Must create and register a callback function that will receive the final value, in the main chare

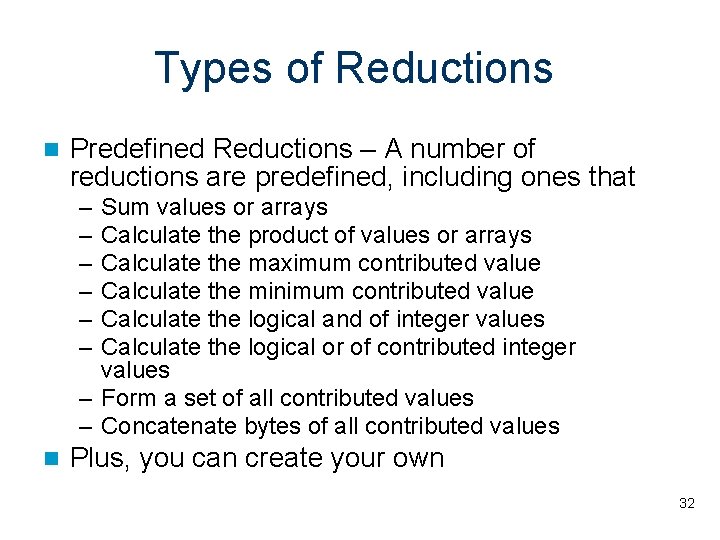

Types of Reductions
• Predefined Reductions: a number of reductions are predefined, including ones that
– Sum values or arrays
– Calculate the product of values or arrays
– Calculate the maximum contributed value
– Calculate the minimum contributed value
– Calculate the logical and of integer values
– Calculate the logical or of contributed integer values
– Form a set of all contributed values
– Concatenate bytes of all contributed values
• Plus, you can create your own
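Conceptually, each of these reductions folds one contribution per element into a single value with an associative operation. The plain C++ sketch below illustrates that idea only; `foldContributions`, `sumInt`, `maxDouble`, and `logicalAnd` are hypothetical names, not the Charm++ reducers, and a real runtime combines partial results across processors rather than looping over one vector.

```cpp
#include <algorithm>
#include <functional>
#include <vector>

// Fold all contributions into one result, starting from an identity value.
template <typename T>
T foldContributions(const std::vector<T>& contributions, T identity,
                    const std::function<T(T, T)>& op) {
    T result = identity;
    for (const T& c : contributions) {
        result = op(result, c);
    }
    return result;
}

// Sum of integer contributions (identity 0).
int sumInt(const std::vector<int>& v) {
    return foldContributions<int>(v, 0, [](int a, int b) { return a + b; });
}

// Maximum of double contributions; returns 0.0 for an empty input.
double maxDouble(const std::vector<double>& v) {
    return foldContributions<double>(
        v, v.empty() ? 0.0 : v.front(),
        [](double a, double b) { return std::max(a, b); });
}

// Logical and of integer contributions (identity "true").
bool logicalAnd(const std::vector<int>& v) {
    return foldContributions<int>(
               v, 1, [](int a, int b) { return (a && b) ? 1 : 0; }) != 0;
}
```

Because the operations are associative, the runtime is free to combine contributions in any grouping, for example along a tree of processors.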

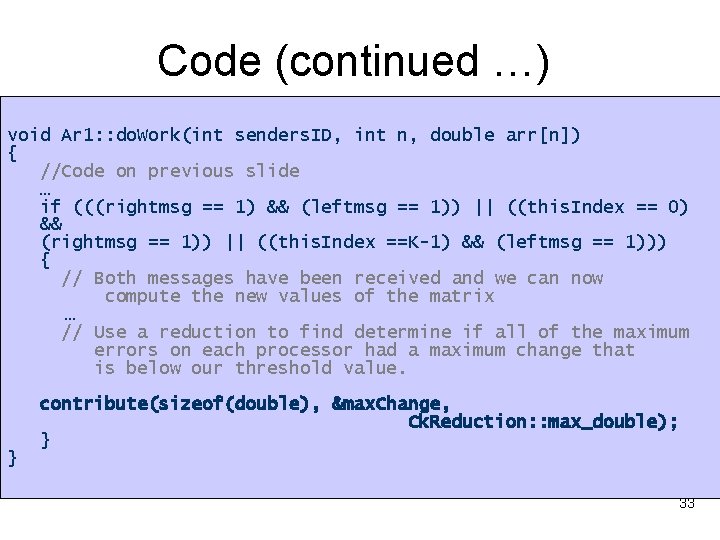

Code (continued …)

    void Ar1::doWork(int sendersID, int n, double arr[n])
    {
      //Code on previous slide
      …
      if (((rightmsg == 1) && (leftmsg == 1)) ||
          ((thisIndex == 0) && (rightmsg == 1)) ||
          ((thisIndex == K-1) && (leftmsg == 1)))
      {
        // Both messages have been received and we can now compute the new values of the matrix
        …
        // Use a reduction to determine whether the maximum change on every
        // processor is below our threshold value.
        contribute(sizeof(double), &maxChange, CkReduction::max_double);
      }
    }

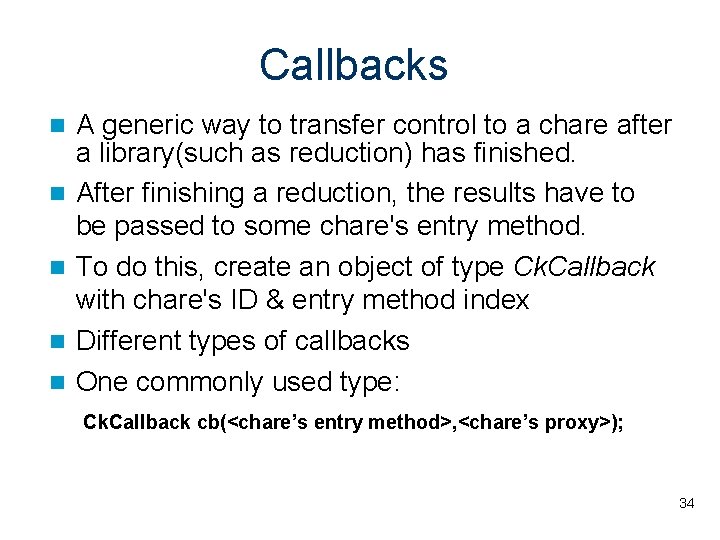

Callbacks
• A generic way to transfer control to a chare after a library (such as reduction) has finished.
• After finishing a reduction, the results have to be passed to some chare's entry method.
• To do this, create an object of type CkCallback with the chare's ID and entry method index
• Different types of callbacks
• One commonly used type:
  CkCallback cb(<chare’s entry method>, <chare’s proxy>);
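The control transfer described here can be sketched in plain C++ with `std::function` standing in for the callback object. Everything in this example is hypothetical (`MaxReduction` is not a Charm++ class); it only shows a library collecting contributions and handing the final value to user code once all of them have arrived.

```cpp
#include <algorithm>
#include <functional>
#include <utility>

// Toy "library": collects one value from each of `expected` contributors,
// then invokes the registered callback with the maximum.
class MaxReduction {
public:
    MaxReduction(int expected, std::function<void(double)> cb)
        : expected_(expected), callback_(std::move(cb)) {}

    void contribute(double value) {
        current_ = (received_ == 0) ? value : std::max(current_, value);
        if (++received_ == expected_) {
            callback_(current_);  // control passes to the user's handler
            received_ = 0;        // ready for the next round
        }
    }

private:
    int expected_;
    int received_ = 0;
    double current_ = 0.0;
    std::function<void(double)> callback_;
};
```

The contributors never learn who consumes the result; only the code that registered the callback decides what happens with the final value.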

Structured Dagger
Motivation:
– Keeping flags and buffering manually can complicate code in the Charm++ model.
– Considerable overhead in the form of thread creation and synchronization
Parallel Programming Laboratory

Advantages
• Reduce the complexity of program development
– Facilitate a clear expression of flow of control
• Take advantage of adaptive message-driven execution
– Without adding significant overhead

What is it?
• A coordination language built on top of Charm++
– Structured notation for specifying intra-process control dependences in message-driven programs
• Allows easy expression of dependences among messages and computations, and also among computations within the same object, using various structured constructs

Structured Dagger Constructs (To Be Covered in Advanced Charm++ Session)
• atomic {code}
• overlap {code}
• when <entrylist> {code}
• if/else/for/while
• foreach

![Stencil Example Using Structured Dagger](http://slidetodoc.com/presentation_image_h2/93d9ced407da38da38964d2315e68a1d/image-40.jpg)

Stencil Example Using Structured Dagger

stencil.ci:

    array[1D] Ar1 {
      …
      entry void GetMessages () {
        when rightmsgEntry(), leftmsgEntry() {
          atomic {
            CkPrintf("Got both left and right messages \n");
            doWork(right, left);
          }
        }
      };
      entry void rightmsgEntry();
      entry void leftmsgEntry();
      …
    };

AMPI = Adaptive MPI
Motivation:
– Typical MPI implementations are not suitable for the new generation of parallel applications
  • Dynamically varying: load shifting, adaptive refinement
– Some legacy codes in MPI can be easily ported and run fast on current new machines
– Facilitate those who are familiar with MPI

What is it?
• An MPI implementation built on Charm++ (MPI with virtualization)
• Provides the benefits of the Charm++ Runtime System to standard MPI programs
– Load Balancing, Checkpointing, Adaptability to dynamic number of physical processors

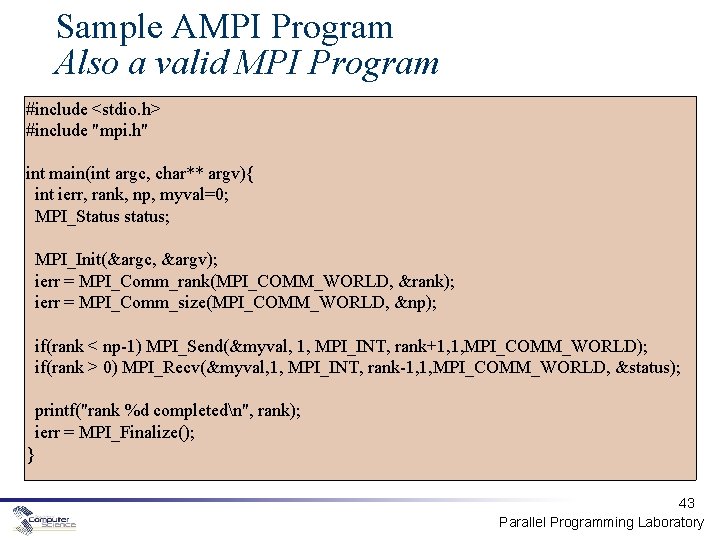

Sample AMPI Program (Also a valid MPI Program)

    #include <stdio.h>
    #include "mpi.h"

    int main(int argc, char** argv){
      int ierr, rank, np, myval=0;
      MPI_Status status;
      MPI_Init(&argc, &argv);
      ierr = MPI_Comm_rank(MPI_COMM_WORLD, &rank);
      ierr = MPI_Comm_size(MPI_COMM_WORLD, &np);
      if(rank < np-1)
        MPI_Send(&myval, 1, MPI_INT, rank+1, 1, MPI_COMM_WORLD);
      if(rank > 0)
        MPI_Recv(&myval, 1, MPI_INT, rank-1, 1, MPI_COMM_WORLD, &status);
      printf("rank %d completed\n", rank);
      ierr = MPI_Finalize();
    }

AMPI Compilation
Compile:

    charmc sample.c -language ampi -o sample

Run:

    charmrun ./sample +p16 +vp128 [args]

Instead of the traditional MPI equivalent:

    mpirun ./sample -np 128 [args]

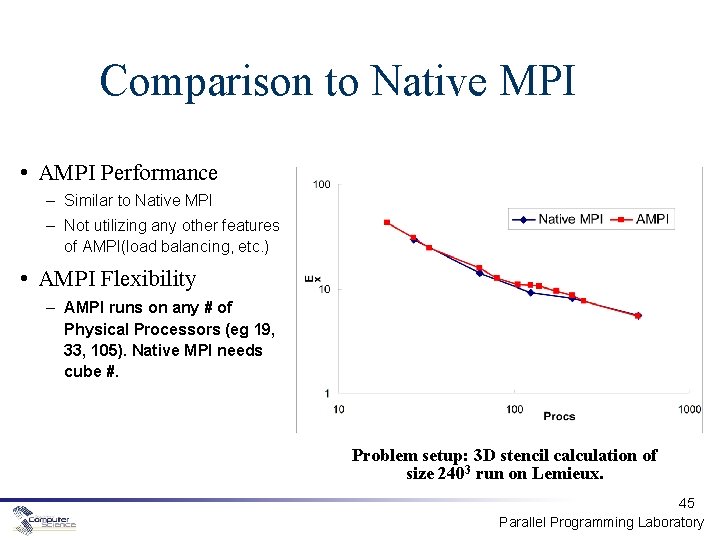

Comparison to Native MPI
• AMPI Performance
– Similar to native MPI
– Not utilizing any other features of AMPI (load balancing, etc.)
• AMPI Flexibility
– AMPI runs on any number of physical processors (e.g. 19, 33, 105). Native MPI needs a cube number.
Problem setup: 3D stencil calculation of size 240^3 run on Lemieux.

Current AMPI Capabilities
• Automatic checkpoint/restart mechanism
– Robust implementation available
• Load Balancing and “process” Migration
• MPI 1.1 compliant, most of MPI 2 implemented
• Interoperability
– With Frameworks
– With Charm++
• Performance visualization
More on the next session!

Parallel debugging support
• Parallel debugger (charmdebug)
• Allows programmer to view the changing state of the parallel program
• Java GUI client

Debugger features
• Provides a means to easily access and view the major programmer-visible entities, including objects and messages in queues, during program execution
• Provides an interface to set and remove breakpoints on remote entry points, which capture the major programmer-visible control flows

Debugger features (contd.)
• Provides the ability to freeze and unfreeze the execution of selected processors of the parallel program, which allows a consistent snapshot
• Provides a way to attach a sequential debugger (like GDB) to a specific subset of processes of the parallel program during execution, which keeps a manageable number of sequential debugger windows open

Alternative debugging support
• Uses gdb for debugging
– Runs each node under gdb in an xterm window, prompting the user to begin execution
• The Charm++ program has to be compiled using ‘-g’ and run with ‘++debug’ as a command-line option.

Projections: Quick Introduction (more detail in a later session!)
• Projections is a tool used to analyze the performance of your application
• The tracemode option is used when you build your application to enable tracing
• You get one log file per processor, plus a separate file with global information
• These files are read by Projections so you can use the Projections views to analyze performance

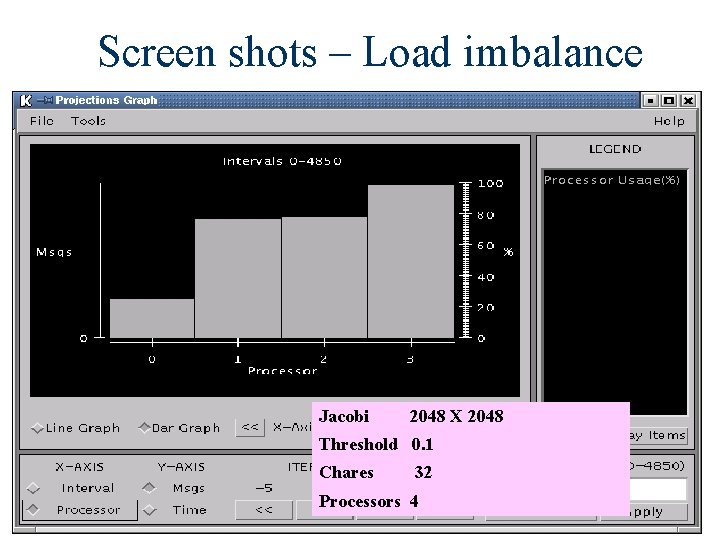

Screen shots – Load imbalance
Jacobi 2048 x 2048, Threshold 0.1, Chares 32, Processors 4

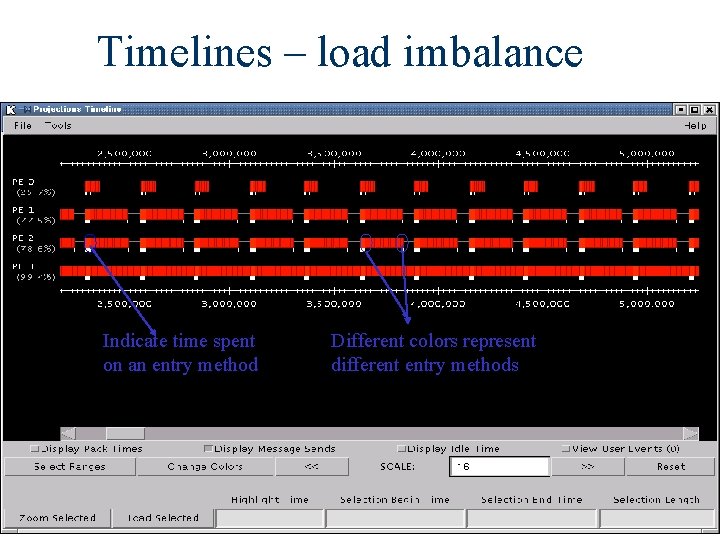

Timelines – load imbalance
• Indicate time spent on an entry method
• Different colors represent different entry methods

Load Balancing
• Goal: higher processor utilization
• Object migration allows us to move the work load among processors easily
• Measurement-based Load Balancing
• Two approaches to distributing work:
– Centralized
– Distributed
• Principle of Persistence

Migration
• Array objects can migrate from one processor to another
• Migration creates a new object on the destination processor while destroying the original
• Need a way of packing an object into a message, then unpacking it on the receiving processor

• PUP is a framework for packing and unpacking migratable objects into messages
• To migrate, an object must implement a pack/unpack or pup method
• The pup method combines 3 functions
– Data structure traversal: compute message size, in bytes
– Pack: write object into message
– Unpack: read object out of message
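How a single traversal routine can serve all three purposes is easier to see in a miniature. The plain C++ sketch below is a toy imitation of the idea, not the real `PUP::er` interface: `Pupper`, `Particle`, and `migrate` are hypothetical names, and only trivially copyable members are handled.

```cpp
#include <cstddef>
#include <cstring>
#include <vector>

// Toy "pupper" with three modes that share one traversal interface.
class Pupper {
public:
    enum class Mode { Sizing, Packing, Unpacking };

    explicit Pupper(Mode m, std::vector<char>* buf = nullptr)
        : mode_(m), buf_(buf) {}

    bool isUnpacking() const { return mode_ == Mode::Unpacking; }
    std::size_t size() const { return offset_; }

    // Visit n items of a trivially copyable type T.
    template <typename T>
    void operator()(T* data, std::size_t n) {
        const std::size_t bytes = n * sizeof(T);
        if (bytes == 0) return;
        if (mode_ == Mode::Packing) {
            std::memcpy(buf_->data() + offset_, data, bytes);   // object -> message
        } else if (mode_ == Mode::Unpacking) {
            std::memcpy(data, buf_->data() + offset_, bytes);   // message -> object
        }
        offset_ += bytes;  // in sizing mode this is the only effect
    }

    // Visit a single value.
    template <typename T>
    Pupper& operator|(T& value) {
        (*this)(&value, 1);
        return *this;
    }

private:
    Mode mode_;
    std::vector<char>* buf_;
    std::size_t offset_ = 0;
};

struct Particle {
    double a = 0.0;
    int x = 0;
    std::vector<int> r;  // dynamically sized member

    // One routine used for sizing, packing, and unpacking alike.
    void pup(Pupper& p) {
        p | a;
        p | x;
        std::size_t n = r.size();
        p | n;                              // length travels with the data
        if (p.isUnpacking()) r.resize(n);   // allocate before reading
        p(r.data(), n);
    }
};

// Simulated migration: size, pack into a message, unpack into a new object.
Particle migrate(Particle& original) {
    Pupper sizer(Pupper::Mode::Sizing);
    original.pup(sizer);
    std::vector<char> message(sizer.size());
    Pupper packer(Pupper::Mode::Packing, &message);
    original.pup(packer);
    Particle copy;
    Pupper unpacker(Pupper::Mode::Unpacking, &message);
    copy.pup(unpacker);
    return copy;
}
```

Writing the member list once means the size computation, the packing order, and the unpacking order cannot drift apart.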

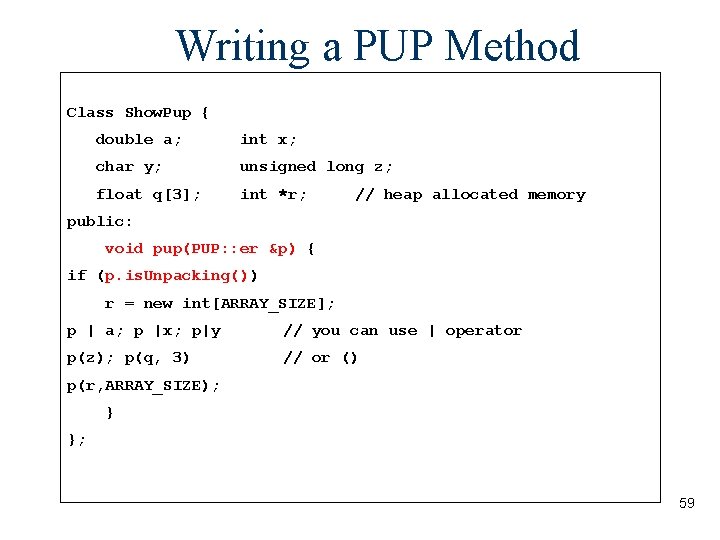

Writing a PUP Method

    class ShowPup {
      double a; int x;
      char y; unsigned long z;
      float q[3];
      int *r; // heap allocated memory
    public:
      void pup(PUP::er &p) {
        if (p.isUnpacking())
          r = new int[ARRAY_SIZE];
        p | a; p | x; p | y;  // you can use | operator
        p(z); p(q, 3);        // or ()
        p(r, ARRAY_SIZE);
      }
    };

The Principle of Persistence
• Big Idea: the past predicts the future
• Patterns of communication and computation remain nearly constant
• By measuring these patterns we can improve our load balancing techniques

Centralized Load Balancing
• Uses information about activity on all processors to make load balancing decisions
• Advantage: Global information gives higher quality balancing
• Disadvantage: Higher communication costs and latency
• Algorithms: Greedy, Refine, Recursive Bisection, Metis
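To make the centralized approach concrete, here is the textbook greedy heuristic in plain C++: with measured loads for all objects in one place, assign the heaviest remaining object to the currently least loaded processor. This is a sketch of the general technique, not the Charm++ implementation of its Greedy strategy; `greedyAssign` and `maxLoad` are hypothetical names, and communication costs are ignored.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <vector>

// Returns assignment[obj] = processor index. Requires numProcs >= 1.
std::vector<int> greedyAssign(const std::vector<double>& objLoad, int numProcs) {
    // Visit objects from heaviest to lightest.
    std::vector<std::size_t> order(objLoad.size());
    std::iota(order.begin(), order.end(), 0);
    std::stable_sort(order.begin(), order.end(),
                     [&](std::size_t a, std::size_t b) {
                         return objLoad[a] > objLoad[b];
                     });

    std::vector<double> procLoad(numProcs, 0.0);
    std::vector<int> assignment(objLoad.size(), -1);
    for (std::size_t obj : order) {
        // Global knowledge: pick the least loaded processor overall.
        auto least = std::min_element(procLoad.begin(), procLoad.end());
        *least += objLoad[obj];
        assignment[obj] = static_cast<int>(least - procLoad.begin());
    }
    return assignment;
}

// Load of the busiest processor under a given assignment.
double maxLoad(const std::vector<double>& objLoad,
               const std::vector<int>& assignment, int numProcs) {
    std::vector<double> procLoad(numProcs, 0.0);
    for (std::size_t i = 0; i < objLoad.size(); ++i) {
        procLoad[assignment[i]] += objLoad[i];
    }
    return *std::max_element(procLoad.begin(), procLoad.end());
}
```

The measured `objLoad` values are where the principle of persistence comes in: loads observed in recent iterations are used as the prediction for the next ones.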

Neighborhood Load Balancing
• Load balances among a small set of processors (the neighborhood)
• Advantage: Lower communication costs
• Disadvantage: Could leave a system which is poorly balanced globally
• Algorithms: NeighborLB, WorkstationLB

When to Re-balance Load?
• Default: Load balancer will migrate when needed
• Programmer Control: AtSync load balancing
• AtSync method: enable load balancing at a specific point
– Object ready to migrate
– Re-balance if needed
– AtSync() called when your chare is ready to be load balanced; load balancing may not start right away
– ResumeFromSync() called when load balancing for this chare has finished

Using a Load Balancer
• link a LB module
– -module <strategy>
– RefineLB, NeighborLB, GreedyCommLB, others…
– EveryLB will include all load balancing strategies
• compile time option (specify default balancer)
– -balancer RefineLB
• runtime option
– +balancer RefineLB

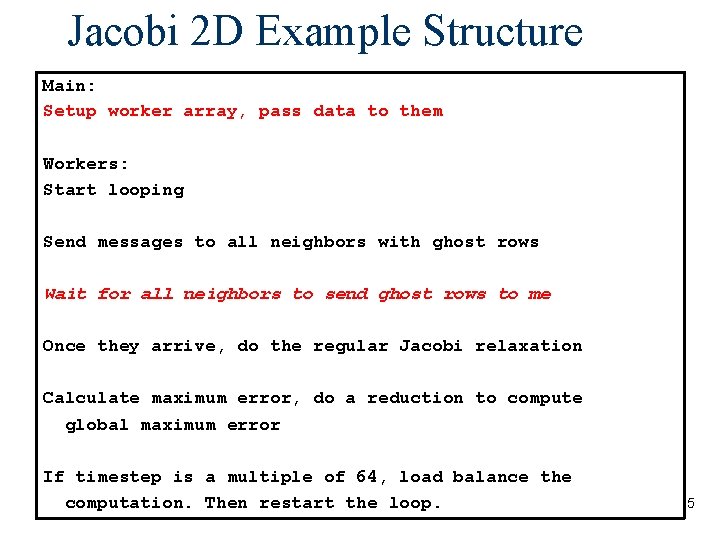

Load Balancing in Jacobi2D
Main: Set up the worker array, pass data to them
Workers: Start looping
– Send messages to all neighbors with ghost rows
– Wait for all neighbors to send ghost rows to me
– Once they arrive, do the regular Jacobi relaxation
– Calculate maximum error, do a reduction to compute global maximum error
– If timestep is a multiple of 64, load balance the computation. Then restart the loop.

Load Balancing in Jacobi2D (cont.)

    worker::worker(void) {
      //Initialize other parameters
      usesAtSync=CmiTrue;
    }

    void worker::doCompute(void){
      // do all the jacobi computation
      syncCount++;
      if(syncCount%64==0)
        AtSync();
      else
        contribute(1*sizeof(float), &errorMax, CkReduction::max_float);
    }

    void worker::ResumeFromSync(void){
      contribute(1*sizeof(float), &errorMax, CkReduction::max_float);
    }

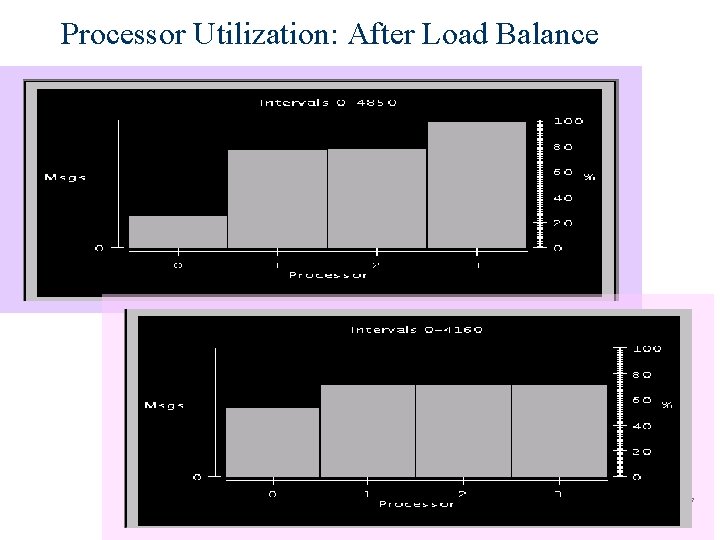

Processor Utilization: After Load Balance

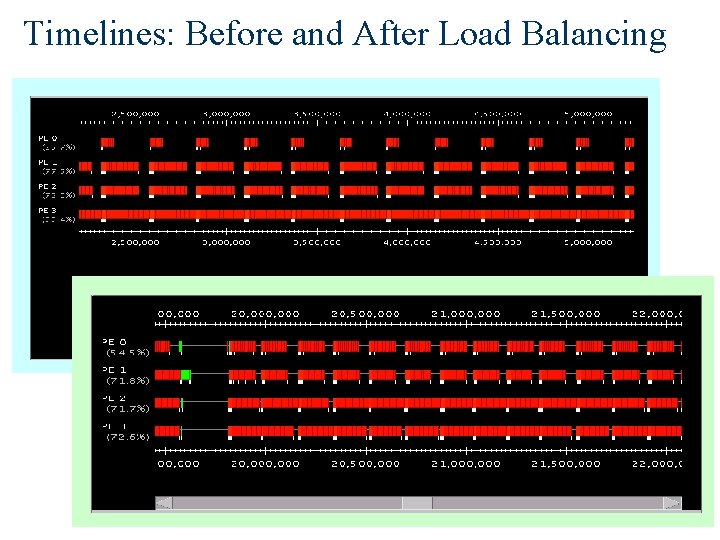

Timelines: Before and After Load Balancing

LiveViz – What is it?
• Charm++ library
• Visualization tool
• Inspect your program’s current state
• Java client runs on any machine
• You code the image generation
• 2D and 3D modes

LiveViz – Monitoring Your Application
• LiveViz allows you to watch your application’s progress
• Doesn’t slow down computation when there is no client

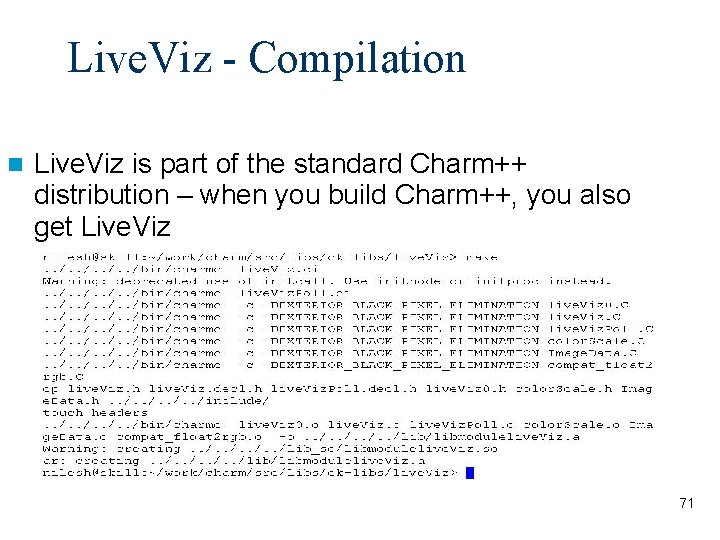

LiveViz - Compilation
• LiveViz is part of the standard Charm++ distribution: when you build Charm++, you also get LiveViz

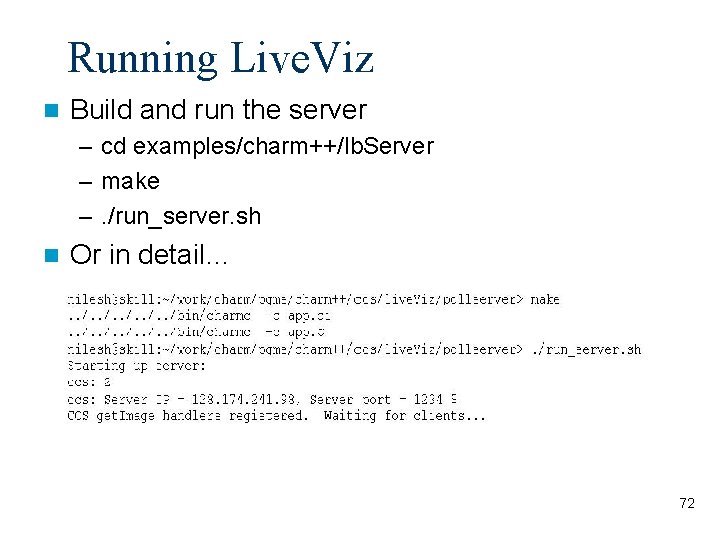

Running LiveViz
• Build and run the server
– cd examples/charm++/lbServer
– make
– ./run_server.sh
• Or in detail…

![Running LiveViz](http://slidetodoc.com/presentation_image_h2/93d9ced407da38da38964d2315e68a1d/image-73.jpg)

Running LiveViz
• Run the client
– liveViz [<host> [<port>]]
• Brings up a result window:

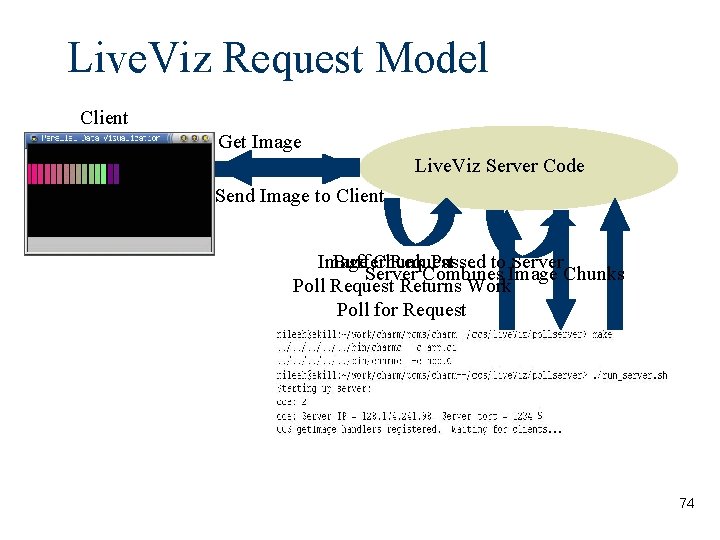

LiveViz Request Model
[Figure labels: Client, LiveViz Server Code, Get Image, Send Image to Client, Buffer Request, Image Chunk Passed to Server, Server Combines Image Chunks, Poll for Request, Poll Request Returns Work]

Jacobi2D Example Structure
Main: Set up the worker array, pass data to them
Workers: Start looping
– Send messages to all neighbors with ghost rows
– Wait for all neighbors to send ghost rows to me
– Once they arrive, do the regular Jacobi relaxation
– Calculate maximum error, do a reduction to compute global maximum error
– If timestep is a multiple of 64, load balance the computation. Then restart the loop.

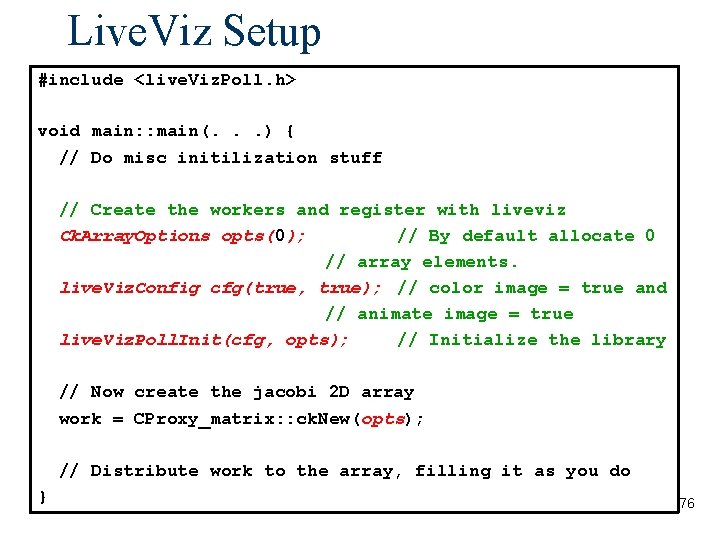

LiveViz Setup

    #include <liveVizPoll.h>

    void main::main(...) {
      // Do misc initialization stuff

      // Create the workers and register with liveViz
      CkArrayOptions opts(0);         // By default allocate 0 array elements.
      liveVizConfig cfg(true, true);  // color image = true and animate image = true
      liveVizPollInit(cfg, opts);     // Initialize the library

      // Now create the (empty) jacobi 2D array
      // (with opts, in place of the plain CProxy_matrix::ckNew(0))
      work = CProxy_matrix::ckNew(opts);

      // Distribute work to the array, filling it as you do
    }

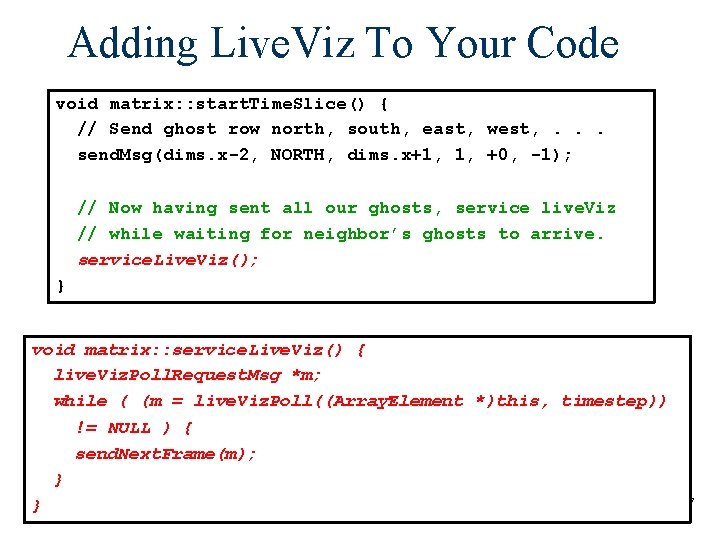

Adding LiveViz To Your Code

    void matrix::startTimeSlice() {
      // Send ghost row north, south, east, west, ...
      sendMsg(dims.x-2, NORTH, dims.x+1, 1, +0, -1);

      // Now having sent all our ghosts, service liveViz
      // while waiting for neighbor’s ghosts to arrive.
      serviceLiveViz();
    }

    void matrix::serviceLiveViz() {
      liveVizPollRequestMsg *m;
      while ( (m = liveVizPoll((ArrayElement *)this, timestep)) != NULL ) {
        sendNextFrame(m);
      }
    }

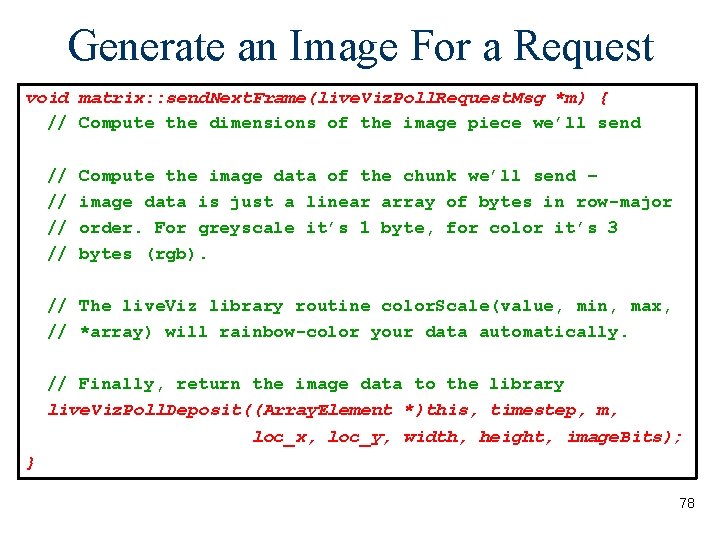

Generate an Image For a Request

    void matrix::sendNextFrame(liveVizPollRequestMsg *m) {
      // Compute the dimensions of the image piece we’ll send

      // Compute the image data of the chunk we’ll send:
      // image data is just a linear array of bytes in row-major order.
      // For greyscale it’s 1 byte, for color it’s 3 bytes (rgb).

      // The liveViz library routine colorScale(value, min, max, *array)
      // will rainbow-color your data automatically.

      // Finally, return the image data to the library
      liveVizPollDeposit((ArrayElement *)this, timestep, m, loc_x, loc_y, width, height, imageBits);
    }
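The layout described in the comments (a linear byte array, row-major, 3 bytes per color pixel) can be illustrated with a small plain C++ helper. The function `makeGradientChunk` and its red/blue gradient are purely hypothetical; the sketch only shows how pixel (x, y) maps to an offset in the buffer and does not call the liveViz library.

```cpp
#include <cstddef>
#include <vector>

// Build a width x height RGB chunk in row-major order, 3 bytes per pixel.
// Red increases left to right, blue increases top to bottom.
std::vector<unsigned char> makeGradientChunk(int width, int height) {
    std::vector<unsigned char> bits(static_cast<std::size_t>(width) * height * 3);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            // Offset of pixel (x, y): rows are stored one after another.
            const std::size_t p = 3 * (static_cast<std::size_t>(y) * width + x);
            bits[p + 0] = static_cast<unsigned char>(
                width > 1 ? 255 * x / (width - 1) : 0);    // red
            bits[p + 1] = 0;                               // green
            bits[p + 2] = static_cast<unsigned char>(
                height > 1 ? 255 * y / (height - 1) : 0);  // blue
        }
    }
    return bits;
}
```

For a greyscale image the same indexing applies with 1 byte per pixel, so the offset is simply `y * width + x`.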

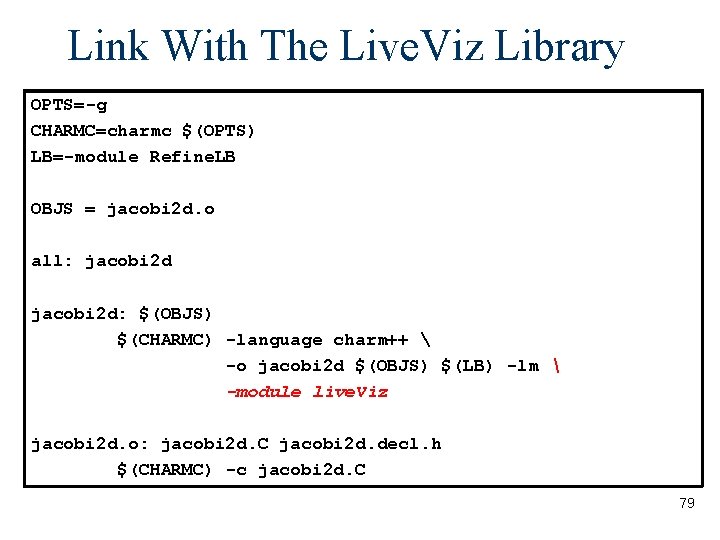

Link With The LiveViz Library

    OPTS=-g
    CHARMC=charmc $(OPTS)
    LB=-module RefineLB
    OBJS = jacobi2d.o

    all: jacobi2d

    jacobi2d: $(OBJS)
            $(CHARMC) -language charm++ -o jacobi2d $(OBJS) $(LB) -lm -module liveViz

    jacobi2d.o: jacobi2d.C jacobi2d.decl.h
            $(CHARMC) -c jacobi2d.C

LiveViz Summary
• Easy to use visualization library
• Simple code handles any number of clients
• Doesn’t slow computation when there are no clients connected
• Works in parallel, with load balancing, etc.

Advanced Features
• Groups
• Node Groups
• Priorities
• Entry Method Attributes
• Communications Optimization
• Checkpoint/Restart

Conclusions
• Better Software Engineering
– Logical Units decoupled from number of processors
– Adaptive overlap between computation and communication
– Automatic load balancing and profiling
• Powerful Parallel Tools
– Projections
– Parallel Debugger
– LiveViz

More Information
• http://charm.cs.uiuc.edu
– Manuals
– Papers
– Download files
– FAQs
• ppl@cs.uiuc.edu
