Chapter 9 Approximation Algorithms

NP-Complete Problem

- Enumeration
- Branch and Bound
- Greedy
- Approximation
  - PTAS
  - K-Approximation
  - No Approximation

Approximation algorithm

- Up to now, the best known algorithm for solving an NP-complete problem requires exponential time in the worst case, which is too time-consuming.
- To reduce the time required for solving a problem, we can relax the problem and obtain a feasible solution "close" to an optimal solution.

Approximation Ratios

- Optimization problems
  - We have some problem instance x that has many feasible "solutions".
  - We are trying to minimize (or maximize) some cost function c(S) for a "solution" S to x. For example:
    - Finding a minimum spanning tree of a graph
    - Finding a smallest vertex cover of a graph
    - Finding a shortest traveling salesperson tour in a graph

Approximation Ratios

- An approximation algorithm produces a solution T.
- Relative approximation ratio
  - T is a k-approximation to the optimal solution OPT if c(T)/c(OPT) ≤ k (assuming a minimization problem; for a maximization problem the ratio is reversed).
- Absolute approximation ratio
  - T is a k-approximation to the optimal solution OPT if |c(T) − c(OPT)| ≤ k.
  - For example, in the chromatic number problem, if the optimal solution of an instance is three and the approximation T is four, then T is a 1-approximation to the optimal solution.

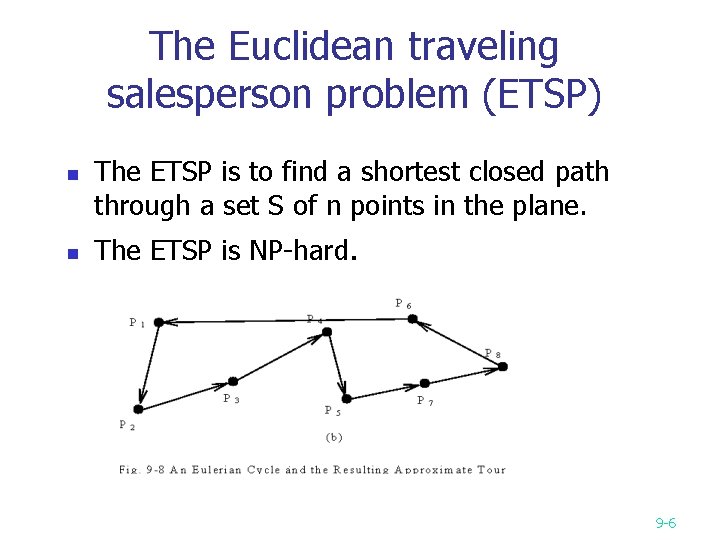

The Euclidean traveling salesperson problem (ETSP)

- The ETSP is to find a shortest closed path through a set S of n points in the plane.
- The ETSP is NP-hard.

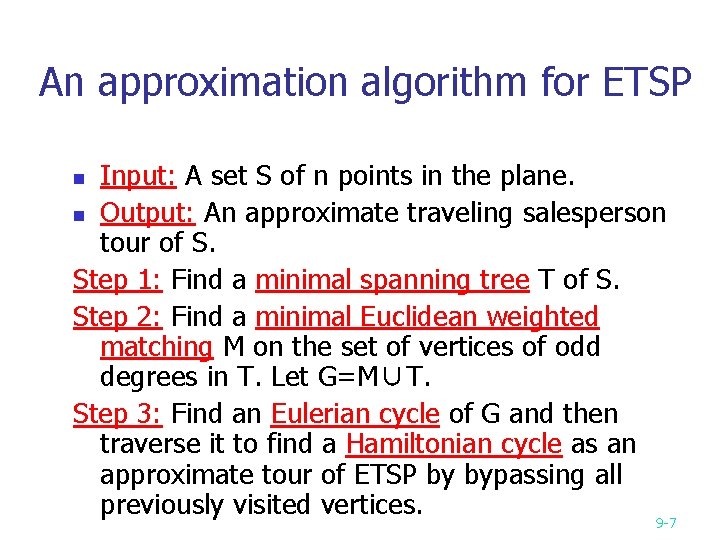

An approximation algorithm for ETSP

- Input: A set S of n points in the plane.
- Output: An approximate traveling salesperson tour of S.
- Step 1: Find a minimum spanning tree T of S.
- Step 2: Find a minimum-weight Euclidean matching M on the set of vertices of odd degree in T. Let G = M ∪ T.
- Step 3: Find an Eulerian cycle of G, then traverse it and bypass all previously visited vertices to obtain a Hamiltonian cycle, which is the approximate tour of ETSP.
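
The three steps above can be sketched in Python as follows. This is only an illustrative sketch under stated assumptions: the function names (`mst_edges`, `min_matching`, `euler_circuit`, `approx_etsp`, `tour_length`) are hypothetical, the spanning tree is built with an O(n^2) Prim's algorithm, and the minimum-weight matching is found by brute force, which is exponential and only suitable for a handful of odd-degree vertices. A polynomial-time matching algorithm would be needed to reach the O(n^3) bound.

```python
import math

def dist(p, q):
    return math.hypot(p[0] - q[0], p[1] - q[1])

def tour_length(points, tour):
    return sum(dist(points[tour[i]], points[tour[(i + 1) % len(tour)]])
               for i in range(len(tour)))

def mst_edges(points):
    """Step 1: Prim's algorithm on the complete Euclidean graph, O(n^2)."""
    n = len(points)
    in_tree = [False] * n
    best = [math.inf] * n
    parent = [-1] * n
    best[0] = 0.0
    edges = []
    for _ in range(n):
        u = min((i for i in range(n) if not in_tree[i]), key=lambda i: best[i])
        in_tree[u] = True
        if parent[u] >= 0:
            edges.append((parent[u], u))
        for v in range(n):
            d = dist(points[u], points[v])
            if not in_tree[v] and d < best[v]:
                best[v], parent[v] = d, u
    return edges

def min_matching(points, odd):
    """Step 2 (assumption: brute force, exponential; small inputs only)."""
    if not odd:
        return []
    first, rest = odd[0], odd[1:]
    best_cost, best_pairs = math.inf, None
    for k, partner in enumerate(rest):
        sub = min_matching(points, rest[:k] + rest[k + 1:])
        cost = dist(points[first], points[partner]) + sum(
            dist(points[a], points[b]) for a, b in sub)
        if cost < best_cost:
            best_cost, best_pairs = cost, [(first, partner)] + sub
    return best_pairs

def euler_circuit(n, edges):
    """Hierholzer's algorithm on a connected multigraph with even degrees."""
    adj = [[] for _ in range(n)]
    for idx, (u, v) in enumerate(edges):
        adj[u].append((v, idx))
        adj[v].append((u, idx))
    used = [False] * len(edges)
    stack, circuit = [0], []
    while stack:
        u = stack[-1]
        while adj[u] and used[adj[u][-1][1]]:
            adj[u].pop()          # discard edges already traversed
        if adj[u]:
            v, idx = adj[u].pop()
            used[idx] = True
            stack.append(v)
        else:
            circuit.append(stack.pop())
    return circuit

def approx_etsp(points):
    n = len(points)
    tree = mst_edges(points)
    degree = [0] * n
    for u, v in tree:
        degree[u] += 1
        degree[v] += 1
    odd = [v for v in range(n) if degree[v] % 2 == 1]
    matching = min_matching(points, odd)
    # Step 3: Eulerian cycle of G = T ∪ M, then bypass visited vertices.
    seen, tour = set(), []
    for v in euler_circuit(n, tree + matching):
        if v not in seen:
            seen.add(v)
            tour.append(v)
    return tour
```

For example, `approx_etsp([(0, 0), (1, 0), (1, 1), (0, 1)])` returns the four corner indices in some order around the square.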

An example for ETSP algorithm

- Step 1: Find a minimum spanning tree.

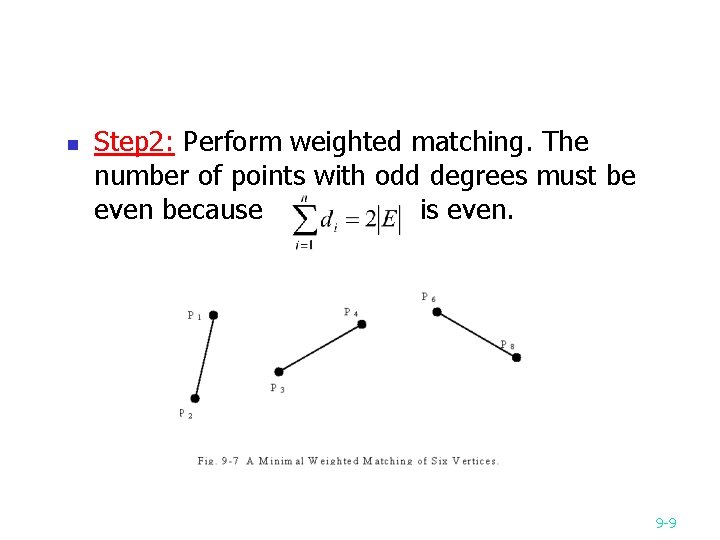

- Step 2: Perform weighted matching. The number of points with odd degree must be even because the sum of all vertex degrees, $\sum_{i=1}^{n} d_i = 2|E|$, is even.

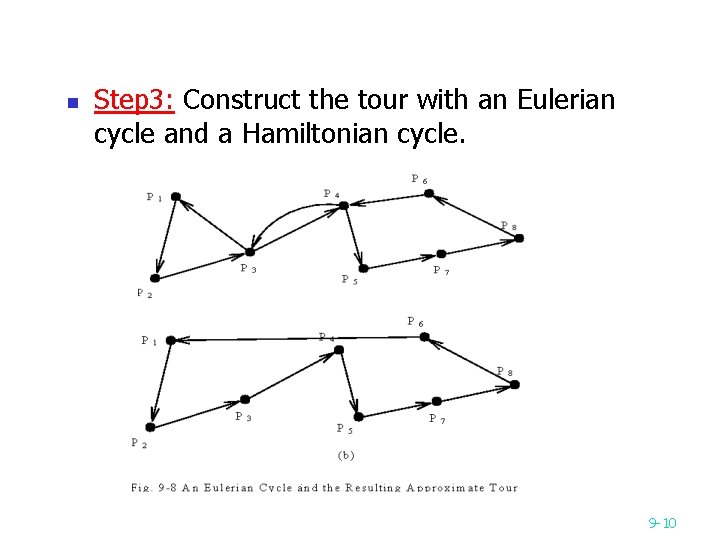

- Step 3: Construct the tour with an Eulerian cycle and a Hamiltonian cycle.

- Time complexity: $O(n^3)$
  - Step 1: $O(n \log n)$
  - Step 2: $O(n^3)$
  - Step 3: $O(n)$
- How close is the approximate solution to an optimal solution?
  - The approximate tour is within 3/2 of the optimal one, i.e., the approximation ratio is 3/2. (The proof follows.)

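Proof of the approximation ratio

Let the optimal tour be $L$: $j_1 \dots i_1\, j_2 \dots i_2\, j_3 \dots i_{2m}$, where $\{i_1, i_2, \dots, i_{2m}\}$ is the set of odd-degree vertices in $T$, listed in the order in which they appear on $L$.

Two matchings:

$$M_1 = \{[i_1, i_2], [i_3, i_4], \dots, [i_{2m-1}, i_{2m}]\}$$

$$M_2 = \{[i_2, i_3], [i_4, i_5], \dots, [i_{2m}, i_1]\}$$

By the triangle inequality, and because $M$ is a minimum-weight matching on these vertices:

$$\text{length}(L) \ge \text{length}(M_1) + \text{length}(M_2) \ge 2\,\text{length}(M)$$

$$\Rightarrow \text{length}(M) \le \tfrac{1}{2}\,\text{length}(L)$$

$G = T \cup M$. Deleting one edge of $L$ leaves a spanning tree, so $\text{length}(T) \le \text{length}(L)$, and therefore

$$\text{length}(T) + \text{length}(M) \le \text{length}(L) + \tfrac{1}{2}\,\text{length}(L) = \tfrac{3}{2}\,\text{length}(L)$$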

The bottleneck traveling salesperson problem (BTSP)

- Minimize the longest edge of a tour.
- This is a mini-max problem.
- This problem is NP-hard.
- The input data for this problem fulfill the following assumptions:
  - The graph is a complete graph.
  - All edges obey the triangle inequality.

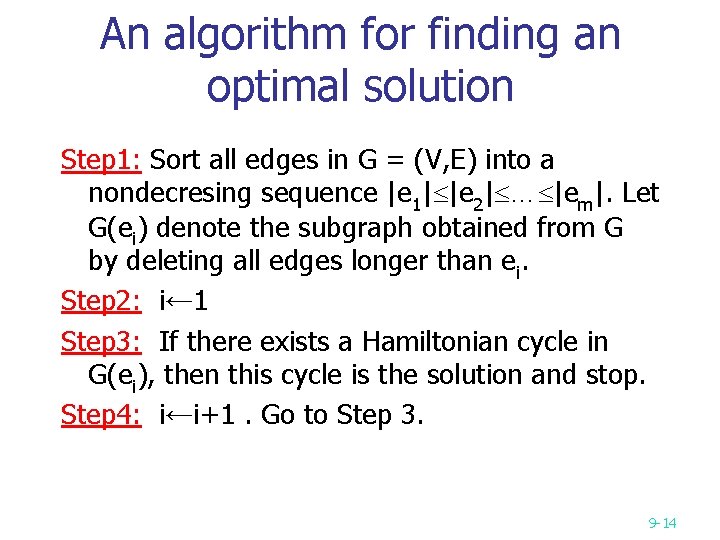

An algorithm for finding an optimal solution

- Step 1: Sort all edges in $G = (V, E)$ into a nondecreasing sequence $|e_1| \le |e_2| \le \dots \le |e_m|$. Let $G(e_i)$ denote the subgraph obtained from $G$ by deleting all edges longer than $e_i$.
- Step 2: $i \leftarrow 1$
- Step 3: If there exists a Hamiltonian cycle in $G(e_i)$, then this cycle is the solution; stop.
- Step 4: $i \leftarrow i + 1$. Go to Step 3.
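
The loop above can be sketched as follows. This is a minimal sketch, assuming at least three vertices numbered 0..n-1 and edge lengths given as a dictionary keyed by `frozenset({u, v})`; the names `has_hamiltonian_cycle` and `exact_btsp` are hypothetical. The Hamiltonian cycle test is brute force over all vertex orders, so the whole procedure is exponential, as expected for an exact algorithm on an NP-hard problem.

```python
from itertools import permutations

def has_hamiltonian_cycle(n, allowed):
    """Brute force: try every vertex order starting at vertex 0."""
    for perm in permutations(range(1, n)):
        order = (0,) + perm
        if all(frozenset((order[i], order[(i + 1) % n])) in allowed
               for i in range(n)):
            return list(order)
    return None

def exact_btsp(n, weight):
    """weight maps frozenset({u, v}) to the edge length (complete graph)."""
    edges = sorted(weight, key=weight.get)      # Step 1
    allowed = set()
    for e in edges:                             # Steps 2 to 4
        # Edges are added one at a time, so with ties `allowed` may be a
        # subset of G(e_i); the returned bottleneck value is still |e_i|.
        allowed.add(e)
        cycle = has_hamiltonian_cycle(n, allowed)
        if cycle is not None:
            return weight[e], cycle
    return None
```

The first value returned is the length of the longest edge of an optimal tour, and the second is one tour that attains it.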

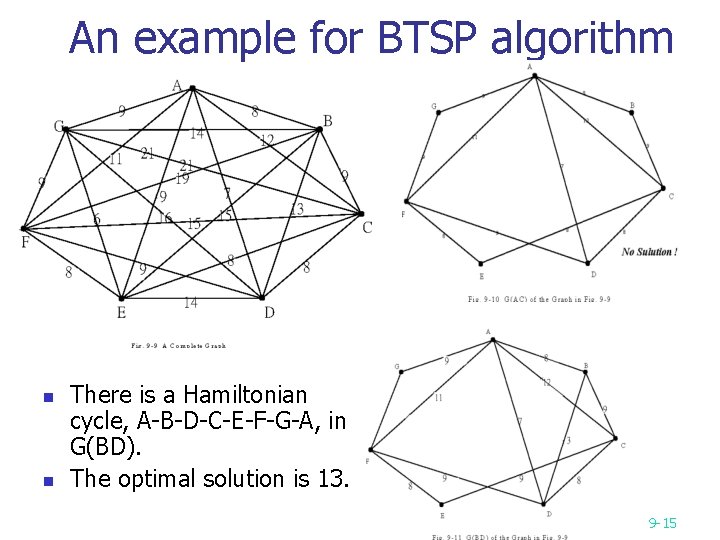

An example for BTSP algorithm

- E.g., there is a Hamiltonian cycle, A-B-D-C-E-F-G-A, in G(BD).
- The optimal solution is 13.

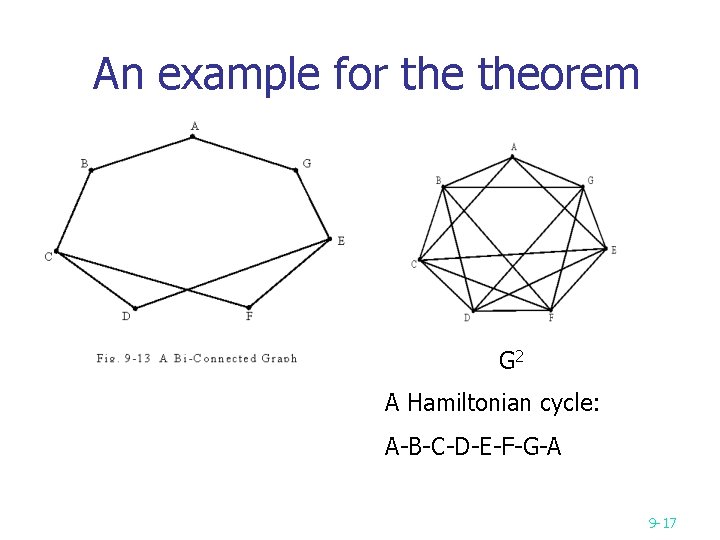

Theorem for Hamiltonian cycles

- Def: The t-th power of $G = (V, E)$, denoted as $G^t = (V, E^t)$, is the graph in which an edge $(u, v) \in E^t$ if there is a path from $u$ to $v$ with at most $t$ edges in $G$.
- Theorem: If a graph $G$ is bi-connected, then $G^2$ has a Hamiltonian cycle.
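
To make the definition of the t-th power concrete, here is a small sketch that builds $G^t$ with a breadth-first search truncated at depth t from every vertex. The function name `graph_power` and the adjacency-dictionary representation are assumptions made for this illustration.

```python
from collections import deque

def graph_power(adj, t):
    """Return adjacency sets of G^t: u and v are adjacent in G^t
    iff v != u and v is reachable from u by a path of at most t edges."""
    power = {}
    for s in adj:
        depth = {s: 0}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            if depth[u] == t:
                continue            # do not expand beyond t edges
            for v in adj[u]:
                if v not in depth:
                    depth[v] = depth[u] + 1
                    queue.append(v)
        power[s] = {v for v in depth if v != s}
    return power

# Usage: the square of the path A-B-C-D.
path = {"A": {"B"}, "B": {"A", "C"}, "C": {"B", "D"}, "D": {"C"}}
square = graph_power(path, 2)
```

In this usage example, A gains the new neighbour C in the square, while A and D remain non-adjacent because they are three edges apart.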

An example for the theorem

- In $G^2$, a Hamiltonian cycle: A-B-C-D-E-F-G-A

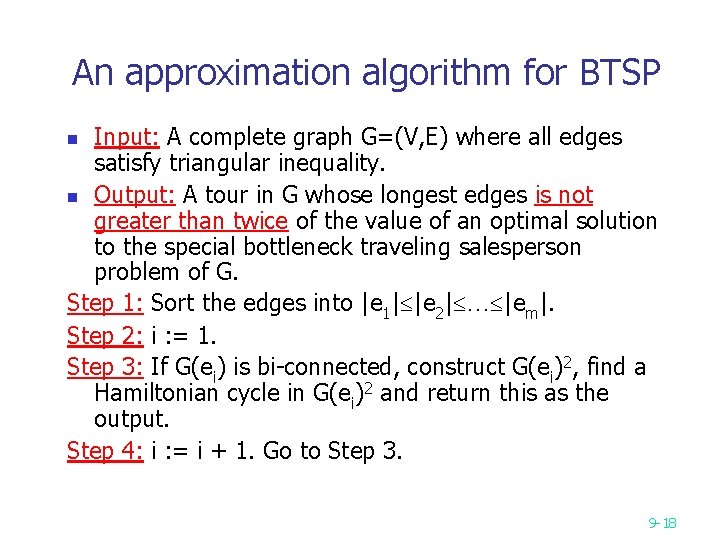

An approximation algorithm for BTSP

- Input: A complete graph $G = (V, E)$ where all edges satisfy the triangle inequality.
- Output: A tour in $G$ whose longest edge is not greater than twice the value of an optimal solution to the special bottleneck traveling salesperson problem of $G$.
- Step 1: Sort the edges into $|e_1| \le |e_2| \le \dots \le |e_m|$.
- Step 2: $i := 1$.
- Step 3: If $G(e_i)$ is bi-connected, construct $G(e_i)^2$, find a Hamiltonian cycle in $G(e_i)^2$ and return this as the output.
- Step 4: $i := i + 1$. Go to Step 3.
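
The sketch below covers only the search for the first bi-connected $G(e_i)$ in Steps 1 to 4; constructing the Hamiltonian cycle in $G(e_i)^2$ is not shown. The function names are hypothetical, edge lengths are assumed to be a dictionary keyed by `frozenset({u, v})`, and bi-connectivity is tested in the simplest way (delete each vertex in turn and check connectivity) rather than with a linear-time algorithm.

```python
def is_connected(vertices, adj, removed=None):
    """Depth-first search that ignores the vertex `removed`, if any."""
    verts = [v for v in vertices if v != removed]
    if not verts:
        return True
    seen, stack = {verts[0]}, [verts[0]]
    while stack:
        u = stack.pop()
        for v in adj[u]:
            if v != removed and v not in seen:
                seen.add(v)
                stack.append(v)
    return len(seen) == len(verts)

def is_biconnected(vertices, adj):
    """Connected, and still connected after deleting any single vertex."""
    if len(vertices) < 3 or not is_connected(vertices, adj):
        return False
    return all(is_connected(vertices, adj, removed=v) for v in vertices)

def first_biconnected_threshold(vertices, weight):
    """Return (|e_i|, adjacency of G(e_i)) for the first bi-connected G(e_i)."""
    adj = {v: set() for v in vertices}
    edges = sorted(weight, key=weight.get)
    i = 0
    while i < len(edges):
        w = weight[edges[i]]
        # Add every edge of this length so that adj is exactly G(e_i).
        while i < len(edges) and weight[edges[i]] == w:
            u, v = tuple(edges[i])
            adj[u].add(v)
            adj[v].add(u)
            i += 1
        if is_biconnected(vertices, adj):
            return w, adj
    return None
```

The returned length is a lower bound on the optimal bottleneck value, because any Hamiltonian cycle is itself bi-connected.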

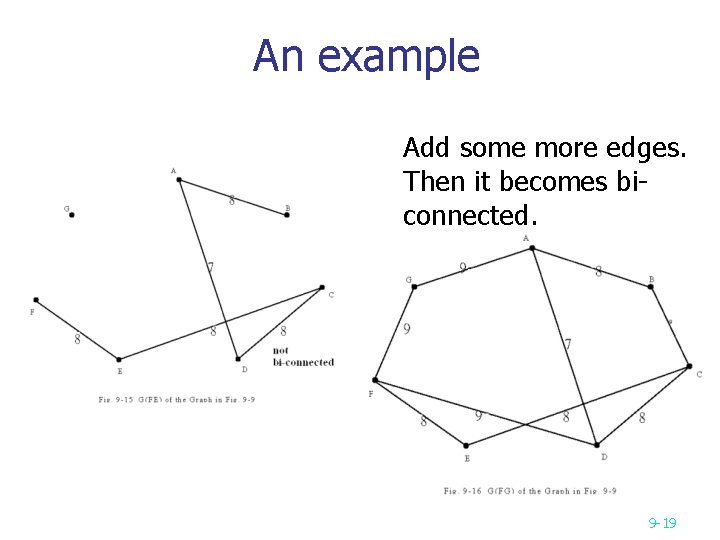

An example

- Add some more edges. Then it becomes bi-connected.

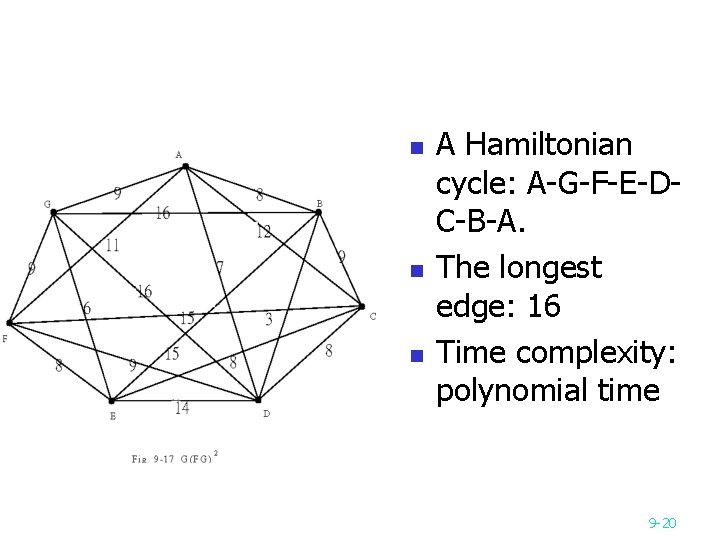

- A Hamiltonian cycle: A-G-F-E-D-C-B-A.
- The longest edge: 16
- Time complexity: polynomial time

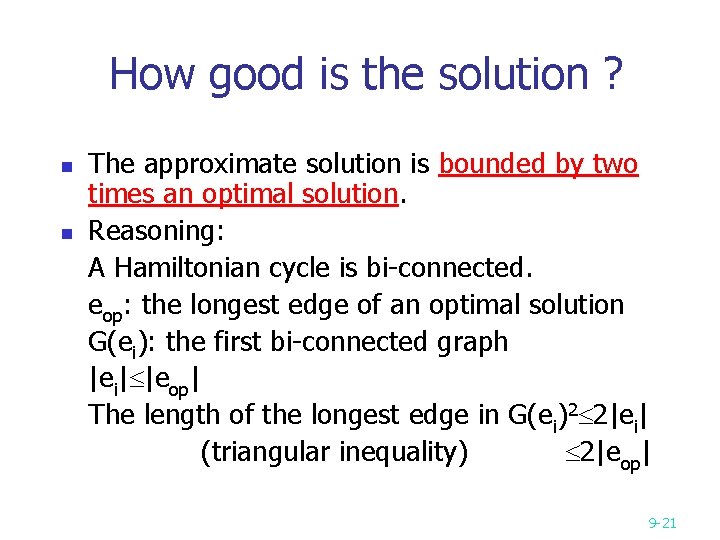

How good is the solution?

- The approximate solution is bounded by two times an optimal solution.
- Reasoning: A Hamiltonian cycle is bi-connected.
  - $e_{op}$: the longest edge of an optimal solution
  - $G(e_i)$: the first bi-connected graph
  - $|e_i| \le |e_{op}|$
  - The length of the longest edge in $G(e_i)^2 \le 2|e_i|$ (triangle inequality) $\le 2|e_{op}|$

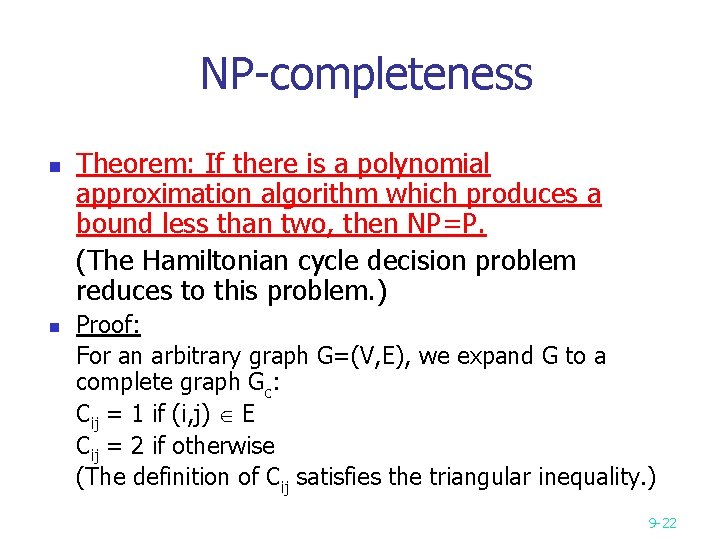

NP-completeness

- Theorem: If there is a polynomial approximation algorithm which produces a bound less than two, then NP = P. (The Hamiltonian cycle decision problem reduces to this problem.)
- Proof: For an arbitrary graph $G = (V, E)$, we expand $G$ to a complete graph $G_c$:
  - $C_{ij} = 1$ if $(i, j) \in E$
  - $C_{ij} = 2$ otherwise
  - (The definition of $C_{ij}$ satisfies the triangle inequality.)

Let $V^*$ denote the value of an optimal solution of the bottleneck TSP of $G_c$.

$V^* = 1 \Leftrightarrow G$ has a Hamiltonian cycle.

Because there are only two kinds of edges, 1 and 2, in $G_c$, if we can produce an approximate solution whose value is less than $2V^*$, then we can also solve the Hamiltonian cycle decision problem.
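
The construction of $G_c$ can be illustrated with a short sketch. The names `expand_to_complete` and `optimal_bottleneck` are hypothetical, and the optimal bottleneck value is computed by brute force purely to illustrate the equivalence on tiny graphs.

```python
from itertools import combinations, permutations

def expand_to_complete(vertices, edges):
    """Build G_c: cost 1 for edges of G, cost 2 for every other pair."""
    edge_set = {frozenset(e) for e in edges}
    return {frozenset(p): (1 if frozenset(p) in edge_set else 2)
            for p in combinations(vertices, 2)}

def optimal_bottleneck(vertices, cost):
    """V*: the smallest possible longest edge over all tours (brute force)."""
    first, rest = vertices[0], vertices[1:]
    n = len(vertices)
    best = None
    for perm in permutations(rest):
        order = (first,) + perm
        longest = max(cost[frozenset((order[i], order[(i + 1) % n]))]
                      for i in range(n))
        best = longest if best is None else min(best, longest)
    return best

# A 4-cycle has a Hamiltonian cycle, a 4-vertex path does not.
cycle_cost = expand_to_complete([0, 1, 2, 3], [(0, 1), (1, 2), (2, 3), (3, 0)])
path_cost = expand_to_complete([0, 1, 2, 3], [(0, 1), (1, 2), (2, 3)])
```

Here `optimal_bottleneck` gives 1 for the cycle and 2 for the path. An approximation algorithm with ratio below two would have to return a tour of value 1 whenever $V^* = 1$, which decides Hamiltonicity.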

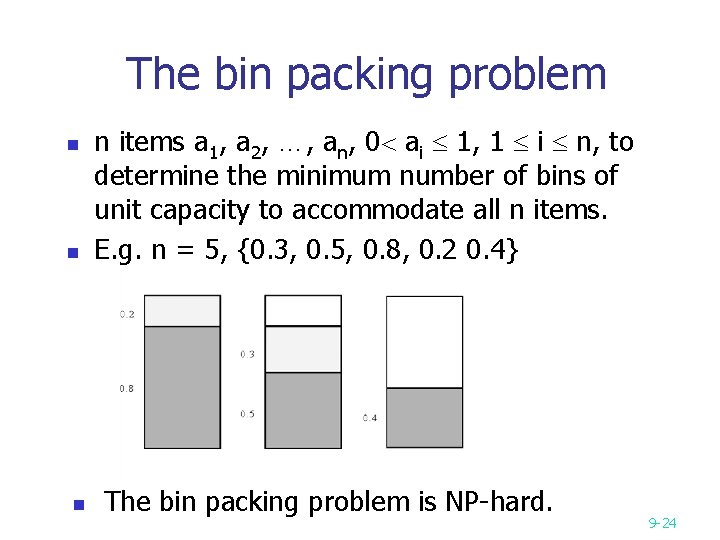

The bin packing problem

- Given n items $a_1, a_2, \dots, a_n$, $0 < a_i \le 1$, $1 \le i \le n$, determine the minimum number of bins of unit capacity to accommodate all n items.
- E.g. n = 5, {0.3, 0.5, 0.8, 0.2, 0.4}
- The bin packing problem is NP-hard.

An approximation algorithm for the bin packing problem

- An approximation algorithm (first-fit): place $a_i$ into the lowest-indexed bin which can accommodate $a_i$.
- Theorem: The number of bins used in the first-fit algorithm is at most twice that of the optimal solution.
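
A minimal sketch of first-fit follows. The function name `first_fit` and the small tolerance `eps`, used to absorb floating-point rounding when a bin is filled exactly, are assumptions of this illustration.

```python
def first_fit(items, capacity=1.0, eps=1e-9):
    """Place each item into the lowest-indexed bin that can still hold it."""
    bins = []    # bins[j] is the list of item sizes packed in bin j
    loads = []   # loads[j] is the total size currently in bin j
    for a in items:
        for j, load in enumerate(loads):
            if load + a <= capacity + eps:
                bins[j].append(a)
                loads[j] += a
                break
        else:
            # No existing bin can accommodate the item: open a new bin.
            bins.append([a])
            loads.append(a)
    return bins

packing = first_fit([0.3, 0.5, 0.8, 0.2, 0.4])
```

On the example instance {0.3, 0.5, 0.8, 0.2, 0.4}, this uses three bins: {0.3, 0.5, 0.2}, {0.8} and {0.4}. Since the item sizes sum to 2.2, no packing can use fewer than three bins.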

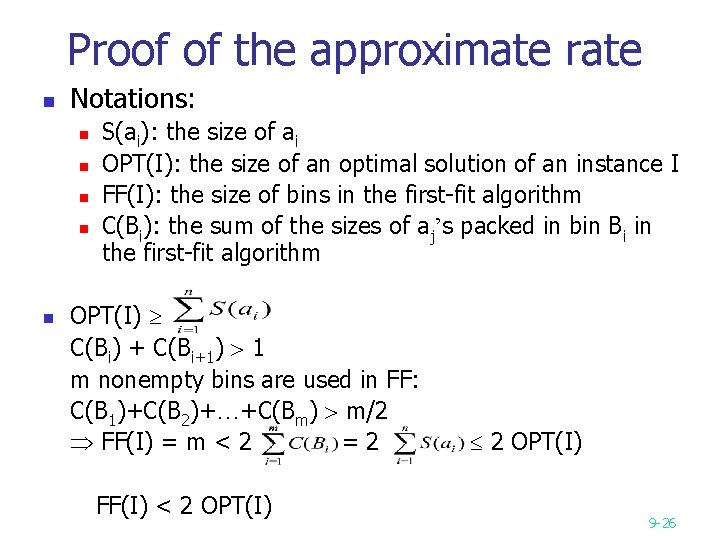

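Proof of the approximation ratio

- Notation:
  - $S(a_i)$: the size of $a_i$
  - $OPT(I)$: the number of bins in an optimal solution of an instance $I$
  - $FF(I)$: the number of bins used by the first-fit algorithm
  - $C(B_i)$: the sum of the sizes of the $a_j$'s packed in bin $B_i$ by the first-fit algorithm

$$OPT(I) \ge \left\lceil \sum_{i=1}^{n} S(a_i) \right\rceil$$

$$C(B_i) + C(B_{i+1}) > 1$$

(Otherwise first-fit would have placed the items of $B_{i+1}$ into $B_i$.) If $m$ nonempty bins are used in FF:

$$C(B_1) + C(B_2) + \dots + C(B_m) > m/2$$

$$FF(I) = m < 2\sum_{i=1}^{m} C(B_i) = 2\sum_{i=1}^{n} S(a_i) \le 2\,OPT(I)$$

$$\Rightarrow FF(I) < 2\,OPT(I)$$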

Knapsack problem

- Fractional knapsack problem
  - P
- 0/1 knapsack problem
  - NP-Complete
  - Approximation
    - PTAS

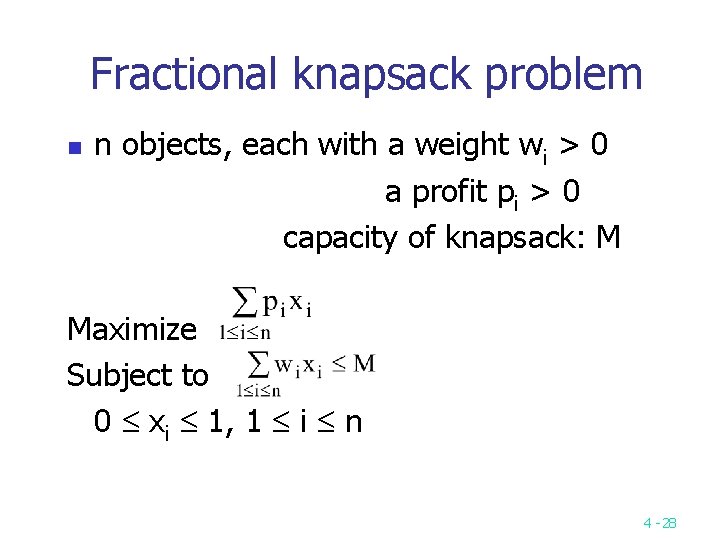

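Fractional knapsack problem

- n objects, each with a weight $w_i > 0$ and a profit $p_i > 0$
- capacity of knapsack: $M$

$$\text{Maximize } \sum_{1 \le i \le n} p_i x_i$$

$$\text{Subject to } \sum_{1 \le i \le n} w_i x_i \le M, \quad 0 \le x_i \le 1, \; 1 \le i \le n$$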

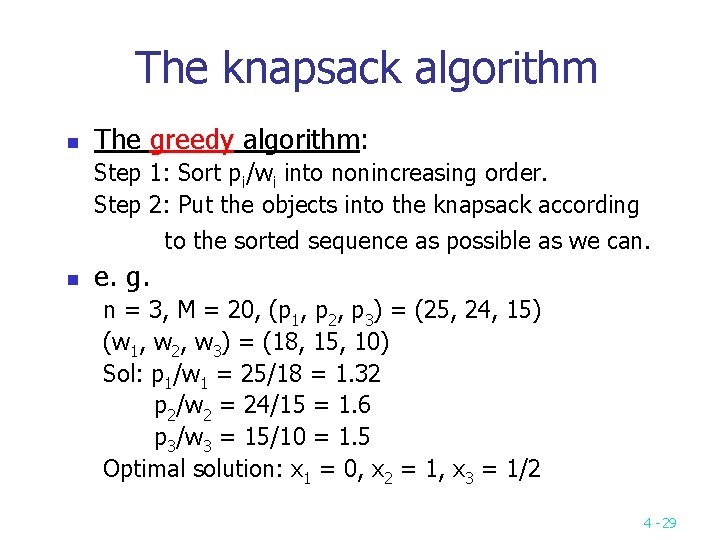

The knapsack algorithm

- The greedy algorithm:
  - Step 1: Sort $p_i/w_i$ into nonincreasing order.
  - Step 2: Put the objects into the knapsack according to the sorted sequence as far as possible.
- e.g. n = 3, M = 20, $(p_1, p_2, p_3) = (25, 24, 15)$, $(w_1, w_2, w_3) = (18, 15, 10)$
  - Sol: $p_1/w_1 = 25/18 \approx 1.39$, $p_2/w_2 = 24/15 = 1.6$, $p_3/w_3 = 15/10 = 1.5$
  - Optimal solution: $x_1 = 0$, $x_2 = 1$, $x_3 = 1/2$
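
The greedy rule can be sketched as below. The function name `fractional_knapsack` is hypothetical, and integer profits and weights are assumed so that exact fractions can be used instead of floating point.

```python
from fractions import Fraction

def fractional_knapsack(profits, weights, capacity):
    """Greedy by profit/weight ratio; returns (x, total profit)."""
    n = len(profits)
    # Step 1: indices sorted by nonincreasing p_i / w_i.
    order = sorted(range(n),
                   key=lambda i: Fraction(profits[i], weights[i]),
                   reverse=True)
    x = [Fraction(0)] * n
    remaining = Fraction(capacity)
    # Step 2: take as much of each object as still fits.
    for i in order:
        if remaining <= 0:
            break
        take = min(Fraction(1), remaining / weights[i])
        x[i] = take
        remaining -= take * weights[i]
    total = sum(x[i] * profits[i] for i in range(n))
    return x, total

x, total = fractional_knapsack([25, 24, 15], [18, 15, 10], 20)
```

For the example with M = 20, this yields $x = (0, 1, 1/2)$ with total profit $24 + 15/2 = 31.5$.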

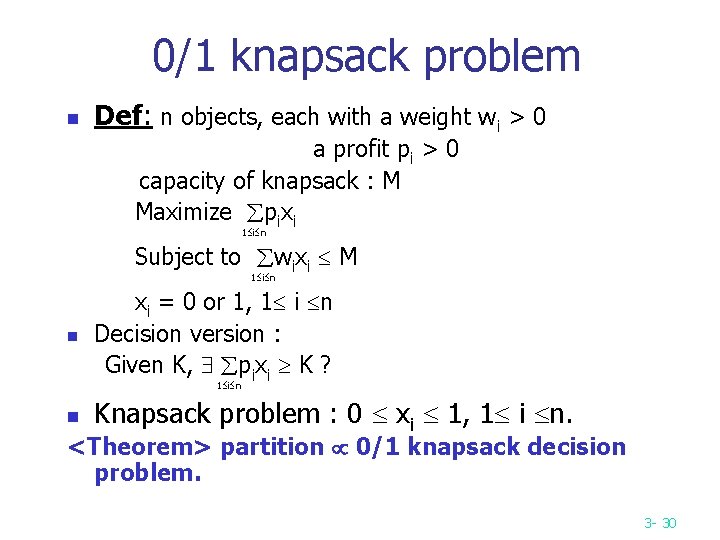

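0/1 knapsack problem

- Def: n objects, each with a weight $w_i > 0$ and a profit $p_i > 0$; capacity of knapsack: $M$

$$\text{Maximize } \sum_{1 \le i \le n} p_i x_i$$

$$\text{Subject to } \sum_{1 \le i \le n} w_i x_i \le M, \quad x_i = 0 \text{ or } 1, \; 1 \le i \le n$$

- Decision version: Given $K$, is $\sum_{1 \le i \le n} p_i x_i \ge K$?
- Knapsack problem: $0 \le x_i \le 1$, $1 \le i \le n$.
- Theorem: partition $\propto$ 0/1 knapsack decision problem.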

Polynomial-Time Approximation Schemes

- A problem L has a polynomial-time approximation scheme (PTAS) if it has a polynomial-time (1+ε)-approximation algorithm, for any fixed ε > 0 (this value can appear in the running time).
- 0/1 Knapsack has a PTAS, with a running time that is O(n^3 / ε).

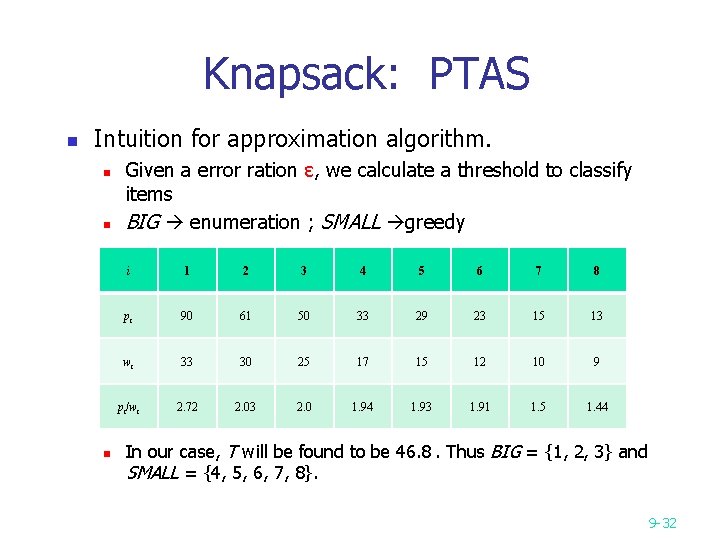

Knapsack: PTAS

- Intuition for the approximation algorithm:
  - Given an error ratio ε, we calculate a threshold T to classify items.
  - BIG → enumeration; SMALL → greedy

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| $p_i$ | 90 | 61 | 50 | 33 | 29 | 23 | 15 | 13 |
| $w_i$ | 33 | 30 | 25 | 17 | 15 | 12 | 10 | 9 |
| $p_i/w_i$ | 2.72 | 2.03 | 2.0 | 1.94 | 1.93 | 1.91 | 1.5 | 1.44 |

- In our case, T will be found to be 46.8. Thus BIG = {1, 2, 3} and SMALL = {4, 5, 6, 7, 8}.

Knapsack: PTAS

- For BIG, we try to enumerate all possible solutions.
- Solution 1:
  - We select items 1 and 2. The sum of normalized profits is 15. The corresponding sum of original profits is 90 + 61 = 151. The sum of weights is 63.
- Solution 2:
  - We select items 1, 2, and 3. The sum of normalized profits is 20. The corresponding sum of original profits is 90 + 61 + 50 = 201. The sum of weights is 88.

Knapsack: PTAS

- For SMALL, we use the greedy strategy to extend each enumerated solution.
- Solution 1:
  - For Solution 1, we can add items 4 and 6. The sum of profits will be 151 + 33 + 23 = 207.
- Solution 2:
  - For Solution 2, we cannot add any item from SMALL. Thus the sum of profits is 201.
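
The greedy extension of each BIG solution can be sketched as follows. The knapsack capacity is not stated in the example, so the value `CAPACITY = 92` below is an assumption chosen because it reproduces the numbers given above. The function name `greedy_fill` is also hypothetical, and the sketch covers only the greedy step, not the computation of the threshold T or the normalized profits.

```python
PROFITS = {1: 90, 2: 61, 3: 50, 4: 33, 5: 29, 6: 23, 7: 15, 8: 13}
WEIGHTS = {1: 33, 2: 30, 3: 25, 4: 17, 5: 15, 6: 12, 7: 10, 8: 9}
SMALL = [4, 5, 6, 7, 8]
CAPACITY = 92   # assumption: not given in the example, see the note above

def greedy_fill(big_choice, small, capacity):
    """Extend a chosen set of BIG items with SMALL items, taken in
    nonincreasing profit/weight order, skipping any item that no longer fits."""
    chosen = list(big_choice)
    weight = sum(WEIGHTS[i] for i in chosen)
    for i in sorted(small, key=lambda i: PROFITS[i] / WEIGHTS[i], reverse=True):
        if weight + WEIGHTS[i] <= capacity:
            chosen.append(i)
            weight += WEIGHTS[i]
    return chosen, sum(PROFITS[i] for i in chosen)

solution_1 = greedy_fill([1, 2], SMALL, CAPACITY)
solution_2 = greedy_fill([1, 2, 3], SMALL, CAPACITY)
```

Under that assumed capacity, Solution 1 is extended with items 4 and 6 for a profit of 207, and Solution 2 cannot be extended and keeps a profit of 201, so Solution 1 is the better of the two.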

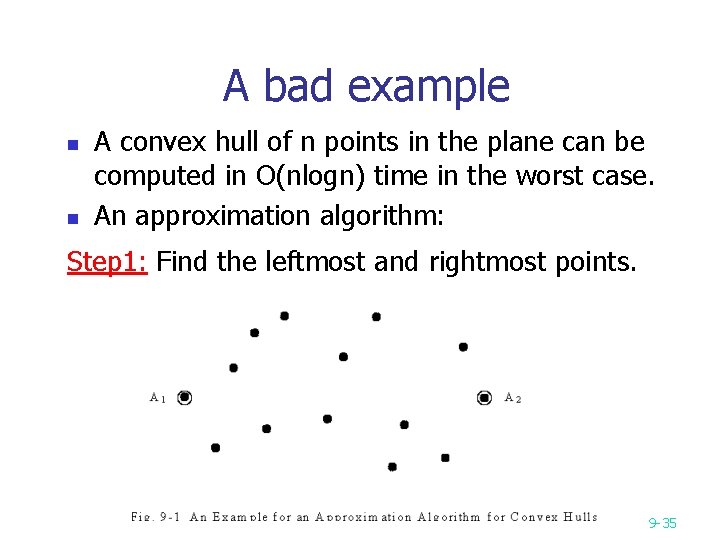

A bad example

- A convex hull of n points in the plane can be computed in O(n log n) time in the worst case.
- An approximation algorithm:
  - Step 1: Find the leftmost and rightmost points.

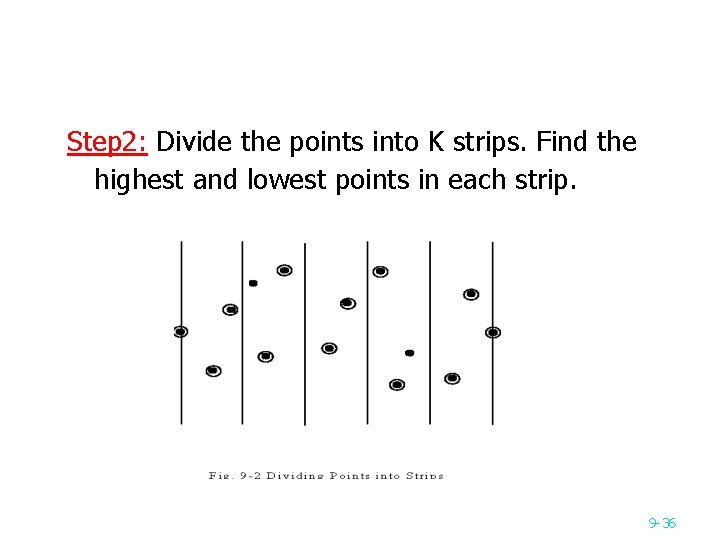

  - Step 2: Divide the points into K strips. Find the highest and lowest points in each strip.

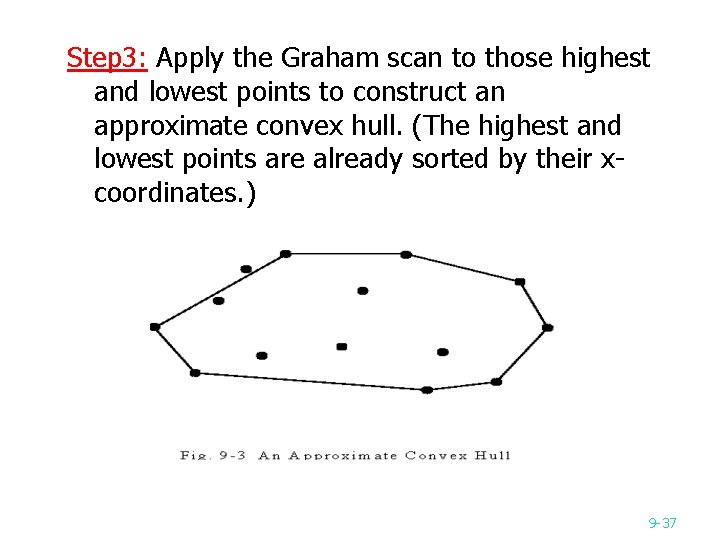

  - Step 3: Apply the Graham scan to those highest and lowest points to construct an approximate convex hull. (The highest and lowest points are already sorted by their x-coordinates.)
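
A sketch of the three steps is given below, with one deliberate substitution: instead of a Graham scan over presorted points, it sorts the candidate points explicitly and applies Andrew's monotone chain, so the last step costs O(K log K) rather than O(K). The function names and the equal-width strip rule are assumptions of this illustration.

```python
def cross(o, a, b):
    """Positive if o -> a -> b makes a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def approx_convex_hull(points, k):
    # Step 1: leftmost and rightmost points.
    left, right = min(points), max(points)
    xmin, xmax = left[0], right[0]
    width = (xmax - xmin) / k or 1.0      # avoid zero width if all x are equal
    # Step 2: lowest and highest point in each of the k vertical strips.
    lowest, highest = [None] * k, [None] * k
    for p in points:
        s = min(int((p[0] - xmin) / width), k - 1)
        if lowest[s] is None or p[1] < lowest[s][1]:
            lowest[s] = p
        if highest[s] is None or p[1] > highest[s][1]:
            highest[s] = p
    candidates = {left, right}
    candidates.update(p for p in lowest + highest if p is not None)
    # Step 3 (swapped-in technique): monotone chain on the candidates.
    cand = sorted(candidates)
    if len(cand) <= 2:
        return cand
    lower, upper = [], []
    for p in cand:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(cand):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

hull = approx_convex_hull([(0, 0), (4, 0), (4, 4), (0, 4), (2, 2), (1, 3)], 2)
```

Points are expected as tuples so that they can be stored in a set. The result is a counter-clockwise polygon whose vertices are all input points, although some input points may lie slightly outside it.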

Time complexity

- Time complexity: O(n + K)
  - Step 1: O(n)
  - Step 2: O(n)
  - Step 3: O(K)

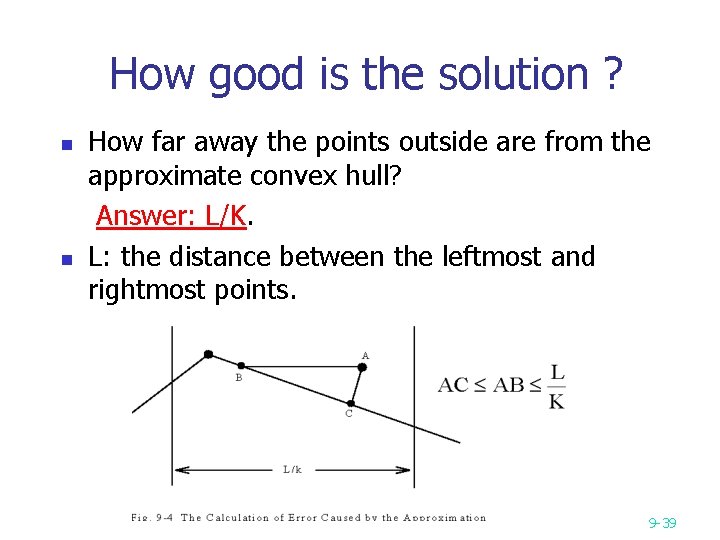

How good is the solution?

- How far away from the approximate convex hull can the points outside it be?
- Answer: at most L/K, where L is the distance between the leftmost and rightmost points.