Chapter 6 UNCONSTRAINED MULTIVARIABLE OPTIMIZATION


6.1 Function Values Only
6.2 First Derivatives of f (gradient and conjugate direction methods)
6.3 Second Derivatives of f (e.g., Newton’s method)
6.4 Quasi-Newton methods


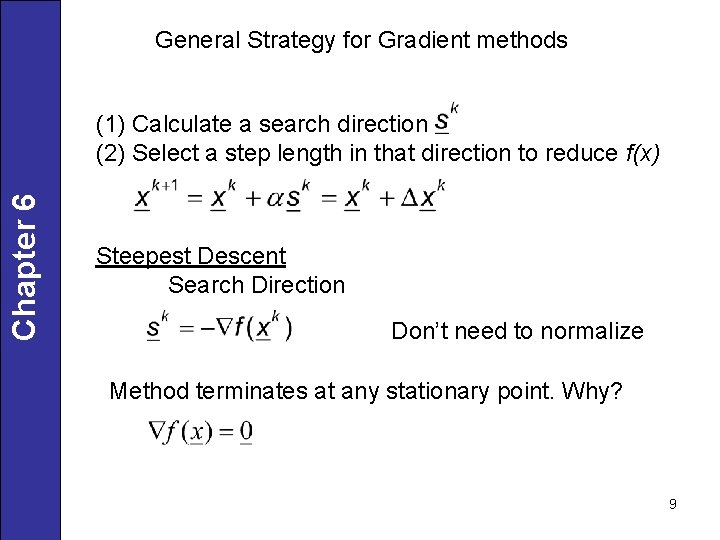

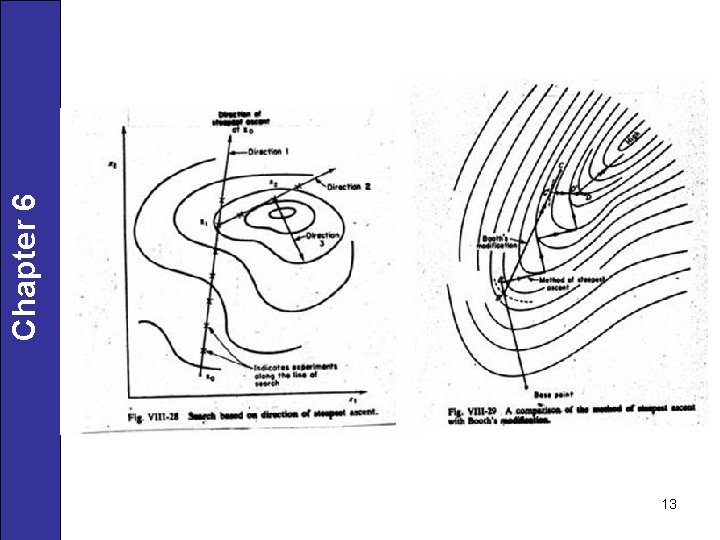

General Strategy for Gradient Methods

(1) Calculate a search direction $s^k$.
(2) Select a step length $\alpha^k$ in that direction to reduce $f(x)$, so that $x^{k+1} = x^k + \alpha^k s^k$.

Steepest Descent Search Direction

$s^k = -\nabla f(x^k)$

• The direction does not need to be normalized.
• The method terminates at any stationary point. Why?
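The stopping behavior can be illustrated with a small sketch. The test function $f(x) = x_1^2 - x_2^2$, the starting point, and the fixed step length 0.25 are assumptions chosen for illustration, not taken from the slides. Because the update uses only $\nabla f$, the iteration halts wherever the gradient vanishes, and here that is a saddle point; the Hessian eigenvalues reveal that it is not a minimum.

```python
import numpy as np

def grad_f(x):
    # Gradient of the hypothetical test function f(x) = x1^2 - x2^2
    return np.array([2.0 * x[0], -2.0 * x[1]])

# Constant Hessian of the same function (indefinite: one positive, one negative eigenvalue)
H = np.array([[2.0, 0.0], [0.0, -2.0]])

x = np.array([1.0, 0.0])          # start on the x1 axis (assumed starting point)
for _ in range(200):
    g = grad_f(x)
    if np.linalg.norm(g) < 1e-10:  # stationary point reached: s = -g is zero
        break
    x = x - 0.25 * g               # steepest descent with a fixed step length

# A stationary point is a minimum only if the Hessian is positive definite
eigvals = np.linalg.eigvalsh(H)
is_minimum = bool(np.all(eigvals > 0))
print(x, eigvals, is_minimum)
```

Started anywhere off the $x_1$ axis, the same iteration would move away from the saddle, so this sketch shows a possibility rather than typical behavior.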

So the procedure can stop at a saddle point. For a minimum, you need to show that the Hessian $H(x^*)$ is positive definite.

Step Length

How to pick $\alpha$:
• analytically
• numerically

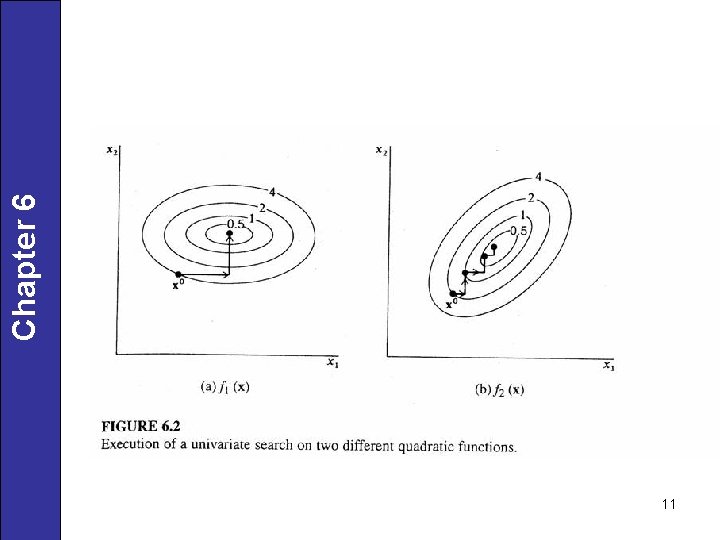


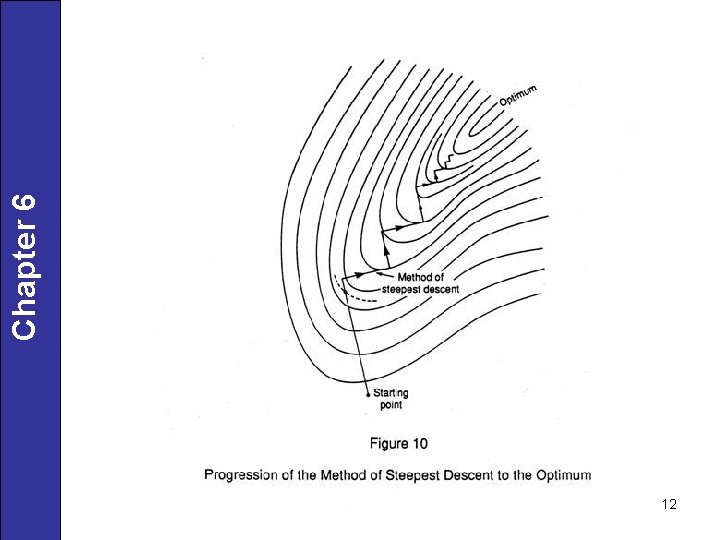


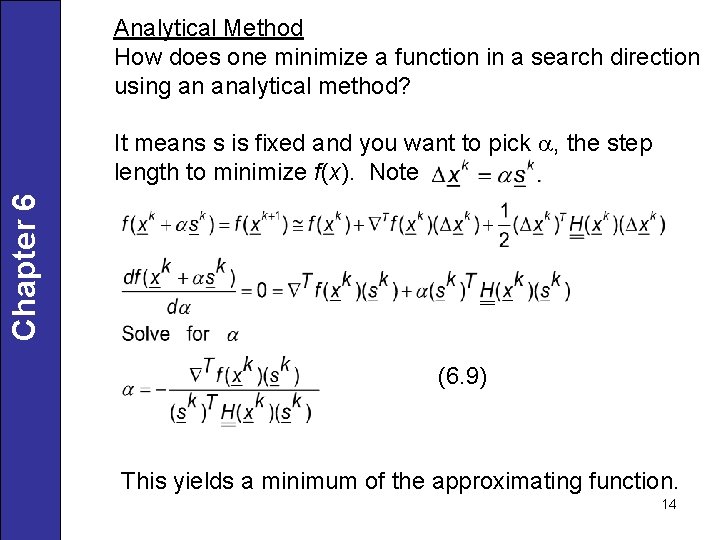

Analytical Method

How does one minimize a function in a search direction using an analytical method? It means $s$ is fixed and you want to pick $\alpha$, the step length, to minimize $f(x)$. Note Eq. (6.9): this yields a minimum of the approximating function.
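A minimal sketch of the analytical step length, assuming the approximating function is the second-order Taylor expansion of $f$ along $s$. Setting its derivative with respect to $\alpha$ to zero gives $\alpha = -\nabla f(x^k)^T s^k / \left((s^k)^T H(x^k)\, s^k\right)$. The helper name `analytic_step` and the quadratic test problem (`Q`, `b`) are hypothetical.

```python
import numpy as np

def analytic_step(grad, s, H):
    """Step length that minimizes the quadratic model of f along direction s."""
    return -(grad @ s) / (s @ H @ s)

# Hypothetical quadratic test problem: f(x) = 0.5 x^T Q x - b^T x
Q = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])

def f(x):
    return 0.5 * x @ Q @ x - b @ x

def grad_f(x):
    return Q @ x - b

x = np.array([2.0, 2.0])
g = grad_f(x)
s = -g                            # steepest descent direction
alpha = analytic_step(g, s, Q)    # for a quadratic, the Hessian is Q everywhere
x_new = x + alpha * s
print(alpha, f(x), f(x_new))
```

For a quadratic objective the model is exact, so the slope along $s$ at `x_new` is zero. For a general $f$ the result is only the minimizer of the approximation.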

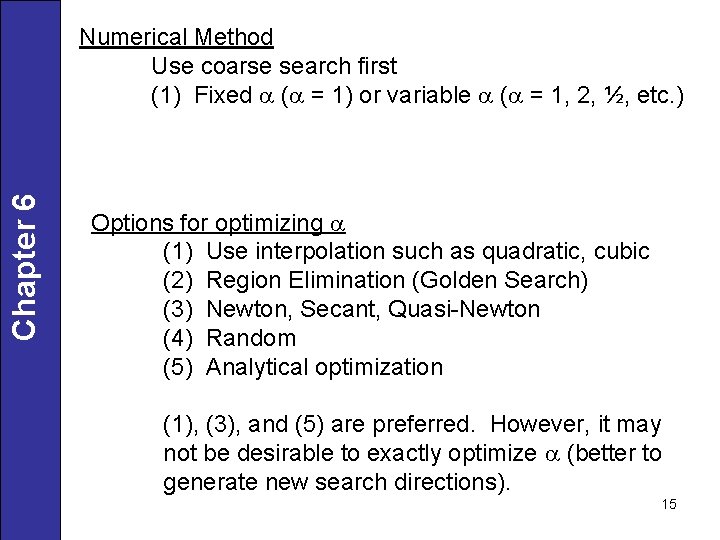

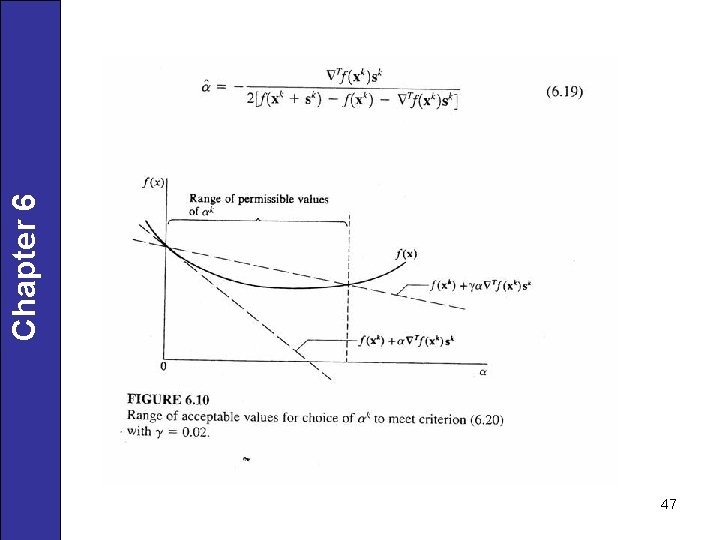

Numerical Method

Use a coarse search first:
(1) Fixed $\alpha$ ($\alpha = 1$) or variable $\alpha$ ($\alpha = 1, 2, ½$, etc.)

Options for optimizing $\alpha$:
(1) Interpolation (quadratic, cubic)
(2) Region elimination (golden section search)
(3) Newton, secant, quasi-Newton
(4) Random
(5) Analytical optimization

Options (1), (3), and (5) are preferred. However, it may not be desirable to optimize $\alpha$ exactly (it is better to generate new search directions).
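Region elimination, option (2), can be sketched as a golden section search on the one-dimensional function $\phi(\alpha) = f(x + \alpha s)$. The function name `golden_section`, the bracket $[0, 2]$, and the test function `phi` are assumptions for illustration; the sketch also assumes $\phi$ is unimodal on the bracket, which a coarse search would normally establish first.

```python
import math

def golden_section(phi, lo, hi, tol=1e-6):
    """Shrink the bracket [lo, hi] around the minimizer of a unimodal phi(alpha)."""
    r = (math.sqrt(5.0) - 1.0) / 2.0   # golden ratio conjugate, about 0.618
    a, b = lo, hi
    c = b - r * (b - a)                # left interior point
    d = a + r * (b - a)                # right interior point
    fc, fd = phi(c), phi(d)
    while (b - a) > tol:
        if fc < fd:
            # Minimum lies in [a, d]; the old left point becomes the new right point
            b, d, fd = d, c, fc
            c = b - r * (b - a)
            fc = phi(c)
        else:
            # Minimum lies in [c, b]; the old right point becomes the new left point
            a, c, fc = c, d, fd
            d = a + r * (b - a)
            fd = phi(d)
    return 0.5 * (a + b)

# Hypothetical one-dimensional objective along a search direction
phi = lambda alpha: (alpha - 0.3) ** 2 + 1.0
alpha_star = golden_section(phi, 0.0, 2.0)
print(alpha_star)
```

Each pass reuses one of the two interior function values, so only one new evaluation of $\phi$ is needed per bracket reduction.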

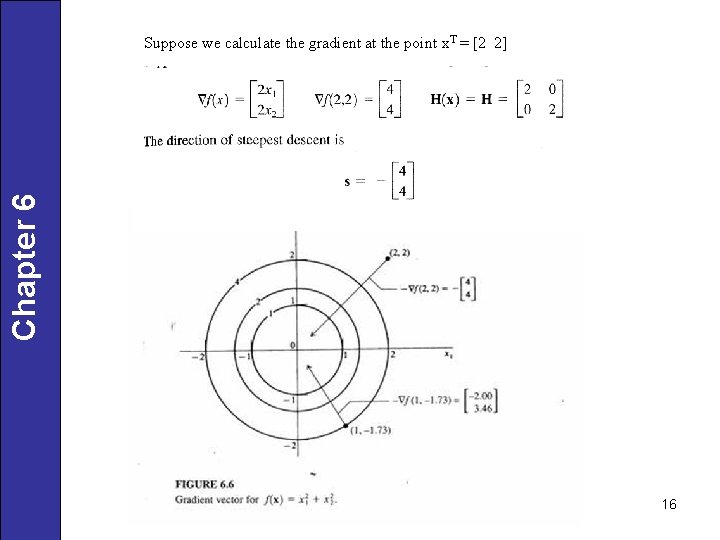

Suppose we calculate the gradient at the point $x^T = [2 \;\; 2]$.

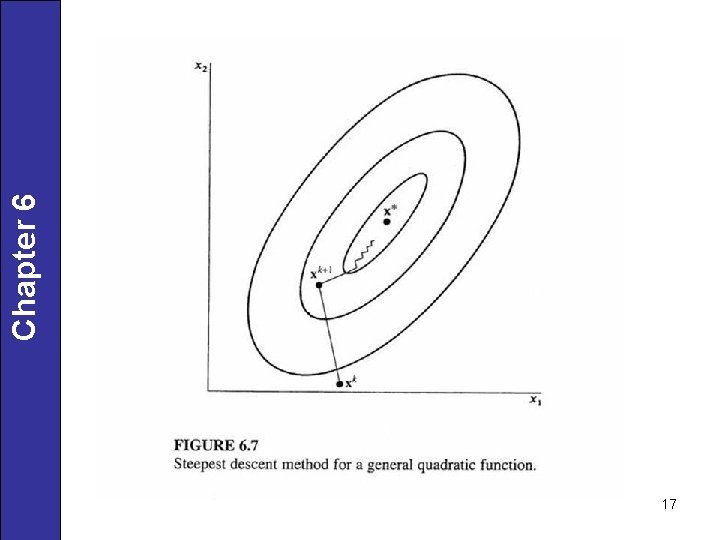


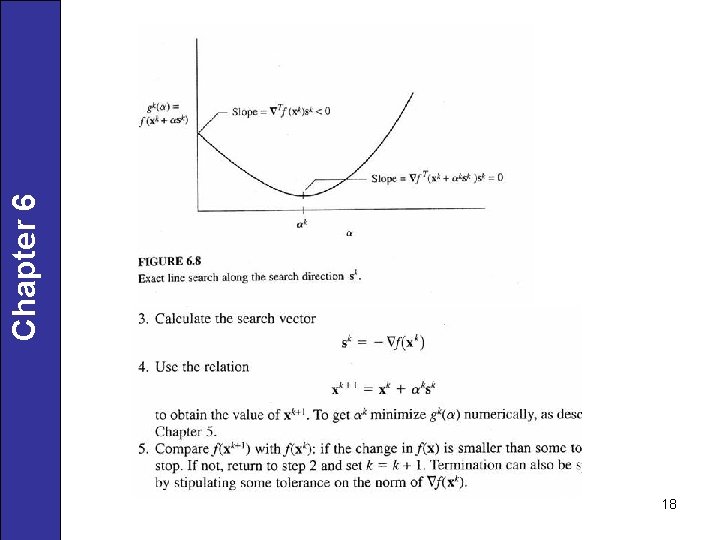


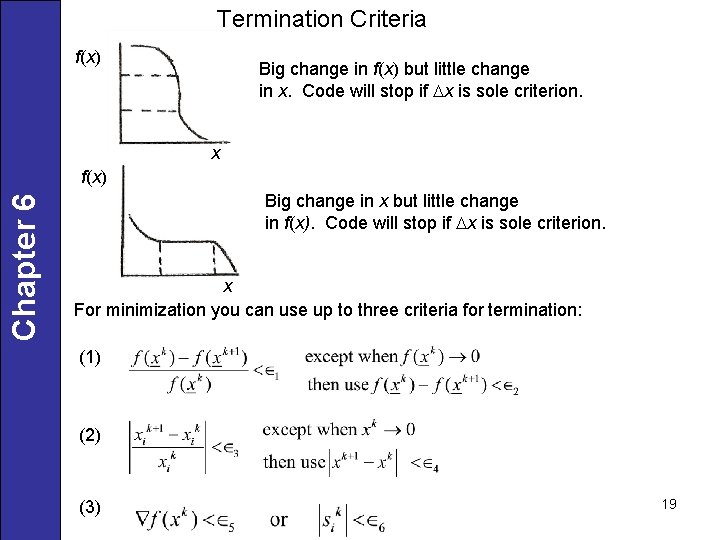

Termination Criteria

• Big change in $f(x)$ but little change in $x$: the code will stop prematurely if $\Delta x$ is the sole criterion.
• Big change in $x$ but little change in $f(x)$: the code will stop prematurely if $\Delta f$ is the sole criterion.

For minimization you can use up to three criteria for termination, (1) to (3).
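A minimal sketch of a combined test follows. The three checks used here (relative change in $f$, relative change in $x$, and gradient norm) are a common choice and are an assumption about what criteria (1) to (3) contain; the function name `converged` and the tolerance values are hypothetical.

```python
import numpy as np

def converged(x_old, x_new, f_old, f_new, grad_new,
              eps_f=1e-8, eps_x=1e-8, eps_g=1e-6):
    """Return True only when all three tests pass at once."""
    # Relative change in f (small offset guards against f_old == 0)
    small_df = abs(f_new - f_old) <= eps_f * (abs(f_old) + eps_f)
    # Relative change in x (same guard for x_old == 0)
    small_dx = np.linalg.norm(x_new - x_old) <= eps_x * (np.linalg.norm(x_old) + eps_x)
    # Gradient norm close to zero (stationary point)
    small_grad = np.linalg.norm(grad_new) <= eps_g
    return bool(small_df and small_dx and small_grad)

# Steep region: x barely moves but f changes a lot, so no premature stop
steep = converged(np.array([1.0]), np.array([1.0 + 1e-12]), 1.0, 0.5, np.array([1e3]))
# Flat region: f barely changes but x moves a lot, so no premature stop
flat = converged(np.array([0.0]), np.array([5.0]), 1.0, 1.0, np.array([0.0]))
# All three quantities are small: stop
done = converged(np.array([1.0]), np.array([1.0]), 2.0, 2.0, np.array([0.0]))
print(steep, flat, done)
```

Requiring all three tests together avoids both failure cases described above, at the cost of a few extra iterations near the optimum.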

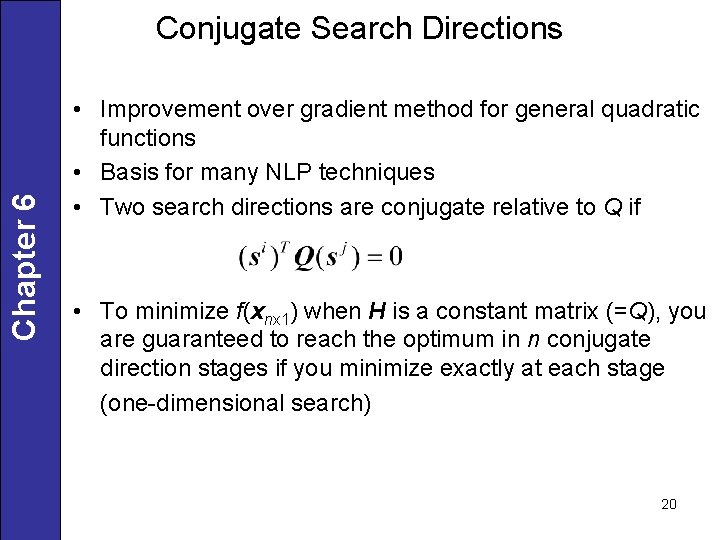

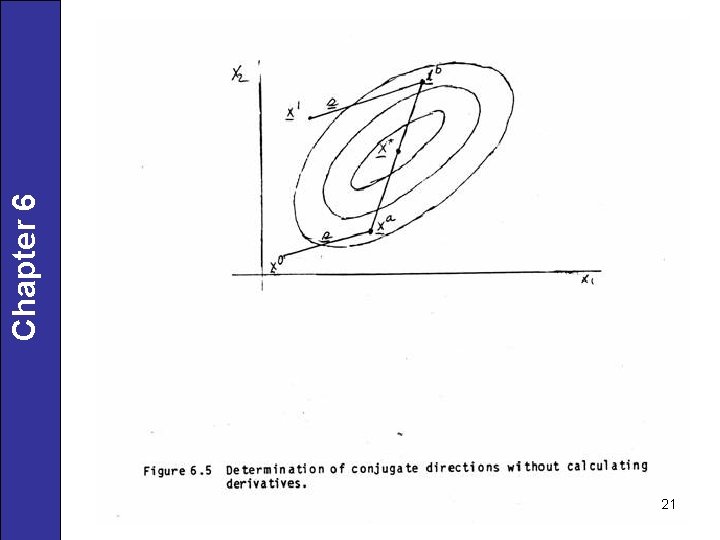

Conjugate Search Directions

• Improvement over the gradient method for general quadratic functions
• Basis for many NLP techniques
• Two search directions $s^i$ and $s^j$ are conjugate relative to $Q$ if $(s^i)^T Q\, s^j = 0$
• To minimize $f(x)$, with $x$ an $n \times 1$ vector, when $H$ is a constant matrix ($= Q$), you are guaranteed to reach the optimum in $n$ conjugate direction stages if you minimize exactly at each stage (one-dimensional search)


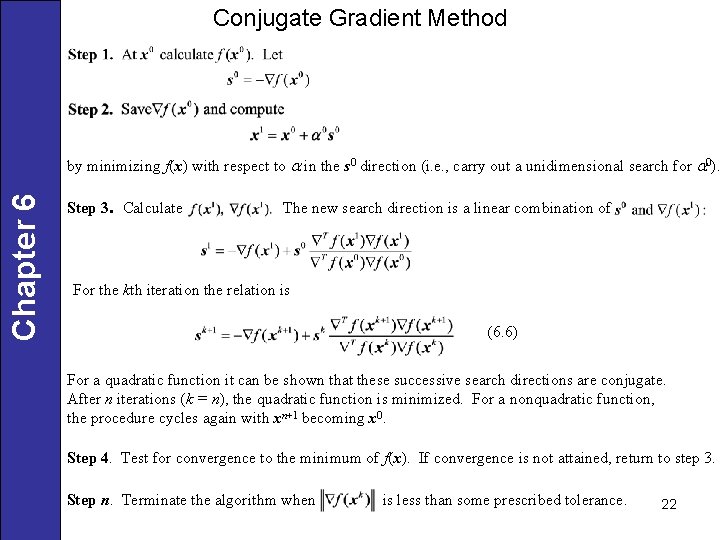

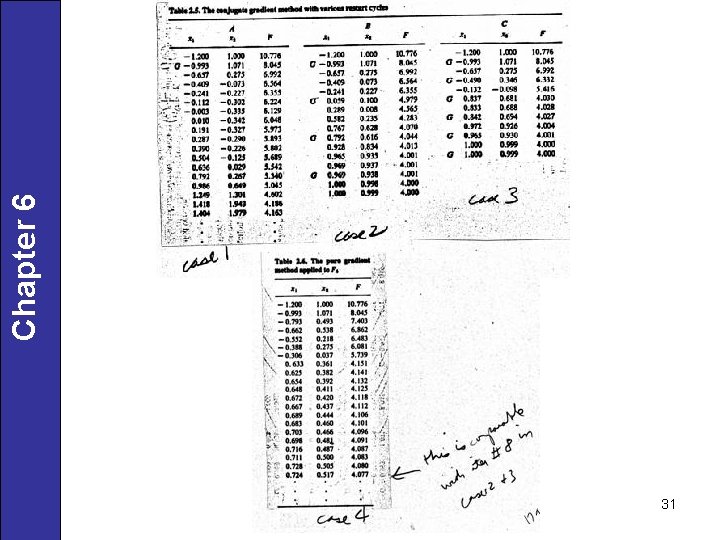

Conjugate Gradient Method

Compute $x^1 = x^0 + \alpha^0 s^0$ by minimizing $f(x)$ with respect to $\alpha$ in the $s^0$ direction (i.e., carry out a unidimensional search for $\alpha^0$).

Step 3. Calculate $f(x^1)$ and $\nabla f(x^1)$. The new search direction is a linear combination of $s^0$ and $\nabla f(x^1)$. For the $k$th iteration the relation is

$$s^{k+1} = -\nabla f(x^{k+1}) + s^k \, \frac{\nabla^T f(x^{k+1})\, \nabla f(x^{k+1})}{\nabla^T f(x^k)\, \nabla f(x^k)} \qquad (6.6)$$

For a quadratic function it can be shown that these successive search directions are conjugate. After $n$ iterations ($k = n$), the quadratic function is minimized. For a nonquadratic function, the procedure cycles again with $x^{n+1}$ becoming $x^0$.

Step 4. Test for convergence to the minimum of $f(x)$. If convergence is not attained, return to step 3.

Step n. Terminate the algorithm when $\|\nabla f(x^k)\|$ is less than some prescribed tolerance.
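The steps above can be sketched as follows, assuming the Fletcher-Reeves weighting (ratio of squared gradient norms) and a restart with the steepest descent direction every $n$ iterations. The function name `fletcher_reeves`, the `line_min` callback, and the quadratic test problem (`Q`, `b`, starting point) are hypothetical and are not the worked example from the slides.

```python
import numpy as np

def fletcher_reeves(grad, line_min, x0, tol=1e-8, max_iter=100):
    """Conjugate gradient iteration; line_min(x, s) returns the step length along s."""
    x = np.asarray(x0, dtype=float)
    g = grad(x)
    s = -g                                   # first direction: steepest descent
    n = x.size
    directions = []
    for k in range(max_iter):
        if np.linalg.norm(g) < tol:          # gradient norm termination test
            break
        alpha = line_min(x, s)               # one-dimensional search along s
        x = x + alpha * s
        g_new = grad(x)
        directions.append(s)
        if (k + 1) % n == 0:
            s = -g_new                       # restart the cycle after n steps
        else:
            w = (g_new @ g_new) / (g @ g)    # weighting of the previous direction
            s = -g_new + w * s
        g = g_new
    return x, directions

# Hypothetical quadratic: f(x) = 0.5 x^T Q x - b^T x, with exact line search
Q = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])
grad = lambda x: Q @ x - b
exact_step = lambda x, s: -(grad(x) @ s) / (s @ Q @ s)

x_min, dirs = fletcher_reeves(grad, exact_step, [2.0, 2.0])
print(x_min, len(dirs), dirs[0] @ Q @ dirs[1])
```

On this two-variable quadratic the iteration stops after two directions, and those directions are conjugate relative to `Q` up to roundoff, which matches the $n$-stage property stated earlier.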

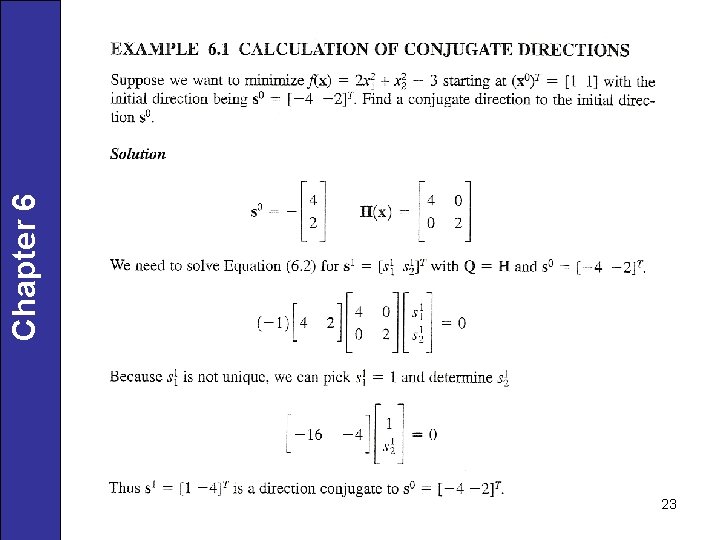


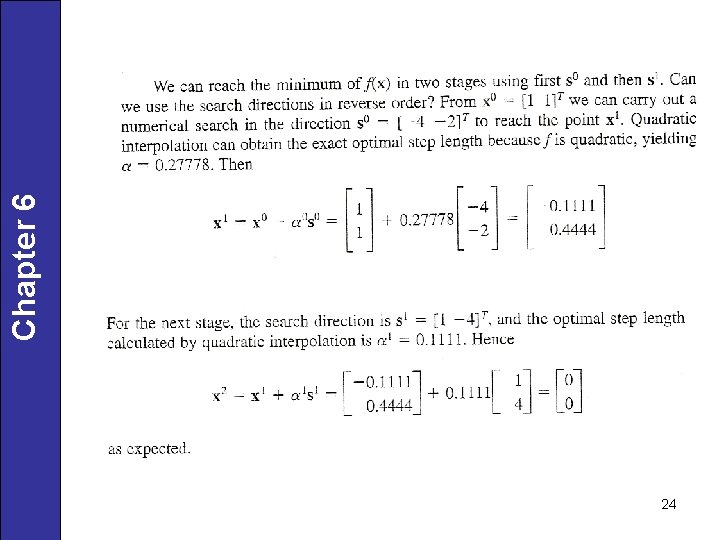


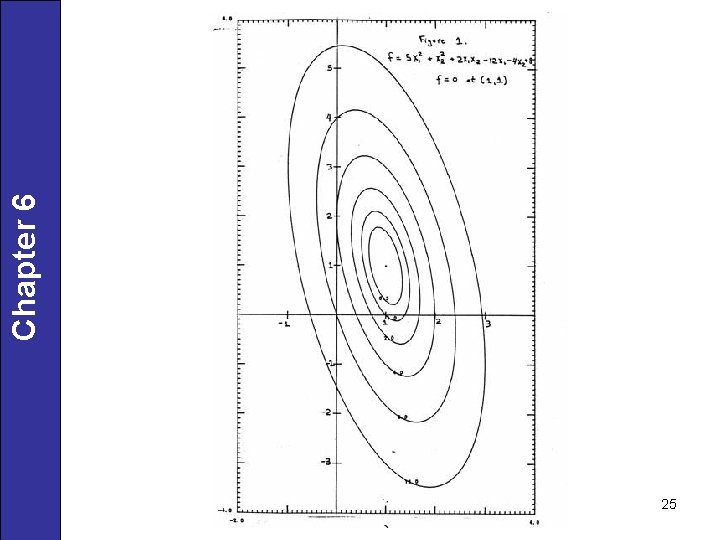


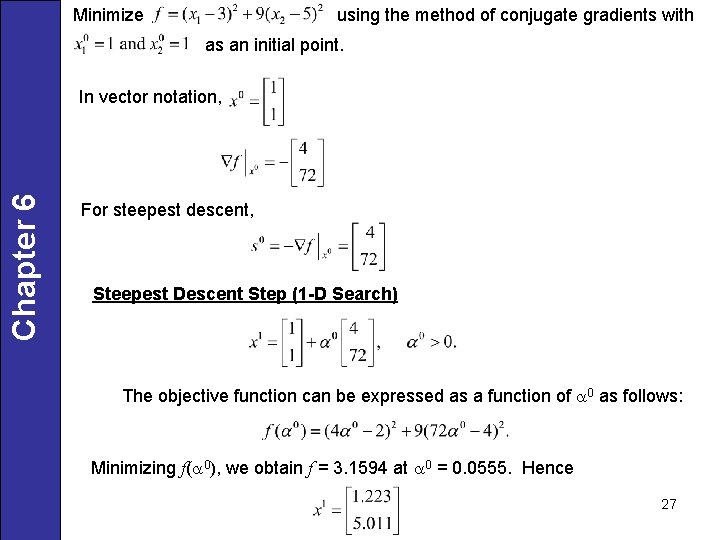

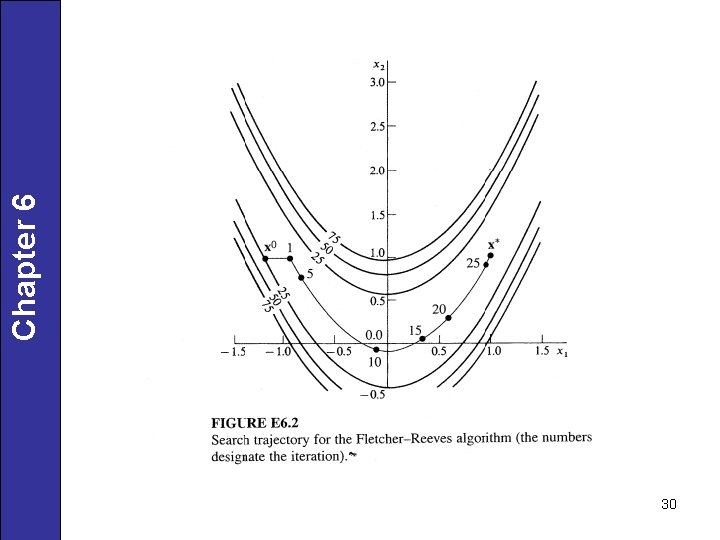

Minimize $f(x)$ using the method of conjugate gradients with $x^0$ as an initial point.

In vector notation,

For steepest descent,

Steepest Descent Step (1-D Search)

The objective function can be expressed as a function of $\alpha^0$ as follows:

Minimizing $f(\alpha^0)$, we obtain $f = 3.1594$ at $\alpha^0 = 0.0555$. Hence

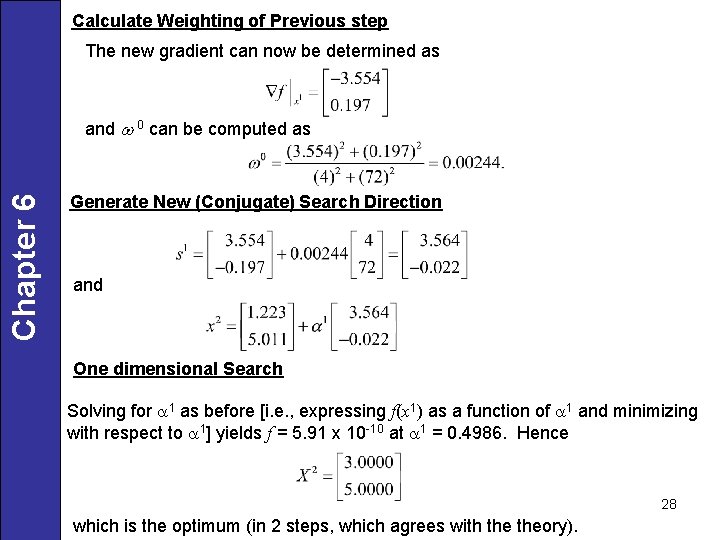

Calculate Weighting of Previous Step

The new gradient can now be determined as

and $\omega^0$ can be computed as

Generate New (Conjugate) Search Direction

and

One-Dimensional Search

Solving for $\alpha^1$ as before [i.e., expressing $f(x^1)$ as a function of $\alpha^1$ and minimizing with respect to $\alpha^1$] yields $f = 5.91 \times 10^{-10}$ at $\alpha^1 = 0.4986$. Hence

which is the optimum (in 2 steps, which agrees with theory).

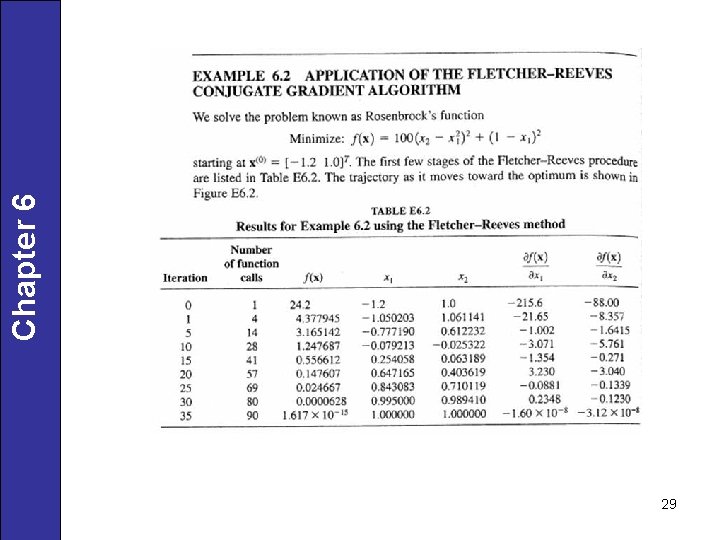


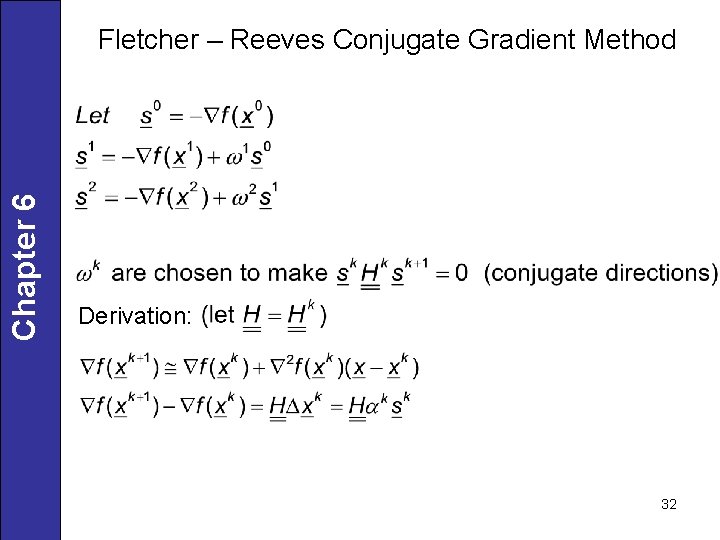

Fletcher-Reeves Conjugate Gradient Method

Derivation:

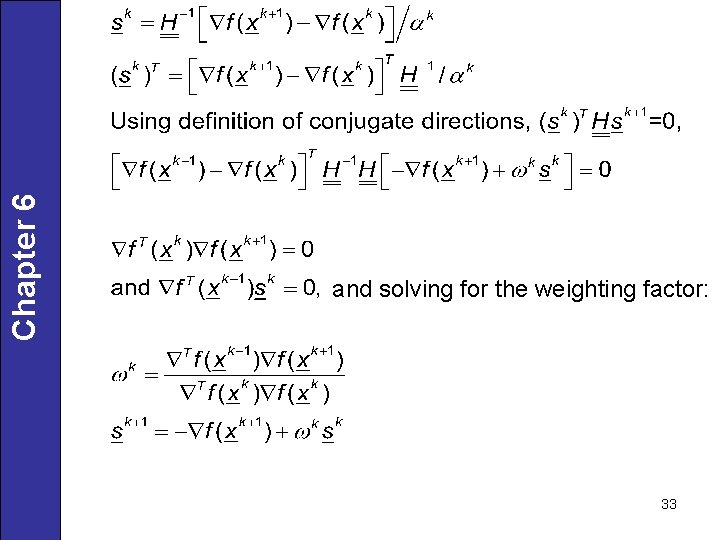

and solving for the weighting factor:

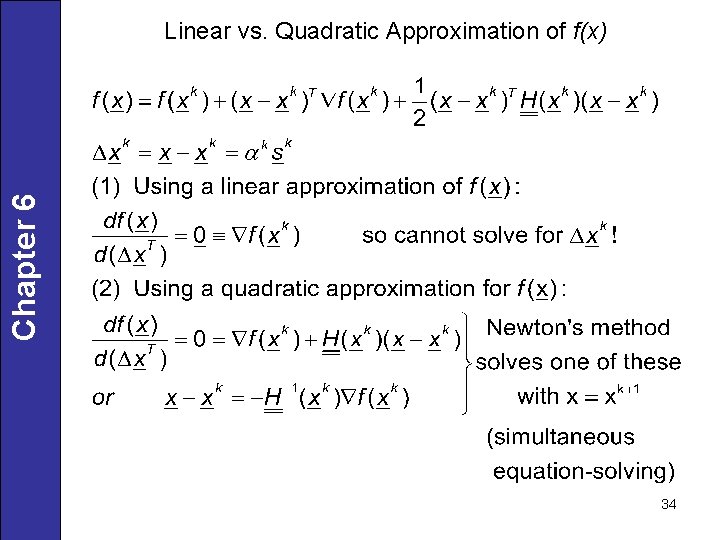

Linear vs. Quadratic Approximation of f(x)

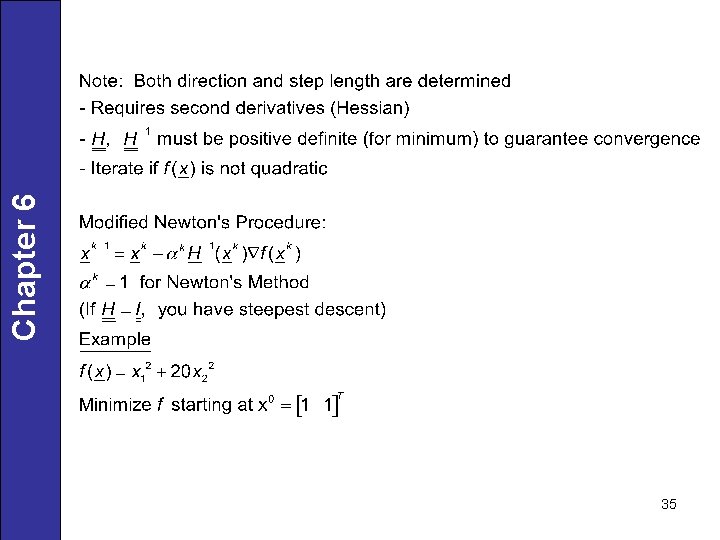


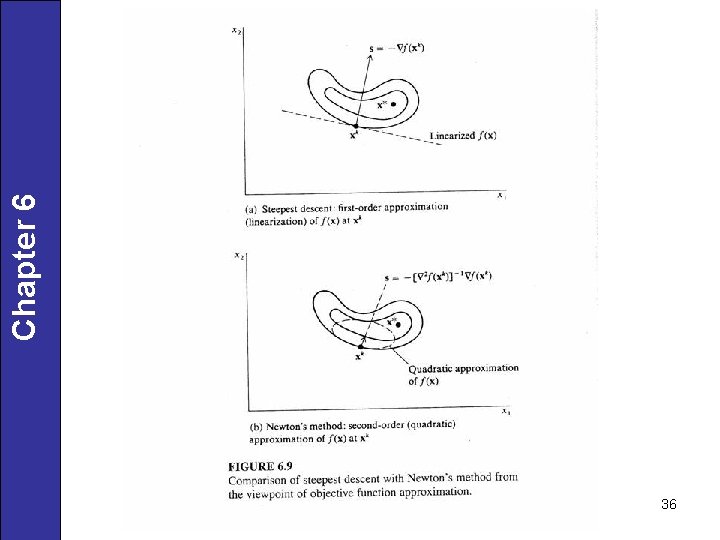


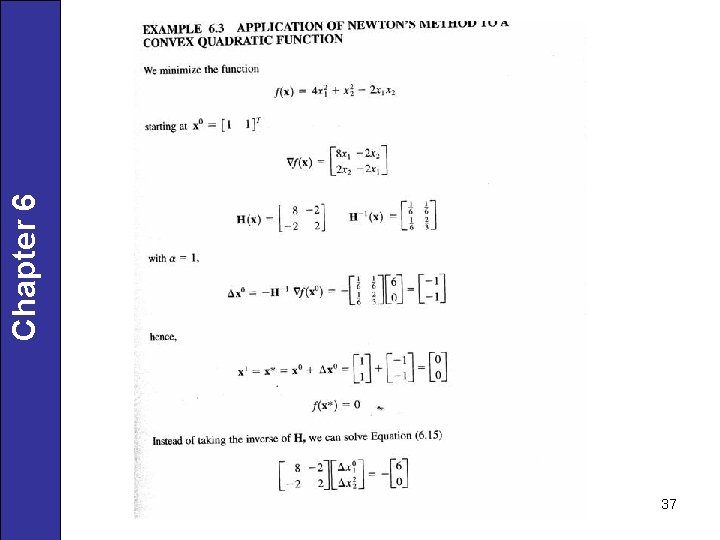


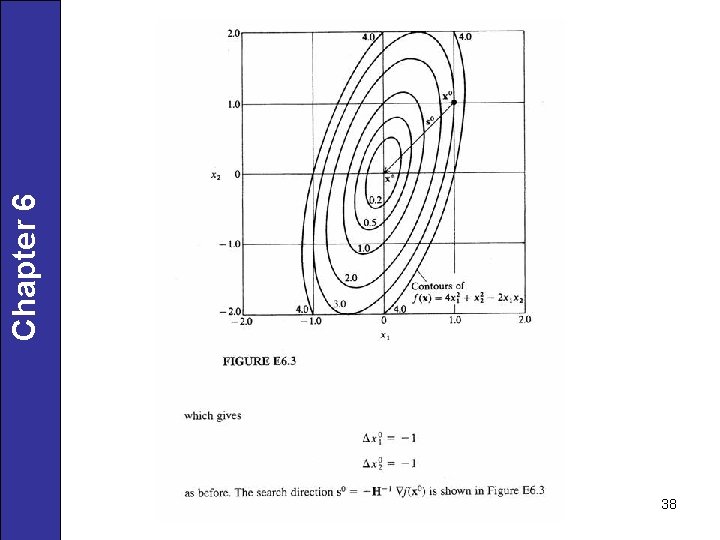


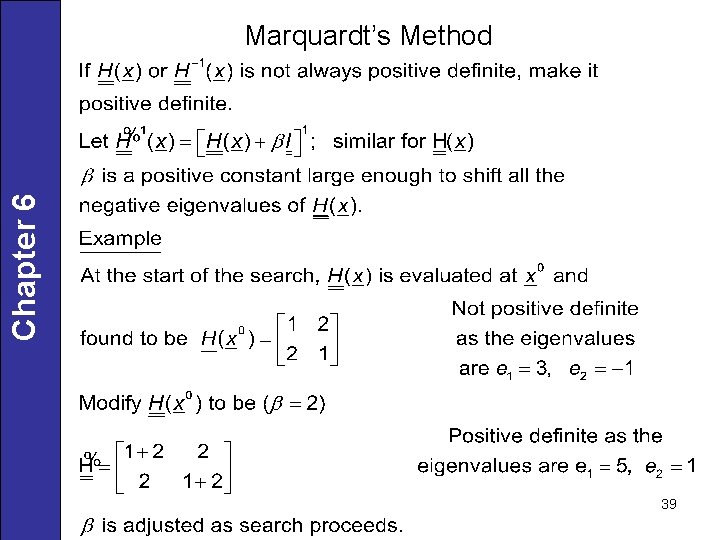

Marquardt’s Method

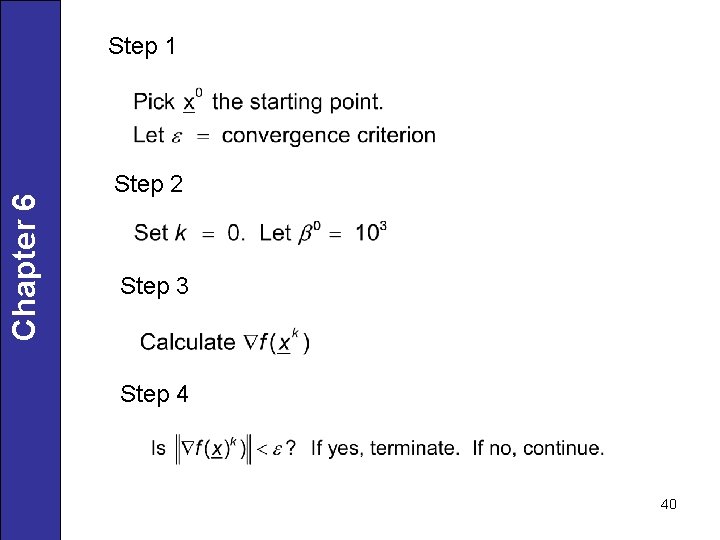

Step 1, Step 2, Step 3, Step 4

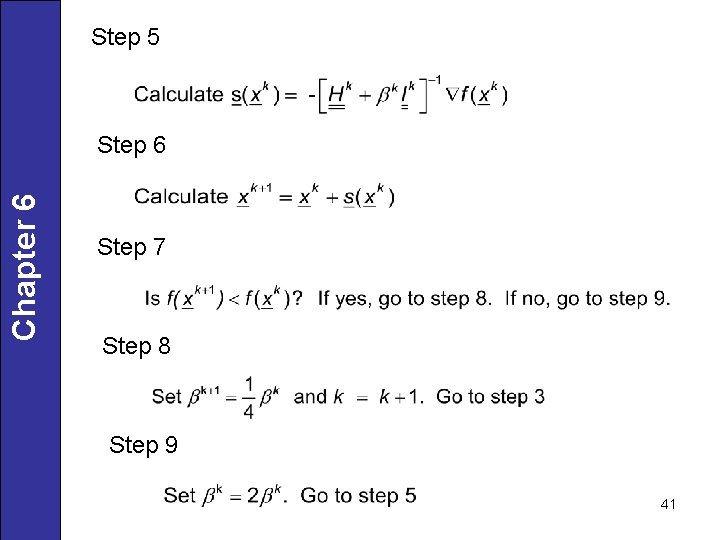

Step 5, Step 7, Step 8, Step 9

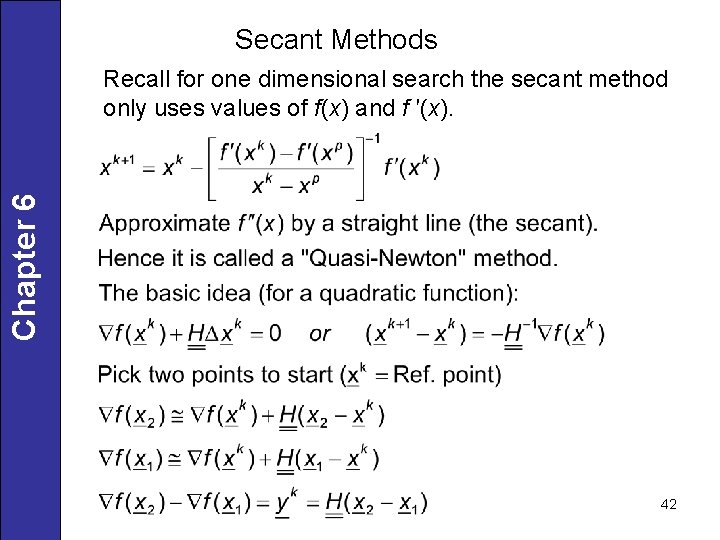

Secant Methods

Recall that for a one-dimensional search the secant method uses only values of $f(x)$ and $f'(x)$.
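As a reminder of the one-dimensional case, here is a minimal sketch that locates a stationary point by applying the secant formula to the slope $\phi'(\alpha)$, so no second derivative is needed. The function name `secant_minimize`, the test function $\phi(\alpha) = \alpha^4 - 8\alpha$, and the two starting points are assumptions for illustration.

```python
def secant_minimize(dphi, a0, a1, tol=1e-10, max_iter=50):
    """Find a zero of the slope dphi (a stationary point of phi) from two starting points."""
    d0, d1 = dphi(a0), dphi(a1)
    for _ in range(max_iter):
        if abs(d1) < tol or d1 == d0:        # converged, or secant slope undefined
            break
        # Secant step: the finite-difference slope of dphi stands in for the second derivative
        a2 = a1 - d1 * (a1 - a0) / (d1 - d0)
        a0, d0 = a1, d1
        a1, d1 = a2, dphi(a2)
    return a1

# Hypothetical test function phi(a) = a^4 - 8a, whose slope is 4a^3 - 8
dphi = lambda a: 4.0 * a ** 3 - 8.0
a_star = secant_minimize(dphi, 1.0, 2.0)
print(a_star)
```

The multivariable secant (quasi-Newton) methods generalize this idea by building a matrix approximation from differences of gradients.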

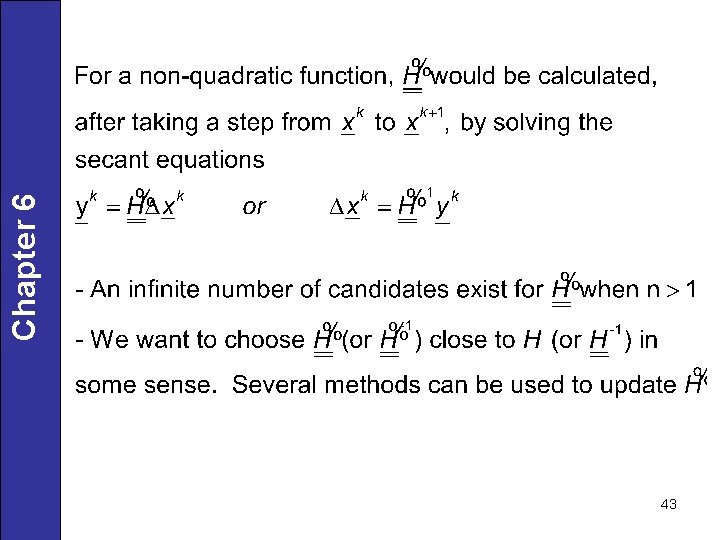


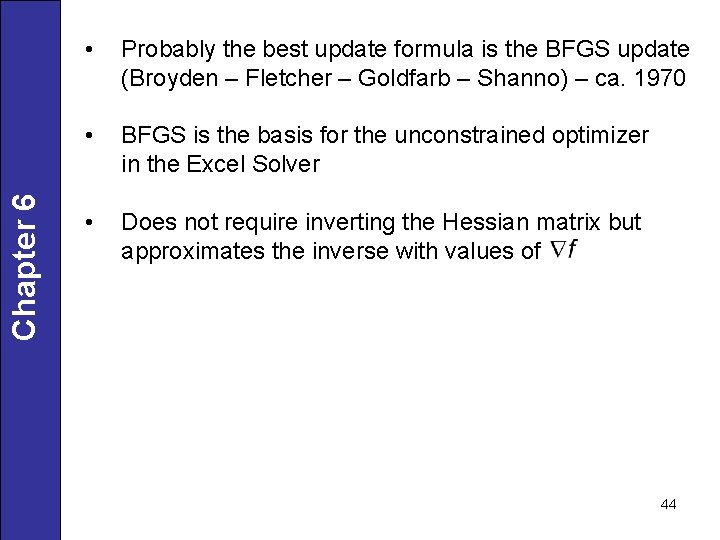

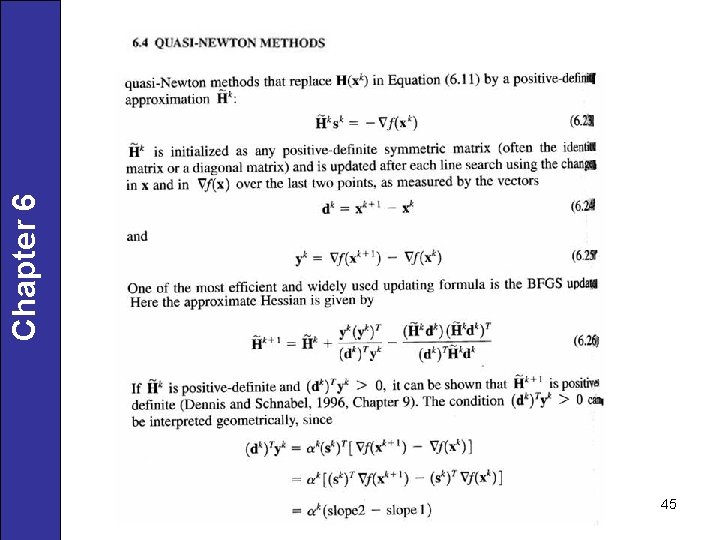

• Probably the best update formula is the BFGS update (Broyden-Fletcher-Goldfarb-Shanno), ca. 1970
• BFGS is the basis for the unconstrained optimizer in the Excel Solver
• Does not require inverting the Hessian matrix but approximates the inverse with values of $\nabla f$
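A minimal sketch of the idea, using the standard BFGS formula for updating an approximation of the inverse Hessian from gradient differences. The function names, the backtracking line search, its constants, and the quadratic test problem are assumptions for illustration; this is not the Excel Solver implementation.

```python
import numpy as np

def bfgs_inverse_update(H_inv, dx, dg):
    """Standard BFGS update of the inverse-Hessian approximation."""
    rho = 1.0 / (dg @ dx)
    V = np.eye(dx.size) - rho * np.outer(dx, dg)
    return V @ H_inv @ V.T + rho * np.outer(dx, dx)

def bfgs_minimize(f, grad, x0, tol=1e-8, max_iter=200):
    x = np.asarray(x0, dtype=float)
    H_inv = np.eye(x.size)                    # initial guess: identity matrix
    g = grad(x)
    for _ in range(max_iter):
        if np.linalg.norm(g) < tol:
            break
        s = -H_inv @ g                        # quasi-Newton search direction
        alpha = 1.0
        # Backtracking line search (inexact; assumed sufficient-decrease constant 1e-4)
        while f(x + alpha * s) > f(x) + 1e-4 * alpha * (g @ s) and alpha > 1e-12:
            alpha *= 0.5
        x_new = x + alpha * s
        g_new = grad(x_new)
        dx, dg = x_new - x, g_new - g
        if dg @ dx > 1e-12:                   # skip the update if curvature is not positive
            H_inv = bfgs_inverse_update(H_inv, dx, dg)
        x, g = x_new, g_new
    return x

# Hypothetical quadratic test problem: f(x) = 0.5 x^T Q x - b^T x
Q = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])
f = lambda x: 0.5 * x @ Q @ x - b @ x
grad = lambda x: Q @ x - b

x_bfgs = bfgs_minimize(f, grad, [2.0, 2.0])
print(x_bfgs)
```

Only gradient values enter the update, and no matrix is ever inverted: the approximation of the inverse is corrected directly at each iteration.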
