Chapter 5 Part 2: Variables

Describing Variables: Quantitative vs. Categorical

Quantitative variables vary in amount; categorical variables vary in kind or type.

Examples of quantitative variables (a “quantity” or “amount” of something): severity of depression, intelligence, speed of response, number of stressful life events per month.

Describing Variables: Quantitative vs. Categorical (continued)

Examples of categorical variables: profession category, gender.

Describing Variables: Continuous vs. Discrete

Quantitative variables can be continuous (not limited in values) or discrete (no intermediate values).

Continuous: infinitely small increments of measurement are possible, e.g., speed, loudness, body weight.

Real and apparent limits of continuous variables: continuous variables are quite often measured on a discontinuous basis, e.g., body weight, blood pressure, some test scores. What are the real versus apparent limits? Why does this distinction matter? (Example: pounds vs. kilograms (1 kg ≈ 2.2 lbs) vs. stone (1 stone = 14 lbs). Is 8 kg 17 or 18 lbs?)

Discrete: a fixed number of items or events, with no partial items or events, e.g., number of divorces, grade in school.
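The 8 kg question can be worked through numerically. A minimal Python sketch, assuming the slide's rounded factor of 2.2 lbs per kg (the exact factor is about 2.2046) and assuming the reading was recorded to the nearest whole kilogram, so “8 kg” stands for anything from 7.5 to 8.5 kg:

```python
LBS_PER_KG = 2.2  # assumption: the slide's rounded factor (exact is about 2.20462)

def kg_to_lbs(kg: float) -> float:
    """Convert kilograms to pounds using the rounded factor."""
    return kg * LBS_PER_KG

recorded_kg = 8
point_value = kg_to_lbs(recorded_kg)   # the single reported value, converted

# Assumption: "8 kg" was recorded to the nearest kg, so it covers 7.5 to 8.5 kg
low = kg_to_lbs(recorded_kg - 0.5)
high = kg_to_lbs(recorded_kg + 0.5)

print(f"{recorded_kg} kg is about {point_value:.1f} lbs")
print(f"the recorded value covers {low:.1f} to {high:.1f} lbs")
```

The single converted value, 17.6 lbs, is neither 17 nor 18, yet both 17 and 18 lbs fall inside the 16.5 to 18.7 lb range the recorded value actually covers. That gap between a reported value and the range it represents is one reason the distinction matters.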

Describing Variables: Continuous vs. Discrete (continued)

Continuous (not limited in values). Example: 1 to 100 points on a test.

Discrete (no intermediate values). Example: 1 = male, 2 = female; there is no 1.5.

Apparent limits: continuous variables are assumed to have infinitely small points that separate amounts or levels, e.g., IQ, temperature. One can technically have tiny increments of IQ, temperature, speed, etc. The apparent limit is an arbitrary “point” value imposed by researchers to estimate a measure: 1 IQ point, 1 degree Celsius, 1 mph. Technically, the apparent limits of a measure are ESTIMATES: “This amount is roughly a 1-degree or 1-IQ-point difference.”

Describing Variables: Real Limits

Discrete variables have no intermediate values, so real limits do not apply to them.

Real limits: the recorded score ± half the distance to the next score (e.g., a score of 4 has real limits of 3.5 to 4.5).

NOTE: apparent and real limits apply to continuous variables.
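The real-limits rule (score ± half the distance to the next score) can be written as a small function. A minimal Python sketch; the name `real_limits` and its `unit` parameter (the distance between adjacent recordable scores) are hypothetical, chosen for illustration:

```python
def real_limits(score: float, unit: float = 1.0) -> tuple[float, float]:
    """Return the (lower, upper) real limits of a recorded score.

    `unit` (hypothetical parameter) is the distance between adjacent
    recordable scores, e.g. 1 for whole IQ points or 0.5 for a
    thermometer read to the nearest half degree.
    """
    half = unit / 2
    return (score - half, score + half)

print(real_limits(4))          # (3.5, 4.5)
print(real_limits(37.0, 0.5))  # (36.75, 37.25)
```

The second call shows that real limits depend on how finely the scale is read: the coarser the recording unit, the wider the interval a single reported score stands for.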

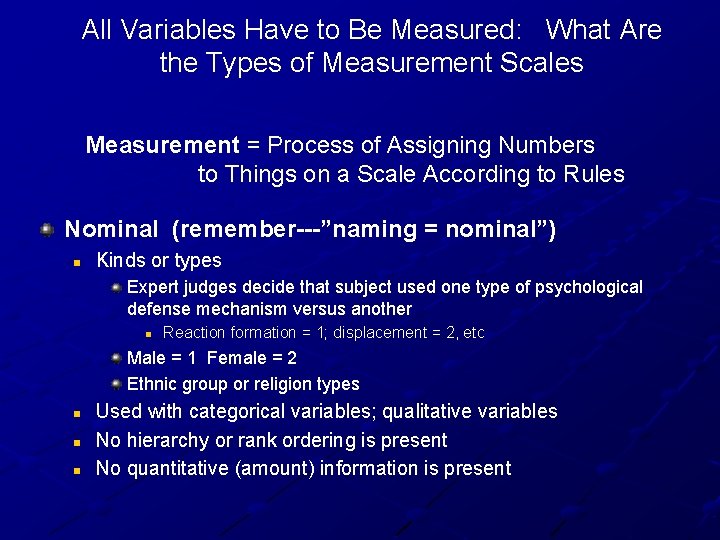

All Variables Have to Be Measured: What Are the Types of Measurement Scales?

Measurement = the process of assigning numbers to things on a scale according to rules.

Nominal (remember: “naming = nominal”): kinds or types. Examples: expert judges decide that a subject used one type of psychological defense mechanism versus another (reaction formation = 1, displacement = 2, etc.); male = 1, female = 2; ethnic group or religion types.

Nominal scales are used with categorical (qualitative) variables. No hierarchy or rank ordering is present, and no quantitative (amount) information is present.
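Because nominal codes are only labels, counting how often each category occurs (and reporting the most frequent one, the mode) is a meaningful summary, while averaging the codes is not. A minimal Python sketch using the slide's coding (reaction formation = 1, displacement = 2); the judge ratings are invented for illustration:

```python
from collections import Counter

# Slide's nominal coding; the numbers are names, not amounts
LABELS = {1: "reaction formation", 2: "displacement"}

# Hypothetical codes assigned by expert judges to six subjects
codes = [1, 2, 2, 1, 2, 2]

counts = Counter(codes)
mode_code, mode_freq = counts.most_common(1)[0]

print({LABELS[c]: n for c, n in counts.items()})
print("most frequent defense:", LABELS[mode_code])

# The mean of the codes can be computed, but it carries no meaning:
# there is no defense mechanism "two-thirds of the way to displacement".
mean_code = sum(codes) / len(codes)
```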

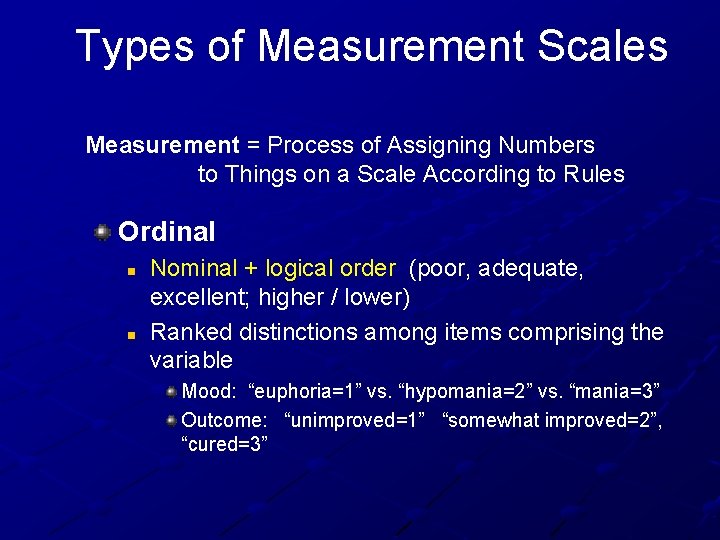

Types of Measurement Scales: Ordinal

Ordinal = nominal + logical order (poor, adequate, excellent; higher/lower): ranked distinctions among the items comprising the variable.

Examples: mood: “euphoria = 1” vs. “hypomania = 2” vs. “mania = 3”; outcome: “unimproved = 1,” “somewhat improved = 2,” “cured = 3.”
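With ordinal codes the order is meaningful, but the distances between ranks are not guaranteed to be equal, so the median is a common summary. A minimal Python sketch using the slide's outcome coding; the ratings are invented for illustration:

```python
import statistics

# Slide's ordinal outcome coding
OUTCOME = {1: "unimproved", 2: "somewhat improved", 3: "cured"}

# Hypothetical outcome ratings for seven patients
ratings = [1, 2, 2, 3, 2, 1, 3]

# The median only relies on order: sort the ranks and take the middle one
median_code = statistics.median(ratings)
print("median outcome:", OUTCOME[median_code])

# Comparing ranks is meaningful ("cured" is above "somewhat improved"),
# but the step from 1 to 2 need not equal the step from 2 to 3.
```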

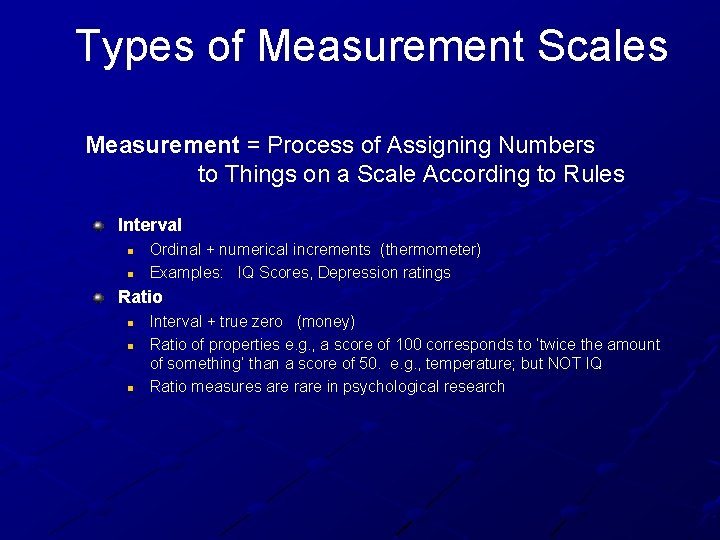

Types of Measurement Scales: Interval and Ratio

Interval = ordinal + equal numerical increments (e.g., a thermometer). Examples: IQ scores, depression ratings.

Ratio = interval + a true zero (e.g., money). Ratios of values are meaningful: a score of 100 corresponds to “twice the amount of something” as a score of 50. This holds for temperature on the Kelvin scale (but not Celsius or Fahrenheit, whose zero points are arbitrary), but NOT for IQ. Ratio measures are rare in psychological research.
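The interval/ratio difference can be checked numerically: a ratio statement is meaningful only if it survives a change of units. A minimal Python sketch using the standard temperature conversion formulas and arbitrary dollar amounts:

```python
def c_to_f(c: float) -> float:
    return c * 9 / 5 + 32      # Celsius -> Fahrenheit: rescales AND shifts the zero

def c_to_k(c: float) -> float:
    return c + 273.15          # Celsius -> Kelvin: zero means no thermal energy

warm, cool = 20.0, 10.0

ratio_c = warm / cool                   # 2.0 in Celsius: "twice as hot"?
ratio_f = c_to_f(warm) / c_to_f(cool)   # 68 / 50 = 1.36: the claim vanishes
ratio_k = c_to_k(warm) / c_to_k(cool)   # about 1.035 on a true-zero scale

# Money has a true zero, so a ratio survives a change of units
ratio_dollars = 100 / 50
ratio_cents = (100 * 100) / (50 * 100)

print(ratio_c, ratio_f, round(ratio_k, 3), ratio_dollars, ratio_cents)
```

Because Celsius and Fahrenheit place zero at arbitrary points, “20 °C is twice 10 °C” changes when the units change; the money ratio does not, which is what a true zero buys.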

Types of Measurement Scales: Why Do They Matter?

Scientists need to think about the type of measurement because it affects the VALIDITY of how they construe the phenomenon.

Measures from experiments are subjected to statistical tests (e.g., were the results probably not due to chance?), and different types of tests may be best suited to different types of measurement.

Judging Measurements: Error Variance

Error variance (also called random error): changes in the value of the DV that are not associated with the IV.

Systematic error: error due to consistent bias.

See videos (Norm A. and measurement error).

Random Error

Random error is unsystematic error. Examples: different levels of concentration when reading a tape measure; random wind gusts affecting cyclists' speed on trials; measuring out grams of food in an eating study.

Random Error (continued)

Random errors primarily affect individual scores, not the average scores of large groups. Random errors (under-measurement and over-measurement) tend to balance one another out across a large group of measures: they “average out” to approximately zero, so they tend not to systematically distort the outcomes of studies.
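A small simulation illustrates the claim. A minimal Python sketch, assuming each measurement equals a true score plus normally distributed random error with mean 0; the true score of 70 and error SD of 2 are arbitrary choices:

```python
import random
import statistics

random.seed(1)  # fixed seed so the run is repeatable

TRUE_SCORE = 70.0   # arbitrary true value being measured
ERROR_SD = 2.0      # spread of the random (unsystematic) error
N = 10_000          # a large group of measurements

measured = [TRUE_SCORE + random.gauss(0, ERROR_SD) for _ in range(N)]
errors = [m - TRUE_SCORE for m in measured]

# Individual scores can be off by several points...
largest_single_error = max(abs(e) for e in errors)
# ...but over- and under-measurements largely cancel in the average
mean_error = statistics.fmean(errors)

print(f"largest single-score error: {largest_single_error:.2f}")
print(f"mean error across {N} scores: {mean_error:.3f}")
```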

Bias and Random Error in Measurement

Bias: changes in how accurately something is measured that are caused by the researcher, whether intentional or unintentional.

Biased experimental conditions (example on the next slide).

Example: Biased Experimental Conditions

A researcher was interested primarily in how binge-prone women at risk for bulimia preferentially memorized a series of food and nonfood picture slides.

Some test groups were mostly women, but some included both undergraduate women and men so that all could earn extra credit. Research assistants knew the purpose of the study and gave less clear, less enthusiastic instructions to groups when men were present.

Solution to biased conditions: STANDARDIZE the conditions better.

Bias and Random Error in Measurement (continued)

Sources of bias: biased experimental conditions; bias in creating participants’ expectations.

Biased Conditions and Extraneous Variables

Note that when experimenters introduce bias into experimental conditions, they have introduced an ‘extraneous variable.’

If the bias is introduced for only one experimental group (e.g., women but not men; therapy but not therapy + medication), then the extraneous variable is a confound.

Examples: Bias in Creating Participants’ Expectations

A researcher inadvertently tells some subjects that a certain step in the experimental process should improve their performance.

On days when the “real” treatment is administered, a research assistant protects their clothing by wearing a white doctor’s lab coat, but does not “need” to wear the coat on “control” group days because there is no clothing to protect on those days. Is this extraneous variable also a confound?

Solutions: keep research assistants “blind” to procedures, and standardize procedures.

Bias and Random Error in Measurement (continued)

Sources of bias: biased experimental conditions; bias in creating participants’ expectations; bias and “demand characteristics.”

Example: Bias and Demand Characteristics

Orne (1962): participants are eager to cooperate and help researchers find the “right answer”; they produce responses they believe will support the researcher’s hypothesis.

Example: Bias and Demand Characteristics (continued)

An experimenter wants to examine overeating tendencies in people who severely restrict their food intake. All participants complete a series of questionnaires about their tendencies to binge or overeat five minutes before being asked to “consume what for you is a normal meal” in a laboratory eating situation. What is the problem?

Solution: have participants complete the questionnaires several weeks before the experiment, and disguise the eating questions by embedding them in a large personality inventory.

Bias and Random Error in Measurement (continued)

Bias affects individual scores. Unlike random error, it does not tend to average out to “0” when large groups of individuals are measured, because bias tends to lean in one direction (excess or deficit in measurement). Bias therefore affects the outcome of an experiment and the conclusions that can be drawn.
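A small simulation shows why bias, unlike random error, does not average out. A minimal Python sketch, assuming random error is normal with mean 0 and assuming a researcher-induced bias of +1.5 points on every score; the true score, error SD, and bias size are all arbitrary:

```python
import random
import statistics

random.seed(2)  # fixed seed so the run is repeatable

TRUE_SCORE = 70.0
ERROR_SD = 2.0
BIAS = 1.5          # assumed systematic over-measurement on every score
N = 10_000

random_only = [TRUE_SCORE + random.gauss(0, ERROR_SD) for _ in range(N)]
biased = [TRUE_SCORE + BIAS + random.gauss(0, ERROR_SD) for _ in range(N)]

# Random error cancels out in the group mean; bias leans one way and stays
mean_err_random = statistics.fmean(random_only) - TRUE_SCORE
mean_err_biased = statistics.fmean(biased) - TRUE_SCORE

print(f"mean error, random error only: {mean_err_random:.3f}")
print(f"mean error, with bias:         {mean_err_biased:.3f}")
```

Increasing `N` shrinks the random-only mean error toward 0, but the biased mean error stays near `BIAS` no matter how many people are measured.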

Judging Measurements: Validity

Validity: the measurement tests what it is supposed to test.

Construct validity: the test measures the idea it is supposed to, and nothing else.

Face validity: the test seems superficially valid. Example items: “The things that normally make me happy no longer give me any joy.” “I am sad or blue every day, or nearly so.” “If I eat a sweet roll, my body will turn it to fat.” “Once I start eating, I cannot seem to easily stop.”

Judging Measurements: Validity (continued)

Content validity: the test encompasses all aspects of the construct, e.g., cognitive, emotional, physiological, behavioral. Examples:

IQ: vocabulary, concept comparisons, visual-spatial problem-solving, breadth of general knowledge, symbol manipulation.

Anxiety: physiological fight/flight symptoms, cognitive symptoms, emotional reactivity.

Judging Measurements: Validity (continued)

Content validity: the test covers the whole idea, not just part of it.

Criterion validity: the test correlates with other tests of the same idea, e.g., comparing a new test to a known “gold standard.” Forms include concurrent and predictive validity.

See video on sensitivity/specificity (UW Measurement Error).

Judging Measurements: Reliability

Reliability: the measurement gives the same result on different occasions.

Test-retest reliability: the same test gives the same score on different occasions.

Internal consistency: each part of a test measures the same idea.
