Chapter 4 The Processor 1 Multiple Exceptions Pipelining

- Slides: 22

Chapter 4: The Processor

Multiple Exceptions
§ Pipelining overlaps multiple instructions
  § Could have multiple exceptions at once
§ Simple approach: deal with exception from earliest instruction
  § Flush subsequent instructions
  § “Precise” exceptions: always associating the proper exception with the correct instruction
  § Imprecise exceptions: interrupts or exceptions in pipelined computers that are not associated with the exact instruction that caused them
§ In complex pipelines
  § Multiple instructions issued per cycle
  § Out-of-order completion
  § Maintaining precise exceptions is difficult!
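The "earliest instruction first" rule can be sketched in a few lines of Python. This is a minimal illustration, not a hardware description: the `InFlight` record, its `seq` field (program order, smaller means earlier), and the `handle_exceptions` helper are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InFlight:
    seq: int                          # hypothetical: position in program order
    name: str
    exception: Optional[str] = None   # None means no exception was raised


def handle_exceptions(pipeline):
    """Return (faulting instruction, instructions to complete, instructions to flush)."""
    in_order = sorted(pipeline, key=lambda i: i.seq)
    faulting = [i for i in in_order if i.exception is not None]
    if not faulting:
        return None, in_order, []
    first = faulting[0]               # earliest instruction in program order wins
    complete = [i for i in in_order if i.seq < first.seq]
    flushed = [i for i in in_order if i.seq > first.seq]
    return first, complete, flushed


# Two exceptions are pending in the same cycle; only the earlier one is handled.
pipeline = [
    InFlight(4, "ADD", "overflow"),
    InFlight(3, "LDUR", "page fault"),
    InFlight(2, "SUB"),
    InFlight(5, "STUR"),
]
first, complete, flushed = handle_exceptions(pipeline)
print(first.name, [i.name for i in complete], [i.name for i in flushed])
# LDUR ['SUB'] ['ADD', 'STUR']
```

The later `ADD` exception is discarded along with the flush; it will be raised again if `ADD` is re-executed after the handler returns.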

Imprecise Exceptions
§ Just stop pipeline and save state
  § Including exception cause(s)
§ Let the handler work out
  § Which instruction(s) had exceptions
  § Which to complete or flush
    § May require “manual” completion
§ Simplifies hardware, but more complex handler software
§ Not feasible for complex multiple-issue out-of-order pipelines

Section 4.10: Parallelism via Instructions
Instruction-Level Parallelism (ILP)
§ Pipelining: executing multiple instructions in parallel
§ To increase ILP
  § Deeper pipeline
    § Less work per stage, so a shorter clock cycle
  § Multiple issue
    § Replicate pipeline stages to form multiple pipelines
    § Start multiple instructions per clock cycle
    § CPI < 1, so use Instructions Per Cycle (IPC)
    § E.g., 4 GHz 4-way multiple-issue
      § 16 BIPS, peak CPI = 0.25, peak IPC = 4
    § But dependencies reduce this in practice
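The peak figures on this slide follow directly from the clock rate and the issue width. A small sketch of the arithmetic (the function name `peak_throughput` is made up for this example):

```python
def peak_throughput(clock_hz, issue_width):
    """Best-case numbers for a multiple-issue pipeline with no stalls."""
    peak_ipc = issue_width              # instructions started per cycle
    peak_cpi = 1 / issue_width          # cycles per instruction
    peak_ips = clock_hz * issue_width   # instructions per second
    return peak_ipc, peak_cpi, peak_ips


ipc, cpi, ips = peak_throughput(4e9, 4)   # 4 GHz, 4-way multiple issue
print(ipc, cpi, ips / 1e9)                # 4 0.25 16.0  (16 billion instructions/s)
```

These are upper bounds only; dependencies and hazards keep real code below them.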

Multiple Issue
§ Static multiple issue
  § Compiler groups instructions to be issued together
  § Packages them into “issue slots”
  § Compiler detects and avoids hazards
§ Dynamic multiple issue
  § CPU examines instruction stream and chooses instructions to issue each cycle
  § Compiler can help by reordering instructions
  § CPU resolves hazards using advanced techniques at runtime

Speculation
§ “Guess” what to do with an instruction
  § Start operation as soon as possible
  § Check whether guess was right
    § If so, complete the operation
    § If not, roll back and do the right thing
§ Common to static and dynamic multiple issue
§ Examples
  § Speculate on branch outcome
    § Instructions after the branch could be executed earlier
    § Roll back if path taken is different
  § Speculate on load
    § Assume that a store preceding a load does not refer to the same address, which allows the load to be executed before the store
    § Roll back if location is updated
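The guess, check, and roll-back pattern for a speculative load can be sketched as follows. This is a toy model under stated assumptions: memory is a plain dictionary, and `run_with_load_speculation` is a hypothetical helper, not a description of any real load/store unit.

```python
def run_with_load_speculation(memory, store_addr, store_value, load_addr):
    """Execute a load ahead of an earlier store, then verify the guess.

    Returns (value the load finally sees, whether a roll-back was needed).
    """
    speculative = memory[load_addr]     # guess: the store does not alias the load
    memory[store_addr] = store_value    # the earlier store now completes
    if store_addr == load_addr:         # check: the location was updated
        return memory[load_addr], True  # roll back and redo the load
    return speculative, False           # guess was right: keep the early result


print(run_with_load_speculation({0: 10, 8: 20}, 8, 99, 0))   # (10, False)
print(run_with_load_speculation({0: 10, 8: 20}, 0, 99, 0))   # (99, True)
```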

Compiler/Hardware Speculation
§ Compiler can reorder instructions
  § e.g., move an instruction across a branch or a store across a load
  § Insert additional instructions that check the accuracy of the speculation, and include “fix-up” instructions to recover from an incorrect guess
§ Hardware can look ahead for instructions to execute
  § Buffer results until it determines they are actually needed
  § Flush buffers on incorrect speculation
  § Buffers written to registers or memory if speculation is correct

Speculation and Exceptions
§ What if an exception occurs on a speculatively executed instruction?
  § e.g., speculative load before null-pointer check, i.e., the address it uses is not within bounds when the speculation is incorrect
  § Compiler will ignore such exceptions until they really should occur
  § Hardware buffers the exceptions until it is known that the instruction causing them is no longer speculative
§ Static speculation
  § Can add ISA support for deferring exceptions
§ Dynamic speculation
  § Can buffer exceptions until instruction completion (which may not occur)

Static Multiple Issue
§ Compiler groups instructions into “issue packets”
  § Group of instructions that can be issued in a single cycle
  § Determined by pipeline resources required
§ Most static issue processors also rely on the compiler to take on some responsibility for handling data and control hazards
  § The compiler’s responsibilities may include static branch prediction and code scheduling to reduce or prevent all hazards
§ Think of an issue packet as a very long instruction
  § Specifies multiple concurrent operations
  § Very Long Instruction Word (VLIW)

Scheduling Static Multiple Issue
§ Compiler must remove some/all hazards
  § Reorder instructions into issue packets
  § No dependencies within a packet
  § Possibly some dependencies between packets
    § Varies between ISAs; compiler must know!
  § Pad with nop if necessary

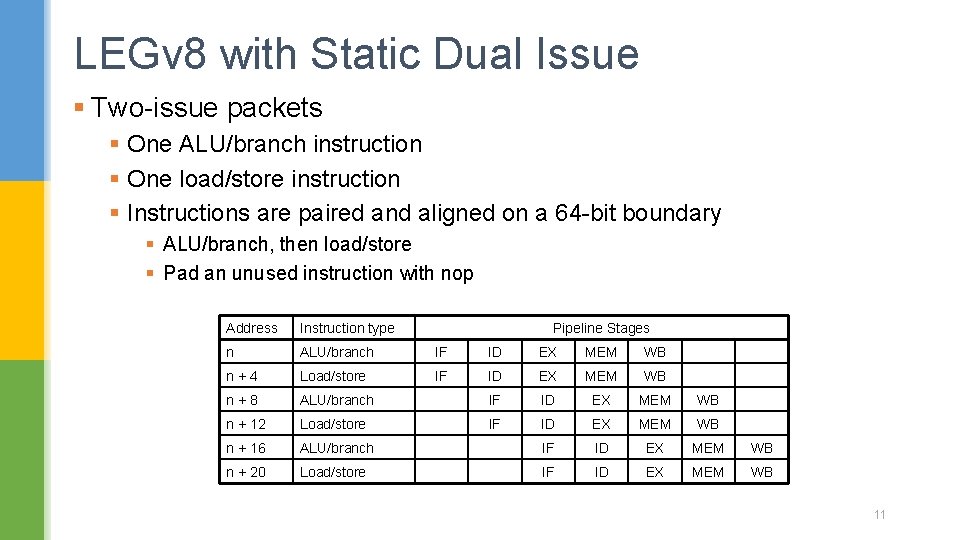

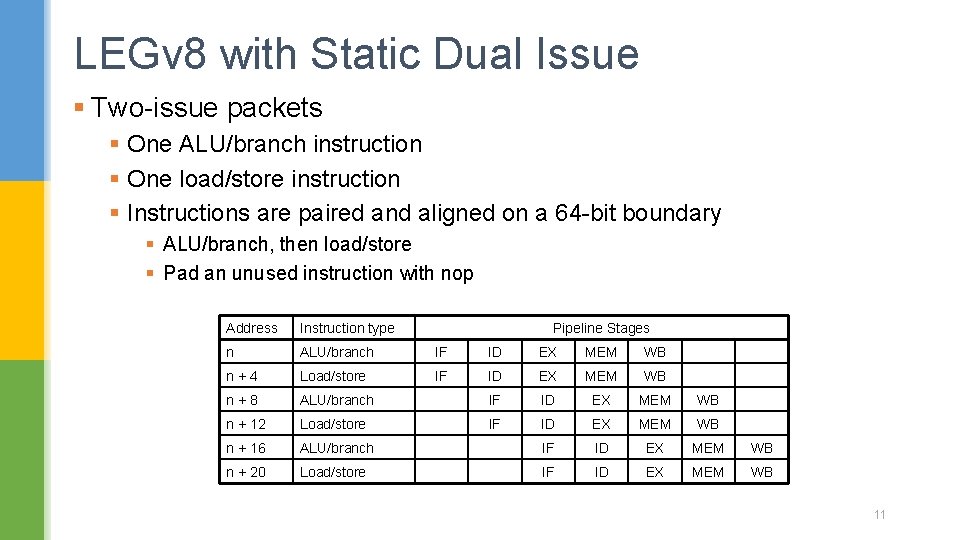

LEGv8 with Static Dual Issue
§ Two-issue packets
  § One ALU/branch instruction
  § One load/store instruction
  § Instructions are paired and aligned on a 64-bit boundary
    § ALU/branch, then load/store
  § Pad an unused instruction with nop

| Address | Instruction type | Pipeline stages |
|---|---|---|
| n | ALU/branch | IF ID EX MEM WB |
| n + 4 | Load/store | IF ID EX MEM WB |
| n + 8 | ALU/branch | IF ID EX MEM WB |
| n + 12 | Load/store | IF ID EX MEM WB |
| n + 16 | ALU/branch | IF ID EX MEM WB |
| n + 20 | Load/store | IF ID EX MEM WB |
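The packet layout above (ALU/branch slot first, load/store slot second, 8-byte alignment, nop padding) can be sketched as a small address calculator. The helper `layout_packets` is hypothetical, and the 4-byte instruction size is taken from the n, n + 4, n + 8 addresses in the table.

```python
def layout_packets(packets, base=0):
    """Assign addresses to (alu_or_branch, load_or_store) packets.

    An empty slot is given as None and is padded with "nop".
    """
    if base % 8 != 0:
        raise ValueError("packets must start on a 64-bit (8-byte) boundary")
    rows = []
    for n, (alu_or_branch, load_or_store) in enumerate(packets):
        addr = base + 8 * n                      # one packet = two 4-byte instructions
        rows.append((addr, "ALU/branch", alu_or_branch or "nop"))
        rows.append((addr + 4, "Load/store", load_or_store or "nop"))
    return rows


for row in layout_packets([(None, "LDUR X0, [X20, #0]"), ("CMP X20, X22", None)]):
    print(row)
# (0, 'ALU/branch', 'nop')
# (4, 'Load/store', 'LDUR X0, [X20, #0]')
# (8, 'ALU/branch', 'CMP X20, X22')
# (12, 'Load/store', 'nop')
```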

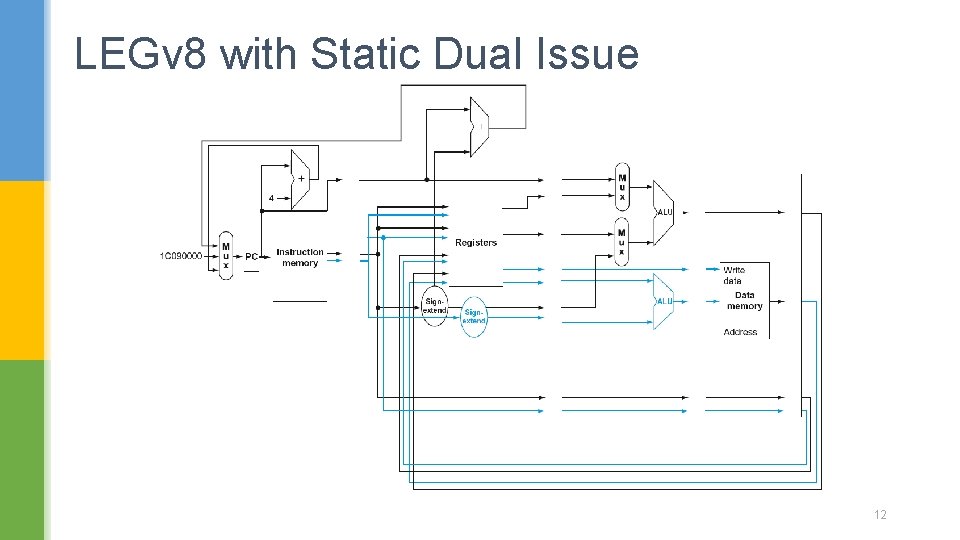

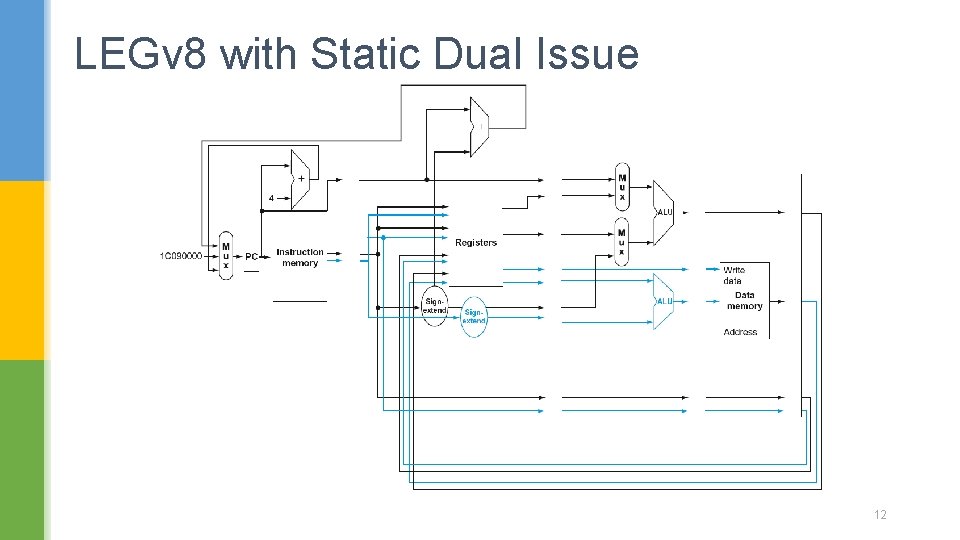

LEGv8 with Static Dual Issue

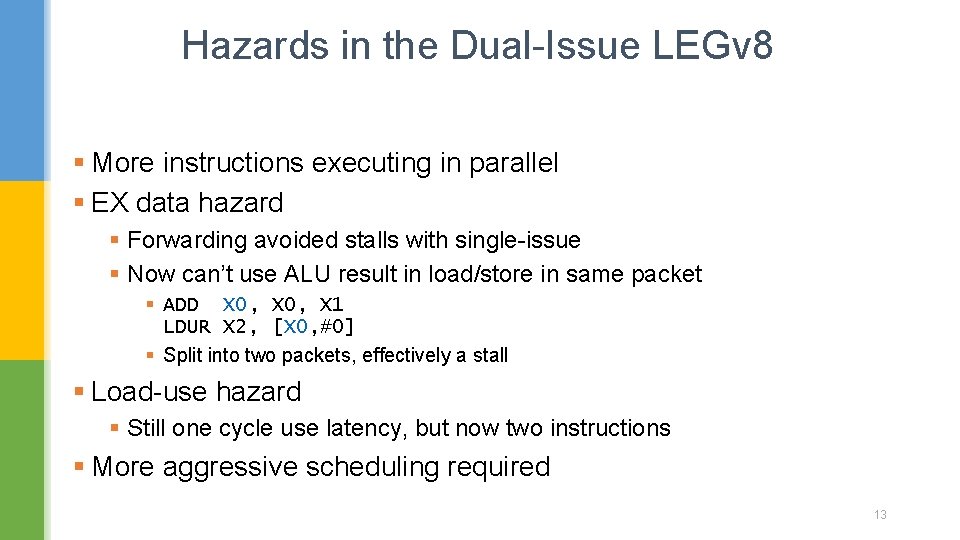

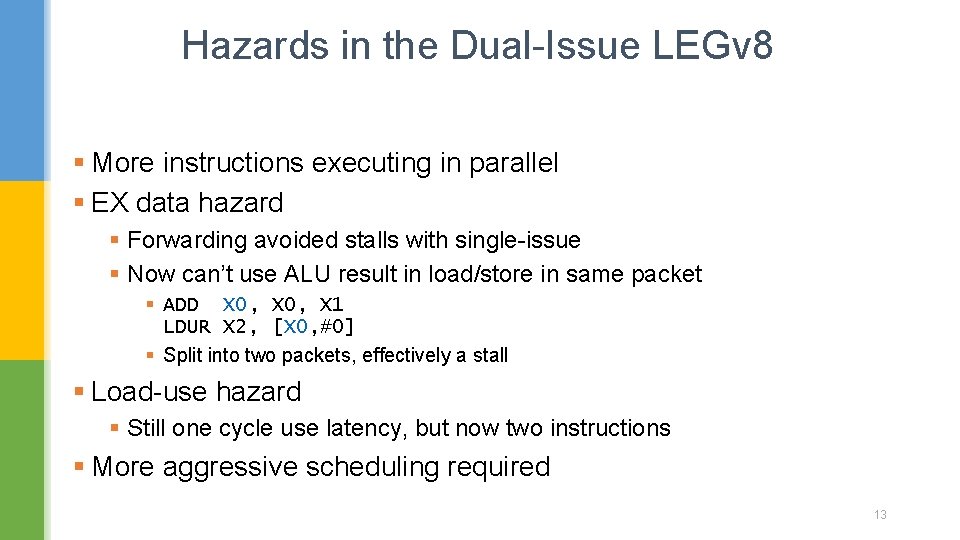

Hazards in the Dual-Issue LEGv8
§ More instructions executing in parallel
§ EX data hazard
  § Forwarding avoided stalls with single-issue
  § Now can’t use ALU result in load/store in same packet
    § ADD X0, X0, X1
    § LDUR X2, [X0, #0]
    § Split into two packets, effectively a stall
§ Load-use hazard
  § Still one cycle use latency, but now two instructions
§ More aggressive scheduling required
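The "split into two packets" rule can be sketched as a check on one candidate pair. Everything here is illustrative: instructions are modelled as hypothetical `(text, destination, sources)` tuples, and the ADD is written with three operands as an assumption about the intended instruction.

```python
def issue_pair(alu, mem):
    """Return the packets needed to issue an ALU instruction and a load/store.

    Each instruction is a (text, dest_register, source_registers) tuple.
    If the load/store reads the ALU result, the pair cannot share a packet.
    """
    alu_text, alu_dest, _ = alu
    mem_text, _, mem_sources = mem
    if alu_dest in mem_sources:
        # EX data hazard inside a packet: split, which costs one cycle
        return [(alu_text, "nop"), ("nop", mem_text)]
    return [(alu_text, mem_text)]


add = ("ADD X0, X0, X1", "X0", ["X0", "X1"])
dependent_load = ("LDUR X2, [X0, #0]", "X2", ["X0"])
independent_load = ("LDUR X2, [X3, #0]", "X2", ["X3"])

print(len(issue_pair(add, dependent_load)))     # 2
print(len(issue_pair(add, independent_load)))   # 1
```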

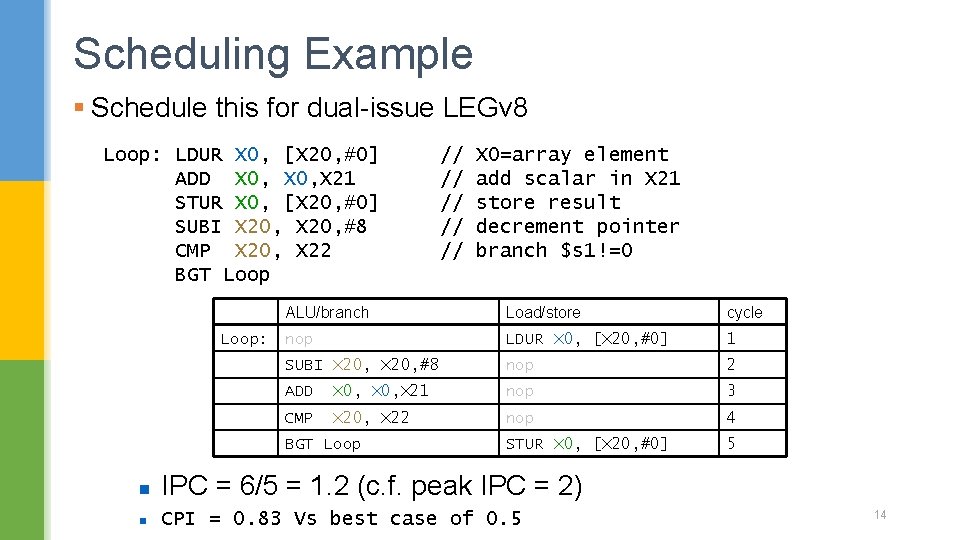

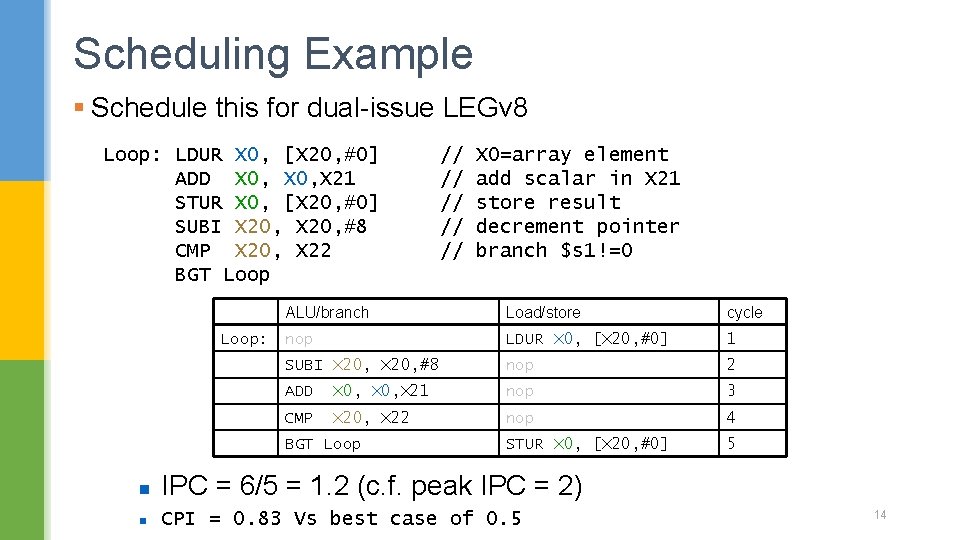

Scheduling Example
§ Schedule this for dual-issue LEGv8

    Loop: LDUR X0, [X20, #0]    // X0 = array element
          ADD  X0, X0, X21      // add scalar in X21
          STUR X0, [X20, #0]    // store result
          SUBI X20, X20, #8     // decrement pointer
          CMP  X20, X22
          BGT  Loop             // branch if X20 > X22

| ALU/branch | Load/store | Cycle |
|---|---|---|
| nop | LDUR X0, [X20, #0] | 1 |
| SUBI X20, X20, #8 | nop | 2 |
| ADD X0, X0, X21 | nop | 3 |
| CMP X20, X22 | nop | 4 |
| BGT Loop | STUR X0, [X20, #8] | 5 |

§ The STUR offset becomes #8 because SUBI has already decremented X20
§ IPC = 6/5 = 1.2 (cf. peak IPC = 2)
§ CPI = 5/6 = 0.83 vs. best case of 0.5
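The IPC and CPI figures can be recomputed by counting the non-nop slots in the schedule. A short sketch, with the schedule reduced to mnemonics and `ipc_and_cpi` a name invented for this example:

```python
def ipc_and_cpi(schedule):
    """schedule: one (alu_or_branch, load_or_store) pair per cycle."""
    cycles = len(schedule)
    instructions = sum(slot != "nop" for packet in schedule for slot in packet)
    return instructions / cycles, cycles / instructions


schedule = [
    ("nop", "LDUR"),
    ("SUBI", "nop"),
    ("ADD", "nop"),
    ("CMP", "nop"),
    ("BGT", "STUR"),
]
ipc, cpi = ipc_and_cpi(schedule)
print(f"IPC = {ipc:.2f}, CPI = {cpi:.2f}")   # IPC = 1.20, CPI = 0.83
```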

Loop Unrolling
§ Replicate loop body to expose more parallelism
  § Reduces loop-control overhead
§ Use different registers per replication
  § Called “register renaming”
  § Avoid loop-carried “anti-dependencies”
    § Store followed by a load of the same register
    § Aka “name dependence”
      § Reuse of a register name
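At source level, unrolling with renamed temporaries looks roughly like the sketch below. It uses Python rather than LEGv8 purely for illustration; the function names are made up, and the unrolled version assumes the array length is a multiple of 4.

```python
def add_scalar(a, s):
    """Original loop: one element per iteration, one temporary reused each time."""
    i = len(a) - 1
    while i >= 0:
        t0 = a[i]
        a[i] = t0 + s
        i -= 1


def add_scalar_unrolled4(a, s):
    """Unrolled by 4: one loop-control update per four elements."""
    if len(a) % 4 != 0:
        raise ValueError("this sketch assumes len(a) is a multiple of 4")
    i = len(a) - 1
    while i >= 0:
        # Four distinct temporaries, so the four copies do not fight over one name
        t0, t1, t2, t3 = a[i], a[i - 1], a[i - 2], a[i - 3]
        a[i], a[i - 1], a[i - 2], a[i - 3] = t0 + s, t1 + s, t2 + s, t3 + s
        i -= 4


x = list(range(8))
y = list(range(8))
add_scalar(x, 5)
add_scalar_unrolled4(y, 5)
print(x == y)   # True
```

With a single shared temporary, each copy of the body would have to wait for the previous copy's store before overwriting the value; separate names remove that ordering constraint.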

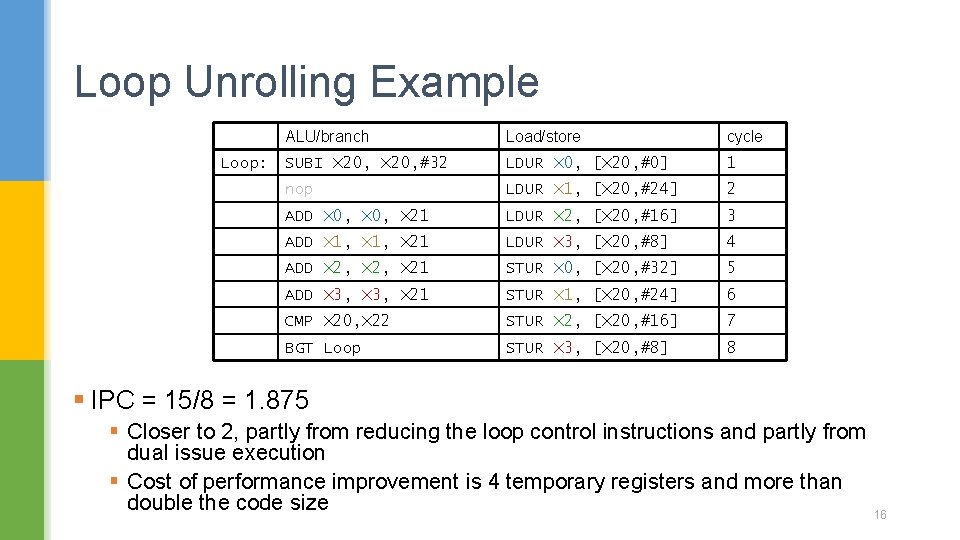

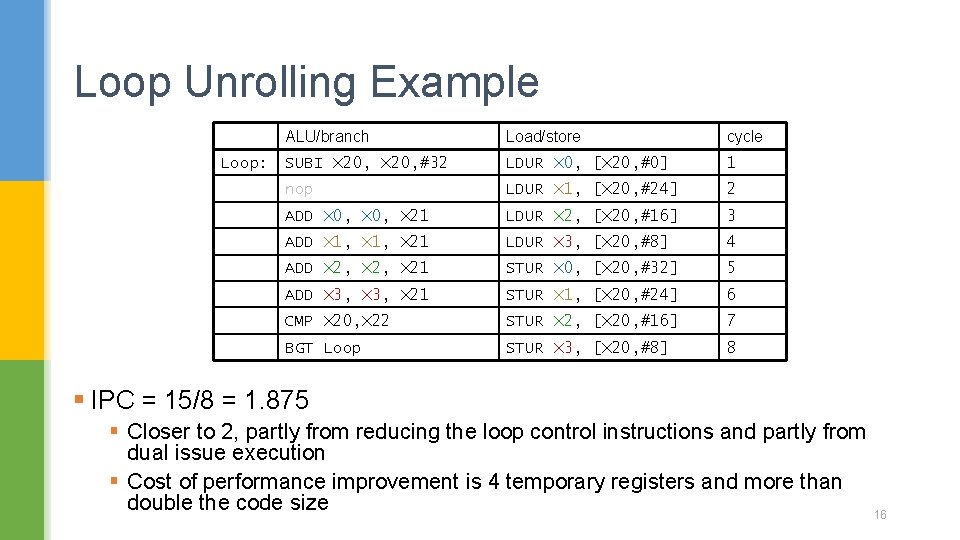

Loop Unrolling Example

| ALU/branch | Load/store | Cycle |
|---|---|---|
| Loop: SUBI X20, X20, #32 | LDUR X0, [X20, #0] | 1 |
| nop | LDUR X1, [X20, #24] | 2 |
| ADD X0, X0, X21 | LDUR X2, [X20, #16] | 3 |
| ADD X1, X1, X21 | LDUR X3, [X20, #8] | 4 |
| ADD X2, X2, X21 | STUR X0, [X20, #32] | 5 |
| ADD X3, X3, X21 | STUR X1, [X20, #24] | 6 |
| CMP X20, X22 | STUR X2, [X20, #16] | 7 |
| BGT Loop | STUR X3, [X20, #8] | 8 |

§ IPC = 15/8 = 1.875
  § Closer to 2, partly from reducing the loop control instructions and partly from dual issue execution
  § Cost of the performance improvement is 4 temporary registers and more than double the code size

Dynamic Multiple Issue
§ “Superscalar” processors
§ CPU decides whether to issue 0, 1, 2, … instructions each cycle
  § Avoiding structural and data hazards
§ Avoids the need for compiler scheduling
  § Though it may still help to achieve good performance by moving dependences apart and thereby increasing the issue rate
  § Code semantics ensured by the CPU
§ Differences between superscalar and VLIW processors
  § In a superscalar, the code, whether scheduled or not, is guaranteed by the hardware to execute correctly
  § Compiled code will always run correctly independent of the issue rate or pipeline structure of the processor
  § Not the case in some VLIW designs
    § Either recompilation is required across different processor models, or
    § The code runs correctly but performance is poor

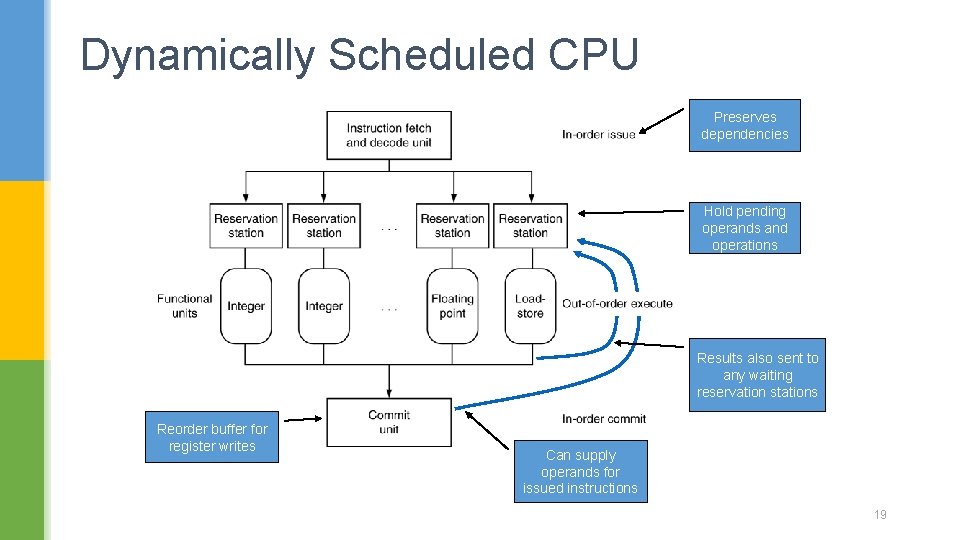

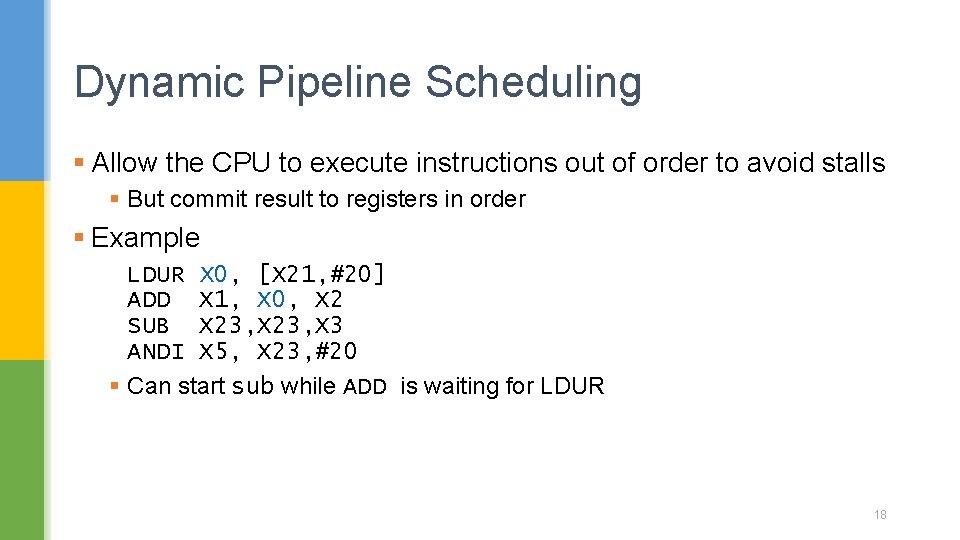

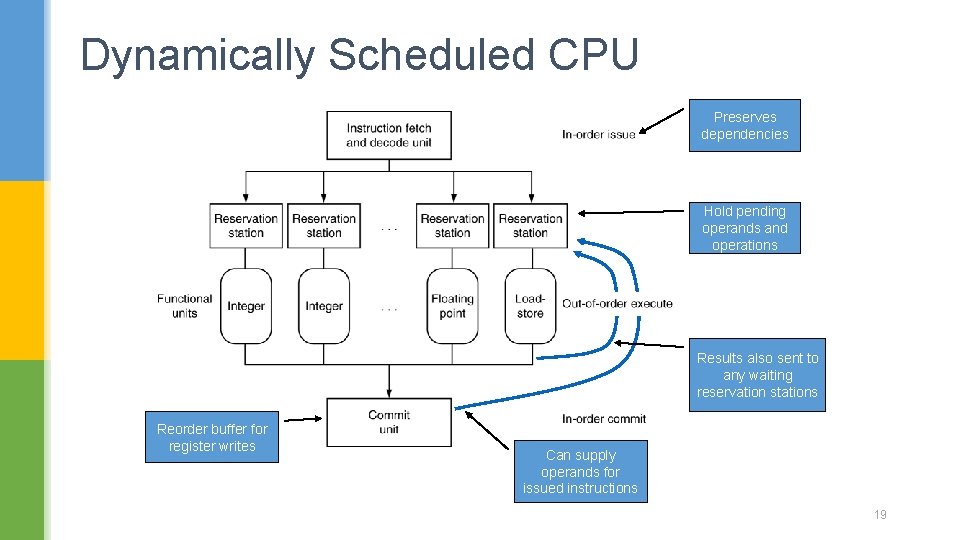

Dynamic Pipeline Scheduling
§ Allow the CPU to execute instructions out of order to avoid stalls
  § But commit result to registers in order
§ Example

    LDUR X0, [X21, #20]
    ADD  X1, X0, X2
    SUB  X23, X23, X3
    ANDI X5, X23, #20

  § Can start SUB while ADD is waiting for LDUR
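Why SUB can start while ADD waits can be shown with a very small readiness check. This is a sketch under simplifying assumptions: it tracks only true (read-after-write) dependences, instructions are hypothetical `(op, dest, sources)` tuples, and SUB is assumed to read X23 and X3.

```python
program = [
    ("LDUR", "X0", ["X21"]),
    ("ADD", "X1", ["X0", "X2"]),
    ("SUB", "X23", ["X23", "X3"]),
    ("ANDI", "X5", ["X23"]),
]


def can_start(program, index, finished):
    """True if no earlier, unfinished instruction produces one of our sources."""
    _, _, sources = program[index]
    for earlier in range(index):
        _, dest, _ = program[earlier]
        if earlier not in finished and dest in sources:
            return False
    return True


# LDUR is still waiting on memory, so nothing has finished yet.
ready = [program[i][0] for i in range(1, 4) if can_start(program, i, finished=set())]
print(ready)   # ['SUB']
```

ADD is blocked on X0 from LDUR, and ANDI is blocked on X23 from SUB; once SUB finishes, ANDI becomes ready even if LDUR is still outstanding.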

Dynamically Scheduled CPU
§ Preserves dependencies
§ Hold pending operands and operations
§ Results also sent to any waiting reservation stations
§ Reorder buffer for register writes
§ Can supply operands for issued instructions

Register Renaming
§ Reservation stations and reorder buffer effectively provide register renaming
§ On issue, an instruction is copied to a reservation station
  § If operand is available in register file or reorder buffer
    § Copied to reservation station
    § No longer required in the register; can be overwritten
  § If operand is not in the register file or reorder buffer
    § It will be provided to the reservation station by a functional unit
    § Register update may not be required
§ Out-of-order execution
  § Instructions can be executed in a different order than they were fetched
  § The processor executes the instructions in some order that preserves the data flow order of the program
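The effect of renaming can be illustrated with an explicit rename table. Note that this is a swapped-in technique for illustration: the slide describes renaming as a side effect of reservation stations and the reorder buffer, whereas the sketch below uses a hypothetical map from architectural names to fresh names `P0`, `P1`, and so on.

```python
from itertools import count


def rename(program):
    """Give every destination a fresh name; sources read the latest mapping.

    program: list of (op, dest_or_None, sources) tuples.
    """
    fresh = (f"P{n}" for n in count())
    mapping = {}
    renamed = []
    for op, dest, sources in program:
        new_sources = []
        for reg in sources:
            if reg not in mapping:          # first use of an incoming value
                mapping[reg] = next(fresh)
            new_sources.append(mapping[reg])
        new_dest = None
        if dest is not None:
            mapping[dest] = next(fresh)     # a new name removes name dependences
            new_dest = mapping[dest]
        renamed.append((op, new_dest, new_sources))
    return renamed


program = [
    ("ADD", "X1", ["X0", "X2"]),    # reads the old X0
    ("LDUR", "X0", ["X20"]),        # overwrites X0: anti-dependence on ADD
    ("SUB", "X3", ["X0", "X4"]),    # true dependence on LDUR
]
for line in rename(program):
    print(line)
# ('ADD', 'P2', ['P0', 'P1'])
# ('LDUR', 'P4', ['P3'])
# ('SUB', 'P6', ['P4', 'P5'])
```

After renaming, LDUR writes `P4` while ADD reads `P0`, so the two no longer conflict; the true dependence from LDUR to SUB is preserved through `P4`.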

In-order commit
§ The instruction fetch and decode unit issues instructions in order
§ The commit unit writes the results to registers and memory in program fetch order
§ The functional units are free to initiate execution whenever the data they need are available
§ Today, all dynamically scheduled pipelines use in-order commit
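In-order commit on top of out-of-order completion can be sketched with a queue. The `ReorderBuffer` class below is a hypothetical toy, assuming each entry only needs a name and a "done" flag; a real reorder buffer also holds the result value and destination.

```python
from collections import deque


class ReorderBuffer:
    def __init__(self):
        self.entries = deque()          # kept in program (issue) order

    def issue(self, name):
        entry = {"name": name, "done": False}
        self.entries.append(entry)
        return entry

    def commit(self):
        """Retire finished instructions, but only from the head of the queue."""
        committed = []
        while self.entries and self.entries[0]["done"]:
            committed.append(self.entries.popleft()["name"])
        return committed


rob = ReorderBuffer()
ldur, add, sub = rob.issue("LDUR"), rob.issue("ADD"), rob.issue("SUB")

sub["done"] = True      # SUB finishes first, out of order
print(rob.commit())     # []  (LDUR and ADD are still ahead of it)
ldur["done"] = True
print(rob.commit())     # ['LDUR']
add["done"] = True
print(rob.commit())     # ['ADD', 'SUB']
```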

Speculation
§ Predict branch and continue fetch and issue on the predicted path
  § Don’t commit until branch outcome determined
§ Load speculation
  § Avoid load and cache miss delay
    § Predict the effective address
    § Predict loaded value
    § Load before completing outstanding stores
    § Bypass stored values to load unit
  § Don’t commit load until speculation cleared
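"Bypass stored values to load unit" can be sketched as a lookup in a buffer of stores that have not yet reached memory. This is a simplified model: `load_with_bypass` is a hypothetical helper, the store buffer is a list of `(address, value)` pairs in program order, and every pending store address is assumed to be known already.

```python
def load_with_bypass(addr, memory, store_buffer):
    """Return the value a load should see, preferring the youngest pending store."""
    for store_addr, value in reversed(store_buffer):
        if store_addr == addr:
            return value                # forwarded from the store buffer
    return memory[addr]                 # no pending store to this address


memory = {0: 1, 8: 2}
store_buffer = [(8, 5), (8, 7)]         # two outstanding stores to address 8

print(load_with_bypass(8, memory, store_buffer))   # 7
print(load_with_bypass(0, memory, store_buffer))   # 1
```

When a store address is still unknown, the load has to either wait or speculate that there is no match, which is the case where the commit must be held until the speculation is cleared.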