Chapter 3 Sample Statistics

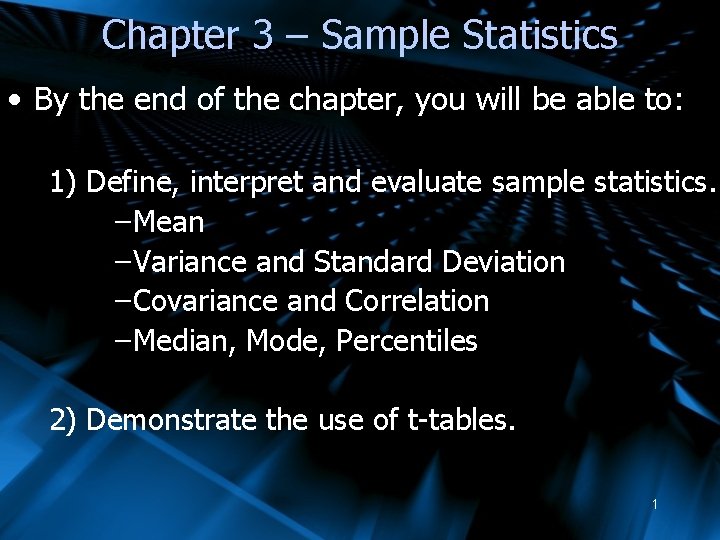

Chapter 3 – Sample Statistics

By the end of the chapter, you will be able to:

1) Define, interpret and evaluate sample statistics:
   - Mean
   - Variance and Standard Deviation
   - Covariance and Correlation
   - Median, Mode, Percentiles
2) Demonstrate the use of t-tables.

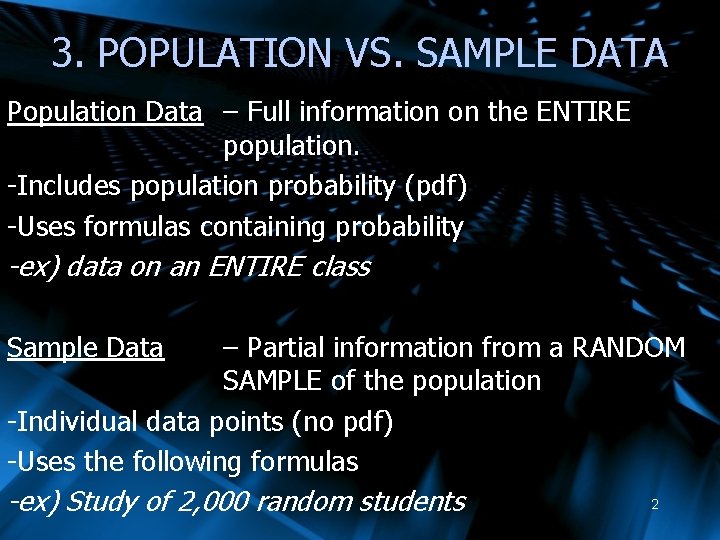

3. POPULATION VS. SAMPLE DATA

Population Data – Full information on the ENTIRE population.
- Includes the population probability density function (pdf)
- Uses formulas containing probability
- ex) data on an ENTIRE class

Sample Data – Partial information from a RANDOM SAMPLE of the population.
- Individual data points (no pdf)
- Uses the following formulas
- ex) Study of 2,000 random students

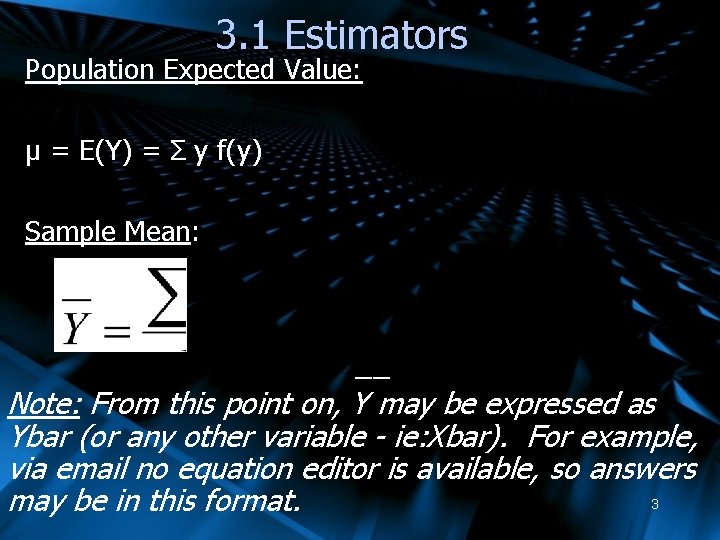

3.1 Estimators

Population Expected Value: $\mu = E(Y) = \sum y \, f(y)$

Sample Mean: $\bar{Y} = \frac{1}{n} \sum_{i=1}^{n} Y_i$

Note: From this point on, $\bar{Y}$ may be written as Ybar (and likewise for any other variable, e.g. Xbar). For example, no equation editor is available via email, so answers may be in this format.

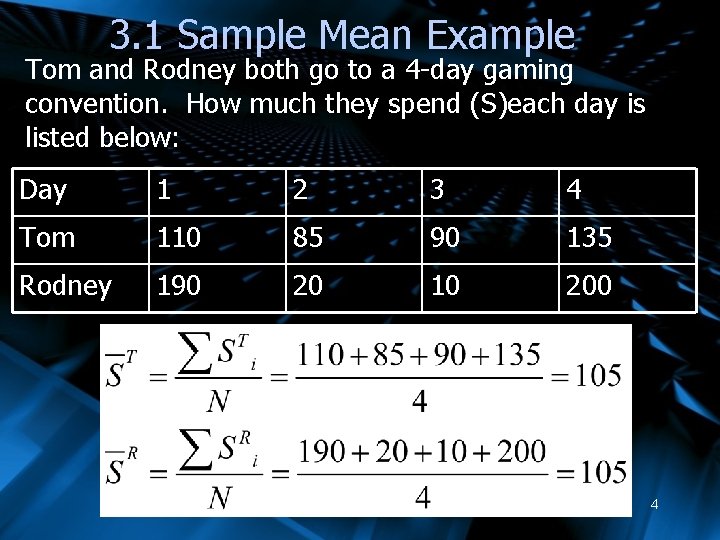

3.1 Sample Mean Example

Tom and Rodney both go to a 4-day gaming convention. How much they spend (S) each day is listed below:

| Day    | 1   | 2  | 3  | 4   |
|--------|-----|----|----|-----|
| Tom    | 110 | 85 | 90 | 135 |
| Rodney | 190 | 20 | 10 | 200 |
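The sample means for this example can be checked with a short Python sketch. The function and variable names are illustrative only and do not come from the slides.

```python
# Daily spending from the gaming convention example.
tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def sample_mean(values):
    """Sample mean: the sum of the observations divided by the sample size."""
    return sum(values) / len(values)


tom_mean = sample_mean(tom)
rodney_mean = sample_mean(rodney)
print(tom_mean, rodney_mean)  # 105.0 105.0
```

Both totals are 420 over 4 days, so both means are 105.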

![3.2 Estimators: population variance and sample variance](http://slidetodoc.com/presentation_image_h2/5c8281bf4acd3054d3250b41e83bd9d9/image-5.jpg)

3.2 Estimators

Population Variance: $\sigma_Y^2 = Var(Y) = \sum [y - E(Y)]^2 f(y)$

Sample Variance: $S_Y^2 = \frac{1}{n-1} \sum_{i=1}^{n} (Y_i - \bar{Y})^2$

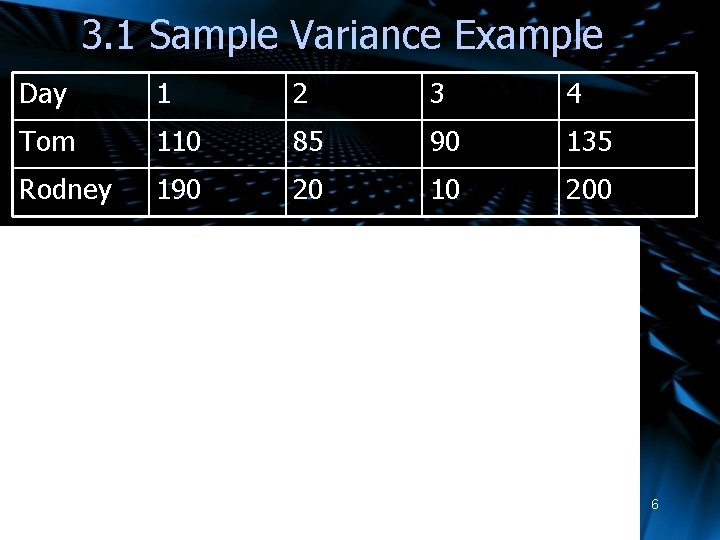

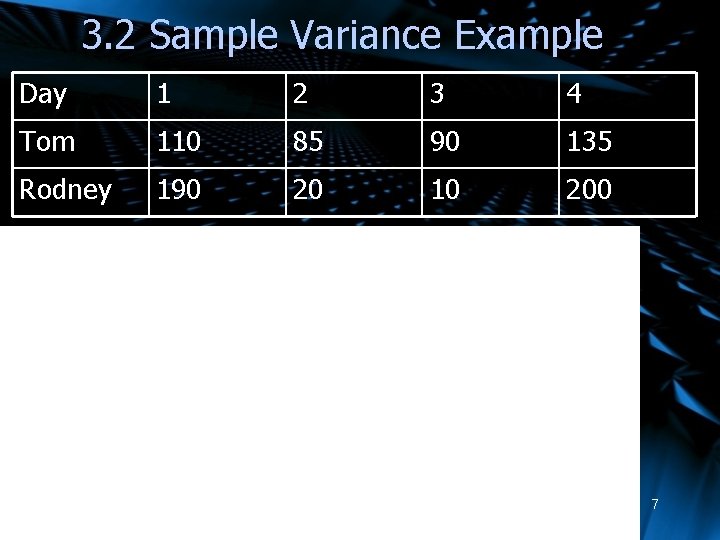

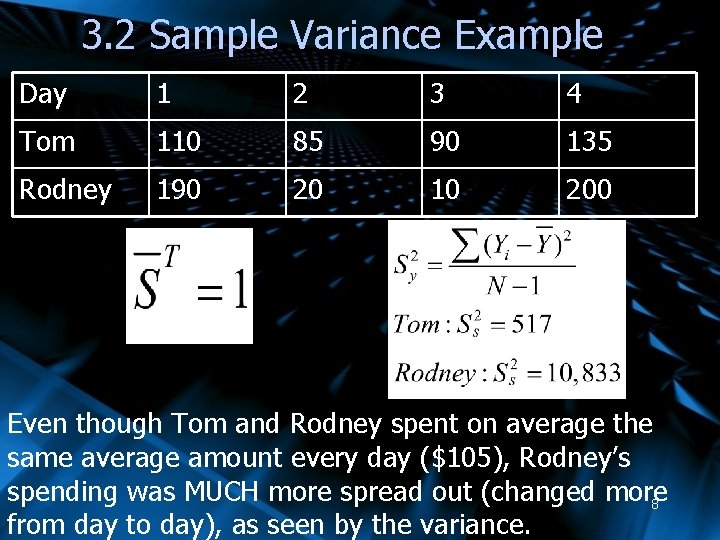

3.2 Sample Variance Example

| Day    | 1   | 2  | 3  | 4   |
|--------|-----|----|----|-----|
| Tom    | 110 | 85 | 90 | 135 |
| Rodney | 190 | 20 | 10 | 200 |


Even though Tom and Rodney spent the same average amount each day ($105), Rodney’s spending was MUCH more spread out (it changed more from day to day), as seen by the variance.
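As a rough check of the comparison above, the following sketch computes both sample variances, assuming the usual n - 1 divisor. The helper name `sample_variance` is an illustration, not something taken from the slides.

```python
import statistics

tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def sample_variance(values):
    """Sum of squared deviations from the sample mean, divided by n - 1."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / (len(values) - 1)


tom_var = sample_variance(tom)        # 1550 / 3, about 516.67
rodney_var = sample_variance(rodney)  # 32500 / 3, about 10833.33

# The standard library's statistics.variance uses the same n - 1 divisor.
assert abs(tom_var - statistics.variance(tom)) < 1e-9
print(round(tom_var, 2), round(rodney_var, 2))
```

Rodney's variance is about 21 times Tom's, even though the two means are identical.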

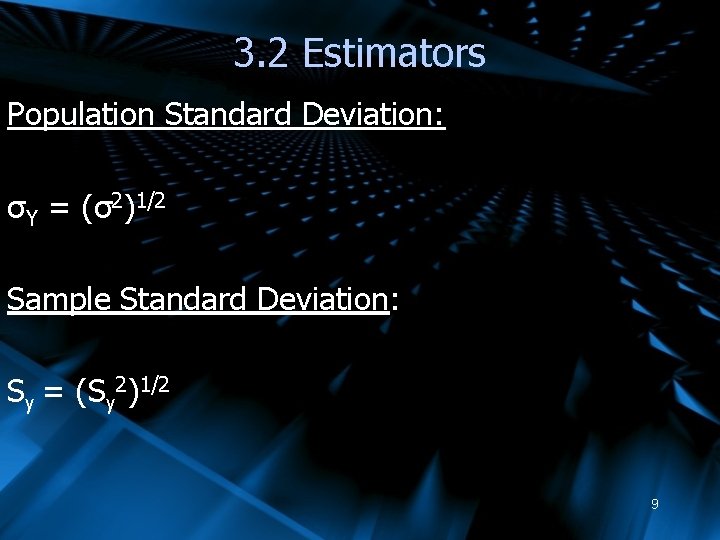

3.2 Estimators

Population Standard Deviation: $\sigma_Y = (\sigma_Y^2)^{1/2}$

Sample Standard Deviation: $S_Y = (S_Y^2)^{1/2}$

3.2 Sample Standard Deviation Example
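A minimal sketch of this example, assuming it uses the same Tom and Rodney spending data as the earlier slides: the sample standard deviation is the square root of the sample variance (n - 1 divisor). The function name is illustrative.

```python
import math

tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def sample_std(values):
    """Square root of the sample variance (n - 1 divisor)."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / (len(values) - 1)
    return math.sqrt(variance)


tom_sd = sample_std(tom)        # about 22.73
rodney_sd = sample_std(rodney)  # about 104.08
print(round(tom_sd, 2), round(rodney_sd, 2))
```

Unlike the variance, the standard deviation is in the same units as the data (dollars per day).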

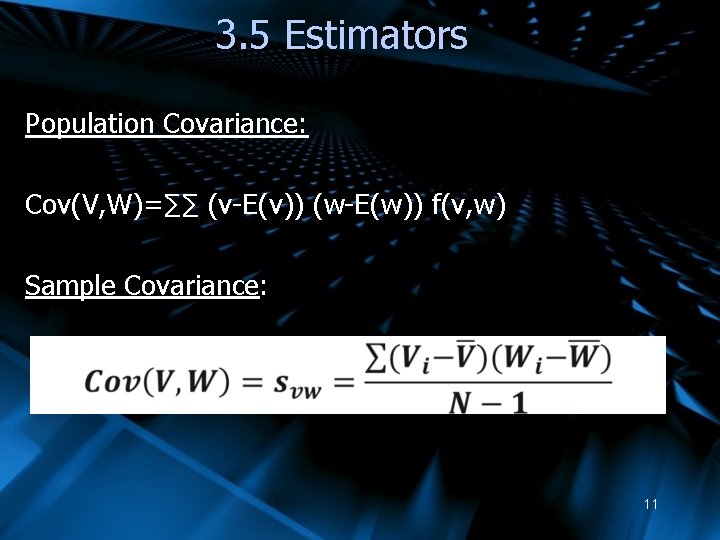

3.5 Estimators

Population Covariance: $Cov(V, W) = \sum_v \sum_w (v - E(V))(w - E(W)) f(v, w)$

Sample Covariance: $Cov(V, W) = \frac{1}{n-1} \sum_{i=1}^{n} (V_i - \bar{V})(W_i - \bar{W})$

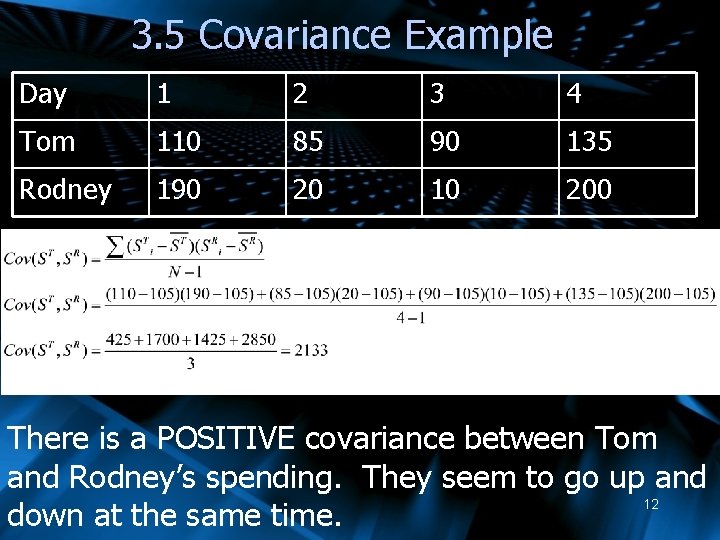

3.5 Covariance Example

| Day    | 1   | 2  | 3  | 4   |
|--------|-----|----|----|-----|
| Tom    | 110 | 85 | 90 | 135 |
| Rodney | 190 | 20 | 10 | 200 |

There is a POSITIVE covariance between Tom and Rodney’s spending. They seem to go up and down at the same time.
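The sign of the covariance can be confirmed with a short sketch, assuming the usual n - 1 divisor for the sample covariance. The function name `sample_covariance` is illustrative.

```python
tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def sample_covariance(v, w):
    """Sum of the products of paired deviations from each mean, over n - 1."""
    n = len(v)
    v_bar = sum(v) / n
    w_bar = sum(w) / n
    return sum((vi - v_bar) * (wi - w_bar) for vi, wi in zip(v, w)) / (n - 1)


cov = sample_covariance(tom, rodney)  # 6400 / 3, about 2133.33
print(round(cov, 2))
```

Every day's pair of deviations has the same sign (both above or both below 105), so every product is positive and so is the covariance.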

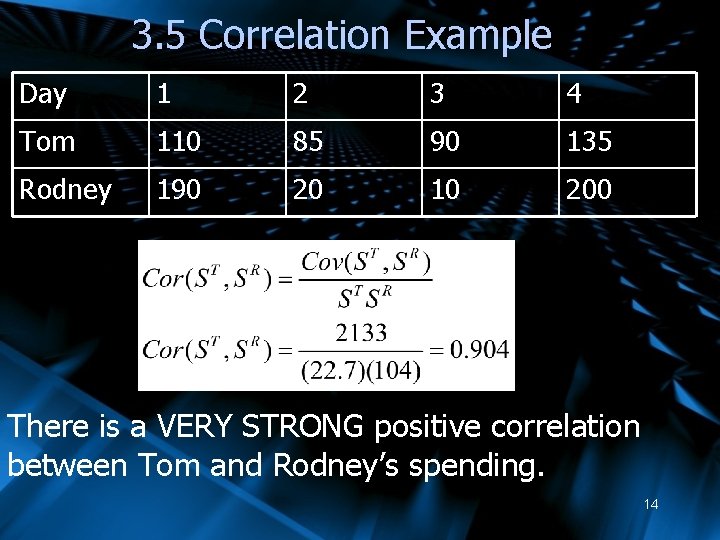

3.5 Estimators

Population Correlation: $\rho_{VW} = corr(V, W) = \frac{Cov(V, W)}{\sigma_V \sigma_W}$

Sample Correlation: $r_{VW} = corr(V, W) = \frac{Cov(V, W)}{S_V S_W}$

3.5 Correlation Example

| Day    | 1   | 2  | 3  | 4   |
|--------|-----|----|----|-----|
| Tom    | 110 | 85 | 90 | 135 |
| Rodney | 190 | 20 | 10 | 200 |

There is a VERY STRONG positive correlation between Tom and Rodney’s spending.
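To put a number on "very strong", the sketch below divides the sample covariance by the product of the two sample standard deviations, assuming n - 1 divisors throughout. The helper names are illustrative.

```python
import math

tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def mean(values):
    return sum(values) / len(values)


def sample_covariance(v, w):
    """Sample covariance with the n - 1 divisor."""
    v_bar, w_bar = mean(v), mean(w)
    return sum((a - v_bar) * (b - w_bar) for a, b in zip(v, w)) / (len(v) - 1)


def sample_correlation(v, w):
    """Covariance scaled by both standard deviations; always in [-1, 1]."""
    s_v = math.sqrt(sample_covariance(v, v))  # Cov(V, V) is the variance of V
    s_w = math.sqrt(sample_covariance(w, w))
    return sample_covariance(v, w) / (s_v * s_w)


r = sample_correlation(tom, rodney)
print(round(r, 2))  # 0.9
```

A correlation of about 0.90 is close to the maximum of 1, which supports the slide's reading.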

3.1 Median

Median – the value in the middle of the data set (the data must be arranged in ascending order).

Calculating: Odd number of observations = middle data point. For example, with 3 data points, the median is the 2nd data point.

3.1 Median

Calculating: Even number of observations = average of the middle 2 data points. For example, if you have 42 data points, the median would be the average of the 21st and 22nd data points.

3.1 Median

Usage:
- The mean is usually used as an “average” or measurement of “central location”
- If there are strong outliers (values way above or way below most others), they can influence the mean, and the median may be a better measure

Example: At the end of term, 6 of 60 students were enrolled but never did anything and got 0%. The median may be a better measure of the average.
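The effect of outliers on the mean can be illustrated with a small sketch. Tom's spending comes from the earlier example; the grade data are hypothetical (the slide gives no grades for the other 54 students, so 70% each is assumed purely for illustration).

```python
import statistics

# Even number of observations: average of the two middle values.
tom = [110, 85, 90, 135]
tom_median = statistics.median(tom)  # sorted: 85, 90, 110, 135 -> 100.0

# Hypothetical class: 54 students at 70% (an assumption) and 6 at 0%.
grades = [70] * 54 + [0] * 6
mean_grade = sum(grades) / len(grades)     # 63.0, pulled down by the zeros
median_grade = statistics.median(grades)   # 70.0, unaffected by the zeros

print(tom_median, mean_grade, median_grade)
```

In this made-up class the six zeros lower the mean by 7 points, while the median still reflects what a typical participating student earned.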

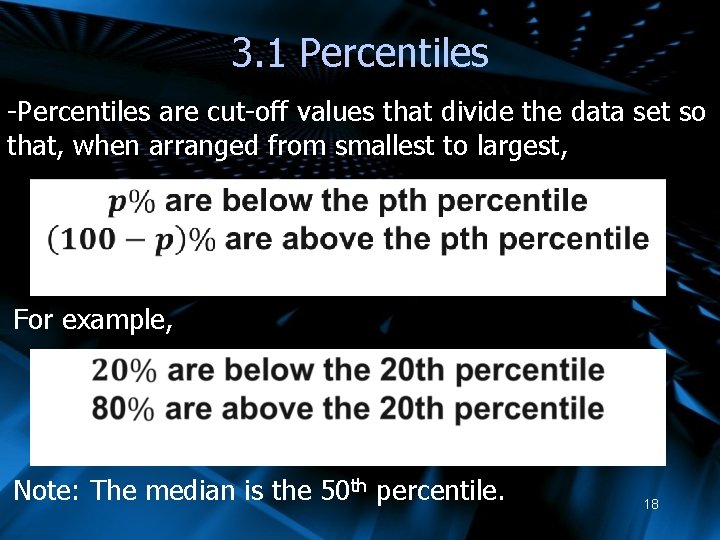

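3.1 Percentiles

- Percentiles are cut-off values that divide the data set so that, when the data are arranged from smallest to largest, a given percentage of the observations lies at or below each cut-off. For example, 90% of the observations lie at or below the 90th percentile.

Note: The median is the 50th percentile.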

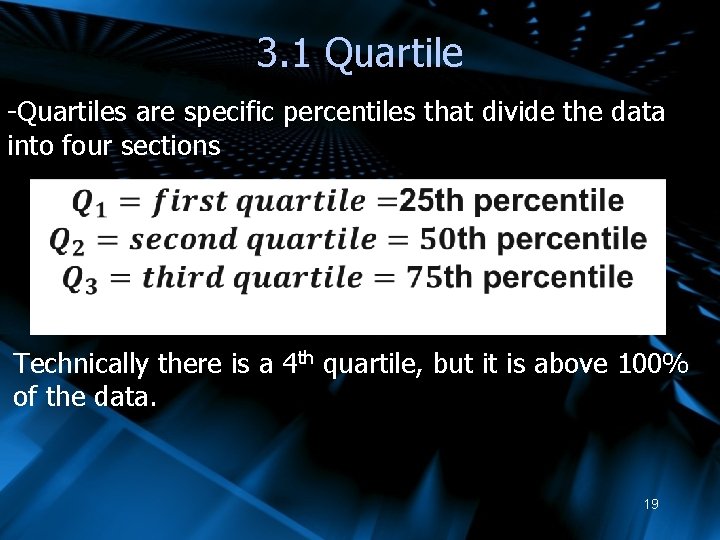

3.1 Quartile

- Quartiles are specific percentiles that divide the data into four sections.

Technically there is a 4th quartile, but it is above 100% of the data.
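A minimal sketch of quartiles using Python's `statistics.quantiles` (available from Python 3.8). Textbooks and software differ in how they interpolate between data points, so the first and third quartiles computed here may not match a hand calculation from the course; that difference is an assumption to keep in mind, not an error.

```python
import statistics

tom = [110, 85, 90, 135]

# n=4 asks for the three cut points that split the data into four sections.
q1, q2, q3 = statistics.quantiles(tom, n=4)

# The second quartile is the 50th percentile, i.e. the median.
assert q2 == statistics.median(tom)
print(q1, q2, q3)
```

The three values returned are the 25th, 50th and 75th percentiles.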

3.2 Max, Min, Range and Mode

- Min = minimum = lowest value in the data set
- Max = maximum = highest value in the data set
- Range = max – min
- Mode = the value(s) that show up most often
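These four measures map directly onto built-in Python functions. In the sketch below, Tom's spending is from the earlier example; the `rolls` list is hypothetical data, added only because every value in Tom's data occurs exactly once.

```python
import statistics

tom = [110, 85, 90, 135]

lowest = min(tom)             # 85
highest = max(tom)            # 135
data_range = highest - lowest  # 50

# Hypothetical data with repeated values, to make the mode meaningful.
rolls = [2, 3, 3, 5, 7, 7]
modes = statistics.multimode(rolls)  # every value tied for most frequent

print(lowest, highest, data_range, modes)  # 85 135 50 [3, 7]
```

`multimode` returns a list because, as the slide notes, more than one value can be tied for most frequent.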

3.6 Degrees of Freedom

Some distributions (such as the t-distribution) depend on DEGREES OF FREEDOM. Degrees of freedom generally depend on two things:
- Sample size (as the sample size rises, so do the degrees of freedom)
- Complexity of the test (more complicated statistical tests reduce the degrees of freedom)
- Simple conclusions are easier to make than complicated ones

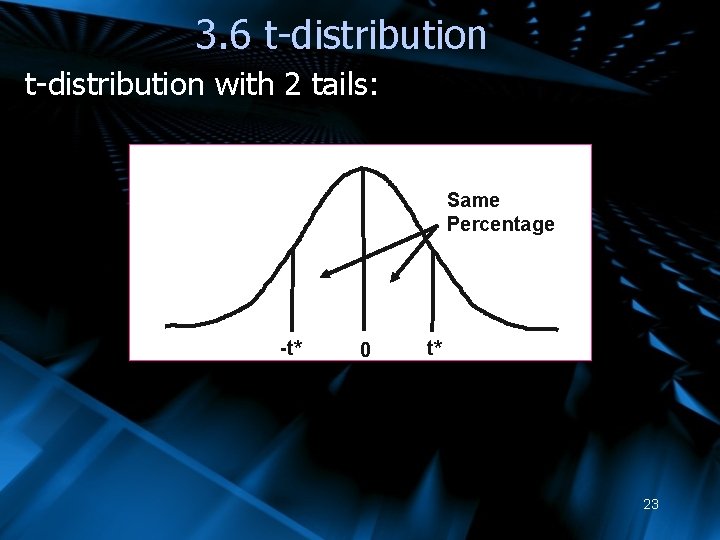

3.6 t-distribution

- The t-distribution is both similar in shape to the normal distribution (bell curve) and statistically related to it
- The t-distribution is symmetric
- 50% of the probability is on each side of the centre
- Statistical analysis often requires us to find critical t-values (t*) on one or both sides of the central mean of zero
- These are sometimes referred to as one-tailed or two-tailed values

3.6 t-distribution with 2 tails: Same Percentage

[Figure: bell curve centred at 0, with the same percentage in each tail, below -t* and above t*]

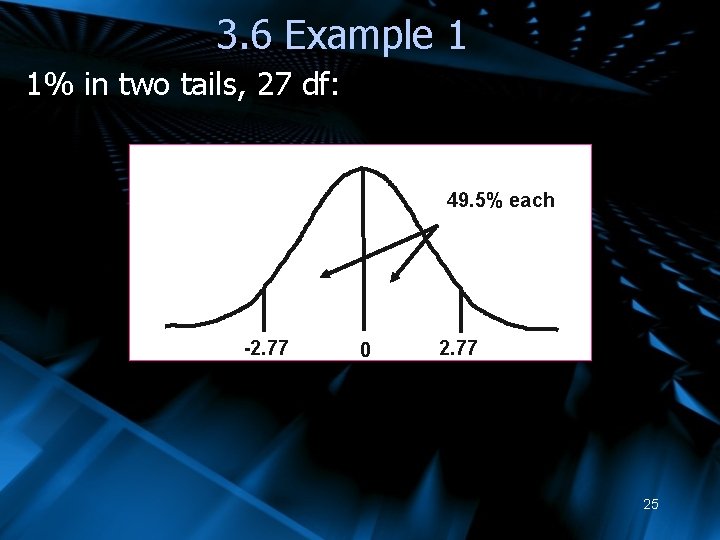

3.6 t-distribution

Example 1: Find the critical t-values (t*) with 1% in two tails with 27 df.
(Note: 1% in both tails = 0.5% in each tail)

For p = 0.495, df 27 gives t* = 2.77, -2.77
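The course reads t* from a printed table. As a cross-check, here is a sketch that recovers the same critical value numerically using only the Python standard library: it integrates the t density with Simpson's rule and then searches for t* by bisection. All function names are illustrative, and this is one possible approach rather than how the table was produced.

```python
import math


def t_pdf(t, df):
    """Density of Student's t-distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log(1 + t * t / df))


def right_tail_area(t, df, steps=2000):
    """P(T > t) for t >= 0: 0.5 minus the Simpson's-rule area on [0, t]."""
    if t == 0:
        return 0.5
    h = t / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 0.5 - total * h / 3


def t_critical(tail_prob, df):
    """Find t* with P(T > t*) = tail_prob (0 < tail_prob < 0.5) by bisection."""
    lo, hi = 0.0, 100.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if right_tail_area(mid, df) > tail_prob:
            lo = mid  # too much area in the tail: t* lies further right
        else:
            hi = mid
    return (lo + hi) / 2


t_star = t_critical(0.005, 27)  # 0.5% in each tail, 27 df
print(round(t_star, 2))         # 2.77, matching the table value
```

Because the distribution is symmetric, the left critical value is simply -t*.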

3.6 Example 1: 1% in two tails, 27 df

[Figure: bell curve centred at 0, with 49.5% of the area on each side of 0 between the critical values -2.77 and 2.77]

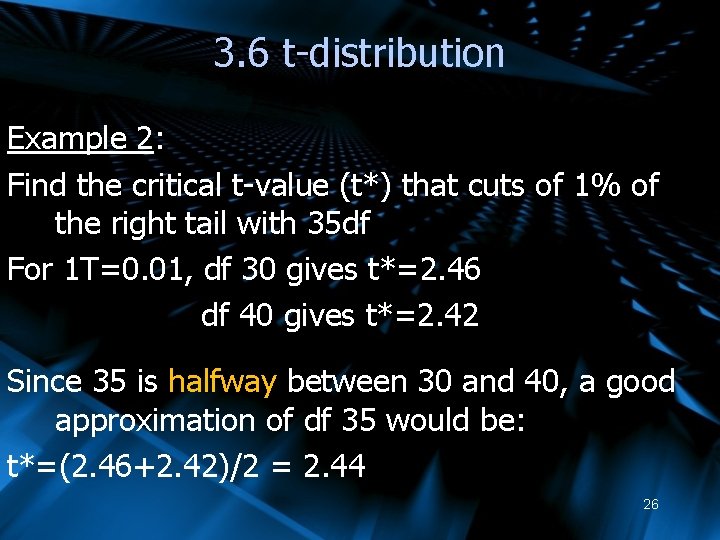

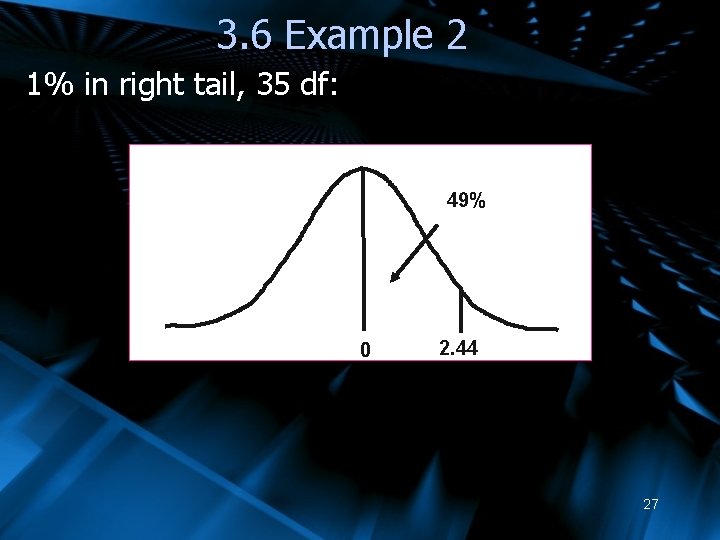

3.6 t-distribution

Example 2: Find the critical t-value (t*) that cuts off 1% of the right tail with 35 df.

For one tail = 0.01:
- df 30 gives t* = 2.46
- df 40 gives t* = 2.42

Since 35 is halfway between 30 and 40, a good approximation for df 35 would be: t* = (2.46 + 2.42)/2 = 2.44
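The averaging step in Example 2 is a special case of linear interpolation between two table rows. The sketch below generalises it to any df between the two rows; the function name and argument order are illustrative assumptions.

```python
def interpolate_t(df, df_low, t_low, df_high, t_high):
    """Linearly interpolate a critical t-value between two table rows."""
    weight = (df - df_low) / (df_high - df_low)  # 0 at df_low, 1 at df_high
    return t_low + weight * (t_high - t_low)


# Table values from Example 2: one tail = 0.01, df 30 and df 40.
t_star = interpolate_t(35, 30, 2.46, 40, 2.42)
print(round(t_star, 2))  # 2.44
```

For a df that is not exactly halfway (say 33), the same function weights the nearer row more heavily.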

3.6 Example 2: 1% in right tail, 35 df

[Figure: bell curve centred at 0, with 49% of the area between 0 and t* = 2.44, leaving 1% in the right tail]

3.6 t-distribution

Typically, the following variable (similar to the normal Z variable seen earlier) will have a t-distribution:

$t = \frac{\text{estimate} - \text{population value}}{\text{standard error of the estimate}}$

(we will see examples later)

7.5 Estimators as random variables

Each of these estimators will give us a result based upon the data available. Therefore, two different data sets can yield two different point estimates, and the value of a point estimate can be seen as the result of a chance experiment: obtaining a data set. Each point estimate is therefore a random variable, with a probability distribution that can be analyzed using the expectation and variance operators.

7.5 What distribution to use?

IF (when examining a sample mean):

A) The population has a normal distribution (this is a reasonable assumption for many populations)

AND

B) You know the population variance

THEN the sample mean follows a NORMAL DISTRIBUTION.

7.5 What distribution to use?

If the population doesn’t have a normal distribution, the central limit theorem states that:

“In selecting random samples of size n from a population, the sampling distribution of the sample mean can be approximated by a normal distribution as the sample size becomes large.”

- General statistical practice assumes that a sample size of 30 or more is “large” enough
- If outliers are an issue, 50 may be a better goal
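The central limit theorem can be illustrated with a small simulation. This sketch draws many samples of size 30 from a strongly skewed population (an exponential distribution with mean 1 and standard deviation 1, chosen here purely as an assumption for illustration) and looks at how the sample means behave.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

n = 30              # sample size, the "large enough" rule of thumb
num_samples = 5000  # number of repeated samples

# Each entry is the mean of one random sample of size n.
sample_means = [
    statistics.fmean([random.expovariate(1.0) for _ in range(n)])
    for _ in range(num_samples)
]

mean_of_means = statistics.fmean(sample_means)  # close to the population mean, 1
sd_of_means = statistics.stdev(sample_means)    # close to 1 / sqrt(30), about 0.18

# Share of sample means within one standard deviation of their centre;
# for a normal distribution this share is about 68%.
within_one_sd = sum(
    abs(m - mean_of_means) <= sd_of_means for m in sample_means
) / num_samples

print(mean_of_means, sd_of_means, within_one_sd)
```

Even though individual draws are far from normal, the sample means cluster symmetrically around the population mean, which is what the theorem predicts.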

7.5 What distribution to use?

If you don’t know the population variance, a t-distribution can be used instead of a normal distribution.

For this course, we will always assume:

a) A normal distribution is appropriate, BUT

b) We don’t have the population variance, so the t-distribution will be used

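7.5 Estimators Distribution

Since the sample mean is a random variable, we can easily apply expectation and summation rules to find the expected value of the sample mean:

$E(\bar{Y}) = E\left(\frac{1}{n}\sum_{i=1}^{n} Y_i\right) = \frac{1}{n}\sum_{i=1}^{n} E(Y_i) = \frac{1}{n}(n\mu) = \mu$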

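7.5 Estimators Distribution

If we make the simplifying assumption that there is no covariance between data points (i.e. one person’s consumption is unaffected by the next person’s consumption), we can easily calculate the variance of the sample mean:

$Var(\bar{Y}) = Var\left(\frac{1}{n}\sum_{i=1}^{n} Y_i\right) = \frac{1}{n^2}\sum_{i=1}^{n} Var(Y_i) = \frac{1}{n^2}(n\sigma_Y^2) = \frac{\sigma_Y^2}{n}$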

7.5 Estimators Distribution

If we don’t know the population variance of Ybar, we can calculate its sample variance; therefore,

$S_{\bar{Y}}^2 = \frac{S_Y^2}{n}$

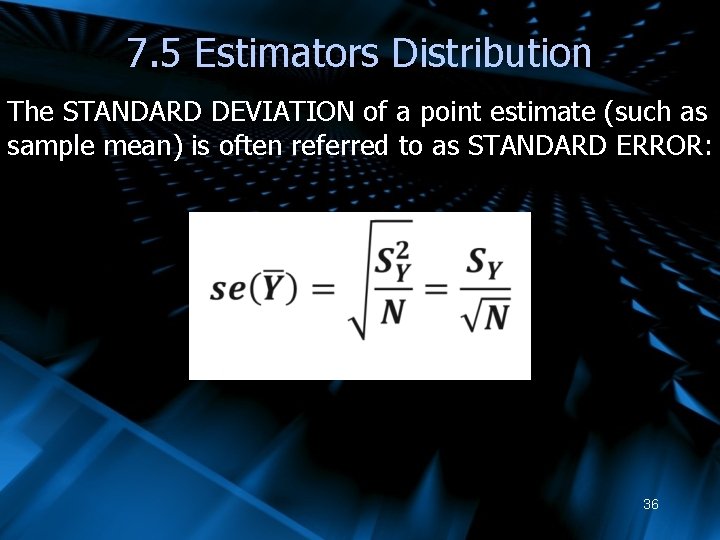

7.5 Estimators Distribution

The STANDARD DEVIATION of a point estimate (such as the sample mean) is often referred to as the STANDARD ERROR:

$se(\bar{Y}) = S_{\bar{Y}} = \frac{S_Y}{\sqrt{n}}$
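As a sketch, the standard error of the sample mean can be computed for the earlier spending data, assuming it equals the sample standard deviation (n - 1 divisor) divided by the square root of the sample size. The function name is illustrative.

```python
import math

tom = [110, 85, 90, 135]
rodney = [190, 20, 10, 200]


def standard_error_of_mean(values):
    """Square root of (sample variance / n)."""
    n = len(values)
    mean = sum(values) / n
    sample_var = sum((v - mean) ** 2 for v in values) / (n - 1)
    return math.sqrt(sample_var / n)


tom_se = standard_error_of_mean(tom)        # about 11.37
rodney_se = standard_error_of_mean(rodney)  # about 52.04
print(round(tom_se, 2), round(rodney_se, 2))
```

Both means are 105, but Rodney's is a much less precise estimate of his typical daily spending because his data are far more spread out.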
