Chapter 3 Linear Algebra

February 26 Matrices

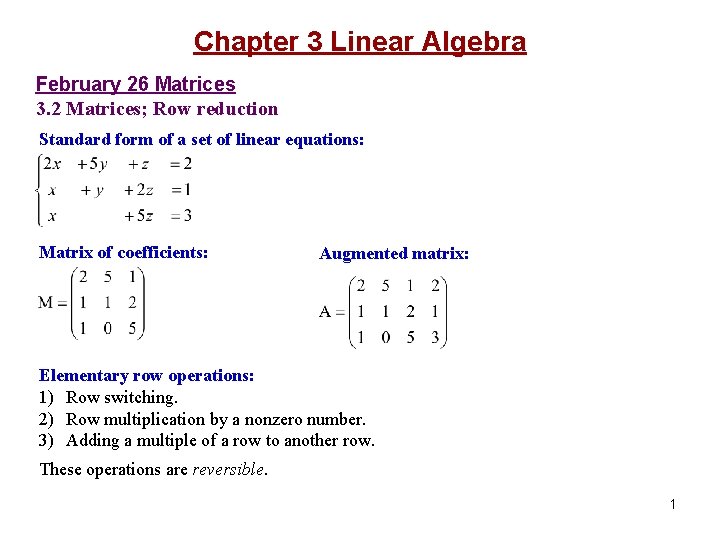

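3.2 Matrices; Row reduction

Standard form of a set of $m$ linear equations in $n$ unknowns:
$$a_{11}x_1 + a_{12}x_2 + \cdots + a_{1n}x_n = k_1, \quad \ldots, \quad a_{m1}x_1 + a_{m2}x_2 + \cdots + a_{mn}x_n = k_m$$

Matrix of coefficients: $M = (a_{ij})$, an $m\times n$ matrix.

Augmented matrix: $A = (M\,|\,\mathbf{k})$, the matrix $M$ with the column of constants $k_1, \ldots, k_m$ appended.

Elementary row operations:
1) Row switching.
2) Multiplying a row by a nonzero number.
3) Adding a multiple of one row to another row.

These operations are reversible, so they do not change the solutions of the equations.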

Solving a set of linear equations by row reduction:

Row-reduced matrix:
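To make the procedure concrete, here is a minimal Python sketch of row reduction to the row-reduced (reduced row echelon) form. The function name `row_reduce` and the example system are illustrative assumptions, not from the slides; exact `fractions.Fraction` arithmetic is used so the result matches hand calculation, and only the three elementary row operations are applied.

```python
from fractions import Fraction


def row_reduce(rows):
    """Return the row-reduced (reduced row echelon) form of a matrix.

    `rows` is a list of equal-length lists; entries are converted to Fractions.
    """
    A = [[Fraction(x) for x in row] for row in rows]
    n_rows, n_cols = len(A), len(A[0])
    pivot_row = 0
    for col in range(n_cols):
        # Look for a row at or below pivot_row with a nonzero entry in this column.
        pivot = next((r for r in range(pivot_row, n_rows) if A[r][col] != 0), None)
        if pivot is None:
            continue
        # 1) Row switching: bring the pivot row up.
        A[pivot_row], A[pivot] = A[pivot], A[pivot_row]
        # 2) Multiply the pivot row by a nonzero number so the pivot becomes 1.
        p = A[pivot_row][col]
        A[pivot_row] = [x / p for x in A[pivot_row]]
        # 3) Add multiples of the pivot row to clear the rest of the column.
        for r in range(n_rows):
            if r != pivot_row and A[r][col] != 0:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[pivot_row])]
        pivot_row += 1
        if pivot_row == n_rows:
            break
    return A


# Hypothetical system x + y = 3, x - y = 1, written as an augmented matrix.
print(row_reduce([[1, 1, 3], [1, -1, 1]]))
```

For this augmented matrix the reduced form is [[1, 0, 2], [0, 1, 1]], so the last column reads off x = 2, y = 1.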

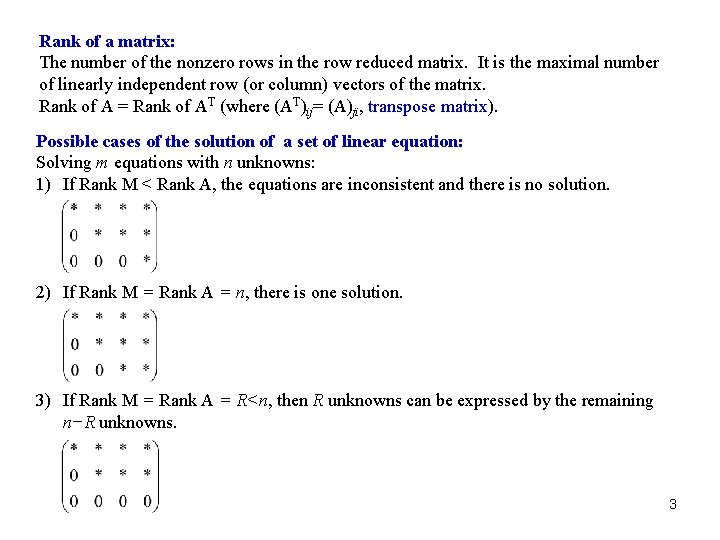

Rank of a matrix: the number of nonzero rows in the row-reduced matrix. It equals the maximal number of linearly independent row (or column) vectors of the matrix. Rank of $A$ = Rank of $A^T$, where $A^T$ is the transpose matrix, $(A^T)_{ij} = A_{ji}$.

Possible cases for the solution of $m$ equations in $n$ unknowns (with $M$ the matrix of coefficients and $A$ the augmented matrix):
1) If Rank $M$ < Rank $A$, the equations are inconsistent and there is no solution.
2) If Rank $M$ = Rank $A$ = $n$, there is exactly one solution.
3) If Rank $M$ = Rank $A$ = $R < n$, then $R$ unknowns can be expressed in terms of the remaining $n - R$ unknowns.
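The three cases can be checked numerically by comparing ranks. This sketch assumes NumPy; `classify_system` is a hypothetical helper, and `numpy.linalg.matrix_rank` computes a numerical rank from the singular values with a tolerance, so nearly dependent rows may be counted as dependent.

```python
import numpy as np


def classify_system(M, k):
    """Classify the solutions of M x = k by comparing Rank M and Rank A.

    M is the m x n coefficient matrix, k the column of constants, and the
    augmented matrix A is M with k appended as an extra column.
    Returns (case, number_of_free_unknowns).
    """
    M = np.asarray(M, dtype=float)
    k = np.asarray(k, dtype=float).reshape(-1, 1)
    A = np.hstack([M, k])
    n = M.shape[1]
    rank_M = np.linalg.matrix_rank(M)   # numerical rank via SVD with a tolerance
    rank_A = np.linalg.matrix_rank(A)
    if rank_M < rank_A:
        return "inconsistent", None               # case 1: no solution
    if rank_M == n:
        return "unique", 0                        # case 2: one solution
    return "infinitely many", int(n - rank_M)     # case 3: n - R unknowns are free


print(classify_system([[1, 1], [1, -1]], [3, 1]))   # x + y = 3, x - y = 1
print(classify_system([[1, 1], [2, 2]], [1, 3]))    # contradictory equations
print(classify_system([[1, 1], [2, 2]], [1, 2]))    # one equation repeated
```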

Read: Chapter 3, sections 1-2. Homework: 3.2.4, 8, 10, 12, 15. Due: March 9.

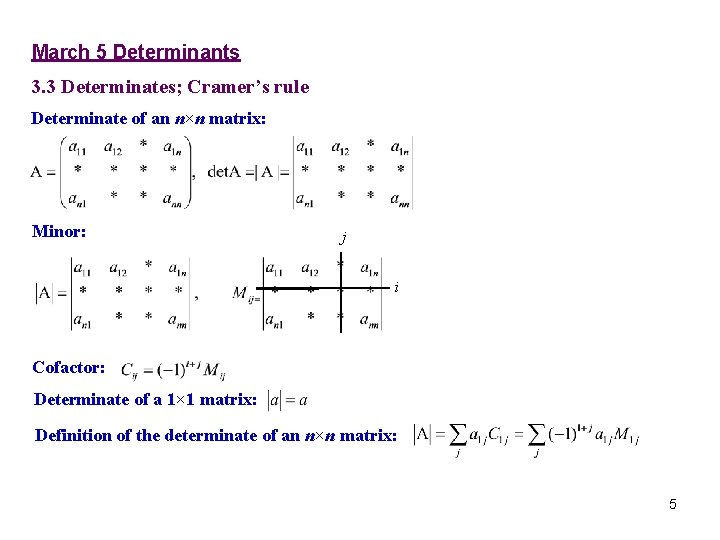

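March 5 Determinants

3.3 Determinants; Cramer’s rule

Determinant of an $n\times n$ matrix $A = (a_{ij})$:

Minor $M_{ij}$: the determinant of the $(n-1)\times(n-1)$ matrix left after deleting the $i$th row and the $j$th column of $A$.

Cofactor: $C_{ij} = (-1)^{i+j}M_{ij}$.

Determinant of a $1\times 1$ matrix: $\det(a_{11}) = a_{11}$.

Definition of the determinant of an $n\times n$ matrix (expansion along the first row): $\det A = \sum_{j=1}^{n} a_{1j}C_{1j}$.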
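A direct transcription of the recursive definition, as a sketch in plain Python (the names `minor_matrix` and `det` are illustrative). Expanding recursively along the first row costs on the order of n! operations, so this is only for checking small hand calculations; library routines such as `numpy.linalg.det` use an LU factorization instead.

```python
def minor_matrix(A, i, j):
    """Matrix left after deleting row i and column j (0-based indices)."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(A) if r != i]


def det(A):
    """Determinant by cofactor expansion along the first row (i = 0)."""
    n = len(A)
    if n == 1:
        return A[0][0]                     # determinant of a 1 x 1 matrix
    total = 0
    for j in range(n):
        # Cofactor C_0j = (-1)^(0 + j) * (minor of a_0j)
        cofactor = (-1) ** j * det(minor_matrix(A, 0, j))
        total += A[0][j] * cofactor
    return total


print(det([[2, -1, 0], [1, 3, 2], [0, 1, 4]]))   # arbitrary example matrix
```

With 0-based indices the sign $(-1)^{i+j}$ has the same parity as in the 1-based formula, so no adjustment is needed.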

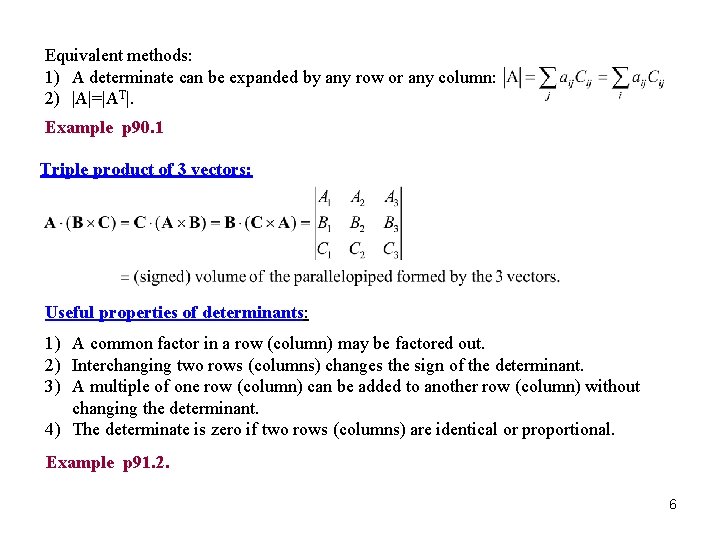

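Equivalent methods:
1) A determinant can be expanded along any row or any column: $\det A = \sum_j a_{ij}C_{ij}$ (along row $i$) $= \sum_i a_{ij}C_{ij}$ (along column $j$), where $C_{ij}$ is the cofactor of $a_{ij}$.
2) $|A| = |A^T|$.

Example p. 90.1.

Triple product of 3 vectors:
$$\mathbf{A}\cdot(\mathbf{B}\times\mathbf{C}) = \begin{vmatrix}A_x & A_y & A_z\\ B_x & B_y & B_z\\ C_x & C_y & C_z\end{vmatrix}$$

Useful properties of determinants:
1) A common factor in a row (column) may be factored out.
2) Interchanging two rows (columns) changes the sign of the determinant.
3) A multiple of one row (column) can be added to another row (column) without changing the determinant.
4) The determinant is zero if two rows (columns) are identical or proportional.

Example p. 91.2.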
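The properties can be spot-checked numerically. This sketch assumes NumPy and uses an arbitrary example matrix and arbitrary vectors (not from the slides); `np.isclose` is needed because floating-point determinants are only approximate.

```python
import numpy as np

A = np.array([[2.0, -1.0, 0.0],
              [1.0, 3.0, 2.0],
              [0.0, 1.0, 4.0]])          # arbitrary example matrix, det A = 24
d = np.linalg.det(A)

print(np.isclose(np.linalg.det(A.T), d))              # |A| = |A^T|

B = A.copy()
B[0] *= 5                                             # 1) common factor 5 in row 0
print(np.isclose(np.linalg.det(B), 5 * d))

print(np.isclose(np.linalg.det(A[[1, 0, 2]]), -d))    # 2) rows 0 and 1 swapped

C = A.copy()
C[2] += 7 * C[0]                                      # 3) add 7 x row 0 to row 2
print(np.isclose(np.linalg.det(C), d))

D = A.copy()
D[2] = 3 * D[1]                                       # 4) two proportional rows
print(np.isclose(np.linalg.det(D), 0.0))

# Triple product u . (v x w) equals the determinant with rows u, v, w
u = np.array([1.0, 2.0, 3.0])
v = np.array([0.0, 1.0, 4.0])
w = np.array([5.0, 6.0, 0.0])
triple = np.dot(u, np.cross(v, w))
print(np.isclose(triple, np.linalg.det(np.array([u, v, w]))))
```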

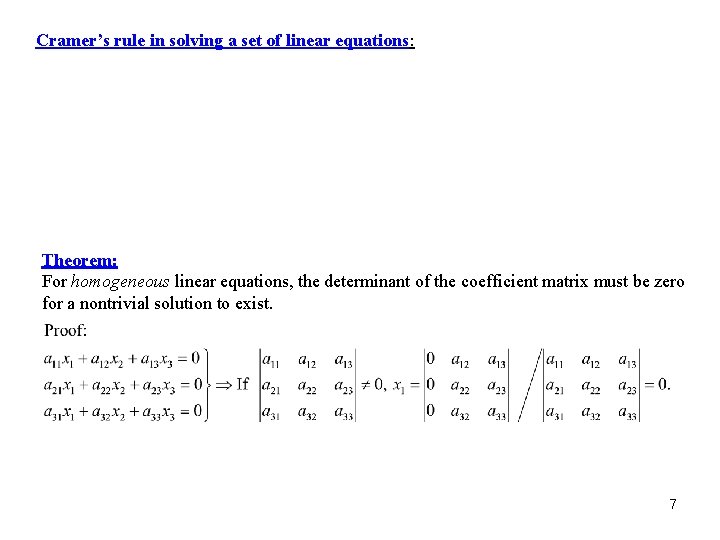

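Cramer’s rule for solving a set of $n$ linear equations in $n$ unknowns, $M\mathbf{r} = \mathbf{k}$ with $\mathbf{r} = (x_1, \ldots, x_n)$: if $\det M \neq 0$,
$$x_i = \frac{\det M_i}{\det M},$$
where $M_i$ is the coefficient matrix $M$ with its $i$th column replaced by the column of constants $\mathbf{k}$.

Theorem: for homogeneous linear equations ($\mathbf{k} = 0$), the determinant of the coefficient matrix must be zero for a nontrivial solution to exist.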
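A sketch of Cramer's rule with NumPy, assuming $n$ equations in $n$ unknowns written as M x = k; `cramer` is a hypothetical helper and the example system is made up. For large systems this is far slower than elimination, so it mainly serves to check the formula.

```python
import numpy as np


def cramer(M, k):
    """Solve M x = k by Cramer's rule: x_i = det(M_i) / det(M)."""
    M = np.asarray(M, dtype=float)
    k = np.asarray(k, dtype=float)
    d = np.linalg.det(M)
    if np.isclose(d, 0.0):
        raise ValueError("det M = 0, so Cramer's rule does not apply")
    x = np.empty(len(k))
    for i in range(len(k)):
        Mi = M.copy()
        Mi[:, i] = k                 # M_i: column i replaced by the constants
        x[i] = np.linalg.det(Mi) / d
    return x


print(cramer([[2, 1], [1, -1]], [5, 1]))   # 2x + y = 5, x - y = 1
```

Here $\det M = -3$, $\det M_1 = -6$ and $\det M_2 = -3$, giving x = 2, y = 1.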

Read: Chapter 3, section 3. Homework: 3.3.1, 10, 15, 17 (no computer work is needed). Due: March 23.

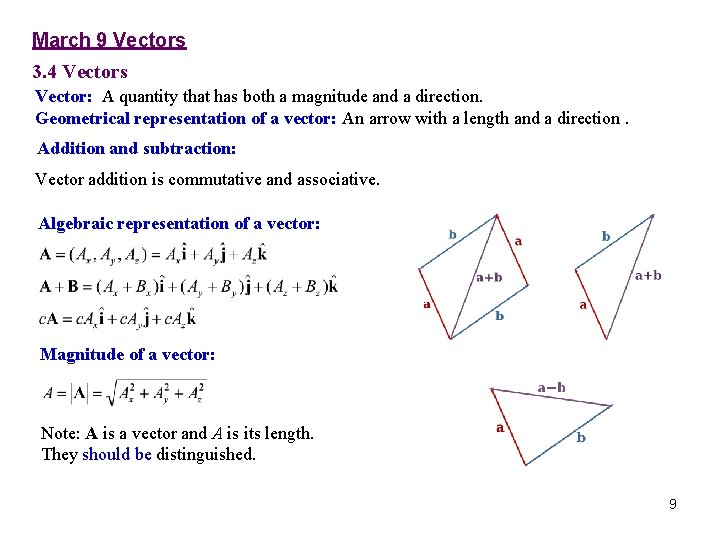

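March 9 Vectors

3.4 Vectors

Vector: a quantity that has both a magnitude and a direction.

Geometrical representation of a vector: an arrow with a length and a direction.

Addition and subtraction: vector addition is commutative, $\mathbf{A} + \mathbf{B} = \mathbf{B} + \mathbf{A}$, and associative, $(\mathbf{A} + \mathbf{B}) + \mathbf{C} = \mathbf{A} + (\mathbf{B} + \mathbf{C})$.

Algebraic representation of a vector: $\mathbf{A} = A_x\mathbf{i} + A_y\mathbf{j} + A_z\mathbf{k} = (A_x, A_y, A_z)$.

Magnitude of a vector: $A = |\mathbf{A}| = \sqrt{A_x^2 + A_y^2 + A_z^2}$.

Note: $\mathbf{A}$ (bold) is a vector and $A$ is its length. They should be distinguished.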

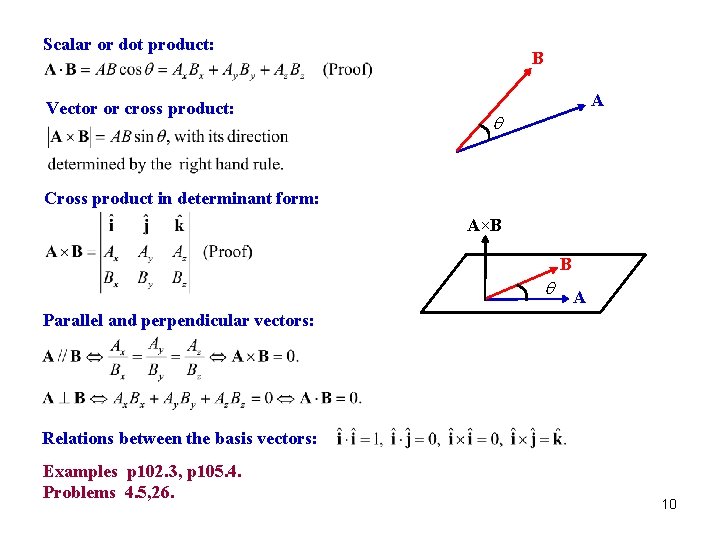

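Scalar or dot product: $\mathbf{A}\cdot\mathbf{B} = AB\cos\theta = A_xB_x + A_yB_y + A_zB_z$, where $\theta$ is the angle between $\mathbf{A}$ and $\mathbf{B}$.

Vector or cross product: $|\mathbf{A}\times\mathbf{B}| = AB\sin\theta$, and $\mathbf{A}\times\mathbf{B}$ is perpendicular to both $\mathbf{A}$ and $\mathbf{B}$ (right-hand rule).

Cross product in determinant form:
$$\mathbf{A}\times\mathbf{B} = \begin{vmatrix}\mathbf{i} & \mathbf{j} & \mathbf{k}\\ A_x & A_y & A_z\\ B_x & B_y & B_z\end{vmatrix}$$

Parallel and perpendicular vectors: nonzero $\mathbf{A}$ and $\mathbf{B}$ are parallel (or antiparallel) if $\mathbf{A}\times\mathbf{B} = 0$, and perpendicular if $\mathbf{A}\cdot\mathbf{B} = 0$.

Relations between the basis vectors: $\mathbf{i}\cdot\mathbf{i} = \mathbf{j}\cdot\mathbf{j} = \mathbf{k}\cdot\mathbf{k} = 1$, $\mathbf{i}\cdot\mathbf{j} = \mathbf{j}\cdot\mathbf{k} = \mathbf{k}\cdot\mathbf{i} = 0$; $\mathbf{i}\times\mathbf{j} = \mathbf{k}$, $\mathbf{j}\times\mathbf{k} = \mathbf{i}$, $\mathbf{k}\times\mathbf{i} = \mathbf{j}$.

Examples p. 102.3, p. 105.4. Problems 4.5, 26.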
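As a sketch (assuming NumPy; the vectors are arbitrary examples and `cross_from_determinant` is a hypothetical name), the cross product can be computed by expanding the determinant form along its first row and compared with `np.cross`; the dot product then gives the angle θ, which checks $|\mathbf{A}\times\mathbf{B}| = AB\sin\theta$.

```python
import numpy as np


def cross_from_determinant(A, B):
    """Expand det [[i, j, k], [Ax, Ay, Az], [Bx, By, Bz]] along its first row."""
    Ax, Ay, Az = A
    Bx, By, Bz = B
    return np.array([Ay * Bz - Az * By,       # coefficient of i
                     -(Ax * Bz - Az * Bx),    # coefficient of j (cofactor sign is -)
                     Ax * By - Ay * Bx])      # coefficient of k


A = np.array([1.0, 2.0, 3.0])
B = np.array([4.0, 5.0, 6.0])
C = cross_from_determinant(A, B)

# Angle between A and B from A . B = AB cos(theta)
theta = np.arccos(np.dot(A, B) / (np.linalg.norm(A) * np.linalg.norm(B)))

print(C, np.cross(A, B))                      # the same vector
print(np.dot(C, A), np.dot(C, B))             # A x B is perpendicular to A and to B
print(np.linalg.norm(C), np.linalg.norm(A) * np.linalg.norm(B) * np.sin(theta))
```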

Read: Chapter 3, section 4. Homework: 3.4.5, 7, 12, 18, 26. Due: March 23.

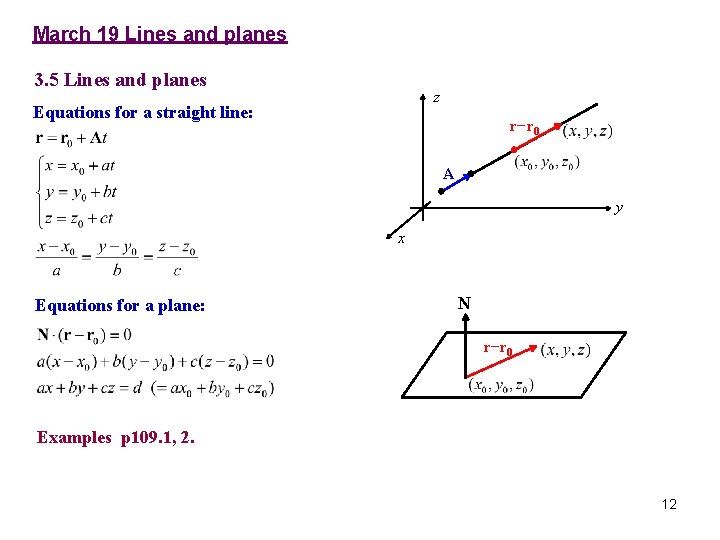

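March 19 Lines and planes

3.5 Lines and planes

Equations for a straight line through the point $\mathbf{r}_0 = (x_0, y_0, z_0)$ parallel to $\mathbf{A} = a\mathbf{i} + b\mathbf{j} + c\mathbf{k}$: $(\mathbf{r} - \mathbf{r}_0)\times\mathbf{A} = 0$, or in parametric form $\mathbf{r} = \mathbf{r}_0 + \mathbf{A}t$, or in symmetric form $\dfrac{x - x_0}{a} = \dfrac{y - y_0}{b} = \dfrac{z - z_0}{c}$.

Equations for a plane through $\mathbf{r}_0$ with normal vector $\mathbf{N} = a\mathbf{i} + b\mathbf{j} + c\mathbf{k}$: $\mathbf{N}\cdot(\mathbf{r} - \mathbf{r}_0) = 0$, i.e. $a(x - x_0) + b(y - y_0) + c(z - z_0) = 0$.

Examples p. 109.1, 2.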

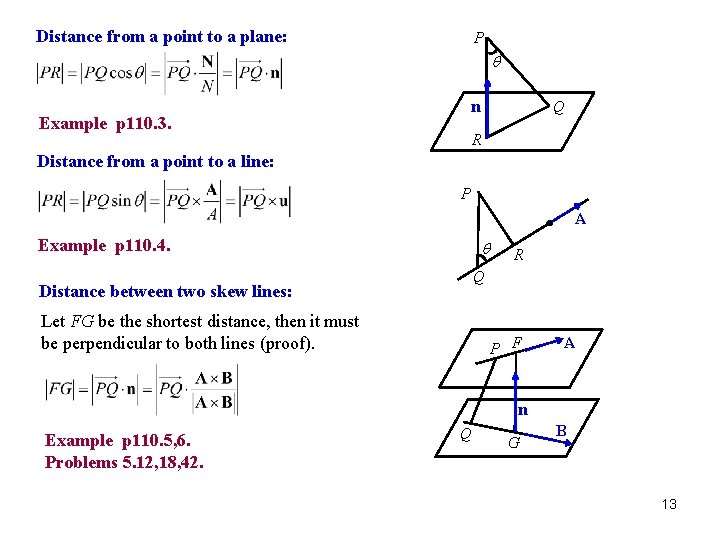

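Distance from a point to a plane: for a point $Q$, a point $P$ in the plane, and the unit normal $\mathbf{n}$ of the plane, $D = |\overrightarrow{PQ}|\,|\cos\theta| = |\overrightarrow{PQ}\cdot\mathbf{n}|$, where $\theta$ is the angle between $\overrightarrow{PQ}$ and $\mathbf{n}$. Example p. 110.3.

Distance from a point to a line: for a point $Q$ and a line through $P$ along $\mathbf{A}$, $D = |\overrightarrow{PQ}|\sin\theta = |\overrightarrow{PQ}\times\mathbf{A}|/|\mathbf{A}|$, where $\theta$ is the angle between $\overrightarrow{PQ}$ and $\mathbf{A}$. Example p. 110.4.

Distance between two skew lines (one through $P$ along $\mathbf{A}$, the other through $Q$ along $\mathbf{B}$): let $FG$ be the shortest distance; then $FG$ must be perpendicular to both lines (proof), i.e. along $\mathbf{n} = \mathbf{A}\times\mathbf{B}/|\mathbf{A}\times\mathbf{B}|$, so $D = |\overrightarrow{PQ}\cdot\mathbf{n}|$. Example p. 110.5, 6.

Problems 5.12, 18, 42.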
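The three distance formulas translate directly into code. A minimal NumPy sketch; the function names and sample points are illustrative assumptions. `skew_line_distance` divides by |A × B|, so it does not apply to parallel lines, for which the point-to-line formula should be used instead.

```python
import numpy as np


def point_plane_distance(Q, P, N):
    """Distance from Q to the plane through P with normal N: |PQ . n|."""
    n = np.asarray(N, dtype=float) / np.linalg.norm(N)
    return abs(np.dot(np.subtract(Q, P), n))


def point_line_distance(Q, P, A):
    """Distance from Q to the line through P along A: |PQ x A| / |A|."""
    return np.linalg.norm(np.cross(np.subtract(Q, P), A)) / np.linalg.norm(A)


def skew_line_distance(P, A, Q, B):
    """Distance between the lines r = P + A t and r = Q + B s (A, B not parallel).

    The shortest segment FG is perpendicular to both lines, i.e. along A x B.
    """
    N = np.cross(A, B)
    return abs(np.dot(np.subtract(Q, P), N)) / np.linalg.norm(N)


print(point_plane_distance((1, 2, 5), (0, 0, 0), (0, 0, 1)))             # plane z = 0
print(point_line_distance((3, 4, 0), (0, 0, 0), (1, 0, 0)))              # the x axis
print(skew_line_distance((0, 0, 0), (1, 0, 0), (0, 0, 2), (0, 1, 0)))
```

The three sample distances are 5, 4 and 2, which can be confirmed by inspection of the coordinates.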

Read: Chapter 3, section 5. Homework: 3.5.7, 12, 18, 20, 26, 32, 37, 42. Due: March 30.

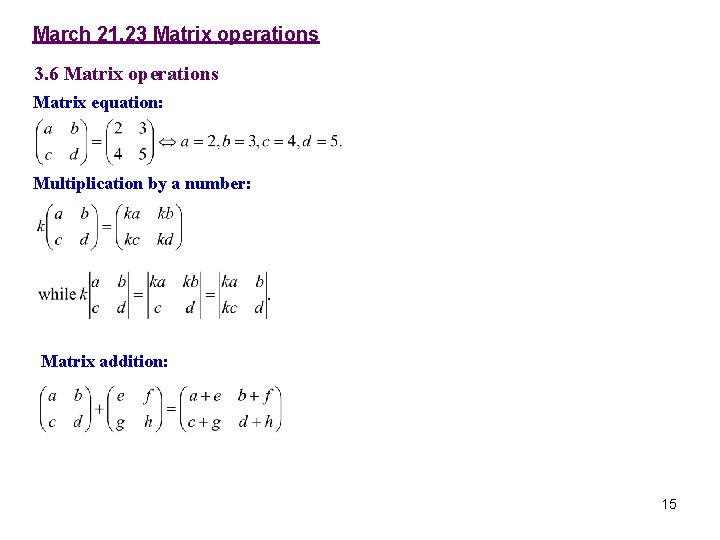

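March 21, 23 Matrix operations

3.6 Matrix operations

Matrix equation: two matrices are equal, $A = B$, only if they have the same shape and $A_{ij} = B_{ij}$ for all $i, j$.

Multiplication by a number: $(kA)_{ij} = kA_{ij}$.

Matrix addition (matrices of the same shape only): $(A + B)_{ij} = A_{ij} + B_{ij}$.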

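Matrix multiplication: $(AB)_{ij} = \sum_k A_{ik}B_{kj}$.

Note:
1) The element in the $i$th row and $j$th column of $AB$ equals the dot product of the $i$th row of $A$ and the $j$th column of $B$.
2) The number of columns in $A$ must equal the number of rows in $B$.

Example:

More about matrix multiplication:
1) The product is associative: $A(BC) = (AB)C$.
2) The product is distributive: $A(B + C) = AB + AC$.
3) In general the product is not commutative: $AB \neq BA$. $[A, B] = AB - BA$ is called the commutator.

Unit matrix: $I$ (also written $1$), with ones on the main diagonal and zeros elsewhere; $IA = AI = A$.

Zero matrix: $0$, with all elements equal to zero.

Product theorem: $\det(AB) = \det A\,\det B$ for square matrices $A$ and $B$.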
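A sketch (assuming NumPy, with arbitrary 2×2 example matrices; `matmul` and `commutator` are hypothetical helper names) that computes AB element by element as row-times-column dot products, checks it against the built-in `@` operator, evaluates a commutator, and tests the product theorem.

```python
import numpy as np


def matmul(A, B):
    """(AB)_ij = (i-th row of A) . (j-th column of B)."""
    A, B = np.asarray(A), np.asarray(B)
    if A.shape[1] != B.shape[0]:
        raise ValueError("columns of A must equal rows of B")
    return np.array([[np.dot(A[i, :], B[:, j]) for j in range(B.shape[1])]
                     for i in range(A.shape[0])])


def commutator(A, B):
    """[A, B] = AB - BA."""
    return matmul(A, B) - matmul(B, A)


A = np.array([[1, 2], [3, 4]])
B = np.array([[0, 1], [1, 0]])

print(matmul(A, B), A @ B)       # the same product
print(commutator(A, B))          # nonzero, so AB != BA for these matrices
print(np.isclose(np.linalg.det(matmul(A, B)), np.linalg.det(A) * np.linalg.det(B)))
```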

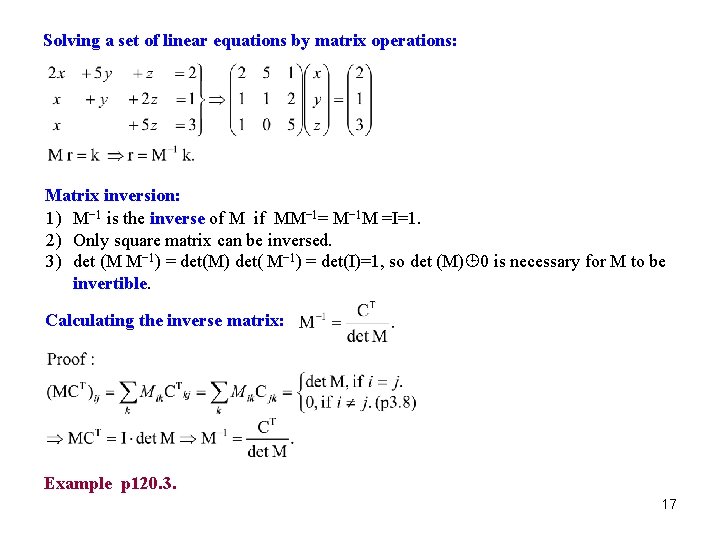

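Solving a set of linear equations by matrix operations: write the equations as $M\mathbf{r} = \mathbf{k}$; then $\mathbf{r} = M^{-1}\mathbf{k}$.

Matrix inversion:
1) $M^{-1}$ is the inverse of $M$ if $MM^{-1} = M^{-1}M = I = 1$.
2) Only square matrices can be inverted.
3) $\det(MM^{-1}) = \det(M)\det(M^{-1}) = \det(I) = 1$, so $\det M \neq 0$ is necessary for $M$ to be invertible.

Calculating the inverse matrix: $M^{-1} = \dfrac{1}{\det M}C^T$, where $C$ is the matrix of cofactors, $C_{ij} = (-1)^{i+j}$ times the minor of the element $M_{ij}$. Example p. 120.3.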
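The cofactor formula for the inverse can be sketched as follows, assuming NumPy; `inverse_by_cofactors` is a hypothetical helper written for $n \ge 2$. Like Cramer's rule it needs many determinants, so it is for small matrices and for checking hand work.

```python
import numpy as np


def inverse_by_cofactors(M):
    """M^{-1} = C^T / det M, with cofactors C_ij = (-1)^(i+j) * minor_ij (n >= 2)."""
    M = np.asarray(M, dtype=float)
    n = M.shape[0]
    if M.ndim != 2 or M.shape != (n, n):
        raise ValueError("only square matrices can be inverted")
    d = np.linalg.det(M)
    if np.isclose(d, 0.0):
        raise ValueError("det M = 0, so M is not invertible")
    C = np.empty((n, n))
    for i in range(n):
        for j in range(n):
            # Delete row i and column j to get the matrix of the minor.
            sub = np.delete(np.delete(M, i, axis=0), j, axis=1)
            C[i, j] = (-1) ** (i + j) * np.linalg.det(sub)
    return C.T / d


M = np.array([[2.0, 1.0], [1.0, -1.0]])      # arbitrary example matrix
print(inverse_by_cofactors(M))
print(np.allclose(inverse_by_cofactors(M) @ M, np.eye(2)))
```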

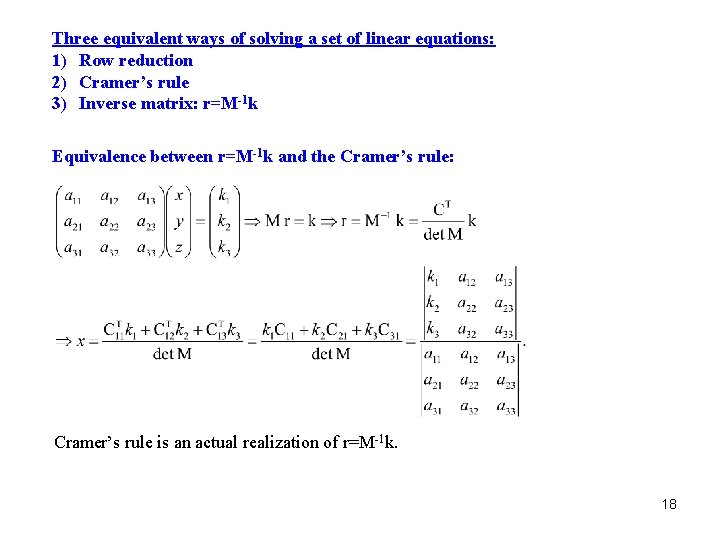

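Three equivalent ways of solving a set of linear equations $M\mathbf{r} = \mathbf{k}$, with $\mathbf{r} = (x_1, \ldots, x_n)$:
1) Row reduction
2) Cramer’s rule
3) Inverse matrix: $\mathbf{r} = M^{-1}\mathbf{k}$

Equivalence between $\mathbf{r} = M^{-1}\mathbf{k}$ and Cramer’s rule: with $C$ the matrix of cofactors of $M$, $M^{-1} = C^T/\det M$ gives $x_i = \frac{1}{\det M}\sum_j C_{ji}k_j$. The sum $\sum_j k_jC_{ji}$ is the expansion along the $i$th column of $\det M_i$, the determinant of $M$ with its $i$th column replaced by $\mathbf{k}$, so $x_i = \det M_i/\det M$. Cramer’s rule is thus an explicit realization of $\mathbf{r} = M^{-1}\mathbf{k}$.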

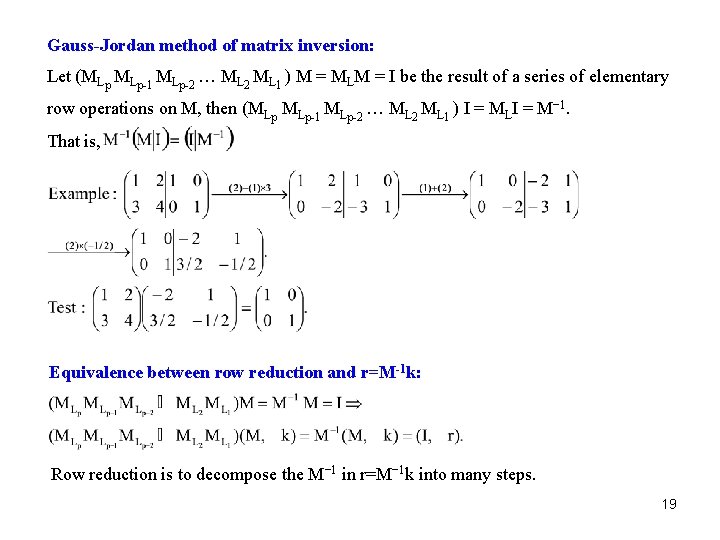

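Gauss-Jordan method of matrix inversion: let $(M_{L_p}M_{L_{p-1}}M_{L_{p-2}}\cdots M_{L_2}M_{L_1})M = M_LM = I$ be the result of a series of elementary row operations on $M$, where each $M_{L_i}$ is the matrix that performs one elementary row operation. Then $M_L = M^{-1}$, so $(M_{L_p}M_{L_{p-1}}M_{L_{p-2}}\cdots M_{L_2}M_{L_1})I = M_LI = M^{-1}$. That is, the row operations that reduce $M$ to $I$ turn $I$ into $M^{-1}$: $(M\,|\,I) \rightarrow (I\,|\,M^{-1})$.

Equivalence between row reduction and $\mathbf{r} = M^{-1}\mathbf{k}$: row reduction decomposes the $M^{-1}$ in $\mathbf{r} = M^{-1}\mathbf{k}$ into many elementary steps.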
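A minimal sketch of Gauss-Jordan inversion in plain Python: build (M | I), apply elementary row operations until the left half is I, and read $M^{-1}$ from the right half. The name `gauss_jordan_inverse` is illustrative, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction


def gauss_jordan_inverse(M):
    """Row reduce the augmented matrix (M | I) to (I | M^{-1})."""
    n = len(M)
    # Build (M | I) with exact fractions.
    A = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(M)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if A[r][col] != 0), None)
        if pivot is None:
            raise ValueError("M is singular (det M = 0)")
        A[col], A[pivot] = A[pivot], A[col]              # row switching
        p = A[col][col]
        A[col] = [x / p for x in A[col]]                 # scale the pivot to 1
        for r in range(n):                               # clear the rest of the column
            if r != col and A[r][col] != 0:
                f = A[r][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
    return [row[n:] for row in A]                        # right half is M^{-1}


print(gauss_jordan_inverse([[2, 1], [1, -1]]))
```

For M = [[2, 1], [1, -1]] this returns [[1/3, 1/3], [1/3, -2/3]] as `Fraction` objects; a singular matrix such as [[1, 2], [2, 4]] raises `ValueError` when no pivot can be found.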

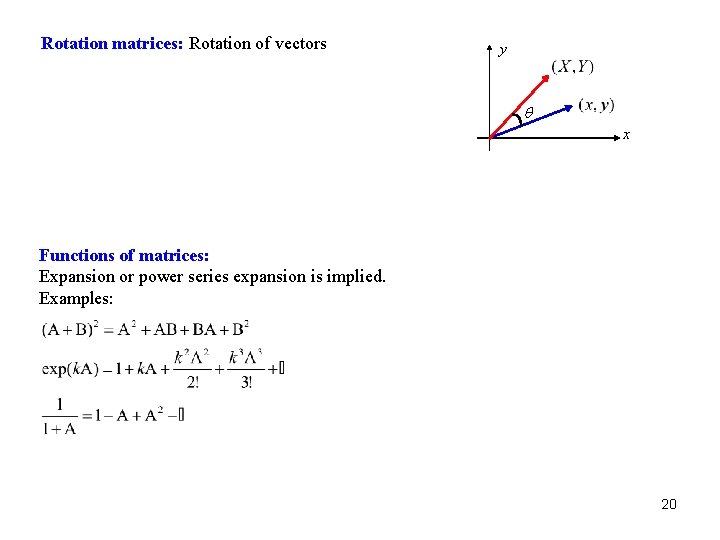

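Rotation matrices: rotating a vector $(x, y)$ counterclockwise by an angle $\theta$ about the origin gives
$$\begin{pmatrix}x'\\ y'\end{pmatrix} = \begin{pmatrix}\cos\theta & -\sin\theta\\ \sin\theta & \cos\theta\end{pmatrix}\begin{pmatrix}x\\ y\end{pmatrix}.$$

Functions of matrices: a function of a matrix is understood through its expansion, a polynomial or a power series, e.g. $e^{A} = \sum_{n=0}^{\infty} A^n/n!$.

Examples: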
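The power-series definition can be tested numerically. This NumPy sketch (helper names hypothetical) sums the truncated series for $e^{\theta K}$ with $K = \begin{pmatrix}0 & -1\\ 1 & 0\end{pmatrix}$; since $K^2 = -I$, the series splits into $I\cos\theta + K\sin\theta$, which is exactly the rotation matrix. In practice a library routine such as `scipy.linalg.expm` would be used instead of a hand-written series.

```python
import numpy as np


def rotation(theta):
    """Matrix rotating a 2-D vector counterclockwise by theta."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


def expm_series(A, terms=30):
    """e^A = sum_{n>=0} A^n / n!, truncated after `terms` terms."""
    A = np.asarray(A, dtype=float)
    result = np.eye(A.shape[0])
    term = np.eye(A.shape[0])
    for n in range(1, terms):
        term = term @ A / n           # A^n / n! from A^(n-1) / (n-1)!
        result = result + term
    return result


theta = 0.7
K = np.array([[0.0, -1.0], [1.0, 0.0]])     # K @ K = -I
print(np.allclose(expm_series(theta * K), rotation(theta)))
print(rotation(np.pi / 2) @ np.array([1.0, 0.0]))   # the x axis rotated onto the y axis
```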

Read: Chapter 3, section 6. Homework: 3.6.9, 13, 15, 18, 21. Due: March 30.

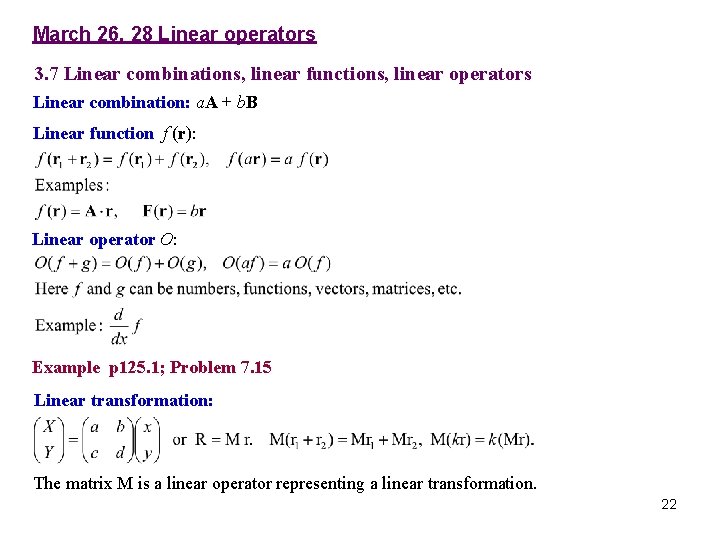

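March 26, 28 Linear operators

3.7 Linear combinations, linear functions, linear operators

Linear combination: $a\mathbf{A} + b\mathbf{B}$.

Linear function $f(\mathbf{r})$: $f(\mathbf{r}_1 + \mathbf{r}_2) = f(\mathbf{r}_1) + f(\mathbf{r}_2)$ and $f(a\mathbf{r}) = af(\mathbf{r})$.

Linear operator $O$: $O(A + B) = O(A) + O(B)$ and $O(kA) = kO(A)$.

Example p. 125.1; Problem 7.15.

Linear transformation: $\mathbf{r}' = M\mathbf{r}$. The matrix $M$ is a linear operator representing a linear transformation.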

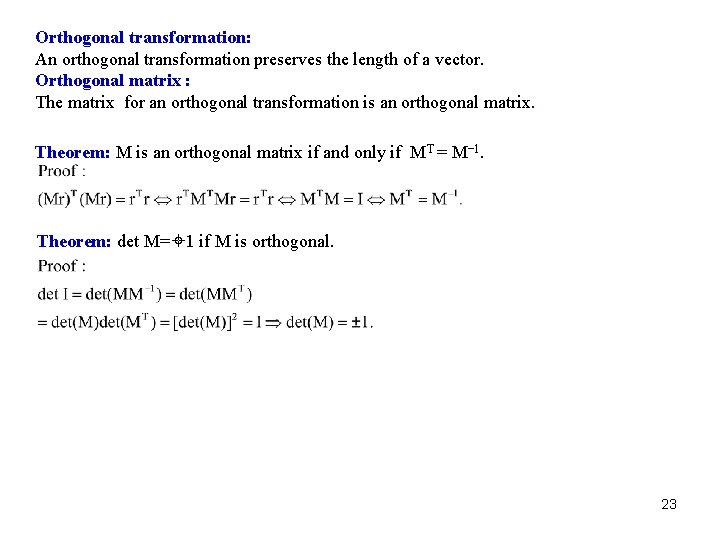

Orthogonal transformation: a linear transformation that preserves the length of every vector.

Orthogonal matrix: the matrix of an orthogonal transformation is an orthogonal matrix.

Theorem: $M$ is an orthogonal matrix if and only if $M^T = M^{-1}$.

Theorem: $\det M = \pm 1$ if $M$ is orthogonal, since $\det(M^TM) = (\det M)^2 = \det I = 1$.
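A quick numerical check of these statements, assuming NumPy: `is_orthogonal` (a hypothetical helper) tests $M^TM = I$, and a rotation is compared with a stretch, which changes lengths and is not orthogonal. The angle and vector are arbitrary.

```python
import numpy as np


def is_orthogonal(M):
    """True if M^T M = I, i.e. M^T = M^{-1}."""
    M = np.asarray(M, dtype=float)
    return M.shape[0] == M.shape[1] and np.allclose(M.T @ M, np.eye(M.shape[0]))


theta = 0.4
R = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta), np.cos(theta)]])   # rotation
S = np.array([[2.0, 0.0], [0.0, 1.0]])           # stretch along x, not orthogonal
r = np.array([3.0, 4.0])                         # |r| = 5

print(is_orthogonal(R), np.linalg.norm(R @ r), np.linalg.det(R))
print(is_orthogonal(S), np.linalg.norm(S @ r))
```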

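2×2 orthogonal matrix: for $M = \begin{pmatrix}a & b\\ c & d\end{pmatrix}$, $M^TM = I$ requires $a^2 + c^2 = 1$, $b^2 + d^2 = 1$, and $ab + cd = 0$. Writing $a = \cos\theta$, $c = \sin\theta$ leaves two possibilities:
$$M = \begin{pmatrix}\cos\theta & -\sin\theta\\ \sin\theta & \cos\theta\end{pmatrix} \quad\text{or}\quad M = \begin{pmatrix}\cos\theta & \sin\theta\\ \sin\theta & -\cos\theta\end{pmatrix}.$$

Conclusion: a 2×2 orthogonal matrix corresponds to either a rotation ($\det M = 1$) or a reflection ($\det M = -1$).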

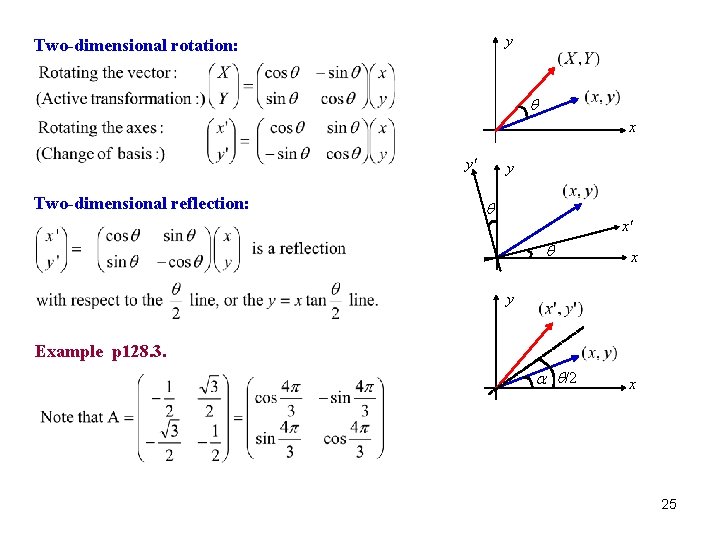

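Two-dimensional rotation: $\begin{pmatrix}\cos\theta & -\sin\theta\\ \sin\theta & \cos\theta\end{pmatrix}$ rotates a vector counterclockwise by the angle $\theta$ ($\det = 1$).

Two-dimensional reflection: $\begin{pmatrix}\cos\theta & \sin\theta\\ \sin\theta & -\cos\theta\end{pmatrix}$ reflects a vector across the line through the origin at angle $\theta/2$ to the $x$ axis ($\det = -1$).

Example p. 128.3.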
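To see the two cases in code (a NumPy sketch with hypothetical helper names and an arbitrary angle), the reflection matrix leaves the direction at angle θ/2 unchanged and reverses the perpendicular direction, and for an orthogonal 2×2 matrix the sign of the determinant separates rotations from reflections.

```python
import numpy as np


def rotation(theta):
    """Rotate a 2-D vector counterclockwise by theta (det = +1)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


def reflection(theta):
    """Reflect a 2-D vector across the line at angle theta/2 to the x axis (det = -1)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, s], [s, -c]])


def classify_2x2_orthogonal(M):
    """'rotation' if det M = +1, 'reflection' if det M = -1 (M must be orthogonal)."""
    M = np.asarray(M, dtype=float)
    if not np.allclose(M.T @ M, np.eye(2)):
        return "not orthogonal"
    return "rotation" if np.linalg.det(M) > 0 else "reflection"


theta = 1.0
F = reflection(theta)
u = np.array([np.cos(theta / 2), np.sin(theta / 2)])    # along the mirror line
v = np.array([-np.sin(theta / 2), np.cos(theta / 2)])   # perpendicular to it
print(F @ u, u)      # unchanged
print(F @ v, -v)     # reversed
print(classify_2x2_orthogonal(F), classify_2x2_orthogonal(rotation(theta)))
```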

Read: Chapter 3, section 7. Homework: 3.7.9, 15, 22, 26. Due: April 6.

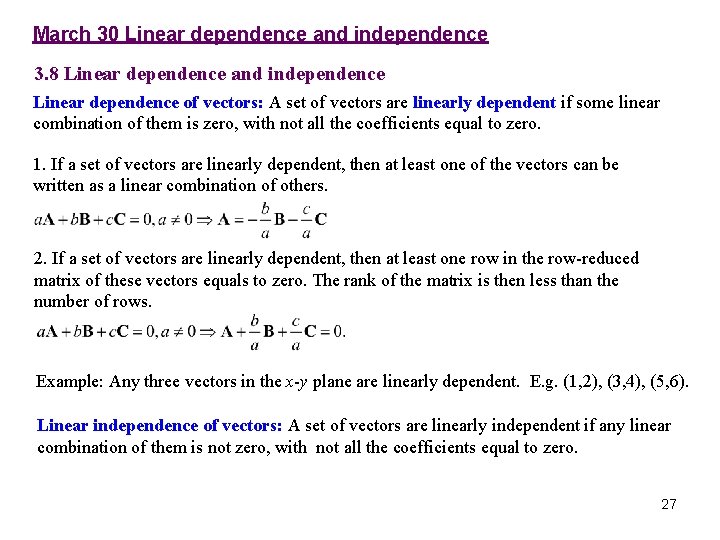

March 30 Linear dependence and independence

3.8 Linear dependence and independence

Linear dependence of vectors: a set of vectors is linearly dependent if some linear combination of them is zero, with not all the coefficients equal to zero.
1. If a set of vectors is linearly dependent, then at least one of the vectors can be written as a linear combination of the others.
2. If a set of vectors is linearly dependent, then at least one row of the row-reduced matrix whose rows are these vectors is zero. The rank of the matrix is then less than the number of rows.

Example: any three vectors in the $x$-$y$ plane are linearly dependent, e.g. $(1, 2)$, $(3, 4)$, $(5, 6)$, for which $(1, 2) - 2(3, 4) + (5, 6) = 0$.

Linear independence of vectors: a set of vectors is linearly independent if the only linear combination of them that equals zero is the one with all coefficients equal to zero.
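A sketch of the rank test for linear dependence, assuming NumPy (`linearly_dependent` is a hypothetical helper). It also solves for the coefficients that express (5, 6) in terms of (1, 2) and (3, 4).

```python
import numpy as np


def linearly_dependent(vectors):
    """Vectors (taken as rows) are dependent iff the rank is less than their number."""
    V = np.asarray(vectors, dtype=float)
    return np.linalg.matrix_rank(V) < V.shape[0]


vecs = [(1, 2), (3, 4), (5, 6)]
print(linearly_dependent(vecs))                 # three vectors in the x-y plane
print(linearly_dependent([(1, 2), (3, 4)]))

# Coefficients with a*(1, 2) + b*(3, 4) = (5, 6): the matrix columns are (1, 2), (3, 4)
a, b = np.linalg.solve(np.array([[1.0, 3.0], [2.0, 4.0]]), np.array([5.0, 6.0]))
print(a, b)
```

The solution a = -1, b = 2 gives (5, 6) = -(1, 2) + 2(3, 4).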

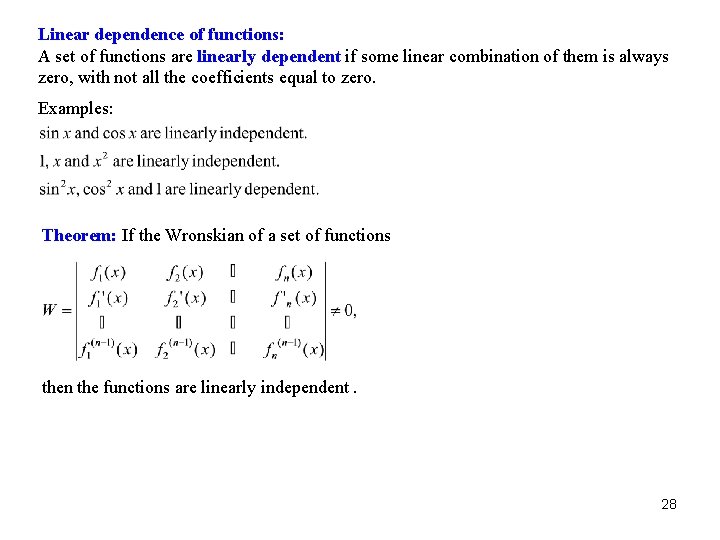

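Linear dependence of functions: a set of functions is linearly dependent if some linear combination of them is identically zero (for all $x$ in the interval considered), with not all the coefficients equal to zero.

Examples:

Theorem: if the Wronskian of a set of functions $f_1, \ldots, f_n$,
$$W = \begin{vmatrix} f_1 & f_2 & \cdots & f_n\\ f_1' & f_2' & \cdots & f_n'\\ \vdots & \vdots & & \vdots\\ f_1^{(n-1)} & f_2^{(n-1)} & \cdots & f_n^{(n-1)}\end{vmatrix},$$
is not identically zero, then the functions are linearly independent.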

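Examples p. 133.1, 2.

Note: $W \equiv 0$ does not always imply that the functions are linearly dependent, e.g. $x^2$ and $x|x|$ on an interval containing $x = 0$. However, when the functions are analytic (given locally by convergent power series), which is the case we meet most often, $W \equiv 0$ implies linear dependence (infinite differentiability alone is not enough).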
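The $x^2$ and $x|x|$ counterexample can be checked in plain Python; the helper names are illustrative. Algebraically, the derivative of $x|x|$ is $2|x|$ everywhere (including $x = 0$), so $W = x^2\cdot 2|x| - x|x|\cdot 2x = 0$ identically; the sample points below only illustrate this. Yet requiring $a x^2 + b x|x| = 0$ at $x = 1$ and $x = -1$ gives a 2×2 system with nonzero determinant, which forces $a = b = 0$.

```python
def f1(x):
    return x * x            # x^2


def df1(x):
    return 2 * x


def f2(x):
    return x * abs(x)       # x|x|


def df2(x):
    return 2 * abs(x)       # derivative of x|x|, valid at x = 0 as well


def wronskian(x):
    """W = det [[f1, f2], [f1', f2']] = f1 f2' - f2 f1'."""
    return f1(x) * df2(x) - f2(x) * df1(x)


samples = [-2.0, -0.5, 0.0, 0.5, 2.0]
print([wronskian(x) for x in samples])      # W vanishes at every sample point

# a*f1 + b*f2 = 0 at x = 1 and x = -1 is the system [[1, 1], [1, -1]] (a, b) = 0;
# a nonzero determinant means only a = b = 0 works, so the functions are independent.
det_2x2 = f1(1) * f2(-1) - f2(1) * f1(-1)
print(det_2x2)
```

These functions escape the analytic case because $x|x|$ has no second derivative at $x = 0$.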

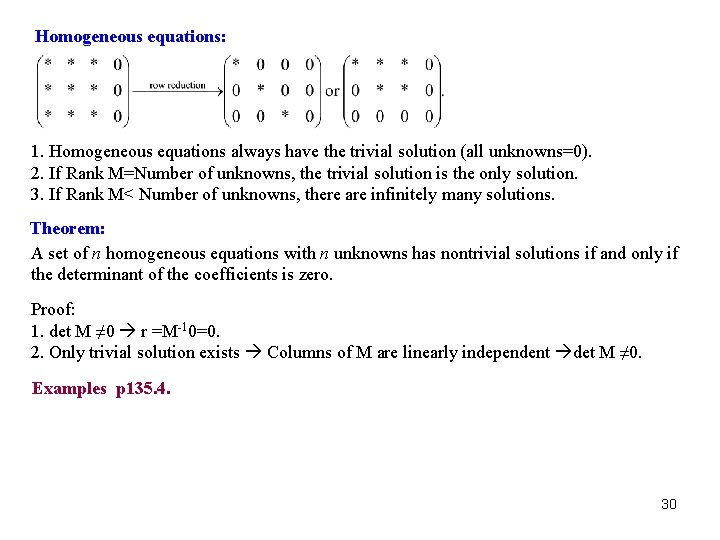

Homogeneous equations:
1. Homogeneous equations always have the trivial solution (all unknowns = 0).
2. If Rank $M$ = number of unknowns, the trivial solution is the only solution.
3. If Rank $M$ < number of unknowns, there are infinitely many solutions.

Theorem: a set of $n$ homogeneous equations in $n$ unknowns, $M\mathbf{r} = 0$, has nontrivial solutions if and only if the determinant of the coefficients is zero.

Proof:
1. $\det M \neq 0 \Rightarrow M^{-1}$ exists $\Rightarrow \mathbf{r} = M^{-1}0 = 0$, so only the trivial solution exists.
2. Only the trivial solution exists $\Rightarrow$ the columns of $M$ are linearly independent (since $M\mathbf{r}$ is the combination of the columns with coefficients $x_j$) $\Rightarrow \det M \neq 0$.

Examples p. 135.4.
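Numerically, a nontrivial solution of a square homogeneous system can be found from the singular value decomposition; this is a swapped-in technique, not the row reduction used in the slides. A NumPy sketch with a hypothetical helper `nontrivial_solution`: a (numerically) zero smallest singular value corresponds to $\det M = 0$, and its right-singular vector is a solution. The example matrices are arbitrary.

```python
import numpy as np


def nontrivial_solution(M, tol=1e-10):
    """For a square matrix M, return a nonzero r with M r = 0, or None.

    The right-singular vector belonging to the smallest singular value spans
    the null space when that singular value is zero (i.e. det M = 0).
    """
    M = np.asarray(M, dtype=float)
    if M.shape[0] != M.shape[1]:
        raise ValueError("this sketch handles n equations in n unknowns only")
    _, s, Vt = np.linalg.svd(M)
    if s[-1] <= tol * max(s[0], 1.0):
        return Vt[-1]          # unit vector with M r ≈ 0 (det M = 0)
    return None                # det M != 0: only the trivial solution r = 0


M = np.array([[1.0, 2.0], [2.0, 4.0]])         # det M = 0
r = nontrivial_solution(M)
print(r, M @ r)
print(nontrivial_solution([[1.0, 2.0], [3.0, 4.0]]))   # det = -2, prints None
```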

Read: Chapter 3, section 8. Homework: 3.8.7, 10, 13, 17, 24. Due: April 6.

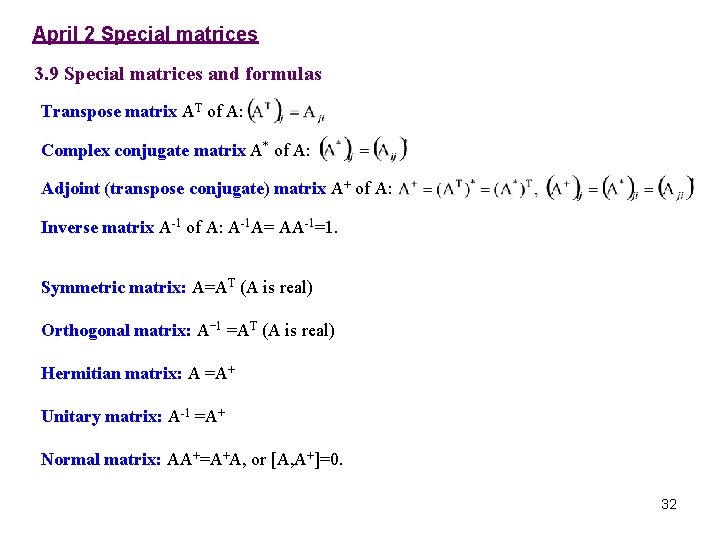

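April 2 Special matrices

3.9 Special matrices and formulas

Transpose matrix $A^T$ of $A$: $(A^T)_{ij} = A_{ji}$.
Complex conjugate matrix $A^*$ of $A$: $(A^*)_{ij} = (A_{ij})^*$.
Adjoint (transpose conjugate) matrix $A^\dagger$ of $A$: $A^\dagger = (A^*)^T$, i.e. $(A^\dagger)_{ij} = A_{ji}^*$.
Inverse matrix $A^{-1}$ of $A$: $A^{-1}A = AA^{-1} = 1$.

Symmetric matrix: $A = A^T$ ($A$ real).
Orthogonal matrix: $A^{-1} = A^T$ ($A$ real).
Hermitian matrix: $A = A^\dagger$.
Unitary matrix: $A^{-1} = A^\dagger$.
Normal matrix: $AA^\dagger = A^\dagger A$, or $[A, A^\dagger] = 0$.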
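The definitions can be written as NumPy predicates; this is a sketch with hypothetical helper names. `np.allclose` allows for rounding, "real" is tested as a zero imaginary part, and the Pauli-type matrix used here is just a convenient example of a Hermitian, unitary matrix.

```python
import numpy as np


def dagger(A):
    """Adjoint (transpose conjugate) A^+ of A."""
    return np.conj(np.asarray(A)).T


def is_real(A):
    return np.allclose(np.imag(A), 0)


def is_symmetric(A):
    A = np.asarray(A)
    return is_real(A) and np.allclose(A, A.T)


def is_orthogonal(A):
    A = np.asarray(A)
    return is_real(A) and np.allclose(A @ A.T, np.eye(len(A)))


def is_hermitian(A):
    return np.allclose(A, dagger(A))


def is_unitary(A):
    A = np.asarray(A)
    return np.allclose(A @ dagger(A), np.eye(len(A)))


def is_normal(A):
    A = np.asarray(A)
    return np.allclose(A @ dagger(A), dagger(A) @ A)   # [A, A^+] = 0


sigma_y = np.array([[0, -1j], [1j, 0]])     # example Hermitian and unitary matrix
print(is_hermitian(sigma_y), is_unitary(sigma_y), is_symmetric(sigma_y))
```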

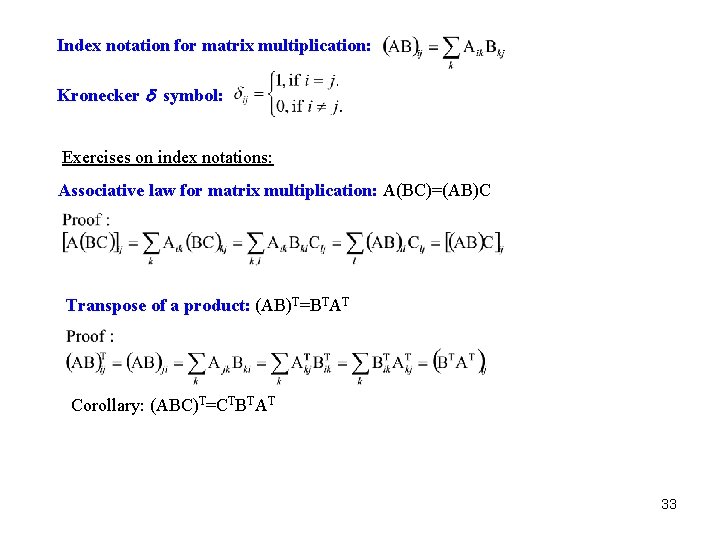

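Index notation for matrix multiplication: $(AB)_{ij} = \sum_k A_{ik}B_{kj}$.

Kronecker $\delta$ symbol: $\delta_{ij} = 1$ if $i = j$ and $\delta_{ij} = 0$ if $i \neq j$; the unit matrix is $I_{ij} = \delta_{ij}$.

Exercises on index notation:
Associative law for matrix multiplication: $A(BC) = (AB)C$.
Transpose of a product: $(AB)^T = B^TA^T$. Corollary: $(ABC)^T = C^TB^TA^T$.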
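Index notation maps directly onto `numpy.einsum`, whose subscript string names the indices and sums over indices that are repeated and absent from the output. A sketch with random 3×3 matrices (the seed and sizes are arbitrary) that checks the Kronecker delta, the associative law and the transpose rule.

```python
import numpy as np

rng = np.random.default_rng(0)
A, B, C = (rng.standard_normal((3, 3)) for _ in range(3))
delta = np.eye(3)                                       # Kronecker delta_ij

AB = np.einsum("ik,kj->ij", A, B)                       # (AB)_ij = sum_k A_ik B_kj
print(np.allclose(AB, A @ B))
print(np.allclose(np.einsum("ik,kj->ij", A, delta), A))  # sum_k A_ik delta_kj = A_ij

# Associative law: sum_k sum_l A_ik B_kl C_lj, in either grouping
ABC = np.einsum("ik,kl,lj->ij", A, B, C)
print(np.allclose(ABC, A @ (B @ C)), np.allclose(ABC, (A @ B) @ C))

# Transpose of a product: (AB)^T_ij = (AB)_ji = sum_k (B^T)_ik (A^T)_kj
print(np.allclose(AB.T, B.T @ A.T))
print(np.allclose(ABC.T, C.T @ B.T @ A.T))
```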

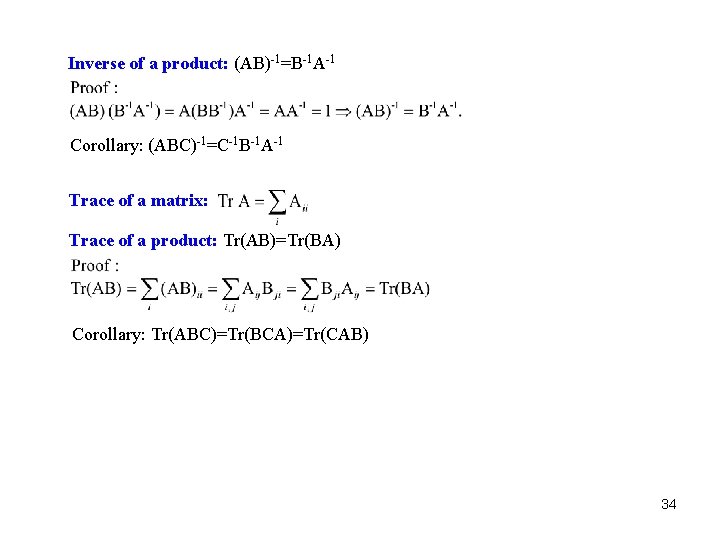

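Inverse of a product: $(AB)^{-1} = B^{-1}A^{-1}$. Corollary: $(ABC)^{-1} = C^{-1}B^{-1}A^{-1}$.

Trace of a matrix: $\operatorname{Tr}A = \sum_i A_{ii}$, the sum of the diagonal elements.

Trace of a product: $\operatorname{Tr}(AB) = \operatorname{Tr}(BA)$. Corollary: $\operatorname{Tr}(ABC) = \operatorname{Tr}(BCA) = \operatorname{Tr}(CAB)$.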
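A similar NumPy spot-check of the inverse and trace rules, using random matrices (invertible with probability 1; the seed is arbitrary). Only cyclic permutations preserve the trace: Tr(ACB) is in general different from Tr(ABC).

```python
import numpy as np

rng = np.random.default_rng(1)
A, B, C = (rng.standard_normal((3, 3)) for _ in range(3))
inv, tr = np.linalg.inv, np.trace

print(np.allclose(inv(A @ B), inv(B) @ inv(A)))                  # (AB)^-1 = B^-1 A^-1
print(np.allclose(inv(A @ B @ C), inv(C) @ inv(B) @ inv(A)))
print(np.isclose(tr(A @ B), tr(B @ A)))                          # Tr(AB) = Tr(BA)
print(np.isclose(tr(A @ B @ C), tr(B @ C @ A)),
      np.isclose(tr(A @ B @ C), tr(C @ A @ B)))                  # cyclic permutations
```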

Read: Chapter 3, section 9. Homework: 3.9.2, 4, 5, 23, 24. Due: April 13.

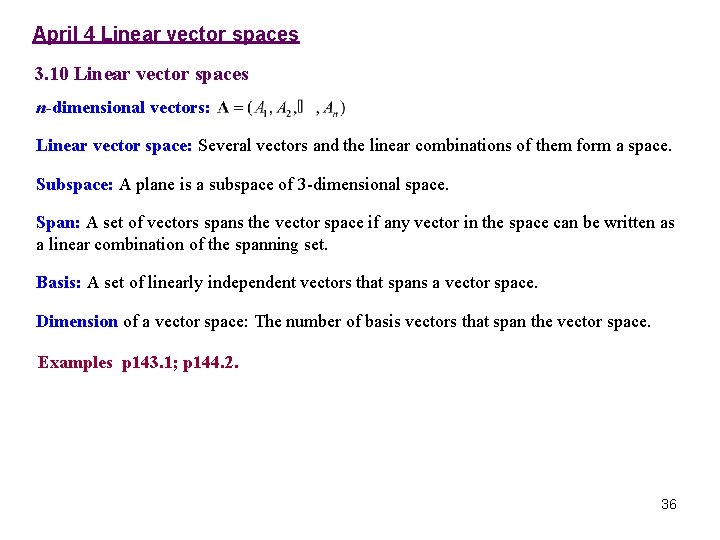

April 4 Linear vector spaces

3.10 Linear vector spaces

$n$-dimensional vectors: $\mathbf{r} = (x_1, x_2, \ldots, x_n)$.

Linear vector space: a set of vectors closed under addition and multiplication by scalars; for example, several vectors together with all their linear combinations form a space.

Subspace: a plane through the origin is a subspace of 3-dimensional space.

Span: a set of vectors spans the vector space if every vector in the space can be written as a linear combination of the spanning set.

Basis: a set of linearly independent vectors that spans a vector space.

Dimension of a vector space: the number of basis vectors that span the vector space.

Examples p. 143.1; p. 144.2.

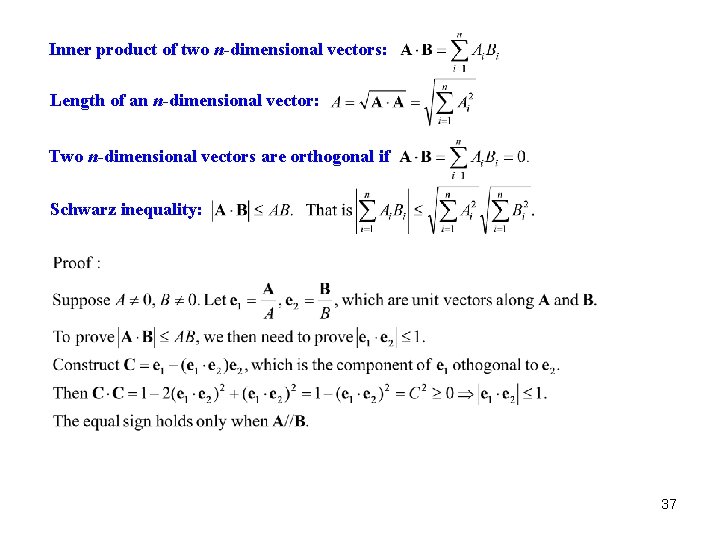

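Inner product of two $n$-dimensional vectors: $\mathbf{A}\cdot\mathbf{B} = \sum_{i=1}^{n} A_iB_i$.

Length of an $n$-dimensional vector: $|\mathbf{A}| = \sqrt{\mathbf{A}\cdot\mathbf{A}} = \sqrt{\sum_i A_i^2}$.

Two $n$-dimensional vectors are orthogonal if $\mathbf{A}\cdot\mathbf{B} = 0$.

Schwarz inequality: $|\mathbf{A}\cdot\mathbf{B}| \le |\mathbf{A}|\,|\mathbf{B}|$.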
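A NumPy sketch of these definitions for n = 4, with made-up example vectors; `inner` and `length` are hypothetical helper names.

```python
import numpy as np


def inner(A, B):
    """A . B = sum_i A_i B_i for real n-dimensional vectors."""
    return float(np.dot(A, B))


def length(A):
    """|A| = sqrt(A . A)."""
    return float(np.sqrt(inner(A, A)))


A = np.array([1.0, 2.0, 2.0, 4.0])      # |A| = 5
B = np.array([2.0, -1.0, 3.0, 0.0])
C = np.array([2.0, -1.0, 0.0, 0.0])     # A . C = 0, so A and C are orthogonal

print(inner(A, B), length(A) * length(B))   # Schwarz: |A . B| <= |A| |B|
print(inner(A, C))
```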

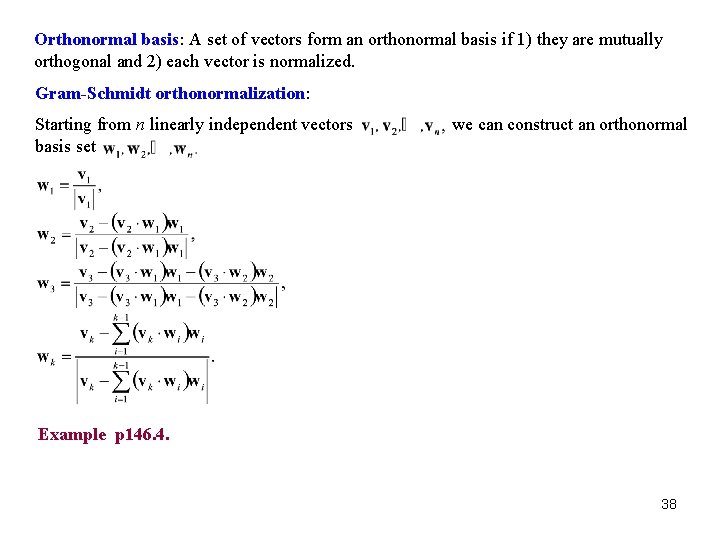

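Orthonormal basis: a basis is orthonormal if 1) its vectors are mutually orthogonal and 2) each vector is normalized (has unit length).

Gram-Schmidt orthonormalization: starting from a basis of $n$ linearly independent vectors $\mathbf{V}_1, \ldots, \mathbf{V}_n$, we can construct an orthonormal basis $\mathbf{e}_1, \ldots, \mathbf{e}_n$:
$\mathbf{e}_1 = \mathbf{V}_1/|\mathbf{V}_1|$, and for $k = 2, \ldots, n$, $\mathbf{W}_k = \mathbf{V}_k - \sum_{j<k}(\mathbf{e}_j\cdot\mathbf{V}_k)\,\mathbf{e}_j$ and $\mathbf{e}_k = \mathbf{W}_k/|\mathbf{W}_k|$.

Example p. 146.4.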
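A minimal sketch of classical Gram-Schmidt, assuming NumPy; `gram_schmidt` is a hypothetical name. Each vector has its components along the unit vectors already found subtracted, and the remainder is normalized. In floating point the modified variant (projecting the running remainder instead of the original vector) is usually more stable.

```python
import numpy as np


def gram_schmidt(vectors, tol=1e-12):
    """Orthonormalize linearly independent vectors (classical Gram-Schmidt)."""
    basis = []
    for v in np.asarray(vectors, dtype=float):
        # Remove the components of v along the unit vectors found so far.
        w = v - sum(np.dot(e, v) * e for e in basis)
        norm = np.linalg.norm(w)
        if norm < tol:
            raise ValueError("the vectors are linearly dependent")
        basis.append(w / norm)
    return np.array(basis)


E = gram_schmidt([[1, 1, 0], [1, 0, 1], [0, 1, 1]])   # arbitrary independent vectors
print(np.round(E @ E.T, 12))      # identity matrix: the rows are orthonormal
```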

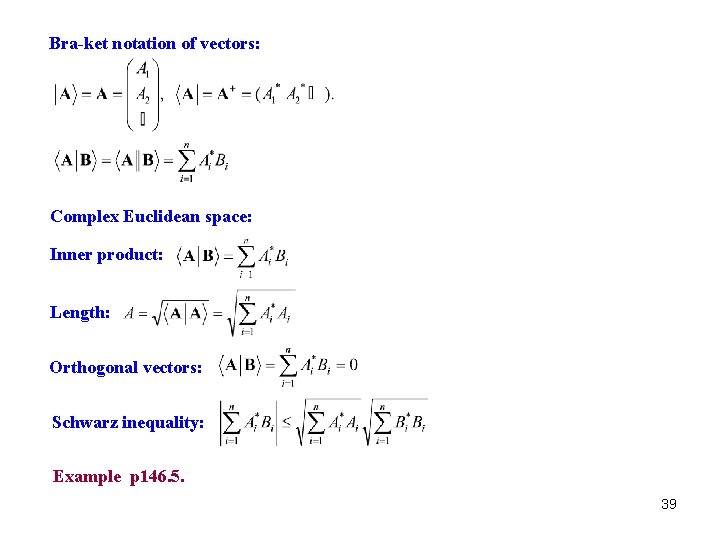

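Bra-ket notation of vectors: a ket $|A\rangle$ is a column vector; the bra $\langle A|$ is its transpose conjugate (a row vector).

Complex Euclidean space: $n$-dimensional vectors with complex components.

Inner product: $\langle A|B\rangle = \sum_i A_i^*B_i$, so $\langle B|A\rangle = \langle A|B\rangle^*$.

Length: $\|A\| = \sqrt{\langle A|A\rangle}$.

Orthogonal vectors: $\langle A|B\rangle = 0$.

Schwarz inequality: $|\langle A|B\rangle| \le \sqrt{\langle A|A\rangle\langle B|B\rangle}$.

Example p. 146.5.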
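For complex vectors the bra must be conjugated. NumPy's `np.vdot` conjugates its first argument, so it computes $\langle A|B\rangle$ directly; the helper names and example vectors below are made up for illustration.

```python
import numpy as np


def braket(A, B):
    """<A|B> = sum_i conj(A_i) B_i; np.vdot conjugates its first argument."""
    return np.vdot(A, B)


def norm(A):
    """||A|| = sqrt(<A|A>); <A|A> is real and non-negative."""
    return float(np.sqrt(braket(A, A).real))


A = np.array([1 + 1j, 2j])
B = np.array([3, 1 - 1j])

print(braket(A, B), braket(B, A))              # complex conjugates of each other
print(abs(braket(A, B)) <= norm(A) * norm(B))  # Schwarz inequality
```

Here $\langle A|B\rangle = 1 - 5i$, $\langle A|A\rangle = 6$ and $\langle B|B\rangle = 11$.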

Read: Chapter 3, section 10. Homework: 3.10.1, 10. Due: April 13.

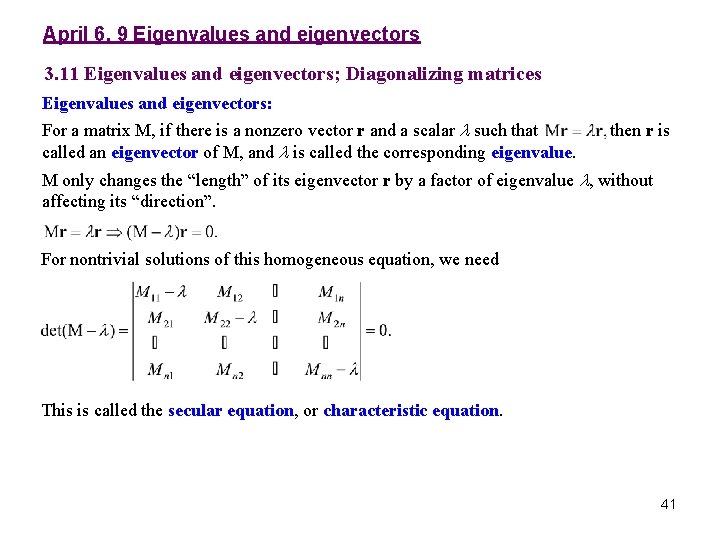

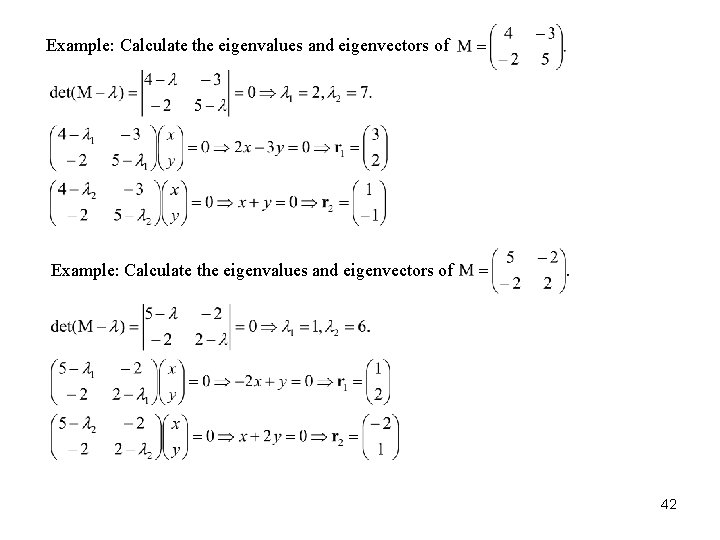

April 6, 9 Eigenvalues and eigenvectors

3.11 Eigenvalues and eigenvectors; Diagonalizing matrices

Eigenvalues and eigenvectors: for a matrix $M$, if there is a nonzero vector $\mathbf{r}$ and a scalar $\lambda$ such that
$$M\mathbf{r} = \lambda\mathbf{r},$$
then $\mathbf{r}$ is called an eigenvector of $M$, and $\lambda$ is called the corresponding eigenvalue. $M$ only changes the “length” of its eigenvector $\mathbf{r}$ by the factor $\lambda$, without affecting its “direction” (a negative $\lambda$ reverses it).

Writing the eigenvalue equation as $(M - \lambda I)\mathbf{r} = 0$: for nontrivial solutions of this homogeneous equation, we need
$$\det(M - \lambda I) = 0.$$
This is called the secular equation, or characteristic equation.

Example: Calculate the eigenvalues and eigenvectors of:
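As a sketch (NumPy assumed), the eigenvalues of a 2×2 matrix can be found by solving the secular equation, which for 2×2 reads $\lambda^2 - (\operatorname{Tr}M)\lambda + \det M = 0$, and each eigenvector as a nonzero solution of $(M - \lambda I)\mathbf{r} = 0$ (here via the SVD, a numerical stand-in for solving by hand). The matrix below is a hypothetical example, not necessarily the one on the slide.

```python
import numpy as np

M = np.array([[5.0, -2.0],
              [-2.0, 2.0]])           # hypothetical example matrix

# Secular equation for a 2x2 matrix: lambda^2 - (Tr M) lambda + det M = 0
coeffs = [1.0, -np.trace(M), np.linalg.det(M)]
lams = np.sort(np.roots(coeffs).real)    # the roots are real for this symmetric M


def eigenvector(M, lam):
    """A unit vector spanning the solutions of (M - lam I) r = 0 (via SVD)."""
    _, _, Vt = np.linalg.svd(M - lam * np.eye(len(M)))
    return Vt[-1]


for lam in lams:
    r = eigenvector(M, lam)
    print(lam, r, np.allclose(M @ r, lam * r))
```

For this matrix $\operatorname{Tr}M = 7$ and $\det M = 6$, so $\lambda = 1, 6$, with eigenvectors along $(1, 2)$ and $(2, -1)$.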

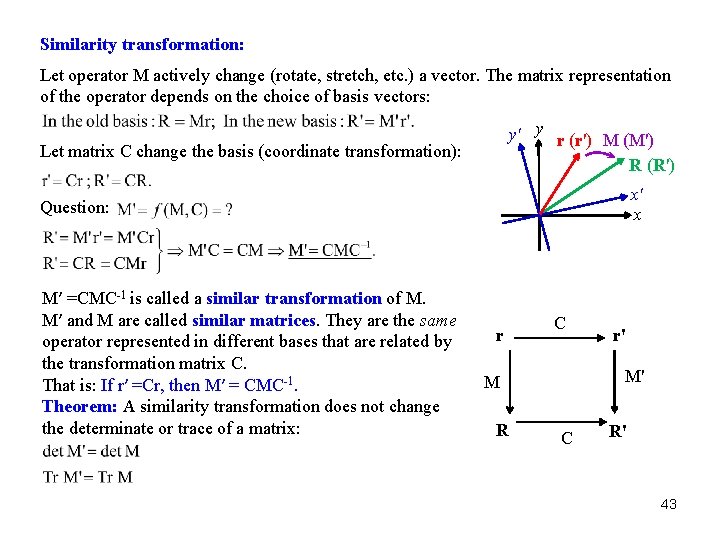

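Similarity transformation: let the operator $M$ actively change (rotate, stretch, etc.) a vector, $\mathbf{R} = M\mathbf{r}$. The matrix representation of the operator depends on the choice of basis vectors.

(Figure: in the $(x, y)$ basis $M$ maps $\mathbf{r}$ to $\mathbf{R}$; in the $(x', y')$ basis $M'$ maps $\mathbf{r}'$ to $\mathbf{R}'$; $C$ maps each unprimed vector to its primed counterpart.)

Let the matrix $C$ change the basis (coordinate transformation): $\mathbf{r}' = C\mathbf{r}$, $\mathbf{R}' = C\mathbf{R}$.

Question: what is $M'$, the matrix with $\mathbf{R}' = M'\mathbf{r}'$? Since $\mathbf{R}' = C\mathbf{R} = CM\mathbf{r} = CMC^{-1}\mathbf{r}'$, we get $M' = CMC^{-1}$.

$M' = CMC^{-1}$ is called a similarity transformation of $M$, and $M'$ and $M$ are called similar matrices. They are the same operator represented in different bases that are related by the transformation matrix $C$. That is: if $\mathbf{r}' = C\mathbf{r}$, then $M' = CMC^{-1}$.

Theorem: a similarity transformation does not change the determinant or trace of a matrix: $\det M' = \det C\,\det M\,\det C^{-1} = \det M$, and $\operatorname{Tr}M' = \operatorname{Tr}(CMC^{-1}) = \operatorname{Tr}(MC^{-1}C) = \operatorname{Tr}M$.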
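A numerical sketch, assuming NumPy and random matrices (C is invertible with probability 1; the seed is arbitrary), checking that $M' = CMC^{-1}$ maps $\mathbf{r}' = C\mathbf{r}$ to $\mathbf{R}' = C\mathbf{R}$ and that the determinant and trace are unchanged.

```python
import numpy as np

rng = np.random.default_rng(0)
M = rng.standard_normal((3, 3))          # the operator in the old basis
C = rng.standard_normal((3, 3))          # change of basis, invertible with probability 1
M_prime = C @ M @ np.linalg.inv(C)       # the same operator in the new basis

r = rng.standard_normal(3)
R = M @ r                                # R = M r in the old basis
r_prime, R_prime = C @ r, C @ R          # r' = C r, R' = C R

print(np.allclose(M_prime @ r_prime, R_prime))                  # R' = M' r'
print(np.isclose(np.linalg.det(M_prime), np.linalg.det(M)))
print(np.isclose(np.trace(M_prime), np.trace(M)))
```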

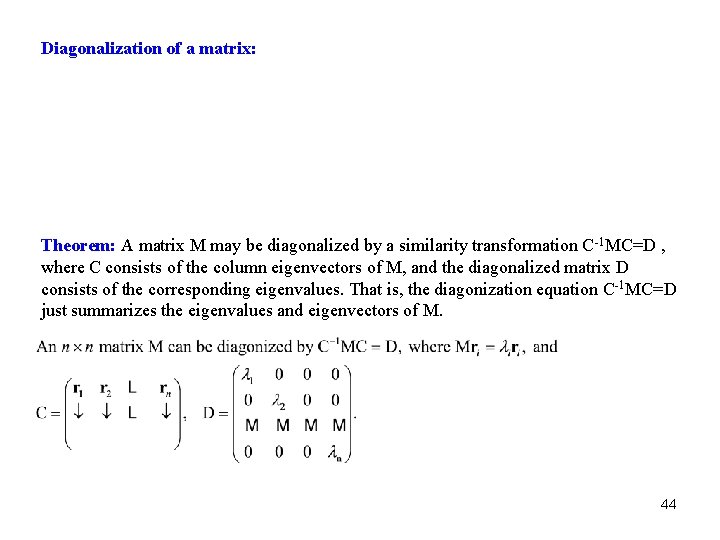

Diagonalization of a matrix:

Theorem: an $n\times n$ matrix $M$ with $n$ linearly independent eigenvectors may be diagonalized by the similarity transformation $C^{-1}MC = D$, where the columns of $C$ are the eigenvectors of $M$ and the diagonal matrix $D$ has the corresponding eigenvalues on its diagonal. That is, the diagonalization equation $C^{-1}MC = D$, or equivalently $MC = CD$, just summarizes the eigenvalues and eigenvectors of $M$.
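`numpy.linalg.eig` returns the eigenvalues and a matrix whose columns are eigenvectors, which plays the role of C in the theorem. This sketch uses a hypothetical symmetric 2×2 example and checks that $C^{-1}MC$ is diagonal with the eigenvalues on the diagonal. A matrix without $n$ independent eigenvectors, such as [[1, 1], [0, 1]], cannot be diagonalized this way.

```python
import numpy as np

M = np.array([[5.0, -2.0],
              [-2.0, 2.0]])                   # hypothetical example matrix

eigenvalues, C = np.linalg.eig(M)             # columns of C are eigenvectors of M
D = np.linalg.inv(C) @ M @ C                  # C^{-1} M C

print(np.round(D, 12))                        # diagonal, eigenvalues on the diagonal
print(np.allclose(D, np.diag(eigenvalues)))
print(np.allclose(M @ C, C @ np.diag(eigenvalues)))   # M C = C D
```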


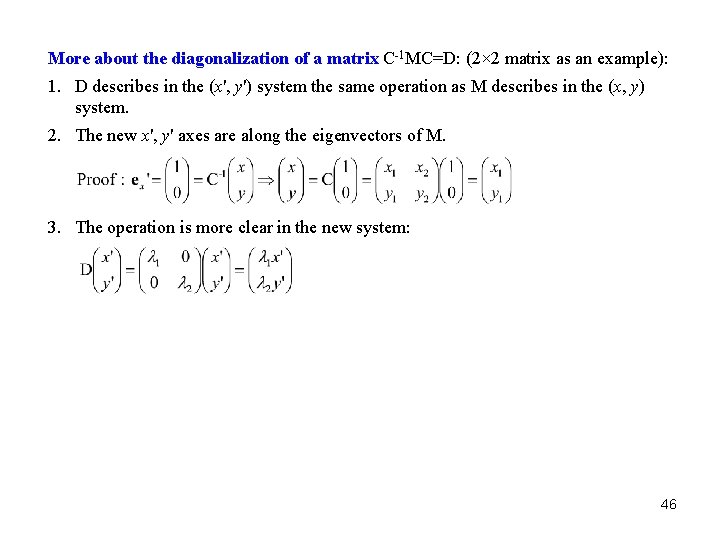

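More about the diagonalization of a matrix, $C^{-1}MC = D$ (a 2×2 matrix as an example):
1. $D$ describes in the $(x', y')$ system the same operation as $M$ describes in the $(x, y)$ system.
2. The new $x'$, $y'$ axes are along the eigenvectors of $M$.
3. The operation is clearer in the new system: $D = \begin{pmatrix}\lambda_1 & 0\\ 0 & \lambda_2\end{pmatrix}$ simply stretches by the factor $\lambda_1$ along $x'$ and by the factor $\lambda_2$ along $y'$.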

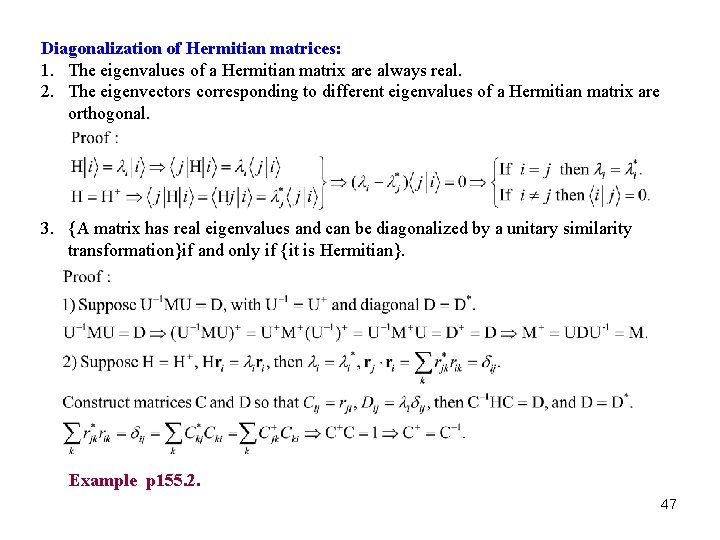

Diagonalization of Hermitian matrices:
1. The eigenvalues of a Hermitian matrix are always real.
2. The eigenvectors corresponding to different eigenvalues of a Hermitian matrix are orthogonal.
3. {A matrix has real eigenvalues and can be diagonalized by a unitary similarity transformation} if and only if {it is Hermitian}.

Example p. 155.2.

Corollary: {A real matrix has real eigenvalues and can be diagonalized by an orthogonal similarity transformation} if and only if {it is symmetric}.
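For Hermitian matrices, `numpy.linalg.eigh` returns real eigenvalues in ascending order and a unitary matrix whose columns are orthonormal eigenvectors. This sketch uses made-up example matrices, one complex Hermitian and one real symmetric, to check both the theorem and the corollary.

```python
import numpy as np

H = np.array([[2.0, 1 - 1j],
              [1 + 1j, 3.0]])                  # hypothetical Hermitian matrix, H = H^+
w, U = np.linalg.eigh(H)                       # real eigenvalues, ascending order

print(w)                                                   # real
print(np.allclose(U.conj().T @ U, np.eye(2)))              # U is unitary
print(np.allclose(U.conj().T @ H @ U, np.diag(w)))         # U^+ H U = D

S = np.array([[5.0, -2.0],
              [-2.0, 2.0]])                    # hypothetical real symmetric matrix
ws, O = np.linalg.eigh(S)
print(np.isrealobj(O), np.allclose(O.T @ O, np.eye(2)))    # O is real orthogonal
print(np.allclose(O.T @ S @ O, np.diag(ws)))
```

Since $U^{-1} = U^\dagger$ for a unitary $U$, the check $U^\dagger HU = D$ is the diagonalization $C^{-1}HC = D$ with $C = U$.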

Read: Chapter 3, section 11. Homework: 3.11.3, 14, 19, 32, 33, 42. Due: April 20.
