Chapter 12 Exact Discrete Optimization Methods

- Slides: 68

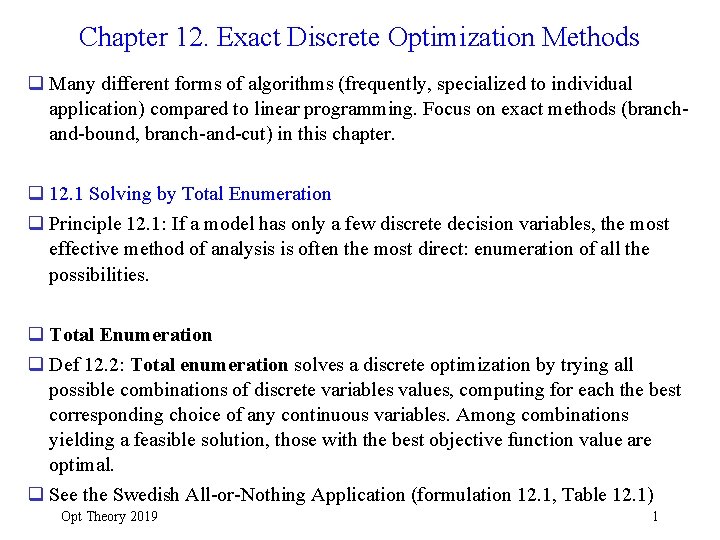

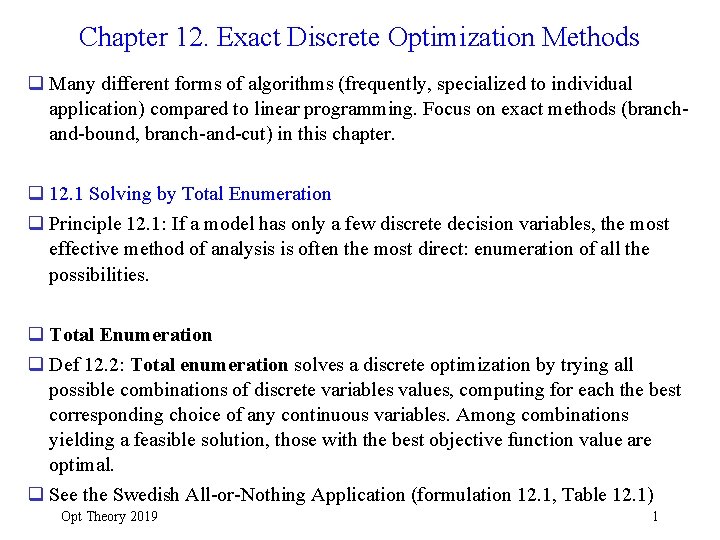

Many different forms of algorithms exist for discrete optimization, frequently specialized to an individual application, in contrast to linear programming. This chapter focuses on exact methods (branch-and-bound, branch-and-cut).

12.1 Solving by Total Enumeration

- Principle 12.1: If a model has only a few discrete decision variables, the most effective method of analysis is often the most direct: enumeration of all the possibilities.
- Def 12.2 (Total Enumeration): Total enumeration solves a discrete optimization model by trying all possible combinations of discrete variable values, computing for each the best corresponding choice of any continuous variables. Among combinations yielding a feasible solution, those with the best objective function value are optimal.
- See the Swedish Steel All-or-Nothing Application (formulation 12.1, Table 12.1).

Opt Theory 2019

TABLE 12.1 Enumeration of the Swedish Steel All-or-Nothing (Optimization in Operations Research, 2e, Ronald L. Rardin. Copyright © 2017, 1998 by Pearson Education, Inc. All Rights Reserved)


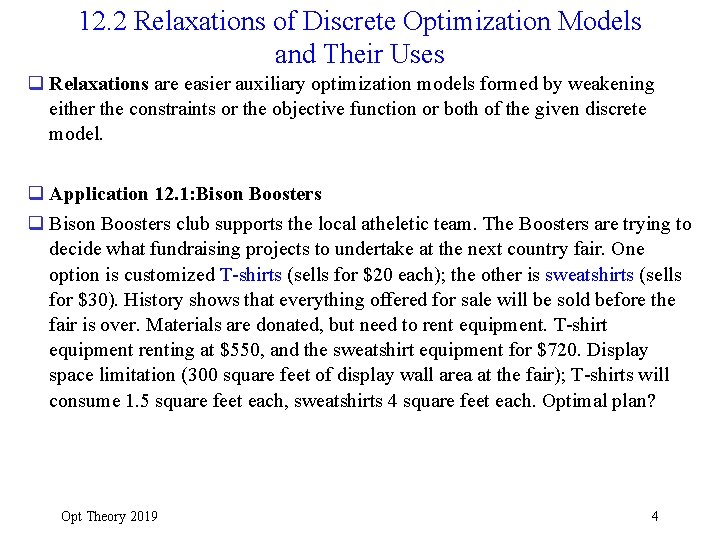

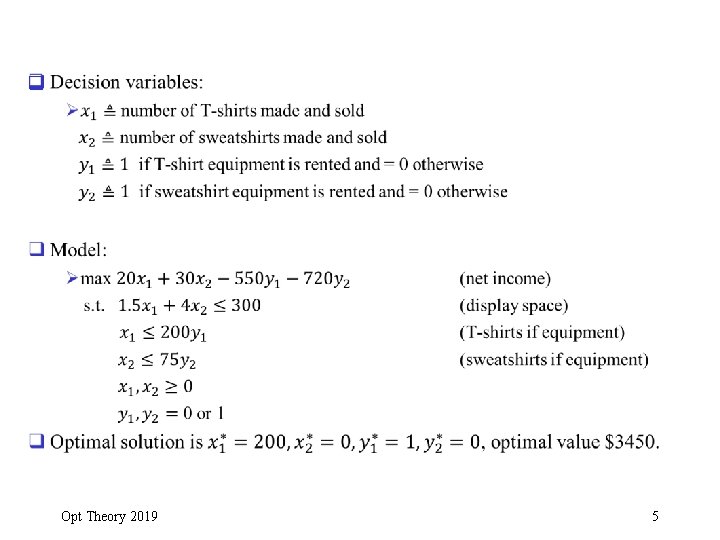

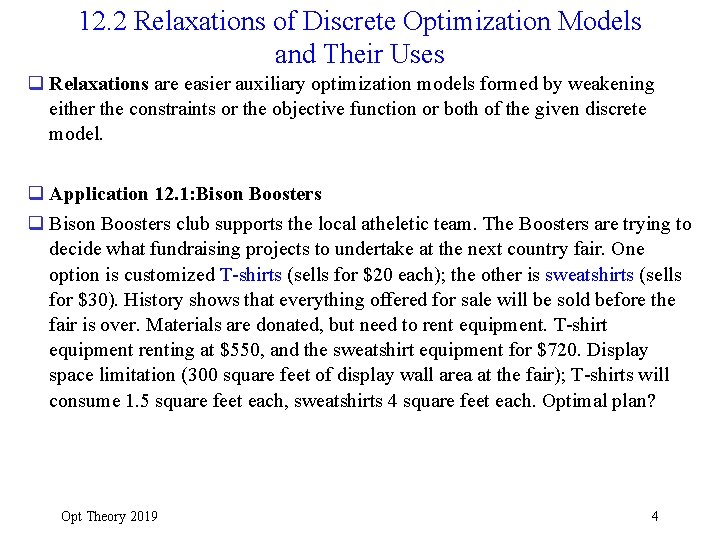

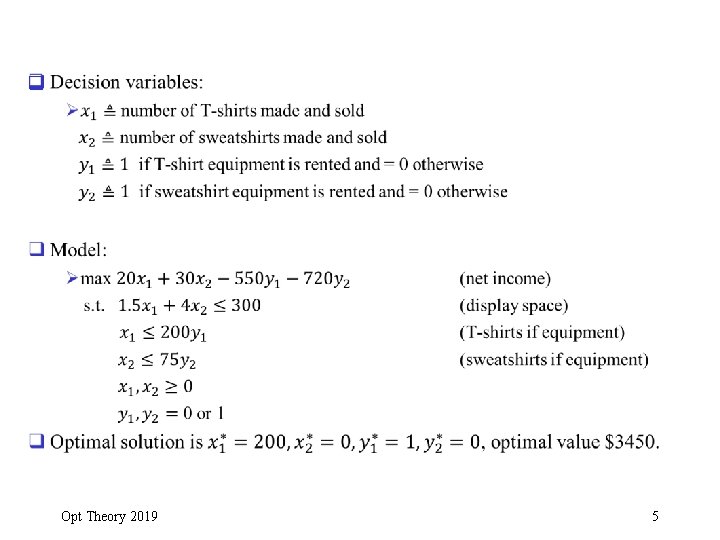

12.2 Relaxations of Discrete Optimization Models and Their Uses

- Relaxations are easier auxiliary optimization models formed by weakening the constraints, the objective function, or both, of the given discrete model.
- Application 12.1: Bison Boosters
  - The Bison Boosters club supports the local athletic team. The Boosters are trying to decide what fundraising projects to undertake at the next country fair.
  - One option is customized T-shirts (selling for $20 each); the other is sweatshirts (selling for $30 each). History shows that everything offered for sale will be sold before the fair is over.
  - Materials are donated, but equipment must be rented: $550 for the T-shirt equipment and $720 for the sweatshirt equipment.
  - Display space is limited to 300 square feet of display wall area at the fair. T-shirts consume 1.5 square feet each, sweatshirts 4 square feet each.
  - What is the optimal plan?
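The Bison Boosters data is small enough to solve by total enumeration in the sense of Def 12.2. The sketch below is one possible reading of the description, not the formulation shown on the slides: it assumes continuous quantities `x[j]`, binary rental decisions `y[j]`, profit equal to sales revenue minus rental cost, and that an item can be sold only if its equipment is rented. For each of the four rental combinations, the best quantities are found greedily by revenue per square foot, which is sufficient here because a single wall-area constraint remains.

```python
from itertools import product

PRICE = (20.0, 30.0)    # selling price: T-shirt, sweatshirt
RENT = (550.0, 720.0)   # equipment rental cost
AREA = (1.5, 4.0)       # square feet of wall per item
WALL = 300.0            # available display wall area

def best_quantities(y):
    """For fixed rental decisions y, fill the wall greedily by revenue per sq ft."""
    remaining = WALL
    x = [0.0, 0.0]
    order = sorted(range(2), key=lambda j: PRICE[j] / AREA[j], reverse=True)
    for j in order:
        if y[j]:
            x[j] = remaining / AREA[j]
            remaining -= x[j] * AREA[j]
    return x

def enumerate_bison():
    """Try every rental combination; keep the one with the best profit."""
    best = None
    for y in product((0, 1), repeat=2):
        x = best_quantities(y)
        profit = sum(PRICE[j] * x[j] - RENT[j] * y[j] for j in range(2))
        if best is None or profit > best[0]:
            best = (profit, y, tuple(x))
    return best

if __name__ == "__main__":
    print(enumerate_bison())
```

Under these assumptions the enumeration compares four cases: rent nothing, sweatshirts only, T-shirts only, and both.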


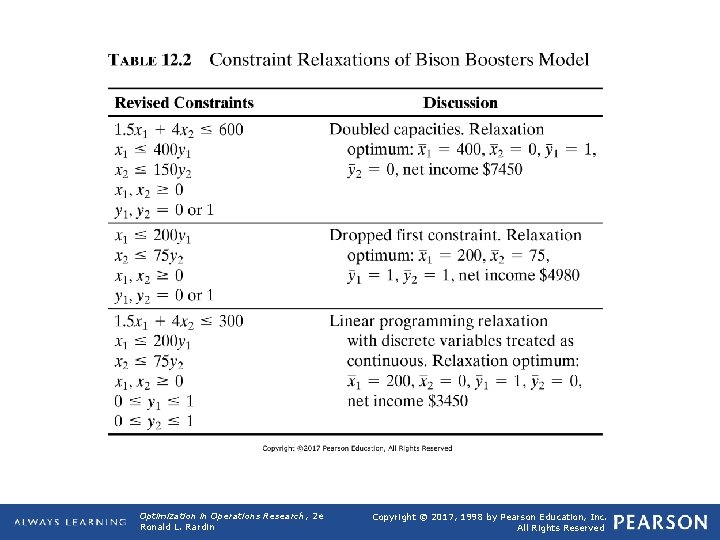

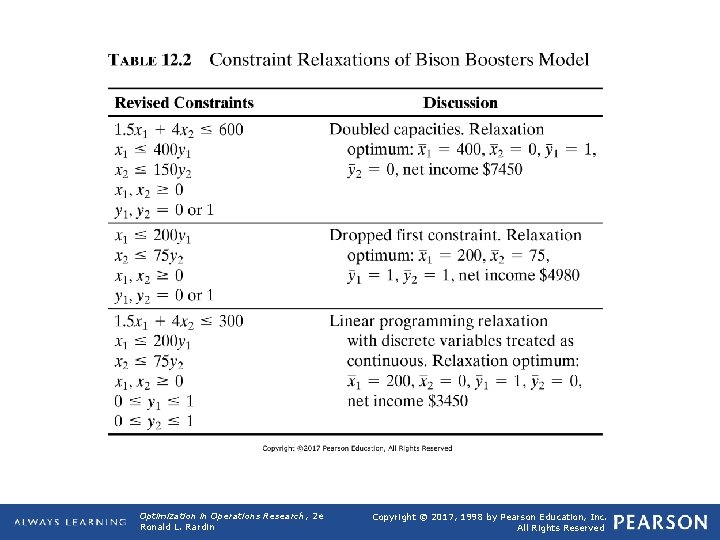

TABLE 12.2 Constraint Relaxations of Bison Boosters Model


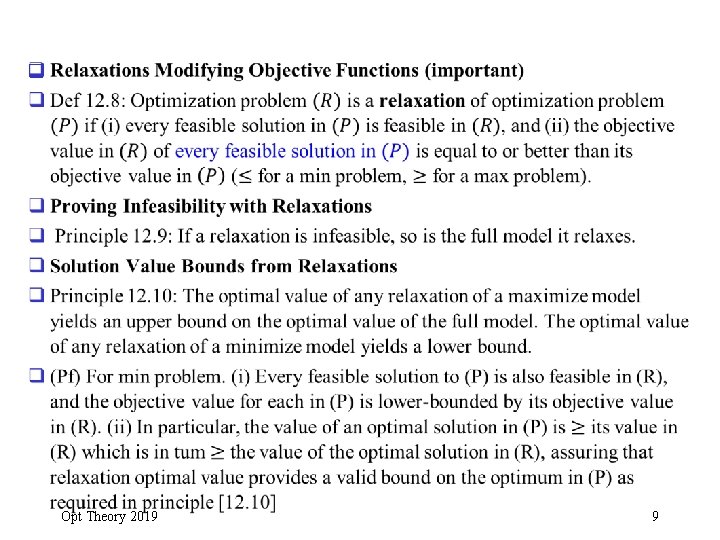

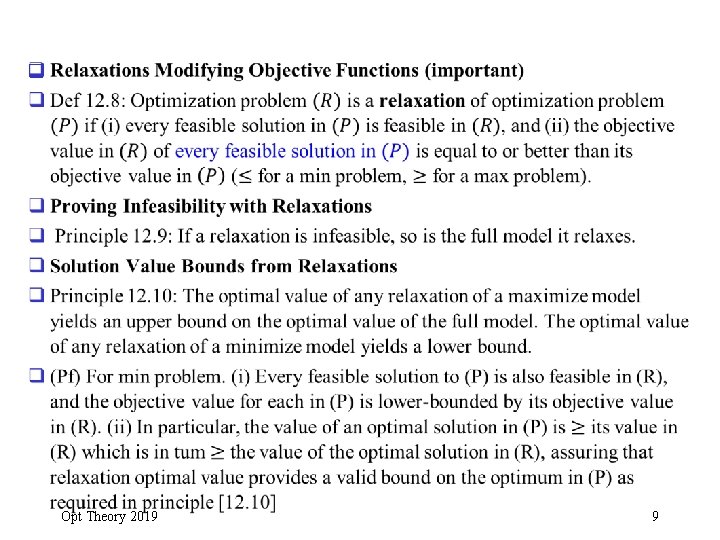


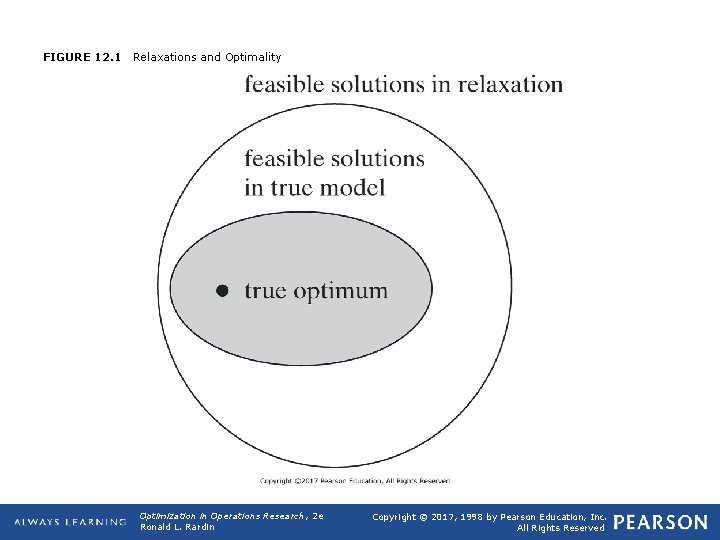

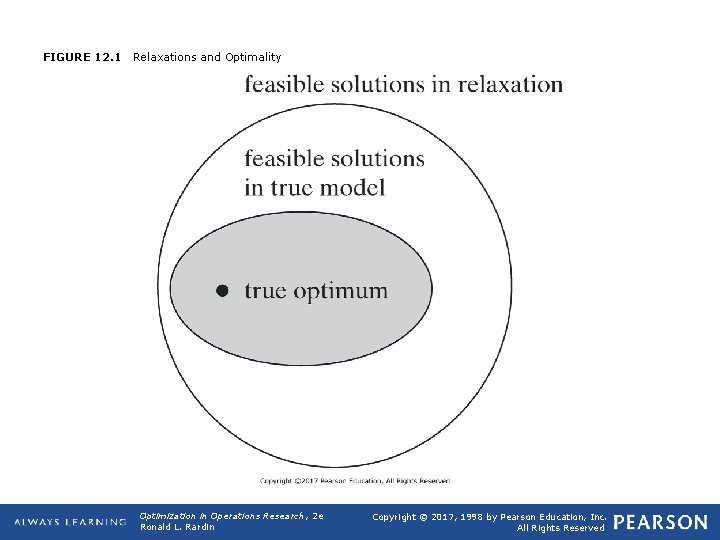

FIGURE 12.1 Relaxations and Optimality

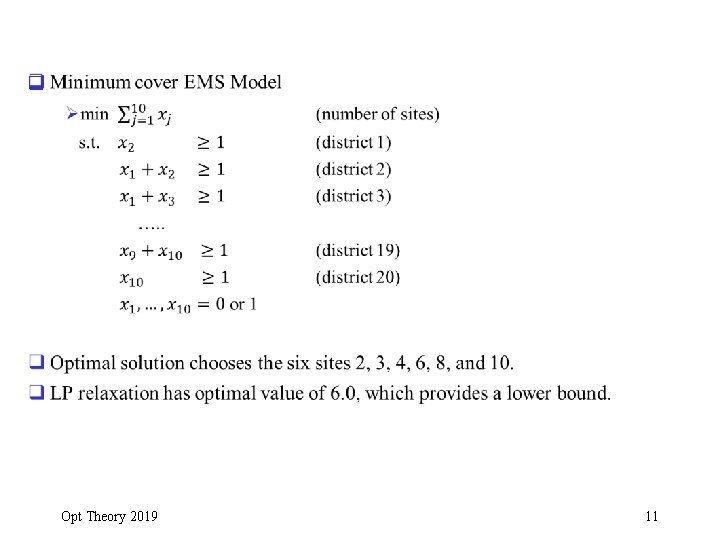

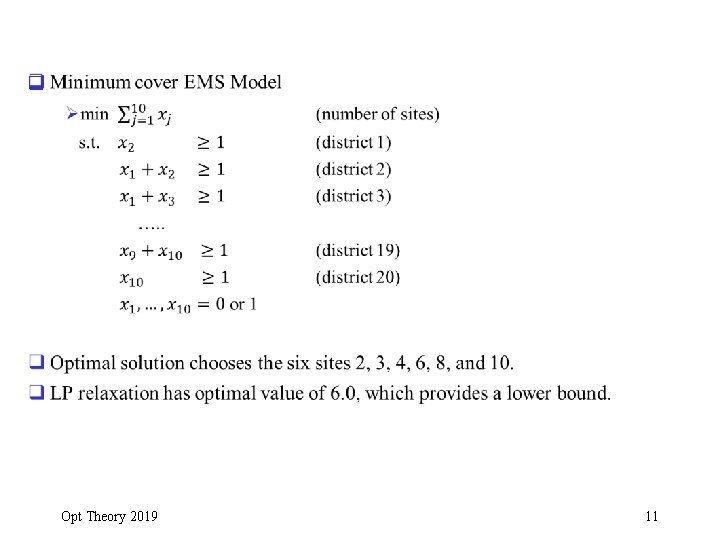


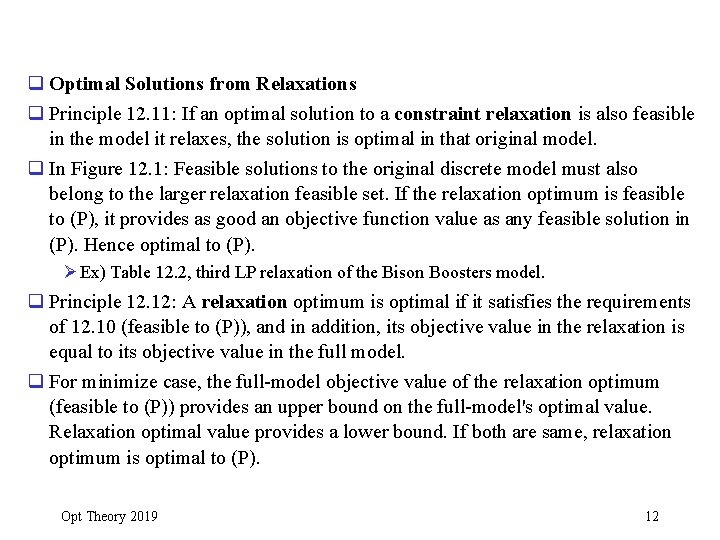

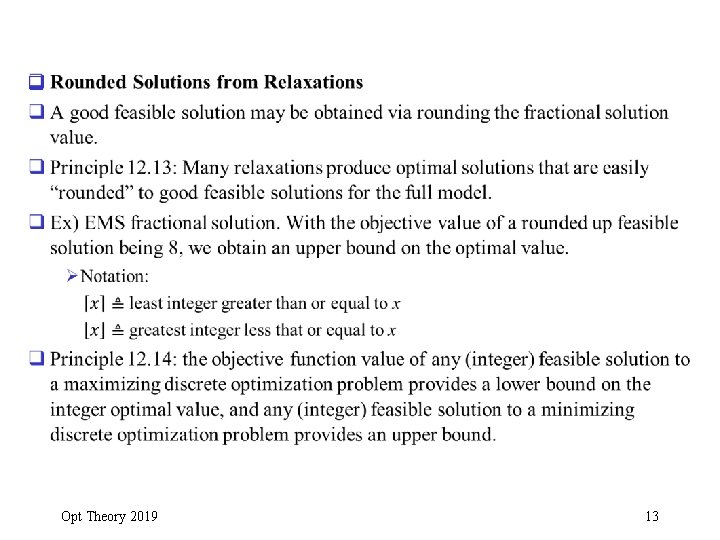

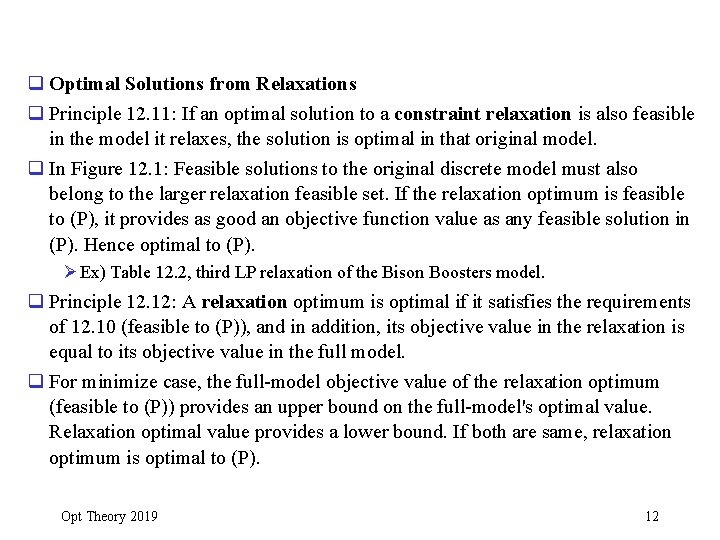

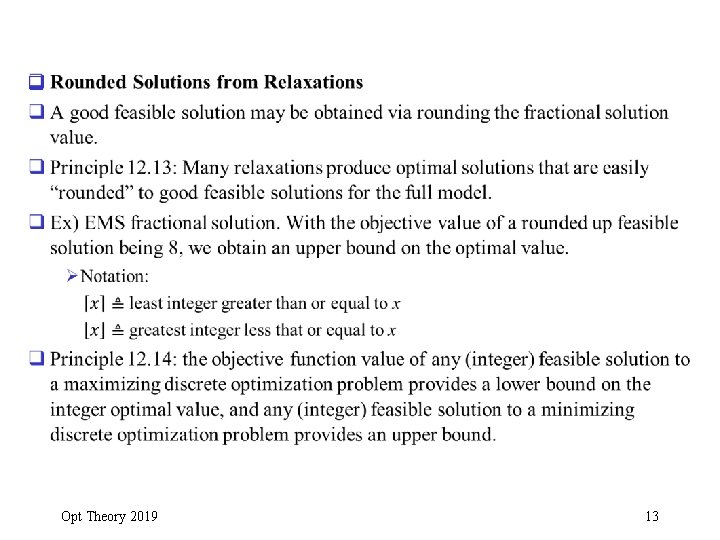

Optimal Solutions from Relaxations

- Principle 12.11: If an optimal solution to a constraint relaxation is also feasible in the model it relaxes, the solution is optimal in that original model.
  - In Figure 12.1, feasible solutions to the original discrete model (P) must also belong to the larger relaxation feasible set. If the relaxation optimum is feasible in (P), its objective function value is at least as good as that of any feasible solution of (P). Hence it is optimal in (P).
  - Ex) Table 12.2, third LP relaxation of the Bison Boosters model.
- Principle 12.12: A relaxation optimum is optimal if it satisfies the requirements of 12.10 (feasible to (P)) and, in addition, its objective value in the relaxation equals its objective value in the full model.
  - In the minimize case, the full-model objective value of the relaxation optimum (feasible to (P)) provides an upper bound on the full model's optimal value, while the relaxation's optimal value provides a lower bound. If the two are equal, the relaxation optimum is optimal in (P).
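The bounding argument behind Principle 12.12 can be written out for the minimize case. The notation is an assumption introduced here for illustration: $S$ and $T$ are the feasible sets of (P) and of its relaxation (R), $c$ and $\tilde c$ are their objective functions, $x^{*}$ is optimal in (P), $\tilde x$ is optimal in (R), and $v(P)$ is the optimal value of (P).

```latex
\begin{aligned}
&(P):\ \min\{\, c(x) : x \in S \,\}, \qquad
 (R):\ \min\{\, \tilde c(x) : x \in T \,\}, \qquad
 S \subseteq T,\quad \tilde c(x) \le c(x)\ \text{for all } x \in S, \\[4pt]
&\tilde c(\tilde x) \;\le\; \tilde c(x^{*}) \;\le\; c(x^{*}) \;=\; v(P) \;\le\; c(\tilde x)
 \qquad \text{whenever } \tilde x \in S, \\[4pt]
&\tilde c(\tilde x) = c(\tilde x) \;\Longrightarrow\; c(\tilde x) = v(P),
 \ \text{so } \tilde x \text{ is optimal in } (P).
\end{aligned}
```

For a pure constraint relaxation $\tilde c = c$, so the last condition holds automatically and the chain reduces to Principle 12.11.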


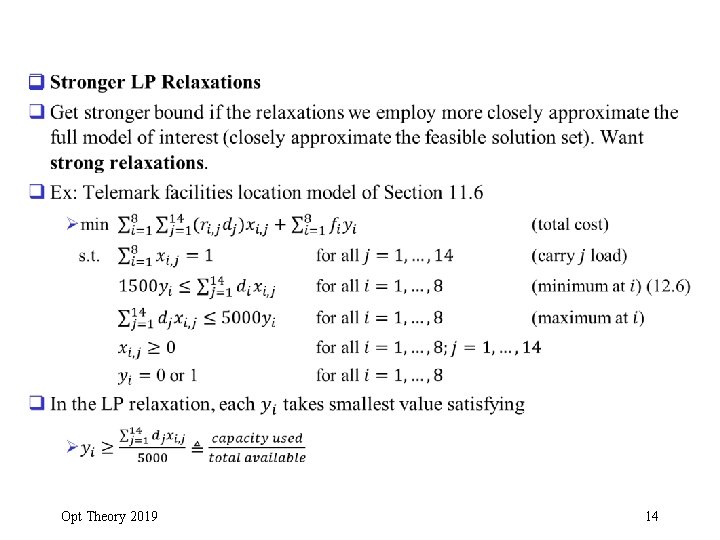

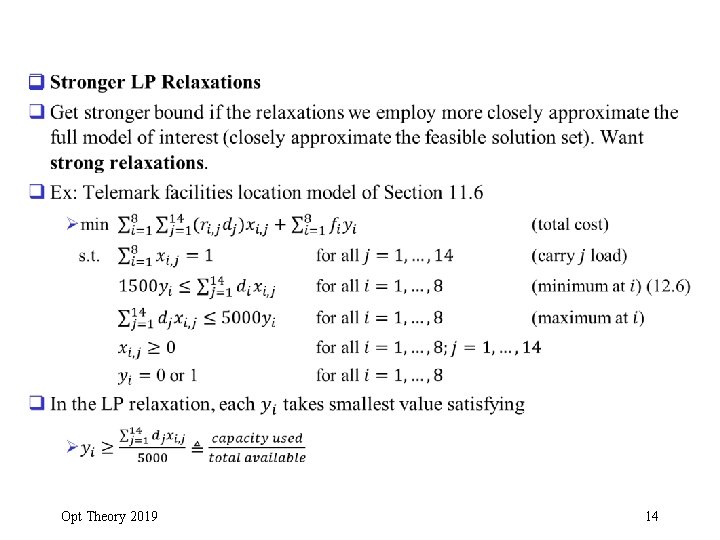


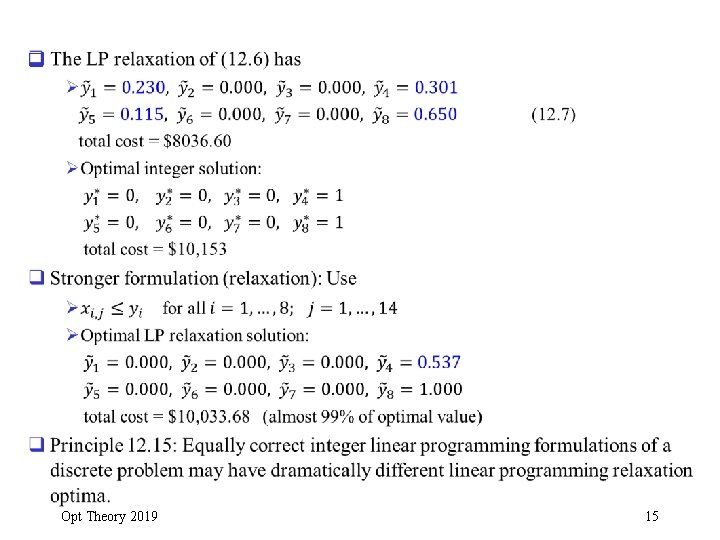

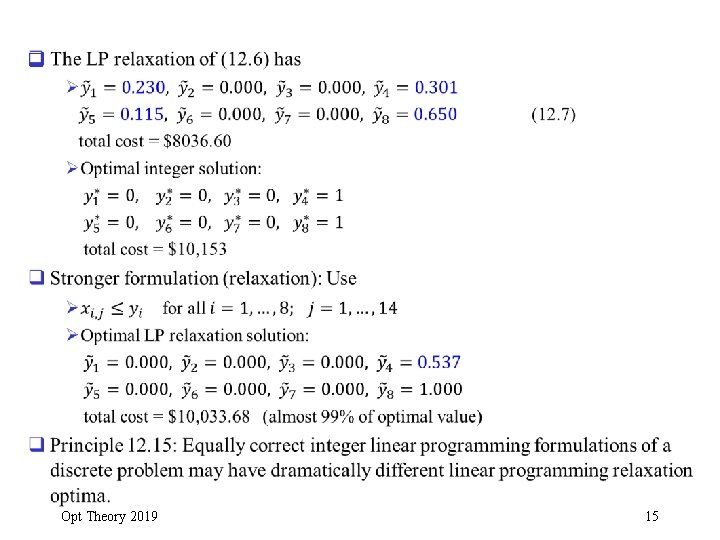


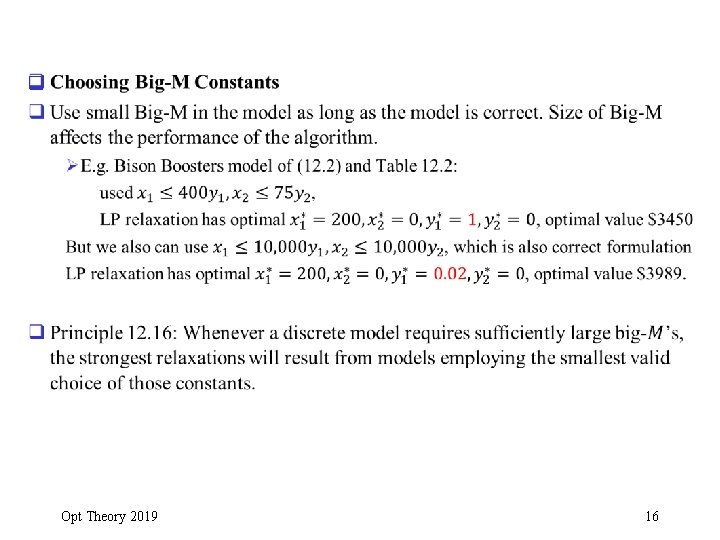

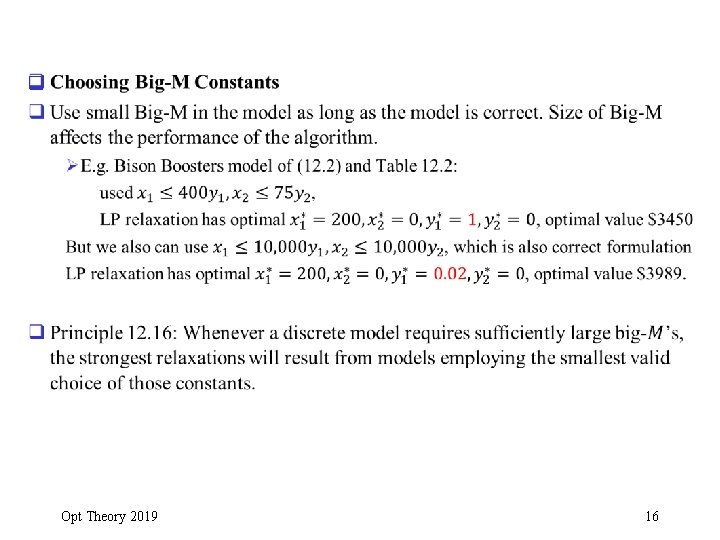


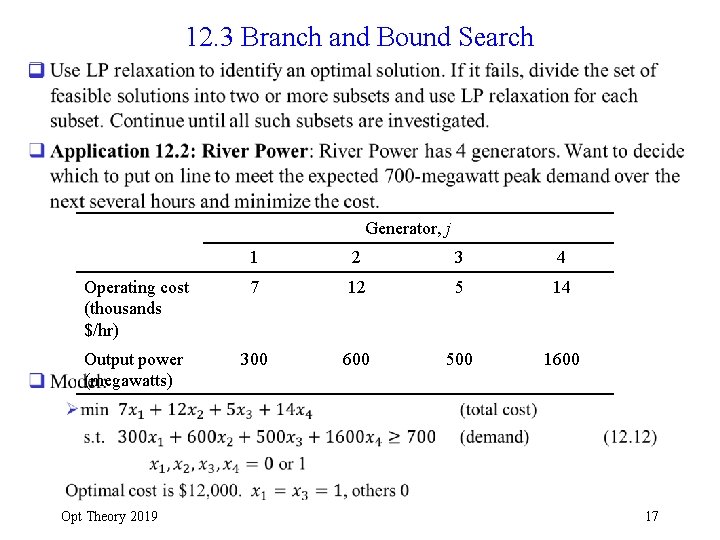

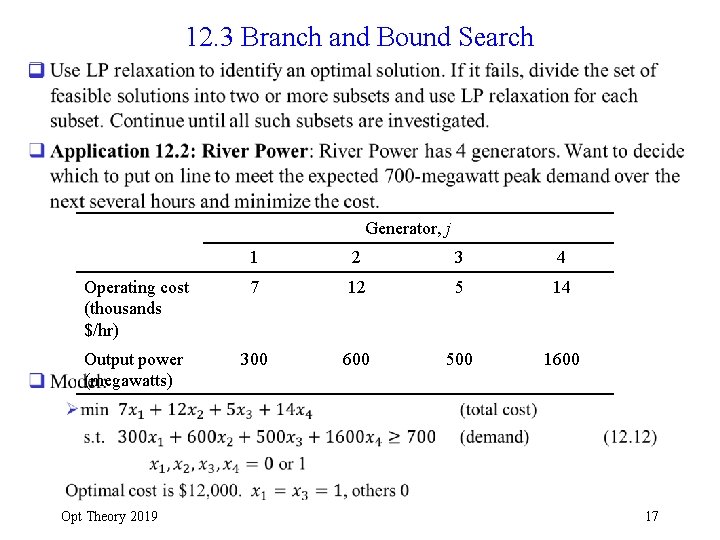

12.3 Branch and Bound Search

| Generator, j | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| Operating cost (thousands $/hr) | 7 | 12 | 5 | 14 |
| Output power (megawatts) | 300 | 600 | 500 | 1600 |


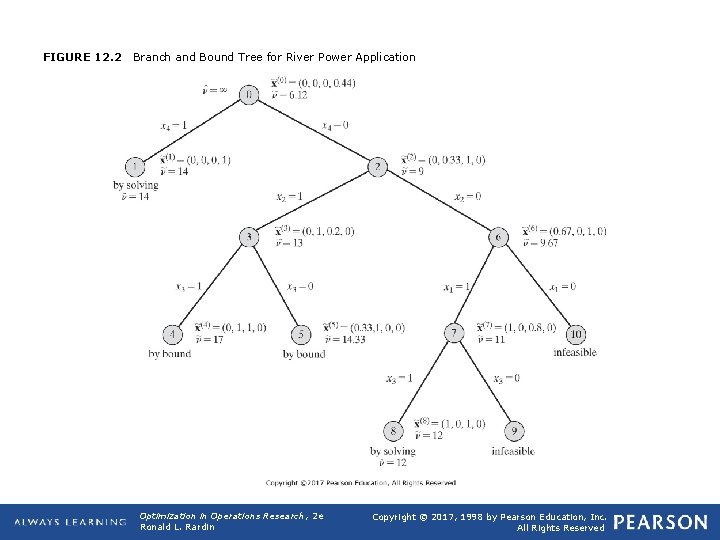

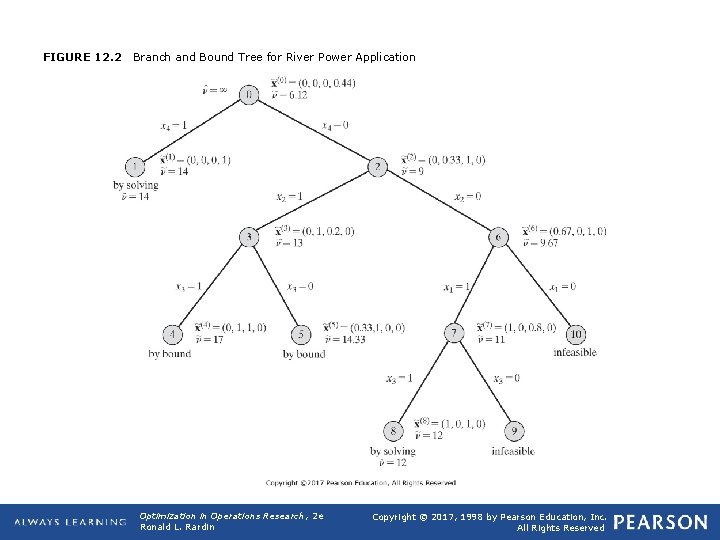

FIGURE 12.2 Branch and Bound Tree for River Power Application


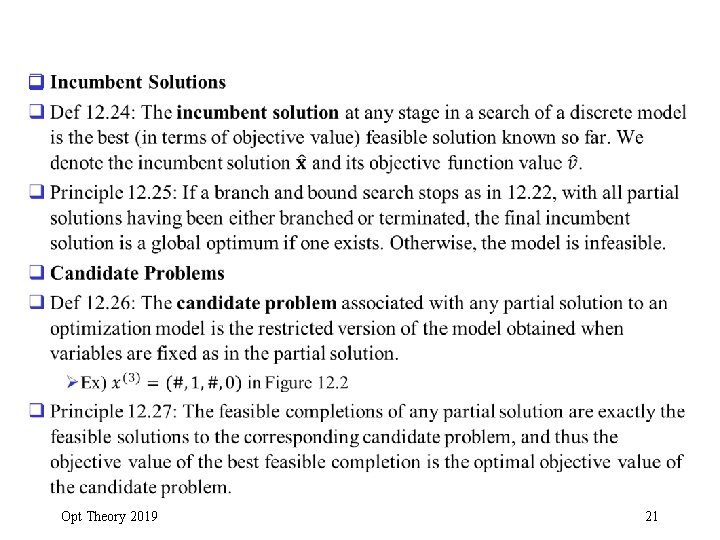

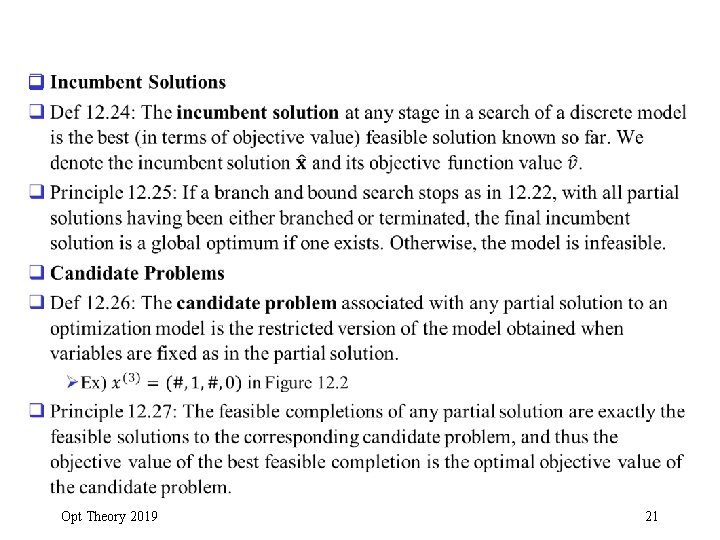


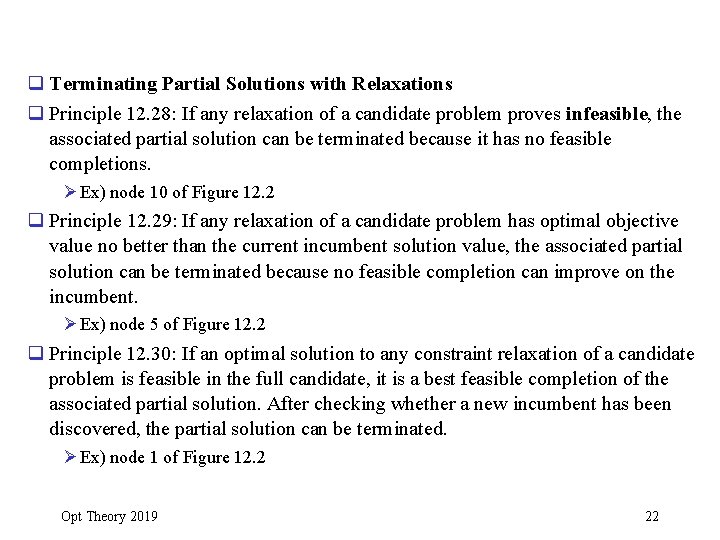

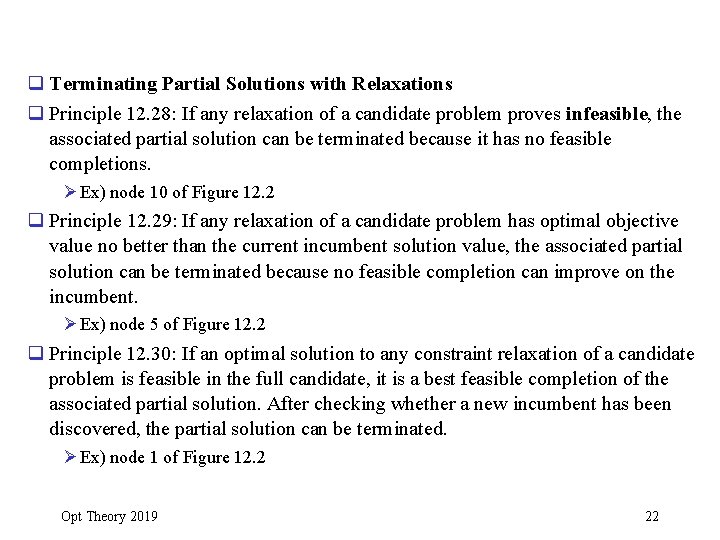

Terminating Partial Solutions with Relaxations

- Principle 12.28: If any relaxation of a candidate problem proves infeasible, the associated partial solution can be terminated because it has no feasible completions.
  - Ex) node 10 of Figure 12.2
- Principle 12.29: If any relaxation of a candidate problem has optimal objective value no better than the current incumbent solution value, the associated partial solution can be terminated because no feasible completion can improve on the incumbent.
  - Ex) node 5 of Figure 12.2
- Principle 12.30: If an optimal solution to any constraint relaxation of a candidate problem is feasible in the full candidate, it is a best feasible completion of the associated partial solution. After checking whether a new incumbent has been discovered, the partial solution can be terminated.
  - Ex) node 1 of Figure 12.2
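The three termination rules can be seen together in a small depth-first branch and bound. The sketch below is illustrative only and does not reproduce the tree of Figure 12.2. It uses the generator costs and outputs from the table in Section 12.3, but the demand of 700 megawatts and the model form (minimize cost subject to total output covering demand, with binary on/off variables) are assumptions made here. The helper `lp_relaxation` is a hypothetical name; it solves the LP relaxation of this single-constraint model greedily by cost per megawatt.

```python
COST = (7, 12, 5, 14)            # thousands $/hr, from the generator table
POWER = (300, 600, 500, 1600)    # megawatts, from the generator table
DEMAND = 700                     # assumed demand, not given in this extract

def lp_relaxation(fixed):
    """LP relaxation with some variables fixed to 0/1; None if infeasible."""
    x = [0.0] * len(COST)
    value, need = 0.0, float(DEMAND)
    for j, v in fixed.items():
        x[j] = float(v)
        value += COST[j] * v
        need -= POWER[j] * v
    free = sorted((j for j in range(len(COST)) if j not in fixed),
                  key=lambda j: COST[j] / POWER[j])
    for j in free:               # cheapest cost per megawatt first
        if need <= 0:
            break
        x[j] = min(1.0, need / POWER[j])
        value += COST[j] * x[j]
        need -= POWER[j] * x[j]
    return None if need > 1e-9 else (value, x)

def branch_and_bound():
    incumbent_value, incumbent = float("inf"), None
    stack = [{}]                 # each entry is a partial solution
    while stack:
        fixed = stack.pop()
        relaxed = lp_relaxation(fixed)
        if relaxed is None:
            continue             # Principle 12.28: relaxation infeasible
        value, x = relaxed
        if value >= incumbent_value:
            continue             # Principle 12.29: bound no better than incumbent
        fractional = [j for j, xj in enumerate(x) if 1e-9 < xj < 1 - 1e-9]
        if not fractional:
            # Principle 12.30: relaxation optimum is integer, so it is a new incumbent
            incumbent_value, incumbent = value, [round(xj) for xj in x]
            continue
        j = fractional[0]        # branch on the fractional variable
        stack.append({**fixed, j: 0})
        stack.append({**fixed, j: 1})
    return incumbent_value, incumbent

if __name__ == "__main__":
    print(branch_and_bound())
```

The stack gives a depth-first order; replacing it with a different selection rule changes the number of nodes explored but not the optimal value returned.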

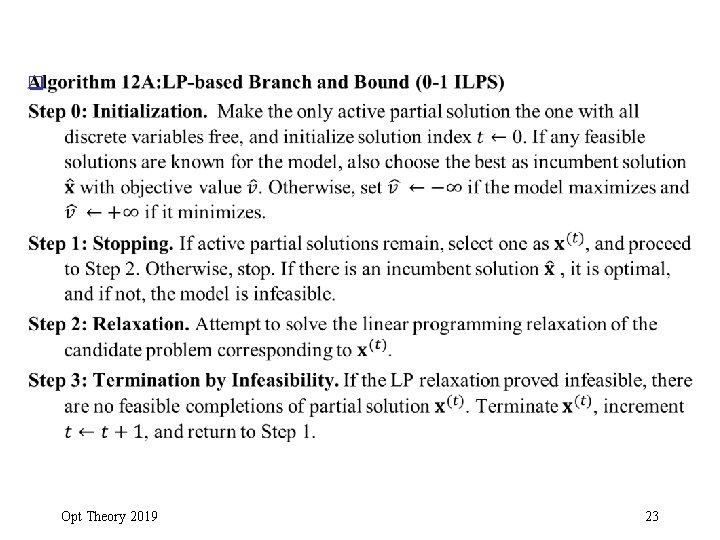

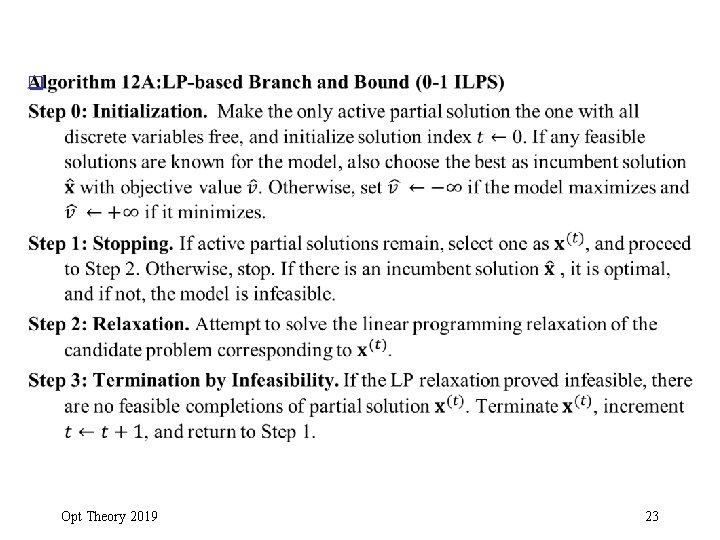


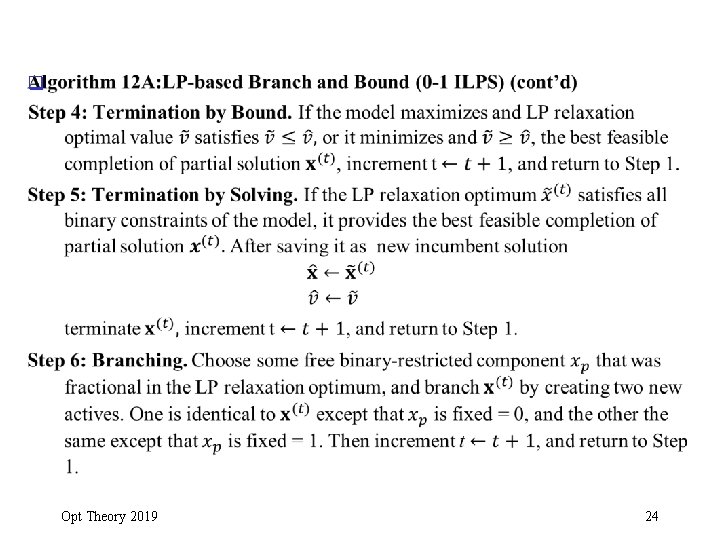

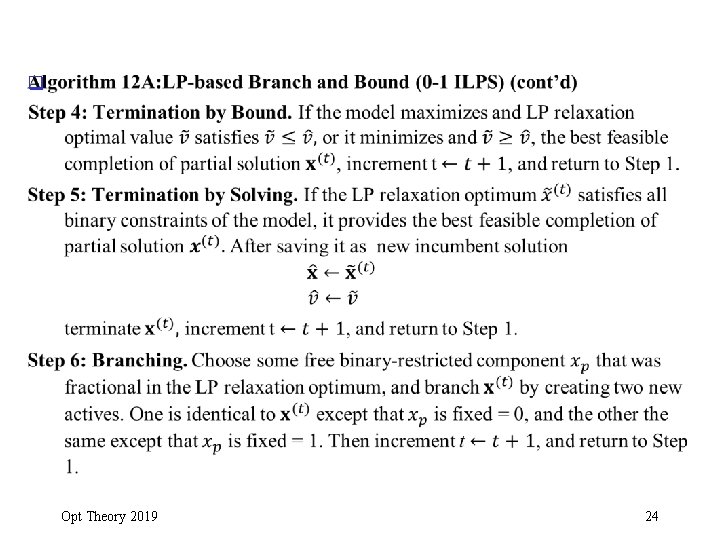

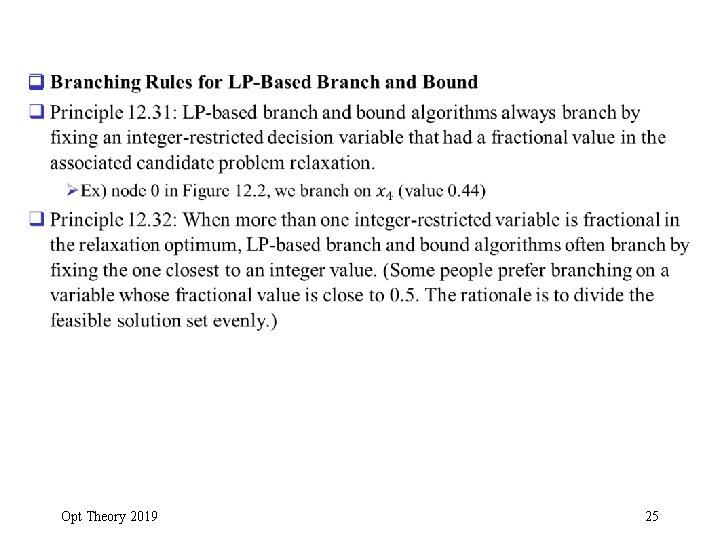


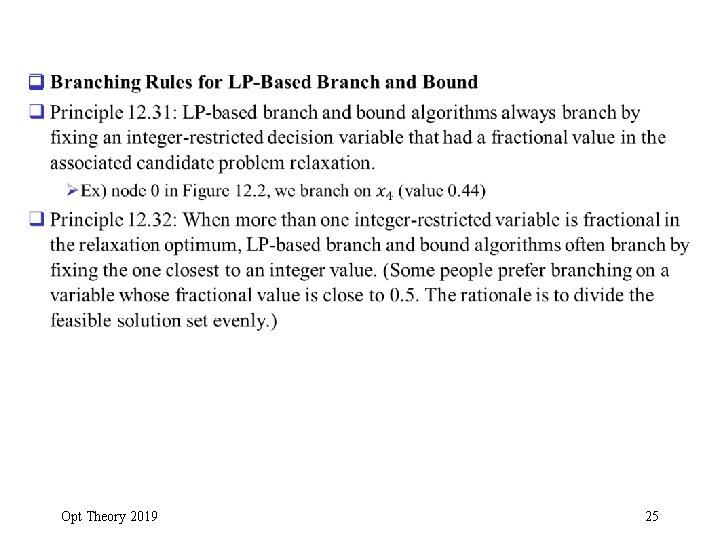


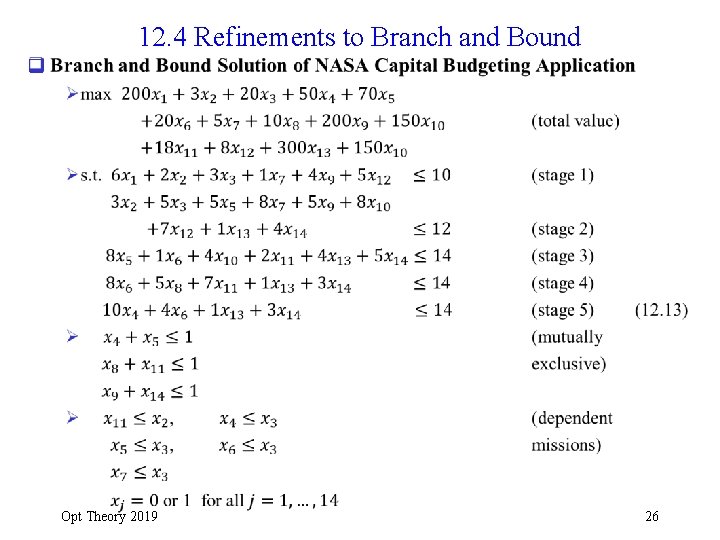

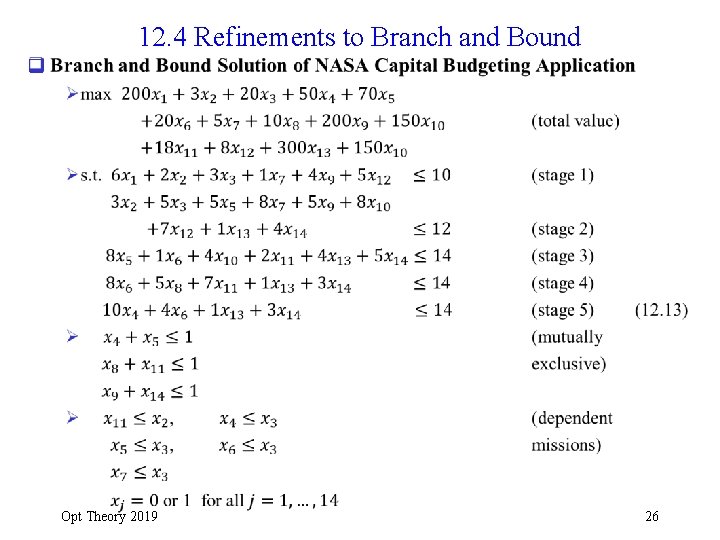

12.4 Refinements to Branch and Bound

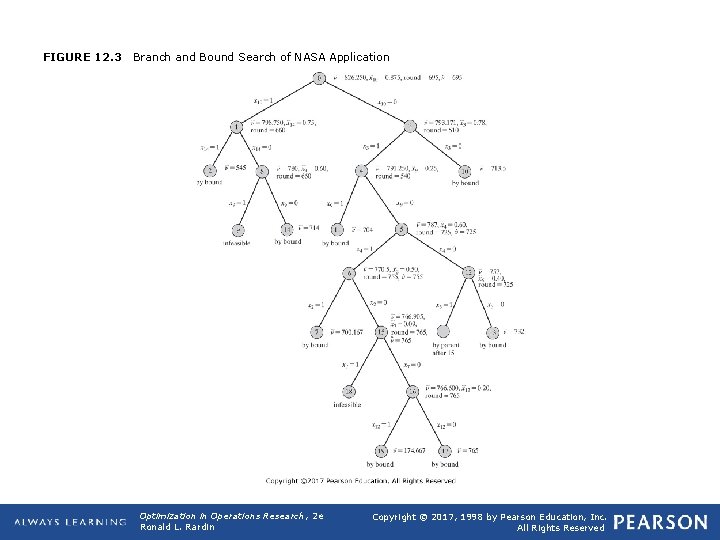

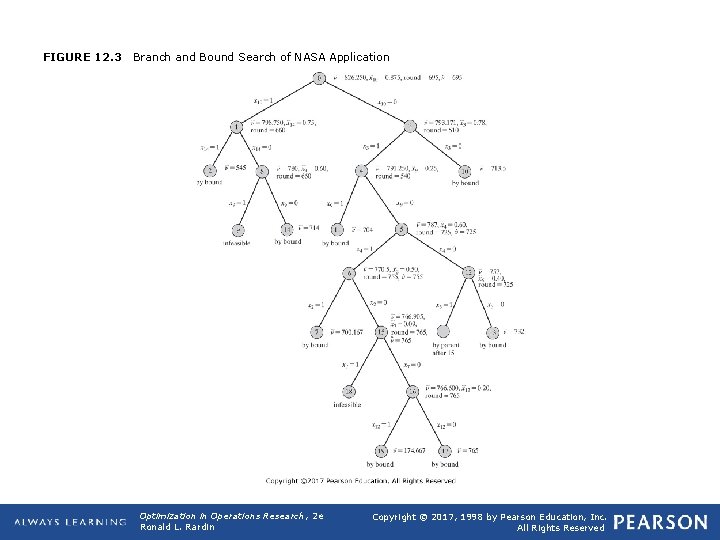

FIGURE 12.3 Branch and Bound Search of NASA Application

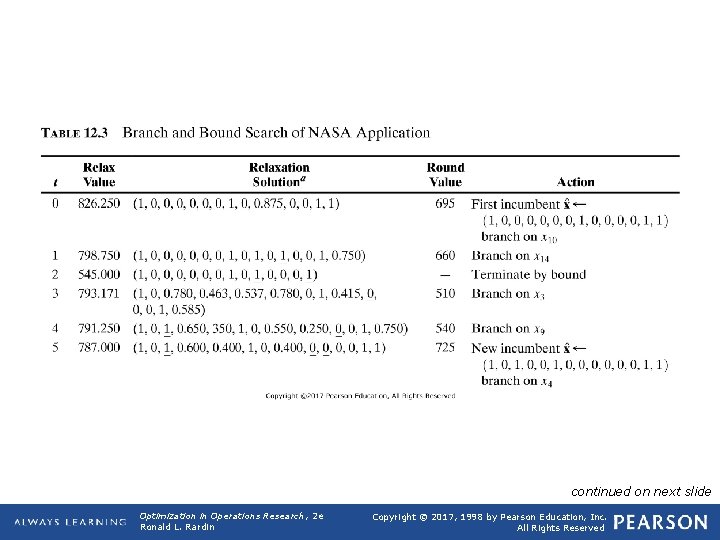

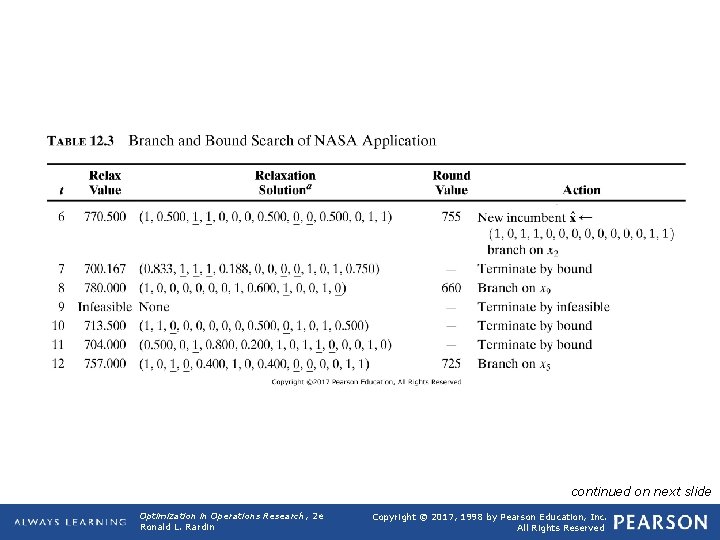

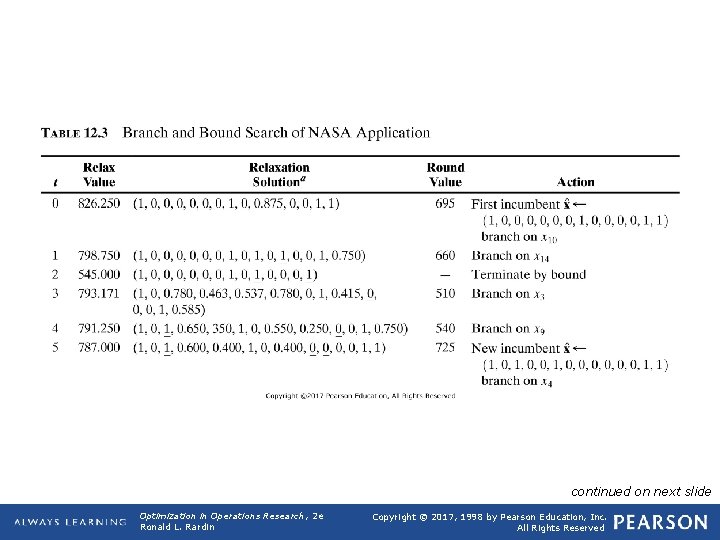

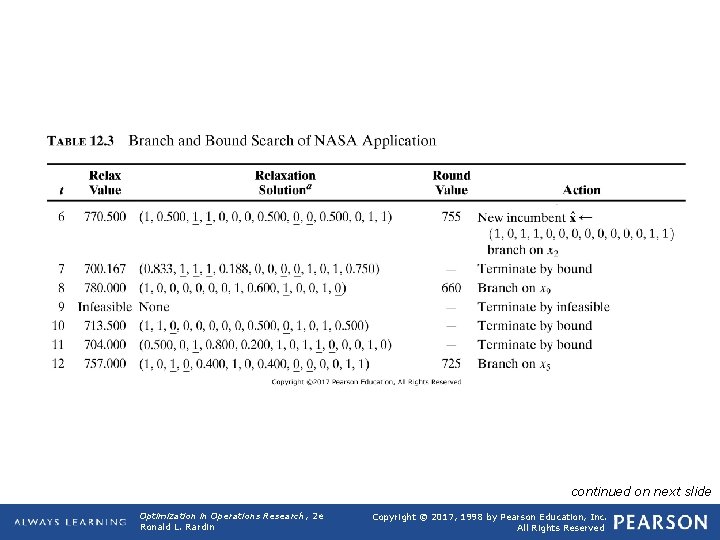

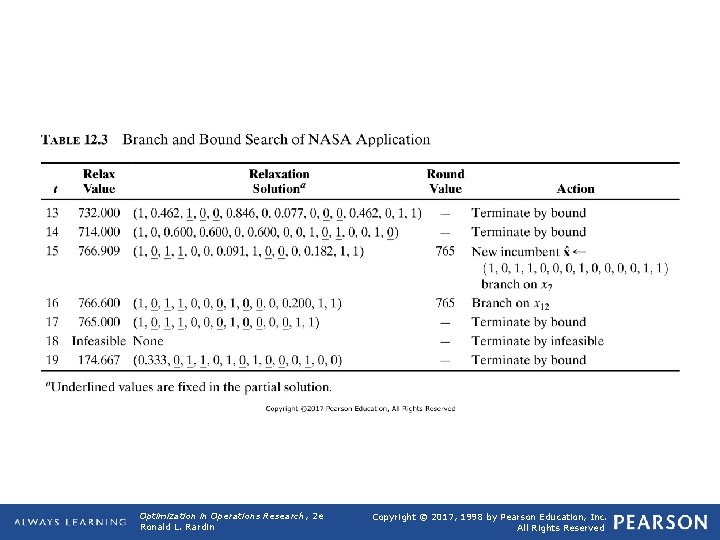

TABLE 12.3 Branch and Bound Search of NASA Application (continued on next slide)

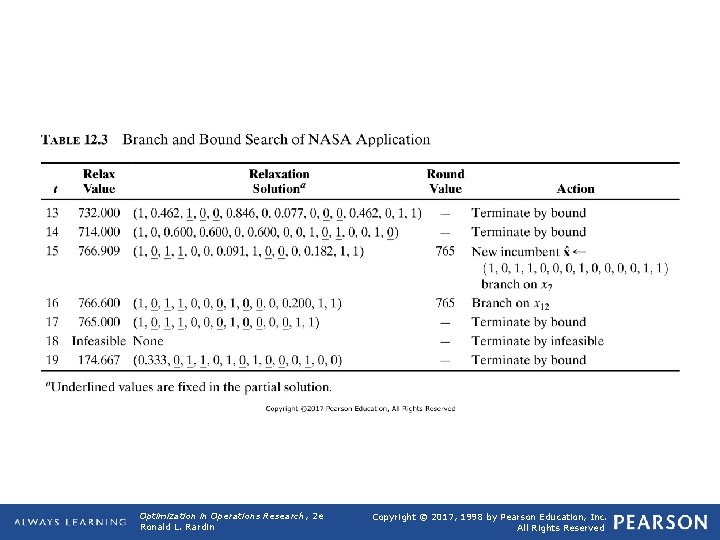

TABLE 12.3 (continued) Branch and Bound Search of NASA Application (continued on next slide)

TABLE 12.3 (continued) Branch and Bound Search of NASA Application

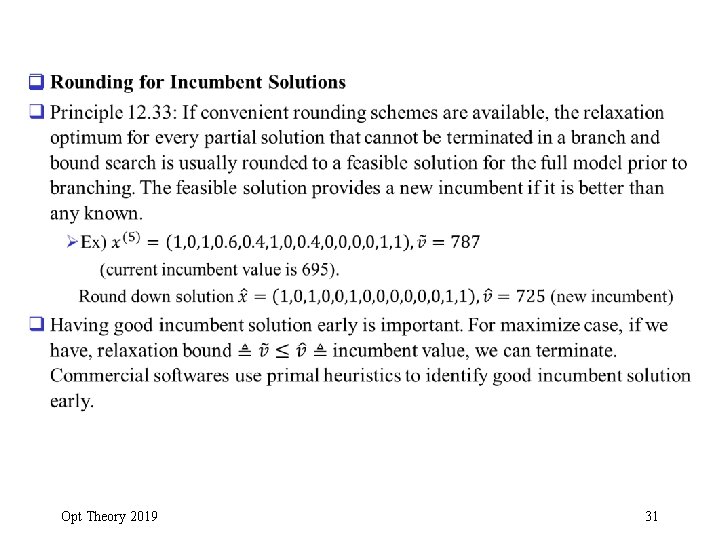

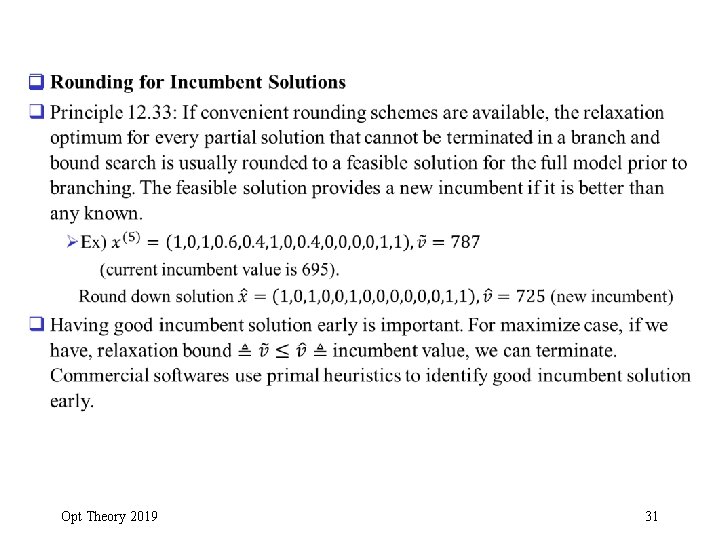


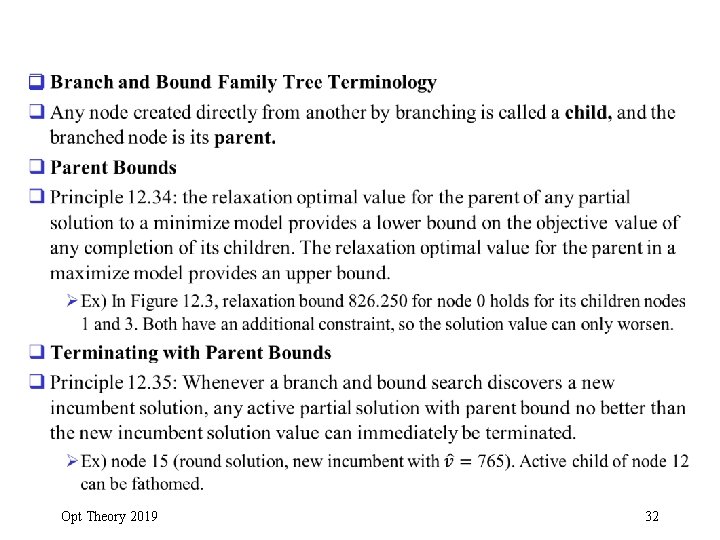

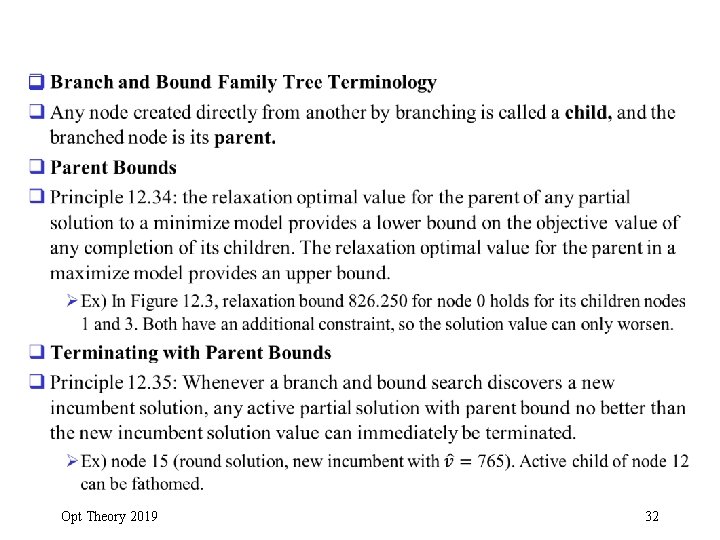


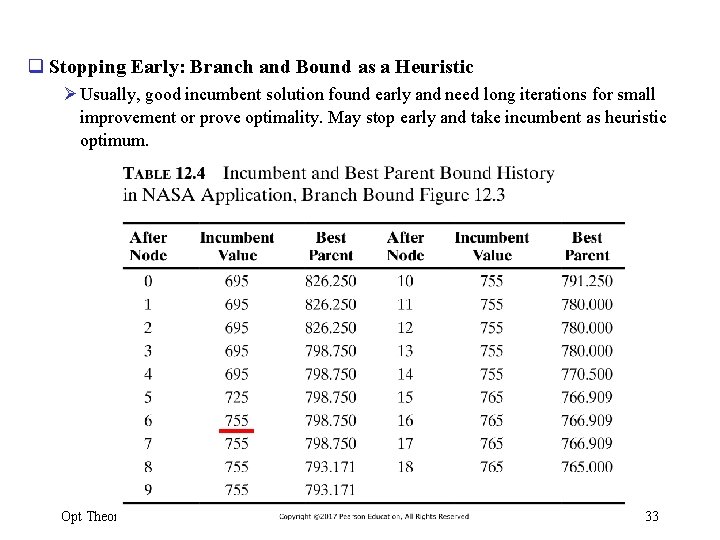

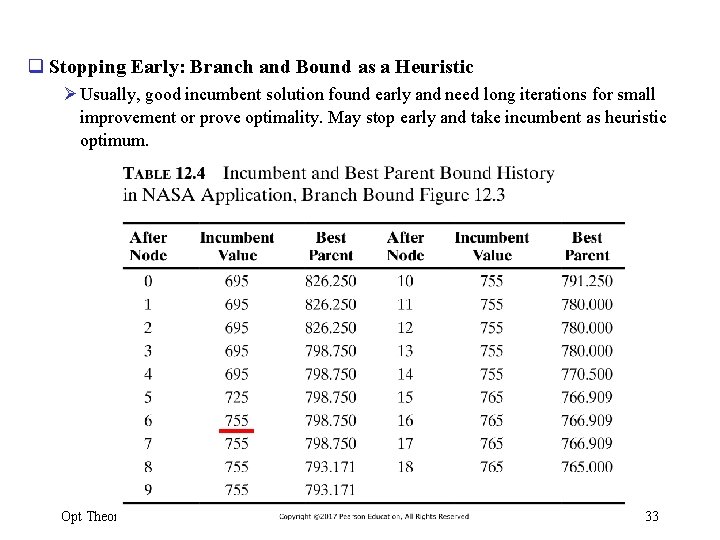

Stopping Early: Branch and Bound as a Heuristic

- A good incumbent solution is usually found early; many further iterations are then needed to obtain a small improvement or to prove optimality. The search may therefore be stopped early and the incumbent accepted as a heuristic optimum.

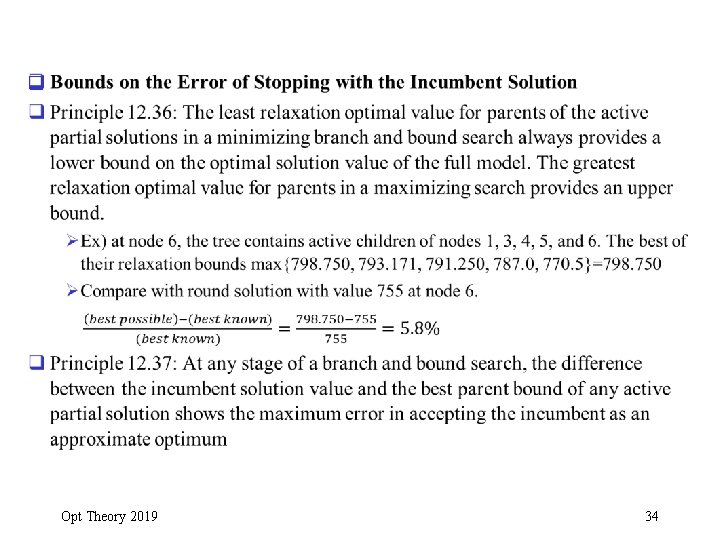

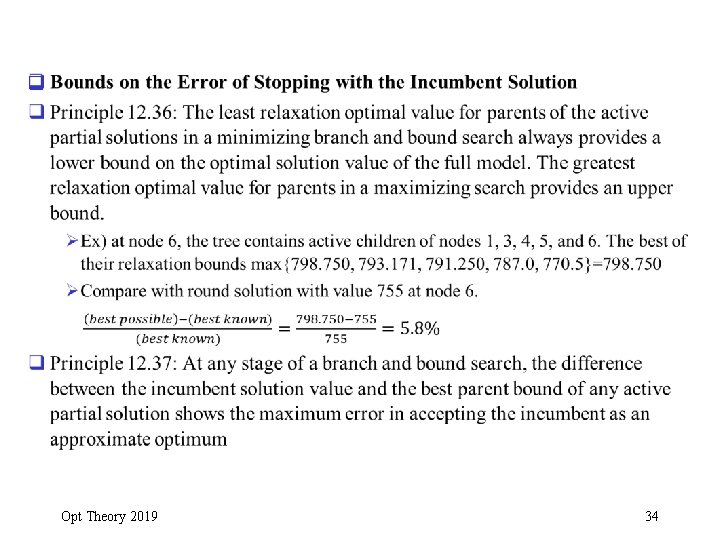


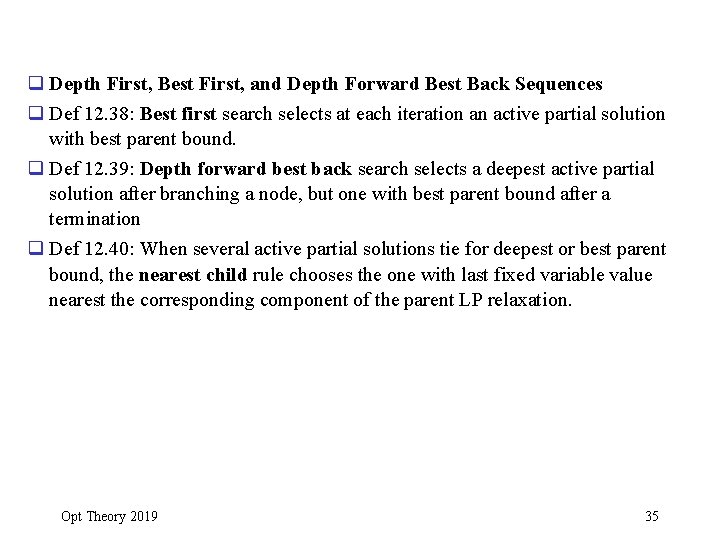

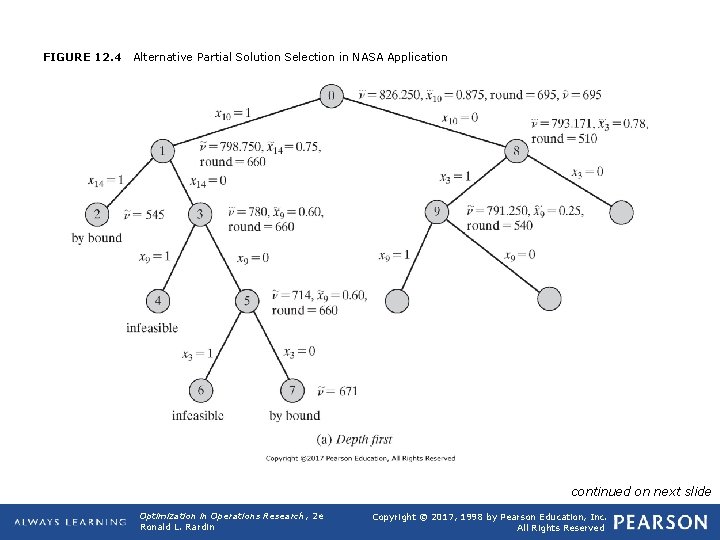

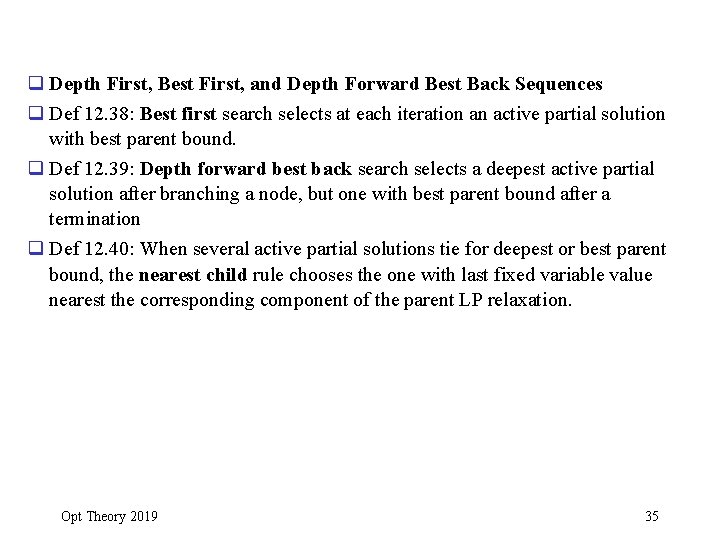

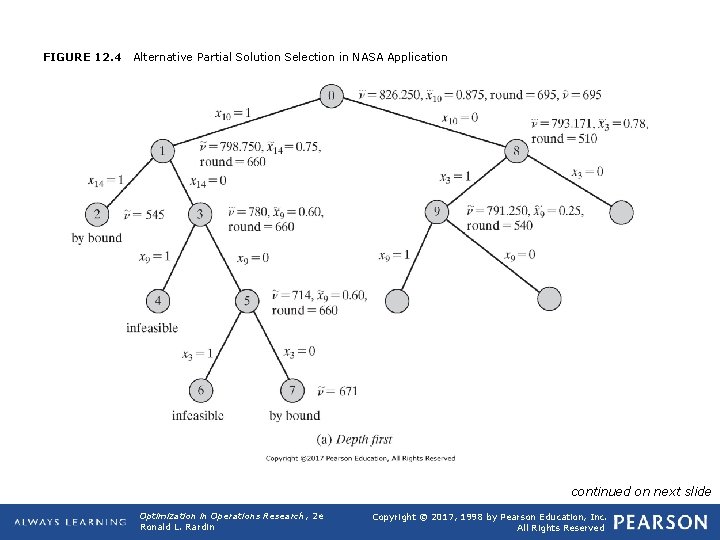

Depth First, Best First, and Depth Forward Best Back Sequences

- Def 12.38: Best first search selects at each iteration an active partial solution with best parent bound.
- Def 12.39: Depth forward best back search selects a deepest active partial solution after branching a node, but one with best parent bound after a termination.
- Def 12.40: When several active partial solutions tie for deepest or best parent bound, the nearest child rule chooses the one with last fixed variable value nearest the corresponding component of the parent LP relaxation.
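The selection rules differ only in which active partial solution is picked next, which a few lines of code make concrete. This is a minimal sketch: the `Node` record and function names are hypothetical, depth first is taken in its usual sense of choosing a deepest active node, and ties are broken by list order rather than by the nearest child rule of Def 12.40.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Node:
    name: str
    depth: int            # number of variables fixed so far
    parent_bound: float   # relaxation value of the parent node

def select_depth_first(active):
    """Pick a deepest active partial solution."""
    return max(active, key=lambda n: n.depth)

def select_best_first(active, maximize=True):
    """Pick an active partial solution with the best parent bound (Def 12.38)."""
    pick = max if maximize else min
    return pick(active, key=lambda n: n.parent_bound)

def select_depth_forward_best_back(active, just_branched, maximize=True):
    """Go deep right after branching, otherwise jump to the best bound (Def 12.39)."""
    if just_branched:
        return select_depth_first(active)
    return select_best_first(active, maximize)

if __name__ == "__main__":
    active = [Node("a", 1, 100.0), Node("b", 3, 80.0), Node("c", 2, 95.0)]
    print(select_depth_first(active).name)
    print(select_best_first(active).name)
    print(select_depth_forward_best_back(active, just_branched=False).name)
```

For a maximize model the best parent bound is the largest one; pass `maximize=False` for a minimize model.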

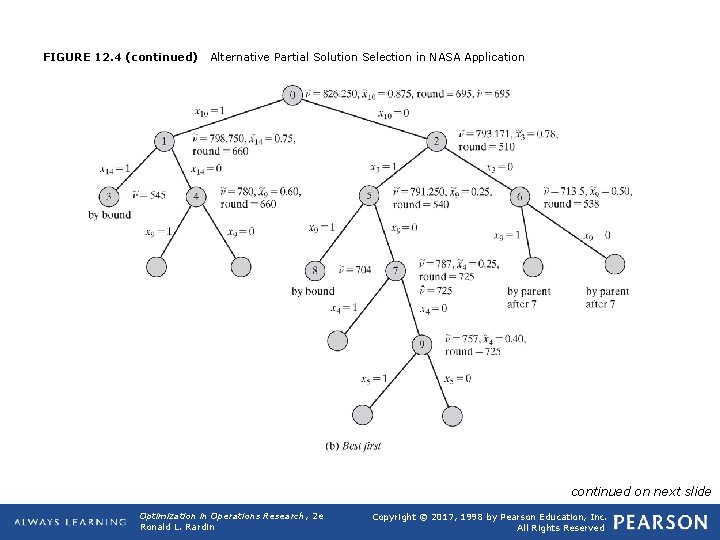

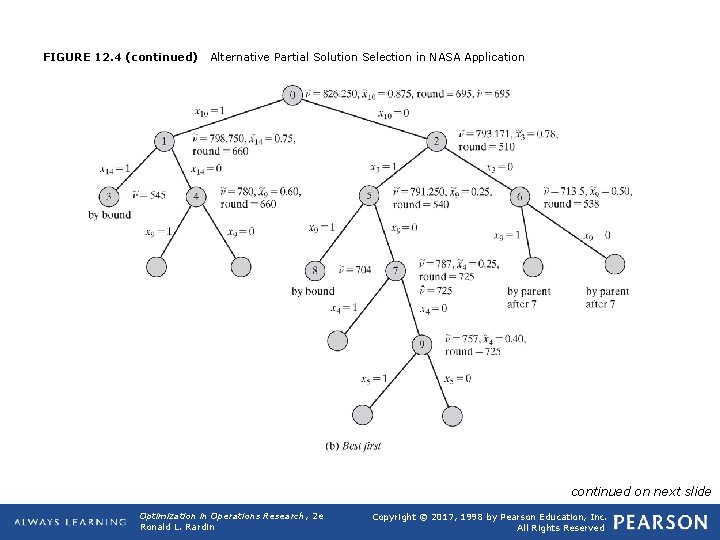

FIGURE 12.4 Alternative Partial Solution Selection in NASA Application (continued on next slide)

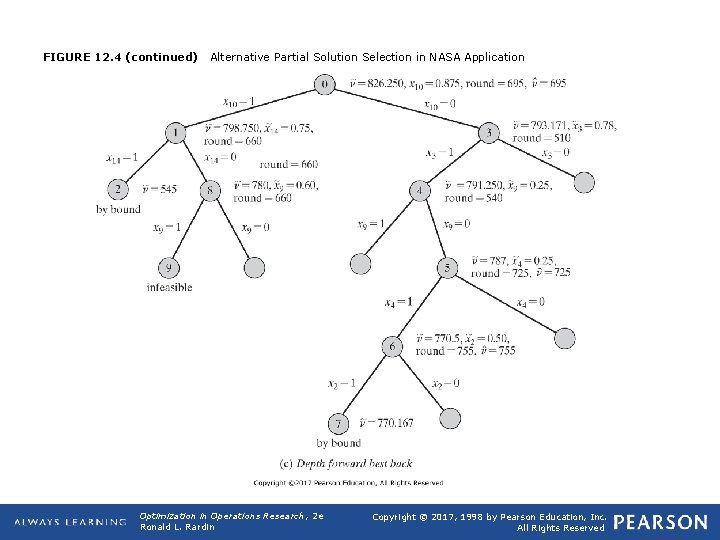

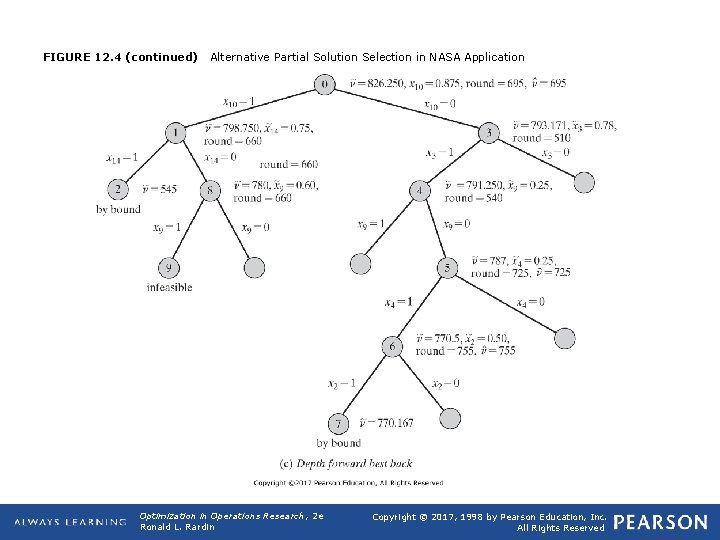

FIGURE 12.4 (continued) Alternative Partial Solution Selection in NASA Application (continued on next slide)

FIGURE 12.4 (continued) Alternative Partial Solution Selection in NASA Application

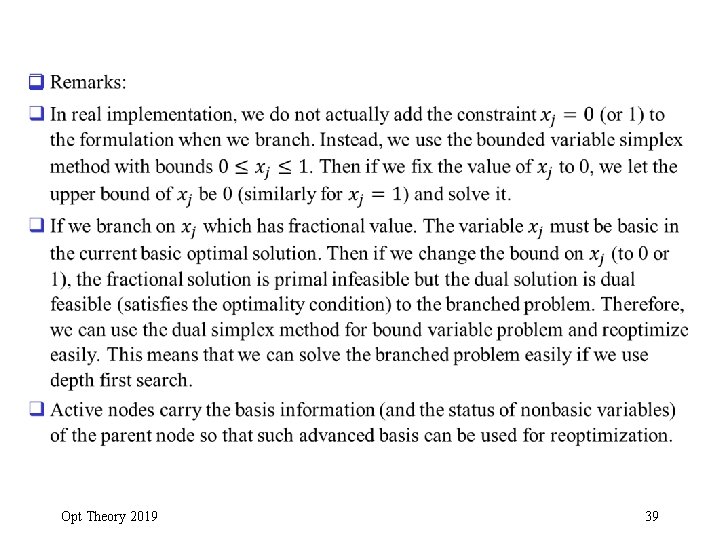

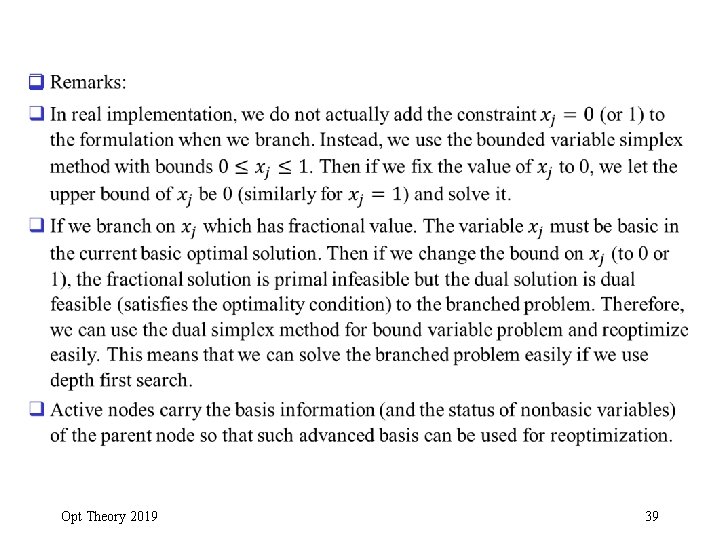


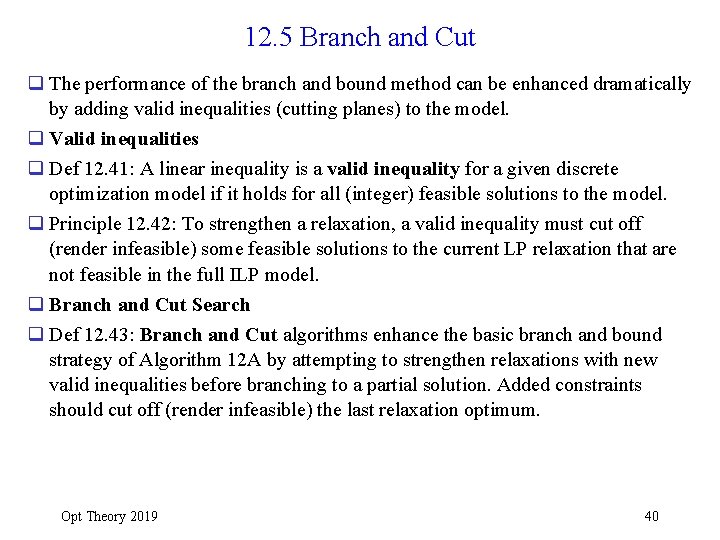

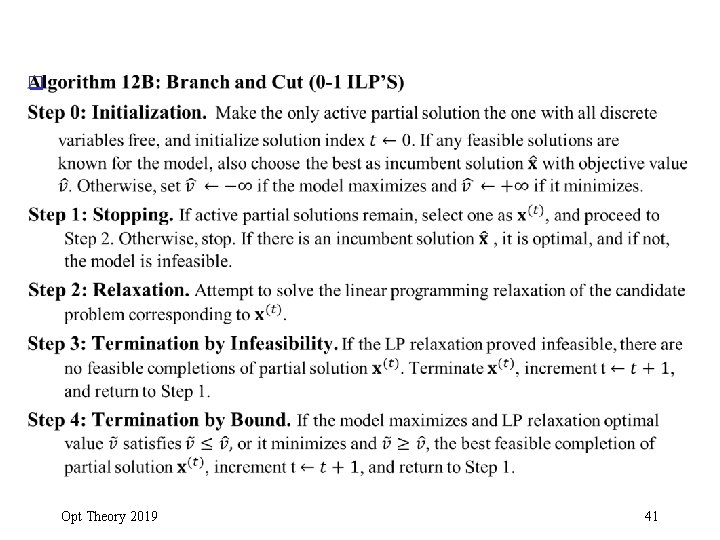

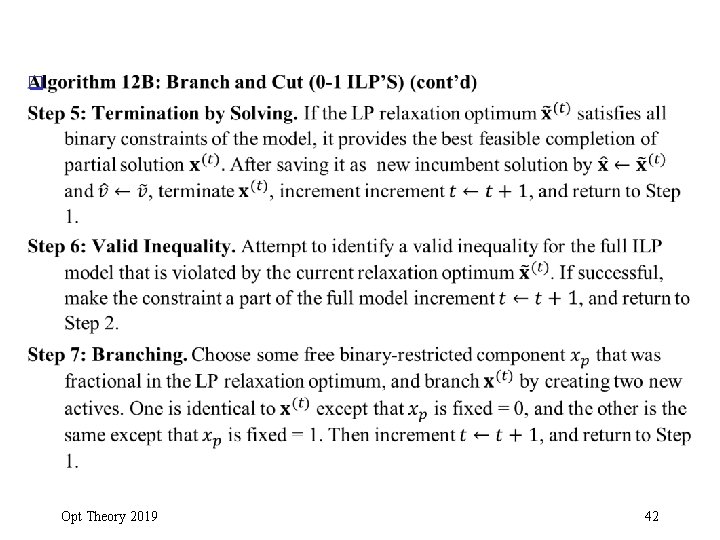

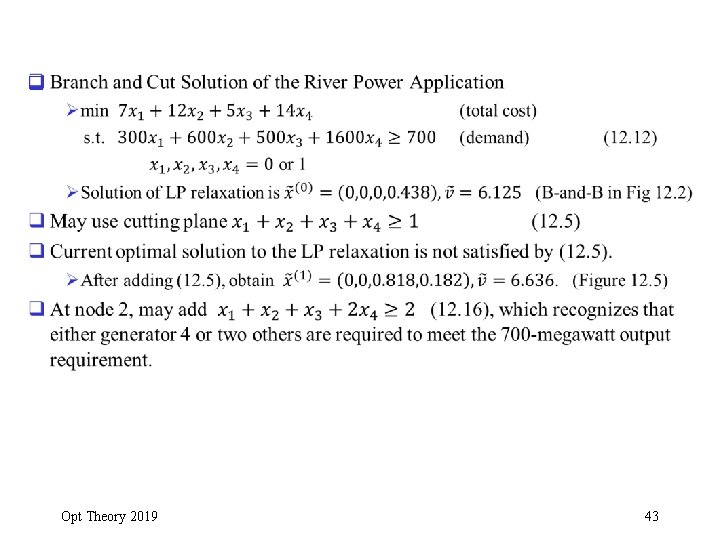

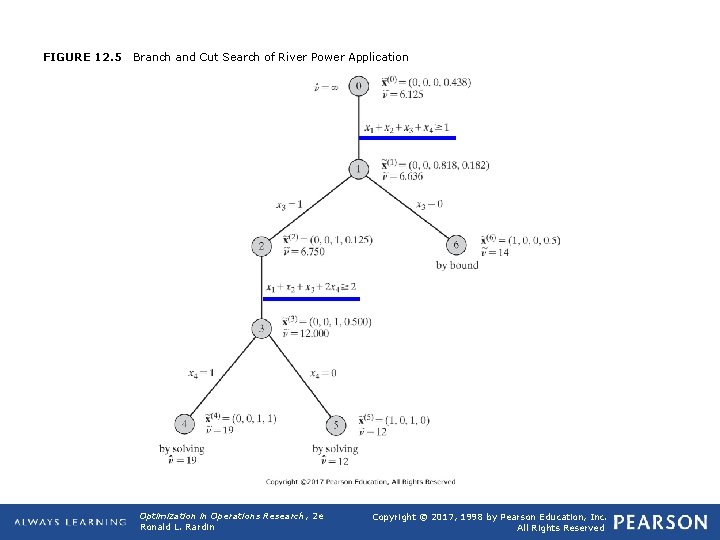

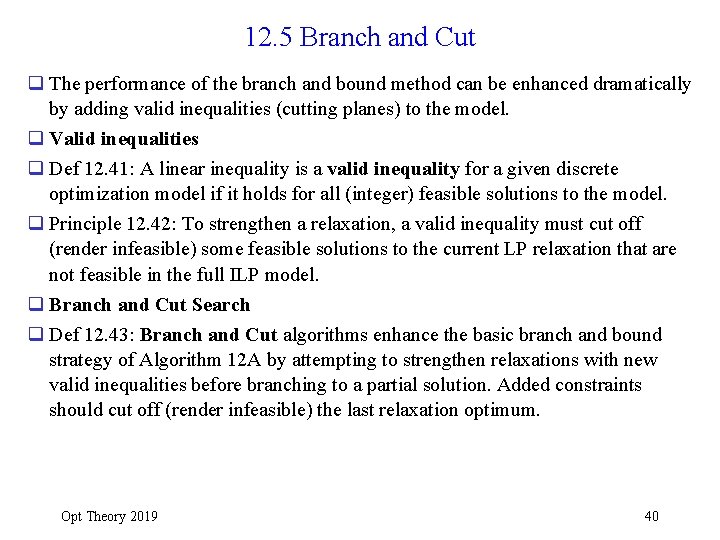

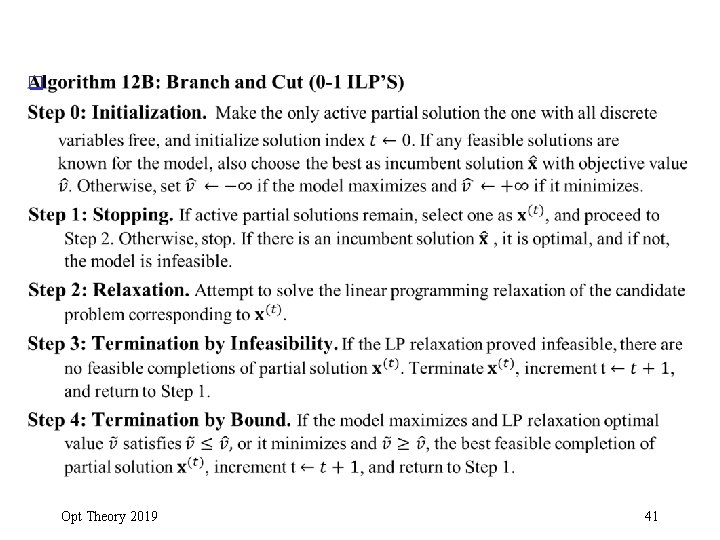

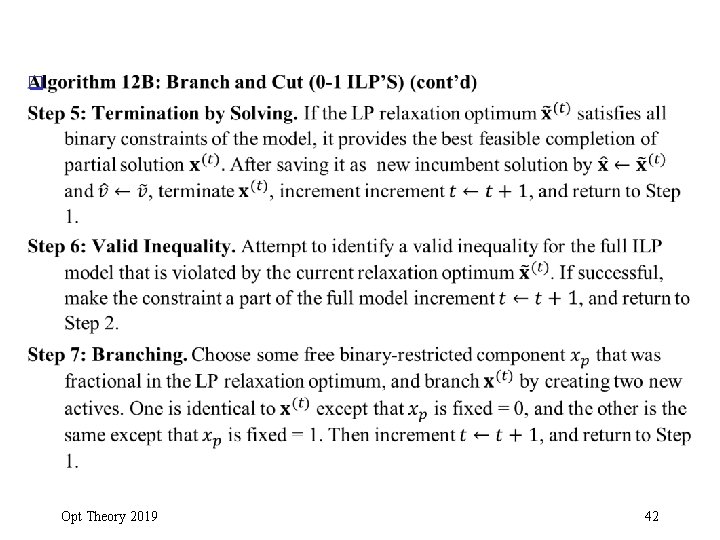

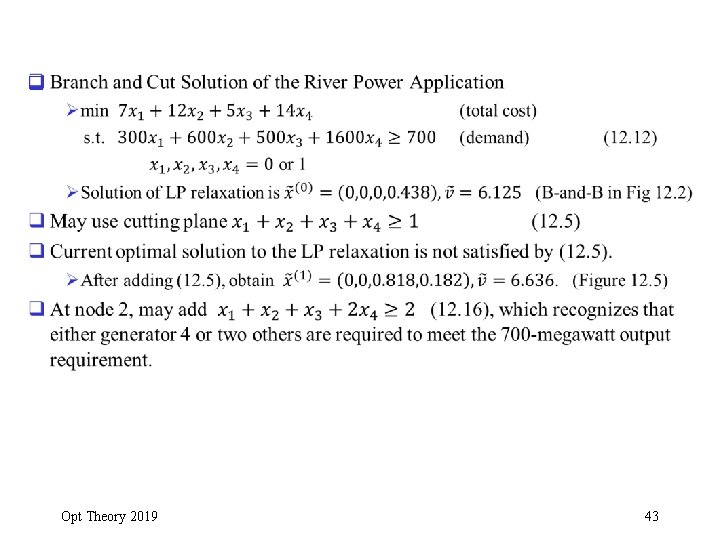

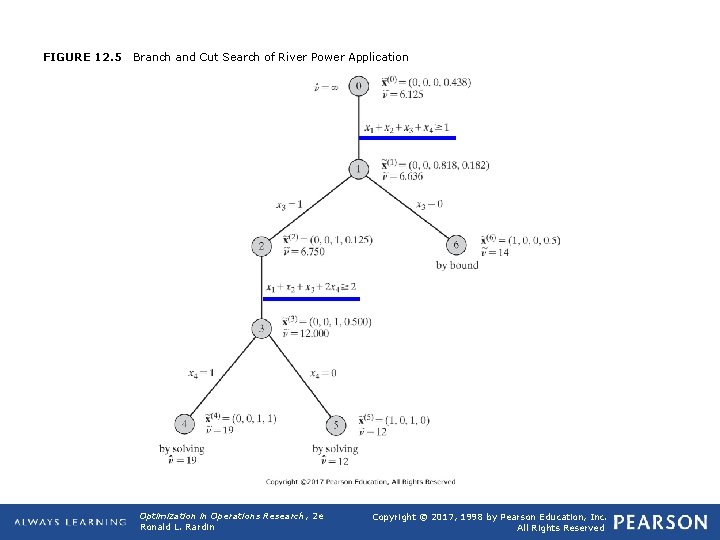

12.5 Branch and Cut

- The performance of the branch and bound method can be enhanced dramatically by adding valid inequalities (cutting planes) to the model.

Valid Inequalities

- Def 12.41: A linear inequality is a valid inequality for a given discrete optimization model if it holds for all (integer) feasible solutions to the model.
- Principle 12.42: To strengthen a relaxation, a valid inequality must cut off (render infeasible) some feasible solutions to the current LP relaxation that are not feasible in the full ILP model.

Branch and Cut Search

- Def 12.43: Branch and cut algorithms enhance the basic branch and bound strategy of Algorithm 12A by attempting to strengthen relaxations with new valid inequalities before branching to a partial solution. Added constraints should cut off (render infeasible) the last relaxation optimum.
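Both requirements, validity (Def 12.41) and cutting off the current relaxation optimum (Principle 12.42), can be checked by brute force on a small model. The sketch below reuses the generator data from Section 12.3 and again assumes a demand of 700 megawatts with a single covering constraint; the candidate inequality x2 + x3 + x4 >= 1 is an example chosen here, not necessarily a cut used in Figure 12.5. All function names are hypothetical.

```python
from itertools import product

POWER = (300, 600, 500, 1600)   # megawatts, from the generator table
DEMAND = 700                    # assumed demand, not given in this extract

def feasible_points():
    """All binary on/off vectors whose total output covers the demand."""
    return [x for x in product((0, 1), repeat=4)
            if sum(p * xj for p, xj in zip(POWER, x)) >= DEMAND]

def is_valid(coeffs, rhs):
    """True if coeffs . x >= rhs holds at every integer-feasible point."""
    return all(sum(a * xj for a, xj in zip(coeffs, x)) >= rhs
               for x in feasible_points())

def cuts_off(coeffs, rhs, point, tol=1e-9):
    """True if the given (possibly fractional) point violates the inequality."""
    return sum(a * xj for a, xj in zip(coeffs, point)) < rhs - tol

# Under the assumed model, the root LP relaxation runs only generator 4,
# the cheapest per megawatt, at a fractional level.
LP_OPTIMUM = (0.0, 0.0, 0.0, DEMAND / POWER[3])

if __name__ == "__main__":
    cut = ((0, 1, 1, 1), 1)     # x2 + x3 + x4 >= 1
    print(is_valid(*cut), cuts_off(*cut, LP_OPTIMUM))
```

The inequality is valid because generator 1 alone cannot meet the assumed demand, and it is useful because the fractional LP optimum violates it.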


FIGURE 12.5 Branch and Cut Search of River Power Application

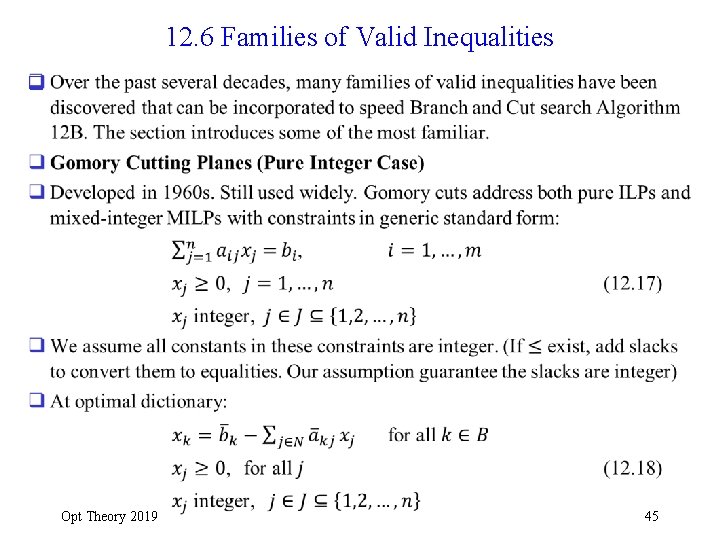

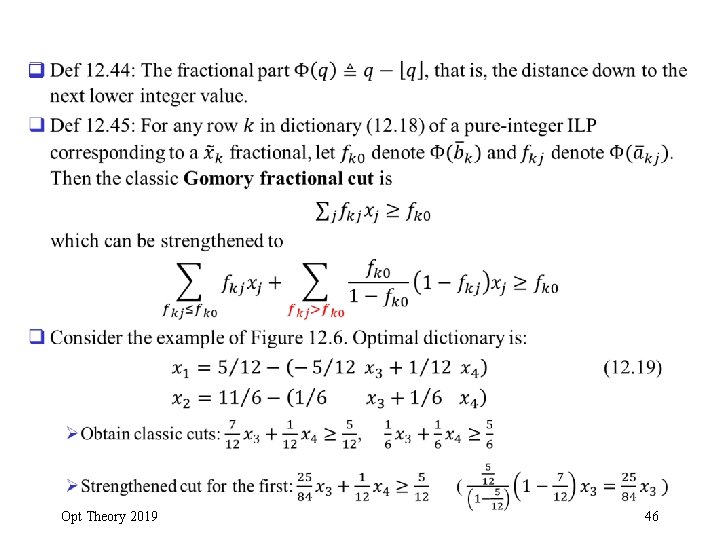

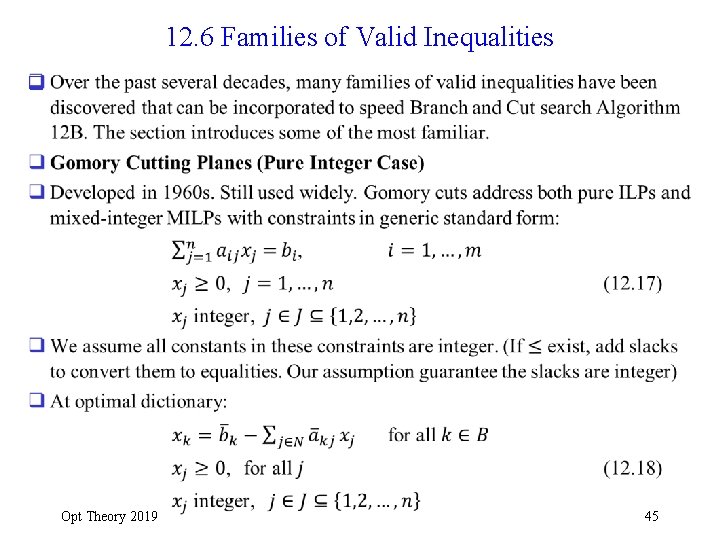

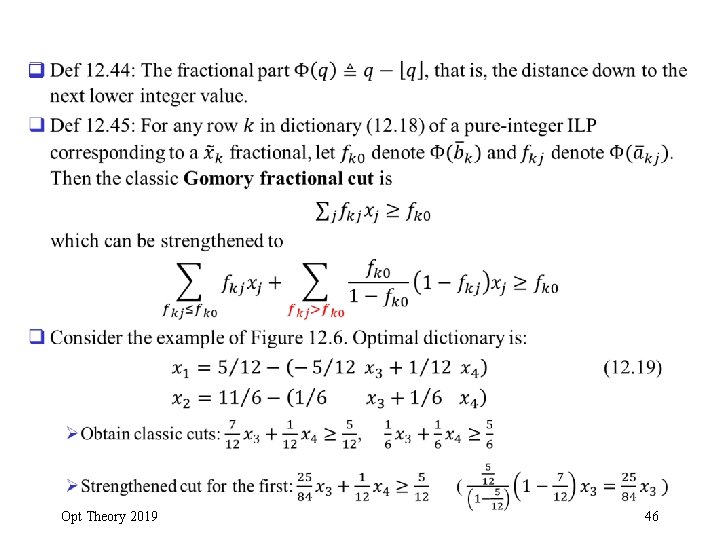

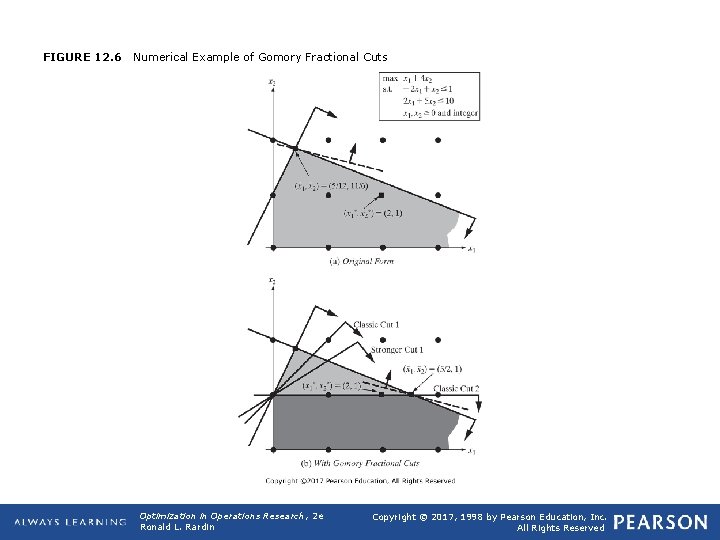

12.6 Families of Valid Inequalities


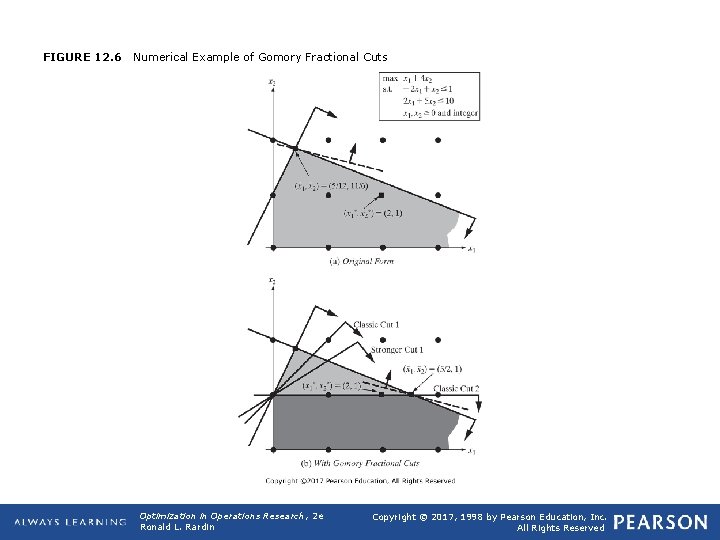

FIGURE 12.6 Numerical Example of Gomory Fractional Cuts

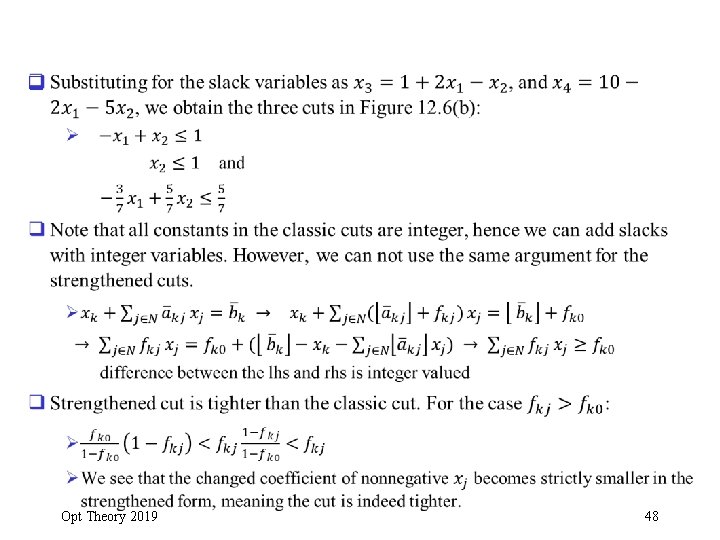

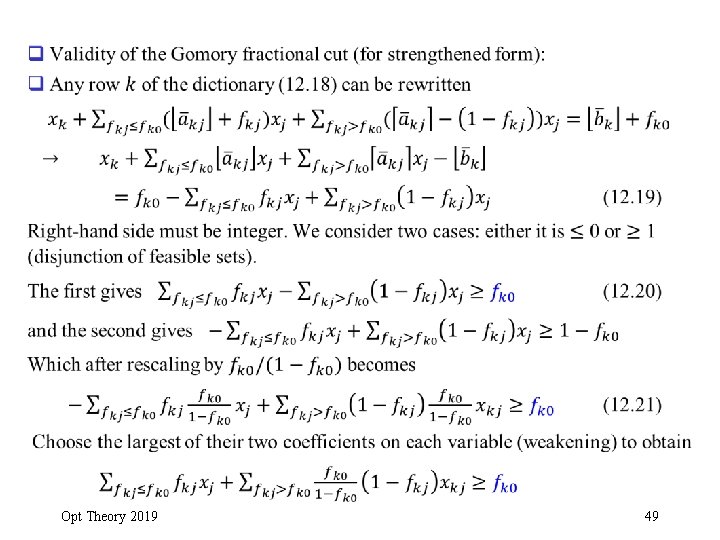

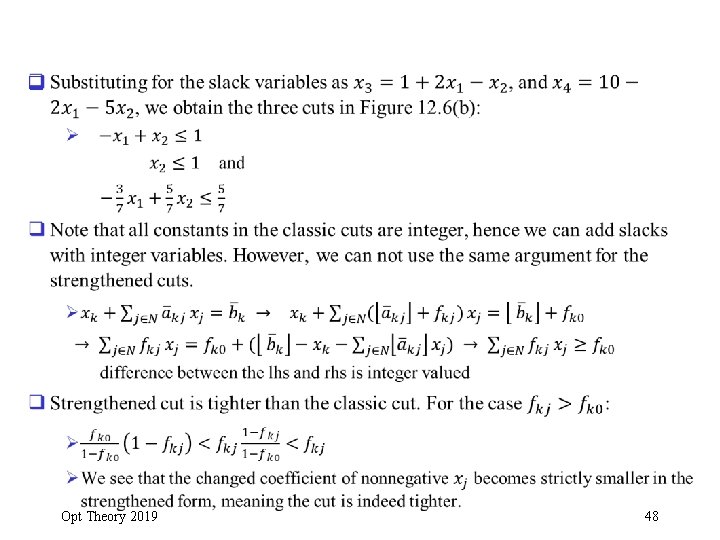

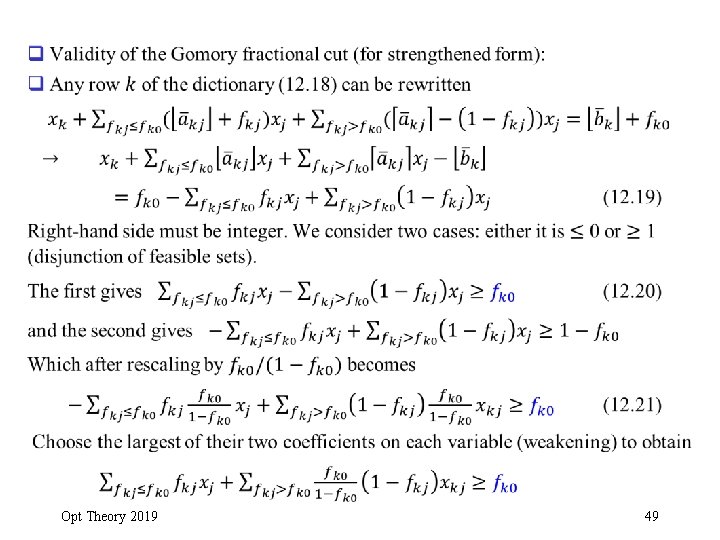


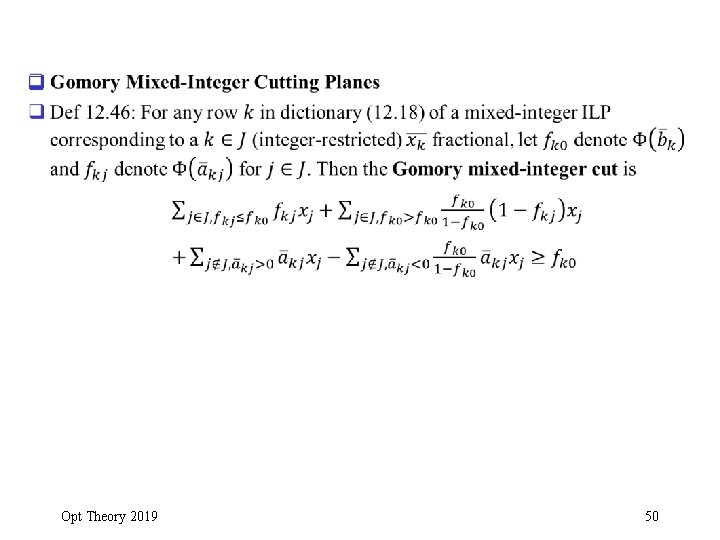

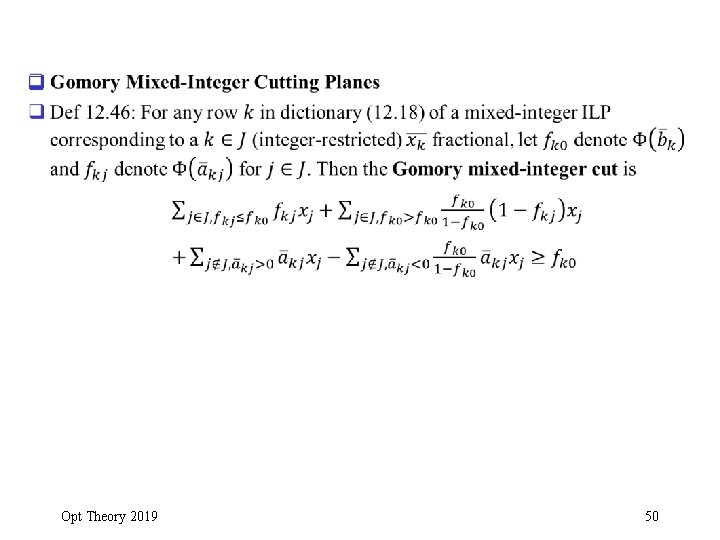


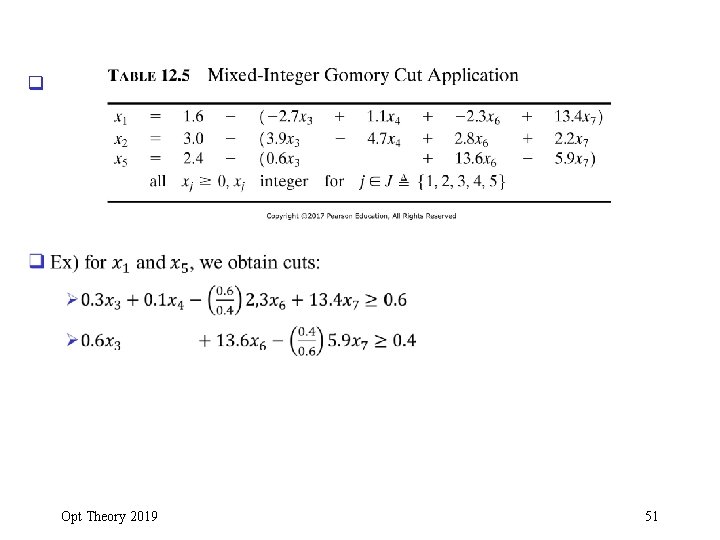

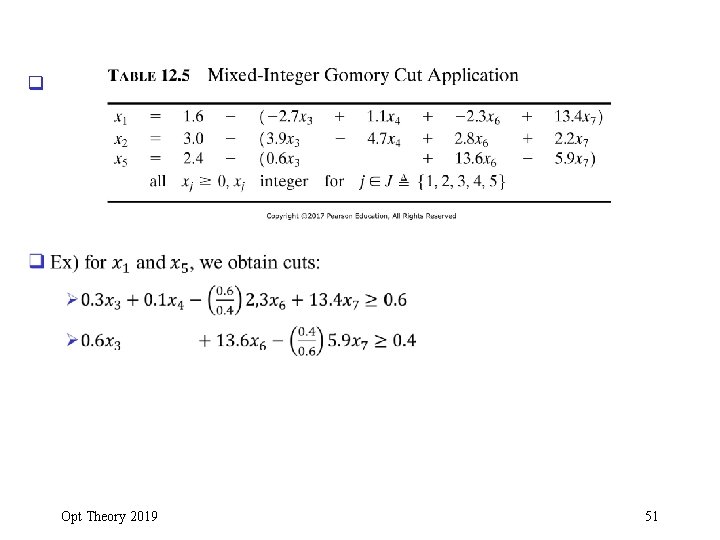


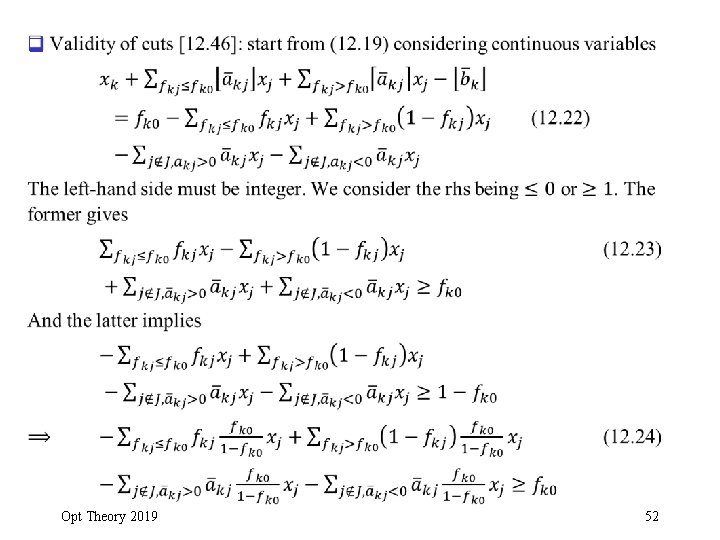

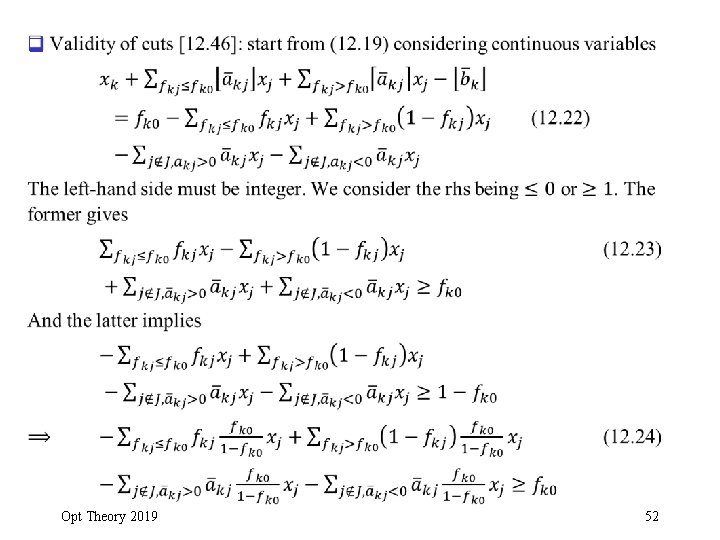


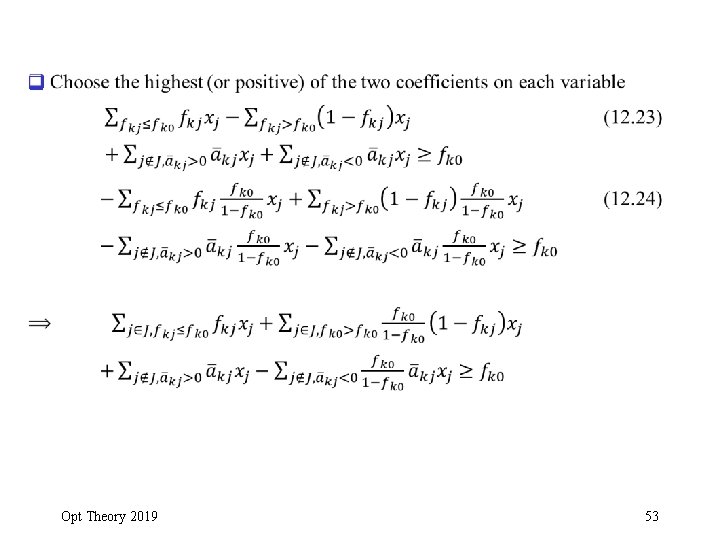

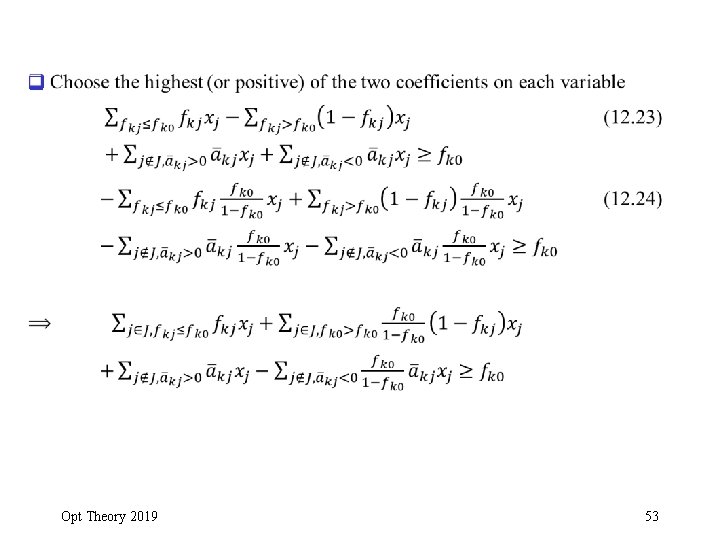


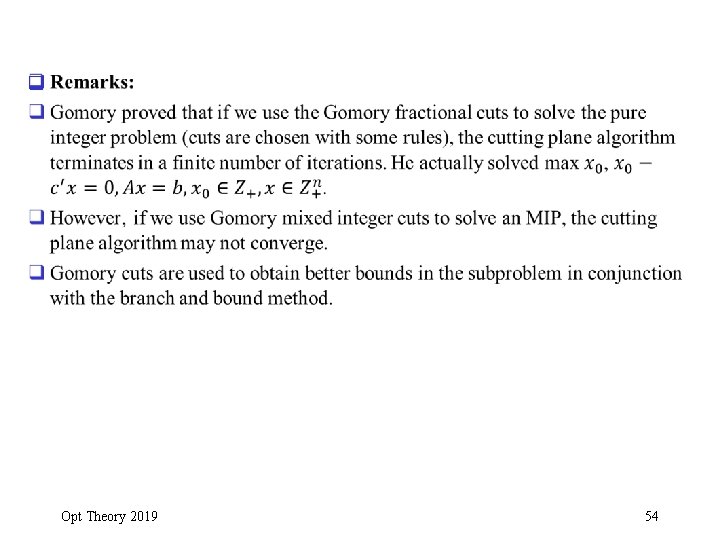

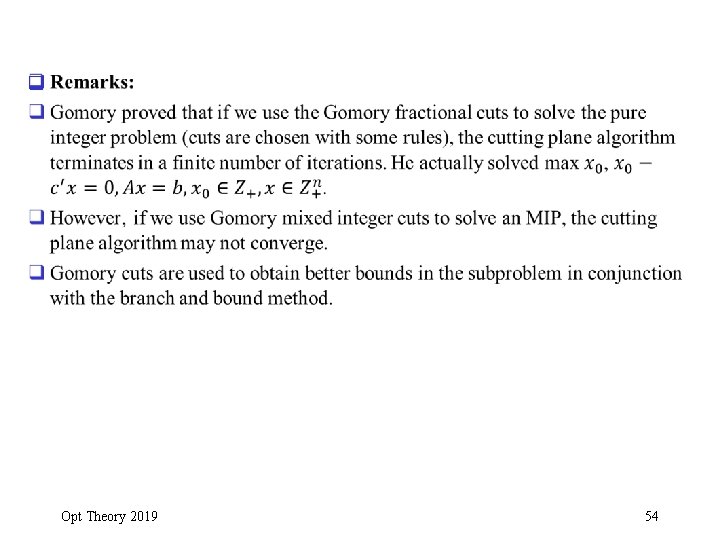


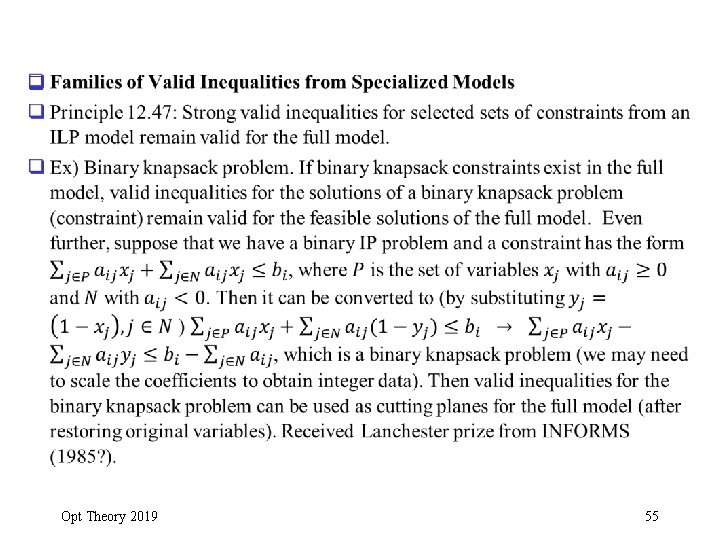

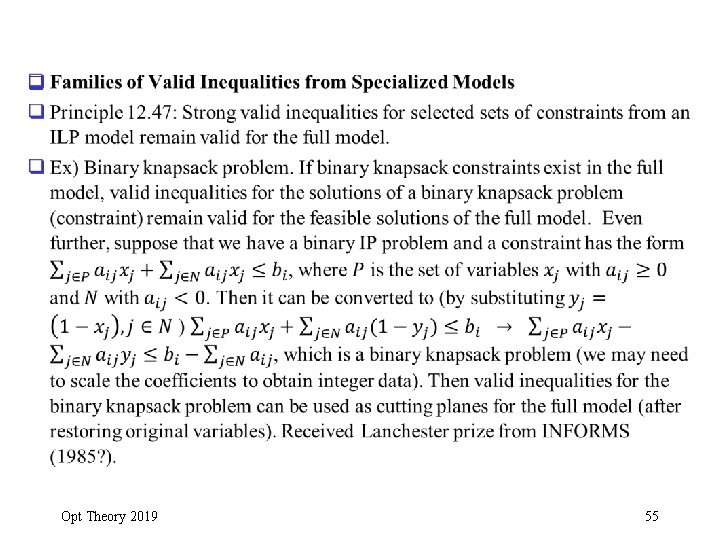


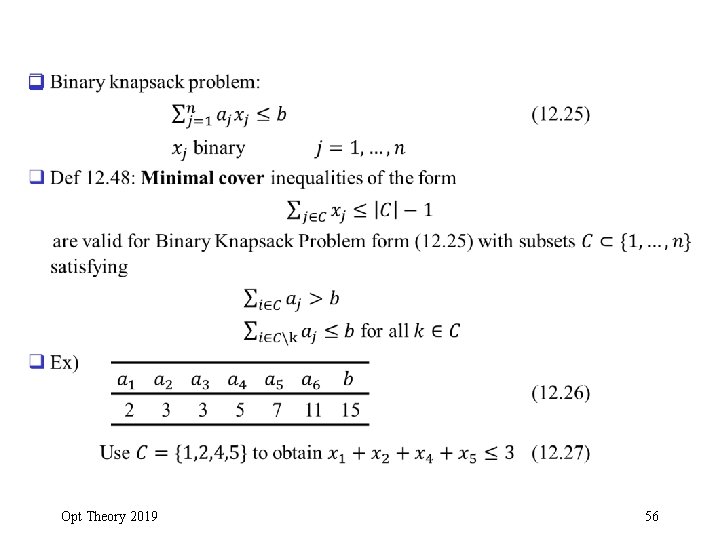

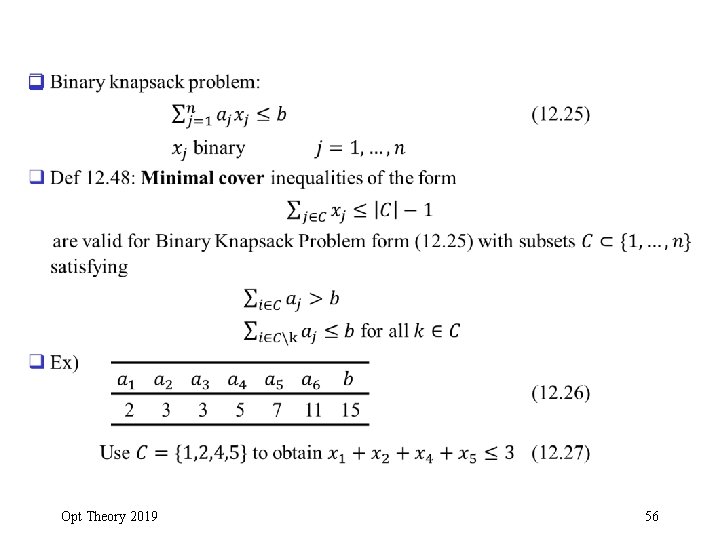


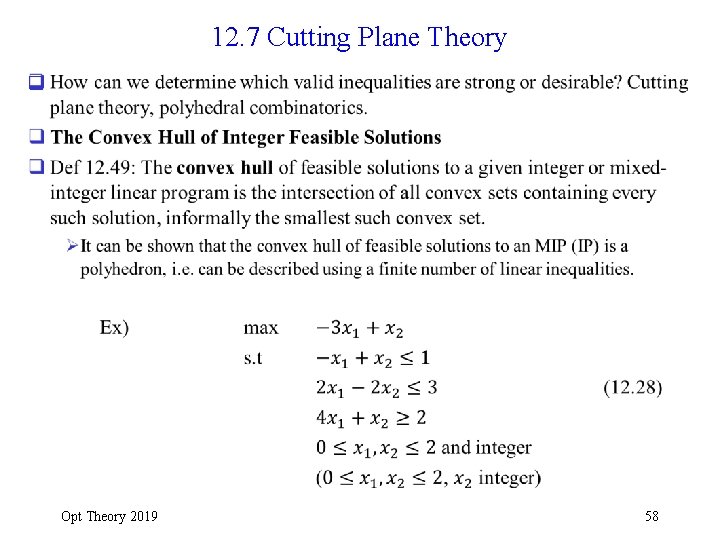

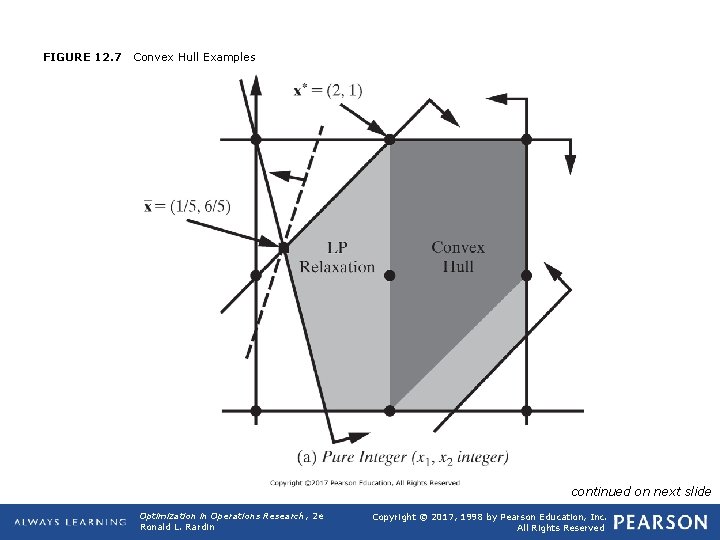

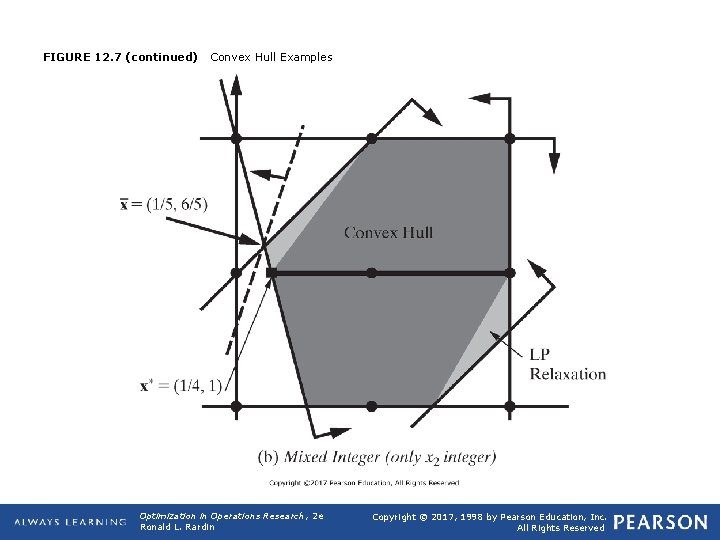

12.7 Cutting Plane Theory

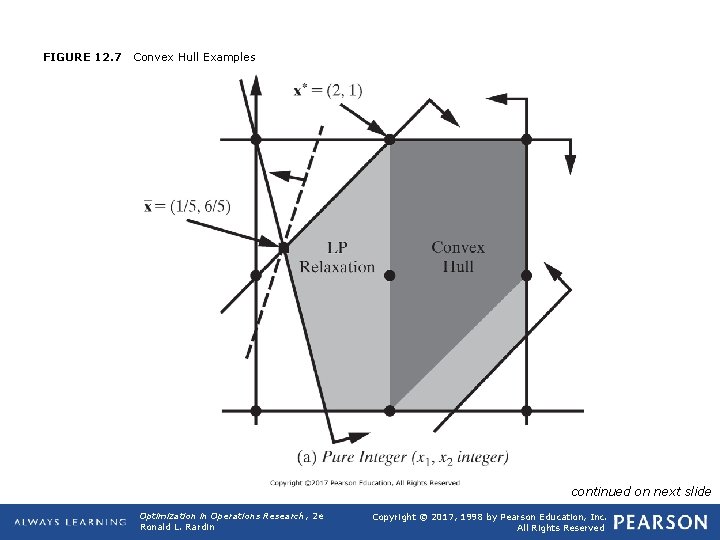

FIGURE 12.7 Convex Hull Examples (continued on next slide)

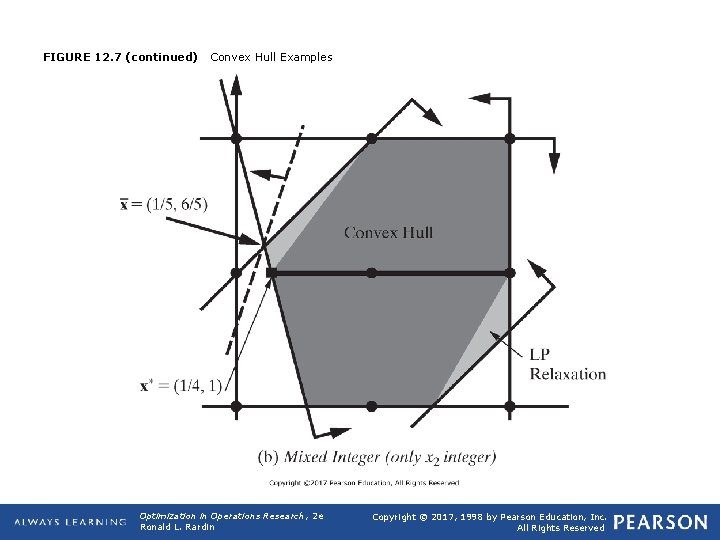

FIGURE 12.7 (continued) Convex Hull Examples

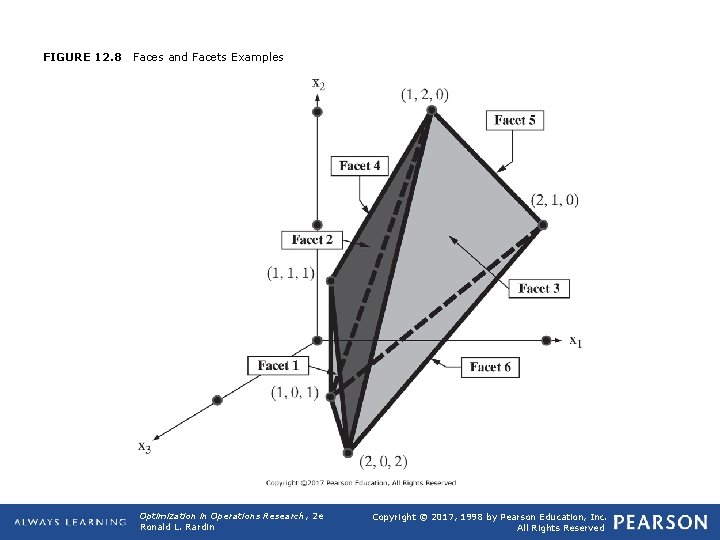

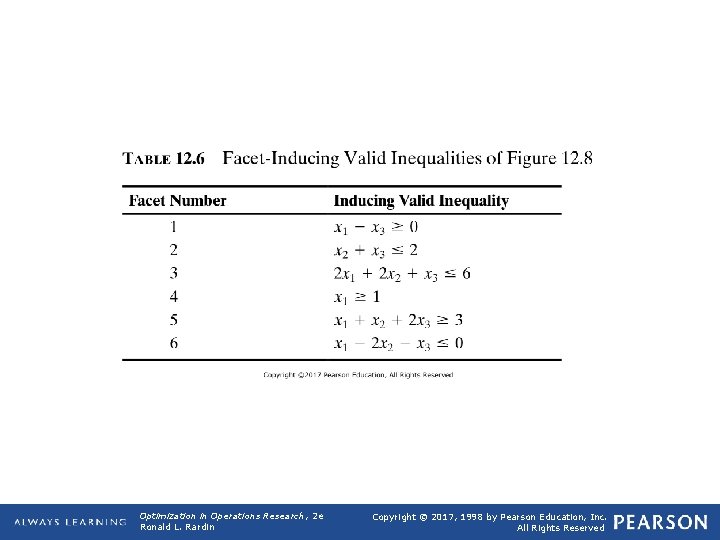

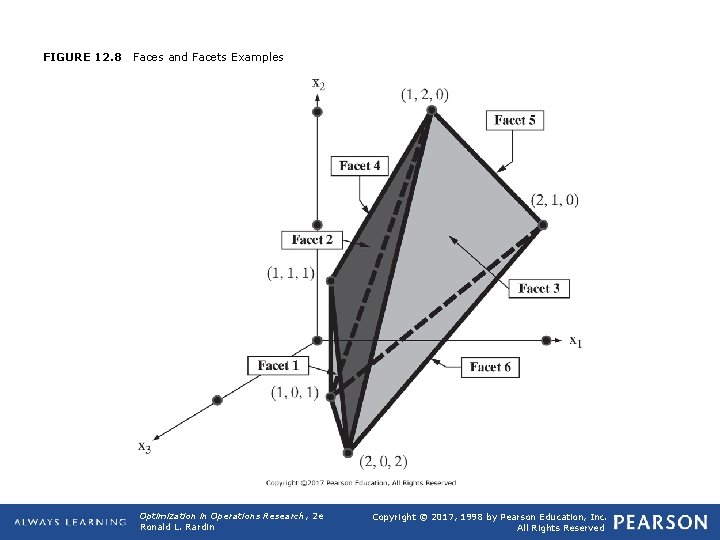

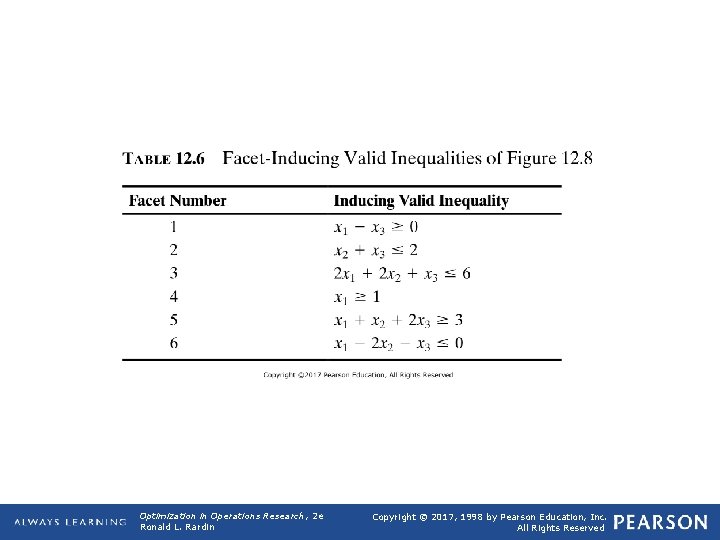

Linear Programs over Convex Hulls

- Principle 12.50: Assuming rational coefficient data, convex hulls of solutions to integer and mixed-integer linear programs are polyhedral sets, that is, sets fully defined by a finite number of linear constraints.
  - Proof not given here.
- Principle 12.51: If an ILP or MILP has a finite optimum, it has one at an extreme point of the convex hull of its integer-feasible solutions (need proof).
  - It can be shown that an extreme point of the convex hull is a feasible solution to the ILP or MILP. Hence optimizing a linear function over the convex hull provides an optimal solution to the ILP or MILP (if a finite optimum exists).
- Principle 12.51 (with its converse) says that solving the ILP or MILP and optimizing over the convex hull are equivalent (if a finite optimum exists).

Faces, Facets, and Categories of Valid Inequalities

- Def 12.52: A face of the convex hull is any subset satisfying some valid inequality as an equality at all points of the subset.

- Def 12.53: A facet is a face of maximum dimension, that is, of dimension one less than the dimension of the convex hull itself.
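A two-variable example may help separate the notions of valid inequality, face, and facet. The set $S$ below is a small illustration constructed here, not one of the examples in Figures 12.7 to 12.9.

```latex
\begin{aligned}
S &= \{\, x \in \{0,1\}^2 : 2x_1 + 2x_2 \le 3 \,\} = \{(0,0),\ (1,0),\ (0,1)\}, \\
\operatorname{conv}(S) &= \{\, x \in \mathbb{R}^2 : x_1 \ge 0,\ x_2 \ge 0,\ x_1 + x_2 \le 1 \,\},
 \qquad \dim \operatorname{conv}(S) = 2, \\[4pt]
x_1 + x_2 \le 1 &: \ \text{face } \{\, x \in \operatorname{conv}(S) : x_1 + x_2 = 1 \,\}
 \text{ is the segment from } (1,0) \text{ to } (0,1),\ \dim = 1 \ \Rightarrow\ \text{facet}, \\
x_1 \le 1 &: \ \text{face } \{(1,0)\},\ \dim = 0 \ \Rightarrow\ \text{a face, but not a facet}, \\
2x_1 + 2x_2 \le 3 &: \ \text{valid, but tight at no point of } \operatorname{conv}(S)
 \ \Rightarrow\ \text{the induced face is empty}.
\end{aligned}
```

Only the first inequality is needed, together with the nonnegativity constraints, to describe the convex hull; the original constraint $2x_1 + 2x_2 \le 3$ is valid but weaker.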

FIGURE 12.8 Faces and Facets Examples

TABLE 12.6 Facet-Inducing Valid Inequalities of Figure 12.8

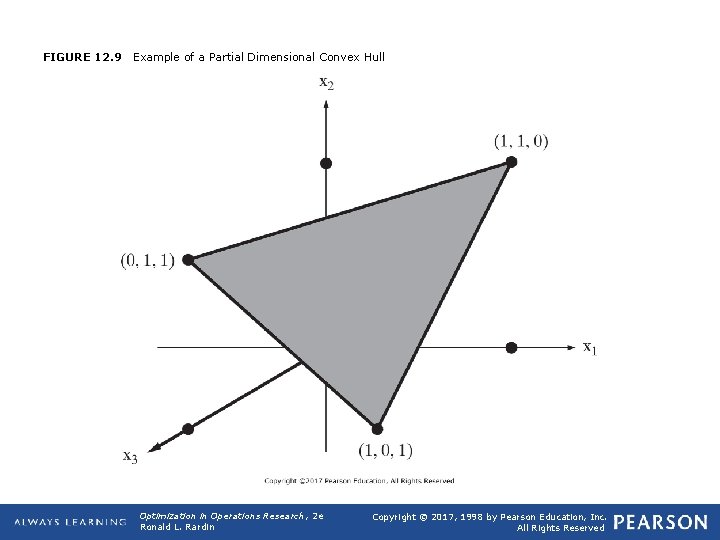

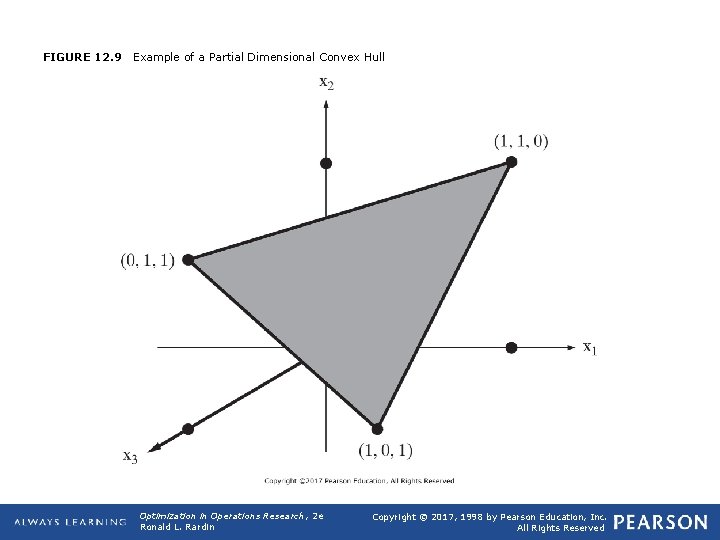

FIGURE 12.9 Example of a Partial Dimensional Convex Hull

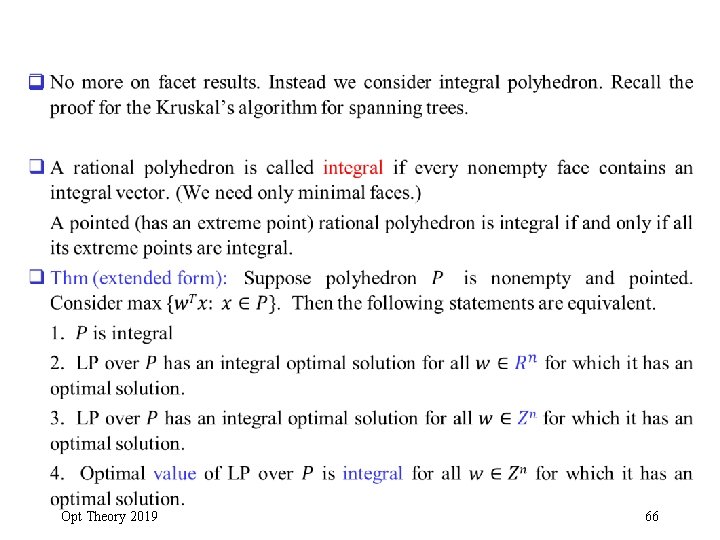

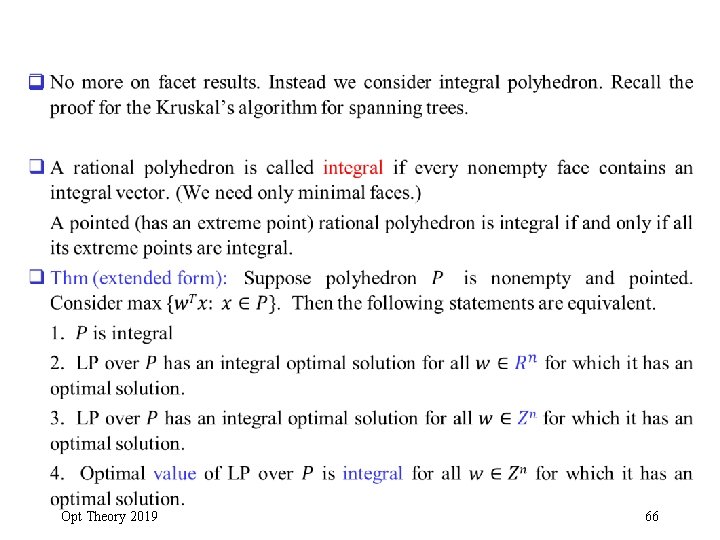


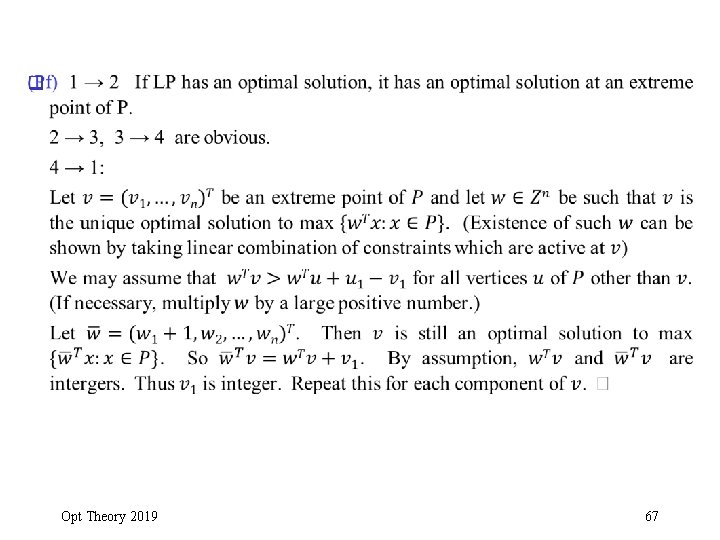

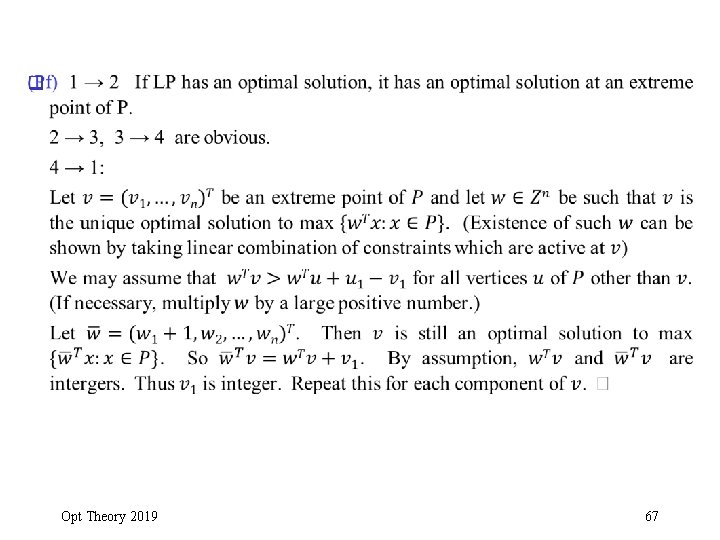


- The proof of the correctness of Kruskal's algorithm showed that solving the LP relaxation with any objective function yields an integer optimal solution. Therefore, by theorem, the LP relaxation defines an integral polyhedron (every extreme point is integral); that is, the LP relaxation gives the convex hull of the feasible integer solutions. This result has important implications for other results (e.g., the Lagrangian relaxation algorithm for the traveling salesman problem).