Chapter 12: Distributed DBMS Reliability
CSCI 5533: Distributed Information System
Presented by (Team 7): Yashika Tamang, Spencer Riner

Outline
12.0 Reliability
12.1 Reliability Concepts and Measures
12.2 Failures in Distributed DBMS
12.3 Local Reliability Protocols
12.4 Distributed Reliability Protocols
12.5 Dealing with Site Failures
12.6 Network Partitioning
12.7 Architectural Considerations
12.8 Conclusion
12.9 Class Exercise

12.0 Reliability
◦ A reliable DDBMS is one that can continue to process user requests even when the underlying system is unreliable, i.e., when failures occur.
◦ Data replication + easy scaling = reliable system.
◦ Distribution enhances system reliability, but distribution alone is not enough.
  ◦ A number of protocols must be implemented to exploit distribution and replication.
◦ Reliability is closely related to the problem of how to maintain the atomicity and durability properties of transactions.

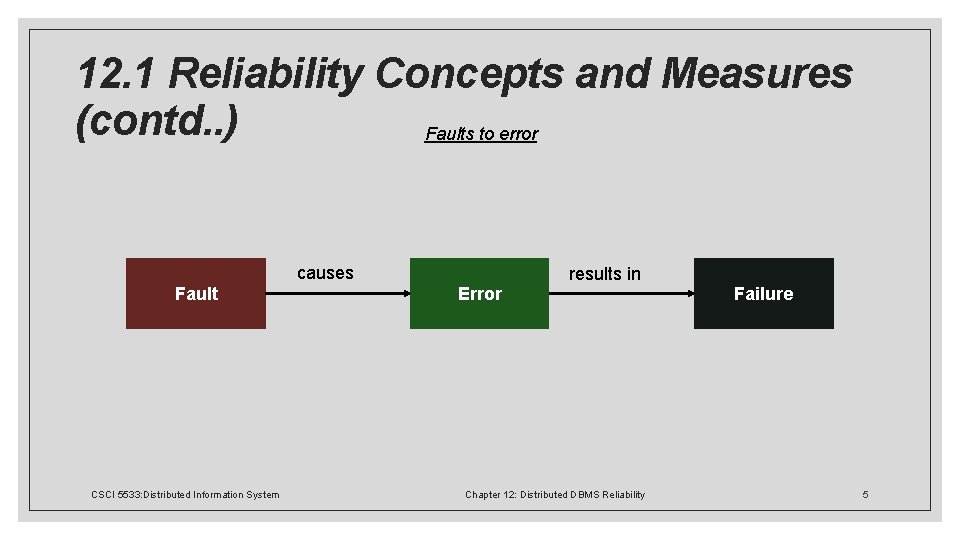

12.1 Reliability Concepts and Measures
Fundamental definitions
◦ 12.1.1 System, State and Failure
  ◦ Reliability refers to a system that consists of a set of components.
  ◦ The system has a state, which changes as the system operates.
  ◦ The behavior of the system is given by an authoritative specification, which indicates the valid behavior for each system state.
  ◦ Any deviation of a system from the behavior described in the specification is considered a failure.
  ◦ An erroneous state is an internal state of a system such that there exist circumstances in which further processing, by the normal algorithms of the system, will lead to a failure that is not attributed to a subsequent fault.
  ◦ The part of the state that is incorrect is an error.
  ◦ An error in the internal states of the components of a system, or in the design of a system, is a fault.

12.1 Reliability Concepts and Measures (contd.)
From faults to failures
◦ Fault (causes) -> Error (results in) -> Failure

12.1 Reliability Concepts and Measures (contd.)
Types of faults
◦ Hard faults
  ◦ Permanent
  ◦ Resulting failures are called hard failures
◦ Soft faults
  ◦ Transient or intermittent
  ◦ Account for more than 90% of all failures
  ◦ Resulting failures are called soft failures

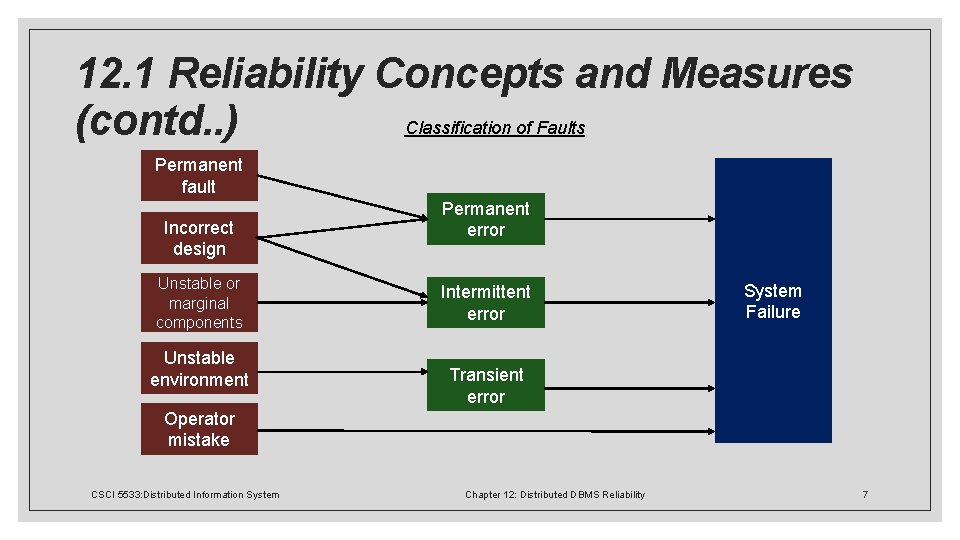

12.1 Reliability Concepts and Measures (contd.)
Classification of faults
[Figure: sources of system failure. Permanent faults, incorrect design, unstable or marginal components, an unstable environment, and operator mistakes give rise to permanent, intermittent, or transient errors, which in turn lead to system failure.]

12.1 Reliability Concepts and Measures (contd.)
Fault tolerance measures
◦ 12.1.2 Reliability and Availability
  ◦ Reliability:
    ◦ A measure of success with which a system conforms to some authoritative specification of its behavior.
    ◦ The probability that the system has not experienced any failures within a given time period.
    ◦ Typically used to describe systems that cannot be repaired or where the continuous operation of the system is critical.
  ◦ Availability:
    ◦ The fraction of the time that a system meets its specification.
    ◦ The probability that the system is operational at a given time t.

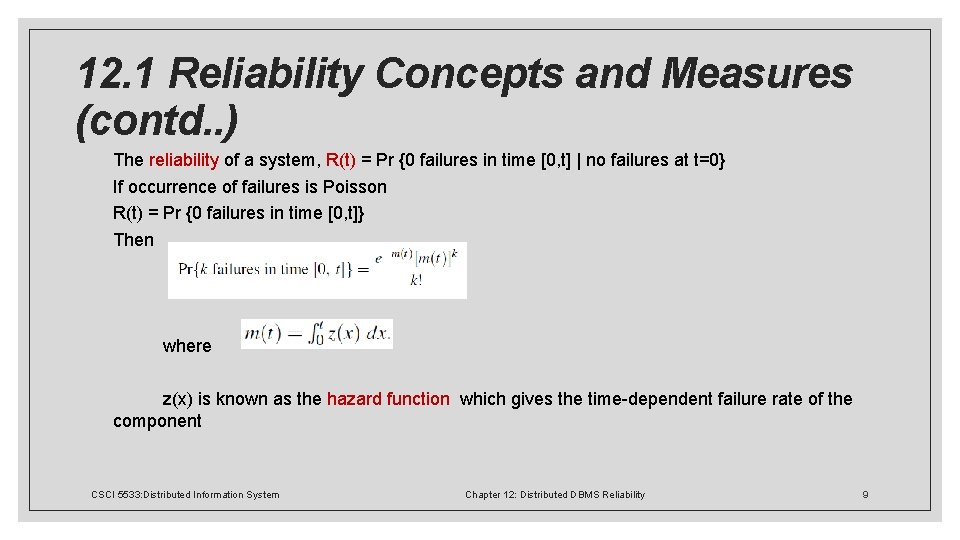

12.1 Reliability Concepts and Measures (contd.)
◦ The reliability of a system is
  $$R(t) = \Pr\{0 \text{ failures in time } [0, t] \mid \text{no failures at } t = 0\}$$
◦ If the occurrence of failures is Poisson, then
  $$R(t) = \Pr\{0 \text{ failures in time } [0, t]\}$$
  and
  $$\Pr\{k \text{ failures in time } [0, t]\} = \frac{e^{-m(t)}\,[m(t)]^{k}}{k!}, \qquad m(t) = \int_{0}^{t} z(x)\,dx$$
  where z(x) is known as the hazard function, which gives the time-dependent failure rate of the component.

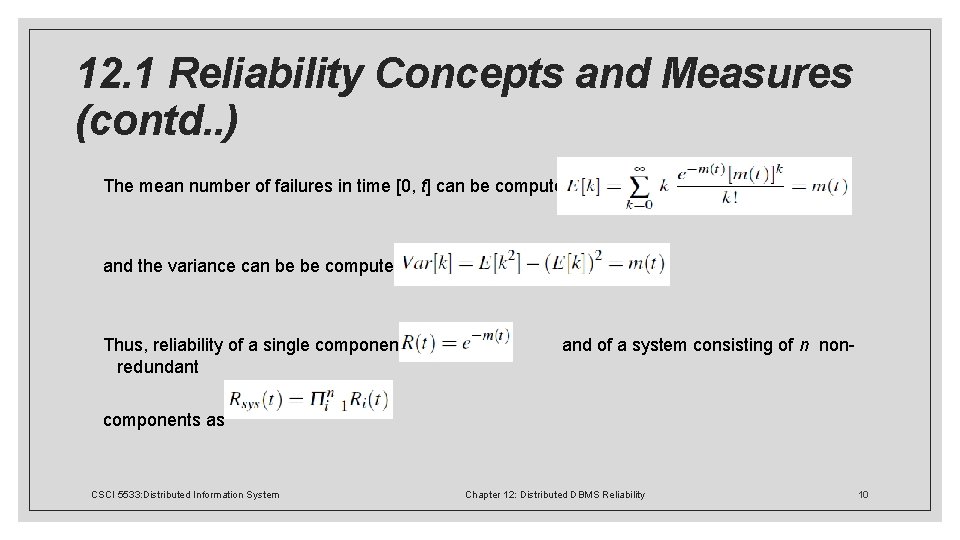

12.1 Reliability Concepts and Measures (contd.)
◦ With $m(t) = \int_{0}^{t} z(x)\,dx$, the mean number of failures in time [0, t] can be computed as
  $$E[k] = \sum_{k=0}^{\infty} k\,\frac{e^{-m(t)}\,[m(t)]^{k}}{k!} = m(t)$$
◦ and the variance can be computed as
  $$\mathrm{Var}[k] = E[k^{2}] - (E[k])^{2} = m(t)$$
◦ Thus, the reliability of a single component is
  $$R(t) = e^{-m(t)}$$
◦ and that of a system consisting of n nonredundant components is
  $$R_{sys}(t) = \prod_{i=1}^{n} R_{i}(t)$$

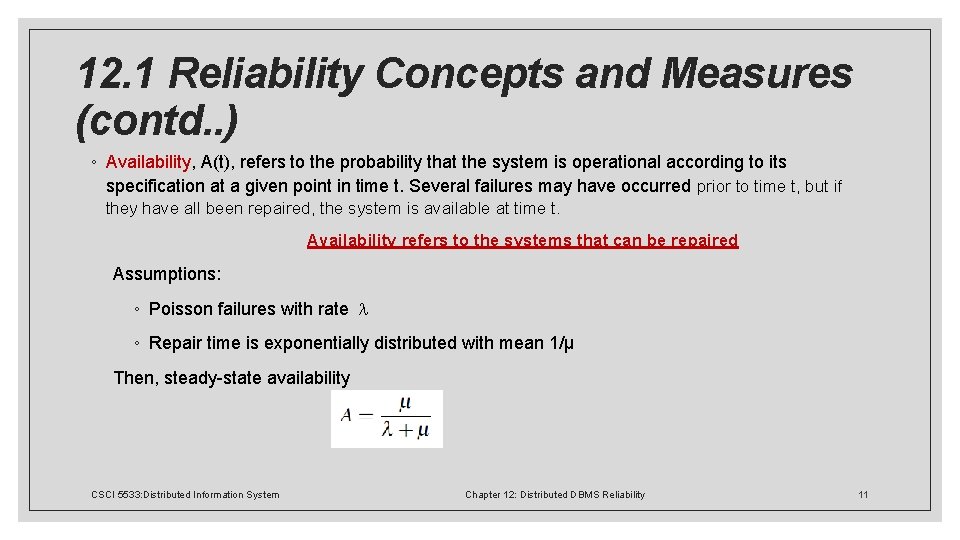

12.1 Reliability Concepts and Measures (contd.)
◦ Availability, A(t), refers to the probability that the system is operational according to its specification at a given point in time t.
◦ Several failures may have occurred prior to time t, but if they have all been repaired, the system is available at time t.
◦ Availability applies to systems that can be repaired.
◦ Assumptions:
  ◦ Poisson failures with rate λ
  ◦ Repair time is exponentially distributed with mean 1/μ
◦ Then the steady-state availability is
  $$A = \lim_{t \to \infty} A(t) = \frac{\mu}{\lambda + \mu}$$

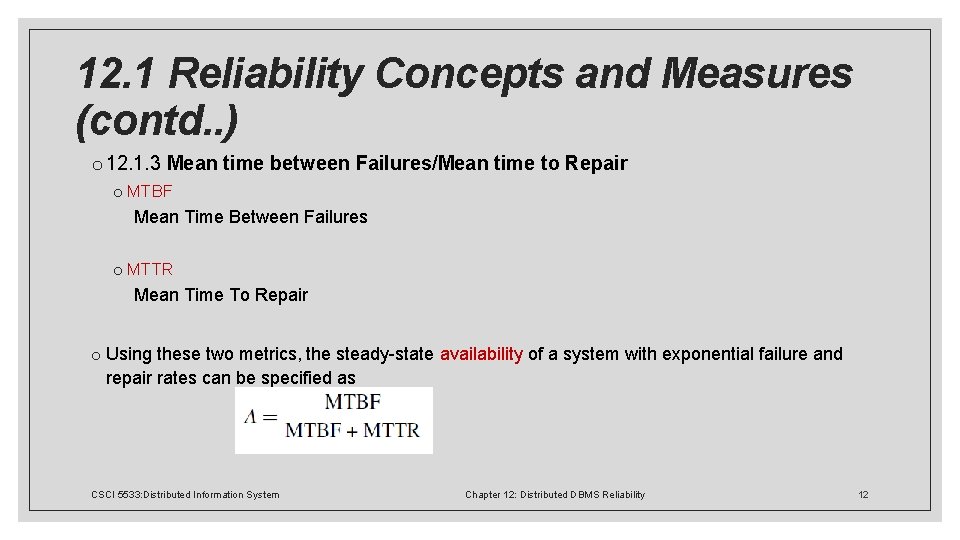

12.1 Reliability Concepts and Measures (contd.)
◦ 12.1.3 Mean Time Between Failures / Mean Time to Repair
  ◦ MTBF: Mean Time Between Failures
  ◦ MTTR: Mean Time To Repair
  ◦ Using these two metrics, the steady-state availability of a system with exponential failure and repair rates can be specified as
    $$A = \frac{\text{MTBF}}{\text{MTBF} + \text{MTTR}}$$

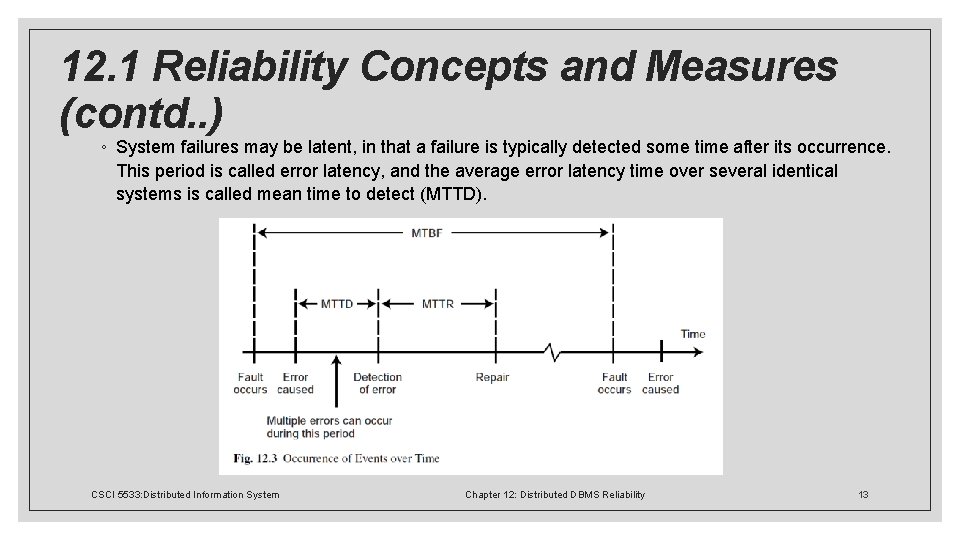

12.1 Reliability Concepts and Measures (contd.)
◦ System failures may be latent, in that a failure is typically detected some time after its occurrence.
◦ This period is called error latency, and the average error latency time over several identical systems is called mean time to detect (MTTD).
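The measures in Section 12.1 can be tried out numerically. The sketch below assumes a constant hazard rate z(x) = λ, so that m(t) = λt and R(t) = e^(-λt); the function names and the sample numbers are illustrative assumptions, not values from the slides.

```python
import math


def reliability(failure_rate: float, t: float) -> float:
    """R(t) = exp(-m(t)) with m(t) = failure_rate * t (constant hazard rate assumed)."""
    return math.exp(-failure_rate * t)


def system_reliability(component_rates, t: float) -> float:
    """Product of the component reliabilities for n nonredundant components."""
    result = 1.0
    for rate in component_rates:
        result *= reliability(rate, t)
    return result


def availability(mtbf: float, mttr: float) -> float:
    """Steady-state availability A = MTBF / (MTBF + MTTR)."""
    return mtbf / (mtbf + mttr)


if __name__ == "__main__":
    # Hypothetical system: two components, observed over 100 hours.
    print(system_reliability([0.001, 0.002], 100.0))
    # Hypothetical site: fails every 990 hours on average, 10 hours to repair.
    print(availability(990.0, 10.0))
```

Under the constant-rate assumption, a system of nonredundant components behaves like a single component whose failure rate is the sum of the individual rates, which is why adding components lowers reliability.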

12.2 Failures in Distributed DBMS
Types of failures
◦ Transaction failures
  ◦ Reasons: an error in the transaction, a potential deadlock, etc.
  ◦ The usual approach to handling such failures is to abort the transaction.
◦ Site (system) failures
  ◦ Reasons: hardware or software failures (e.g., processor or power supply failures)
  ◦ Result in the loss of main memory contents; the failed site becomes unreachable from other sites
  ◦ May be a total failure or a partial failure

12.2 Failures in Distributed DBMS (contd.)
◦ Media (disk) failures
  ◦ Refer to failures of the secondary storage devices that store the database.
  ◦ Reasons: operating system errors and hardware faults such as head crashes or controller failures
  ◦ Result in either all or part of the database being inaccessible or destroyed.
◦ Communication failures
  ◦ Types: lost (or undeliverable) messages and communication line failures
  ◦ Network partitioning
    ◦ If the network is partitioned, the sites in each partition may continue to operate.
    ◦ Executing transactions that access data stored in multiple partitions becomes a major issue.

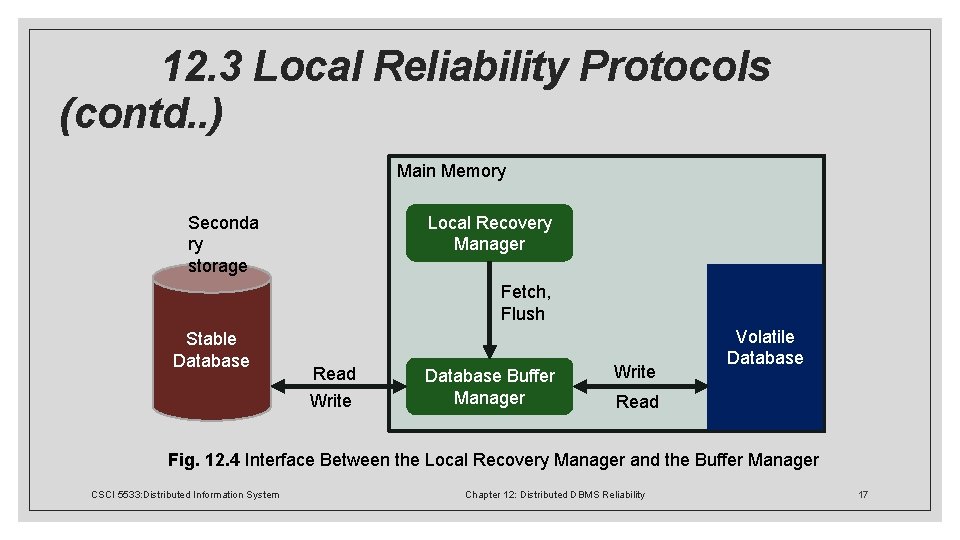

12.3 Local Reliability Protocols
Functions of the LRM
◦ LRM (Local Recovery Manager)
  ◦ Executes commands such as begin_transaction, read, write, commit and abort so as to maintain the atomicity and durability properties of the transactions.
◦ 12.3.1 Architectural considerations
  ◦ Volatile storage
    ◦ Consists of the main memory of the computer system (RAM).
    ◦ The database kept in volatile storage is the volatile database.
  ◦ Stable storage
    ◦ The database is permanently stored in secondary storage.
    ◦ Resilient to failures, and hence fails less frequently.
    ◦ The database kept in stable storage is the stable database.

12.3 Local Reliability Protocols (contd.)
[Figure: the Local Recovery Manager issues fetch and flush commands to the Database Buffer Manager, which reads and writes the volatile database in main memory and the stable database in secondary storage.]
Fig. 12.4 Interface Between the Local Recovery Manager and the Buffer Manager

12.3 Local Reliability Protocols (contd.)
◦ 12.3.2 Recovery Information
  ◦ When a system failure occurs, the volatile database is lost.
  ◦ The DBMS must maintain some information about its state at the time of the failure to be able to bring the database back to the state that it was in when the failure occurred.
  ◦ The recovery information depends on the method of executing updates:
    ◦ In-place updating
      ◦ Physically changes the value of the data item in the stable database; as a result, previous values are lost
    ◦ Out-of-place updating
      ◦ Does not change the value of the data item in the stable database but maintains the new value separately

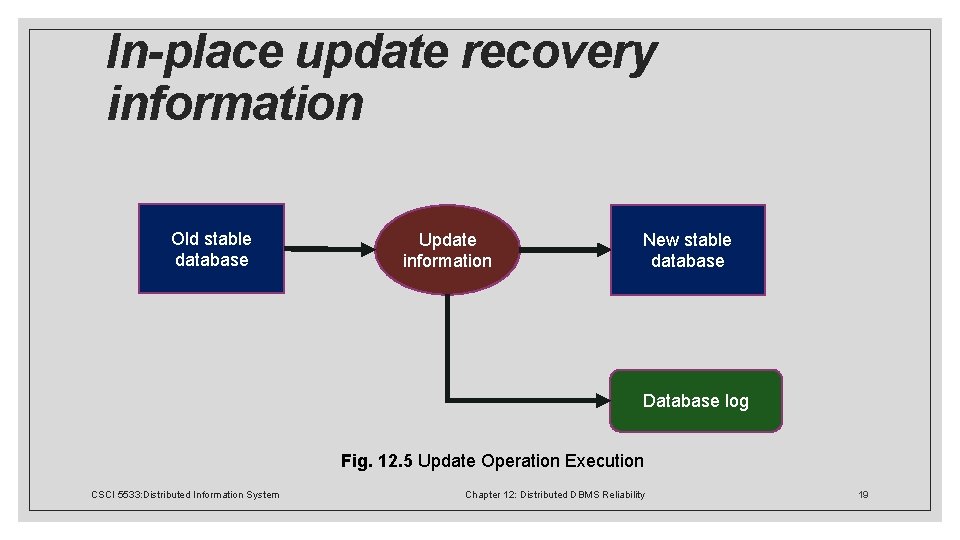

In-place update recovery information
[Figure: an update operation takes the old stable database state and the update information to the new stable database state, and records the change in the database log.]
Fig. 12.5 Update Operation Execution

Logging
Database log
◦ Every action of a transaction must not only perform the action but must also write a log record to an append-only file.
◦ The log contains information used by the recovery process to restore the consistency of a system.
◦ Each log record describes a significant event during transaction processing.
◦ Types of log records:
  ◦ <Ti, start>: written when transaction Ti has started
  ◦ <Ti, Xj, V1, V2>: written before Ti executes a write(Xj), where V1 is the old value before the write and V2 is the new value after the write
  ◦ <Ti, commit>: written when Ti has committed
  ◦ <Ti, abort>: written when Ti has aborted
  ◦ <checkpoint>
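As a rough illustration of the record types listed above, the following sketch models them as immutable Python objects written to an append-only log. The class and field names are assumptions made for this example; the slides only fix the information each record carries.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass(frozen=True)
class Start:
    tid: str            # <Ti, start>


@dataclass(frozen=True)
class Update:
    tid: str            # <Ti, Xj, V1, V2>
    item: str
    old: int            # V1, the before image (needed for UNDO)
    new: int            # V2, the after image (needed for REDO)


@dataclass(frozen=True)
class Commit:
    tid: str            # <Ti, commit>


@dataclass(frozen=True)
class Abort:
    tid: str            # <Ti, abort>


LogRecord = Union[Start, Update, Commit, Abort]


class AppendOnlyLog:
    """Records can be appended and read, never modified or removed."""

    def __init__(self) -> None:
        self._records: List[LogRecord] = []

    def append(self, record: LogRecord) -> int:
        """Append a record and return its position in the log."""
        self._records.append(record)
        return len(self._records) - 1

    def records(self) -> Tuple[LogRecord, ...]:
        return tuple(self._records)
```

Keeping both the old and the new value in each update record is what lets the same log serve UNDO and REDO.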

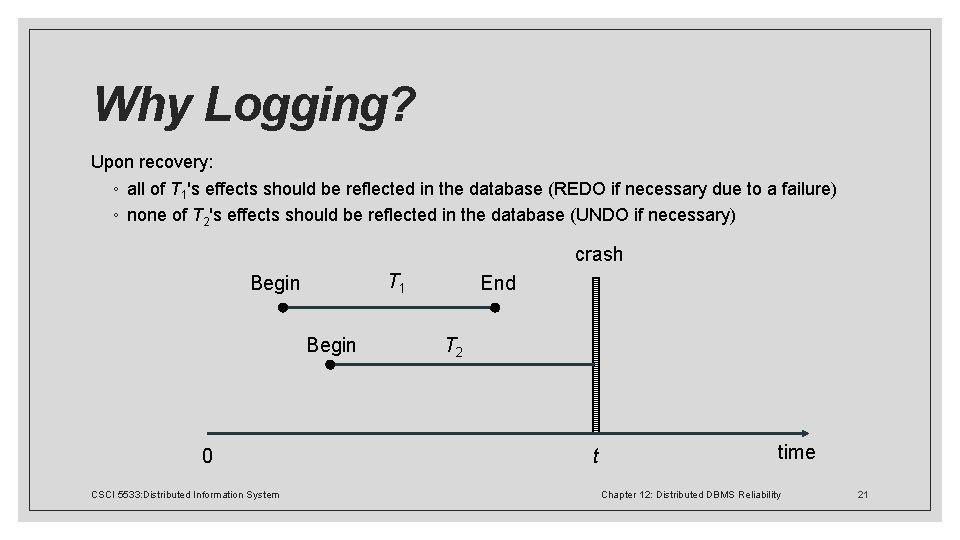

Why Logging?
[Timeline from time 0 to the crash at time t: T1 begins and ends before the crash; T2 begins before the crash and is still active when it occurs.]
Upon recovery:
◦ all of T1's effects should be reflected in the database (REDO if necessary due to a failure)
◦ none of T2's effects should be reflected in the database (UNDO if necessary)

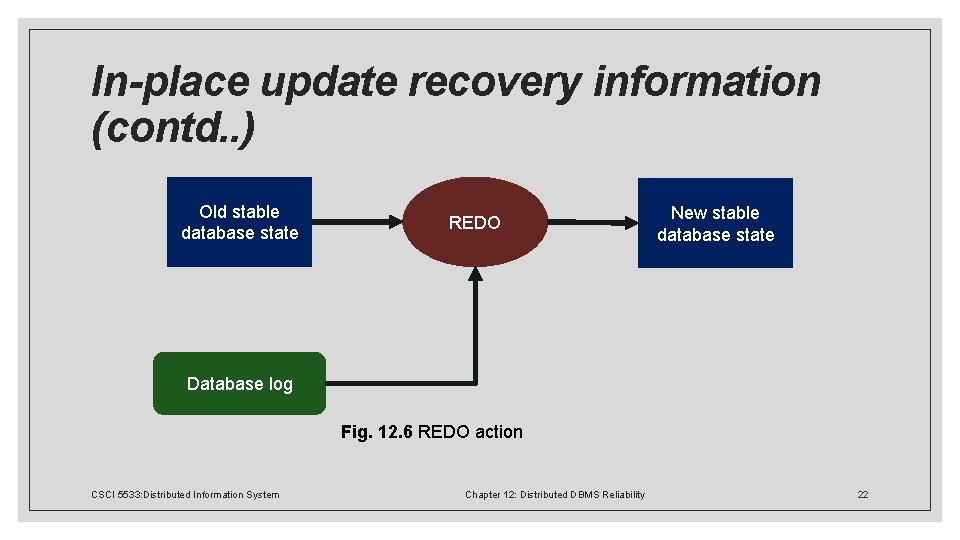

In-place update recovery information (contd.)
[Figure: REDO uses the database log to take the old stable database state to the new stable database state.]
Fig. 12.6 REDO action

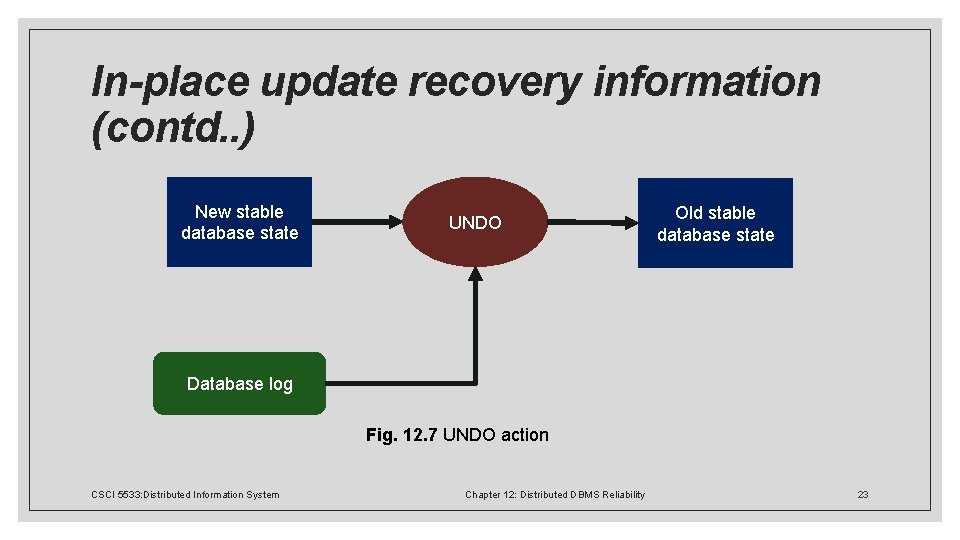

In-place update recovery information (contd.)
[Figure: UNDO uses the database log to take the new stable database state back to the old stable database state.]
Fig. 12.7 UNDO action

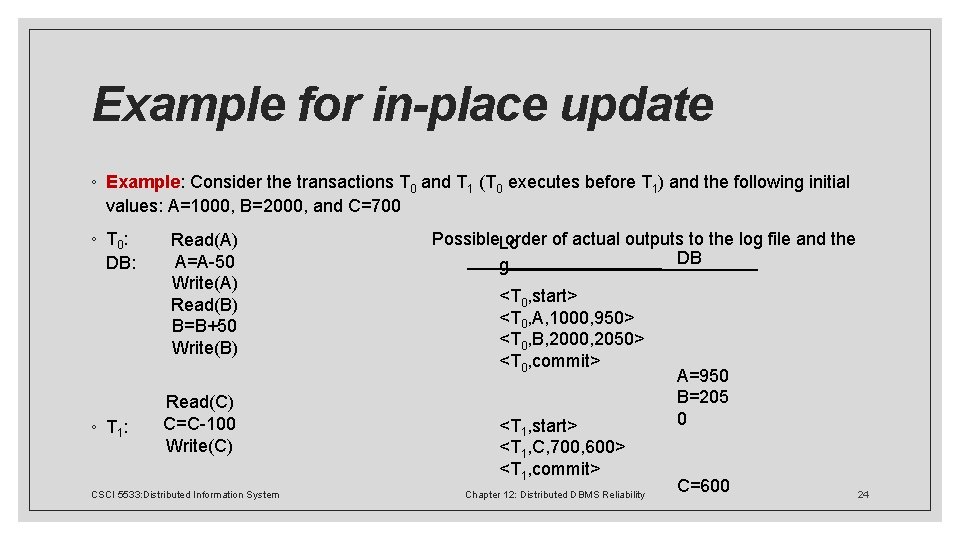

Example for in-place update
◦ Consider the transactions T0 and T1 (T0 executes before T1) and the following initial values: A=1000, B=2000, and C=700.
◦ T0: Read(A); A=A-50; Write(A); Read(B); B=B+50; Write(B)
◦ T1: Read(C); C=C-100; Write(C)
◦ Possible order of actual outputs to the log file and the DB:

  Log                      DB
  <T0, start>
  <T0, A, 1000, 950>
  <T0, B, 2000, 2050>
  <T0, commit>
                           A=950
                           B=2050
  <T1, start>
  <T1, C, 700, 600>
  <T1, commit>
                           C=600

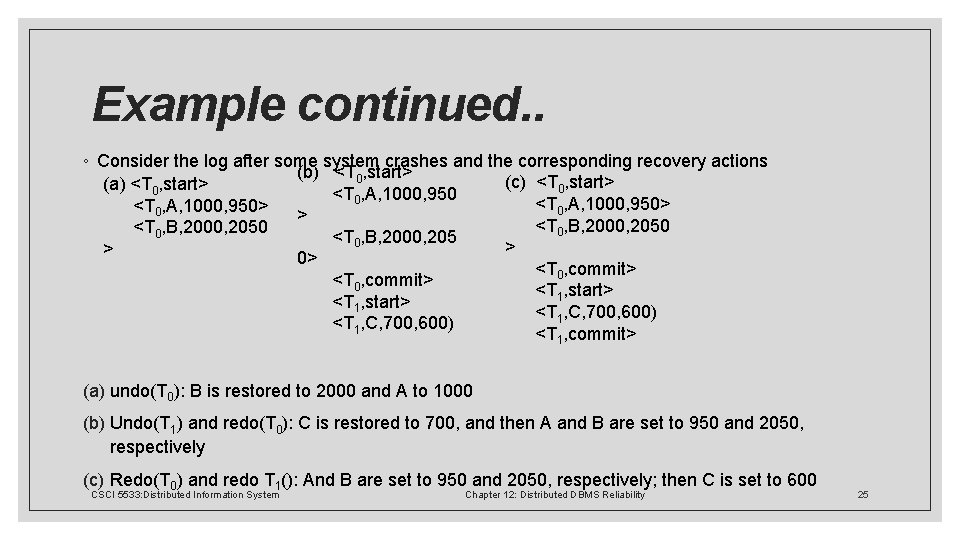

Example continued
◦ Consider the log as it appears after a system crash at three different points, and the corresponding recovery actions:
  (a) <T0, start>, <T0, A, 1000, 950>, <T0, B, 2000, 2050>
  (b) <T0, start>, <T0, A, 1000, 950>, <T0, B, 2000, 2050>, <T0, commit>, <T1, start>, <T1, C, 700, 600>
  (c) <T0, start>, <T0, A, 1000, 950>, <T0, B, 2000, 2050>, <T0, commit>, <T1, start>, <T1, C, 700, 600>, <T1, commit>
◦ Recovery actions:
  (a) Undo(T0): B is restored to 2000 and A to 1000.
  (b) Undo(T1) and redo(T0): C is restored to 700, and then A and B are set to 950 and 2050, respectively.
  (c) Redo(T0) and redo(T1): A and B are set to 950 and 2050, respectively; then C is set to 600.
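The recovery actions in this example can be sketched in a few lines. This is a minimal illustration under simplifying assumptions (log records as plain tuples, no checkpoints, no abort records), not the recovery procedure of any particular DBMS; the function name `recover` and the tuple layout are made up for the example.

```python
def recover(log, db):
    """Return the database state after crash recovery.

    log: list of ("start", T), ("write", T, X, old, new) and ("commit", T) tuples
    db:  dict with the values found in the stable database at crash time
    """
    db = dict(db)
    committed = {rec[1] for rec in log if rec[0] == "commit"}

    # UNDO: scan backward and restore the before images written by
    # transactions that have no commit record.
    for rec in reversed(log):
        if rec[0] == "write" and rec[1] not in committed:
            _, _, item, old, _ = rec
            db[item] = old

    # REDO: scan forward and reapply the after images written by
    # committed transactions.
    for rec in log:
        if rec[0] == "write" and rec[1] in committed:
            _, _, item, _, new = rec
            db[item] = new
    return db


# Log (b): T0 committed, T1 still active at the crash.
log_b = [
    ("start", "T0"),
    ("write", "T0", "A", 1000, 950),
    ("write", "T0", "B", 2000, 2050),
    ("commit", "T0"),
    ("start", "T1"),
    ("write", "T1", "C", 700, 600),
]

if __name__ == "__main__":
    # Whatever mix of old and new values reached the stable database,
    # recovery ends with A=950, B=2050, C=700.
    print(recover(log_b, {"A": 1000, "B": 2050, "C": 600}))
```

Because the log records carry both images, the result does not depend on which pages happened to reach the stable database before the crash.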

When to write log records into the stable store?
Assume a transaction T updates a page P.
◦ Fortunate case
  ◦ System writes P in stable database
  ◦ System updates stable log for this update
  ◦ SYSTEM FAILURE OCCURS (before T commits)
  ◦ We can recover (undo) by restoring P to its old state by using the log.
◦ Unfortunate case
  ◦ System writes P in stable database
  ◦ SYSTEM FAILURE OCCURS (before stable log is updated)
  ◦ We cannot recover from this failure because there is no log record to restore the old value.
◦ Solution: Write-Ahead Log (WAL) protocol

Write-Ahead Log protocol
Notice:
◦ If a system crashes before a transaction is committed, then all of its operations must be undone. Only the before images are needed (undo portion of the log).
◦ Once a transaction is committed, some of its actions might have to be redone. The after images are needed (redo portion of the log).
WAL protocol:
◦ Before a stable database is updated, the undo portion of the log should be written to the stable log.
◦ When a transaction commits, the redo portion of the log must be written to the stable log prior to the updating of the stable database.
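The two WAL rules can be illustrated with a toy store that keeps a volatile log buffer and page buffer next to a stable log and stable database. Everything here (the class `WalStore`, its method names, the tuple layout of the log records) is a hypothetical sketch meant to show the ordering of writes, not an actual buffer manager interface.

```python
class WalStore:
    """Toy store that forces log records to stable storage before pages."""

    def __init__(self, stable_db):
        self.stable_db = dict(stable_db)  # survives a crash
        self.stable_log = []              # survives a crash
        self.log_buffer = []              # volatile
        self.page_buffer = {}             # volatile

    def write(self, tid, item, new):
        """Update a page in the buffer and log its before and after images."""
        if item in self.page_buffer:
            old = self.page_buffer[item]
        else:
            old = self.stable_db[item]
        self.log_buffer.append((tid, item, old, new))
        self.page_buffer[item] = new

    def force_log(self):
        """Move all buffered log records to the stable log."""
        self.stable_log.extend(self.log_buffer)
        self.log_buffer.clear()

    def flush_page(self, item):
        """WAL rule 1: the undo information reaches the stable log first."""
        self.force_log()
        self.stable_db[item] = self.page_buffer[item]

    def commit(self, tid):
        """WAL rule 2: the redo information is stable once commit returns."""
        self.log_buffer.append((tid, "commit"))
        self.force_log()

    def crash(self):
        """Lose everything that lives in volatile storage."""
        self.log_buffer.clear()
        self.page_buffer.clear()
```

With this ordering the "unfortunate case" cannot arise: whenever a page of an uncommitted transaction is in the stable database, its before image is already in the stable log.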

Out-of-place update recovery information
Shadowing
◦ When an update occurs, don't change the old page, but create a shadow page with the new values and write it into the stable database.
◦ Update the access paths so that subsequent accesses are to the new shadow page.
◦ The old page is retained for recovery.
Differential files
◦ For each file F maintain
  ◦ a read-only part FR
  ◦ a differential file consisting of an insertions part DF+ and a deletions part DF−
◦ Thus, F = (FR ∪ DF+) − DF−
◦ Updates are treated as: delete the old value, insert the new value
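The differential-file formula maps directly onto set operations. The sketch below assumes that records are hashable tuples and that a record value is never deleted and later reinserted (plain sets cannot express that case); the function names are illustrative.

```python
def current_file(fr, df_plus, df_minus):
    """F = (FR union DF+) minus DF-."""
    return (set(fr) | set(df_plus)) - set(df_minus)


def update(df_plus, df_minus, old_record, new_record):
    """An update is a deletion of the old value plus an insertion of the new one."""
    df_minus.add(old_record)
    df_plus.add(new_record)


if __name__ == "__main__":
    fr = {("k1", 10), ("k2", 20)}     # read-only part, never modified
    df_plus, df_minus = set(), set()
    update(df_plus, df_minus, ("k1", 10), ("k1", 15))
    print(sorted(current_file(fr, df_plus, df_minus)))
```

The read-only part is untouched by the update, so discarding DF+ and DF− is enough to return to the old state of the file.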

Execution strategies
Dependent upon:
◦ Can the buffer manager decide to write some of the buffer pages being accessed by a transaction into stable storage, or does it wait for the LRM to instruct it?
  ◦ fix/no-fix decision
◦ Does the LRM force the buffer manager to write certain buffer pages into the stable database at the end of a transaction's execution?
  ◦ flush/no-flush decision
Possible execution strategies:
◦ no-fix/no-flush
◦ no-fix/flush
◦ fix/no-flush
◦ fix/flush

No-fix/No-flush
Abort
◦ Buffer manager may have written some of the updated pages into the stable database
◦ LRM performs transaction undo (or partial undo)
Commit
◦ LRM writes an “end_of_transaction” record into the log.
Recover
◦ For those transactions that have both a “begin_transaction” and an “end_of_transaction” record in the log, a partial redo is initiated by the LRM
◦ For those transactions that only have a “begin_transaction” record in the log, a global undo is executed by the LRM

No-fix/Flush
Abort
◦ Buffer manager may have written some of the updated pages into the stable database
◦ LRM performs transaction undo (or partial undo)
Commit
◦ LRM issues a flush command to the buffer manager for all updated pages
◦ LRM writes an “end_of_transaction” record into the log.
Recover
◦ No need to perform redo
◦ Perform global undo

Fix/No-flush
Abort
◦ None of the updated pages have been written into the stable database
◦ Release the fixed pages
Commit
◦ LRM writes an “end_of_transaction” record into the log.
◦ LRM sends an unfix command to the buffer manager for all pages that were previously fixed
Recover
◦ Perform partial redo
◦ No need to perform global undo

Fix/Flush
Abort
◦ None of the updated pages have been written into the stable database
◦ Release the fixed pages
Commit (the following must be done atomically)
◦ LRM issues a flush command to the buffer manager for all updated pages
◦ LRM sends an unfix command to the buffer manager for all pages that were previously fixed
◦ LRM writes an “end_of_transaction” record into the log.
Recover
◦ No need to do anything
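The Recover entries of the four strategies follow one pattern, which the sketch below makes explicit: undo is needed only if pages of active transactions may reach the stable database (no-fix), and redo is needed only if pages of committed transactions may be missing from it (no-flush). The function name and the dictionary it returns are assumptions made for this summary.

```python
def recovery_actions(fix: bool, flush: bool) -> dict:
    """Which recovery work a strategy requires after a system failure.

    fix:   pages stay fixed in the buffer until the transaction ends
    flush: updated pages are forced to the stable database at commit
    """
    return {
        "redo": not flush,  # committed updates may not be in the stable database
        "undo": not fix,    # uncommitted updates may be in the stable database
    }


if __name__ == "__main__":
    for fix in (False, True):
        for flush in (False, True):
            name = ("fix" if fix else "no-fix") + "/" + ("flush" if flush else "no-flush")
            print(name, recovery_actions(fix, flush))
```

The cheapest recovery (fix/flush) comes at the price of the most expensive commit, which must flush, unfix, and log atomically.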

12.3 Local Reliability Protocols (contd.)
◦ 12.3.4 Checkpointing
  ◦ Process of building a "wall" that signifies the database is up-to-date and consistent
  ◦ Achieved in three steps:
    ◦ Write a begin_checkpoint record into the log
    ◦ Collect the checkpoint data into the stable storage
    ◦ Write an end_checkpoint record into the log
  ◦ The first and third steps ensure atomicity of the checkpointing operation
  ◦ Different alternatives for Step 2
    ◦ Transaction-consistent checkpointing: incurs a significant delay
    ◦ Alternatives: action-consistent checkpoints, fuzzy checkpoints

12.3 Local Reliability Protocols (contd.)
◦ 12.3.5 Handling Media Failures
  ◦ May be catastrophic or result in partial loss
  ◦ Maintain an archive copy of the database and the log on a different storage medium
    ◦ Typically magnetic tape or CD-ROM
  ◦ Three levels of media hierarchy: main memory, random access disk storage, magnetic tape
  ◦ When a media failure occurs, the database is recovered by redoing and undoing transactions as stored in the archive log
  ◦ How is the archive database stored?
    ◦ Perform archiving concurrently with normal processing
    ◦ Archive the database incrementally so that each version contains the changes since the last archive

12.4 Distributed Reliability Protocols
◦ Protocols address the distributed execution of the begin_transaction, read, write, abort, commit, and recover commands
◦ Execution of the begin_transaction, read, and write commands does not cause significant problems
  ◦ Begin_transaction is executed by the transaction manager in the same manner as in the centralized case
  ◦ Abort is executed by undoing the transaction's effects
◦ Common implementation for distributed reliability protocols
  ◦ Assume there is a coordinator process at the originating site of a transaction
  ◦ At each site where the transaction executes there are participant processes
  ◦ Distributed reliability protocols are implemented between the coordinator and the participants

12.4 Distributed Reliability Protocols (contd.)
◦ 12.4.1 Components of Distributed Reliability Protocols
  ◦ Reliability techniques consist of commit, termination, and recovery protocols
  ◦ Commit and recover commands are executed differently in a distributed DBMS than in a centralized one
  ◦ Termination protocols are unique to distributed systems
  ◦ Termination vs. recovery protocols
    ◦ Opposite faces of the recovery problem
    ◦ Given a site failure, termination protocols address how the operational sites deal with the failure
    ◦ Recovery protocols address the procedure that the process at the failed site must go through to recover its state
  ◦ Commit protocols must maintain atomicity of distributed transactions
  ◦ Ideally, recovery protocols are independent: a recovering site does not need to consult other sites to terminate a transaction

12.4 Distributed Reliability Protocols (contd.)
◦ 12.4.2 Two-Phase Commit Protocol
  ◦ Insists that all sites involved in the execution of a distributed transaction agree to commit the transaction before its effects are made permanent
  ◦ Scheduler issues and deadlocks necessitate synchronization
  ◦ Global commit rule:
    ◦ If even one participant votes to abort the transaction, the coordinator must reach a global abort decision
    ◦ If all the participants vote to commit the transaction, the coordinator must reach a global commit decision
  ◦ A participant may unilaterally abort a transaction until an affirmative vote is registered
  ◦ Once cast, a participant's vote cannot be changed
  ◦ Timers are used to exit from wait states
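The global commit rule can be sketched as a single decision function at the coordinator. The message strings mirror the "vote-commit" / "vote-abort" and "global-commit" / "global-abort" names used in these slides; the function name, the dictionary of votes, and the treatment of a missing vote as a timeout are assumptions of this sketch.

```python
def coordinator_decision(votes, participants):
    """Apply the 2PC global commit rule.

    votes:        dict mapping participant id to "vote-commit" or "vote-abort";
                  a participant missing from the dict is treated as timed out
    participants: all participants that received the prepare message
    """
    for p in participants:
        if votes.get(p) != "vote-commit":
            # One abort vote (or one missing vote) is enough to abort.
            return "global-abort"
    # Every participant voted to commit.
    return "global-commit"


if __name__ == "__main__":
    sites = ["S1", "S2", "S3"]
    print(coordinator_decision({"S1": "vote-commit", "S2": "vote-commit", "S3": "vote-commit"}, sites))
    print(coordinator_decision({"S1": "vote-commit", "S2": "vote-abort", "S3": "vote-commit"}, sites))
```

Treating a missing vote as an abort is safe because no participant can have committed before the coordinator announces a global commit.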

12.4 Distributed Reliability Protocols (contd.)
◦ 12.4.2 Two-Phase Commit Protocol
  ◦ Centralized vs. linear/nested 2PC
    ◦ Centralized: participants do not communicate among themselves
    ◦ Linear: participants can communicate with one another
    ◦ Sites in the linear protocol are numbered and pass "vote-commit" or "vote-abort" messages to one another in order
    ◦ If the last node decides to commit, the decision is sent back to the coordinator node by node
  ◦ Distributed 2PC eliminates the second phase of the protocol
    ◦ Coordinator sends a prepare message to all participants
    ◦ Each participant sends its decision to all other participants and to the coordinator
  ◦ Participants must know the identity of the other participants in linear and distributed 2PC, but not in centralized 2PC

12.4 Distributed Reliability Protocols (contd.)
◦ 12.4.3 Variations of 2PC
  ◦ Presumed Abort 2PC Protocol
    ◦ If a participant polls the coordinator and there is no information about the transaction, the transaction is presumed to have aborted
    ◦ The coordinator can forget about a transaction immediately after aborting it
    ◦ Expected to be more efficient, since it saves message transmissions between the coordinator and the participants
  ◦ Presumed Commit 2PC Protocol
    ◦ Likewise, if no information about the transaction exists, it should be considered committed
    ◦ Most transactions are expected to commit
    ◦ Could cause inconsistency, so a collecting record must be created

12.5 Dealing with Site Failures
◦ 12.5.1 Termination and Recovery Protocols for 2PC
  ◦ Termination protocols
    ◦ Coordinator timeouts
      ◦ Timeout in the WAIT state: the coordinator can globally abort the transaction, but cannot commit it
      ◦ Timeout in the COMMIT or ABORT states: the coordinator resends the "global-commit" or "global-abort" command
    ◦ Participant timeouts
      ◦ Timeout in the INITIAL state: the coordinator must have failed in the INITIAL state
      ◦ Timeout in the READY state: the participant has voted to commit, but does not know the global decision of the coordinator

12.5 Dealing with Site Failures (contd.)
◦ 12.5.1 Termination and Recovery Protocols for 2PC
  ◦ Recovery protocols
    ◦ Coordinator site failures
      ◦ Coordinator fails while in the INITIAL state: starts the commit process upon recovery
      ◦ Coordinator fails while in the WAIT state: restarts the commit process for the transaction by sending the prepare message again
      ◦ Coordinator fails while in the COMMIT or ABORT states: does not need to act upon recovery (participants have been informed)
    ◦ Participant site failures
      ◦ Participant fails in the INITIAL state: upon recovery, the participant should abort unilaterally
      ◦ Participant fails while in the READY state: can be treated as a timeout in the READY state and handed to the termination protocol
      ◦ Participant fails while in the ABORT or COMMIT state: the participant takes no special action

12.5 Dealing with Site Failures (contd.)
◦ 12.5.2 Three-Phase Commit Protocol
  ◦ Designed as a non-blocking protocol
  ◦ To be non-blocking, the state transition diagram must contain neither of the following:
    ◦ A state that is "adjacent" to both a commit and an abort state
    ◦ A non-committable state that is "adjacent" to a commit state
  ◦ Adjacency refers to the ability to go from one state to another with a single transition
  ◦ To make 2PC non-blocking, add another state between the WAIT/READY and COMMIT states that serves as a buffer
  ◦ Three phases:
    ◦ INITIAL to WAIT/READY
    ◦ WAIT/READY to ABORT or PRECOMMIT
    ◦ PRECOMMIT to COMMIT
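The two adjacency conditions can be checked mechanically on a state transition diagram. The sketch below encodes participant diagrams for 2PC and 3PC as dictionaries from a state to its successor states and tests both conditions; the encoding and the function name are assumptions of this example, and the check covers only these two structural conditions.

```python
def satisfies_nonblocking_conditions(transitions, committable):
    """Check the two adjacency conditions on a state transition diagram.

    transitions: dict mapping each non-final state to the set of states
                 reachable from it with a single transition
    committable: set of states regarded as committable
    """
    for state, successors in transitions.items():
        to_commit = "COMMIT" in successors
        to_abort = "ABORT" in successors
        if to_commit and to_abort:
            return False  # adjacent to both a commit and an abort state
        if to_commit and state not in committable:
            return False  # non-committable state adjacent to a commit state
    return True


# Participant diagrams; the final COMMIT and ABORT states have no successors.
TWO_PC = {
    "INITIAL": {"READY", "ABORT"},
    "READY": {"COMMIT", "ABORT"},
}
THREE_PC = {
    "INITIAL": {"READY", "ABORT"},
    "READY": {"PRECOMMIT", "ABORT"},
    "PRECOMMIT": {"COMMIT"},
}

if __name__ == "__main__":
    print(satisfies_nonblocking_conditions(TWO_PC, committable=set()))
    print(satisfies_nonblocking_conditions(THREE_PC, committable={"PRECOMMIT"}))
```

In the 2PC diagram the READY state leads to both COMMIT and ABORT, which is exactly the situation the PRECOMMIT buffer state removes.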

12.5 Dealing with Site Failures (contd.)
◦ 12.5.2 Termination and Recovery Protocols for 3PC
  ◦ Termination protocols
    ◦ Coordinator timeouts
      ◦ Timeout in the WAIT state: the coordinator can globally abort the transaction, but cannot commit it
      ◦ Timeout in the PRECOMMIT state: the coordinator sends a prepare-to-commit message, then globally commits and informs the operational participants
      ◦ Timeout in the COMMIT or ABORT states: the participants can follow the termination protocol
    ◦ Participant timeouts
      ◦ Timeout in the INITIAL state: the coordinator must have failed in the INITIAL state
      ◦ Timeout in the READY state: the participant has voted to commit; the participants elect a new coordinator
      ◦ Timeout in the PRECOMMIT state: handled identically to a timeout in the READY state

12.6 Network Partitioning
◦ Types of network partitioning
  ◦ Simple partitioning: the network is divided into only two components
  ◦ Multiple partitioning: the network is divided into more than two components
◦ No non-blocking atomic commitment protocol exists that is resilient to multiple partitioning
◦ It is possible to design a non-blocking atomic commit protocol that is resilient to simple partitioning
◦ The concern is with the termination of transactions that were active at the time of partitioning
◦ Strategies: permit all partitions to continue normal operations and accept possible inconsistency of the database; or block operation in some partitions to maintain consistency
  ◦ Known as optimistic vs. pessimistic strategies
◦ Protocols: centralized, voting-based

12.6 Network Partitioning (contd.)
◦ 12.6.1 Centralized Protocols
  ◦ Based on the centralized concurrency control algorithms from Ch. 11
  ◦ Permit the operation of the partition that contains the central site
    ◦ The central site manages the lock tables
  ◦ Dependent on the concurrency control mechanism employed by the DDBMS
  ◦ Expect each site to be able to differentiate network partitioning from site failures properly

12.6 Network Partitioning (contd.)
◦ 12.6.2 Voting-based Protocols
  ◦ Use quorum-based voting as a commit method to ensure atomicity
  ◦ Generalized from the majority method
  ◦ Abort quorum + Commit quorum > Total number of votes
    ◦ Ensures a transaction cannot be committed and aborted at the same time
  ◦ Before a transaction commits, it must obtain a commit quorum
  ◦ Before a transaction aborts, it must obtain an abort quorum
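A small sketch shows why the quorum rule prevents two partitions from reaching opposite decisions. It assumes that every site holds a number of votes and that a partition can count only the votes of the sites it contains; the function names and the sample numbers are illustrative.

```python
def quorums_are_consistent(total_votes, abort_quorum, commit_quorum):
    """Abort quorum + commit quorum must exceed the total number of votes."""
    return abort_quorum + commit_quorum > total_votes


def partition_can_commit(votes_in_partition, commit_quorum):
    """A transaction may commit only after collecting a commit quorum."""
    return votes_in_partition >= commit_quorum


def partition_can_abort(votes_in_partition, abort_quorum):
    """A transaction may abort only after collecting an abort quorum."""
    return votes_in_partition >= abort_quorum


if __name__ == "__main__":
    # Hypothetical system with 7 votes, split into partitions holding 4 and 3.
    total, abort_q, commit_q = 7, 4, 4
    print(quorums_are_consistent(total, abort_q, commit_q))
    print(partition_can_commit(4, commit_q), partition_can_abort(3, abort_q))
```

Because the two quorums together exceed the total, no split of the votes lets one partition gather a commit quorum while another gathers an abort quorum; in the example, the smaller partition can do neither and must wait.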

12.7 Architectural Considerations
◦ Up until this point, the discussion has been abstract
◦ Execution depends heavily on:
  ◦ A highly detailed model of the architecture
  ◦ The recovery procedures that the local recovery manager implements
◦ Confine the architectural discussion to:
  ◦ Implementation of the coordinator and participant concepts within the framework of the transaction manager
  ◦ The coordinator's access to the database log
  ◦ The changes that need to be made in the local recovery manager operations

12.8 Conclusion
◦ Two-Phase Commit (2PC) and Three-Phase Commit (3PC) guarantee atomicity and durability when failures occur
◦ 3PC can be made non-blocking, which permits sites to continue operating without waiting for the recovery of failed sites
◦ It is not possible to design protocols that guarantee atomicity and permit each network partition to continue its operations
◦ Distributed commit protocols add overhead to the concurrency control algorithms
◦ Failures of omission: failures due to faults in components or the operating environment (covered by this chapter)
◦ Failures of commission: failures due to improper system design and implementation

12.9 Class Exercise
