Chapter 10 Relationships Between Measurement Variables

Statistical Relationships
• Correlation: measures the strength of a certain type of relationship between two measurement variables (how much one variable explains the other).
• Regression: gives a numerical method for trying to predict one measurement variable from another.
Copyright © 2005 Brooks/Cole, a division of Thomson Learning, Inc.

Statistical Relationships versus Deterministic Relationships
• Deterministic: if we know the value of one variable, we can determine the value of the other exactly, e.g. the relationship between the volume and weight of water.
• Statistical: natural variability exists in both measurements. Useful for describing what happens to a population or aggregate. Even when two variables are strongly correlated, we still cannot predict one exactly from the other; there will always be some natural variability.
• When researchers make claims about statistical relationships, they are not claiming that the relationship will hold for everyone, only that it describes the general trend in the population.

Statistical Significance
A relationship between two measurement variables is statistically significant if the chance of observing a relationship at least that strong in the sample, when actually nothing is going on in the population, is less than 5%.
• In other words, when there is in fact no relationship between the variables, fewer than 5% of samples will suggest that there is one.
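One way to make "the chance of seeing this relationship when nothing is going on" concrete is a small permutation check. This is only a sketch and not necessarily the method the chapter has in mind: it borrows the four (X, Y) pairs from the Computing the Correlation slide, and the helper name `pearson_r` is made up for illustration. Shuffling the Y values breaks any real link with X, so the shuffled correlations show what chance alone can produce.

```python
from itertools import permutations
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation from deviations about the means."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Data borrowed from the Computing the Correlation slide (illustrative use).
x = [55, 60, 38, 18]
y = [100, 120, 80, 65]
r_observed = pearson_r(x, y)

# Every reordering of y pairs the values up "by chance" (4! = 24 orderings).
shuffled_rs = [pearson_r(x, list(p)) for p in permutations(y)]

# Share of orderings whose correlation is at least as large as the observed one.
at_least_as_strong = sum(1 for r in shuffled_rs if r >= r_observed - 1e-12)
chance = at_least_as_strong / len(shuffled_rs)
print(f"observed r = {r_observed:.3f}, chance = {chance:.3f}")
```

With these four pairs only the original ordering reaches the observed correlation, so the chance is 1 in 24 (about 4.2%), which falls under the 5% cutoff. The check is one-sided (positive direction only), and with so few points it is an illustration rather than a serious analysis.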

Measuring Strength Through Correlation
A linear relationship: how closely do the points in a scatterplot follow a straight line?
Correlation (also called the Pearson product-moment correlation or the correlation coefficient), represented by the letter r:
• Indicator of how closely the values fall to a straight line.
• Measures linear relationships only; that is, it measures how close the individual points in a scatterplot are to a straight line.

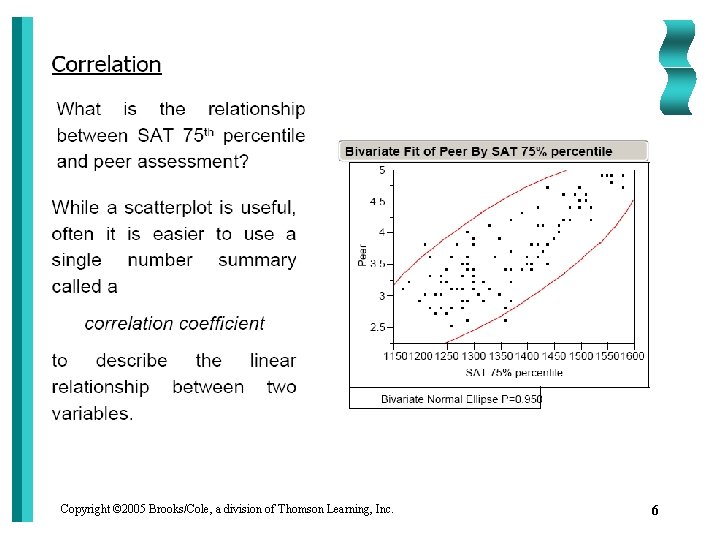

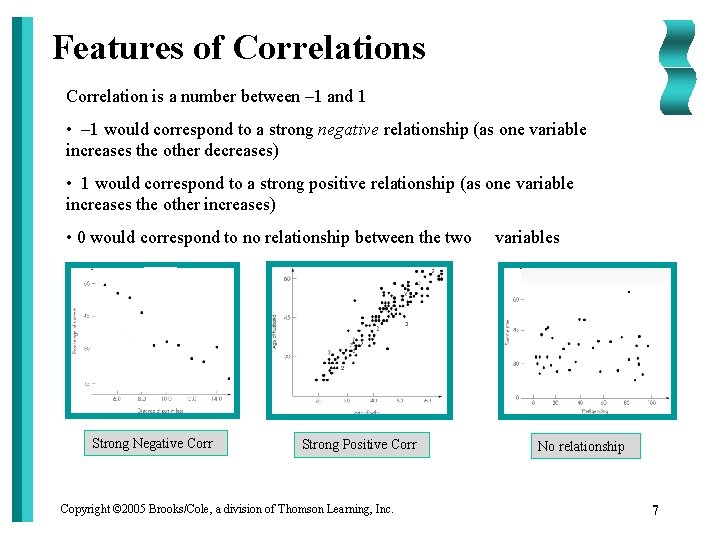

Features of Correlations
Correlation is a number between -1 and 1:
• -1 corresponds to a perfect negative linear relationship (as one variable increases, the other decreases).
• 1 corresponds to a perfect positive linear relationship (as one variable increases, the other increases).
• 0 corresponds to no linear relationship between the two variables.
Figure panels: Strong Negative Correlation, Strong Positive Correlation, No Relationship.

Features of Correlations
The most important features of a correlation are:
• Magnitude: how close the correlation is to 1 or -1 (how strong the relationship is).
• Sign: whether the correlation is positive or negative (as one variable increases, do we expect the other to increase or decrease?).
1. Correlations are unaffected if the units of measurement are changed. For example, the correlation between weight and height remains the same regardless of whether height is expressed in inches, feet or millimeters (as long as it isn’t rounded off).
2. Interpretation of correlation: the squared correlation gives the proportion of variation explained. If the correlation between two variables (say height and weight) is 0.6, then r² = 0.36, so about 36% of the variation in a person’s height is explained by their weight.
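Both numbered points can be checked with a few lines of Python. The sketch below reuses the four (X, Y) pairs from the Computing the Correlation slide; treating X as a length in inches and converting it to centimeters is an assumption made purely for illustration, and `pearson_r` is a hypothetical helper name.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation from deviations about the means."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


x_inches = [55, 60, 38, 18]            # units assumed for illustration only
y = [100, 120, 80, 65]
x_cm = [2.54 * v for v in x_inches]    # same measurements in different units

r_original = pearson_r(x_inches, y)
r_rescaled = pearson_r(x_cm, y)
print(f"r in inches = {r_original:.4f}, r in cm = {r_rescaled:.4f}")

# Interpretation: square the correlation to get the share of variation explained.
r = 0.6
share_explained = r ** 2
print(f"r = {r} explains about {share_explained:.0%} of the variation")
```

The two correlations agree up to floating-point rounding, and a correlation of 0.6 corresponds to about 36% of the variation explained.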

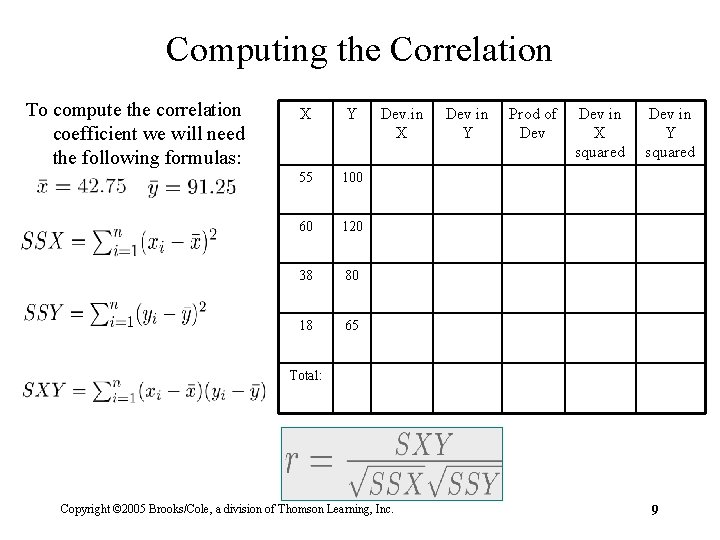

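Computing the Correlation
To compute the correlation coefficient we will need the deviations of each value from its mean, their products, and their squares, organized in the following table:

| X | Y | Dev. in X | Dev. in Y | Prod. of dev. | Dev. in X squared | Dev. in Y squared |
|---|---|---|---|---|---|---|
| 55 | 100 | | | | | |
| 60 | 120 | | | | | |
| 38 | 80 | | | | | |
| 18 | 65 | | | | | |
| Total: | | | | | | |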
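The table can be filled in mechanically. The sketch below assumes the standard deviation form of the Pearson formula, r = (sum of products of deviations) / sqrt((sum of squared X deviations) × (sum of squared Y deviations)), which matches the column headings above; the variable names are illustrative.

```python
from math import sqrt

x = [55, 60, 38, 18]
y = [100, 120, 80, 65]

mean_x = sum(x) / len(x)   # 42.75
mean_y = sum(y) / len(y)   # 91.25

# One list per table column.
dev_x = [v - mean_x for v in x]
dev_y = [v - mean_y for v in y]
products = [dx * dy for dx, dy in zip(dev_x, dev_y)]
dev_x_sq = [dx ** 2 for dx in dev_x]
dev_y_sq = [dy ** 2 for dy in dev_y]

# The "Total" row of the table.
total_products = sum(products)
total_dev_x_sq = sum(dev_x_sq)
total_dev_y_sq = sum(dev_y_sq)

r = total_products / sqrt(total_dev_x_sq * total_dev_y_sq)

for row in zip(x, y, dev_x, dev_y, products, dev_x_sq, dev_y_sq):
    print(row)
print("Totals:", total_products, total_dev_x_sq, total_dev_y_sq)
print(f"r = {r:.3f}")
```

For these four points the totals come to 1306.25, 1082.75 and 1718.75, giving r of roughly 0.96, a strong positive linear relationship.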

Specifying Linear Relationships with Regression
Goal: Find a straight line that comes as close as possible to the points in a scatterplot.
• The procedure to find the line is called regression.
• The resulting line is called the regression line.
• The formula that describes the line is called the regression equation.
• The most common procedure gives the least squares regression line.
How do we find this least squares regression line?

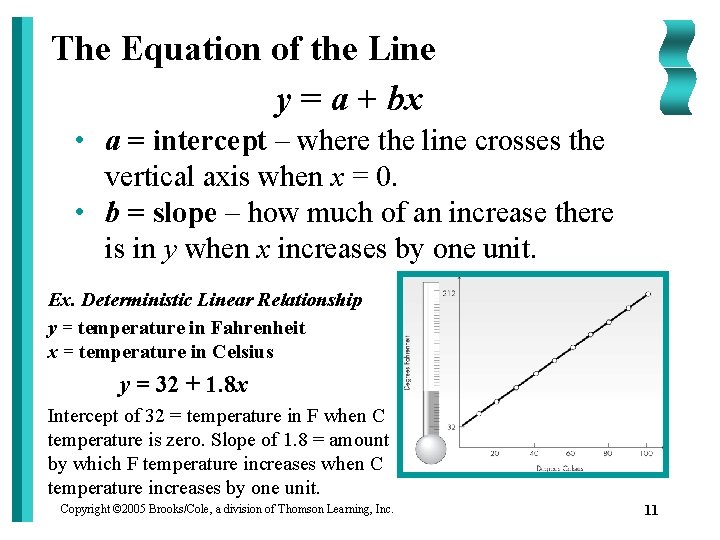

The Equation of the Line
y = a + bx
• a = intercept: where the line crosses the vertical axis, i.e. the value of y when x = 0.
• b = slope: how much y increases when x increases by one unit.
Ex. Deterministic Linear Relationship
y = temperature in Fahrenheit, x = temperature in Celsius
y = 32 + 1.8x
• Intercept of 32 = temperature in Fahrenheit when the Celsius temperature is zero.
• Slope of 1.8 = amount by which the Fahrenheit temperature increases when the Celsius temperature increases by one unit.
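The intercept and slope of the temperature example can be read straight off a small function. This is a minimal sketch; the function name `celsius_to_fahrenheit` is chosen here for illustration.

```python
def celsius_to_fahrenheit(x):
    """Deterministic line y = a + b*x with intercept a = 32 and slope b = 1.8."""
    a = 32.0   # value of y when x = 0
    b = 1.8    # change in y for each one-unit increase in x
    return a + b * x


at_zero = celsius_to_fahrenheit(0)                                   # the intercept
per_degree = celsius_to_fahrenheit(11) - celsius_to_fahrenheit(10)   # the slope
print(at_zero, round(per_degree, 6), celsius_to_fahrenheit(100))
```

Because the relationship is deterministic, every Celsius value maps to exactly one Fahrenheit value, with no scatter around the line.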

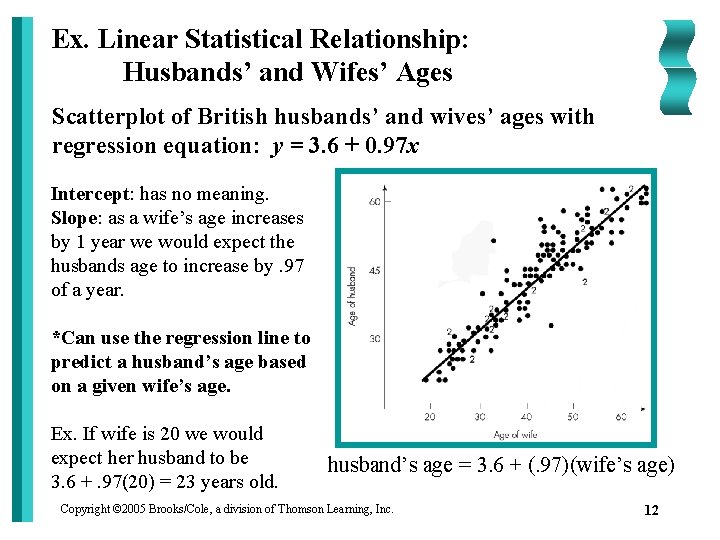

Ex. Linear Statistical Relationship: Husbands’ and Wives’ Ages
Scatterplot of British husbands’ and wives’ ages with regression equation: y = 3.6 + 0.97x, that is, husband’s age = 3.6 + (0.97)(wife’s age)
• Intercept: has no meaning here, since a wife’s age of 0 is not a realistic value.
• Slope: as a wife’s age increases by 1 year, we would expect the husband’s age to increase by 0.97 of a year.
• Can use the regression line to predict a husband’s age based on a given wife’s age.
Ex. If the wife is 20, we would expect her husband to be 3.6 + 0.97(20) = 23 years old.
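The prediction step is a one-line calculation, sketched below with the coefficients quoted on the slide. The function name `predict_husband_age` is hypothetical.

```python
def predict_husband_age(wife_age):
    """Regression equation from the slide: husband's age = 3.6 + 0.97 * wife's age."""
    intercept = 3.6
    slope = 0.97
    return intercept + slope * wife_age


predicted = predict_husband_age(20)
print(f"Predicted husband's age for a 20-year-old wife: {predicted:.1f}")

# The slope is the expected change in husband's age per extra year of wife's age.
change_per_year = predict_husband_age(21) - predict_husband_age(20)
print(f"Change per year: {change_per_year:.2f}")
```

Unlike the temperature example, this is a statistical relationship: 23 is the expected age for husbands of 20-year-old wives, and individual couples will vary around it.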

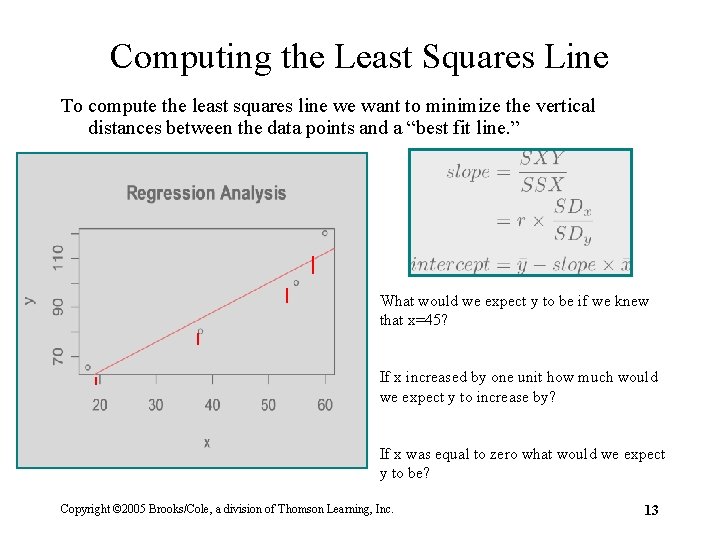

Computing the Least Squares Line
To compute the least squares line we want to minimize the sum of the squared vertical distances between the data points and a “best fit line.”
• What would we expect y to be if we knew that x = 45?
• If x increased by one unit, how much would we expect y to increase by?
• If x were equal to zero, what would we expect y to be?
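The three questions map directly onto a prediction, the slope, and the intercept. The sketch below uses the standard least squares formulas, slope = (sum of products of deviations) / (sum of squared X deviations) and intercept = mean of y minus slope times mean of x. It borrows the four (X, Y) pairs from the Computing the Correlation slide as illustrative input, which is an assumption; the plot on this slide may show different data.

```python
# Illustrative data (assumed to be the pairs from the correlation slide).
x = [55, 60, 38, 18]
y = [100, 120, 80, 65]

mean_x = sum(x) / len(x)
mean_y = sum(y) / len(y)

# Sum of products of deviations and sum of squared deviations in x.
sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
sxx = sum((xi - mean_x) ** 2 for xi in x)

slope = sxy / sxx                      # expected change in y per unit of x
intercept = mean_y - slope * mean_x    # expected y when x = 0


def predict(x_value):
    """Value on the fitted line y = intercept + slope * x."""
    return intercept + slope * x_value


print(f"y = {intercept:.2f} + {slope:.2f}x")
print(f"Expected y at x = 45: {predict(45):.2f}")
```

For this input the fitted line is roughly y = 39.68 + 1.21x, so the expected y at x = 45 is about 94.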

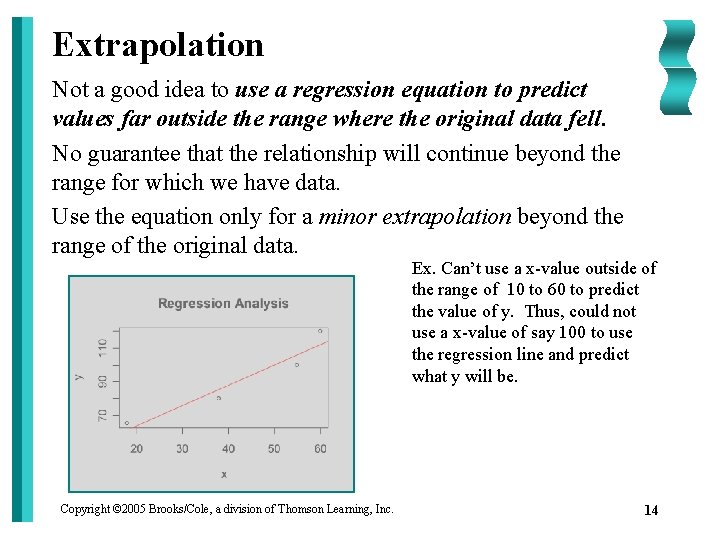

Extrapolation
• Not a good idea to use a regression equation to predict values far outside the range where the original data fell.
• No guarantee that the relationship will continue beyond the range for which we have data.
• Use the equation only for a minor extrapolation beyond the range of the original data.
Ex. If the x-values in the data range from 10 to 60, we should not use an x-value far outside that range to predict y. For instance, we could not plug an x-value of 100 into the regression line to predict what y will be.
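A simple guard can enforce this rule in code. The sketch below is one possible approach, not a prescribed one: the function name, the `margin` parameter for allowing a minor extrapolation, and the coefficients in the usage lines are all hypothetical, while the 10 to 60 range comes from the example above.

```python
def predict_within_range(x_value, intercept, slope, x_min=10, x_max=60, margin=0.0):
    """Predict y = intercept + slope * x, refusing x far outside the data range.

    `margin` (hypothetical parameter) widens the allowed range slightly to
    permit a minor extrapolation.
    """
    if x_value < x_min - margin or x_value > x_max + margin:
        raise ValueError(
            f"x = {x_value} is outside the data range [{x_min}, {x_max}]; "
            "the fitted relationship may not hold there"
        )
    return intercept + slope * x_value


# Illustrative coefficients only; they do not come from the slides.
print(predict_within_range(45, intercept=1.0, slope=2.0))

try:
    predict_within_range(100, intercept=1.0, slope=2.0)
except ValueError as err:
    print("Refused:", err)
```

Raising an error rather than silently returning a number makes it harder to report a prediction that the data cannot support.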
