
- Slides: 15

Changing Perspective…

- Common themes throughout past papers
  - Repeated simple games with a small number of actions
  - Mostly theoretical papers
  - Known available actions
  - Game-theory perspective
- How are these papers different?
  - Evolutionary approach
  - Empirical papers (no analytic proofs)
  - Complex games, large strategy space
  - AI perspective

Comparing Today’s Papers

- Similarities
  - Use a genetic algorithm to generate strategies
  - Evaluate generated strategies against a fixed set of opponents
  - Describe a (potentially) iterative approach
- Differences
  - Phelps focuses on the process within each iteration
  - Ficici focuses on the movement from one iteration to the next
  - Different types of games (constant sum vs. general sum)

A Novel Method for Automatic Strategy Acquisition in N-Player Non-zero-sum Games (Phelps et al.)

- Double auction setting --> potentially infinitely large strategy space (intractable game)
- Basis of an iterative approach (not really discussed in the paper) that improves a set of heuristic strategies through search
- Uses a genetic algorithm to find the best strategy for current market conditions
- Evaluates each strategy using replicator dynamics: the size of the strategy's basin of attraction (“market share”) quantifies its fitness

Replicator Dynamics: Calculating the Payoff Matrix

- Given a starting point, predicts the trajectory of the population mix of pure strategies using the replicator equation
- The utility is based on a roughly calibrated heuristic payoff matrix, generated by simulating the game to get the expected payoff for each agent
- Payoffs are treated as independent of type, justified by the type-dependent variations in the actual game being averaged out via sampling --> simplifies the payoff matrix
- Assumes that the game can be simulated
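The equation itself did not survive in these slides. As a hedged reconstruction, the standard single-population replicator equation is given below. The notation is assumed here, not taken from the slides: $m_j$ is the population share of pure strategy $j$, $\mathbf{m}$ is the full population mix, $e_j$ is the pure strategy $j$, and $u$ is the expected payoff read from the heuristic payoff matrix.

$$
\dot{m}_j = \bigl[\, u(e_j, \mathbf{m}) - u(\mathbf{m}, \mathbf{m}) \,\bigr]\, m_j
$$

Read this way, the share of strategy $j$ grows when its payoff against the current mix exceeds the population-average payoff and shrinks when it falls below it.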

Replicator Dynamics: Finding the Candidate Strategy

- After running many trajectories, calculate the size of the basin of attraction attributable to each pure strategy
- Run a perturbation analysis (increase the payoffs of a candidate strategy) and replot the replicator-dynamics direction field. This answers the question of which strategy is most worth concentrating improvement efforts on.
- In the paper’s example, this algorithm was run on 3 strategies: truth-telling (TT), Roth-Erev (RE), and Gjerstad-Dickhaut (GD). RE was found to be the best strategy on which to focus improvement efforts.
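The "run many trajectories, then measure market share" step can be illustrated with a minimal sketch. Everything below is an assumption made for illustration: it uses a hypothetical 2x2 symmetric two-player payoff matrix instead of the paper's N-player heuristic payoff matrix, simple Euler integration, and the average end-point mix as a stand-in for market share. The function names are hypothetical.

```python
import random

def replicator_step(mix, payoff, dt=0.05):
    """One Euler step of the replicator equation (symmetric 2-player game assumed)."""
    n = len(mix)
    # Expected payoff of each pure strategy against the current population mix.
    fitness = [sum(payoff[i][j] * mix[j] for j in range(n)) for i in range(n)]
    average = sum(mix[i] * fitness[i] for i in range(n))
    new_mix = [max(mix[i] + dt * mix[i] * (fitness[i] - average), 0.0)
               for i in range(n)]
    total = sum(new_mix)
    return [x / total for x in new_mix]  # renormalise to stay on the simplex

def random_mix(n, rng):
    """Draw a starting population mix uniformly from the simplex."""
    draws = [rng.expovariate(1.0) for _ in range(n)]
    total = sum(draws)
    return [d / total for d in draws]

def market_shares(payoff, trials=200, steps=2000, seed=0):
    """Average end-point mix over many random starts (a proxy for basin size)."""
    rng = random.Random(seed)
    n = len(payoff)
    totals = [0.0] * n
    for _ in range(trials):
        mix = random_mix(n, rng)
        for _ in range(steps):
            mix = replicator_step(mix, payoff)
        for i in range(n):
            totals[i] += mix[i]
    return [t / trials for t in totals]

# Hypothetical coordination game: strategy 0 pays more when both players choose it.
shares = market_shares([[2.0, 0.0], [0.0, 1.0]])
print(shares)
```

In this toy game each pure strategy is an attractor, so the two shares approximate the relative sizes of the two basins. A perturbation analysis in the spirit of the slide would raise one row of the payoff matrix slightly and compare the resulting shares.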

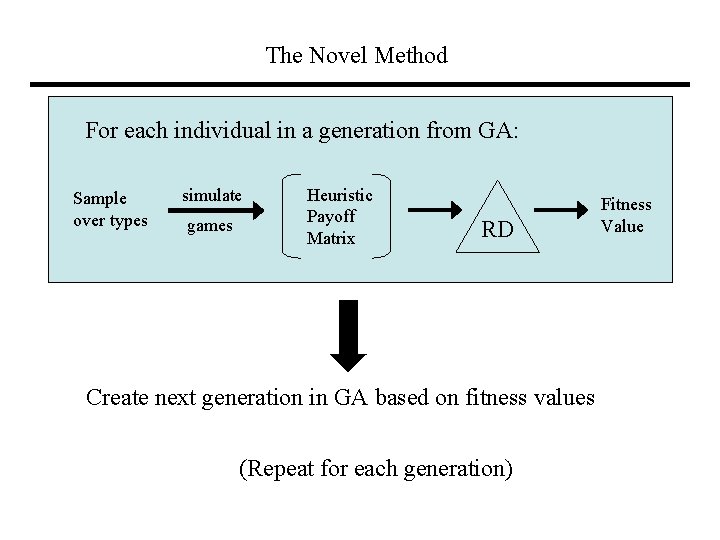

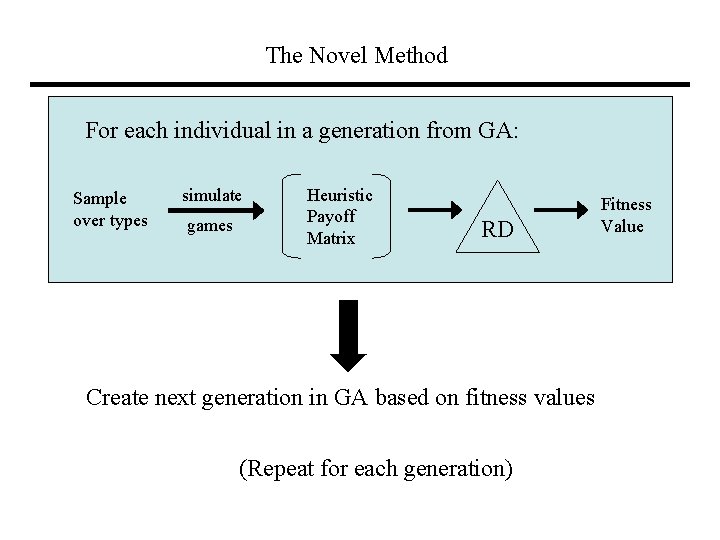

The Novel Method

- For each individual in a generation from the GA:
  - Sample over types --> simulate games --> heuristic payoff matrix --> RD --> fitness value
- Create the next generation in the GA based on the fitness values
- (Repeat for each generation)

Results

- The optimized strategy (OS) was found to be an RE strategy with stateless Q-learning. OS’s market share against the original RE, GD, and TT is 65%, greater than that of TT (32%), GD (3%), and the original RE (0%).
- However, it took 1800 CPU hours to compute (over 2 months)
- Keep in mind that this is only for one iteration. Presumably, the next step would be to substitute OS for RE in the set of strategies and run the algorithm again, but Phelps does not go into this.

Some Strengths and Weaknesses of Phelps paper

- Strengths
  - Application to a real-world setting (double auction)
  - Applies to general-sum games with (infinitely) large strategy spaces
- Weaknesses
  - Very large computation time. Additionally, time increases exponentially with the number of strategies (due to the computation of RD)
  - Depends on the RD having attractors (there exist games for which this does not hold)
- Any more? Other remarks?

Ficici and Pollack Paper

- Phelps does not address how to iterate his approach
- The second paper, A Game-Theoretic Memory Mechanism for Coevolution (Ficici and Pollack), uses a similar approach but focuses on moving from one iteration to the next
- In each iteration, performs a search for strategies (GA)
- Evaluates the fitness of strategies by playing them against the most fit opponent from the last iteration (N)
- Also keeps a set of potentially useful strategies in memory (M) and updates the memory every iteration

Motivation / Set up for Second Paper

- For symmetric zero-sum 2-player games (generalizes to asymmetric constant-sum 2-player games)
- In coevolutionary algorithms, the population contains the genetic diversity needed for effective search for new strategies AND often represents the solution to the problem --> these two objectives can conflict
- Avoid “forgetting”, which is problematic when the game has an intransitive cycle
- This paper separates these two functions:
  - Uses a memory mechanism to hold the solution and previously encountered strategies
  - This lets the population maintain the genetic diversity that is useful for effective search

Nash Memory Mechanism, some definitions

- For mixed strategy m
  - C(m) = support set of m
  - S(m) = security set of m = {all s : E(m, s) ≥ 0}
- Nash Memory Mechanism: N and M are mutually exclusive sets
  - N: holds the best mixed strategy over time
  - M: holds strategies not in N that may be useful later; has limited capacity c
- H: search heuristic (in this paper a GA was used)
- Q: set of strategies delivered by H
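As a small illustration of the definitions of C(m) and S(m), the sketch below computes both for mixed strategies in rock-paper-scissors. The game, the payoff values (+1 win, 0 tie, -1 loss), the dictionary representation of a mixed strategy, the function names, and the small numerical tolerance are all assumptions chosen for illustration, not taken from the paper.

```python
# Hypothetical symmetric zero-sum game: each key beats its value.
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}

def pure_payoff(a, b):
    """Payoff to pure strategy a against pure strategy b."""
    if a == b:
        return 0.0
    return 1.0 if BEATS[a] == b else -1.0

def expected_payoff(m, s):
    """E(m, s): expected payoff of mixed strategy m against pure strategy s."""
    return sum(p * pure_payoff(a, s) for a, p in m.items())

def support(m):
    """C(m): pure strategies that m plays with positive probability."""
    return {a for a, p in m.items() if p > 0}

def security(m, candidates, tol=1e-12):
    """S(m): candidates against which m does not lose in expectation."""
    return {s for s in candidates if expected_payoff(m, s) >= -tol}

pure_rock = {"rock": 1.0, "paper": 0.0, "scissors": 0.0}
uniform = {"rock": 1 / 3, "paper": 1 / 3, "scissors": 1 / 3}

print(support(pure_rock), security(pure_rock, BEATS))
print(support(uniform), security(uniform, BEATS))
```

Pure rock is secure only against rock and scissors, while the uniform mix (the Nash strategy of this game) is secure against all three pure strategies.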

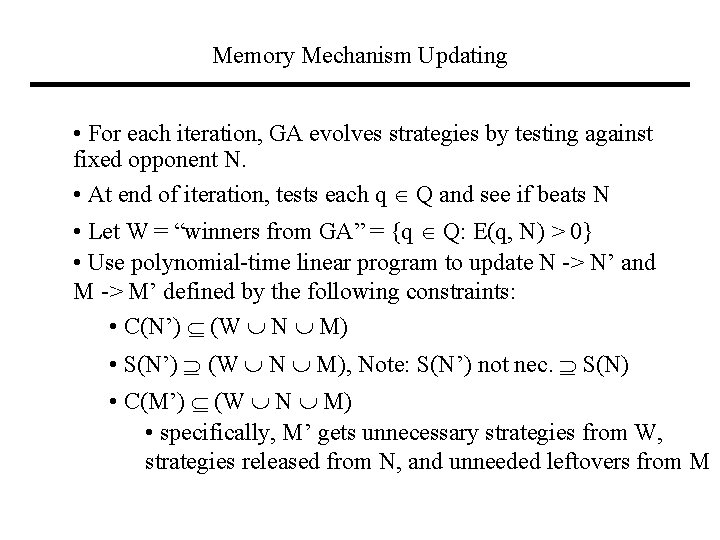

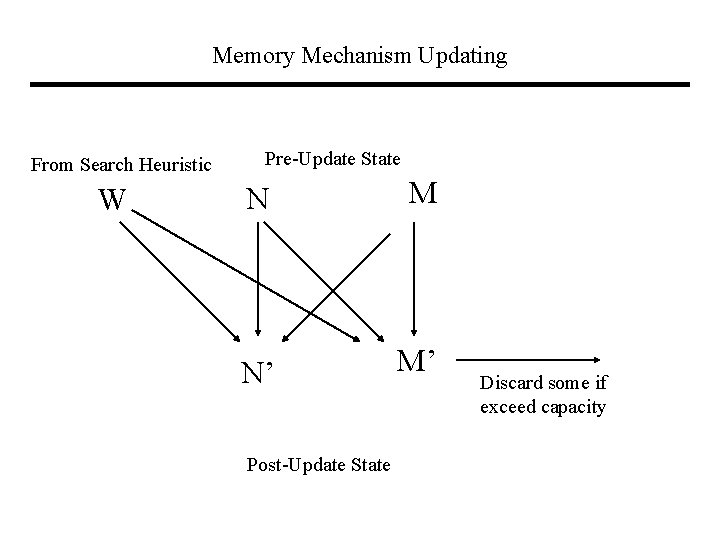

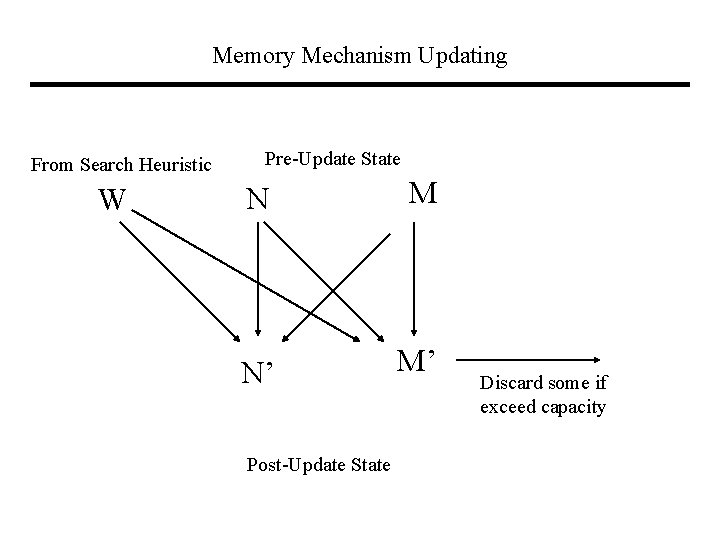

Memory Mechanism Updating

- In each iteration, the GA evolves strategies by testing them against the fixed opponent N.
- At the end of the iteration, test each q ∈ Q to see whether it beats N
- Let W = “winners from GA” = {q ∈ Q : E(q, N) > 0}
- Use a polynomial-time linear program to update N -> N’ and M -> M’, subject to the following constraints:
  - C(N’) ⊆ (W ∪ N ∪ M)
  - S(N’) ⊇ (W ∪ N ∪ M). Note: S(N’) does not necessarily contain S(N)
  - C(M’) ⊆ (W ∪ N ∪ M)
    - Specifically, M’ gets unnecessary strategies from W, strategies released from N, and unneeded leftovers from M
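The winner set W and the two conditions on N’ can be checked on a toy example. The sketch below does not implement the linear program. It only tests whether a hand-picked candidate N’ has its support inside W, N, and M combined and is secure against all of them, which is one assumed reading of the constraints on this slide. The rock-paper-scissors game, the data representation, and all function names are hypothetical.

```python
# Hypothetical symmetric zero-sum game: each key beats its value.
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}

def pure_payoff(a, b):
    """Payoff to pure strategy a against pure strategy b (+1 win, 0 tie, -1 loss)."""
    if a == b:
        return 0.0
    return 1.0 if BEATS[a] == b else -1.0

def payoff_vs_mixed(q, mixed):
    """E(q, N): expected payoff of pure strategy q against a mixed strategy."""
    return sum(p * pure_payoff(q, a) for a, p in mixed.items())

def payoff_of_mixed(mixed, s):
    """E(N, s): expected payoff of a mixed strategy against pure strategy s."""
    return sum(p * pure_payoff(a, s) for a, p in mixed.items())

def winners(Q, n_mix):
    """W = {q in Q : E(q, N) > 0}."""
    return {q for q in Q if payoff_vs_mixed(q, n_mix) > 0}

def satisfies_constraints(candidate, known, tol=1e-12):
    """Check C(N') is within `known` and N' is secure against all of `known`."""
    supp = {a for a, p in candidate.items() if p > 0}
    secure = all(payoff_of_mixed(candidate, s) >= -tol for s in known)
    return supp <= known and secure

N = {"rock": 1.0}           # current best strategy (assumed to be pure rock)
M = set()                   # empty memory in this toy example
Q = {"paper", "scissors"}   # strategies delivered by the search heuristic

W = winners(Q, N)
# N is treated as its support when forming the union (an assumption).
known = W | {a for a, p in N.items() if p > 0} | M

print(W)
print(satisfies_constraints({"paper": 1.0}, known))
print(satisfies_constraints(N, known))
```

Here only paper beats the current N, so W contains paper alone. Pure paper is secure against both rock and paper and therefore passes the check, while the old N (pure rock) fails because it loses to the new winner.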

Memory Mechanism Updating (diagram)

- From search heuristic: W
- Pre-update state: N, M
- Post-update state: N’, M’ (discard some if capacity is exceeded)

Strengths / Weaknesses of Ficici Paper

- Strengths
  - Incorporates the game-theoretic notion of Nash into the solution
  - Avoids the problems that come from forgetting in games with intransitive cycles
  - Time-efficient (polynomial time for the memory update)
  - Outperformed other memory mechanisms (BOG, DT) in tests
- Weaknesses
  - Allows for some memory loss in the update rule
  - No analytical proofs
  - Strong results only (seem to) hold for 2-player constant-sum games
    - For general-sum games, lose efficiency and have multiple equilibria
  - Is performance as important when there are no intransitive cycles?

Concluding Remarks

- How do these papers compare to what we’ve seen previously?
- Values and contexts for different approaches?
- Other comments?