CERN openlab for Data Grid applications Sverre Jarp

Sverre Jarp, CERN openlab CTO
IT Department, CERN
June 2003

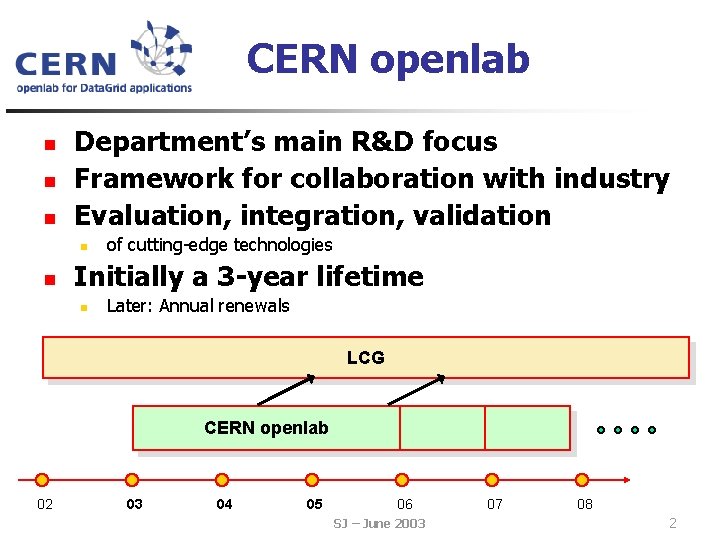

CERN openlab

- Department's main R&D focus
- Framework for collaboration with industry
- Evaluation, integration, validation of cutting-edge technologies
- Initially a 3-year lifetime
  - Later: annual renewals

[Timeline figure: LCG and CERN openlab on a year axis from 02 to 08]

Openlab sponsors

- 5 current partners
  - Enterasys:
    - 10 GbE core routers
  - HP:
    - Integrity servers (103 * 2-ways, 2 * 4-ways)
    - Two fellows (co-sponsored with CERN)
  - Intel:
    - 64-bit Itanium processors & 10 Gbps NICs
  - IBM:
    - Storage Tank file system (SAN FS) w/metadata servers and data servers (currently with 28 TB)
  - Oracle:
    - 10g Database software w/add-ons
    - Two fellows
- One contributor
  - Voltaire
    - 96-way Infiniband switch

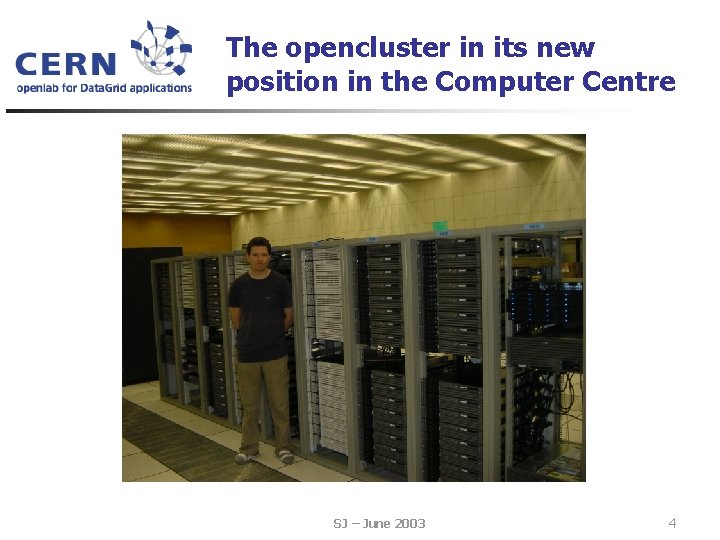

The opencluster in its new position in the Computer Centre

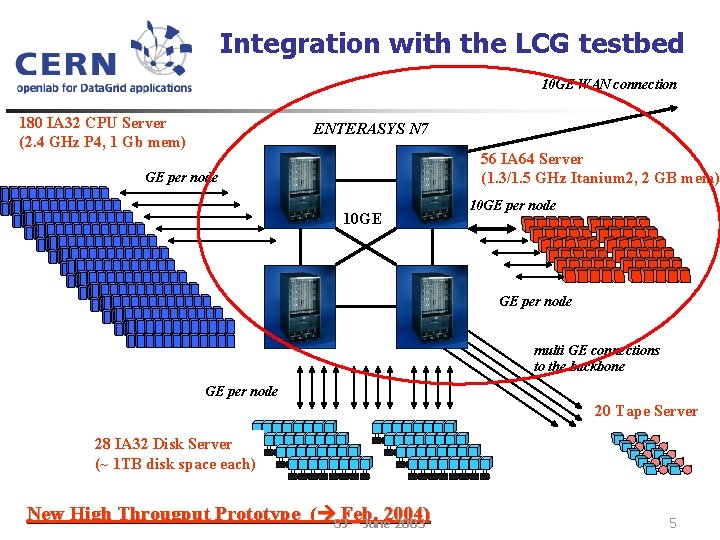

Integration with the LCG testbed

New High Throughput Prototype (Feb. 2004)

[Network diagram; elements shown:]

- 10 GE WAN connection
- ENTERASYS N7
- 180 IA32 CPU servers (2.4 GHz P4, 1 GB mem)
- 56 IA64 servers (1.3/1.5 GHz Itanium 2, 2 GB mem)
- 20 tape servers
- 28 IA32 disk servers (~1 TB disk space each)
- Link labels: GE per node (twice), 10 GE per node, multi-GE connections to the backbone

Recent achievements (selected amongst many others)

- Hardware and software
  - Key ingredients deployed in Alice Data Challenge V
  - Internet2 land speed record between CERN and Caltech
  - Porting and verification of CERN/HEP software on 64-bit architecture
    - CASTOR, ROOT, CLHEP, GEANT4, ALIROOT, etc.
  - Parallel ROOT data analysis
  - Port of LCG software to Itanium

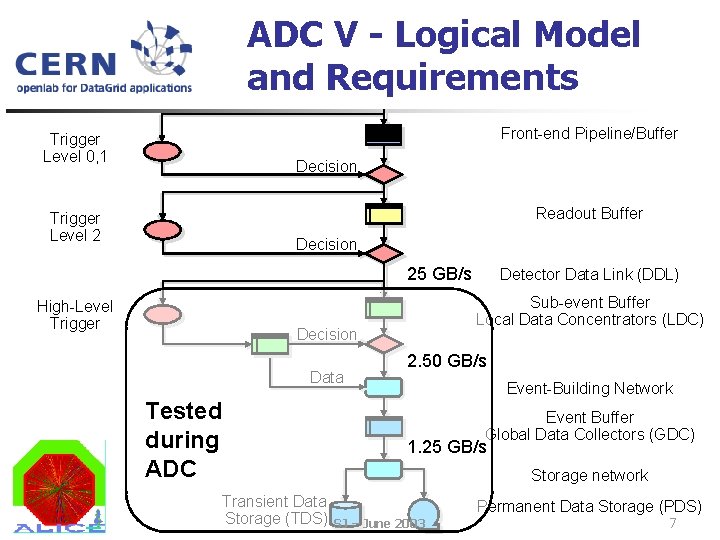

ADC V - Logical Model and Requirements

[Data-flow diagram; elements shown:]

- Detector, Digitizers, Front-end Pipeline/Buffer
- Trigger Level 0, 1: Decision
- Readout Buffer
- Trigger Level 2: Decision
- Detector Data Link (DDL)
- Sub-event Buffer: Local Data Concentrators (LDC)
- High-Level Trigger: Decision
- Event-Building Network
- Event Buffer: Global Data Collectors (GDC)
- Storage network
- Transient Data Storage (TDS)
- Permanent Data Storage (PDS)
- Rates marked on the diagram: 25 GB/s, 2.50 GB/s, 1.25 GB/s
- Region marked "Tested during ADC"

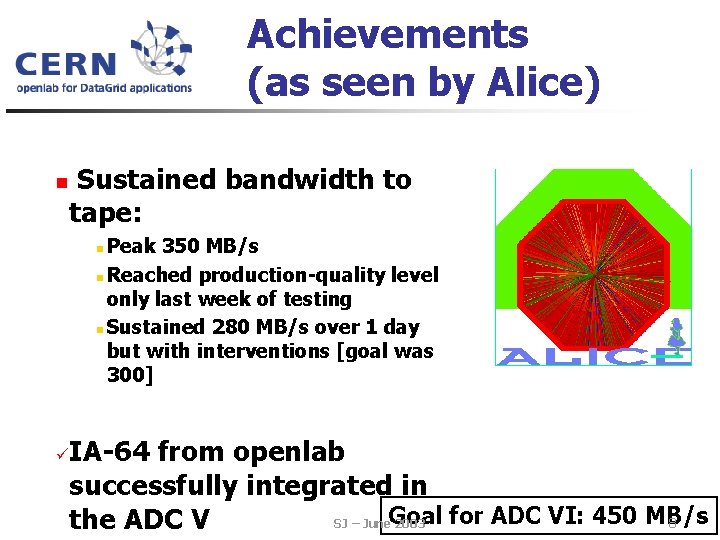

Achievements (as seen by Alice)

- Sustained bandwidth to tape:
  - Peak 350 MB/s
  - Reached production-quality level only in the last week of testing
  - Sustained 280 MB/s over 1 day but with interventions [goal was 300]
- IA-64 from openlab successfully integrated in the ADC V
- Goal for ADC VI: 450 MB/s
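
To put the tape rates above in terms of volume, the following is a minimal sketch that converts a sustained rate into data written per day. It assumes decimal units (1 TB = 1,000,000 MB) and a full 24 hours at a constant rate; the function name `daily_volume_tb` is illustrative and not from the talk.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_volume_tb(rate_mb_per_s: float) -> float:
    """Volume in TB written in one day at a constant rate in MB/s.

    Assumes decimal units: 1 TB = 1,000,000 MB.
    """
    return rate_mb_per_s * SECONDS_PER_DAY / 1_000_000


# Rates quoted on the slide (MB/s).
rates = {
    "ADC V sustained": 280,
    "ADC V goal": 300,
    "ADC V peak": 350,
    "ADC VI goal": 450,
}

for label, rate in rates.items():
    print(f"{label}: {rate} MB/s is about {daily_volume_tb(rate):.1f} TB/day")
```

Under these assumptions, the sustained 280 MB/s corresponds to roughly 24 TB in a day, and the ADC VI goal of 450 MB/s to roughly 39 TB.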

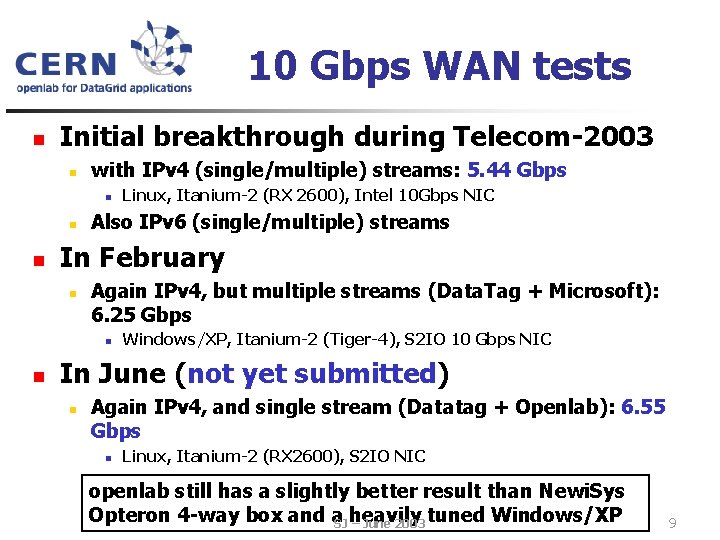

10 Gbps WAN tests

- Initial breakthrough during Telecom-2003
  - with IPv4 (single/multiple) streams: 5.44 Gbps
    - Linux, Itanium-2 (RX2600), Intel 10 Gbps NIC
  - Also IPv6 (single/multiple) streams
- In February
  - Again IPv4, but multiple streams (DataTAG + Microsoft): 6.25 Gbps
    - Windows/XP, Itanium-2 (Tiger-4), S2IO 10 Gbps NIC
- In June (not yet submitted)
  - Again IPv4, and single stream (DataTAG + openlab): 6.55 Gbps
    - Linux, Itanium-2 (RX2600), S2IO NIC
- openlab still has a slightly better result than a Newisys Opteron 4-way box and a heavily tuned Windows/XP

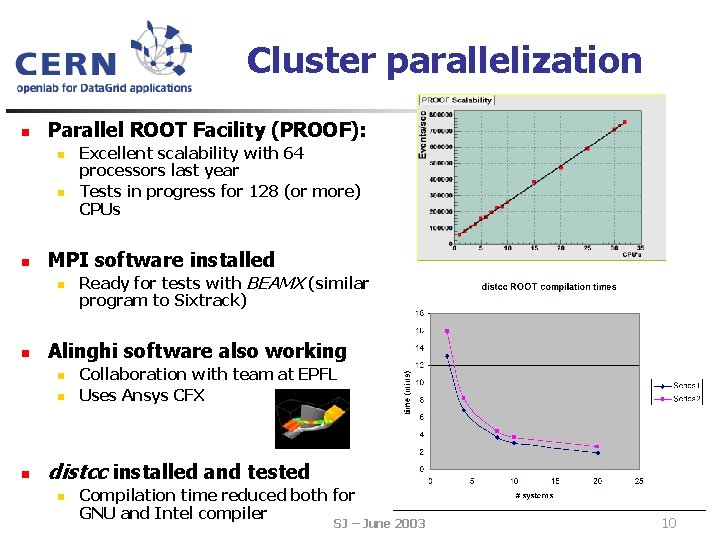

Cluster parallelization

- Parallel ROOT Facility (PROOF):
  - Excellent scalability with 64 processors last year
  - Tests in progress for 128 (or more) CPUs
- MPI software installed
  - Ready for tests with BEAMX (similar program to Sixtrack)
- Alinghi software also working
  - Collaboration with team at EPFL
  - Uses Ansys CFX
- distcc installed and tested
  - Compilation time reduced both for GNU and Intel compiler

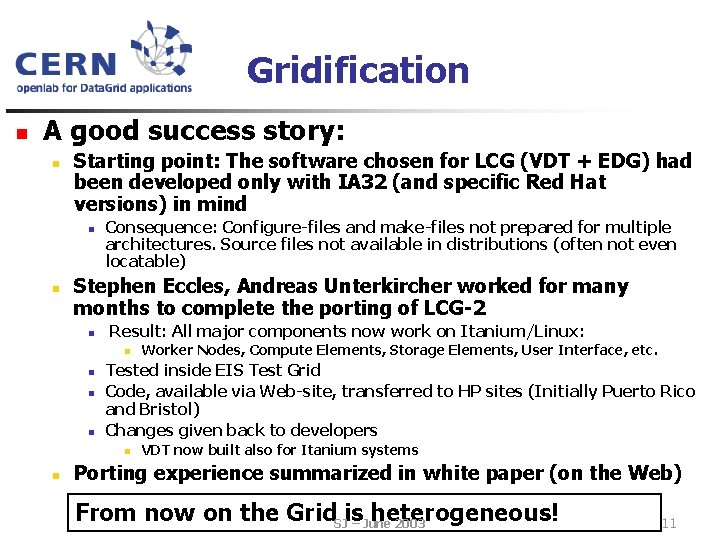

Gridification

- A good success story:
  - Starting point: The software chosen for LCG (VDT + EDG) had been developed only with IA32 (and specific Red Hat versions) in mind
    - Consequence: Configure-files and make-files not prepared for multiple architectures. Source files not available in distributions (often not even locatable)
  - Stephen Eccles, Andreas Unterkircher worked for many months to complete the porting of LCG-2
  - Result: All major components now work on Itanium/Linux:
    - Worker Nodes, Compute Elements, Storage Elements, User Interface, etc.
    - Tested inside EIS Test Grid
    - Code, available via Web-site, transferred to HP sites (initially Puerto Rico and Bristol)
  - Changes given back to developers
    - VDT now built also for Itanium systems
  - Porting experience summarized in white paper (on the Web)
- From now on the Grid is heterogeneous!

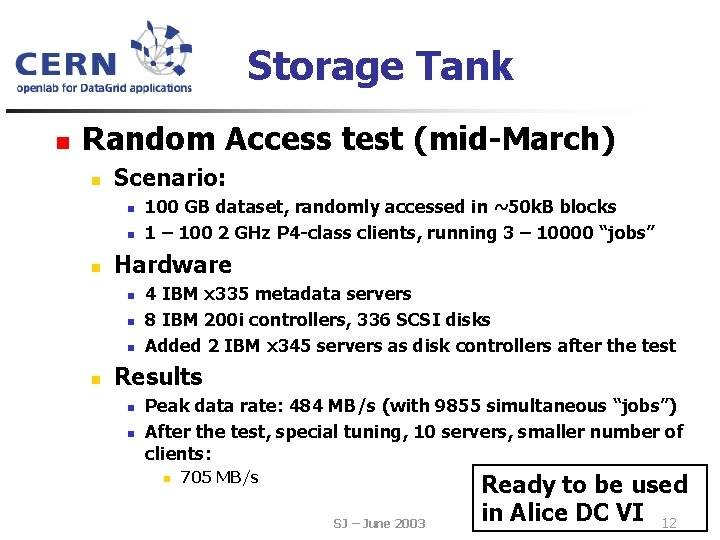

Storage Tank

- Random Access test (mid-March)
  - Scenario:
    - 100 GB dataset, randomly accessed in ~50 kB blocks
    - 1 to 100 2 GHz P4-class clients, running 3 to 10000 "jobs"
  - Hardware
    - 4 IBM x335 metadata servers
    - 8 IBM 200i controllers, 336 SCSI disks
    - Added 2 IBM x345 servers as disk controllers after the test
  - Results
    - Peak data rate: 484 MB/s (with 9855 simultaneous "jobs")
    - After the test, special tuning, 10 servers, smaller number of clients: 705 MB/s
- Ready to be used in Alice DC VI
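
The random-access results above can be restated as block reads per second and as throughput per job. The sketch below does that arithmetic; it assumes decimal units (1 MB = 1000 kB), takes the "~50 kB" block size as exactly 50 kB, and assumes the load is spread evenly over the jobs. The function names are illustrative only.

```python
BLOCK_KB = 50        # "~50 kB blocks" on the slide, taken as exactly 50 here
PEAK_MB_S = 484      # peak data rate during the test
PEAK_JOBS = 9855     # simultaneous "jobs" at the peak
TUNED_MB_S = 705     # rate after tuning, with 10 servers and fewer clients


def blocks_per_second(rate_mb_s: float, block_kb: float = BLOCK_KB) -> float:
    """Random block reads per second implied by an aggregate rate (1 MB = 1000 kB)."""
    return rate_mb_s * 1000 / block_kb


def per_job_kb_s(rate_mb_s: float, jobs: int) -> float:
    """Average throughput per job in kB/s, assuming an even spread over jobs."""
    return rate_mb_s * 1000 / jobs


print(f"Peak:  {blocks_per_second(PEAK_MB_S):.0f} block reads/s")
print(f"Tuned: {blocks_per_second(TUNED_MB_S):.0f} block reads/s")
print(f"Per job at peak: {per_job_kb_s(PEAK_MB_S, PEAK_JOBS):.1f} kB/s")
```

Under these assumptions the peak corresponds to about 9,700 block reads per second in aggregate, or roughly one 50 kB block per second per job.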

Next generation disk servers

- Based on state-of-the-art equipment:
  - 4-way Itanium server (RX4640)
  - Two full-speed PCI-X slots
    - 10 GbE and/or Infiniband
  - Two 3ware 9500 RAID controllers
    - In excess of 400 MB/s RAID-5 read speed
    - Only 100 MB/s for write w/RAID 5
    - 200 MB/s RAID 0
  - 24 * S-ATA disks with 74 GB
    - WD740 "Raptor" @ 10k rpm
    - Burst speed of 100 MB/s
- Goal: Saturate 10 GbE card for reading (at least 500 MB/s with standard MTU and 20 streams). Writing as fast as possible.
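
For orientation, the sketch below relates the figures on this slide to the raw 10 GbE line rate. It assumes decimal units, ignores protocol overhead, and assumes that the read speed of the two RAID controllers adds linearly, which the slide does not state. All names are illustrative.

```python
def gbps_to_mb_s(gbps: float) -> float:
    """Convert a link speed in Gbit/s to MB/s (decimal units, no protocol overhead)."""
    return gbps * 1000 / 8


LINK_MB_S = gbps_to_mb_s(10)      # raw 10 GbE line rate
GOAL_READ_MB_S = 500              # "at least 500 MB/s" reading goal
RAID5_READ_MB_S = 400             # "in excess of 400 MB/s" per controller
CONTROLLERS = 2
DISKS = 24
DISK_GB = 74

goal_fraction = GOAL_READ_MB_S / LINK_MB_S
# Lower bound on aggregate read speed, assuming the controllers scale linearly.
aggregate_read_mb_s = CONTROLLERS * RAID5_READ_MB_S
raw_capacity_gb = DISKS * DISK_GB  # before any RAID overhead

print(f"10 GbE line rate: {LINK_MB_S:.0f} MB/s")
print(f"Reading goal is {goal_fraction:.0%} of line rate")
print(f"Two controllers (linear assumption): over {aggregate_read_mb_s} MB/s read")
print(f"Raw disk capacity: {raw_capacity_gb} GB")
```

Under these assumptions the 500 MB/s minimum is 40% of the 1250 MB/s line rate, so fully saturating the card would need more than the two controllers' quoted RAID-5 read speed combined.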

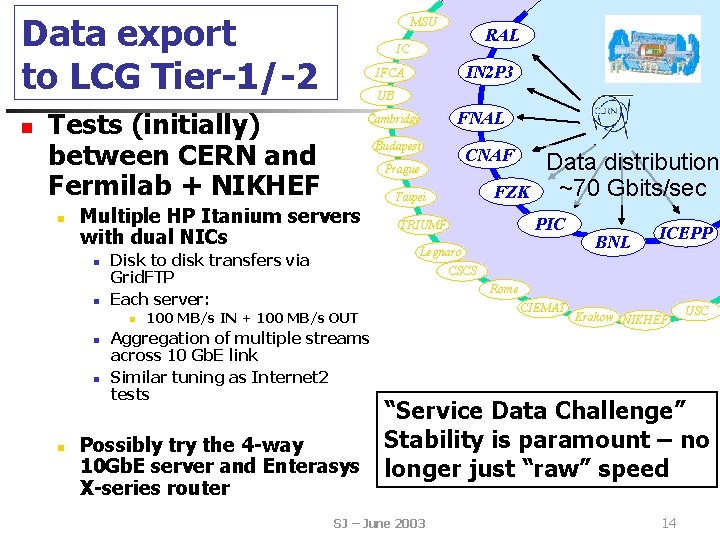

Data export to LCG Tier-1/-2

- Tests (initially) between CERN and Fermilab + NIKHEF
  - Multiple HP Itanium servers with dual NICs
  - Disk to disk transfers via GridFTP
  - Each server: 100 MB/s IN + 100 MB/s OUT
  - Aggregation of multiple streams across 10 GbE link
  - Similar tuning as Internet2 tests
  - Possibly try the 4-way 10 GbE server and Enterasys X-series router
- "Service Data Challenge": Stability is paramount, no longer just "raw" speed

[Figure: data distribution, ~70 Gbits/sec. Sites shown: MSU, UB, FNAL, Budapest, CNAF, Prague, Cambridge, IN2P3, IFCA, RAL, IC, FZK, Taipei, PIC, TRIUMF, Legnaro, BNL, ICEPP, CSCS, Rome, CIEMAT, Krakow, NIKHEF, USC]
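
As a rough sizing aid for the aggregation described above, the sketch below estimates how many servers at 100 MB/s outbound would be needed to fill one direction of a 10 GbE link. It assumes decimal units, no protocol overhead, and perfectly additive streams; the function name is illustrative and not from the talk.

```python
import math


def servers_to_fill(link_gbps: float, per_server_mb_s: float) -> int:
    """Smallest number of servers whose combined rate reaches the link's raw rate.

    Assumes decimal units, no protocol overhead, and perfectly additive streams.
    """
    link_mb_s = link_gbps * 1000 / 8
    return math.ceil(link_mb_s / per_server_mb_s)


print(servers_to_fill(10, 100))
```

Under these assumptions a 10 GbE link carries 1250 MB/s, so 12 such servers fit within it and a 13th would be needed to reach the raw line rate.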

Conclusions

- CERN openlab:
  - Solid collaboration with our industrial partners
  - Encouraging results in multiple domains
  - 6 students, 4 fellows
- We believe sponsors are getting good "ROI"
  - But only they can really confirm it
- No risk of running short of R&D
  - IT technology is still moving at an incredible pace
- Vital for LCG that the "right" pieces of technology are available for deployment
  - Performance, cost, resilience, etc.

- Slides: 15