
CERN GDB Progress Report: GDB meeting, 9 Dec 2002. PEB – 10 Dec 2002. Ian Bird, Ian.Bird@cern.ch

GDB Agenda
• Status of Regional Centre Resource table
• Proposal for User Support Working Group
• Report from Working Groups 1-4

Regional Centre Resources - Alberto Masoni
• Updated resource database with confirmed numbers
• Switzerland and Sweden both announced firm commitments to provide LCG resources
• Discussion on what it means to commit resources to LCG:
  – Dedicated vs shared resources
  – LCG-specific vs existing grid infrastructure
  – Problem for LCG deployment:
    • Inter-operation with existing grid infrastructure is not simple

User Support Working Group
• Proposed to address scope, responsibilities and expectations:
  – Mandate
    • Define the initial LCG-1 user support model, including:
      – Define the scope of responsibilities for a user support group serving LCG-1
      – Define acceptable user expectations (or service level agreements), including the framework within which to implement these
      – Propose sites to run the LCG-1 user support service
• Decision: Klaus-Peter Mickel and IB to prepare proposal, for WG to form in February, since
  – WG effort not available now
  – Need to progress on setting up User Support

Working Group 1: Middleware

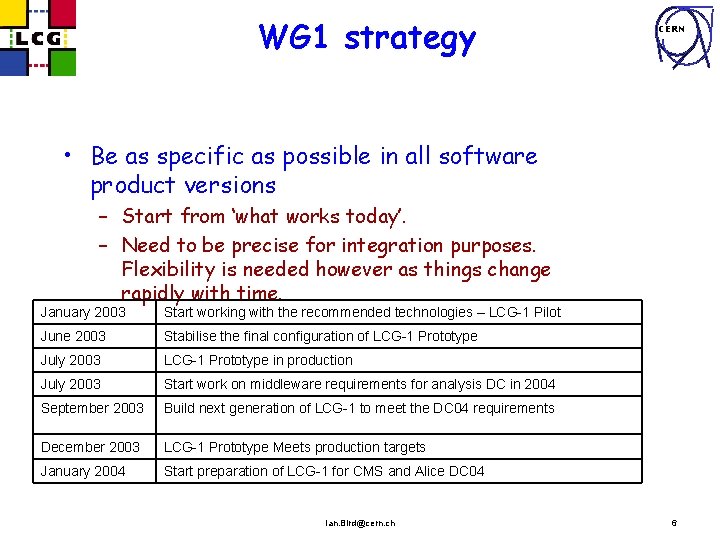

WG1 strategy
• Be as specific as possible in all software product versions
  – Start from ‘what works today’.
  – Need to be precise for integration purposes. Flexibility is needed, however, as things change rapidly with time.

| Date | Milestone |
| --- | --- |
| January 2003 | Start working with the recommended technologies – LCG-1 Pilot |
| June 2003 | Stabilise the final configuration of LCG-1 Prototype |
| July 2003 | LCG-1 Prototype in production |
| July 2003 | Start work on middleware requirements for analysis DC in 2004 |
| September 2003 | Build next generation of LCG-1 to meet the DC04 requirements |
| December 2003 | LCG-1 Prototype meets production targets |
| January 2004 | Start preparation of LCG-1 for CMS and Alice DC04 |

Assumptions
• Evolution of both the pilot and LCG-1 is recommended by the GDB
  – Needs negotiation with the middleware providers for functionality and schedules
  – Needs experiments on board to satisfy their data challenge requirements
• LCG-1 pilot resources (HW) will be dedicated machines, not shared
  – Starting point to simplify the problem determination.
  – Existing cluster integration should follow as soon as possible based on the experience gained.

WG1 Strawman
• Starting point is VDT (1.1.5) with higher level services provided by the EDG software.
  – Preliminary informal discussions with advisors (STAG) supported this as the sensible approach.
  – Discussed with the EDG technical management in detail and this is 100% aligned with the EDG strategy to base future EDG releases on a fully supported Globus base. But the timescales are different!
  – Experiments have discussed early versions of the proposal and have commented. No major outstanding disagreements.
• The LCG-1 pilot (Jan 2003) will start with software that exists today
  – Not waiting for new releases
• Non-VDT/EDG middleware is by no means excluded if it is useful
• POOL is part of LCG-1
  – But it is higher-level middleware; not obligatory to use it
  – Probably not there from day one

Planned EDG Components
• WP1: Resource Broker
• WP2: Replica Manager, RLS, Optimiser?
• WP3: Some agents? RGMA may come.
• WP4: Monitoring agents? Will also provide the CERN management infrastructure.
• WP5: The SE may come.

Data Management/Access Highlights
• Data on the grid is single-sourced, R/O
  – I.e. the grid will not synchronise replicas that are modified in different places
  – The experiment takes care of versioning its own pseudo-static files (metadata files etc.)?
• The missing functionality of a storage element and associated access libraries is a big problem for true transparency of use.

Some Open questions
• DAGMan functionality, where does it go?
• Many security issues (WG3)
• Account management – only simplest assumed.
• Weak system management, problem determination, user support and end-user tools.
• Monitoring tools
• Packaging is, and remains, an issue (WG4)

Summary
• LCG-1 will start integration very early 2003
  – Using existing software only
• Will evolve into a production service by July
  – S/W versions may change, controlled by GDB
  – Fixed versions may need porting to new baseline
  – Evolutionary process not yet well defined
• LCG-1 in Q4 2003 still a vague entity
• “Main Lines” are clear and agreed but WG1 needs to continue work. Vast amounts of details remain to be sorted.
  – More closely specify the functionality now.
  – Still need to scope the dev/porting work and see schedules
  – Complete work on support assumptions.
  – Understand realistic performance metrics.

Working Group 2: Resources and Schedule

Mandate (in better order)
(6) Recommend an overall rollout schedule
(5) Recommend responsibilities for operation of common services
(4) Boundary of site and LCG responsibilities
(3) Recommend services and resources to be provided by each site
(2) Determine common metrics for allocating and accounting usage of various resources
(1) Recommend how resource allocations process should be implemented (policies, fair allocations, funding agency guidelines)

(6) Overall rollout schedule
• Started on this last item – to get concrete and start the discussion. Document produced by Ian Bird
  – Based on Alberto’s resources table
  – Suggests a draft timetable for bringing in sites
  – Immediately brings up some questions and issues

(6) Overall rollout strategy and schedule (Ian Bird – proposal)
• Incremental addition of resources to LCG-1
• Start with cluster at CERN and add Tier 1 regional centers one at a time
  – 1 or 2 per month until June
• This gets to milestone of “First Global Initial Availability” by July 2003
• Then the proposal adds many other centers in July and August, including Nikhef, Taiwan, INFN Tier 2, UK Tier 2, Ohio, Russia Tier 2, California and Florida Tier 2s, GSI Tier 2 and others
• LCG-1 Full Service start – November 2003

Issues we need to clarify for (6) (and the plan for getting this clarification)
• Is center/site really the unit that “joins” LCG-1? Is this orthogonal to emerging experiment-specific testbed grids?
• Can the aggregate of several sites provide the guaranteed regional resource contribution to GDB?
• If Regional/Experiment Grids join LCG-1, how would it be different to each site joining LCG-1?
• Can a “site” be considered a degenerate Grid?
• Is LCG-1 a collection of Grids or a collection of Sites?
  – Needs discussion here in GDB
  – Our WG to poll experiments and to learn about their internal plans for experiment-specific integration and test Grids and their schedules.

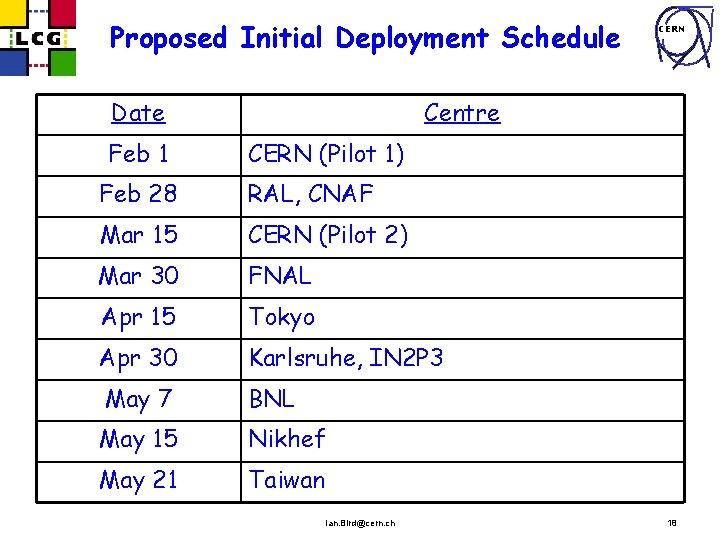

Proposed Initial Deployment Schedule

| Date | Centre |
| --- | --- |
| Feb 1 | CERN (Pilot 1) |
| Feb 28 | RAL, CNAF |
| Mar 15 | CERN (Pilot 2) |
| Mar 30 | FNAL |
| Apr 15 | Tokyo |
| Apr 30 | Karlsruhe, IN2P3 |
| May 7 | BNL |
| May 15 | Nikhef |
| May 21 | Taiwan |
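
As a rough illustration only, the deployment schedule can be held as a small data structure and queried for the centres expected to have joined LCG-1 by a given date. The year 2003 is an assumption inferred from the pilot dates elsewhere in this report (the slide gives only month and day), and the names `SCHEDULE` and `centres_by` are invented for this sketch.

```python
from datetime import date

# Proposed initial deployment schedule. The year (2003) is an assumption;
# the slide gives only month and day.
SCHEDULE = [
    (date(2003, 2, 1), ["CERN (Pilot 1)"]),
    (date(2003, 2, 28), ["RAL", "CNAF"]),
    (date(2003, 3, 15), ["CERN (Pilot 2)"]),
    (date(2003, 3, 30), ["FNAL"]),
    (date(2003, 4, 15), ["Tokyo"]),
    (date(2003, 4, 30), ["Karlsruhe", "IN2P3"]),
    (date(2003, 5, 7), ["BNL"]),
    (date(2003, 5, 15), ["Nikhef"]),
    (date(2003, 5, 21), ["Taiwan"]),
]


def centres_by(day):
    """Return the centres scheduled to have joined on or before `day`."""
    joined = []
    for start, centres in SCHEDULE:
        if start <= day:
            joined.extend(centres)
    return joined


if __name__ == "__main__":
    print(centres_by(date(2003, 3, 31)))
```

A flat list of dated entries keeps the incremental, one-centre-at-a-time nature of the proposal visible; a real planning tool would presumably also track resource numbers per centre.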

Issues we need to clarify for (6) (and the plan for getting this clarification)
• Need to discuss contents of Alberto’s resource table with each Regional representative and Experiment/Region person to
  – Ensure that distinction between resources available for LHC and resources dedicated to LCG-1 really has been understood
  – Add various attributes for the center and for the resources, such as
    • Available all the time or on request
    • Minimum guaranteed available
    • Same resources switching between Experiment non-LCG use and LCG-1?
    • Allocation % by experiment if resource full conflict – how implemented (administratively or by batch queues?)
    • Opportunistic max availability
    • Restriction on which experiments can use
    • … and several other detailed parameters

Issues we need to clarify for (6) (and the plan for getting this clarification)
• Does staged addition of Tier 2 centers starting only after July 2003 hurt because
  – Allocations process in region may have been triggered – need to use resource if available – sooner rather than later?
  – Delay in incorporating could allow non-LCG-1-compatible use of resources to become difficult to change?
  – Some are ready to participate and have time/energy to do so
  – Experiment-specific test grids will incorporate some of these sites sooner – again risk of incompatibility – limited ability to cope with small staffing levels
• What is the GDB’s opinion on this?
• Also ask each center/experiment
• Parallel deployment in each country seeded by the Tier 1?

(5) Recommend responsibilities for operation of common services
• Common service implies shared by all in LCG-1
  – Does this mean you need only one such service for LCG-1, in principle, but might want more for robustness?
  – Do current Grid middleware products even allow for such replication of a “singleton” service for robustness/failover purposes?
• (4) Boundary of site and LCG responsibilities
  – Is the word “project” missing here after LCG?
  – Is this an operational question – responsibility for making it all work? Overlap with WG4?
  – If LCG is a collaboration of sites, experiments, CERN – question is one of “agreements” or MOUs on who will do what to operate LCG-1
  – Or is this some other responsibility?
• Real issue is to create a list of common services (VO management, CA, Ops Centre, etc.) and decide who operates them – LCG team or some site?

Issues we seek to clarify for (2) (and the plan for getting this clarification)
• Proposed metrics for accounting and reporting (and allocation)
• What metrics are actually in use and available for use at various centers
  – WG2 will request information from each center on this in table/questionnaire format

(1) Recommend how resource allocations process should be implemented.
• Recommend policies?
• Fair allocations
• Consistency with funding agency guidelines

Issues we seek to clarify for (1) (and the plan for getting this clarification)
• Procedures for allocating resources between experiments in each of the regions, and at CERN.
• Model of how all those who request, fund and provide resources to the LHC experiments interact.
  – WG2 wants to hear about CERN Cocotime process
  – WG2 wants to hear about process in each Region and center and how it is related to funding request process
  – GDB’s opinion of whether a global Cocotime-like process to schedule resources across all 4 experiments makes any sense?
  – Dynamic allocation/re-allocation of resources by resource broker – how? (administratively? enough features in grid software?)

Word of Warning
• Keep hearing that this is for LCG-1 only – short term issues and decisions
  – Not sure that it makes sense to answer all the questions for LCG-1 or to address policy and metrics issues.
  – May leave some issues for a future WG if it is clear that the functionality provided by current grid “middleware” and its state of integration with the center’s information is so primitive that no metrics are possible and no allocation, other than administrative, is possible
  – This is a dangerous thing to do – to NOT discuss the fundamental models, policies and procedures early on – even if the tools and capabilities are not there yet.

LCG/GDB WG3 Security. CERN, 9 Dec 2002. David Kelsey, CLRC/RAL, UK, d.p.kelsey@rl.ac.uk

User Registration
• First step in User Authorisation
• Long term aim is to use LHC experiment secretariats
  – But do we need an LCG top-level registration?
• Estimates of size of community – and turnover
• LCG-1 – ~1000 users?
• Peak rate – 25 registrations per day in 2Q03
• Average rate – 1 registration per day

User Registration (2)
• Other issues
  – Need to register the X.509 DN and perhaps the certificate/public key
  – Registration procedure must confirm that the correct DN/Cert/Key is stored
  – May need a distributed Registration Authority
    • For those who cannot visit CERN
  – Today’s VOs (EDG and elsewhere) are not sufficient
    • Need more secure machines and operations
    • Need better identity checking (RAs)

Infrastructure Registration
• For Grid – need to authenticate and authorize infrastructure
• Therefore must register Systems, Resources, Services
  – And applications?
• EDG does not do this today
• LCG-1 needs a procedure to register the infrastructure (is this WG-2 or WG-3?) (when?)
• As for users – need to register DN/cert/public key
• WILL need a distributed Registration Authority here!
• EDG Virtual Organisation Membership Service (VOMS) could be used for this

Technical issues - Registration
• Databases and tools
  – Use main User registration DB (Oracle?)?
  – BUT need to export
    • to online VO authorisation
      – E.g. EDG VOMS (MySQL) or VO/LDAP
    • to sites
• Need tools to do this
• Confidentiality and access rights
  – Once user registers
    • Create all the necessary accounts (automatically)
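
The export step from a registration database to the sites can be sketched as follows. This is a minimal sketch, assuming the common grid-mapfile convention of one quoted certificate DN followed by a local account name per line; the record layout (`RegisteredUser`), the function name `gridmap_lines` and all example DNs and account names are hypothetical and not taken from any LCG or EDG tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisteredUser:
    """One record from a hypothetical registration database export."""
    dn: str             # X.509 distinguished name, as registered
    vo: str             # virtual organisation the user belongs to
    local_account: str  # account the site maps this user to (assumed field)


def gridmap_lines(users, vo):
    """Build mapfile lines ('"<DN>" <account>') for one VO, sorted by DN."""
    lines = []
    for user in sorted(users, key=lambda u: u.dn):
        if user.vo != vo:
            continue
        if '"' in user.dn:
            # A quote inside the DN would corrupt the quoted field.
            raise ValueError(f"DN contains a double quote: {user.dn!r}")
        lines.append(f'"{user.dn}" {user.local_account}')
    return lines


# Made-up example records; real DNs come from the user's certificate.
USERS = [
    RegisteredUser("/O=Example/CN=Bob Example", "cms", "cms001"),
    RegisteredUser("/O=Example/CN=Alice Example", "atlas", "atlas001"),
    RegisteredUser("/O=Example/CN=Carol Example", "atlas", "atlas002"),
]

if __name__ == "__main__":
    print("\n".join(gridmap_lines(USERS, "atlas")))
```

Sorting by DN keeps the generated file stable between exports, which makes it easier for a site to see what changed. Confidentiality of the exported data is not addressed here.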

Authentication
• EDG, CrossGrid, DataTAG, US/DOE
  – Certificate Authority Managers meet in EDG WP6 CA group
• 13 trusted CAs today
• End of this week, considering 5 additional CAs
• Inter-operates with US
• In good shape
• Nothing extra for LCG-1 to do
• LCG should use existing EDG CA structure

Authentication (2)
• Issues
• Some sites do not accept user-held long term private keys
  – Kerberos CA (FNAL)
  – Virtual Smart Card (SLAC)
  – To be discussed this week
• Do we trust online repositories?
• Scaling issues for many KCAs
• May need to support multiple methods of authentication
• BUT for LCG-1
  – Can we just use long-term user-held keys?

Authorisation
• Technology issues
  – Today VO/LDAP and mapfile tools
    • LCG-1 will start with this?
  – Coming in EDG: VOMS and LCAS, GACL etc.
• Experiment requirements for VO and Authorisation
  – Must meet SC2 RTAG 4 requirements
  – Need more discussions with experiments
    • Roles, groups, sub-groups, capabilities etc.?
• Sites must be in control of local authorisation
  – Who controls what and how? (VO vs Site)
• Revocation of VO membership and authorisation

Security
• Operational security issues – WG4
• LCG-1 Access policy – paper to Computing Resources Review board (Oct 2002)
• Need to survey site security requirements
  – Also being done by PPDG-SiteAA and GGF SiteAAA
• Many issues
  – Auditing, accounting, non-repudiation, integrity, confidentiality, firewalls etc.
• Firewalls (WG4?)
  – Do we use VPNs?
  – Full open access or link Tier-0 and Tier-1s?

Security Policy
• Do we need an LCG-1 Policy?
  – Or is Acceptable Use enough?
• What is the Policy?
  – A set of goals and aims
  – A set of rules
  – A mixture of both
• Discussion: LCG does need a clear Policy, which includes Acceptable Use, Procedures, etc.

Acceptable Use Policy
• User should only register once and sign once
  – AUP or “rules”
• EDG has an AUP that all sign (electronically) before joining a VO
• LCG-1 also needs a top-level VO which all users join first
• We need to try to agree on a single document
  – Start with the EDG document?

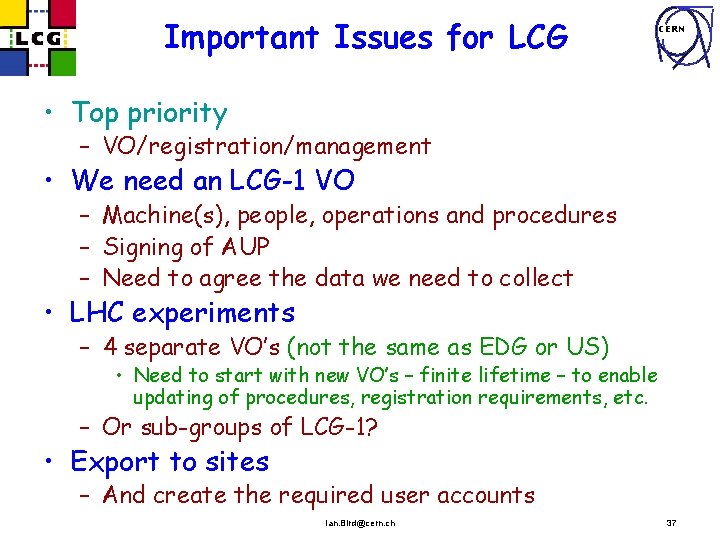

Important Issues for LCG
• Top priority – VO/registration/management
• We need an LCG-1 VO
  – Machine(s), people, operations and procedures
  – Signing of AUP
  – Need to agree the data we need to collect
• LHC experiments – 4 separate VOs (not the same as EDG or US)
• Need to start with new VOs – finite lifetime – to enable updating of procedures, registration requirements, etc.
  – Or sub-groups of LCG-1?
• Export to sites
  – And create the required user accounts

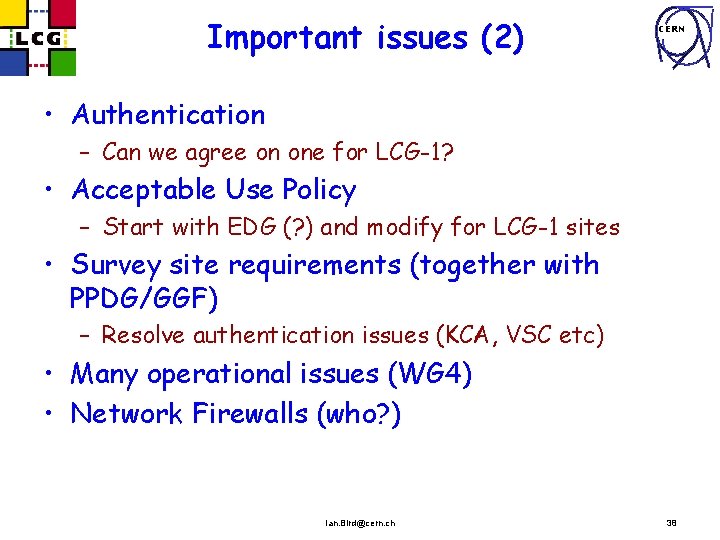

Important issues (2)
• Authentication
  – Can we agree on one for LCG-1?
• Acceptable Use Policy
  – Start with EDG (?) and modify for LCG-1 sites
• Survey site requirements (together with PPDG/GGF)
  – Resolve authentication issues (KCA, VSC etc.)
• Many operational issues (WG4)
• Network Firewalls (who?)

Working Group 4: Operations

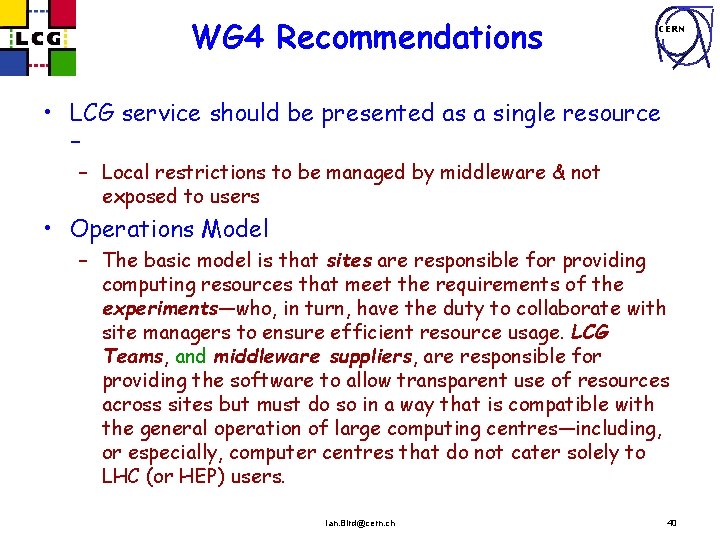

WG4 Recommendations
• LCG service should be presented as a single resource
  – Local restrictions to be managed by middleware and not exposed to users
• Operations Model
  – The basic model is that sites are responsible for providing computing resources that meet the requirements of the experiments, who, in turn, have the duty to collaborate with site managers to ensure efficient resource usage. LCG Teams, and middleware suppliers, are responsible for providing the software to allow transparent use of resources across sites but must do so in a way that is compatible with the general operation of large computing centres, including, or especially, computer centres that do not cater solely to LHC (or HEP) users.

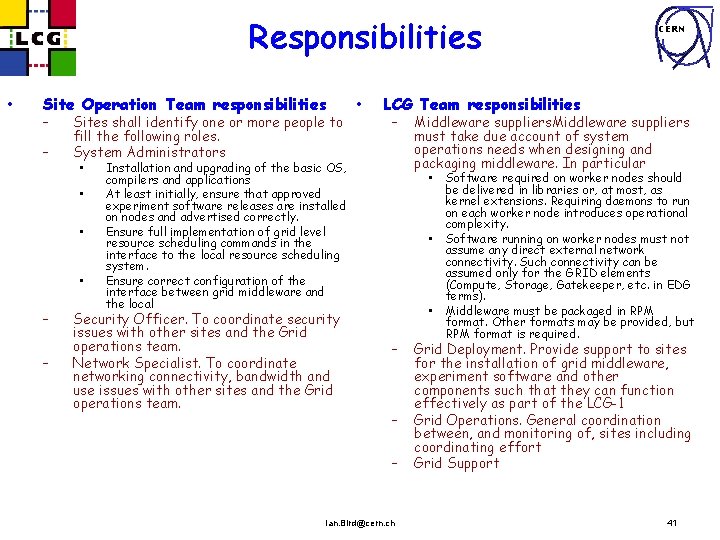

Responsibilities
• Site Operation Team responsibilities
  – Sites shall identify one or more people to fill the following roles.
  – System Administrators
    • Installation and upgrading of the basic OS, compilers and applications
    • At least initially, ensure that approved experiment software releases are installed on nodes and advertised correctly.
    • Ensure full implementation of grid level resource scheduling commands in the interface to the local resource scheduling system.
    • Ensure correct configuration of the interface between grid middleware and the local […]
  – Security Officer. To coordinate security issues with other sites and the Grid operations team.
  – Network Specialist. To coordinate networking connectivity, bandwidth and use issues with other sites and the Grid operations team.
• LCG Team responsibilities
  – Middleware suppliers must take due account of system operations needs when designing and packaging middleware. In particular
    • Software required on worker nodes should be delivered in libraries or, at most, as kernel extensions. Requiring daemons to run on each worker node introduces operational complexity.
    • Software running on worker nodes must not assume any direct external network connectivity. Such connectivity can be assumed only for the GRID elements (Compute, Storage, Gatekeeper, etc. in EDG terms).
    • Middleware must be packaged in RPM format. Other formats may be provided, but RPM format is required.
  – Grid Deployment. Provide support to sites for the installation of grid middleware, experiment software and other components such that they can function effectively as part of the LCG-1 […]
  – Grid Operations. General coordination between, and monitoring of, sites including coordinating effort […]
  – Grid Support

Standard LCG Operating Environment
• OS
  – RH 7.3.x
  – Provide /opt/hep/experiment for experiment software (discussion: should be accessible r/w by privileged experiment users)
  – Map grid users to generic local users (but not recommended by WG3; not practical for LCG-1?)
• Installation mechanism & certification process
  – Do not mandate particular installation tool (mature sites), but insist on RPMs
  – Tools to verify correct installation (min. set framework for site certification by LCG-1)
• Experiment software
  – Installed without special privileges
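
A tool to verify correct installation could start from something as simple as comparing the packages found on a node against a required list. The following is only a sketch under that assumption: the function name `check_installation`, the package names and the versions are all hypothetical, and how the installed set is obtained (for example from an RPM database query) is left outside the sketch.

```python
def check_installation(required, installed):
    """Compare installed packages against the required set.

    Both arguments map package name -> version string. Returns a dict of
    package name -> problem description; an empty dict means the node passes.
    """
    problems = {}
    for name, wanted in sorted(required.items()):
        found = installed.get(name)
        if found is None:
            problems[name] = "not installed"
        elif found != wanted:
            problems[name] = f"found {found}, expected {wanted}"
    return problems


# Hypothetical package names and versions, for illustration only.
REQUIRED = {"example-middleware": "1.0", "example-expt-sw": "2.3"}
INSTALLED = {"example-middleware": "1.0", "example-expt-sw": "2.2", "unrelated": "0.1"}

if __name__ == "__main__":
    for package, problem in check_installation(REQUIRED, INSTALLED).items():
        print(f"{package}: {problem}")
```

Because the check works on plain name-to-version mappings, it does not depend on which installation tool a site uses, in line with the recommendation not to mandate one.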

Standard operating env. (cont.)
• Middleware deployment policy
  – Must be tested and certified before local admins install. Process:
    • Package developers: code compiles and runs correctly; must deliver RPM
    • Integration: packages tested together with other LCG-1 components. Desired to have packages modular.
    • Testing: extensive testing, including at production sites with experiments involved.
    • Certification: once shown to be compatible with LCG environment, and to work correctly in test environments (and production tests)
• Accounting
  – LCG must have a well defined accounting model for full LCG-1 availability
• Configuration management
  – Sites to have a grid system admin, coordination between sites and reviews
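
The four-step deployment process (package developers, integration, testing, certification) can be read as an ordered pipeline in which a package only becomes installable at the end. The sketch below illustrates that reading; the names `Stage`, `advance` and `may_install` are invented here, and the rule that a failed check leaves a package at its current stage is an assumption, since the slide does not say what happens on failure.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Stages named in the deployment policy, in order."""
    DEVELOPED = 1    # package developers: compiles, runs, delivered as RPM
    INTEGRATED = 2   # tested together with other LCG-1 components
    TESTED = 3       # extensive testing, including at production sites
    CERTIFIED = 4    # shown compatible with the LCG environment


def advance(stage, passed):
    """Move to the next stage if the current checks passed.

    Assumption of this sketch: a failed check leaves the package where it is.
    """
    if not passed or stage is Stage.CERTIFIED:
        return stage
    return Stage(stage + 1)


def may_install(stage):
    """Local admins install only certified packages."""
    return stage is Stage.CERTIFIED


if __name__ == "__main__":
    stage = Stage.DEVELOPED
    for result in (True, True, False, True):
        stage = advance(stage, result)
        print(stage.name, "installable:", may_install(stage))
```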

LCG Service Evolution
• Automation of installation, verification, configuration tools
• Understand issues of WAN connectivity from worker nodes
• (Automated) Experiment software installation mechanisms
• Relax requirement on specific Linux versions
• HEPiX as forum for technical discussions
  – Seeded by topics from LCG/GDB

(My) Conclusions from GDB
• WG1 and WG4 quite advanced – but still some work to complete
• WG2, WG3 – should have minimum recommendations available by February
• These could be sufficient to define the initial pilot of LCG-1, but
  – Need to analyse interim reports from deployment perspective
    • What is missing, contradictory between WGs etc.
  – Focus on specific problems that remain unresolved
• Question raised several times – how to include sites/organisations that are already part of a grid infrastructure? – issue was explicitly ignored by WG1
  – Missing: this issue needs to be resolved – need a policy
• Storage/data access – DF + IGB to organise a focus group
