Ceph at the Tier-1 Tom Byrne

Outline • Using Ceph for grid storage • Echo and data distribution • Echo downtime reviews • Future of Ceph and Conclusions

Introduction • Echo has been in production at the Tier-1 for over 2 years now. • Ceph strengths: – Erasure coding avoids the limitations of hardware RAID as disk capacities increase, and is cheaper than replication. – Data is aggressively balanced across the cluster, maximizing throughput. – Ceph is very flexible: the same software can run clusters that provide a cloud backend, a file system or an object store.

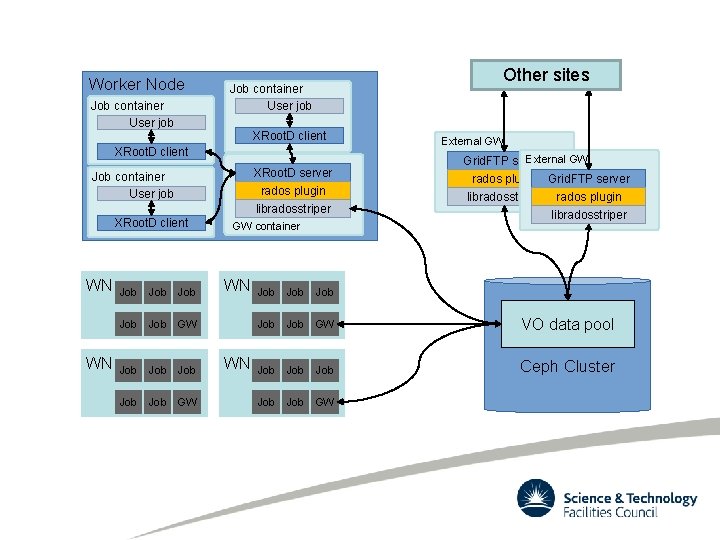
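The cost argument can be made concrete with a little arithmetic. The sketch below compares raw bytes written per byte of user data, and the number of simultaneous disk losses survivable, for 3-way replication and the 8+3 erasure-coding profile Echo uses. The 3-way replication factor is an assumed comparison point (the slide does not say which factor), the `Scheme` class and helper functions are hypothetical, and the numbers ignore metadata and chunk padding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scheme:
    name: str
    data_units: int       # k: units of user data per stripe
    redundant_units: int  # m for erasure coding, (copies - 1) for replication

    @property
    def overhead(self) -> float:
        """Raw bytes written per byte of user data."""
        return (self.data_units + self.redundant_units) / self.data_units

    @property
    def failures_tolerated(self) -> int:
        """Simultaneous shard/copy losses survivable without losing data."""
        return self.redundant_units


def replication(copies: int) -> Scheme:
    # n-way replication behaves like a code with k=1 and m=copies-1
    return Scheme(f"{copies}x replication", 1, copies - 1)


def erasure(k: int, m: int) -> Scheme:
    return Scheme(f"EC {k}+{m}", k, m)


for s in (replication(3), erasure(8, 3)):  # 3x is an assumed comparison point
    usable_fraction = 1 / s.overhead
    print(f"{s.name}: {s.overhead:.3f}x raw per byte, "
          f"{usable_fraction:.0%} usable, survives {s.failures_tolerated} failures")
```

Under these assumptions, 8+3 needs 1.375 bytes of raw disk per byte stored, against 3 bytes for 3-way replication, while tolerating one more simultaneous failure.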

Ceph Grid Setups • Ceph can be configured in several ways to provide storage for the LHC VOs: – Object store with GridFTP + XRootD plugins – CephFS + GridFTP servers – RBD + dCache – RadosGW (S3) + DynaFed • List in order of production readiness. • Some setups have only been run using replication, not erasure coding.

Echo • Big Ceph cluster for WLCG Tier-1 object storage (and other users) – 181 storage nodes • 4700+ OSDs (6-12 TB) • 36/28 PB raw/usable – 16 PB data stored • Density and throughput prioritized over latency – EC 8+3 – 64 MB RADOS objects • Been through 3 major Ceph versions – ~30% of OSDs still FileStore – Mon stores just moved onto RocksDB

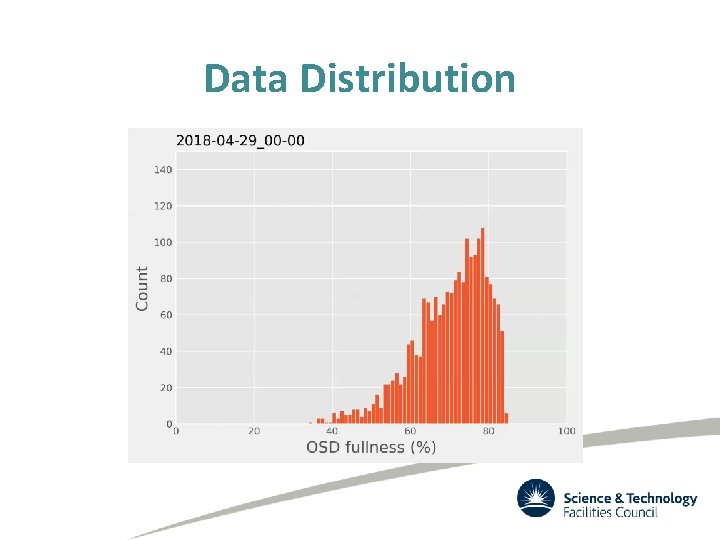
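Files written through libradosstriper (the library under the GridFTP and XRootD rados plugins) are cut into RADOS objects, and each object is then erasure coded. The sketch below applies the standard Ceph striping arithmetic to map a file offset to an object, then sizes the EC shards for one 64 MB object. It assumes a layout in which each object is a single stripe unit (`stripe_count=1`), treats 64 MB as 64 MiB, and ignores the alignment padding Ceph adds to EC chunks; Echo's actual layout parameters may differ, and the function names are hypothetical.

```python
import math

MiB = 1024 ** 2


def object_extent(offset: int, stripe_unit: int, stripe_count: int,
                  object_size: int) -> tuple[int, int]:
    """Map a byte offset in a striped file to (object number, offset in object).

    Stripe units are dealt round-robin across `stripe_count` objects until each
    object holds `object_size` bytes; then the next object set begins.
    """
    units_per_object = object_size // stripe_unit
    block_no = offset // stripe_unit        # which stripe unit holds this byte
    stripe_no = block_no // stripe_count    # which stripe (row) that unit is in
    stripe_pos = block_no % stripe_count    # which object within the set
    object_set = stripe_no // units_per_object
    object_no = object_set * stripe_count + stripe_pos
    offset_in_object = (stripe_no % units_per_object) * stripe_unit + offset % stripe_unit
    return object_no, offset_in_object


def ec_shard_size(object_size: int, k: int) -> int:
    """Bytes each EC shard holds for one object (alignment padding ignored)."""
    return math.ceil(object_size / k)


# Assumed layout: one 64 MiB stripe unit per object, no fan-out across objects.
OBJ = 64 * MiB
file_size = 1000 * MiB
n_objects = math.ceil(file_size / OBJ)
last_obj, _ = object_extent(file_size - 1, OBJ, 1, OBJ)
shard = ec_shard_size(OBJ, k=8)
print(n_objects, last_obj + 1)            # 16 16
print(shard // MiB, 11 * shard // MiB)    # 8 88  (MiB per shard, MiB on disk)
```

So, under these assumptions, each 64 MiB object becomes 8 data shards and 3 coding shards of 8 MiB each, spread over 11 OSDs.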

Cluster balancing • The algorithmic data placement results in a normal distribution of disk fullness if OSD fullness is unmanaged • Pre-Luminous, OSD reweights were used to improve this distribution – this method was found to be inadequate for a cluster the size of Echo • A pain point in 2018 was dealing with a very full cluster while adding hardware and increasing VO quotas
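The spread in disk fullness under unmanaged placement is easy to reproduce. The sketch below places each EC PG's 11 shards on distinct OSDs chosen uniformly at random, a crude stand-in for CRUSH (which is deterministic and weight-aware, but similarly pseudo-random), and reports the resulting spread in shards per OSD. The OSD and PG counts are illustrative, not Echo's, and `simulate_fullness` is a hypothetical helper.

```python
import random
import statistics


def simulate_fullness(n_osds: int, n_pgs: int, shards_per_pg: int,
                      seed: int = 0) -> list[int]:
    """Count PG shards per OSD when each PG picks distinct OSDs at random.

    Uniform random choice stands in for CRUSH here; real CRUSH also
    weights by disk size and respects failure domains.
    """
    rng = random.Random(seed)
    counts = [0] * n_osds
    for _ in range(n_pgs):
        for osd in rng.sample(range(n_osds), shards_per_pg):
            counts[osd] += 1
    return counts


# Illustrative parameters only: 1000 OSDs, 8192 PGs, EC 8+3 -> 11 shards per PG.
counts = simulate_fullness(n_osds=1000, n_pgs=8192, shards_per_pg=11)
mean = statistics.mean(counts)
spread = statistics.pstdev(counts)
print(f"mean {mean:.1f} PG shards/OSD, stdev {spread:.1f}, "
      f"min {min(counts)}, max {max(counts)}")
```

Roughly speaking, Ceph stops accepting writes once OSDs reach the full ratio, so the fullest OSDs rather than the average set the usable capacity; the gap between the maximum and the mean is space lost to imbalance.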

Data Distribution

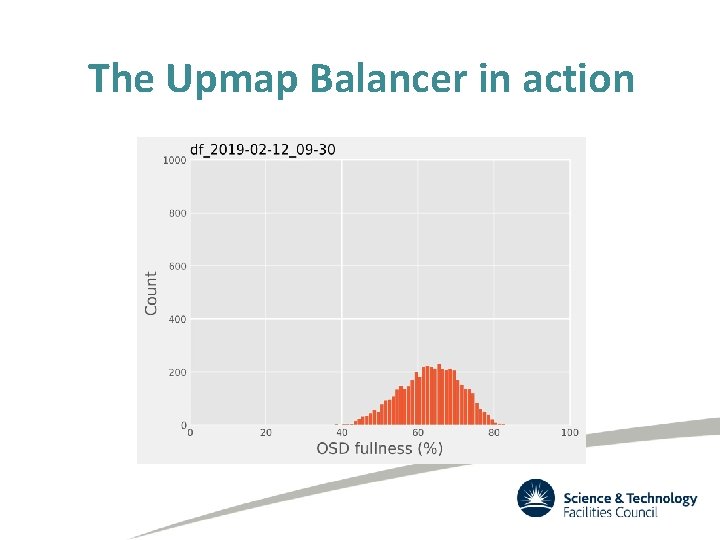

The Upmap Balancer • New feature in Ceph Luminous v12.2 – ‘Upmap’ • Explicitly map a specific placement group to a specific disk – Mapping stored with the CRUSH map • Automatic balancing implemented using this feature – A relatively small number of upmaps is needed to perfectly distribute a large number of PGs/data – A little juggling is required to move from a reweight-balanced cluster to an upmap-balanced one without mass data movement • Dan van der Ster wrote a script to make this a trivial operation* • Greatly improves data distribution, and therefore total space available *https://gitlab.cern.ch/ceph-scripts/blob/master/tools/upmap-remapped.py
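The idea behind upmap balancing can be illustrated with a deliberately simplified greedy loop: keep moving one PG shard from the most loaded OSD to the least loaded OSD that does not already hold a shard of that PG, until PG counts differ by at most one. This is not Ceph's balancer code (which works per pool, follows CRUSH rules and failure domains, and weights by disk size), and the function names are hypothetical. Each returned move is the kind of per-PG (from, to) exception that `ceph osd pg-upmap-items` records.

```python
from collections import Counter

Move = tuple[str, int, int]  # (pg id, from OSD, to OSD)


def pg_counts(pg_map: dict[str, list[int]], osds: list[int]) -> Counter:
    """Number of PG shards mapped to each OSD (OSDs with none count as 0)."""
    counts = Counter({osd: 0 for osd in osds})
    for acting in pg_map.values():
        counts.update(acting)
    return counts


def greedy_upmap(pg_map: dict[str, list[int]], osds: list[int],
                 max_dev: int = 1) -> tuple[list[Move], dict[str, list[int]]]:
    """Simplified upmap-style balancing; ignores CRUSH rules and disk weights."""
    mapping = {pg: list(acting) for pg, acting in pg_map.items()}  # don't mutate input
    load = pg_counts(mapping, osds)
    moves: list[Move] = []
    while True:
        hi = max(osds, key=lambda o: load[o])  # most loaded OSD
        lo = min(osds, key=lambda o: load[o])  # least loaded OSD
        if load[hi] - load[lo] <= max_dev:
            break  # balanced within tolerance
        # Any PG with a shard on `hi` and none on `lo` can move. One always exists
        # when load[hi] > load[lo], but guard anyway.
        pg = next((p for p, a in mapping.items() if hi in a and lo not in a), None)
        if pg is None:
            break
        acting = mapping[pg]
        acting[acting.index(hi)] = lo
        load[hi] -= 1
        load[lo] += 1
        moves.append((pg, hi, lo))
    return moves, mapping


pgs = {"1.0": [0, 1, 2], "1.1": [0, 1, 3], "1.2": [0, 1, 2], "1.3": [0, 2, 3]}
osds = list(range(6))
moves, balanced = greedy_upmap(pgs, osds)
for pg, src, dst in moves:
    print(f"pg {pg}: osd.{src} -> osd.{dst}")
print(dict(pg_counts(balanced, osds)))  # every OSD ends with 2 shards
```

Even in this toy case a handful of explicit exceptions is enough to flatten the distribution, which echoes the point that relatively few upmaps are needed.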

The Upmap Balancer in action

Inconsistent PGs • When a placement group is carrying out an internal consistency check known as scrubbing, any read error will flag the PG as inconsistent. • These inconsistencies can be dangerous on low-replica pools with genuine corruption, but they are usually trivial to identify and rectify – e.g. 10/11 EC shards consistent, 1/11 shards unreadable • Inconsistent PGs are unavoidable; it’s just a question of how many you deal with. – Dependent on the size of the cluster and the likelihood of disks throwing bad sectors – For a <1000 disk cluster, you might get one a week • Ceph has internal tools to repair inconsistent PGs, but human intervention is required to start the process – Improving (automating) this process is on the roadmap
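A first step towards automating repair might look like the hypothetical sketch below. It classifies an EC PG from a per-shard health check (with k data shards, any k good shards are enough to rebuild the rest, which is why the 10/11 case is trivial) and turns the JSON list of PG ids printed by `rados list-inconsistent-pg <pool>` into `ceph pg repair` command lines. It only builds the commands and never runs them; the function names are illustrative, and the exact JSON output should be checked against the Ceph version in use.

```python
import json


def shard_repair_verdict(shards_ok: list[bool], k: int) -> str:
    """Classify an erasure-coded PG from per-shard read/consistency results."""
    good = sum(shards_ok)
    if good == len(shards_ok):
        return "consistent"
    if good >= k:
        # Any k healthy shards can regenerate the missing ones.
        return "repairable"
    # Fewer than k good shards: the PG cannot be rebuilt from its own data.
    return "needs-investigation"


def repair_commands(inconsistent_pg_json: str) -> list[list[str]]:
    """Build (but do not execute) `ceph pg repair` invocations for each PG id."""
    return [["ceph", "pg", "repair", pgid] for pgid in json.loads(inconsistent_pg_json)]


# The case from the slide: EC 8+3, 10 of 11 shards consistent, 1 unreadable.
print(shard_repair_verdict([True] * 10 + [False], k=8))  # repairable
print(repair_commands('["3.1a"]'))  # [['ceph', 'pg', 'repair', '3.1a']]
```

Keeping execution out of the sketch leaves the final decision with an operator, which matches the current practice of starting repairs by hand.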

Downtimes • Echo became a production service at the start of February 2017. • 2017-02-24: 47 minutes – Stuck PG in ATLAS pool. Normal remedies didn’t fix it. • 2017-08-19: 7 days – Backfill bug • 2018-08-10: 7 days – Memory usage

Stuck PG • While rebooting a storage node for patching in February 2017, a placement group in the ATLAS pool became stuck in a peering state – I/O hung for any object in this PG • To restore service availability, it was decided we would manually recreate the PG – accepting loss of all 2300 files/160 GB of data in that PG • The data loss occurred because the correct remedy was discovered too late – We would have been able to recover without data loss if we had identified the problem (and the problem OSD) before we manually removed the PG from the set http://tracker.ceph.com/issues/18960

Backfill bug • In August 2017 we encountered a backfill bug specific to erasure-coded pools when adding 30 new nodes to the cluster. – http://tracker.ceph.com/issues/18162 • A read error on an object shard on any existing OSD in a backfilling PG will: – crash the primary OSD, then the next acting primary, and so on, until the PG goes down – Misdiagnosis of the issue led to the loss of an ATLAS PG: 23,000 files lost • Once the issue was understood we could handle recurrences with no further data loss, and a Ceph upgrade fixed the issue for good. https://www.gridpp.ac.uk/wiki/RAL_Tier1_Incident_20170818_first_Echo_data_loss

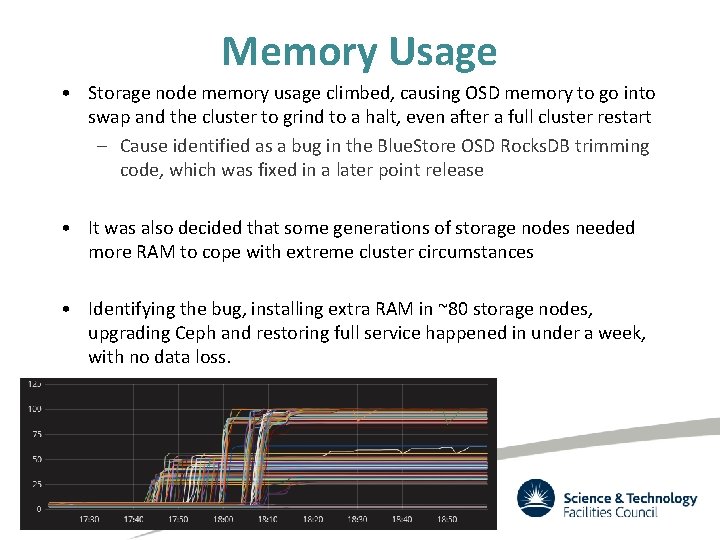

Memory Usage • Storage node memory usage climbed, causing OSDs to swap and the cluster to grind to a halt, even after a full cluster restart – Cause identified as a bug in the BlueStore OSD RocksDB trimming code, which was fixed in a later point release • It was also decided that some generations of storage nodes needed more RAM to cope with extreme cluster circumstances • Identifying the bug, installing extra RAM in ~80 storage nodes, upgrading Ceph and restoring full service all happened in under a week, with no data loss.

Non-Downtimes • The following things happened but didn’t cause Ceph to stop working: • Site-wide power outage – UPS worked! Pity about the rest of the site… • Central network service interruptions • Two major upgrades – Done as rolling interventions • Failures of entire disk servers • High disk failure rates caused by excessive heat at the back of racks • Security patching

Future of Ceph • Continual development from the open source community to improve Ceph cluster performance, stability and ease of management – Automatic balancing added and stable; automatic PG splitting/merging coming soon – Ambitious project to rewrite the OSD data paths, aiming for much better performance from network to memory • Large, growing community – SKA precursor (MeerKAT) uses a Ceph object store for its analysis pipeline + general storage – STFC has joined the Ceph Foundation as an academic member

Conclusion • Using Ceph as the storage backend is working very well for the disk storage at the Tier-1 – Ceph is very well suited for a Tier-2 size cluster • Operational issues are to be expected, but being able to update, patch and deal with storage node failures without loss of availability has been fantastic • The future of Ceph is looking promising – There continues to be lots of uptake, both in scientific and commercial areas

[Figure: data access architecture. User jobs run in containers on worker nodes (WN) and at other sites, and use an XRootD client to reach gateways (GW). Gateways, either a container on each worker node or external gateway hosts, run XRootD and GridFTP servers with rados plugins built on libradosstriper, which read and write the VO data pool in the Ceph cluster.]
