Ceph: A Scalable, High-Performance Distributed File System

Sage Weil, Scott Brandt, Ethan Miller, Darrell Long, Carlos Maltzahn

University of California, Santa Cruz

Project Goal

- Reliable, high-performance distributed file system with excellent scalability
  - Petabytes to exabytes, multi-terabyte files, billions of files
  - Tens or hundreds of thousands of clients simultaneously accessing same files or directories
  - POSIX interface
- Storage systems have long promised scalability, but have failed to deliver
  - Continued reliance on traditional file system principles
    - Inode tables
    - Block (or object) list allocation metadata
  - Passive storage devices

Ceph: Key Design Principles

1. Maximal separation of data and metadata
   - Object-based storage
   - Independent metadata management
   - CRUSH: data distribution function
2. Intelligent disks
   - Reliable Autonomic Distributed Object Store
3. Dynamic metadata management
   - Adaptive and scalable

Outline

1. Maximal separation of data and metadata
   - Object-based storage
   - Independent metadata management
   - CRUSH: data distribution function
2. Intelligent disks
   - Reliable Autonomic Distributed Object Store
3. Dynamic metadata management
   - Adaptive and scalable

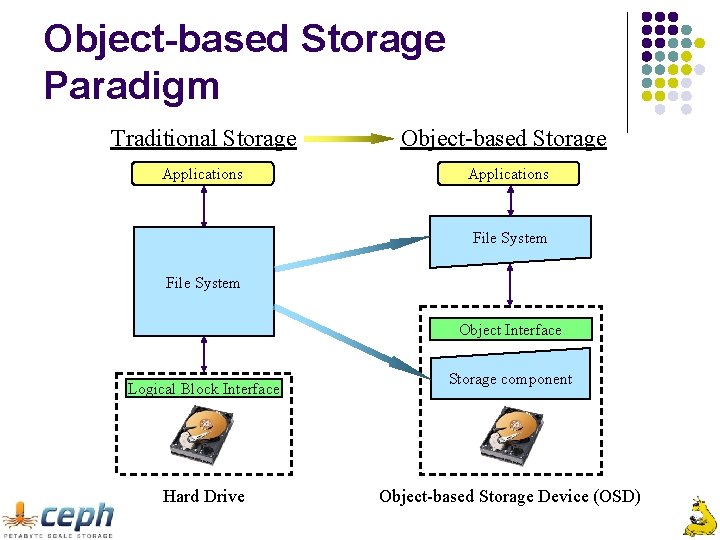

Object-based Storage Paradigm

- Traditional storage (top to bottom): Applications, File System, Logical Block Interface, Hard Drive
- Object-based storage (top to bottom): Applications, File System, Object Interface, Storage component, Object-based Storage Device (OSD)

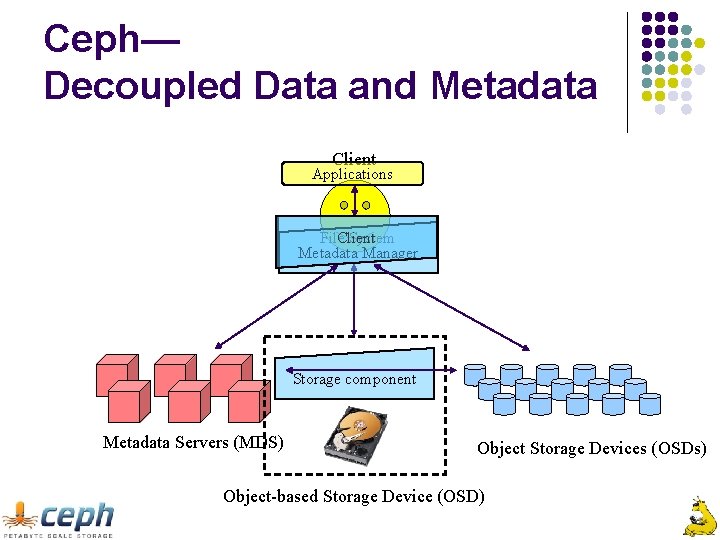

Ceph: Decoupled Data and Metadata

[Figure labels: Client, Applications, File System, Metadata Manager, Metadata Servers (MDS), Storage component, Object Storage Devices (OSDs), Object-based Storage Device (OSD)]

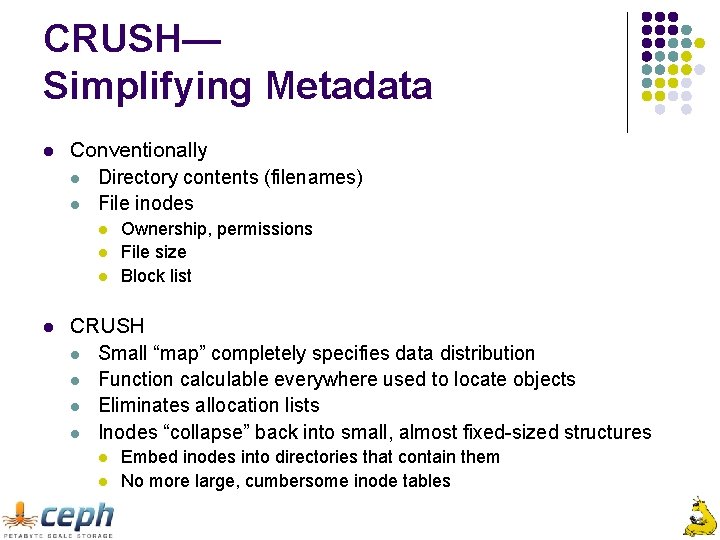

CRUSH: Simplifying Metadata

- Conventionally
  - Directory contents (filenames)
  - File inodes
    - Ownership, permissions
    - File size
    - Block list
- CRUSH
  - Small “map” completely specifies data distribution
  - Function calculable everywhere used to locate objects
  - Eliminates allocation lists
- Inodes “collapse” back into small, almost fixed-sized structures
  - Embed inodes into directories that contain them
  - No more large, cumbersome inode tables
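The idea that a small map plus a function "calculable everywhere" can replace stored allocation lists can be illustrated with a minimal sketch. This is not the CRUSH algorithm: it swaps in plain rendezvous (highest-random-weight) hashing as a simpler stand-in. The flat list used as a cluster map, the object name, and the `locate` helper are all assumptions made for illustration.

```python
import hashlib


def _score(object_id: str, osd: str) -> int:
    """Deterministic pseudo-random score for an (object, OSD) pair."""
    digest = hashlib.sha256(f"{object_id}:{osd}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def locate(object_id: str, cluster_map: list[str], replicas: int = 3) -> list[str]:
    """Return the OSDs holding object_id, computed from the map alone.

    Rendezvous hashing stand-in, NOT the real CRUSH function.
    """
    ranked = sorted(cluster_map, key=lambda osd: _score(object_id, osd), reverse=True)
    return ranked[:replicas]


# Hypothetical cluster map: just a list of OSD names.
cluster_map = [f"osd{i}" for i in range(8)]

# Any client or OSD holding the same map computes the same answer,
# so no per-file block list has to be stored or looked up.
placement = locate("inode1234.chunk0", cluster_map)
print(placement)
```

Because the result depends only on the object name and the map, the inode needs no block list; distributing a new map is enough to change where objects are expected to live.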

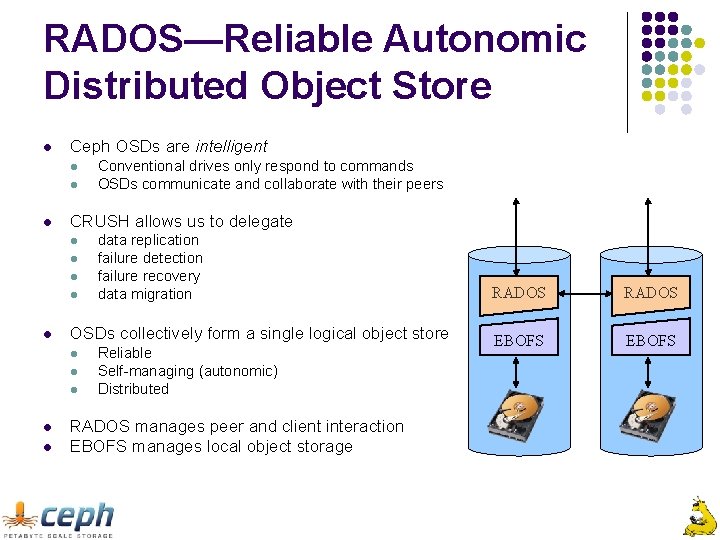

RADOS: Reliable Autonomic Distributed Object Store

- Ceph OSDs are intelligent
  - Conventional drives only respond to commands
  - OSDs communicate and collaborate with their peers
- CRUSH allows us to delegate
  - data replication
  - failure detection
  - failure recovery
  - data migration
- OSDs collectively form a single logical object store
  - Reliable
  - Self-managing (autonomic)
  - Distributed
- RADOS manages peer and client interaction
- EBOFS manages local object storage

[Diagram labels: RADOS, EBOFS]

RADOS: Scalability

- Failure detection and recovery are distributed
  - Centralized monitors used only to update map
- Map updates are propagated by OSDs themselves
  - No monitor broadcast necessary
- Identical “recovery” procedure used to respond to all map updates
  - OSD failure
  - Cluster expansion
  - OSDs always collaborate to realize the newly specified data distribution
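One way to picture map updates spreading without a monitor broadcast is a version number (epoch) attached to the map, with whichever side of an exchange is stale catching up. The sketch below is a simplification under stated assumptions: the `ClusterMap` and `OSD` classes, the `send` and `adopt` methods, and shipping the whole map with each message are all hypothetical and not RADOS's wire protocol.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ClusterMap:
    epoch: int
    up_osds: frozenset


@dataclass
class OSD:
    name: str
    cmap: ClusterMap
    recoveries: list = field(default_factory=list)

    def send(self, peer: "OSD") -> None:
        """Exchange a message; whichever side holds the older map catches up."""
        if peer.cmap.epoch < self.cmap.epoch:
            peer.adopt(self.cmap)
        elif self.cmap.epoch < peer.cmap.epoch:
            self.adopt(peer.cmap)

    def adopt(self, new_map: ClusterMap) -> None:
        # Same procedure whether the change was a failure or an expansion.
        self.recoveries.append((self.cmap.epoch, new_map.epoch))
        self.cmap = new_map


v1 = ClusterMap(1, frozenset({"a", "b", "c", "d"}))
v2 = ClusterMap(2, frozenset({"a", "b", "c"}))  # "d" marked down

a, b, c = OSD("a", v1), OSD("b", v1), OSD("c", v1)
a.adopt(v2)   # the monitor informs a single OSD
a.send(b)     # ordinary traffic carries the new map onward
b.send(c)
print([osd.cmap.epoch for osd in (a, b, c)])
```

In this toy run only one OSD hears from the monitor, and the other two learn the new epoch from ordinary peer traffic.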

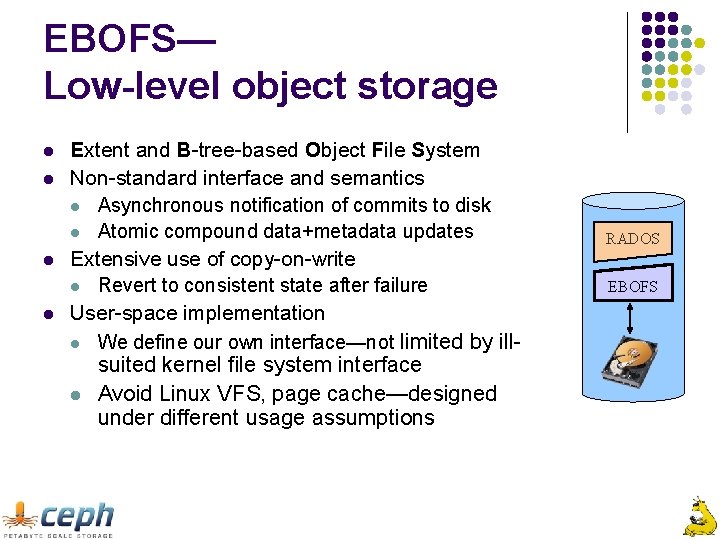

EBOFS: Low-level object storage

- Extent and B-tree-based Object File System
- Non-standard interface and semantics
  - Asynchronous notification of commits to disk
  - Atomic compound data+metadata updates
- Extensive use of copy-on-write
  - Revert to consistent state after failure
- User-space implementation
  - We define our own interface, not limited by ill-suited kernel file system interface
  - Avoid Linux VFS and page cache, which were designed under different usage assumptions

[Diagram labels: RADOS, EBOFS]
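The three semantic points above (commit notification delivered later, all-or-nothing compound updates, and copy-on-write rollback) can be shown together in a toy in-memory model. This is only a sketch: `ToyObjectStore` and its `apply`, `commit`, `crash`, and `read` methods are hypothetical names and do not reflect the actual EBOFS interface.

```python
import copy


class ToyObjectStore:
    """In-memory stand-in for a copy-on-write object store (not EBOFS's API)."""

    def __init__(self):
        self._committed = {}   # last consistent "on-disk" state
        self._working = {}     # state including uncommitted updates
        self._waiters = []     # callbacks to fire at the next commit

    def apply(self, updates, on_commit):
        """Apply a compound update {name: (data, attrs)} all-or-nothing."""
        staged = copy.deepcopy(self._working)   # copy, never modify in place
        for name, (data, attrs) in updates.items():
            if not isinstance(data, bytes):
                raise TypeError("object data must be bytes")
            staged[name] = {"data": data, "attrs": dict(attrs)}
        self._working = staged                  # reached only if every update succeeded
        self._waiters.append(on_commit)

    def commit(self):
        """Make the working state durable, then notify the waiting writers."""
        self._committed = self._working
        waiters, self._waiters = self._waiters, []
        for notify in waiters:
            notify()

    def crash(self):
        """Simulate a failure: fall back to the last committed state."""
        self._working = self._committed
        self._waiters = []

    def read(self, name):
        return self._working[name]["data"]


store = ToyObjectStore()
acks = []
store.apply({"obj1": (b"hello", {"size": 5})}, lambda: acks.append("v1"))
store.commit()                                   # "v1" is acknowledged here
store.apply({"obj1": (b"bye", {"size": 3})}, lambda: acks.append("v2"))
store.crash()                                    # "v2" never became durable
print(store.read("obj1"), acks)
```

The writer returns from `apply` immediately and learns about durability only through the callback, which is the decoupling the slide describes.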

OSD Performance: EBOFS vs. ext3, ReiserFSv3, XFS

- EBOFS writes saturate disk for request sizes over 32 KB
- Reads perform significantly better for large write sizes

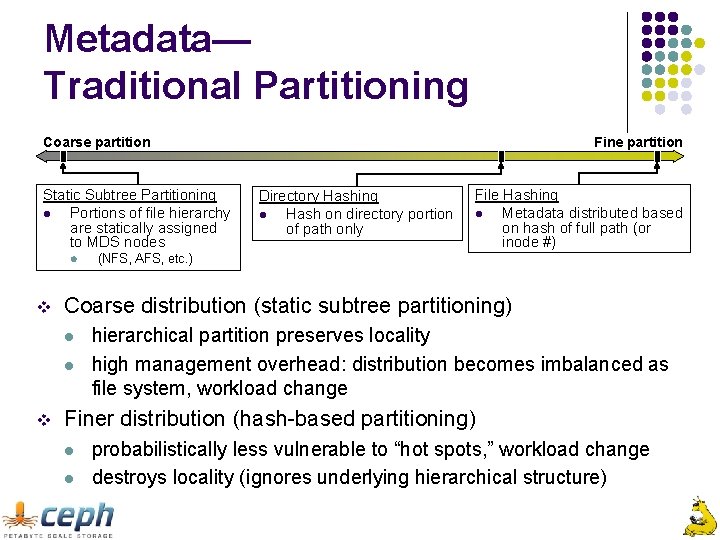

Metadata: Traditional Partitioning

Partitioning strategies, from coarse partition to fine partition:

- Static Subtree Partitioning
  - Portions of file hierarchy are statically assigned to MDS nodes (NFS, AFS, etc.)
- Directory Hashing
  - Hash on directory portion of path only
- File Hashing
  - Metadata distributed based on hash of full path (or inode #)

- Coarse distribution (static subtree partitioning)
  - hierarchical partition preserves locality
  - high management overhead: distribution becomes imbalanced as file system, workload change
- Finer distribution (hash-based partitioning)
  - probabilistically less vulnerable to “hot spots,” workload change
  - destroys locality (ignores underlying hierarchical structure)
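The three traditional strategies differ only in which part of the path selects the metadata server, which a short sketch makes concrete. The subtree table, the MDS count, and the function names below are illustrative assumptions; MD5 is used only as a convenient deterministic hash.

```python
import hashlib
import posixpath

NUM_MDS = 4

# Static subtree partitioning: a hand-maintained table (hypothetical entries).
SUBTREE_TABLE = {"/": 0, "/home": 1, "/home/alice": 2, "/var": 3}


def _h(text: str) -> int:
    return int.from_bytes(hashlib.md5(text.encode()).digest()[:4], "big")


def static_subtree(path: str) -> int:
    """The longest matching subtree prefix decides the MDS."""
    if not path.startswith("/"):
        raise ValueError("absolute path required")
    node = path
    while node not in SUBTREE_TABLE:
        node = posixpath.dirname(node)
    return SUBTREE_TABLE[node]


def directory_hash(path: str) -> int:
    """Hash on the directory portion of the path only."""
    return _h(posixpath.dirname(path)) % NUM_MDS


def file_hash(path: str) -> int:
    """Hash on the full path."""
    return _h(path) % NUM_MDS


for p in ("/home/alice/a.txt", "/home/alice/b.txt", "/etc/passwd"):
    print(p, static_subtree(p), directory_hash(p), file_hash(p))
```

Files in the same directory always share an MDS under `static_subtree` and `directory_hash`, which preserves locality, while `file_hash` may scatter them; conversely, only the table-driven variant needs manual rebalancing when the workload shifts.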

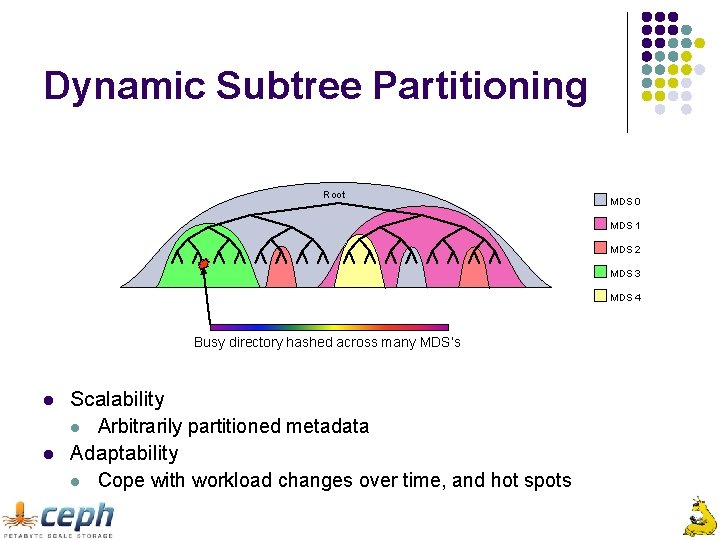

Dynamic Subtree Partitioning

[Figure: file hierarchy from Root partitioned across MDS 0, MDS 1, MDS 2, MDS 3, MDS 4; busy directory hashed across many MDSs]

- Scalability
  - Arbitrarily partitioned metadata
- Adaptability
  - Cope with workload changes over time, and hot spots
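Adapting the partition to workload can be sketched as a loop that measures per-subtree activity and hands a busy subtree to a less loaded server. The class below is a toy model under loud assumptions: `ToyMDSCluster`, its op counters, and the greedy one-subtree-per-step policy are hypothetical, each delegated subtree is counted separately from its parent, and hashing a single busy directory across several servers is not modeled.

```python
from collections import Counter


class ToyMDSCluster:
    def __init__(self, num_mds: int, authority: dict):
        self.num_mds = num_mds
        self.authority = dict(authority)   # subtree root -> MDS rank
        self.ops = Counter()               # subtree root -> recent op count

    def record_op(self, subtree: str, count: int = 1) -> None:
        self.ops[subtree] += count

    def load(self) -> list:
        totals = [0] * self.num_mds
        for subtree, mds in self.authority.items():
            totals[mds] += self.ops[subtree]
        return totals

    def rebalance(self):
        """Move one subtree from the busiest to the idlest MDS if that narrows the gap."""
        totals = self.load()
        busiest = totals.index(max(totals))
        idlest = totals.index(min(totals))
        gap = totals[busiest] - totals[idlest]
        movable = [s for s, m in self.authority.items()
                   if m == busiest and 0 < self.ops[s] < gap]
        if not movable:
            return None
        subtree = max(movable, key=lambda s: self.ops[s])
        self.authority[subtree] = idlest
        return subtree, busiest, idlest


cluster = ToyMDSCluster(3, {"/home": 0, "/home/alice": 0, "/var": 1, "/tmp": 2})
cluster.record_op("/home", 100)
cluster.record_op("/home/alice", 400)   # hot spot
cluster.record_op("/var", 50)
cluster.record_op("/tmp", 10)

before = cluster.load()
moved = cluster.rebalance()
after = cluster.load()
print(before, moved, after)
```

Only subtrees lighter than the current gap are eligible, so a single move never leaves the receiving server more loaded than the sender was.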

Metadata Scalability

- Up to 128 MDS nodes, and 250,000 metadata ops/second
  - I/O rates of potentially many terabytes/second
  - File systems containing many petabytes (or exabytes?) of data

Conclusions

- Decoupled metadata improves scalability
  - Eliminating allocation lists makes metadata simple
  - MDS stays out of I/O path
- Intelligent OSDs
  - Manage replication, failure detection, and recovery
- CRUSH distribution function makes it possible
  - Global knowledge of complete data distribution
  - Data locations calculated when needed
- Dynamic metadata management
  - Preserve locality, improve performance
  - Adapt to varying workloads, hot spots
  - Scale
- High performance and reliability with excellent scalability!

Ongoing and Future Work

- Completion of prototype
  - MDS failure recovery
  - Scalable security architecture [Leung, StorageSS ’06]
  - Quality of service
  - Time travel (snapshots)
- RADOS improvements
  - Dynamic replication of objects based on workload
  - Reliability mechanisms: scrubbing, etc.

Thanks!

http://ceph.sourceforge.net/

Support from Lawrence Livermore, Los Alamos, and Sandia National Laboratories
