
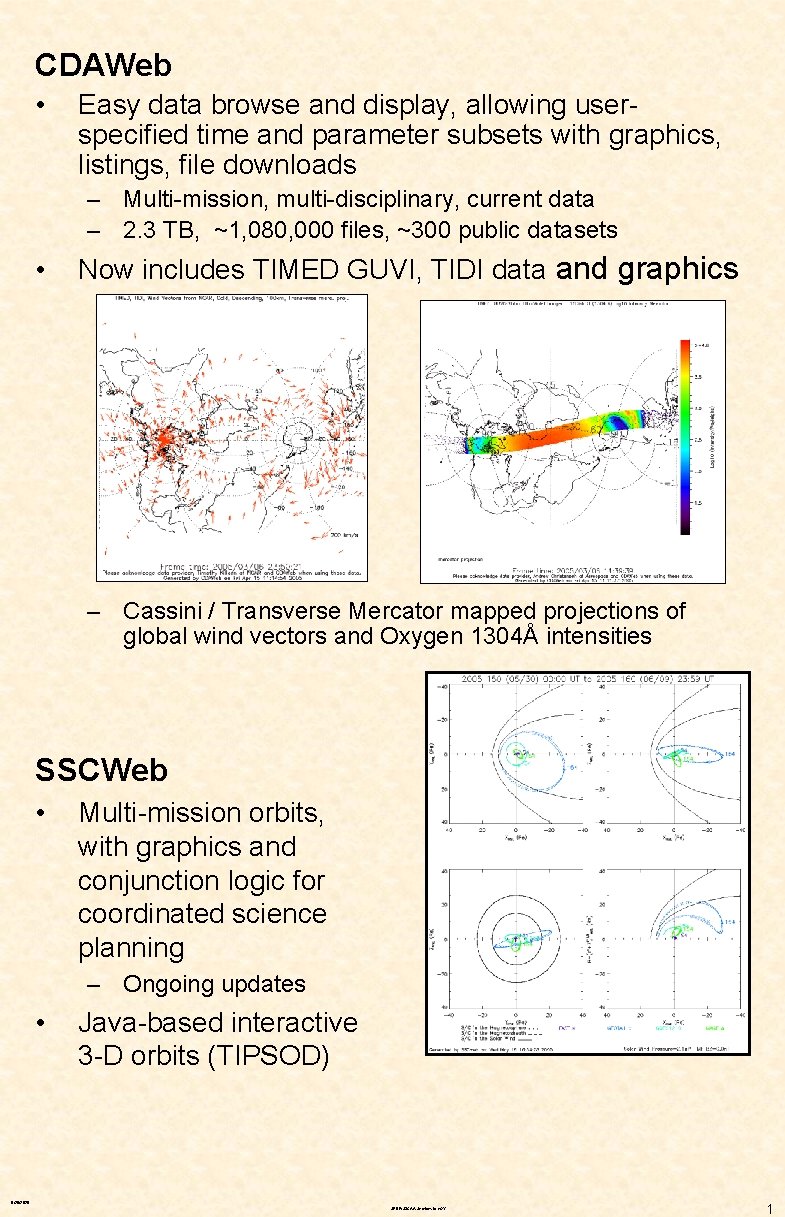

CDAWeb
• Easy data browse and display, allowing user-specified time and parameter subsets with graphics, listings, file downloads
  – Multi-mission, multi-disciplinary, current data
  – 2.3 TB, ~1,080,000 files, ~300 public datasets
• Now includes TIMED GUVI, TIDI data and graphics
  – Cassini / Transverse Mercator mapped projections of global wind vectors and Oxygen 1304 Å intensities

SSCWeb
• Multi-mission orbits, with graphics and conjunction logic for coordinated science planning
  – Ongoing updates
• Java-based interactive 3-D orbits (TIPSOD)

9/26/2020 SPDF/S3CAA Services to eGY
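To illustrate what "user-specified time and parameter subsets" means in practice, here is a minimal Python sketch that filters an in-memory list of records by a time window and a list of parameter names. The record layout, the `subset` function, the parameter names and the sample values are all hypothetical; this is not CDAWeb's implementation or API.

```python
from datetime import datetime


def subset(records, start, end, parameters):
    """Return records with start <= time < end, keeping only the requested parameters."""
    selected = []
    for rec in records:
        if start <= rec["time"] < end:
            row = {"time": rec["time"]}
            for name in parameters:
                if name in rec:
                    row[name] = rec[name]
            selected.append(row)
    return selected


# Hypothetical hourly records; names and values are made up for illustration.
records = [
    {"time": datetime(2005, 1, 1, 0), "B_mag": 5.1, "V_sw": 420.0, "N_p": 6.3},
    {"time": datetime(2005, 1, 1, 1), "B_mag": 5.4, "V_sw": 415.0, "N_p": 6.0},
    {"time": datetime(2005, 1, 1, 2), "B_mag": 6.0, "V_sw": 410.0, "N_p": 5.8},
]

# Request two parameters over a two-hour window.
result = subset(
    records,
    start=datetime(2005, 1, 1, 1),
    end=datetime(2005, 1, 1, 3),
    parameters=["B_mag", "V_sw"],
)
for row in result:
    print(row["time"].isoformat(), row["B_mag"], row["V_sw"])
```

The same selection could feed a plot, a listing or a file download, which are the three output forms named on the slide.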

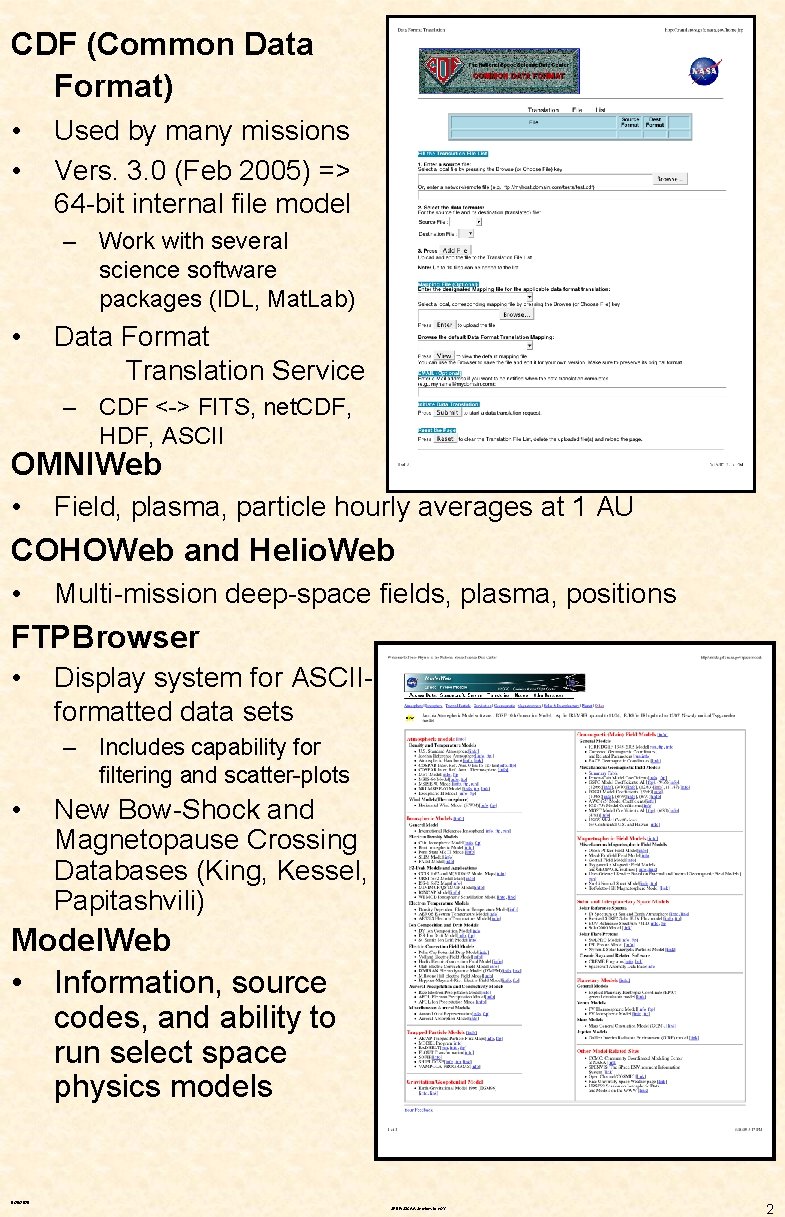

CDF (Common Data Format)
• Used by many missions
• Vers. 3.0 (Feb 2005) => 64-bit internal file model
  – Works with several science software packages (IDL, MATLAB)
• Data Format Translation Service
  – CDF <-> FITS, netCDF, HDF, ASCII

OMNIWeb
• Field, plasma, particle hourly averages at 1 AU

COHOWeb and HelioWeb
• Multi-mission deep-space fields, plasma, positions

FTPBrowser
• Display system for ASCII-formatted data sets
  – Includes capability for filtering and scatter-plots
• New Bow-Shock and Magnetopause Crossing Databases (King, Kessel, Papitashvili)

ModelWeb
• Information, source codes, and ability to run select space physics models

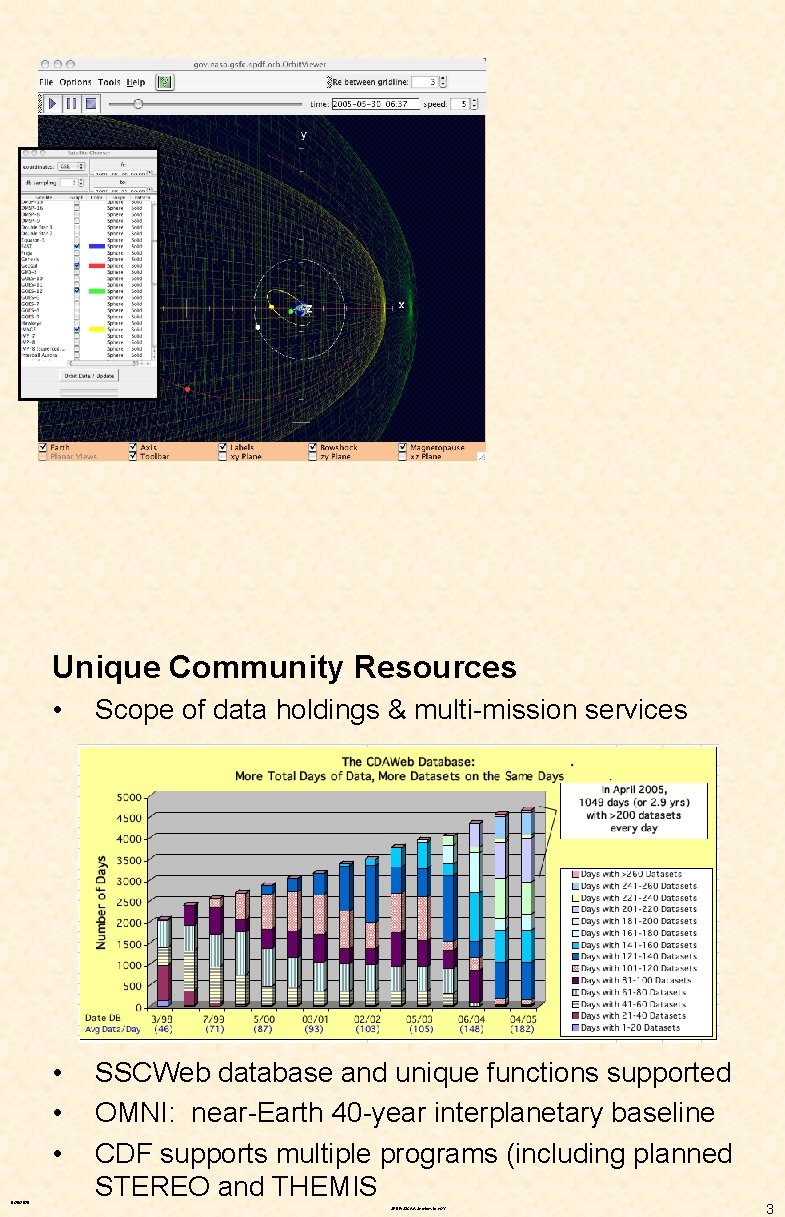

Unique Community Resources
• Scope of data holdings & multi-mission services
• SSCWeb database and unique functions supported
• OMNI: near-Earth 40-year interplanetary baseline
• CDF supports multiple programs (including planned STEREO and THEMIS)

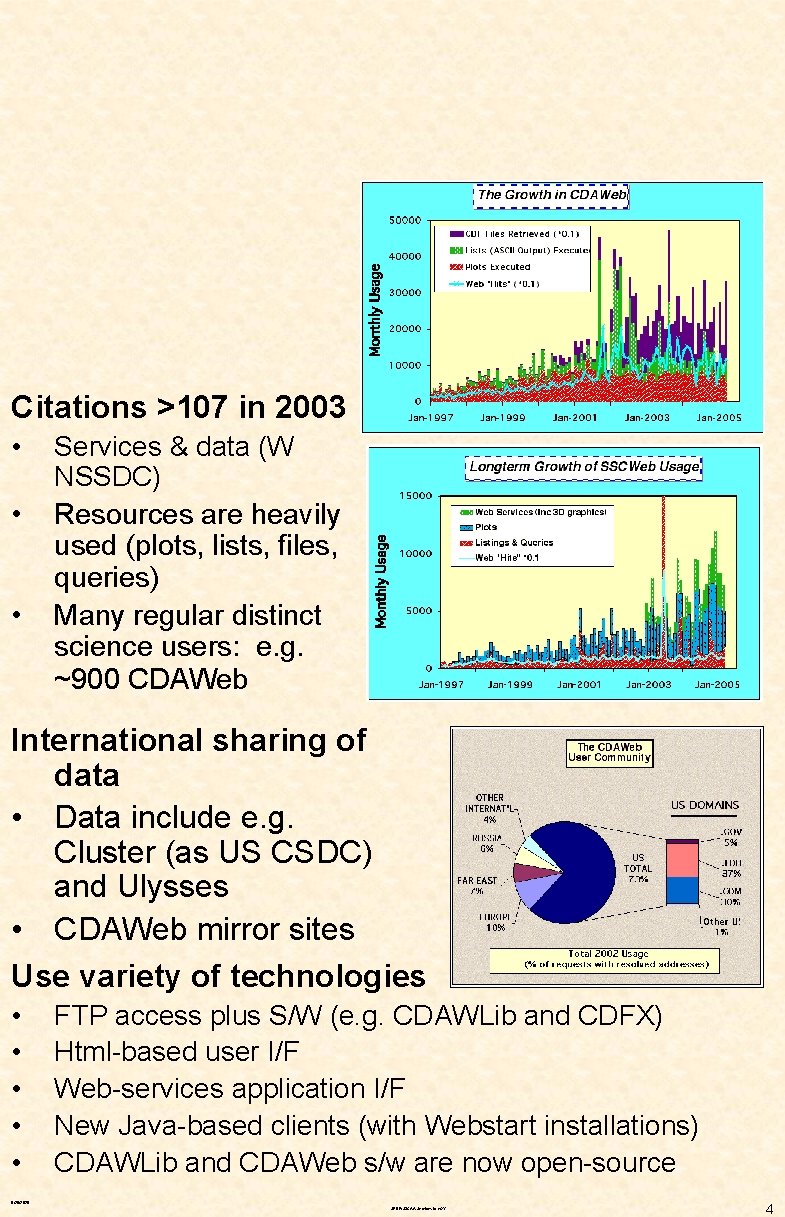

• Citations >107 in 2003
  – Services & data (w/ NSSDC)
• Resources are heavily used (plots, lists, files, queries)
• Many regular distinct science users: e.g. ~900 CDAWeb
• International sharing of data
  – Data include e.g. Cluster (as US CSDC) and Ulysses
  – CDAWeb mirror sites
• Use variety of technologies
  – FTP access plus s/w (e.g. CDAWLib and CDFX)
  – HTML-based user I/F
  – Web-services application I/F
  – New Java-based clients (with WebStart installations)
• CDAWLib and CDAWeb s/w are now open-source

Relevance and Role in eGY
• Powerful collection of existing capabilities
  – Already serving current data and orbit information from most NASA and other space physics missions, as well as some ground-based data
  – Data and capabilities are available both through direct web and/or Java user interfaces and substantially also through network-accessible APIs to support services and clients including those of the proposed NASA Virtual Discipline Observatories (VxOs)
  – Already multiple international mirror sites
  – Core CDAWeb s/w and the underlying CDF standard data format s/w are now open source
• Current S3C Active Archive services such as CDAWeb and SSCWeb are fully capable and expected to support additional new missions
  – Highly extensible to support additional data from existing missions and a wide range of other new data sources
  – With limited resources, science data providers should first focus on producing and validating basic data
  – Efforts like those of SPDF/S3CAA can then point to these products and sites or work these data products in turn to uniformize their formats and serve them as an integrated part of existing multi-mission, multi-disciplinary capabilities
• Where data are already or can be made public at least by simple FTP/HTTP directories …
  – New CDAWeb Plus interface now capable of offering users an integrated view and data retrieval from distributed data sources in a CDAWeb paradigm; i.e. from multiple sources in a single user request

Future Paradigms

Virtual Observatories in S3C
• Virtual Discipline Observatory (VxO) paradigm for Sun-Space-Geospace research community might be defined as a vision of a future Solar-Terrestrial data environment
  – Where data, models and services can be highly distributed
  – While end users see an integrated view, and where
  – All potentially-useful data are findable, accessible, useable
  – With appropriate services & across mission-instrument boundaries
  – Organized and customized to specific science discipline needs

Sun Solar System Great Observatory
• Fleet of existing missions is the foundation for the initial “spiral” in pursuing NASA’s exploration initiative
• Living With a Star (LWS)
• International: ILWS, IHY and eGY

SPDF/S3CAA Strategy
• Retain (& build) excellence & utility of what works now
  – Services and interfaces
  – Scope and quality of data, to most fully reflect the data needed to pursue the science of the Great Observatory initiative
• Serve data, models, services through multiple paths
• Data and services as web services to early VxOs
• Enable, partner, and leverage all parts of the VxO effort

Next Steps

Advertising and Dialogue
• eGY and other programs need to understand what SPDF/S3CAA has to contribute
• SPDF/S3CAA needs to understand if/what eGY and its science community can utilize and how
  – And what more or different might be more useful

Data Perspective
• First necessity for maximum utility is enabling useful access to the right data from a full range of relevant data sources
  – Right = current, high-resolution, high-quality, useful parameters
  – Science function to define in detail “right” and “relevant”
• Cooperative effort with distributed community of data generators/providers and other data services, including VxO discipline groups as these become active

Technical Perspective
• Maintain the core of present capabilities
• Do what is necessary to support new data
  – Includes ongoing effort to keep CDF modern
  – Special emphasis on new missions but also range of potential international data sources relevant to NASA science
• Complete implementation of web services APIs
  – Key to distributed access and evolution of services
• Continue enhancements to interfaces and functions
  – Continuing evolution in balance of client/server functions
• Continue work on integrated view of services

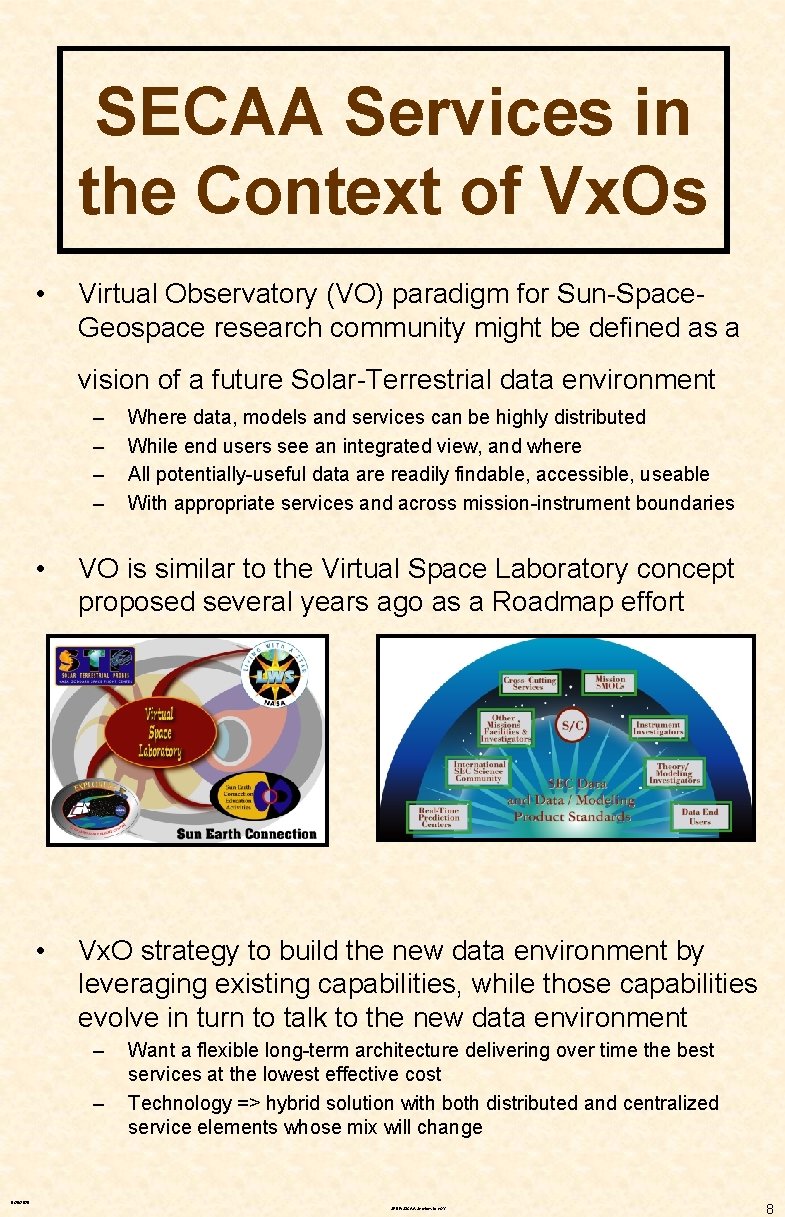

SECAA Services in the Context of VxOs
• Virtual Observatory (VO) paradigm for Sun-Space-Geospace research community might be defined as a vision of a future Solar-Terrestrial data environment
  – Where data, models and services can be highly distributed
  – While end users see an integrated view, and where
  – All potentially-useful data are readily findable, accessible, useable
  – With appropriate services and across mission-instrument boundaries
• VO is similar to the Virtual Space Laboratory concept proposed several years ago as a Roadmap effort
• VxO strategy to build the new data environment by leveraging existing capabilities, while those capabilities evolve in turn to talk to the new data environment
  – Want a flexible long-term architecture delivering over time the best services at the lowest effective cost
  – Technology => hybrid solution with both distributed and centralized service elements whose mix will change

Short-Term Plans
• Retain (+build) excellence & utility of what works now
  – Must maintain performance and reliability
  – Access to more (& higher quality/content) (& distributed) data
  – New services (e.g. FTP Data Finder), functions and I/F flexibility
• Data, models, and services through multiple paths
  – FTP (files+s/w), service user-I/Fs (HTML, Java), Web Service APIs
• Data and capabilities available to early SEC VxOs
  – Via Web Services with XML/WSDL-SOAP for communications, URL pointers to result files (data files or service products)
  – Exploit these extensions directly (Java-based clients, using WebStart for easy installation, e.g. SSCWeb 3D interactive plots)
  – Support mapping to evolving SEC data dictionary (SPASE effort)
• Enable and partner in the various VxO efforts
  – Data format translation and other enabling services
  – Data/service “provider” (and “consumer”) to larger VxO environment
  – Expect framework and participation will evolve to a range of cooperative and innovative partnerships (e.g. VITMO conversation)
• VO concept should be empowering
  – Wider range of data with much deeper analysis capabilities
  – Wide range of service approaches
    • E.g. many interfaces and services evolving over time that are “easy to prove” by attaching to full data environment
  – Multi-source data coupled to display, models and analysis
    • Including accessible computing power
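The "URL pointers to result files" pattern can be sketched as follows: the service replies with a small XML document, and the client extracts the URLs and fetches the files separately. This is a minimal illustration only. The element names (`ServiceResult`, `Status`, `ResultFile`), the `kind` attribute and the example.org URLs are invented for this sketch and do not describe the actual SPDF web service schema.

```python
import xml.etree.ElementTree as ET

# Hypothetical response document; every element name and URL here is an assumption.
SAMPLE_RESPONSE = """
<ServiceResult>
  <Status>OK</Status>
  <ResultFile kind="data">http://example.org/results/request123/data.cdf</ResultFile>
  <ResultFile kind="plot">http://example.org/results/request123/plot.gif</ResultFile>
</ServiceResult>
"""


def result_urls(xml_text):
    """Return (kind, url) pairs for every result file listed in the response."""
    root = ET.fromstring(xml_text.strip())
    if root.findtext("Status") != "OK":
        raise RuntimeError("service reported a failure")
    return [(el.get("kind"), el.text.strip()) for el in root.findall("ResultFile")]


urls = result_urls(SAMPLE_RESPONSE)
for kind, url in urls:
    print(kind, url)
```

Returning pointers rather than the data itself keeps the response message small and lets the client decide which products to download, and when.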

Virtual Observatory Technical Questions, Concerns and Personal Observations
• Technology is only one part of making the right data fully accessible to the required user community
  – Resource and cultural issues must have ongoing attention
  – Full data requirements to accomplish a science program (e.g. LWS) needed center-stage early & within effective data policy framework
  – Software is only one part (if perhaps the most fun) of the problem
• Primary consideration in implementing Virtual Observatories must be effective and adequate service to the end users of the data
  – Users need ability to perform a full range of functions
  – Users need to be able to accomplish functions quickly
  – Users need to be able to perform functions predictably
    • What worked yesterday should work today
• Software libraries and simple finding/retrieval can offer high functional (analysis) capabilities
  – But software … takes time to install and learn to operate
  – May not readily integrate data from different sources
  – May have issues with platforms and reliance on commercial s/w
• Many users want/need more than simple finding and retrieval (even with supporting s/w)
  – And need functions across mission / dataset boundaries
• Higher-functions hard with too much heterogeneity
  – Metadata standards vital; data format standards still important

• Not everything may make sense to distribute
  – User “wall-clock” time is the most valuable commodity
    • Plus development and maintenance resources of course
  – WAN transfer times are still a design issue
    • Often better to store multi-source data at the service host
• NASA science research community uses (and will use) many distinct operating systems (and versions), hardware and software platforms
  – Data service architecture with almost all work done at the server end and talking to thin (very basic browser) clients is fairly robust
    • Can be made to work almost every time for almost everybody
  – “Duplicated” disk storage is one more tool (and OK if it works)
    • Can allow less complex s/w development and maintenance
    • Allows work offline preparing diverse data for common service
  – Complex functionality built on distributed data and smart desktop clients will present cross-platform and maintenance challenges
    • E.g. Web services to talk to both Java and .NET clients
    • E.g. Testing for client development and support for easy installation across multiple platforms
    • E.g. Deployed web services infrastructure may become hard to change or evolve (as technology evolves, as new requirements are identified) as more clients are developed
    • We're changing our web service I/F but are you able/willing to change your client as we change and evolve over time?
• KISS will always be important for effective services, software installation/operation and interfaces
  – Critical future science from a diverse research community
  – Options for a few “power” users will confuse many “novice” users
    • We are ALL novices more times than we might care to admit
  – Can there be too much data and too much capability to use?

Contact information: robert.e.mcguire@nasa.gov


The Redesigned SPDF Website
• The Space Physics Data Facility (SPDF) at Goddard has just opened a revamped website to provide more unified access both to the services funded under the Sun Solar System Connection Active Archive (S3CAA) effort and to other activities and services within SPDF.
• The website features a “Data and Orbits” interactive page that allows users to readily identify which services support data from which missions.
